Reposted from The Cliff Mass Weather and Forecasting Blog

Last quarter I taught Atmospheric Sciences 101, and as a fun extra-credit activity students had the opportunity to participate in a forecasting competition in which they predicted temperatures and the probability of precipitation at Sea-Tac Airport. The National Weather Service forecast was scored as well, to provide a comparison with highly trained and experienced forecasters. In addition, we averaged the predictions of all the students, producing what is known as a consensus forecast.

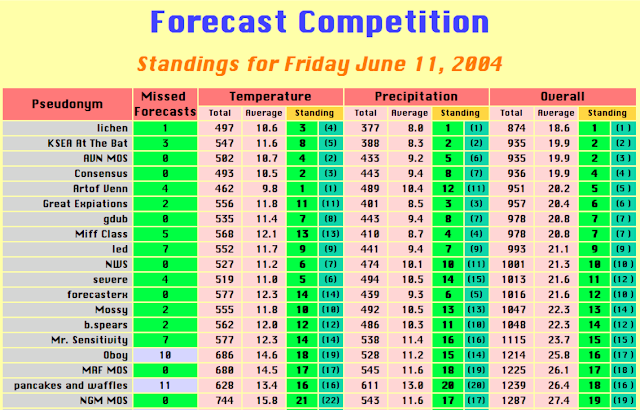

Now who do you think won? The pros at the Seattle National Weather Service office, or the average of the inexperienced weather newbies in my class? The answer: the consensus of the students was considerably superior to the Weather Service folks.

Students were number two overall and the NWS was in sixth place.

A fluke? No, it happens this way virtually EVERY YEAR. To illustrate this, here are the results for 2004. In that year, the average of the students was fourth, while the NWS experts were in 10th place. You will notice that some individual students sometimes came in first or ahead of the NWS; that could be just random luck, given the brevity of the forecast contest (1 to 1.5 months).

This phenomenon is often called the Wisdom of Crowds and has been the subject of a number of journal articles. So why might an average of the students be better than an NWS forecaster? Some possibilities include:

1. The average forecast of a group will tend to damp out forecast extremes, which produce very bad scores when they are wrong.

2. Students look at many different sources of information, using weather information in different ways and viewing many different forecasts (e.g., from various private sector groups). Forecasts derived from an average of many different sources tend to be more skillful on average.

3. Some of them might have taken a look at superior forecasts, say from weather.com or AccuWeather.
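Reason 1 can be made concrete. If forecasts are scored by squared error (an assumption; the post does not say how temperatures were scored), there is an exact identity: the squared error of the averaged forecast equals the average squared error of the individual forecasters minus the spread of their forecasts around the average. A minimal sketch with made-up numbers:

```python
def crowd_errors(forecasts, observed):
    """Return (error of the crowd mean, average member error, spread), all squared."""
    n = len(forecasts)
    crowd_mean = sum(forecasts) / n
    # Squared error of the consensus (averaged) forecast.
    crowd_sq_err = (crowd_mean - observed) ** 2
    # Average squared error of the individual forecasters.
    avg_member_sq_err = sum((f - observed) ** 2 for f in forecasts) / n
    # Spread of the individual forecasts around the consensus.
    spread = sum((f - crowd_mean) ** 2 for f in forecasts) / n
    return crowd_sq_err, avg_member_sq_err, spread


# Hypothetical max-temperature forecasts (°F) from four students; observed 53°F.
crowd, members, spread = crowd_errors([48, 52, 55, 61], observed=53)
print(crowd, members, spread)  # 1.0 23.5 22.5
```

Because the spread term can never be negative, the consensus is never worse than the typical student on any single day. It is not guaranteed to beat the best student.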

I can think of other possibilities…perhaps you can too.

This wisdom of crowds finding is closely related to why we make ensemble forecasts, running models many times, each slightly differently. The average of these many forecasts is, on average, the best forecast to use.
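A toy illustration of an initial-condition ensemble, as a sketch only: the chaotic logistic map stands in for a weather model, and the parameter values, perturbation size and member count are all hypothetical. The "true" state starts slightly away from our best estimate, so we run the same model from many nearby starting points and average the results. By convexity of the squared error (Jensen's inequality), the ensemble mean can never score worse than the average member.

```python
import random


def toy_model(x0, steps, r=3.9):
    """Hypothetical stand-in for a weather model: iterate the chaotic logistic map."""
    x = x0
    for _ in range(steps):
        x = r * x * (1.0 - x)
    return x


def ensemble(x0_analysis, steps, members=20, perturbation=1e-3, seed=0):
    """Run the same model many times from slightly perturbed initial conditions."""
    rng = random.Random(seed)
    return [
        toy_model(x0_analysis + rng.uniform(-perturbation, perturbation), steps)
        for _ in range(members)
    ]


truth = toy_model(0.3004, steps=15)  # the "real atmosphere", started from a state we don't know exactly
runs = ensemble(0.3, steps=15)       # our best estimate of that state is slightly off
ens_mean = sum(runs) / len(runs)
mean_err = (ens_mean - truth) ** 2
avg_member_err = sum((x - truth) ** 2 for x in runs) / len(runs)
print(f"ensemble-mean error {mean_err:.4f}, average member error {avg_member_err:.4f}")
```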

So next time you need a forecast, try averaging the guesses of your friends or classmates. Does this idea apply to elections? Now that is a subject I think I want to avoid.

I was going to guess the best students used time travel, but everyone here told me I was wrong.

No, we didn’t.

At least, not yet. Give it time.

The astral plane they were tapping into is timeless.

The best way to get a reasonable forecast is to look out the window.

Believe me it works!

Cheers

Roger

If people are allowed to make dispassionate guesses with no influence, then they will probably provide a ‘sensible’ output.

However, people are social animals, readily influenced by a variety of techniques, and if they have been exposed to political propaganda then they may well provide outputs which are biased…

Charles Mackay would disagree, saying that crowds popularize extraordinary delusions and are mad. My observations of politics suggest he is right.

The question is whether the people affect each others’ guesses.

Philip Tetlock points out that simple algorithms are often better predictors than experts are. Will a criminal re-offend? You’re often better off asking the psychiatrist’s secretary than asking the psychiatrist herself.

Experts are crappy at predicting things and they are annoyingly over-confident. As well, when you point out their errors, they have a huge armory of excuses.

If lay people don’t influence each others’ guesses, they are usually as accurate as the experts, and often much better.

BTW, Tetlock arranged his experiments so the experts couldn’t just make a 50/50 guess. In that light, guessing right 50% of the time would have been remarkably accurate. When we say that lay people are usually better than experts, we’re still talking way less than 50% accurate. A dart-throwing chimp can do as well.

Does the chimp need to have imbibed half its bodyweight in beer first?

Auto – yes, I want to know!

Sort of. Chimps have a taste for alcohol. You can use beer as a motivator to keep them throwing darts.

The Canadian story used to be that American beer was notoriously low alcohol. Back in the 1960s, some of my Saskatchewan biker buddies went down to South Dakota and tried to get drunk on American beer. They told me they drank vast quantities and the only result was their kidneys got a real workout. This leads me to conclude that a chimp could indeed drink half its body weight in beer, given the right circumstances.

It’s interesting to note the difference between “wisdom of crowds” and “groupthink”.

In wisdom of crowds, each member acts independently and members do not influence each other.

In groupthink, members of the group do influence each other.

The average prediction in wisdom of crowds tends to be very good, whilst in groupthink it’s very poor.
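A toy simulation of that difference, under a hypothetical anchoring model that is not from the thread: each person has a noisy private estimate of the true value. In the "groupthink" crowd, people guess in turn and each guess is 80% the running average of earlier guesses and only 20% their own estimate. Both crowds receive exactly the same private information (same random seed); only the mutual influence differs.

```python
import random
import statistics


def crowd_mean(signals, anchor_weight):
    """Average of guesses made in turn; each guesser blends the running
    average of earlier guesses (weight anchor_weight) with their own signal."""
    guesses = []
    for s in signals:
        if guesses:
            g = anchor_weight * statistics.fmean(guesses) + (1 - anchor_weight) * s
        else:
            g = s  # the first guesser has nobody to copy
        guesses.append(g)
    return statistics.fmean(guesses)


def crowd_rmse(anchor_weight, truth=50.0, noise_sd=5.0, crowd_size=30, trials=2000, seed=1):
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        # Each person's private information: the truth plus independent noise.
        signals = [truth + rng.gauss(0.0, noise_sd) for _ in range(crowd_size)]
        total += (crowd_mean(signals, anchor_weight) - truth) ** 2
    return (total / trials) ** 0.5


independent = crowd_rmse(anchor_weight=0.0)  # wisdom of crowds
herding = crowd_rmse(anchor_weight=0.8)      # groupthink
print(f"independent RMSE {independent:.2f}, herding RMSE {herding:.2f}")
```

With anchoring, the first few guessers' errors get copied by everyone after them, so the crowd behaves like a much smaller group.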

Maybe this should be “the wisdom of aggregates” and the “madness of crowds”.

Excellent!

Many years ago I compiled the results and starting prices (odds) of about 60,000 UK races.

One of the most surprising things I found was that if you bet £1 on the favourite in every race you would, as near as makes no difference, break even. At first I was surprised about this as it meant that the odds on the favourite are the correct odds, but then I realised that they are determined by how much the public bets on them so they are, in effect, determined by the wisdom of crowds. This is why bookmakers don’t like favourites winning.

NOTE: Betting on the 2nd, 3rd or nth favourite every time would lose you money in the long term, because these horses have their odds lowered.
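Breaking even on favourites is what you would expect if the price on the favourite matched its true chance of winning. Here is the arithmetic for a level stake at fractional odds, as a sketch. The 2/1 and 7/4 prices and the one-in-three win rate are hypothetical, and any bookmaker deductions are ignored.

```python
from fractions import Fraction


def implied_probability(fractional_odds):
    """Win probability at which a bet at these fractional odds breaks even."""
    return 1 / (fractional_odds + 1)


def expected_profit(p_win, fractional_odds, stake=1):
    """Expected profit of a level stake: win odds*stake with prob p, lose the stake otherwise."""
    return p_win * fractional_odds * stake - (1 - p_win) * stake


# A favourite at 2/1 that really does win one race in three: the odds are "correct".
print(implied_probability(Fraction(2)))                 # 1/3
print(expected_profit(Fraction(1, 3), Fraction(2)))     # 0
# The same horse offered at a shorter 7/4: the bettor loses 1/12 of the stake per race.
print(expected_profit(Fraction(1, 3), Fraction(7, 4)))  # -1/12
```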

If you knew which one was going to be favourite ahead of time, i.e. which one most people would back, you would make a killing, because at that time the odds would be higher (or lower, depending on nomenclature).

Having said that, if I got to know in advance I’d just pick the winner, I guess…

If you can predict the way the odds will move then you can make money, but there is no reliable way of predicting this.

It was analysing the racing results that made me distrust climate models. I found that I could always find a betting strategy that would have made a profit had I used it on the 60,000 races whose data I had, but those strategies failed when used on current races.

Like climate models, there were so many parameters (previous result, weight, stall, conditions, distance, course, jockey, etc.) that could be tweaked that I could produce any result I wanted for the past, but the strategies always failed when applied to the future.
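The trap described here is overfitting. Here is a minimal sketch in which every detail is hypothetical: races have three made-up features (stall, going, distance) and a result that is a fair coin toss, independent of all of them, paid at slightly unfair odds. Searching all 72 feature combinations for the one that would have been most profitable on 2,000 "past" races always finds an apparent winner. On 20,000 "future" races its expected return is just the built-in loss of 5p per £1.

```python
import random
from itertools import product

STALLS = range(1, 9)
GOINGS = ("firm", "good", "soft")
DISTANCES = ("sprint", "mile", "staying")
WIN_PAYOUT = 0.9  # hypothetical: a win returns +£0.90 per £1, a loss costs £1


def make_races(n, rng):
    """Random races whose outcome is independent of every feature: no real edge exists."""
    return [
        (rng.choice(STALLS), rng.choice(GOINGS), rng.choice(DISTANCES), rng.random() < 0.5)
        for _ in range(n)
    ]


def roi(rule, races):
    """Average profit per £1 bet when betting only on races that match the rule."""
    results = [WIN_PAYOUT if race[3] else -1.0 for race in races if race[:3] == rule]
    return sum(results) / len(results) if results else None


def fit_best_rule(races, min_bets=10):
    """Try every combination of 'parameters' and keep the one with the best past ROI."""
    best_rule, best_roi = None, float("-inf")
    for rule in product(STALLS, GOINGS, DISTANCES):
        if sum(1 for race in races if race[:3] == rule) < min_bets:
            continue
        r = roi(rule, races)
        if r > best_roi:
            best_rule, best_roi = rule, r
    return best_rule, best_roi


rng = random.Random(42)
past = make_races(2000, rng)
future = make_races(20000, rng)
rule, in_sample = fit_best_rule(past)
out_of_sample = roi(rule, future)
print(f"best rule {rule}: past ROI {in_sample:+.2f}, future ROI {out_of_sample:+.2f}")
```

The more combinations you search, the larger the best past "profit" becomes, even though none of them has any real edge.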

For the sake of maximizing my enjoyment the times I went to the track, I bet on horses to “Show” (finish 1, 2, or 3). The payoff was less, but I lost much less frequently. Do you have any idea what might be the best betting strategy for picking horses to Show?

Possibly their previous record at placing 4th or higher. Certainly horses that are consistently in the last half of the pack are less likely long shots.

…if the kids looked out the window first, that’s cheating.

Weather records here truly suck… if not out-and-out fraud.

I have extremely accurate thermometers (greenhouses/orchids). This morning, right at sunrise: 69°F.

I checked the official weather station 8 blocks away: they said it was 74°F.

Can’t blame it on the sun; it wasn’t up yet. A 5 degree difference is impossible… and they are consistently higher.

8 blocks away wouldn’t happen to be in the middle of a concrete lot would it? Or maybe the courtyard of several large office blocks?

Morning temperatures at my place are consistently 5 to 10 degrees cooler than at the radio station 8 miles away. (Same elevation; my thermometer is in the middle of a cow pasture, theirs in the middle of their equipment lot.)

About the same thing, Owen… it’s at the Coast Guard station, next to the helicopter pad.

So for this to work for climate forecasts you would need to get together both a dozen or so serious alarmists and a dozen serious deniers and have them do their best to forecast climate details for, say, 25 years in the future for a wide area (i.e. North America).

Their average forecast then becomes the consensus, which according to this theory would be more accurate than the professional climate scientists and their models.

Do we even need to wait 25+ years to see who is right? Or should we accept the wisdom of crowds theory itself is good enough to bet our economic future on it now?

Weather forecasting is obsolete anyway. If I want to know what the weather is going to be tomorrow, I can just pick up the phone and call Tokyo or Sydney where it is already tomorrow and ask what the weather is like.

I heard the rimshot at the end of that sentence.

When I was a kid 60 years ago, Dad always told me that the way to know what the weather was going to be at our Southern Indiana farm tomorrow was to look at what it was doing in St Louis now. He was right more often than not.

So averaging guesses yields a better result than one individual guess.

Better maybe, but were any of the guesses correct? Better in that it was closer to the real number, but off by how much?

Averaging many guesses may have a better chance due to the central limit theorem, but they are still guesses.
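To put a number on “off by how much?”, here is the textbook bias-plus-noise model, stated as an assumption rather than anything measured in the contest. Each guess g_i is the true value y, plus a bias b shared by everyone, plus independent noise e_i with mean 0 and variance σ². The expected squared error of the average guess is then:

```latex
g_i = y + b + e_i, \qquad \bar g = \frac{1}{n}\sum_{i=1}^{n} g_i
\quad\Longrightarrow\quad
\mathbb{E}\!\left[(\bar g - y)^2\right] = b^2 + \frac{\sigma^2}{n}.
```

Averaging shrinks the noise term as 1/n but leaves the shared bias b² untouched. A crowd that shares a misconception stays wrong no matter how large it gets.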

IMHO we should not begin collapsing western civilization based upon guesses.

If there is a modal or typical weather for a day of the year, and the average of the students tends towards the mode, then presto chango, you have a prediction that will be correct equal to the frequency with which the mode occurs. Throw in the persistence of weather and who knows. I had a student who tracked weather for several months one fall. Predicting that the weather tomorrow would be the same as today gave very close to the same accuracy as Environment Canada.
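The two no-skill baselines mentioned here are easy to compute. "Persistence" forecasts that tomorrow will be the same as today. "Modal" always forecasts the most common category from a separate historical record. A sketch with hypothetical wet/dry records; the comparison with Environment Canada is not reproduced here.

```python
from collections import Counter


def persistence_accuracy(observed):
    """Fraction of days where 'tomorrow = today' was right (scored on days 2..n)."""
    hits = sum(1 for today, tomorrow in zip(observed, observed[1:]) if today == tomorrow)
    return hits / (len(observed) - 1)


def modal_accuracy(observed, climatology):
    """Always forecast the most common category in a separate climatology record."""
    mode = Counter(climatology).most_common(1)[0][0]
    hits = sum(1 for day in observed[1:] if day == mode)
    return hits / (len(observed) - 1)


# Hypothetical daily records: "wet" or "dry".
climatology = ["wet"] * 6 + ["dry"] * 4  # past autumns: wet is the mode
this_fall = ["wet", "wet", "wet", "dry", "dry", "wet", "wet", "wet", "dry"]
print(persistence_accuracy(this_fall))          # 0.625
print(modal_accuracy(this_fall, climatology))   # 0.625
```

Any forecaster, student or professional, needs to beat numbers like these before their skill means much.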

Averaging ensemble weather model forecasts may make for better overall weather forecasts, but averaging ensemble climate model forecasts has shown no efficacy at improving average climate forecasts, unless you consider an absurd forecast an improvement over an unbelievably absurd forecast.

As for crowd forecasting, the average citizen of the USA ranks ‘climate change/AGW’ as one of the lowest priority issues.

https://www.people-press.org/2019/01/24/publics-2019-priorities-economy-health-care-education-and-security-all-near-top-of-list/

As usual, George Carlin nailed weather forecasting years ago:

https://youtu.be/Z2HpB5CGfLQ

Students and people in general are unique individuals. Even the most similar will still prioritize information differently, process data differently and choose their conclusions differently.

Models are programs.

Inputs are the same.

Priorities are the same.

Processing is the same.

Conclusions differ only because the programs initialize the next module differently, due to random factors included in the programming.

A claim that conflates individuals with identical program logic.

The “Wisdom of Crowds” claim for multiple model runs is false.

ATheoK: As you noted, the article claims: “ensemble forecasts… running models many times, each slightly differently”

That seems odd to me, too.

You said: “… because of random factors included in the programming…”

And that does seem like the only way to get a variety of results from the same model (unless parameters are altered between runs). But that means these climate models are non-deterministic.

“But that means these climate models are non-deterministic.”

Actually, a non-linear chaotic system is 100% deterministic and yet extremely small differences in initial conditions will quickly lead to very different outcomes of the system (see The Butterfly Effect). So all they actually need to do is to make very small changes to the initial conditions and they will end up with very different outcomes.
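Both halves of this point can be checked with the logistic map, a standard toy chaotic system (used here as an illustration, not as a climate model; r = 3.9 and the starting values are arbitrary choices). The same input always gives the same output. A starting value changed only in the 10th decimal place gives a completely different trajectory within a few dozen steps.

```python
def trajectory(x0, steps, r=3.9):
    """Iterate the logistic map x -> r*x*(1-x): a fully deterministic, chaotic toy system."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


a = trajectory(0.2, 150)
b = trajectory(0.2, 150)          # identical start
c = trajectory(0.2 + 1e-10, 150)  # start differs in the 10th decimal place

print(a == b)  # True: same input, same output, every time
# First step at which the nearly identical runs differ by more than 0.1.
first_big_gap = next(i for i, (x, y) in enumerate(zip(a, c)) if abs(x - y) > 0.1)
print(first_big_gap)
```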

However, like ATheoK, I am very doubtful that averaging the output of multiple runs of a chaotic system is the same as averaging the outputs of many unique individuals.

“However, like ATheoK, I am very doubtful that averaging the output of multiple runs of a chaotic system is the same as averaging the outputs of many unique individuals.”

1. Multiple models are used, not just multiple runs of a single model.

2. It’s an established fact that averaging the models and runs provides more skill.

3. Nobody has a good theory about why #2 is true.

4. Only skeptics deny the established fact.

S Mosher, it does not matter how many models you have if all the models share a bias that is wrong, e.g. all the climate models which included CO2 as an important variable will be wrong, as has been shown by numerous articles.

Looking at true historical records and identifying periodic changes will give a much better forecast. I have 126 years of monthly rainfall records close to my place and decades of daily data.

I know that rainfall is seasonal. I also know that after a dry period of some seven years (often an official drought) there is a wet period (often with floods). One should note that dry and hot go together. There is no evidence over those 126 years that rainfall has any relationship to CO2. In fact, in Australia the highest rainfall was at the end of the 19th century and the lowest at the beginning of the 20th century (called the Federation drought).

Nick Stokes tried to convince me the weather predictions are over 90% accurate out to 5 days. I might have bought it but of course it was on a day that we had just had 6” of snow when we were supposed to get rain.

A plug for the IPCC “ensemble” projections! The trouble with ensemble projections done by activist scientists is that the forecasts are virtually all extremely warm by design. What if each forecast added a ‘Le Chatelier Principle factor’? The principle appears to have virtually universal application in physical systems and has even been used by the economist Samuelson in econometric operations. Le Chatelier observed it in chemical reactions, in which the system reacts to counter changes, i.e. it’s as if a given equilibrium condition has some “momentum” and the change is less than expected. From Wiki (to satisfy the farthest-out critics):

https://en.wikipedia.org/wiki/Le_Chatelier%27s_principle

“When a settled system is disturbed, it will adjust to diminish the change that has been made to it..”

Strangely, although chemists are familiar with it, it doesn’t seem to be understood as being applicable to other sciences (Le Chatelier himself apparently thought it only applied to chemical reactions). Things like the ‘back EMF’ of motors and generators, and even Newton’s laws of motion, might be understood as having the effect: ‘If I push against a wall, it pushes back with an equal and opposite force until the initiating force overcomes the strength of the wall.’

Willis’s thermostat or ‘governor’ mechanism for tropical ocean heating can also be seen in this way. Emergent phenomena ‘appear’ to resist the heating of the sea surface. “The more ‘agents’ a system has in its make-up, the greater will be the resistance” (This is Pearse’s axiom (?)). So you see, “it’s the (bare bones) physics” isn’t exactly so in climate science.

Most interesting. However, I’ll point out one thing. Cliff says:

There are two very, very different things that people mean when they say “ensemble forecast”.

One is where you run a model many times with slightly different initializations. This can indeed lead to better forecasts.

The other is where you run many different models once … and that one can easily lead to garbage.

Just saying, we need a bit of clarity in the terminology.

w.

Well, if it happens “virtually EVERY YEAR”, why did he have to go back to 2004 to “illustrate” the effect?

Why not just show last year, or the year before. Hey, it happens “virtually EVERY YEAR”.

What was that thing about the significance of a result diminishing as a function of the number of times you have to look for it happening?

Running multiple models is exactly like the wisdom of crowds.

See hurricane forecasting, FFS.

Simple fact is the multi-model mean has skill. Fact.

Is the skill of the multi model mean better than the skill of a single model from the ensemble?

I ask this because AFAIK the models in such an ensemble are highly correlated. After all they are all based on the same set of physical laws. They only differ in small details (mostly parameterisation schemes) and the numerical method used to solve the resulting differential equations (e.g. spatial vs integration).

Averaging highly correlated models does not reduce the uncertainty of the average much because such an average contains covariance terms which cancel uncertainty reduction in the average.
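In symbols, assuming for illustration n model outputs that each have variance σ² and the same pairwise correlation ρ, the variance of their average is:

```latex
\operatorname{Var}\!\left(\frac{1}{n}\sum_{i=1}^{n} X_i\right)
  = \frac{1}{n^2}\left(\sum_{i=1}^{n}\operatorname{Var}(X_i) + \sum_{i\neq j}\operatorname{Cov}(X_i, X_j)\right)
  = \frac{1}{n^2}\left(n\sigma^2 + n(n-1)\rho\sigma^2\right)
  = \frac{\sigma^2}{n}\bigl(1 + (n-1)\rho\bigr).
```

As n grows this tends to ρσ², not to zero. With ρ = 0.8, averaging any number of such models can cut the variance by at most 20%.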

If you all overweight CO2 and a high ECS for a doubling because that is what the consensus insists on, then you don’t have the benefit of other ideas. You may get a better forecast because averaging damps down outlier forecasts, but a 300% overestimate of warming is not qualitatively a better forecast. It is rather a clarion call to slash the consensus biases in the models’ parameters. A better way would appear to be to use the ensemble as a filter for redoing the models to better match observations. Given the considerably over-warm forecasts, throw away the upper-range 75% of the models and look for ways to flatten the remaining ones down a bit. With uncertainty about feedbacks, put in a “Le Chatelier” negative feedback factor to force them into line with observations.

See comment above: https://wattsupwiththat.com/2019/04/03/the-genius-of-crowd-weather-forecasting/#comment-2671897

What was the scoring algorithm?

How did you reward high or low probabilities when comparing them with actuals?

How does the scoring work on a coin flip or calling a 6 on a six-sided die?
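The post does not say which scoring rule the contest used. A common choice for probability-of-precipitation forecasts is the Brier score: the mean squared difference between the forecast probability and what happened (1 for rain, 0 for no rain), where lower is better. A minimal sketch with made-up forecasts:

```python
def brier_score(probabilities, outcomes):
    """Mean of (p - o)^2, where o is 1 if it rained and 0 if it did not."""
    if len(probabilities) != len(outcomes):
        raise ValueError("need one outcome per forecast")
    return sum((p - o) ** 2 for p, o in zip(probabilities, outcomes)) / len(outcomes)


rained = [1, 0, 1, 1]
print(brier_score([0.5, 0.5, 0.5, 0.5], rained))  # 0.25: the "coin flip" forecast
print(brier_score([0.9, 0.1, 0.7, 0.8], rained))  # confident and mostly right
print(brier_score([0.0, 1.0, 0.0, 0.0], rained))  # 1.0: confident and always wrong
```

A constant 50% forecast scores 0.25 no matter what happens. A confident miss costs up to 1.0, so an extreme forecast is heavily penalized when it turns out wrong.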

Perhaps the official forecast must lean towards the worst-case scenario. If you forecast cloudy skies & light rain and it ends up partly cloudy & dry, nobody cares. If you forecast partly cloudy & dry and it gets cloudy with light rain, you are a know-nothing bum.

… or even get sued for not having provided sufficient warning of an oncoming danger.

Yes, official forecasts do seem to err on the side of caution by giving worst case scenarios.

Bingo. Whatever the official forecasters assess is most likely, their report is frequently adjusted in a way not to inconvenience an unprepared public too much. A crowd of students will have no such comparable motive and so will stick closer to their best estimate on the merits (such as they may be).

Maybe it’s as simple as the fact that NWS is a government agency, and when you work for the government there are little to no repercussions when you get things wrong. Try that in the private sector and see how long you last (unless you’re really good at the heinie-lick maneuver).

It has nothing to do with wisdom, but just indicates that reasonably informed wild-ass guesses are just as good as the highly paid forecasters. That should tell you everything you need to know.

he took at look at ; they took at look at ….

he may have taken; they may have taken.

Damned trickily language, English 😉

And the evidence that weather.com and accuweather are superior forecasts is…?

Yes… Weather.com and accuweather are frequently the worst forecasts for my area. NWS is usually better.

The usual crowd has been at this for thirty years, how well has the ensemble forecast done so far?

300% too hot.

So he cherry-picks two years out of the last 20, presumably those which best support his claim, without reporting the rest. His table of results is meaningless as it is presented.

Does this show that “consensus science” is best, or that the NWS climate model is hopeless (no one would have suspected that)?

How would a “consensus” of random model runs score, given the mean and std dev of previous years for the same month?

Is this supposed to boost our faith in “consensus” science or ensemble means of flaky CMIP models?

Good teaching exercise. I hope it didn’t teach the wrong thing.

Meteorologists and climatologists get paid to warn the public.

The location gave me a chuckle. Picnic forecasts.

On second reading I am not really convinced.

We are averaging the results of the entire class here. If we assume the class is filled with reasonably intelligent people then the secondary assumption is they did some background research on the area. So, with access to historical records, our class may have taken the average max and min based on records and plugged that data into their predictions. So we get guesses, based on averages, that are then averaged and surprise! they match the observed.

Are we really surprised by this? Looking at the average recorded anything gives you the safe middle of the road guess. We already know what this is going to be. Ask anyone who is a mid to long term resident in their area what the weather is likely to be like for any given future month and they are likely to be correct. Summer months will be warm and sunny. Winter, cold and wet. These are safe guesses and give safe results.

Where the system falls over is asking for predictions on the significant deviations. It is stating well in advance that it is going to snow on 17th September, despite the fact that everyone knows it never snows in September and then being completely on the money. It is predicting these significant deviations to the normal that are useful and, on casual inspection at least, I see no evidence that our crowd based weather forecasters are able to do this.
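A standard way to test this objection is a skill score against climatology. Compare a forecaster's mean squared error with that of simply forecasting the long-term average for each date: SS = 1 - MSE_forecast / MSE_climatology. A score near 0 means no better than the averages. Only a forecaster who catches the departures from normal scores well above 0. The numbers below are invented for illustration.

```python
def mse(forecasts, observed):
    return sum((f - o) ** 2 for f, o in zip(forecasts, observed)) / len(observed)


def skill_vs_climatology(forecasts, climatology, observed):
    """1 = perfect, 0 = no better than the long-term average, < 0 = worse."""
    return 1.0 - mse(forecasts, observed) / mse(climatology, observed)


observed = [51, 53, 38, 50]     # day 3 is a surprise cold snap
climatology = [50, 50, 50, 50]  # long-term average high for these dates
crowd = [50, 51, 49, 50]        # hugs the averages
sharp = [52, 52, 40, 49]        # caught the cold snap
print(skill_vs_climatology(crowd, climatology, observed))  # about 0.18
print(skill_vs_climatology(sharp, climatology, observed))  # about 0.95
```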

Also, I am not convinced that the ‘Wisdom of Crowds’ is literally what it claims to be. The idea of a ‘Wise Crowd’ as described by Surowiecki is actually less about a crowd collectively producing a ‘better answer’ and, from my understanding of his claims, simply an extension of the fact that TEAMS produce better work due to the increased skill sets being combined. This ignores the fact that for a team to work they still need direction and are usually brought together for a defined objective.

The atom was not split by quizzing people each morning when they went to the subway. Asking 100 people who know 1% about a topic does not mean you now know 100% of the problem; it means you get 90 people telling you that atoms are really small and maybe, if you are lucky, the last 10 telling you that there are ‘protons and electrons and stuff’.

Crowds are random people. They know random things and interact only in the sense they stand in the same area. Unless you collect experts and manage them with a clear objective you are not going to get amazing results and if you collect and manage then you no longer have a crowd, you have a team.

So, in summary, I am not convinced.

Hmmm. Predicting seasonal temperatures and precipitation with a known range that is fairly tight (because it is seasonal) seems an exercise in “what’s the point”? Warmers will guess on the warm side of the range. Skeptics will guess on the cooler side of the range. Wisdom will guess in the middle of the range with slight adjustments. All guesses are likely within the range. I don’t think much can be determined here with any significance.

Pamela – Cold outbreaks are slightly colder and more frequent in the storm tracks because warming has shifted the wave pattern. Noticeable since 2011 if you look at the 700mb flow..