Guest Post by Willis Eschenbach

I’m 74, and I’ve been programming computers nearly as long as anyone alive.

When I was 15, I’d been reading about computers in pulp science fiction magazines like Amazing Stories, Analog, and Galaxy for a while. I wanted one so badly. Why? I figured it could do my homework for me. Hey, I was 15, wad’ja expect?

I was always into math, it came easy to me. In 1963, the summer after my junior year in high school, nearly sixty years ago now, I was one of the kids selected from all over the US to participate in the National Science Foundation summer school in mathematics. It was held up in Corvallis, Oregon, at Oregon State University.

It was a wonderful time. I got to study math with a bunch of kids my age who were as excited as I was about math. Bizarrely, one of the other students turned out to be a second cousin of mine I’d never even heard of. Seems math runs in the family. My older brother is a genius mathematician, inventor of the first civilian version of the GPS. What a curious world.

The best news about the summer school was, in addition to the math classes, marvel of marvels, they taught us about computers … and they had a real live one that we could write programs for!

They started out by having us design and build logic circuits using wires, relays, the real-world stuff. They were for things like AND gates, OR gates, and flip-flops. Great fun!

Then they introduced us to Algol. Algol is a long-dead computer language, designed in 1958, but it was a standard for a long time. It was very similar to Fortran, but an improvement on it in that it used less memory.

Once we had learned something about Algol, they took us to see the computer. It was a huge old CDC 3300, standing about as high as a person’s chest and taking up a good chunk of a small room. The back of it looked like this.

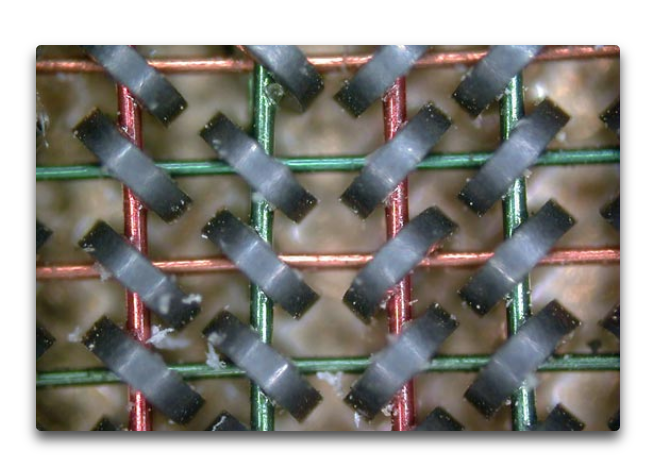

It had a memory composed of small ring-shaped magnets with wires running through them, like the photo below. The computer energized a combination of the wires to “flip” the magnetic state of each of the small rings. This allowed each small ring to represent a binary 1 or a 0.

How much memory did it have? A whacking great 768 kilobytes. Not gigabytes. Not megabytes. Kilobytes. That’s about one ten-thousandth of the memory of the ten-year-old Mac I’m writing this on.

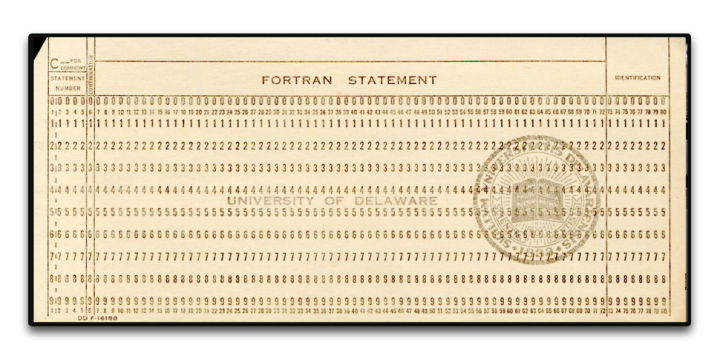

It was programmed using Hollerith punch cards. They didn’t let us anywhere near the actual computer, of course. We sat at the card punch machines and typed in our program. Here’s a punch card, 7 3/8 inches wide by 3 1/4 inches high by 0.007 inches thick (187 x 83 x 0.18 mm).

The program would end up as a stack of cards with holes punched in them, usually 25-50 cards or so. I’d give my stack to the instructors, and a couple of days later I’d get a note saying “Problem on card 11”. So I’d rewrite card 11, resubmit the stack, and get a note saying “Problem on card 19” … debugging a program written on punch cards was a slooow process, I can assure you.

And I loved it. It was amazing. My first program was the “Sieve of Eratosthenes”, and I was over the moon when it finally compiled and ran. I was well and truly hooked, and I never looked back.
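For anyone who hasn't met it, the sieve writes out the numbers from 2 up to some limit and crosses out the multiples of each prime in turn; whatever survives is prime. The original Algol deck isn't reproduced here, so this is only a minimal modern sketch of the same algorithm in Python (the function name `sieve` is illustrative):

```python
def sieve(limit: int) -> list[int]:
    """Return all primes <= limit using the Sieve of Eratosthenes."""
    if limit < 2:
        return []
    # Every number starts out marked "prime"; composites get crossed out.
    is_prime = [True] * (limit + 1)
    is_prime[0] = is_prime[1] = False
    p = 2
    while p * p <= limit:
        if is_prime[p]:
            # Start at p*p: smaller multiples were already crossed out by smaller primes.
            for multiple in range(p * p, limit + 1, p):
                is_prime[multiple] = False
        p += 1
    return [n for n, flag in enumerate(is_prime) if flag]

print(sieve(30))  # [2, 3, 5, 7, 11, 13, 17, 19, 23, 29]
```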

The rest of that summer I worked as a bicycle messenger in San Francisco, riding a one-speed bike up and down the hills delivering blueprints. I gave all the money I made to our mom to help support the family. But I couldn’t get the computer out of my mind.

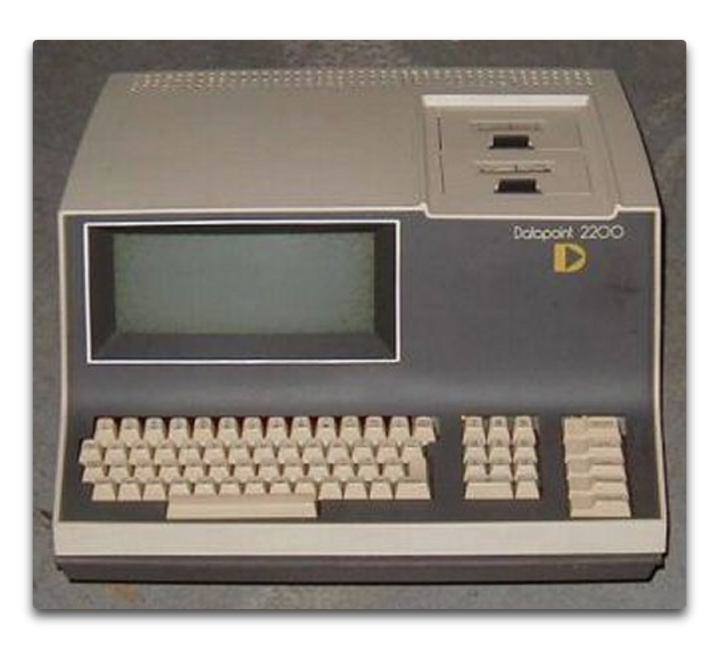

Ten years later, after graduating from high school and then dropping out of college after one year, I went back to college specifically so I could study computers. I enrolled in Laney College in Oakland. It was a great school, about 80% black, 10% Hispanic, and the rest a mixed bag of melanin-deficient folks. (I’m told that nowadays the politically-correct term is “melanin-challenged”, to avoid offending anyone.) The Laney College Computer Department had a Datapoint 2200 computer, the first desktop computer.

It had only 8 kilobytes of memory … but the advantage was that you could program it directly. The disadvantage was that only one student could work on it at any time. However, the computer teacher saw my love of the machine, so he gave me a key to the computer room so I could come in before or after hours and program to my heart’s content. I spent every spare hour there. It used a language called Databus, my second computer language.

The first program I wrote for this computer? You’ll laugh. It was a test to see if there was “precognition”. You know, seeing the future. In my first version, I punched a key from 0 to 9. Then the computer picked a random number, and recorded if I was right or not.

Finding I didn’t have precognition, I re-wrote the program. In version 2, the computer picked the number before, rather than after, I made the selection. No precognition needed. Guess what?

No better than random chance. And sadly, that one-semester course was all that Laney College offered. That’s the extent of my formal computer education. The rest I taught myself, year after year, language after language, concept after concept, program after program.
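Scoring a test like that comes down to comparing the hit rate with the one-in-ten rate that pure guessing gives. The original Databus program isn't in the post, so here is a hypothetical Python sketch (the names `run_trials` and `p_at_least` are made up for illustration) that simulates a guesser with no precognition and computes how likely a given score is by luck alone:

```python
import math
import random

def run_trials(n_trials: int, seed: int = 0) -> int:
    """Count hits when a guessed digit 0-9 is compared with a random target digit.

    The guesses are random too, standing in (hypothetically) for a person
    with no precognition, so the chance of a hit on each trial is 1/10.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_trials):
        guess = rng.randint(0, 9)    # the keypress
        target = rng.randint(0, 9)   # the computer's pick
        if guess == target:
            hits += 1
    return hits

def p_at_least(k: int, n: int, p: float = 0.1) -> float:
    """Binomial tail: probability of k or more hits in n trials by chance alone."""
    return sum(math.comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

hits = run_trials(200)
print(f"{hits} hits in 200 tries (about 20 expected by chance)")
print(f"chance of doing at least that well by luck: {p_at_least(hits, 200):.3f}")
```

A score only starts to look interesting when `p_at_least` comes out very small; a result near the expected 20 hits is what "no better than random chance" looks like.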

Ten years after that, I bought the first computer I ever owned, the Radio Shack TRS-80, AKA the “Trash Eighty”. It was the first notebook-style computer. I took that sucker all over the world. I wrote endless programs on it, including marine celestial navigation programs that I used to navigate by the stars between islands in the South Pacific. It was also my first introduction to Basic, my third computer language.

And by then IBM had released the IBM PC, the first personal computer. When I returned to the US I bought one. I learned my fourth computer language, CP/M. I wrote all kinds of programs for it. But then a couple of years later Apple came out with the Macintosh. I bought one of those as well, because of the mouse and the art and music programs. I figured I’d use the Mac for creating my art and my music and such, and the PC for serious work.

But after a year or so, I found I was using nothing but the Mac, and there was a quarter-inch of dust on my IBM PC. So I traded the PC for a piano, the very piano here in our house that I played last night for my 19-month-old granddaughter, and I never looked back at the IBM side of computing.

I taught myself C and C++ when I needed speed to run blackjack simulations … see, I’d learned to play professional blackjack along the way, counting cards. And when my player friends told me how much it cost for them to test their new betting and counting systems, I wrote a blackjack simulation program to test the new ideas. You need to run about a hundred thousand hands for a solid result. That took several days in Basic, but in C, I’d start the run at night, and when I got up the next morning, the run would be done. I charged $100 per test, and I thought “This is what I wanted a computer for … to make me a hundred bucks a night while I’m asleep.”
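The "hundred thousand hands" rule of thumb follows from how the uncertainty of a simulated average shrinks: the standard error is the per-hand standard deviation divided by the square root of the number of hands, so quadrupling the hands only halves the error. A minimal sketch, assuming independent hands and a per-hand standard deviation of about 1.15 betting units (a commonly quoted ballpark; the post itself gives no figure):

```python
import math

# ASSUMPTION: ~1.15 betting units is a commonly quoted ballpark for the per-hand
# standard deviation in blackjack; treat it as illustrative, not measured.
PER_HAND_SD = 1.15

def standard_error(per_hand_sd: float, n_hands: int) -> float:
    """Standard error of the average result per hand over n_hands independent hands."""
    return per_hand_sd / math.sqrt(n_hands)

for n in (1_000, 10_000, 100_000):
    se = standard_error(PER_HAND_SD, n)
    print(f"{n:>7,} hands: +/- {se:.4f} units per hand ({se:.2%} of a bet)")
```

Under those assumptions, 100,000 hands pins the average result down to roughly a third of a percent of a bet per hand, the kind of resolution needed when the edges being compared are small.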

Since then, I’ve never been without a computer. I’ve written literally thousands and thousands of programs. On my current computer, a ten-year-old MacBook Pro, a quick check shows that there are well over 4,000 programs I’ve written. I’ve written programs in Algol, Databus, 68000 Machine Language, Basic, C/C++, HyperTalk, Forth, Logo, Lisp, Mathematica (3 languages), Vectorscript, Pascal, VBA, Stella computer modeling language, and these days, R.

I had the immense good fortune to be directed to R by Steve McIntyre of ClimateAudit. It’s the best language I’ve ever used—free, cross-platform, fast, with a killer user interface and free “packages” to do just about anything you can name. If you do any serious programming, I can’t recommend it enough.

Oh, yeah, somewhere in there I spent a year as the Service Manager for an Apple Dealership. As you might guess given my checkered history, it wasn’t in some logical location … it was in downtown Suva, in Fiji. There I fixed a lot of computers and I learned immense patience dealing with good folks who truly thought that the CD tray that came out of the front of their computer when they did something by accident was a coffee cup holder … oh, and I also installed the Macintosh hardware for the Fiji Government Printers and trained the employees how to use Photoshop. I also taught two semesters of Computers 101 at the Fiji Institute of Technology.

I bring all of this up to let you know that I’m far, far from being a novice, a beginner, or even a journeyman programmer. I was working with “computer based evolution” to try to analyze the stock market before most folks even heard of it. I’m a master of the art, able to do things like write “hooks” into Excel that let Excel transparently call a separate program in C for its wicked-fast speed, and then return the answer to a cell in Excel …

Now, folks who’ve read my work know that I am far from enamored of computer climate models. I’ve been asked “What do you have against computer models?” and “How can you not trust models, we use them for everything?”

Well, based on a lifetime’s experience in the field, I can assure you of a few things about computer climate models and computer models in general. Here’s the short course.

• A computer model is nothing more than a physical realization of the beliefs, understandings, wrong ideas, and misunderstandings of whoever wrote the model. Therefore, the results it produces are going to support, bear out, and instantiate the programmer’s beliefs, understandings, wrong ideas, and misunderstandings. All that the computer does is make those under- and misunder-standings look official and reasonable. Oh, and make mistakes really, really fast. Been there, done that.

• Computer climate models are members of a particular class of models called “Iterative” computer models. In this class of models, the output of one timestep is fed back into the computer as the input of the next timestep. Members of this class of models are notoriously cranky, unstable, and prone to internal oscillations and generally falling off the perch. They usually need to be artificially “fenced in” in some sense to keep them from spiraling out of control.

• As anyone who has ever tried to model, say, the stock market can tell you, a model which can reproduce the past absolutely flawlessly may, and in fact very likely will, give totally incorrect predictions of the future. Been there, done that too. As the brokerage advertisements in the US are required to say, “Past performance is no guarantee of future success”.

• This means that the fact that a climate model can hindcast the past climate perfectly does NOT mean that it is an accurate representation of reality. And in particular, it does NOT mean it can accurately predict the future.

• Chaotic systems like weather and climate are notoriously difficult to model, even in the short term. That’s why projections of a cyclone’s future path over, say, the next 48 hours are in the shape of a cone and not a straight line.

• There is an entire branch of computer science called “V&V”, which stands for validation and verification. It’s how you can be assured that your software is up to the task it was designed for. Here’s a description from the web:

What is software verification and validation (V&V)?

Verification

820.3(a) Verification means confirmation by examination and provision of objective evidence that specified requirements have been fulfilled.

“Documented procedures, performed in the user environment, for obtaining, recording, and interpreting the results required to establish that predetermined specifications have been met” (AAMI).

Validation

820.3(z) Validation means confirmation by examination and provision of objective evidence that the particular requirements for a specific intended use can be consistently fulfilled.

Process Validation means establishing by objective evidence that a process consistently produces a result or product meeting its predetermined specifications.

Design Validation means establishing by objective evidence that device specifications conform with user needs and intended use(s).

“Documented procedure for obtaining, recording, and interpreting the results required to establish that a process will consistently yield product complying with predetermined specifications” (AAMI).


• Your average elevator control software has been subjected to more V&V than the computer climate models. And unless a computer model’s software has been subjected to extensive and rigorous V&V, the fact that the model says that something happens in modelworld is NOT evidence that it actually happens in the real world … and even then, as they say, “Excrement occurs”. We lost a Mars probe because someone didn’t convert a single number from Imperial to metric measurements … and you can bet that JPL subjects their programs to extensive and rigorous V&V.

• Computer modelers, myself included at times, are all subject to a nearly irresistible desire to mistake Modelworld for the real world. They say things like “We’ve determined that climate phenomenon X is caused by forcing Y”. But a true statement would be “We’ve determined that in our model, the modeled climate phenomenon X is caused by our modeled forcing Y”. Unfortunately, the modelers are not the only ones fooled in this process.

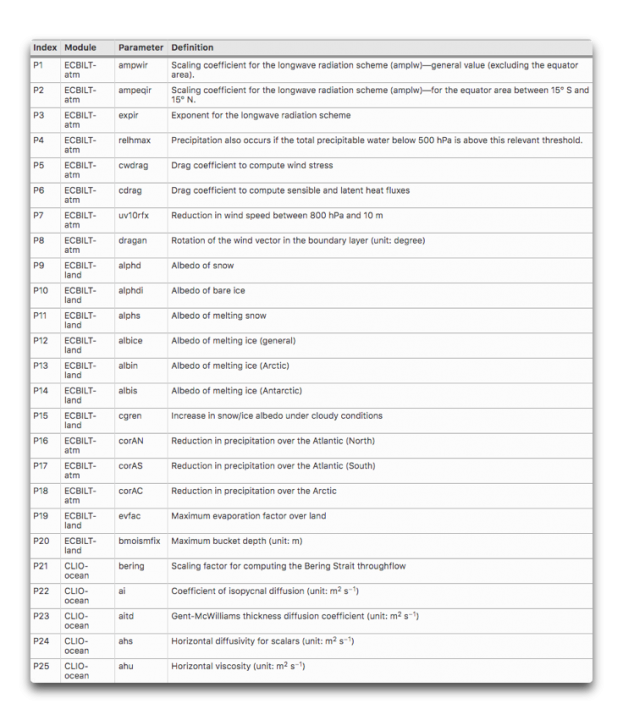

• The more tunable parameters a model has, the less likely it is to accurately represent reality. Climate models have dozens of tunable parameters. Here are 25 of them; there are plenty more.

What’s wrong with parameters in a model? Here’s an oft-repeated story about the famous physicist Freeman Dyson getting schooled on the subject by the even more famous Enrico Fermi …

By the spring of 1953, after heroic efforts, we had plotted theoretical graphs of meson–proton scattering. We joyfully observed that our calculated numbers agreed pretty well with Fermi’s measured numbers. So I made an appointment to meet with Fermi and show him our results. Proudly, I rode the Greyhound bus from Ithaca to Chicago with a package of our theoretical graphs to show to Fermi.

When I arrived in Fermi’s office, I handed the graphs to Fermi, but he hardly glanced at them. He invited me to sit down, and asked me in a friendly way about the health of my wife and our new-born baby son, now fifty years old. Then he delivered his verdict in a quiet, even voice. “There are two ways of doing calculations in theoretical physics”, he said. “One way, and this is the way I prefer, is to have a clear physical picture of the process that you are calculating. The other way is to have a precise and self-consistent mathematical formalism. You have neither.”

I was slightly stunned, but ventured to ask him why he did not consider the pseudoscalar meson theory to be a self-consistent mathematical formalism. He replied, “Quantum electrodynamics is a good theory because the forces are weak, and when the formalism is ambiguous we have a clear physical picture to guide us. With the pseudoscalar meson theory there is no physical picture, and the forces are so strong that nothing converges. To reach your calculated results, you had to introduce arbitrary cut-off procedures that are not based either on solid physics or on solid mathematics.”

In desperation I asked Fermi whether he was not impressed by the agreement between our calculated numbers and his measured numbers. He replied, “How many arbitrary parameters did you use for your calculations?” I thought for a moment about our cut-off procedures and said, “Four.” He said, “I remember my friend Johnny von Neumann used to say, with four parameters I can fit an elephant, and with five I can make him wiggle his trunk.” With that, the conversation was over. I thanked Fermi for his time and trouble, and sadly took the next bus back to Ithaca to tell the bad news to the students.

• The climate is arguably the most complex system that humans have tried to model. It has no less than six major subsystems—the ocean, atmosphere, lithosphere, cryosphere, biosphere, and electrosphere. None of these subsystems is well understood on its own, and we have only spotty, gap-filled rough measurements of each of them. Each of them has its own internal cycles, mechanisms, phenomena, resonances, and feedbacks. Each one of the subsystems interacts with every one of the others. There are important phenomena occurring at all time scales from nanoseconds to millions of years, and at all spatial scales from nanometers to planet-wide. Finally, there are both internal and external forcings of unknown extent and effect. For example, how does the solar wind affect the biosphere? Not only that, but we’ve only been at the project for a few decades. Our models are … well … to be generous I’d call them Tinkertoy representations of real-world complexity.

• Many runs of climate models end up on the cutting room floor because they don’t agree with the aforesaid programmer’s beliefs, understandings, wrong ideas, and misunderstandings. Modelers will only show us the results of the model runs that they agree with, not the results from the runs where the model either went off the rails or simply gave an inconvenient result. Here are two thousand runs from 414 versions of a model running first a control and then a doubled-CO2 simulation. You can see that many of the results go way out of bounds.
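The points above about iterative models, and about cyclone forecasts spreading into a cone, both come down to sensitive dependence on starting conditions. The logistic map is a standard one-line textbook toy, certainly not a climate model, but it shows the mechanism: each step's output is the next step's input, and with r = 4 (a value at which this map is chaotic) a starting difference of one part in ten billion grows until the two runs have nothing in common.

```python
def logistic_run(x0: float, r: float = 4.0, steps: int = 60) -> list[float]:
    """Iterate x[n+1] = r * x[n] * (1 - x[n]); every output is fed back as the next input."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

run_a = logistic_run(0.2)
run_b = logistic_run(0.2 + 1e-10)  # identical "model", starting value nudged by 1e-10

for n in range(0, 61, 10):
    print(f"step {n:2d}: difference = {abs(run_a[n] - run_b[n]):.2e}")
```

For this map the gap roughly doubles per step on average, so after a few dozen steps the two runs have fully diverged even though the equations are identical.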

As a result of all of these considerations, anyone who thinks that the climate models can “prove” or “establish” or “verify” something that happened five hundred years ago or a hundred years from now is living in a fool’s paradise. These models are in no way up to that task. They may offer us insights, or make us consider new ideas, but they can only “prove” things about what happens in modelworld, not the real world.

Let me be clear: having written dozens of models myself, I’m not against models. I’ve written and used them my whole life. However, there are models, and then there are models. Some models have been tested and subjected to extensive V&V, and their output has been compared to the real world and found to be very accurate. So we use them to navigate interplanetary probes and design new aircraft wings and the like.

Climate models, sadly, are not in that class of models. Heck, if they were, we’d only need one of them, instead of the dozens that exist today and that all give us different answers … leading to the ultimate in modeler hubris, the idea that averaging those dozens of models will get rid of the “noise” and leave only solid results behind.
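Averaging cancels errors that are independent from one ensemble member to the next, but not errors the members share. A toy sketch, assuming (purely for illustration) that each hypothetical member equals the true value plus one bias common to all members plus its own independent random noise; the numbers are made up:

```python
import random
import statistics

def ensemble_mean_error(n_members: int, shared_bias: float, noise_sd: float,
                        seed: int = 0) -> float:
    """Error of the ensemble average when members share one bias plus independent noise."""
    rng = random.Random(seed)
    truth = 1.0  # hypothetical true value the members are trying to estimate
    members = [truth + shared_bias + rng.gauss(0.0, noise_sd) for _ in range(n_members)]
    return statistics.fmean(members) - truth

for n in (1, 10, 100, 10_000):
    err = ensemble_mean_error(n, shared_bias=0.5, noise_sd=1.0)
    print(f"{n:>6} members: error of the average = {err:+.3f}")
```

As the ensemble grows, the error settles toward the shared bias of 0.5 rather than toward zero. Real model ensembles don't split this neatly into bias and noise; the sketch only shows that averaging removes the scatter, not the error the members have in common.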

Finally, as a lifelong computer programmer, I couldn’t disagree more with the claim that “All models are wrong but some are useful.” Consider the CFD models that the Boeing engineers use to design wings on jumbo jets or the models that run our elevators. Are you going to tell me with a straight face that those models are wrong? If you truly believed that, you’d never fly or get on an elevator again. Sure, they’re not exact reproductions of reality, that’s what “model” means … but they are right enough to be depended on in life-and-death situations.

Now, let me be clear on this question. While models that are right are absolutely useful, it certainly is also possible for a model that is wrong to be useful.

But for a model that is wrong to be useful, we absolutely need to understand WHY it is wrong. Once we know where it went wrong we can fix the mistake. But with the complex iterative climate models with dozens of parameters required, where the output of one cycle is used as the input to the next cycle, and where a hundred-year run with a half-hour timestep involves 1.75 million steps, determining where a climate model went off the track is nearly impossible. Was it an error in the parameter that specifies the ice temperature at 10,000 feet elevation? Was it an error in the parameter that limits the formation of melt ponds on sea ice to only certain months? There’s no way to tell, so there’s no way to learn from our mistakes.
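The 1.75 million figure is easy to check, assuming an average year of 365.25 days and a fixed half-hour step:

```python
def timestep_count(years: float, step_minutes: float = 30.0) -> float:
    """Number of fixed-length timesteps needed to cover `years` of model time."""
    minutes_per_year = 365.25 * 24 * 60  # average year including leap days
    return years * minutes_per_year / step_minutes

print(f"{timestep_count(100):,.0f} steps")  # 1,753,200 steps
```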

Next, all of these models are “tuned” to represent the past slow warming trend. And generally, they do it well … because the various parameters have been adjusted and the model changed over time until they do so. So it’s not a surprise that they can do well at that job … at least on the parts of the past that they’ve been tuned to reproduce.
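Fermi's warning and the point about tuning to the past can be illustrated with a toy curve fit. Assume (purely for illustration) a made-up "past" of ten points: a steady trend plus a small alternating wiggle. A polynomial with ten adjustable coefficients passes through every point exactly, a perfect hindcast, while a two-parameter straight line matches only approximately. Extrapolate both five steps beyond the data:

```python
def lagrange_eval(xs: list[float], ys: list[float], x: float) -> float:
    """Evaluate the unique degree-(n-1) polynomial passing through all n points (xs, ys)."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        term = yi
        for j, xj in enumerate(xs):
            if j != i:
                term *= (x - xj) / (xi - xj)
        total += term
    return total

def line_fit(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares straight line; returns (intercept, slope)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return my - slope * mx, slope

def truth(t: int) -> float:
    """Hypothetical 'real world': a steady trend plus a small alternating wiggle."""
    return 0.1 * t + 0.05 * (-1) ** t

past = list(range(10))                  # ten "observed" steps, t = 0..9
obs = [truth(t) for t in past]
a, b = line_fit(past, obs)              # 2 tuned parameters

hindcast_poly = max(abs(lagrange_eval(past, obs, t) - truth(t)) for t in past)
hindcast_line = max(abs(a + b * t - truth(t)) for t in past)
future = 15
forecast_poly = abs(lagrange_eval(past, obs, future) - truth(future))
forecast_line = abs(a + b * future - truth(future))

print(f"10-parameter fit: hindcast error {hindcast_poly:.3f}, forecast error {forecast_poly:,.1f}")
print(f" 2-parameter fit: hindcast error {hindcast_line:.3f}, forecast error {forecast_line:.3f}")
```

The ten-parameter fit reproduces the past perfectly and misses the future by tens of thousands; the cruder line misses the past slightly and the future only slightly. This is a generic overfitting example, not a claim about how any particular climate model is built or tuned.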

But then, the modelers will pull out the modeled “anthropogenic forcings” like CO2, and proudly proclaim that since the model can no longer reproduce the past gradual warming, that demonstrates that the anthropogenic forcings are the cause of the warming … I assume you can see the problem with that claim.

In addition, the grid cells of the computer models are far larger than important climate phenomena like thunderstorms, dust devils, and tornadoes. If the climate model is wrong, is it because it doesn’t contain those phenomena? I say yes … computer climate modelers say nothing.

Heck, we don’t even know if the Navier-Stokes fluid dynamics equations as they are used in the climate models converge to the right answer, and near as I can tell, there’s no way to determine that.
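For numerical schemes in general, the standard verification check is a refinement study against a problem whose exact answer is known: shrink the step and confirm the error falls at the rate the method's theory predicts. The sketch below does this for a deliberately trivial case, assuming the decay equation dy/dt = -y and the forward Euler method. It is not a test of any climate model; the difficulty raised in the paragraph above is that, outside special cases, the full fluid equations offer no exact answer to compare against.

```python
import math

def euler_decay(n_steps: int, t_end: float = 1.0) -> float:
    """Integrate dy/dt = -y from y(0) = 1 to t_end using forward Euler with n_steps steps."""
    dt = t_end / n_steps
    y = 1.0
    for _ in range(n_steps):
        y += dt * (-y)
    return y

exact = math.exp(-1.0)  # known analytic answer at t = 1
step_counts = [10, 20, 40, 80, 160]
errors = [abs(euler_decay(n) - exact) for n in step_counts]

# Halving the step should roughly halve the error for a first-order method (observed order ~1).
for n, coarse, fine in zip(step_counts[1:], errors, errors[1:]):
    print(f"{n:4d} steps: error {fine:.2e}, observed order {math.log2(coarse / fine):.3f}")
```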

To close the circle, let me return to where I started—a computer model is nothing more than my ideas made solid. That’s it. That’s all.

So if I think CO2 is the secret control knob for the global temperature, the output of any model I create will reflect and verify that assumption.

But if I think (as I do) that the temperature is kept within narrow bounds by emergent phenomena, then the output of my new model will reflect and verify that assumption.

Now, would the outputs of either of those very different models be “evidence” about the real world?

Not on this planet.

And that is the short list of things that are wrong with computer models … there’s more, but as Pierre de Fermat said, “the margins of this page are too small to contain them” …

My very best to everyone, stay safe in these curious times,

w.

PS—When you comment, quote what you’re talking about. If you don’t, misunderstandings multiply.

H/T to Wim Röst for suggesting I write up what started as a comment on my last post.

A comment for Brian Jackson, who for some reason has an antipathy to me and is doing his best to tear me down. He claims I’m boasting about my computer skills. Not the case.

In fact, there are plenty of people who can program rings around me. They usually have PhDs in Computer Something, like, say, Gavin Schmidt.

Me, I’m self-taught in programming. I learned all of my computer languages but the first two by the RTFM method. In fact, I’m self-taught in all of science—college Intro to Physics and Intro to Chemistry are as far as I ever went.

As a result, when I propose some scientific idea or make some scientific claim, far too many people like Brian attack my credentials rather than attacking my ideas. Note that he hasn’t said one word about the subject of the post, the problems with computer models.

So for this post, I figured I’d see if I could short-circuit some of those attacks on my obvious lack of any scientific credentials by describing how I mastered computer programming—through unending personal effort.

Still, that’s not enough for Brian Jackson. I well remember using CP/M to do all kinds of things on my IBM PC, so I called it a “computer language”, which I take to be any way to communicate with a computer. Sadly Brian views this as some kind of indicator that I’m truly clueless, and not what he loudly proclaims that he is, a “real” computer programmer.

Call it what you like, Brian, a language or an operating system. I’m used to folks like you who think that ad hominem attacks and nit-picking about semantics and meaningless details are a valuable substitute for actually discussing the ideas I present.

Pass … go convince someone else that you’re a “real” computer programmer, Brian. Here, you’ve already convinced most people that you are a real pric … pri … prince.

w

Take heart, Willis. I’ve seen Brian’s type, probably a “young” 30-something with a Master’s in something, who thinks that because he learned some climate stuff from a few books and from professors who acted like gods, he knows all there is to know. One thing I learned going from Master’s to PhD work: at the Master’s level you take what the books say on faith and replicate some well-proven fact in your thesis to graduate, but at the PhD level you try to fully replicate it and then go on to a new level or direction yourself, and you realize the first guys were either incredibly lucky or liars. Getting a PhD in a hard lab science at a real institution with a real thesis committee is a humbling experience: you realize how much of what is in college textbooks, and especially in peer-reviewed papers, is likely wrong, and how many rabbit holes you go down trying to figure out the “right” answers.

As such, I know Brian. He’s like the “Karens” of social media, all huff and puff and outrage. But Brian and his arguments wouldn’t last 3 minutes in a debate with an informed skeptic on climate change. PhD or not. It’s why PhD Gavin Schmidt runs from the PhD “Roy Spencers.” Just like Dementia Biden, they’re in hid’in. They know a live Q&A with an informed questioner will show the world they “have no clothes on.”

Sometimes it’s easier to pull someone down rather than climb to their level.

Willis,

Do what I do and just ignore Brian Jackass.

Thanks, Paul. I’m to that point. Up until now, I figured there was a chance he was convincing some of the lurkers. At this point, he’s just taking a shotgun to his own toes …

w.

Willis, as my dad used to say: “Just consider the source. It ain’t worth worrying about.”

Ric Werme’s Three Rules for dealing with trolls:

1) Don’t reply right away.

Trolls thrive on acknowledgement. The content doesn’t matter, the attention does. The quicker you reply, the stronger the reward.

2) Don’t reply unless you are adding to the discussion.

I hate the he said, she said, he said again, she said again nature of trolling.

By the time repetition sets in, other readers (if there are any left) realize it’s trolling.

See point 1).

3) Let the troll have the last word.

He will take it anyway.

See point 1).

Science is all about nit-picking semantics and details. That is why you are not a scientist. You are an amateur trying to play with the big boys. I don’t have to convince anybody about anything, but I will call you out on your BS and poke at that inflated ego of yours. Your misuse of the term “computer language” makes computer professionals laugh at you. (ha ha ha ha)


“I mastered computer programming.” No, you have not. You are an amateur. You attack computer models because of your ignorance. Climate models are all wrong, and they are skillful. You miss the fact that they are skillful. I challenge you, as an amateur hack, to get the source code of a modern climate model and use your script-kiddie skills to IMPROVE it. That is what a real scientist would do, instead of wasting your time impressing the dweebs that inhabit this blog. The uninformed may praise you, and their feedback inflates your enormous ego, but in the end, you have contributed nothing at all to science.


1) Science is not about nit picking things that have absolutely nothing to do with the subject at hand. Your comments are the logical equivalent of declaring that someone can’t be a scientist because they used “their” when they should have used “they’re”. The only thing you are proving is that you yourself know nothing about science.

2) The only one displaying an inflated ego is you Brian. You just can’t accept that nobody here takes you as seriously as you take yourself.

3) Despite your repeated claims, there is not a single computer professional on this site who is laughing at Willis. On the other hand, quite a few of us have demonstrated how full of sh1t you are.

4) Because he disagrees with you regarding the dividing line between OS and language, therefore he can’t have mastered computer programming? Once again Brian demonstrates that he considers himself to be the standard against which all others must be measured. In other words, Brian’s enormous ego is getting in the way of actually thinking for himself.

5) Willis attacks computer models because they demonstrably are not skillful and all fail even the most basic of tests.

6) To be a “scientist” Willis has to volunteer to help others fix their broken code? What makes you think any of the programmers want any help from outsiders?

7) Proving existing theories wrong does nothing to advance science? Really? And to think you consider yourself to be an expert on “science”.

8) Once again Brian demonstrates his stellar-sized ego by declaring that anyone who doesn’t agree with him is an ignorant amateur.

9) BTW, I notice that once again, you don’t actually refute anything Willis wrote, you just declare that since you disagree with him, he must be wrong. That’s not exactly a scientific attitude.

“‘I mastered computer programming.’ No, you have not. You are an amateur. You attack computer models because of your ignorance.”

Now I think I’m starting to get why Brian goes so hard after Willis. Willis is bursting Brian’s climate alarmist bubble with his excellent posts.

Delighted. I had to evaluate the Datapoint for the National Provident Fund I was tasked with bringing into existence. I met the programming team of the Systems Corp, based then in Hawaii, who created the elegant Databus. They came out of the payroll team in the Vietnam War.

We didn’t use them in the end, as the Govt of American Samoa allowed us graveyard-shift time on their IBM System/3, a 96-column punched-card input machine with tape drives and 29Mb hard disk units.

Worked with numerous programming languages, including Wang 2200T Basic (48Kb ROM, which even allowed access to the 8″ disk functions).

Learnt to program on a Monroe 1620 calculator which had a portapunch. Unfortunately the holes could drop out of the program cards which made fixing the life table programs impossible.

In 1976 I worked on the design and build of a Z80-based, 4 MHz, 64Kb RAM, S-Bus machine with screen, plotter, teletype, and 8″ FDD running the CP/M 1 OS. That was Zilab 1. It was to run house-plan drafting software. It originally ran extended Basic, but that morphed into a variety of the HP9000 calculator Basic in which the original software was developed.

I much appreciate your common sense and work. I’m a fresh 81. Have looked at R, much tempted but I’m more verbal these days. Interesting too, how musicians pop up so frequently among us numeric types. My youngest daughter, 19, has just started in the vocal school at Uni.

FREEBIE: Anyone interested can get a free copy of my Bio, “My First 80 Years”, which gives a much enlarged version of my historical entanglement with computing. Just go to

lambtonpublishing.com/contact

and give me the address to send it to.

Happiness and blessings to all.

Kev.

Hi Willis, thanks for reminiscing about the past and your computer experiences… All very interesting, and now documented for future generations. I am the same age but from a different field, having focused on a very wide range of science and knowledge. How, in fact, the input into computers is carried out in detail is irrelevant to me. That is nothing but pure skill (not bad if someone can do it), not real creative thinking… You admit that models are only the preconceptions of the model authors, who put unproven ideas into models, which makes them “official science”.

That was the introduction. Now to the point: for models, the input variables need to be complete. If one major climate forcing is missing, ignored, unknown, or excluded, then models cannot reproduce reality and do not withstand the test of accuracy.

Concerning climate modelling: the most important climate forcing is “orbital forcing”. Not the long-term processes such as obliquity and precession; those two are always pulled out of the basement when the question of orbital forcing arises. Orbital forcing, which is active every couple of weeks, consists of orbital osculation, perturbation, and oscillation. The orbit is subject to Gauss’ Perturbing Equations, which are also used to calculate practical osculating satellite motions.

And this osculation of the Earth’s orbit was, and is, left out of climate models, including all your climate analyses.

Therefore, the foremost question for computer programming is: are all the major variables present?

You have given no answer to this question. You refuse to read my papers, because whatever you do not know must, for you, be wrong from the start. You know what I mean.

By June this year I will have completed the paper, presenting empirical meteorological evidence from all the continents and oceans that shows how orbital forcing, without any modelling, impacts our daily weather and the evolution of the climate.

As I say, tinkering at keyboards does not get us closer to understanding the climate. The proof is the divergence of the CMIP6 climate models and their differing values of ECS, which are incorrect because one major climate forcing was, knowingly or unknowingly, ignored.

In any physical system that man tries to understand, there are known unknowns and unknown unknowns. Climate modeling is no different.

Men trying to understand women exhibits and proves this point pretty well.

Joachim, you say:

Since I don’t do “climate analyses” based on climate models, I have no idea what this means.

Nonsense. I said in this very post that I think major variables, to wit emergent climate phenomena, are not present in climate models. So your claim is falsified by this post itself, and that doesn’t count the many other times I’ve said that models leave out important variables.

Actually, Joachim, I have no idea what you do mean. I have no memory of “refusing” to read your papers, but having published over 900 posts on this site and given my pre-doomed but still fixed intention to answer all relevant comments, I might have done so. Seems unlikely, though, not my style. I may have started reading a paper of yours and given up, but I don’t recall even that.

As to the orbital perturbations of the planetary orbit from external forces, these would presumably be from the gravitational forces of the moon and the major planets. I’ve looked at some of these, for example calculating the tidal forces from the major planets. They are trivially small. The moon perturbs the earth’s orbit, and it leads to the tides. I’ve also analyzed the tides, as well as the underlying lunar tidal force itself, to see what effect they might have on surface climate datasets of several types. I found no significant correlations.

So please, dial back on the accusations. At this point, I wouldn’t look at your paper if you paid me, although tomorrow when my blood is less angrified I likely would. But insulting a man is a very poor way to get him to pay attention to your whizbang theory of everything …

w.

Let’s leave things as they stand and avoid polemics. The new paper will not mention the Moon and tides, the so-called three-body problem, which is relevant only to a small degree. The new paper deals with the “true Earth orbit trajectory”, which differs from the elliptical Keplerian orbit generally assumed in climate science. The true trajectory impacts climate and weather, and I will show massive meteorological proof.

And you write that you do not know what I mean.

As for polemics: you started the “cyclemania” fight some years ago, and it is still open. But this time there will be overwhelming evidence that the true orbit leaves massive cyclic imprints on global climate, observable roughly every two months on fixed dates. The “cycle fighters” will, this time, retreat into their (where they live).

Thanks, Joachim. Let us know when the new paper is ready for comment, and I will read it and see what I think.

As to “cyclemania”, it’s a real thing. Doesn’t mean it applies to you, or to any given study of cycles … only those for whom the shoe fits.

Best regards,

w.

The climate models do EXACTLY the two things they are intended to do:

1) Provide a nice paycheck to the computer engineers and climate pseudoscientists who write, debug, and run them, and then advertise the outputs in journal papers, conferences, and IPCC reports.

2) Provide an alarmist CO2-climate message for the paymasters to ensure 1) continues.

I’ve only found models useful for scoping and designing an experiment. They are particularly useful in convincing the budget folks that you understand what you are doing.

All I can say is that I have worked as a programmer for a hedge fund for over 20 years. I have seen many PhDs from Ivy League schools come through the door at work who, despite their pedigrees, never made any money from their sophisticated models.

Thanks, Willis. This is by far the best contribution you have made to wattsupwiththat.com over the years. Have you written a memoir?

Forrest, coming from you that is high praise indeed, and greatly appreciated. I’m working on putting my various autobiographical pieces together into a memoir … but I have about 1,200 pages of them. I’ll likely publish them as ebooks in several volumes. In the meantime, you can read the individual posts here. They’re also available in the “Autobiography” section of my 2021 index to all my WUWT posts, which is here.

My best to you, and many thanks for all of your scientific contributions over your most inventive and productive life.

w.

(For those unaware of Forrest, he was one of my heroes as a kid because after Jearl Walker left, for several months Forrest wrote the column called “The Amateur Scientist” in the once-great but now rubbish “Scientific American” magazine.)

And here I mistakenly thought that I was the only person to recognize his name. I became aware of his contributions to monitoring UV when I first got involved in researching the so-called “Ozone Hole.”

And a column in Popular Electronics. Lessee, no he didn’t write the article on Big TC and Little TC (Tesla Coil). Both I and a college housemate saved that issue – I helped him build Big TC, it looked almost exactly like the cover photo.

And of course, after reading https://journals.ametsoc.org/view/journals/bams/92/10/2011bams3215_1.xml I rushed right over to Amazon to buy a Kintrex infrared thermometer.

Too many names to thank these days. Thank you Martin Gardner. Thank you Jearl Walker. Thank you Forrest Mims. Thank you Katalin Kariko.

Willis, for me CP/M was the operating system for the Osborne and similar computers (Kaypro and a few others). I learned Fortran, and the computer department had a shiny new mainframe and plenty of stations with a keyboard and CRT. No punch cards any more, although plenty of my coworkers still had boxes of them from before they switched to workstations.

Even before you get into the shortfalls of modeling, I also believe, as Fermi said: “One way, and this is the way I prefer, is to have a clear physical picture of the process that you are calculating.” IMO climate science got that wrong. They picked CO2, and since 1988 about 7 molecules of water vapor have been added for each molecule of CO2. Only about 2/3 of the 7 are because it got warmer, and CO2 had no significant contribution to that.

The first computer I used was a CDC 6600. Really good architecture, but CDC marketed it for the scientific and engineering fields, so it couldn’t beat the IBM 360/370 series which were marketed for the business and banking fields. Pity, since its architecture was so good.

Mild nitpick – TRS-80 was the line, with various models. I had both a portable Model 100 (which is what you had) and a desktop Model III.

Willis, thank you for the excellent post. I’m sure there are a lot of readers like myself who have much appreciation for your insight into the world of computer modeling.

Thanks for the encouragement, eyesonu. Since my youth, I’ve seen part of my purpose as being to venture to parts of the physical (and intellectual) planet where most folks don’t have the opportunity to go, and then come back and report my findings.

I’m slowly getting better at it.

w.

Think about the “spaghetti models” offered each time a hurricane approaches North America. They all start with the same initial data, make different assumptions, and produce wildly different results, showing a landfall anywhere from Cape Cod to Miami to Merida. Each one of them has been run and its forecasts compared to reality many times, and each has been tuned and improved as a result, yet they all differ. If we evacuated Miami each time an early model forecast a hurricane ending up there, we would have foolishly spent billions. Yet all of these models have had much more “V&V” than any climate model, and they deal with fewer parameters over a much shorter timespan. The climate models would have us foolishly spend trillions.

Hurricane models are getting better. The 3 day forecasts are about as good as the 2 day forecasts were 15 to 20 years ago.

The difference is, with hurricanes we have a variety of predictions from models where there is no reason to prefer one to the other. So basically this is just reflecting uncertainty about the dynamics.

We don’t have one proven accurate model and a bunch of proven crappy ones, and then average the lot of them to produce nonsense.

With climate we have one decent model, consistently accurate, the Russian one. And a whole bunch of others which are inconsistent with observed outcomes.

So what do we do? We average the one good model’s predictions with those of the proven failures, and proclaim the result good enough to drive policy.

Someone tell me what I am missing, why this is not insane!
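The arithmetic behind this complaint can be shown with a toy calculation. The numbers below are made-up, unitless values chosen only for illustration, not real model output: one model lands close to the observation, and four others share a bias in the same direction.

```python
# Toy, unitless numbers for illustration only (not real model output).
observed = 1.0
good_model = 1.1
poor_models = [2.0, 1.8, 2.5, 1.6]  # all biased high, in the same direction

# Equal-weight ensemble mean of all five models.
ensemble = [good_model] + poor_models
ensemble_mean = sum(ensemble) / len(ensemble)

good_error = abs(good_model - observed)
mean_error = abs(ensemble_mean - observed)
print(f"good model error: {good_error:.2f}, ensemble mean error: {mean_error:.2f}")
```

This prints `good model error: 0.10, ensemble mean error: 0.80`. Averaging an ensemble does reduce error when the members’ errors are independent and scatter around the truth, which is the usual argument for it; when most members share the same bias, the mean is dragged toward that bias instead.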

I reckon you could be just the guy to give me a hand to disprove the idea that the movement of the planets affects the short-term climate/weather on Earth.

Farmers really want to know what each season will be like, whether hot or cold, wet or dry, as experienced by plants and animals.

I have what I call my “eye of newt” weather almanac (because it is so wacky), but I’ve used it for decades because it’s helpful. Even my hay and silage contractor calls up for a “reading”.

I’d love to prove that it has no predictive value i.e. falsify the null hypothesis.

Whaddya reckon?
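One common way to check an almanac like this is to keep a tally of its calls against what actually happened, then ask how often pure guessing would do as well. The sketch below assumes two equally likely outcomes per season (wet or dry), so chance is 50%; real base rates may differ, and the record shown is invented for illustration. In the usual framing the null hypothesis is that the almanac has no skill. A large p-value means it has not beaten chance, which is as close to “no predictive value” as a test like this gets, since a null hypothesis can’t be proven outright.

```python
from math import comb


def binomial_tail_p(hits: int, trials: int, p_chance: float = 0.5) -> float:
    """Exact one-sided p-value: the probability of scoring `hits` or more
    correct calls out of `trials` if the almanac were just guessing."""
    return sum(
        comb(trials, k) * p_chance**k * (1 - p_chance) ** (trials - k)
        for k in range(hits, trials + 1)
    )


# Hypothetical season-by-season record (made-up values for illustration):
# what the almanac called versus what actually happened.
forecasts = ["wet", "dry", "wet", "wet", "dry", "dry", "wet", "dry", "wet", "wet"]
observed = ["wet", "wet", "wet", "dry", "dry", "dry", "wet", "wet", "wet", "dry"]

hits = sum(f == o for f, o in zip(forecasts, observed))
p_value = binomial_tail_p(hits, len(forecasts))
print(f"{hits}/{len(forecasts)} correct, p = {p_value:.3f}")
```

With this made-up record it prints `6/10 correct, p = 0.377`: six hits out of ten is entirely consistent with guessing. A longer record, with each forecast written down before the season starts, makes the test far more sensitive.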

“One of the factors that cause urban environments to reach sweltering temperatures is a lack of vegetation. Trees alone could make a big difference: One model suggests that urban temperatures in Phoenix, Arizona, the hottest city in the US, could be reduced by over four degrees Fahrenheit if more trees provided cooling canopies over the scorching hot city.”

Can planting more trees keep cities from heating up? (msn.com)

The climate changers don’t do irony well. Still, there is some healthy skepticism about the model:

‘“I think that there’s typically this sort of blind faith that we place in trees, that they will provide all of these wonderful social benefits,” says V. Kelly Turner, assistant professor of urban planning at UCLA. “But the environmental benefits that trees provide are entirely context-dependent,” she says. Patchy adoption of sprawling tree planting plans may not lead to the cooling bliss we desire in urban places.’

Thanks for the word “lithosphere”. I complained just yesterday that we don’t have a word for the more solid part of our biosphere. So I learn. Love this site!

True ‘dat … WUWT ROOLZ!

w.

Definitely a memory lane post as well as a manual for modeling.

No one has mentioned Pascal. Why not? Not that I have written any programs in, say, the last 14 years or so, but Pascal is still my go-to language.

I remember Pascal from the 80s when I was at HP. If I recall correctly, C and C++ were coming up then; those three, along with Unix and BASIC, were my programming mediums.

A quick search reveals that in addition to my mentioning Pascal in the head post, five commenters mentioned it …

w.

I’m impressed at the number of mentions of Algol. (Even discounting mine!)

Sorry missed those 5 mentions. :))

By the 1980s Pascal was a very good language for teaching programming. Since it had become all the rage, several minicomputer reps told us they would soon be coming out with CPU chips that executed P-code directly. And we had a product manager who thought it was so great you could develop an operating system in it. That project turned out to be a waste of several years and a few million dollars. Don’t know how it is now, but at least back then there were an awful lot of incompetent people in the industry (i.e., salesmen, development managers, etc.) chasing the latest buzzword.

I am the same age and worked with the same computers. I remember those cards, the hardship of getting the points in the right place, and the looong wait for the next try. I left the field as a programmer when I realized that the assumptions I made (about the soil properties) were of more importance than the math.

Regarding computer models, I listened to Roy Spencer on his one-dimensional model. It fits!

Best regards!

Thanks Willis, Highly interesting stuff, reminds me of the computers used in the Apollo programme to land on the moon: https://www.youtube.com/watch?v=B1J2RMorJXM

I remember being told that the Apple II had more processing power than the Apollo computers.

I don’t know if that is true or not.

Most modern calculators have more processing power than the Apollo computers had. Heck, even 5 year old cell phones have more processing power than the Apollo computers did.

Thanks for the video ref. If I remember correctly the Launch Vehicle Digital Computer (LVDC) that was the autopilot on the Saturn 5 rocket was a one-bit serial computer that executed around 12k instructions per second.

Thank you for this; it really brought back the memories. It is good to see the comments from so many people who have long experience of computers and all the programming languages and operating systems.

How many of today’s computer ‘experts’ have this depth of experience and knowledge?

One of my memories of an IBM 360 was of a very large room full of large cabinets, and how the IBM engineers seemed to take up half the morning testing the computers.

Their ‘uniform’ was a white shirt and dark trousers, and they annoyed the commissionaires by insisting on parking right outside the main entrance, such was their self-importance. The commissionaires soon put a stop to that by sticking a large message on the driver’s side of the windscreen asking them not to block access for the emergency services. It was good fun seeing them take ages trying to scrape it off with a razor blade.

The big problem now is the blind belief in the infallibility of computer output.

“Computer says so” seems to be the buzzword, or “no” in financial matters.

A veritable blast of nostalgia there! I instantly recognised the rings from core memory and the punch card… I started my programming in IBM assembler on a knock-off copy of an early IBM mainframe…

Sometimes we used to hand punch individual punched cards if it was a minor change on a huge and noisy hand punch… what great fun to ‘have’ to make such a change when your colleague was suffering from a hangover! (how thoughtless is youth!)

I think that is one of the best articles I can remember you writing.

i remember a room sized punch card computer too, and the tinybbc? macs

and they both put me off using PCs until the 2000s

now I can follow instructions to put code into my Linux terminal

and sometimes get it right

lol;-)

Thank you Willis – your story brought back a flood of memories. One of my first jobs, when I was an engineering student at Queen’s circa 1970, was to hand-process a ton of data. After a few days sweating in a hot room, I taught myself Fortran and got a mountain of work done while my employer took two weeks’ vacation. He was a great guy but with no computer skills, so he thought I was a genius, and I did nothing to dissuade him.

I thought Algol was a huge improvement over the Fortran of that day. I’ve learned many languages over the years as needed.

My most significant work was to model the economics of the Alberta oilsands and spot some future crises that would cause the owners great financial harm. I took my proposal for new Crown Royalty terms to the Syncrude Management Committee, and we got new Royalty terms that revitalized the industry. I had previously obtained new tax terms that were highly beneficial – allowing major capital expenses to be deducted in two years rather than ~ten.

The oil sands industry became the mainstay of the Canadian economy for 15 years, with over $250 billion in new capital investments and approximately 500,000 new jobs created. Canada became the fifth-largest oil producer in the world, the largest foreign supplier of energy to the USA and the most successful economy of the G8 countries.

Subsequent idiot federal and provincial politicians trashed these fiscal terms, and together with the actions of paid anti-pipeline thugs, the Alberta oilsands industry is now suffering. There is nothing that good men can create that imbeciles and scoundrels cannot destroy.

Excellent. You told the story of Datapoint in one line.

“There is nothing that good men can create that imbeciles and scoundrels cannot destroy.”

Great overview for a non-computer person. Now do the COVID models that led to the great lockdowns, causing immediate harm to tens of millions of people for no real benefit.

Uber-Geeks used to inhabit the Computer Sciences Building 24/7, intently working on their favorite projects. Punch cards were the input medium, and they had to be kept in perfect order. A common practice was to write a diagonal line with a felt pen on the card stack, to prevent disasters. A vivid recollection was some kid with a 30-inch high stack of cards tripping and spilling his card deck down the hall. Without that diagonal line, the card-sorting job was challenging – the resulting melt-downs were not pretty.

And as students invariably discovered, hanging out in the computer center after 10pm had great advantages because the turnaround time from job submission to the output bins was at a minimum.

Hah. Except for the “standalone” time by the OS developers working on improvements. I became one of those myself on CMU’s PDP-10s. At least they were timesharing systems and we didn’t have long overnight batch jobs.

I did much of my computer work late at night and early in the morning, to avoid crowding and resulting delays. Some of the late-night geeks were truly weird – guys who could communicate with machines, but not with people. We had an IBM360, which they viewed as a lesser God, or maybe not so lesser.

Some idiot foreign activists at a Montreal university destroyed their mainframe computer in a protest, and our geeks went into mourning for days – sackcloth and ashes, wailing and moaning, rocking and rolling, tearing their hair and gnashing their teeth – the whole enchilada…

If we could gather all those who took Fortran in community college, from their assisted living facilities, and flocculate them in just one, we could hear the audio version of these comments…