Guest Post by Willis Eschenbach

I’m 74, and I’ve been programming computers nearly as long as anyone alive.

When I was 15, I’d been reading about computers in pulp science fiction magazines like Amazing Stories, Analog, and Galaxy for a while. I wanted one so badly. Why? I figured it could do my homework for me. Hey, I was 15, wad’ja expect?

I was always into math, it came easy to me. In 1963, the summer after my junior year in high school, nearly sixty years ago now, I was one of the kids selected from all over the US to participate in the National Science Foundation summer school in mathematics. It was held up in Corvallis, Oregon, at Oregon State University.

It was a wonderful time. I got to study math with a bunch of kids my age who were as excited as I was about math. Bizarrely, one of the other students turned out to be a second cousin of mine I’d never even heard of. Seems math runs in the family. My older brother is a genius mathematician, inventor of the first civilian version of the GPS. What a curious world.

The best news about the summer school was, in addition to the math classes, marvel of marvels, they taught us about computers … and they had a real live one that we could write programs for!

They started out by having us design and build logic circuits using wires, relays, the real-world stuff. They were for things like AND gates, OR gates, and flip-flops. Great fun!

Then they introduced us to Algol. Algol is a long-dead computer language, designed in 1958, but it was a standard for a long time. It was very similar to but an improvement on Fortran in that it used less memory.

Once we had learned something about Algol, they took us to see the computer. It was a huge old CDC 3300, standing about as high as a person’s chest, taking up a good chunk of a small room. The back of it looked like this.

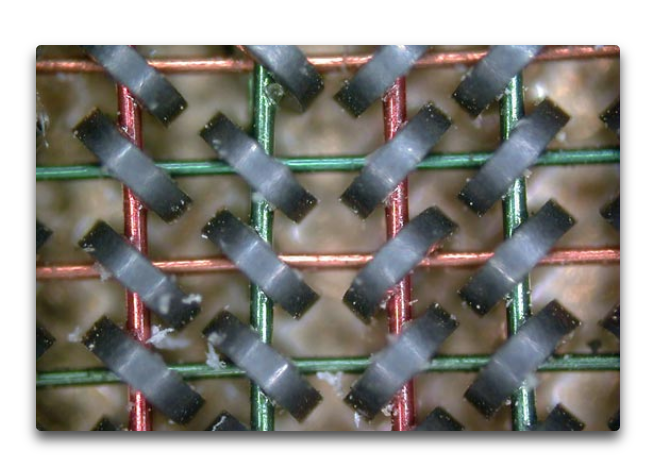

It had a memory composed of small ring-shaped magnets with wires running through them, like the photo below. The computer energized a combination of the wires to “flip” the magnetic state of each of the small rings. This allowed each small ring to represent a binary 1 or a 0.

How much memory did it have? A whacking great 768 kilobytes. Not gigabytes. Not megabytes. Kilobytes. That’s one ten-thousandth of the memory of the ten-year-old Mac I’m writing this on.

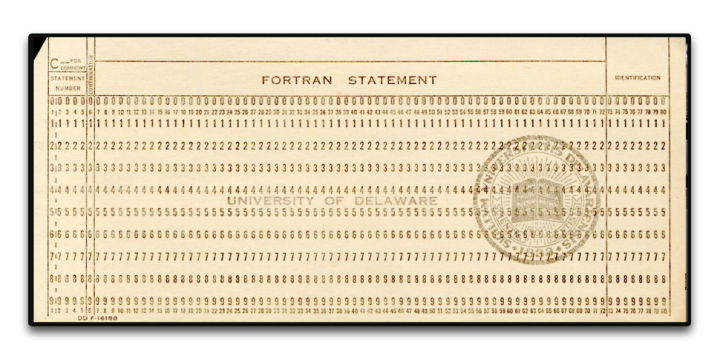

It was programmed using Hollerith punch cards. They didn’t let us anywhere near the actual computer, of course. We sat at the card punch machines and typed in our program. Here’s a punch card, 7 3/8 inches wide by 3 1/4 inches high by 0.007 inches thick (187 x 83 x 0.18 mm).

The program would end up as a stack of cards with holes punched in them, usually 25-50 cards or so. I’d give my stack to the instructors, and a couple of days later I’d get a note saying “Problem on card 11”. So I’d rewrite card 11, resubmit them, and get a note saying “Problem on card 19” … debugging a program written on punch cards was a slooow process, I can assure you.

And I loved it. It was amazing. My first program was the “Sieve of Eratosthenes”, and I was over the moon when it finally compiled and ran. I was well and truly hooked, and I never looked back.

The rest of that summer I worked as a bicycle messenger in San Francisco, riding a one-speed bike up and down the hills delivering blueprints. I gave all the money I made to our mom to help support the family. But I couldn’t get the computer out of my mind.

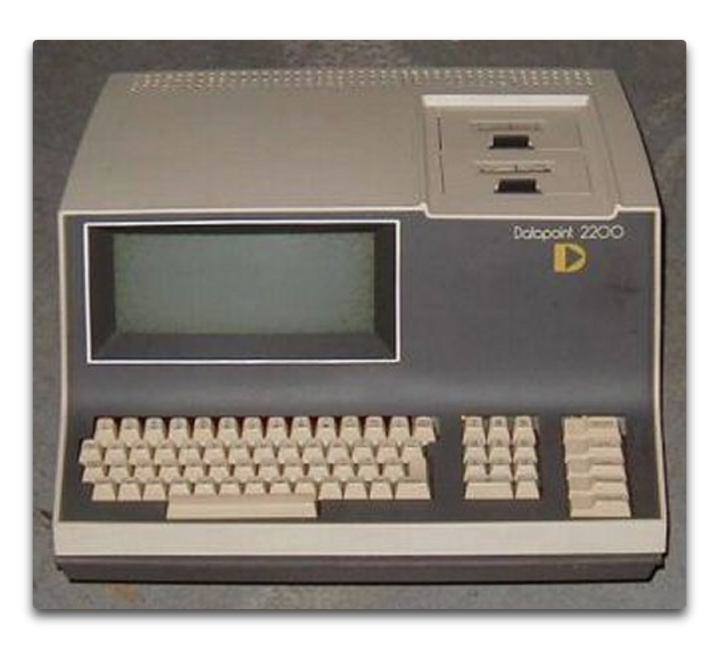

Ten years later, after graduating from high school and then dropping out of college after one year, I went back to college specifically so I could study computers. I enrolled in Laney College in Oakland. It was a great school, about 80% black, 10% Hispanic, and the rest a mixed bag of melanin-deficient folks. (I’m told that nowadays the politically-correct term is “melanin-challenged”, to avoid offending anyone.) The Laney College Computer Department had a Datapoint 2200 computer, the first desktop computer.

It had only 8 kilobytes of memory … but the advantage was that you could program it directly. The disadvantage was that only one student could work on it at any time. However, the computer teacher saw my love of the machine, so he gave me a key to the computer room so I could come in before or after hours and program to my heart’s content. I spent every spare hour there. It used a language called Databus, my second computer language.

The first program I wrote for this computer? You’ll laugh. It was a test to see if there was “precognition”. You know, seeing the future. My first version, I punched a key from 0 to 9. Then the computer picked a random number, and recorded if I was right or not.

Finding I didn’t have precognition, I re-wrote the program. In version 2, the computer picked the number before, rather than after, I made the selection. No precognition needed. Guess what?

No better than random chance. And sadly, that one-semester course was all that Laney College offered. That’s the extent of my formal computer education. The rest I taught myself, year after year, language after language, concept after concept, program after program.

Ten years after that, I bought the first computer I ever owned, the Radio Shack TRS-80, AKA the “Trash Eighty”. It was the first notebook-style computer. I took that sucker all over the world. I wrote endless programs on it, including marine celestial navigation programs that I used to navigate by the stars between islands in the South Pacific. It was also my first introduction to Basic, my third computer language.

And by then IBM had released the IBM PC, the first personal computer. When I returned to the US I bought one. I learned my fourth computer language, MS-DOS. I wrote all kinds of programs for it. But then a couple years later Apple came out with the Macintosh. I bought one of those as well, because of the mouse and the art and music programs. I figured I’d use the Mac for creating my art and my music and such, and the PC for serious work.

But after a year or so, I found I was using nothing but the Mac, and there was a quarter-inch of dust on my IBM PC. So I traded the PC for a piano, the very piano here in our house that I played last night for my 19-month-old granddaughter, and I never looked back at the IBM side of computing.

I taught myself C and C++ when I needed speed to run blackjack simulations … see, I’d learned to play professional blackjack along the way, counting cards. And when my player friends told me how much it cost for them to test their new betting and counting systems, I wrote a blackjack simulation program to test the new ideas. You need to run about a hundred thousand hands for a solid result. That took several days in Basic, but in C, I’d start the run at night, and when I got up the next morning, the run would be done. I charged $100 per test, and I thought “This is what I wanted a computer for … to make me a hundred bucks a night while I’m asleep.”

Since then, I’ve never been without a computer. I’ve written literally thousands and thousands of programs. On my current computer, a ten-year-old MacBook Pro, a quick check shows that there are well over 4,000 programs I’ve written. I’ve written programs in Algol, Databus, 68000 Machine Language, Basic, C/C++, Hypertalk, Forth, Logo, Lisp, Mathematica (3 languages), Vectorscript, Pascal, VBA, Stella computer modeling language, and these days, R.

I had the immense good fortune to be directed to R by Steve McIntyre of ClimateAudit. It’s the best language I’ve ever used—free, cross-platform, fast, with a killer user interface and free “packages” to do just about anything you can name. If you do any serious programming, I can’t recommend it enough.

Oh, yeah, somewhere in there I spent a year as the Service Manager for an Apple Dealership. As you might guess given my checkered history, it wasn’t in some logical location … it was in downtown Suva, in Fiji. There I fixed a lot of computers and I learned immense patience dealing with good folks who truly thought that the CD tray that came out of the front of their computer when they did something by accident was a coffee cup holder … oh, and I also installed the Macintosh hardware for the Fiji Government Printers and trained the employees how to use Photoshop. I also taught two semesters of Computers 101 at the Fiji Institute of Technology.

I bring all of this up to let you know that I’m far, far from being a novice, a beginner, or even a journeyman programmer. I was working with “computer based evolution” to try to analyze the stock market before most folks even heard of it. I’m a master of the art, able to do things like write “hooks” into Excel that let Excel transparently call a separate program in C for its wicked-fast speed, and then return the answer to a cell in Excel …

Now, folks who’ve read my work know that I am far from enamored of computer climate models. I’ve been asked “What do you have against computer models?” and “How can you not trust models, we use them for everything?”

Well, based on a lifetime’s experience in the field, I can assure you of a few things about computer climate models and computer models in general. Here’s the short course.

• A computer model is nothing more than a physical realization of the beliefs, understandings, wrong ideas, and misunderstandings of whoever wrote the model. Therefore, the results it produces are going to support, bear out, and instantiate the programmer’s beliefs, understandings, wrong ideas, and misunderstandings. All that the computer does is make those under- and misunder-standings look official and reasonable. Oh, and make mistakes really, really fast. Been there, done that.

• Computer climate models are members of a particular class of models called “Iterative” computer models. In this class of models, the output of one timestep is fed back into the computer as the input of the next timestep. Members of this class of models are notoriously cranky, unstable, and prone to internal oscillations and generally falling off the perch. They usually need to be artificially “fenced in” in some sense to keep them from spiraling out of control.

• As anyone who has ever tried to model say the stock market can tell you, a model which can reproduce the past absolutely flawlessly may, and in fact very likely will, give totally incorrect predictions of the future. Been there, done that too. As the brokerage advertisements in the US are required to say, “Past performance is no guarantee of future success”.

• This means that the fact that a climate model can hindcast the past climate perfectly does NOT mean that it is an accurate representation of reality. And in particular, it does NOT mean it can accurately predict the future.

• Chaotic systems like weather and climate are notoriously difficult to model, even in the short term. That’s why projections of a cyclone’s future path over say the next 48 hours are in the shape of a cone and not a straight line.

• There is an entire branch of computer science called “V&V”, which stands for verification and validation. It’s how you can be assured that your software is up to the task it was designed for. Here’s a description from the web:

What is software verification and validation (V&V)?

Verification

820.3(a) Verification means confirmation by examination and provision of objective evidence that specified requirements have been fulfilled.

“Documented procedures, performed in the user environment, for obtaining, recording, and interpreting the results required to establish that predetermined specifications have been met” (AAMI).

Validation

820.3(z) Validation means confirmation by examination and provision of objective evidence that the particular requirements for a specific intended use can be consistently fulfilled.

Process Validation means establishing by objective evidence that a process consistently produces a result or product meeting its predetermined specifications.

Design Validation means establishing by objective evidence that device specifications conform with user needs and intended use(s).

“Documented procedure for obtaining, recording, and interpreting the results required to establish that a process will consistently yield product complying with predetermined specifications” (AAMI).

Further V&V information here.

• Your average elevator control software has been subjected to more V&V than the computer climate models. And unless a computer model’s software has been subjected to extensive and rigorous V&V, the fact that the model says that something happens in modelworld is NOT evidence that it actually happens in the real world … and even then, as they say, “Excrement occurs”. We lost a Mars probe because someone didn’t convert a single number to metric from Imperial measurements … and you can bet that JPL subjects their programs to extensive and rigorous V&V.

• Computer modelers, myself included at times, are all subject to a nearly irresistible desire to mistake Modelworld for the real world. They say things like “We’ve determined that climate phenomenon X is caused by forcing Y”. But a true statement would be “We’ve determined that in our model, the modeled climate phenomenon X is caused by our modeled forcing Y”. Unfortunately, the modelers are not the only ones fooled in this process.

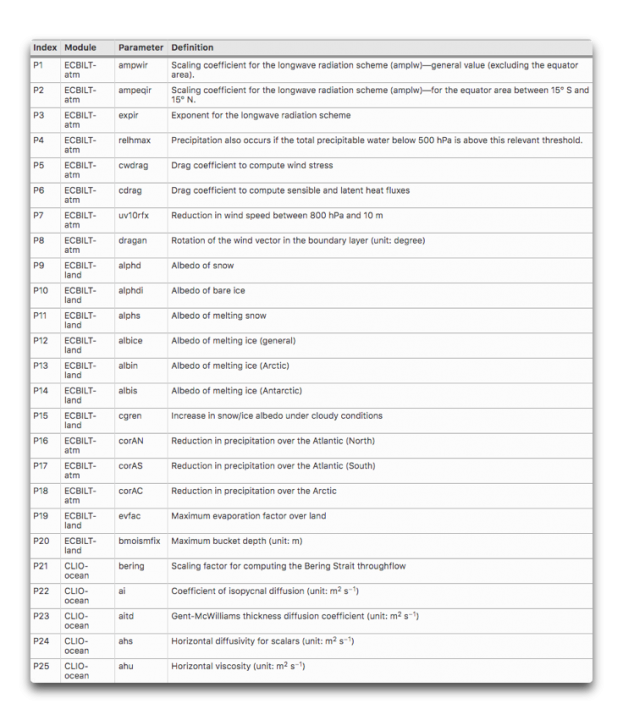

• The more tunable parameters a model has, the less likely it is to accurately represent reality. Climate models have dozens of tunable parameters. Here are 25 of them, there are plenty more.

What’s wrong with parameters in a model? Here’s an oft-repeated story about the famous physicist Freeman Dyson getting schooled on the subject by the even more famous Enrico Fermi …

By the spring of 1953, after heroic efforts, we had plotted theoretical graphs of meson–proton scattering. We joyfully observed that our calculated numbers agreed pretty well with Fermi’s measured numbers. So I made an appointment to meet with Fermi and show him our results. Proudly, I rode the Greyhound bus from Ithaca to Chicago with a package of our theoretical graphs to show to Fermi.

When I arrived in Fermi’s office, I handed the graphs to Fermi, but he hardly glanced at them. He invited me to sit down, and asked me in a friendly way about the health of my wife and our new-born baby son, now fifty years old. Then he delivered his verdict in a quiet, even voice. “There are two ways of doing calculations in theoretical physics”, he said. “One way, and this is the way I prefer, is to have a clear physical picture of the process that you are calculating. The other way is to have a precise and self-consistent mathematical formalism. You have neither.”

I was slightly stunned, but ventured to ask him why he did not consider the pseudoscalar meson theory to be a self-consistent mathematical formalism. He replied, “Quantum electrodynamics is a good theory because the forces are weak, and when the formalism is ambiguous we have a clear physical picture to guide us. With the pseudoscalar meson theory there is no physical picture, and the forces are so strong that nothing converges. To reach your calculated results, you had to introduce arbitrary cut-off procedures that are not based either on solid physics or on solid mathematics.”

In desperation I asked Fermi whether he was not impressed by the agreement between our calculated numbers and his measured numbers. He replied, “How many arbitrary parameters did you use for your calculations?” I thought for a moment about our cut-off procedures and said, “Four.” He said, “I remember my friend Johnny von Neumann used to say, with four parameters I can fit an elephant, and with five I can make him wiggle his trunk.” With that, the conversation was over. I thanked Fermi for his time and trouble, and sadly took the next bus back to Ithaca to tell the bad news to the students.

• The climate is arguably the most complex system that humans have tried to model. It has no less than six major subsystems—the ocean, atmosphere, lithosphere, cryosphere, biosphere, and electrosphere. None of these subsystems is well understood on its own, and we have only spotty, gap-filled rough measurements of each of them. Each of them has its own internal cycles, mechanisms, phenomena, resonances, and feedbacks. Each one of the subsystems interacts with every one of the others. There are important phenomena occurring at all time scales from nanoseconds to millions of years, and at all spatial scales from nanometers to planet-wide. Finally, there are both internal and external forcings of unknown extent and effect. For example, how does the solar wind affect the biosphere? Not only that, but we’ve only been at the project for a few decades. Our models are … well … to be generous I’d call them Tinkertoy representations of real-world complexity.

• Many runs of climate models end up on the cutting room floor because they don’t agree with the aforesaid programmer’s beliefs, understandings, wrong ideas, and misunderstandings. They will only show us the results of the model runs that they agree with, not the results from the runs where the model either went off the rails or simply gave an inconvenient result. Here are two thousand runs from 414 versions of a model running first a control and then a doubled-CO2 simulation. You can see that many of the results go way out of bounds.
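The earlier point about iterative models, where each timestep's output becomes the next timestep's input, can be seen in a toy. What follows is a minimal sketch and emphatically not a climate model: the logistic map, the value r = 3.9, and the starting values are all assumptions chosen for illustration. It runs the same update rule twice from starting points that differ by one part in a billion and reports how far apart the two runs get.

```python
def iterate(step, x0, n_steps):
    """Feed each output back in as the next input; return the whole trajectory."""
    xs = [x0]
    for _ in range(n_steps):
        xs.append(step(xs[-1]))
    return xs


def logistic(x, r=3.9):
    """Toy nonlinear update rule (logistic map); r = 3.9 is an illustrative choice."""
    return r * x * (1.0 - x)


# Two runs of the same "model" whose initial states differ by 1e-9.
run_a = iterate(logistic, 0.2, 100)
run_b = iterate(logistic, 0.2 + 1e-9, 100)

# Absolute difference between the runs at every step.
gaps = [abs(a - b) for a, b in zip(run_a, run_b)]
print("gap at step 0:", gaps[0])
print("largest gap over 100 steps:", max(gaps))
```

Both runs stay inside the interval from 0 to 1, so nothing "blows up" here, yet the tiny initial difference grows until the two trajectories bear no resemblance to each other. Real models are vastly more elaborate, so this only illustrates the feedback mechanism, not the behavior of any particular model.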

As a result of all of these considerations, anyone who thinks that the climate models can “prove” or “establish” or “verify” something that happened five hundred years ago or a hundred years from now is living in a fool’s paradise. These models are in no way up to that task. They may offer us insights, or make us consider new ideas, but they can only “prove” things about what happens in modelworld, not the real world.

Be clear that having written dozens of models myself, I’m not against models. I’ve written and used them my whole life. However, there are models, and then there are models. Some models have been tested and subjected to extensive V&V and their output has been compared to the real world and found to be very accurate. So we use them to navigate interplanetary probes and design new aircraft wings and the like.

Climate models, sadly, are not in that class of models. Heck, if they were, we’d only need one of them, instead of the dozens that exist today and that all give us different answers … leading to the ultimate in modeler hubris, the idea that averaging those dozens of models will get rid of the “noise” and leave only solid results behind.

Finally, as a lifelong computer programmer, I couldn’t disagree more with the claim that “All models are wrong but some are useful.” Consider the CFD models that the Boeing engineers use to design wings on jumbo jets or the models that run our elevators. Are you going to tell me with a straight face that those models are wrong? If you truly believed that, you’d never fly or get on an elevator again. Sure, they’re not exact reproductions of reality, that’s what “model” means … but they are right enough to be depended on in life-and-death situations.

Now, let me be clear on this question. While models that are right are absolutely useful, it certainly is also possible for a model that is wrong to be useful.

But for a model that is wrong to be useful, we absolutely need to understand WHY it is wrong. Once we know where it went wrong we can fix the mistake. But with the complex iterative climate models with dozens of parameters required, where the output of one cycle is used as the input to the next cycle, and where a hundred-year run with a half-hour timestep involves 1.75 million steps, determining where a climate model went off the track is nearly impossible. Was it an error in the parameter that specifies the ice temperature at 10,000 feet elevation? Was it an error in the parameter that limits the formation of melt ponds on sea ice to only certain months? There’s no way to tell, so there’s no way to learn from our mistakes.
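For anyone who wants to check the 1.75 million figure, here is the arithmetic as a short sketch. The only assumption is the use of an average year of 365.25 days.

```python
HOURS_PER_DAY = 24
DAYS_PER_YEAR = 365.25   # assumption: average year length, leap days included
TIMESTEP_HOURS = 0.5     # half-hour timestep, as stated in the text
YEARS = 100              # hundred-year run, as stated in the text

steps_per_year = DAYS_PER_YEAR * HOURS_PER_DAY / TIMESTEP_HOURS
total_steps = int(YEARS * steps_per_year)
print(total_steps)  # 1753200, i.e. about 1.75 million
```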

Next, all of these models are “tuned” to represent the past slow warming trend. And generally, they do it well … because the various parameters have been adjusted and the model changed over time until they do so. So it’s not a surprise that they can do well at that job … at least on the parts of the past that they’ve been tuned to reproduce.
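The gap between matching the tuned past and predicting the untuned future can be shown with made-up numbers. The sketch below is only an illustration under stated assumptions: the "real world" is an invented slow linear trend plus random noise, and the heavily tuned model is stood in for by a Lagrange interpolating polynomial, which has one adjustable parameter per data point. This is not how climate models are tuned; it is just the simplest way to get a model that reproduces its training period perfectly.

```python
import random


def fit_line(xs, ys):
    """Ordinary least-squares straight line: two tunable parameters."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = my - slope * mx
    return lambda x: slope * x + intercept


def fit_exact(xs, ys):
    """Lagrange interpolating polynomial: passes through every past point."""
    def predict(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return predict


def rms_error(model, xs, ys):
    """Root-mean-square difference between model output and observations."""
    return (sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)) ** 0.5


rng = random.Random(0)  # fixed seed so the run is repeatable
xs_all = list(range(15))
# Invented observations: trend of 0.1 per step plus noise (both hypothetical).
ys_all = [0.1 * x + rng.gauss(0, 0.2) for x in xs_all]
past_x, past_y = xs_all[:10], ys_all[:10]        # period used for tuning
future_x, future_y = xs_all[10:], ys_all[10:]    # period never seen in tuning

results = {}
for name, fit in [("line", fit_line), ("exact", fit_exact)]:
    model = fit(past_x, past_y)
    results[name] = (rms_error(model, past_x, past_y),
                     rms_error(model, future_x, future_y))
    print(name, "hindcast RMS:", results[name][0],
          "forecast RMS:", results[name][1])
```

The ten-parameter polynomial hindcasts the tuning period with zero error, while the two-parameter line misses it by roughly the size of the noise. On the five points neither model was tuned to, the ranking reverses, and by a wide margin.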

But then, the modelers will pull out the modeled “anthropogenic forcings” like CO2, and proudly proclaim that since the model no longer can reproduce the past gradual warming, that demonstrates that the anthropogenic forcings are the cause of the warming … I assume you can see the problem with that claim.

In addition, the gridsize of the computer models is far larger than important climate phenomena like thunderstorms, dust devils, and tornados. If the climate model is wrong, is it because it doesn’t contain those phenomena? I say yes … computer climate modelers say nothing.

Heck, we don’t even know if the Navier-Stokes fluid dynamics equations as they are used in the climate models converge to the right answer, and near as I can tell, there’s no way to determine that.

To close the circle, let me return to where I started—a computer model is nothing more than my ideas made solid. That’s it. That’s all.

So if I think CO2 is the secret control knob for the global temperature, the output of any model I create will reflect and verify that assumption.

But if I think (as I do) that the temperature is kept within narrow bounds by emergent phenomena, then the output of my new model will reflect and verify that assumption.

Now, would the outputs of either of those very different models be “evidence” about the real world?

Not on this planet.

And that is the short list of things that are wrong with computer models … there’s more, but as Pierre said, “the margins of this page are too small to contain them” …

My very best to everyone, stay safe in these curious times,

w.

PS—When you comment, quote what you’re talking about. If you don’t, misunderstandings multiply.

H/T to Wim Röst for suggesting I write up what started as a comment on my last post.

I was taught R in undergrad, but I switched to Bash/Awk after finding a good job after school. I taught myself by patience in RTFM. It was quite a challenge and no one expected that from me.

I became so much more efficient than others stuck in Excel, R or Python that I got an award for it 🙂

I haven’t been able to find an easier, terser and cleaner way to manipulate plain financial tables.

I like Linux because it’s a gui wrapped on top of a complete programming environment, rather than a programming environment you have to install on top of a black box gui.

After more than a decade of financial fortune telling (still do it), I decided to put my skills to something else. I hope some people are enjoying mommy’s amateur science hobby other than my children.

Thanks for the post, Willis. -Z

“ it’s a gui wrapped on top of a complete programming environment”

..

No, Linux is not a gui. Linux is not a programming environment. Linux is an operating system. The programming environment runs on top of Linux. The gui also runs on top of Linux. Linux can be run without either a gui or a programming environment.

Then Windows and Mac are just kernels too. Weird way to see things. No?

I like to include basic user utils as well. Linux uses them when booting into a USEABLE environment.

Current Macs are unix based. Windows is a bowl of spaghetti masquerading as an operating system (which you correctly called a black-box gui.)

Zoe, while we disagree on many things to do with the physics of this strange universe, I totally admire your persistence, your imagination, and your programming skills. Always good to hear from you.

w.

Been through much the same history on computing. At school I learned Algol and FORTRAN, and we had access to time on the local university’s computer (limited to 1 minute of runtime per programme). Punched out cards with a hand punch. Those doing economics were able to run a macroeconomic model at LSE via a teletype machine: today’s Sim-Econ games are probably much more sophisticated.

My introduction to modelling applications was somewhat different. I was fortunate enough to be employed in looking at some of the implications of the infamous Limits to Growth study, so the next language I learned was DYNAMO (BASIC and 8080 assembler came later, when I got my first computer – a ZX81 which I soon expanded to 16k of RAM in which I contrived to run refinery LP simulations), in which their model was written. I spent some time picking over its entrails and writing other simultaneous differential equation models both as programming practice and as numerical testing of the tendency of solutions to blow up because of the limitations of rounding errors and Runge-Kutta fourth order integrations. I then helped design (my co-designer was a physicist by training, and insisted on things like dimensional analysis) and did the writing and punching in FORTRAN and the many runs of a resource model. We had the benefit of some expert input from mining and geological and metallurgical specialists. We tried to capture some of their ideas in the modelling. Despite 4 card trays (about 2 ft long each), in reality it was fairly rudimentary. But it was more than enough to teach me that Limits to Growth was a prisoner of its assumptions for data and modelling: there were other answers that were more plausible.

Looking back on it decades later, I am pleased to report that our modelling turned out to be much closer to reality than that implied by Limits to Growth. Perhaps we were just lucky. Perhaps because we didn’t start by assuming the answer and tweaking the modelling to fit. The other great lesson was from seeing modelling used for political purposes. It has made me naturally suspicious of models, and insistent on proper relation to real world physics and measurement ever since. Not long after, I got introduced to some of the math of chaos and catastrophe theory which provided more insight on the need for caution in following models.

Who needs GCMs when anyone can model what the IPCC will decide the models should say?

Since most of the models turn into nothing more than y = mx+b equations after about 50 years all the complexity of the models is just wasted effort.

Where y = temperature and x = CO2 concentration 🙂

Actually it’s y = temperature and x = input forcings in W/m^2

Another big problem is that if they are hindcasting to, say, GISS,

… they are hindcasting to something that is a fabrication in the first place!

If they reproduce that temperature fabrication,

… they are almost certainly WRONG before they even start

e.g., if your elevator shaft is 100m tall, and you give it data that says it’s 110m tall

Things aren’t going to work very well !!

Might work fine until someone presses the wrong button :<)

That’s right, the alarmists are hindcasting the bogus, bastardized instrument-era Hockey Stick temperature profile.

They should try hindcasting for the *real* global temperature profile as represented here by the U.S. regional surface temperature chart, which is also representative of regional surface temperature charts from around the world.

U.S. regional surface temperature chart (Hansen 1999):

Hindcast this.

About a decade behind you, e.g. PLATO IV, OPM. Modelers best assume Constructal law.

It’s not just computer models.

My favorite paper was one in which a colleague had a strange result. The measured rate coefficient should have been constant but was inversely proportional to the reactant concentration. I thought of a reason why this should occur and a quantitative model that was just basic arithmetic. A computer was only used to calculate the values of three variable parameters for a best fit to the data.

The final equation had k inversely proportional to the concentration and variable parameters that were realistic when fitted to the data. Good enough to claim that we figured out what was happening. The maths of the model was easy to check, just arithmetic that barely filled half a page. That the fit was good was easy to check. It was a paper that would have passed peer review and been accepted as the truth.

But! We could measure one variable parameter using a completely different experiment, so we did, and got a result a factor of ten different. We still published it as kind of close-but-no-cigar paper.

The point of the anecdote is that the model did not have the complexity of climate models. A chemical engineer could have used it as reality, plugging in variables and trusting the calculation. In reality, the mistake would have been picked up earlier but imagine if 97% of chemists said that they were 95% confident in it so ignore those who measured the variable parameter, and those sceptical of it should just shut up? Go to jail for racketeering, even.

Well done Willis. I could follow what you were saying even though I’m not a programmer. I fell in love with Chemistry after getting my first chemistry set in Junior High. Went on to become a high school chemistry teacher. I always included a section of what models are and aren’t so the students wouldn’t get a false impression that models are reality.

Brilliant synopsis.

Combine it with this interview of Judith Curry: https://www.youtube.com/watch?v=BOO9mafcA3s&t=2s

After understanding what you both wrote it makes me wonder how many people have to die when the electric energy system is totally revamped because the models said we had to.

Wow Willis. So cool to read your history. I followed a similar path until I didn’t. Two 10th-grade students from every school in the city were chosen after candidates wrote a science/math exam and I got to be one of those in 1964. (Joe Berg Seminars). We got to listen to and meet professors from the university once a week and I found it a lot of fun. When we were invited to the university to learn a bit about computers I was already learning about Eccles-Jordan circuits (inventors of the flip-flop) and those punch cards we stole from the keypunch room were also good for making toy rockets!

I still have my 8k Commodore PET (Personal Electronic Transactor) with chiclet keys in the basement along with an aluminized paper printer and 20 virgin rolls of paper.

First commercial programming was done on Z80 s100 computer using CPM and Bill Gates’ first Fortran compiler to control a wood processing machine. I actually worked on a control system that had that magnetic donut memory so you didn’t have to reload the program if the power died. It came as part of the huge waferboard press from Germany in ’84.

The model of our manufacturing process that I wrote in VBA and Excel showed me how easy it is to fool yourself with these programs. I have never understood how they manage the cumulative errors in iterative climate models. Maybe they don’t?

I do not trust computer models because I worked with computer models going back as far as 1968.

That’s an excellent synopsis of my whole post.

w.

Indeed!

That brings back memories. My first employer in 1980 was Datapoint and the first computer I learned the internal workings of and how to repair was the 2200.

Two years later I joined the Amsterdam Stock Exchange as junior Cobol programmer. Punchcards were still used and very important for the department. They were our mail system, calendar, notepad, predecessor of the stickies, we could not function without them.

“I learned my fourth computer language, MS-DOS.”

..

MS-DOS is not a computer language.

You write commands in MS-DOS language. That’s all it recognizes.

If I entered “Brian is an asshat” as an MS-DOS command, it would not know what I meant.

(on second thoughts . . . )

You really are showing yourself to be monumentally STUPID, brianless. !

MS-DOS is a set of words or commands that is used for communication between the user and the computer.

It is a computer language..

Its main function just happens to be user interface and disc operation instructions.

Ha ha. Of course you’re both right. DOS is a computer program that is both an operating system (“cleverly” masquerading as a set of interrupt calls) and a user interface which includes the ability to run a rudimentary scripting language (bat files). Some people are overly picky, but if someone says they programmed in DOS, I’m going to assume they mean bat file scripting unless they specifically mention an interpreted language like early BASIC running under DOS or an early compiler (or even assembler). The best was Turbo Pascal. Most C compilers were agonizingly slow and they had a bad habit of having wayward pointers that could destroy the operating system, require a reset button or the infamous three fingered salute to recover.

One of the reasons I liked Pascal was that it forced a discipline that increased the chances that the program would actually do what you wanted. The fact that you had to define things before using them meant that it was a 1-pass compiler, vital when processors ran at the rate of a few MHz. And it also meant that the compiler and you had to be on the same page as to what variables meant. That confusion and the insistence on strict type checking meant that you tended to avoid the spectacular explosions caused by sloppy C code. Object Pascal’s fingerprints are all over C++ and although you can be sloppy with it, if you write programs as if they were in Pascal, you will be rewarded in the end.

One thing I just remembered. If you watch the original Terminator movie, the world from Ahnold’s point of view always has computer code scrolling by. I recognized where that code comes from because I once had to write subroutines in it to control lab equipment that was called from UCSD Pascal. It’s 6502 assembler code from an Apple ][, a computer not quite up to the job of controlling a Terminator.

Ah, the old Apple ][e. Did some early programming on them.

The “peek and poke” commands were fun because I could build little interface boxes to control external machines.

Made a cute little car that could follow a curvy line on a sheet of card.

Hmmm . . . core memory invented by Dr. An Wang, founder of Wang Laboratories.

You left out mention of the cert from Aames, and your stint at Sonoma,

Hey, I left out most of my life … you could start with this, more here.

w.

I thought it was a blog about climate, not the life and times of a wanna-be computer programmer.

As usual, what you think and reality have little in common.

The point of the article, which you once again go out of your way to miss, was about why climate models range from bad to useless.

The life history was to show why Willis is qualified to make such judgements.

Well put, MarkW.

You are being very reasonable. I fear you may be wasting your time though, since Brian seems intent on disrupting the conversation with his angry comments, regardless of topic.

BJ you are such a blowhard.

Yet brian is making it about brian being a wannabe something.. anything!!

… and it’s failing!

You are still an abyss of empty blah !!

Your facade of egotistical bravado is hilarious. 🙂

You really do have deep-seated emotional and mental issues.

Pseudo intellectual is becoming a synonym for progressive.

Brain-dead, read the “About” link at the top of the page. This blog is about much more than just climate.

Brian, you are getting tiresome. Are you a thirteen year old that is trying to start a fight from a safe distance? Your constant rudeness is just ridiculous.

“Members of his class of models are notoriously cranky, unstable, and prone to internal oscillations and generally falling off the perch. “…..especially when their predictions tell you to bring an umbrella to work.

Do you think climate models are skillful?

Define “skillful”.

w.

1) https://dictionary.cambridge.org/dictionary/english/skillful

.

2) https://scienceblogs.com/stoat/2017/01/11/climate-models-have-proven-extremely-skillful-in-predicting-the-warming-that-has-already-been-observed

stoat ! ROFLMAO

The very bottom of the fetid abyss when it comes to anything related to science or morality.

You really know how to dig deep into the putrid slime!

Climate models have proven extremely skillful at reproducing the bogus, bastardized, instrument-era Hockey Stick chart profile.

That’s because the climate models were tuned to reproduce the bogus Hockey Stick chart “hotter and hotter and hotter” temperature profile.

You have a bogus Hockey Stick and you have bogus Climate models that reproduce the bogus Hockey Stick profile and it’s all dishonest computer manipulation of the data.

The real temperature profile of the Earth doesn’t look anything like the bogus Hockey Stick profile.

The real temperature profile of the Earth is based on actual temperature readings taken by human beings over many years.

The Hockey Stick chart and the Global Climate Models are nothing more than the programming of computers to reach a certain outcome.

A huge fraud has been perpetrated on the world by alarmist computer data manipulators. That’s what we have with the bogus Hockey Stick chart and the Global Climate Models that aim to duplicate a false temperature history.

NO

They are not testable in practice, because climate change is not a 30-year-period phenomenon just because a bunch of weather record editors, with nothing but current data and altered records, declared it to be so.

Earth itself does not work in such corrupted ways to make a crust.

In geology unambiguous climate change that we know of (and there is no other we know of, btw) is on a ~500 year time-scale resolution.

The LIA cycle was detected on a similar time scale.

All else is the product of unscrupulous corrupting of recent more detailed records.

While everything shorter in time-scale on the observational level is the net of weather cycle noise.

So the question of model predictive ‘skill’ is pure nonsense as applied to climate models, as they have no skill which they can demonstrate without recourse to a ‘time machine’ to sample a trend in 500 year future sample increments, which would negate the need for a GCM prediction in the first place.

Blah blah blah ‘skill’ blah blah blah.

Stop kidding yourself Brian, you fool no one here but yourself.

40 years worth of wrong predictions show that climate models have no skill.

One of them demonstrably does have skill, the Russian one. The problem is they take their one good proven successful model and then for some unaccountable reason mix it up with ones proven to have failed (by averaging their results). The result is they have degraded the one model that is probably fit for purpose.

If this were medicine, drug A, the equivalent would be saying we have 50 different models of the effects of large scale administration of drug A on the target population.

Some show mortality of 60%, some show cures of 70%. None come very close to predicting the results of previous real world trials. There is one model and only one which has successfully predicted the results of previous uses of A in this context, and it shows mortality of 20% and 5% cures, which tallies pretty well with field experience.

So what we do is average those results with all the ones that have failed, and tell our policy officials that this shows what is needed is large scale immediate dosing of the entire country…

Well, maybe I am missing something. Very much like to know what.

“Do you think climate models are skillful”

MOST CERTAINLY NOT !!

They are totally lacking in SO, SO MANY areas, and can’t even hit the side of a barn with their scatter gun!!

They barely reach the level of a low-end computer game

Willis, that was a trip down memory lane for me. My main thing in college turned out to be math modeling of all sorts (an example, the classical predator prey equations done three ways: in calculus, in numerically solved Fortran, and via probabilistic Markov chain matrices). My senior/PhD thesis (separate story) input/output (I/O) dynamic model of nuclear power was written in Fortran on Hollerith cards—two big boxes holding about 1000 cards. And yes, debugging was a real PITA. Bonus was that Vassily Leontief won his Nobel prize in Economics for I/O that year, and as a prized pupil I got to celebrate with him.

You DO get around. When I was the first global sr partner head of BCG’s then new ‘Time Based Competition’ operations practice in the late 1980s, I bought Stella for each office’s TBC team. Stocks, flows, converters, connectors. Visually simple so our clients could understand our work and recommendations. Got to know creator Barry Richmond very well during his time at Dartmouth. He used our stuff (disguised) to market Stella at his High Performance Systems, and he brought us new clients. Win-win.

Thanks, Rud, your stories and insights are always welcome. Stella was an awesome language for constructing models of many things. Is it still around?

w.

I think I’ve been programming computers even longer than you, Willis. The first ones were IBM plug boards. But throughout my history with computers, one phenomenon stands out: people trust whatever they say. An example. I wanted a bank loan for a startup (involving computers, of course), so I did a simple model showing projected revenues and expenses, with the revenues exceeding the expenses (of course). I expected the bank manager to grill me about my assumptions. Never happened. He said, “This was done by a computer?” I didn’t correct him that it was done on a computer. Then he said, “It must be accurate. How much do you need?” I wish time had eroded this blind trust in electronics, but given the fealty paid to models–whether climate or COVID–I remain disappointed.

“The first ones were IBM plug boards.”

I learned how to program IBM plugboard machines in college though never actually did it for a living; IBM Accounting machines, Collators and Reproducers and of course keypunch and sorters which were not programmable but necessary to feed the beasts with cards. Those plugboard machines could be pretty cantankerous things to program but it was fun when, with enough plug wires and patience, you got them to do a complex job correctly.

Regarding core memory: about 1965 or 66 as a kid I went on a tour of IBM-Boulder (Colo.) which included a walk through the huge room dedicated to fabricating magnetic core memory planes. I remember row-after-row of people sitting at benches and peering through magnifiers to thread the x, y, and sense wires by hand. Those hundreds of kilobytes of main memory were exorbitantly expensive.

The first thing I noticed in the core store memory photo was the lack of sense wires. How did the one shown sense if the polarity changed on a ‘read’?

FYI, in the Oz navy I maintained an Oz-designed anti-submarine system (Ikara) that used 32K core store memory to track submarines and guide a missile-mounted torpedo over same. It could process inputs from radar, sonar and external data links (other ships and/or helos).

Amazing what could be programmed into such a small amount of memory.

Booting the system required switch inputs using octal numbering for the code, then a magnetic tape. Lots of wire wrap backplanes as well.

Memories!!!!!

I was puzzled by the lack of sense wires in the photo also.

Sounds a bit like Apollo programming.

Spent about 10 years running an HP-1000 RTE-VI data acquisition system, it also had a 16-bit front panel switch on the CPU. Had the switch setting memorized that booted it up from the disk drive. Wrote many lines of Pascal for it.

Yes, the first thing I also noticed was the missing sensing wire that ran diagonally through the ferrite donuts. Maybe the photo was taken during manufacturing before the sense wires were threaded.

The sense wire is probably on the other side of the circuit board

>>

The first thing I noticed in the core store memory photo was the lack of sense wires.

<<

Core memory I studied usually had four wires–two for half-select, one for inhibit, and the sense wire.

Jim

There may have been a scheme that fished the polarity changes by, umm, “back EMF” on the X and Y wires. That could have been a big win, as people may never have gotten good at automating threading the sense wire.

DEC hired skilled seamstresses to “sew” their core planes.

Likely boring work that paid well.

Wow, great essay! I’ve wanted to get some understanding of climate models and what’s wrong with computer models in general. So now when some bozo tells me that climate science is settled because of definitive climate models- I’ll tell them to read your essay.

Enjoyed this a lot (maybe in part because I agree with it…!)

You allude in passing to something that has always troubled me about the models and particularly the spaghetti graphs.

It cannot be right, surely, to take a bunch of models many or most of which are demonstrably failing, and then average their forecasts and claim that the result has any kind of validity.

If this were medicine, for instance. We had a drug whose function we did not understand, made a bunch of different models of its working, then generated forecasts for treatment of the target population. The results vary from 70% cured to 60% dying. So we average them and say on balance it is forecast to be significantly beneficial, let’s go?

Or if we were designing a bridge. The results, at a given sample set of girder parameters, range all the way from failing under load once every five years to failing once in a hundred. We then average them to find out what girder parameters we should be using?

I would love to get an answer on this from someone who understands the subject better. It looks to me like a totally insane and unscientific procedure, completely unfit for the purpose of generating forecasts to be used in policy selection.

But maybe someone knows different. I am very willing to be persuaded, but so far have not only found no satisfactory explanation of why averaging the bad with the good makes sense, I haven’t even come across any explanation of any sort.

Surely the only rational way is to reject the failing ones, stick with the better ones, until finally we get to one decent one. Then use it.

Probably, from what I read, if we did that we’d end up with the Russian one, and all the alarm would evaporate.

Or if we were designing a bridge.

Idiotic things happen with “best models” 😀

On Christmas Day 2003, everyone was already about to put the shovel and concrete machine in the corner and ring in Christmas Eve, when a final routine check by the construction management took place.

They found that there was a difference of 54 centimeters between the bridge construction on the German side and the construction on the Swiss side. If one wanted to join the bridge in the middle, it would be difficult. “Laufenburger we have a problem” radioed the construction management to the headquarters.

Reference horizon

“The height difference can be corrected with minimal effort during route construction on the German side,” it was appeased. But clearly pointed out, “The fault is on the Swiss side.”

The overall project manager Beat von Arx also admitted this in no uncertain terms. However, he too did not know why at first.

Later, the public was enlightened. The cause of the error, he said, was the fact that in the area of road and bridge construction, the horizons on the German and Swiss sides were based on different reference horizons.

With it one did not say with a technical phrase the following: Germany refers with all these calculations to the sea level of the North Sea. Switzerland, which obviously prefers to look south, takes its reference from the Mediterranean Sea. The layman learns: Sea level is not equal to sea level!

Minus became plus

But that is not all. This difference between the two reference seas results in a difference of 27 centimeters. This was known when the bridge was planned 12 years earlier and included in the calculations accordingly.

Unfortunately, someone must have made a minus sign out of a plus. Because the values were corrected to the wrong side. And so, in the end, the two bridge sections differed by 27 times 2, which equals 54 centimeters.

Translated with http://www.DeepL.com/Translator (free version)

German source
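The arithmetic of that sign error can be sketched in a few lines of Python. Only the 27 cm offset comes from the account above. The function names, the deck height of 1000 cm and the choice of which sign is the "true" one are assumptions made up for the illustration.

```python
DATUM_OFFSET_CM = 27  # difference between the two reference sea levels, per the account above


def swiss_deck_height(german_height_cm, sign):
    """Deck height expressed in the Swiss datum; sign is +1 or -1."""
    return german_height_cm + sign * DATUM_OFFSET_CM


def mismatch_cm(applied_sign, true_sign=+1):
    """Gap at mid-span when the correction is applied with applied_sign.

    Which sign is the correct one is an assumption here; the size of the
    result is the same either way.
    """
    german = 1000  # arbitrary, hypothetical deck height in the German datum
    built = swiss_deck_height(german, applied_sign)
    needed = swiss_deck_height(german, true_sign)
    return abs(built - needed)


print(mismatch_cm(+1))  # correction applied the right way round
print(mismatch_cm(-1))  # correction applied the wrong way round
```

Applying a correction with the wrong sign does not merely leave the original 27 cm uncorrected; it doubles it, which is where the 54 cm comes from.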

Wonderful. If it had been climate science… what would they have done? Split the difference maybe, and had a 13.5 bump?

Climate science seems to have problems with sea levels 😀

Logically, there can only be one best simulation. If one averages that with all the others, then the result will be a degraded result.

Yes, that is exactly what I would have thought, and I am desperately though vainly seeking some explanation of why perfectly intelligent people with advanced degrees and lots of publications do not also see it that way.
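A small Python sketch of the averaging question, using entirely made-up numbers. The observed value and the five forecasts are hypothetical, and the function names are mine. It covers only the situation described in this thread, where one forecast is close and the rest all miss on the same side.

```python
from statistics import mean


def abs_error(forecast, observed):
    """Absolute miss of a single forecast."""
    return abs(forecast - observed)


def compare(forecasts, observed):
    """Return (error of the best single forecast, error of the plain average)."""
    best = min(forecasts, key=lambda f: abs_error(f, observed))
    return abs_error(best, observed), abs_error(mean(forecasts), observed)


# Hypothetical values: one forecast is close, the others all run high.
observed = 20.0
forecasts = [21.0, 45.0, 60.0, 35.0, 50.0]

best_err, mean_err = compare(forecasts, observed)
print(f"best single forecast misses by {best_err}, the average misses by {mean_err:.1f}")
```

With one-sided misses like these, the average is dragged well away from the one forecast that was close. If the misses were scattered on both sides of the observation the average could cancel some of them out, so the sketch shows the case argued here, not a general rule.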

I remember visiting the Smithsonian museum in D.C. decades ago; they had a Cray X-MP on display. It looked like a modernist bench for people to sit on, and it was comfortable…

If you are receiving a paper check from the U.S. Treasury from the recently passed stimulus bill, you’ll note it is also: “ 7 3/8 inches wide by 3 1/4 inches high ”

And $600 less than you were told you were getting?

I wonder why none of the usual trolls have shown up to declare that anyone who doesn’t trust models is a science denier?

Yes, where is Stokes when you most expect him?

Haven’t seen him for awhile. Maybe he lost his funding.

>>

We lost a Mars probe because someone didn’t convert a single number to metric from Imperial measurements … and you can bet that NASA subjects their programs to extensive and rigorous V&V.

<<

I wish you wouldn’t repeat this inaccurate view. This is from NASA’s Climate Orbiter Failure Board report released Nov 10, 1999:

The board’s report cites the following contributing factors:

The units problem–pounds force vs. newtons–is not an order-of-magnitude difference–only a factor of 4.45. And why didn’t they include the units like “good engineers?” Was the communications channel so limited that they couldn’t add an “N” or “lbf” on the end?

Then there’s this from an IEEE Spectrum article: “. . . JPL’s process of ‘cowboy’ programming, and their insistence on using 30-year-old trajectory code that can neither be run, seen, or verified by anyone or anything external to JPL.”

Also from that Spectrum article: “. . . somebody at JPL ran the data through the 1998 Mars Pathfinder navigation code (different from the Mars probe code). It showed the spacecraft was off course by hundreds of kilometers, which turned out to be correct.”

Jim
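On the point about carrying the units along with the number, here is a minimal Python sketch of what that could look like. It is an illustration only and has nothing to do with the actual JPL or contractor software. The table name and function name are hypothetical; the conversion factor is the standard one, roughly 4.448 newtons per pound-force.

```python
LBF_TO_N = 4.4482216152605  # newtons per pound-force

# Conversion factors to newtons, keyed by unit label (hypothetical table).
TO_NEWTONS = {"N": 1.0, "lbf": LBF_TO_N}


def to_newtons(value, unit):
    """Convert a force to newtons, refusing any value whose unit is unknown."""
    try:
        factor = TO_NEWTONS[unit]
    except KeyError:
        raise ValueError(f"unknown force unit: {unit!r}") from None
    return value * factor


print(to_newtons(1.0, "lbf"))
print(to_newtons(1.0, "N"))
```

The design choice is simply that a bare number is never accepted: every value arrives with a label, and an unrecognised label stops the calculation instead of silently passing through.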

How about the 1202 alarm on the LEM “Eagle” (Apollo 11)?

.

https://www.discovermagazine.com/the-sciences/apollo-11s-1202-alarm-explained

Total non-sequitur, Brainiac. The 1202 alarm had nothing to do with bad engineering; rather, it was a tribute to what they accomplished with the incredibly minimal computing hardware.

The 1202 alarm just indicated that the computer was being asked to do more than it could do, in the time allotted. The list of things to do was ordered by importance. The engineers determined that the things that weren’t getting done weren’t critical. Which is why they advised that the landing could continue.
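The idea of a to-do list ordered by importance, with the least important work dropped when time runs out, can be sketched in Python as below. This is emphatically not the Apollo Guidance Computer's executive. The task names, costs and the 100 ms budget are all invented for the illustration.

```python
def run_cycle(tasks, budget_ms):
    """Run tasks in priority order until the time budget is used up.

    tasks: list of (priority, name, cost_ms); a lower number is more important.
    Once a task does not fit, it and everything less important is shed.
    """
    done, shed = [], []
    remaining = budget_ms
    for priority, name, cost in sorted(tasks):
        if not shed and cost <= remaining:
            done.append(name)
            remaining -= cost
        else:
            shed.append(name)
    return done, shed


# Hypothetical workload, slightly more than fits in one cycle.
tasks = [
    (1, "steering", 40),
    (2, "engine throttle", 30),
    (3, "display refresh", 20),
    (4, "low-priority housekeeping", 30),
]

done, shed = run_cycle(tasks, budget_ms=100)
print("done:", done)
print("shed:", shed)
```

In this toy run the overload costs only the least important job, which is the situation described above: the things that didn't get done weren't critical.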

I consider Willis’s view quite accurate. You only give eight reasons why the error had not been discovered in time.

>>

You only give eight reasons . . . .

<<

I’m sorry. I’ll try to do better in the future.

Jim