Guest Post by Willis Eschenbach

I’m 74, and I’ve been programming computers nearly as long as anyone alive.

When I was 15, I’d been reading about computers in pulp science fiction magazines like Amazing Stories, Analog, and Galaxy for a while. I wanted one so badly. Why? I figured it could do my homework for me. Hey, I was 15, wad’ja expect?

I was always into math, it came easy to me. In 1963, the summer after my junior year in high school, nearly sixty years ago now, I was one of the kids selected from all over the US to participate in the National Science Foundation summer school in mathematics. It was held up in Corvallis, Oregon, at Oregon State University.

It was a wonderful time. I got to study math with a bunch of kids my age who were as excited as I was about math. Bizarrely, one of the other students turned out to be a second cousin of mine I’d never even heard of. Seems math runs in the family. My older brother is a genius mathematician, inventor of the first civilian version of the GPS. What a curious world.

The best news about the summer school was, in addition to the math classes, marvel of marvels, they taught us about computers … and they had a real live one that we could write programs for!

They started out by having us design and build logic circuits using wires, relays, the real-world stuff. They were for things like AND gates, OR gates, and flip-flops. Great fun!

Then they introduced us to Algol. Algol is a long-dead computer language, designed in 1958, but it was a standard for a long time. It was very similar to but an improvement on Fortran in that it used less memory.

Once we had learned something about Algol, they took us to see the computer. It was a huge old CDC 3300, standing about as high as a person’s chest, taking up a good chunk of a small room. The back of it looked like this.

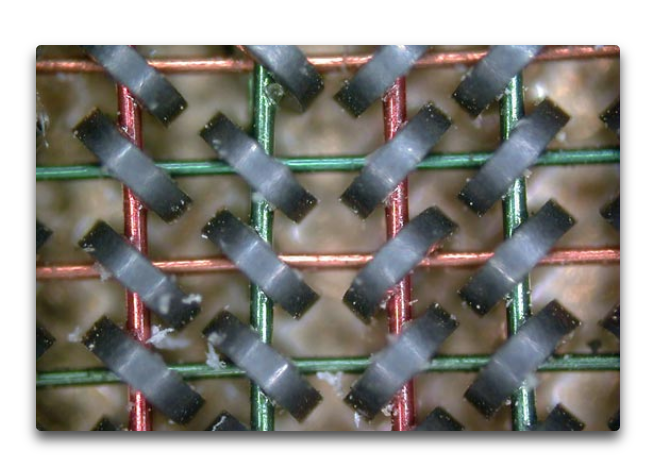

It had a memory composed of small ring-shaped magnets with wires running through them, like the photo below. The computer energized a combination of the wires to “flip” the magnetic state of each of the small rings. This allowed each small ring to represent a binary 1 or a 0.

How much memory did it have? A whacking great 768 kilobytes. Not gigabytes. Not megabytes. Kilobytes. That’s one ten-thousandth of the memory of the ten-year-old Mac I’m writing this on.

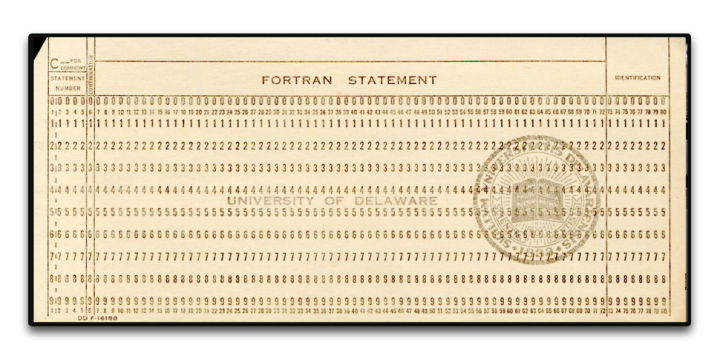

It was programmed using Hollerith punch cards. They didn’t let us anywhere near the actual computer, of course. We sat at the card punch machines and typed in our program. Here’s a punch card, 7 3/8 inches wide by 3 1/4 inches high by 0.007 inches thick (187 x 83 x 0.18 mm).

The program would end up as a stack of cards with holes punched in them, usually 25-50 cards or so. I’d give my stack to the instructors, and a couple of days later I’d get a note saying “Problem on card 11”. So I’d rewrite card 11, resubmit them, and get a note saying “Problem on card 19” … debugging a program written on punch cards was a slooow process, I can assure you.

And I loved it. It was amazing. My first program was the “Sieve of Eratosthenes”, and I was over the moon when it finally compiled and ran. I was well and truly hooked, and I never looked back.
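For readers who have not met it, here is a minimal sketch of the sieve in Python. It is not the original Algol program, and the function name `primes_up_to` is made up for this illustration.

```python
def primes_up_to(n):
    """Return all primes <= n using the Sieve of Eratosthenes."""
    if n < 2:
        return []
    is_prime = [True] * (n + 1)
    is_prime[0] = is_prime[1] = False
    p = 2
    while p * p <= n:
        if is_prime[p]:
            # Cross out every multiple of p, starting at p*p;
            # smaller multiples were already crossed out by smaller primes.
            for multiple in range(p * p, n + 1, p):
                is_prime[multiple] = False
        p += 1
    return [i for i, flag in enumerate(is_prime) if flag]


print(primes_up_to(50))
```

On punch cards, each of those lines would have been roughly one card.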

The rest of that summer I worked as a bicycle messenger in San Francisco, riding a one-speed bike up and down the hills delivering blueprints. I gave all the money I made to our mom to help support the family. But I couldn’t get the computer out of my mind.

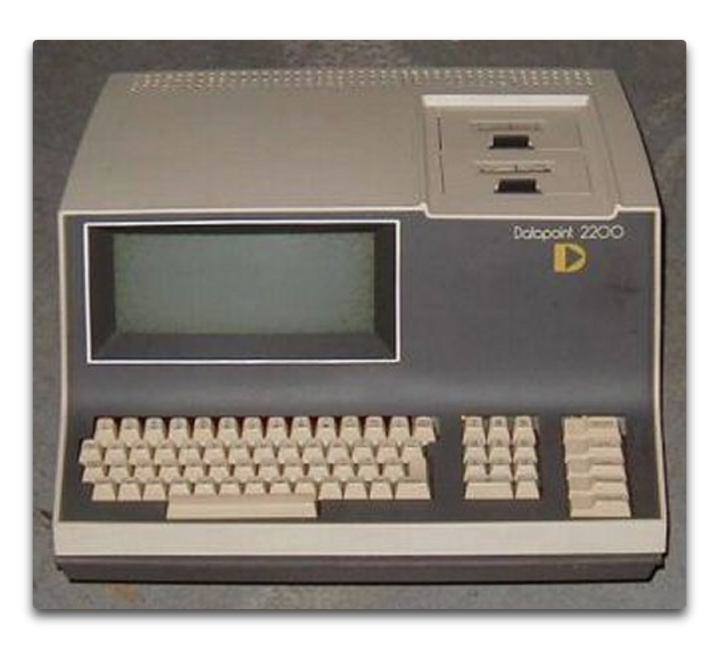

Ten years later, after graduating from high school and then dropping out of college after one year, I went back to college specifically so I could study computers. I enrolled in Laney College in Oakland. It was a great school, about 80% black, 10% Hispanic, and the rest a mixed bag of melanin-deficient folks. (I’m told that nowadays the politically-correct term is “melanin-challenged”, to avoid offending anyone.) The Laney College Computer Department had a Datapoint 2200 computer, the first desktop computer.

It had only 8 kilobytes of memory … but the advantage was that you could program it directly. The disadvantage was that only one student could work on it at any time. However, the computer teacher saw my love of the machine, so he gave me a key to the computer room so I could come in before or after hours and program to my heart’s content. I spent every spare hour there. It used a language called Databus, my second computer language.

The first program I wrote for this computer? You’ll laugh. It was a test to see if there was “precognition”. You know, seeing the future. My first version, I punched a key from 0 to 9. Then the computer picked a random number, and recorded if I was right or not.

Finding I didn’t have precognition, I re-wrote the program. In version 2, the computer picked the number before, rather than after, I made the selection. No precognition needed. Guess what?

No better than random chance. And sadly, that one-semester course was all that Laney College offered. That’s the extent of my formal computer education. The rest I taught myself, year after year, language after language, concept after concept, program after program.

Ten years after that, I bought the first computer I ever owned, the Radio Shack TRS-80, AKA the “Trash Eighty”. It was the first notebook-style computer. I took that sucker all over the world. I wrote endless programs on it, including marine celestial navigation programs that I used to navigate by the stars between islands in the South Pacific. It was also my first introduction to Basic, my third computer language.

And by then IBM had released the IBM PC, the first personal computer. When I returned to the US I bought one. I learned my fourth computer language, CPM. I wrote all kinds of programs for it. But then a couple years later Apple came out with the Macintosh. I bought one of those as well, because of the mouse and the art and music programs. I figured I’d use the Mac for creating my art and my music and such, and the PC for serious work.

But after a year or so, I found I was using nothing but the Mac, and there was a quarter-inch of dust on my IBM PC. So I traded the PC for a piano, the very piano here in our house that I played last night for my 19-month-old granddaughter, and I never looked back at the IBM side of computing.

I taught myself C and C++ when I needed speed to run blackjack simulations … see, I’d learned to play professional blackjack along the way, counting cards. And when my player friends told me how much it cost for them to test their new betting and counting systems, I wrote a blackjack simulation program to test the new ideas. You need to run about a hundred thousand hands for a solid result. That took several days in Basic, but in C, I’d start the run at night, and when I got up the next morning, the run would be done. I charged $100 per test, and I thought “This is what I wanted a computer for … to make me a hundred bucks a night while I’m asleep.”

Since then, I’ve never been without a computer. I’ve written literally thousands and thousands of programs. On my current computer, a ten-year-old MacBook Pro, a quick check shows that there are well over 4,000 programs I’ve written. I’ve written programs in Algol, Databus, 68000 Machine Language, Basic, C/C++, Hypertalk, Forth, Logo, Lisp, Mathematica (3 languages), Vectorscript, Pascal, VBA, Stella computer modeling language, and these days, R.

I had the immense good fortune to be directed to R by Steve McIntyre of ClimateAudit. It’s the best language I’ve ever used—free, cross-platform, fast, with a killer user interface and free “packages” to do just about anything you can name. If you do any serious programming, I can’t recommend it enough.

Oh, yeah, somewhere in there I spent a year as the Service Manager for an Apple Dealership. As you might guess given my checkered history, it wasn’t in some logical location … it was in downtown Suva, in Fiji. There I fixed a lot of computers and I learned immense patience dealing with good folks who truly thought that the CD tray that came out of the front of their computer when they did something by accident was a coffee cup holder … oh, and I also installed the Macintosh hardware for the Fiji Government Printers and trained the employees how to use Photoshop. I also taught two semesters of Computers 101 at the Fiji Institute of Technology.

I bring all of this up to let you know that I’m far, far from being a novice, a beginner, or even a journeyman programmer. I was working with “computer based evolution” to try to analyze the stock market before most folks even heard of it. I’m a master of the art, able to do things like write “hooks” into Excel that let Excel transparently call a separate program in C for its wicked-fast speed, and then return the answer to a cell in Excel …

Now, folks who’ve read my work know that I am far from enamored of computer climate models. I’ve been asked “What do you have against computer models?” and “How can you not trust models, we use them for everything?”

Well, based on a lifetime’s experience in the field, I can assure you of a few things about computer climate models and computer models in general. Here’s the short course.

• A computer model is nothing more than a physical realization of the beliefs, understandings, wrong ideas, and misunderstandings of whoever wrote the model. Therefore, the results it produces are going to support, bear out, and instantiate the programmer’s beliefs, understandings, wrong ideas, and misunderstandings. All that the computer does is make those under- and misunder-standings look official and reasonable. Oh, and make mistakes really, really fast. Been there, done that.

• Computer climate models are members of a particular class of models called “Iterative” computer models. In this class of models, the output of one timestep is fed back into the computer as the input of the next timestep. Members of this class of models are notoriously cranky, unstable, and prone to internal oscillations and generally falling off the perch. They usually need to be artificially “fenced in” in some sense to keep them from spiraling out of control.

• As anyone who has ever tried to model say the stock market can tell you, a model which can reproduce the past absolutely flawlessly may, and in fact very likely will, give totally incorrect predictions of the future. Been there, done that too. As the brokerage advertisements in the US are required to say, “Past performance is no guarantee of future success”.

• This means that the fact that a climate model can hindcast the past climate perfectly does NOT mean that it is an accurate representation of reality. And in particular, it does NOT mean it can accurately predict the future.

• Chaotic systems like weather and climate are notoriously difficult to model, even in the short term. That’s why projections of a cyclone’s future path over say the next 48 hours are in the shape of a cone and not a straight line.

• There is an entire branch of computer science called “V&V”, which stands for validation and verification. It’s how you can be assured that your software is up to the task it was designed for. Here’s a description from the web:

What is software verification and validation (V&V)?

Verification

820.3(a) Verification means confirmation by examination and provision of objective evidence that specified requirements have been fulfilled.

“Documented procedures, performed in the user environment, for obtaining, recording, and interpreting the results required to establish that predetermined specifications have been met” (AAMI).

Validation

820.3(z) Validation means confirmation by examination and provision of objective evidence that the particular requirements for a specific intended use can be consistently fulfilled.

Process Validation means establishing by objective evidence that a process consistently produces a result or product meeting its predetermined specifications.

Design Validation means establishing by objective evidence that device specifications conform with user needs and intended use(s).

“Documented procedure for obtaining, recording, and interpreting the results required to establish that a process will consistently yield product complying with predetermined specifications” (AAMI).

Further V&V information here.

• Your average elevator control software has been subjected to more V&V than the computer climate models. And unless a computer model’s software has been subjected to extensive and rigorous V&V, the fact that the model says that something happens in modelworld is NOT evidence that it actually happens in the real world … and even then, as they say, “Excrement occurs”. We lost a Mars probe because someone didn’t convert a single number to metric from Imperial measurements … and you can bet that JPL subjects their programs to extensive and rigorous V&V.

• Computer modelers, myself included at times, are all subject to a nearly irresistible desire to mistake Modelworld for the real world. They say things like “We’ve determined that climate phenomenon X is caused by forcing Y”. But a true statement would be “We’ve determined that in our model, the modeled climate phenomenon X is caused by our modeled forcing Y”. Unfortunately, the modelers are not the only ones fooled in this process.

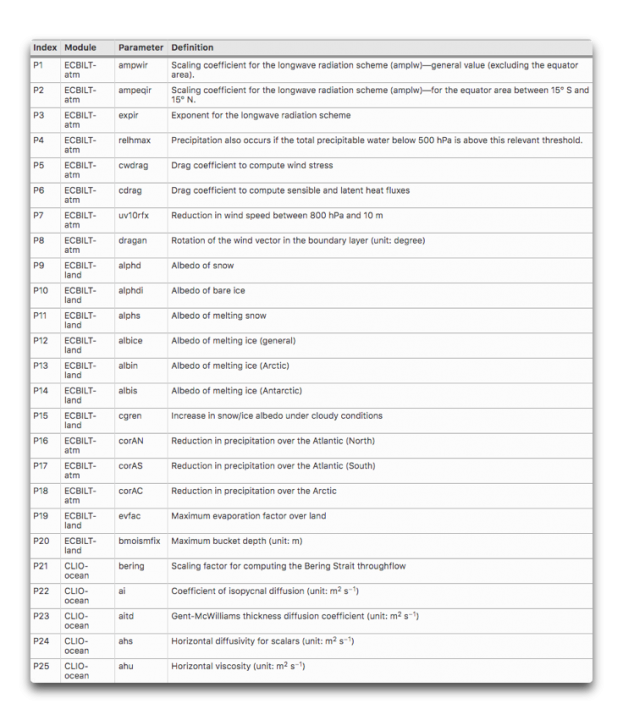

• The more tunable parameters a model has, the less likely it is to accurately represent reality. Climate models have dozens of tunable parameters. Here are 25 of them; there are plenty more.

What’s wrong with parameters in a model? Here’s an oft-repeated story about the famous physicist Freeman Dyson getting schooled on the subject by the even more famous Enrico Fermi …

By the spring of 1953, after heroic efforts, we had plotted theoretical graphs of meson–proton scattering. We joyfully observed that our calculated numbers agreed pretty well with Fermi’s measured numbers. So I made an appointment to meet with Fermi and show him our results. Proudly, I rode the Greyhound bus from Ithaca to Chicago with a package of our theoretical graphs to show to Fermi.

When I arrived in Fermi’s office, I handed the graphs to Fermi, but he hardly glanced at them. He invited me to sit down, and asked me in a friendly way about the health of my wife and our new-born baby son, now fifty years old. Then he delivered his verdict in a quiet, even voice. “There are two ways of doing calculations in theoretical physics”, he said. “One way, and this is the way I prefer, is to have a clear physical picture of the process that you are calculating. The other way is to have a precise and self-consistent mathematical formalism. You have neither.”

I was slightly stunned, but ventured to ask him why he did not consider the pseudoscalar meson theory to be a self-consistent mathematical formalism. He replied, “Quantum electrodynamics is a good theory because the forces are weak, and when the formalism is ambiguous we have a clear physical picture to guide us. With the pseudoscalar meson theory there is no physical picture, and the forces are so strong that nothing converges. To reach your calculated results, you had to introduce arbitrary cut-off procedures that are not based either on solid physics or on solid mathematics.”

In desperation I asked Fermi whether he was not impressed by the agreement between our calculated numbers and his measured numbers. He replied, “How many arbitrary parameters did you use for your calculations?” I thought for a moment about our cut-off procedures and said, “Four.” He said, “I remember my friend Johnny von Neumann used to say, with four parameters I can fit an elephant, and with five I can make him wiggle his trunk.” With that, the conversation was over. I thanked Fermi for his time and trouble, and sadly took the next bus back to Ithaca to tell the bad news to the students.

• The climate is arguably the most complex system that humans have tried to model. It has no less than six major subsystems—the ocean, atmosphere, lithosphere, cryosphere, biosphere, and electrosphere. None of these subsystems is well understood on its own, and we have only spotty, gap-filled rough measurements of each of them. Each of them has its own internal cycles, mechanisms, phenomena, resonances, and feedbacks. Each one of the subsystems interacts with every one of the others. There are important phenomena occurring at all time scales from nanoseconds to millions of years, and at all spatial scales from nanometers to planet-wide. Finally, there are both internal and external forcings of unknown extent and effect. For example, how does the solar wind affect the biosphere? Not only that, but we’ve only been at the project for a few decades. Our models are … well … to be generous I’d call them Tinkertoy representations of real-world complexity.

• Many runs of climate models end up on the cutting room floor because they don’t agree with the aforesaid programmer’s beliefs, understandings, wrong ideas, and misunderstandings. They will only show us the results of the model runs that they agree with, not the results from the runs where the model either went off the rails or simply gave an inconvenient result. Here are two thousand runs from 414 versions of a model running first a control and then a doubled-CO2 simulation. You can see that many of the results go way out of bounds.
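The crankiness of iterative models mentioned in the list above can be seen in a toy far simpler than any climate model. The sketch below is an assumption chosen purely for illustration: it uses the logistic map with `r = 3.9` as a stand-in "model", and the name `run_model` is hypothetical. Each step's output is fed straight back in as the next step's input, and two runs are started a hair apart.

```python
def run_model(x0, steps, r=3.9):
    """Iterate x -> r*x*(1-x); each step's output is the next step's input."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


a = run_model(0.2, 200)
b = run_model(0.2 + 1e-10, 200)   # same "model", start differs by 1e-10
gaps = [abs(p - q) for p, q in zip(a, b)]

print(f"gap after 5 steps:   {gaps[5]:.2e}")
print(f"largest gap overall: {max(gaps):.2f}")
```

After a handful of steps the two runs still agree to many decimal places. Somewhere within the 200 steps they part company entirely, even though nothing about the "model" changed.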

As a result of all of these considerations, anyone who thinks that the climate models can “prove” or “establish” or “verify” something that happened five hundred years ago or a hundred years from now is living in a fool’s paradise. These models are in no way up to that task. They may offer us insights, or make us consider new ideas, but they can only “prove” things about what happens in modelworld, not the real world.

Be clear that having written dozens of models myself, I’m not against models. I’ve written and used them my whole life. However, there are models, and then there are models. Some models have been tested and subjected to extensive V&V and their output has been compared to the real world and found to be very accurate. So we use them to navigate interplanetary probes and design new aircraft wings and the like.

Climate models, sadly, are not in that class of models. Heck, if they were, we’d only need one of them, instead of the dozens that exist today and that all give us different answers … leading to the ultimate in modeler hubris, the idea that averaging those dozens of models will get rid of the “noise” and leave only solid results behind.

Finally, as a lifelong computer programmer, I couldn’t disagree more with the claim that “All models are wrong but some are useful.” Consider the CFD models that the Boeing engineers use to design wings on jumbo jets or the models that run our elevators. Are you going to tell me with a straight face that those models are wrong? If you truly believed that, you’d never fly or get on an elevator again. Sure, they’re not exact reproductions of reality, that’s what “model” means … but they are right enough to be depended on in life-and-death situations.

Now, let me be clear on this question. While models that are right are absolutely useful, it certainly is also possible for a model that is wrong to be useful.

But for a model that is wrong to be useful, we absolutely need to understand WHY it is wrong. Once we know where it went wrong we can fix the mistake. But with the complex iterative climate models with dozens of parameters required, where the output of one cycle is used as the input to the next cycle, and where a hundred-year run with a half-hour timestep involves 1.75 million steps, determining where a climate model went off the track is nearly impossible. Was it an error in the parameter that specifies the ice temperature at 10,000 feet elevation? Was it an error in the parameter that limits the formation of melt ponds on sea ice to only certain months? There’s no way to tell, so there’s no way to learn from our mistakes.
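As a quick check on the step count quoted above, assuming an average year of 365.25 days (the constant names are just for this sketch):

```python
YEARS = 100
DAYS_PER_YEAR = 365.25      # assumed average, including leap days
STEPS_PER_DAY = 24 * 2      # one step every half hour

total_steps = int(YEARS * DAYS_PER_YEAR * STEPS_PER_DAY)
print(f"{total_steps:,}")   # 1,753,200
```

Every one of those steps takes the previous step's output as its input, so an error introduced at any step is carried through all the steps that follow.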

Next, all of these models are “tuned” to represent the past slow warming trend. And generally, they do it well … because the various parameters have been adjusted and the model changed over time until they do so. So it’s not a surprise that they can do well at that job … at least on the parts of the past that they’ve been tuned to reproduce.
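Here is a toy sketch of why matching the tuned past proves little. Everything in it is made up for illustration: the eight "observations", the assumption that the true trend simply continues at 0.1 per step, and the helper names `lagrange_fit` and `line_fit`. The "tuned" model has eight adjustable parameters and is forced through all eight past points; the simple model is a two-parameter straight line.

```python
def lagrange_fit(ts, ys):
    """Return the polynomial that passes exactly through every (t, y) point."""
    def model(t):
        total = 0.0
        for j, (tj, yj) in enumerate(zip(ts, ys)):
            term = yj
            for m, tm in enumerate(ts):
                if m != j:
                    term *= (t - tm) / (tj - tm)
            total += term
        return total
    return model


def line_fit(ts, ys):
    """Ordinary least-squares straight line (two parameters)."""
    n = len(ts)
    t_mean, y_mean = sum(ts) / n, sum(ys) / n
    slope = (sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ys))
             / sum((t - t_mean) ** 2 for t in ts))
    return lambda t: y_mean + slope * (t - t_mean)


# Made-up "observations": a slow trend of 0.1 per step plus small wiggles.
noise = [0.05, -0.04, 0.06, -0.05, 0.04, -0.06, 0.05, -0.03]
past_t = list(range(8))
past_y = [0.1 * t + e for t, e in zip(past_t, noise)]

tuned = lagrange_fit(past_t, past_y)   # eight tunable parameters
simple = line_fit(past_t, past_y)      # two parameters

# Hindcast: compare with the data used for tuning.
hindcast_err = max(abs(tuned(t) - y) for t, y in zip(past_t, past_y))
# Forecast: compare with the assumed continuing trend, held out of the fit.
future_t = [8, 9, 10]
tuned_err = max(abs(tuned(t) - 0.1 * t) for t in future_t)
simple_err = max(abs(simple(t) - 0.1 * t) for t in future_t)

print(f"tuned model, worst hindcast error:   {hindcast_err:.3f}")
print(f"tuned model, worst forecast error:   {tuned_err:.1f}")
print(f"straight line, worst forecast error: {simple_err:.3f}")
```

The eight-parameter fit reproduces the past essentially perfectly, yet over the three held-out steps it is off by more than ten units on data that never exceed one. The straight line, which fits the past less well, stays well within 0.1 of the assumed trend.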

But then, the modelers will pull out the modeled “anthropogenic forcings” like CO2, and proudly proclaim that since the model no longer can reproduce the past gradual warming, that demonstrates that the anthropogenic forcings are the cause of the warming … I assume you can see the problem with that claim.

In addition, the grid size of the computer models is far larger than important climate phenomena like thunderstorms, dust devils, and tornados. If the climate model is wrong, is it because it doesn’t contain those phenomena? I say yes … computer climate modelers say nothing.

Heck, we don’t even know if the Navier-Stokes fluid dynamics equations as they are used in the climate models converge to the right answer, and near as I can tell, there’s no way to determine that.

To close the circle, let me return to where I started—a computer model is nothing more than my ideas made solid. That’s it. That’s all.

So if I think CO2 is the secret control knob for the global temperature, the output of any model I create will reflect and verify that assumption.

But if I think (as I do) that the temperature is kept within narrow bounds by emergent phenomena, then the output of my new model will reflect and verify that assumption.

Now, would the outputs of either of those very different models be “evidence” about the real world?

Not on this planet.

And that is the short list of things that are wrong with computer models … there’s more, but as Pierre said, “the margins of this page are too small to contain them” …

My very best to everyone, stay safe in these curious times,

w.

PS—When you comment, quote what you’re talking about. If you don’t, misunderstandings multiply.

H/T to Wim Röst for suggesting I write up what started as a comment on my last post.

Wow! Talk about memory lane!!

(and thanks for that first picture, makes my Wire Spaghetti seem less horrendous now)

– The more tunable parameters a model has, the less likely it is to accurately represent reality.

My thesis advisor in College had a similar conclusion essentially- the more parameters your algorithm needed, the less real it was. This was for Computer Vision Algorithms, but I’ve found it fits everywhere….

Spaghetti wire wrap was fairly common, and repairable too. I built an 8085 based computer using wire wrap. I also fixed a radio observatory ADC board that used wire wrap all in the early 80s. In regards to an old computer with 768KB of core memory, I can only imagine that would take up an enormous amount of space. I saw 4KB of core memory in an old BASIC-4 machine and the core memory was 1/2″ thick and the depth and width of the steel cabinet it was in.

The problem with wire wrap is that as clock frequencies go up, the wires stop being wires, and start being antennas.

Mark, you actually have the same problem with long traces on PC boards. Especially on single layer boards. Multi-layer boards make that less of a problem now.

True, however PC traces are both more controllable as well as being completely repeatable. In PC traces you can lay down guard traces surrounding problematic traces. This is not possible with wire wrap.

Sure, but I have had to wire wrap a few twisted pairs to fix problems that had no easier solution and rerouting didn’t take care of ’em.

In 1973, I wire wrapped several 11 inch by 8 inch boards populated with sockets to hold 7400 series gate ICs. It was a 4 bit microcontroller, complete with magnetic core memory. We got the thing going, but only briefly and only if it never moved.

As soon as we put it into a truck to gather data, it stopped working forever.

The problem was sockets and wire wrap, and connectors between boards.

The reason that computers work today is the massive integration of the transistors, gates and functions…all done on one chip. Interconnects are the killer. Connections are unreliable. Put in thousands of socket pins and wire wrap connections and you have junk.

Indeed, wire wrap was often used in small volume electronics. Even big things like computers, but when only a few were made, it was much cheaper than designing PCBs.

Wire wrap got really interesting back in the early 60’s when they were using Teflon for insulation. Took ’em a while to figure out that it suffered from cold flow and when wrapped under even slight pressure it eventually developed highly intermittent shorts. We had to field replace a whole lot of backplanes when it was finally discovered.

Yes, but core memory was great fun. I had the job of running a PDP11 and you could halt the processor, switch the power off overnight, go back and switch it on the next morning and it simply continued from where it had stopped.

Great post, Willis, as usual.

Wow, that Datapoint 2200 brought back memories for me. I worked with Datapoint systems in the late 70s and 80s, then for Datapoint themselves. All the Ford dealers in Europe used business systems written in Databus. Sadly, Datapoint lost its way along with most other minicomputer manufacturers, but for a while led the industry in networking (ARCnet) and desktop videoconferencing, used by the US military and I think NATO.

Anyway, back on topic, you are so right about models. The only 100% accurate model of reality is reality itself. Everything else is a more or less useful approximation and subject to significant amplification of human error.

https://www.edify.org/steve-james/

https://www.nytimes.com/1982/05/14/business/company-news-executives-resign-posts-at-datapoint.html

http://www.datapointremembered.org/in-memoriam/

You sure that was not Datashare?

Our Datapoint 2200s used Datashare.

R

Datashare was the multi-user environment. Databus was the programming language. Datashare allowed multiple intelligent terminals to communicate with each other without a host. Datashare was the multi user interpreter.

They had a DOS also, and to go with it a sort of primitive Bash type scripting language called Chain.

Took a lot of hard work to destroy Datapoint.

They were the original contractors with Intel for the 8008, I think I remember. Didn’t like the result, came up with their own instruction set (and I think they did a better job, especially with the followon 5500). They told us once we were the second biggest customer after Safeway, and every once in a while they’d ship us an extra printer or processor, then take it back with apologies a week later. A year or two later, they imploded when a new accountant got curious about a bunch of hotel rooms being rented long-term, and found that someone had wanted to continue their streak of profitable quarters, ordered and shipped a few extra units, then took them back. Eventually it got out of hand, and when the financial skulduggery went public, they fell apart. Or so I heard. It did explain the extra equipment they’d send and take back. Might just be confirmation bias….

Great post Willis. It is nice to be reminded of the old days with computers and models and numerical solutions to the models.

I still have a “Trash 80” somewhere in my office. Fun little machine.

About models and their stability, I have a short story from a partial differential equation (PDE) class I took as part of Engineering Mathematics.

One of our homework assignments was to use a particular numerical method to solve a given PDE. Well, it turned out later that this tricky professor had asked us to use a numerical scheme that was unconditionally unstable for any (delta x, delta t) we selected. In previous assignments we had learned that too large of (dx,dt) choices could be improved by using smaller (dx,dt) values.

When we turned our homework in, he took a few minutes to go through our homework papers. Most of the class gave up when the procedure blew up and blew up sooner and more with smaller delta t values. However there was one clever student who turned in some results that appeared to be a solution.

The next class day the professor handed back our homework papers and everyone who gave up and said that a numerical solution could not be found got an A on the assignment. He proceeded to ask the one guy who got a solution to stay after class. He then put the problem and the numerical method on the blackboard and demonstrated to us that no solution could be obtained with that numerical scheme, and that the smaller the delta t we used, the quicker and more violently the “solution” blew up. And he wrote on the blackboard the numerical scheme and labeled it the “unstable scheme”. He then demonstrated what weighting factors we had to use to make the scheme conditionally stable.
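The comment does not name the PDE or the scheme, so the following is only a hedged illustration using a textbook case that behaves in a similar way, not a reconstruction of that homework. It assumes the linear advection equation u_t + c u_x = 0 on a periodic grid. The forward-time, centred-space ("ftcs") update is unstable for every step size, while the Lax-Friedrichs variant ("lax"), which replaces the centre value with the average of its two neighbours, is stable as long as the Courant number c*dt/dx stays at or below 1. The function name `peak_after_one_lap` and the square-pulse initial condition are made up for this sketch.

```python
def peak_after_one_lap(scheme, n, courant=0.5):
    """Advect a square pulse once around a periodic grid of n cells.

    scheme "ftcs": forward-time, centred-space update (unstable).
    scheme "lax":  same update, but u[j] is replaced by the average of its
                   two neighbours (Lax-Friedrichs weighting).
    Returns the largest |u| at the end of the run; the exact answer is 1.
    """
    u = [1.0 if j < n // 4 else 0.0 for j in range(n)]
    steps = round(n / courant)            # finer grid -> smaller time step
    for _ in range(steps):
        new = []
        for j in range(n):
            left, right = u[j - 1], u[(j + 1) % n]   # u[-1] wraps around
            centre = u[j] if scheme == "ftcs" else 0.5 * (left + right)
            new.append(centre - 0.5 * courant * (right - left))
        u = new
    return max(abs(v) for v in u)


for n in (20, 40, 80):
    print(n, peak_after_one_lap("ftcs", n), peak_after_one_lap("lax", n))
```

In this toy case, refining the grid and time step together makes the unstable scheme blow up more violently, not less. The weighted scheme never exceeds the true peak of 1, although it smears the pulse out.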

That was over 50 years ago and I still remember the lessons (not the details) of checking (V&V) our numerical solutions under a variety of circumstances. Here is a small list of the lessons learned that day but appreciated more and more with the passing years.

1) When presented with a numerical solution to differential equation(s) the solution is always an approximation.

2) Good engineers and scientists always include some analysis of the likely range of errors in the results.

3) Producing reasonable numerical results for PDEs is not for the novice, tricky, or dishonest person.

4) Finding a numerical solution to a single PDE is often difficult, and quantifying the errors is also an approximation.

5) God save us from those who produce numerical solutions to a large number of coupled or linked PDEs and claim they know the solutions to the models are correct and accurate to a specified range of uncertainty, or worse, they give the solutions as tested and perfect.

6) In hindsight, it was a blessing to learn mathematics and ways to apply and develop computer solutions to mathematical problems in the dawning age of digital computers.

Thanks again Willis.

I hope to see more posts from you on these modeling/computer topics.

That’s not technically true.

The models are true enough to commit to building engineering models. Those models are tested thoroughly. Then full sized units are built, and those are again tested thoroughly. Only at that point do you commit to production.

We actually have certain configurations that we know the model output will not be up to snuff. Most of them work great if you keep the flow in the laminar configuration, but they can get a little hinky when turbulent flow emerges, particularly in the transonic arena. That is why P-51 and P-38s that broke the sound barrier in a dive tended to lose their wings (the models don’t show that). The forces were definitely not linear. There are models for transonic flight, but the physical models still get put through their paces in a wind tunnel to double check the output and surprises still occur.

The F-86 Sabre could also go supersonic in a dive — they fared better w/the swept wings & fully-movable elevators.

I can relate to MarkW’s comment:

Back around late 1980s to early 1990s, I worked on a hybrid AI and numerical based code to “tune” a high-current, pulsed particle accelerator. The code needed to adjust various “steering” coils to keep the beam going down the (near) center of the beam tube. The accelerator pulsed at approximately 1 Hz, with the pulses lasting 10s of nanoseconds. The beam tube could not withstand continued strikes of the multi mega-volt by few kilo-amp electron beam. The beam position was measured at intervals. Thus, the task was to adjust the coils to center the next beam pulse based on the previous pulse positions down the beam tube.

One day, I was talking with the chief numerical modeling physicist about issues we had encountered between the actual beamline performance vs the modeled predictions. He made the remark (which I have to paraphrase as it’s been too long to provide an actual quote): “Our models are good enough to design a beamline, but not good enough to predict exactly how they will operate.” At the time, I was taken aback. After all, this was just particles (albeit relativistic ones) interacting with electric and magnetic fields. But then, as I considered the issues with all the manufacturing imperfections of all the magnets and electric coils, cascaded with all their alignment errors, his statement made complete sense; this was the whole point of the “tuning” system we were constructing.

Mr. Eschenbach,

Brilliant. Thanks.

It’s good to be reminded of things like this. We forget— and we also forget that the young have little or no knowledge or experience of history.

CDC = Control Data Corporation

DataPoint Corporation

DEC = Digital Equipment Corporation

Data General Corporation

Like you, I started young. I’ve been through punched tape and Hollerith cards and Visicalc and Lotus 1-2-3 and Excel and Reverse Polish Notation (RPN) and Fortran and Cobol and Basic.

Throughout it all, I’ve watched with astonishment and amazement as people treated the output from computer models as if it were the revealed truth.

God bless von Neumann. He knew— and he communicated that knowledge better than anybody ever did or ever will:

“Give me four parameters, and I can fit an elephant. Give me five, and I can wiggle its trunk”.

Started with Algol on an Elliott 503. Most fun language was Forth (still used to control telescopes, I understand). You could buy the core on a plugin cartridge for the Commodore 64 I got for the kids. It’s a threaded compact language that runs faster than interpreted languages like the Basic in the C64.

The C64 was the last machine I ever bothered getting down to machine code. The Cannon SE-100 100 memory desktop programmable calculator (early 70s) was probably the penultimate. That’s all you got.

The IPCC process for climate modelling is part of the problem, but if you must have it then how about a requirement to hold out all information on 1930-1990 in their construction (including parameter estimation), and then verify out of sample against that (plus report actual propagation of uncertainty)?

I too recall the horrors of dropping a stack of Hollerith cards and trying to splice together punched paper tape.

Phillip, I wondered if someone would mention the dreaded calamity of a dropped deck of punch cards.

Back in ’69 we had to suffer the boss’ high-school son coming in to work during his school vacation breaks.

Nice kid, but he had feet 4 sizes larger than his adolescent frame required, and we used to have bets on just when he would stumble while carrying a tray of card decks.

Of course it happened, and as Murphy’s Law dictates, it was month-end data entry.

His Dad the boss accepted the overtime cost philosophically.

Willis, thanks for the memories. I recall from card days that there was a command for the card punch to put in sequential card numbering in columns out in right(?) field. The sequential numbers would print out along the tops of the cards. If dropped, the deck could be reconstructed. I failed to find the command for you. As a consolation, if your computer does not have “Pi”, try ARCTAN(1)*4, with the arctangent returned in radians. You will get Pi to the limit of the computer innards instead of what you might define Pi to be, like 3.14.

For a reasonable approximation try 355/113
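As a quick check of the two suggestions above, here is a minimal Python sketch (Python is an assumption here; the thread itself is talking about Fortran-era machines). It relies on the fact that arctan(1) is pi/4 radians, and the variable names are purely illustrative.

```python
import math

# arctan(1) is pi/4 radians, so multiplying by 4 recovers pi
# to the precision of the machine's floating point.
pi_from_atan = 4 * math.atan(1.0)

# The rational approximation 355/113 mentioned above.
pi_from_fraction = 355 / 113

# How far each value is from the library constant.
atan_error = abs(pi_from_atan - math.pi)
fraction_error = abs(pi_from_fraction - math.pi)

print(pi_from_atan, atan_error)
print(pi_from_fraction, fraction_error)
```

The fraction is good to about six decimal places, which is plenty for a hand calculation but well short of what the arctangent trick gives in double precision.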

That’s why we drew a line across the top of the deck in its box. If you DID drop it the line helped to show if any of the cards were out of sequence when you put them back in the box.

You meant a diagonal line, right? Otherwise it wouldn’t be a help.

I recall always wearing a rubber band around my wrist, like many other co-workers, to always have one at hand to keep card decks together.

I remember a closet with paper tape subroutine rolls… Fortran I believe. Card stacks, Algol60 and Algol68 came later.

Might I add:

Bunker Ramo (used on the Ikara system I describe further down) and

Perkin-Elmer (which became, in part, Concurrent Computer Corp that I worked for over 17 years)

More memories

My first computer was an Apple II which only had 16k bytes but had lots of mathematical operators, and you could save a program and data on a tape recorder. One of my first programs was a spreadsheet, before Visicalc was available. Spreadsheets normally have only two dimensions, x & y, but each sheet can be piled up and referenced, e.g. monthly accounts which can add to a year-end sheet. My spreadsheet program, using for loops, could have as many dimensions as one wished. That is a feature of mathematics which is not bound by physical limits. I have a book on string theory (The Shape of Inner Space by Shing-Tung Yau) which mentions 10 dimensions for the universe. Most (if not all) climate models ignore physical reality, e.g. they ignore the 2nd law of thermodynamics, and they ignore that above a wavelength of 10 micron, which is about the peak emitted from the Earth’s surface, CO2 only absorbs and emits at a wavelength around 14.8 micron (i.e. the absorptivity is close to zero, not 1 as claimed). I do not program any more. I can do with Excel all I want in my old age.

> Your average elevator control software has been subjected to more V&V than the computer climate models.

That’s because elevator software has direct impacts on people’s expected comfort, livelihoods and behavior unlike clima… oh… wait. Never mind.

Hi Willis, nice article! I am 4 years younger (less old?) than you but had somewhat of a similar start with computers, and therefore really enjoyed your article. However I am surprised you called MS-DOS a language. I first used it as QDOS (Quick and Dirty Operating System) from Seattle Computers before Bill turned it into MSDOS, but it was never a language, just an OS. Why do you call it a language?

I think DOS stood for Disk Operating System. Datapoint had its own DOS operating system. Its first microprocessor – used in the 2200 – was claimed by some to be the direct forerunner of the first Intel chip – the 8086 I think.

Batch programming was possible; the later versions got more complex, since Win NT, if I remember well.

Yes, I was taken aback a little by calling a Disk Operating System a computer language. However, it was the ability to write batch files to tell the OS what to do and when, particularly when booting, that I think qualifies it as a language.

Batch processing made a big difference, especially when you started the job just before leaving for lunch.

I thought the very first Intel microprocessor chip was the 4004. That’s what we studied in college right from the Intel manual.

It was; the four indicated it was a 4-bit processor. Intel made it big with the later 8-bit 8080. Motorola had the 6800 8-bit processor.

There was an 8008 that was, I believe, an 8-bit version of the 4004. The op-code set was fairly limited.

I thought the 6500 line was Motorola’s first microprocessor.

Commodore Vic-20 had the MOS Technology 6502 8-bit processor — my first computer. Ran on “Vic–Basic” or straight machine-language if you could do it.

I developed some of the earliest electronic ticketing systems for public transport by writing assembly code for the 6502. Amazing what we could achieve with a 2k EEPROM.

That’s what we programmed in machine code in college – had to ‘jam’ the instructions.

Oh wow, my first computer was also an SCP machine, though the OS was called 86-DOS by the time I bought it. The Seattle small assembler was really fast.

Willis’s comments about computer models remind me of Bob Pease’s comments on SPICE, “SPICE will lie to you”. Modeling circuitry is a much simpler problem than modeling climate and also orders of magnitude easier to verify. This also goes along with “all models are wrong, but some are useful” in that while SPICE does not give a perfect answer to circuit simulation, the answer it gives is generally close enough to be useful – provided that the circuit model was sufficiently detailed.

Like Willis, my first exposures to computer programming were on CDC machines, the first being a CDC 1700 and the second a CDC 6400.

I’m right with you. I remember taking a stack of computer punch cards to be processed (FORTRAN) and dropping them on the floor in 1969. About 4 hrs of work scrambled beyond use. My first company I started was providing computer services to IBM midrange customers. The second was based on OS2 servers and dialup. Other than hardware, the biggest step forward has been object based programming languages. You can hire an experienced programmer and turn out the work of literally 60 programmers from the 1980’s.

A classic story of a guy with the same scrambled mishap. He used a version of Fortran that allowed more than one statement on a card. He then developed a programming style where each card had a label, and read like

860 X=X+1; GOTO 870

Now he could shuffle cards and the program still ran ..

Very quickly started putting a commented out sequence number at the end of each card.

Just remember to not number sequentially. Just in case you discover you have to add a couple of lines in the middle of your program.
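The numbering-with-gaps idea in the comments above can be sketched in a few lines of Python. This is only an illustration: the deck contents, the step of ten, and the use of a software sort in place of a card sorter are all assumptions.

```python
import random

# Hypothetical deck: (sequence number, card text), numbered by tens
# so that there is room to add cards later.
deck = [(10, "X = 0"), (20, "X = X + 1"), (30, "PRINT X")]

# A late addition slots in between 20 and 30 without renumbering.
deck.append((25, "X = X * 2"))

# Simulate dropping the deck: shuffle a copy with a fixed seed.
dropped = deck[:]
random.Random(0).shuffle(dropped)

# Recover the original order by sorting on the sequence number.
restored = sorted(dropped, key=lambda card: card[0])

for number, text in restored:
    print(number, text)
```

Had the cards been numbered 1, 2, 3, the added card would have forced every later card to be repunched.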

I soon learnt that a diagonal line drawn across the edge of the stack made re-sorting a lot easier 🙂

And I too was taken aback by the statement that “Microsoft Disk Operation System” (aka MS-DOS) was a programming language.

Maybe it’s a reference to the scripted .bat files you can create for MS-DOS?

We actually had a sorter in the computer room that would sort the cards if you put a sequence number on the card.

This is why a big magic marker was an essential accessory for punch card programming—with a diagonal stripe across the top it was way easier to recover from disaster.

Ah yes – the fat felt-tip marker-pen.

I remember now…

RE

You can program in DOS using script or .bat files

I was going to comment about MSDOS being an OS rather than a language. It often came with Basic bundled with it.

A few years before SB/MS/PC DOS, there was CP/M 80.

The CP/M distribution typically came with a macro assembler – that was useful as common word processors often needed some customization to deal with particular printers. WordStar for example came with source listing for Diablo 630 daisywheel printers and Epson ‘graphtrax’ augmented dot matrix printers.

Yep. Used them to make instruction manuals in the 80’s.

WordStar and SuperCalc. SuperCalc was an early spreadsheet. It had a feature I still haven’t seen in Excel – being able to copy a formula or group of formulas with either relative or absolute references without having written the formula specifically with either type of reference.

My ex-wife worked for a lawyer who had purchased some kind of word processing system. Might have been a Wang. What I remember about it was that it used a non-standard formatting for its floppy disks. It also didn’t include a formatting program. If you wanted new floppies, you had to buy them from the company. Something like $10 per disk.

My first experience was also in college. A CDC, I do not remember the number.

In my first class we used punch cards. A few months later they were using teletype machines for data entry. Used a single line editor to do text entry. Might have been ed.

The first four languages that I learned were Fortran, Pascal, PL/M and ASM86.

For the ASM86 we used an Intel development station. It had four 8-inch floppy drives, and the OS disk was always put in the first drive. The editor/compiler went in the second slot and the third and fourth slots were for your program and data.

I never tried to move one of those development stations, but it looked like it would take at least 2 people.

My first job out of college we used 6510 assembly. I also redesigned the circuitry and relaid out the circuit boards. There was something very satisfying about laying out tape. Both puzzle and art. In my second job I learned C, and that’s pretty much all I’ve used since then. Have done some C++ and Python though.

Interestingly enough, a few years back I interviewed with an elevator company that was still using PL/M for most of their code. All the new stuff was being done in C++, but they had decades’ worth of code that hadn’t been retired yet that still had to be maintained. Their PL/M guy had indicated a desire to retire.

I thought I was a shoo-in for that job. After all, how many people have ever worked with PL/M? I was wrong.

Back when all of us were learning programming along with being keypunch operators, a friend (a joker, no less) inserted his stack of cards into the reader and headed out the door, just a moment before the wall of printers erupted, tractoring their entire box of paper onto the floor.

Of course, he said it was an unplanned event.

I’m only 2 years younger and started with Algol on a Burroughs B5500, which I’m guessing ran around 2 MHz (that’s 0.002 GHz). I recall it had a couple megabytes of hand-woven-in-Haiti core memory cards. Punch card programs and one run overnight to get results from a chain printer. Graduated to BASIC, then C, then 286/287 ASM. My masterpiece was loading the Mandelbrot algorithm entirely onto the 287 math coprocessor. Aaaannd, I have proof:

My bad, I was thinking about CPM. Fixed.

w.

CPM is not a programming language.

.

https://en.wikipedia.org/wiki/CP/M

,

Computer professionals reading this see it as a heck of a lot of BS.

From your link:

Commands

The following list of built-in commands are supported by the CP/M Console Command Processor:[14]

Transient commands in CP/M include:[14]

CP/M Plus (CP/M Version 3) includes the following built-in commands:[15]

I call that a language, although it is indeed an operating system. Me, I used to use it regularly, so I thought of it as a language. So sue me.

w.

Consider yourself sued. All you are doing is bullshitting the non-programmers on this site.

.

“I call that a language”

…

That shows how ignorant you are of computer programming.

Brian, you are hilarious. You come in, and the biggest thing you can find to whine about is that I called CP/M a “computer language”, since it had words that I could use to make the computer do what I wanted??

That’s it? That’s your evidence that I’m not a “real programmer”?

As to “bullshitting non-programmers”, there have been dozens of programmers with much more experience than I who have commented on this thread … but none with your combination of arrogance, vitriol, and unpleasantness. And guess what?

Not one of them has accused me of “bullshitting” anyone. You’re the only one.

More to the point, you STILL have not said one word or noted one complaint or pointed out one error regarding the actual subject of this post, which as the title makes clear is computer models. You just want to accuse me of meaningless “wrongdoing” like calling CP/M a language. What’s next? You gonna blame me for misgendering a potato?

All you’re doing at this point is further destroying your own reputation. Whether or not I’m good at programming computers has NOTHING to do with what term I might use to describe CP/M. In addition, whether I am right about the computer climate models has NOTHING to do with whether I can program. You’re off on a meaningless rant about trivia.

Get a life, Brian! Do you want your obituary to read something along the lines of “Brian Jackson was really good at dragging things down, sidetracking interesting conversations, ignoring the actual issues, accusing people of meaningless offenses, and was widely known for his amazing ability to piss on birthday cakes?”

w.

Willis

What Jackson did is called “nit picking,” a form of red herring.

Brian does things using grunts and groans and huffing and puffing !

That is his language. !

As to his knowledge of computer programming….. roflmao !!

Brainless, you are the lowest sort of troll, and full of hatred.

Mr. Jackson: Thank you so much, I am a non-programmer and I was totally bull-shitted into thinking CPM was a language. Since it was so critical to the article, you sure showed him, huh? Anyway I sure would have been embarrassed at my next soiree, where I planned to impress the ladies with my “what’s your sign? Did you know CPM is a language?” patter. Shame on Mr. E for bull-shitting like that.

Mr. Jackson, THAT is bull-shitting. Hope it helps you recognize.

Willis, were you sufficiently masochistic to use MP/M?

Never.

w.

From that, one might expect that your tendencies to recognize usefulness vs. time spent, to have kicked in when confronted with an Altair 8800.

CP/M (Control Program for Microcomputers, trying to make /M look like Greek mu, for micro) was not a language either, but still an operating system made by Digital Research for the 8-bit microprocessor 8080 made by Intel.

Don’t confuse simple commands in an OS (DIR, etc.) with instructions in a programming language which can be used to write any sort of program.

I am sorry to sound pedantic but with your programming skills (way superior to mine) I thought this was understood.

Thanks, Michael. Using CP/M I can tell a computer to do a variety of things, and it will do them, but only simple things. It has a very small vocabulary.

Using Basic I can tell a computer to do more complex things.

Using Stella I can tell a computer to do even more complex things.

I have a 19-month old granddaughter. She has a very small vocabulary. She can tell me to do a variety of things, and I’ll do them … but only simple things.

So your claim is, she’s not using a language, just running my operating system?

You do sound pedantic. A “language” is, inter alia, a way to give commands, and some have larger vocabularies than others. I speak three versions of Pijin—Tok Pisin, Bislama, and Solomon Islands Pijin. None of them have more than about a thousand words, while English has about 170,000 words … does that mean that Bislama is not a language because it doesn’t have a large vocabulary?

Finally, so freaking what? I’m self-taught in everything, so it’s not unusual that I don’t use some ivory-tower-approved term. Here’s an example.

For thirty years I called the moon’s “terminator wind” the “moon wind”. Why? Because I’d often observed it during the long nights I’d spent at sea, and wondered about it, and eventually I figured out what caused it all by myself, and that’s what I named it years before I’d ever heard of a terminator.

So … does that make me more, or less, of an expert on the moon wind than some guy who learned the official name of it in a book? I think more, although of course YMMV.

However, when a man starts focusing on what I call things, rather than on my ideas and accomplishments, all I can do is shake my head and wonder why he’s wasting his time on such trivial BS … call it what you like, my friend, and let’s get back to the real issues.

w.

Michael,

Depending on the hardware design it doesn’t take but a single instruction to build any program (without I/O of course): https://en.wikipedia.org/wiki/One-instruction_set_computer.

Add some form of input and output instruction and you can then build a 3-instruction computer that will perform most programming tasks.
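To make the one-instruction idea concrete, here is a minimal Python sketch of a SUBLEQ machine ("subtract and branch if less than or equal to zero"), one of the designs described on the linked page. The memory layout, the negative-address halt convention, and the function name `run_subleq` are assumptions chosen for illustration.

```python
def run_subleq(memory, max_steps=1000):
    """Run a SUBLEQ program and return the final memory.

    Each instruction is three cells (a, b, c):
    mem[b] -= mem[a]; if the result is <= 0, jump to c,
    otherwise fall through to the next instruction.
    A negative program counter halts the machine (a convention
    assumed for this sketch).
    """
    mem = list(memory)
    pc = 0
    for _ in range(max_steps):
        if pc < 0:
            return mem
        a, b, c = mem[pc], mem[pc + 1], mem[pc + 2]
        mem[b] -= mem[a]
        pc = c if mem[b] <= 0 else pc + 3
    raise RuntimeError("step limit reached without halting")


# Program: add the value in cell 9 to the value in cell 10.
# Cells 0-8 hold three instructions; cells 9-11 hold data.
program = [
    9, 11, 3,     # Z = Z - A   (Z becomes -A), continue at 3
    11, 10, 6,    # B = B - Z   (B becomes B + A), continue at 6
    11, 11, -1,   # Z = Z - Z   (clears Z), then halt
    7,            # cell 9:  A
    5,            # cell 10: B
    0,            # cell 11: Z, scratch
]

result = run_subleq(program)
print(result[10])
```

Addition takes three instructions here because the only operation available is subtraction: the machine first negates A into a scratch cell, then subtracts that negative from B.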

I too noticed the DOS/language comment and my first thought was that I didn’t agree. However since it was neither relevant, nor important, I said nothing.

However as certain individuals kept trying to turn this molehill into a mountain, I thought more about it.

The more I thought about the difference between an operating system and a computer language, the more vague it became. Eventually I decided that it didn’t really matter and that the whole issue was rapidly becoming a how-many-angels-can-dance-on-a-pinhead type of argument.

Brian, your apparent belief that you, and only you, have the right and intelligence to decide such issues for everyone is rapidly becoming your trademark.

This reinforces my perception that computer modeling of climate is the ultimate intellectual hubris.

Modeling can be useful in helping you figure out what it is you don’t know yet.

It’s not useful for anything beyond that.

Thank you Willis, I enjoyed your post and learned something too….

Thanks for the enjoyable read/ride Willis. I am reminded of a Kraftwerk song listened to while punching holes in cards back in the day. “Das Modell” from the album, yep, album “Die Mensch-Maschine”

I was not a Fortran Fan.

Hey, Fortran was limited but useful. (It was a lot younger in 1959 when I started on an IBM 704, as were all the other languages.) But the best programming language I have used was a strange hybrid that ran on the Control Data 3100 in our lab in the middle Sixties. You could throw both Fortran and assembly language into the same program if you knew what you were doing. I reworked Spacewar to run on the 3100 using that hybrid.

And the spaceships in the first version got bigger and bigger as the game went on. I used too large a dt, and not enough terms in the expression. The second version was better, and the third good.

Ben Franklin, asked in 1747 what electricity was good for, replied, “If there is no other Use discover’d of Electricity, this, however, is something considerable, that it may help to make a vain Man humble.” Computer programming and modeling can serve the same purpose.

.. and how many hours were spent looking for the bug just knowing it can’t be a result of your code.. only to have someone come in behind you and find it in one minute.

I didn’t spend much time at all – it was a compact section of code. In those days, mathematical operations ate up cycles, so the program used scaling, which was a shift operation. The location of the problem was intuitively obvious to even the casual observer.

Most languages that I know of will let you mix assembly language into the code.

There are a few versions of C that use pragmas to allow you to insert the assembly language directly in-line. (You have to be careful doing this as it will make porting your code a lot more difficult.) In more general circumstances, you need to create subroutines in a different module, compile the module as a library and then make a library call to pull in the ASM.

Logically one conclusion is obvious.

Isn’t that what AOC wants to do? At least the first part…

Isn’t that what was tried on January 6th, 2021?

Liar.

Yes Pelosi did

Not even close, but I’m sure that thinking this way will help you keep your sense of superiority intact.

But AOC (et al) don’t want to plug the US back in.

That sign is ahead of its time.

I have had IT support jobs in the past where I actually have had to ask ‘have you tried switching it off and on again?’ and that worked…!

Griff, I had to drive 27 miles one time to plug in a keypunch for a customer.

Griff, have you tried just switching off?

It would make no difference to the worth of your comments.

Great story, I remember some of that early on almost all watching on the side. Saw many, including family, immediately adapted to it. I played (real) softball with the brilliant man that started our university computer program, cautioned me about such. Huge machine, fortunately got smaller, didn’t cure the caution.

Why is it not understood that that is why we call them models? Cars, planes, once had one of the WWII B-17 models that were used for teaching identification. Never could get it to glide, but it had been very useful for its purpose. Ok, that’s too simple.

There’s an old movie about a plane crash in the desert. The crash survivors decide to use the parts of the crashed plane to build a new plane to fly them out of the desert.

The guy who did the design near the end admitted that while he was trained as an aeronautical engineer, he had spent his career designing model planes. When the other passengers started getting upset, he told them that models have to be better designed than full sized planes. In his words, a model has to fly without the benefit of a pilot.

Flight of the Phoenix, based on a true story.

“Flight of the Phoenix”

I recommend the 1965 version with Jimmy Stewart.

Yep, that’s it, a classic; never bothered with the modern remake.

In my experience, remakes are rarely as good as the original.

yes, the remake was rubbish!

My recollections:

Adjusting the tone and volume controls to get the program saved on cassette tape to load.

The evil words: Syntax Error

dBase II

Our first 5-1/4 floppy drive for the Apple.

Wow! Fast and loaded every time

Adding a Z80 CPM card and 8″ floppies to the Apple II.

A 14″ plate 10meg hard drive (Altos 4-user CP/M system).

Punch tapes for a wire edm.

Love the HP-15C

Still have mine from 1983.

Lou Ottens, inventor of the cassette tape, died yesterday aged 94: https://www.theguardian.com/world/2021/mar/11/lou-ottens-inventor-of-the-cassette-tape-dies-aged-94

The sound of an 8” hard sector floppy disk (on a Cromemco S-100 Z-80) click click click click …. click click click click. Microfocus Cobol. Z-80 assembler.

http://bubek.net/pics/disklocher1.jpg

I remember I got a hardware tool to upgrade a 5-1/4″ 180 KB disk to 360 KB, making it usable on both sides in a C64 floppy drive.

Amazing times 😀

“I remember I got a hardware tool to upgrade a 5-1/4″ 180 KB disk to 360 KB, making it usable on both sides”

One of the early computers I worked on was an IBM System/3 Mod 12 minicomputer. It used 8-inch single-sided floppy diskettes as input in place of a card reader. The diskettes had a notch on one side that, if covered, prevented you from writing on the diskette. We learned that if you cut a similar notch on the other side of the disk and flipped the disk over, you could also write on the back side of the diskette. IBM frowned on this but we never had a problem with it. We used “Dykes” (Diagonal Wire Cutting Pliers) to cut the notch.

Thanks, Willis!

I had always thought that one of the biggest problems with climate models was trying to work with two massive, chaotic systems simultaneously! Per usual your posts make understanding easier; a skill that far too few ‘professional’ educators possess!

One of the few regrets I have in my life besides turning down a scholarship to Stanford was not accepting my parents offer of piano lessons when I was seven! I’ve added playing the first movement of “Moonlight Sonata” to my bucket list; Beethoven being one of the original rock stars in my opinion. Guitars are great fun, but if you’re serious about composition and songwriting piano is unbeatable! Stay safe and healthy!

I spent a good part of my career in computer service management and can attest to your observations about programming and programmers. What I noticed about Climate modeling is a tendency to either compromise, discount, ignore, or be selective about the data to fit a desired outcome. “Forcings” are the elephant in the room. When doing compute intensive programs with finite element modeling that affect design parameters of say airplanes, bridges, cars etc. one always assumes the underlying material data is correct because it has been time tested. Not so much with climate modeling. It’s the result of producing a desired outcome vs. an accurate outcome.

I was also in the hardware side of computers and found programmers to have a narrow view of how their programs would be used.

They never allowed for a cat walking over a keyboard and the subsequent effect of random inputs.

Back in the days when I was doing a lot of programming, I spent more time trapping unreasonable input than writing the core program, so that the person using it wouldn’t crash it with out of range values. I also developed Computer Assisted Instruction software to supplement my labs. I considered the input trapping to be part of the learning experience because the students (if they were paying attention) would soon learn what wouldn’t work if they didn’t know what they were doing.
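The kind of input trapping described above might look something like this in Python. It is a minimal sketch under assumed conditions: the function name `read_temperature_c`, the limits, and the error messages are all hypothetical, not taken from any program mentioned in the thread.

```python
def read_temperature_c(raw, low=-90.0, high=60.0):
    """Parse a user-typed temperature and reject unreasonable input.

    Returns (value, None) on success or (None, message) on failure,
    so the caller never has to handle an exception or a wild value.
    """
    try:
        value = float(raw.strip())
    except (ValueError, AttributeError):
        # Covers typos, empty strings, and a cat walking on the keyboard.
        return None, "not a number"
    # Written as a negated range test so that NaN, which fails every
    # comparison, is rejected along with out-of-range values.
    if not (low <= value <= high):
        return None, "out of range"
    return value, None


for attempt in ["21.5", "asdf;lkj", "1e9", "nan", ""]:
    print(repr(attempt), read_temperature_c(attempt))
```

Returning a message rather than crashing also supports the teaching use mentioned above: the student sees why the input was refused and can try again.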

Now, now – SOME of us did. Although in my professional career, I didn’t concern myself with cats; *users*, particularly from the marketing department or (shudder) HR, were much, much worse hazards.

In my day, we considered software development experience as limited by the amount of time spent supporting code you had developed in multiple installations. Until then you didn’t have the foggiest idea of how reliable, usable or functional it was.

So true! Long, long ago I worked for Big Blue as a (rare) professional hire. I was running an in-house expected resource capacity planning (modeling) program. The program kept crashing and pissed me off, so I tried to see why. Lo and behold, the program had no input bounds checking. At the company, EBCDIC was the encoding of choice and bounds checking non-trivial, but still necessary. To make matters worse, the program was written in APL version 1. I found two locations where missing bounds checking was making the program crash and filed bug reports.

The point of emphasizing that I was a professional hire, was that I had a lot of experience in programming and hardware design so picking up on the problem was relatively easy for me. The normal hires for this division were non-engineering/non-hard science types, for example my immediate supervisor was a history major. You get the picture. Everyone working with the capacity planner who hit a wrong key would worry that they had “broken” the program and restart without telling anyone.

Shortly thereafter I was offered a position with another company and since BB couldn’t/wouldn’t match it I resigned. The day I left, the Branch Manager conducted the exit interview and told me the program had been used for two years before the first bug was reported. That bug was one of the two I reported, and it had been reported just a week before I reported it. The other bug had never been reported.

Funny that many of the CVEs reported on programs to this day are essentially bounds checking problems. BUFFER OVERRUN is a bounds checking problem!

My language knowledge parallels that of many others in this thread. Maybe TI 9900 assembly, and being in the first programming class in the world taught by Niklaus Wirth that required learning Pascal, might also be interesting.

William

You said,

When I was a young man working at Lockheed MSC, they were so desperate to hire college graduates for managers that they had a music major managing engineers.

Yeah, Big Blue went through a phase where too many engineers promoted to management weren’t working out and had to be reassigned back to engineering jobs. So, they started hiring non-engineers, mostly with degrees in Business Management, for those positions. I left in the early phase of that trial. Don’t know whether it eventually worked better or not. Fortunately other companies and start-ups I worked for after that still had mostly engineers and/or scientists in management. At least you could have a reasonable discussion with them.

In my company we have a core product that we use to support multiple customers.

For each new customer, we develop translators to convert the customer’s files into a standardized format that our core program processes.

We start when the customer sends us their documentation that describes their data formats. We write the translators and test them with internally generated test data.

Then we have the customer send us some of their data.

There’s nothing like counting bytes in a record to try and figure out which field was increased or decreased in size, or where an extra field was squeezed in, and what kind of data is in that new field.

One of these days I may get a file from a customer that actually matches their documentation, but I’m not optimistic.

In most cases, we have to adapt our code to match the actual data, because the guy who wrote the customer’s code has retired, and nobody in that company knows the code well enough to make changes to it.

My cat knows more shortcuts than I do. I’ve never been able to manipulate windoze better than she can.

We’ve got a few things in common:

1. age

2. same first laptop, except I also got the serial FDD and barcode wand, and upgraded memory to 32KB (voiding the warranty), but never learned Basic or any other programming language

3. dropped out of high school, then dropped out of grad school twice, but only diminished my earning power through these academic feats

4. have (putative) grandchildren, but have never met or talked to them, and don’t know their birthdate (twins) or location

I guess I’m like a Bizarro to your Superman (except I only have a cat)

I wish there were some way for (non-billionaire) programming-challenged people like me to access the programming skills of people like you.

For example, I’ve been looking for years for a simple database or even just a spreadsheet template to let me enter the details of my various nutritional supplements and medications so I can see if I’m getting too much or too little of each, how much each product is costing me per day and when I need to order more, etc.
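The cost-per-day and reorder parts of that are small enough to sketch in a few lines. This is only an illustration under assumed names and made-up numbers (`Supplement`, `lead_days`, the Vitamin D figures). It leaves out the "too much or too little" check, which would need a table of recommended intakes.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Supplement:
    name: str
    units_per_bottle: int    # tablets or capsules in a full bottle
    price_per_bottle: float
    units_per_day: float     # how many are taken each day
    units_left: float        # how many are in the cupboard right now

    def cost_per_day(self) -> float:
        return self.price_per_bottle / self.units_per_bottle * self.units_per_day

    def days_left(self) -> int:
        return int(self.units_left // self.units_per_day)

    def reorder_date(self, today: date, lead_days: int = 7) -> date:
        # Order early enough that the new bottle arrives before this one runs out.
        return today + timedelta(days=max(self.days_left() - lead_days, 0))


# Made-up example entry.
vit_d = Supplement("Vitamin D", units_per_bottle=120, price_per_bottle=12.00,
                   units_per_day=2, units_left=30)
today = date(2024, 1, 1)
print(f"{vit_d.name}: ${vit_d.cost_per_day():.2f}/day, "
      f"{vit_d.days_left()} days left, reorder by {vit_d.reorder_date(today)}")
```

The same three formulas work as spreadsheet columns, with one row per product.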

A simpler problem concerns digital micrometers. I’ve bought half a dozen over the past two decades, and every one of them has a serial port. But none of the various vendors offer a data cable or software to permit them to be used with a PC to quickly record a series of measurements (as in checking neck expansion of cartridge brass). Things are actually going backwards for consumers in this respect.

Thirty years ago I could buy a multimeter from Radio Shack Canada that had a serial port and software for DOS and Windows. Ten years ago, or so, you couldn’t buy such a thing in Canada anymore AND you could no longer import it from Radio Shack USA. I had to get someone in the USA to buy one for me in a RS store and mail it to me here in Canada (thanks so much NAFTA!).

Get Livecode. Successor to Hypercard. Very, very easy to learn, and very powerful. Mac, Windows, Linux, Android. Here is the opensource version:

https://livecode.org/download-member-offer/

Thanks for the tip. I AM impressed that Livecode will run under Windows 7 with only 256MB of RAM and 150MB of disk space, and runs in compatibility mode in Windows 10, but otherwise the FAQ/FAQ left me mostly scratching my head.

Easy for you, maybe…

Very very off topic, but OK, here is how to get started. You create what LC refers to as a ‘stack’, which contains ‘cards’. A card is basically just a graphical interface background; it’s something to put your components on.

You then start by dragging components across to it. These are of two sorts, purely graphical elements, which are not part of the programming, just look and feel. More important, you also place things like buttons, menus, fields which are part of the programming.

Start with something really simple, like the usual ‘Hello World’. You will need to drag over two components, a field and a button. Give each of them a name, e.g. ‘field1’ and ‘calcbutton’.

Next you write little scripts for each of your active components. In this case you have only one, your button. So your program will consist of a script of that button, and it will be something like

on mouseUp
   put "Hello World" into field "field1"
end mouseUp

generally speaking, programming in LC consists of writing scripts for objects and events. Events can be stuff like mouseup, mousedown. Objects can be stacks, cards, buttons, fields…

Buy a copy of Mark Schonewille’s book ‘Programming Livecode for the real beginner’. I guarantee if you work through it, you’ll be able to program. It’s about 200 pages and very clear and gradually leads you into how to use all the different features.

Use tab separated text files to hold any data. Don’t worry if you find his section on arrays difficult. It’s just about the only section of the book that is less than clear to a beginner, but if you get to arrays in your programming, you can see the finish line and can find someone to explain them to you more fully than he does.

There are tutorials on the LiveCode site also.

The great thing about it, apart from the ease of configuring the gui, is that it’s almost automatically structured at some level. For any given script, you can insist on writing spaghetti. But the underlying structure of scripting components means that your overall program will be in manageable self contained blocks.

It’s not R, as recommended by Willis. It’s not C++. But it will get you well started.

Good luck!

Should have given a link. Buy it here:

https://www3.economy-x-talk.com/file.php?node=programming-livecode-for-the-real-beginner

I can write you a program to do this. What caliper are you using… a quick internet search doesn’t show one with serial or usb port.

That would be greatly appreciated. Of all that I’ve owned over the past 30 years, only the most recent purchase (a couple of years ago) had a name and it’s shown here:

https://www.amazon.com/Mastercraft-Digital-Caliper/dp/B07K8JVS5W

The serial port is neither shown nor described, but you can see its sliding cover at the top right corner of the display case. I’ve attached an image of it. BTW all of the digital calipers I’ve owned, and all that I’ve examined (ie. cheap ones) have the identical flat board with four flat contact strips. The second picture is of the port of a no-name caliper that’s at least 20 years older than the Mastercraft one. Their measurements differ by less than a tenth of a millimeter. Oops! The site will only let me attach one image.

Komerade,

After writing my first response to your kind offer, I found a link offering something close.

http://robotroom.com/Caliper-Digital-Data-Port.html

I haven’t studied the contents closely, but most of it is clearly beyond my technical competence. The cable he proposes would have to be modified by replacing the DIN connector with a usb one – something I think I could manage if I knew which of the USB pins to connect. And his software is meant to send the caliper data to a milling machine(?), not a PC. But I assume his notes would help in writing a suitable program for sending data to a PC.

cheers,

Otro

Nice, Willis. How anybody less than 70(?) and with a modicum of science/engineering training can appreciate the modern age, or its vast complexity, I don’t know. I regularly pay homage to the billions of transistors between here and there, created by human beings, not to mention the billions of lines of code, written by fallible human beings. My first experience was programming an HP 2116? to play ping pong with the lights and switches at Stanford in 1970. I think we programmed it with the switches….. IBM cards came later. Who will appreciate this when we are gone? Certainly not the modern crowd I think.

John

Yep.

I remember sitting at a pc with one of my nieces who recently got her degree in IT.

I brought up the DOS command screen.

She said – “what’s THAT?”

I had an on-and-off relationship with computers. I had a couple of visits to CDC in Bloomington, Minneapolis. It was a fascinating place and made a big impression on me. As a result I still take an interest in the Vikings, Twins and Timberwolves.

My experience with computers and languages is in the field of test engineering, finding other people’s dropoffs. Most had bespoke systems and languages. One was a 10 bit system by Elliott Automation, I think, that used two and a half 54 series logic circuits.

As a fellow programmer, though not nearly as seasoned as you, I wholeheartedly agree. I’ve built models for artillery shells and other well understood, narrowly defined physical processes. Even these can go horribly wrong. Garbage in, Garbage out. Computers are finite machines. Regardless of how slick the interface or how many processors you cram into it, it’s just a really fast Abacus. There’s no way you can predict the future of the weather, the stock market, or any other chaotic, nondeterministic process with a finite machine. That does not compute!

An abacus can’t do logic tests and alter what it does based on the outcome of the logic test.

Also has non-existent undo support…

Do NOT fold, spindle, or mutilate.

Think or thwim.

Sigh, all this reminds me of all the web pages I’m getting close to writing but haven’t had time to for 25 years yet.

Brief notes:

Dad taught me the binary number system when I was about 7, then how to count in binary on my fingers – they go from 0 to 1023.
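For anyone who wants to check the 0 to 1023 range, here is a small sketch. It treats each of ten fingers as one bit, written as a string of ten characters with '1' for a raised finger. The function names are just for illustration.

```python
def fingers_to_number(fingers: str) -> int:
    """Read ten fingers as a binary number: '1' = raised, '0' = folded."""
    if len(fingers) != 10 or set(fingers) - {"0", "1"}:
        raise ValueError("expected exactly ten characters, each 0 or 1")
    return int(fingers, 2)


def number_to_fingers(n: int) -> str:
    """Show which of the ten fingers to raise for a number from 0 to 1023."""
    if not 0 <= n <= 2**10 - 1:
        raise ValueError("ten fingers can only count from 0 to 1023")
    return format(n, "010b")


print(fingers_to_number("0000000000"))  # no fingers raised
print(fingers_to_number("1111111111"))  # all ten raised: 2**10 - 1
print(number_to_fingers(5))
```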

In 1963 he had designed the Bailey Meter 756, the first commercially successful parallel processor. No one knows that because it was a process control computer. It was successful because it was a decimal machine and that made it a lot easier for power plant people to deal with. He said it was so easy to program a 12 year old could do it, and taught me how to program the I/O processor. That was before core memory was common (your photo), the system executed off a drum – think spinning cylinder with a magnetic coating on the outside.

It wasn’t until I got to CMU in 1968 that I realized I was born to be a programmer. The first course was using Algol on the Univac 1108. The lecturer was Alan Perlis, one of the inventors. In meeting the keypunch, which did not have a backspace key to put the chads back in, you had to duplicate the card to the point of the error and deal with it there.

The duplicate function brought an epiphany – copying audio tape or paper lost information. Here I could type a card, duplicate it 100 times, and the data on the first and last cards would be identical. Absolutely amazing.

Near the end of the semester my first on my own, for the heck of it program had a goal to simulate the trajectory of an Apollo module on its flight to the moon. First came Earth – print filled in circles and open ellipses on the line printer. Then Mercury – an orbit around the Earth. Those orbits kept spiraling inward. Thinking that might have been related to discontinuities in the atan() function, I changed to atan2() [hmm, very fuzzy memory, I should check the code], but the spiraling still happened. The problem might have been single precision floating point, but there’s little reason to explore that.
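One common reason simulated orbits fail to close is the stepping scheme itself, separate from any floating point precision. The sketch below illustrates that general effect. It is not a reconstruction of the original program, and its units (GM = 1, a circular orbit of radius 1) and step sizes are assumptions. It compares plain explicit Euler stepping with the semi-implicit (symplectic) Euler variant. The direction and size of the drift depend on the scheme used.

```python
import math


def simulate(symplectic: bool, dt: float = 0.01, steps: int = 2000):
    """Step a circular orbit (GM = 1, radius 1); return (final radius, final energy)."""
    x, y, vx, vy = 1.0, 0.0, 0.0, 1.0
    for _ in range(steps):
        r3 = math.hypot(x, y) ** 3
        ax, ay = -x / r3, -y / r3          # inverse-square gravity toward the origin
        if symplectic:
            # Semi-implicit Euler: update velocity first, then move with the NEW velocity.
            vx += ax * dt
            vy += ay * dt
            x += vx * dt
            y += vy * dt
        else:
            # Explicit Euler: position and velocity both advance from the OLD state.
            x, y, vx, vy = x + vx * dt, y + vy * dt, vx + ax * dt, vy + ay * dt
    r = math.hypot(x, y)
    energy = 0.5 * (vx * vx + vy * vy) - 1.0 / r
    return r, energy


INITIAL_ENERGY = -0.5  # 0.5 * v**2 - 1/r with v = 1, r = 1

for label, flag in (("explicit Euler", False), ("semi-implicit Euler", True)):
    radius, energy = simulate(symplectic=flag)
    print(f"{label:>20}: radius {radius:.3f}, energy drift {energy - INITIAL_ENERGY:+.4f}")
```

With the same step size, the only difference between the two runs is the order of the updates, yet one orbit stays put and the other steadily drifts.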

I set it aside as the end of the semester approached and never got back to it, but I learned a tremendous amount about simulations, cometary (parabolic and hyperbolic) trajectories did bizarre things until I adjusted the time steps as a function of distance between Earth and the comet, and all the “1 plus epsilon” issues with floating point.
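The "1 plus epsilon" issue can be seen directly in double precision. This is a minimal sketch, and the value 1.0e16 is just a convenient example. Once an increment is small enough relative to the value it is added to, it vanishes entirely, which is one way a very small time step can stop moving a body that is far from the origin.

```python
import sys

eps = sys.float_info.epsilon  # gap between 1.0 and the next larger double

print(1.0 + eps > 1.0)        # this increment still registers
print(1.0 + eps / 2 == 1.0)   # half of it is rounded away

# Adding small steps one at a time to a large value can lose every one of them,
# while adding the same total in one go does not.
big = 1.0e16
total = big
for _ in range(1000):
    total += 1.0

print(total == big)           # the thousand separate additions changed nothing
print(big + 1000.0 == big)    # the single combined addition is not lost
```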

In my sophomore year I got a part-time job operating the Computer Science Dept’s new DEC PDP-10. It remains my most favorite computer ever, a position that no computer today or in the future can ever equal. OTOH, I can’t go back to it either. Progress marches on, but at least we can look back!

One thing I did write recently was in response to the Y2K transition – can you believe there are actually people who don’t remember it?

Before that, there was the DATE-75 problem on the PDP-10’s “TOPS-10” operating system. I figured I better write that up as a reply. Old PDP-10 fans might like http://wermenh.com/folklore/dirlop.html – covers that, but also has examples about why assembler programming on the -10 was more productive than pretty much any other system I’ve encountered. A wonderful machine on many levels.

I never verified it but was told by several friends familiar with it that DEC’s Fortran compiler wrote tighter code than most assembler programmers could.

Ahem. I was at DEC and I was told that by one of the compiler’s authors. That was an utterly ridiculous claim, as TOPS-10 and most user level programs dedicated several of the 16 general purpose registers to purposes like pointing to important data structures. In assembly code, they were “just there” across a module’s subroutines whereas Fortran had to pass them on the stack or other memory. I think my reply started out with “Well maybe….”

Around 2005, I was back at DEC and decided to see if I could make the IP checksum routine faster on DEC’s Alpha processor. It wasn’t much code, but I had already come up with better byte swapping routines, and well, manually writing RISC assembler code is difficult, what with dealing with caches, pipelines and other cruft that the original (discrete transistors!) PDP-10 didn’t implement.

I came up with code that looked pretty good to me, nicely unrolled, did 32 bit math instead of the 16 bit inherent in the checksum. (I couldn’t do 64 bit because I had to handle overflows.) There were a couple wait states, but better than the old code.
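For readers who have not met the IP checksum, here is a sketch of the idea of summing in wider words. It follows the Internet checksum as described in RFC 1071 and is not the Alpha code discussed above. The function names are made up. It adds the data once as 16-bit words and once as 32-bit words, then folds the carries back in, and both give the same answer. Python integers never overflow, so the carry handling that matters so much in assembler shows up only in the folding step.

```python
def fold16(total: int) -> int:
    """Fold everything above bit 15 back into the low 16 bits (end-around carry)."""
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return total


def checksum_16bit(data: bytes) -> int:
    """Internet checksum summing 16-bit big-endian words."""
    if len(data) % 2:
        data += b"\x00"                      # pad odd-length data with a zero byte
    total = sum(int.from_bytes(data[i:i + 2], "big") for i in range(0, len(data), 2))
    return ~fold16(total) & 0xFFFF


def checksum_32bit(data: bytes) -> int:
    """Same checksum, but summing 32-bit words and folding at the end."""
    data += b"\x00" * (-len(data) % 4)       # pad to a multiple of four bytes
    total = sum(int.from_bytes(data[i:i + 4], "big") for i in range(0, len(data), 4))
    return ~fold16(total) & 0xFFFF


sample = bytes.fromhex("0001f203f4f5f6f7")   # the worked example from RFC 1071
print(hex(checksum_16bit(sample)), hex(checksum_32bit(sample)))
```

The wider sum works because a carry out of bit 15 is worth exactly one in one's complement arithmetic, so it does not matter whether the carries are folded in as you go or all at the end.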

As a lark (and recalling a CMU case where someone found his LISP code was faster than his compiled code), I rewrote things in C to try out. The C code was faster. The C compiler used a different computation that allowed some intermediate results to make it through the pipeline and the generated code had no wait states.

With my 30 year sense of superiority over compilers nearly completely smashed, I rewrote my code to use the compiler’s algorithm and came up with something that was a little faster checksumming data that was not in the processor’s cache.

Between multiple levels of caching, data and instruction pipelines, and look-ahead processing, you need to be an absolute ASM guru to have a chance of outperforming most modern compilers.

Beyond that, every time your code gets ported to a new computer, you have to go through it all over again.

Yes, and I was an absolute ASM guru back in the day. And the IP checksum code is heavily used by NFS, and I saw I could do a better job than the original engineer did. The exercise was worthwhile.

Ever played with Gray code?

All the time. But that is a thing more applicable to those of us that design the hardware.

I didn’t learn about that until I got to college. I was pleased to realize the PDP-10’s line printer had an optical drum position sensor built around a spinning disk that used Gray code. I didn’t try hard to do that on my fingers. Even if I figured it out it would be a challenge to convert between the two.

https://www.allaboutcircuits.com/technical-articles/gray-code-basics/
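Converting between the two is easier in code than on fingers. This is a short sketch of the standard reflected binary Gray code; the function names are mine. Going from binary to Gray is a single XOR, and going back is a running XOR of the shifted value.

```python
def binary_to_gray(n: int) -> int:
    """Each Gray bit is the XOR of a binary bit and the bit above it."""
    return n ^ (n >> 1)


def gray_to_binary(g: int) -> int:
    """Undo the encoding by XOR-ing together g and all of its right shifts."""
    n = 0
    while g:
        n ^= g
        g >>= 1
    return n


for i in range(8):
    g = binary_to_gray(i)
    print(f"{i}  binary {i:03b}  gray {g:03b}  back {gray_to_binary(g)}")
```

Successive Gray codes differ in exactly one bit, which is why a position sensor built on them never reads a wildly wrong value while passing between two positions.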