Guest Post by Willis Eschenbach

I’m 74, and I’ve been programming computers nearly as long as anyone alive.

When I was 15, I’d been reading about computers in pulp science fiction magazines like Amazing Stories, Analog, and Galaxy for a while. I wanted one so badly. Why? I figured it could do my homework for me. Hey, I was 15, wad’ja expect?

I was always into math, it came easy to me. In 1963, the summer after my junior year in high school, nearly sixty years ago now, I was one of the kids selected from all over the US to participate in the National Science Foundation summer school in mathematics. It was held up in Corvallis, Oregon, at Oregon State University.

It was a wonderful time. I got to study math with a bunch of kids my age who were as excited as I was about math. Bizarrely, one of the other students turned out to be a second cousin of mine I’d never even heard of. Seems math runs in the family. My older brother is a genius mathematician, inventor of the first civilian version of the GPS. What a curious world.

The best news about the summer school was, in addition to the math classes, marvel of marvels, they taught us about computers … and they had a real live one that we could write programs for!

They started out by having us design and build logic circuits using wires, relays, the real-world stuff. They were for things like AND gates, OR gates, and flip-flops. Great fun!

Then they introduced us to Algol. Algol is a long-dead computer language, designed in 1958, but it was a standard for a long time. It was very similar to but an improvement on Fortran in that it used less memory.

Once we had learned something about Algol, they took us to see the computer. It was a huge old CDC 3300, standing about as high as a person’s chest, taking up a good chunk of a small room. The back of it looked like this.

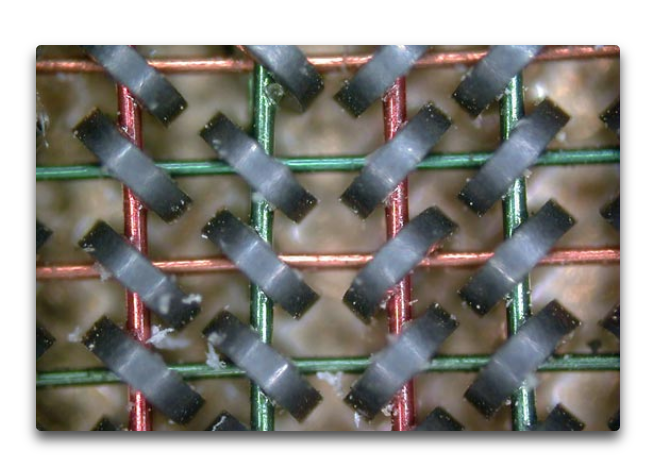

It had a memory composed of small ring-shaped magnets with wires running through them, like the photo below. The computer energized a combination of the wires to “flip” the magnetic state of each of the small rings. This allowed each small ring to represent a binary 1 or a 0.

How much memory did it have? A whacking great 768 kilobytes. Not gigabytes. Not megabytes. Kilobytes. That’s one ten-thousandth of the memory of the ten-year-old Mac I’m writing this on.

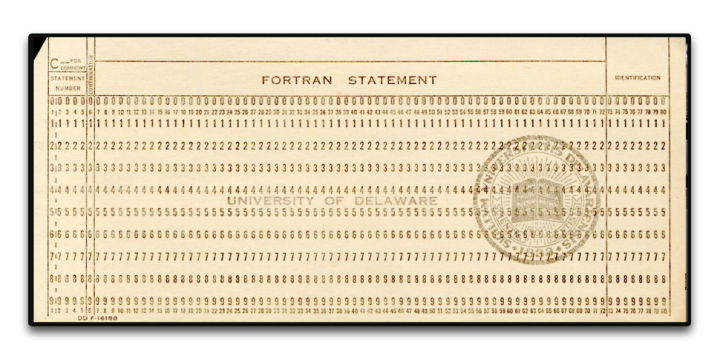

It was programmed using Hollerith punch cards. They didn’t let us anywhere near the actual computer, of course. We sat at the card punch machines and typed in our program. Here’s a punch card, 7 3/8 inches wide by 3 1/4 inches high by 0.007 inches thick (187 x 83 x 0.18 mm).

The program would end up as a stack of cards with holes punched in them, usually 25-50 cards or so. I’d give my stack to the instructors, and a couple of days later I’d get a note saying “Problem on card 11”. So I’d rewrite card 11, resubmit them, and get a note saying “Problem on card 19” … debugging a program written on punch cards was a slooow process, I can assure you.

And I loved it. It was amazing. My first program was the “Sieve of Eratosthenes”, and I was over the moon when it finally compiled and ran. I was well and truly hooked, and I never looked back.

The rest of that summer I worked as a bicycle messenger in San Francisco, riding a one-speed bike up and down the hills delivering blueprints. I gave all the money I made to our mom to help support the family. But I couldn’t get the computer out of my mind.

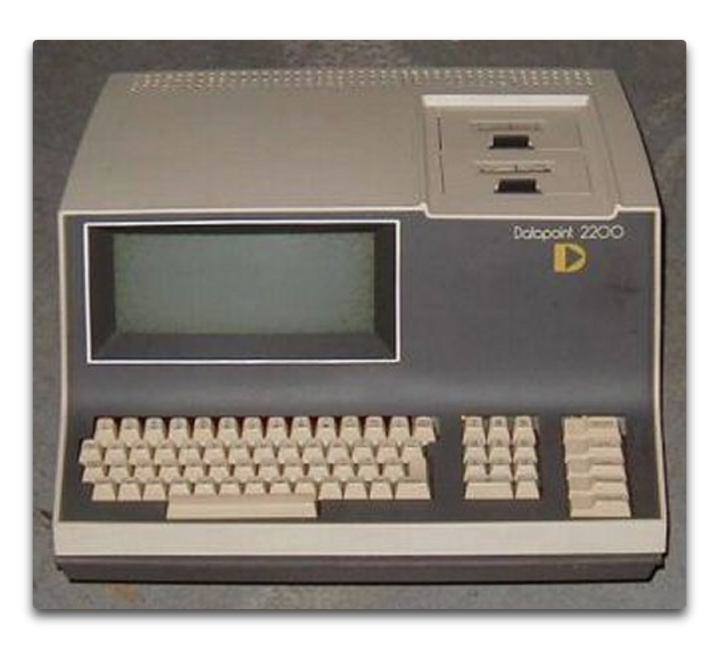

Ten years later, after graduating from high school and then dropping out of college after one year, I went back to college specifically so I could study computers. I enrolled in Laney College in Oakland. It was a great school, about 80% black, 10% Hispanic, and the rest a mixed bag of melanin-deficient folks. (I’m told that nowadays the politically-correct term is “melanin-challenged”, to avoid offending anyone.) The Laney College Computer Department had a Datapoint 2200 computer, the first desktop computer.

It had only 8 kilobytes of memory … but the advantage was that you could program it directly. The disadvantage was that only one student could work on it at any time. However, the computer teacher saw my love of the machine, so he gave me a key to the computer room so I could come in before or after hours and program to my heart’s content. I spent every spare hour there. It used a language called Databus, my second computer language.

The first program I wrote for this computer? You’ll laugh. It was a test to see if there was “precognition”. You know, seeing the future. My first version, I punched a key from 0 to 9. Then the computer picked a random number, and recorded if I was right or not.

Finding I didn’t have precognition, I re-wrote the program. In version 2, the computer picked the number before, rather than after, I made the selection. No precognition needed. Guess what?

No better than random chance. And sadly, that one-semester course was all that Laney College offered. That’s the extent of my formal computer education. The rest I taught myself, year after year, language after language, concept after concept, program after program.

Ten years after that, I bought the first computer I ever owned: the Radio Shack TRS-80, AKA the “Trash Eighty”. It was the first notebook-style computer. I took that sucker all over the world. I wrote endless programs on it, including marine celestial navigation programs that I used to navigate by the stars between islands in the South Pacific. It was also my first introduction to Basic, my third computer language.

And by then IBM had released the IBM PC, the first personal computer. When I returned to the US I bought one. I learned my fourth computer language, CPM. I wrote all kinds of programs for it. But then a couple years later Apple came out with the Macintosh. I bought one of those as well, because of the mouse and the art and music programs. I figured I’d use the Mac for creating my art and my music and such, and the PC for serious work.

But after a year or so, I found I was using nothing but the Mac, and there was a quarter-inch of dust on my IBM PC. So I traded the PC for a piano, the very piano here in our house that I played last night for my 19-month-old granddaughter, and I never looked back at the IBM side of computing.

I taught myself C and C++ when I needed speed to run blackjack simulations … see, I’d learned to play professional blackjack along the way, counting cards. And when my player friends told me how much it cost for them to test their new betting and counting systems, I wrote a blackjack simulation program to test the new ideas. You need to run about a hundred thousand hands for a solid result. That took several days in Basic, but in C, I’d start the run at night, and when I got up the next morning, the run would be done. I charged $100 per test, and I thought “This is what I wanted a computer for … to make me a hundred bucks a night while I’m asleep.”

Since then, I’ve never been without a computer. I’ve written literally thousands and thousands of programs. On my current computer, a ten-year-old Macbook Pro, a quick check shows that there are well over 4,000 programs I’ve written. I’ve written programs in Algol, Databus, 68000 Machine Language, Basic, C/C++, Hypertalk, Forth, Logo, Lisp, Mathematica (3 languages), Vectorscript, Pascal, VBA, Stella computer modeling language, and these days, R.

I had the immense good fortune to be directed to R by Steve McIntyre of ClimateAudit. It’s the best language I’ve ever used—free, cross-platform, fast, with a killer user interface and free “packages” to do just about anything you can name. If you do any serious programming, I can’t recommend it enough.

Oh, yeah, somewhere in there I spent a year as the Service Manager for an Apple Dealership. As you might guess given my checkered history, it wasn’t in some logical location … it was in downtown Suva, in Fiji. There I fixed a lot of computers and I learned immense patience dealing with good folks who truly thought that the CD tray that came out of the front of their computer when they did something by accident was a coffee cup holder … oh, and I also installed the Macintosh hardware for the Fiji Government Printers and trained the employees how to use Photoshop. I also taught two semesters of Computers 101 at the Fiji Institute of Technology.

I bring all of this up to let you know that I’m far, far from being a novice, a beginner, or even a journeyman programmer. I was working with “computer based evolution” to try to analyze the stock market before most folks even heard of it. I’m a master of the art, able to do things like write “hooks” into Excel that let Excel transparently call a separate program in C for its wicked-fast speed, and then return the answer to a cell in Excel …

Now, folks who’ve read my work know that I am far from enamored of computer climate models. I’ve been asked “What do you have against computer models?” and “How can you not trust models, we use them for everything?”

Well, based on a lifetime’s experience in the field, I can assure you of a few things about computer climate models and computer models in general. Here’s the short course.

• A computer model is nothing more than a physical realization of the beliefs, understandings, wrong ideas, and misunderstandings of whoever wrote the model. Therefore, the results it produces are going to support, bear out, and instantiate the programmer’s beliefs, understandings, wrong ideas, and misunderstandings. All that the computer does is make those under- and misunder-standings look official and reasonable. Oh, and make mistakes really, really fast. Been there, done that.

• Computer climate models are members of a particular class of models called “Iterative” computer models. In this class of models, the output of one timestep is fed back into the computer as the input of the next timestep. Members of this class of models are notoriously cranky, unstable, and prone to internal oscillations and generally falling off the perch. They usually need to be artificially “fenced in” in some sense to keep them from spiraling out of control.

• As anyone who has ever tried to model say the stock market can tell you, a model which can reproduce the past absolutely flawlessly may, and in fact very likely will, give totally incorrect predictions of the future. Been there, done that too. As the brokerage advertisements in the US are required to say, “Past performance is no guarantee of future success”.

• This means that the fact that a climate model can hindcast the past climate perfectly does NOT mean that it is an accurate representation of reality. And in particular, it does NOT mean it can accurately predict the future.

• Chaotic systems like weather and climate are notoriously difficult to model, even in the short term. That’s why projections of a cyclone’s future path over say the next 48 hours are in the shape of a cone and not a straight line.

• There is an entire branch of computer science called “V&V”, which stands for validation and verification. It’s how you can be assured that your software is up to the task it was designed for. Here’s a description from the web:

What is software verification and validation (V&V)?

Verification

820.3(a) Verification means confirmation by examination and provision of objective evidence that specified requirements have been fulfilled.

“Documented procedures, performed in the user environment, for obtaining, recording, and interpreting the results required to establish that predetermined specifications have been met” (AAMI).

Validation

820.3(z) Validation means confirmation by examination and provision of objective evidence that the particular requirements for a specific intended use can be consistently fulfilled.

Process Validation means establishing by objective evidence that a process consistently produces a result or product meeting its predetermined specifications.

Design Validation means establishing by objective evidence that device specifications conform with user needs and intended use(s).

“Documented procedure for obtaining, recording, and interpreting the results required to establish that a process will consistently yield product complying with predetermined specifications” (AAMI).

Further V&V information here.

• Your average elevator control software has been subjected to more V&V than the computer climate models. And unless a computer model’s software has been subjected to extensive and rigorous V&V, the fact that the model says that something happens in modelworld is NOT evidence that it actually happens in the real world … and even then, as they say, “Excrement occurs”. We lost a Mars probe because someone didn’t convert a single number to metric from Imperial measurements … and you can bet that JPL subjects their programs to extensive and rigorous V&V.

• Computer modelers, myself included at times, are all subject to a nearly irresistible desire to mistake Modelworld for the real world. They say things like “We’ve determined that climate phenomenon X is caused by forcing Y”. But a true statement would be “We’ve determined that in our model, the modeled climate phenomenon X is caused by our modeled forcing Y”. Unfortunately, the modelers are not the only ones fooled in this process.

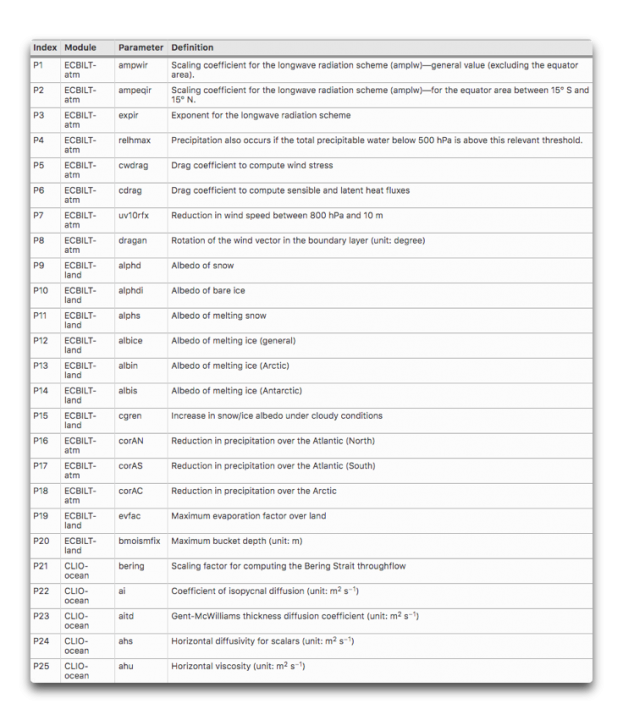

• The more tunable parameters a model has, the less likely it is to accurately represent reality. Climate models have dozens of tunable parameters. Here are 25 of them, there are plenty more.

What’s wrong with parameters in a model? Here’s an oft-repeated story about the famous physicist Freeman Dyson getting schooled on the subject by the even more famous Enrico Fermi …

By the spring of 1953, after heroic efforts, we had plotted theoretical graphs of meson–proton scattering. We joyfully observed that our calculated numbers agreed pretty well with Fermi’s measured numbers. So I made an appointment to meet with Fermi and show him our results. Proudly, I rode the Greyhound bus from Ithaca to Chicago with a package of our theoretical graphs to show to Fermi.

When I arrived in Fermi’s office, I handed the graphs to Fermi, but he hardly glanced at them. He invited me to sit down, and asked me in a friendly way about the health of my wife and our new-born baby son, now fifty years old. Then he delivered his verdict in a quiet, even voice. “There are two ways of doing calculations in theoretical physics”, he said. “One way, and this is the way I prefer, is to have a clear physical picture of the process that you are calculating. The other way is to have a precise and self-consistent mathematical formalism. You have neither.”

I was slightly stunned, but ventured to ask him why he did not consider the pseudoscalar meson theory to be a self-consistent mathematical formalism. He replied, “Quantum electrodynamics is a good theory because the forces are weak, and when the formalism is ambiguous we have a clear physical picture to guide us. With the pseudoscalar meson theory there is no physical picture, and the forces are so strong that nothing converges. To reach your calculated results, you had to introduce arbitrary cut-off procedures that are not based either on solid physics or on solid mathematics.”

In desperation I asked Fermi whether he was not impressed by the agreement between our calculated numbers and his measured numbers. He replied, “How many arbitrary parameters did you use for your calculations?” I thought for a moment about our cut-off procedures and said, “Four.” He said, “I remember my friend Johnny von Neumann used to say, with four parameters I can fit an elephant, and with five I can make him wiggle his trunk.” With that, the conversation was over. I thanked Fermi for his time and trouble, and sadly took the next bus back to Ithaca to tell the bad news to the students.

• The climate is arguably the most complex system that humans have tried to model. It has no less than six major subsystems—the ocean, atmosphere, lithosphere, cryosphere, biosphere, and electrosphere. None of these subsystems is well understood on its own, and we have only spotty, gap-filled rough measurements of each of them. Each of them has its own internal cycles, mechanisms, phenomena, resonances, and feedbacks. Each one of the subsystems interacts with every one of the others. There are important phenomena occurring at all time scales from nanoseconds to millions of years, and at all spatial scales from nanometers to planet-wide. Finally, there are both internal and external forcings of unknown extent and effect. For example, how does the solar wind affect the biosphere? Not only that, but we’ve only been at the project for a few decades. Our models are … well … to be generous I’d call them Tinkertoy representations of real-world complexity.

• Many runs of climate models end up on the cutting room floor because they don’t agree with the aforesaid programmer’s beliefs, understandings, wrong ideas, and misunderstandings. They will only show us the results of the model runs that they agree with, not the results from the runs where the model either went off the rails or simply gave an inconvenient result. Here are two thousand runs from 414 versions of a model running first a control and then a doubled-CO2 simulation. You can see that many of the results go way out of bounds.

As a result of all of these considerations, anyone who thinks that the climate models can “prove” or “establish” or “verify” something that happened five hundred years ago or a hundred years from now is living in a fool’s paradise. These models are in no way up to that task. They may offer us insights, or make us consider new ideas, but they can only “prove” things about what happens in modelworld, not the real world.

Be clear that having written dozens of models myself, I’m not against models. I’ve written and used them my whole life. However, there are models, and then there are models. Some models have been tested and subjected to extensive V&V and their output has been compared to the real world and found to be very accurate. So we use them to navigate interplanetary probes and design new aircraft wings and the like.

Climate models, sadly, are not in that class of models. Heck, if they were, we’d only need one of them, instead of the dozens that exist today and that all give us different answers … leading to the ultimate in modeler hubris, the idea that averaging those dozens of models will get rid of the “noise” and leave only solid results behind.

Finally, as a lifelong computer programmer, I couldn’t disagree more with the claim that “All models are wrong but some are useful.” Consider the CFD models that the Boeing engineers use to design wings on jumbo jets or the models that run our elevators. Are you going to tell me with a straight face that those models are wrong? If you truly believed that, you’d never fly or get on an elevator again. Sure, they’re not exact reproductions of reality, that’s what “model” means … but they are right enough to be depended on in life-and-death situations.

Now, let me be clear on this question. While models that are right are absolutely useful, it certainly is also possible for a model that is wrong to be useful.

But for a model that is wrong to be useful, we absolutely need to understand WHY it is wrong. Once we know where it went wrong we can fix the mistake. But with the complex iterative climate models with dozens of parameters required, where the output of one cycle is used as the input to the next cycle, and where a hundred-year run with a half-hour timestep involves 1.75 million steps, determining where a climate model went off the track is nearly impossible. Was it an error in the parameter that specifies the ice temperature at 10,000 feet elevation? Was it an error in the parameter that limits the formation of melt ponds on sea ice to only certain months? There’s no way to tell, so there’s no way to learn from our mistakes.
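As a rough illustration of the step count and of the feedback loop described above, here is a minimal Python sketch. It is a toy, not code from any climate model: the functions `steps_in_run` and `run_iterative`, the decay equation dx/dt = -k*x, and the `fence` clamp are all assumptions chosen only to show how an iterated calculation can oscillate and grow when the timestep is too coarse, and how an artificial limit hides that.

```python
from typing import List, Optional


def steps_in_run(years: float, timestep_hours: float) -> int:
    """Number of timesteps in a run, assuming a 365.25-day year."""
    hours = years * 365.25 * 24
    return round(hours / timestep_hours)


def run_iterative(x0: float, k: float, dt: float, n_steps: int,
                  fence: Optional[float] = None) -> List[float]:
    """Explicit-Euler iteration of the toy equation dx/dt = -k*x.

    The output of each step is the input to the next one.
    If `fence` is given, the state is clamped to [-fence, +fence]
    after every step (a stand-in for being "fenced in").
    """
    xs = [x0]
    x = x0
    for _ in range(n_steps):
        x = x + dt * (-k * x)  # this step's output feeds the next step
        if fence is not None:
            x = max(-fence, min(fence, x))
        xs.append(x)
    return xs


if __name__ == "__main__":
    # Hundred-year run with a half-hour timestep
    print("steps:", steps_in_run(100, 0.5))

    # Same toy equation, three setups (all values are illustrative)
    stable = run_iterative(1.0, k=1.0, dt=0.5, n_steps=50)
    unstable = run_iterative(1.0, k=1.0, dt=2.5, n_steps=50)
    fenced = run_iterative(1.0, k=1.0, dt=2.5, n_steps=50, fence=10.0)
    print("small timestep, final value:", stable[-1])
    print("large timestep, final value:", unstable[-1])
    print("large timestep, fenced, largest magnitude:",
          max(abs(v) for v in fenced))
```

With the small timestep the toy state decays quietly. With the large one each step multiplies the state by -1.5, so it flips sign and grows. The fenced run stays in bounds, but only because the clamp overrides the arithmetic, which says nothing about whether the answer is right.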

Next, all of these models are “tuned” to represent the past slow warming trend. And generally, they do it well … because the various parameters have been adjusted and the model changed over time until they do so. So it’s not a surprise that they can do well at that job … at least on the parts of the past that they’ve been tuned to reproduce.
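The tuning point, and the von Neumann elephant story quoted earlier, can be sketched with a toy fit in Python. Everything here is invented for illustration: the ten-point "past" record, the hypothetical `trend` function, and the choice of comparing a ten-parameter polynomial against a two-parameter straight line. It is a sketch of the general pitfall, not a claim about how any particular climate model is tuned.

```python
# Toy data only: an invented "past" record, not real climate data.

def lagrange_predict(ts, ys, t):
    """Evaluate at t the unique polynomial that passes through every
    (ts[j], ys[j]) point. With n points it has n free parameters."""
    total = 0.0
    for j, (tj, yj) in enumerate(zip(ts, ys)):
        term = yj
        for m, tm in enumerate(ts):
            if m != j:
                term *= (t - tm) / (tj - tm)
        total += term
    return total


def fit_line(ts, ys):
    """Ordinary least-squares straight line; returns (slope, intercept)."""
    n = len(ts)
    t_mean = sum(ts) / n
    y_mean = sum(ys) / n
    slope = (sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ys))
             / sum((t - t_mean) ** 2 for t in ts))
    return slope, y_mean - slope * t_mean


def trend(t):
    """Hypothetical underlying signal: a slow linear rise."""
    return 0.1 * t


# Fixed wiggles standing in for everything the trend does not explain
WIGGLE = [0.05, -0.04, 0.03, -0.05, 0.04, -0.03, 0.05, -0.04, 0.03, -0.05]
PAST_T = list(range(10))
PAST_Y = [trend(t) + w for t, w in zip(PAST_T, WIGGLE)]
FUTURE_T = 12  # a point outside the period used for fitting


def hindcast_error(predict):
    """Largest miss against the past record."""
    return max(abs(predict(t) - y) for t, y in zip(PAST_T, PAST_Y))


if __name__ == "__main__":
    slope, intercept = fit_line(PAST_T, PAST_Y)
    models = {
        "10-parameter polynomial": lambda t: lagrange_predict(PAST_T, PAST_Y, t),
        "2-parameter line": lambda t: slope * t + intercept,
    }
    for name, predict in models.items():
        print(name,
              "| worst hindcast miss:", hindcast_error(predict),
              "| forecast miss:", abs(predict(FUTURE_T) - trend(FUTURE_T)))
```

In this toy setup the ten-parameter fit reproduces the past record exactly and then misses badly just two steps beyond it, while the two-parameter line has a visible hindcast error but stays close to the underlying trend.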

But then, the modelers will pull out the modeled “anthropogenic forcings” like CO2, and proudly proclaim that since the model no longer can reproduce the past gradual warming, that demonstrates that the anthropogenic forcings are the cause of the warming … I assume you can see the problem with that claim.

In addition, the gridsize of the computer models is far larger than important climate phenomena like thunderstorms, dust devils, and tornados. If the climate model is wrong, is it because it doesn’t contain those phenomena? I say yes … computer climate modelers say nothing.

Heck, we don’t even know if the Navier-Stokes fluid dynamics equations as they are used in the climate models converge to the right answer, and near as I can tell, there’s no way to determine that.

To close the circle, let me return to where I started—a computer model is nothing more than my ideas made solid. That’s it. That’s all.

So if I think CO2 is the secret control knob for the global temperature, the output of any model I create will reflect and verify that assumption.

But if I think (as I do) that the temperature is kept within narrow bounds by emergent phenomena, then the output of my new model will reflect and verify that assumption.

Now, would the outputs of either of those very different models be “evidence” about the real world?

Not on this planet.

And that is the short list of things that are wrong with computer models … there’s more, but as Pierre said, “the margins of this page are too small to contain them” …

My very best to everyone, stay safe in these curious times,

w.

PS—When you comment, quote what you’re talking about. If you don’t, misunderstandings multiply.

H/T to Wim Röst for suggesting I write up what started as a comment on my last post.

in fact if you listen or read carefully they are just saying we are *convinced* that our results are good/robust/useful to demonstrate that a climate catastrophe is not impossible.

Well, the weather models are showing the potential for some severe weather hitting the Texas and Oklahoma panhandles this afternoon, and this time I think they have nailed it.

And of course if there is a severe tornado outbreak, and it sure is looking likely, it will be hyped as evidence of climate change by some fools.

Thanks, rah. Although I did love the idea of a “severed tornado outbreak”, I fixed the typo …

w.

Thanks!

Hi Willis,

Excellent article as usual.

Would you be able to provide a link to the original of the climate model tunable parameters table please?

Thx TS

Sorry, the image is lost in the flood, but there’s a paper about them here.

w.

Much obliged, sir!

I am in awe of your knowledge and experience with computers and programming, Willis, and couldn’t agree with you more! (I am not a programmer however). I think probably by far the most accurate model is a standard ruler and pencil. Just extend the line forward from the top and bottom of all the sinusoidal curves from the past to work out the range and put in a median line!!

On a short timeline this will show a possible increase but over the longer timeframes the range will probably show a decrease and the upward limit of the shorter timeframe! Certainly will produce a result considerably higher in credibility than the spaghetti mess of ‘computer modeled’ prediction!

A really interesting and fascinating look into your experience with computers, programming and modeling. Bravo Willis!

As I said in a comment on previous posts, climate models are wrong, but that does not mean they aren’t useful. If only we could construct a second planet earth to do experiments on we’d never need another one. Alas.

They are actually the ABSOLUTE OPPOSITE of USEFUL (except for propaganda)

The fact that they are provably WRONG and yet they are still used….

…. is causing a whole heap of wasted money, environmental degradation, electrical supply system instability, and general human suffering etc around the world.

Stop turning a blind eye to the IMMENSE DAMAGE DONE by these provably WRONG climate models.

OMG, when you fall back on that little piece of anti-science nonsense…

you show that you really have been taking the Klimate Kool-aide intravenously.

A deep insidious infection becomes obvious!

OK, enough seriousness. Time for programmer in-jokes. I’ll start.

Back when memory was limited, a shoe company goes to a programmer to automate their inventory system. He asks them “Are there any special characters in your inventory codes”? Told no, he writes up a system that works and sorts flawlessly for just letters and numbers.

But when they bring in their inventory list, the first entry is “Boot#32$1sz_9” … he says “I thought you said there were no special characters in your inventory codes!”

“Those characters aren’t special”, the shoe guy says, “we have those in all of our inventory codes” …

w.

How about anecdotes? One day at DEC when I was working third shift to get some standalone time, I bicycled into work around midnight. I went home for breakfast and changed into business attire, drove back to DEC for the bus trip to the DECUS User Society meeting that day. Then managed to miss the bus back that evening. So I joined some coworkers who were staying late, had dinner with them, talked to customers, a friend drove me back to DEC and I drove home, getting back – – – around midnight.

It was the first (and only!) time I spent a full day at work.

One time we needed to burn a whole bunch of PROMs to ship to our customers. We were a development site, not a production site, so our one and only PROM burner could only burn one set at a time. I also couldn’t get started until everyone else left for the day because of ongoing development work. I was warned the day before that I was going to have to do this so I brought about 3 books to work with me.

Insert a set of PROMs, press the start program button. Twenty minutes later, take the PROMs out and slip them into their respective tubes. Put the next set in, press the programming button. And so on, all night. I finally finished right about the time the boss pulled in. I handed him the tubes and let him know that if anyone tried to contact me that day, they would come in to work the next day about a head shorter.

did that same kind of shit working for Toyota. It’s a funny story that i hope nobody ever repeats. Except i know they will.

Yes!!

My son had a summer job where he took calls re technical problems. A good number of people had to be guided on the phone to a certain button that caused the screen to light up.

Then there was a weekend where he went speechless after a number of calls like that and had to go home to recover.

Aw, I was convinced it was a photo of the memory from the Apollo guidance system 🙁

For the curious amongst you, there is an excellent series over on youtube covering a restoration project of one of the AGCs: https://www.youtube.com/watch?v=2KSahAoOLdU

Well worth a watch. It contains plenty of closeup of its memory modules and I was absolutely fascinated by the repairs they did.

Kildall’s CP/M was an OS not a computer language. Ask Bill, he copied it to write MSDOS . He changed the slash to backslash, so no one would notice. 😉

No, CP/M was taken from DEC systems (OS8/RT11/whatever) that used ‘/’ for specifying options.

Then Bell Labs wrote Unix, and they used ‘/’ to separate files in path names and ‘-‘ to specify options, e.g. “kill -f /bin/laden”.

When Microsoft wanted a multi-level file system, they copied Unix’s layout but changed ‘/’ to ‘\’.

Wonderful post, thank you for doing it. I love reading about your personal experiences, which are always fascinating, and your technical exposition is both dead-on and yet highly understandable. I’m almost 67, and had many of the same kinds of computer experience as you early on; ah, the good old days!

I have wondered about another potential source of error in climate model calculations of the climate state in 100 years, and have researched it enough to think it possible but not enough to find out whether anyone has actually tried to find an example. Semiconductor memory and logic circuits are susceptible to bit errors, and though the bit error rate is extremely low in modern computers, it isn’t zero. Error detecting/correcting firmware exists to handle a corrupted bit, but the better it is, the more overhead it places on every computation. A study I read found that supercomputers of the type used for climate modeling employ only limited error checking/correction, trading it for speed. There are classes of bit error they cannot detect. So the authors reported a series of test runs they performed with one computer, using external monitoring to check for the occurrence of any of those types of errors. They found that although the rate of such errors was low, it was not trivial.

I hark back to an article posted by Judith Curry citing a set of climate runs on one computer in which the initial temperature conditions were altered by 1 trillionth of a degree, and the run results began to drift apart until eventually they were completely different. With the stupendous number of computations performed in a 100-year simulation, on a high spatial resolution grid, the types of bit errors that evade supercomputer check/correct would have a high probability of altering some of the numbers by enough to affect results, but not so much as to be noticeable when they occurred in an output review.
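The drift described here can be reproduced in miniature. The sketch below is an assumption-laden toy: it uses the logistic map, a standard textbook chaotic system, rather than anything from the runs in the cited article, and the names `logistic_run` and `divergence` and the starting value 0.3 are made up for the example. The only thing borrowed from the comment is the size of the nudge, one part in a trillion.

```python
def logistic_run(x0, n_steps, r=4.0):
    """Iterate the logistic map x -> r*x*(1-x), a textbook chaotic toy."""
    xs = [x0]
    for _ in range(n_steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def divergence(x0=0.3, nudge=1e-12, n_steps=200):
    """Step-by-step gap between a run and a run started `nudge` away."""
    a = logistic_run(x0, n_steps)
    b = logistic_run(x0 + nudge, n_steps)
    return [abs(p - q) for p, q in zip(a, b)]


if __name__ == "__main__":
    gaps = divergence()
    for step in (0, 5, 10, 20, 30, 40, 50, 100):
        print("step", step, "gap", gaps[step])

    # Two runs from exactly the same start, on the same machine
    same = logistic_run(0.3, 200) == logistic_run(0.3, 200)
    print("identical starts give identical runs:", same)
```

In this toy the gap starts at the size of the nudge, grows roughly geometrically, and after a few dozen steps the two runs bear no resemblance to each other. Two runs from exactly the same start agree bit for bit here, which is the baseline the repeated-run test proposed in this comment would be checking against on real hardware.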

It would be simple enough to test, though expensive. Just do consecutive 100 year runs of a climate model on the same computer, using identical initial conditions, and see if they differ from one another. It may take several runs to get some statistics, and that, as I say, would be very expensive. Is it worth it? I don’t know.

Even cheap computers include parity bits with every byte. A single bit being changed would trigger the guard circuits to shut down the computer.

More advanced computers, and all mainframes, include error detection and correction circuitry. A single bit error would be detected and repaired without interrupting computer operation. With the simple EDC circuits, 2 bit errors would be detected, but they couldn’t be corrected.

Perhaps I shouldn’t have tried to simplify my presentation so much, since you’ve simplified the problem out of existence – except that it still exists. Parity bits were not included in early PCs, or even in Seymour Cray’s first supercomputers. Now they are, but to what effect? The multi-billion dollar Littoral Combat Ship relied on simple parity checks in its fire control system, and wound up shutting down every few minutes. You’re right in that simple EDC circuits could detect even bit errors, but not correct them, which is kinda the point here. There are classes of errors that need higher overhead EDC, and those higher overhead EDC algorithms are not included in the kind of computers used in climate modeling.

Given the huge amount of memory in a computer used for climate modeling, and the extremely long run times for a century climate prediction, the odds of even-bit memory errors go up tremendously. If they can’t be corrected, then they propagate.

And it’s not just memory errors, but errors along “noisy” data channels – such as the buses that feed the CPUs. And the CPUs themselves are prone to bit flips.

Google has found a much higher incidence of memory errors than are predicted by EDC models. Fortunately, these are in situations where data are stored statically, and redundantly. In a marching algorithm, there is no way at present to stop things and correct even bit memory errors, and no way to even detect certain bus noise errors or CPU bit errors.
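The parity exchange in the comments above turns on one property of a single parity bit: it notices an odd number of flipped bits and is blind to an even number. Here is a minimal Python sketch of that property. The helper names `store`, `check`, and `flip` are hypothetical, and the sketch models only the simplest one-bit-per-byte scheme, not the error detection and correction circuitry of any real machine.

```python
def parity(byte: int) -> int:
    """Even-parity bit for one byte: 1 if the byte has an odd number of 1s."""
    return bin(byte & 0xFF).count("1") % 2


def store(byte: int):
    """Return (data, parity_bit) as it would sit in memory."""
    return (byte & 0xFF, parity(byte))


def check(word) -> bool:
    """True if the stored parity bit still matches the data."""
    data, p = word
    return parity(data) == p


def flip(word, *positions):
    """Simulate bit errors by flipping the data bits at the given positions."""
    data, p = word
    for pos in positions:
        data ^= 1 << pos
    return (data, p)


if __name__ == "__main__":
    word = store(0b10110010)
    print("clean word passes:      ", check(word))
    print("one flipped bit passes: ", check(flip(word, 3)))
    print("two flipped bits pass:  ", check(flip(word, 3, 5)))
```

The single flip fails the check and the double flip passes it, even though the stored data is wrong in both cases. Catching or repairing the double flip needs more check bits per word, which is the extra overhead the commenters are arguing about.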

>>

Heck, we don’t even know if the Navier-Stokes fluid dynamics equations as they are used in the climate models converge to the right answer, and near as I can tell, there’s no way to determine that.

<<

The Navier-Stokes Equation is one of the Clay Mathematics Institute’s Millennium Problems:

“Navier-Stokes Equation

This is the equation which governs the flow of fluids such as water and air. However, there is no proof for the most basic questions one can ask: do solutions exist, and are they unique?”

You can win a million dollars if you find a proof.

Jim

Willis, that is a great analysis of the state of modelling with respect to climate “science”.

The top “climate scientists” and politicians should be required to read it. I know that some will peek here.

I will send it to people who should read it.

Your articles are always unbiased and form conclusions based on evidence.

Meanwhile, I did not see our first computer mentioned. I waited a bit and bought the Apple IIGS which was great for graphics and video. It helped our older son get into IT. After that purchase I think the subsequent computers cost less.

I only did some Fortran post university in the 1970’s but did not get into it. I think I would like to learn R.

It was humorous at work in the 70’s. Some could talk the whole shift about Ram and Rom and were really excited when an outfit doubled capacity from 1024 to 2048 kb or something like that.

The last paragraph may have been in the 1980’s.

I’d just like to add that while modern computational fluid dynamic models are indeed useful tools for aircraft design, they do so ONLY for modeling specific regimes of flight where air flow is relatively well behaved (i.e. they work well for modeling how typical commercial passenger jets are flown). As soon as you try to model high angles of attack (turning and burning in jet fighter parlance), all bets are off as turbulent flow becomes dominant and we have no good ways to accurately model turbulent flow. So, if you think airflow and ocean currents are important to climate modeling, I think it is pretty obvious we have a problem here.

A great example I remember reading about some years back was a buoy study run to map currents in the Atlantic ocean. They deployed a number of buoys that sank to target depths to cruise along, then popped to the surface to get a GPS fix, radio that in, and then drop back to depth on a regular basis to map their routes. The expectation was that they would all flow from the arctic where they were deployed down the US Eastern seaboard along the much lauded “conveyor belt.” But in reality they ended up scattered all over the Atlantic in an almost random pattern that surprised everyone. So I have always considered climate modeling a fool’s errand, and my only surprise has been the number of fools we have purporting to be scientists.

Just imagine a model of a working internal combustion engine where it shows: the inputs of fuel, air & electricity; a rotating shaft representing the output; and a black box with a simplified mathematical equation to approximate the output based on the inputs; no explanation of the pistons; no explanation of actual combustion & expansion; no inner workings sufficient for replication.

A climate model is like that: a black box full of complexity that approximates some larger functions and glosses over the smaller intricacies with parameters based on averages. No explanation of the small scale interactions which lead to weather, no inner workings of the climate ‘motor’. The daily output could then be averaged to accurately model long term climate. But all we get are guesses multiplied by more guesses and parameters adjusted until the output matches their assumptions.

I think in 2019 it was said that: If you used every supercomputer in the world (top500) combined to accurately model the climate it would take about 100 years to model 100 years.

Willis, great article, thanks.

You say “Next, all of these models are “tuned” to represent the past slow warming trend.”

Isn’t it the case that this slow warming trend has itself been “tuned” to match CO2 changes in the atmosphere through the temperature adjustments that have been made by those responsible for the official records?

Hence the models assume that CO2 is the main driver and they are tuned to a temp curve that reflects this assumption. They are truly GIGO, except that the results are treated as Gospel!

IMHO the IBM PC wrecked the microcomputer market – there were dozens of companies making micros but ‘business’ wouldn’t buy them until they had the ‘badge’ even though the first PC was very plain vanilla (until the XT and a hard drive). Taught me a life lesson about restricted corporate thinking causing mediocrity. My road less traveled: Intel 4004, Assembly, Fortran, Basic, Pascal. A hunt and pecker typer at the punch card machine -> a long engineering formula with a lot of parens would take multiple tries for me. Really enjoyed this essay by Willis – much thanks.

Thanks for another great article. As one other commenter noted, you took a very complex topic and distilled it in a way non-technical people like me can understand. That is a rare talent. I have a modest technical background with some minor programming experience, but nothing remotely close to virtually everyone else posting here. The one similarity with many is my age – my first experience with computers was with a Data General with (if I remember correctly) 512 k memory and 2.5 MB (memorex?) disk drives. When the large washer machine size 25 MB multi-platter disk drives came out, we thought no one would ever run out of disk space.

Anyway, based on what I have read about models, my description of climate models has been reduced to a simple statement. Climate models are used to manipulate data to achieve a predetermined desired outcome and present it as fact. That, IMO is not science, it is fraud.

I look forward to your next article.

Great nostalgia……I am 77, so just a bit younger than you……so I did the punch card drill in the mid-60’s doing my zoology Masters, did my PhD stats on a Programma101 and an HP9100 desktop in the late 60’s…..as I returned to work in an Ottawa government lab in 1971, I talked my director into buying a Wang shared desktop calculator system (4 terminals on one central processor all hard wired together….way better than the standalone calculators then available)……a few weeks later though the local HP sales guy walked in with the first HP pocket calculator (~$1000)…..and right after that Xerox Data Systems (XDS) rep sold our lab a time share subscription via telephone modem to Xerox PARC in Calif….this worked really well, but very slow compared to today, and the phone bills were scary…..and we could only print out the data on a tractor feed terminal (with a paper punch tape for memory)…..no video display, but we could run our stats programs in near real time. And by the mid 70’s our Ag Department ran a similar time share facility based on an Ottawa minicomputer for its labs across Canada; this was overtaken by the rise of “micro” computers like Apple ][e and then IBM PCs, and the rest is history.

With my kids at home we had a friend with a TRS-80 that induced me to get them a VIC-20, and very soon after a Commodore64….I was never much of a programmer, but have always been close to the industry and its developments for use in my field work research on soil fauna.

One of our research necessities was taking soil and air temps on a continuous basis for which we used Campbell Scientific data loggers with wired temp sensors; initially we set their sampling frequency way too high (say every 10 seconds) because we thought all that data could now be readily managed on our PCs….no problem. Except we quickly discovered that driving our vehicle too close to the air temp sensors in the Stevenson Screen to collect the data every couple of weeks caused a spike from the hot vehicle engine, and even from the body temp of the person collecting the data. The simplest fix was to lengthen the read interval to about 10 minutes rather than trying to mess around “cleaning” up the high frequency data.

Which leads me to a couple of observations about scientists from different generations: When I started my research career as a grad student in the mid-60’s there were still a lot of old science guys who had begun their work in the 1930’s still around, and me being a big history buff, I was always happy to listen to their stories (Canadians, Americans, Brits, French, Aussies)…..and I was always most impressed with their attention to detail and their meticulous record keeping (diaries, log books, field notes). So I am just appalled when I see, for example, all those wonderful old temp records prior to about 1970 “adjusted” by some computer algorithm to fit some pre-conceived notion of what is surmised to be going on now.

My other observation about the current generation of scientists is that many of them are far too happy doing their research “on the computer”. This was something that I, then almost a grey beard in the early 1990’s, noticed and remarked upon at the time along with my peer colleagues. Whether doing lab or field research, actually collecting data from well designed experiments is critical to the integrity of science. Subsequent analysis and interpretation are important, but early experimental steps define science integrity.

Willis…..about 4 lines from the end of your excellent essay, should not the “be” precede “evidence”?…….cheers……..Alan T

Thanks, Alan, typo fixed. Of course, Brian will take that typo as evidence that I’m not a “real” writer …

w.

Excellent article, Willis.

I see there are 456 comments below for me to read.

Upon re-reading this, I realized I’d left part of my computer history out. So I added this to the head post …

Onwards, ever onwards,

w.

Willis, I enjoy reading your articles. They always provide clear insight into complicated problems. You have about a decade more experience than me (I was limited to Algol, Fortran, and Basic – I never really got into C or C++ although I managed a large software effort where the programmers used C). I will add a comment to the Verification & Validation (V&V) portion from the Systems Engineering perspective which I think is applicable to any engineering endeavor. We would start with a customer need for which we would create a “system” to satisfy that need. We then wrote a set of cascading requirements (Mission, System, Major Subsystems, and lower level subsystems). As part of this we would review the work to ensure that the Requirements we defined will satisfy the customer’s need and then we reviewed our work to ensure what was implemented (actually created) covered all the Requirements we defined. A lot of models were used in this effort but all the models had to eventually be checked against the real world. Real world checks were not ‘optional’.

Thank you for your contribution to the enlightenment of future generations.

complete drivel – the giveaway is this – So if I think CO2 is the secret control knob for the global temperature, the output of any model I create will reflect and verify that assumption.

Unfortunately for the author, Arrhenius had this sorted out a long time ago. Engineers should try to learn some science before pontificating about things that they dont understand.

>>

Engineers should try to learn some science before pontificating about things that they dont understand.

<<

Back in Roman days, Roman engineers were considered part of the common working class, while Roman scientists considered themselves part of the aristocracy. Because of the class differences, Roman scientists wouldn’t be caught dead talking to Roman engineers and vice versa. That was lucky for the rest of the world, because Roman scientific knowledge was sufficiently advanced that had they talked to their engineers, Roman weaponry would have been far more formidable than it was.

Many years ago, I was told by my engineering professors that Roman snobbery was no longer the case. In modern times, engineers and scientists freely talk to each other, and they recognized each other’s strengths. Modern society has benefited. In recent years, I have noticed an increase of anti-engineering bias by so-called scientists. In fact, it’s so bad now that I consider things like “climate science” to be an oxymoron. And my original assumption is confirmed by each passing day.

Jim

Arrhenius had the relationship between computer models and computer modelers “sorted out a long time ago”?

Who knew?

w.

I guess I’d say: Great minds rarely follow the same rut. I did follow a similar rut, though.

Regarding fluid dynamics- I’m sure Boeing’s procedures work, at least for them.

I have a friend, a pilot in the military and at last notice flying for Braniff (he’s as old as we are).

A friend of his, probably 20 years ago, decided he was going to try for a speed record in a homebuilt plane. Jim was supposed to check the aerodynamics with the current “best” cfd program they could get. The design turned out to be a very small canard with the pilot crammed in in front of the wing, the motor behind, as light as possible. It didn’t even have rollbar protection for the pilot.

Jim gave him his results and it pointed to the canard being heavily loaded but within a range of speeds, weights, and wind it should be fine. The pilot/builder took it and ran. The plane was built and ready for test. They were running a 2 week flight test program which verified the speed performance and it handled pretty much as predicted.

Near the end of the second week of testing a thunderstorm popped up some miles away. The pilot wanted one last flight that day, so up he went. It was again successful and he brought the plane in. As he slowed down to land a heavy gust, estimated at 25 mph or more, hit the plane at about 45 degrees on the left. The left canard lifted and started to roll the plane (the right canard was in the lee of the fuselage and had virtually no lift). The pilot simply couldn’t keep the left wing and canard down. In less than a couple seconds the plane flipped over and crashed.

The pilot died instantly due to the lack of a rollbar.

There are a bunch of mistakes there, primarily on the part of the builder/pilot. At the time canard homebuilts had some popularity. But when starting out with a completely new design, without an adequate design review by a bunch of knowledgeable eyes, a CFD program alone just wouldn’t do the trick.

The hubris of the builder/pilot is matched, I think, by many of the scientists involved in climate models. Computers make it way too easy to make people with limited experience and skills think they can do miracles. Especially when lots of cohorts, $$$, and fame are deathly attractors. (pun intended).

Sorry for the length. Hope all of you (as in everybody) have better luck!!! and results.