Guest post by Pat Frank

My September 7 post describing the recent paper published in Frontiers in Earth Science on GCM physical error analysis attracted a lot of attention, consisting of both support and criticism.

Among other things, the paper showed that the air temperature projections of advanced GCMs are just linear extrapolations of fractional greenhouse gas (GHG) forcing.

Emulation

The paper presented a GCM emulation equation expressing this linear relationship, along with extensive demonstrations of its unvarying success.

In the paper, GCMs are treated as a black box. GHG forcing goes in, air temperature projections come out. These observables are the points at issue. What happens inside the black box is irrelevant.

In the emulation equation of the paper, GHG forcing goes in and successfully emulated GCM air temperature projections come out. Just as they do in GCMs. In every case, GCM and emulation, air temperature is a linear extrapolation of GHG forcing.
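To make "linear extrapolation of fractional GHG forcing" concrete, here is a minimal sketch of a linear emulator of that general shape. It is not the emulation equation from the paper: the function name, the base forcing, and the scaling coefficient are all placeholder assumptions chosen only to show the linear behavior.

```python
def emulate_delta_t(forcing_increments, base_forcing, coefficient):
    """Return the temperature anomaly after each step, computed as a linear
    function of the fractional change in cumulative forcing."""
    anomalies = []
    cumulative = 0.0
    for d_forcing in forcing_increments:
        cumulative += d_forcing
        anomalies.append(coefficient * cumulative / base_forcing)
    return anomalies


# Hypothetical inputs, for illustration only (not values from the paper).
BASE_FORCING = 30.0   # W/m^2, placeholder base-state forcing
COEFFICIENT = 15.0    # K per unit fractional forcing, placeholder
increments = [0.035] * 100   # 100 annual steps of 0.035 W/m^2

projection = emulate_delta_t(increments, BASE_FORCING, COEFFICIENT)
print(f"anomaly after 100 steps: {projection[-1]:.2f} K")
```

Whatever the placeholder values, the output doubles when the cumulative forcing doubles. That proportionality is the only property the sketch is meant to show.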

Nick Stokes’ recent post proposed that, “Given a solution f(t) of a GCM, you can actually emulate it perfectly with a huge variety of DEs [differential equations].” This, he supposed, is a criticism of the linear emulation equation in the paper.

However, in every single one of those DEs, GHG forcing would have to go in, and a linear extrapolation of fractional GHG forcing would have to come out. If the DE did not behave linearly the air temperature emulation would be unsuccessful.

It would not matter what differential loop-de-loops occurred in Nick’s DEs between the inputs and the outputs. The DE outputs must necessarily be a linear extrapolation of the inputs. Were they not, the emulations would fail.

That necessary linearity means that Nick Stokes’ entire huge variety of DEs would merely be a set of unnecessarily complex examples validating the linear emulation equation in my paper.

Nick’s DEs would just be linear emulators with extraneous differential gargoyles; inessential decorations stuck on for artistic, or in his case polemical, reasons.

Nick Stokes’ DEs are just more complicated ways of demonstrating the same insight as is in the paper: that GCM air temperature projections are merely linear extrapolations of fractional GHG forcing.

His DEs add nothing to our understanding. Nor would they disprove the power of the original linear emulation equation.

The emulator equation takes the same physical variables as GCMs, engages them in the same physically relevant way, and produces the same expectation values. Its behavior duplicates all the important observable qualities of any given GCM.

The emulation equation displays the same sensitivity to forcing inputs as the GCMs. It therefore displays the same sensitivity to the physical uncertainty associated with those very same forcings.

Emulator and GCM identity of sensitivity to inputs means that the emulator will necessarily reveal the reliability of GCM outputs, when using the emulator to propagate input uncertainty.

In short, the successful emulator can be used to predict how the GCM behaves; something directly indicated by the identity of sensitivity to inputs. They are both, emulator and GCM, linear extrapolation machines.

Again, the emulation equation outputs display the same sensitivity to forcing inputs as the GCMs. It therefore has the same sensitivity as the GCMs to the uncertainty associated with those very same forcings.

Propagation of Non-normal Systematic Error

I posted a long extract from relevant literature on the meaning and method of error propagation, here. Most of the papers are from engineering journals.

This is not unexpected given the extremely critical attention engineers must pay to accuracy. Their work products have to perform effectively under the constraints of safety and economic survival.

However, special notice is given to the paper of Vasquez and Whiting, who examine error analysis for complex non-linear models.

An extended quote is worthwhile:

“… systematic errors are associated with calibration bias in [methods] and equipment… Experimentalists have paid significant attention to the effect of random errors on uncertainty propagation in chemical and physical property estimation. However, even though the concept of systematic error is clear, there is a surprising paucity of methodologies to deal with the propagation analysis of systematic errors. The effect of the latter can be more significant than usually expected.

…

“Usually, it is assumed that the scientist has reduced the systematic error to a minimum, but there are always irreducible residual systematic errors. On the other hand, there is a psychological perception that reporting estimates of systematic errors decreases the quality and credibility of the experimental measurements, which explains why bias error estimates are hardly ever found in literature data sources.”

…

“Of particular interest are the effects of possible calibration errors in experimental measurements. The results are analyzed through the use of cumulative probability distributions (cdf) for the output variables of the model.

…

“As noted by Vasquez and Whiting (1998) in the analysis of thermodynamic data, the systematic errors detected are not constant and tend to be a function of the magnitude of the variables measured.

“When several sources of systematic errors are identified, [uncertainty due to systematic error] beta is suggested to be calculated as a mean of bias limits or additive correction factors as follows:

“beta = sqrt[sum over(theta_S_i)^2],

“where “i” defines the sources of bias errors and theta_S is the bias range within the error source i. (my bold)”

That is, in non-linear models the uncertainty due to systematic error is propagated as the root-sum-square.

This is the correct calculation of total uncertainty in a final result, and is the approach taken in my paper.
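As a small illustration of the root-sum-square formula quoted above, the sketch below combines several bias ranges into a single beta. The function name and the two example bias ranges are assumptions made for the example; they are not data from Vasquez and Whiting or from my paper.

```python
import math


def root_sum_square(bias_ranges):
    """Combine independent bias ranges theta_S_i into beta = sqrt(sum(theta_S_i^2))."""
    return math.sqrt(sum(theta ** 2 for theta in bias_ranges))


# Hypothetical bias ranges from two error sources (same units).
bias_ranges = [3.0, 4.0]
beta = root_sum_square(bias_ranges)
print(f"beta = {beta}")
```

Note that the combined value is smaller than the plain sum of the bias ranges but larger than any single one of them.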

The meaning of ±4 W/m² Long Wave Cloud Forcing Error

This illustration might clarify the meaning of ±4 W/m^2 of uncertainty in annual average LWCF.

The question to be addressed is what accuracy is necessary in simulated cloud fraction to resolve the annual impact of CO2 forcing?

We know from Lauer and Hamilton, 2013 that the annual average ±12.1% error in CMIP5 simulated cloud fraction (CF) produces an annual average ±4 W/m^2 error in long wave cloud forcing (LWCF).

We also know that the annual average increase in CO₂ forcing is about 0.035 W/m^2.

Assuming a linear relationship between cloud fraction error and LWCF error, the GCM annual ±12.1% CF error is proportionately responsible for ±4 W/m^2 annual average LWCF error.

Then one can estimate the level of GCM resolution necessary to reveal the annual average cloud fraction response to CO₂ forcing as,

(0.035 W/m^2/±4 W/m^2)*±12.1% cloud fraction = 0.11%

That is, a GCM must be able to resolve a 0.11% change in cloud fraction to be able to detect the cloud response to the annual average 0.035 W/m^2 increase in CO₂ forcing.

A climate model must accurately simulate cloud response to 0.11% in CF to resolve the annual impact of CO₂ emissions on the climate.

The cloud feedback to a 0.035 W/m^2 annual CO2 forcing needs to be known, and needs to be able to be simulated to a resolution of 0.11% in CF in order to know how clouds respond to annual CO2 forcing.

Here’s an alternative approach. We know the total tropospheric cloud feedback effect of the global 67% in cloud cover is about -25 W/m^2.

The annual tropospheric CO₂ forcing is, again, about 0.035 W/m^2. The CF equivalent that produces this feedback energy flux is again linearly estimated as,

(0.035 W/m^2/|25 W/m^2|)*67% = 0.094%.

That is, the second result is that cloud fraction must be simulated to a resolution of 0.094%, to reveal the feedback response of clouds to the CO₂ annual 0.035 W/m^2 forcing.

Assuming the linear estimates are reasonable, both methods indicate that about 0.1% in CF model resolution is needed to accurately simulate the annual cloud feedback response of the climate to an annual 0.035 W/m^2 of CO₂ forcing.
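The two linear estimates can be reproduced in a few lines. The input numbers are the ones given in the text; the function and variable names are just labels chosen for this sketch.

```python
ANNUAL_CO2_FORCING = 0.035   # W/m^2, annual average increase in CO2 forcing
LWCF_ERROR = 4.0             # W/m^2, annual average LWCF error
CF_ERROR_PCT = 12.1          # %, annual average simulated cloud fraction error
CLOUD_FEEDBACK = 25.0        # W/m^2, magnitude of total cloud feedback
CLOUD_COVER_PCT = 67.0       # %, global cloud cover


def required_cf_resolution(signal_flux, reference_flux, reference_cf_pct):
    """Linearly scale a cloud-fraction percentage by the ratio of two fluxes."""
    return signal_flux / reference_flux * reference_cf_pct


method_1 = required_cf_resolution(ANNUAL_CO2_FORCING, LWCF_ERROR, CF_ERROR_PCT)
method_2 = required_cf_resolution(ANNUAL_CO2_FORCING, CLOUD_FEEDBACK, CLOUD_COVER_PCT)

print(f"method 1: {method_1:.2f}% cloud fraction")
print(f"method 2: {method_2:.3f}% cloud fraction")
```

Both results rest on the assumed linear relationship between cloud fraction and flux, as stated above.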

This is why the uncertainty in projected air temperature is so great. The needed resolution is 100 times better than the available resolution.

To achieve the needed level of resolution, the model must accurately simulate cloud type, cloud distribution and cloud height, as well as precipitation and tropical thunderstorms, all to 0.1% accuracy. This requirement is an impossibility.

The CMIP5 GCM annual average 12.1% error in simulated CF is the resolution lower limit. This lower limit is 121 times larger than the 0.1% resolution limit needed to model the cloud feedback due to the annual 0.035 W/m^2 of CO₂ forcing.

This analysis illustrates the meaning of the ±4 W/m^2 LWCF error in the tropospheric feedback effect of cloud cover.

The calibration uncertainty in LWCF reflects the inability of climate models to simulate CF, and in so doing indicates the overall level of ignorance concerning cloud response and feedback.

The CF ignorance means that tropospheric thermal energy flux is never known to better than ±4 W/m^2, whether forcing from CO₂ emissions is present or not.

When forcing from CO₂ emissions is present, its effects cannot be detected in a simulation that cannot model cloud feedback response to better than ±4 W/m^2.

GCMs cannot simulate cloud response to 0.1% accuracy. They cannot simulate cloud response to 1% accuracy. Or to 10% accuracy.

Does cloud cover increase with CO₂ forcing? Does it decrease? Do cloud types change? Do they remain the same?

What happens to tropical thunderstorms? Do they become more intense, less intense, or what? Does precipitation increase, or decrease?

None of this can be simulated. None of it can presently be known. The effect of CO₂ emissions on the climate is invisible to current GCMs.

The answer to any and all these questions is very far below the resolution limits of every single advanced GCM in the world today.

The answers are not even empirically available because satellite observations are not better than about ±10% in CF.

Meaning

Present advanced GCMs cannot simulate how clouds will respond to CO₂ forcing. Given the tiny perturbation annual CO₂ forcing represents, it seems unlikely that GCMs will be able to simulate a cloud response in the lifetime of most people alive today.

The GCM CF error stems from deficient physical theory. It is therefore not possible for any GCM to resolve or simulate the effect of CO₂ emissions, if any, on air temperature.

Theory-error enters into every step of a simulation. Theory-error means that an equilibrated base-state climate is an erroneous representation of the correct climate energy-state.

Subsequent climate states in a step-wise simulation are further distorted by application of a deficient theory.

Simulations start out wrong, and get worse.

As a GCM steps through a climate simulation in an air temperature projection, knowledge of the global CF consequent to the increase in CO₂ diminishes to zero pretty much in the first simulation step.

GCMs cannot simulate the global cloud response to CO₂ forcing, and thus cloud feedback, at all for any step.

This remains true in every step of a simulation. And the step-wise uncertainty means that the air temperature projection uncertainty compounds, as Vasquez and Whiting note.

In a futures projection, neither the sign nor the magnitude of the true error can be known, because there are no observables. For this reason, an uncertainty is calculated instead, using model calibration error.

Total ignorance concerning the simulated air temperature is a necessary consequence of a cloud response ±120-fold below the GCM resolution limit needed to simulate the cloud response to annual CO₂ forcing.

On an annual average basis, the uncertainty in CF feedback into LWCF is ±114 times larger than the perturbation to be resolved.
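The fold-differences quoted here and earlier come from simple ratios, sketched below with the values given in the text (variable names are illustrative only).

```python
LWCF_ERROR = 4.0             # W/m^2, annual average LWCF calibration error
ANNUAL_CO2_FORCING = 0.035   # W/m^2, annual average increase in CO2 forcing
CF_ERROR_PCT = 12.1          # %, CMIP5 annual average cloud fraction error
NEEDED_CF_PCT = 0.1          # %, approximate resolution needed

flux_ratio = LWCF_ERROR / ANNUAL_CO2_FORCING   # uncertainty vs. perturbation
cf_ratio = CF_ERROR_PCT / NEEDED_CF_PCT        # available vs. needed resolution

print(f"flux ratio: about {flux_ratio:.0f}")
print(f"cloud fraction ratio: about {cf_ratio:.0f}")
```

The two ratios differ slightly because the second uses the rounded 0.1% figure rather than the 0.11% and 0.094% estimates.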

The CF response is so poorly known that even the first simulation step enters terra incognita.

The uncertainty in projected air temperature increases so dramatically because the model is step-by-step walking away from an initial knowledge of air temperature at projection time t = 0, further and further into deep ignorance.

The GCM step-by-step journey into deeper ignorance provides the physical rationale for the step-by-step root-sum-square propagation of LWCF error.
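A minimal sketch of step-by-step root-sum-square propagation follows. It assumes the same per-step uncertainty enters at every step; the per-step value of 1.0 K is a placeholder chosen to keep the numbers easy to follow, not the figure derived in the paper.

```python
import math


def propagate_rss(per_step_uncertainty, n_steps):
    """Return the cumulative uncertainty after each step when the same
    per-step uncertainty is combined in quadrature at every step."""
    total_squared = 0.0
    history = []
    for _ in range(n_steps):
        total_squared += per_step_uncertainty ** 2
        history.append(math.sqrt(total_squared))
    return history


PER_STEP_K = 1.0   # hypothetical per-step uncertainty in K (placeholder)
envelope = propagate_rss(PER_STEP_K, 100)

for step in (1, 4, 25, 100):
    print(f"after step {step:3d}: +/-{envelope[step - 1]:.1f} K")
```

With a constant per-step term, the envelope grows as the square root of the number of steps. It keeps widening, but more slowly than a straight sum would.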

The propagation of the GCM LWCF calibration error statistic and the large resultant uncertainty in projected air temperature is a direct manifestation of this total ignorance.

Current GCM air temperature projections have no physical meaning.

Dr Frank

Many thanks for your fine exposition. This, and the more intelligent ripostes to your work, have been an education.

“…The CMIP5 GCM annual average 12.1% error in simulated CF is the resolution lower limit. This lower limit is 121 times larger than the 0.1% resolution limit needed to model the cloud feedback due to the annual 0.035 W/m^2 of CO₂ forcing…”

That’s the average. Are there any GCMs that get much closer in tracking CF? Forgive me if you have covered this already.

First, let me take this opportunity to once again thank Anthony Watts and Charles the Moderator.

We’d (I’d) be pretty much lost without you. You’ve changed the history of humankind for the better.

mothcatcher, different GCMs are parameterized differently, when they’re tuned to reproduce target observables such as the 20th century temperature trend.

None of them are much more accurate than another. Those that end up better at tracking this are worse at tracking that. It’s all pretty much a matter of near happenstance.

Thank you Dr. Frank for bringing this to the world’s attention. Both error and uncertainty analysis are traditional engineering concepts. As an EE who has done design work of electronic equipment, I have a very good expectation of what uncertainty means.

You can sit down and design a 3 stage RF amplifier with an adequate noise figure and bandwidth on paper. You can evaluate available components and how tolerance statistics affect the end result. But, when you get down to the end, you must decide, will this design work when subjected to manufacturing. This is where uncertainty reigns. Are component specs for sure? Will permeability of ferrite cores be what you expected and have the bandwidth required? Will there be good trace isolation? Will the ground plane be adequate? Will unwanted spurious coupling be encountered? Will interstage coupling be correct? And on and on. These are all uncertainties that are not encountered in (nor generally allowed for in) the design equations.

Do these uncertainties ring any bells with the folks dealing with GCM’s? Are all their inputs and equations and knowledge more than adequate to address the uncertainties in the parameters and measurements they are using? I personally doubt it. The world wide variables in the atmosphere that are not part of the equations and models must be massive. The possibility of wide, wide uncertainty in the outputs must be expected and accounted for if the models are to be believed.

Pat,

“It’s all pretty much a matter of near happenstance.” Trade offs! However, it calls into serious question the claim that the models are based on physics when they don’t have a general solution for all the output parameters and have to customize the models for the parameters they are most interested in.

Pat Frank Thanks. Here is a link to the pdf (via Scholar.google.com) of:

Vasquez VR, Whiting WB. Accounting for both random errors and systematic errors in uncertainty propagation analysis of computer models involving experimental measurements with Monte Carlo methods. Risk Analysis: An International Journal. 2005 Dec;25(6):1669-81.

It’s a very worthwhile paper, isn’t it, David.

When I was working on the study, I wrote to Prof. Whiting to ask for a reprint of a paper in the journal Fluid Phase Equilibria, which I couldn’t access.

After I asked for the reprint (which he sent), I observed to him that, “If you don’t mind my observation, after scanning some of your papers, which I’ve just found, you are in an excellent position to very critically assess the way climate modelers assess model error.

Climate modelers never propagate error through their climate models, and never publish simulations with valid physical uncertainty limits. From my conversations with one or two of them, model error propagation seems to be a completely foreign idea.”

Here’s what he wrote back, “Yes, it is surprisingly unusual for modelers to include uncertainty analyses. In my work, I’ve tried to treat a computer model as an experimentalist treats a piece of laboratory equipment. A result should never be reported without giving reasonable error estimates.”

It seems modelers elsewhere are also remiss.

“From my conversations with one or two of them, model error propagation seems to be a completely foreign idea.”

It’s how they are trained. They are more computer scientists than physical scientists. The almighty computer program will spit out a calculation that is the TRUTH – no uncertainty allowed.

Most of them have never heard of significant digits or if they have it didn’t mean anything to them. Most of them have never set up a problem on an analog computer where inputs can’t be set to an arbitrary precision and therefore the output is never the same twice.

“0.1 When reporting the result of a measurement of a physical quantity, it is obligatory that some quantitative indication of the quality of the result be given so that those who use it can assess its reliability. Without such an indication, measurement results cannot be compared, either among themselves or with reference values given in a specification or standard. It is therefore necessary that there be a readily implemented, easily understood, and generally accepted procedure for characterizing the quality of a result of a measurement, that is, for evaluating and expressing its uncertainty.”

https://www.isobudgets.com/pdf/uncertainty-guides/bipm-jcgm-100-2008-e-gum-evaluation-of-measurement-data-guide-to-the-expression-of-uncertainty-in-measurement.pdf

“They are more computer scientists than physical scientists.”

By looking over code of ‘state of the art’ climate computer models, I can be almost sure they aren’t either.

Not computer scientists, not physicists. They are simply climastrologers.

I call them grantologists.

Quote: “Many thanks for your fine exposition. This, and the more intelligent ripostes to your work, have been an education.”

This article made it for me. Clear as crystal. Reminds me of some years back I understood the fabrication of hockey sticks.

Pat, thanks for the plain language explanation of your paper.

Question: Given current and past temperature stability during ever-increasing CO2 fractions, should we not expect the probability of cloud formation to follow a normal distribution curve?

That is to say: Scientists are duty-bound to enumerate the maximum uncertainty in the entire universe of possibilities. Nonetheless, an outcome near the mean of the reference fraction, seems far more probable, than one at either extreme.

There’s no reason to expect a normal curve. This is the same conceptual shortcut that leads people to incorrectly apply stochastic error corrections to systematic error. In general, the distinction is precisely that systemic effects are highly unlikely to be normally distributed.

Breeze,

I’m postulating that a dramatic change is less likely to occur than one closer to a current reference.

If I understand you correctly, you’re arguing for an equal probability for the gamut of potential outcomes. This position defies logic, as prevailing conditions indicate a muted response at best.

Probability forecasting is based on analysis of past outcomes.

Robr: ” This position defies logic, as prevailing conditions indicate a muted response at best.”

The natural variability of just the annual global temperature average would argue against a normal probability distribution for the outcomes in our ecosphere, let alone on a regional basis. It is highly likely that there is a continuum of near-equal possibilities with extended tails in both directions. On an oscilloscope it would look like a rounded off square wave pulse with exponential rise times and fall times.

Tim G.,

I beg to differ, as global temperatures (in the face of rapidly increased CO2) have been remarkably flat, albeit with some warming.

Arguing for an even probability distribution, in the face of said stability, requires certitude in an exponential CF response to rising CO2.

RobR,

go here: http://images.remss.com/msu/msu_time_series.html

You will see significant annual variation in the average global temperature. If you expand the time series, say by 500-700 years the variation is even more pronounced.

“Arguing for an even probability distribution, in the face of said stability, requires certitude in an exponential CF response to rising CO2”

Huh? How do you figure an even probability distribution requires an exponential response of any kind driven by anything? A Gaussian distribution has an exponential term. Not all probability distributions do. See the Cauchy distribution.
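The point about the Cauchy distribution can be checked numerically. The sketch below is an illustration only, with arbitrary sample sizes and a fixed seed chosen for the example. It compares how the spread of sample means behaves for a finite-variance distribution (uniform) and for a Cauchy distribution, which has no finite mean or variance.

```python
import math
import random
import statistics


def draw_uniform(rng):
    """One draw from the uniform distribution on [0, 1)."""
    return rng.random()


def draw_cauchy(rng):
    """One draw from a standard Cauchy distribution (inverse-CDF method)."""
    return math.tan(math.pi * (rng.random() - 0.5))


def sample_means(draw, n_per_mean, n_means, rng):
    """Return n_means averages, each taken over n_per_mean independent draws."""
    return [
        statistics.fmean(draw(rng) for _ in range(n_per_mean))
        for _ in range(n_means)
    ]


def interquartile_range(values):
    """Spread measure that stays meaningful even for heavy-tailed data."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    return q3 - q1


rng = random.Random(0)   # fixed seed so repeated runs give the same numbers
uniform_iqr = interquartile_range(sample_means(draw_uniform, 100, 2000, rng))
cauchy_iqr = interquartile_range(sample_means(draw_cauchy, 100, 2000, rng))

print(f"IQR of means of 100 uniform draws: {uniform_iqr:.3f}")
print(f"IQR of means of 100 Cauchy draws:  {cauchy_iqr:.3f}")
```

Averaging narrows the uniform case sharply, as the central limit theorem predicts. The averaged Cauchy draws stay about as spread out as single draws, so more averaging does not buy a tighter result there.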

RobR,

The normal distribution is a very specific distribution, it is not just the general idea that dramatic change is less likely to occur than one closer to current reference.

Specifically, the central limit theorem (which justifies the assumption of normality) applies to “any set of variates with any distribution having a finite mean and variance tends to the normal distribution.” (http://mathworld.wolfram.com/NormalDistribution.html). The problem is that this describes a set of variables, not a phenomenon under investigation.

So the set of variables that fully describe climate will obey the theorem, and so will any set of explanatory variables we may choose to invoke to explain that phenomenon. But since our collection of explanatory variables is almost certainly incomplete for a complex problem, the two distributions won’t line up. This will skew your observation, because it then isn’t governed by a fixed set of variables that obey the theorem.

That’s why Zipfian (long tailed) distributions are the norm. That means that, even though you might pin down the main drivers, the smaller ones can always have a larger effect on the outcome, since the harmonic series doesn’t converge, and the more terms you have, the quicker this can happen.

Six sigma may work in the engineering world, but not in the world of observing complex phenomena.

Rob, I can’t speak at all to how the climate self-adjusts to perturbations, or what clouds might do.

The climate does seem to be pretty stable — at least presently. How that is achieved is the big question.

Possibly if we believe cloud formation is caused by many independent random variables. This is central limit theorem.

There lies the rub: There’s no reason to believe “cloud formation is caused by many independent random variables”.

Surface irregularities creating local air turbulence. Humidity-driven vertical convection. Liquid water droplet (fog) density-driven vertical convection. Solar-heat internal cloud vertical convection. Water condensation via local temperatures. Solar vaporization of cloud droplets. Local air pressure variations. Wind driven evaporation of fog. Microclimate interactions. Rain. Wind-driven particulates. Ionization from electrical charges. Warmed surface convection currents. Mixing of air currents. Asymmetry throughout. Then add nighttime radiation to a non-uniform 4K night sky.

RobR

“Question: Given current and past temperature stability during ever-increasing CO2 fractions, should we not expect the probability of cloud formation to follow a normal distribution curve.”

No. We would probably find that the distribution is log normal if we looked at it. Many years ago I studied airborne radioactivity emission distributions and found that almost always the distributions were log normal.

There is so much chaos, uncertainty and feedbacks in the Earth’s climate it really doesn’t matter what the climate modelers do or don’t do, they will never get it right.. or even close.. ever.

Touché!

Yes rbabcock,

In Nick Stokes’ recent post I alluded to that when I asked —

“Nick,

Your diagram of Lorenz attractor shows only 2 loci of quasi-stability, how many does our climate have? And what evidence do you have to show this.” [corrected the dumb speling mastaeke]

To which the answer was —

Nick Stokes

September 16, 2019 at 11:04 pm

“Well, that gets into tipping points and all that. And the answer is, nobody knows. Points of stability are not a big feature of the scenario runs performed to date.”

So we don’t know how many stable loci there are, nor do we know the probable way the climate moves between them. Not so much truly known about our climate then, especially the why, when, and how the climate changes direction. So just how can you possibly model long term climate with so much ignorance of the basic mechanisms?

“So just how can you possibly model long term climate with so much ignorance of the basic mechanisms?”

Easy: “🎶Razzle-dazzle ’em!🎶”

I have been wondering this for years.

Nick said, “Points of stability are not a big feature…to date.”

They are everything in a complex non-linear system. We simply cannot assess what is “not normal” if we do not know what is normal, and understand the bounds of “normal” – i.e., for each locus there will be a large number (essentially an infinite number) of bounded states available, all perfectly “normal,” and at some point, the system will flip to another attractor.

We know of two loci over the last 800,000 years: quasi-regular glacial and interglacials (e.g., see NOAA representation here: ).


We really can’t model “climate” unless we can model that.

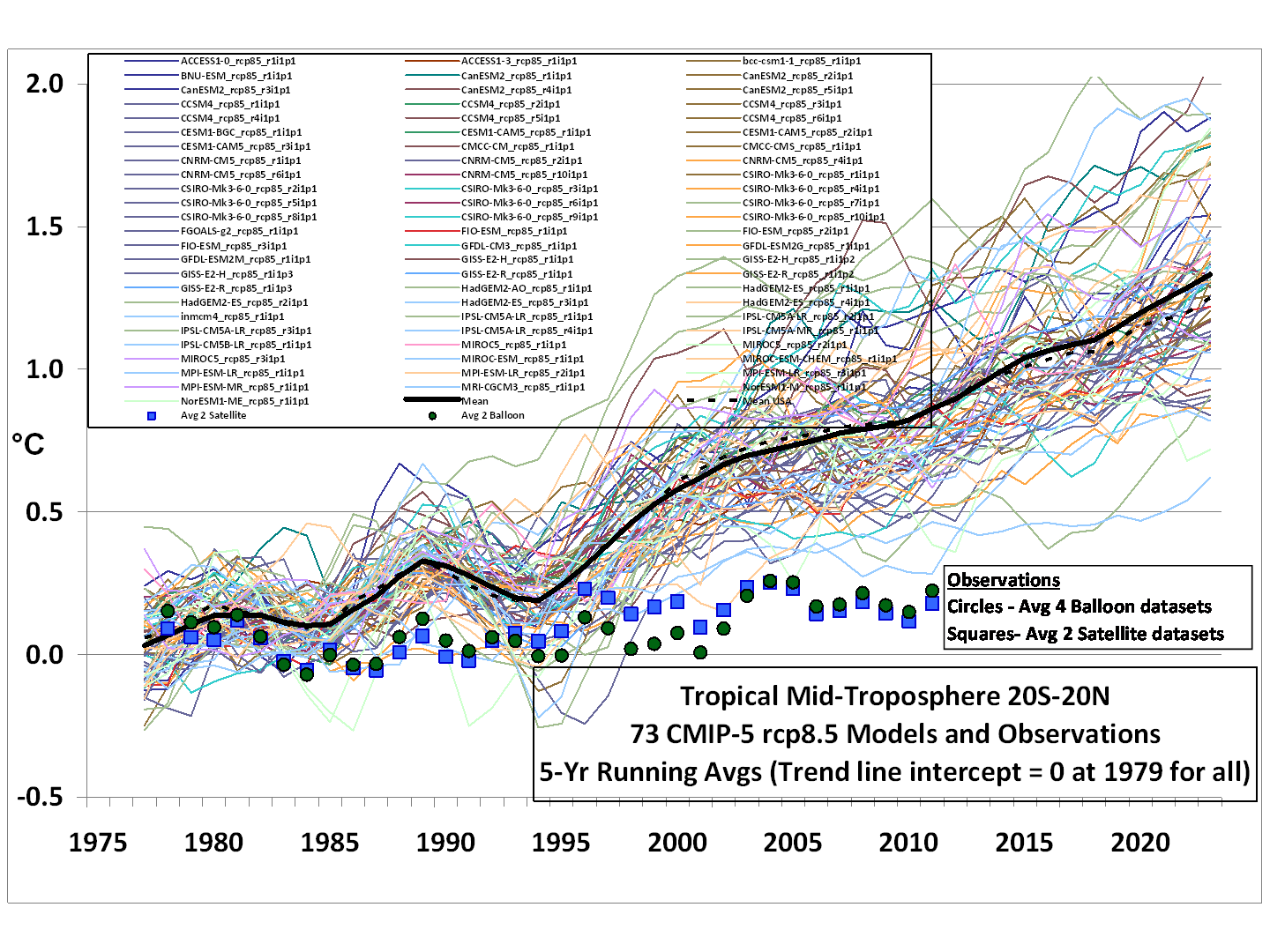

never…..when you do this to the input data

…you get this as the result

But the GCMs are so beautiful we must keep using them even if they disagree with reality. We can’t have spent all this money on nothing.

Honestly, the GCMs have failed to accurately predict anything for the past 30 years. Have we found the main root cause, or is this one of 100 causes in the chain, any one of which causes them to fail?

“We can’t have spent all this money on nothing.”

You are correct. It was spent to justify arbitrary taxation by the UN, politicians and bureaucrats across the globe. Everyone responsible for this scam should be forced to find real work, especially the politicians.

Eric Barnes

” Everyone responsible for this scam should be forced to find real work, …”

Like breaking rocks on a chain gang!

“But the GCMs are so beautiful we must keep using them even if they disagree with reality. We can’t have spent all this money on nothing.”

That may be the funniest comment I have heard this month!

Bravo!

Unfortunately, like much comedy, it is also incredibly sad when you really think about it for a while.

Dr Frank,

I think you have taken a wise path by directly addressing the concern raised by Dr. Spencer (i.e., that with each model iteration, inputs change in response to model outputs and those changes are not adequately modeled), Well done.

I agree. While Frank’s explanation is rather wordy for us lay folk, I noted to Nick Stokes, the difference between a CFD equation used in the normal sense, and the application of CFD in weather and GCMs, is that industrial CFDs use known parameters to calculate the unknown. GCMs use a bunch of unknowns to calculate a desired outcome. It is a matter of days before the error in the CFD/GCM used in weather “blows up” as Nick put it … and that is because the parameters become more and more unknown over time. It stands to reason, if the equation blows up in a few days, it doesn’t stand a chance at calculating 100 years.

As such, all of the “gargoyles” of a GCM are nothing more than decoration. The reality is, the GCM ends up being a linear calculation based on the creator’s input of an Estimated Climate Sensitivity to CO2. Frank just made a linear equation using the same premise. For that matter, the other part of Frank’s paper dealing with a GCM calculating CF as a function of CO2 forcing is a false assumption. We have no empiric proof that CO2 forcing has doodley squat impact on CF. … thus its imaginary number for LWCF is just fiction. But … it doesn’t matter, it is the ECS that counts.

Good Job Dr. Frank

“that industrial CFDs use known parameters to calculate the unknown”

I don’t think you know anything about industrial CFD. Parameters are never known with any certainty. Take for example the modelling of an ICE, which is very big business. You need a model of combustion kinetics. Do you think that is known with certainty? You don’t, of course, even know the properties of the fuel that is being used in any instance. They also have a problem of modelling IR, which is important. The smokiness of the gas is a factor.

Which raises the other point in CFD simulations; even if you could get perfect knowledge in analysing a notional experiment, it varies as soon as you try to apply it. What is the turbulence of the oncoming flow for an aircraft wing? What is the temperature, even? Etc.

“It is a matter of days before the error in the CFD/GCM used in weather “blows up” as Nick put it”

No, it isn’t, and I didn’t. That is actually the point of GCM’s. They go beyond the time when weather can be predicted, but they don’t blow up. They keep calculating perfectly reasonable weather. It isn’t a reliable forecast any more, but it has the same statistical characteristics, which is what determines climate.

LOL!!!

Stokes thinks our climate is as simple as an ICE! Priceless!

Nick, sometimes it is best to say nothing, rather than prove yourself to be a zealot and a fool. Please learn this lesson, and grow up.

See Nick. See shark. See Nick jump shark. Jump Nick, jump! LOL

“…You don’t, of course, even know the properties of the fuel that is being used in any instance…”

Of course you do. You think GM or BMW or whoever doesn’t know if it will be fed by E85, kerosene, Pepsi, or unicorn farts?

“…That is actually the point of GCM’s. They go beyond the time when weather can be predicted, but they don’t blow up. They keep calculating perfectly reasonable weather…”

Climate models don’t do weather.

You could set up a bounded random weather generator that would do the same thing; that doesn’t mean it has any practical application.

“GM or BMW or whoever doesn’t know”

They don’t know exactly what owners are going to put in the tank in terms of fuel type, octane number etc. But there are a lot of properties that matter. Fuel viscosity varies and is temperature dependent. It’s pretty hard to even get an accurate figure to put into a model, let alone know what it will be in the wild. Volatility is very dependent on temperature – what should it be? Etc.

Due to the needs of engineering, a great deal of experimental work goes in to determining relevant physical constants of the materials. Density and viscosity of various possible fuels as a function of temperature is no different. Several examples are

https://www.researchgate.net/publication/273739764_Temperature_dependence_density_and_kinematic_viscosity_of_petrol_bioethanol_and_their_blends

and

https://pubs.acs.org/doi/abs/10.1021/ef2007936

A big difference between inputs to engineering models and climate models is that such fundamental, needed, inputs are obtainable from controlled laboratory experiments, with well characterized uncertainties. When such values are used in engineering models, error propagation can proceed with confidence.

A starter on fuel standards and definitions here:

https://www.epa.gov/gasoline-standards

Plenty more info just a google away.

“A big difference between inputs to engineering models and climate models is that such fundamental, needed, inputs are obtainable from controlled laboratory experiments, with well characterized uncertainties”

Ha! From the abstract of your first link:

” The coefficients of determination R2 have achieved high values 0.99 for temperature dependence density and from 0.89 to 0.97 for temperature dependence kinematic viscosity. The created mathematical models could be used to the predict flow behaviour of petrol, bioethanol and their blends.”

0.89 to 0.97 may be characterised, but it isn’t great. But more to the point, note the last. They use models to fill in between the sparse measurements.

“…They don’t know exactly what owners are going to put in the tank in terms of fuel type, octane number etc…”

Amazing. You’re doubling-down on possibly the dumbest comment I have ever read on this site (and that includes griff thinking 3 x 7 = 20).

Gasoline refiners and automakers have had a good handle on this for a few decades. Things are pretty well standardized and even regulated. Running a CFD on a few different octane ratings is nothing. And then a prototype can be tested using actual fuel (which is readily available). Manufacturers will even recommend a certain octane level (or higher) for some vehicles.

You really think engineers just throw their hands up in the air and start designing an ICE considering every sort of fuel possibility and then hope they get lucky? They design based on a fuel from the start.

“Running a CFD on a few different octane ratings is nothing. “

Yes, that’s how you deal with uncertainty – ensemble.

“You really think engineers just throw their hands up in the air and start designing an ICE”

I’m sure that they design with fuel expectations. But they have to accommodate uncertainty.

Written in all seriousness.

Then again this is the same individual who thinks the atmosphere is a heat pump and not a heat engine. *shrug*

“…Yes, that’s how you deal with uncertainty – ensemble…”

These are discrete analyses performed on specific and standardized formulas with known physical and chemical characteristics. The ICE design is either compatible and works with a given formulation or it doesn’t.

Unlike most climate scientists, engineers work with real-world problems. Results need to be accurate, precise, and provable. Ensembles? Pfffft. Save those for the unicorn farts.

” They don’t know exactly what owners are going to put in the tank in terms of fuel type, octane number etc. ”

Balderdash, my BMW manual tells me what the optimum fuel is for use in my high performance engine … why would an owner even contemplate using inferior fuel ?

Maybe your old banger still uses chip oil, eh Nick ?

While you’re here Nick would it be fair to say what Kevin Trenberth said in journal Nature (“Predictions of Climate”) about climate models in 2007 still stands —

Are the models initialized with realistic values before they are run?

“would it be fair to say”

Yes, and I’ve been saying it over and over. Models are not initialised to current weather state, and cannot usefully be (well, people are trying decadal predictions, which are something different). In fact, they usually have a many decades spin-up period, which is the antithesis of initialisation to get weather right. It relates to this issue of chaos. The models disconnect from their initial conditions; what you need to know is how climate comes into balance with the long term forcings.

Dear Dr. Nick Stokes (In deference to your acolytes who complained I wasn’t being sufficiently reverential.)

You said, “They keep calculating perfectly reasonable weather. It isn’t a reliable forecast any more, …” I didn’t think that there was so much difference between Aussie English and American English that you would consider unreliable to be reasonable.

Weather could be reasonable before we had reliable forecasts.

BoM finds it difficult to provide reliable weather forecasts for 24 hours ahead. How “reasonable” is that ?

Ummm … Nick … I may not be as well versed about CFDs as you, but I’m pretty proficient when it comes to ICE. There is this thing called an ECM, and it hooks to the MAP, the O2 sensor, and a few others …. and as such, the ECM adjusts the behavior based on known incoming data. That is how you get multi fuel ICE, … the ECM makes the adjustments to fuel mix and timing to ensure that the engine is running within preset parameters that were determined using … drum roll … KNOWN PARAMETERS. I would guess that a CFD was used to model the burn with those known parameters in the design phase, such that they can pre-program the ECM to make its adjustments … but again … you are talking about a live data system, with feedback and adjustment. The adjustments are made using KNOWN data and KNOWN standards.

But what I was talking about is that in fluid dynamics, you know the viscosity of the liquid, you know the diameter of the pipe, you know the pressure, you know the flow rate … you KNOW a lot of things … then your CFD shows how the liquid moves within the system. A GCM can take the current data, pressure systems, temperatures, etc, and calculate how a weather system is likely to move; however, the certainty of those models, as any meteorologist will tell you, decreases with each passing day …. and when you get out to next week, it’s pretty much just a guess. A GCM run for next year is doing nothing but using averages over time. The problem with those averages is that they have confidence intervals; there are standard deviations for each parameter. The CF for Texas in July will be, for example, 30% plus or minus 12%, thus solar insolation at the surface will be X W/m2, plus or minus Y W/m2, pressure will be … , etc etc etc. The problem with GCMs in climate is they do not express their results as plus or minus the uncertainty. They give you a single number, then they run the same model 100 times. THIS IS A FLAWED way of doing things. In order to get a true confidence interval for these calculations, you have to run the model with all possible scenarios, which will be a probability in the 10s of thousands per grid point using the uncertainty ranges for each parameter, and that for each time interval, …. which would be a guaranteed “blow up” of the CFD. Further, the error Frank points to will begin to be incorporated into the individual parameter standard deviations, … i.e., a systematic error in the system. Kabooom.

That is why it’s just easier to make a complicated program that in the end, cancels out all the parameters and uses the ECS …… same as a linear equation using the ECS.

Just sayin.

“you know the viscosity of the liquid”

I wish. You never do very well, especially its temperature dependence, let alone variation with impurities.

“you know the pressure, you know the flow rate”

Actually, mostly the CFD has to work that out. You may know the atmospheric pressure, and there may be a few manometers around, but you won’t have a proper pressure field by observation.

One needs to be clear about what one means by “model.” When it is used in the engineering sense of fitting a proposed equation to experimental data, such as the data for physical constants, “model” literally means that, a specific determinate, equation with specific parameter choices. (In the climate science regime, a more proper word would be “simulation.”) In engineering, the equation may be theoretically motivated or may simply be an explicit function that captures certain kinds of behavior such as a polynomial. That equation is then “fit” to the data and the variation of the measured values from the predicted values is minimized by that simple fit. Various conditions usually are also tested to see if the deviations from the fit correspond well or ill to what would be expected of “random” errors, such as a normal distribution. Such tests can typically show if there is some non-random systematic error either due to the apparatus or a mis-match of the equation to the behavior.

The Rsquared value provides the relative measure of the percentage of the dependent variable variance that the equation explains (from that particular experiment) over the range for which it was measured, under the conditions (i.e. underlying experimental uncertainties) of the experiment. In other words it is a measure of how well the function seems to follow the behavior of the data. A value of 0.9 in general is considered quite good in the sense that that particular equation is useful. Further, the values that come from the fit, including the uncertainty estimates, can be used directly in an error propagation of a calculation that uses the value. A more meaningful quantity for predictive purposes is the standard error of the regression, which gives the percent error to be expected from using that equation, with those parameters, to predict an outcome of another experiment. By that measure, engineering “models” typically are not considered useful unless the standard errors are less than a few percent, as is the case for the particular works cited. Experimental procedures are generally improved over time, which generally reduces the uncertainties and provides more accurate values (lower uncertainties).
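To make the distinction between Rsquared and the standard error of the regression concrete, here is a minimal Python sketch. The data points are invented for illustration and do not come from either cited paper, and expressing the standard error as a percentage of the mean measured value is only one possible convention, assumed here.

```python
import math

def linear_fit_stats(x, y):
    """Least-squares line y = a + b*x, returning slope, intercept,
    R^2 and the standard error of the regression."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    ss_res = sum(r ** 2 for r in residuals)
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    r_squared = 1.0 - ss_res / ss_tot
    # A straight line uses two fitted parameters, so n - 2 degrees of freedom.
    std_err = math.sqrt(ss_res / (n - 2))
    return slope, intercept, r_squared, std_err

# Hypothetical data: temperature (deg C) against a made-up measured property.
temps = [0.0, 10.0, 20.0, 30.0, 40.0]
values = [1.00, 0.91, 0.79, 0.71, 0.60]

slope, intercept, r2, se = linear_fit_stats(temps, values)
percent_se = 100.0 * se / (sum(values) / len(values))
print(f"R^2 = {r2:.4f}, standard error = {se:.4f} ({percent_se:.1f}% of mean)")
```

Rsquared is dimensionless and says how closely the line follows the data; the standard error carries the units of the measured quantity, which is why it is the number that can go into an error propagation.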

By contrast, one should compare the inputs to climate simulations. Of particular interest would be what is the magnitude of the standard errors associated with them, whether estimates of them are point values, or functional relations, and whether the variations from the estimate used are normally distributed or otherwise.

Nick writes

They do unless their parameters are very carefully chosen. But choosing those parameters isn’t about emulating weather, it’s about cancelling errors.

And then

The only characteristics that might be considered “reliable” are those near to today. There is no reason to believe future characteristics will be reliable and in fact the model projections have already shown them not to be reliable.

Slight correction to the previous comment: where it said the standard error measures how well the function predicts the outcome of a future measurement, it should have included the words “in an absolute sense” meaning that it gives the expected percent error in the predicted future value. In that sense it can be used directly in a propagation of error calculation. Rsquared does not serve that purpose since it only measures the closeness to which the functional form conforms to the functional form of the data.

Thanks, Andrew. Your point is in the paper, too.

Excellent summary that shows how the differential equation argumentation is just a big red herring.

“even the first simulation step enters terra incognita.”

Dr. Frank–

Your propagation of error graphs are hugely entertaining to me, showing the enormous uncertainty that develops with every step of a simulation. This is shocking to the defenders of the consensus and they bring up the artillery to focus on that wonderful expanding balloon of uncertainty.

But now with your new article on the error in the very first step, why bother propagating the error beyond the first step? Let the defenders defend against this simpler statement.

Lance Wallace

There have been complaints that the +/- 4 W uncertainty should only be applied once.

Arbitrarily select a year (Tsub0) of unknown or assumed zero uncertainty for the temperature to apply the uncertainty of cloud fraction. The resultant predicted temperature of year Tsub1, now has a known minimum uncertainty. Now, since we selected the initial year arbitrarily, what is to prevent us from repeating the selection, this time using year Tsub1? Doing so, we are required to account for the uncertainty of the cloud forcing just as we did the first time, only this time we know what the minimum uncertainty of the temperature is before doing the calculation! We can continue to do this ad infinitum. That is, as long as there is an iterative chain of calculations, the known uncertainties of variables and constants must be taken into account every time the calculations are performed. It is only systematic offsets or biases that need only be adjusted once.
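The argument above can be sketched numerically. This is only an illustration under stated assumptions: the same per-step uncertainty enters at every step, the steps are treated as independent, and the terms are combined in quadrature (root-sum-square). The function name and the choice of 100 annual steps are hypothetical, and the result stays in forcing units rather than being converted to temperature.

```python
import math

def propagate_step_uncertainty(per_step_u, n_steps):
    """Running uncertainty after each step, combining the per-step
    terms in quadrature (root-sum-square)."""
    running = []
    total_sq = 0.0
    for _ in range(n_steps):
        total_sq += per_step_u ** 2   # every step contributes its own term
        running.append(math.sqrt(total_sq))
    return running

# Assumption: the same +/- 4 (W/m^2) applies at every annual step.
per_step = 4.0
envelope = propagate_step_uncertainty(per_step, 100)
for year in (1, 2, 10, 100):
    print(year, round(envelope[year - 1], 2))
```

Under these assumptions the envelope grows as the square root of the number of steps, so it never shrinks, whichever starting year is chosen.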

A bias adjustment of known magnitude will affect the nominal value of the calculation, but not the uncertainty. However, the uncertainty, which has a range of possible values, will not affect the nominal value, but WILL affect the uncertainty.

Clyde,

“Now, since we selected the initial year arbitrarily, what is to prevent us from repeating the selection, this time using year Tsub1?”

And for year, read month. Or iteration step in the program (about 30 min). What is special about a year?

In fact, the 4 Wm⁻² was mainly spatial variation, not over time.

Stokes,

The point being that the calculations cannot be performed in real time, nor at the spatial resolution at which the measured parameters vary. Therefore, coarse resolutions of both time and area have to be adopted for practical reasons.

Other than the fact that 1 year nearly averages out the land seasonality, it could be a finer step. However, using finer temporal steps not only increases processing time, but increases the complexity of the calculation by having to use appropriate variable values for the time steps decided on. But, as is often the case with you, you have tossed a red herring into the room. Using finer temporal resolutions does not get around the requirement of accounting for the uncertainty at every time-step.

The problem with CF is just the tip of the iceberg. Also consider high altitude water vapor. As theorized by Dr. William Gray, it must also change due to any surface warming and/or changes in evaporation rates. Once again the models cannot track these changes at the needed accuracy to produce a valid result.

Just how many more of these factors exist? Each one adds exponentially to the already impossible task facing models.

It is entirely obvious that GCMs disguise a (relatively) simple relationship between CO2 and temperature with wholly unnecessary (and necessarily inaccurate) complexity. A couple of lines on an Excel sheet will do just as well in terms of forecasting temperature – delta CO2, sensitivity of temperature to changes in CO2, one multiplied by the other. All you need to know to forecast temperature with any skill is the sensitivity. GCMs can then be run to show the effects on the climate of the increase in temperature.

But a simple model does not work, because a single sensitivity figure will not both hindcast and forecast accurately, even of a long term trend that allows for a significant amount of natural variation. So we get these huge GCMs which do not improve the situation but allow modelers to hide the problem, which is that any sensitivity to CO2 is swamped by natural variation at every level we can model the physical properties of the climate.

The fundamental problem remains, that sensitivity to CO2 is still unclear, and estimates vary widely. Yet that sensitivity is absolutely the key thing we need to know if we are to understand and forecast the impact of increased CO2 on the climate. If we actually knew sensitivity, and GCMs were a reasonable approximation of our climate, there would be no debate amongst climate scientists and no reasonable basis for scepticism.

Phoenix44: “The fundamental problem remains, that sensitivity to CO2 is still unclear”

Precisely! We can’t even determine how to derive CO2 sensitivity on Mars where it makes up 95% of the atmosphere. We don’t even know what to measure so that we can begin to derive the answer.

“If we actually knew [CO2] sensitivity, and GCMs were a reasonable approximation of our climate”

Then we could prove out the GCM by inputting Mars’ parameters and verifying the resulting output.

It truly is glaring how pitiful the “science” is that has been dedicated to deriving CO2 sensitivity.

Simple and complex GCMs give the same values for climate sensitivities and also for the warming values of different RCP’s. There is no conflict. Or can you show an example that they give different results?

Yes. It’s all offsetting errors, Antero. Also known as false precision.

Kiehl JT. Twentieth century climate model response and climate sensitivity. Geophys Res Lett. 2007;34(22):L22710.

http://dx.doi.org/10.1029/2007GL031383

Abstract: Climate forcing and climate sensitivity are two key factors in understanding Earth’s climate. There is considerable interest in decreasing our uncertainty in climate sensitivity. This study explores the role of these two factors in climate simulations of the 20th century. It is found that the total anthropogenic forcing for a wide range of climate models differs by a factor of two and that the total forcing is inversely correlated to climate sensitivity. Much of the uncertainty in total anthropogenic forcing derives from a threefold range of uncertainty in the aerosol forcing used in the simulations.

p.2 “Note that the range in total anthropogenic forcing is slightly over a factor of 2, which is the same order as the uncertainty in climate sensitivity. These results explain to a large degree why models with such diverse climate sensitivities can all simulate the global anomaly in surface temperature. The magnitude of applied anthropogenic total forcing compensates for the model sensitivity.“
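The compensation Kiehl describes can be shown with a toy linear response. The numbers below are hypothetical, chosen only so that sensitivity and forcing differ by a factor of two in opposite directions; they are not taken from the paper.

```python
def warming(sensitivity, forcing):
    """Linear response: temperature change = sensitivity * forcing."""
    return sensitivity * forcing

# Hypothetical pairs: (sensitivity in K per W/m^2, total forcing in W/m^2).
models = {
    "high_sensitivity": (1.0, 0.8),
    "low_sensitivity": (0.5, 1.6),
}
for name, (s, f) in models.items():
    print(name, warming(s, f))
```

Both toy models give the same temperature change, so agreement with the 20th century record cannot by itself distinguish between them.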

An impressive and insightful treatise of errors and error propagation in the context of GCM. Thank you for explaining in plain language.

Just a few sentences in to this article, and I think I just had my “Aha!” moment I have been waiting and hoping for.

A black box.

That crystalizes it very clearly.

I think it would be more appropriately called …. [The Wiggly Box]. This is what a GCM represents.

dT=dCO2*[wiggly box]*ECS

Where dCO2 * ECS establishes the trend … and [wiggly box] adds the wiggles in the prediction line to make it look like a valid computation.

“dT=dCO2*[wiggly box]*ECS”

Even better.

Dr. Frank: “beta = sqrt[sum over(theta_S_i)^2],

“where “i” defines the sources of bias errors and theta_S is the bias range within the error source i. (my bold)”

Is it an issue that you are only evaluating a single bias error source; whereas, for the reasons previously discussed, we know the net energy budget to be essentially accurate. Errors in the LWCF are demonstrably being offset by errors in other energy flux components at each time step such that the balance is maintained (?)

How can introducing another “error” increase your understanding of the real world.

It doesn’t.

Does it allow your model to better emulate a linear forcing model? Yes. Does it model the physical world? No.

S. Geiger, please call me Pat.

I’m evaluating an uncertainty based upon the lower limit of resolution of GCMs. Off-setting errors do not improve resolution. They just hide the uncertainty in a result.

Off-setting errors do not improve the physical description. They do not ensure accurate prediction of unknown states.

In a proper uncertainty analysis, offsetting errors are combined into a total uncertainty as their root-sum-square.
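A short sketch of the difference between a net error and a root-sum-square uncertainty, using two made-up error magnitudes of equal size and opposite sign:

```python
import math

def root_sum_square(uncertainties):
    """Combine uncertainty terms in quadrature."""
    return math.sqrt(sum(u ** 2 for u in uncertainties))

# Hypothetical offsetting errors (W/m^2); the values are for illustration only.
errors = [+3.0, -3.0]
net_error = sum(errors)                       # the errors cancel in the sum
total_uncertainty = root_sum_square(errors)   # the uncertainty does not cancel
print(net_error, round(total_uncertainty, 2))
```

The signs vanish on squaring, so the combined uncertainty is larger than either term even though the net error is zero.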

An energy budget in balance in a theoretical computation of decidedly many unknowns means what? Since the historical record indicates rather large changes in cycles of 60 to 70 years, 100 years, 1000 years, 2500 years, 24000 years, 40,000 years, etc. what is the logic that says any particular range of years should find a balance in input vs output? Perhaps pre-tuning for any particular short period intrinsically introduces a large bias because that balance is not there in reality.

It is crazy to assume that a balance exists when integrated over any time span shorter than a few centuries. The obvious existence of oscillations with periods far longer than centuries guarantees periodic imbalances of inputs and outflows on grand scales.

Quote:

“I posted a long extract from relevant literature on the meaning and method of error propagation, here. Most of the papers are from engineering journals.”

I have worked 40 years in product development using measurements and simulations to improve rock drilling equipment and heavy trucks and buses.

We have a saying:

“When a measurement is presented no one but the performer thinks it is reliable, but when a simulation is presented everyone except the performer thinks it is the truth.”

Thank you for publishing results that I hope will call out the modellers to give evidence of the uncertainties in their work!

If I understand this correctly:

I find a stick on the ground that’s about a foot long. It’s somewhere between 10 and 14 inches long. I decide to measure the length of my living room to the nearest 1/16th of an inch using that stick.

My living room is exactly 20 “stick lengths” long. I can repeat the measurement experiment 10 times and get 20 sticks long. My wife can repeat the measurement experiment and get 20 sticks long. A stranger off the street gets the same result. But despite dozens of repeated experiments, I can’t be sure, at a 1/16 of an inch accuracy, how long my living room really is because of the uncertainty in the length of that stick.

I need a properly calibrated stick, a ruler or a tape measure, before I can claim to know how long my living room is at that level of accuracy. If your device used to measure something is garbage, the results are going to be incredibly uncertain.

Jim Allison, good use of the measuring stick analogy.

“I need a properly calibrated stick, a ruler or a tape measure, before I can claim to know how long my living room is at that level of accuracy.”

No, all you need is to find out the length of the stick in metres. Then you can take all your data, scale it, and you have good results. That is of course what people do when they adjust for thermal expansion of their measures, for example.

The point relevant here is that the error didn’t compound. It was out by a constant factor, even with multiple measures.

Nick Stokes: No, all you need is to find out the length of the stick in metres.

That’s true. All you ever have to do to remove uncertainty is learn the truth. For a meter stick, however, the measurement of its length will still have an unknown error. Uncertainty will be smaller than in Jim Allison’s description of the problem, maybe only +/- 0.1mm; that uncertainty compounds. He can now measure the room with perhaps only 1/16″ error.

For Pat Frank’s GCM, for which the unknown meter stick is an analogy, what is needed is a much more accurate estimate of the cloud feedback, which may not be available for a long time. It’s as though there is no way to measure the meter stick.

In this analogy, there was no error – everyone measured exactly 20 lengths. The measurement experiment delivered consistent results – it was a very precise experiment.

The uncertainty did compound though. Each stick length added to the overall uncertainty of the length of the room. Something that is exactly as long as the stick would be 10-14 inches long. If something is two “lengths” long, it would be 20-28 inches long. Twenty “lengths” means that the room is anywhere between 200-280 inches long. See how the more you measure with an uncertain instrument, the more the uncertainty increases? This is accuracy, a different beast than precision.
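The interval arithmetic in that paragraph is simple enough to write out. A minimal sketch, using the 10 to 14 inch bounds from the stick example (the function name is made up):

```python
def length_bounds(n_lengths, stick_min, stick_max):
    """Lower and upper bound on a distance measured as n stick lengths."""
    return n_lengths * stick_min, n_lengths * stick_max

for n in (1, 2, 20):
    lo, hi = length_bounds(n, 10, 14)
    print(f"{n} lengths: {lo} to {hi} inches (width {hi - lo})")
```

The count of stick lengths is perfectly repeatable, yet the width of the interval scales with that count.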

I agree with you that if we could remove the uncertainty of the stick, and cloud formation, then we could find out exactly how long my room is and have better climate models. But billions of dollars later, we’re still scratching our heads at how much carpet I need to buy, and what climate will do in the future.

“The point relevant here is that the error didn’t compound. It was out by a constant factor, even with multiple measures.”

Huh? Did you stop to think for even a second before posting this? If the stick is off by an inch then the first measurement will be off by an inch. The second measurement will be off by that first inch plus another inch! And so on. The final measurement will be off by 1inch multiplied by the number of measurements made! The error certainly compounds!

It is the same with uncertainty. If the uncertainty of the first measurement is +/- 1 inch then the uncertainty of the second measurement will be the first uncertainty doubled. If the uncertainty of the first measurement is +/- 1 inch, i.e. from 11 inches to 13 inches, then the second measurement will have an uncertainty of 11 inches +/- 1 inch, i.e. 10 inches to 12 inches, coupled with 13 inches +/- 1 inch, or 12 inches to 14 inches. So the total uncertainty will range from 10 inches to 14 inches, or double the uncertainty of the first measurement. Sooner or later the uncertainty will overwhelm the measurement tool itself!

It’s simply a matter of units. If the stick is in fact marked with some antique marking like feet and inches, you’d still have a consistent measure, with no compounding. And when you looked up, in some dusty tome, the units conversion factor, you could do a complete conversion. Or if your carpet supplier happened to remember these units, maybe he could still meet your order.

“It’s simply a matter of units. If the stick is in fact marked with some antique marking like feet and inches, you’d still have a consistent measure, with no compounding.”

You *still* don’t get it about uncertainty, do you? It’s *not* just a matter of units. You are specifying the total length in *inches*, not in some unique unit. The uncertainty of the length of the measuring stick in inches is the issue. Remember, there are no markings on the stick, it is assumed to be 12 inches long no matter how long it actually is, that is the origin of the uncertainty interval! And that uncertainty interval does add with each iteration of using the stick!

I don’t believe that you can be this obtuse on purpose. Are you having fun trolling everyone?

Tim +1

Nick Stokes –> “you’d still have a consistent measure, with no compounding”. You are still dealing with error, not uncertainty.

Measurement errors occur when you are measuring the same thing with the same device multiple times. You can develop a probability distribution and calculate a value of how accurate the mean is and then assume this is the “true value”. This doesn’t include calibration error or how close the “true value” as measured is to the defined value.

You’ll note that these measurement errors do not compound, they provide a probability distribution. However, the calibration uncertainty is a systemic error that never disappears.

The mean also carries the calibration uncertainty since there is no way to eliminate it. In fact, it is possible that the uncertainty CAN compound. It can compound in either direction or it is possible that the uncertainties DO offset. The point is that you have no way to know or determine the uncertainty in the final value. The main thing is measuring the same thing, multiple times with the same device.

Now, for compounding, do the following: go outside and get a stick from a tree. Break it off to what you think is 12 inches. Visually assess the length and write down what you think may be the uncertainty value as compared to the NIST standard for 12 inches. Then go make 10 consecutive measurements to obtain a length of 120 inches. How would you convey the errors in that? You could assume the measurement errors offset because you may overlap the line you drew for the previous measurement one time while you missed the line the next time so that the errors offset. It would be up to you to do the sequence multiple times and assess the differences and define a +/- error component to the measurement error.

Now, how about calibration error? Every time you take a sequential measurement the calibration error will add to the previous measurement. Why? When you check your stick against the NIST standard let’s assume that it comes out 13 inches (+/- an uncertainty by the way). Your end result will be 1 inch times 10 measurements = 10 inches off. The 1 inch adds each time you make a sequential measurement! This is what uncertainty does.

Jim Gorman

Not only is there an inherent potential error in the assumed length of the measuring stick, which is additive or systematic, but the error in placing the stick on the ground is a random error that varies every time it is done, and is propagated as a probability distribution.
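The two kinds of error can be put side by side in a small sketch. It assumes the 1 inch calibration offset from the 13 inch stick example above, a made-up 0.1 inch standard deviation for placing the stick, and that independent placement errors combine in quadrature; none of these numbers are measured values.

```python
import math

def accumulated_errors(n, calibration_offset, placement_sigma):
    """Systematic and random contributions after n end-to-end placements."""
    systematic = n * calibration_offset                # same sign every time: adds linearly
    random_part = math.sqrt(n) * placement_sigma       # independent: adds in quadrature
    return systematic, random_part

# Assumptions: stick is 1 inch off nominal; placement sigma of 0.1 inch is hypothetical.
for n in (1, 10, 100):
    systematic, random_part = accumulated_errors(n, 1.0, 0.1)
    print(n, systematic, round(random_part, 3))
```

Repeating the whole sequence many times narrows down the random part, but it leaves the systematic part untouched.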

Actually, in the sense of climate models, this isn’t relevant.

Take a metal rod, we measure it with a ruler we believe to be 100cm but we’re uncertain so it’s really 90cm +/- 10cm. The rod measures 10 ruler lengths so its length is 1000cm +/-100cm. That’s quite a bit of uncertainty!

Now, we paint the stick black and leave it in the sun (the black paint being analogous to adding co2). We remeasure and find it 10cm longer. Since this is less than the +/- 100cm original length of the rod it’s claimed that the uncertainty overwhelms the result.

But no, we can definitively say it’s longer and, if the expansion is roughly proportional, the uncertainty of the expansion is +/- 1cm.
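The arithmetic behind that claim, as a sketch. It takes the commenter's figures of 1000cm +/- 100cm and 10cm at face value and assumes, as the comment does, that the ruler's scale error is a fixed fraction that applies equally to both measurements.

```python
# Figures from the rod example; the proportionality is the commenter's assumption.
rod_length = 1000.0      # cm, measured with the uncertain ruler
rod_uncertainty = 100.0  # cm
expansion = 10.0         # cm, difference between the two measurements

relative_uncertainty = rod_uncertainty / rod_length          # fraction of the reading
expansion_uncertainty = relative_uncertainty * expansion     # same fraction of the difference
print(f"expansion = {expansion} cm +/- {expansion_uncertainty} cm")
```

This holds only for an error that is a constant scale factor shared by both measurements; an uncertainty that enters independently at each step would not drop out of the difference this way.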

The GCMs don’t stand on their own. They are also calibrated (which from what Pat quotes below suggests the uncertainty doesn’t propagate) and we only want to know the difference when varying a parameter (in this case co2). So putting the uncertainty of an uncalibrated model around the difference from 2 runs of a calibrated model doesn’t seem to be relevant. Perhaps that’s why he has the +/-15C around the 3C anomaly but can’t explain exactly what it means?

Brigitte, it is known there is a +/- 12.1% error in annual cloud fraction in the GCMs. Put another way, the models include a cloud fraction that could be 12.1% higher or lower than reality. The GCMs apply a cloud fraction at every iterative step, i.e. annually. So the resulting temperature after the first iteration is affected by this +/- 12.1% in CF which maps to a +/- 4 W m-2 in forcing. Then in year 2 the GCM applies a cloud fraction which again has the +/- 12.1% error which further affects the temperature output in year 2. And again in year 3, etc. In this way, that one imperfection in understanding of cloud fraction propagates into huge uncertainty in the output temperature.

Exactly right, Kenneth, thanks. 🙂

Quote from the story: “We know the total tropospheric cloud feedback effect of the global 67% in cloud cover is about -25 W/m^2.” That is not cloud feedback, it is cloud forcing. They are two different concepts. Cloud forcing is the sum of two opposite effects of clouds on the radiation budget on the surface. Clouds reduce the incoming solar insolation in the energy budget (my research studies) from 287.2 W/m^2 in the clear sky to 240 W/m^2 in all-sky. At the same time, the GH effect increases from 128.1 W/m^2 to 155.6 W/m^2, and thus the change is +27.2 W/m^2. The net effect of clouds in all-sky conditions is cooling by -19.8 W/m^2. A quite big variety of cloud forcing numbers can be found in the scientific literature from -17.0 to -28.0 W/m^2.

Cloud feedback is a measure in which way cloud forcing varies when the climatic conditions change along the time and mainly in which way it varies according to varying global temperatures.

IPCC has written this way in AR4, 2007 (Ch. 8, p. 633): “Using feedback parameters from Figure 8.14, it can be estimated that in the presence of water vapour, lapse rate and surface albedo feedbacks, but in the absence of cloud feedbacks, current GCMs would predict a climate sensitivity (±1 standard deviation) of roughly 1.9°C ± 0.15°C (ignoring spread from radiative forcing differences).” My comment: this is TCS, which is a better measure of warming in the century-scale than ECS.

In AR5 the TCS value is still in the range from 1.8°C to 1.9°C. It means that according to IPCC cloud forcing has been the same since AR4 and there is no cloud feedback applied. It means that the IPCC does not know in which way cloud forcing would change as the temperature changes.

I think that Pat Frank is driving himself into deeper problems.

Antero, your post contradicts itself.

You wrote, “Quote from the story: “We know the total tropospheric cloud feedback effect of the global 67% in cloud cover is about -25 W/m^2.” That is not cloud feedback, it is cloud forcing. ”

Followed by, “Cloud feedback is a measure in which way cloud forcing varies when the climatic conditions change along the time and mainly in which way it varies according to varying global temperatures.”

So, net cloud forcing is not a feedback, except that it’s a result of cloud feedback. Very clear, that.

I discussed net cloud forcing as the overall effect of cloud feedback, pretty much the way it’s discussed in the literature (Hartmann, et al., 1992, for example: https://doi.org/10.1175/1520-0442(1992)0052.0.CO;2). Your explanation pretty much agrees with it, while your claim expresses disagreement.

It seems to me you’re manufacturing a false objection.

There has to be a media blitz about this. These alarmists are pouring it on thick right now. The claim that the turning point has arrived is true in one regard. They should be shown as the frauds they are, and it is they who have run out of time with their shams.

This absolutely must become major news.

Dr. Frank,

This is very helpful. Bottom line, I take this to mean that even if one conceded that GCM tuning eliminated errors over the interval where we supposedly have accurate GAST data, the limited resolving power of the models means that projections of temperature impacts solely related to incremental CO2 forcing are essentially meaningless. Thank you.

You’ve pretty much got it, Frank. Tuning the model just gets the known observables right. It doesn’t get the underlying physical description right.

That means there’s a large uncertainty even in the tuned simulation of the known observables, because getting them right tells us little or nothing about the physical processes that produced them.

It doesn’t solve the underlying problem of physical ignorance.

And then, when the simulation is projected into the future, there’s no telling how far away from correct the predictions are.

Pat F., I like your style! Accumulated errors are the bane of scientists in whatever sector they reside. Yes, I have had occasions where I predicted a great gold ore intercept and when the drill core was coming out, was forced to say “what the hell is this?”. What a complex topic the climate is in general, and then try to add anthropogenic forcing and feedback and it’s impossible.

The uncertainty monster bites again! 🙂

Consequences for you, Ron, but not for climate modelers.

This is obvious to anybody that has given thought to how GCMs have been programmed. GHGs are the only “known” factor affecting climate that can be projected. GCMs take the different GHG concentration pathways (RCPs) and project a temperature change. Although very complex, GCMs produce a trivial result.

Yet they appear to be dominating the social and political discourse. In the Paris 2015 agreement most nations on Earth agreed to commit towards limiting global warming partly by reducing GHG emissions. The main evidence that GHGs are responsible for the warming are GCMs.

When most people believe [in] something, the fact that it is [not] real is small consolation to those that don’t believe. On this planet you can lose your head for refusing to believe [in] something that is not real. Literally.

…and the proof is

When you put this in….

..you get this out…

Exactly, the effect of man-made CO2 is so small, relative to the error in estimating low-level cloud cover, it can never be seen or estimated. I think everyone agrees with that. To me, Dr. Stokes and Dr. Spencer are clouding (pun fully intentional) the issue with abstruse, and mostly irrelevant arguments.

How much does cloudiness increase during the peak of galactic cosmic radiation at minimum solar activity? How much does ionization in the lower stratosphere and upper troposphere increase?

http://sol.spacenvironment.net/raps_ops/current_files/Cutoff.html

http://cosmicrays.oulu.fi/webform/monitor.gif

“We also know that the annual average increase in CO₂ forcing is about 0.035 W/m^2.”

The GCM operators have pulled this number from obscurity. This flux cannot be calculated from First Principles, so where did they get it? I assume it is based on the increase in CO2 since 1880, or some year, and the increase in Global Average Surface Temperature from 1880, or some year. These are hugely unscientific assumptions.

It is a good question from which source Pat Frank has taken this CO2 forcing value of 0.035 W/m^2. As we know very well, the IPCC has used since TAR the CO2 forcing equation from Myhre et al., and it is simply

RF = 5.35 * ln (CO2/280), where CO2 is the CO2 concentration in ppm.

This equation gives the climate sensitivity forcing of 3.7 W/m^2 for a doubling of CO2, which is one of the best-known figures among climate change scientists. Gavin Schmidt calls it “a canonic” figure, meaning that its correctness is beyond question. A concentration of 400 ppm gives an RF value of 1.91 W/m^2. According to the original IPCC science, the CO2 concentration has increased to this present number since the year 1750. That is a time span of 265 years to 2015 and an average annual increase of 0.0072 W/m^2. So it is a very good question: from which source does this figure of 0.035 W/m^2 come?

I figured it out, maybe. If the annual CO2 increase is 2.6 ppm, then the annual RF value for CO2 is 0.035 W/m^2. It cannot be called an average value; it is at the high end of the present annual CO2 growth variations. The long-term annual CO2 growth rate has been something like 2.2 ppm.
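The arithmetic in this exchange can be checked with a short sketch. It assumes only the Myhre et al. expression quoted above; the function name and the 400 ppm baseline used for the one-year step are illustrative assumptions, not something taken from the paper.

```python
import math

def co2_forcing(c_ppm, c_ref_ppm=280.0):
    """RF = 5.35 * ln(C / C_ref) in W/m^2, the expression quoted above."""
    return 5.35 * math.log(c_ppm / c_ref_ppm)

# Forcing for doubled CO2 (the "3.7 W/m^2" figure).
doubling = co2_forcing(560.0)

# Forcing at 400 ppm relative to 280 ppm, and the same spread over 265 years.
since_1750 = co2_forcing(400.0)
per_year_265 = since_1750 / 265

# One-year steps from an assumed 400 ppm baseline (hypothetical choice).
step_2p6 = co2_forcing(402.6, 400.0)  # +2.6 ppm in a year
step_2p2 = co2_forcing(402.2, 400.0)  # +2.2 ppm in a year

print(f"doubling:          {doubling:.2f} W/m^2")
print(f"400 ppm vs 280:    {since_1750:.2f} W/m^2")
print(f"per year, 265 yr:  {per_year_265:.4f} W/m^2")
print(f"+2.6 ppm in 1 yr:  {step_2p6:.4f} W/m^2")
print(f"+2.2 ppm in 1 yr:  {step_2p2:.4f} W/m^2")
```

Under these assumptions the 2.6 ppm step lands near 0.035 W/m^2 and the 265-year average near 0.0072 W/m^2, matching the figures in the comments. The result for a one-year step depends on the baseline concentration chosen.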

It’s the average change in GHG forcing since 1979, Antero.

The source of that number is given in the abstract and on page 9 of the paper.

But how was it calculated?

So I read Page 9 of your paper. Language a bit thick, but it did mention temperature records, sounded like from 1900 or thereabouts, or was it 1750?

So, all the GCM’s ignore the concept of Natural Variation, ascribe all warming from some year to CO2 “Forcing?”

This is not science, this is Chicken Little, “The Sky is Falling!!!”

What if it starts to cool a bit? GCM’s pack it in and go home?

This is ludicrous, and I do not mean Tesla’s Ludicrous Speed…

Michael, it’s just the sum of all the annual increases in GHG forcing since 1979, divided by the number of years.

That gives the average annual increase in forcing since 1979.
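As a minimal sketch of that calculation: the function below averages year-to-year increases in a forcing series. The function name is hypothetical, and the numbers in `example` are made-up placeholders to show the mechanics, not the GHG forcing data used in the paper.

```python
def mean_annual_increase(forcing_by_year):
    """Sum of year-to-year increases divided by the number of yearly steps.

    Assumes the keys are consecutive years and the values are forcing in W/m^2.
    """
    years = sorted(forcing_by_year)
    increments = [
        forcing_by_year[later] - forcing_by_year[earlier]
        for earlier, later in zip(years, years[1:])
    ]
    return sum(increments) / len(increments)

# Hypothetical placeholder values, for illustration only.
example = {1979: 1.00, 1980: 1.03, 1981: 1.07, 1982: 1.10}
avg = mean_annual_increase(example)
print(f"{avg:.4f} W/m^2 per year")
```

Because the yearly increases telescope, the result equals the last value minus the first, divided by the number of yearly steps.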

“Michael, it’s just the sum of all the annual increases in GHG forcing since 1979, divided by the number of years.

That gives the average annual increase in forcing since 1979.”

This tells us nothing. How is a GHG “forcing” measured? I suggest that it cannot be.

It is fine to try to beat the GCM operators at their own game. Fun, a challenge, a debate.

Do none of you know anything about radiation?

“We also know the annual average increase in CO2 forcing is about 0.035 W/m2.”

We know no such thing! Bring this number back, show me that it is not, instead, 0.00 W/m2!

From where came this number? Not from First Principles. This is the crux of the matter, no one has established the physics of any CO2 forcing whatsoever, all from unscientific assumptions that All of the Warming from some year, 1750, 1880, 1979, was caused by CO2!!!

Freaking kidding me, billions and billions of dollars on fake science?

What are you people doing?????

I think there is first principle support for CO2 forcing (e.g.: http://climateknowledge.org/figures/Rood_Climate_Change_AOSS480_Documents/Ramanathan_Coakley_Radiative_Convection_RevGeophys_%201978.pdf).

I think there are huge problems with the modeling (but I’m not sure the physics behind the hypothesized CO2-forcing is one of them):

The uncertainty issue (across a multitude of inputs, not just LWCF), the fact that temperature anomalies may be modeled by a relatively simple linear relation to GHG forcing (implicating a modeling bias that mutes the actual complex non-linear nature of the climate to ensure temperature goes up with CO2), the lack of fundamental understanding of the character of equilibrium states of the climate across glacial and inter-glacial periods, among a myriad of other issues, along with uncertainties associated with GHG-forcing estimates, but not the physics of GHG-forcing.

“The absorption coefficient (K) is independent of wavelength”??? It most certainly is not, and they do not derive it, just pull it from obscurity.

Please, someone, anyone, tell me where this number 0.035 W/m2 comes from. I suggest that there is no evidence showing that, instead, it is not 0.000 W/m2.

Is it so hard to search the paper, Michael?

From the abstract: “This annual (+/-)4 Wm^-2 simulation uncertainty is (+/-)114 x larger than the annual average ~0.035 Wm^-2 change in tropospheric thermal energy flux produced by increasing GHG forcing since 1979.”

Page 9: “the average annual ~0.035 Wm^-2 year^-1 increase in greenhouse gas forcing since 1979”

The average since 1979. Is that really so hard?
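The factor of 114 in the abstract is simply the ratio of the two quoted numbers. A quick check, using only the values quoted above (the variable names are illustrative):

```python
# Values as quoted from the abstract, in W/m^2.
simulation_uncertainty = 4.0     # annual (+/-) simulation uncertainty
annual_ghg_increase = 0.035      # average annual GHG forcing increase since 1979

ratio = simulation_uncertainty / annual_ghg_increase
print(round(ratio))  # 114
```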

I posted some more recent numbers here Michael.

It’s no mystery. It just takes checking the paper.

Pat Frank,

Non-responsive. You are a physicist. Someone else must have written down this number and you have posted it here, not questioning its derivation.

Once again, I suggest that it could just as easily be 0.000 W/m2.

“The average since 1979. Is that really so hard?” Are you giving me a simple statement of fact? Facts according to whom, derived how?

This is the basis of your entire contention, but it comes from just an assumption that all the warming since 1979, or some other year, is due to CO2.

This is bizarre now, a simple question, prove to us that this number has been established from First Principles.

I want to shoot down the entire basis of CAGW from CO2. Seems like you do too. There is no obvious physical basis. Have you considered this, or are you just debating the GCM’s?

Wow…

Alright, so I followed your link, from the EPA?

You are not a physicist at all. No physicist would just write down a number without being able to back it up, as I was taught in engineering school.

Back it up.

This is the entire basis of your paper, which, if true, could be a huge victory, help stop this gigantic fraud. If you cannot back it up, you got nothing, just debating statistics, another soft science.

You understand that what the value actually is doesn’t really matter, don’t you? Dr Frank took the value used in the climate models to develop his emulation and to show that the uncertainty overwhelms the ability of the models to predict anything. He wasn’t trying to validate the value, he was trying to calculate the uncertainty. When you are playing away from home, you use the home team’s ball, so to speak.

It’s an empirical number, Michael. Do you understand what that means?

I didn’t “write it down.” It’s a per-year average of forcing increase, since 1979, taken from known values. Is that too hard for you to understand?

Other people here have had trouble understanding per-year averages. And now you, too. Maybe it’s contagious. I hope not.

Using a mathematical model of a physical process is a valid endeavor when the model matches reality to the degree of accuracy required for practical uses. A model can be created with any combination of inductive and deductive reasoning, but any model can only be validated by comparing its predictions against careful observations of the physical world. A model’s inputs and outputs are data taken and compared to reality. A model’s mathematics follow processes that can be observed through physical experimentation.

A good example of such a model is used to explain the workings of a semiconductor transistor. Without such a proven-useful model all of our complex electronic devices would have been impossible to develop.

GCM’s fail the basic premises of modeling. There practically can never be a complete input data set due to their gridded nature. Their architecture includes processes that have not been observed in nature. Their outputs do not match observed reality. They have no practical use for explaining the climate or predicting how it will change. They are simply devices to give a sciency feel to political propaganda.

Explaining why they are failures is interesting to a point. But after decades of valiant attempts to explain their obvious shortcomings they still are in widespread use (and misuse). This fact only bolsters the argument that they are not for scientific uses but political tools.

They are doing tremendous political damage – a class already skittish with any physics, will become anti-scientific. To a modern industrial civilization, that is Aztec poison.

An Engineer’s Critique of Global Warming ‘Science’

Questioning the CAGW* theory

http://rps3.com/Files/AGW/EngrCritique.AGW-Science.v4.3.pdf

Using Computer Models to Predict Future Climate Changes

Engineers and Scientists know that you cannot merely extrapolate data that are scattered due to chaotic effects. So, scientists propose a theory, model it to predict and then turn the dials to match the model to the historic data. They then use the model to predict the future.

A big problem with the Scientist – he falls in love with the theory. If new data does not fit his prediction, he refuses to drop the theory, he just continues to tweak the dials. Instead, an Engineer looks for another theory, or refuses to predict – Hey, his decisions have consequences.

The lesson here is one that applies to risk management

This article is a tour de force. It is a single broadside that reduces the enemy’s pretty ship, bristling with guns and fluttering sails, to a gutted, burning, sinking hull. Hopefully we will see people abandoning ship very soon.

Matthew, It shows how much money and time can be spent to replace something that can be done with a linear extrapolation or a ruler and graph paper. GCM’s are models built by a U.N. committee of scientists and they look exactly like that.