Geoff Sherrington

Part One opened with 3 assertions.

“It is generally agreed that the usefulness of measurement results, and thus much of the information that we provide as an institution, is to a large extent determined by the quality of the statements of uncertainty that accompany them.”

“The uncertainty in the result of a measurement generally consists of several components which may be grouped into two categories according to the way in which their numerical value is estimated: A. those which are evaluated by statistical methods, B. those which are evaluated by other means.”

“Dissent. Science benefits from dissent within the scientific community to sharpen ideas and thinking. Scientists’ ability to freely voice the legitimate disagreement that improves science should not be constrained. Transparency in sharing science. Transparency underpins the robust generation of knowledge and promotes accountability to the American public. Federal scientists should be able to speak freely, if they wish, about their unclassified research, including to members of the press.”

They led to a question that Australia’s Bureau of Meteorology, BOM, has been answering in stages for some years.

“If a person seeks to know the separation of two daily temperatures in degrees C that allows a confident claim that the two temperatures are different statistically, by how much would the two values be separated?”

Part Two now addresses the more mathematical topics of the first two assertions.

In short, what is the proper magnitude of the uncertainty associated with such routine daily temperature measurements? (From here, the scope has widened from a single observation at a single station, to multiple years of observations at many stations globally.)

We start with where Part One ceased.

Dr David Jones emailed me on June 9, 2009 with this sentence:

“Your analogy between a 0.1C difference and a 0.1C/decade trend makes no sense either – the law of large numbers or central limit theorem tells you that random errors have a tiny effect on aggregated values.”

The Law of Large Numbers (LOLN) and the Central Limit Theorem (CLT) are often used to justify small estimates of measurement uncertainty. A typical summary runs like this: "the uncertainty of a single measurement might be +/-0.5°C, but if we take many measurements and average them, the uncertainty can become smaller."

This thinking bears on the BOM table of uncertainty estimates shown in Part One and discussed further below.

If the uncertainty of a single reading is indeed +/-0.5°C, then what mechanism is at work to reduce the uncertainty of multiple observations to lower numbers such as +/-0.19°C? It has to be almost total reliance on the CLT. If so, is this reliance justified?
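For scale, here is a minimal sketch of the arithmetic the CLT argument relies on, assuming the textbook case: n readings of the same quantity whose errors are independent, purely random and share one standard uncertainty, with both ± figures treated as the same kind of uncertainty. Under those assumptions the uncertainty of the mean falls as 1/√n. The function names are hypothetical, and the sketch says nothing about how BOM actually derived its table.

```python
import math


def uncertainty_of_mean(u_single: float, n: int) -> float:
    """u / sqrt(n): valid only for n independent, purely random errors."""
    return u_single / math.sqrt(n)


def readings_needed(u_single: float, u_target: float) -> int:
    """Smallest n for which u_single / sqrt(n) <= u_target, under the same assumption."""
    return math.ceil((u_single / u_target) ** 2)


n = readings_needed(0.5, 0.19)
print(n, round(uncertainty_of_mean(0.5, n), 3))  # 7 0.189
```

Under these assumptions only seven independent readings would take ±0.5 down to about ±0.19, so whether the assumptions hold matters far more than the arithmetic itself.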

……………………………………..

Australia’s BOM authors have written a 38-page report that describes some of their relevant procedures. It is named “ITR 716 Temperature Measurement Uncertainty – Version 1.4_E” (Issued 30 March 2022). It might not yet be easily available in public literature.

http://www.geoffstuff.com/bomitr.pdf

The lengthy tables in that report need to be understood before proceeding.

…………………………………………………..

(Start quote).

Sources of Uncertainty Information.

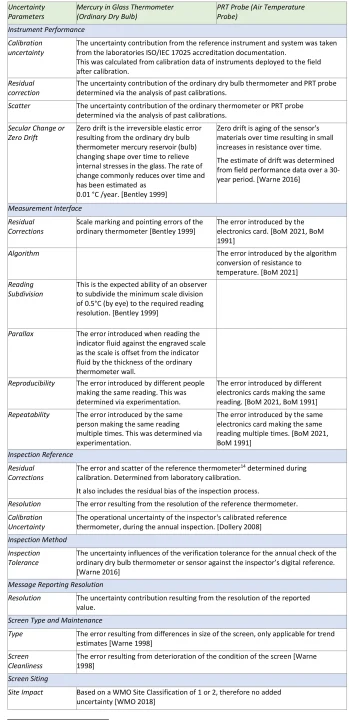

The process of identifying sources of uncertainty for near surface atmospheric temperature measurements was carried out in accordance with the International Vocabulary of Metrology [JCGM 200:2008]. This analysis of the measurement process established seven root causes and numerous contributing sources. These are described in Table 3 below. These sources of uncertainty correlate with categories used in the uncertainty budget provided in Appendix D.

Uncertainty Estimates

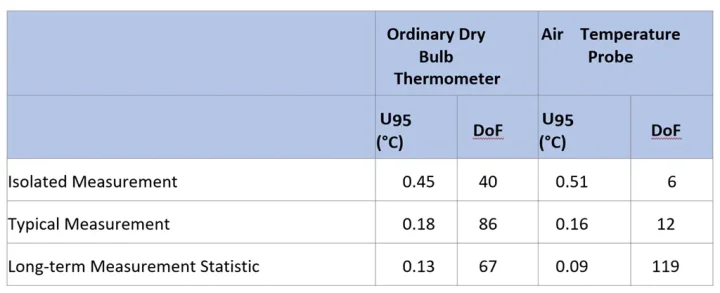

The overall uncertainty of the mercury in glass ordinary dry bulb thermometer and PRT probe to measure atmospheric temperature is given in Table 4. This table is a summary of the full measurement uncertainty budget given in Appendix D.

Table 4 – Summary table of uncertainties and degrees of freedom (DoF) [JCGM 100:2008] for ordinary dry bulb thermometer and electronic air temperature probes also referred to as PRT probes.

A detailed assessment of the estimate of least uncertainty for the ordinary dry bulb thermometer and air temperature probes is provided in Appendix D. This details the uncertainty contributors mentioned above in Table 3.

(End quote).

………………………………………..

There are some known sources of uncertainty that are not covered, or perhaps not covered adequately, in these BOM tables. One of the largest is triggered by a change in the site of the screen. The screen has shown itself over time to be sensitive to disturbances such as site moves. The BOM, like many other keepers of temperature records, has engaged in homogenization exercises that the public has seen successively as the "High Quality" data set (since discontinued), then ACORN-SAT versions 1, 2, 2.1 and 2.2.

The homogenization procedures are described in several reports, including:

http://www.bom.gov.au/climate/data/acorn-sat/

The magnitudes of changes due to site shifts are large compared with the effects listed in the tables above. They are also widespread. Few, if any, of the 112 or so official ACORN-SAT stations have escaped adjustment for this effect.

This table shows some daily adjustments to Alice Springs Tmin, with differences between raw and ACORN-SAT version 2.2, all in °C. Data are taken from

http://www.waclimate.net/acorn2/index.html

| Date | Min v2.2 | Raw | Raw minus v2.2 |
| --- | --- | --- | --- |
| 1944-07-20 | -6.6 | 1.1 | 7.7 |
| 1943-12-15 | 18.5 | 26.1 | 7.6 |
| 1942-04-05 | 5.8 | 13.3 | 7.5 |
| 1942-08-22 | 8.1 | 15.6 | 7.5 |
| 1942-09-02 | 3.1 | 10.6 | 7.5 |
| 1942-09-18 | 13.4 | 20.6 | 7.2 |
| 1942-07-05 | -2.8 | 4.4 | 7.2 |
| 1942-08-23 | 4.1 | 11.1 | 7.0 |
| 1943-12-12 | 19.8 | 26.7 | 6.9 |
| 1942-05-08 | 7.7 | 14.4 | 6.7 |
| 1942-02-15 | 18.4 | 25.0 | 6.6 |
| 1942-10-18 | 11.7 | 18.3 | 6.6 |
| 1943-07-05 | 2.3 | 8.9 | 6.6 |
| 1942-05-10 | 4.0 | 10.6 | 6.6 |
| 1942-09-24 | 5.1 | 11.7 | 6.6 |
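The table can be checked mechanically. The sketch below copies the rows from the table (the helper name `recompute` is made up), recomputes raw minus v2.2 and flags any row that disagrees with the stated difference. These 15 rows appear to be the largest adjustments, sorted by size, so their mean describes this selection only, not the station record as a whole. Every one of them is more than ten times the ±0.5 °C single-reading figure discussed above.

```python
# Alice Springs Tmin rows copied from the table above: (date, v2.2, raw, stated difference), all in °C
ROWS = [
    ("1944-07-20", -6.6, 1.1, 7.7),
    ("1943-12-15", 18.5, 26.1, 7.6),
    ("1942-04-05", 5.8, 13.3, 7.5),
    ("1942-08-22", 8.1, 15.6, 7.5),
    ("1942-09-02", 3.1, 10.6, 7.5),
    ("1942-09-18", 13.4, 20.6, 7.2),
    ("1942-07-05", -2.8, 4.4, 7.2),
    ("1942-08-23", 4.1, 11.1, 7.0),
    ("1943-12-12", 19.8, 26.7, 6.9),
    ("1942-05-08", 7.7, 14.4, 6.7),
    ("1942-02-15", 18.4, 25.0, 6.6),
    ("1942-10-18", 11.7, 18.3, 6.6),
    ("1943-07-05", 2.3, 8.9, 6.6),
    ("1942-05-10", 4.0, 10.6, 6.6),
    ("1942-09-24", 5.1, 11.7, 6.6),
]


def recompute(rows, tol=0.05):
    """Return recomputed raw-minus-v2.2 differences and any rows disagreeing with the table."""
    diffs, mismatches = [], []
    for date, v22, raw, stated in rows:
        diff = raw - v22
        diffs.append(diff)
        if abs(diff - stated) > tol:
            mismatches.append((date, round(diff, 1), stated))
    return diffs, mismatches


diffs, mismatches = recompute(ROWS)
print(mismatches)                                   # []
print(round(min(diffs), 1), round(max(diffs), 1))   # 6.6 7.7
print(round(sum(diffs) / len(diffs), 2))            # 7.05
```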

These differences add to the uncertainty estimates being sought. There are different ways to include them, but BOM seems not to include them in the overall uncertainty. They are not measured differences, so they are not part of measurement uncertainty as such. They are estimates by expert staff, but nevertheless they need to find a place in the overall uncertainty.
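One conventional way to give such adjustments a place in the overall uncertainty, if they are treated as an independent component, is the GUM root-sum-of-squares combination. The sketch below assumes independence and that both figures are expressed at the same coverage. The ±0.23 °C is the ITR 716 figure quoted in the letter below; the adjustment uncertainties looped over are hypothetical placeholders, not values published by BOM.

```python
import math


def combine_independent(*components: float) -> float:
    """Root-sum-of-squares of independent uncertainty components (unit sensitivity, no correlation)."""
    return math.sqrt(sum(c * c for c in components))


U_MEASUREMENT = 0.23  # °C, ITR 716 figure quoted in the letter to BOM

# Hypothetical uncertainties for a site-move / homogenisation adjustment, NOT BOM values
for u_adjustment in (0.1, 0.5, 1.0, 2.0):
    total = combine_independent(U_MEASUREMENT, u_adjustment)
    print(f"adjustment ±{u_adjustment} °C -> combined ±{total:.2f} °C")
```

Once the adjustment term exceeds the measurement term it dominates the total, which is the practical point of the paragraph above.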

We question whether all, or even enough, sources of uncertainty have been considered. If the uncertainty were as small as indicated, why would there be a need for adjustment to produce data sets like ACORN-SAT? This was raised with BOM in the following letter.

………………………………………

(Sent to Arla Duncan BOM Monday, 2 May 2022 5:42 PM )

Thank you for your letter and copy of BOM Instrument Test Report 716, “Near Surface Air Temperature Measurement Uncertainty V1.4_E.” via your email of 1st April, 2022.

This reply is in the spirit of seeking further clarification of my question asked some years ago:

“If a person seeks to know the separation of two daily temperatures in degrees C that allows a confident claim that the two temperatures are different statistically, by how much would the two values be separated?”

Your response has led me to the centre of the table in your letter, which suggested that historic daily temperatures typical of many would have uncertainties of the order of ±0.23 °C or ±0.18 °C.

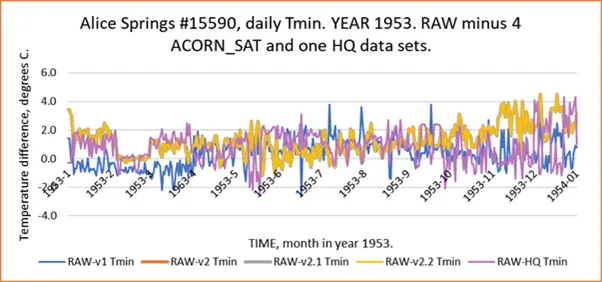

I conducted the following exercise. A station was chosen, here Alice Springs airport BOM 15590 because of its importance to large regions in central Australia. I chose a year, 1953, more or less at random. I chose daily data for granularity. Temperature minima were examined. There is past data on the record for “Raw” from CDO, plus ACORN-SAT versions so far numbered 1, 2, 2.1 and 2.2; there is also the older High Quality BOM data set. With a day-by-day subtraction, I graphed their divergence from RAW. Here are the results.

It can be argued that I have chosen a particular example to show a particular effect, but this is not so. Many stations could be shown to have a similar daily range of temperatures.

The vertical spread of temperatures on any given date can reach some 4 degrees C. In a rough sense, that can be equated to an uncertainty of +/- 2 deg C or more. This figure is an order of magnitude greater than your uncertainty estimates noted above.

Similarly, I chose another site, this time Bourke NSW (#48245, an AWS site), year 1999.

This example also shows a large daily range of temperatures, here roughly 2 deg C.

As a practical example, one can ask: "What was the hottest day recorded in Bourke in 1993?"

The answers are:

- 43.2 +/- 0.13 °C from the High Quality and RAW data sets

- 44.4 +/- 0.13 °C from ACORN-SAT versions 2.1 and 2.2

- or 44.7 +/- 0.13 °C from ACORN-SAT version 1

The answers depend on the chosen data set. The ±0.13 °C uses the BOM estimates of accuracy for a liquid-in-glass thermometer and a data set of 100 years' duration, from BOM Instrument Test Report 716, as quoted in the table in your email.

This example represents a measurement absurdity.

There appears to be a mismatch of estimates of uncertainty. I have chosen to use past versions of ACORN-SAT and the old High Quality data set because each was created by experts attempting to reduce uncertainty.

How would BOM resolve this difference in uncertainty estimates?

(End of letter to BOM)
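The question in the letter can be given a conventional GUM-style answer under stated assumptions: two readings differ with roughly 95 % confidence when their separation exceeds the expanded uncertainty of their difference. The sketch assumes the ± figures are expanded uncertainties with a common coverage factor (k = 2 is typical) and that the errors of the two readings are independent; a bias shared by both readings would change the result. The function name is illustrative, and the inputs are the figures quoted in the letter.

```python
import itertools
import math


def min_separation(U1: float, U2: float) -> float:
    """Expanded uncertainty of T1 - T2 for independent errors.

    With a common coverage factor k: u_i = U_i / k, u_diff = sqrt(u1^2 + u2^2)
    and U_diff = k * u_diff, so k cancels and U_diff = sqrt(U1^2 + U2^2).
    """
    return math.hypot(U1, U2)


# ITR 716 figures quoted in the letter
print(round(min_separation(0.23, 0.23), 2))  # 0.33
print(round(min_separation(0.18, 0.18), 2))  # 0.25

# Bourke hottest-day answers from the letter, each quoted as ±0.13 °C
answers = {"High Quality / raw": 43.2, "ACORN-SAT 2.1 / 2.2": 44.4, "ACORN-SAT 1": 44.7}
threshold = min_separation(0.13, 0.13)
for (a, x), (b, y) in itertools.combinations(answers.items(), 2):
    gap = abs(x - y)
    print(f"{a} vs {b}: gap {gap:.1f} °C, {gap / threshold:.1f} x threshold of {threshold:.2f} °C")
```

Under these assumptions every pair of Bourke answers would count as statistically different, even though they are competing answers to the same question.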

……………………

BOM replied on 12 July 2022, extract follows:

“In response to your specific queries regarding temperature measurement uncertainty, it appears they arise from a misapplication of the ITR 716 Near Surface Air Temperature Measurement Uncertainty V1.4_E to your analysis. The measurement uncertainties in ITR 716 are a measurement error associated with the raw temperature data. In contrast, your analysis is a comparison between time series of raw temperature data and the different versions of ACORN-SAT data at particular sites in specific years. Irrespective of the station and year chosen, your analysis results from differences in methodology between the different ACORN-SAT datasets and as such cannot be compared with the published measurement errors in ITR 716. We recommend that you consider submitting the results of any further analysis to a scientific journal for peer review.”

(End of extract from BOM reply).

BOM appears not to accept that site-move effects should be included in uncertainty estimates. Maybe they should be. For example, at the start of the Australian Summer there are often news reports claiming that a new record hot temperature has been reached on a particular day at some place. Establishing such a record is no easy task. See this material for the "hottest day ever in Australia."

The point is that BOM are encouraging use of the ACORN-SAT data set as the official record, while often also directing inquiries to the raw data at one of their web sites. This means that a modern temperature, like one today from an Automatic Weather Station with a Platinum Resistance Thermometer, can be compared with an early temperature from after 1910 (when ACORN-SAT commences) taken by a Liquid-in-Glass Thermometer in a screen of different dimensions, shifted from its original site and adjusted by homogenisation.

Thus the comparison can be made between raw (today) and historic (early homogenised and moved).

Surely, that procedure is valid only if the effects of site moves are included in the uncertainty.

………………………………

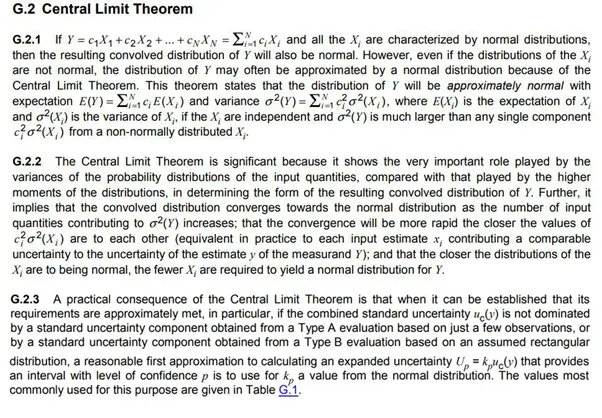

We return now to the topic of the Central Limit Theorem and the Law of Large Numbers, LOLN.

The CLT is partly described in the BIPM GUM here, a .jpg file to preserve equations:

These words are critical – “even if the distributions of the Xi are not normal, the distribution of Y may often be approximated by a normal distribution because of the Central Limit Theorem.” They might be the main basis for justifying uncertainty reductions statistically. What is the actual distribution of a group of temperatures from a time series?

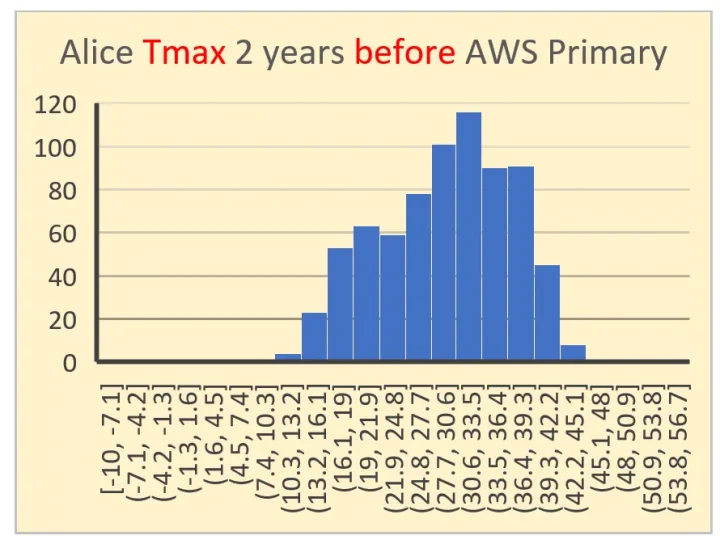

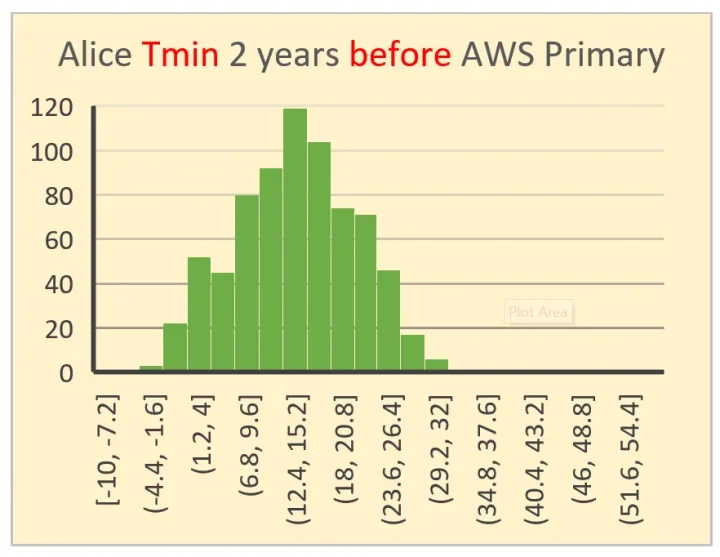

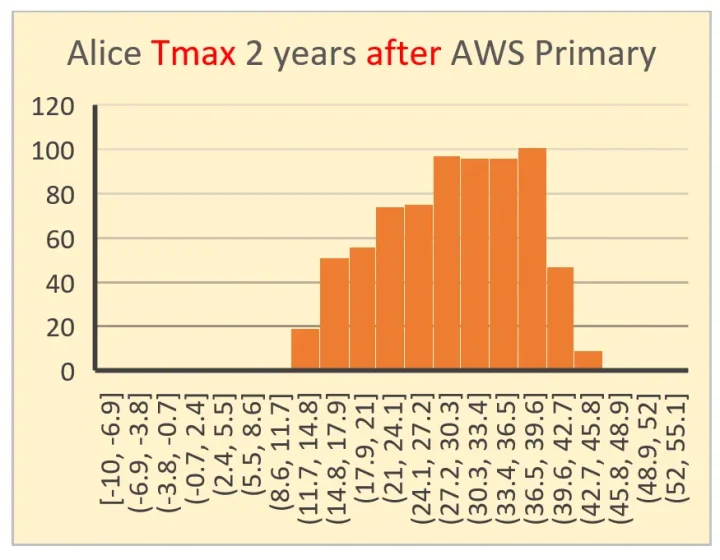

These four histograms were chosen to look for a signature of change at the Alice Springs weather station, comparing the 2 years before it changed from mercury-in-glass to platinum resistance thermometry with the 2 years after. The upper pair (Tmax and Tmin) are from before the change on 1 November 1996; the lower pair are from after it. The X and Y axes are scaled comparably across all four.

In gross appearance, only one of these histograms visually approaches a normal distribution.
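Visual judgement of normality can be supplemented with simple shape statistics. This sketch (function name hypothetical) computes sample skewness and excess kurtosis, both near zero for a normal distribution; a two-humped histogram typically shows clearly negative excess kurtosis. Library equivalents exist, such as `scipy.stats.skew`, `scipy.stats.kurtosis` and the Shapiro-Wilk test `scipy.stats.shapiro`. The Alice Springs daily values are not reproduced here, so the illustration uses synthetic numbers only.

```python
import numpy as np


def shape_statistics(values):
    """Return (skewness, excess kurtosis) from population moments; both are ~0 for normal data."""
    x = np.asarray(values, dtype=float)
    d = x - x.mean()
    m2 = np.mean(d**2)
    skewness = np.mean(d**3) / m2**1.5
    excess_kurtosis = np.mean(d**4) / m2**2 - 3.0
    return float(skewness), float(excess_kurtosis)


# Illustration on synthetic data (not temperatures)
rng = np.random.default_rng(0)
print(shape_statistics(rng.normal(size=100_000)))  # both close to 0
bimodal = np.concatenate([rng.normal(-2, 1, 50_000), rng.normal(2, 1, 50_000)])
print(shape_statistics(bimodal))  # excess kurtosis clearly negative
```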

The principal question that arises is: “Does this disqualify the use of the CLT in the way that BOM seems to use it?”

Known mathematics can easily decompose these graphs into sub-sets of normal distributions (or near enough). But that is an academic exercise, one that becomes useful only when the cause of each sub-set is identified and quantified. This quantification step, linked to a sub-distribution, is not the same as the categories of error sources listed in the BOM tables above. I suspect that it is not proper to assume that their sub-distributions will match the sub-distributions derived from the histograms.
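For readers who want to try such a decomposition, here is a minimal expectation-maximisation sketch for a two-component 1-D normal mixture, written with NumPy only (the function name is hypothetical; scikit-learn's `GaussianMixture` is the usual library route). As noted above, fitting components is the easy part; attaching a physical cause to each one is not.

```python
import numpy as np


def fit_two_gaussians(values, iterations=300):
    """Fit a two-component 1-D Gaussian mixture by expectation-maximisation.

    Returns (weights, means, standard deviations). Purely statistical: the
    components carry no physical meaning unless a cause is identified for each.
    """
    x = np.asarray(values, dtype=float)[:, None]  # shape (n, 1)
    mu = np.percentile(x, [25, 75])               # initial means from the quartiles
    sd = np.full(2, x.std())                      # initial spreads
    w = np.array([0.5, 0.5])                      # initial weights
    for _ in range(iterations):
        # E-step: probability that each value belongs to each component
        dens = w * np.exp(-0.5 * ((x - mu) / sd) ** 2) / (sd * np.sqrt(2 * np.pi))
        resp = dens / dens.sum(axis=1, keepdims=True)
        # M-step: re-estimate weights, means and spreads from those probabilities
        nk = resp.sum(axis=0)
        w = nk / len(x)
        mu = (resp * x).sum(axis=0) / nk
        sd = np.sqrt((resp * (x - mu) ** 2).sum(axis=0) / nk)
        sd = np.maximum(sd, 1e-6)                 # guard against a collapsing component
    return w, mu, sd
```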

In other words, it cannot be assumed carte blanche that the CLT can be used unless measurements are made of the dominant factors contributing to uncertainty.
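One condition behind the σ/√n rule is independence, and daily temperatures are serially correlated. The sketch below is a generic illustration, not an analysis of any BOM station: it simulates a first-order autoregressive (AR(1)) series with an assumed lag-one correlation ρ = 0.7 and compares the spread of a 365-value mean with the independent-errors value. The large-n effective sample size n(1 − ρ)/(1 + ρ) is a standard result for AR(1) processes; the function name is hypothetical.

```python
import numpy as np


def sd_of_mean_ar1(n, rho, sigma, reps, rng):
    """Monte Carlo standard deviation of the mean of n values from a stationary AR(1) process."""
    x = rng.normal(0.0, sigma, size=reps)          # start in the stationary distribution
    total = x.copy()
    innovation_sd = sigma * np.sqrt(1.0 - rho**2)  # keeps the marginal sd equal to sigma
    for _ in range(1, n):
        x = rho * x + rng.normal(0.0, innovation_sd, size=reps)
        total += x
    return float(np.std(total / n))


n, rho, sigma = 365, 0.7, 1.0                      # rho is an assumed, illustrative value
rng = np.random.default_rng(42)
naive = sigma / np.sqrt(n)                         # the independent-errors rule
simulated = sd_of_mean_ar1(n, rho, sigma, reps=5000, rng=rng)
n_eff = n * (1 - rho) / (1 + rho)                  # large-n effective sample size for AR(1)
print(round(simulated / naive, 2), round(np.sqrt(n / n_eff), 2))
```

With ρ = 0.7 the spread of the mean comes out roughly 2.4 times larger than σ/√n, so 365 correlated days carry about as much information as some 64 independent ones.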

In seeking to conclude and summarise Part Two, the main conclusions for me are:

- There are doubts whether the Central Limit Theorem is applicable to temperatures of the type described.

- The BIPM Guide to Uncertainty might not be adequately applicable in the practical sense. It seems to be written more for controlled environments like national standards institutions where attempts are made to minimise and/or measure extraneous variables. It seems to have limits when the vagaries of the natural environment are thrust upon it. However, it is useful for discerning if authors of uncertainty estimates are following best practice. From the GUM:

The term “uncertainty”

The concept of uncertainty is discussed further in Clause 3 and Annex D.

The word “uncertainty” means doubt, and thus in its broadest sense “uncertainty of measurement” means doubt about the validity of the result of a measurement. Because of the lack of different words for this general concept of uncertainty and the specific quantities that provide quantitative measures of the concept, for example, the standard deviation, it is necessary to use the word “uncertainty” in these two different senses.

In this Guide, the word “uncertainty” without adjectives refers both to the general concept of uncertainty and to any or all quantitative measures of that concept.

- There are grounds for adopting an overall uncertainty of at least +/- 0.5 degrees C for all historic temperature measurements when they are being compared with one another. It does not immediately follow that other common uses, such as temperature/time series, should use this uncertainty.

This article is long enough. I now plan a Part Three that will mainly compare traditional statistical estimates of uncertainty with newer methods like "bootstrapping" that might well be suited to the task.

…………………………………..

Geoff Sherrington

Scientist

Melbourne, Australia.

30th August, 2022

Geoff,

Do you accept that the NIST uncertainty machine, which uses the technique specified in the GUM document (JCGM 100:2008) produces the correct result when given the measurement model y = f(x1, x2, …, xn) = Σ[xi, 1, n] / n or in the R syntax required by the site (x1 + x2 + … + xn) / n?

Do you accept that the uncertainty evaluation techniques 1) GUM linear approximation described in JCGM 100:2008 and 2) GUM Monte Carlo described in JCGM 101:2008 and JCGM 102:2011 produce correct results [1]?

The usual nonsense from bgwxyz…edition 674…

You’re counting?

It’s an automated process…

RTFM on the link and it tells you the answer 🙂

So can you read it and tell us the answer? It is expressly stated there.

I’ve read the manual several times. It does not mention Geoff.

BTW…the question is not posed to Geoff per se, but anyone who wants to give their yay or nay to the GUM procedures and NIST uncertainty machine.

What do you think? Do you think the GUM procedures produce the correct result? Do you think the NIST uncertainty machine produces the correct result?

“I’ve read the manual several times. It does not mention Geoff”

There is a difference between reading and seeing words. Someone who thinks such a childish reply is sagacious ain’t going to comprehend much of it.

There seems to be some confusion here. Let me see if I can clear it up now. I’m not asking if NIST thinks their own calculator and GUM procedures produce the correct results. I’m assuming they do since…ya know…it’s their product and they specifically cite the GUM. I’m asking if Geoff accepts the NIST uncertainty machine and the GUM procedures. And like I said above I’m inviting anyone to answer the question.

Just another verse of your song and dance routine that bypasses the truth. You’ve been told the answer to this question innumerable times yet you continue to pluck the same old out-of-tune banjo.

Why is this?

There is no confusion. You are cut and pasting stuff you don’t understand in place of a good argument. Be specific. You read it. Point out how this addresses Geoff’s concerns.

The BOM Near Surface Air Temperature Measurement Uncertainty report relies heavily on the methods and procedures in the GUM. If Geoff (or anyone else for that matter) does not accept the methods and procedures in the GUM, then they aren't going to accept the uncertainty analysis and resulting combined uncertainties in Appendix D, or any answer to the question: "If a person seeks to know the separation of two daily temperatures in degrees C that allows a confident claim that the two temperatures are different statistically, by how much would the two values be separated?"

And no. The NIST uncertainty machine manual does not tell me whether Geoff, a random WUWT commenter, or anyone else for that matter accepts their product. It would be rather bizarre if they mentioned any of us, don't you think?

What is not being accepted are your faulty interpretations and cherry picking of what you read in the GUM.

HTH

The NIST calculator is for multiple measurements of the SAME THING *only*. It simply doesn’t work for situations where you have multiple measurements that are of different things.

This has been pointed out to you MULTIPLE times and you continue with your delusional view of how to handle uncertainty!

+1000 and they even expressly say that and discuss things you might try if you have problematic measurement or data.

LdB said: “+1000 and they even expressly say that and discuss things you might try if you have problematic measurement or data.”

Where do they say that? And how do you reconcile your belief with their examples where the measurement model combines input quantities of different things?

3.3.2 In practice, there are many possible sources of uncertainty in a measurement, including:

a) incomplete definition of the measurand;

b) imperfect realization of the definition of the measurand;

c) nonrepresentative sampling — the sample measured may not represent the defined measurand;

1.2 This Guide is primarily concerned with the expression of uncertainty in the measurement of a well-defined physical quantity — the measurand — that can be characterized by an essentially unique value. If the phenomenon of interest can be represented only as a distribution of values or is dependent on one or more parameters, such as time, then the measurands required for its description are the set of quantities describing that distribution or that dependence.

2.2.3 The formal definition of the term "uncertainty of measurement" developed for use in this Guide and in the VIM [6] (VIM:1993, definition 3.9) is as follows:

uncertainty (of measurement) parameter, associated with the result of a measurement, that characterizes the dispersion of the values that could reasonably be attributed to the measurand

NOTE 1 The parameter may be, for example, a standard deviation (or a given multiple of it), or the half-width of an interval having a stated level of confidence.

NOTE 2 Uncertainty of measurement comprises, in general, many components. Some of these components may be evaluated from the statistical distribution of the results of series of measurements and can be characterized by experimental standard deviations. The other components, which also can be characterized by standard deviations, are evaluated from assumed probability distributions based on experience or other information.

NOTE 3 It is understood that the result of the measurement is the best estimate of the value of the measurand, and that all components of uncertainty, including those arising from systematic effects, such as components associated with corrections and reference standards, contribute to the dispersion.

-combined standard uncertainty

standard uncertainty of the result of a measurement when that result is obtained from the values of a number of other quantities, equal to the positive square root of a sum of terms, the terms being the variances or covariances of these other quantities weighted according to how the measurement result varies with changes in these quantities

2.3.5

expanded uncertainty

quantity defining an interval about the result of a measurement that may be expected to encompass a large fraction of the distribution of values that could reasonably be attributed to the measurand

3.1.4 In many cases, the result of a measurement is determined on the basis of series of observations obtained under repeatability conditions (B.2.15, Note 1).

3.1.5 Variations in repeated observations are assumed to arise because influence quantities (B.2.10) that can affect the measurement result are not held completely constant.

3.1.6 The mathematical model of the measurement that transforms the set of repeated observations into the measurement result is of critical importance because, in addition to the observations, it generally includes various influence quantities that are inexactly known. This lack of knowledge contributes to the uncertainty of the measurement result, as do the variations of the repeated observations and any uncertainty associated with the mathematical model itself.

——————————————————————————

Different temperatures taken using different devices are not repeated observations of the same measurand.

I’m not a scientist and yet even I understand that temperature readings taken at two different times or two different places (or a combination thereof) are not the same thing. What a maroon.

I don’t see how it does measurements at all. The manual clearly states that there must be a y = f{X1 . . . Xn} relationship for the data sets. This doesn’t exist in our case.

JS said: “The manual clearly states that there must be a y = f{X1 . . . Xn} relationship for the data sets.”

y = f(X1 . . . Xn) = (X1 + … + Xn) / n is a functional relationship between the inputs X1…Xn and the output y.

You can write the equation but how do you evaluate it?

What is the value of X1?

What is the value of X1 if it is a function of time and space?

What is the value of Xn?

What is the value of Xn if it is a function of time and space?

How do you combine f(X1(t,s)) with f(X2(t,s))?

You *must* know this in order to actually define

y = f(X1…Xn)

And if “n” is the number of elements then it is *NOT* a functional relationship, it is a statistical descriptor.

-A functional relationship is defined by measurands, measurands in – measurands out.

-How do you measure “n” physically?

-What units is it measured in?

Right there is the problem .. LEARN TO READ

They don't claim it produces the right answer; it produces AN ANSWER. Whether or not it is correct depends on the measurement function F and the actual distribution. They even give examples with problems, if you actually read, and how you might address them.

Geoff’s post is about “measurement function F” if you want to follow it in the NIST manual.

They discuss all that because they are not eco activist whack jobs or climate scientists and the actual right answer matters to them not the preferred answer to fit a narrative.

His use of the NIST Calculator is a classic example of garbage-in-garbage-out.

I was thinking of the claim that a million monkeys pounding on typewriters will eventually produce the writings of Shakespeare.

Yes, GIGO!

In budgerigar’s case, anything but garbage out, just as impossible as monkeys writing Shakespeare.

LdB said: "They don't claim it produces the right answer; it produces AN ANSWER. Whether or not it is correct depends on the measurement function F and the actual distribution."

First, I think you're misunderstanding what I mean by "correct". All I mean by that is that it produces u(y), which is the standard uncertainty of the measurement model Y. Do Geoff, you, or anyone else willing to give their yay or nay accept that?

Second, are you suggesting that the NIST uncertainty machine does not produce the correct result when the measurement model is Y = Σ[(1/n)*x_i, 1, n] or more concretely Y = (1/2)*x0 + (1/2)*x1, but that it works in all other cases?

Not a measurement model, this is beyond goofy.

“ measurement model is Y = Σ[(1/n)*x_i, 1, n]”

As MC points out, this is *NOT* a measurement model!

It is a statistical description of a population.

Let me see you plug those numbers into the UM. It won’t work, and I’ll tell you why.

The UM is designed to give the uncertainty in your measurements of something, which are then plugged into the measurement model.

Look at the thermal expansion coefficient example.

To measure the coefficient of linear thermal expansion of a cylindrical copper bar, the length L0 = 1.4999m of the bar was measured with the bar at temperature T0 = 288.15K, and then again at temperature T1 = 373.10K, yielding L1 = 1.5021m.

The measurement model is

A= (L1 – L0) / (L0(T1 – T0))
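For contrast with the averaging model discussed in this thread, the measurement model in the NIST example can be evaluated directly. This sketch computes only the value of A from the numbers quoted above (the function name is illustrative); the input uncertainties that the Uncertainty Machine would also need are given in the NIST manual and are not reproduced here.

```python
def expansion_coefficient(L0: float, L1: float, T0: float, T1: float) -> float:
    """A = (L1 - L0) / (L0 * (T1 - T0)); lengths in m, temperatures in K, result in 1/K."""
    return (L1 - L0) / (L0 * (T1 - T0))


A = expansion_coefficient(L0=1.4999, L1=1.5021, T0=288.15, T1=373.10)
print(f"A = {A:.3e} per K")  # A = 1.727e-05 per K
```

All four inputs are measured properties of one bar under controlled conditions, which is the kind of model the comment contrasts with a data set of station temperatures.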

Their setup looks like this:

That can’t be done with your equation, because it is not a measurement model. The measurement model for the temperature data set would look something like

L0 = “03-MAR-2021”

L1 = “ASN00023034”

T = L0 somethingsomething L1

It can't be done, because there's no relationship between the date and the station, and the temp.

JS said: “Let me see you plug those numbers into the UM.”

Select 4 inputs for simplicity…

y = 0.25*x0 + 0.25*x1 + 0.25*x2 + 0.25*x3

let x0 = 11±1, x1 = 12±1, x2 = 13±1, x3=13±1

The result is y = 12.25 and u(y) = 0.5

I don’t know how UM got u(y) = 0.5. This is not standard uncertainty

I used three different uncertainty calculators and they each got 2.

Doing it manually I get sqrt(1+1+1+1) = 2
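The two numbers in this exchange answer different measurement models, which a few lines of arithmetic make explicit. With the four inputs above, each with standard uncertainty 1: the sum has sensitivity coefficients of 1, giving 2, while the mean has coefficients of 1/4, giving the 0.5 reported by the NIST machine for independent inputs. If the four errors were fully correlated (for example, one shared systematic error), the mean's uncertainty would not shrink at all. Whether a mean of different temperatures is a legitimate measurement model is the point argued in the thread; the arithmetic does not settle that.

```python
import math

x = [11.0, 12.0, 13.0, 13.0]  # input estimates from the comment above
u = [1.0, 1.0, 1.0, 1.0]      # their standard uncertainties
n = len(x)

y = sum(x) / n
u_sum = math.sqrt(sum(ui**2 for ui in u))           # model y = x0 + x1 + x2 + x3
u_mean = math.sqrt(sum((ui / n) ** 2 for ui in u))  # model y = (x0 + x1 + x2 + x3) / 4, independent inputs
u_mean_correlated = sum(ui / n for ui in u)         # same model, correlation 1 between all inputs
print(y, u_sum, u_mean, u_mean_correlated)          # 12.25 2.0 0.5 1.0
```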

go here:

https://nicoco007.github.io/Propagation-of-Uncertainty-Calculator/

or here:

https://hub-binder.mybinder.ovh/user/sandiapsl-suncal-web-xa6cvj36/voila/render/Uncertainty%20Propagation.ipynb?token=9oioyqxxSI–5MfMvfuVaA

or here:

https://thomaslin2020.github.io/uncertainty/#/

I’ve even attached a screenshot from one of them. NIST is dividing the standard uncertainty by 4.

That is *NOT* standard uncertainty.

This is where you keep going wrong! (as do they)

Not a surprise considering their temperature averaging example uses sigma/root(N).

Does the equation need to be entered as (x1+x2+x3+x4)/4?

I screwed up the formula. I forgot the parentheses.

But yes, it’s because they divide by four – thinking they are finding the uncertainty of the average when all they are getting is the average uncertainty. They are *NOT* the same thing.

You are misusing the GUM procedures. The GUM does *NOT* say that you lower the uncertainty by dividing by N.

We have been over this before. From the NIST manual:

"The NIST Uncertainty Machine (https://uncertainty.nist.gov/) is a Web Based software application to evaluate the measurement uncertainty associated with an output quantity defined by a measurement model of the form y = f(x1,…, xn)."

Please notice the terms “measurement uncertainty” and “measurement model”. A measurement model IS NOT calculating a mean of unrelated measurements. A measurement model is “length + width” or “π•r•r” or something similar.

A mean can provide a better estimate of a “true value” if all values and errors are random (i.e., a normal distribution) and if and only if the same thing is being measured multiple times by the same device.

Section 2 goes on to say:

"Section 12, beginning on Page 27, shows how the NIST Uncertainty Machine may also be used to produce the elements needed for a Monte Carlo evaluation of uncertainty for a multivariate measurand:"

Please note the term “multivariate measurand”! This doesn’t refer to a mean of many independent measurements of different measurands. It is referring to a measurement that has several underlying measurements such as the volume of a cylinder or the volume of a conical device.

Please quit cherry picking formulas without studying and understanding the assumptions that must be met to use them.

These apply to a known distribution.

There is no issue with using this sort of analysis on measurements with purely random errors. Treating lots of systematic errors like random errors is the issue.

Robert B said: “These apply to a known distribution.”

The NIST uncertainty machine certainly does. The manual even says so. But the GUM only says that the standard uncertainty u(xi) be known. There is no requirement that the distribution of u(xi) itself be known.

Robert B said: “There is no issue with using this sort of analysis on measurements with purely random errors. Treating lots of systematic errors like random errors is the issue.”

NIST TN 1297 says that there are components of uncertainty arising from systematic effects and components arising from random effects, and that both can be evaluated by either the type A or type B procedure in the GUM. You would then combine all components of uncertainty, regardless of whether they are systematic or random, using "the law of propagation of uncertainty" in appendix A (which happens to be the same as GUM section 5). If each observation has a different systematic effect, as might be the case if they are from independent instruments, then you can model that as a random variable (GUM C.2.2) since it would have a probability distribution. In that case you can treat the set of systematic effects as if they were one random effect. Don't hear what isn't being said. It isn't being said that all systematic effects can be treated this way. Nor is it being said that these observations don't also have a systematic effect that is common to them all.
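The distinction drawn here, between systematic effects that differ from instrument to instrument and one shared by all, can be illustrated with a small simulation. All numbers are illustrative assumptions: 100 instruments, a random reading error with standard deviation 0.5 and a bias with standard deviation 0.3.

```python
import numpy as np

rng = np.random.default_rng(0)
n_instruments, trials = 100, 10_000
sd_random, sd_bias = 0.5, 0.3  # illustrative values only

noise = rng.normal(0.0, sd_random, (trials, n_instruments))

# Case 1: each instrument has its own bias, drawn independently (modelled as a random variable)
own_bias = rng.normal(0.0, sd_bias, (trials, n_instruments))
error_own = (noise + own_bias).mean(axis=1)

# Case 2: a single bias shared by every instrument in a trial
shared_bias = rng.normal(0.0, sd_bias, (trials, 1))
error_shared = (noise + shared_bias).mean(axis=1)

# Simulated spread of the averaged error next to the simple theoretical value
print(round(error_own.std(), 3), round(np.hypot(sd_random, sd_bias) / np.sqrt(n_instruments), 3))
print(round(error_shared.std(), 3), round(np.sqrt(sd_bias**2 + sd_random**2 / n_instruments), 3))
```

Averaging shrinks the first kind of bias along with the random error, but leaves the shared bias essentially untouched, which is why the caveats at the end of the comment matter.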

Nice word salad.

Do you understand any of this? Does “the law of propagation of uncertainty” mean that they are speaking from authority?

It's about calculating the error of a function when the variables have a random error. The scientists and engineers that you want to argue with (belittle?) have been doing it for years in their work. It's clear that you have read it for the first time this evening.

I’m not sure that I can dumb it down enough for you, but I’ll give it go.

I can tell what the outside temperature is, sheltered from sun and wind, by the clothes that I need to wear. Under 17°C and I'll need a light second layer over my shirt. Over 19°C and anything other than a shirt is too warm. Similar for woolly socks, beanie, scarf etc. Quite a lot of uncertainty, but I can assume errors are a symmetrical distribution (including the many systematic errors from my dubious calibration), and if I do the measurement 10 000 times I'll get the outside temperature to 0.02°C. I can justify it using the arguments above but it's still effing stupid.

Robert B said: “Do you understand any of this?”

I would never be so foolish as to claim that I understand all of it. But yes, I do understand some of it.

Robert B said: “Does “the law of propagation of uncertainty” mean that they are speaking from authority?”

No. It means they are speaking from the equation $\sigma_z^2 = \sum_{i=1}^{N} \left(\frac{\partial f}{\partial w_i}\right)^2 \sigma_i^2 + 2\sum_{i=1}^{N-1}\sum_{j=i+1}^{N} \frac{\partial f}{\partial w_i}\frac{\partial f}{\partial w_j}\,\sigma_i\sigma_j\rho_{ij}$. They call this equation the “law of propagation of uncertainty”. Some texts call it the “law of propagation of error”.
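A minimal Python sketch of the equation above, assuming the sensitivity coefficients ∂f/∂w_i have already been evaluated at the input estimates and that ρ_ij is supplied as a full correlation matrix. The function and argument names are illustrative only, not from any library.

```python
import math

def combined_variance(sens, sigmas, rho):
    """First-order law of propagation of uncertainty.

    sens   -- sensitivity coefficients df/dw_i evaluated at the estimates
    sigmas -- standard uncertainties sigma_i of the inputs
    rho    -- correlation matrix, rho[i][j] (rho[i][i] == 1)
    """
    n = len(sens)
    # Diagonal terms: sum of (df/dw_i)^2 * sigma_i^2
    var = sum((sens[i] * sigmas[i]) ** 2 for i in range(n))
    # Cross terms: 2 * sum over i < j of c_i * c_j * sigma_i * sigma_j * rho_ij
    for i in range(n - 1):
        for j in range(i + 1, n):
            var += 2 * sens[i] * sens[j] * sigmas[i] * sigmas[j] * rho[i][j]
    return var

sigma_z = math.sqrt(combined_variance([1.0, 1.0], [0.5, 0.5], [[1, 0], [0, 1]]))
```

With two inputs of σ = 0.5 and unit sensitivity, ρ = 0 gives σ_z² = 0.5 and ρ = 1 gives σ_z² = 1.0, so the cross term is what separates the independent and fully correlated cases.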

Robert B said: “The scientists and engineers that you want to argue with (belittle?) have been doing it for years in their work.”

I’m not arguing with the scientists and engineers that developed uncertainty. And I’m certainly not belittling them or anyone else. Why would I? I happily accept the GUM and NIST contributions to the field.

Robert B said: “It’s clear that you have read it for the first time this evening.”

I’ve been studying the GUM ever since I was told that I had to use it early last year. I’ve read it “cover-to-cover” multiple times since then. It’s a lot to take in.

Robert B said: “Quite a lot of uncertainty but I can assume errors are a symmetrical distribution (including the many systematic errors from my dubious calibration) and if I do the measurement 10 000 times I’ll get the outside temperature to 0.02°C.”

I’m guessing that was sarcasm. Right?

When are you going to stop telling lies about what you’ve read in the GUM?

You lie a lot. Very doubtful that you understood anything at all that you have read.

I can gather from others that you have used this source to cut and paste sections as if you are arguing a point, many times before. That all you can add is “They call this equation the ‘law of propagation of uncertainty’. Some texts call it the ‘law of propagation of error’” outs you as not having spent even an hour to grasp any of the concepts – or it’s completely beyond your capabilities.

Belittling of Geoff with a childish comment, and everyone else reading this with the childish stunt of cutting and pasting complex stuff to pretend that you have put forward an insightful argument. You get caught out and you double down on doing it again. Nearly everyone reading this might not have delved deep into the theory but have done some propagation of errors for at least simple functions and so can appreciate much better the arguments than a twit who merely cuts and pastes as if doing a high-school assignment.

“That’s sarcasm right”. That’s how I feel when I see a change in measured average ocean temperatures of a thousandth of a degree being taken seriously because 10 000 measurements automatically reduce the uncertainty by a factor of 100 – or didn’t you realize that is what you are defending?

Robert B said: “Very doubtful that you understood anything at all that you have read.”

I would never be foolish enough to say that I understand everything in the GUM. But I can confidently say that I understood some of it.

Robert B said: “I can gather from others that you have used this source to cut and paste sections as if you are arguing a point, many times before.”

Correct. I use the GUM all of the time. I was told I had to. Geoff cited it in his previous article in the series so I feel like it is a source that Geoff might consider seriously.

Robert B said: “Belittling of Geoff with a childish comment”

Geoff, be honest…brutally honest if you must. Have I offended you in any way?

Robert B said: ““That’s sarcasm right” That’s how I feel…”

That was a serious question. I’m terrible at sarcasm. I’m so bad, in fact, that my family often calls me Sheldon. I honestly thought it was sarcasm because assessing the temperature by repeated evaluations of the clothing you wear seemed absurd to me. But I’ve learned I should always ask and not assume lest I be accused of putting words in people’s mouths.

You’re terrible at a lot of things.

What’s so hard to understand?

I can also assume that the LAW of large numbers makes it correct. I can also cut and paste equations to support my assertion, when they are merely the theory behind proper error propagation. Arguing that I must disagree with the theory if I disagree with how it’s applied to a specific case is offensive – pigeon on a chess board stuff.

Yes. I am terrible at a lot of things. Picking up on sarcasm is the least of them.

The GUM is hard to understand.

I thought the law of propagation of uncertainty made it correct. Anyway, it seems like you are super knowledgeable when it comes to the GUM. Maybe you could help us go through some examples.

Let y = (1/2)x1 + (1/2)x2, u(x1) = 0.5, and u(x2) = 0.5. Using the methods, procedures, and symbology from the GUM compute u_c(y). I’d be very interested in seeing how you approach the problem if you don’t mind.
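For reference, this is what GUM Eq. (10), with the correlation term of Eq. (16) included, yields when applied mechanically to that model. It is only the arithmetic; whether this model is an appropriate description of averaging temperatures is the point in dispute in this thread. The correlation r between x1 and x2 is an added parameter, not part of the question as posed.

```python
import math

def uc_two_input_mean(u1, u2, r=0.0):
    # Measurement model y = (1/2) x1 + (1/2) x2, so df/dx1 = df/dx2 = 1/2
    c1 = c2 = 0.5
    # Variance terms plus the correlation term, with r = r(x1, x2)
    var = (c1 * u1) ** 2 + (c2 * u2) ** 2 + 2 * c1 * c2 * u1 * u2 * r
    return math.sqrt(var)

print(uc_two_input_mean(0.5, 0.5))         # uncorrelated inputs: about 0.354
print(uc_two_input_mean(0.5, 0.5, r=1.0))  # fully correlated inputs: 0.5
```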

Another round of “stump the professor”?

Go play with the NIST web page instead.

Where does the 1/2 come from? What measurement model are you using?

I am having a difficult time coming up with a measurement model where the output would be half of each measurement added together.

If you are trying to justify this as an example of an average then the actual measurements are x1 and x2. The 1/2 is meaningless. The uncertainties then add by root-sum-square.

To use the UM correctly there must be a function f{x} that gives y. In our case, what is the f{“25-March-1996”} that gives 13.4C?

Short answer: there isn’t one. The UM is unsuitable for our purpose.

I’m not sure I’m understanding the intent of using a date as an input. Can you clarify what the goal is here?

Temperatures are time-varying. In order to have a value to evaluate you must specify a point in time where it will exist.

It’s easy to write y = f(X1…XN), it is far more difficult to actually evaluate X1 … XN. If you can’t evaluate them then you can’t calculate “y”.

You nailed it!

You STILL don’t divide each component in the relationship by the number of elements in order to determine overall uncertainty!

“ If each observation has a different systematic effect like might be the case if they are from independent instruments then you can model that as a random variable (GUM C.2.2) since it would have a probability distribution. “

You are STILL trying to say that this applies to all situations! It ONLY applies if you have multiple measurements of the same thing. If you have multiple measurements of different things you don’t even have a true value which the random variable probability distribution can center on!

Nick Stokes has now hopped onto this same train of stupidity, see below.

Have you ever seen Nick Stokes and bdgrx in the same room at the same time? 🤨

Fortunately, no.

bdgwx,

Define “correct results”.

Geoff S

Correct as in given measurement model Y the NIST uncertainty machine will compute u(Y) that represents the uncertainty of Y.

Correct as in given measurement model Y the procedure described in section 5 can be used to compute u(Y) that represents the uncertainty of Y.

Correct as in given measurement model Y the procedure defined in JCGM 101:2008 (monte carlo) can be used to compute u(Y) that represents the uncertainty of Y.

Correct as in given example E2 in NIST TN 1900 the uncertainty of the average of the Tmax observations u(Tmax_avg) = s/sqrt(m) = 0.872 C.
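The s/sqrt(m) expression quoted above is the Type A standard uncertainty of a mean. A minimal sketch follows, using made-up readings rather than the TN 1900 example data. The formula presupposes that the observations behave as independent draws around one value, which is the assumption contested in the replies.

```python
import math
import statistics

def type_a_uncertainty_of_mean(observations):
    """u = s / sqrt(m): sample standard deviation over the root of the count.

    Treats the m observations as independent draws around a common value,
    which is the modelling assumption the formula depends on.
    """
    m = len(observations)
    if m < 2:
        raise ValueError("need at least two observations")
    s = statistics.stdev(observations)  # sample standard deviation, divides by m - 1
    return s / math.sqrt(m)

# Made-up daily Tmax values in C (not the TN 1900 data)
tmax = [24.5, 26.0, 27.5, 25.0, 23.5, 28.0]
u = type_a_uncertainty_of_mean(tmax)
```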

By your repetition of this garbage over and over, at this point you are just a nutter.

No, NIST doesn’t say that is the “right answer”; they expressly tell you that in the sections above. It is “an answer”; whether it is right or not needs proper evaluation of the measurement function F and the distribution.

It isn’t that hard to understand just read it again.

Just to confirm:

You do not accept the NIST uncertainty machine as being capable of computing u(y) of the measurement model Y. Yes/No?

You do not accept that the methods and procedures in the GUM are adequate to compute u(y) of the measurement model Y. Yes/No?

You put garbage into the NIST web page, you get garbage out of the NIST web page.

Clown, do you think a computer program can tell you the measurement uncertainty of a system for which it knows nothing about the nature or distribution of the measurement errors, let alone what it’s supposed to be measuring?

Yes. The NIST uncertainty machine is capable of doing that. I reviewed the source code and I don’t see anything in there specific to a particular measurement model. That is not surprising since it would be rather odd to program it that way as it would then be limited to only those measurement models programmed. That’s one of the points of the tool. That is it works for any measurement model as long as it can be defined with an R expression.
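As I understand it, the NIST uncertainty machine offers both the Gauss formula of the GUM and the Monte Carlo method of JCGM 101:2008. The Monte Carlo idea can be sketched in a few lines of Python: draw input values from assumed distributions, push each draw through the measurement function, and take the spread of the outputs. This is a stripped-down illustration, not the machine's code; the independent Gaussian inputs, the trial count, and the example values are assumptions.

```python
import random
import statistics

def monte_carlo_uncertainty(f, means, stds, trials=100_000, seed=1):
    """Propagate input distributions through f by random sampling.

    Assumes independent Gaussian inputs; JCGM 101 itself allows other
    input distributions and correlated inputs.
    """
    rng = random.Random(seed)
    outputs = []
    for _ in range(trials):
        draws = [rng.gauss(m, s) for m, s in zip(means, stds)]
        outputs.append(f(*draws))
    # Estimate of y and its standard uncertainty from the simulated outputs
    return statistics.fmean(outputs), statistics.stdev(outputs)

# Hypothetical example: y = (x1 + x2) / 2 with u(x1) = u(x2) = 0.5
y, u_y = monte_carlo_uncertainty(lambda x1, x2: (x1 + x2) / 2,
                                 means=[10.0, 12.0], stds=[0.5, 0.5])
```

For this linear model u_y should land close to the Gauss-formula value of about 0.354; for strongly nonlinear models the two methods can differ, which is one reason JCGM 101 exists.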

The problem here is with the operator, who chooses cluelessness.

It’s been pointed out to you MANY times that the NIST machine has no input available for a data set consisting of different measurements of different things, only multiple measurements of the same thing. It doesn’t even offer a way to enter skewness or kurtosis – primary statistical descriptors for a data set consisting of multiple measurements of different things using different devices.

The NIST machine simply can’t handle data sets generated by multiple measurements of different things. Standard statistical descriptions like mean and standard deviation can’t do it either!

It only works when you have multiple measurements of the same thing.

The average of a quantity of temperatures is *NOT* a measurement model, it is the calculation of a statistical description of a probability distribution.

From the GUM:

$Y = f(X_1, X_2, \ldots, X_N)$ Eq. (1)

4.1.5 The estimated standard deviation associated with the output estimate or measurement result y, termed combined standard uncertainty and denoted by u_c(y), is determined from the estimated standard deviation associated with each input estimate x_i, termed standard uncertainty and denoted by u(x_i) (bolding mine, tg)

5.1.2 The combined standard uncertainty u_c(y) is the positive square root of the combined variance u_c^2(y), which is given by

$u_c^2(y) = \sum_{i=1}^{N} \left(\frac{\partial f}{\partial x_i}\right)^2 u^2(x_i)$ Eq. (10)
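Eq. (10) can be evaluated for any function without deriving the partial derivatives by hand. The sketch below estimates each sensitivity coefficient by central differences, which is an implementation choice made here, not something the quoted text prescribes. It assumes uncorrelated inputs, as Eq. (10) does.

```python
import math

def combined_uncertainty_eq10(f, x, u, rel_step=1e-6):
    """Combined standard uncertainty u_c(y) per GUM Eq. (10).

    Assumes uncorrelated inputs. Sensitivity coefficients df/dx_i are
    estimated by central differences rather than derived analytically.
    """
    var = 0.0
    for i in range(len(x)):
        step = rel_step * max(1.0, abs(x[i]))
        up, down = list(x), list(x)
        up[i] += step
        down[i] -= step
        c_i = (f(*up) - f(*down)) / (2 * step)  # sensitivity coefficient
        var += (c_i * u[i]) ** 2
    return math.sqrt(var)
```

For example, `combined_uncertainty_eq10(lambda a, b: a * b, [2.0, 3.0], [0.1, 0.2])` gives about 0.5, since c_1 = 3 and c_2 = 2, and sqrt((3·0.1)² + (2·0.2)²) = sqrt(0.09 + 0.16) = 0.5.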

You’ve been given this multiple times. When you are doing an average the function Y is:

Y = f(x1, x2, …, xN, N)

The uncertainty of each component is considered separately.

Thus the uncertainty formula for temperatures becomes

$u_c^2(y) = u^2(x_1) + u^2(x_2) + \cdots + u^2(x_N) + u^2(N)$

You continue to want to find the uncertainty of x1/N, x2/N, etc when that is *NOT* what the GUM says. You add the uncertainties of each individual component, including N.

Look up how they handle the relationship between the mass of a spring, gravity, extension, etc.

m = kX/g

They find the uncertainty of each component, “k”, “X”, and “g”. They do *NOT* divide the uncertainty of “k’ and the uncertainty of “X” by g when determining the total uncertainty. The constant “g” does *NOT* lower the uncertainty of “k” and “X”! Which is what you are trying to do by trying to find the uncertainty of x1/N, x2/n, etc.
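Written out with GUM Eq. (10), the spring example gives the sensitivity coefficients below. The numbers are invented for illustration and the inputs are assumed uncorrelated. The sketch returns both the absolute u(m) and the relative form; for a pure product or quotient these are the same result, with each input entering through its own relative uncertainty.

```python
import math

def mass_uncertainty(k, X, g, u_k, u_X, u_g):
    """u(m) for the model m = k*X/g, inputs assumed uncorrelated."""
    m = k * X / g
    # Sensitivity coefficients: partial derivatives of m
    c_k = X / g
    c_X = k / g
    c_g = -k * X / g ** 2
    u_abs = math.sqrt((c_k * u_k) ** 2 + (c_X * u_X) ** 2 + (c_g * u_g) ** 2)
    # Equivalent relative form for a pure product/quotient
    u_rel = math.sqrt((u_k / k) ** 2 + (u_X / X) ** 2 + (u_g / g) ** 2)
    return m, u_abs, u_rel

# Hypothetical values: k in N/m, X in m, g in m/s^2
m, u_abs, u_rel = mass_uncertainty(50.0, 0.02, 9.81, 0.5, 0.0002, 0.01)
```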

He doesn’t care, reality doesn’t give him the answer he wants/needs to see.

I don’t know how long he’s been commenting here, but he seems like a basement keyboard warrior and this is how he derives satisfaction.

He is also a dyed-in-the-wool data manipulator who believes it is possible and ethical to modify old temperature data for “biases”.

This has been an interesting subthread. I was told that I had to use the methods and procedures described in the GUM for assessing uncertainty. Naturally I was expecting an overwhelming yes response to my questions. Yet here we are. Not a single person has said they are willing to accept the evaluation techniques described in the GUM for assessing uncertainty or the NIST uncertainty machine. In fact, there has been a surprising amount of resistance here so far at least.

So this raises the question…since BOM expressly relied heavily on the methods and procedures in the GUM do you accept any of the uncertainty estimates whatsoever from the Near Surface Air Temperature Measurement Uncertainty report? If no, then what methods and procedures do you recommend using?

How about an adjudged uncertainty for the measurement you are taking? Every single measurement has that. They add up. They don’t go away.

Notice how bgwxyz has had exactly zero to say about Geoff’s distribution graphs, instead he endlessly spams this root(N) garbage.

Perhaps you can go into detail. Considering pg. 34 for the PRT uncertainty in the isolated case as a starting point what would you do differently? How would you compute the final “Combined Standard Uncertainty”?

Read Pat Frank 2010 and skip down to 2.3.2 Case 3b.

Once you’ve read and understood that, you have permission to speak from all of us.

I’ve read it many times. I’ve even had a few conversations with Dr. Frank regarding it. Thank you for allowing me to discuss this with you.

Considering pg. 34 for the PRT uncertainty in the isolated case as a starting point what would you do differently? How would you compute the final “Combined Standard Uncertainty”?

Re-read the paper (again), because somehow you’re asking (again) how to do what the paper tells you to do. Maybe it’s willful helplessness?

Moreover, skip to this part:

“Thus when calculating a measurement mean of temperatures appended with an adjudged constant average uncertainty, the uncertainty does not diminish as 1/sqrt(N).”

CC said: “Re-read the paper (again), because somehow you’re asking (again) how to do what the paper tells you to do.”

Are you saying you would scrap all of the uncertainty analysis on pg. 34 and replace it with σ’u from Frank 2010 pg. 974?

Dr. Frank said: “Thus when calculating a measurement mean of temperatures appended with an adjudged constant average uncertainty, the uncertainty does not diminish as 1/sqrt(N).”

Let’s talk about this. Where did Dr. Frank get the formula σ’u = sqrt[N * σ’_noise_avg^2 / (N-1)]? I’m asking because he cites Bevington 2003 pg. 58 with w_i = 1. Yet that formula is nowhere to be found on pg. 58 or anywhere in Bevington. I assumed that he derived it from equation 4.22 but I don’t see how since I cannot replicate it nor does he show his work. And he repeats this formula in multiple places. Bellman and I have asked him multiple times where he gets it and all we get is either deflections and diversions or silence. And as best we can tell it is inconsistent with the formulas in the GUM and his own reference Bevington itself.
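Setting aside where it comes from, the two expressions at issue in this exchange behave very differently as N grows, which is easy to check numerically. The sketch only evaluates the formulas as quoted, with an arbitrary σ = 0.2; it does not settle which one applies to station data.

```python
import math

def frank_sigma(sigma, N):
    # Expression quoted in the thread: sqrt(N * sigma^2 / (N - 1))
    return math.sqrt(N * sigma ** 2 / (N - 1))

def sigma_over_root_n(sigma, N):
    # Standard uncertainty of a mean of N uncorrelated errors: sigma / sqrt(N)
    return sigma / math.sqrt(N)

for N in (2, 10, 100, 1000):
    print(N, round(frank_sigma(0.2, N), 4), round(sigma_over_root_n(0.2, N), 4))
```

The first expression tends toward σ itself as N increases; the second tends toward zero.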

Again?!?? Let’s not and say we did…

Here are some excerpts from the BOM report.

“The last measurand applies to aggregated data sets across many stations and over extended periods. This aggregation further mitigates random errors and is suitable for use in determining changes in trends and overall climatic effects. These are measurements constructed from aggregating large data sets where there is supporting evidence or experience of the performance of the observation systems. Typically, these will be for groups of stations with several years operation and where there are nearby locations to verify the quality of the observations.”

“The last measurand applies to aggregated data sets across many stations and over extended periods. This aggregation further mitigates random errors “

How can it do that? This is the very same problem *YOU* exhibit. When aggregating “many stations” there is simply no guarantee that you will wind up with an identically distributed distribution necessary for random errors to totally cancel. You may get root-sum-square cancellation but that still grows the uncertainty with each station added, it just doesn’t add up as fast as direct addition. BUT IT DOES *NOT* REDUCE THE TOTAL UNCERTAINTY.
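The difference between direct addition and root-sum-square, for a sum of N station values each carrying the same hypothetical standard uncertainty, is easy to tabulate: the first grows as N·u and the second as √N·u. This sketch concerns the uncertainty of the total only.

```python
import math

def direct_sum(us):
    # Straight addition: corresponds to fully correlated errors
    return sum(us)

def root_sum_square(us):
    # Independent errors: the variances add, not the uncertainties
    return math.sqrt(sum(u * u for u in us))

for n in (1, 4, 16, 64):
    us = [0.3] * n  # hypothetical per-station standard uncertainty
    print(n, round(direct_sum(us), 3), round(root_sum_square(us), 3))
```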

Concerning inspection of the measurement stations:

“The Inspection Process aims to verify if the sensor and electronics are performing within specification. This involves the comparison of the field instrument with a transfer reference. The Inspector makes a judgement on whether the sensor is in good condition and reporting reliably based on this comparison and the physical condition of the sensor. If the inspection differences is less than or equal to 0.3°C, there is no statistically detectable change in the instrument and it will be left in place. A difference of greater than the tolerance of either 0.4 or 0.5°C (see Table 2) implies a high likelihood the sensor is faulty, and it is replaced. In the case of an observed difference of between 0.3°C and the tolerance, the inspector has the authority and training to decide if the instrument needs replacement. This discretion is to allow for false negatives due to the observing conditions. ” (bolding mine, tg)

This means the actual uncertainty that *must* be assumed for each station is at least +/- 0.3C.

Yet in Table 8 the BOM gives an uncertainty factor ranging from +/- .16C to +/- .23C.

And in Table 9, they show the long term measurement uncertainties as ranging from +/- 0.09C to +/- 0.14C.

As I said, this is no different than what you are trying to push here: the claim that multiple measurements of different things can decrease uncertainty. That is a physical impossibility as well as a violation of the requirements for statistical analysis.

Debunked almost as soon as published….

https://noconsensus.wordpress.com/2011/08/08/a-more-detailed-reply-to-pat-frank-part-1/

Criticized is NOT “debunked.” As of today, Pat Frank’s analysis stands unrefuted.

“unrefuted?”

I spoon fed you a link that does just that. And FYI, the paper is essentially uncited (citation being the way we use the scientific literature to advance science), as his alt.statistics are justifiably rejected in superterranea. Even counting his multiple pocket pool self cites….

Word salad time!

Oh, malarky!

Take just one criticism: “This standard deviation is the difference betweeen true temepratures at different stations so it is again ‘weather noise’.”

Since when is natural variation, i.e. standard deviation, “weather noise”? This is just a meaningless criticism, not a refutation of anything!

Take this one: “Again, ‘s’ is defined as a ‘magnitude’ uncertainty and is incorrectly calculated.”

The correct calculation is never given. So this is just one more unsupported criticism and is not an actual refutation of anything.

Or this one: “This is where Pat makes the claims that stationary noise variances are unjustified. This is key to the conclusions and equations presented throughout the paper. I also believe that has problems but they are more subtle than the more obvious problems of the previous equations. ”

Again, a criticism with no actual backup shown. WHY is the claim unjustified? Just saying it is means nothing.

As usual, you are just spouting religious dogma and claiming it to be the “truth”. This isn’t a religious issue, it’s a scientific issue. Actual refutation must be shown.

BTW, did you read *ANY* of Pat’s rebuttal messages at all?

“BTW, did you read *ANY* of Pat’s rebuttal messages at all?”

Long ago. He, and a very few of you here are in your own alt.world. Complete with claiming “victory” and walking away.

The best metric is the Darwinian lack of response to his papers. In spite of the Dr. Evil conspiracy theories that have all climate scientists in a secret cabal, if he had any valid points, the above grounders would be running over each other to cite him. Instead, he channels the regular Simpson’s scene where Homer says something so ridiculous that 15 seconds of silence ensues, followed by Maggie changing the subject.

Oh, BTW, I just read him going full QAnon in another thread, on COVID. W.r.t. his health, he seems fine from the last pic I saw. A little sun, some crunches, easy peasy…

What is “QAnon”, blob? Sounds like ALAnon…

In other words you have absolutely NOTHING to offer in rebuttal. Just more ad hominems.

ROFL!!

It is unrefuted because climate science fails to understand they are dealing with measurements. They wouldn’t last a minute in a job where tolerances of individual parts are a required part of maintaining employment.

They would measure the tolerance of 16 bearings from all over the machine, average them and work out the uncertainty, and then claim they knew the tolerance of each bearing to 0.001 inches.

I have used several examples like that.

One was a mechanic who ran around the shop measuring all the brake rotors and then telling the customer his rotors needed replacing because the average measurement showed too much wear.

Or the quality guy tracking door production who had a mean of 7′ and an SD of +/- 2″ yet divided the SD by the sqrt of the sample size and told the boss that they were putting out doors of 7′ that were accurate to +/-0.01″.

JS said: “They would measure the tolerance of 16 bearings from all over the machine, average them and work out the uncertainty, and then claim they knew the tolerance of each bearing to 0.001 inches.”

No they wouldn’t. No one is saying that u(X_avg) can be used as an estimate of u(X_i). They are completely different things.

The only statement being made by scientists of all disciplines (climate or otherwise) relevant to this discussion is that u(X_avg) < u(X_i) when the sample X has correlations r(X_i, X_j) < 1. And when r(X_i, X_j) = 0 and u(x) = u(X_i) for all X_i then u(X_avg) = u(x)/sqrt(n).
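The statement about r(X_i, X_j) can be made concrete in a simplified case, assuming every input has the same standard uncertainty u and every pair shares one common correlation r (real station networks will not have a single r). The expression follows from the law of propagation of uncertainty with sensitivity coefficients 1/n.

```python
import math

def u_mean_equicorrelated(u, n, r):
    """Standard uncertainty of the mean of n inputs.

    Assumes every input has the same standard uncertainty u and every pair
    shares the same correlation coefficient r. With sensitivity 1/n each,
    the law of propagation gives u^2 * (1 + (n - 1) * r) / n.
    """
    if not 0.0 <= r <= 1.0:
        raise ValueError("this sketch only handles 0 <= r <= 1")
    return u * math.sqrt((1 + (n - 1) * r) / n)

for r in (0.0, 0.5, 1.0):
    print(r, round(u_mean_equicorrelated(0.5, 100, r), 4))
```

At r = 0 this reduces to u/√n; at r = 1 it returns u itself, and for 0 < r < 1 it approaches u·√r rather than zero as n grows.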

The contrarians here are distorting and misrepresenting that statement to form the erroneous strawman argument that increasing n decreases u(X_i) as a way of undermining the entirety of the theory of uncertainty analysis developed over at least the last 80 years. I will say they’re doing a good job as not a single commenter including the article author will accept the NIST uncertainty machine results or the methods and procedures defined in the GUM. They even have the guy who posted a real NIST certificate questioning the methods and procedures NIST uses.

Liar, no one is doing anything of the sort.

Looking at the Uncertainty Machine website, there is the description of the purpose of the Machine, thus:

Now, what function f is there that produces temperature observations based on some other variable? To use the Machine with these numbers must be wrong, as there is no “algorithm that, given vectors of values of the inputs, all of the same length, produces a vector of values of the output”.

JS said: “Now, what function f is there that produces temperature observations based on some other variable?”

There are countless functions that can produce a temperature output from other variables. For example you could do y = h / ((R/g)* ln(p1/p2)) to produce the mean temperature between pressure surfaces p1 and p2 with thickness h. Or you could do y = PV/nR to produce the temperature of an ideal gas given pressure (P), volume (V), gas constant (R), and moles (n). Or you could do y = (Tmin + Tmax) / 2 to produce the daily mean temperature. The possibilities are endless.
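The three measurement functions mentioned can be written as ordinary Python functions. The constants are standard values; using the dry-air gas constant makes the first result, strictly, a layer-mean virtual temperature. The example inputs are illustrative.

```python
import math

R_DRY = 287.05         # specific gas constant of dry air, J/(kg K)
G0 = 9.80665           # standard gravity, m/s^2
R_MOLAR = 8.314462618  # molar gas constant, J/(mol K)

def layer_mean_temperature(h, p1, p2):
    """Hypsometric equation h = (R/g) * T * ln(p1/p2) solved for T (K)."""
    return h / ((R_DRY / G0) * math.log(p1 / p2))

def ideal_gas_temperature(P, V, n):
    """T = PV / (nR) with P in Pa, V in m^3, n in mol; returns K."""
    return P * V / (n * R_MOLAR)

def daily_mean_temperature(tmin, tmax):
    """Conventional daily mean (Tmin + Tmax) / 2."""
    return (tmin + tmax) / 2

# Hypothetical input: a 5500 m thick 1000-500 hPa layer
print(round(layer_mean_temperature(5500.0, 1000.0, 500.0), 1))
```

A 5500 m thick 1000-500 hPa layer comes out at roughly 271 K.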

JS said: “To use the Machine with these numbers must be wrong, as there is no “algorithm that, given vectors of values of the inputs, all of the same length, produces a vector of values of the output””

This is an R thing. The only requirement for y is that it be a function that generates an output with the same vector length as its inputs. 99% of the time you’ll be plugging in scalar values as inputs and producing a single scalar output. The concept of vectors is really more of an advanced use case. And that’s it. That’s the only requirement. You are free to make y as arbitrarily complex as you want as long as it can be defined with an R expression. It can even be so complicated that it executes an algorithm with loops, decision trees, calls to other R functions, etc. To say the NIST uncertainty machine is powerful is a massive understatement.

Temperature observations are not dependent upon the day on which they are recorded. What is the function that can be applied against 1 May to give 17.3 C and against 3 May to get 19.5 C and 4 May to get 10.6 C and etc.? A computer can take 1,3, and 4 and calculate a function f that will come arbitrarily close to 17.3, 19.5, and 10.6, but it will have no predictive power.

And I think that’s a big part of the problem.

JS said: “What is the function that can be applied against 1 May to give 17.3 C and against 3 May to get 19.5 C and 4 May to get 10.6 C and etc.?”

Assuming these temperature values are daily means you have a couple of options. The first is the simplest and most widely used.

y = (Tmin + Tmax) / 2

The second can be used when you have a much larger sample of temperature observations throughout the days. w_i is the weight given to each temperature. For example, if you have 24 hourly observations w_i = 1 h for all T_i.

y = Σ[w_i * T_i] / Σ[w_i]

The methods and procedures defined in the GUM and the NIST uncertainty machine work perfectly fine with both cases.
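A sketch of the weighted form and its uncertainty under the GUM formula, assuming the individual T_i are uncorrelated. That is an assumption; correlated errors such as a shared calibration offset would need the covariance terms. The readings and uncertainties are hypothetical.

```python
import math

def weighted_mean(temps, weights):
    """y = sum(w_i * T_i) / sum(w_i)."""
    return sum(w * t for w, t in zip(weights, temps)) / sum(weights)

def u_weighted_mean(us, weights):
    """u(y) assuming uncorrelated T_i; sensitivity of T_i is w_i / sum(w)."""
    total = sum(weights)
    return math.sqrt(sum((w / total * u) ** 2 for w, u in zip(weights, us)))

# (Tmin + Tmax) / 2 is the special case of two equal weights
y = weighted_mean([8.0, 21.0], [1.0, 1.0])
u_y = u_weighted_mean([0.2, 0.2], [1.0, 1.0])
```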

Just like Nitpick Nick, you ignore JS’ main point.

Typical of trendology.

NOTHING YOU LISTED IS AN AVERAGE EXCEPT (Tmin+Tmax)/2. And THAT IS NOT A MEASUREMENT!

NOTHING YOU LISTED IS A STANDARD DEVIATION OF THE SAMPLE MEANS!

And, once again, the NIST machine is not powerful enough to handle distributions with skewness and kurtosis. So just how powerful can it be?

If you have a skewed population the standard deviation is pretty much useless. Unless you are a climate scientist I guess.

“No they wouldn’t. No one is saying that u(X_avg) can be used as an estimate of u(X_i). They are completely different things.”

Again, you aren’t calculating the uncertainty of the average, you are calculating the average uncertainty. You get a value that, when multiplied by “N”, gives you back the total uncertainty. You already had to calculate the total uncertainty in order to divide it by “N”, so what is the purpose?

“ u(X_avg) < u(X_i) when the sample X has correlations r(X_i, X_j) < 1. And when r(X_i, X_j) = 0 and u(x) = u(X_i) for all X_i then u(X_avg) = u(x)/sqrt(n).”

Again, you are *NOT* calculating u(X_avg), you are calculating the average uncertainty.

If m = kX/g then does u^2(m) = u^2(k/g) + u^2(X/g) ???

“The contrarians here are distorting and misrepresenting that statement to form the erroneous strawman argument that increasing n decreases u(X_i) as a way of undermining the entirety of the theory of uncertainty analysis developed over at least the last 80 years.”

Stop whining. No one is saying that.

They are saying that the average uncertainty is not the same thing as the uncertainty of the average.

You’ve been given example after example that uncertainty can’t be decreased by just dividing by a constant. The uncertainty of a constant is zero and it neither adds to nor subtracts from the total uncertainty of the average.

The example that breaks your method is if all individual uncertainties are the same. In that case u(X_avg) will equal u(X_i) no matter how large your sample is. That’s because you are calculating the average uncertainty and not the uncertainty of the average!

“No they wouldn’t. No one is saying that u(X_avg) can be used as an estimate of u(X_i). They are completely different things.”

Then what use is u(X_avg)? How can it tell you if the distribution changed or not?

The uncertainty of X_avg is *NOT* the standard deviation of the sample means. It is not the standard deviation of the population unless you have a normal distribution or something similar.

“I will say they’re doing a good job as not a single commenter including the article author will accept the NIST uncertainty machine results or the methods and procedures defined in the GUM.”

Stop lying. What we won’t accept is YOUR MISUSE of the GUM.

“And when r(X_i, X_j) = 0 and u(x) = u(X_i) for all X_i then u(X_avg) = u(x)/sqrt(n).”

Again, this appears to be the standard deviation of the sample means. That is *NOT* the accuracy of the mean!

See the attached picture. The target on the right shows the situation where the standard deviation of the sample means is small but the accuracy is terrible. The target on the left shows where the standard deviation of the sample means is wide but the accuracy is good.

You keep trying to convince us that the target on the right is giving us the accuracy of the mean. It doesn’t!
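The situation the two targets illustrate can be simulated with readings that share a common systematic offset plus independent noise. The offset, noise level, and sample size are hypothetical. Averaging shrinks the scatter term s/√n, but the error of the mean converges on the offset, not on zero.

```python
import random
import statistics

def averaged_reading_error(true_value, bias, noise_sd, n, seed=0):
    """Each reading = true value + a fixed systematic offset + random noise."""
    rng = random.Random(seed)
    readings = [true_value + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]
    error_of_mean = statistics.fmean(readings) - true_value
    # Spread-based (Type A) uncertainty of the mean: s / sqrt(n)
    sem = statistics.stdev(readings) / n ** 0.5
    return error_of_mean, sem

err, sem = averaged_reading_error(20.0, bias=0.5, noise_sd=0.2, n=10_000)
# err stays close to the 0.5 offset while sem is only about 0.002
```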

He will either never understand, or refuse to understand.

“They would measure the tolerance of 16 bearings from all over the machine, average them and work out the uncertainty, and then claim they knew the tolerance of each bearing to 0.001 inches.”

That’s EXACTLY what they would do!

JM said: “Criticized is NOT “debunked.” As of today, Pat Frank’s analysis stands unrefuted.”

BOM is refuting it [1].

Lenssen et al. 2019 are refuting it [2].

Rohde et al. 2013 are refuting it [3].

Morice et al. 2020 are refuting it [4].

Brohan et al. 2006 are refuting it [5].

Huang et al. 2020 are refuting it [6].

Vose et al. 2021 are refuting it [7].

And those are only the ones I bothered mentioning. Those alone will cause this post to get moderated and delayed. Everybody is refuting Frank 2010…everybody.

Climastrologers all, who understand uncertainty no better than YOU.

Do any of these refute the assertion that the GCM’s turn into y=mx+b evaluations? If not, then they haven’t refuted anything.

TRYING to refute is not to succeed at refuting. Frank’s work stands.

Janice Moore said: “TRYING to refute is not to succeed at refuting.”

You don’t think that shoe fits on the other foot?

At any rate, where did Frank get the formula σ_avg = sqrt[N * σ^2 / (N-1)]? How do you know it’s right? Why is he combining the Folland 2001 and Hubbard 2002 uncertainties? And why did he not even attempt to propagate the instrumental uncertainty through the gridding, infilling, and spatial averaging steps? And most importantly do you really think one publication with a mere 6 citations really takes down the entirety of the field of uncertainty analysis in one fell swoop?

These questions have been answered again and again, FOOL.

Did you actually bother to read any of Frank’s answers to those criticizing his work? I’m guessing no.

Give me a break.

From just your number 2 link:

“ Since monthly temperature anomalies are strongly correlated in space, spatial interpolation methods can be used to infill sections of missing data.”

This statement alone disqualifies the entire document. Monthly temperature anomalies are *NOT* strongly correlated in space. Temperature correlation is a function of distance, terrain, geography, elevation, barometric pressure, humidity, wind, etc. Even stations as close as 20 miles apart can have different temperature and anomalies because of intervening bodies of water, being on the east side of a mountain vs the west side, having vastly different elevations, having different humidity because of intervening land use, and even the terrain under the measurement device.

My guess is that the other links suffer from the very same assumption. Every paper I have read on homogenization of temperatures and infilling data suffers from this. The assumption is made for convenience’s sake and not from any actual scientific fact or observation.

In other words you are just doing your typical cherry picking without actually understanding the scientific concepts being put forth and questioning their validity.

blob is in da house!

You may think it debunked but you’ll have to explain why the GCM’s all end up with an output of y=mx+b that never ends. What do you think the error bars and uncertainty should be to encompass this kind of prediction?

Nobody will accept the uncertainties because they don’t meet the requirements of using the GUM when you have single measurements of different things with different devices.

The GUM is not a process control design document for Quality Control of manufacturing parts. It is designed to allow you to achieve the most accurate measurement of each individual part with the best uncertainty possible. That allows Quality folks to know when a machine or process is going out of control.

Using averages can and will cover up problems. Especially if the errors are random a process can go entirely out of control by only looking at averages. Using statistics is fine but one needs to know the assumptions and limits behind them. That is why you are having a problem convincing folks that have dealt with this of what you believe is correct. Liars figure and figures lie. Take that as your mantra and you will be on the road to being a physical scientist.

You were told to use the GUM for assessing the uncertainty of MULTIPLE MEASUREMENTS OF THE SAME THING!

Yet you insist on trying to apply the GUM to multiple measurements of different things.

EVERYONE here accepts the GUM for assessing uncertainty of MULTIPLE MEASUREMENTS OF THE SAME THING! Same for the NIST machine, as long as the measurements are identically distributed draws from one probability distribution.

Stop your whining.

No, I do not accept that “the NIST uncertainty machine, which uses the technique specified in the GUM document (JCGM 100:2008) produces the correct result”.

You have missed the whole point of the article. All sources of uncertainty must be considered; otherwise it is a pointless exercise. NIST know what they are doing and I am sure the calculator is robust, but the onus is still on the user to use it properly, with robust assumptions and intellectual honesty.

Below are two examples of NIST’s work and attention to detail re UoM, 2 numbers accompanied by 4 paragraphs of explanation.

number 2

Your own certificate says it uses the methods in the JCGM guide. The NIST uncertainty machine uses those exact same methods. How do you reconcile accepting one but not the other? Is there a bug in their software they aren’t telling us about? Is it not really using the methods in the JCGM guide despite advertising that it does? Or is there another reason you don’t accept it?

We are now 80 posts in on these two questions and not a single person accepts the NIST uncertainty machine or the methods and procedures described in JCGM 100:2008, JCGM 101:2008, or JCGM 102:2011. In fact, there is overwhelming resistance to it. That is over 80 years of statistical theory development just in the first order citations alone that is apparently rejected wholesale by the WUWT audience. Considering I was told that I had to use the GUM for uncertainty analysis by authors and commenters on WUWT this is a twist I never saw coming.

LIAR, does your trolling and sophistry know ANY bounds?

The argument is not “Are the equations and results correct?”

The argument is that you are misapplying them by using them on measurements that are NOT of the same thing with the same instrument.

James Schrumpf said: “The argument is that you are misapplying them by using them on measurements that are NOT of the same thing with the same instrument.”

Really? Where does the GUM say that for the measurement model Y?

And why is it that literally every single example provided by the NIST uncertainty machine is of measurements of different things, including one with two different temperatures?

Why does NIST TN 1900 E2 literally average multiple Tmax observations and conclude that the uncertainty of that average is σ/sqrt(n)?

8 hours later, the bgwxyz troll back at it.

What a nutter.

You’re talking about example E2. Very well. First off, there’s this:

Point 1: all of the 22 observations were made at the same site, not 1 observation at 22 different sites.

Point 2: The precision of the tmax was not improved. In fact, the initial observations were made to hundredths of a degree C, but the mean he calculated was only to tenths, as was the uncertainty.

I don’t see how any of that example supports your position.

I would like to see this example extended to determining the standard deviation of an anomaly.

You’ll also notice the lack of uncertainty analysis for the measuring device. I didn’t realize that LIG thermometers were read to hundredths of a degree. Even then, if you notice, the temps are all rounded to the nearest 0.25 value. That means the uncertainty in measurement alone is +/- 0.25. The author did not even evaluate how this affects the average calculation. It is reminiscent of how climate science is done.
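For comparison with the ±0.25 figure, here is a minimal sketch of how the GUM (JCGM 100:2008, F.2.2.1) treats a value known only to a resolution step δx: the rounding error is taken as uniform over ±δx/2, giving a standard uncertainty of δx/√12 ≈ 0.29 δx. This covers quantization alone; calibration and other instrument effects would be separate components.

```python
import math


def resolution_uncertainty(step):
    """Standard uncertainty from rounding to `step`, treating the rounding
    error as uniformly distributed over +/- step/2 (half-width a = step/2,
    u = a / sqrt(3), i.e. step / sqrt(12))."""
    half_width = step / 2.0
    return half_width / math.sqrt(3.0)


print(round(resolution_uncertainty(0.25), 3))  # 0.072
```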

How long would these LIG thermometers have to be in order to resolve 0.01°C? Seems like the answer would have to be “very”.

The very length would actually add to the uncertainty because of gravitational effects on the liquid, as well as hysteresis from friction between the liquid and the total area of the tube! You would need at least two different sets of graduations, one for when the temp is going up and one for when it is going down, and the graduations would not be linear either.

I’ve never seen an LIG with 0.01°C graduations; it would have to have a very limited temperature range, like 10°C total.

I did that this evening with an Australian GHCN station that had a lovely long, unbroken series of observations. It turned out the standard deviation of the anomalies was the same as for the temps.

Makes sense. All an anomaly is, is y in y = mx + b, where m = 1, x is the temperature and b is minus the mean of the temps between 1981 and 2010.
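The observation that anomalies and temperatures have the same standard deviation follows from subtracting one constant from every value. A minimal sketch with invented temperatures and a hypothetical single baseline standing in for the 1981-2010 mean:

```python
import statistics

# Invented monthly mean temperatures (deg C) for one station, not real GHCN data.
temps = [21.4, 22.0, 19.8, 20.5, 23.1, 21.7, 20.9, 22.6]

# Hypothetical single baseline standing in for the 1981-2010 mean.
baseline = 21.0

# An anomaly is the temperature shifted by a constant: y = 1 * x + (-baseline).
anomalies = [t - baseline for t in temps]

print(statistics.stdev(temps), statistics.stdev(anomalies))
```

The equality would not generally hold if a different baseline were subtracted for each calendar month, because that removes the seasonal cycle from the spread.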

JS said: “Point 1: all of the 22 observations were made at the same site, not 1 observation at 22 different sites.”

It doesn’t make a difference. Those 22 observations are no more or less different whether they were at the same site or different sites. And the GUM never says the measurement model Y can only ever accept input quantities X1, …, Xn that are of the same thing. In fact, nearly all of the examples out there are of measurement models where Y accepts input quantities that are different.

JS said: “Point 2: The precision of the tmax was not improved. In fact, the initial observations were made to hundredths of a degree C, but the mean he calculated was only to tenths, as was the uncertainty.”

Yep. u(T_i) does not improve with more observations. But u(T_avg) does. Specifically, in this example notice that σ_Ti = 4.1 C, but σ_T_avg = 0.872 C. That is the salient point here. And if we happen to use a reasonable type B estimate of, say, u(T_i) = 0.5 C as opposed to the type A evaluation of 4.1 C, then we get u(T_avg) = 0.5/sqrt(22) ≈ 0.1 C, assuming the T_i observations are uncorrelated.

BTW…just because the NIST instrument had a resolution of 0.01 C does not mean that u(T_i) = 0.01 C. In fact, because the observations all seem to be in multiples of 0.25 we know that u(T_i) >= 0.25 C.
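The arithmetic behind the u(T_avg) figures can be checked directly. A minimal sketch, assuming (as the comment does) uncorrelated inputs with equal standard uncertainty; 4.1 C is the type A figure and 0.5 C the assumed type B figure from the comment. The first result comes out as 0.874 rather than 0.872, presumably because 4.1 is itself a rounded value.

```python
import math


def u_of_mean(u_single, n):
    """Standard uncertainty of the mean of n inputs, u(T_avg) = u(T_i) / sqrt(n).
    Valid only if the inputs are uncorrelated and share the same u(T_i)."""
    return u_single / math.sqrt(n)


print(round(u_of_mean(4.1, 22), 3))  # 0.874 (type A figure from the comment)
print(round(u_of_mean(0.5, 22), 3))  # 0.107 (assumed type B figure)
```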

As the droid loops back around and repeats the same old same old.

From the NIST document.

You said:

The item you call σ_Ti is the standard deviation of the population. What you call σ_T_avg is actually the Standard Error or more accurately, the Standard Deviation of the Sample Means (SEM).

Please note that these only apply when you have the entire population of data. NIST has chosen to make this choice.

The error NIST has made is that once you declare that you have a population, you no longer need a Sample Mean or an SEM. They have no purpose. Sampling is not done when you already know the population. It is not needed. The relation between SEM and σ is:

SEM = SD / √N,

where N = Sample Size.

Remember the SEM only tells you the interval around the estimated mean (sample mean) where the true mean may lie. If you already know the true mean, what is the purpose of calculating an SEM?

This is basic statistics for using sampling. Do you need some references?
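The SEM relation can be checked by brute-force sampling. A minimal sketch with an invented Gaussian population: repeatedly draw samples of size N = 22, take each sample's mean, and compare the spread of those means with SD/√N. It shows only what the SEM describes (the scatter of sample means about the population mean); it says nothing about calibration or other systematic effects in the values themselves.

```python
import random
import statistics

random.seed(1)

SIGMA = 4.0       # hypothetical population standard deviation
N = 22            # sample size
TRIALS = 5_000    # number of repeated samples

# Invented Gaussian "population" of 20,000 values.
population = [random.gauss(25.0, SIGMA) for _ in range(20_000)]

# Draw many samples of size N (without replacement) and keep each sample mean.
sample_means = [statistics.fmean(random.sample(population, N)) for _ in range(TRIALS)]

observed = statistics.stdev(sample_means)              # spread of the sample means
predicted = statistics.pstdev(population) / N ** 0.5   # SD / sqrt(N)
print(f"SD of sample means = {observed:.3f}, SD/sqrt(N) = {predicted:.3f}")
```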

“ In fact, nearly all of the examples out there are of measurement models where Y accepts input quantities that are different.”

The input quantities are measurands! The value of those measurands are determined through multiple measurements of those measurands.

X1 .. Xn have to be done the same way! They are determined through multiple measurements of each, and those measurements have a propagated uncertainty.

And these measurands are part of a functional relationship.

y = f(x_i)

You use measurands to determine an output which is itself an estimated measurand.

AN AVERAGE IS NOT A MEASURAND. It is *NOT* measured in any fashion, either directly or by using a functional relationship.

Let me reiterate this as well. The standard deviation of the sample means does *NOT* determine the accuracy of the mean! It only determines the interval within which the population mean might lie. There is nothing in that statistical description that implies any kind of accuracy of the mean. The accuracy of that mean *has* to be determined via propagation of the uncertainty of the individual elements in either the sample or the population.

Trying to say that the standard deviation of the sample means is the accuracy of the calculated mean is a fraud and only shows that you have no basic understanding of metrology.
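The distinction between the scatter of the mean and its accuracy can be shown with invented numbers. A minimal sketch, assuming every reading carries the same hypothetical uncorrected offset of 0.3 C plus random scatter: the SEM shrinks as the number of readings grows, but the mean stays off by the full offset, which has to be evaluated and propagated separately (for instance as a type B component).

```python
import random
import statistics

random.seed(7)

TRUE_VALUE = 20.0   # hypothetical true temperature, deg C
BIAS = 0.3          # hypothetical uncorrected offset shared by every reading
NOISE_SD = 0.5      # hypothetical random scatter of a single reading
N = 10_000

readings = [TRUE_VALUE + BIAS + random.gauss(0.0, NOISE_SD) for _ in range(N)]

mean = statistics.fmean(readings)
sem = statistics.stdev(readings) / N ** 0.5   # shrinks like 1/sqrt(N)
error_of_mean = mean - TRUE_VALUE             # stays near BIAS however large N is

print(f"SEM = {sem:.4f} C, actual error of the mean = {error_of_mean:.3f} C")
```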

Nick Stokes is now pushing this fraudulent accounting trick, see below.

JS,

Please note that in Example 2 not a single entry shows an uncertainty.

The example goes on to state:

“This so-called measurement error model (Freedman et al., 2007) may be specialized further by assuming that E₁, …, Eₘ are modeled as independent random variables with the same Gaussian distribution with mean 0 and standard deviation σ. In these circumstances, the {tᵢ} will be like a sample from a Gaussian distribution with mean τ and standard deviation σ (both unknown).”

“Assuming that the calibration uncertainty is negligible by comparison with the other uncertainty components, and that no other significant sources of uncertainty are in play, then the common end-point of several alternative analyses is a scaled and shifted Student’s t distribution as full characterization of the uncertainty associated with τ.”

These are assumptions that simply can *NOT* be made when you are using widely separated field measurement stations of unknown calibration. In essence their assumptions are such as to make this into a multiple measurement of the same thing situation. In other words they assumed their conclusion as their premise ==> circular reasoning at its finest!

And, of course, bdgwx just sucks it up as gospel because he simply doesn’t understand the basic concepts of measurement!
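The “scaled and shifted Student’s t” characterization in the quoted NIST text amounts to reporting the mean with standard uncertainty s/√m and expanding it by a t quantile with m − 1 degrees of freedom. A minimal sketch under exactly the quoted assumptions (i.i.d. Gaussian readings, negligible calibration uncertainty). The 22 readings are invented for illustration and are not the NIST TN 1900 data; the factor 2.080 is the tabulated two-sided 95 % t value for 21 degrees of freedom.

```python
import math
import statistics

# Tabulated two-sided 95 % Student's t factor for 21 degrees of freedom.
# Only valid for m = 22 readings; taken from standard t tables, not computed here.
T_975_DF21 = 2.080

# Invented daily maximum readings (deg C), NOT the NIST TN 1900 data.
readings = [
    24.0, 26.5, 23.25, 27.0, 25.5, 22.75, 28.25, 24.5, 26.0, 21.5, 25.25,
    27.75, 23.5, 26.25, 24.75, 22.0, 28.0, 25.0, 23.75, 26.75, 24.25, 27.5,
]


def t_interval(values, t_factor):
    """Return the mean, its standard uncertainty s/sqrt(m) and the
    interval mean +/- t_factor * u. Assumes an i.i.d. Gaussian sample and
    negligible calibration uncertainty, as in the quoted text."""
    m = len(values)
    mean = statistics.fmean(values)
    u = statistics.stdev(values) / math.sqrt(m)
    return mean, u, (mean - t_factor * u, mean + t_factor * u)


mean, u, (lo, hi) = t_interval(readings, T_975_DF21)
print(f"mean = {mean:.2f} C, u = {u:.3f} C, 95 % interval = [{lo:.2f}, {hi:.2f}] C")
```

Note that the interval is only as good as the assumptions; it carries no calibration or siting component.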

I think everyone agrees the Machine produces the correct results, but that’s not the issue here.

The issue is that a series of temperature observations, such as from the GHCN stations, do NOT have a measurement model y = f(x1, x2, …, xn), which is CLEARLY stated to be the sine qua non of using the machine.

IOW, there is no temp = f{station_id, date, time} function in play, which is a requirement of using the Machine.

Global Ave=(ΣwₖTₖ)/(Σwₖ)

where T are anomalies for a month and w a set of (area) weights, prescribed by geometry. k ranges over stations.
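The weighted-average formula can be sketched together with the uncertainty it implies under the GUM law of propagation. Two assumptions are introduced here and are not from the thread: the weights are taken as exact and set to cos(latitude) as a hypothetical stand-in for “prescribed by geometry”, and the station anomalies are treated as uncorrelated. If the station values were correlated, the covariance terms of GUM clause 5.2 would have to be added.

```python
import math


def weighted_mean_and_u(values, weights, uncertainties):
    """y = sum(w_k * T_k) / sum(w_k) and its standard uncertainty from the
    GUM law of propagation, assuming exact weights and uncorrelated T_k:
    u(y)^2 = sum((w_k / sum(w))^2 * u(T_k)^2)."""
    total_w = sum(weights)
    y = sum(w * t for w, t in zip(weights, values)) / total_w
    u_y = math.sqrt(sum((w / total_w) ** 2 * u ** 2
                        for w, u in zip(weights, uncertainties)))
    return y, u_y


# Hypothetical example: three station anomalies (deg C), each with u = 0.2 C,
# weighted by cos(latitude) as an assumed stand-in for area weights.
anomalies = [0.4, -0.1, 0.7]
latitudes = [0.0, 45.0, 70.0]
weights = [math.cos(math.radians(lat)) for lat in latitudes]

y, u_y = weighted_mean_and_u(anomalies, weights, [0.2, 0.2, 0.2])
print(f"weighted mean = {y:.3f} C, u = {u_y:.3f} C")
```

With equal weights this reduces to the plain mean and u/√n.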

JS said: “IOW, there is no temp = f{station_id, date, time} function in play, which is a requirement of using the Machine.”

So do f(T1, …, Tn, w1, …, wn) instead where T1…Tn are temperatures and w1…wn are weights that you have already acquired by other means and enter y = (ΣwₖTₖ)/(Σwₖ) instead.

BTW…you can create your own R functions. There is nothing stopping you from creating the gettemp(station_id, date, time) function and calling it in the R expression for Y (you’ll probably need to run the software on your own machine to actually do this though). The Allende example shows how to create and call your own R functions.

“So do f(T1, …, Tn, w1, …, wn) instead where T1…Tn are temperatures and w1…wn are weights that you have already acquired by other means and enter y = (ΣwₖTₖ)/(Σwₖ) instead.”

You punted! Since the weighting would have multiple, interacting factors such as latitude, humidity, elevation, barometric pressure, wind, terrain, geography, etc., some of which are time-varying, how do you actually determine the weighting? If some of the terms are time-varying, how do you even combine them for different locations? If the barometer reading is f(t) and you have two locations f_1(t1) and f_2(t2), just how do you relate those in a functional relationship?

If it were as simple as you make it, all the GCMs would be accurate and similar.

Paragraph 1 is “no”, because what is your x that you can plug into that equation to get the temp for that station and date? The equation is not a measurement model; it doesn’t give you y for some value of x and any other parameters.

I’m not sure what the concern is here. The x’s are the inputs into the model. The function y = f(x1,…xn) operates on those inputs and produces an output just like any other measurement model. If the x’s are temperature quantities then plug in temperature values as inputs not unlike how the Thermal example handles it for T0 and T1.

I tried it with 4 inputs t1 = 15.1, t2 = 15.5, t3 = 15.6, t4 = 14.9, u(t1) = u(t2) = u(t3) = u(t4) = 0.2 where y = (t1+t2+t3+t4)/4 and t1-t4 were gaussian. The result was y = 15.275 and u(y) = 0.1. It worked perfectly when I did it.
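The four-input check can be reproduced without the NIST machine. A minimal sketch: the analytic result uses the GUM law of propagation with sensitivity coefficient 1/4 for each uncorrelated input, and the Monte Carlo part draws each input from a Gaussian, in the spirit of JCGM 101:2008. This is an independent Python sketch, not the machine’s own code.

```python
import math
import random
import statistics

t = [15.1, 15.5, 15.6, 14.9]   # input values from the comment
u = [0.2, 0.2, 0.2, 0.2]       # their standard uncertainties

# Analytic: y = mean of inputs, sensitivity coefficient 1/n for each input,
# inputs assumed uncorrelated.
n = len(t)
y = sum(t) / n
u_y = math.sqrt(sum((ui / n) ** 2 for ui in u))

# Monte Carlo: draw every input from its Gaussian and evaluate the model.
random.seed(42)
samples = [
    statistics.fmean(random.gauss(ti, ui) for ti, ui in zip(t, u))
    for _ in range(50_000)
]
y_mc = statistics.fmean(samples)
u_mc = statistics.stdev(samples)

print(f"analytic: y = {y:.3f}, u(y) = {u_y:.3f}")
print(f"Monte Carlo: y = {y_mc:.3f}, u(y) = {u_mc:.3f}")
```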

“I’m not sure what the concern is here. The x’s are the inputs into the model. The function y = f(x1,…xn) operates on those inputs and produces an output just like any other measurement model.”

You are not calculating standard uncertainty. You are calculating an average uncertainty, not the uncertainty of the average. So is the NIST

If your formula is t1+t2+t3+t4 then the uncertainties add by root-sum-square and come out to be +/- 0.4.

If you want the average uncertainty then it becomes +/- 0.1 (0.4/4)

That is nothing more than mental masturbation.

0.1 x 4 = 0.4 ==> the actual uncertainty you would get if these were four boards laid end-to-end.
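The arithmetic in the last few comments can be laid out side by side. A minimal sketch: root-sum-square gives the uncertainty of the sum (the boards-end-to-end case), the same figure divided by 4 gives the uncertainty of the mean (matching the u(y) = 0.1 quoted earlier in the thread), and, for contrast, the plain average of the four input uncertainties is a third, different quantity.

```python
import math

u = [0.2, 0.2, 0.2, 0.2]   # standard uncertainties of the four inputs from the thread

u_sum = math.sqrt(sum(ui ** 2 for ui in u))   # y = t1 + t2 + t3 + t4 (boards end-to-end)
u_mean = u_sum / len(u)                       # y = (t1 + t2 + t3 + t4) / 4
mean_of_inputs = sum(u) / len(u)              # plain arithmetic mean of the four u(t_i)

print(round(u_sum, 3), round(u_mean, 3), round(mean_of_inputs, 3))  # 0.4 0.1 0.2
```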