Guest essay by Eric Worrall

Last Halloween, Naomi Oreskes unsettled the climate community by suggesting the work of WG1 scientists is done, and that they should move on to other fields. Climate scientists have now published a response in Scientific American detailing the big gaps in their understanding and explaining at length why they still need money.

Seeking Certainty on Climate Change: How Much Is Enough?

Two physicists object to a Scientific American essay calling for an end to one climate report. A science historian counters that the report has done its job

Sabine Hossenfelder is a physicist and research fellow at the Frankfurt Institute for Advanced Studies in Germany. She is author of the book Lost in Math: How Beauty Leads Physics Astray and creator of the YouTube channel Science without the Gobbledygook. Credit: Nick Higgins

Tim Palmer is a Royal Society Research Professor in Climate Physics at the University of Oxford.

In a recent column in Scientific American, Naomi Oreskes argues that we understand the physics of climate change well enough now. She writes that the scientists of the Intergovernmental Panel on Climate Change’s (IPCC’s) Working Group 1 (WG1)—the ones tasked with assessing the physical science basis of climate change—should “declare their job done.” According to Oreskes, we should instead now deal with the problem by focusing on adaptation and mitigation.

It is true that the scientific basis of global long-term trends is settled. We know that sea levels are rising, average temperatures are increasing, and glaciers are dying. We know that business as usual will put our and future generations at risk of great suffering. But we do not have a good understanding of the regional impacts of climate change, and uncertainties in the long-term predictions currently span a range that could mean anything from a serious but manageable inconvenience to an existential threat.

Indeed, Oreskes has previously been critical of WG1’s reports: a February 2013 paper she co-authored in Global Environmental Change argued that the IPCC reports have consistently underpredicted “at least some of the key attributes of global warming from increased atmospheric greenhouse gases.” But why is that? It’s because the job of climate scientists is not done.

A key reason for the underestimates that Oreskes and her colleagues belabored is that current-generation climate models are crude representations of the complex dynamical system that is our climate. For example, current global climate models can’t represent cloud systems using the laws of physics because the grid spacing is too coarse (a hundred kilometers or more). In the models, therefore, clouds are represented by highly simplified empirical formulas that describe the clouds’ true properties in a relatively crude way.

The consequence of our inability to model essential climate processes very accurately is that we cannot correctly simulate extreme weather and climate events. The horrendous weather events of 2021—the near-50-degree-Celsius heat in British Columbia and the devastating flooding in the Eifel region in Germany, China’s province of Henan and New York City—are completely outside the range of what current-generation climate models can simulate.

…

Read more: https://www.scientificamerican.com/article/seeking-certainty-on-climate-change-how-much-is-enough/

It is fascinating they mention clouds, because in 2019 when our Dr. Pat Frank pointed to gaps in our understanding of clouds as a major reason climate models have no predictive skill, he provoked a vigorous response from the climate community.

Yet as soon as someone like Oreskes suggests their work is done, suddenly the science of modelling clouds seems very unsettled indeed.

Note Dr. Roy Spencer also criticised Pat’s work. But Dr. Spencer wrote a paper in 2007 in support of Dr. Richard Lindzen’s Iris hypothesis, the theory that surface warming triggers net negative feedback changes in cloudiness which oppose the surface warming. Pat Frank’s response to Dr. Spencer’s criticism is available here.

Whatever your views on Dr. Spencer’s and Dr. Frank’s positions, and those of anyone else involved in the climate model cloud debate, everyone seems to more or less agree clouds are a problem. Understanding clouds seems pretty fundamental to being able to model the global climate in detail. Modelling of clouds in current-generation climate models is deeply flawed.

So I think we can safely conclude that Naomi Oreskes is wrong about the science being settled.

The following is a lecture by Dr. Pat Frank explaining his concerns about climate models and clouds.

I’ve never understood Dr. Spencer’s position on the accumulation of uncertainty in iterative models.

The models run an iteration and the results include various endpoint values AND ERROR MARGINS around each of those values.

So, for the next iteration, the starting points are uncertain…a range of starting points exists. No? Each of those myriad starting points ends up with its own result and error margins. Errors can add or cancel, but the magnitude of *possible* errors always grows (there is always error in each direction).

Roy must be asserting that the error in one iteration will usually be negated in subsequent iterations. Is that possible? Yes, but what exactly is Roy’s basis for that assertion? That behavior could occur in a system where maximum possible errors are definable… like a phase change temperature barrier condition that has ZERO chance of ever being exceeded. I don’t see that in this discussion where hundredths of a degree errors must accumulate into tenths of a degree uncertainty in the *starting points* in subsequent iterations.

I suspect that the expansion of uncertainty is more constrained than Dr. Frank suggests, but the Modelers seem to be conceding NO GROWTH of uncertainty, and that is certainly not plausible. I’ve never seen such a model when trying to model reality… where uncertainty is unavoidable.
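
The disagreement above can be made concrete with a toy calculation. The sketch below is not Dr. Frank’s calculation and not any climate model. It assumes a hypothetical fixed per-iteration uncertainty and compares two textbook ways of combining it across iterations: root-sum-square (steps treated as independent) and a straight linear sum (every step erring the same way). The function names and the 0.05-degree figure are illustrative assumptions only.

```python
import math


def quadrature_growth(step_uncertainty, n_steps):
    """Cumulative uncertainty after each step, assuming independent steps.

    Independent uncertainties combine as the square root of the sum of squares.
    """
    variance = 0.0
    totals = []
    for _ in range(n_steps):
        variance += step_uncertainty ** 2
        totals.append(math.sqrt(variance))
    return totals


def worst_case_growth(step_uncertainty, n_steps):
    """Cumulative uncertainty after each step if every step errs the same way."""
    return [step_uncertainty * (k + 1) for k in range(n_steps)]


if __name__ == "__main__":
    step = 0.05  # hypothetical per-iteration uncertainty, in degrees
    rss = quadrature_growth(step, 100)
    worst = worst_case_growth(step, 100)
    for k in (1, 4, 25, 100):
        print(f"after {k:3d} steps: RSS ±{rss[k - 1]:.2f}, "
              f"worst case ±{worst[k - 1]:.2f}")
```

Under either rule the envelope never shrinks from one step to the next. Whether real model errors are independent, correlated or physically bounded is exactly the point in dispute, and this sketch does not settle it.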

It boils down to looking at the last iteration and seeing uncertainties of lots of degrees, and then deciding that because there is no way “errors” could be this large, the UA must be wrong. Spencer falls into a common trap by thinking uncertainty is the same as error. It is not.

The question at this point is not what the uncertainty value truly is but whether the predicted value is inside the uncertainty range. A value of 0.001±0.1 is beyond uncertain.

Pat Frank cleared that up here at WUWT – numerous climateers confuse uncertainty with ACTUAL temp. swings, a really basic failure.

Hmmmm . . . just noting that in the top graphic above the ensemble-of-climate computer models’ range of total cloud fraction errors/disagreements from North pole to South pole is ± 30%. Cloud coverage is a very key determinant of solar energy that reaches Earth’s surface and thereby warms the planet on a daily-yearly basis.

Nevertheless, to adapt an old saying: ± 30%? . . . close enough for intergovernmental panel work.

/sarc off

Presumably, the purpose of climate modeling is to simulate what happens to the magnitude and disposition of energy absorbed from the sun with increasing GHG concentrations in the atmosphere: will there really be more energy stored in the land and oceans, or will it all end up being emitted back to space with little or no effect?

The problem with expecting any reliable answer, ever, from the large-grid, discrete-layer, step-iterated, parameter-tuned models is that, “you can’t get there from here” numerically. Pat Frank has shown this formally, as given here again in this posting.

For those so inclined, go to this paper, “Structure and Performance of GFDL’s CM4.0 Climate Model” by Held, et al.

https://agupubs.onlinelibrary.wiley.com/doi/full/10.1029/2019MS001829

About clouds, there was purposeful tuning prioritizing TOA fluxes over cloud simulation itself:

“The tuning of AM4.0’s cloud scheme focused on these TOA fluxes rather than on cloud process level constraints on water/ice content or droplet size. While the results do not guarantee accurate cloud feedbacks to climate change, the ability to fit seasonal changes is a relevant constraint. Future development will need to focus on satisfying observational constraints on process level cloud variables while minimizing any loss of realism in the TOA fluxes.”

About the RMSE (root mean square error) of TOA (top of atmosphere) shortwave and longwave fluxes, see figures 15 to 17.

About precipitation, see figure 18. Note that an RMSE of 1 mm/day of precipitation represents about 29 W/m^2 (of latent energy conversion to heat or work.)
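
The 29 W/m^2 figure can be checked with a back-of-envelope conversion. The sketch below assumes a latent heat of vaporization of about 2.5e6 J/kg and a water density of 1000 kg/m^3. Both are standard round values, not numbers taken from the Held et al. paper, and the function name is made up for illustration.

```python
LATENT_HEAT_VAPORIZATION = 2.5e6  # J/kg, approximate round value (assumed)
WATER_DENSITY = 1000.0            # kg/m^3 (assumed)
SECONDS_PER_DAY = 86_400


def precip_to_latent_flux(mm_per_day):
    """Convert a precipitation rate in mm/day to a latent heat flux in W/m^2."""
    depth_m = mm_per_day / 1000.0            # water depth per day, in metres
    mass_per_m2 = depth_m * WATER_DENSITY    # kg of water per m^2 per day
    energy_per_m2 = mass_per_m2 * LATENT_HEAT_VAPORIZATION  # J per m^2 per day
    return energy_per_m2 / SECONDS_PER_DAY   # J/s per m^2, i.e. W/m^2


if __name__ == "__main__":
    print(f"1 mm/day is roughly {precip_to_latent_flux(1.0):.1f} W/m^2")
```

With these assumed constants, 1 mm/day works out to just under 29 W/m^2, consistent with the figure quoted above.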

You would need, say, a hundred-fold or a thousand-fold improvement in the RMSE to ever reliably diagnose or project the single-digit W/m^2 theoretical “forcing” from GHG increases. And then the question will be, what is the uncertainty of the observed data to begin with? That’s why I say, “You can’t get there from here!”

The modelers’ dream (our nightmare) is to have a National Climate Service along the predictive lines of the National Weather Service, and they have a long way to go. All nonsense of course but the US still throws $2.6 billion a year at so-called climate research, which modeling is not.

Gotta keep the grant money flowing.

After watching the Climate Change/Global Warming circus for all these years, I am convinced we do indeed need to invest more into understanding the entire dynamics of climate and weather, but we need to invest those resources in people who actually dedicate themselves to the principles of science, not political/social advocacy, which seems to be where most of the money goes these days. We need transparency, objective oversight and high levels of accountability if we are going to bring true understanding from the efforts and resources expended. This means real consequences for shoddy, dishonest or totally incompetent work and rewards for getting it right. Everyone who deliberately lies to promote their own agenda should face a real risk of defunding followed by prosecution/litigation. If that could happen the air might clear very rapidly because the advocates of this nonsense are all cowards at heart.

Would be good if they could predict the weather on Christmas Day, and the major weather events in 2022. Instead we get this from the Australian Bureau of Meteorology on Nov 25:

“December to February rainfall is likely to be above median for parts of eastern Australia, with highest chances for eastern Queensland.”

“December to February maximum temperatures are likely to be above median for most of Australia, with below median daytime temperatures likely for eastern NSW.”

“The La Niña in the Pacific Ocean and the positive Southern Annular Mode (SAM) state are likely influencing the above median rainfall outlooks.”

Their modelling is so imprecise, yet they know for sure what the temperatures will be in 2050 and 2100. Would be useful if they could predict the next drought.

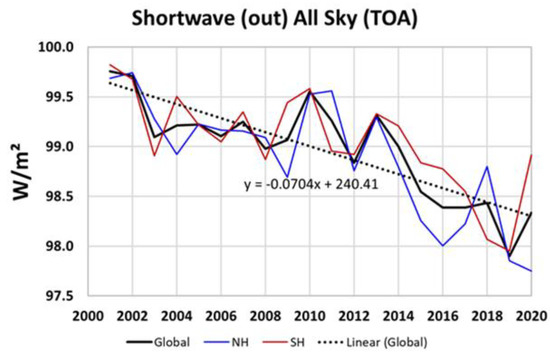

From the latest NASA CERES data it appears that models will need to add ocean cycles such as the PDO and AMO to get even close to accurate cloud data. The data shows a significant reduction in clouds around 2014 that appears to be tied to the PDO. The added solar energy from fewer clouds led to an increase of +1.42 W/m2 from 2001 to 2020 (most of it in the last 6 years).

This energy is actually more than enough to explain all the warming over those 20 years. One might even conclude increasing CO2 provided cooling to offset some of that warming.

Very interesting. Thanks.

Eric Worrall,

You say,

Yes, and the problem has been known for much longer than 2019 when Pat Frank published his report of the problem.

In 2005, Ron Miller and Gavin Schmidt, both of NASA GISS, provided an update of an evaluation of the leading US GCM which they first reported in 2001. They are U.S. climate modelers who used the NASA GISS GCM and they strongly promoted – and still promote – the AGW hypothesis. Their paper titled ‘Ocean & Climate Modeling: Evaluating the NASA GISS GCM’ was updated on 2005-01-10 and is available at

http://icp.giss.nasa.gov/research/ppa/2001/oceans/

Its abstract says:

Subsequently, although the report is called a “preliminary investigation”, there has been no publication to refute its findings. This is not surprising because the difficulty was – and still is – lack of computing power to provide adequate spatial resolution for prediction of the physical effects of cloud formation and, therefore, those effects need to be parametrised.

The abstract was written by strong proponents of AGW but admitted the NASA GISS GCM had “problems representing variables in geographic areas of sea ice, thick vegetation, low clouds and high relief.” These still unresolved problems are severe. For example, clouds reflect solar heat and a mere 2% increase to cloud cover would more than compensate for the maximum possible predicted warming due to a doubling of carbon dioxide in the air.

Good records of cloud cover are very short because cloud cover is measured by satellites that were not launched until the mid 1980s. But it appears that cloudiness decreased markedly between the mid 1980s and late 1990s. Over that period, the Earth’s reflectivity decreased to the extent that if there were a constant solar irradiance then the reduced cloudiness provided an extra surface warming of 5 to 10 Watts/sq metre. This is a lot of warming. It is between two and four times the entire warming estimated to have been caused by the build-up of human-caused greenhouse gases in the atmosphere since the industrial revolution. (The UN’s Intergovernmental Panel on Climate Change says that since the industrial revolution, the build-up of human-caused greenhouse gases in the atmosphere has had a warming effect of only 2.4 W/sq metre). So, the fact that the NASA GISS GCM has problems representing clouds must call into question the entire performance of the GCM.
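
The “two to four times” comparison follows directly from the numbers quoted in the paragraph above. A minimal check, using only those quoted figures (the variable and function names are illustrative, and nothing here verifies the figures themselves):

```python
GHG_FORCING = 2.4          # W/m^2, figure attributed above to the IPCC
CLOUD_RANGE = (5.0, 10.0)  # W/m^2, range quoted above for reduced cloudiness


def ratio_to_ghg(flux, ghg_forcing=GHG_FORCING):
    """Return how many times larger a flux is than the quoted GHG forcing."""
    return flux / ghg_forcing


if __name__ == "__main__":
    low, high = (ratio_to_ghg(f) for f in CLOUD_RANGE)
    print(f"{low:.2f}x to {high:.2f}x the quoted greenhouse-gas forcing")
```

Strictly, the upper end comes out slightly above four (10 / 2.4 is about 4.17), so “two to four times” is a rounded description of the quoted range.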

The abstract says;

but this adjustment is a ‘fiddle factor’ because both the radiance and the saturation must be correct if the effect of the clouds is to be correct. There is no reason to suppose the adjustment will not induce the model to diverge from reality if other changes – e.g. alterations to greenhouse gas concentration in the atmosphere – are introduced into the model. Indeed, this problem of erroneous representation of low-level clouds could be expected to induce the model to provide incorrect indication of effects of changes to atmospheric GHGs because changes to clouds have much greater effect on climate than changes to GHGs.

Richard

Excellent post, Richard. As usual.

You wrote: “For example, clouds reflect solar heat and a mere 2% increase to cloud cover would more than compensate for the maximum possible predicted warming due to a doubling of carbon dioxide in the air.”

Muller also says a two percent increase in clouds would offset all CO2 warming. I think he was figuring this based on an ECS of about 3C.

Global warming is seriously dangerous; give us money to understand it and spread the word that it’s a problem. Oh no, you all think it’s a problem now? Well, hold on a second, we’re not sure; we need more money to study it further.