Guest post by Pat Frank

My September 7 post describing the recent paper published in Frontiers in Earth Science on GCM physical error analysis attracted a lot of attention, consisting of both support and criticism.

Among other things, the paper showed that the air temperature projections of advanced GCMs are just linear extrapolations of fractional greenhouse gas (GHG) forcing.

Emulation

The paper presented a GCM emulation equation expressing this linear relationship, along with extensive demonstrations of its unvarying success.

In the paper, GCMs are treated as a black box. GHG forcing goes in, air temperature projections come out. These observables are the points at issue. What happens inside the black box is irrelevant.

In the emulation equation of the paper, GHG forcing goes in and successfully emulated GCM air temperature projections come out. Just as they do in GCMs. In every case, GCM and emulation, air temperature is a linear extrapolation of GHG forcing.

Nick Stokes’ recent post proposed that, “Given a solution f(t) of a GCM, you can actually emulate it perfectly with a huge variety of DEs [differential equations].” This, he supposed, is a criticism of the linear emulation equation in the paper.

However, in every single one of those DEs, GHG forcing would have to go in, and a linear extrapolation of fractional GHG forcing would have to come out. If the DE did not behave linearly the air temperature emulation would be unsuccessful.

It would not matter what differential loop-de-loops occurred in Nick’s DEs between the inputs and the outputs. The DE outputs must necessarily be a linear extrapolation of the inputs. Were they not, the emulations would fail.

That necessary linearity means that Nick Stokes’ entire huge variety of DEs would merely be a set of unnecessarily complex examples validating the linear emulation equation in my paper.

Nick’s DEs would just be linear emulators with extraneous differential gargoyles; inessential decorations stuck on for artistic, or in his case polemical, reasons.

Nick Stokes’ DEs are just more complicated ways of demonstrating the same insight as is in the paper: that GCM air temperature projections are merely linear extrapolations of fractional GHG forcing.

His DEs add nothing to our understanding. Nor would they disprove the power of the original linear emulation equation.

The emulator equation takes the same physical variables as GCMs, engages them in the same physically relevant way, and produces the same expectation values. Its behavior duplicates all the important observable qualities of any given GCM.

The emulation equation displays the same sensitivity to forcing inputs as the GCMs. It therefore displays the same sensitivity to the physical uncertainty associated with those very same forcings.

Emulator and GCM identity of sensitivity to inputs means that the emulator will necessarily reveal the reliability of GCM outputs, when using the emulator to propagate input uncertainty.

In short, the successful emulator can be used to predict how the GCM behaves; something directly indicated by the identity of sensitivity to inputs. They are both, emulator and GCM, linear extrapolation machines.

Again, the emulation equation outputs display the same sensitivity to forcing inputs as the GCMs. It therefore has the same sensitivity as the GCMs to the uncertainty associated with those very same forcings.

Propagation of Non-normal Systematic Error

I posted a long extract from relevant literature on the meaning and method of error propagation, here. Most of the papers are from engineering journals.

This is not unexpected given the extremely critical attention engineers must pay to accuracy. Their work products have to perform effectively under the constraints of safety and economic survival.

However, special notice is given to the paper of Vasquez and Whiting, who examine error analysis for complex non-linear models.

An extended quote is worthwhile:

“… systematic errors are associated with calibration bias in [methods] and equipment… Experimentalists have paid significant attention to the effect of random errors on uncertainty propagation in chemical and physical property estimation. However, even though the concept of systematic error is clear, there is a surprising paucity of methodologies to deal with the propagation analysis of systematic errors. The effect of the latter can be more significant than usually expected.

…

“Usually, it is assumed that the scientist has reduced the systematic error to a minimum, but there are always irreducible residual systematic errors. On the other hand, there is a psychological perception that reporting estimates of systematic errors decreases the quality and credibility of the experimental measurements, which explains why bias error estimates are hardly ever found in literature data sources.”

…

“Of particular interest are the effects of possible calibration errors in experimental measurements. The results are analyzed through the use of cumulative probability distributions (cdf) for the output variables of the model.

…

“As noted by Vasquez and Whiting (1998) in the analysis of thermodynamic data, the systematic errors detected are not constant and tend to be a function of the magnitude of the variables measured.

“When several sources of systematic errors are identified, [uncertainty due to systematic error] beta is suggested to be calculated as a mean of bias limits or additive correction factors as follows:

“beta = sqrt[sum over(theta_S_i)^2],

“where “i” defines the sources of bias errors and theta_S is the bias range within the error source i. (my bold)”

That is, in non-linear models the uncertainty due to systematic error is propagated as the root-sum-square.

This is the correct calculation of total uncertainty in a final result, and is the approach taken in my paper.
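As a rough illustration of the root-sum-square rule quoted above, here is a minimal Python sketch. The function name and the two bias ranges are hypothetical placeholders chosen only to make the arithmetic easy to follow; they are not values from Vasquez and Whiting or from my paper.

```python
import math


def combined_systematic_uncertainty(bias_ranges):
    """Combine bias ranges theta_S_i as beta = sqrt(sum_i theta_S_i**2)."""
    return math.sqrt(sum(theta ** 2 for theta in bias_ranges))


# Hypothetical bias ranges from two independent error sources (arbitrary units).
example_bias_ranges = [3.0, 4.0]

beta = combined_systematic_uncertainty(example_bias_ranges)
print(beta)  # 5.0
```

Note that the combined value (5.0) is larger than either source alone but smaller than their plain sum (7.0), which is the characteristic behavior of a root-sum-square.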

The meaning of ±4 W/m² Long Wave Cloud Forcing Error

This illustration might clarify the meaning of ±4 W/m^2 of uncertainty in annual average LWCF.

The question to be addressed is: what accuracy is necessary in simulated cloud fraction to resolve the annual impact of CO₂ forcing?

We know from Lauer and Hamilton, 2013 that the annual average ±12.1% error in CMIP5 simulated cloud fraction (CF) produces an annual average ±4 W/m^2 error in long wave cloud forcing (LWCF).

We also know that the annual average increase in CO₂ forcing is about 0.035 W/m^2.

Assuming a linear relationship between cloud fraction error and LWCF error, the GCM annual ±12.1% CF error is proportionately responsible for ±4 W/m^2 annual average LWCF error.

Then one can estimate the level of GCM resolution necessary to reveal the annual average cloud fraction response to CO₂ forcing as,

(0.035 W/m^2/±4 W/m^2)*±12.1% cloud fraction = 0.11%

That is, a GCM must be able to resolve a 0.11% change in cloud fraction to be able to detect the cloud response to the annual average 0.035 W/m^2 increase in CO₂ forcing.

A climate model must accurately simulate cloud response to 0.11% in CF to resolve the annual impact of CO₂ emissions on the climate.

The cloud feedback to a 0.035 W/m^2 annual CO₂ forcing must be known, and must be simulated to a resolution of 0.11% in CF, in order to know how clouds respond to annual CO₂ forcing.

Here’s an alternative approach. We know the total tropospheric cloud feedback effect of the global 67% in cloud cover is about -25 W/m^2.

The annual tropospheric CO₂ forcing is, again, about 0.035 W/m^2. The CF equivalent that produces this feedback energy flux is again linearly estimated as,

(0.035 W/m^2/|25 W/m^2|)*67% = 0.094%.

That is, the second result is that cloud fraction must be simulated to a resolution of 0.094%, to reveal the feedback response of clouds to the CO₂ annual 0.035 W/m^2 forcing.

Assuming the linear estimates are reasonable, both methods indicate that about 0.1% in CF model resolution is needed to accurately simulate the annual cloud feedback response of the climate to an annual 0.035 W/m^2 of CO₂ forcing.
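The two linear estimates can be checked with a few lines of Python. This is only a sketch of the proportional scaling used above; the function and variable names are mine, and the input numbers are the ones quoted in the text.

```python
def required_cf_resolution(annual_forcing, reference_flux, reference_cf):
    """Scale a reference cloud fraction (in %) by the ratio of fluxes (W/m^2)."""
    return annual_forcing / abs(reference_flux) * reference_cf


ANNUAL_CO2_FORCING = 0.035  # W/m^2, annual average increase in CO2 forcing

# Method 1: +/-12.1% CF error corresponds to +/-4 W/m^2 LWCF error.
method_1 = required_cf_resolution(ANNUAL_CO2_FORCING, 4.0, 12.1)

# Method 2: 67% global cloud cover corresponds to about -25 W/m^2 feedback.
method_2 = required_cf_resolution(ANNUAL_CO2_FORCING, -25.0, 67.0)

print(f"method 1: {method_1:.2f}% CF")   # method 1: 0.11% CF
print(f"method 2: {method_2:.3f}% CF")   # method 2: 0.094% CF
```

Both results round to about 0.1% in CF, as stated.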

This is why the uncertainty in projected air temperature is so great. The needed resolution is 100 times better than the available resolution.

To achieve the needed level of resolution, the model must accurately simulate cloud type, cloud distribution and cloud height, as well as precipitation and tropical thunderstorms, all to 0.1% accuracy. This requirement is an impossibility.

The CMIP5 GCM annual average 12.1% error in simulated CF is the resolution lower limit. This lower limit is 121 times larger than the 0.1% resolution limit needed to model the cloud feedback due to the annual 0.035 W/m^2 of CO₂ forcing.

This analysis illustrates the meaning of the ±4 W/m^2 LWCF error in the tropospheric feedback effect of cloud cover.

The calibration uncertainty in LWCF reflects the inability of climate models to simulate CF, and in so doing indicates the overall level of ignorance concerning cloud response and feedback.

The CF ignorance means that tropospheric thermal energy flux is never known to better than ±4 W/m^2, whether forcing from CO₂ emissions is present or not.

When forcing from CO₂ emissions is present, its effects cannot be detected in a simulation that cannot model cloud feedback response to better than ±4 W/m^2.

GCMs cannot simulate cloud response to 0.1% accuracy. They cannot simulate cloud response to 1% accuracy. Or to 10% accuracy.

Does cloud cover increase with CO₂ forcing? Does it decrease? Do cloud types change? Do they remain the same?

What happens to tropical thunderstorms? Do they become more intense, less intense, or what? Does precipitation increase, or decrease?

None of this can be simulated. None of it can presently be known. The effect of CO₂ emissions on the climate is invisible to current GCMs.

The answer to any and all these questions is very far below the resolution limits of every single advanced GCM in the world today.

The answers are not even empirically available because satellite observations are not better than about ±10% in CF.

Meaning

Present advanced GCMs cannot simulate how clouds will respond to CO₂ forcing. Given the tiny perturbation annual CO₂ forcing represents, it seems unlikely that GCMs will be able to simulate a cloud response in the lifetime of most people alive today.

The GCM CF error stems from deficient physical theory. It is therefore not possible for any GCM to resolve or simulate the effect of CO₂ emissions, if any, on air temperature.

Theory-error enters into every step of a simulation. Theory-error means that an equilibrated base-state climate is an erroneous representation of the correct climate energy-state.

Subsequent climate states in a step-wise simulation are further distorted by application of a deficient theory.

Simulations start out wrong, and get worse.

As a GCM steps through a climate simulation in an air temperature projection, knowledge of the global CF consequent to the increase in CO₂ diminishes to zero pretty much in the first simulation step.

GCMs cannot simulate the global cloud response to CO₂ forcing, and thus cloud feedback, at all for any step.

This remains true in every step of a simulation. And the step-wise uncertainty means that the air temperature projection uncertainty compounds, as Vasquez and Whiting note.

In a futures projection, neither the sign nor the magnitude of the true error can be known, because there are no observables. For this reason, an uncertainty is calculated instead, using model calibration error.

Total ignorance concerning the simulated air temperature is a necessary consequence of a cloud response ±120-fold below the GCM resolution limit needed to simulate the cloud response to annual CO₂ forcing.

On an annual average basis, the uncertainty in CF feedback into LWCF is ±114 times larger than the perturbation to be resolved.

The CF response is so poorly known that even the first simulation step enters terra incognita.

The uncertainty in projected air temperature increases so dramatically because the model is step-by-step walking away from an initial knowledge of air temperature at projection time t = 0, further and further into deep ignorance.

The GCM step-by-step journey into deeper ignorance provides the physical rationale for the step-by-step root-sum-square propagation of LWCF error.
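To show what step-by-step root-sum-square propagation does in general, here is a small Python sketch. The per-step uncertainty of 1.0 is a hypothetical placeholder in arbitrary units, not the value used in the paper, and the conversion of LWCF error into a temperature uncertainty is not reproduced here.

```python
import math


def propagate_rss(per_step_uncertainties):
    """Root-sum-square of all per-step uncertainties accumulated so far."""
    return math.sqrt(sum(u ** 2 for u in per_step_uncertainties))


PER_STEP_UNCERTAINTY = 1.0  # hypothetical value, arbitrary units

for n_steps in (1, 4, 25, 100):
    total = propagate_rss([PER_STEP_UNCERTAINTY] * n_steps)
    print(n_steps, total)
```

With a constant per-step uncertainty the total grows as the square root of the number of steps, so it keeps increasing over the whole projection but more slowly than a plain sum would.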

The propagation of the GCM LWCF calibration error statistic and the large resultant uncertainty in projected air temperature is a direct manifestation of this total ignorance.

Current GCM air temperature projections have no physical meaning.

Pat Frank, thank you for another good essay.

“What happens inside the black box is irrelevant.”

This doesn’t sound quite right in principle. Or perhaps more like: what’s expected to come out of the box is very relevant. Since the box is a simulation with its own sets of feedback loops and equilibria, made invisible because of the nature of the defined box, one cannot simply make statements about the kind of propagation. There’s no simulation possible (even in theory) which actually reflects the exact processing. What matters is whether the emulation can be used to approach real data, whether it behaves *close* enough, even while perpetually “wrong”.

In the end it’s not about Dr. Frank’s conclusions being wrong but more about how they’re closer to irrelevant to the models in use. The suggested uncertainty range and its relevance are uncertain as well. What matters in the end is whether the model turns out to be useful, and to use it until it doesn’t.

If someone wants to base climate policies on JUST that, well, that’s another question.

Aren’t climate models themselves a sort of “black box”, since they are abstractions from reality?

And, in the sense that the models cannot “know” what some of the real quantities are, isn’t this a sort of “it doesn’t matter what goes on in the black box” state of knowledge? Yet, we value the output as reasonable.

We value output from these black boxes (climate models), where we don’t know what is going on precisely, and yet we should question models of these black boxes (Pat’s emulation equation) that produce their (climate models’) outputs with the same inputs?

It seems like the same sound reasoning is being applied to the climate-model emulation as is being applied to the climate models being emulated.

Emulations of simulations. A black box mimicking a group of black boxes. Lions and tigers and bears, oh my! — it’s all part of Oz — We’rrrrrrrrrrrrrrrrrrrrrrrrr off to see the Wizard ….

Pat Frank,

Well done, but it highlights how many people struggle with the concept of error propagation and how many hoops they will jump through to avoid doing the proper math and producing error bars or some other means of showing what the uncertainty is within their calculations.

v/r,

Dave Riser

Sad if true, David.

I recall reading a paper some long time ago, where the author expressed amazement that a group of researchers were using a slightly exotic way to calculate uncertainties in their results, they said, because it gave smaller intervals.

So it goes.

What happens when the GCMs are tuned to match the pristine temperature station data?

And therein lies the rub. Whatever they may be, climate modelers are most certainly NOT experimentalists.

Hi Pat Frank

This may be of interest to you: an ASP colloquium discussing model tuning and parameterizations, including clouds, in the context of model uncertainty.

“This is why the uncertainty in projected air temperature is so great. The needed resolution is 100 times better than the available resolution.”

No, I do not agree… Even if resolution is 100 times better for clouds, it does not follow that they have captured all of the various processes and feedbacks that contribute to the overall climate. One has to assume that the models have captured all of the important processes, and that no undiscovered process or feedback will shift a yearly output enough to start a cascade effect. It’s called a complex chaotic system (more than one chaotic process is involved).

One CANNOT predict the behavior of a natural chaotic system within a narrow margin over enough time. One might be able to say, statistically, there is a “n”% chance of an outcome, but that “n”% will grow to a meaningless margin after enough time. 100 years is simply beyond any models – we just do not understand the climate processes well enough – clouds are just one set of processes we don’t understand well.

For example, let’s say that there is natural heating (forget CO2 as the magic molecule) and it drives a change in wind patterns over North Africa which carry greater loads of dust over the Atlantic. This then drives changes to condensation, which drives changes in temperatures for a large region, which…etc. The models simply cannot capture all this complexity. If you change the climate for a large enough portion of the Earth (say 10% to 20%) through such an unpredicted change, then the odds that it will impact other regions climate are large.

Climate models depend on averaging the measurements we think are important over a period we have made measurements in…they cannot predict the unknown, unmeasured, completely surprising changes that will occur. It is pure arrogance to believe we can “see” the effects of trace amounts of CO2 on the climate system.

Discovering that a complex computer model filled with differential formulas acts in a linear manner doesn’t surprise me one bit. I ran into this kind of behavior again and again when working on mathematical algorithms back in the 1980’s. I kept finding that all my tweaks and added complexity were completely overwhelmed by the simpler, already established equations. It is a matter of scale…if the complex formulas that make up 99% of the code produce only 1% of the output, and the other 1% of the simple code produces 99% of the output then you will get a nasty surprise when you finally analyze the result. That is what you just did for them.

Your example of increased dust from North Africa entering the Atlantic is even more complex. One result of that can be an increase in plankton by orders of magnitude from year to year. This then results in a similar increase in recruitment of various fish species that depend on the timing of plankton blooms for survival past the larval stage (primary production has ramped up big time in the northeast Atlantic this year, as will be seen in plankton surveys).

All that increase in oceanic biomass is retained energy, not to mention that water with high concentrations of plankton also has the potential to store more heat, in a similar way that silt-laden shallow water warms quicker and to a higher temperature than clear water. Yes, in the big scheme of things the above may be small potatoes (though I think the plankton alone might be a very large potato) but there are many natural variations and processes that are not even considered in climate models, never mind “parameterized” .

“but there are many natural variations and processes that are not even considered in climate models, never mind “parameterized” .”

It’s what Freeman Dyson pointed out several years ago. The climate models are far from being holistic simulation of the Earth. We know the Earth has “greened” somewhere between 10% and 15% since 1980. That has to have some impact on the thermodynamics, from evapotranspiration if nothing else. It could be a prime example of why temperatures are moderating in global warming “holes” around the globe. Yet I’ve never read where the climate models consider that at all.

It’s worse than that. Those are second-order effects which, yes, any half decent scientist would expect to be factored into any ‘grand view’ of the system produced by climate models.

But the enthalpy of evaporation of water changes by about 5% over temperature ranges experienced on the Earth. When someone postulated the question on Judith Curry’s blog a few years ago “Do climate models take this into account?”, the answer was “No.”

No scientist should take these models seriously. They are toys, not serious research tools.

But the point of Pat Frank’s hypothesis is not that the climate models are right or wrong, only that the uncertainty of projected temperatures is so large that it is not possible to know if the answers are any good or not.

“The paper presented a GCM emulation equation expressing this linear relationship, along with extensive demonstrations of its unvarying success.

In the paper, GCMs are treated as a black box. GHG forcing goes in, air temperature projections come out. These observables are the points at issue. What happens inside the black box is irrelevant.”

Who needs models when Pat Frank can emulate them (modeling models) with a simple linear equation? Who needs physics when what happens in “the black box is irrelevant”? Who needs science when we have Pat Frank?

Who needs your comment when it’s pointless?

There’s a crying need here to clarify how iterative calculations, no matter how precise numerically, may or may not produce highly erroneous, misleading results in modeling. A simple, physically meaningful example is provided by the recursive relationship

y(n) = a y(n-1) + b x(n)

where y is the output, n is the discrete step-number and x is the input. Both a and b are positive constants.

If a + b = 1, then we have an exponential filter, which mimics the response of a low-pass RC circuit. The output is stable and there is no scale distortion. The effect of error in specifying the initial, or any subsequent, input will not accumulate, but will die off exponentially. Only systematic, not random, errors persisting over long-enough intervals will show a pronounced effect in the output. But if a + b > 1, then there’s an instability, i.e. an artificial amplification of output due to ever-increasing scale distortion. This is an unequivocal system specification error that can grow uncontrollably.
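A short Python sketch of this recursion may make the behavior concrete. The coefficients, input values and the size of the input error are all hypothetical choices for illustration. It shows an error in the first input dying off as aⁿ, and the steady-state gain for a constant input, which for a < 1 works out to b/(1 − a): exactly 1 when a + b = 1, and larger than 1 when a + b > 1. Unbounded growth in this simple recursion requires a ≥ 1.

```python
def run_filter(a, b, inputs, y_start=0.0):
    """Iterate y(n) = a*y(n-1) + b*x(n) and return the list of outputs."""
    y = y_start
    outputs = []
    for x in inputs:
        y = a * y + b * x
        outputs.append(y)
    return outputs


N_STEPS = 200
clean_input = [1.0] * N_STEPS
# Same input, but with a hypothetical error of 0.5 in the very first value.
perturbed_input = [1.5] + [1.0] * (N_STEPS - 1)

# Case a + b = 1: exponential filter.
y_clean = run_filter(0.9, 0.1, clean_input)
y_perturbed = run_filter(0.9, 0.1, perturbed_input)
error = [p - c for p, c in zip(y_perturbed, y_clean)]

print(error[0], error[50], error[-1])  # the input error decays as 0.05 * 0.9**n
print(y_clean[-1])                     # approaches b / (1 - a) = 1

# Case a + b > 1 with a < 1: output is scaled up, approaching b / (1 - a) = 2.
y_scaled = run_filter(0.9, 0.2, clean_input)
print(y_scaled[-1])
```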

The critical question here should be whether the “cloud forcing error,” while undoubtedly introducing a serious error, can be treated as a system specification error, or is it just a randomly varying error in specifying the system input?

That is indeed the key question and the modelers must answer it. Right now the ball is in their court.

Climate modelers indeed have much for which to answer; see second paragraph of my response to Stokes below. But insofar as a plus/minus interval of “cloud forcing error,” upon which Frank insists, smacks of random error in system input, rather than system specification error, the ball is in his court to defend his very specific claim of error propagation.

BTW, the problem of detecting a very gradually varying signal amidst much random noise depends upon the spectral structure of both and is not simply a matter of overall S/N ratio.

“There’s a crying need here to clarify how iterative calculations, no matter how precise numerically, may or may not produce highly erroneous, misleading results in modeling”

It was done in my post on error propagation in DE’s. You have given a first order inhomogeneous recurrence relation, analogous to a DE. The standard solution I gave was

y(t) = W(t) ∫ W⁻¹(u) f(u) du, where W(t) = exp( ∫ A(v) dv ), and integrals are from 0 to t

The analogous solution for your relation is

y(n)=aⁿΣa⁻ⁱxᵢ … summing from 0 to n, and absorbing b into x (try it!).

So the issue is whether a is >1, when the sum converges unless x is growing faster, and the errors grow as aⁿ, or <1, when the final term is a⁻ⁿx and the sum approaches a finite limit. This is exactly analogous to my special case 3, and governs what happens to positive and negative eigenvalues of the A matrix. Time discretisation of that linear DE gives exactly the recurrence relation that you describe.
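For anyone who wants to "try it", the closed form can be checked numerically against the recurrence. This is a minimal Python sketch under two assumptions of mine: b is absorbed into x as stated, and the output before the first step is zero. The value of a and the input sequence are arbitrary test values.

```python
import math


def recurrence(a, x):
    """Iterate y(n) = a*y(n-1) + x(n), starting from zero output."""
    y = 0.0
    outputs = []
    for x_n in x:
        y = a * y + x_n
        outputs.append(y)
    return outputs


def closed_form(a, x, n):
    """Evaluate y(n) = a**n * sum_{i=0..n} a**(-i) * x[i]."""
    return a ** n * sum(a ** (-i) * x[i] for i in range(n + 1))


a = 0.8
x = [1.0, 2.0, 0.5, 3.0, 1.5]  # arbitrary test inputs

iterated = recurrence(a, x)
for n, y_n in enumerate(iterated):
    print(n, y_n, closed_form(a, x, n))
```

The two columns agree to rounding error at every step.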

As to the cloud uncertainty, I think it has been misread throughout, but no-one including Pat Frank has been interested in finding out what it really is. As I understand, it comes from the correlation of sat and GCM values at locations separated in time but mainly space. It is predominantly spatial uncertainty, and would largely disappear on taking a spatial average.

Nick Stokes: It was done in my post on error propagation in DE’s.

…

As to the cloud uncertainty, I think it has been misread throughout, but no-one including Pat Frank has been interested in finding out what it really is.

What do you think of my proposal of using bootstrapping to get an improved propagation of the uncertainty in the parameter?

“my proposal of using bootstrapping to get an improved propagation of the uncertainty in the parameter”

Well, my first point is that everyone seems to think they know what the parameter is, and I don’t think they do. I think it represents total correlation, or lack of it, over space and time, and predominantly space, so if you take a global average at a point in time, there is a lot of cancellation.

But I don’t think bootstrapping to isolate that parameter is a good idea, and I’m pretty sure it wouldn’t work. The reason is the diffusiveness. As I mentioned, after a few steps (or days) error propagated from one source is fairly indistinguishable from that from another. There isn’t any point in trying to untangle that. The loss of distinctness would disable bootstrapping, but is a great help in ensemble, because it reduces the space you have to span.

Nick Stokes: Well, my first point is that everyone seems to think they know what the parameter is, and I don’t think they do.

What you also wrote is that the parameter is known because it is written in the program. Every other writer has claimed that the parameter is not known.

The reason is the diffusiveness. As I mentioned, after a few steps (or days) error propagated from one source is fairly indistinguishable from that from another.

I am more and more persuaded that you do not understand Pat Frank’s algorithm. But if you are estimating the resultant uncertainty of multiple sources of uncertainty in parameters and other details such as grid size and initial value, bootstrapping can certainly handle that.

To me there is a disparity between claiming that the only way to propagate error is to run the whole program and saying that bootstrapping can not estimate the uncertainty distribution, when bootstrapping does what you recommend many, many times.

Anyway, I appreciate your answer.

As I put all your answers together, there is no way to estimate the uncertainty in the computational results even with infinite computing power.

“What you also wrote is that the parameter is known because it is written in the program”

I said that parameters that are written in the program are known. That is trivially true. The point is that once you have done that you can run perturbations, as I have shown.

But the 4 W/m2 is not written into GCMs. It is something that Pat has dug out of a paper. He rests his analysis on it, but neither he nor his fans seems to have the slightest interest in finding out what it actually means. Nor its units.

Nick Stokes, “neither he nor his fans seems to have the slightest interest in finding out what it actually means. Nor its units.”

I’ve clarified the units for you ad nauseam, Nick. It never seems to take.

As to meaning, the (+/-)4 W/m^2 is a lower limit of resolution for model simulations of tropospheric thermal energy flux. Also discussed extensively in the paper. Also something you never seem to get.

Inconvenient truths of another kind, perhaps.

I’m keenly aware that linear system behavior can be described by DEs as well as by impulse response functions, continuous or discrete. My aim here was to CLARIFY the different types of error that can arise in ITERATIVE calculations in terms far more accessible to the WUWT audience than those implicit in DE theory.

Moreover, I’ve long pointed out that the very concept of “cloud forcing” prevalent in “climate science” is physically tenuous, at best. While clouds, no doubt, modulate the SW solar forcing in producing the insolation that actually thermalizes the surface, their LWIR is merely an INTERNAL system flux, inseparably tied to the terrestrial LWIR insofar as actual heat transfer to the atmosphere is concerned. The uncertainty that arises from conflating these two distinct functions goes beyond any uncertainty of cloud fraction arising from lack of model-grid resolution.

Nick, “ … would largely disappear on taking a spatial average.”

Refuted by paper Figure 4.

“Discovering that a complex computer model filled with differential formulas acts in a linear manner doesn’t surprise me one bit. I ran into this kind of behavior again and again when working on mathematical algorithms back in the 1980’s”.

Back in my Saturn S-IVB days, I joined a project that was using a huge relaxation network to calculate thermal conductivities from ten radiometer measurements. For an offering, the high priests in white coats would grant us the k value a week later. One afternoon I sat down with pencil and paper and discovered that for almost every material, k could be calculated in minutes by averaging the front and back measurements, subtracting, and multiplying by a constant. No computer required. Oopsie.

You bring up a good point. Climate doesn’t just change drastically. You don’t see deserts go to lush forests in the wink of an eye. You don’t see marsh lands turn into farm land overnight. These are generally slow, gradual changes that are linear, not exponential.

If you have a model that can not run continuously without “going off the rails”, then you don’t have all the equations driving it correct. I don’t know how else to say it, you just don’t have it correct. Would it surprise anyone that the models are a Rube Goldberg device that end up depicting a linear output of temperature?

I have a bank account that pays 2 percent interest. They tell me that my money will double because compound interest. But the bank keeps taking these little bits of money out.

All this discussion has centered around just ONE aspect of climate-model uncertainty. Aren’t there other aspects open to an equal amount of argumentation? Put all that together with this, and the conclusion arrived at is … worse than we thought … that is, climate models are worse than useless, as far as being constructive tools for society. They are destructive tools, because of their highly inappropriate application in policy decisions.

There is a HUGE uncertainty with the models that no one has addressed yet! Even Pat Frank has only addressed the *average* temperature projection. But the average temperature is pretty much meaningless, it has no certainty associated with it at all when it comes to actual reality.

Once you take an *average* you lose data. You no longer have any data on what is happening to either the maximum temperatures or the minimum temperatures, both globally and regionally. And it is the maximum temperatures and the minimum temperatures that will determine what happens to the globe, not the average. If high temperatures are moderating and minimum temperatures are as well, the globe is headed into an era of high food productivity and decreased human mortality. It is maximum temps that have the biggest impact on food production during the main growing season yet we know almost nothing about where that is going. Based on the consecutive global record grain harvests over the past six years it is highly unlikely that maximum temps are going up, it is far more likely they are moderating.

Yet the climate alarmists, and this includes the modelers, assume that an increasing average means that maximum temperatures are going up as well. Yet the uncertainty about whether the maximum temps and the average temps track each other is very high. The average can go up because of moderating minimum temps just as easily as it can go up because of increasing maximum temps!

When was the last time you heard of large scale starvation occurring over a long time scale anywhere on the globe? I don’t know of any over the past two decades, not in Africa, Asia, South America, etc. Short term localized problems sure, but nothing widespread and lasting decades. And yet this is what the AGW modelers want us to believe their models are predicting!

Talk about uncertainty!

It’s been more than 40 years since the record high temp for any continent on earth (Antarctica) was broken. The next youngest continental record high temp is Asia’s, set in 1942.

“It’s been more than 40 years since the record high temp for any continent on earth (Antarctica) was broken.”

And yet we are to believe that the Earth is “burning up”? And we’ll all be crispy critters by 2035?

A question for Nick Stokes (or anyone else !!)

Have any of the GCMs been used to replicate the climate from, say, the year 1650 to say 1800, or have they been able to replicate the climate from the start of the Medieval Warm Period up to the end of the Little Ice Age?

Or perhaps to model the onset of the most recent ice age and its subsequent demise?

Since these time periods were before the industrial revolution and presumably before humans could have impacted the climate, there perhaps would be one less “variable” to consider; maybe this will make modeling the climate for these time periods a little bit easier.

If GCMs have not yet been back tested for time periods prior to the industrial revolution to assess their reliability, why haven’t they been?

Should not this “test” be conducted to assess the reliability of the models?

If they have, how did the results compare to the actual climate?

Many doubts about GCMs presently used would disappear if it could be shown that these models accurately (more or less) can replicate the historical climate. One would think that the AGW proponents would be working overtime to achieve good results in this endeavor.

You know, in engineering all mathematical models are compared to and/or calibrated to real world experimental and/or field test results. So even if the input parameters are not “exactly” known – say, in your example of an ICE – after enough real world testing, a model(s) can be “calibrated” and/or modified to provide results whose reliability is consistent with that required to model a physical process and perhaps to help make predictions.

And just as important, this continual comparison of real world vs model results allows the experimenter to KNOW the model’s deficiencies and its inapplicability.

Anyway, I think it would interest many folks to have answers to my very basic questions.

+1

John,

The simple answer would be that in order to compare the results of computer models to past climates, two things need to be known. First, the climate needs to be known sufficiently accurately; a few data points from Europe and China are not enough. Second, the forcings that are input into the climate models need to be known: you need estimates for solar variability, volcanic eruptions, CO2 levels etc. going back hundreds of years. Neither of these things (the forcings and the actual climate) is known to a sufficient level to allow the tests you describe to be carried out to the level that would convince a skeptic.

One way to look at it is that a climate model starting from scratch can predict the global temperature to within 0.2% (i.e. roughly 0.5 degrees K error out of about 300K). Would the ability to predict temperature to within 0.2% convince you that global climate models are accurate? If not, how accurately do you think global climate models must be able to predict the temperature before you would accept that they are accurate?

“One way to look at it is that a climate model starting from scratch can predict the global temperature to within 0.2%”

Really? Then why is there such a large variation in output among the different models? And why does their output differ from the satellite record by more than this?

And why did none of the models predict the global warming pause?

It’s not obvious that they can predict anything to within 0.2%!

Nick Stokes says the initial conditions are irrelevant to the models, that the forcing of initial conditions in the models quickly disappears after several steps. So why would actual conditions in 1650 make much difference to the models? Just pick some numbers and go for it!

Tim,

the average temperature of the earth is about 288 degrees Kelvin. Global climate models can predict an average temperature of about 288 degrees to within 0.5 degrees. That corresponds to an error in temperature of 0.5/288 or about 0.2%.

“Tim,

the average temperature of the earth is about 288 degrees Kelvin. Global climate models can predict an average temperature of about 288 degrees to within 0.5 degrees. That corresponds to an error in temperature of 0.5/288 or about 0.2%.”

You didn’t answer a single question I asked. Why is that?

And you’ve apparently missed the whole conversation about uncertainty associated with the models!

Izaak;

Thanks for your response.

Just wondering: do GCMs account for solar variability?

Apparently this variable is important, so I assume the GCMs do in fact account for this.

Am I mistaken?

Also, there is ample documentation of volcanic activity since at least 300 to 500 years ago; certainly since 1900 or so.

Why then can’t GCM modelers input this information into their models; say since the year 1900?

Also, do current GCMs account for volcanic activity over, say, the last 50 to 100 years or so?

Michael Mann produced a temperature record going back to the year 1000; that’s 1000 years of data. If he was able to reproduce the temperature record over this time span, why can’t GCM modelers use his data – or other data – as input to model, say, the last 1000 years or 500 years or 300 years or even since 1900 or so?

It would seem fairly simple to use a GCM to model the climate since the year 1900 given that Mann was able to replicate more than 1000 years of temperature data.

It seems to me that “turning on” a GCM in the year 1900 (this is only 120 years ago, very recent) should produce results that model the actual, documented climate fairly accurately. This is much easier than trying to reproduce Mann’s 1000 year record of temperature.

Has this been done?

If not, why not?

I assume that if this “experiment” were conducted, the results would fit very closely the actual climate since the year 1900.

Don’t you agree?

Also, if executed it will immediately silence all the “deniers,” since “back predicting” should be much easier than “forward predicting.”

Thanks again for your initial response and I look forward to the response to my new questions.

Hi John,

The short answer is that it has been done, and by using revised estimates of forcings climate models can explain 98% of the variability in the climate since 1850. Have a look at “A Limited Role for Unforced Internal Variability in Twentieth-Century Warming” by Karsten Haustein et al. at

https://journals.ametsoc.org/doi/10.1175/JCLI-D-18-0555.1

But note that in order to get this level of agreement they used new estimates for the sea temperatures based on corrections to measurements that almost everyone here would disagree with. So, as I said originally, there are plausible estimates for both forcings and temperatures that allow GCMs to explain 98% of the temperature variations since 1850.

Hence my question would be: having read the paper above, are you convinced, and do you think that paper would silence even one denier?

“It is difficult to get a man to understand something, when his salary depends on his not understanding it.”

somehow this seems relevant here…

Leaving aside the errors that Nick and Dr. Spencer have pointed out, there is at least one more major error in Dr. Frank’s paper as far as I can see. He proposes the following emulation equation for the global temperature (Eq. 1 in his paper):

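$$\Delta T_t(\mathrm{K}) = f_{\mathrm{CO_2}} \times 33\,\mathrm{K} \times \left[\frac{F_0 + \sum_i \Delta F_i}{F_0}\right] + a$$

which can be trivially rewritten as:

$$\Delta T_t(\mathrm{K}) = f_{\mathrm{CO_2}} \times 33\,\mathrm{K} \times \left[1 + \frac{\sum_i \Delta F_i}{F_0}\right] + a$$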

Now, none of the terms in this equation have any direct connection with reality. Rather, they are an attempt to fit the outputs of global climate models. As Dr. Frank says, “This emulation equation is not a model of the physical climate”. So there is a very natural question as to which of these parameters best describes the effects of long-wave cloud forcing. Dr. Frank claims with no evidence that the long wave cloud forcing is represented by the ΔFi term, which would appear to be incorrect since he states “ΔFi is the incremental change in greenhouse gas forcing of the ith projection time-step”. Hence that term only represents the change in greenhouse gas concentrations. The other two candidate terms would appear to be F0 and a.

Now “a” represents a constant offset temperature in Eq. 1, and by simple error analysis an error in “a” would give a constant offset temperature independent of time. The errors would not grow in the fashion that Dr. Frank describes. The other alternative would be that the 4 W/m^2 represents an error in the estimate of F0, which does at least have the right units. But then Eq. 5.1 should read:

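$$\Delta T_i(\mathrm{K}) \pm u_i = 0.42 \times 33\,\mathrm{K} \times \left[1 + \frac{\Delta F_i}{F_0 \pm 4\,\mathrm{W\,m^{-2}}}\right]$$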

which gives for Eq. 5.2

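$$\Delta T_i(\mathrm{K}) \pm u_i = 0.42 \times 33\,\mathrm{K} \times \left[1 + \frac{\Delta F_i}{F_0}\right] \pm \left[0.42 \times 33\,\mathrm{K} \times \frac{4\,\mathrm{W\,m^{-2}}}{F_0 \times F_0}\right]$$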

which is smaller than Dr. Frank’s estimate by a factor of F0, which is about 30. The additional factor of F0 comes from Eq. 3 when you take the derivative of 1/F0 to get 1/F0^2.

We now have an error term that is 30 times smaller than Dr. Frank’s estimate using his same methodology, and if we then propagate that for 100 years as he did, the net result of the error in forcing is about 0.5 degrees, which is significantly smaller than the temperature change due to CO2 levels. Thus Dr. Frank is wrong about GCMs being unable to predict global temperatures because the error terms are too large.

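$$\Delta T_t(\mathrm{K}) = f_{\mathrm{CO_2}} \times 33\,\mathrm{K} \times \left[\frac{F_0 + \sum_i \Delta F_i}{F_0}\right] + a$$

which can be trivially rewritten as:

$$\Delta T_t(\mathrm{K}) = f_{\mathrm{CO_2}} \times 33\,\mathrm{K} \times \left[1 + \frac{\sum_i \Delta F_i}{F_0}\right] + a$$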

Try your math again.

(∑iΔFi)/F0) * (1/F0) is not (∑iΔFi)/F0)

Dr. Frank also states “In equations 5, F0 + ΔFi represents the tropospheric GHG thermal forcing at simulation step “i.” The thermal impact of F0 + ΔFi is conditioned by the uncertainty in atmospheric thermal energy flux. ”

F0 is not the uncertain flux value, F0 + ΔFi is. So your factor F0±4Wm2 is incorrect.

Hi Tim,

The point is that the uncertainty in thermal energy flux corresponds to an error in F0, not in Delta Fi, which is the additional forcing due to rising CO2 simulations. The change in forcing due to CO2 levels is well known, but there is still an error in the other terms, which must correspond to errors in F0.

Izaak, “The point is that the uncertainty in thermal energy flux corresponds to an error in F0…”

No, it does not. F_0 is the constant unperturbed GHG forcing, calculated from Myhre’s equations. Totally muddled thinking, Izaak.

Dr. Frank,

You explicitly claim that your equation one is not a model of the physical world; rather it is an emulation model of the global climate models. Hence you cannot calculate the value of F0 using values from a physical theory; rather it must simply represent a best fit to the outputs of GCMs.

Suppose that a GCM is run with no increase in CO2 levels. Would you claim that in that case it would have no error? Or would there still be an error due to the long wave cloud forcing uncertainty? In which case which term in your Eq. 1 with Delta F=0 represents that error?

Izaak, F_0 is projection year t = 0 forcing. I explain that in the paper, and how it’s calculated.

You wrote, “Suppose that a GCM is run with no increase in CO2 levels. Would you claim that in that case it would have no error?”

My paper is about uncertainty, not error. How many times must this be repeated?

The uncertainty comes from deficient theory within the model. Do you suppose the theory remains deficient inside the model, whether CO2 is increased or not?

“Or would there still be an error due to the long wave cloud forcing uncertainty?”

If a GCM is simulating the climate with (delta)F_i = 0, does the deficient theory within the model still cause it to simulate global cloud fraction incorrectly?

If the answer is yes (and it is), then why should there not be an uncertainty in simulated LWCF?

“ In which case which term in your Eq. 1 with Delta F=0 represents that error?”

Look at the right side of eqn. 5.2, Izaak. That answers your question.

In eqn. 1, when (delta)F_i = 0, and a = 0, then the eqn. calculates the unperturbed greenhouse temperature, with f_CO2 varying with the given climate model.

When f_CO2 = 0.42, the Manabe and Wetherald fraction (see SI section 2), the unperturbed temperature is 13.9 C.

You clearly need to do some careful reading.
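For readers following the arithmetic, here is a minimal sketch of eqn. 1 as it is quoted in this thread, evaluated with zero added forcing and a = 0. The function name `emulate_delta_t` and the value `F0 = 30.0` are assumptions for illustration only (the thread says F0 is "about 30"); with zero added forcing the result does not depend on F0 at all.

```python
def emulate_delta_t(f_co2, f0, delta_f, a=0.0):
    """Eqn. 1 as quoted in the thread: f_CO2 x 33 K x [(F0 + sum(dF_i)) / F0] + a."""
    return f_co2 * 33.0 * (f0 + sum(delta_f)) / f0 + a

F0 = 30.0  # W/m^2, hypothetical placeholder ("about 30" per the thread)

# Zero incremental forcing at every step and a = 0: the bracket reduces to 1.
unperturbed = emulate_delta_t(0.42, F0, [0.0] * 10)
print(round(unperturbed, 2))  # 13.86
```

The 13.86 is just 0.42 × 33, which rounds to the 13.9 quoted above.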

Izaak,

The term F0 + ΔFi represents the input to the next iteration of the model. The entire term is what is uncertain, not just F0.

Error is the difference between a measurement and the true value. That is not uncertainty. Uncertainty envelopes the range of possible errors but does not specify the actual error itself.

It’s why uncertainty doesn’t cancel out like random errors do according to the central limit theorem – uncertainty is not a random variable.

Tim,

There is no term “F0 + Delta Fi” in Dr. Frank’s equation. The full expression is

(F0+Delta Fi)/F0

which can be rewritten as

1+ Delta Fi/F0

where Delta Fi and only Delta Fi is the input to the next iteration of the model. F0 is a constant that represents some element of the physics, while Delta Fi is the additional forcing due to rising CO2 levels.
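Written out step by step with the symbols used in this exchange, the rewrite being argued over is the following identity (a worked restatement, not something taken from the paper):

```latex
\frac{F_0 + \Delta F_i}{F_0}
  = \frac{F_0}{F_0} + \frac{\Delta F_i}{F_0}
  = 1 + \frac{\Delta F_i}{F_0}
```

No factor of F_0 is gained or lost in this step. The identity by itself says nothing about where the uncertainty enters the equation, which is the separate question being disputed.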

“The full expression is

(F0+Delta Fi)/F0

which can be rewritten as

1+ Delta Fi/F0”

Really nice math skills, eh?

[(F0+Delta F)/F0]*(F0/F0)

becomes

(F0)[1 + (Delta F/F0)]

which becomes the value for the next iteration.

Why did you drop the F0 multiplicative term?

Rather than shooting from the hip on this why don’t you read Frank’s writings?

Just because F_0 can be factored out doesn’t change anything, Izaak.

The uncertainty term on the right side of eqn. 5.2 remains the same.

Just curious, Izaak — doesn’t your “(F0+Delta Fi)/F0” contain the term F0+Delta Fi, which is the term you wrote is not present?

Dr. Frank,

As you surely know

(F0+ Delta Fi)/F0 = 1+Delta Fi/F0

This means that errors in F0 have a proportionally smaller effect than an error in Delta Fi. The error term for F0 is proportional to the derivative of the emulation equation with respect to F0, so it is Delta F/F0^2, where Delta F is the uncertainty in F0, which must include the uncertainty in the long wave cloud forcing.

“This means that errors in F0 have a proportionally smaller effect than an error in Delta Fi.”

This isn’t about errors! How many times must that be repeated? It is about uncertainty!

Izaak, F_0 is a given. It has no error.

The (delta)F_i is a given. It has no error.

The (+/-) comes from calibration uncertainty due to model simulated cloud fraction error.

You are getting everything wrong.

The reason you are getting everything wrong is because you are imposing your mistaken understanding on what I have written.

Izaak Walton, “Leaving aside the errors that Nick and Dr. Spencer have pointed out…”

Another guy who thinks a calibration error statistic is an energy flux. There’s a good critical start.

IW, “Dr. Frank claims with no evidence that the long wave cloud forcing is represented by the ΔFi term …”

Not correct. Nowhere do I claim the ΔFi is long wave cloud forcing.

IW “The errors [in term a]would not grow in the fashion that Dr. Frank describes.”

I describe no such error.

IW “The other alternative would be that the 4 W/m^2 represents an error in the estimate of F0.”

How about the alternative it actually means, Izaak, which is the CMIP5 calibration error statistic in long wave cloud forcing.

And it’s ±4 W/m^2, not +4 W/m^2. None of my critics seem able to get the difference straight. The difference between ± and + is deep science I know, but still…

I was going to go through the rest of your analysis, Izaak, but it is so muddled there’s no point.

Dr. Frank,

Again, the point is that none of the terms in your emulation equation correspond to physical effects, which is what you explicitly state; rather they are fitting parameters to the outputs of global climate models. As such there is no one-to-one correspondence between the parameters in a GCM and your emulation equation. So why would the CMIP5 calibration error statistic directly enter your equation exactly as does a change in forcing due to rising CO2? Rather it should enter as an error in the estimate of F0, which includes all of the forcings due to the current levels of greenhouse gases.

“Rather it should enter as an error in the estimate of F0 which includes all of the forcings due to the current levels of greenhouse gases.”

To repeat (seemingly endlessly) error and uncertainty are not the same thing. You require a specific number for the size of the error. Uncertainty cannot give you that specific number, it can only tell you the range that the error can occur in. As you perform successive iterations that range of possible error gets bigger. Which is exactly what Dr Frank’s analysis shows.

Tim,

the issue is which number in Dr. Frank’s equation is uncertain. Suppose all of the error lies in the offset value “a”. This would not propagate through in the manner Frank suggests but would rather just give a constant error. Errors in Delta F have a bigger effect.

“the issue is which number in Dr. Frank’s equation is uncertain.”

Huh? Dr. Frank explains this very well, there is no uncertainty in his presentation.

“F0 is the total forcing from greenhouse gases in Wm–2 at projection time t = 0, and ΔFi is the incremental change in greenhouse gas forcing of the ith projection time-step, i.e., as i-1→i.”

“The finding that GCMs project air temperatures as just linear extrapolations of greenhouse gas emissions permits a linear propagation of error through the projection. In linear propagation of error, the uncertainty in a calculated final state is the root-sum-square of the error-derived uncertainties in the calculated intermediate states (see Section 2.4 below) (Taylor and Kuyatt, 1994).”

“The thermal impact of F0 + ΔFi is conditioned by the uncertainty in atmospheric thermal energy flux. ”
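The root-sum-square rule in the passage quoted above can be sketched numerically. This is only an illustration under stated assumptions: the per-step term 0.42 × 33 K × 4 W/m^2 / F0 is the one described in this thread as Frank's, `F0 = 30.0` is the rough "about 30" figure from the comments rather than the paper's value, and the helper names are made up for the example.

```python
import math

def per_step_uncertainty(f_co2, f0, u_flux):
    """Per-step temperature uncertainty (K): f_CO2 x 33 K x u_flux / F0."""
    return f_co2 * 33.0 * u_flux / f0

def root_sum_square(values):
    """Combine per-step uncertainties in quadrature."""
    return math.sqrt(sum(v * v for v in values))

F0 = 30.0  # W/m^2, hypothetical placeholder ("about 30" per the thread)

u_step = per_step_uncertainty(0.42, F0, 4.0)   # one projection step
u_100 = root_sum_square([u_step] * 100)        # 100 equal steps

print(f"{u_step:.3f}")  # 1.848
print(f"{u_100:.2f}")   # 18.48
```

With equal steps the quadrature sum is simply the per-step value times the square root of the number of steps, so 100 steps multiply it by 10. Dividing the per-step term by F0 once more, as in Izaak's alternative, shrinks both numbers by the same factor of about 30.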

——————————

“Suppose all of the error lies in the offset value “a”.”

The value of “a” is calculated from the curves of the various GCM’s. See Table S4-3 in Frank’s supplementary documentation. If “a” has an error it is caused by errors in the curves from the GCM’s! Meaning the GCM’s are the source of uncertainty!

So Tim,

A question for you: why is it possible to know F0 with absolute precision and ΔFi only to within an accuracy of +/- 4 W/m^2? Surely if F0 is the “total forcing from greenhouse gases” then that term contains the uncertainty of +/- 4 W/m^2? In contrast, ΔFi is the input to the model and is used to make predictions. Hence in principle it is known exactly. All of the error must be in F0.

Another reason why the error must be in F0 is consider what would happen if you ran a climate model with zero additional forcing (i.e. constant CO2 levels).

In that case ΔFi =0 exactly but there would still be an uncertainty in the long wave cloud feedback. The only way that uncertainty could enter Dr. Frank’s model is through the parameter F0.

The uncertainty range, however large it is, does not say that the answer is wrong. It could be exactly correct to the precision calculated but there is no way to know that it is except to wait out the time and find out what happens. The calculated answer could be very correct or wildly wrong. With such large uncertainty there is no way to even make a reasonable guess which.

Izaak Walton, “A question for you: why is it possible to know F0 with absolute precision and ΔFi only to within an accuracy of +/- 4 W/m^2?”

The ΔFi are the SRES and RCP forcings from the IPCC, Izaak. They are givens, and have no uncertainty. That point is clearly made in the paper.

The (+/-)4 W/m^2 uncertainty in simulated tropospheric thermal energy flux comes from the models. Let me repeat that: it comes from the models.

It means the models cannot simulate the tropospheric thermal flux to better than (+/-)4 W/m^2 resolution, all the while they are trying to resolve a 0.035 W/m^2 perturbation.

The perturbation is (+/-)114 times smaller than they are able to resolve. The models are a jeweler’s loupe, and people claim to see atoms using it.

Izaak Walton, “So why would the CMIP5 calibration error statistic directly enter your equation exactly as does a change in forcing due to rising CO2?”

Because it is an uncertainty in simulated tropospheric thermal energy flux, Izaak. This point is discussed in the paper.

GHG forcing enters the tropospheric thermal energy flux, and becomes part of it. However, the resolution of the model simulation for this flux is not better than (+/-)4 W/m^2.

This means the uncertainty in simulated forcing is (+/-)114 times larger than the perturbation in the forcing.

This is why the (+/-)4 W/m^2 enters into eqn. 1 at eqn. 5. It includes the impact of simulation uncertainty on the predicted effect. It means the effects due to GHG forcing cannot be simulated to better than (+/-)4 W/m^2.

It is impossible to resolve the effect of a perturbation that is 114 times smaller than the lower limit of resolution.
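The factor of 114 used in these two comments is the ratio of the two figures quoted there. A quick check, using only those numbers (the variable names are illustrative):

```python
resolution = 4.0       # W/m^2, the (+/-)4 W/m^2 calibration statistic quoted above
perturbation = 0.035   # W/m^2, the perturbation size quoted above

ratio = resolution / perturbation
print(f"{ratio:.0f}")  # 114
```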

Dr. Frank,

You stated in the comment above that the Delta Fi are givens and have no uncertainties. In that case the errors in the models must enter your emulation equation through the parameter F0. Is this not correct?

No, that is not correct, Izaak. The F_0 is also a given. It has no error.

From page 8 in the paper: “Lauer and Hamilton (2013) have quantified CMIP3 and CMIP5 TCF model calibration error in terms of cloud forcings.”

That is from where the (+/-) uncertainty comes.

It is not in F_i, it is not in F_0. It is in the simulation.

The GCM’s are a hoax.

Dr. Frank correctly demonstrates that the aggregation of error vastly exceeds the relatively minor temperature change predictions in GCMs, but this alone is not damning enough for diehard adherents.

Others cite the well-known inaccuracy of the predictions, which also ought to be proof of invalidity. However, it is also true (though rarely stated) that GCM inaccuracies are one-sided: they all overestimate predicted temperatures. They all shoot high. There are no undershooting GCM’s. They miss, but always in one direction.

The black boxes are rigged, obviously. All the palaver about physical laws and balances is snake oil. If the GCMs were fair dice, some would guess too low and some too high. But they don’t. The dice are loaded.

Defenders of GCMs are dishonest. This is a logical conclusion. Defenders may be scientifically literate in the particulars, but to ignore the overwhelming one-sided bias in the outputs is not scientific. It is intentional blindness. Intentional, as in they ignore the obvious on purpose — which is unforgivable mendacity in support of a hoax.

I tend to agree that it’s “unforgivable mendacity”, but I’m prepared to allow for simple self-delusion, combined with a certain “I’m never wrong” arrogance (CC advocacy does seem to attract a certain character type!)

Or as Nick might say, with plaintive desperation: “Maybe the Earth’s climate is running cool.”

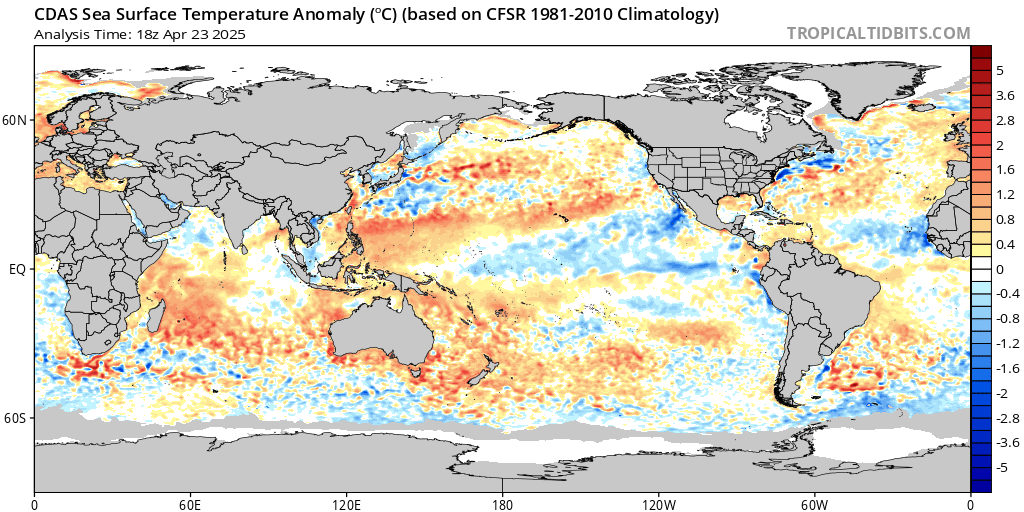

Many variables decide about the temperature. It is important to focus on the two most important:

1. The course of the northern jetstream.

2. ENSO cycle.

ren – please consider putting together a guest post for WUWT setting out your knowledge and views from start to finish.

Your in-line comments and links suggest you have an informed handle on the here and now workings of the global weather system, but, appearing as they do as random arrivals, it is hard to piece together what your “future climate big picture” is.

Thanks, and apologies if you have done this already but I’ve missed it. If so, please post a link.

I invite you to my website.

https://www.facebook.com/pages/category/Blogger/Sunclimate-719393721599910/

Thanks – I’m not on Facebook but it looks as if I can see your posts. If you have one which summarises your views, please flag it up. Also I wonder if you are an active researcher or worker in this field?

Miskolczi showed how to model radiative flux in the atmosphere.

His modelling was closely constrained by experimental measurements, from radiosonde balloons.

Not the theory-only models refuted here by Pat Frank.

What has happened to radiosonde balloons? Do they exist anymore? Does anyone use them anymore?

Or is everyone terrified that if they use radiosonde data they will make themselves look like Ferenc Miskolczi?

To quote the great man:

It seems that the Earth’s atmosphere maintains the balance between the absorbed short wave and emitted long wave radiation by keeping the total flux optical depth close to the theoretical equilibrium values.

…

On local scale the regulatory role of the water vapor is apparent. On global scale, however, there can not be any direct water vapor feedback mechanism, working against the total energy balance requirement of the system. Runaway greenhouse theories contradict to the energy balance equations and therefore, can not work.

…

An other important consequence of the new equations is the significantly reduced greenhouse effect sensitivity to optical depth perturbations. Considering the magnitude of the observed global average surface temperature rise and the consequences of the new greenhouse equations, the increased atmospheric greenhouse gas concentrations must not be the reason of global warming. The greenhouse effect is tied to the energy conservation principle through the [not copy-able] equations and can not be changed without increasing the energy input to the system.

To stop Miskolczi publishing his findings as a NASA scientist (as he then was) a colleague logged into Miskolczi’s account without his knowledge, went to the journal site (archiv) and withdrew the submitted paper. That’s the kind of people climate scientists are. He was immediately dismissed from NASA, and his work was so violently marginalised by Mafia-like activity by NASA and the warmist crowd that he published it in a journal of his native country Hungary:

file:///C:/Users/PhilS/Documents/HOME/climate/miskolczi%20in%20hungarian%20journal.pdf

https://www.friendsofscience.org/assets/documents/The_Saturated_Greenhouse_Effect.htm

https://friendsofscience.org/assets/documents/E&E_21_4_2010_08-miskolczi.pdf

Miskolczi’s is the only serious atmospheric modeling of radiative flux that exists to date.

The BIGGEST problem with all climate models is that the caveats are NOT PRINTED LARGE ENOUGH for the politicians (including all the numbskulls in the UN) to notice or understand adequately.

The current batch of climate models are only a first approximation of how the climate evolves; they are not particularly accurate or complete in assessing this planet’s many climatic factors, or particularly trustworthy in their output. They are a research tool, nothing more.

Climate Models are WORK IN PROGRESS, and may ultimately be proved to be entirely erroneous, or of very low utility.

IMO every other page of the UN-IPCC reports should have the words —

“CLIMATE MODELS RESULTS ARE ONLY APPROXIMATE!”

Pat Frank

“Izaak Walton, “Leaving aside the errors that Nick and Dr. Spencer have pointed out…”

Another guy who thinks a calibration error statistic is an energy flux. There’s a good critical start.”

A key to understanding (and hence being able to intelligently critique) Pat Frank’s analysis starts with the above comment: “error statistic” versus “energy flux”. The former represents uncertainty; the latter implies error. Understanding these two terms in the context of PF’s analysis is paramount. Failure to agree on a common understanding of these terms results in discussion and critique that have no solid base. It’s rather like some of the discussions involving radiation energy transfer…

There are some posters on WUWT that are truly gifted in presenting complex concepts in ways that many different people can digest, I ask them to continue to present explanations, examples, and discussion of this core subject. This is where real progress in meaningful discussion of AGW can be made.

It’s worse than that, Ethan. Those folks think the calibration error statistic itself represents an energy flux.

Not the error in an energy flux, but an energy flux itself. As in something that perturbs the modeled climate.

They have less than no clue.

Pat, thanks. Hence my comments to work on the root problem many are having with the definition or concept of outcome uncertainty vs. data error, or as you adroitly state, treating outcome uncertainty as an actual data variation within the process creating the outcome. Agreed that this is a glaring problem in understanding your analysis. Unless progress is made in a common understanding of these terms, no progress can be made in evaluating the actual merits of your analysis.

To get some clarity, couldn’t you try to prove the uncertainty and just run the model a thousand times with random cloud fraction averages for each year within a plausible parameter and see how much variation you get in the total warming? Or to put it another way, can anyone clearly explain why this debate is so redundantly stuck in the mud?

Dana, I don’t know, but I can guess. The error in predicting (estimating) low-level cloud cover and high-level cloud cover is the stake in the heart of the man-made dangerous climate change vampire. It is the one thing that completely destroys the IPCC’s estimates of human influence on climate change and they can do nothing about it. Cloud cover error swamps the measurement of human influence and cloud cover defies all attempts to estimate it; it happens on too small a spatial scale. No papers have been attacked more ruthlessly (and with so little justification) than Richard Lindzen’s (Lindzen and Choi, 2011) on this very subject, and I predict the same for Pat Frank’s, probably with less justification. Nick Stokes’ and Roy Spencer’s criticisms are hairsplitting and make no difference in my opinion, for what that is worth.

Ever try to get an elephant out of the mud it was stuck in? (^_^)

Something that big and set is really hard to move. The “stuckness” is NOT because of those trying to free the poor beast, but because of the sheer weight of the beast who cannot free itself because of its own weight. And, in case you can’t see the metaphor, the massive weight here is on the side of the critics of Pat’s analysis.

Congratulations on not being an elephant.

That would not give you uncertainty error. Each run would have the same uncertainty. That is the whole point. The output is uncertain regardless of what you do with the run. Think of it this way, how certain are you that the program gives you the correct value when you run it. (This doesn’t include what you think is correct output.) In other words, how reliable do you think the output is when considering all the inputs and relationships in the program?

dana_casun: To get some clarity, couldn’t you try to prove the uncertainty and just run the model a thousand times with random cloud fraction averages for each year within a plausible parameter and see how much variation you get in the total warming?

That is part of the idea of bootstrapping (though sampling would not be done exactly as you describe). The problem is that the GCMs take a long time to run on current computing equipment. No one, apparently, wants to contemplate actually waiting for the results of a thousand runs.
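A toy version of the thousand-run experiment can at least be sketched against the linear emulation equation quoted in this thread, which runs instantly, rather than against an actual GCM. Everything here is an assumption for illustration: `F0 = 30.0` is the rough "about 30" figure from the comments, the 0.035 W/m^2 yearly increment and 4 W/m^2 spread are the numbers quoted above, and each year's offset is drawn independently from a normal distribution. Treating the ±4 W/m^2 as a random yearly perturbation is exactly the reading Pat Frank rejects, so this illustrates the proposal, not the paper's argument, and it is plain Monte Carlo rather than bootstrapping.

```python
import random
import statistics

def toy_run(rng, n_steps, f_co2=0.42, f0=30.0, d_f=0.035, sd_flux=4.0):
    """One toy projection: every step's forcing increment gets an independent random offset."""
    total = 0.0
    for _ in range(n_steps):
        total += d_f + rng.gauss(0.0, sd_flux)
    # Linear emulation equation from the thread, with a = 0.
    return f_co2 * 33.0 * (f0 + total) / f0

rng = random.Random(0)  # fixed seed so the sketch is repeatable
finals = [toy_run(rng, 100) for _ in range(1000)]

spread = statistics.stdev(finals)
print(f"spread of final values over 1000 toy runs: {spread:.1f} K")
```

Under these assumptions the spread should land near 0.42 × 33 × 4 × √100 / 30 ≈ 18.5 K, the same size as a root-sum-square of the per-step term. That agreement only holds because the toy makes the offsets random and independent, which is the point in dispute.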

I provide the following in hopes it will help to foster common ground with the use of terminology.

Term definitions, NO context (ie common usage, google search result):

Uncertainty: “the state of being uncertain”

Uncertain: “not able to be relied on; not known or definite”

Error: “a mistake”

Term discussions, from The NIST Reference on Constants, Units, and Uncertainty (https://physics.nist.gov/cuu/Uncertainty/glossary.html), see

“NIST Technical Note 1297, 1994 Edition: Guidelines for Evaluating and Expressing the Uncertainty of NIST Measurement Results”

https://emtoolbox.nist.gov/Publications/NISTTechnicalNote1297s.pdf

Uncertainty: “parameter, associated with the result of a measurement, that characterizes the dispersion of the values that could reasonably be attributed to the measurand”

The following quotes are from NIST Technical Note 1297 (link above).

“NOTE – The difference between error and uncertainty should always be borne in mind. For example, the result of a measurement after correction (see subsection 5.2) can unknowably be very close to the unknown value of the measurand, and thus have negligible error, even though it may have a large uncertainty (see the Guide [2]).” (Page 7)

“NOTES

1 The uncertainty of a correction applied to a measurement result to compensate for a systematic effect is not the systematic error in the measurement result due to the effect. Rather, it is a measure of the uncertainty of the result due to incomplete knowledge of the required value of the correction. The terms “error” and “uncertainty” should not be confused (see also the note of subsection 2.3).” (Page 9)

“2 In general, the error of measurement is unknown because the value of the measurand is unknown. However, the uncertainty of the result of a measurement may be evaluated.” (Page 20)

“3 As also pointed out in the Guide, if a device (taken to include measurement standards, reference materials, etc.) is tested through a comparison with a known reference standard and the uncertainties associated with the standard and the comparison procedure can be assumed to be negligible relative to the required uncertainty of the test, the comparison may be viewed as determining the error of the device.” (Page 20)

“In fact, we recommend that the terms “random uncertainty” and “systematic uncertainty” be avoided because the adjectives “random” and “systematic,” while appropriate modifiers for the word “error,” are not appropriate modifiers for the word “uncertainty” (one can hardly imagine an uncertainty component that varies randomly or that is systematic).” (Page 21)

Thanks Ethan, very helpful. Especially this:

Andy May and Ethan Brand: In fact, we recommend that the terms “random uncertainty” and “systematic uncertainty” be avoided because the adjectives “random” and “systematic,” while appropriate modifiers for the word “error,” are not appropriate modifiers for the word “uncertainty”

I was going to elaborate a little on this: since its inception, mathematical probability has been used as a model for two sorts of things: (a) confidence (or uncertainty) in a proposition or outcome (called “epistemic” or “personal” probability) and (b) relative frequency of event outcomes subject to random variability (called “aleatory” probability). Not everyone agrees that the two uses have been equally successful in applications. While it is obvious that random variability in the phenomena (hence data collected from studies) produces uncertainty in any parameter estimates (or other summaries of what might be called “noumena” or “empirical knowledge”), it is not obvious how to translate the random variation in the data to uncertainty in the outcome of research.

One way, illustrated by Pat Frank, is to take the estimate of standard deviation of the random variation in the parameter estimate as a representation of the uncertainty in the point estimate, treat that uncertainty via a distribution of possible outcomes that have the same standard deviation, and propagate that standard deviation through subsequent calculations, as the standard deviation itself has been derived by propagating the standard deviation of the random part of the observations through the calculations that produce the parameter estimate. That provides an estimate of the random variation in the forecast errors induced by the random variation in the observations.
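To make the idea of propagating a standard deviation concrete, here is a minimal sketch in Python. It is not the calculation in the paper; it assumes a hypothetical one-parameter linear relation `y = sensitivity * param`, with made-up numbers, and compares the first-order propagated standard deviation with the spread obtained by drawing the parameter from a normal distribution having the same standard deviation.

```python
import math
import random

def propagated_sd(sensitivity, sd_param):
    """First-order propagation for y = sensitivity * param."""
    return abs(sensitivity) * sd_param

def monte_carlo_sd(sensitivity, mean_param, sd_param, n_draws=100_000, seed=0):
    """Spread of y when param is drawn from a normal distribution (an assumption)."""
    rng = random.Random(seed)
    ys = [sensitivity * rng.gauss(mean_param, sd_param) for _ in range(n_draws)]
    mean_y = sum(ys) / n_draws
    return math.sqrt(sum((y - mean_y) ** 2 for y in ys) / (n_draws - 1))

# Hypothetical numbers, chosen only for illustration.
cumulative_input = 3.0           # what the parameter multiplies downstream
param_mean, param_sd = 0.5, 0.2  # point estimate and its standard deviation

analytic = propagated_sd(cumulative_input, param_sd)
simulated = monte_carlo_sd(cumulative_input, param_mean, param_sd)
print(f"analytic sd = {analytic:.3f}, Monte Carlo sd = {simulated:.3f}")
```

For a linear relation the two numbers should agree closely; for a nonlinear calculation the first-order formula would only be an approximation.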

Note how loaded some of the terms are: “random” variation is variation that is not reproducible or predictable, but you can get sidetracked into the question of whether it is in some sense “truly random” (which Einstein objected to by mocking the idea that God throws dice), or “merely empirically random” because there are gaps in the knowledge of the causal mechanism producing the outcome. Operationally they can’t be distinguished because the empirical random variation (the most thoroughly replicated research outcome in all of science) makes measurement of any hypothetical true random variation subject to great uncertainty.

“Estimate”, “estimation” and “approximation” dominate the discussion. Only an estimate of the “true” value of the parameter is available from data. Only an estimate of the random variation in the data is available, not its true distribution. All the mathematical equations are approximations to the true relations that we might be hoping to know. And the result of an uncertainty propagation of the sort carried out by Pat Frank is an approximation to the random variation in the forecasts induced by the random variation in the data. One can elaborate this endlessly, and find many books on the topic in the philosophy of science and statistics sections of a university library.

One is left with the usual questions of pragmatism in applied math: are the approximations accurate enough? How can you tell whether they are accurate enough? Have they been shown to be accurate enough? Accurate enough for some purposes but not for others?

And so forth.

Getting back to the specific case. Is it true, as claimed by Nick Stokes, that Pat Frank’s procedure implements a random walk? No. Conditional on a parameter inserted into the model, and the increases in CO2 year by year, the sequence of yearly point estimates would be deterministic. Is it true that you cannot propagate uncertainty without propagating particular errors, as claimed by Nick Stokes? No. If you are willing to treat the uncertainty in the parameter estimate as resulting from the random variation in the data, you can propagate the uncertainty in forecasts as the standard deviation of the forecast random variation. Is the estimate of the standard deviation of the parameter estimate good enough for this propagation? Well, people seem willing to accept the estimate of the parameter, and the empirical estimate of its uncertainty shows that it is indistinguishable from 0. That information is too important to ignore, but ignoring it has been the standard up till now. Is there a better procedure for propagating the uncertainty in the parameter estimate? The only alternative proffered in this series of interchanges is bootstrapping, which will not be possible until available computers are about 1000 times as fast as the supercomputers available now.

Is there a better estimate of the uncertainty in the GCM forecasts than what Pat Frank has calculated? Not that anyone has nominated. Is there any reason to think that Pat Frank’s calculation has produced a gross overestimate of the uncertainty? Not that anyone has mentioned so far.

While yearly point estimates of a variable are produced deterministically by GCMs, the estimates themselves need not be at all predictable. The chaotic nature of numerical solutions for N-S equations virtually guarantees an apparent randomness, which needs to be properly characterized.

Unless one is willing to fly on the wings of naked probabilistic premise, this necessarily involves such physical constraints as conservation of energy, inertia, etc. Thus, there are definite limits to how fast and far physically-anchored uncertainty can grow. And if one is interested not in specific yearly predictions but only in some very-low-frequency features often called “trends,” then the uncertainty can be narrowed even further. (In practice, as time advances beyond the effective prediction horizon set by autocorrelation, physically-blind Wiener filters devolve to simply projecting the mean value of the random signal.)

If you want to do realistic science, you CANNOT recursively “propagate the uncertainty in forecasts as the standard deviation of the forecast random variations.” Mistaking the practical nature of the problem as one of point-prediction of the accumulation of essentially uncorrelated random increments indeed leads to an ever-expanding random walk.

sky,

“Mistaking the practical nature of the problem as one of point-prediction of the accumulation of essentially uncorrelated random increments indeed leads to an ever-expanding random walk.”

Uncertainty is not random and therefore cannot lead to a random walk. You are confusing error with uncertainty. Even the true answer is contained in an uncertainty interval, the uncertainty just makes it impossible to *know* what the true answer is. Someone earlier used the example of a crowd size estimate for an event. If you tell someone that you are 99% sure it will be between 400 and 600 then exactly what will the crowd size turn out to be? Even if it is a ticketed event and all the tickets are sold there will be an uncertainty interval because of people that simply won’t be able to make it to the event.

Nowhere do I claim that uncertainty itself is random. What I’m trying to convey is that recursive projection of a confidence interval would be correct if one were trying to actually predict a random walk. Otherwise, it should remain fixed, or in the case of nonstationary processes, change along with changes in signal variance.
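The contrast drawn here, between an interval that keeps widening and one that stays bounded, can be sketched with two textbook processes. This is only an illustration under stated assumptions: a pure random walk with independent steps, and a stationary AR(1) process `x[t] = phi * x[t-1] + e[t]` with `|phi| < 1`. The values of `step_sd` and `phi` are hypothetical, and neither process is claimed to represent a GCM or the paper's procedure.

```python
import math

def random_walk_sd(step_sd, horizon):
    """Forecast-error sd after `horizon` independent steps of a random walk."""
    return step_sd * math.sqrt(horizon)

def ar1_sd(step_sd, phi, horizon):
    """Forecast-error sd `horizon` steps ahead for a stationary AR(1), |phi| < 1."""
    return step_sd * math.sqrt((1 - phi ** (2 * horizon)) / (1 - phi ** 2))

step_sd, phi = 1.0, 0.8  # hypothetical values for illustration
for h in (1, 10, 100):
    rw = random_walk_sd(step_sd, h)
    ar = ar1_sd(step_sd, phi, h)
    print(f"horizon {h:3d}: random walk sd = {rw:.3f}, AR(1) sd = {ar:.3f}")
```

The random-walk spread grows as the square root of the horizon without limit, while the AR(1) spread levels off at `step_sd / sqrt(1 - phi**2)`, the standard deviation of the process itself.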

1sky1: If you want to do realistic science, you CANNOT recursively “propagate the uncertainty in forecasts as the standard deviation of the forecast random variations.” Mistaking the practical nature of the problem as one of point-prediction of the accumulation of essentially uncorrelated random increments indeed leads to an ever-expanding random walk.

I think that post is incidental to what I wrote. I did not, for example, describe “recursively” propagating the uncertainty, and I explicitly wrote that Pat Frank’s procedure does not create a random walk.

We are getting a little afield, but imagine choosing randomly between two 6-sided dice: one has the usual markings, a dot on one side, …, up to 6 dots on the last side; the other has 1 dot on each side. After choosing, you throw the die 20 times. For the first die, the outcomes are random variables; for the second die, the outcomes are a non-random sequence of 1s. If the outcomes are added to a running sum, the first case produces a random walk; the second does not produce a random walk. That illustrates the possibility that, conditional on the random outcome, the subsequent trajectory can be non-random.
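The two-dice thought experiment is easy to simulate. The sketch below uses Python's standard `random` module; the function names and the number of repeats are choices made here for illustration only.

```python
import random

FAIR_DIE = [1, 2, 3, 4, 5, 6]  # usual markings
ONES_DIE = [1, 1, 1, 1, 1, 1]  # one dot on every side

def running_sum(die, n_throws, rng):
    """Throw `die` n_throws times and return the running sum after each throw."""
    total, path = 0, []
    for _ in range(n_throws):
        total += rng.choice(die)
        path.append(total)
    return path

def final_sums(die, n_throws=20, n_repeats=1000, seed=0):
    """Final running sum from many independent repeats of the 20-throw experiment."""
    rng = random.Random(seed)
    return [running_sum(die, n_throws, rng)[-1] for _ in range(n_repeats)]

fair = final_sums(FAIR_DIE)
ones = final_sums(ONES_DIE)
print("fair die: min and max final sum:", min(fair), max(fair))
print("ones die: distinct final sums:", set(ones))
```

With the fair die the final sum differs from repeat to repeat, as a random walk should. With the all-ones die every repeat traces the same path 1, 2, …, 20, even though which die you got was itself a random choice.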

If one conceptualizes the “choice” of cloud feedback parameter as a random outcome from a distribution with a large variance, hence an uncertain estimate of the true parameter, the subsequent series of yearly forecasts (given a sequence of CO2 values) is non-random, not a random walk. The uncertainty in the final year prediction results from the uncertainty of the outcome of estimating the cloud feedback parameter, not on the random variation of the cloud feedback parameter from year to year.

However one may object to representing uncertainty as a probability distribution of possible parameter values, states of “knowledge”, “bets”, mentation, or “choices”, Pat Frank’s procedure does not produce a random walk. Whatever the unknown error in the parameter estimate is, that error, and hence uncertainty over its value, propagates deterministically. If at some later date we were to learn exactly what the error had been all along, we could adjust the annual forecasts accordingly, as Nick Stokes describes for the meter stick analogy. Meanwhile we are uncertain as to whether the error was small, medium, or large relative to our purposes, and the uncertainty propagates as described by Pat Frank.

Tim Gorman and Matthew Marler are exactly right.

Over and over, we see critics treating predictive uncertainty as physical error. It’s not.

Exactly this mistake powers 1sky1’s argument about random walks, as both Tim and Matthew point out.

I’ve put together a set of extracts from the literature on the meaning and calculation of predictive uncertainty. I’ll post the whole thing below.

But here, because it’s particularly relevant, I’ll quote an extract from Kline, 1985:

Kline SJ. The Purposes of Uncertainty Analysis. Journal of Fluids Engineering. (1985) 107(2), 153-160.

The Concept of Uncertainty

“An uncertainty is not the same as an error. An error in measurement is the difference between the true value and the recorded value; an error is a fixed number and cannot be a statistical variable. An uncertainty is a possible value that the error might take on in a given measurement. Since the uncertainty can take on various values over a range, it is inherently a statistical variable. (my bold)”

While the word “recursively” was not explicitly used, it’s implicit in Pat Frank’s uncertainty propagation scheme, to wit: