Guest Essay by Kip Hansen

It seems that every time we turn around, we are presented with a new Science Fact that such-and-so metric — Sea Level Rise, Global Average Surface Temperature, Ocean Heat Content, Polar Bear populations, Puffin populations — has changed dramatically — “It’s unprecedented!” — and these statements are often backed by a graph illustrating the sharp rise (or, in other cases, sharp fall) as the anomaly of the metric from some baseline. In most cases, the anomaly is actually very small and the change is magnified by cranking up the y-axis to make this very small change appear to be a steep rise (or fall). Adding power to these statements and their graphs is the claimed precision of the anomaly — in Global Average Surface Temperature, it is often shown in tenths or even hundredths of a Centigrade degree. Compounding the situation, the anomaly is shown with no (or very small) “error” or “uncertainty” bars, which are, even when shown, not error bars or uncertainty bars but actually statistical Standard Deviations (and only sometimes so marked or labelled).


I wrote about this several weeks ago in an essay here titled “Almost Earth-like, We’re Certain”. In that essay, which the Science and Environmental Policy Project’s Weekly News Roundup characterized as “light reading”, I stated my opinion that “they use anomalies and pretend that the uncertainty has been reduced. It is nothing other than a pretense. It is a trick to cover-up known large uncertainty.”

Admitting first that my opinion has not changed, I thought it would be good to explain more fully why I say such a thing — which is rather insulting to a broad swath of the climate science world. There are two things we have to look at:

1. Why I call it a “trick”, and
2. Who is being tricked.

WHY I CALL THE USE OF ANOMALIES A TRICK

What exactly is “finding the anomaly”? Well, it is not what it is generally thought to be. The simplified explanation is that one takes the annual averaged surface temperature and subtracts from that the 30-year climatic average and what you have left is “The Anomaly”.

That’s the idea, but that is not exactly what they do in practice. They start finding anomalies at a lower level and work their way up to the Global Anomaly. Even when Gavin Schmidt is explaining the use of anomalies, careful readers see that he has to work backwards to Absolute Global Averages in Degrees — by adding the agreed upon anomaly to the 30-year mean.

“…when we try and estimate the absolute global mean temperature for, say, 2016. The climatology for 1981-2010 is 287.4±0.5K, and the anomaly for 2016 is (from GISTEMP w.r.t. that baseline) 0.56±0.05ºC. So our estimate for the absolute value is (using the first rule shown above) is 287.96±0.502K, and then using the second, that reduces to 288.0±0.5K.”
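The arithmetic in the quoted passage can be checked with a short sketch. It assumes that the "first rule" Schmidt refers to is the usual addition of independent uncertainties in quadrature, which is the rule that reproduces his ±0.502 figure; the function name is only for illustration.

```python
import math

def add_with_uncertainty(a, ua, b, ub):
    """Add two values, combining their uncertainties in quadrature.

    Assumes the two uncertainties are independent of each other.
    """
    return a + b, math.sqrt(ua**2 + ub**2)

# Figures from the quoted passage: climatology 287.4 +/- 0.5 K,
# 2016 anomaly 0.56 +/- 0.05 (a difference in degrees C equals one in K).
value, unc = add_with_uncertainty(287.4, 0.5, 0.56, 0.05)

print(f"{value:.2f} +/- {unc:.3f} K")  # before rounding
print(f"{value:.1f} +/- {unc:.1f} K")  # rounded to the precision the uncertainty supports
```

Note how little the anomaly's ±0.05 contributes: the combined uncertainty is dominated by the ±0.5 of the climatology.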

But for our purposes, let’s just consider that the anomaly is just the 30-year mean subtracted from the calculated GAST in degrees.

As Schmidt kindly points out, the correct notation for a GAST in degrees is something along the lines of 288.0±0.5K — that is a number of degrees to tenths of a degree and the uncertainty range ±0.5K. When a number is expressed in that manner, with that notation, it means that the actual value is not known exactly, but is known to be within the range expressed by the plus/minus amount.

This illustration shows this in actual practice with temperature records….the measured temperatures are rounded to full degrees Fahrenheit — a notation that represents ANY of the infinite number of continuous values between 71.5 and 72.4999999…

It is not a measurement error; it is the measured temperature represented as a range of values, 72 +/- 0.5. It is an uncertainty range: we are totally in the dark as to the actual temperature — we know only the range.

Well, for the normal purposes of human beings, the one-degree-wide range is quite enough information. It gets tricky for some purposes when the temperature approaches freezing — above or below frost/freezing temperatures being Climatically Important for farmers, road maintenance crews and airport airplane maintenance people.

No matter what we do to temperature records, we have to deal with the fact that the actual temperatures were not recorded — we only recorded ranges within which the actual temperature occurred.

This means that when these recorded temperatures are used in calculations, they must remain as ranges and be treated as such. What cannot be discarded is the range of the value. Averaging (finding the mean or the median) does not eliminate the range — the average still has the same range. (See Durable Original Measurement Uncertainty.)

As an aside: when Climate Science and meteorology present us with the Daily Average temperature from any weather station, they are not giving us what you would think of as the “average”, which in plain language refers to the arithmetic mean — rather we are given the median temperature — the number that is exactly halfway between the Daily High and the Daily Low. So, rather than finding the mean by adding the hourly temperatures and dividing by 24, we get the result of Daily High plus Daily Low divided by 2. These “Daily Averages” are then used in all subsequent calculations of weekly, monthly, seasonal, and annual averages. These Daily Averages have the same 1-degree wide uncertainty range.
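To make the difference between the two calculations concrete, here is a minimal sketch. The 24 hourly readings are invented purely for illustration (they are not from any station record), and the function names are hypothetical.

```python
def hourly_mean(temps):
    """Arithmetic mean of all readings: sum divided by count."""
    return sum(temps) / len(temps)

def max_min_average(temps):
    """The 'Daily Average' as described above: (Daily High + Daily Low) / 2."""
    return (max(temps) + min(temps)) / 2

# Hypothetical hourly readings in degrees F for one day (invented values).
hourly = [55, 54, 53, 52, 52, 53, 55, 58, 61, 64, 66, 68,
          70, 72, 71, 69, 66, 63, 61, 59, 58, 57, 56, 55]

print("mean of 24 hourly readings:", round(hourly_mean(hourly), 2))
print("(high + low) / 2:          ", max_min_average(hourly))
```

For this made-up day the two figures differ by more than a degree and a half, simply because the day spends more hours near its low than near its high.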

On the basis of simple logic then, when we finally arrive at a Global Average Surface Temperature, it still has the original uncertainty attached — as Dr. Schmidt correctly illustrates when he gives Absolute Temperature for 2016 (link far above) as 288.0±0.5K. [Strictly speaking, this is not exactly why he does so — as the GAST is a “mean of means of medians” — a mathematical/statistical abomination of sorts.] As William Briggs would point out “These results are not statements about actual past temperatures, which we already knew, up to measurement error.” (which measurement error or uncertainty is at least +/- 0.5).

The trick comes in where the actual calculated absolute temperature value is converted to an anomaly of means. When one calculates a mean (an arithmetical average — total of all the values divided by the number of values), one gets a very precise answer. When one takes the average of values that are ranges, such as 71 +/- 0.5, the result is a very precise number with a high probability that the mean is close to this precise number. So, while the mean is quite precise, the actual past temperatures are still uncertain to +/-0.5.

Expressing the mean with the customary “+/- 2 Standard Deviations” tells us ONLY what we can expect the mean to be — we can be pretty sure the mean is within that range. The actual temperatures, if we were to honestly express them in degrees as is done in the following graph, are still subject to the uncertainty of measurement: +/- 0.5 degrees.

[ The original graph shown here was included in error — showing the wrong Photoshop layers. Thanks to “BoyfromTottenham” for pointing it out. — kh ]

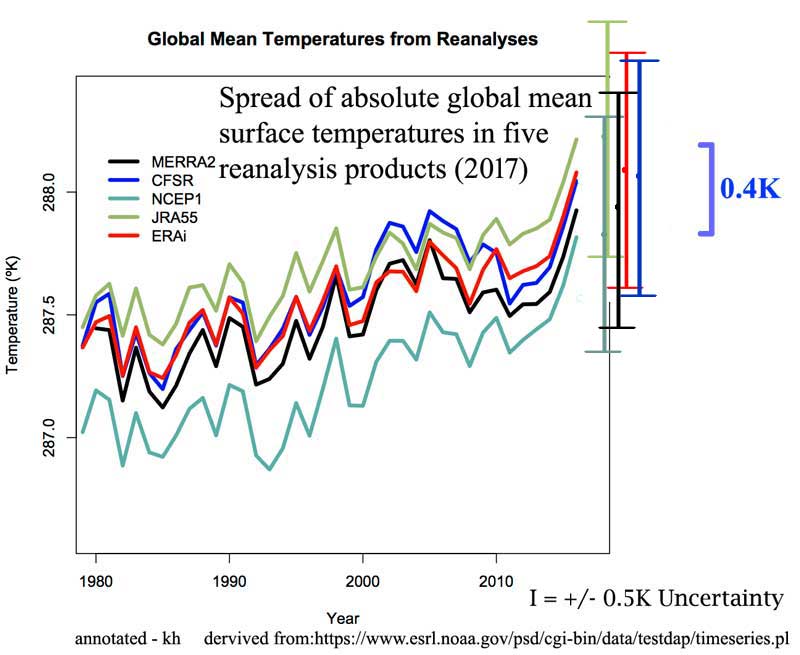

The illustration was used (without my annotations) by Dr. Schmidt in his essay on anomalies. I have added the requisite I-bars for +/- 0.5 degrees. Note that the results of the various re-analyses themselves have a spread of 0.4 degrees — one could make an argument for using the additive figure of 0.9 degrees as the uncertainty for the Global Mean Temperature based on the uncertainties above (see the two greenish uncertainty bars, one atop the other.)

This illustrates the true uncertainty of Global Mean Surface Temperature — Schmidt’s acknowledged +/- 0.5 and the uncertainty range between reanalysis products.

In the real world sense, the uncertainty presented above should be considered the minimum uncertainty — the original measurement uncertainty plus the uncertainty of reanalysis. There are many other uncertainties that would properly be additive — such as those brought in by infilling of temperature data.

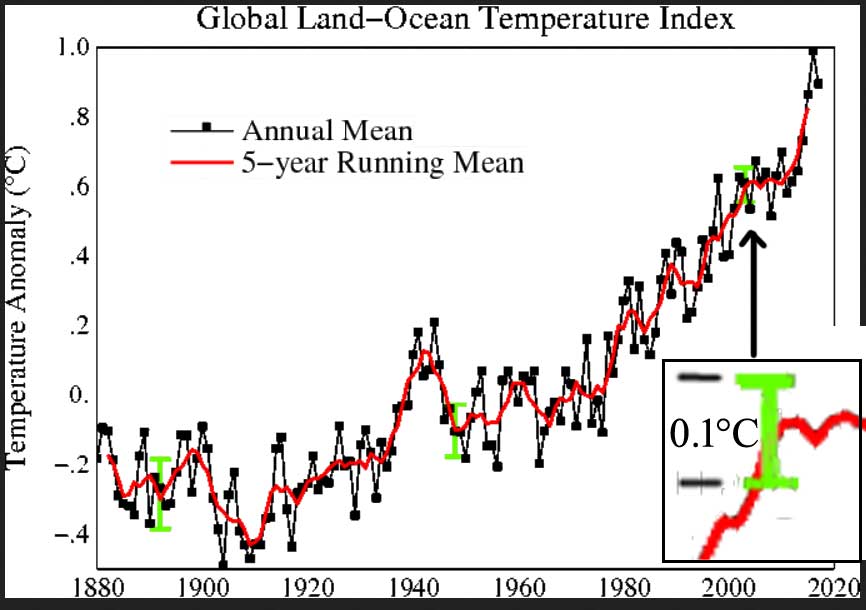

The trick is to present the same data set as anomalies and claim the uncertainty is thus reduced to 0.1 degrees (when admitted at all) — BEST doubles down and claims 0.05 degrees!

Reducing the data set to a statistical product called anomaly of the mean does not inform us of the true uncertainty in the actual metric itself — the Global Average Surface Temperature — any more than looking at a mountain range backwards through a set of binoculars makes the mountains smaller, however much it might trick the eye.

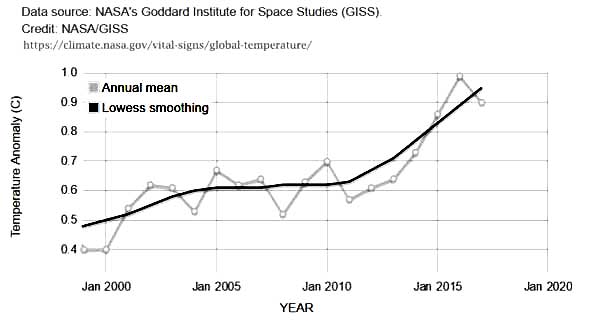

Here’s a sample from the data that makes up the featured image graph at the very beginning of the essay. The columns are: Year — GAST Anomaly — Lowess Smoothed

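| Year | GAST Anomaly | Lowess Smoothed |
|------|--------------|-----------------|
| 2010 | 0.7 | 0.62 |
| 2011 | 0.57 | 0.63 |
| 2012 | 0.61 | 0.67 |
| 2013 | 0.64 | 0.71 |
| 2014 | 0.73 | 0.77 |
| 2015 | 0.86 | 0.83 |
| 2016 | 0.99 | 0.89 |
| 2017 | 0.9 | 0.95 |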

The blow-up of the 2000-2017 portion of the graph:

We see global anomalies given to a precision of hundredths of a degree Centigrade. No uncertainty is shown — none is mentioned on the NASA web page displaying the graph (it is actually a little app, that allows zooming). This NASA web page, found in NASA’s Vital Signs – Global Climate Change section, goes on to say that “This research is broadly consistent with similar constructions prepared by the Climatic Research Unit and the National Oceanic and Atmospheric Administration.” So, let’s see:

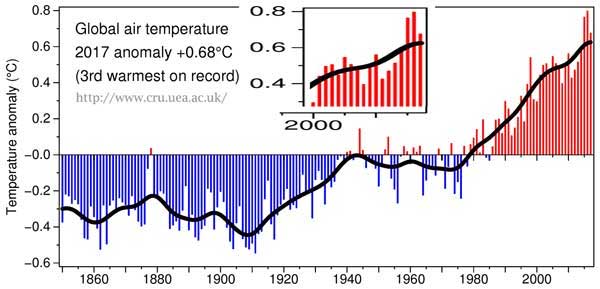

From the CRU:

Here we see the CRU Global Temp (base period 1961-90) — annoyingly a different base period than NASA which used 1951-1980. The difference offers us some insight into the huge differences that Base Periods make in the results.

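| Year | Anomaly (°C) | Smoothed (°C) |
|------|--------------|---------------|
| 2010 | 0.56 | 0.512 |
| 2011 | 0.425 | 0.528 |
| 2012 | 0.47 | 0.547 |
| 2013 | 0.514 | 0.569 |
| 2014 | 0.579 | 0.59 |
| 2015 | 0.763 | 0.608 |
| 2016 | 0.797 | 0.62 |
| 2017 | 0.675 | 0.625 |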

The official CRU anomaly for 2017 is 0.675 °C — precise to thousandths of a degree. They then graph it at 0.68°C. [Lest we think that CRU anomalies are really only precise to “half a tenth”, see 2014, which is 0.579 °C.] CRU manages to have the same precision in their smoothed values — 2015 = 0.608.

And, not to discriminate, NOAA offers these values, precise to hundredths of a degree:

| Year | Anomaly |
|------|---------|
| 2010 | 0.70 |
| 2011 | 0.58 |
| 2012 | 0.62 |
| 2013 | 0.67 |
| 2014 | 0.74 |
| 2015 | 0.91 |
| 2016 | 0.95 |
| 2017 | 0.85 |

[Another graph won’t help…]

What we notice is that, unlike absolute global surface temperatures such as those quoted by Gavin Schmidt at RealClimate, these anomalies are offered without any uncertainty measure at all. No SDs, no 95% CIs, no error bars, nothing. And precise to the hundredth of a degree C (or K if you prefer).

Let’s review then: The major climate agencies around the world inform us about the state of the climate through offering us graphs of the anomalies of the Global Average Surface Temperature showing a steady alarmingly sharp rise since about 1980. This alarming rise consists of a global change of about 0.6°C. Only GISS offers any type of uncertainty estimate and that only in the graph with the lime green 0.1 degree CI bar used above. Let’s do a simple example: we will follow the lead of Gavin Schmidt in this August 2017 post and use GAST absolute values in degrees C with his suggested uncertainty of 0.5°C. [In the following, remember that all values have °C after them – I will use just the numerals from now on.]

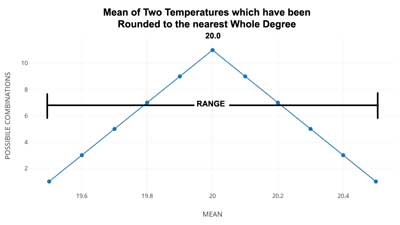

What is the mean of two GAST values, one for Northern Hemisphere and one for Southern Hemisphere? To make a real simple example, we will assign each hemisphere the same value of 20 +/- 0.5 (remembering that these are both °C). So, our calculation: 20 +/- 0.5 + 20 +/- 0.5 divided by 2 equals ….. The Mean is an exact 20. (now, that’s precision…)

What about the Range? The range is +/- 0.5. A range 1 wide. So, the Mean with the Range is 20 +/- 0.5.

But what about the uncertainty? Well the range states the uncertainty — or the certainty if you prefer — we are certain that the mean is between 20.5 and 19.5.
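The range calculation above can be written out as a small sketch. It treats each value as a hard (low, high) range and finds the lowest and highest possible mean, which is the reading of "+/- 0.5" used in this essay; it says nothing about how likely any value inside the range is. The function name is hypothetical.

```python
def mean_of_ranges(ranges):
    """Mean of values known only as (low, high) ranges.

    The lowest possible mean occurs when every value sits at its low end,
    the highest when every value sits at its high end.
    """
    n = len(ranges)
    low = sum(lo for lo, hi in ranges) / n
    high = sum(hi for lo, hi in ranges) / n
    return low, high

nh = (19.5, 20.5)  # Northern Hemisphere: 20 +/- 0.5
sh = (19.5, 20.5)  # Southern Hemisphere: 20 +/- 0.5

low, high = mean_of_ranges([nh, sh])
centre = (low + high) / 2
half_width = (high - low) / 2
print(f"{centre} +/- {half_width}")  # prints "20.0 +/- 0.5"
```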

Let’s see about the probabilities — this is where we slide over to “statistics”.

Here are some of the values for the Northern and Southern Hemispheres, out of the infinite possibilities inferred by 20 +/- 0.5: [we note that 20.5 is really 20.49999999999…rounded to 20.5 for illustrative purposes.] When we take equal values, the mean is the same, of course. But we want probabilities — so how many ways can the result be 20.5 or 19.5? Just one way each.

NH SH

20.5 —— 20.5 = 20.5 only one possible combination

20.4 20.4

20.3 20.3

20.2 20.2

20.1 20.1

20.0 20.0

19.9 19.9

19.8 19.8

19.7 19.7

19.6 19.6

19.5 —— 19.5 = 19.5 only one possible combination

But how about 20.4? We could have 20.4-20.4, or 20.5-20.3, or 20.3-20.5 — three possible combinations. 20.3? 5 ways. 20.2? 7 ways. 20.1? 9 ways. 20.0? 11 ways. Now we are over the hump: 19.9? 9 ways. 19.8? 7 ways. 19.7? 5 ways. 19.6? 3 ways. And 19.5? 1 way.
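The counts above can be reproduced by brute force. This sketch enumerates all 11 × 11 pairings of the eleven values listed for each hemisphere and counts how many pairings give each mean. It works in integer tenths of a degree so that floating-point rounding cannot blur the tallies.

```python
from collections import Counter
from itertools import product

# 19.5 ... 20.5 in steps of 0.1, stored as integer tenths of a degree.
values = range(195, 206)

# Tally every (NH, SH) pairing by its sum; the mean is the sum divided by 2.
ways = Counter(nh + sh for nh, sh in product(values, repeat=2))

# Even sums correspond to means that land exactly on a tenth of a degree.
for total in range(410, 388, -2):
    print(f"mean {total / 20:.1f}: {ways[total]} ways")
```

The eleven means listed account for 61 of the 121 pairings; the other 60 give means that fall halfway between tenths (20.45, 20.35 and so on), and they follow the same triangular pattern.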

You will recognize the shape of the distribution:

As we’ve only used eleven values for each of the temperatures being averaged, we get a little pointed curve. There are two little graphs….the second (below) shows what would happen if we found the mean of two identical numbers, each with an uncertainty range of +/- 0.5, if they had been rounded to the nearest half degree instead of the usual whole degree. The result is intuitive — the mean always has the highest probability of being the central value.

Now, that may seem so obvious as to be silly. After all, that’s what a mean is — the central value (mathematically). The point is that with our evenly spread values across the range — and, remember, when we see a temperature record given as XX +/- 0.5 we are talking about a range of evenly spread possible values — the mean will always be the central value, whether we are finding the mean of a single temperature or a thousand temperatures of the same value. The uncertainty range, however, is always the same. Well, of course it is! Yes, has to be.

Therein lies the trick — when they take the anomaly of the mean, they drop the uncertainty range altogether and concentrate only on the central number, the mean, which is always precise and statistically close to that central number. When any uncertainty is expressed at all, it is expressed as the probability of the mean being close to the central number — and is disassociated from the actual uncertainty range of the original data.

As William Briggs tells us: “These results are not statements about actual past temperatures, which we already knew, up to measurement error.”

We already know the calculated GAST (see the re-analyses above). But we only know it being somewhere within its known uncertainty range, which is as stated by Dr. Schmidt to be +/- 0.5 degrees. Calculations of the anomalies of the various means do not tell us about the actual temperature of the past — we already knew that — and we knew how uncertain it was.

It is a TRICK to claim that by altering the annual Global Average Surface Temperatures to anomalies we can UNKNOW the known uncertainty.

WHO IS BEING TRICKED?

As Dick Feynman might say: They are fooling themselves. They already know the GAST as close as they are able to calculate it using their current methods. They know the uncertainty involved — Dr. Schmidt readily admits it is around 0.5 K. Thus, their use of anomalies (or the means of anomalies…) is simply a way of fooling themselves that somehow, magically, the known uncertainty will simply go away utilizing the statistical equivalent of “if we squint our eyes like this and tilt our heads to one side….”.

Good luck with that.

# # # # #

Author’s Comment Policy:

This essay will displease a certain segment of the readership here but that fact doesn’t make it any less valid. Those who wish to fool themselves into disappearing the known uncertainty of Global Average Surface Temperature will object to the simple arguments used. It is their loss.

I do understand the argument of the statisticians who will insist that the mean is really far more precise than the original data (that is an artifact of long division and must be so). But they allow that fact to give them permission to ignore the real world uncertainty range of the original data. Don’t get me wrong, they are not trying to fool us. They are sure that this is scientifically and statistically correct. They are however, fooling themselves, because, in effect, all they are really doing is changing the values on the y-axis (from ‘absolute GAST in K’ to ‘absolute GAST in K minus the climatic mean in K’) and dropping the uncertainty, with a lot of justification from statistical/probability theory.

I’d like to read your take on this topic. I am happy to answer your questions on my opinions. Be forewarned, I will not argue about it in comments.

# # # # #

I’ve always used anomaly analysis to identify anomalous data, where in this case, the anomalous errors being identified are methodological.

There can be no doubt that the uncertainty claimed by the IPCC and its self serving consensus is highly uncertain. Consider the claimed ECS of 0.8C +/- 0.4C per W/m^2 of forcing. Starting from 288K and its 390 W/m^2 of emissions, this means that 1 W/m^2 of forcing can increase the surface emissions from between 2.25 W/m^2 and 6.6 W/m^2, where even the lower limit of 2.25 W/m^2 of emissions per W/m^2 of forcing is larger than the measured steady state value of 1.62 W/m^2 of surface emissions per W/m^2 of solar forcing. Clearly, the IPCC’s uncertain ECS, as large as the uncertainty already is, isn’t even large enough to span observations!

“… rather than finding the mean by adding the hourly temperatures and dividing by 24, we get the result of Daily High plus Daily Low divided by 2. ”

Taking the Daily High plus the Daily Low and dividing by 2 can easily give a trend in the opposite direction of actual temperatures. IOW, a set of these values could show a warming trend when it is actually cooling.

Many times I have worked outside all day for several days in a row. Often my perception was that one particular day was cooler than the others, because most of that day was partly cloudy.

Often, when I see the weather record for those days, I am surprised to learn that the record shows the day I thought was cooler was actually just as warm, and sometimes warmer, than the other days. This happens when there is a short period of full sunshine during mid-afternoon.

Even though many hours of that day were cooler than the corresponding hours of the other days, that day got recorded as just as warm, and sometimes warmer, than the other days.

SR

That is why a world average temperature is a nonsense… too many variables and too many adjustments have been made. One can only get a reliable record from a set of single sources that are comparable and which have been obtained using the same method. For the world the oceans are the only places always at sea level so land based measures have to be adjusted for altitude to be comparable and of course land radiates more heat which bounces back off clouds than sea. It’s all nonsense based on groggy data.

One can only really look at one place and compare it over time, so long as the Stevenson screen remains in an open place away from development and close by trees.

That feeling of being cooler is because you are in the shade of the clouds, right? Weather thermometers are always in the shade, so are not affected much by the cloud cover per se…

Mark ==> The phenomenon that Steve is mentioning is related to determining the “Daily Average” temperature from the median of the Max/Min for the day. A relatively cool day with a short period of high temps (a spike in late afternoon, say) can be reported as “warmer” (Daily Average) than a day with the same Min but an evenly moderately warm afternoon with no temp spike.

The cooler day was cooler most of the time but is reported higher because of a temp spike.
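A sketch of the situation Steve and Kip describe, using two invented days with the same overnight low. The hourly values are made up for illustration only; the point is just that the two ways of summarising a day can rank the same pair of days in opposite order.

```python
def hourly_mean(temps):
    """Arithmetic mean of all hourly readings."""
    return sum(temps) / len(temps)

def max_min_average(temps):
    """(Daily High + Daily Low) / 2."""
    return (max(temps) + min(temps)) / 2

# Hypothetical day 1: mostly cloudy and cool, one sunny hour spiking to 80.
cool_day_with_spike = [50] * 6 + [60] * 8 + [80] + [60] * 9

# Hypothetical day 2: same low, evenly warm afternoon, no spike.
steady_warm_day = [50] * 6 + [70] * 12 + [60] * 6

for name, day in [("cool day with spike", cool_day_with_spike),
                  ("steady warm day", steady_warm_day)]:
    print(f"{name}: hourly mean {hourly_mean(day):.1f}, "
          f"(max+min)/2 {max_min_average(day):.1f}")
```

Here the spike day has the lower hourly mean but the higher (max+min)/2 figure.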

co2 and Steve ==> Both examples of what happens when we use the (Hi+Low)/2 method — pretending it is the daily average.

This problem is magnified throughout the rest of the “average temperature system.”

Hi Kip,

Quick question: are the daily min and max actually recorded in the data archives or just the midpoint? Plotting both min/max and how they develop over time would give a better indication of where the climate might be going, at least in my opinion, but I have never seen such a graph.

Best,

Willem

Willem ==> Usually the Min/Max are recorded along with the Daily Average. Modern AWS/ASOS stations record a great deal more detail, but still figure the Daily Average the same way. There are some such graphs out there somewhere — but in my opinion they are only useful at a local level.

It would seem to be seasonally exaggerated, longer cold nights in winter would average colder with 24 hourly readings averaged, as would summer’s long days be actually warmer than recorded. The real difference between summer and winter is much larger than what is recorded by averaging two daily measurements, which is all our records show.

Do I not recall correctly, in a number of articles on adjustments to temperature measurements, there is always listed a time of day adjustment?

If only the min and max temperatures are recorded, how could any adjustment for time be relevant?

Andy ==> “Understanding Time of Observation Bias” – – – for what it is worth.

Kip,

Ideally one would replicate the work to verify its consistency and accuracy but I believe I get the idea, at least to the extent that there are different results from the same data depending on how one uses the data. The TOB adjustment described may be done entirely honestly and with good intentions but the result is still a calculated guess of what might have happened because of how things were done, not actually know values, no?

Andy ==> I am suspicious of the standardized size of the adjustment — if you have the energy and the time, you might take some modern complete station record with six minute values and calculate the Daily Average ((Max + Min)/2) using each of the 24 hours of the day as the start of day and see if the change in Daily Average is really as great as they say.

“not actually know values, no?”

Values are known, as explained here. The time and amount of TOBS is known. The effect can be deduced from the diurnal pattern. There is often hourly or better data now at the actual site, from MMTS; if not, there are usually similar MMTS locations nearby.

Averaging temperature is misleading at best no matter how many samples are used. To compute averages with any relation to the energy balance and the ECS, you must convert each temperature sample into equivalent emissions using the SB Law, add the emissions, divide by the number of samples and convert back to a temperature by inverting the SB Law. 2 samples per day are nowhere near enough to establish the average with any degree of certainty, even if they are the min and max temperatures of the day. If the 2 samples are provided with time stamps allowing the change from one to another to be reasonably interpreted, it gets a little better, but is still nowhere near as good as 24 real samples per day.
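The procedure described in this comment can be sketched as follows. It assumes an ideal black body (emissivity of 1), which the comment does not state, and the two sample temperatures are invented for illustration; the function names are hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def emission(temp_k):
    """Black-body emission in W/m^2 for a temperature in kelvin (emissivity assumed 1)."""
    return SIGMA * temp_k**4

def emission_weighted_mean(temps_k):
    """Convert each temperature to emissions, average, then invert the SB Law."""
    mean_emission = sum(emission(t) for t in temps_k) / len(temps_k)
    return (mean_emission / SIGMA) ** 0.25

samples = [278.0, 298.0]  # hypothetical temperatures in kelvin

arithmetic = sum(samples) / len(samples)
sb_mean = emission_weighted_mean(samples)
print(f"arithmetic mean:        {arithmetic:.2f} K")
print(f"emission-weighted mean: {sb_mean:.2f} K")
```

Because emission goes as the fourth power of temperature, the emission-weighted mean is never lower than the arithmetic mean, and the gap grows as the samples spread further apart.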

It’s worse than that. Temperature is an intensive property of the point in time and space the measurement was taken. Averaging that with other points in time and space doesn’t give you anything meaningful.

Yes, isn’t that convenient? Allows them to continue to bray about nothing for far longer that way.

Thomas Homer

I wonder … for the majority of Earth’s surface

where there are no thermometers, and the

temperature numbers are wild guesses,

by government bureaucrats with science degrees,

do they wild guess a Daily High and Daily Low number

for each “empty grid”, or do they save time and

just wild guess the Daily Average,

or save even more time

and just wild guess the Monthly Average

for that grid?

That question has been keeping me up at night.

No other field of science would take the

surface temperature numbers seriously,

and it always amazes me when people here,

who are skeptics, and should know better,

do just that.

Surface “temperature numbers” =

Over half wild guess “infilling” plus

Under half “adjusted raw data”

No real raw data are used in the average.

When you “adjust” data, you are claiming

the initial measurements were wrong,

and you believe you know what they

should have been.

That’s no longer real data.

“Adjustments” can make

raw data move closer,

or farther away,

from reality.

Interesting that having weather satellite

data, that requires far less infilling,

and correlates well with weather balloon data,

that any people here would use the horrible

surface temperature “data”

that DOES NOT CORRELATE WELL

with the other two measurement methodologies.

That’s something I’d expect only of Dumbocrats

— They’ll always choose the measurement methodology

that produces the scariest numbers !

Because truth is not a leftist value.

Thanks for the interesting and informative article, Kip, but shouldn’t the error bars on your ‘Global mean temperatures from reanalyses’ be twice as long as you have shown? Each one appears to me to be equal to about 0.5 degrees on the left hand scale, not 1 degree (+/-0.5 degrees).

BoyfromTottenham ==> Well Done, sir. You have caught me using the wrong version of this graph, with the wrong Photoshop layers showing. I’ll replace it with an explanation in the text.

I do so appreciate readers who really look at the graphs and words — and thus see mistakes that I have missed.

Kip, I will take you up on your request.

First, degrees C means Celsius not Centigrade. But that is too easy.

I appreciate that you have made a good presentation, but you have a problem.

“These “Daily Averages” are then used in all subsequent calculations of weekly, monthly, seasonal, and annual averages. These Daily Averages have the same 1-degree wide uncertainty range.”

This is not correct. That is not how uncertainties are carried forward through calculations. You could perhaps look at some worked examples in Wikipedia which for mathematical things is a pretty reliable source.

See here for examples:

https://en.m.wikipedia.org/wiki/Propagation_of_uncertainty

I will first present an oversimplified example of adding two numbers with an uncertainty of one degree: 20±1 + 20±1 = 40±2

Possible values are 19+19 = 38, up to 21+21 = 42

This ±2 is not the correct answer, but it is a demonstration that adding two equally uncertain numbers doesn’t mean the final uncertainty is from only one, unless the second number is absolutely known, which is not the case with an anomaly.

The correct answer is the square root of the sum of the squares of the two uncertainties.

Square root of (1.0 squared + 1.0 squared) = 1.41

The real answer is 40±1.41

If you want the average, it is 20±0.71 because both values have to be divided by the same divisor so the Relative Error remains the same.

This is the uncertainty of the sum of the two inputs. Similarly, subtraction generates the same increase in uncertainty. Try it.

An anomaly is the result of a subtraction involving two numbers that each have an uncertainty. You do not mention this. Errors propagate. The anomaly does not have an uncertainty equal to the new value because the baseline also has an uncertainty.

Suppose the baseline is 20.0 ±0.5 and the new value is 21.0 ±0.5.

The anomaly is 1 ±0.71. Why? Because the propagated error magnitude is

±SQRT( 0.5^2 + 0.5^2) = ±0.71

In all cases the anomaly has a greater uncertainty than the two input numbers because it involves a subtraction.
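Crispin's three figures can be reproduced with a few lines. This sketch uses the root-sum-of-squares rule exactly as he states it, which assumes the individual uncertainties are independent and random; whether that assumption holds for temperature records is what the replies go on to argue about. The helper name is hypothetical.

```python
import math

def quadrature(*uncertainties):
    """Root-sum-of-squares combination of independent uncertainties."""
    return math.sqrt(sum(u**2 for u in uncertainties))

# Sum of 20 +/- 1 and 20 +/- 1
sum_value = 20 + 20
sum_unc = quadrature(1.0, 1.0)

# Average: value and uncertainty are both divided by the same divisor
mean_value = sum_value / 2
mean_unc = sum_unc / 2

# Anomaly: new value 21.0 +/- 0.5 minus baseline 20.0 +/- 0.5
anomaly = 21.0 - 20.0
anomaly_unc = quadrature(0.5, 0.5)

print(f"sum:     {sum_value} +/- {sum_unc:.2f}")
print(f"average: {mean_value} +/- {mean_unc:.2f}")
print(f"anomaly: {anomaly} +/- {anomaly_unc:.2f}")
```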

Crispin,

I think your math is good for “random” errors. What about “systemic” errors? I think much of what is being pointed out by some here has to do with systemic errors.

Good point , well presented Crispin.

Another point where Kip goes wrong is claiming that the uncertainty of the original measurement can not be reduced: the +/-0.5 is always there.

While it is correct to call this an “uncertainty”, it can also be more precisely described as quantisation error. The smallest recorded change or “quantum” is one degree. No fractions. This adds a random error to the actual temperature. A rounding or quantisation error.

Measuring at different times in a day ( min, max ) or at different sites will involve unstructured, random errors. If you average a number of such readings the quantisation errors will be distributed both + and – and of varying magnitudes and will ‘average out’. This allows reducing the expected uncertainty by dividing by SQRT(N), as Crispin does above for N=2.

This is based on the assumption that the errors are “random” or normally distributed. There are other systematic errors but that is a separate question.

If you have sufficiently large number of readings the effect of quantisation error in the original readings will become insignificantly small. There are many other errors involved in this process and the claimed uncertainties are very optimistic. However the nearest degree issue Kip goes into here is not one of them and his claim this propagates is incorrect.

auto-correction. This is not a case of normally distributed errors. The root N factor derives from the normal distribution and is not the correct factor here.

As Kip correctly shows in the article, the distribution is flat and finite. There is just the same chance of having a 0.1 error as a 0.5 error. This does not negate that the errors will average out over a large sample and become insignificant.

To say the uncertainty in the mean is +/- 0.5 is to say that there is an equal chance that all values had an error of +0.5 as there was a chance that there was an even mix which averaged out. That is obviously not the case for a flat distribution.

As long as the number of samples is sufficiently large for this theoretical flat distribution to be well represented in the sample, the error in the mean will tend to zero. That is where the stats theory comes in, in relation to the sample size, the expected distribution and the uncertainty levels to attribute to the mean.
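Greg's claim can be explored with a small simulation. This is only a sketch under the assumption he makes: that the underlying temperatures vary enough for the rounding errors to be independent and spread evenly across ±0.5. The "true" temperatures here are random numbers invented for the purpose, and the replies below dispute whether real station data behave this way.

```python
import random
import statistics

random.seed(0)  # fixed seed so repeated runs give the same numbers

def mean_rounding_error(n):
    """Average of (recorded - true) over n readings rounded to whole degrees."""
    errors = []
    for _ in range(n):
        true_temp = random.uniform(10.0, 30.0)  # invented 'true' temperature
        recorded = round(true_temp)             # recorded to the nearest degree
        errors.append(recorded - true_temp)
    return statistics.fmean(errors)

for n in (10, 1000, 100000):
    print(f"n = {n:>6}: mean rounding error = {mean_rounding_error(n):+.4f}")
```

Each individual reading is still off by up to half a degree. What shrinks with sample size, under these assumptions, is the average of those errors, not the uncertainty of any single reading.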

Greg

You are violating the Central Limit Theorem which is what the Error in The Mean relies on.

The uncertainty in a measurement is a hard limit based on characterisation and repeatability. This is defined by metrology.

It means that the “resolution” of the sample distribution is +/- 0.5 degrees. To demonstrate identically distributed samples they need to vary by more than this.

You have assumed that other errors are random and follow a similar distribution. The MET Office made the same assumption with SST.

It is an unverified assumption. If applied your data becomes hypothetical and unfit for real life use.

It doesn’t matter how many measurements you have. It’s like measuring a human hair multiple times with a ruler marked in cms and claiming you can get the value to microns.

Beware of slipping into hypothetical.

Greg, are you sure that “Large Number” theory applies to a measurement of something that changes every minute of every day, with different equipment and in different locations all over the world?

I thought it applied to repetitive measurement of an object.

A catch with temperatures is that, typically, there is only one sensor involved. Each temperature is a sample of one, at one place and at one time. You could use the CLT if you had 30 or more sensors in that Stevenson screen, for that screen’s value; but only for that value. Extrapolation and interpolation add their own errors and uncertainties to the mix. NB that errors and uncertainties are not synonyms. In the damped-driven, mathematically chaotic, dynamic system that is Earth’s weather, ceteris paribus will almost always be false.

cdquarles==> You point out one of the fallacies of climate modeling — which attempts prediction of the future by running their models with one factor changing, all else ceteris paribus.

See mine at Dr. Curry’s blog, “Lorenz validated”: https://judithcurry.com/2016/10/05/lorenz-validated/

These are not repeated measures for the same phenomena, these are singular measures (with ranges) for multiple phenomena (temperature measured at multiple locations).

The reduction in uncertainty by averaging ONLY applies for repeated measures of the (exact) same thing, i.e. the temperature at a single location (at a single point in time).

NOT to the averaging of singular measures for multiple phenomena.

Jaap ==> Yes, exactly right.

Also, measurement error is nice to know, but when it is small relative to the standard deviation of the sample population it hardly matters. What one does when averaging temperatures across the globe is to ask what the typical temperature is (on that day).

Say you do that with height of recruits for the army. We use a standard procedure to get good results and a nice measurement tool. Total expected measurement error is say 0.5 cm. We measure 20 recruits (not same recruit 20x).

Here are the results:

#     Height (cm)
1     182
2     178
3     175
4     183
5     177
6     176
7     168
8     193
9     181
10    187
11    181
12    172
13    180
14    175
15    175
16    167
17    186
18    188
19    193
20    180

Average           179.85
StDev (s)         7.19
95% range (min)   165.47
95% range (max)   194.23

Remember that these are different individuals, so not repeated measures of the same thing, but multiple measures of different things, which are then averaged to get an estimate of the midpoint (average) and range (variance).

The min, avg and max all still carry that measurement error, but we usually forget all about that because it is so small compared to the range of the sample set.
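The recruit figures can be reproduced with a few lines. This is a sketch only; it assumes the "95% range" was taken as the average plus or minus two sample standard deviations, which is what matches the numbers quoted, and the variable names are illustrative.

```python
import statistics

# Heights of the 20 different recruits, in cm, as listed above.
heights_cm = [182, 178, 175, 183, 177, 176, 168, 193, 181, 187,
              181, 172, 180, 175, 175, 167, 186, 188, 193, 180]

average = statistics.mean(heights_cm)
s = statistics.stdev(heights_cm)      # sample standard deviation (n - 1 divisor)

# Assumption: "95% range" means average +/- 2 * s.
low = average - 2 * s
high = average + 2 * s

measurement_error_cm = 0.5            # per-reading measurement error from the comment

print(f"average  {average:.2f}")
print(f"StDev    {s:.2f}")
print(f"95% range {low:.2f} to {high:.2f}")
print(f"measurement error is {measurement_error_cm / s:.0%} of one standard deviation")
```

The last line puts the 0.5 cm measurement error next to the spread between individuals, which is the comparison the comment is making.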

The Central Limit Theorem gets misunderstood a lot. It doesn’t mean that measuring each temperature a million times yields any different distribution from the base data. That is the accursed error called autocorrelation. Instead, what it means is that if each sample is hundreds of random measurements from the entire overall population, THOSE sample means have a different distribution from the overall population. But that’s never how these samples are taken.
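What the theorem does say can be illustrated with a small simulation. This is a sketch under stated assumptions, none of which come from the comment: the "population" is an exponential distribution (skewed, with mean 1 and standard deviation 1), each sample is 100 independent random draws from it, and 2000 such samples are taken.

```python
import random
import statistics

random.seed(1)  # fixed seed so the run is repeatable

SAMPLE_SIZE = 100    # independent random draws per sample
NUM_SAMPLES = 2000   # number of sample means collected

def one_sample_mean():
    # Assumption: a skewed population (exponential, mean 1, sd 1).
    draws = [random.expovariate(1.0) for _ in range(SAMPLE_SIZE)]
    return statistics.fmean(draws)

sample_means = [one_sample_mean() for _ in range(NUM_SAMPLES)]

mean_of_means = statistics.fmean(sample_means)
sd_of_means = statistics.stdev(sample_means)

print(f"population sd: 1.0, sd of the sample means: {sd_of_means:.3f}")
print(f"mean of the sample means: {mean_of_means:.3f}")
```

The sample means cluster much more tightly than the population itself, roughly by a factor of the square root of the sample size, but only because every draw is an independent random pick from the whole population. Repeated or autocorrelated readings do not meet that condition.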

Crispin ==> Caught me with my age showing — “cen·ti·grade /ˈsen(t)əˌɡrād/ adjective: another term for Celsius.”

As for the rest — you are doing statistics — not mathematics.

We are not dealing with “error” here, we are dealing with temperatures which have been recorded as ranges 1 degree (F) wide. That range is not reduced — ever.

This is not a matter of “propagation of error”.

Kip, cdquarles, A C Osborn, Mickey, Greg and William

There are some good points made above, by which I mean issues are raised that have to be considered when determining the reliability of a calculated result.

The most important to the conversation is that the calculation of an “anomaly” requires subtracting one value with an uncertainty from another value with its own uncertainty and there is a standard manner in which to do this correctly. Invariably the final answer will have a greater uncertainty than the two inputs because we do not know the absolute values.

A separate matter is how the temperature “averages” were produced. Addressing the example given above:

Measure the temperature of one object 30 times in quick succession using the same instrument which has a known uncertainty about its reporting values. The distribution of the readings may be Normal. One could say they will “probably be Normal”.

Let’s assume that the instrument was calibrated perfectly at the start. If the time period is long, perhaps a year, the manufacturer usually provides information on the drift of the instrument so the uncertainty of the measurement can be reported as different from when it was last calibrated.

Now consider using 30 instruments to measure 30 different objects that are at 30 different temperatures to find the “average” of a large object like a bulldozer. It is not true to claim that the measurement errors are “Normally distributed” because there is no distribution pattern available for each single measurement. You could assume that the drift of the instruments over time has a normal distribution, but it probably doesn’t. So we have two things to address: measurement uncertainty and instrument drift. The first is a random error and the second is a systematic error. The easy answer is to increase the expressed uncertainty with time to accommodate drift and that is what people (should) do.

I cannot possibly present all the considerations that go into the production of a global surface temperature anomaly so let’s stick to the topic of the day.

“Error propagation : A term that refers to the way in which, at a given stage of a calculation, part of the error arises out of the error at a previous stage. This is independent of the further roundoff errors inevitably introduced between the two stages. Unfavorable error propagation can seriously affect the results of a calculation.”

https://www.encyclopedia.com/computing/dictionaries-thesauruses-pictures-and-press-releases/error-propagation

There are no “favourable” error propagations. Unless the calculation involves a constant such as dividing by 100, the uncertainty increases with each processing step. And don’t get me going about the “illegal” averaging averages. The example of the diameter of a human hair and the centimetre ruler is helpful. Using that instrument read to within 1 mm, the diameter of a single hair is 0±0.1 centimetres, every time. That includes the rounding error which is in addition to the measurement uncertainty. Averaging 30, nay, 300 measurements does not improve the result at all.

Claiming to have calculated a global temperature anomaly value with 10 times or 50 times lower uncertainty than for the two values used in the subtraction is hogwash. If the baseline is 20.0±0.5 with 68% confidence and the new value is 20.3±0.5 also with the same confidence index, then the anomaly is 0.3±0.71 [68% CI]. If you want 95% confidence you need a more accurate instrument and more readings for each initial value in the data set being averaged. There is a trend towards doing exactly this: multiple instruments at each site.
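The 0.3±0.71 figure follows from adding the two uncertainties in quadrature. A minimal sketch, assuming the two uncertainties are independent and quoted at the same confidence level; the function name is hypothetical:

```python
import math

def anomaly_with_uncertainty(baseline, u_baseline, value, u_value):
    """Subtract a baseline from a value and combine the two uncertainties.

    Assumes the uncertainties are independent, so they add in quadrature
    (root sum of squares) rather than cancelling.
    """
    anomaly = value - baseline
    u_anomaly = math.hypot(u_baseline, u_value)  # sqrt(u_baseline**2 + u_value**2)
    return anomaly, u_anomaly

# Figures from the comment: baseline 20.0 +/- 0.5, new value 20.3 +/- 0.5.
anomaly, u_anomaly = anomaly_with_uncertainty(20.0, 0.5, 20.3, 0.5)
print(f"anomaly = {anomaly:.1f} +/- {u_anomaly:.2f}")
```

With equal input uncertainties the result is always larger than either input by a factor of the square root of two.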

Crispin ==> Thanks for your exposition on errors, their propagation, and uncertainty.

Kip,

I just re-read the whole article again and if you had sent it to me for review, I would have insisted on at least a dozen changes. The wording is too casual, given that it attempts to point out something quite technical.

One more example:

“When one calculates a mean (an arithmetical average — total of all the values divided by the number of values), one gets a very precise answer.”

This confuses accuracy and precision. First, these are not multiple measurements of a single thing. Second, the average of a number of readings cannot be more precise than the precision of the contributing values. That would be false precision. In any case, precision has to be stated within a range defined by the accuracy.

What you are alluding to (Willis often does the same thing so don’t feel lonely) is that one can claim “to more precisely estimate” (not “know”) the position of the centre of the range of uncertainty with additional measurements. It is not an increase in the precision or accuracy of the reported value. This is basic metrology, freely abandoned in the climate science community when it comes to anomalies. They are making fantastical claims.

One cannot laugh hard enough at the silly claim that an anomaly is known to 0.05 C using a baseline value subtracted from the current average, both with uncertainties of 0.5 C. They literally cannot do the math. One cannot treat calculated values based on measurements as if they are known constants.

This key error of claiming false precision for anomalies needs to be addressed in a reviewed article (posted here) delineating the steps taken to calculate an anomaly and where rules are being broken, and what the real answers are. As one of my physicist friends says, “They are trying to rewrite metrology.”

Very easy to debunk the GISS global “temperature” record. Snow cover.

Can’t fool snow, it melts at 0 C. It ignores adjustments. Because snow cover trend has been flat since the late ’90’s it means GISS’s data is fiction.

Incidentally snow cover anomaly is consistent with the UAH temperature anomaly dataset, which along with the radiosonde balloon measurements doubly verifies UAH’s accuracy.

Thanks for this – didn’t know that anyone was tracking Snow Cover Extent.

It’s easy for ordinary people like me to understand a “big picture” story to the effect that significant increases in temperatures should cause significant decreases in SCE.

At least unchanging SCE ought to raise questions.

Your proxy is about as convincing as tree rings being thermometers. What you are looking at is the geographic distribution of the 0 deg C isotherm. This is not a measure of global average temperature.

Greg ==> I think that Bruce is using a pragmatic, “works for me” , rule-of-thumb standard when he mentions “snow extent”…. I don’t think he really believes it is a scientifically defendable idea.

Kip – No, I’m completely serious. I’ve worked with data for forty years in my field of science.

Unfortunately I can’t display a graph with the current WUWT commenting system, but I’ve just put it on an old Flickr account I’ve had for a while:

UAH NH land anomaly and Rutgers NH snow anomaly

That is an apples to apples comparison*. But if you check the UAH global dataset it is still a pretty good match.

I’ve added vertical gridlines so you can see how the peaks line up. The UAH data does seem to be too warm – it warmed a bit going from UAH 5.0 to 6.0 as I recall. It looks like that adjustment isn’t very supportable on the snow cover evidence.

Even so the UAH data is much closer to the snow cover data than the lurid NASA GISS data is.

(* Rather than 2m temperature anomalies I’ve used the lower troposphere UAH data as it was easier to get from Roy Spencer’s blog. Note that I’ve inverted the temperature graph so that it’s easier to line up the peaks.)

Bruce ==> Trying to understand what you are on about with this — what I see is that when NH Tropo is warmer there is generally less snow cover. Have I got that right so far?

Kip – Snow cover is a direct measurement by satellite. Difficult to get wrong. Temperature by AMSU is an indirect measurement with a lot of data processing required, but better than the adjusted UHIE contaminated mess of GISStemp.

Therefore the flat trend of snow cover anomaly indicates essentially no warming since the late 1990’s. It is a crosscheck for the temperature datasets: if a temperature dataset doesn’t match the trend of the snow cover anomaly graph then the temperature dataset is wrong, and the adjustments of it are wrong.

Perhaps I should have dug out the 2m UAH anomaly data, but the lower troposphere data is probably pretty good. Clouds are some way up in the atmosphere, even if not quite LT level.

I am actually agreeing with you. You are addressing the relative errors in the surface temperature datasets, I am pointing out that snow cover represents a crosscheck of the systematic errors – ie the actual variance from the real temperature. Snow cover extent represents a metric of the area of land at or below 0 C. Thus it can be regarded as an internal standard if you like.

Bruce

Yes, it’s a real-world guide to temperature changes in the NH landmasses.

It gives extended real-world data on how much of the NH land mass is at or below 0 C at any one time and, just as important, unlike climate science it has no agenda to peddle.

I have lived in the same location south west of Cleveland, Ohio, USA now for 30 years. Over that period of time freezing has gone from 32 degrees F to 37 degrees F. Thirty years ago a prediction of 32-33 degrees F meant bring in the freezables. Now it is a prediction of 37 degrees for frost.

When I have checked the official temperatures for those frost days a month or two later, the official temperatures are always well above freezing. I can’t tell you what is going on but I can tell you that they correctly predict frost even though the low is supposed to be not less than 37 degrees.

Pierre ==> Frost warnings are not the same as Freeze Warnings. Details here > http://www.crh.noaa.gov/Image/pah/pdf/frostfreeze.pdf

Ok, that throws everything I thought I knew into disarray. So, water can freeze above freezing. Now I gotta figure out why. In the end it will probably make sense.

Kip, read what Pierre is actually saying.

Hunter ==> Read the NOAA pdf linked. It answers his specific question, which paraphrased is “How can there be a frost (which is freezing dew, basically) at a temperature above 32F?”

The ice/snow-cover issue illustrates another important point which I don’t think Kip addressed directly: By using anomalies the dishonest can forever claim that, say, this year/decade is x degrees hotter than last year/decade. But if absolute temperatures are always quoted then sooner or later the quoted temperature will become so high as to be clearly erroneous to even the casual observer. The melting point of frozen water is thus a useful internal standard to keep them honest when the temperatures of interest are close to 0°C/32°F

And, needless to say, the boiling point of water at 100°C is sufficient to prove that people like James Hansen are speaking out of where the sun don’t shine when they talk of the earth becoming so hot that the oceans boil away.

Water in a bucket can freeze on a clear, still night at air temperature up to 59 degrees F.

Thank you for this article. Using anomalies is a trick because the real world is compared to an artificial ideal. Therefore the real world will easily become anomalous.

While we get years that are warm or cool or wet or dry the experience of these has now become an anomaly.

Anomaly definition – something that deviates from what is standard, normal, or expected. Based on that definition, how does one define something like the UK weather where variation is the norm; the standard, normal and expected weather is now anomalous?

Here’s another WUWT article on using anomalies, and it explains why determining global temperature is not as easy as determining the anomaly thereof: https://wattsupwiththat.com/2014/01/26/why-arent-global-surface-temperature-data-produced-in-absolute-form/

That’s why I’ve always detested the use of “anomalies.” It assumes any departure from an AVERAGE of some 30-year period to be some kind of “yardstick” against which any departure is “anomalous.” Which is ridiculous. “Average” weather metrics, be they temperature, precipitation, whatever, are not “expected norms,” as the use of “anomalies” suggests – they are nothing more than MIDPOINTS OF EXTREMES.

Kip says : ” remember, when we see a temperature record give as XX +/- 0.5 we are talking about a range of evenly spread possible values”

Kip has made an assumption here that may or may not be correct. He says “evenly spread” but that is not the case. Specifically it is not the case when the values are not “evenly” spread, but spread as are in a normal distribution. Kip’s error is the assumption that they are distributed uniformly.

But isn’t that precisely what accuracy means, i.e. for any given reading recorded, the true value is equally likely to be anywhere along the range (uniformly distributed), NOT normally distributed along the range?

David ==> We don’t know what the actual temperature was when we see a record of “72”. The “72” is really the range from 72.5 down to 71.5 and any of the infinite possible values in between. Since temperature is a continuous, infinite value metric, when we know nothing except the range, then all possible values within that range are equally possible — Nature has no preference for any particular value within the range.

The possible temperatures between 72.5 and 71.5 are NOT distributed in a normal distribution.

This is an extremely important point. Any and all values within the range are equally possible.

Wrong Kip, taking the measurement is normally distributed. There is a very low non-zero probability that the actual temperature is 70 degrees, and the human reader observes and records 71. There is also a low non-zero probability that the actual temperature is 72 degrees, and the human again reads 71. Pretty hard to get +/- 0.5 degree 95% confidence interval when the standard deviation of a uniform distribution on the interval from a to b is (b-a)/√12.

David,

Let’s try this one more time. A single thermometer is sitting in a box in the middle of a grassy field. You go out to read the thermometer. You carefully read and see the alcohol or mercury line is between the major marks on the scale. You write down the value on the closest major mark.

What in that scenario is going to give the temperature inside that box a preference to line up with one of the arbitrary (as far as nature is concerned) major marks over some random space in between? When you say “normally distributed” , you are saying that the air inside the box has a preference for heating the alcohol or mercury to expand or contract until it lines up with those major lines but is sometimes a bit off. This is most definitely a uniform distribution.

Now if you want to say you send 1000 people out to read that thermometer and each of them read a value, then you would have a normal distribution of readings about that major line. That is a totally different scenario and not applicable in the reading of thermometers. They were always read once by one person, and if the top of the alcohol or mercury were between 69 and 70, but closer to 70, then 70 is what was recorded.

It is impossible for it to be a normal distribution around a value. The temperature is a continuous linear value within its possible range for any given area. There may be a normal distribution within the whole range, but not for any given temperature reading as you are stating. As Kip points out, it could be any value within X to X+1 with no bias toward any value centre.

OweninGA and Greg…..did both of you miss the word MEASUREMENT?

..

The actual temperature is unknown. The reading you get off of the measuring device is normally distributed.

..

Because you cannot measure any other way, you do not have any evidence or data on what the actual distribution of the temperature really is. Assuming it is uniformly distributed is not proof that it is.

..

Why don’t one or both of you give me the explanation of how you would determine the actual distribution is uniform when the only way you can measure it is with something that provides you with a normally distributed result?

David. We could be arguing the same thing using different language. My attempt at an explanation wasn’t a good one, I’ll admit.

Across the range between the minimum and maximum temperatures, a normal distribution curve is assumed. Within a single degree of measurement error (24.5-25.49999) there will be a near linear probability of any given REAL value. We can’t measure those real values, so the reading gets rounded to the nearest 0.5C.

Greg, Owen, David ==> For any one temperature record officially recorded as “72” there are an infinite number of possible values between 72.5 and 71.5. All of those infinite values have an equal probability of having been the real temperature at the moment of measurement. The record “72” literally means “one of the infinite values between 72.5 and 71.5” — no particular value has a higher probability. There is no Normal Distribution involved.

How many of the official temperature stations are human read rather than electronically recorded? Probably not many in the US or other more technically advanced societies.

I’m afraid that’s wrong.

You are assuming a normal distribution not demonstrating it with a more accurate instrument to calibrate it.

This is a fundamental problem with applying theory rather than characterisation. You also need to account for drift and other effects.

This is basic metrology.

Kip says: , “then all possible values within that range are equally possible — Nature has no preference for any particular value within the range.”

…

Thank you Kip for clearing up your misconception. You obviously don’t understand Quantum Mechanics. Based on QM, Nature actually HAS a preference for particular values, and most of the time they are discrete integer values.

DD,

You said, “…most of the time they are discrete integer values.” That is true at the level of quantum effects, but not at the macro-scale of degrees Celsius or Fahrenheit.

Clyde, please do not forget that the macro property of “temperature” is the statistical average of the normally distributed velocity of a discrete number of particles. Now all I ask is that you tell me how you determine that the value of said temperature is uniformly distributed on the interval between N and N+1 degrees on the measuring instrument. Also please tell me how you measure the individual velocity of one of these particles so that you can arrive at the average. My understanding of QM says you can’t even do that.

DD,

Yes, Heisenberg’s uncertainty principle implies that the act of measuring a single particle will alter its properties. When dealing with a very large number of them, one expects a probability distribution that smears out the quantum velocity fluctuations and provides individual particle ‘temperatures’ that are much smaller than can be measured with any thermometer. You are NOT going to see a preference for an integer temperature change!

At the macro level you might get the impression that the “smearing out” makes the measured item continuous, but the underlying physical theory says it is quantized and actually has values in the interval that cannot be. When Kip states: “then all possible values within that range are equally possible” he is wrong as dictated by QM. QM says there are values in the interval that are not possible, or that the value has two different measures at the same time (i.e. Schrödinger’s cat)

Clyde ==> Thank you for trying to help David. Like many, he is confusing and conflating Quantum Mechanics theory with real world macro effects.

Even if QM effects were seen in 2-meter air temperature readings, the probability of those Quantum effects landing preferentially at our arbitrarily assigned whole degree values would still be infinitesimal.

And QM nature is always clued into whatever human devised scale each instrument is using — and how accurately each instrument is manufactured and calibrated!

That is at least as good a trick as noting the fall of every sparrow, probably better.

They also forgot that the human is “adjusting the data” either up or down, plus the temperature is as perceived by the human; someone 6″ taller may see it slightly differently and “adjust” it in the opposite direction.

No, Kip is correct. The uncertainty that arises due to recording data rounded to the nearest whole number is properly characterized by the uniform (or rectangular) distribution. However, uncertainty due to instrument calibration always includes both a systematic and random component. The systematic component is the difference between the reference’s stated and true values. The random component is determined by repeated comparisons between the reference and instrument measurement and is normally distributed.

So Kip’s essay is actually very generous in only looking at the +/- 0.5 half interval uncertainty. The real uncertainty would be the root of the sum of the squares of the half interval plus systematic plus random uncertainties. e.g. Assume half interval MU = 0.5, MU of reference = 0.2, MU due to random error = 0.3, then overall MU = 0.62
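The arithmetic behind the 0.62 figure is a root sum of squares. A minimal sketch using the three example values from the comment; the function name is hypothetical and the components are assumed to be independent:

```python
import math

def combined_uncertainty(*components):
    """Root sum of squares of independent uncertainty components."""
    return math.sqrt(sum(c * c for c in components))

half_interval = 0.5   # rounding to the nearest whole degree
reference_mu = 0.2    # MU of the calibration reference
random_mu = 0.3       # MU due to random error

overall = combined_uncertainty(half_interval, reference_mu, random_mu)
print(f"overall MU = {overall:.2f}")
```

The GUM converts a rectangular half interval a into a standard uncertainty of a/√3 before combining. The comment uses the half interval directly, so the sketch does the same.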

I probably should have said that we never actually know the systematic component since we never know the “true value” of calibration references. Calibration certificates report the MU of the reference which is used.

Rick, thanks for supporting the “normally distributed method” of error propagation. 🙂

I presume you agree that the anomaly cannot have a lower uncertainty than the contributing measurements.

The most egregious case of misrepresentation of facts (I know of) is the NASA/GISS claim that 2015 was 0.001 C warmer than 2014. That is a true to life example of making a silk purse out of a sow’s ear.

For NOAA’s official details, see the post:

Global Temperature Uncertainty

Background Information – FAQ: https://www.ncdc.noaa.gov/monitoring-references/faq/anomalies.php

The two citation titles mentioning uncertainties are:

Folland, C. K., and Coauthors, 2001: Global temperature change and its uncertainties since 1861. Geophys. Res. Lett., 28, 2621–2624.

Rayner, N. A, P. Brohan, D. E. Parker, C. K. Folland, J. J. Kennedy, M. Vanicek, T. J. Ansell, and S. F. B. Tett, 2006: Improved analyses of changes and uncertainties in sea surface temperature measured in situ since the mid-nineteenth century: The HadSST2 dataset. J. Climate, 19, 446–469.

See BIPM’s JCGM_100_2008_E international standard on how to express uncertainties:

GUM: Guide to the Expression of Uncertainty in Measurement

Guide to the Expression of Uncertainty in Measurement. JCGM_100_2008_E, BIPM

https://www.bipm.org/utils/common/documents/jcgm/JCGM_100_2008_E.pdf

David L Hagen ==> And they really truly believe that that represents the real uncertainty. Unfortunately, it is simply the “uncertainty” that the MEAN (a mean of means of means of medians) is close to the value given as the anomaly.

The anomaly of the mean and its uncertainty say nothing about the temperature of the past (past year, month, or whatever). They only speak for the uncertainty of the mean — the actual temperature, at the Global Average Surface Temperature level, is still uncertain to a minimum of +/- 0.5 K.

Your citations do show exactly how badly they have fooled themselves and how convinced they are that it makes sense.

They know very well that the absolute GAST (in degrees K) carries a KNOWN UNCERTAINTY of at least 0.5K. That known uncertainty does not disappear just because they choose to look at the anomaly of the GAST.

Kip,

As to how badly they are fooling themselves, I’d suggest what I have written before. A probability distribution function for all the temperatures for Earth for a year is an asymmetric curve with a long tail on the cold side. The peak of the curve is close to the calculated annual mean temperature. However, Chebyshev’s Theorem provides an estimate of the standard deviation based on the range of values. Fundamentally, any way you cut it, the standard deviation about the mean is going to be some tens of degrees, not hundredths or thousandths of a degree.

https://wattsupwiththat.com/2017/04/23/the-meaning-and-utility-of-averages-as-it-applies-to-climate/

Clyde Spencer ==> I have no doubt that you are right with “A probability distribution function for all the temperatures for Earth for a year is an asymmetric curve with a long tail on the cold side”.

If only we were dealing with something as simple as that…..a data set of the temperature of every 5 degree grid of the Earth taken accurately every ten minutes then we might be able to come up with something that might pragmatically be called the “Global Average Surface Temperature” to some functional degree of precision.

I agree that the true uncertainty surrounding GAST is far greater than +/- 0.5K — and have stated that this is the absolute minimum uncertainty…. The true total range of uncertainty is probably greater than the whole change since 1880.

The real reason that NASA and the other agencies use anomalies is to be able to extrapolate temperatures to areas where there are no temperature stations. Read below.

https://data.giss.nasa.gov/gistemp/faq/abs_temp.html

Read the following from the NASA site

“If Surface Air Temperatures cannot be measured, how are SAT maps created?

A. This can only be done with the help of computer models, the same models that are used to create the daily weather forecasts. We may start out the model with the few observed data that are available and fill in the rest with guesses (also called extrapolations) and then let the model run long enough so that the initial guesses no longer matter, but not too long in order to avoid that the inaccuracies of the model become relevant. This may be done starting from conditions from many years, so that the average (called a ‘climatology’) hopefully represents a typical map for the particular month or day of the year.”

So in the end temperature datasets like the above are computer generated with FAKE data.

Kip has correctly pointed out the junk science of dropping the uncertainty range, but the whole anomaly method was started by James Hansen in 1987; see below.

https://pubs.giss.nasa.gov/docs/1987/1987_Hansen_ha00700d.pdf

In the above paper, Hansen has admitted in his own words that he did not follow the scientific method of testing a null hypothesis when it comes to analyzing the effects of CO2. I quote his paper:

“Such global data would provide the most appropriate comparisons for global climate models and would enhance our ability to detect possible effects of global climate forcings, such as increasing atmospheric CO2.”

In that one statement he has admitted that up to then he had no evidence that CO2 affects temperature. The only indication that it might was from a US Air Force study (see below). This is true even in the face of him producing 8 prior different studies on CO2 and the atmosphere starting in 1976. It seems that somebody in the World Meteorological Organization actually beat Hansen to the alarmist podium, since Hansen references a paper (in his 1st study on CO2 in 1976) by the WMO introduced at their Stockholm conference in 1974. However, Hansen in his 1976 paper gave the 1st clue that he had already condemned CO2 and the other trace radiative gases.

https://pubs.giss.nasa.gov/docs/1976/1976_Wang_wa07100z.pd

In that study Hansen said “By studying and reaching a quantitative understanding of the evolution of planetary atmospheres we can hope to be able to predict the climatic consequences of the accelerated atmospheric evolution that man is producing on Earth.”

He had already developed a 1 dimensional radiative convective model to compute the climate sensitivity of each radiative gas by 1976. It is interesting that his model divided the solar radiation into 59 frequencies and the thermal spectrum (IR) into 49 frequencies. However, it seems that we can blame the US Air Force with their 8 researchers who came up with an actual greenhouse temperature effect in 1973. So it seems that Hansen just took their numbers and ran with them. The same numbers are probably in the code today in all the world’s climate models.

They are, quoting from Hansen’s paper above:

” CO2 doubling greenhouse effect Fixed cloud top temperature 0.79K

Fixed cloud top height 0.53K

Factor modifying concentration 1.25

This was based on the then concentration of 330ppm in 1973.

H2O Fixed cloud top temperature 1.03K

Fixed cloud top height 0.65K

Don’t forget that if you are looking at table 3 in that study where I quote the above figures, according to Hansen you have to add up all the temperatures if there are also doublings of the other trace gases. It is interesting that doubling of ozone gives negative temperature forcings -0.47K and -0.34K.

Also interesting are the methane numbers 0.4K and 0.2K.

If you add the highest doubling forcing of both CO2 and methane you get 0.79K + 0.4K = ~1.2K. That is suspiciously close to the climate sensitivity numbers given by many present-day researchers.

Alan ==> A great deal of the calculated data about climate can correctly be called “fictional data” or “fictitious data sets” — in which the data is neither measured nor observed, but depends on functions based on assumptions not in evidence. Some of those fictitious data sets are useful, some not.

… “synthetic data”

The person who beat Hansen to the alarmist podium was the Swedish scientist Bert Bolin. However, Bolin himself didn’t have any experimental proof of CO2 raising temperature. He basically took Hansen’s numbers, which as I said came from the 8 US Air Force researchers in a study done in 1973. How they came up with the forcing temperature numbers from a doubling I don’t know, because I can’t find that study, and since I am not an American I cannot file Freedom of Information requests. The names of those 8 Air Force researchers are 1) R.A. McClatchey 2) W.S. Benedict 3) S.A. Clough 4) D.E. Burch 5) R.F. Calfee 6) K. Fox 7) I.S. Rothman 8) J.S. Garing

The only reference to the study is AirForce Camb, Res. Lab. Rep. AFCRI-TR-73-0096 (1973)

That has to be the most important document in the history of mankind, seeing that the CO2 scam is the most costly scam in human history.

Alan ==> That paper is found here in .pdf format.

Can you explain how an estimate is “data”?

lee ==> An estimate is an estimate — less generously called a “guess”. Hopefully the estimate will be based on real data which has been measured or scientifically observed in some way.

Solar Changes

For context, paleo-reconstruction estimates of solar insolation show the change from the Maunder Minimum to the present to be about twice that of the Maunder Minimum to the Medieval Warm Period insolation. See:

Estimating Solar Irradiance Since 850 CE, J. L. Lean

Space Science Division, Naval Research Laboratory, Washington, DC, USA

Abstract

https://agupubs.onlinelibrary.wiley.com/doi/pdf/10.1002/2017EA000357

Add to the uncertainties discussed here the FACT that up until 2003 (ocean buoys) the ocean temperatures are simply made up. The likely error from 1850 to 1978 is about +/-3C; after 1978, with satellites, probably +/-1.5C; and with buoys after 2003, +/-0.1C.

That is for 70% of the earth.

Then there is the Arctic and Antarctic. Add in more made up numbers.

Other than a few long term, good quality Stevenson screen readings, climate science has little to work with to calculate long term GAT. See: Lansner and Pepke Pederson 2018

http://notrickszone.com/2018/03/23/uncertainty-mounts-global-temperature-data-presentation-flat-wrong-new-danish-findings-show/

Can someone tell me how you can come up with a global/land temperature index? The heat capacities of the two are hugely different.

I meant to say land/sea temperature

Mike ==> The usual response is that it is an INDEX — like the Dow Jones Stock Index — of unlike values but looking at the combined index can tell us something.

Mixing sea surface (skin) temperature with 2-meter land air temperatures is one of the extremely odd practices of CliSci.

Mike, what you ask is a big part of my counter argument against alarmists. Don’t forget the thermal capacity of the polar ice caps as well. The stored thermal energy in the ice below 0C is close to the stored thermal energy in the oceans above 0C and both are approximately 1000 times the thermal energy stored in the atmosphere above 0C. My cynical view is that global average temperature is used as the metric because the end goal is to sell this to (force this on) the population. Temperature is “intuitive”. People can be scared with a story about temperature. If thermodynamics is brought into the discussion or Joules of energy is the metric then it will not be possible to con the public – because they can’t understand it.

“As an aside: when Climate Science and meteorology present us with the Daily Average temperature from any weather station, they are not giving us what you would think of as the “average”, which in plain language refers to the arithmetic mean — rather we are given the median temperature — the number that is exactly halfway between the Daily High and the Daily Low. So, rather than finding the mean by adding the hourly temperatures and dividing by 24, we get the result of Daily High plus Daily Low divided by 2. These “Daily Averages” are then used in all subsequent calculations of weekly, monthly, seasonal, and annual averages.”

This is a major problem in climate science. It hides what is really going on by taking a false average at the very beginning and using it going forward. That number is not the average at all. The high/low can occur at different times of the day depending on the weather. For instance, on a mostly cloudy day, the high might occur when the sun peeks through the clouds. It may be the high for the day but using it and one other to compute the average is bogus. The average should be over many samples. Perhaps one sample per minute giving 1440 samples per day, each one with equal weight. Even better, keep all the samples. Storage is cheap.

Another example is when a cold front comes through at 2:00 AM, and the high for the 24-hour period (“day”) occurs in the middle of the night (at midnight!). Using that high and averaging with the low hides the fact that the day was cold.

The fact that this is how it has been done for a long time is no excuse. It is a problem, so fix it.

It was fixed. That (and other “fixes”) is why we have Global Warming.

coaldust ==> The Hi+Low/2 daily average is an historical artifact left over from when weather stations used Hi/Low recording thermometers. The His and Lows were all that they recorded, and the daily average was figured from them. In order to be able to compare modern records with older records they have continued with the same method — nutty as it is — out of necessity.

The Hi+Low/2 method does not give what your sixth grader would call the average temperature for the day. It is really the median of a two-value record.
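The difference between the two figures discussed above can be sketched in a few lines. The hourly readings below are hypothetical values invented for illustration (a mild midnight followed by a cold front, as in the example above); they are not station data.

```python
import statistics

def hi_low_average(temps):
    """Traditional 'daily average': (Daily High + Daily Low) / 2."""
    return (max(temps) + min(temps)) / 2

def arithmetic_mean(temps):
    """Mean of all samples, each with equal weight."""
    return sum(temps) / len(temps)

# Hypothetical hourly temperatures (deg F), midnight through 11 PM.
# The high occurs at midnight, before the cold front arrives.
hourly = [60, 58, 45, 40, 38, 36, 35, 35, 36, 37, 38, 39,
          40, 40, 40, 39, 38, 37, 36, 35, 34, 33, 32, 31]

hi_low = hi_low_average(hourly)       # 45.5
mean_all = arithmetic_mean(hourly)    # about 38.8

# The Hi+Low/2 figure is the median of the two-value record [High, Low].
two_value_median = statistics.median([max(hourly), min(hourly)])

print(f"Hi+Low/2: {hi_low:.1f}  mean of 24 samples: {mean_all:.1f}")
```

With these made-up numbers the Hi+Low/2 figure comes out almost 7 degrees warmer than the mean of the hourly samples, because a single midnight reading sets the high for the whole day.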

Kip,

I wasn’t sure where to put this, or whether you will see it, or even whether it’s relevant, but I live in SE Virginia. Several years ago in January (not sure which year now, but can look it up) when I first heard of the Polar vortex, we had temperatures drop over 50 °F in less than 24 hours (mild winter day, ~67 °F one afternoon to 14 °F early the next morning). If you just looked at the daily or even weekly average temperature, you would have never picked this up. The average T for the first day was, I think in the 40’s or 50’s (have calcs, but not with me). The second day was colder, but probably around 18-19°.

Phil ==> Weather is highly changeable and can be wild. Daily Averages hide more information than they reveal.

Tell us Kip, what do daily averages “hide?”

Remy ==> Such a basic question… Daily averages hide everything about the daily temperatures except the Daily Median. They even hide the Max and Min used to derive them. We no longer know whether we had a cool morning or a warm morning, an overnight freeze followed by a warm spring day, or a mild night followed by a mild day. We lose all the temperature information except that one tiny bit of information, the Median between the Max and the Min.

Thanks for asking.

(The other case is the full daily record of the temperatures as measured, say at six-minute intervals by an ASOS automatic weather station.)

No Kip, you are building a huge strawman. The “daily average” from the National Climatic Data Center/NESDIS/NOAA tells me that the figure for Sept 27th (tomorrow), where I live, is 59. None of the things you mention are “hidden,” because none of them HAVE HAPPENED YET !

…

What it does tell me is that shorts and a tee shirt might be uncomfortable for a wardrobe choice for outside activities.

That is why Kip, in Math/Stat the average (arithmetic mean) is referred to as the “Expected Value” of a random variable.

…

https://en.wikipedia.org/wiki/Expected_value

…

Emphasis on the word EXPECTED

Arrhenius gave the average surface temp of earth as 15C in 1896 and 1906 papers. Today it is no different within error (15C is 288.15K). NOAA gave earth’s temperature as 14.4C in 2011. If one is to believe NOAA’s precision, it might have actually cooled in the past 100 years or so.

R Shearer ==> “Arrhenius gave the average surface temp of earth as 15C in 1896 and 1906 papers.” and we are almost there — just a little warmer and we will be Earth-like!

So climate is not only getting worse than we could ever imagine, we also know less about it than we ever have.

“But for our purposes, let’s just consider that the anomaly is just the 30-year mean subtracted from the calculated GAST in degrees.”

I don’t know what your purposes are, but that is strawman stuff. No-one does that.

“The trick comes in where the actual calculated absolute temperature value is converted to an anomaly of means.”

Wearily, no, it is a mean of anomalies.

“Reducing the data set to a statistical product called anomaly of the mean does not inform “

Wearily, again…

“No matter what we do to temperature records, we have to deal with the fact that the actual temperatures were not recorded — we only recorded ranges within which the actual temperature occurred.”

Literally, not true. Ranges were not recorded, only the estimate. Every measurement ever made, of anything, could have that said about it. You never know the actual …. You have an estimate.

Nick ==> Thanks for checking in — sorry to weary you so.

When you wake up, you can admit to the real uncertainty in the Global Average Surface Temperature. Gavin did….

Kip,

You’ve been writing about this for a long time, so you should have got on top of the basic difference between a mean of anomalies and an anomaly of means. It matters.

Gavin was saying that an anomaly of mean temperature would, like the mean itself, have a large uncertainty. He isn’t “admitting” to anything – he’s simply explaining why neither GISS, nor anyone else sensible, calculate such a mean. A mean of anomalies does not have that error. That is a basic distinction that you never seem to get on top of.
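For readers unsure what the two terms mean in practice, here is a minimal sketch of the arithmetic. The three stations, their baselines and their readings are hypothetical, and the sketch assumes each station has its own baseline (climatology). It only illustrates how the two calculations differ when a station drops out; it does not address the measurement-uncertainty question argued in this thread.

```python
# Hypothetical stations: each has its own baseline (deg C) and two years of
# readings. The cold "mountain" station stops reporting in the second year.
stations = {
    "valley":   {"baseline": 20.0, "readings": [20.1, 20.2]},
    "mountain": {"baseline": 2.0,  "readings": [2.1, None]},
    "coast":    {"baseline": 14.0, "readings": [14.1, 14.2]},
}

def mean(values):
    return sum(values) / len(values)

def anomaly_of_mean(year):
    """Average the absolute readings first, then subtract the mean baseline."""
    readings = [s["readings"][year] for s in stations.values()
                if s["readings"][year] is not None]
    overall_baseline = mean([s["baseline"] for s in stations.values()])
    return mean(readings) - overall_baseline

def mean_of_anomalies(year):
    """Subtract each station's own baseline first, then average the results."""
    anomalies = [s["readings"][year] - s["baseline"] for s in stations.values()
                 if s["readings"][year] is not None]
    return mean(anomalies)

for year in (0, 1):
    print(year, round(anomaly_of_mean(year), 2), round(mean_of_anomalies(year), 2))
```

With all three stations reporting, both methods give 0.1. When the cold station drops out, the anomaly of the mean jumps to about 5.2 while the mean of anomalies reads 0.2, even though every reporting station warmed by only 0.1.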

“A mean of anomalies does not have that error.”

Sorry, but it DOES. !!

The mean itself has an error margin of +/- 0.5, so the anomalies can be no better.

The laws of large numbers DO NOT APPLY

This is a basic fact you never seem to comprehend.

For both of you.

An anomaly of means is a meaningless quantity on an unknown sample space. It has no error because it has no meaning without putting it into a background and in doing so you have to plot it in an error range.

Nick is correct, but it appears to me he does not know the second part: the moment you try to use that errorless number, you have to put it in an error range.

Want to try it, roll a dice 6 times and each number is supposed to come up once. So any number not turning up or any number coming up more than once is your anomaly count. Now take the mean of the anomalies and it tells you what?

Even if you were trying to work out whether a dice was loaded, to use the mean of the anomalies you now have to bring in the distribution range and the deviation you would expect, and now you get your error back. Your errorless, meaningless number, when put into a background, now has an error range.

If you want to see real scientists do it, here is the Higgs discovery in its background distribution

http://cms.web.cern.ch/sites/cms.web.cern.ch/files/styles/large/public/field/image/Fig3-MassFactSoBWeightedMass.png?itok=mrA7uJV2

“Want to try it, roll a dice 6 times and each number is supposed to come up once. ”

..

FALSE.

..

You do not have a basic understanding of probability theory. Probability theory says that if you get six ones in a row, the chances of that happening are 1 in 46656. The probability of getting each number to come up once is (6*5*4*3*2*1)/46656 = 720/46656 = 0.015432
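The two figures in the comment above can be checked with exact fractions. This is only a sketch of the counting argument, assuming a fair six-sided die and six independent rolls.

```python
from fractions import Fraction
from math import factorial

SIDES = 6
ROLLS = 6

# Number of equally likely ordered outcomes for six rolls of a fair die.
total_outcomes = SIDES ** ROLLS                      # 46656

# Exactly one outcome is "six ones in a row".
p_six_ones = Fraction(1, total_outcomes)

# Each face appearing exactly once: 6! orderings out of 46656 outcomes.
p_each_once = Fraction(factorial(SIDES), total_outcomes)

print(total_outcomes, p_six_ones, p_each_once, round(float(p_each_once), 6))
```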

Yes, and that is the point: you need to bring in the distribution, and to prove the dice is loaded you would very quickly establish that you have to roll the dice a lot more than 6 times.

I guess for you, David, I can reverse the question: how do I record the mean of anomalies of a range of rolls, and what is it relative to?

This is where you lose it LdB: “So any number not turning up or any number coming up more than once is your anomaly count.”

…

That happens with a probability of 0.984568, so in 2000 rolls, your anomaly count would be about 1969. Taking a mean of this number over a bunch of tries doesn’t tell you anything. You are not using the correct procedure to detect a loaded die.
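The expected count above can be reproduced as follows, reading "2000 rolls" as 2000 tries of six rolls each (an assumption about the commenter's meaning). The comment does not say what the correct procedure for detecting a loaded die would be; the chi-square goodness-of-fit test below is one standard choice, swapped in here for illustration. The face counts are hypothetical.

```python
from fractions import Fraction

# Probability that six rolls do NOT show each face exactly once.
p_anomalous = 1 - Fraction(720, 46656)
expected_anomalous = float(2000 * p_anomalous)     # about 1969.1

def chi_square_stat(counts):
    """Sum of (observed - expected)^2 / expected, assuming a fair die."""
    expected = sum(counts) / len(counts)
    return sum((observed - expected) ** 2 / expected for observed in counts)

# Critical value of chi-square with 5 degrees of freedom at the 5% level.
CRITICAL_5DF_5PCT = 11.070

def looks_loaded(counts):
    return chi_square_stat(counts) > CRITICAL_5DF_5PCT

fair_counts = [100, 100, 100, 100, 100, 100]       # hypothetical 600 rolls
skewed_counts = [80, 90, 95, 100, 105, 130]        # hypothetical 600 rolls

print(round(expected_anomalous))
print(chi_square_stat(fair_counts), looks_loaded(fair_counts))
print(chi_square_stat(skewed_counts), looks_loaded(skewed_counts))
```

Unlike the anomaly count, this test works from the counts of each face over many rolls and compares their spread with what a fair die would be expected to produce.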

The topic at hand is not about any of that .. can we stick to the subject? This is just junk discussion about the bleeding obvious 🙂

So getting this back on track .. if anyone wishes to pick it up

So if we wish to talk about means of anomalies, we must first define what our definition of anomaly is. The only way to define an anomaly is by reference.