Guest Essay by Kip Hansen

There has been a lot of news recently about exoplanets. An extrasolar planet is a planet outside our solar system. The Wiki article has a list of exoplanets. I only mention exoplanets because there is a set of criteria specifying what could turn out to be an “Earth-like planet”, of interest to space scientists, I suppose, as such planets might harbor “life-as-we-know-it” and/or be potential colonization targets.

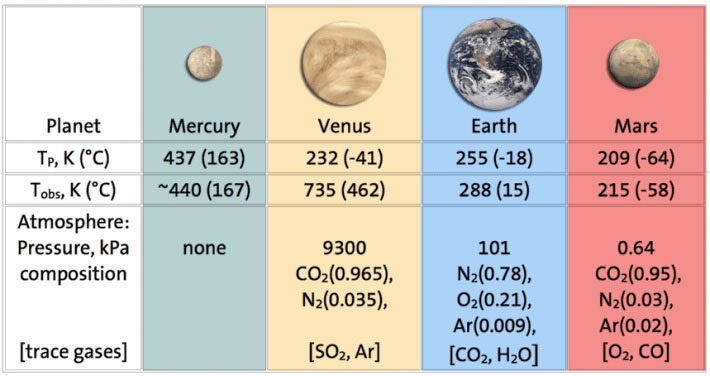

One of those specifications for an Earth-like planet is an appropriate average surface temperature, usually said to be 15 °C. In fact, our planet, Sol 3 or simply Earth, is very close to qualifying as Earth-like as far as surface temperature goes. Here’s that featured image full-sized:

This chart, from the Chemical Society, shows that Earth’s observed average temperature should be about 15°C. Note that our atmosphere contains mostly Nitrogen (78%), Oxygen (21%) and Argon (0.9%), which together make up 99.9% of the total, leaving about one-tenth of one percent for the trace gases (water, H2O, and CO2).

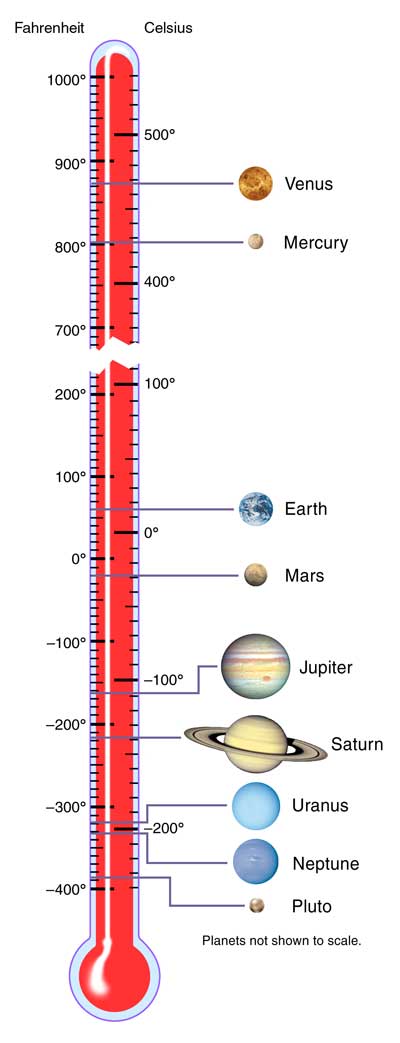

Let’s look at the thermometer:

We see the temperatures believed to exist on the surfaces of the eight planets and Pluto (poor Pluto…).

The ideal Earth is right there at 15°C or 59°F.

Mercury and Venus are up at the top, one due to proximity to the Sun and the other due to a crushingly dense atmosphere, well out of range for Earth-like planets.

Mars is down below the freezing temperature of water, due to its distance from the Sun and, mostly, its lack of atmosphere. It comes in at 70°F (20°C) near the equator, but at night plummets to about minus 100°F (minus 73°C), with an estimated average of about -28°C. The average is a little low, but mankind lives on Earth in places with a similar temperature range, at least on an annual basis, so with adequate shelter and clothing (modified for lack of breathable atmosphere), it might do.

The other four planets and Pluto (poor Pluto) don’t have a chance of being Earth-like.

This next thermometer shows that Earth provides a temperature range suitable for human and Earth-type life, ranging from 56.7°C (134°F) at the high end down to -89.2°C (-128.6°F), with an average of 15°C (59°F).
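For readers who want to check the paired Celsius and Fahrenheit figures quoted here, a minimal sketch of the standard conversion follows. The function name is mine, chosen only for illustration.

```python
def c_to_f(celsius):
    """Convert a temperature in degrees Celsius to degrees Fahrenheit."""
    return celsius * 9.0 / 5.0 + 32.0

# Figures quoted in the essay: the record high, record low, and average.
for label, celsius in [("high", 56.7), ("low", -89.2), ("average", 15.0)]:
    print(f"{label}: {celsius}°C = {c_to_f(celsius):.1f}°F")
```

The quoted Fahrenheit values are rounded, so expect agreement to within a tenth of a degree or so rather than to the last digit.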

Like Mars, Earth has a comfortable average that falls easily in what most people would consider to be a comfortable range, avoiding extremes, if properly dressed for the weather. For me, a southern California surfer boy by birth, 59°F (15°C) is sweater weather – or more properly, Pendleton wool shirt weather. 59°F (15°C) is the average Fall/Winter temperature of the surf at Malibu and most of us required wetsuits that would keep us warm in the water.

Taking a closer peep at the middle of our little graphic, we see that the IDEAL Earth-like planet would have an average surface temperature of 15°C. But, in the 1880s through 1910, we were running a bit cool — 13.7°C. Luckily, after the mid-century point of 1950, we started to warm up a little and got all the way up to 14°C, just 1°C short of the ideal.

So, how have we done since then?

There is good news. Since the middle of the last century, when Earth was running a little cool compared to the ideal Earth-like temperature expected of it, we have made some gains.

By 2014, Earth had warmed up to an almost-there 14.55°C (with an uncertainty of +/- 0.5°C).

With the uncertainty in mind, we can see how close we came to the target of 15°C. The uncertainty bracket on the left for 2014 almost reaches 15°C.

2016 was a banner year, at 14.85°C and could have been, uncertainty taken in, a tiny bit over 15°C!

The numbers used in this image come from NASA GISS’s Director (and co-founder of the private climate blog that he and his pals work on while being paid by the government with your taxes) Gavin Schmidt. They are from his blog post in August 2017 — and, as always, have already been adjusted to be a bit higher. The current, adjusted-higher, numbers show 2017 0.1°C lower than 2016, which is what I have used.
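The Celsius figures above line up with the Kelvin values Dr. Schmidt gives in that post (quoted further below) if one subtracts 273.15. Here is a minimal sketch of that check, assuming that simple offset is how the Celsius numbers were obtained, which the text does not state explicitly. The names are illustrative.

```python
KELVIN_OFFSET = 273.15  # 0°C expressed in kelvin

# Absolute annual estimates quoted from the blog post, in kelvin.
schmidt_kelvin = {2014: 287.7, 2015: 287.8, 2016: 288.0}

def kelvin_to_celsius(kelvin):
    """Convert kelvin to degrees Celsius, rounded to two decimals."""
    return round(kelvin - KELVIN_OFFSET, 2)

for year, kelvin in schmidt_kelvin.items():
    print(year, kelvin_to_celsius(kelvin), "°C, +/- 0.5")
```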

That RealClimate blog post is quite a wonderful thing. It reveals to us several things, some of which I have written about in the past, which is the part I quote under the image. Dr. Schmidt kindly informs us about one of the miracles of modern climate science. This miracle involves taking data that has rather wide uncertainty (a full degree Centigrade wide, being plus 0.5°C or minus 0.5°C) and turning it into accurate and precise data with almost no uncertainty at all!

Dr. Schmidt explained to us why GISS uses “anomalies” instead of “absolute global mean temperature” (in degrees) in the blog post (repeating the link):

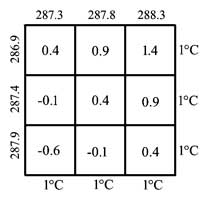

“But think about what happens when we try and estimate the absolute global mean temperature for, say, 2016. The climatology for 1981-2010 is 287.4±0.5K, and the anomaly for 2016 is (from GISTEMP w.r.t. that baseline) 0.56±0.05ºC. So our estimate for the absolute value is (using the first rule shown above) is 287.96±0.502K, and then using the second, that reduces to 288.0±0.5K. The same approach for 2015 gives 287.8±0.5K, and for 2014 it is 287.7±0.5K. All of which appear to be the same within the uncertainty. Thus we lose the ability to judge which year was the warmest if we only look at the absolute numbers.”
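The arithmetic in that quotation can be reproduced in a few lines. This sketch assumes that the “first rule” adds independent uncertainties in quadrature (square root of the sum of squares) and that the “second” rounds the result to the precision of its uncertainty. The rules themselves appear only in the image, so treat both as my reading. The function name is illustrative.

```python
import math

def add_with_uncertainty(a, sigma_a, b, sigma_b):
    """Sum two values, combining independent uncertainties in quadrature."""
    return a + b, math.sqrt(sigma_a ** 2 + sigma_b ** 2)

# 1981-2010 climatology (K) plus the 2016 anomaly, as quoted above.
total, sigma = add_with_uncertainty(287.4, 0.5, 0.56, 0.05)
print(f"{total:.2f} +/- {sigma:.3f} K")   # before rounding
print(f"{total:.1f} +/- {sigma:.1f} K")   # rounded to one decimal
```

Under that reading the sketch gives 287.96 +/- 0.502 K, then 288.0 +/- 0.5 K, matching the quoted figures. The 0.5 K term dominates the sum of squares, so the small anomaly uncertainty barely changes the total.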

So, by changing the annual temperatures to “anomalies” they get rid of that nasty uncertainty and produce near certainty!

Source: https://data.giss.nasa.gov/gistemp/graphs_v3/ annotated-kh

Dr. Schmidt and the ClimateTeam have managed to take very uncertain data, so uncertain that the last four years of Global Average Surface Temperature data, when straightforwardly presented as degrees Centigrade with their proper +/- 0.5°C uncertainty, cannot be distinguished from one another. Through the miracle of “anomalization”, these data have been turned into a new, improved sort of data, an anomaly, which is so precise that they don’t even bother to mention the uncertainty, except to add (at least on the above graph) a single uncertainty bar for the modern data which is 0.1°C wide, or in the language used in science, +/- 0.05°C. The uncertainty in the Global Average Surface Temperature has magically become a whole order of magnitude smaller…. And all that without a single new measurement being made.

The miracle is accomplished by the marvel of subtraction! That is, one simply has to take the current temperature in degrees, which has an uncertainty of +/- 0.5°C, and subtract from that the climatic-term mean (currently 1981-2010) and “voila”: the anomaly, with a wee tiny uncertainty only 0.1°C wide (+/- 0.05°C).

Let’s see if that really works:

Here’s the grid of all the possibilities with a range of +/- 0.5°C for the 2015 temperature average in absolute degrees, and the 1981-2010 climatic mean in degrees, with the same +/- 0.5°C uncertainty range, both of which are given by Dr. Schmidt in his blog post. One still gets the +/- 0.5°C or 1°C wide uncertainty range. It did not magically reduce to a range one-tenth of that — it didn’t turn out to be 0.1°C wide as shown in Dr. Schmidt’s graph. I’m pretty sure of my arithmetic, so what happened?
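A grid like this can be generated mechanically. The sketch below steps both the 2015 value (287.8 K) and the 1981-2010 mean (287.4 K) across their +/- 0.5 ranges in 0.1 steps and collects every difference. It treats each stated range as hard limits and every pairing as possible, which is an assumption of this sketch. How the grid in the image pairs its values is not spelled out in the text, so the numbers printed here need not match it.

```python
# Work in tenths of a kelvin so the grid arithmetic is exact.
YEAR_2015 = 2878      # 287.8 K, from the blog post
BASELINE = 2874       # 287.4 K, the 1981-2010 climatology
HALF_RANGE = 5        # +/- 0.5 K on each value

offsets = range(-HALF_RANGE, HALF_RANGE + 1)
differences = [
    (YEAR_2015 + dy) - (BASELINE + db)
    for dy in offsets
    for db in offsets
]

low, high = min(differences) / 10, max(differences) / 10
print(f"{len(differences)} combinations, anomaly from {low} to {high} K")
print(f"width of spread: {high - low:.1f} K")
```

Under these assumptions the spread of possible differences is at least as wide as the 1°C range of either input.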

How does GISS justify the new-improved wee-tiny uncertainty? Ah, they use statistics! They ignore the actual uncertainty in the data itself, and shift to using “the 95% uncertainties on the estimate of the mean.” Truthfully, they fudge on that a little bit as well, which you can see in their original data. [In their monthly figures, the statistical uncertainty (+/- 2 Standard Deviations) is a bit wider than the illustrated “0.1°C”.]

So rather than use the actual original measurement uncertainty, they use subtraction to find the difference from the climatic mean and then pretend that this allows the uncertainty to be reduced to the statistical construct of the “uncertainties” of the mean — standard deviations.

This is a fine example of what Charles Manski is talking about in his new paper:

“The Lure of Incredible Certitude”, a paper recently highlighted at Judith Curry’s Climate Etc. While Dr. Curry accepts Manski’s compliments paid to climate science, based on his perception that “Published articles on climate science often make considerable effort to quantify uncertainty”, we see here the purposeful obfuscation of the real uncertainty of Global Average Surface Temperature annual data, replacing the admitted wide uncertainty ranges with the narrow “uncertainties on the estimate of the mean”.

Graphically, it looks like this:

Although I was a semi-professional magician in my youth, I have nothing that compares to the magic trick shown above — a totally scientifically spurious transformation of Uncertain Data into Certain Anomalies, reducing the uncertainty of annual Global Average Surface Temperatures by a whole order of magnitude — using only subtraction and a statistical definition. Note that the data and its original uncertainty are not affected by this magical transformation at all — like all stage magic, it’s just a trick.

A trick to fulfill the need of the science field we call Climate Science to hide the uncertainty in global temperatures — an act of “disregard of uncertainty when reporting research findings” that will “harm formation of public policy.” (quotes from Manski).

The true answer to “Why does Climate Science report temperature anomalies instead of just showing us the temperature graphs in absolute degrees?” is exactly as Gavin Schmidt has stated: if Climate Science used absolute numbers, the annual temperatures would be values “All of which appear to be the same within the uncertainty. Thus we lose the ability to judge which year was the warmest if we only look at the absolute numbers.”

Thus, they use anomalies and pretend that the uncertainty has been reduced.

It is nothing other than a pretense. It is a trick to cover up known large uncertainty.

# # # # #

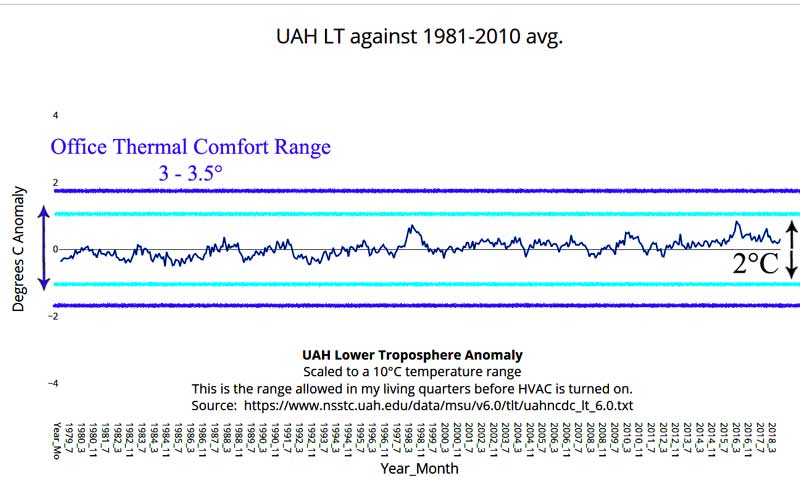

While I was preparing this essay, I thought to attempt to illustrate the true magnitude of the recent warming (since 1880) in a way that would satisfy the requirements of a Climatically Important Difference. Below we see the UAH Lower Troposphere temperatures (even these as errorless anomalies) graphed at a scale of temperatures allowed in my personal living quarters before our family resorts to either heating or cooling for comfort — about 8°C (15 °F — as in 79°F down to 64°F). Overlaid in light blue is a 2°C range, into which the entire most recent satellite record fits comfortably and in purple, the prescribed 3-to-3.5°C comfort range from the Canadian Centre for Occupational Health & Safety for an office setting. If the office temperature varies more than this, then the HVAC system is meant to correct it by heating or cooling. As we can see, the Global Average lower troposphere is very well regulated by this standard.

An interesting aside is that the Canadian COHS allows an extra 0.5°C in the winter, increasing the comfort range to 3.5°C, accounting for the differences in perception of temperature during the colder months.

# # # # #

Author’s Comment Policy:

Hope you enjoyed this rather light reading.

I am not so convinced by the hopeful thinking of astronomers regarding exoplanets. I believe they are out there (the planets, not the astronomers), and for the record I believe there are other intelligent beings out there as well, I just have serious doubts that our rather primitive instruments can identify them at such great distances.

For you budding scientists out there, Gavin Schmidt has given you a useful tool for turning your sloppy uncertain data into highly certain data using nothing more complicated than subtraction and a dictionary. Good luck to you!

Feel free to leave your interpretations of what the GISS Temp global graph in absolute temperatures (degrees) really tells us based on the two uncertainty ranges shown.

Oh, and against all odds, some things are better than we thought. The Earth, if we allow her to warm up just a tiny bit more, will finally be at the expected, ideal temperature for an Earth-like planet. Who could ask for more?

# # # # #

Good comments Kip. The people who want to get rid of that nasty uncertainty probably think denial is a river in Egypt. Another characteristic for an earth-like exoplanet would be a magnetosphere, wherein the gases that form an atmosphere are protected from solar winds.

Ron ==> Magnetosphere….Mars? It was my understanding that Mars is thought to have once had a robust atmosphere but has lost it for unknown reasons.

The books that I’ve read say that Mars lost its atmosphere when its magnetosphere became weak enough that it could no longer protect the atmosphere from the solar wind.

Exactly. By the way our magnetic field strength is weakening substantially, but it may be a lead-in to a reversal and not something more alarming.

The Earth’s magnetic field has been reversing about every 100K to 120K years for at least as long as the Atlantic has been growing.

The current reversal is nothing unusual.

Mars is slowly losing its atmosphere even today, as H (from H2O disassociation), N2, He, Ne, and Ar are not fully bound.

And Venus has lost its H2O from disassociation and H escape.

Also, Mars does not have sufficient mass to create enough gravity to hold these gases in its atmosphere, hence most of what is left is CO2 – a ‘heavier’ gas (higher molecular weight). Mars was never going to hold its atmosphere long term because of this.

Why hasn’t the Sun stripped the atmosphere from Venus?

Tom,

Good question.

Venus doesn’t presently have an internally-generated magnetosphere, for whatever reasons. It does however sport an external magnetosphere, thanks (ironically?) to the solar wind.

Venus’ small magnetic field is created by interaction of its ionosphere with the solar wind. This weak field differs from the common intrinsic magnetic fields (generated by planetary cores).

It’s possible that Venus has an intrinsic field, but has been in a polarity reversal since its magnetism has been observed. But probably it simply lacks a core-generated magnetosphere.

Whether the small field generated in its ionosphere is sufficient to protect its atmosphere from the solar wind, I don’t know.

Thanks for that, John. It was also my understanding that the Sun’s magnetic field was possibly involved in protecting the atmosphere of Venus. I haven’t heard about any other theories to explain it. That was the reason for my question, to see if there were other theories out there.

our atmosphere contains mostly Nitrogen (78%), Oxygen (21%) and Argon (0.9%) which makes up 99.9% of the total — leaving about one-tenth of one percent for the trace gases water (H2O and CO2).

I think this is wrong. Water vapour makes (ON AVERAGE) 1% of our atmosphere (this was the case last time I checked). The other percentages add up to 99.9% because those values are their concentrations IN ABSENCE OF water vapour. They are generally very well mixed, which is totally not the case for water vapour, that’s why the water vapour concentration is provided separately.

I think the average water vapor is between 0.4 and 0.5%. CO2 0.04%.

That is mass %

Mass yes, but volume, roughly 1% (H2O is quite light compared to O2, N2 and Ar). In the end it is the volume that tells you about the number of molecules, which is also what matters for the GH effect.
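The mass-versus-volume point can be checked with a short conversion. For an ideal-gas mixture the volume fraction equals the mole fraction, so this sketch converts a mass fraction of water vapour into a mole fraction in a simple two-component mix of water vapour and dry air. The molar masses are standard approximate values, and the 0.4 to 0.5% mass figures are the ones suggested in the comment above, not measurements.

```python
M_WATER = 18.015    # g/mol, H2O
M_DRY_AIR = 28.97   # g/mol, approximate mean for dry air

def mass_to_mole_fraction(mass_fraction, molar_mass=M_WATER):
    """Mole (volume) fraction of a gas given its mass fraction in air."""
    moles_gas = mass_fraction / molar_mass
    moles_air = (1.0 - mass_fraction) / M_DRY_AIR
    return moles_gas / (moles_gas + moles_air)

for mass_percent in (0.4, 0.5):
    mole_percent = 100 * mass_to_mole_fraction(mass_percent / 100)
    print(f"{mass_percent}% by mass is about {mole_percent:.2f}% by volume")
```

On these inputs the volume figure comes out larger than the mass figure, because a water molecule is lighter than the average air molecule. How close it gets to 1% depends on the mass fraction one starts from.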

Nylo & Henry ==> I am not sure what the Chemical Society used as their standard for “%”…mass or volume. I think it is only meant to be “illustrative”, not strictly quantitative.

Kip

Either way

Mass/mass

or

Vols/vols

your Society is wrong?

Important to note is that mass / mass, H2O is 10 x higher than CO2.

That makes nuclear not more beneficial than e.g. burning gas (since it produces a lot more water vapor) in respect of GH gases

I.e if you believe there is some man made warming caused by GH gases.

henry ==> Nuclear cooling water (steam) that goes up the stack can easily be recaptured as clean fresh water, and if salt water is used for cooling, the plant becomes a desal plant as well.

yes, Kip,

one of the reasons I dislike nuclear is that the one plant here in the Cape (South Africa) killed all the fish in the surrounding ocean where the warmer water is being dumped. If one plant can do so much damage to the [local] climate, we do not want to build any more plants?

Warmer ocean/river water ultimately leads to more H2O (g) in the atmosphere?

It is simple arithmetic?

Not that I believe there even is a GH effect but if there were, then thinking that nuclear is the solution seems to me like not such a bright idea.

Since the Cape here has such a shortage of water I am certainly interested to hear your plan in changing this warmer water to de-ionized / distilled water. If it were that easy I am sure the powers that be here would be making a plan?

“one of the reasons I dislike nuclear is that the one plant here in the Cape (South Africa) killed all the fish in the surrounding ocean where the warmer water is being dumped. ”

I have no doubt that the local enviro’s blamed the power plant, but I doubt that is what happened.

BTW, pretty much all power plants require cooling, the amount needed for nuclear is the same that’s needed for an equally sized fossil fuel plant.

MarkW

No, I will disagree with you there. Because of their more conservative departure-from-nucleate-boiling criteria, most nuclear plants do not use superheated steam in their secondary cycle process through the turbines, so an equally-sized (electric delivery) nuclear plant will discharge slightly hotter water into its cooling tower, cooling ponds, cooling lake, or through-pass river. But the difference in heat energy is easily calculated, is prevented by extra cooling towers or lower heat output in very hot weather (depending on the locally approved mitigation process), and is much smaller in any case than “life threatening” levels, so there is great doubt that the story spread by the enviros is correct.

Thx. To stop a nuclear reaction needs a lot of cooling water. Just to switch off the gas needs how much cooling water?

HenryP

Sort of. To “stop” a nuclear reactor only requires that the control rods be inserted. The reaction stops, and the reactor (and steam generators and turbines and pipes and condensers) begins cooling down, because the primary heat source is, indeed, shut down. A reactor does continue to generate decay heat from the core (which begins around 7% of the previous power level), which then goes down exponentially with time. This is the decay heat that must continually be removed after shutdown. But 7%-3%-1.5%-0.75% are small amounts compared with the cooling water flow needed at 100% power.

Now, in sharp contrast, a gas turbine combined cycle plant (3 x 250 Megawatt for example) is very different. The two gas turbines generate 500 Meg's of power with almost 0.0 cooling water: they want their blades and burners running as hot as possible, only the lube oil and secondary air must be cooled a little bit. So, on shutdown and while running, there is almost no cooling water needed at all. The tertiary steam generator runs on the steam generated from the waste heat from the two GT's, so it must reject all of its waste energy to the cooling water-condenser water just like any other steam plant. But only 250 Meg of cooling water is needed from a 750 Meg GT+GT+ST combined cycle plant. The actual numbers are a bit more complex to calculate, but I hope you get the point.

Thx for the explanation. But I can see the results [of more nuclear]. Some have reported an increase in growth both in size and numbers of the fish around the plant when it is using river water as cooling water….

Well, yes, henryp – I believe that there IS a shortage of fish around The Cape. But maybe all of the uncontrolled (mainly Korean) fishing offshore, and the poaching inshore, just MIGHT have some effect on the populations?

No. A lot of fish cannot stand the warmer water.

Exoplanets… fantastic

And on current technology at least 40,000 years away.

We have plenty of time. We still have to finish the search for intelligent life on this planet… /Sarc

“We still have to finish the search for intelligent life on this planet.”

News flash . . . we found it, but it is dying off rapidly.

fretslider ==> Yeah, I don’t think we’re dropping in on any exoplanets for tea this afternoon…..

If we figure out fusion power sometime soon, it may lead to a drive source for interplanetary exploration. Interstellar will take a new understanding of basic physics…

This is a very interesting article and demonstrates the miss use of statistics. But the problems are even deeper rooted since it is absurd to claim that there is GLOBAL data going back to the 19th century. Even in the mid 1950s, the Southern Hemisphere data, at any rate that south of the tropics, is largely made up as Phil Jones so candidly noted in the Climategate emails. In truth, we only have worthwhile data covering the Northern Hemisphere.

Then the data has been so heavily massaged, with adjustments exceeding 1 degC, as to render it worthless for scientific scrutiny and study. An example is that a couple of months back Willis reviewed the BEST data set to see whether the 20 largest volcano eruptions could be found in the data sets. Despite the scientific consensus that volcanoes have a material impact on temperatures. I suggested that the reason one could discern the impact of the largest 20 volcanic eruptions on temperature was due to the adjustments/homogenisation of the data rendering it worthless.

At the moment Tony Heller is running an article on “Close enough for Government work” It is well worth reading this since it is right on point:

https://realclimatescience.com/2018/09/close-enough-for-government-work/

The National Climate Assessment (https://science2017.globalchange.gov/chapter/6/) has the below graph, which shows how much hotter the US used to be.

I will set out a couple more of his plots (but read the article for more detail).

And to show the impact of adjustments:

The truth of the matter is that we have no idea as to the temperature of the planet, such that all we can say is that it has warmed since the depth of the LIA and that there are large amounts of multidecadal variation, but we do not know whether the planet today is any warmer than it was in the 1940s, or for that matter the 1980s.

Even claiming we have useful information for the Northern Hemisphere is a bit much.

It’s more like we have useful information for the Eastern US and most of Northern Europe.

It gets pretty spotty outside those regions.

Richard ==> Thanks for the link to Heller’s essay. The whole CliSci field is polluted with “hypothesis confirming” statistical manipulations of messy data sets.

Using “cute tricks” as proofs….

“…the miss use of statistics…”

I thought that was more on the lines of 39-24-36.

[The mods point out that example may be a miss using statistics, and mrs’ing her target. .mod] …

>>

I suggested that the reason one could discern the impact . . . .

<<

I think Mr. Verney meant “could not discern.” His statement would then make more sense in context. I agree with the points he’s making.

Jim

Thermometers aren’t scary enough.

That said, there is a scientific basis for using anomalies rather than absolute temperature values. It’s the best way to evaluate tiny variations in a highly variable time series, with a baseline 15 to 30 times the size of the anomalies. It’s similar to the rationale for logarithmic scales.

However, the Climatariat clearly do it to make a minuscule number look very big. If geology was done by the Climatariat, no photos would ever include a lens cap for scale… 😎

David ==> Oh yes, anomalies are quite proper where they are used honestly to discover something about a deep highly variable data set….what they DON’T DO is get rid of the original measurement uncertainty. Used to disguise uncertainty, it is just yet another way of scientists fooling themselves….

David ==> I did a series of digital photos of flowers in the Caribbean….I took at least two shots of each subject — one macro of the flower and one that included my left thumb for a size reference.

http://groups.colgate.edu/geologicalsociety/Features/Geology%20Jokes.htm

😉

I got 5 out of 10. So am I half a geologist?

OMG – guilty as charged and I left geology related employment decades ago. Amateur geology highlights include having to hand over a carefully packed box of rock samples to the Irish army at a roadblock near the Northern Ireland border (they thought the heavy box could contain armaments) and, much more recently, being prevented from taking a cracking layered igneous pebble (destined for inclusion in my garden wall) in my hand luggage on a flight from Jersey as the officers believed I could use it as a weapon on board.

To make it even less scary, convert it to Kelvin and include zero in the scale.

I had desired to post something much more detailed but if one sets out a number of citations then the comment is chucked into moderation.

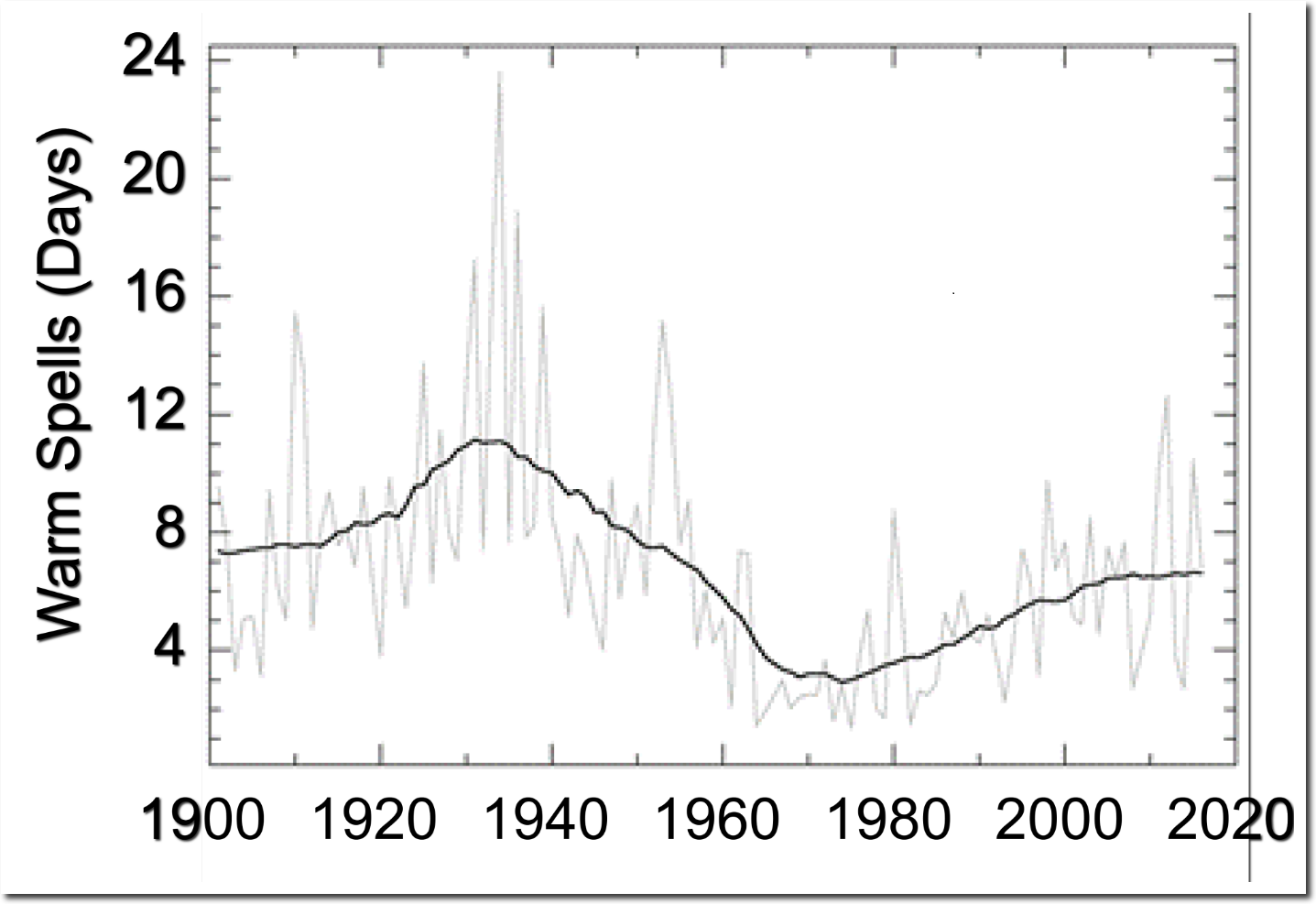

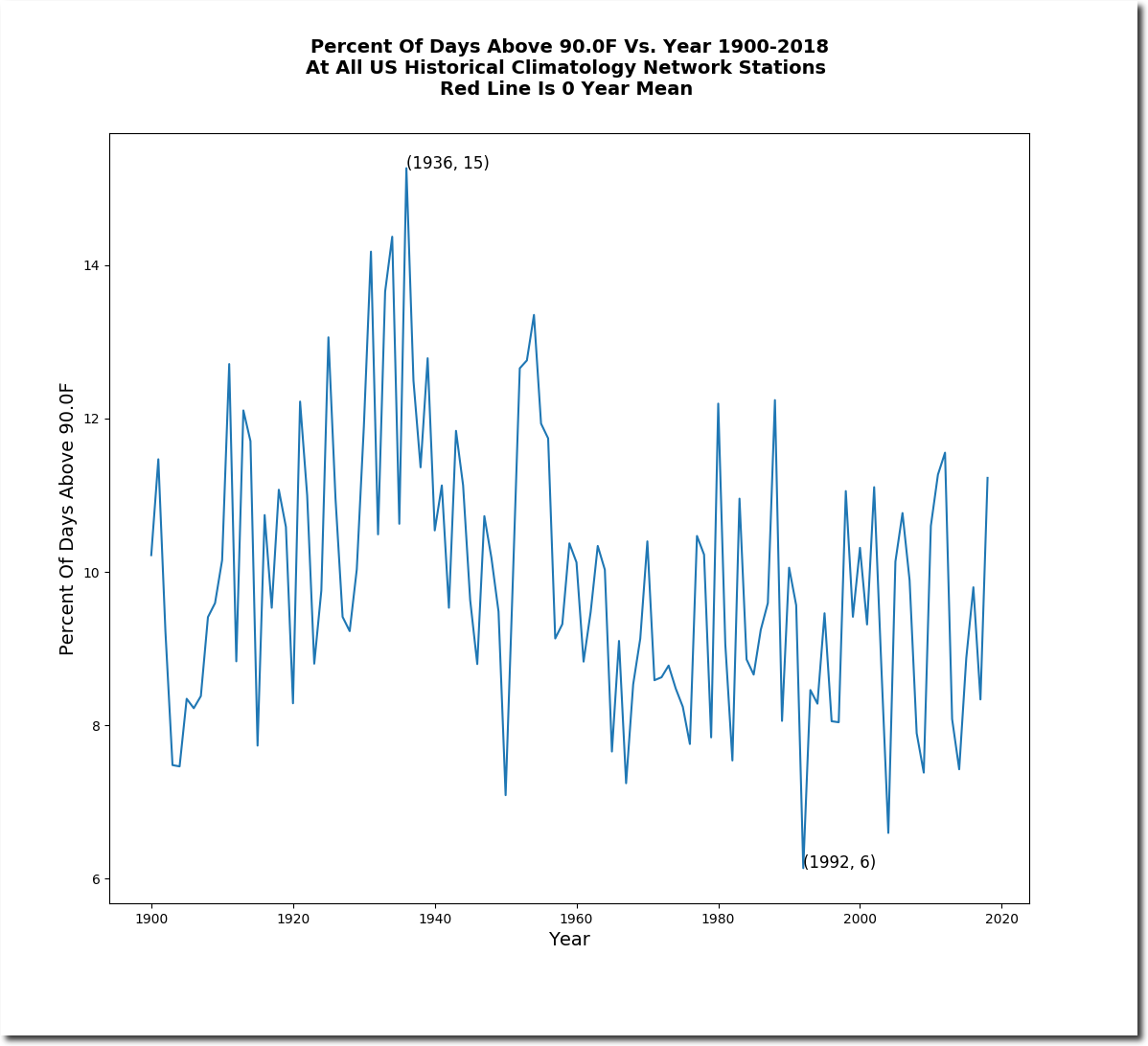

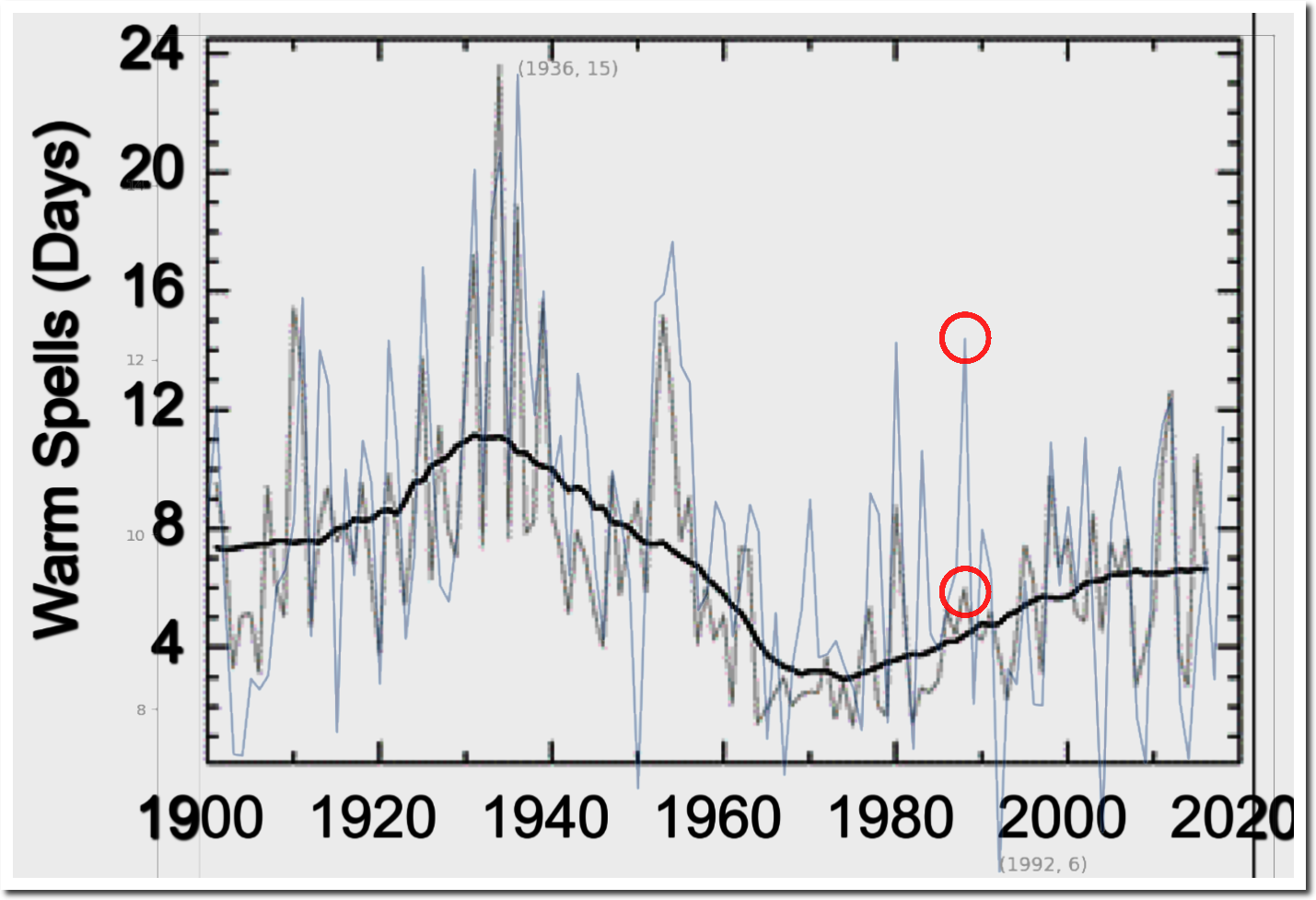

It is well worth having a look at the recent National Climate Assessment Report, and in particular Chapter 6.1.2 Temperature Extremes. I will set out some of their plots which demonstrate the misuse of statistics.

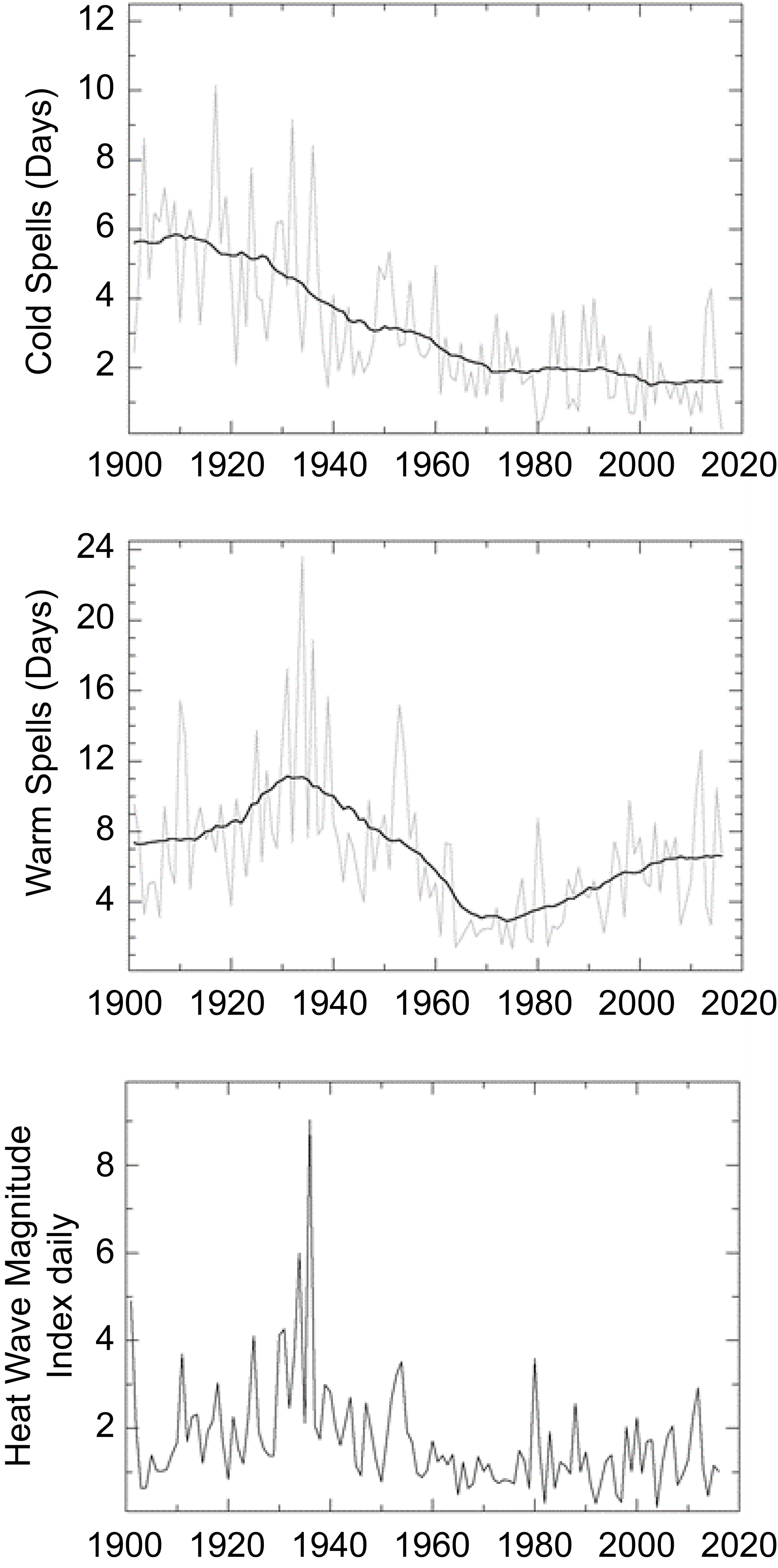

See figure 6.4:

Note the large number of warm days and hot spells in the 1930s, and also the large number of cold days in the 1930s.

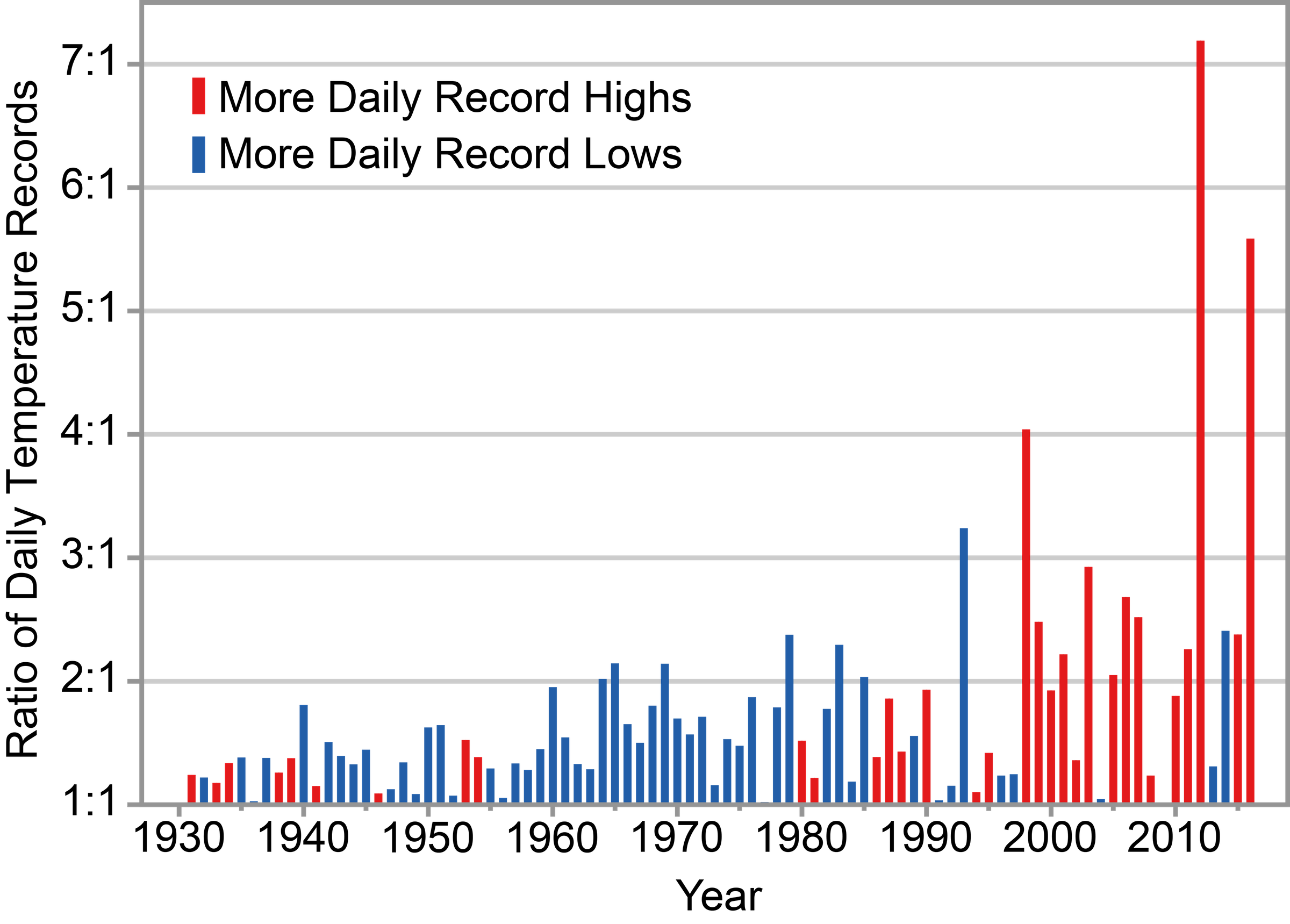

Now look at how they combine this data (in figure 6.5) to show the number of extremes to give the impression that extremes were very minimal in the 1930s and the climate is far more extreme today:

Tony Heller has done a very good video discussing the extremes of 1936.

Richard ==> Yes, propaganda is the art of showing the people only what you want them to see about a topic. If I could teach a Critical Thinking class at Uni, I would include my essay on What Are They Really Counting?.

You show Hot Days, Colds Days and they show “Ratio of Daily Temperature Records” — as if that were a measure of whether or not the country is warming.

Thanks, Kip.

You made my day.

John ==> You are welcome…like everyone, I need a little back patting once in a while….

I don’t wish to put words into Gavin’s mouth but I’m pretty certain that he’d say that if the uncertainty was wider, it’d mean that things could be worse than we thought. He does, after all, like to look on the gloomy side.

Good work Kip, I enjoy your writing particularly.

I’ve long been struck by the fact that the reason given in Meteorology for the use of anomalies is that they provide more useful information about a particular place – against the background of its local climate – than absolute temperature, which does not indicate that information directly.

That this “usefulness” now extends to the globe* has always seemed a rather anomalous usage to me! 😉

*The use of local anomalies from vastly different climates to calculate a single global temperature.

Scott ==> Thanks … Anomalies don’t provide more useful information…a properly scaled graph, maybe with a bit of smoothing, shows the data, and if informed with the true uncertainty, lets us see what is going on with that metric. If the data has 1°C wide uncertainty, then the public MUST be shown that, and it must be explained so they understand what the uncertainty means in practical terms.

Hiding uncertainty is a Bad Thing — it is Bad for everyone.

Kip Hansen, replying to Scott Bennett (Adding Joe Bastardi to the conversation search)

No, I will politely disagree with you there.

Anomalies can be very, very useful. But the entire calculation of the anomaly MUST BE considered, the purpose of using the anomaly (instead of the actual temperature itself, and the temperature error analysis and its std deviation) and the anomaly’s error analysis and its std deviation.

This generalization will be true for all anomalies, not just temperature – but the CAGW climate community has seized on the difference of a Single Temperature Anomaly from what they have chosen as the “Global Average Temperature” (for a flat plate earth irradiating a uniform average atmosphere in a constant orbit around a constant average sun), so that classroom environment is their world. So let’s use the Global Average Temperature as they do. More accurately, the Global Average Temperature Anomaly (difference).

If all of the world’s surface (air) temperatures were accurately known for each hour of each day of the year.

If those surface temperatures were accurately recorded for a sufficient number of years so each hour’s “weather” could be averaged for a sufficient number of seasons.

If those seasonal average daily temperatures did accurately reflect the local climate (not local ever-changing urban-suburban-asphalt-concrete-farming-forests-fields-brush and woodlands and deserts and beach conditions and ocean and sea conditions).

Then you could average together sufficient local hourly records to calculate that thermometer’s average daily (seasonal) changing temperature.

Then you could subtract the hourly measured temperature from the long-term seasonal hourly average and claim you have generated one anomaly. For one place, for one location of that specific thermometer – assuming (as above) that the local environment around the thermometer has not affected your recent temperature measurements.

Given enough accurate hourly temperature anomalies from enough locations worldwide, theoretically you now have a global average temperature ANOMALY. (Not global average temperature for that hour, but the theoretical hourly temperature difference from your assumed local standard hourly temperature. )
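The steps just listed can be sketched in code. The station names and temperatures below are invented purely for illustration, each station's baseline is simply the mean of its own made-up history, and the final step is a plain unweighted mean rather than any agency's actual gridding or area weighting. The anomaly is taken as measurement minus baseline, the usual sign convention.

```python
from statistics import mean

# Hypothetical long-term records (°C) for one fixed hour and season.
history = {
    "valley_station": [14.8, 15.1, 15.3, 14.9, 15.4],
    "mountain_station": [2.1, 1.8, 2.4, 2.0, 2.2],
}
# Hypothetical new readings for the same hour and season.
latest = {"valley_station": 15.6, "mountain_station": 2.3}

# Step 1: each thermometer's own long-term average (its local baseline).
baseline = {name: mean(values) for name, values in history.items()}

# Step 2: one anomaly per location, measured against its own baseline.
anomaly = {name: latest[name] - baseline[name] for name in latest}

# Step 3: average the local anomalies across locations.
average_anomaly = mean(anomaly.values())

for name in anomaly:
    print(f"{name}: baseline {baseline[name]:.2f}, anomaly {anomaly[name]:+.2f}")
print(f"average anomaly: {average_anomaly:+.2f} °C")
```

The result is an average of differences, not an average temperature, which is the distinction the comment draws.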

But that’s the problem – not just all of the assumptions about measuring each hour’s temperature accurately, but the assumptions about what that entire process requires. Including the fundamental assumption that the earth (globally) was in a stable thermal equilibrium at some global average temperature at some time in the past.

The earth has never been at thermal equilibrium – it can’t be because there is no natural “thermostat” set by an Infinite Mother Nature. Rather, the earth continuously cycles from a “too hot” condition (when it loses more heat to space than is gained for that period of time and thus cools), through an unstable transient period somewhere near the average of “too hot” and “too cool”, towards a period when too little heat energy is being lost to space (and thus the received energy is more than is being radiated to space.)

Like a swing whose “average speed” is only momentarily ever measured “at average” – but whose “average speed” can be constantly measured at always changing values; whose height is always changing but which only momentarily is ever at its “average height”; and whose average potential energy is thus also always changing; and whose average kinetic energy is also ever-changing – you can best discuss its state by using the anomaly (position, speed, mass, velocity) of that swing from the “instantaneous expected perfect state.”

Go in the classroom, and you can perfectly calculate every perfect theoretical piece of information you wish. Then determine a difference from that perfect theoretical state, then write a paper about the anomaly, the value of that anomaly, and the trend of that anomaly into the infinite future.

But the actual state of even the edge of that swing in the real world? Can’t predict it even above the molecular (much less atomic) level. Too many real world interferences such as wind, air friction, pivoting friction, motion and rocking of the impulse pushing the swing, movement of the body on the swing, changes in mass of the swing, its chain, the body on the swing, and the friction between every link of every point on the chain changing due to wear and air friction.

The myth of the climatrologists is that they CAN predict the far future of the earth’s weather by ignoring all of the small parts of each of these events by concentrating on the global averages of events, yet pretend they are calculating every effect by focusing on the individual dust particles everywhere, the individual CO2 concentrations as they control the global average clouds and global average humidity and global average pressure.

“but the CAGW climate community has seized on the difference of a Single Temperature Anomaly from what they have chosen as the “Global Average Temperature” (for a flat plate earth irradiating a uniform average atmosphere in a constant orbit around a constant average sun)”

No, that is exactly what they don’t do, and I keep explaining why. What you describe would be quite wrong. Instead they form anomalies locally, by subtracting local climatology (averaged from that environment) from each region, before any spatial averaging. They avoid computing a global average temperature.

Kip ==> Sure, I don’t disagree because I see what you are saying… it is even more complicated than has been explicated in comments here and the errors are large even at the local climate stage of anomaly preparation/homogenisation.

I also have many more concerns about the use of anomalies but one thing that hasn’t been discussed here yet, is their unequal application.

To restate, the problem with the use of anomalies globally, is that their relevance is unequally represented.

The greatest variation – in terms of anomalous temperature – occurs in the temperate zones – which just happens to be where the vast majority of the world’s population resides (Especially in the Northern Hemisphere, due to its greater land mass). While the least anomalous zones,** the oceanic (Surrounded by sea), the tropical/subtropical and the cold/polar regions are often also the most sparsely measured!

To repeat: the most thoroughly and carefully monitored places on Earth also happen to be the most anomalous!

I fear that this weighting is not being accounted for adequately and therefore any result* will carry significant bias.

*Global average

**Particularly in the Southern Hemisphere

Scott ==> Well, no one is trying to be “fair” — they are trying to find a way to discover if “the Earth is warming because of CO2”. In the last 20 years, they have been scrambling to find even the tiniest changes as long as they are generally UP. (See Cowtan and Way).

One accurate way of illustrating the magnitude of the problem is the animated gif in the essay “GISS Temps”. When scaled in absolute degrees with the 0.5°C uncertainty range shown, the first 100 years (1880-1980) all fall inside of the uncertainty range of 1880. 1980-Present all fall into their own uncertainty range.

“reducing the uncertainty of annual Global Average Surface Temperatures by a whole order of magnitude”

Well, we’ve been through all that before. Yes, if you subtract the variable that incorporates most of the uncertainty (location/seasonal mean), you know the remainder much better.

But there is a clear contradiction in the broad fuzz that is supposed to be the alternative fact. Expected variability actually means something. It means that you expect to see randomness of that amplitude. And you simply don’t see it. It isn’t there. There is nothing like variability of the order of ±0.5°C (sd). The graph is self-disproving.

Maybe you just need to remove your special Warmunist goggles, which filter what you don’t want to see.

The absolute errors in any measurement do (almost always) follow a statistical curve, with the true value being in the most probable region of that curve.

But you CANNOT change the shape of that curve with ANY mathematical operation. If, as Schmidt claims, his anomalies are within the 95% region at 0.1C – this is identical to a claim that his absolute measurements are also within the 95% region at 0.1C.

Does he claim this? No. Which leads to a simple binary conclusion – statistical incompetency, or pseudo-statistical fraud.

Writing Observer ==> Nick just mixes up statistical concepts with measurement concepts. The whole trick relies on overlaying a statistical construct on top of a measurement to hide the actual measurement uncertainty.

“overlaying a statistical construct on top of a measurement”

You don’t measure a global average. You calculate it. And you calculate the effect of the measurement variability on the calculated average. That is statistics.

>>

That is statistics.

<<

That may be statistics, but there’s no way to calculate a global average using physics. Temperature is an intensive thermodynamic property of a system. It applies to systems that are in equilibrium.

Jim

Jim,

“That may be statistics, but there’s no way to calculate a global average using physics.”

It’s the only way to calculate an average of anything. Temperature applies to any system with local thermodynamic equilibrium. If you don’t have that, you can’t get a temperature in the first place. If you do (and we do), you can average.

>>

If you don’t have that, you can’t get a temperature in the first place. If you do (and we do), you can average.

<<

Okay. I have two beakers of hot water. One’s at 50 degrees Celsius and the other is at 80 degrees Celsius. The beakers each contain different amounts of water. I pour both beakers into a third beaker that can contain all the water. What is the final temperature of the water after the temperature equalizes (assuming no heat loss or gain)? And just for grins, what’s the temperature of the water mix halfway through the process?

Jim

Jim

“The beakers each contain different amounts of water.”

That then is the sampling issue. If you want to say that the final temperature is the average of the water before (reasonable) you have to decide how to sample that, so the sample average reflects the final. Each beaker is presumably homogeneous, so you can get those averages easily. But the between beakers is not homogeneous, so you have to sample in correct proportions – ie weighted according to mass in each beaker. Then you’ll get the right answer. Standard stats. Or, you could say, averaging by volume integration.

And if you have combined them with limited mixing, again the average is the same, subject to proper sampling (which would be hard, since it is changing). The sampling issue diminishes as you mix, decreasing inhomogeneity.

>>

That then is the sampling issue.

<<

Heh, and where is my answer? You got two temperatures with an LTE exact temperature. I even gave you a final LTE condition for the answer. The in-between value resembles the atmosphere. The atmosphere is never in equilibrium.

>>

The sampling issue diminishes as you mix, decreasing inhomogeneity.

<<

So no temperature with hundredths of a degree precision. How about tenths of a degree? Maybe even within a degree? I’m flexible here.

Jim

Jim

“Heh, and where is my answer?”

As I said, more information needed, namely, the masses of the two beaker contents. If it’s 200 gm at 50C, and 100 gm at 80C, then the answer is (50*200+80*100)/300 = 60C. That is just properly weighted sampling, or, equivalently, volume integration.

The in-between average is also 60C. We know that from conservation. Sampling needs care then, and will introduce some error. But not much, and diminishing as mixing proceeds. A few well-placed thermometers would get it very accurately, as on Earth.
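The weighted-average arithmetic in the reply above can be checked with a short sketch. It assumes what the reply assumes (uniform specific heat, no heat lost or gained), and the function name `mixed_temperature` is just an illustrative label. The unweighted mean is shown alongside to make clear why the masses are needed.

```python
def mixed_temperature(masses_g, temps_c):
    """Mass-weighted mean temperature.

    Assumes uniform specific heat and no heat exchange with the
    surroundings, as stated in the beaker example.
    """
    total_mass = sum(masses_g)
    return sum(m * t for m, t in zip(masses_g, temps_c)) / total_mass

masses = [200.0, 100.0]   # grams, the figures used in the reply
temps = [50.0, 80.0]      # degrees Celsius

weighted = mixed_temperature(masses, temps)
unweighted = sum(temps) / len(temps)   # ignores how much water is in each beaker

print(weighted)    # 60.0
print(unweighted)  # 65.0
```

Without the masses only the unweighted figure can be computed, which is the "more information needed" point both sides end up agreeing on.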

>>

As I said, more information needed . . . .

<<

Finally we get to the crux of the situation. Yes, you need more information, as the rest of us knew from the beginning. But when doing the Earth’s atmosphere, you’re fine with the information I gave you. There is no way you can “calculate” a global temperature from the few thermometers we have access to. AND it is impossible–to boot.

>>

We know that from conservation.

<<

No we don’t. You don’t know how I mixed the water. I could have poured all the water from one beaker in first and then all the water from the other beaker–or any combination and order from the two beakers. You’re assuming I poured both in simultaneously. The midway case is totally unknown and totally incalculable.

>>

A few well-placed thermometers would get it very accurately, as on Earth.

<<

Phooey!

Jim

“The midway case is totally unknown and totally incalculable.”

No. There are x Joules in the water before mixing, and density and specific heat are assumed uniform (OK, you can correct for temperature if you want). The average T before, during and after mixing is x/(ρVcₚ), where V is the total volume of water. Confirming that by measure is a matter of sampling. Simple before, with correct mass weighting. Simple after, if well mixed. Needs care in between, but can be done with good sampling.

>>

Simple before, with correct mass weighting. Simple after, if well mixed. Needs care in between, but can be done with good sampling.

<<

You don’t know how much water is in either beaker, so you can’t calculate the final temperature. There’s no sampling during or after–we’re supposed to calculate all that. The mixing action is obviously chaotic. There’s not enough sampling in the Universe to capture all of that. What a dream-world you live in.

Jim

A few missing pieces of information:

What is room temperature, and air velocity in the room?

Is the room substantially larger than the three containers?

What are the material coefficients of the containers, their three masses, and each surface area and wall thickness of the three containers?

Is the wall thickness constant across all three surfaces of all three containers?

Are the three containers insulated on the bottom from the table? (If so, I will ignore conduction losses to the unnamed, unspecified table top.)

Are the two beakers poured into the third from a height that generates a spray and droplets, or are they poured in a continuous steady stream?

Is the top of the containers closed, open, or insulated?

What is the time from start to stop of the evolution? (If short, I will ignore evaporation losses from the liquids. If not, what is the relative humidity in the room?)

Are the room walls at “room temperature”? (If so, I will ignore radiation losses.)

Unless otherwise notified, I will assume pure water, if that’s an adequate assumption.

RACook, you forgot about altitude above MSL, barometric pressure, and the orientation of the room with respect to the magnetic field of the earth. The presence or absence of Leprechaun farts is not important.

Nah. Covered relative humidity, so that will pick up the pressure effect on temperature coefficients of the assumed pure water convection and evaporation, won’t it? If we’ve got relative humidity, would the evaporation effects of water at 80 deg C change with absolute pressure of the atmosphere?

Yes, I will have to assume outside cosmic radiation influenced by the regional magnetic field and latest solar flares is too small to measure. Good point! Thank you -> Prompt, Proper, Polite, Public Peer review is essential.

Leprechaun farts? Got to think about that. Those do flow with the ley lines above the pot of gold, ebbing as the exchange rate varies.

And Stokes was only worried about mass of the two water volumes, thermal mass of the thermometers! Guess he didn’t think of remote IR thermometers = No thermal influence on the object measured, if the proper emissivity is chosen for calibration beforehand.

“And Stokes was only worried about mass of the two water volumes, thermal mass of the thermometers!”

It’s nothing to do with thermal mass of a thermometer. And all your nonsense about humidity etc is irrelevant. Surface temperature measurements on earth have nothing to do with possible variations during a physical mixing experiment. The question only makes sense in this context in terms of assessing the average temperature of a mass of water, as expressed after mixing. And I describe what you have to do to calculate that average temperature.

>>

RACookPE1978

A few missing pieces of information:

<<

Did you really miss the point of my example? It’s obvious Mr. Stokes did (or pretends he did).

Jim

Yes, I most likely did – Taking it near-seriously at one point. Sorry about that.

Then again, it IS easier to “measure the real world” rather than ASSUME you have accounted for all of the approximations needed to “calculate” the approximations necessary in even beginning the calculations for dynamic heat transfer. For one example, in a separate engineering web site, we are debating the change in heat transfer for a 100 deg C fluid in a vertical tank with round corners, or square corners – and that does not even take into account the extra cost of fabricating the rounded corners and insulating them, compared to a simpler/cheaper square corner. (That Original Poster still has not answered what the air flow through the room is – another part of the problem.)

In another example, I DID “measure” the surface temperature at each 50 mm from the end of a horizontal mild steel bar 25 mm x 25 mm suspended in mid-air in the 23 deg C workshop with still air (natural convection only) with one end heated to 1350 deg F by an oxy-acetylene torch. The measurements over 45 minutes by IR thermometer at one minute intervals down the steel bar did correspond closely (+/- 15 deg) with the theoretical dynamic values for the same points over the same period. Calculations “can be” correct, but ONLY if all of the conditions are known correctly, if ALL of the correct equations for the physical coefficients for the physical conditions are correctly approximated, and if the margin of error between the real world and calculated approximations are acceptable.

Your “beakers” for example, do almost certainly have rounded corners, are most likely made of un-insulated glass or Pyrex, and most likely are not receiving solar IR if they are indoors in a “room temperature” lab, but do they have cylindrical walls or a conical shape? How far up the walls of the three beakers does the initial water go? 8<)

>>

[Y]es, I most likely did – Taking it near-seriously at one point.

. . .

Your “beakers” for example . . . .

<<

I did state that no heat was gained or lost–and if you assume that no matter was gained or lost then we are dealing with an isolated system.

In any case, I apologize for being snippy.

It was obvious to you, to me, and even to Mr. Stokes that more was needed to answer that problem correctly. Yet Mr. Stokes claims that with a few randomly placed thermometers we can calculate the average temperature of the entire surface (atmosphere?) of the Earth. And that we can start with a set of temperatures with one-degree precision and obtain hundredths of a degree accuracy. It does boggle the mind.

Jim

Jim Masterson

Ah, but dear sir! Mass IS lost (in a real-world basis) if no cover/lid is assumed on the beakers!

And Energy IS LOST from the moment the “experiment” begins, even if the original two beakers are plugged up and have no evaporative/convective losses as the vapors exchange:

Heat energy is lost from the 80 deg C water through the water-film barrier to the first beaker wall (probably Pyrex) to the outer air-film wall to the (probably natural convection) heat transfer to the (near-infinite) room air and room walls. Include radiation losses here to the room walls-floor-ceiling (each has a different view factor, probably a different emissivity as well), and conduction losses to the table (probably at room temperature at t=0) through the beaker base plate (if not perfectly insulated).

OK, so now you have the dynamic heat exchange for beaker_80 deg C (at t=0) to the room environment.

Repeat for beaker-50 deg C.

Assume the beaker-final started at room temperature on a table at room temperature … And that is just some of the initial conditions!

IF you are going to “calculate the final temperature of …” then you CANNOT remove ANY simplification to the process, UNLESS you also remove your calculation from any relevance to the real world! That is what I was trying to say to the engineer asking about the rounded edges of his “tank holding 100 deg C water” : What difference does it make?

What is important? (Energy lost, energy potentially saved?)

Total cost?

“Keep the water at 100 deg C regardless of expense and material!!!”

“NEVER let the water heat up to 100 deg C regardless of cost, time, money, material, investment, instruments!!!!”

Or even, what difference does it make if I make the tank out of a round pipe with flat ends? (As long as I keep a vent on the tank so it can never turn into an unlicensed, deadly pressure vessel.)

Nick ==> Calculating is not statistics. Statistics is “Branch of mathematics concerned with collection, classification, analysis, and interpretation of numerical facts, for drawing inferences on the basis of their quantifiable likelihood (probability)”.

Averages, means, etc have calculations from measurements — they are not inferences on the basis of probability.

Mixing the two fields is where scientists get into trouble.

The average of 2, 8, and 10 is 10. There are no probabilities involved.

When Gavin Schmidt says the GAST for 2016 was 288.0±0.5K , he is talking an average of measurements (a complicated average, but an average none the less). He is not talking the probability of the GAST. His GAST figure has an uncertainty range, because the measurement natively had that range — it must remain. He gives the same range for the Climatic Mean — which is a simple arithmetic average of 30 years of annual data. None of these are probabilities.

Pretending that uncertain measurement data can be magically reduced to precise anomalies is FALLACY – and a misuse of statistics to produce unjustifiable certainty about uncertain data.

“The average of 2, 8, and 10 is 10. There are no probabilities involved.”

Not statistics as I learnt it. An average is a statistic. But the point is that the dependence of the mean on the variation of the data is certainly a matter of statistics. And probability is basic to the notion of error.

Nick ==> That’s alright then — the average of 12, 8 and 10 is 10 though.

I have always suspected that you see everything as statistics…..

Kip,

“His GAST figure has an uncertainty range, because the measurement natively had that range — it must remain.”

Not true. The uncertainty of GAST comes mainly from the sampling error (choice of locations) amplified by the great inhomogeneity of absolute temperature (altitude etc). It doesn’t come from the measurement error at an individual location. The reason the anomaly average error is so much lower is that the anomalies are much more homogeneous.

Kip Hansen

Check the arithmetic in your example, please: (2+8+10)/3 = 6.66 Not 10. 8<) Ask the mods to edit, if you wish.

RA ==> Quite right, typed and calculated mentally in haste…I meant to type 12, 8 and 10…..somehow that little sneaky “1” got away.

[A 20 (vice 10) could work as well. .mod]

Kip you are flat out wrong. The average of all the measurements is a statistical estimator of the GAST, and being an estimator there is a “probability” of it being correct. As you well know, the probability of its “correctness” can be increased with increasing numbers of observations that go into calculating the estimator. I hope everyone is aware that you cannot measure the Earth’s surface temperature, we can only estimate it based on a multitude of distinct individual thermometer readings in space and time. Please tell me Kip that I don’t need to go into a discussion with you about the relationship between population means and sample means.

David ==> All temperature data used in all temperature calculations are RANGES, one degree F wide in the US, auto-converted to degrees C rounded to 1/10 degree C. The uncertainty range is 1 degree F from the get-go. (That range widens a bit due to all the other uncertainties involved in measuring temperature.) A daily average temperature is not the average temperature of the day, but the arithmetical mid-point between the high and low for the day. The monthly average for a station is the arithmetical mean of all the day’s averages (add all the days, divide by number of days). Nothing so far is anything other than elementary school arithmetic, some of it technically wrong (day average). Nothing in this involves the probability of anything — it is arithmetic.
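A small sketch of the two pieces of arithmetic described above: the daily "average" as the mid-point of the high and low, and a whole-degree Fahrenheit reading treated as a one-degree-wide range converted to Celsius. The 24 hourly values and the 72 °F reading are invented purely for illustration and are not station data.

```python
# Hypothetical hourly temperatures (deg C) for one day; invented for illustration.
hourly = [10, 10, 9, 9, 8, 8, 9, 11, 13, 15, 17, 19,
          20, 21, 21, 20, 18, 16, 14, 13, 12, 11, 11, 10]

# The "daily average" as described: mid-point between the day's high and low.
midpoint = (max(hourly) + min(hourly)) / 2

# The arithmetic mean of all 24 hourly readings, for comparison.
hourly_mean = sum(hourly) / len(hourly)

def f_to_c(deg_f):
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (deg_f - 32.0) * 5.0 / 9.0

# A reading recorded as 72 deg F stands for anything from 71.5 to 72.5 deg F.
low_c, high_c = f_to_c(71.5), f_to_c(72.5)

print(midpoint)               # 14.5
print(round(hourly_mean, 2))  # 13.54
print(round(low_c, 2), round(high_c, 2))
```

For this made-up day the mid-point and the hourly mean differ by about a degree, and the one-degree Fahrenheit range is a little over half a degree wide in Celsius.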

There are no population means or sample means — there is no sample (and where there is a sample — daily mean – it is the wrong sample).

GAST has almost nothing to do with the actual temperature records of actual stations over time — it is a smeared and leveled hodge-podge of a metric. Read the BEST Methods paper. According to Stokes and Mosher, they don’t even try to find the Global Average of anything — they do something quite different, and then just call it the GAST.

Had they actually been trying to find the average, it would be an arithmetical MEAN of all the points — not a probability. (Granted, they would have fudged a majority of the data filling the grid, kriging or smoothing or just outright guessing, but in the end, they find the arithmetical mean of all the means of all the grids.)

Arithmetical means are just that — there is no probability figured anywhere.

And IF you are NOAA you can assume to two decimal places. Despite climate dot gov saying this –

“Across inaccessible areas that have few measurements, scientists use surrounding temperatures and other information to estimate the missing values.”

I agree that what we are measuring, within uncertainty, corresponds to what is happening. Many proxies agree with the periods of warming and cooling for the past 170 years indicating that they are real.

And all that debate about the right or wrong databases is just silly. They are all measuring essentially the same.

What people have trouble understanding is that a difference of ±0.5°C is very little on a daily or monthly scale, but it is huge on a millennial scale. The 6000-year Neoglacial cooling has taken place at a rate of ~ 0.2°C/millennium.

Javier ==> Thus, my last graph with bounds….

In measurement of Global Temperature, an UNCERTAINTY of 0.5°C is HUGE — and yet is purposefully hidden in modern CliSci.

Javier ==> Here’s what your graph above looks like when informed of the true original measurement uncertainty overlaid on the anomaly.

I disagree that the uncertainty is as high as you represent it. If that was true we should see much bigger oscillations and a much bigger spread in the measurements. The uncertainty is probably half that.

Javier ==> Here we are talking Original Measurement Uncertainty — which Dr. Gavin Schmidt and I agree is properly represented as +/- 0.5°C for all of the following: individual station temperature measurements, monthly and annual station averages, climatic period averages, and annual global averages.

Thus, the original measurement uncertainty also properly devolves to the ANOMALY of those last two metrics if we want to show the REAL uncertainty of the “thing” (global temperature) that the anomalies are meant to represent.

This is the truth nugget of the Manski paper — there are “statistically valid” ways to show data that totally misrepresent the uncertainty in the data itself.

In my re-draw of the graph, I added 0.5°C to the top-most trace and subtracted 0.5°C from the bottom-most trace, so the extra width comes from the inclusion of the spread of the data traces selected as well …. this adds about 0.55°C to each set of uncertainty ranges….it would have been about 2/3 the width if I had used the mean of the traces, so it does look a little exaggerated. Good eye!

The problem with the global uncertainty is that we have several orders of magnitude too few stations to accurately portray the planet’s temperature. Even if we had a way to perfectly measure the temperature at the stations we do have.

“Here we are talking Original Measurement Uncertainty — which Dr. Gavin Schmidt and I agree is properly represented as +/- 0.5°C”

You are misrepresenting Gavin. He does not say (there) that 0.5 is Original Measurement Uncertainty. He says it is the expected error of a global average of climatology (time averaged temperature). It isn’t the error in individual temperature readings. The expected error of a global anomaly average is something quite different again.

Nick ==> The data given by Schmidt is what he gives. I can’t read his mind — so you might be right — he may think that it is merely a coincidence that the uncertainty in the GAST and Climatic Mean are the same as the uncertainty in the original measurements. But when one averages any data that is most correctly represented as a range, the average — the mean — of the data maintains the same uncertainty range.

It can’t be otherwise.

Kip,

“I can’t read his mind”

One can read the link, though. He says:

“However, and this is important, because of the biases and the difficulty in interpolating, the estimates of the global mean absolute temperature are not as accurate as the year to year changes.”

He does not mention thermometer error. Biases relates to things like latitudinal difference, and interpolation to variations on the interpolation scale, such as topography and land/sea boundary.

You mean like reader error? Like the Australia Post employee who was reading temperatures from the wrong end of the slide? Reported in The Australian.

Nick ==> They are “estimates” because of the process which is not statistical in nature, but simply arithmetical — starting with the original individual measurements we have uncertainties (much wider than the eventual global anomalies). The year-to-year changes are only considered more accurate because the uncertainties are dropped off and ignored.

Nick ==> As you well know, we don’t see “variability of the order of ±0.5°C (sd)” in the annual GAST because it has been smoothed out by prior rounding of daily then monthly then annual averages. Original measurement uncertainty is not, and never was, a “standard deviation”. That is a statistical concept, not a measurement concept.

You’ll have to take this up with Dr. Schmidt — the +/- 0.5°C is his (and I thoroughly agree). It is also his (correct) claim that using the actual uncertainty in Absolute GAST in degrees means that we cannot distinguish between the reported temperatures of recent years (see the last graph in the essay proper).

I’d love to see you argue the point with Gavin…..

Kip,

“it has been smoothed out by prior rounding of daily then monthly then annual averages”

Well, yes, that is the point. Averaging, whether over time and space, reduces variability. That is often the reason for seeking an average. It isn’t added smoothing; it is intrinsic.

“You’ll have to take this up with Dr. Schmidt — the +/- 0.5°C is his (and I thoroughly agree).”

I agree too. The 0.5 is the error you would expect in an average of absolute temperature. It comes from the lack of knowledge of the average climatology – the spatial average of the time averages. What GISS, and everyone else with sense, calculate is the average anomaly. That doesn’t have that uncertainty. You don’t calculate the average absolute, then subtract the average climatology – that can’t work. You subtract the local climatology (not subject to the 0.5 uncertainty) from the local temperature (==>anomaly), then average. That is Gavin’s point, long made by GISS and others. One way has big errors, the other doesn’t. They use the right one.

Nick ==> Well, we’ll have to disagree. You cannot get rid of the original uncertainty by simply claiming that the “local climatology” is not subject to uncertainty. Of course it is — there is no way to remove it that is not just PRETENSE.

By the way, read Gavin’s post — he subtracts the Annual GAST from the Climatic Mean — not some local climatology statistic — to find the Annual Anomaly.

Making public policy depends on a proper reporting of the uncertainty in scientific reports. The insistence that GAST Anomalies are [nearly] uncertainty free is just a way of covering up that we can’t really be sure of any difference between recent years. This “problem” is a feature — the real representation of scientific uncertainty regarding Global Temperature annual averages. It is not a “bug” to be overcome through shifting to anomalies with wee-tiny SDs pretending to represent our uncertainty.

Kip,

“he subtracts the Annual GAST from the Climatic Mean — not some local climatology statistic — to find the Annual Anomaly.”

He may do that for working with reanalysis data. You can do that there because the values are on a regular grid which never changes, so whether you subtract the local or global climatology gives exactly the same result. But with GISS and other GAST products (including TempLS), it isn’t the same. There is always a different mix of stations each month. That means the climatology average (spatial) would change each month. You could calculate that, but simpler and equivalent is to subtract the climatology locally (station-wise) to get a local anomaly before averaging. This is fundamental, and all products do it. Well, almost all – USHCN used to average absolute temperatures, but to make that work they had to interpolate missing values, so that they could fulfil the alternative requirement of having an unchanging set of sample locations. Not optimal.

There is no claim that local climatology is free of uncertainty. What matters is that the spatial anomaly average is much less uncertain than spatial temperature average, as Gavin is explaining.
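The changing-station-mix argument in the comment above can be illustrated with a toy example. Everything here is hypothetical: two invented stations ("valley" and "mountain"), invented climatologies, and a uniform +0.2 °C departure in both months. It sketches only the sampling point being made and says nothing about the measurement-uncertainty objection raised elsewhere in the thread.

```python
# Hypothetical long-term means (deg C) for two invented stations.
climatology = {"valley": 15.0, "mountain": 5.0}

# Month 1: both stations report. Month 2: the mountain station is missing.
# Each reading sits 0.2 deg C above that station's own climatology.
month_1 = {"valley": 15.2, "mountain": 5.2}
month_2 = {"valley": 15.2}

def mean_absolute(readings):
    """Average the absolute temperatures of whichever stations reported."""
    return sum(readings.values()) / len(readings)

def mean_anomaly(readings, climatology):
    """Subtract each station's own climatology first, then average."""
    anomalies = [temp - climatology[name] for name, temp in readings.items()]
    return sum(anomalies) / len(anomalies)

# Month-to-month change under each method.
abs_change = mean_absolute(month_2) - mean_absolute(month_1)
anom_change = mean_anomaly(month_2, climatology) - mean_anomaly(month_1, climatology)

print(f"change in mean absolute temperature: {abs_change:.2f}")
print(f"change in mean anomaly: {abs(anom_change):.2f}")
```

In this toy case the average of absolute temperatures jumps by about 5 °C simply because the cold station dropped out, while the average of local anomalies is essentially unchanged.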

Nick ==> Data with uncertainty is a RANGE. All subsequent calculations must use the entire range.

You are using a trick of definitions to reduce the uncertainty about Global Temperature and its changes.

The GAST is uncertain to 0.5….thus a range from GAST+0.5 down to GAST-0.5 — one degree. The ClimaticMean is uncertain to 0.5 …thus is a range from CM+0.5 to CM-0.5. Subtract CM from GAST. The correct answer is a range from (GAST+0.5)-(CM-0.5) down to (GAST-0.5)-(CM+0.5). The new range — the range of the anomaly — is 2 degrees top to bottom.

One only gets your tiny nearly-uncertainty-free anomalies by dropping the uncertainty of both the GASTannual and CM.
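The range subtraction described in this comment is ordinary interval arithmetic, and a minimal sketch makes the two-degree width explicit. The GAST value of 288.0 comes from the figure quoted earlier in the thread. The climatic mean of 287.5 is a made-up number chosen only to keep the arithmetic tidy. The sketch treats both ranges as independent hard bounds, which is the assumption the comment makes. Whether that is the right model for these quantities is exactly what the thread is disputing.

```python
def subtract_ranges(a, b):
    """Interval subtraction: every value in a minus every value in b.

    a and b are (low, high) tuples treated as hard, independent bounds.
    """
    a_low, a_high = a
    b_low, b_high = b
    return (a_low - b_high, a_high - b_low)

half_width = 0.5
gast = 288.0            # annual figure quoted in the thread (K)
climatic_mean = 287.5   # hypothetical value, for illustration only

gast_range = (gast - half_width, gast + half_width)
cm_range = (climatic_mean - half_width, climatic_mean + half_width)

anomaly_range = subtract_ranges(gast_range, cm_range)
width = anomaly_range[1] - anomaly_range[0]

print(anomaly_range)  # (-0.5, 1.5)
print(width)          # 2.0
```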

Why poor Pluto? If he had not kidnapped Proserpine and fed her pomegranates there would be no winter, just balmy comfortable temperatures and continuously growing crops. I feel it is Pluto we have to blame for all of our problems.

/grins

Richard ==> I have pity on poor Pluto — having been demoted by the high-minded autocrats in astronomy, simply because he is too small, an obvious case of iotaphobia.

Pluto wasn’t demoted because it was too small, it was demoted because it hasn’t cleared its orbit of other objects.

The Earth hasn’t cleared its orbit of other objects either, yet we call it a planet. Poor Pluto is right! As far as I’m concerned, Pluto is still the Ninth planet. 🙂

Tom ==> That’a boy! Danged Pluto-demoters!

It seems that most scientists think that if you average enough samples, you will reduce uncertainty and get a more accurate result.

It’s common to call our measurements the signal and to call the errors noise. If the errors are truly random then the assumption, that averaging reduces uncertainty, is correct. One of my favorite demonstrations is to extract a small signal from data where the noise is a hundred times as strong as the signal. It’s a powerful technique.

The problem comes when the noise is not truly random. Then we can have red noise. If you play red noise and random noise over a speaker you can hear the difference. The red noise has more low frequencies and fewer high frequencies.

Natural processes tend to produce red noise. Averaging does not reduce uncertainty where there is red noise. link
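One way to see the difference is to compare how much the average of a series wanders from trial to trial under the two kinds of noise. The sketch below assumes a first-order autoregressive process, AR(1), with a made-up coefficient of 0.9 as a stand-in for red noise. Real natural processes may be redder or less red than this.

```python
import math
import random
import statistics


def white_series(n, rng):
    """n independent Gaussian values with unit variance."""
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


def red_series(n, rng, phi=0.9):
    """n values from an AR(1) process with unit variance (assumed red-noise model)."""
    scale = math.sqrt(1.0 - phi * phi)  # keeps the variance of each value at 1
    x = rng.gauss(0.0, 1.0)
    out = []
    for _ in range(n):
        out.append(x)
        x = phi * x + scale * rng.gauss(0.0, 1.0)
    return out


def spread_of_means(make_series, n, trials, rng):
    """Standard deviation of the series average across repeated trials."""
    means = [statistics.fmean(make_series(n, rng)) for _ in range(trials)]
    return statistics.stdev(means)


rng = random.Random(0)
white_spread = spread_of_means(white_series, 1000, 200, rng)
red_spread = spread_of_means(red_series, 1000, 200, rng)

print("spread of the mean, white noise:", white_spread)
print("spread of the mean, red noise:  ", red_spread)
```

Under these assumptions both series have the same variance per sample, yet the average of the red series is several times less certain than the average of the white series. How much averaging helps depends on how red the noise is.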

I’m not a statistician but I have lots of experience processing signals. I strongly suspect that the uncertainty of the global temperature is understated. The idea that any kind of processing can reduce that uncertainty doesn’t pass the smell test.

Just as James Hansen, with no rigorous mathematical justification, threw Bode feedback analysis at climate sensitivity, it seems that climate scientists have done the same with averaging. In both cases the method is unjustified. There should be a rule that, where statistics is invoked, a statistician should be involved.

commie ==> As you might remember, I wrote a whole series on averages. I think the whole signal/noise thing is as misplaced as Bode feedback.

Anomalies are an easy way to look at a changing metric, but it is not scientifically proper to remove the Original Measurement Uncertainty — that is not valid and masks, intentionally or not, the true uncertainty. Masking uncertainty leads to the weird beliefs we see in CliSci today — where tiny changes in long-term averages are considered not only significant (scientifically) but Important and Dangerous.

I urge all readers here to read Manski’s paper, which is under discussion over at Climate Etc.

A lot of statistics was developed in the context of electronic communications, starting with the telegraph. That’s why the math texts refer to signal and noise. You can think of signal as error free data and noise as errors.

The main point is that it is very easy, and super tempting to misapply math, be it boxcar averaging or feedback analysis.

Early in my education, a friend’s thesis advisor told me that students tend to apply any old formula in situations where it totally doesn’t apply. By the end of my career, I realized that that unfortunate habit lasts the whole of some scientists’ careers. The good thing is that it gets crushed out of engineers’ souls within about a year of graduation. 🙂

With a background in mechanical, not electrical, engineering, I am willing to state categorically that I cannot measure an object with a ruler denominated in eighths of an inch (0.125in) 1000 times and pronounce its length to an accuracy of 0.001 in. Nor can I measure 1000 similar objects with that ruler and pronounce their difference to an accuracy of 0.001 in. I leave it to the readers to guess whether “averaging” thousands of readings of thousands of thermometers by thousands of different people, recorded to the nearest 0.5 K, can produce a meaningful “anomaly” of 0.01K. The characteristics of “signal” and “noise” be damned.
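The ruler case can be sketched as follows. The object length is made up, and the sketch assumes a perfectly repeatable measurement, so every reading lands on the same eighth-inch mark. With random scatter comparable to the tick spacing the arithmetic changes, and that case is not modelled here.

```python
import statistics

RULER_STEP = 0.125   # ruler marked in eighths of an inch
true_length = 3.3    # hypothetical true length, in inches


def read_ruler(length, step=RULER_STEP):
    """Return the length rounded to the nearest ruler mark."""
    return round(length / step) * step


# Measure the same object 1000 times; every reading hits the same mark.
readings = [read_ruler(true_length) for _ in range(1000)]
average = statistics.fmean(readings)
error = abs(average - true_length)

print("average of 1000 readings:", average)
print("error of the average:", error)
```

Every reading is 3.25 in, so the average is 3.25 in as well, and the error of about 0.05 in does not shrink no matter how many times the measurement is repeated.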

skorrent1 ==> Thousands of different thermometers measuring millions of different temperatures in thousands of different places read by thousands of different people (or electronics) then rounded to the nearest degree F — then automagically converted to degrees C to the nearest 0.5 — then hundreds of measurements used to find the daily mean of Highest and Lowest, then averaged over a month, then averaged over a year…….eventually reduced to an “annual anomaly” (sans uncertainty) to the nearest 1/100th of a degree C.

It is, really, much worse than we thought….

Any attempt to separate the noise from the signal will by necessity create an additional error.

If you follow that logic, your cell phone can’t possibly work.

As an example, consider the problem of extracting the tiny signal from the Voyager 1 satellite from the background noise. link

The reason folks think they can improve their uncertainty is that such things are possible given the right circumstances. My beef is with the scientists who don’t understand the ‘circumstances’ part.

The problem most are seeing is the obvious one: statistics are pointless without understanding what underlies them. The classic is the average number of children per couple, which is some fraction like 1.5 or 2.5 … everyone knows you can’t have a fractional child.

The problem with what they are doing is along the same lines. The system has feedbacks and delays and the force/driver they are measuring is a Quantum process (it is what makes radiative transfer difficult).

They may have convinced themselves that the statistics mean something but the laws of nature have a habit of making statisticians look stupid.

“Averaging does not reduce uncertainty where there is red noise.”

Just not true, and your link does not say that. There are degrees of redness. If numbers are correlated, the uncertainty of the mean does not reduce by the simple OLS quadrature, but it does reduce. In fact, the covariance matrix simply enters the quadratic sum.
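The point about the covariance matrix can be illustrated with a small calculation. The variance of the mean of N values is the sum of every entry of their covariance matrix divided by N squared. The sketch below assumes an AR(1)-style covariance, sigma² · rho^|i-j|, purely as an example of correlated numbers. The values of N, sigma and rho are arbitrary.

```python
def variance_of_mean(n, sigma, rho):
    """Variance of the mean of n values with covariance sigma^2 * rho^|i-j|."""
    total = 0.0
    for i in range(n):
        for j in range(n):
            # Every covariance entry enters the quadratic sum.
            total += sigma * sigma * rho ** abs(i - j)
    return total / (n * n)


n = 100
sigma = 0.5

independent = variance_of_mean(n, sigma, 0.0)   # off-diagonal terms vanish
correlated = variance_of_mean(n, sigma, 0.9)    # strongly correlated values

print("single value variance:      ", sigma ** 2)
print("mean, independent values:   ", independent)
print("mean, correlated values:    ", correlated)
```

With rho set to zero the result is the familiar sigma²/N. With rho at 0.9 the variance of the mean is much larger than that, but still smaller than the variance of a single value.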

Red noise usually implies a slow drift. In that case the uncertainty/error of the individual measurements may well be better (i.e. lower) than that of the average of all the samples. Been there, done that, got the t-shirt.

Kip,

Excellent.

Jim ==> Thanks, glad you liked it.

Just a retired engineer and amateur astronomer, but my confidence in using minuscule star wobbles to define the very existence of a planet, and in ‘habitable zone’ definitions, is low. Too many other variables affect habitability: stellar eruptions, solar wind, planet rotation, magnetic field, atmosphere and its density and composition, and on and on. Most of the “earthlike” planet hype is just that, hype. But the actual search is great for expanding science. Much too much certainty is implied, however, in most of the hype.

Jim ==> Yes, Manski again — way way too much certainty.

“In astronomy and astrobiology, the circumstellar habitable zone (CHZ or sometimes “ecosphere”, “liquid-water belt”, “HZ”, “life zone” or “Goldilocks zone”) is the region around a star where a planet with sufficient atmospheric pressure can maintain liquid water on its surface.”

A distant planet is considered a good place to colonize by above definition once we ruin Earth by raising the temperature by a few degrees?

Earth would still have water on the surface if temperatures rose 10 degrees.

SR

How would earth get ruined even with a 10 C average increase? Most of the ice sheets would still not melt with that temperature rise. Inland Antarctica is way below -10C even only 10 metres under the surface even in the summer time.

Christy and Spencer report UAH results as anomalies as well. How does that figure into this analysis?

Mark ==> The use of anomalies is just so “standard” in CliSci that it may be impossible to rectify — part of the problem, as Dr. Schmidt admitted in his blog post, is that they keep changing the absolute data points….they are forever and ever “adjusting” the annual mean GAST (for myriad reasons, some valid, some spurious) that everyone notices….and advancing the climatic period mean. What they want to show is “it is getting hotter” — and “we’re sure”.

The reality is: “It may be getting a little warmer, we’re pretty sure on the century scale, not so sure on the decadal scale”.

I made a very similar case in my papers examining uncertainty due to systematic measurement error in the global air temperature record, here (900 kb pdf), and here (1 mb pdf).

Everyone in the field, GISS, UKMet/UEA, BEST, and RSS and even UA Huntsville for the satellite temps, assume measurement error is a constant that subtracts away in an anomaly.

That assumption is untested and unverified. As you directly imply, Kip, the entire field rests on false precision.

Unfortunately the UAH data is the only temp data that both sides trust. Nick Stokes has said he doesn’t even trust that data set, but he has inadvertently argued countless times that it represents his alarmist viewpoint. That is because some skeptic will point out some UAH decrease and Nick will jump in arguing that the decrease is meaningless when you look at the overall trend of the UAH data. That proves that Nick Stokes is willing to accept the UAH figures. All the other alarmists implicitly accept that data as well, even though they are loath to admit it.

Unfortunately for us skeptics, the satellite era of temp measurement began in 1979. That was a low point for actual global temp. We will have to live with that cherry-picked starting point. For now there is a definite short-term upward trend. However, if there is no long-term trend then the data will sort itself out eventually. The alarmists cannot really argue against the UAH dataset because that would be saying that they think that Christy and Spencer are fudging the figures to make it look as if there will never be any warming. However, if there really was CAGW and Christy and Spencer were fudging the figures, then the alarmists would have to argue that those 2 scientists would be putting all humanity at risk by doing that. What possible gain to Christy and Spencer would there be, to put all of humanity at risk? That assertion is ludicrous in the extreme.

The other side of the coin is not true however. When an alarmist argues for CAGW he is not putting all of humanity at risk by him being wrong. He is only condemning humanity to poverty by carbon taxes.

Well, if Spencer and Christy are fudging the figures, then so are the operators of the weather balloons since both sets of temperature data are in agreement.

http://www.cgd.ucar.edu/cas/catalog/satellite/msu/comments.html

“A recent comparison (1) of temperature readings from two major climate monitoring systems – microwave sounding units on satellites and thermometers suspended below helium balloons – found a “remarkable” level of agreement between the two.

To verify the accuracy of temperature data collected by microwave sounding units, John Christy compared temperature readings recorded by “radiosonde” thermometers to temperatures reported by the satellites as they orbited over the balloon launch sites.

He found a 97 percent correlation over the 16-year period of the study. The overall composite temperature trends at those sites agreed to within 0.03 degrees Celsius (about 0.054° Fahrenheit) per decade. The same results were found when considering only stations in the polar or arctic regions.”

end excerpt

I asked Roy Spencer about systematic error in the satellite measurements when I met him at the Heartland Institute. He agreed the systematic measurement error was ±0.3 C, but then said it subtracted away in an anomaly. It’s an untested assumption.

Agreement between measurements doesn’t dismiss uncertainty. Radiosondes have problems of their own.

No Frank, Spencer is correct…….when anomalies are used, the systematic error is eliminated. It’s not an assumption, it can be shown to be true mathematically.

Anomalies show differences from the mean, not the absolute value of the item being measured by the instrument.

If you use a broken ruler to measure the height of your growing child, you can easily see the growth, even if you don’t know how many centimeters tall your child is.

David Dirkse

No, I cannot. Unless I scratch a line in the wall at uniform periods using the sharpened tip of the broken ruler at exactly the same relative height from the child’s head. This assumes the broken ruler is long enough to reach the wall from the top of the child’s head. Then I cannot use the broken ruler at all.

You missed the point RACook. If the thermometer used to collect temperature data at a location was off by -3 degrees for every reading it took in its 30 years of data collection, the anomalies calculated from it would be correct.
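The hypothetical in this comment is easy to check numerically. The sketch below uses invented temperatures, takes the anomaly as each reading minus the mean of the same record, and adds a second case where the offset drifts over time. The second case is not from the comment. It is included only to show what the cancellation depends on.

```python
import statistics


def anomalies(readings):
    """Each reading minus the mean of the whole record."""
    baseline = statistics.fmean(readings)
    return [r - baseline for r in readings]


# Invented "true" temperatures, for illustration only.
true_temps = [10.0, 11.0, 12.0, 13.0]

# Case 1: thermometer reads exactly 3 degrees low on every reading.
constant_bias = [t - 3.0 for t in true_temps]

# Case 2 (assumed for contrast): the offset drifts by 0.5 degrees per reading.
drifting_bias = [t - 3.0 + 0.5 * i for i, t in enumerate(true_temps)]

true_anoms = anomalies(true_temps)
constant_anoms = anomalies(constant_bias)
drifting_anoms = anomalies(drifting_bias)

print("true anomalies:         ", true_anoms)
print("constant-bias anomalies:", constant_anoms)
print("drifting-bias anomalies:", drifting_anoms)
```

A perfectly constant offset drops out of the anomalies. An offset that changes from reading to reading does not, so the cancellation holds only if the error really is constant.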

“off by -3 degrees for every reading”

And how do you know that’s true, David? It’s never been ascertained to be true for satellite temperatures.

Pat Frank needs to learn how to read. My statement was: “IF the thermometer used to collect temperature data at a location was off by -3 degrees”

…

Look up how the word “if” is used in logic Mr. Frank. You of all people should understand what “hypotheses” means.

I pointed out that your assumption is wrong, David. Figure out the adult response.

Dear Mr Frank. I guess I will have to school you in predicate logic. Here is the truth table for implication

..

You will note that even if the “assumption” (hypothesis) is false, the implication is true irrespective of the truth value of the consequence. So your claim that my assumption is wrong holds no weight.

..

Is that “adult” enough for you? ( I guess you missed the fact that the “if” in my statement was both capitalized and bold faced )

…

What you have to show to object to my logic is that a true hypothesis leads to a false conclusion.

[???? .mod]

Pretty simple, Mr “mod”: the only time an implication is false is when the hypothesis is true and the consequence is false. See the truth table I posted. Frank makes no mention of the consequence in my argument.

We’re talking science, not logic David. Your rules of logic are irrelevant.

In science, a false hypothesis predicts incorrect physical states. Such a hypothesis can be logically sound and internally coherent. But it’s false, nevertheless.

On the other hand, and again in science, a hypothesis is physically true when its predictions (your implications) are found physically correct.

Your constant negative-3-C-off thermometer was wrong in concept and, as you later interpreted it, your revised “IF” statement carried your argument into fatuity-land.

What makes you think science follows your linear logic, David?

Science is deductive, falsifiable, and causal. If your assumption is false, your theory is wrong, and the deduced physical state is incorrect.

It doesn’t matter if a value predicted by your wrong theory matches some observation. It tells you nothing about physical reality.