By Christopher Monckton of Brenchley

A recent paper by Hausfather et al. purports to demonstrate that models “are accurately projecting global warming”. In reality, and stripped of the now-routine hype and editorializing with which the paper is riddled, the results plainly demonstrate precisely the opposite – that models have exaggerated global warming – and continue to do so.

Here is the “plain-language summary” of Evaluating the performance of past climate model projections, by Hausfather et al. (2019):

“Climate models provide an important way to understand future changes in the Earth’s climate. In this paper we undertake a thorough evaluation of the performance of various climate models published between the early 1970s and the late 2000s. Specifically, we look at how well models project global warming in the years after they were published by comparing them to observed temperature changes. Model projections rely on two things to accurately match observations: accurate modeling of climate physics, and accurate assumptions around future emissions of CO2 and other factors affecting the climate. The best physics‐based model will still be inaccurate if it is driven by future changes in emissions that differ from reality. To account for this, we look at how the relationship between temperature and atmospheric CO2 (and other climate drivers) differs between models and observations. We find that climate models published over the past five decades were generally quite accurate in predicting global warming in the years after publication, particularly when accounting for differences between modeled and actual changes in atmospheric CO2 and other climate drivers. This research should help resolve public confusion around the performance of past climate modeling efforts, and increases our confidence that models are accurately projecting global warming.”

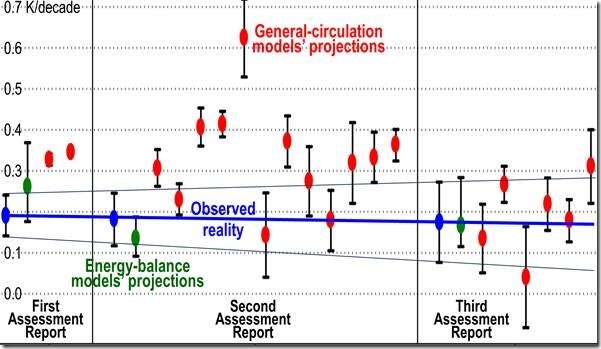

Fig. 1. Projections by general-circulation models (red) in IPCC (1990, 1995, 2001) and energy-balance models (green), compared with observed temperature change (blue) in Kelvin per decade, from surface temperature datasets only (Hausfather et al. 2019).

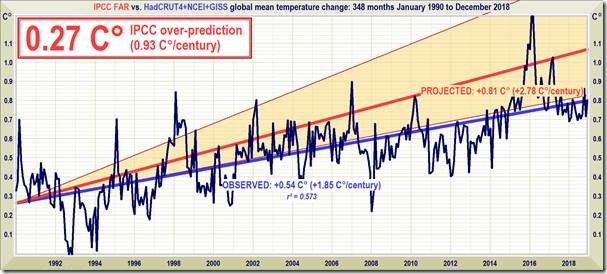

As Fig. 1 shows, the simple energy-balance models [such as Monckton of Brenchley et al. 2015] have done a much better job of prediction than the general-circulation models. In IPCC (1990), the models were predicting midrange warming of 2.78 or 0.33 K/decade. By 1995 the projections were still more extreme. In 2001 the projections were more realistic, though they have become still more extreme in IPCC’s 2007 and 2013 Assessment Reports. Terrestrial warming since 1990, at 1.85 K/century (0.185 K/decade), has been little more than half the rate predicted by IPCC that year:

Fig. 2. Terrestrial warming, 1990-2018 (mean of HadCRUT4, GISS and NCEI datasets). Even assuming the lesser of the two intervals of global-warming predictions in IPCC (1990), and even assuming that the terrestrial temperature record is not itself exaggerated, observed warming is scraping along the bottom of the interval.

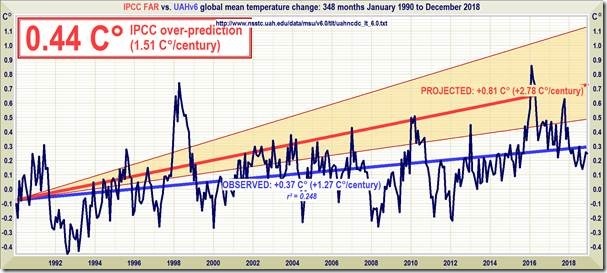

Fig. 3. Lower-troposphere warming (UAH), 1990-2018, is well below even the lower bound of the models’ projections on which IPCC (1990) made its forecast of medium-term global warming.

Hausfather et al. make it appear that the models have been accurate in their projections by comparing the observed warming with the projection by the energy-balance model in IPCC (1990). However, IPCC based its original projections, as it does today, on the more complex and more exaggeration-prone general-circulation models:

Fig. 4. Were it not for the 2016 El Niño, IPCC’s original medium-term prediction, made in 1990, would be still more excessive than it is.

Notwithstanding the repeated exaggerations in the general-circulation models’ projections, exaggerations that Hausfather et al. have in effect sought to minimize, the modelers continue to flog the dead horse Global Warming by making ever more extreme projections:

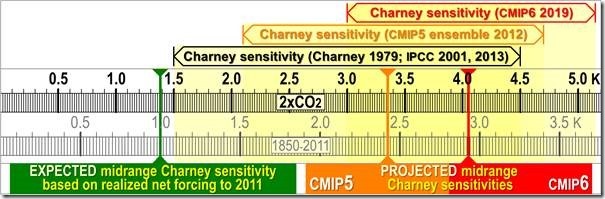

Fig. 5. Charney-sensitivity projections in 21 models of the CMIP6 ensemble.

In 1979 Charney had predicted 2.4 to 3 K midrange equilibrium global warming per CO2 doubling. IPCC (1990) chose the higher value as its midrange prediction. Now, however, the CMIP6 models are taking that midrange prediction as their lower bound, and their new midrange projection, shown above, is 4.1 K.

Since the warming from doubled CO2 concentration is roughly the same as the warming to be expected over the 21st century from all anthropogenic influences, today’s general-circulation models are in effect projecting some 0.41 K/decade of warming. Let us add that to Fig. 4 to show how excessive are the projections on the basis of which current policymakers and banks are refusing to lend to third-world countries for urgently-needed electrification:

Fig. 6. Prediction vs. reality, this time showing the implicit CMIP6 prediction.

Line-graphs such as Fig. 6 tend to conceal the true extent of the over-prediction. Fig. 7 corrects the distortion and shows the true extent of the over-prediction:

Fig. 7. Projected midrange Charney sensitivities (CMIP5 3.35 K, orange; CMIP6 4.05 K, red) are 2.5-3 times the 1.4 K (green) to be expected given 0.75 K observed global warming from 1850-2011 and 1.87 W m–2 realized anthropogenic forcing to 2011. The 2.5 W m–2 total anthropogenic forcing to 2011 is scaled to the 3.45 W m–2 estimated forcing in response to doubled CO2. Thus, the 4.05 K CMIP6 Charney sensitivity would imply almost 3 K warming from 1850-2011, thrice the 1 K to be expected and four times the 0.75 K observed warming.
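The arithmetic behind the Fig. 7 caption can be checked with a few lines of Python. This is only a sketch: it assumes, as the caption does, that warming scales linearly with forcing, and the variable and function names are illustrative rather than taken from any published code.

```python
# Figures quoted in the Fig. 7 caption (names are illustrative assumptions).
OBSERVED_WARMING_K = 0.75    # K, observed warming 1850-2011
REALIZED_FORCING = 1.87      # W/m^2, realized anthropogenic forcing to 2011
TOTAL_FORCING = 2.5          # W/m^2, total anthropogenic forcing to 2011
DOUBLED_CO2_FORCING = 3.45   # W/m^2, estimated forcing from doubled CO2
CMIP5_ECS = 3.35             # K, midrange Charney sensitivity
CMIP6_ECS = 4.05             # K, midrange Charney sensitivity


def scale(warming_k, from_forcing, to_forcing):
    """Rescale a warming figure, assuming warming is proportional to forcing."""
    return warming_k * to_forcing / from_forcing


# Sensitivity implied by observed warming and realized forcing.
expected_sensitivity = scale(OBSERVED_WARMING_K, REALIZED_FORCING, DOUBLED_CO2_FORCING)

# Warming to 2011 implied by each sensitivity, given the total forcing.
expected_warming_2011 = scale(expected_sensitivity, DOUBLED_CO2_FORCING, TOTAL_FORCING)
implied_warming_cmip6 = scale(CMIP6_ECS, DOUBLED_CO2_FORCING, TOTAL_FORCING)

print(f"Expected Charney sensitivity: {expected_sensitivity:.2f} K")
print(f"CMIP5 / expected:             {CMIP5_ECS / expected_sensitivity:.2f}")
print(f"CMIP6 / expected:             {CMIP6_ECS / expected_sensitivity:.2f}")
print(f"Expected warming to 2011:     {expected_warming_2011:.2f} K")
print(f"CMIP6-implied warming:        {implied_warming_cmip6:.2f} K")
print(f"CMIP6-implied / observed:     {implied_warming_cmip6 / OBSERVED_WARMING_K:.2f}")
```

Under these assumptions the expected sensitivity comes out near 1.4 K, the CMIP6-implied warming to 2011 near 2.9 K, and the ratio to observed warming close to four, which matches the rounded figures in the caption.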

Though the analysis here is simple, it is just complicated enough to go over the heads of scientifically-illiterate politicians easily swayed by climate Communists who menace their reputations if they dare to join us in speaking out against the Holocaust of the powerless.

Let me conclude, then, by simplifying the argument. It is what is not said in any “scientific” paper about global warming that is most revealing. It is what is not said that matters. I cannot discover any paper in which the ideal global mean surface temperature is stated and credibly argued for.

The fact that climate “scientists” do not appear to have asked that question – or, as Sherlock Holmes would put it, that the dog did not bark in the night-time – demonstrates that the global-warming issue is political, not scientific.

The fact that the answer to that question is unknown demonstrates that there is no rational basis for doing anything at all about the generally warmer weather which is proving most beneficial where it is occurring fastest – in the high latitudes and particularly at the Poles.

There is certainly no case, scientific, economic, moral or other, for denying electrical power to the 1.2 billion who do not have it, and who die on average 15-20 years before their time because they do not have it.

I don’t know what the fuss is about. If the observations don’t match the models, can’t we just change the observations until they do?

You think like Michael Mann. Is that you?

Michael Mann, Hide the Decline

The models all assume that natural variability is not significant.

This has never been proven and renders model projections meaningless.

Observational evidence such as the Pause says that natural variability is significant and not accounted for in model physics.

The assumption is not just “unproven”, it’s contradicted by the evidence.

We’ve had 3 bouts of warming in the last 3000 years where temperatures got well above what they are currently, all without the benefit of any extra CO2.

You can get a job with the IPCC with that attitude!

Or just stop looking out the window and look at the computer screen. Oh wait…

They do match.

Lord Monkfish, earl of obfuscation refuses to share the data he used and the code he used to generate his charts.

Maybe he will share it here.

1. The ACTUAL DATA used in making the charts

2. The actual code used in making the charts.

pretty simple.

He won’t.

Mosh, setting all the charts aside for the moment, his main claim seems to be this:

“Hausfather et al. make it appear that the models have been accurate in their projections by comparing the observed warming with the projection by the energy-balance model in IPCC (1990). However, IPCC based its original projections, as it does today, on the more complex and more exaggeration-prone general-circulation models.”

Do you know if this is true?

Thanks as always for your contributions,

w.

Hi Willis, that is not accurate. The summary for policymakers of the IPCC FAR only featured the simple energy balance model. In fact, the AR4 was the first IPCC report to feature the multimodel mean and spread in the summary for policymakers; all prior reports used a simple EBM tuned to reflect the more complex GCMs. This is all discussed rather straightforwardly in our paper. For this reason the main text of our paper focuses on the primary projection featured in each IPCC report, at least before the AR4. As Monckton has discovered, we also looked at individual GCMs in the first three IPCC reports in the supplementary materials.

Part of the reason EBMs are used in the summary projections is because the early GCM runs didn’t use particularly realistic forcing assumptions. Many of the IPCC SAR GCMs, for example, used a simple assumption of 1% increasing radiative forcing from 1990 (considerably higher than what actually happened), and many did not include aerosols.

Hope that helps,

-Zeke

some of the models were accurate…

…but we didn’t know which ones for 50 years

they can’t predict crap

Thanks, Zeke, that answers my question.

Best to you, my friend,

w.

mosher,

Why do you never say that about Jones or Mann?

* Why should I give sources of data and code if you are only going to try and find something wrong with it? * Phil Jones

Court Battle: Michael Mann Losing, Gives Tim Ball ‘Concessions’

http://bit.ly/2HbByrK

“…Seems more like it is Mann who is assaulting science. If temperatures have risen “dramatically” and Mann’s work is “key” to proving it then journalists should do their job and challenge him for cynically keeping secret his disputed R2 regression data.”

Then, it seems your fellow Alarmists don’t need no stinkin’ data, so why bother asking for it, Steve?

“The data doesn’t matter. We’re not basing our recommendations on the data. We’re basing them on the climate models.”

– Prof. Chris Folland, Hadley Centre for Climate Prediction and Research

You should be complaining about these people who won’t release data, sources and codes. They are the powerful ones who have major impacts on the science of climate change but these scientists hiding their data, sources and code never bother you. Why not?

Because climate realists (legitimately) raise questions of due diligence and transparency, it is a fair criticism if they don’t consistently model their own (valid) principles.

Mosh, if you had something valid to say you wouldn’t have needed to resort to ad hominem. I’d say you lose credibility by doing so, but that ship sailed ages ago, as you’ve long since run out of credibility to lose. Sad. How about you make an on-topic post for once (instead of your usual ad hominem drive-by) and address the points Lord M made? What’s that? You can’t? Yeah, we all saw that loud and clear already, and no amount of ad hominem drive-bys on your part can hide that fact.

He’s using your data.

Indeed. Every one of those charts appears to already say where the data came from (either directly on the chart or in the text immediately below it). It’s all from datasets that are publicly available or already published by others. The English major has all he needs to know to try and replicate those charts, but he doesn’t even try. Says it all, really.

In response to RoHa:

“I don’t know what the fuss is about. If the observations don’t match the models, can’t we just change the observations until they do?”

Let’s change this to:

I don’t know what the fuss is about. If the observations don’t match the models, can’t we just change the MODELS until they do?

Of course, that would make the models’ predictions less scary, with less incentive to push massively expensive socialist schemes to deprive the world of cheap energy, in order to avoid a slower predicted “global warming” which would not be catastrophic. Most people would be happy about that, but what happens to the grant money for the climate elites?

You are not suggesting that the models could be wrong, are you?

RoHa,

Climate ‘science’ frequently does just that.

In genuine science, when a hypothesis does not match the observations, the hypothesis is changed. In global warming, the opposite occurs.

Have I got this right?

The energy balance model is fairly close to the observations.

Hausfather says this shows that the models are accurate.

The alarmists use the general circulation models.

Those models exaggerate warming.

But the alarmists will use Hausfather to imply their GC models are accurate.

Zeke’s paper was mainly about the EB models, because they have a longer prediction run. But on the GCMs, from this article’s Fig 1, the SAR predictions were high. FAR was above observed, but within error range. And TAR seems on average neither high nor low.

…. but within HUGE error range.

Fixed for you, Nick!

And only just. When it cools this/next year what will they do?

Yep.

The models have actually predicted that temperatures have reduced over the last 10 years.

Or that they have remained completely flat for the last 15 years.

The error range is so large temperatures have zigzagged all over the place.

All equally valid.

The error range of trend is a function of time since the prediction. If the time is short, the range is wide. Not a fault of the model; just trying to evaluate on too short a time. That is why Zeke’s paper was actually about the success of the older EB models. We have had much longer to evaluate them, so the error ranges are less.
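A rough sketch of why the error range narrows with a longer evaluation period: for an ordinary least-squares trend fitted to annual values, the standard error of the slope falls steeply as years are added. The code below assumes independent year-to-year noise and a hypothetical scatter of 0.1 K; real temperature series are autocorrelated, so actual uncertainties would be wider than these figures.

```python
import math


def trend_standard_error(n_years, noise_sd_k):
    """Standard error (K/year) of an OLS trend fitted to n_years annual values,
    assuming independent noise with standard deviation noise_sd_k."""
    mean_t = (n_years - 1) / 2
    # Sum of squared deviations of the time axis about its mean.
    sxx = sum((t - mean_t) ** 2 for t in range(n_years))
    return noise_sd_k / math.sqrt(sxx)


NOISE_SD_K = 0.1  # hypothetical year-to-year scatter, chosen for illustration

for n in (10, 20, 30, 50):
    se_per_decade = 10 * trend_standard_error(n, NOISE_SD_K)
    print(f"{n:2d} years: trend uncertainty roughly +/-{2 * se_per_decade:.3f} K/decade (2 sigma)")
```

Because the sum of squared time deviations grows roughly as the cube of the record length, doubling the evaluation period cuts the trend uncertainty by nearly a factor of three in this idealized case.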

Nick, you know that politicians will only jump on the headline. They – and many of those in XR – have no idea of the differences between EB and GC models. All that will come out of this paper is a headline that ‘the models are right’.

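While I’m here, one of the last paras in CM’s post pointed out that:

“I cannot discover any paper in which the ideal global mean surface temperature is stated and credibly argued for.”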

Which is a question I posed nearly twenty years ago – to which I have never found an answer. Do you have an answer to it? (And don’t start with the IPCC’s ridiculous and politically motivated 1.5 Deg C ‘tipping point’)

“Do you have an answer to it?”

No. It isn’t a scientific question. Climate science can tell you what the climate will be (subject to scenario). It can’t tell you whether you will like it.

The fact is that there will be winners and losers. Arrhenius, in Sweden, thought warming would be a good thing. Watching our forests burn, I am not so sure.

Nick Stokes January 13, 2020 at 6:16 am

It’s pretty well established that a) a warmer world is a wetter world, and b) the slight warming has NOT increased the number or severity of droughts.

w.

The standard deviation of the GC models’ outputs, i.e. the “error ranges”, is highly meaningless because they are not random samples of the same quantity.

Absolutely disgusting Mr Stokes to equate the bush fires in your home country with Climate Change.

Do you not know any of your own country’s history?

You don’t know about the previous fires of 1911, 1939 and 1974?

Also typical that you refuse to answer the question as asked, just like every other climate activist.

Rainfall distributions are changing and variability is likely to increase (more droughts and more extreme rainfall events). Both the Indian Ocean Dipole and the Southern Annular Mode have been shown to be affected by global warming which will result in drier summers for southern Australia.

On the contrary, it is disgusting that certain politicians will not equate the Australian bush-fires with climate change as that is the root cause for the unprecedented intensity of these fires.

Willis,

“It’s pretty well established that a) a warmer world is a wetter world, and b) the slight warming has NOT increased the number or severity of droughts.”

Yes, on average. But not locally. SW Australia was predicted to become drier, and has, due to the westerly winds not coming so far north in winter. SE Australia is not so clear, and the north is getting wetter because of the stronger monsoons, which tips the overall average.

If there is a drought, as there has been here, then hot days are dangerous. Hotter days are worse.

A C Osborn January 13, 2020 at 8:13 am

“Absolutely disgusting Mr Stokes to equate the bush fires in your home country with Climate Change.”

Huh? NS is not the only one doing this. In fact I think you will find pretty much everyone with any clue has worked out that Australia’s record year of heat and drought contributed to this disaster of gigantic proportions. In fact, I would go as far as to say it is dishonest people like you who are the disgusting ones. Happy to ignore the obvious and twist the truth, just so you can maintain your selfish lifestyles…. just saying.

“The fact is that there will be winners and losers. Arrhenius, in Sweden, thought warming would be a good thing. Watching our forests burn, I am not so sure.”

More unscientific alarmism from Mr Stokes, a truly loyal doomer.

“Rainfall distributions are changing”

That’s been happening since forever.

There isn’t a shred of evidence that the current variation is outside the range of normal.

Nick writes

GCMs are no good for regional projections. The regional effects of climate in Australia are driven by ENSO, IOD and SAM. GCMs are terrible at modelling those too.

Claims about Australian fires are simply nonsense. How dry does it have to be to burn? It is simply wrong to claim that you need the fuel load to be dry as a result of a record drought to burn. It simply needs to be dry. The tenth driest year is dry enough to have very bad fires, probably the twentieth as well. And who cares about the year? It’s the recent past that matters in fires, not what happened eleven months ago.

I have a house in France, and by August the fire risk was very high. The last few months have seen record rain. So we could have had extremely bad fires but in a year that was, on average, average.

So don’t talk nonsense.

“GCMs are no good for regional projections.”

You don’t need a GCM to predict what will happen to the SW rains (or the monsoons). I told the pre-GCM story here.

“…You don’t need a GCM to predict what will happen to the SW rains (or the monsoons). I told the pre-GCM story here…”

GCMs SHOULD be able to predict and simulate them. I

Your pre-GCM story is just so interesting. Anyone could look at the historical data leading up to 1976 and predict what would happen based on the long-term trend.

No. Here is the plot to end of 1975 (latest data then available). Not at all obvious where it is going. Certainly not a big drop around the corner.

That argument doesn’t explain why Victoria or Tasmania are drier than normal. It’s easy to make up explanations in hindsight or ones that might come true over a few decades. The same could be said if the SAM stayed further South. Or indeed interactions between ENSO, IOD and SAM conspired to dry out some regions of Australia over a period.

For example from https://agupubs.onlinelibrary.wiley.com/doi/full/10.1002/2016GL072353

And so to predict widening over Australia would be based on the assumption of global widening. Or lucky in hindsight.

“It’s easy to make up explanations in hindsight”

It wasn’t hindsight. And it came true rather suddenly (an element of chance there). And it does suggest that all places getting rain from westerlies might be affected. That includes Tasmania and a lot of Victoria.

That is way too far a shift. A much more likely explanation is that ENSO/IOD/SAM interactions over the period dried out the regions.

Besides, to say “climate change” caused the drying over maybe a dozen event interactions, because they happen over multiple years, is not enough events IMO. It’s one thing to look at 10k daily readings and see a trend but another entirely to see a handful of longer term events and call climate change on those.

Gator

“The fact is that there will be winners and losers. Arrhenius, in Sweden, thought warming would be a good thing. Watching our forests burn, I am not so sure.

More unscientific alarmism from Mr Stokes, a truly loyal doomer.”

How is watching your country burn and commenting on it being an alarmist? I get that people who make scary predictions could be termed alarmist, but this is happening now. And it is seriously serious. Anyone who minimises this shocking natural and human tragedy needs their head read.

The fires have nothing to do with climate change. The sky is not falling.

Willis Eschenbach January 13, 2020 at 9:06 am

It’s pretty well established that a) a warmer world is a wetter world, and b) the slight warming has NOT increased the number or severity of droughts.

—

Long-lived GBR coral cores going back continuously for 600 years viewed under UV light show Australia during the LIA had very weak summer wet seasons, which routinely failed, and QLD was dominated by a 300 year period of droughts which of course hasn’t been experienced since European settlement.

The coral shows these harsh dry conditions fortunately ameliorated in the decades just prior to Cook’s mapping of the east coast at the end of the LIA. So we know that cooler equals lower rainfall, decades of drought, and vegetation atrophy resulting in aeolian erosion.

“In fact I think you will find pretty much everyone with any clue has worked out that Australia’s record year of heat and drought contributed to this disaster of gigantic proportions.”

I think just about everyone can agree on that.

What we don’t agree on is the cause of the heat and drought. You think CO2 is causing all these problems, but there is no evidence to support that claim. We can’t even say how much warmth CO2 is adding to the atmosphere right now, if any (after feedbacks), much less connect CO2 to a particular weather event. There are natural variations in the Earth’s weather that could easily be the cause of Australia’s current problems, and probably are the cause, imo, since I see no evidence for a CO2 role.

Perturbations upon perturbations. Nature, for her part, denies the consensus. However, with regular injections of brown matter (“fudging”), the models (“hypotheses”) are retrained, and forced to reach an anthropogenic consensus that could plausibly approximate reality, and thereby – garner – democratic support.

Okay

The x-axis in Lord M’s Fig 1 is a puzzle. It seems to be time, but I think the models are just grouped as FAR, SAR and TAR and spaced out arbitrarily. But the “observed reality” is a line with error bars. Does this mean a continuous variation of trend to present, with the axis as starting year? Is that really linear?

According to the text below it, that is from Hausfather et al. 2019

Looking at Hausfather et al. (2019), it’s obvious it is the same axis, except grouped into three time zones: IPCC 1st assessment, 2nd assessment and 3rd assessment. The blue, being observation, is the same as in Hausfather et al. (2019). If you wanted the red node model names, I guess you could squeeze them in next to each node.

In fact it seems to be a version of Fig S5 from the SI. A line has been added to join the few blue dots, which seems to imply that the x-axis is time, or some continuum variable. But it is just the sequence of the models. It doesn’t matter very much because the slope of the blue line is small. I have no idea where he gets the error bars on the added line.

I see now that he has just drawn a line through the three blue dots, which do coincidentally align, and other lines through the error bars. OK, but it gives the wrong idea. There isn’t a linear behaviour implied by the graph.

It isn’t implying anything linear; it is just showing the range. Are you really trying a Stokes deflection here?

He has adjusted the column widths so they line up to extend the line. If you don’t like that, then extend each of the blue bar limits as horizontal lines right across the graph. What it shows you is that the F1 range FITS in S2, and the F1 and S2 ranges both FIT in T3.

So the observed-reality range has got wider, which is all it shows no matter how you draw it and what the comment says … doh, real hard.

From the paper “Physics-based models provide an important tool to assess changes in the Earth’s climate due to external forcing and internal variability”

What physics based models?

We don’t have the physics for clouds. They’re a parameterised fit. Tell me, Nick, how can a calculation that includes a component that is fitted be called “physics-based”?

TTTM,

He’s actually talking about the EB (energy-balance) models, which are the main focus of his paper.

The main focus? How?

The paper explicitly says

They also said (and Monckton quoted)

I mean, really? That statement is pure weasel. It leaves the impression the model projections are “correct” except the future emissions that are unknown. Oh and “other factors affecting climate” which also excludes issues with the models.

The whole thing is an attempt at justification of the models. The use of the term “physics-based” is just an attempt to create a public illusion that they’re correct because “physics”

“The main focus? How?”

Explaining the rationale for the paper, it says:

“However, to-date there has been no systematic review of the performance of past climate models, despite the availability of warming projections starting in 1970.

This paper analyses projections of global mean surface temperature (GMST) change, one of the most visible climate model outputs, from several generations of past models.”

The methods section begins:

“We conducted a literature search to identify papers published prior to the early-1990s that include climate model outputs containing both a time-series of projected future GMST (with a minimum of two points in time) and future forcings (including both a publication date and future projected atmospheric CO2 concentrations, at a minimum).”

Most of the paper is justifying the current crop of models. This paper may claim to be about evaluation of the older models but a lot of it is not.

For example

They’re setting a scene and creating a new meme here, Nick.

Do you remember when the original meme was “We know anthropogenic CO2 caused the warming because the models say so” and nobody seriously questioned them at that time. At least that’s my recollection.

Now they’re attempting to build on that by asserting they’re correct and saying “other human factors” can throw out the projections. Control runs prove the models can only show warming with the CO2 and therefore must be the cause. That’s not proving anything.

Meanwhile most skeptics are back at questioning how much of the observed warming is natural and how much is caused by CO2.

There is way too much emphasis on global values.

The GCMs fail far worse regionally and locally. They’re utter garbage – temperature, precipitation, clouds…you name it. Adding that all up to get a global temperature anomaly that is reasonable is…well, utter garbage.

And what is the standard deviation of garbage?

Crumpled-up wrappers on the minus side, used coffee-grounds on the plus side.

I think I can simplify this: in order to create a computer model that is predictive and accurate, you must have a very strong understanding of that which you are modelling. Then there must be a way of testing the model for its accuracy. Don’t get me wrong: computer models can be very useful; for instance, in engineering they are used on many things. Jet engines, for instance; I’m very glad, though, that any design so produced is tested to destruction.

So we model something that we don’t really have much understanding of, and then we find that it is untestable. In other words, the scientific method is inapplicable. We’re going to rely on that?

Mike,

“Don’t get me wrong: computer models can be very useful; for instance, in engineering they are used on many things.”

Unfortunately, not always in engineering, either, with disastrous results, of course.

Anonymous Boeing engineer.

“The 737 Max was designed by clowns supervised by monkeys.”

In plain words, shareholder value.

It really amazes me how people who know nothing about economics or running large companies feel compelled to offer their ignorant opinions.

How much have the crashes improved shareholder value?

CEOs have to balance many interests. They need to make the planes as cheaply as possible so that the airlines will buy their models, and not the competition’s. But not so cheap as to compromise safety.

In most cases, designs err on the side of safety. Unfortunately, nobody is perfect. They screwed up big time, and it will take Boeing decades to recover from the economic damage. Bigger companies have failed from such mistakes.

Mark W

“It really amazes me how people who know nothing about economics or running large companies feel compelled to offer their ignorant opinions.”

And it constantly amazes me you feel you have the knowledge to comment on climate change…but it doesn’t stop you

It was bad programming that caused the planes to crash. If we cannot have reliable programming in flight systems how do we expect climate model programming to be good at predictions out decades?

It was bad engineering to rely on a single sensor.

Yes, not bad programming. I expect that the computer code does exactly what the system designers told the software guys to do. It was a bad system design, from its inception.

It was also bad business practice to not inform the pilots of the system, how it works, or how to turn it off. And as it was known that the pitot-static tube data disagree between the two sensors, why make the error message part of an extra-cost kit?

Mark W says , “it was bad engineering to rely on a single sensor”

Or on a single tree?

I have followed non-climate models for over three decades and wonder how the development occurred, statistical mismanagement, ease of computer access without understanding, whatever. In the early to mid 1990s I worked with three good modelers, open to alternative conclusions and reanalysis. Fisheries models, complex because they also rely on climate, have a similar failure rate. This is a second hand quote, haven’t chased down the original yet, but suspect that it is accurate.

“These models generate such masses of tabular data that they are as much a challenge to understand as the ocean itself. Consequently, the numerical results seldom get the detailed study, interpretation, and explanation they deserve. In the hands of some they are a wasteful exercise.”

In Stommel, H. 1987. The View of the Sea: a discussion between a chief engineer and an oceanographer about the machinery of the ocean circulation. Princeton Univ. Press.

For example: de Mutsert, K., J. H. Cowan, Jr., T. E. Essington and R. Hilborn. 2008. Reanalyses of Gulf of Mexico fisheries data: landings can be misleading in assessments of fisheries and fisheries ecosystems. Proceedings of the National Academy of Sciences 105(7): 2740-2744.

“I cannot discover any paper in which the ideal global mean surface temperature is stated and credibly argued for.”

Great point.

“I cannot discover any paper in which the ideal global mean surface temperature is stated and credibly argued for.”

According to an article published here at WUWT a few months ago, the Earth’s global temperature would have to exceed the “hottest year evah!” temperature of 2016 by 0.4C, to reach the “critical” tipping point of 1.5C above the global average from 1850 to the present.

The Global average temperature is currently 0.3C cooler than 2016, which makes us 0.7C away from the supposed tipping point of 1.5C above the 1850-to-present average.

Btw, the United States hit that so-called tipping point of 1.5C above the average from 1850 to the present, in 1934, when it was 0.4C warmer than 2016. We’re still here. No tipping point seen.

UAH satellite chart:

http://www.drroyspencer.com/wp-content/uploads/UAH_LT_1979_thru_December_2019_v6.jpg

“The Global average temperature is currently 0.3C cooler than 2016”

No, it is 0.034°C cooler. Easily second warmest, and very close to warmest. And that without an El Nino.

That is, the annual average for 2019 is 0.034°C cooler than annual 2016.

Man, that whole “tipping point” thing is such an emotive, alarmist expression too. You’ve got extremely questionable models being used to promote some unproven near-future apocalyptic scenario.

“Though the analysis here is simple, it is just complicated enough to go over the heads of” me.

Oh for a rewrite in plain English.

As for “I cannot discover any paper in which the ideal global mean surface temperature is stated and credibly argued for.”

The implicit temperature is that of circa 1850. It does not need stating when you assume the world was perfect then.

–

Nonetheless the takeaway is that Hausfather hangs his credibility on a very high and unrealistic figure of

“CMIP6 models are taking that midrange prediction as their lower bound, and their new mid range projection, shown above, is 4.1 K.”

That should go seriously amiss very, very quickly seeing it is starting underwater anyway.

Even his vaunted take downs of past temperatures cannot hide this degree of miscalculation*.

* being nice

“There is certainly no case, scientific, economic, moral or other, for denying electrical power to the 1.2 billion who do not have it, and who die on average 15-20 years before their time because they do not have it.”

–

Steady on.

You cannot backdate progress.

And you certainly should not promise people things just because it sounds good and feels right.

The majority of people live in tropical to temperate climates and electricity is not a panacea for that.

Overpopulation, lack of food, malaria, TB and lack of antibiotics. Cigarette consumption.

–

Life expectancy however is higher than it was 100 years ago even in the third world. Some resources do have limitations and complications with their extraction and use.

There is no argument that we and they could try to provide better living conditions and electricity to everyone in the world. They and you, go for it.

I am not stopping or denying them.

I am sure that the number of people on electricity now far outsizes the world population in 1850 and even 1940.

Perhaps they should all have cars, roads and lifetime pensions as well??

angech, I don’t understand your argument here.

Electricity is the friend of the poor housewife and the poor farmer, whether you live where it’s hot or cold. It’s always a good thing to have, for hundreds of reasons and uses. Plus it’s impossible to have a modern manufacturing economy without it.

Regardless of that, however, I think you are reversing Christopher Monckton’s argument.

He’s not saying we should PROVIDE cheap power to the masses.

He’s saying we should not DENY them cheap power through the insane war on fossil fuels.

Best to you,

w.

Well said, Mr. Eschenbach.

Without reliable, 24/7 electricity, we all would be back in that time of the “perfect”, per the IPCC, global mean temperature and we would all be living just like they did in 1850 as well.

The poor nations of the world need 24/7 reliable electricity and a grid that connects every home, just like the first world has. The CAGWers want to keep the poorest of the world in abject poverty, cooking over dung fires and without the means to have refrigerators, stoves, heat and air conditioning, manufacturing, and hospitals and clinics that are full-time and not just when the wind blows or the sun shines (think of having surgery performed on you and having the lights go out). Those are just a few of the benefits that we enjoy but that the IPCC and AGWers want to deny to the second and third world.

I’m sure it’s just an accident that the people they want to keep living in the 1850s instead of the 21st century, and whose populations they want to see decline, are all brown, black or yellow. It is just a coincidence, no?

Or, is it?

David Attenborough says sending food to famine-ridden countries is ‘barmy’

Veteran broadcaster has called for a debate on population control

http://bit.ly/2Okcchp

It will all be an historical footnote once next generation nuclear reactors come on line

I think air conditioning is a top 25 invention for all of humanity. I am sure people will argue 🙂 Poor people would love to have this “luxury.”

Try running air conditioning on solar panels.

I confess. I have solar panels, and I do run my AC from them. I don’t use the AC at night.

angech,

Electricity *is* a panacea for potable water. And potable water *is* one of the main things lacking in the third world and is a major contributor to lowered average lifespan.

You can add refrigeration for food.

Are you actually trying to argue that electricity doesn’t improve the lives of those who live in the tropics?

Brilliant post. Thank you sir.

The other question is this. If climate models have it figured out, why are there significant differences among climate sensitivity values that are computed from observations, from models, from observations constrained by models, and from models constrained by observations? And what is the correct value of climate sensitivity?

https://tambonthongchai.com/2020/01/09/agwmath/

Btw, I play golf with Lord XXXXXX XXXXXXX, a fine golfer and a fine human being. Not as well of course but he tolerates me.

Hello Mr Moderator

A brilliant solution!

Thank you very much

Chris also thanks you.

Chaamjamal, I happened to see your comments, and that was my solution. I was unwilling to get rid of the rest of your thoughts on the subject.

We are nothing if not a full-service website …

Best regards,

w.

Quote from Hausfather paper: “Climate models provide an important way to understand future changes in the Earth’s climate. ”

If this is the first sentence I read of this paper the BS flag is initiated already and the rest will require very close scrutiny. Even the IPCC admits the GCMs don’t do predictions but projections of possible scenarios. Studying their output leads to no knowledge of the future, just, hopefully, better understanding of the climate system. If Pat Frank’s work is to be trusted, as I believe it should be, the GCM outputs are incapable of giving us any information about the future.

DMA, thanks for your positive comment about my published work on the reliability of GCM air temperature projections.

The supposed uncertainty bars in Figure 1 above are purely fake. They show nothing but model precision; nothing about accuracy. Their implicit message is that climate modelers know nothing of physical error analysis.

Were they to include a true measure of accuracy, the uncertainty bars would go well beyond the margins of the chart.

Figure 5 includes further evidence of the fakeness. All those different climate models closely reproduce the 20th century temperature trend despite sensitivities that range between 2.3 and 5.6 K.

The models are tuned to reproduce the 20th century. Tuning makes the 20th century correspondence artificial. Model tuning both suppresses and hides the uncertainty of the projections by way of offsetting errors.

Offsetting errors do not improve predictive accuracy. I have yet to encounter a climate modeler who understands that (or perhaps who will admit to it). But they all make their living from within that state of ignorance.

Based on your work that indicates the GC models are in effect simple linear projections of carbon dioxide concentration, is it fair to conclude that the ranges in figures such as Figs. 5 and 7 are really just the individual model operators’ predictions about future CO2 versus time?

C,M — as regards your question: in effect, the answer is ‘yes.’

The air temperature projections are based upon CO2 growth scenarios. In effect, modelers (or the IPCC) suppose this or that trend in CO2 concentration, and then use it to calculate the supposed increase in air temperature.

The model projected air temperature trend exactly reflects a linear relationship with the proposed fractional CO2 growth, scaled by the simulated climate sensitivity of the model.

“Figure 5 includes further evidence of the fakeness. All those different climate models closely reproduce the 20th century temperature trend despite sensitivities that range between 2.3 and 5.6 K.

The models are tuned to reproduce the 20th century. Tuning makes the 20th century correspondence artificial.”

And the models are reproducing a bogus, bastardized 20th century surface temperature trend. The Climategate Charlatans created a bogus, bastardized 20th century global surface temperature chart, and now the Computer Climate Models are reproducing this manipulated, fraudulent temperature record!

Walter Scott: “Oh, what a tangled web we weave…when first we practice to deceive.”

It’s a joke to show a match between “observations” and the Global Climate Models. First of all, they are not matching “observations”; they are matching computer-generated numbers that distort the actual temperature observations, so they are matching their “garbage-in/garbage-out” Global Climate Models with the “garbage-in/garbage-out” fraudulent temperature record. And they match! What a surprise! They can make those computers jump through all sorts of hoops!

Charlatans in action, trying to fleece us out of our money by distorting the truth.

We’re incessantly told by climate scientists that “weather” is not climate, and that it takes averaging over many decades of observations to distinguish changes in climate, as opposed to merely the “noise” of weather. Assuming that’s the case, how could it be possible to determine whether the models are accurately forecasting changes in climate when the earliest models are only a few decades old? The models haven’t been at it long enough to have any confidence in their predictive ability. We’ll have to revisit this in a few centuries.

The other thing I wanted to mention was this little gem of a quote, which vitiates the whole purpose for which climate scientists use models:

“We find that climate models published over the past five decades were generally quite accurate in predicting global warming in the years after publication, particularly when accounting for differences between modeled and actual changes in atmospheric CO2 and other climate drivers.”

Catch that fallacy? Notice how they pulled a bait-and-switch from an introductory explanation of differing INPUT assumptions about emissions scenarios to instead emphasizing models whose OUTPUT of atmospheric CO2 concentration turned out to be relatively accurate. If you are only looking for correlation between temperatures and atmospheric concentration of CO2, then this procedure might be perfectly fine. Maybe, after all, natural warming is occurring and that warming is what is causing CO2 concentrations to follow (see Greenland ice core data).

But that’s not what the models are used for. The models are used by climate scientists to project the consequences of our EMISSIONS, not merely the consequences of a battery of differing potential abstract atmospheric CO2 concentrations. You have to group models by their emissions forecasts, limit your analysis to the entire set of models who correctly assumed the emission scenario the world actually followed, and find out the statistics on how accurate as a whole that set was. You cannot ex-post-facto filter your models by selecting all those that correctly forecast one OUTPUT (CO2 concentration), irrespective of differing input assumptions (emission rate), and on that basis draw a conclusion about those models’ predictive ability for a second output (temperature).

To put it bluntly, they cheated. By cherry-picking the models to emphasize those that happened to match actual atmospheric CO2 concentration trends, in a manner indifferent to whether those models assumed the correct input emission scenario, they rigged the analysis to get the results they wanted.

“Assuming that’s the case, how could it be possible to determine whether the models are accurately forecasting changes in climate when the earliest models are only a few decades old? “

Or, as the paper says:

“However, evaluating future projection performance requires a sufficient period of time post-publication for the forced signal present in the model projections to be differentiable from the noise of natural variability”

The emphasis of the paper is on evaluation of the older energy-based models, where there is now up to 50 years of prediction time. They are what are shown in the actual Fig 1 and Fig 2 of the paper. The plot here is from Fig S5 of the Supplementary Information.

“Catch that fallacy? Notice how they pulled a bait-and-switch from an introductory explanation of differing INPUT assumptions about emissions scenarios to instead emphasizing models whose OUTPUT of atmospheric CO2 concentration turned out to be relatively accurate.”

No, you have that the wrong way around. The OUTPUT of models is not a CO2 concentration. That is the scenario (the INPUT). The models calculated future climate according to an assumed progress of [CO2]. Science cannot calculate how much C will be burned in the future. That is a human decision.

All models up to at least Hansen 88, and probably up to TAR, used an assumption of [CO2] as the scenario. In earlier days there simply wasn’t data about emissions (tonnage), but there was a [CO2] record. You can’t usefully assume in the future what you don’t know in the past. Modern GCM’s often do incorporate a model converting emission tonnage to [CO2], but it isn’t their core function.

“The emphasis of the paper is on evaluation of the older energy-based models, where there is now up to 50 years of prediction time.”

Where the standard period of averaging state variables is thirty years to determine “climate” a mere fifty years isn’t nearly long enough to determine predictive ability of what changes in “climate” will result due to a change in one variable that is at best coarsely controlled. We’re talking centuries here.

“No, you have that the wrong way around. The OUTPUT of models is not a CO2 concentration. That is the scenario (the INPUT). ”

That’s not remotely true, and you know it. This is a direct quote from the paper at issue. “Model projections rely on two things to accurately match observations: accurate modeling of climate physics, and accurate assumptions around future emissions of CO2 and other factors affecting the climate.” The modelers assume an emissions level, and their models first rely on those assumed emissions levels to determine an associated level of GHG concentrations at those assumed emissions, and only then do they try to determine a resulting temperature series.

You don’t even have to take my word for it – you can take Gavin Schmidt’s (a coauthor) who specifically wrote that the paper tried to “account[ ] for differences between modeled and actual changes in atmospheric CO2 and other climate drivers.” You’re trying to now say that “changes in atmospheric CO2” aren’t modeled at all, despite this plain language, but are just some “input” to the model.

Hansen’s 1988 model, which this paper specifically cited, started with “emissions” scenarios – not CO2 concentration scenarios – and based on those scenarios, predicted a series of temperature predictions for each “emission” scenario. That paper specifically quantified the presumed rate of increase/decrease for all three “emissions” scenarios, and when the prediction for the scenario the world actually followed turned out to be wildly wrong, Hansen and his ilk deliberately obfuscated that fact by pretending that the prediction was merely for different possible sets of CO2 concentrations – which would have been an utterly useless scientific endeavor, and contradicted not only his Senate testimony but his original paper when he presented his temperature projections as a consequence of the “emissions” scenarios, and not some hypothetical number of uncontrollable CO2 concentrations.

You say that “[s]cience cannot calculate how much C will be burned in the future. That is a human decision.” That may be true, but it’s a dodge. Scientists can assume a range of scenarios for how much “C” is burned in the future, then when we actually know how much was burned, go back and compare what temperatures resulted in the real world against the modeled predictions for the particular scenarios closest to it. That would be the scientific way of testing the model, and thereby testing our understanding of the system being modeled.

You’d have us believe that you, and the authors of this paper, and all the other climate scientists don’t understand the difference between correlation and causation. If I do nothing but watch CO2 levels and temperatures rise and fall, without ever being able to demonstrate any numerical control over either of those two variables, I’m never going to scientifically prove causation, in either direction. I’m at best going to show a mere correlation.

The only scientific way of using a prediction to demonstrate understanding of how a physical system works is to predict a change that results from some input to the system that we as humans change. Thus, the measured outcome has to be matched against the predicted outcome of that thing that the experiment treats as a variable that can be changed. In this case, Hansen, the IPCC, and all other climate models start by assuming several “emission scenarios” and give a temperature prediction for each “emission” scenario. Those predictions fail. The climate scientists then grasp at pathetic excuses, or as in this case, they cheat by rigging the “study” or “analysis” to give them the result they wanted.

The instant these coauthors decided to limit their analysis to only model runs matching atmospheric CO2 levels that occurred after the predictions were made, they cheated. This analysis provides no information on the causal effect on temperatures of changing CO2 emissions, and no information on how well we understand how much CO2 emissions affect the climate.

Thus spake Zeke (as quoted above):

“Model projections rely on two things to accurately match observations: accurate modeling of climate physics, and accurate assumptions around future emissions of CO2 and other factors affecting the climate”

and it is absolutely true. You need those two things to match observations. And we don’t know what future emissions will be. The solution is widely discussed, and elementary. Multiple scenarios are calculated. None is a prediction (else why so many?). But there is a projection for each. Afterward, you have to decide which scenario played out, and see if the corresponding projection matched the observation. That is why, as Gavin said, you have to account for the difference between the CO2 rise that was modelled (scenario) and the actual change. Sometimes there was a scenario that fits well, otherwise you have to adjust expectation for the deviation.

Hansen was probably the first to do this systematically, but it is implicit in earlier work. No-one claims that their model tells you how much C will be burned in the future.

“Hansen’s 1988 model, which this paper specifically cited, started with “emissions” scenarios – not CO2 concentration scenarios “

This is just a quirk of language, as described by Steve McIntyre here:

“One idiosyncrasy that you have to watch in Hansen’s descriptions is that he typically talks about growth rates for the increment, rather than growth rates expressed in terms of the quantity. Thus a 1.5% growth rate in the CO2 increment yields a much lower growth rate than a 1.5% growth rate (as an unwary reader might interpret). For CO2, he uses Keeling values to 1981, then:…”

Hansen’s scenarios are absolutely described in terms of concentrations; Steve M set out and graphed the original numbers. Hansen never used or cited any emissions in tons, despite using language as if he was. Emission amounts were not generally available at the time.
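To make the increment-versus-quantity distinction in the quoted passage concrete, here is a minimal sketch. The starting concentration, the starting annual increment and the 30-year horizon are hypothetical round numbers chosen for illustration, not values taken from Hansen's paper; only the 1.5% rate comes from the quote.

```python
def grow_increment(c0, inc0, rate, years):
    """Concentration when the ANNUAL INCREMENT grows by `rate` each year."""
    concentration, increment = c0, inc0
    for _ in range(years):
        concentration += increment
        increment *= 1.0 + rate
    return concentration


def grow_quantity(c0, rate, years):
    """Concentration when the QUANTITY ITSELF grows by `rate` each year."""
    return c0 * (1.0 + rate) ** years


C0_PPM = 340.0        # hypothetical starting concentration
INC0_PPM = 1.5        # hypothetical starting annual increment
RATE = 0.015          # the 1.5% growth rate mentioned in the quote
YEARS = 30            # hypothetical horizon

by_increment = grow_increment(C0_PPM, INC0_PPM, RATE, YEARS)
by_quantity = grow_quantity(C0_PPM, RATE, YEARS)

print(f"1.5% growth of the increment: {by_increment:.1f} ppm")
print(f"1.5% growth of the quantity:  {by_quantity:.1f} ppm")
```

Under these assumptions the first reading ends up far below the second, which is the trap for the unwary reader that the quote describes.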

“Scientists can assume a range of scenarios for how much “C” is burned in the future, then when we actually know how much was burned, go back and compare what temperatures resulted in the real world against the modeled predictions for the particular scenarios closest to it. That would be the scientific way of testing the model, and thereby testing our understanding of the system being modeled.”

And that is exactly what they do. It is exactly what the bits you quote from Zeke and Gavin are describing.

The authors give the impression that the mismatch between forecasted CO2 concentrations and the concentrations that actually happened is because of emissions. But they don’t provide data on emissions at all; figures S3 and S4 show only *concentrations*.

Forecasted CO2 concentrations can turn out to be wrong for two reasons:

- Emissions forecasts were off. (Some emission forecasts definitely were wrong, but the authors provide no evidence on this.)

- The airborne fraction for CO2 implied by forecasts was wrong. Actually, there is accumulating evidence that the real-world airborne fraction is lower than in modern climate models, see for example https://www.nature.com/articles/s41467-019-08633-z

Whether the old climate models assessed by Hausfather used a “wrong” airborne fraction is unclear, but one cannot claim that if concentrations today are lower than forecast it’s because emissions turned out to be lower. At least, one cannot make that claim without providing some evidence.
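A rough sketch of why a concentration miss cannot by itself be blamed on emissions: the same shortfall can come from either of the two causes listed above. All emission totals and airborne fractions here are hypothetical; the only outside figure is the commonly quoted approximate conversion of about 2.13 GtC per ppm of atmospheric CO2.

```python
GTC_PER_PPM = 2.13  # approximate conversion, GtC of carbon per ppm of CO2


def concentration_rise_ppm(cumulative_emissions_gtc, airborne_fraction):
    """Rise in atmospheric CO2 (ppm) from emissions and the airborne fraction."""
    return cumulative_emissions_gtc * airborne_fraction / GTC_PER_PPM


# Hypothetical forecast: 300 GtC emitted, half of it staying airborne.
forecast = concentration_rise_ppm(300.0, 0.5)

# Case A: emissions came in lower than forecast, airborne fraction as assumed.
case_a = concentration_rise_ppm(240.0, 0.5)

# Case B: emissions exactly as forecast, but a lower airborne fraction.
case_b = concentration_rise_ppm(300.0, 0.4)

print(f"forecast rise: {forecast:.1f} ppm")
print(f"case A rise:   {case_a:.1f} ppm")
print(f"case B rise:   {case_b:.1f} ppm")
```

Both cases give the same rise, below the forecast, so concentration data alone cannot tell them apart. Emission figures are needed for that.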

The Hausfather paper does something very useful, which is to calculate the implied TCR of old climate models; it also shows several famous forecasts were unphysical or unrealistic, e.g. the three Hansen 1988 scenarios imply very different TCRs, which makes no sense if they come from the same authors and study. The implied TCRs also change dramatically from one IPCC report to the next.

The paper then makes the claim that, because the implied TCR of most old models falls within the confidence interval of the “observed” TCR, then these models’ projections were “accurate” (their word). This is where they go wrong; first and most obviously, the confidence interval is so wide that claiming “accuracy” just because a value happens to fall inside is absurd. Second, the “observed” TCR bounces up and down precisely because they calculate a different value for each period, instead of just getting one value for all of the thermometer record.

You mentioned in another comment that for the earliest models there are now 50 years of data available, but the longest period used by Hausfather is only 40 years long. Of the eight models considered by Hausfather that made forecasts out to 2017, in five cases the “observed” TCR is calculated with 30 years’ worth of data or less, and in one case only with 17 years. Imagine if someone tried to publish a paper claiming to have calculated TCR from “observations” and used only data from 2001 to 2017. It would be desk-rejected, and for good reason – the period is not nearly long enough to estimate climate sensitivity, whether transient or equilibrium.
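For readers who want to see how window length matters, here is a small sketch. It assumes the implied TCR is computed as F_2x times the regression slope of temperature on forcing, with F_2x taken as 3.7 W/m² per doubling; both are my assumptions about the method, and the helper names are made up. The series is synthetic, with a known underlying ratio plus a small alternating wiggle standing in for natural variability. It is not data from the paper.

```python
F_2X = 3.7  # W/m^2 per doubling of CO2 (assumed value)


def ols_slope(x, y):
    """Ordinary least-squares slope of y regressed on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    return sxy / sxx


def implied_tcr(forcing, temperature):
    """Implied TCR in K, assuming TCR = F_2x * dT/dF."""
    return F_2X * ols_slope(forcing, temperature)


# Synthetic 30-year series: true ratio 0.5 K per W/m^2, plus a +/-0.05 K wiggle.
TRUE_RATIO = 0.5
forcing = [0.04 * year for year in range(30)]
temperature = [TRUE_RATIO * f + 0.05 * (-1) ** i for i, f in enumerate(forcing)]

true_tcr = F_2X * TRUE_RATIO
tcr_30yr = implied_tcr(forcing, temperature)
tcr_10yr = implied_tcr(forcing[:10], temperature[:10])

print(f"true TCR:         {true_tcr:.2f} K")
print(f"30-year estimate: {tcr_30yr:.2f} K")
print(f"10-year estimate: {tcr_10yr:.2f} K")
```

With the same wiggle, the 10-year window lands further from the underlying value than the 30-year window does, which is the concern about short evaluation periods raised above.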

“but the longest period used by Hausfather is only 40 years long”

Yes, you’re right. We have 50 years of data, but not 50 years of prediction to test.

But it all starts from the assumption that CO2 does “affect the climate”. So far that is only an assertion; nobody has yet come close to incontrovertible evidence that there is a causal relationship between CO2 and changes to the climate.

In so far as any such evidence does exist it is the reverse of that assertion, ie that changes to the climate affect CO2 levels. Observations indicate a time lag between temperature increase and increased CO2, which is logical since increased sea temperatures will lead to increased CO2 outgassing from the oceans, not the other way round.

The anthropogenic contribution is nowhere near enough to account for the 30% increase in atmospheric CO2 over the last century; increased temperatures following the end of the LIA, ie natural climatic variation, which climatologists are so keen to dismiss, are a much more likely culprit.

I think you can find calculations that show your third paragraph is incorrect (it’s closer to 40% total increase) and actually about half of our emissions are taken up by sinks. Perhaps you are considering assumptions with which you don’t agree.

Hansen’s 1988 model, which this paper specifically cited, started with “emissions” scenarios – not CO2 concentration scenarios – and based on those scenarios, predicted a series of temperature predictions for each “emission” scenario. That paper specifically quantified the presumed rate of increase/decrease for all three “emissions” scenarios, and when the prediction for the scenario the world actually followed turned out to be wildly wrong, Hansen and his ilk deliberately obfuscated that fact by pretending that the prediction was merely for different possible sets of CO2 concentrations – which would have been an utterly useless scientific endeavor, and contradicted not only his Senate testimony but his original paper when he presented his temperature projections as a consequence of the “emissions” scenarios, and not some hypothetical number of uncontrollable CO2 concentrations.

No, you’re completely wrong. Hansen’s projections of CO2 gave the following values for 2019:

Scenario A 412.8 ppm, B 406.2 ppm, C 367.8 ppm

Annual CO2 2019 Mauna Loa 411.4 ppm

I wouldn’t call that ‘wildly wrong’ for over 30 years ago!
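The comparison is easy to check. This sketch takes the four numbers exactly as quoted in the comment above (treated as ppm) and prints how far each scenario lies from the Mauna Loa annual value; nothing else is assumed.

```python
# Values as quoted in the comment above (CO2 for 2019, taken to be ppm).
hansen_2019_ppm = {"A": 412.8, "B": 406.2, "C": 367.8}
mauna_loa_2019_ppm = 411.4

# Difference of each scenario from the observed value, rounded to 0.1 ppm.
differences = {
    name: round(value - mauna_loa_2019_ppm, 1)
    for name, value in hansen_2019_ppm.items()
}
closest = min(differences, key=lambda name: abs(differences[name]))

for name, diff in differences.items():
    pct = 100.0 * diff / mauna_loa_2019_ppm
    print(f"Scenario {name}: {diff:+.1f} ppm ({pct:+.2f}%)")
print("Closest scenario:", closest)
```

On the quoted figures, Scenario A is 1.4 ppm above the observed value, B is 5.2 ppm below and C is 43.6 ppm below.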

The emissions were wrong (emissions were on the very high end) and the actual troposphere T measurements (where GHG T effect should be measured) are on the very low end.

Conclusion – effect of emissions on T is very much overinflated relative to observations of emissions and T.

David A January 14, 2020 at 12:59 am

The emissions were wrong (emissions were on the very high end)

You appear to have reading comprehension issues. Hansen’s projections showed that the actual observed CO2 was slightly lower than his Scenario A.

If you program the physics into the model, and the model displays an Equilibrium Climate Sensitivity (ECS) as a result of that physics, then we say that the ECS is emergent. On the other hand, if the ECS is merely a product of tuning …

If we had a really clear understanding of the models, we could say with some confidence if their resulting ECS is emergent or not. What we can say with some certainty is that GCMs are tuned (link). As far as I can tell, Edward Norton Lorenz, arguably one of the fathers of climate modelling, would say that the tuning process invalidates the ECS provided by the models.

The models are all tuned classical models; none of them considers the QM domain.

Let’s give you a different way to look at the problem. The 2007 mass of CO2 on Earth is 2.996×10^12 tonnes = 2.996×10^15 kg. The total energy, most of which is in the quantum domain, can be calculated as E = mc². E = 2.996×10^15 × (3×10^8)² ≈ 27×10^31 joules.

Let’s put that against the incoming solar energy to Earth of 1.75×10^17 joules per second.

60 s × 60 min × 24 hr × 365 = 31,536,000 seconds per year

E sun per year = 31,536,000 × 1.75×10^17 joules per second = 55.188×10^23 joules per year

It takes roughly 50 million years of solar energy to equal the energy in the 2007 CO2 in the atmosphere.

There is no direct connection between the two numbers it is just giving you scales of energy in each domain.
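For anyone who wants to repeat the scale comparison, this sketch redoes the multiplication and division using only the constants quoted in the comment (CO2 mass, a rounded speed of light, and incoming solar power). The variable names are mine.

```python
# Constants exactly as quoted in the comment above.
CO2_MASS_KG = 2.996e15          # 2007 mass of CO2, in kg
SPEED_OF_LIGHT_M_S = 3.0e8      # rounded value used in the comment
SOLAR_POWER_W = 1.75e17         # incoming solar energy, joules per second

SECONDS_PER_YEAR = 60 * 60 * 24 * 365

# Rest-mass energy of the CO2, E = m * c^2.
rest_energy_j = CO2_MASS_KG * SPEED_OF_LIGHT_M_S ** 2

# Solar energy received over one year.
solar_per_year_j = SOLAR_POWER_W * SECONDS_PER_YEAR

# Years of sunlight needed to match the rest-mass energy.
years_equivalent = rest_energy_j / solar_per_year_j

print(f"rest energy of CO2:   {rest_energy_j:.4e} J")
print(f"solar energy per year: {solar_per_year_j:.4e} J")
print(f"years of sunlight:     {years_equivalent:.3e}")
```

On these inputs the ratio comes out at tens of millions of years. As the comment itself says, it is only a comparison of scales, not a physical link between the two quantities.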

OK … if we convert the atmospheric CO2 to energy we get a lot of energy. As far as I can tell, that isn’t an issue that affects the climate.

I don’t see how your comment is relevant to my comment on whether the ECS values provided by the GCMs are, in any way, valid.

What, you think it just sits there and you add and subtract energy through the system without it having its own pathways and effects? Wow, go classical physics: all that energy just sitting there like a big dead lump.

Pressure, temperature changes, ionization and radiation all have effects; that is what makes putting an exact number on the greenhouse effect so hard.

Even with the best attempts to measure it directly, all you can end up with is the effect of all the greenhouse gases, obtained by subtracting the things you can measure:

https://www.carbonbrief.org/new-study-directly-measures-greenhouse-effect-at-earths-surface

No you don’t get a lot of CO2 energy because the majority of that energy originates from LWIR at -50C and the Sun’s Energy comes from much higher actual energy of UV, SW and the rest of white light.

One lot of energy is coming in and the other is going out.

See

https://wattsupwiththat.com/2019/06/07/latest-global-temp-anomaly-may-19-0-32c-a-simple-no-greenhouse-effect-model-of-day-night-temperatures-at-different-latitudes/#comment-2719642

Personally I’m waiting for level of atmospheric CO2 correlates to sea level as that’s the one true temperature proxy to rule them all. Not interested in Mike’s Nature trick and the travesty they couldn’t explain what started all their tea leaf readings fed into the computers. The Noah’s Ark dooming rerun meme aint about H2O bubbling up out of the mantle or coming in from outer space so you reckon you’ve got a handle on CO2 down the ages then show me the correlation or desist shucksters.

Lord Monckton’s final sentence: “There is certainly no case, scientific, economic, moral or other, for denying electrical power to the 1.2 billion who do not have it, and who die on average 15-20 years before their time because they do not have it.” does a great job of summarizing the current situation. Where is the proof basis for the Climate Alarm? We have yet to see any proof that CO2 drives temperature, whereas it is easy to demonstrate that temperature drives CO2 emissions.

Science and its hypotheses live or die from an analysis of their uncertainties – like error bounds.

The hard scientist would not write that climate models “… were generally quite accurate in projecting global warming …” as Hausfather put in his plain-word abstract.

The hard scientist might write that climate models “… predicted a rise of X +/- Y degrees C per decade, where Y shows the spread of all model runs …” Better than the spread of model runs would be an honest range of the calculated uncertainty of every model run, including those held back from inclusion in presentations like the CMIP series. No cherry picking without full explanation, please.

The inability or unwillingness of modern climate researchers to use proper conventions must represent one or more of three factors: ignorance of convention, purposeful avoidance of convention, or deliberate intent to debase conventional, successful science. Geoff S

You nailed it, Geoff.

Thanks, Pat.

So did you, before me. Geoff

“The inability or unwillingness of modern climate researchers to use proper conventions…”

However blog posts such as this one should be just taken at face value?

Loydo,

You know as well as I do that my convention refers to conventional, formal, published methods for calculating and expressing uncertainty or observation error, as prescribed years ago by BIPM, the International Bureau of Weights and Measures, based in France. One can argue that conventions for weights and measures are simple and that conventions for climate models need to be modified and modernised. I agree with that. However, I strongly argue that there is no case for failing to apply the correct formalism when the data allow for it.

For example, the correct formality requires inclusion of all model runs in uncertainty estimates, not arbitrary or subjective exclusion of results that do not seem right. The TOA energy balance results, where some satellites gave results so different to others that massive adjustments were performed – this is another example where uncertainty properly spreads over the whole, unadjusted range. Yet another example is dismissing the results of some Argo floats whose ocean temperatures did not seem right. The list of abuses is much larger than these examples of just this one type of poor practice, this cherry picking.

Geoff S

Exactly. Outliers tell you something. Don’t be too quick to dismiss them.

They define range and variance.

If you can find anything wrong. Go for it.

However you’ll just continue to whine, as you always do.

YES x 1000!

Been preaching this on Twitter lately but you can’t believe how many postmodern folks totally ignore accuracy, precision, uncertainty. Too many think you can average 10,000 integer data points and get 100ths place precision because of the Central Limit Theorem.
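Whether averaging integer readings recovers finer precision depends on what is assumed about the readings, so here is a minimal sketch of two assumed cases rather than a general result. In case A every reading is of the same true value with no noise; in case B the true values are spread evenly across many rounding intervals. The value 20.3, the 15 to 25 range and the `round_half_up` helper are all illustrative assumptions.

```python
import math


def round_half_up(value):
    """Round to the nearest integer, with halves rounded up."""
    return math.floor(value + 0.5)


def mean(values):
    """Arithmetic mean of a non-empty sequence."""
    return sum(values) / len(values)


N = 10_000

# Case A: every reading measures the same true value, with no noise.
constant = [20.3] * N
error_constant = abs(mean([round_half_up(v) for v in constant]) - mean(constant))

# Case B: true values spread evenly over many rounding intervals (15 to 25).
spread = [15 + 10 * i / N for i in range(N)]
error_spread = abs(mean([round_half_up(v) for v in spread]) - mean(spread))

print(f"constant signal: error of mean = {error_constant:.4f}")
print(f"spread signal:   error of mean = {error_spread:.4f}")
```

In case A the rounding error is identical in every reading, so no amount of averaging removes it. In case B the rounding errors largely cancel. Real measurement series sit somewhere between these idealised cases, and which one applies has to be argued rather than assumed.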

“I cannot discover any paper in which the ideal global mean surface temperature is stated and credibly argued for.”

Well, what are you waiting for?

Here is another gap in the research: what is the maximum rate of temperature change that complex, interdependent societies can tolerate? Pity we aren’t running this experiment on some other planet.

So far, the experiment shows no results distinguishable from null.

Loydo, you are on planet B but don’t you belong on planet A where Hillary Clinton was elected President in 2016?

“Well, what are you waiting for?”

That is the question that the climate alarmist community really should have addressed first. No matter what we do as a people, temperature and CO2 are going to change, so they should be taking a more balanced approach and looking at the net positives and net negatives of climate change, irrespective of drivers or which way it goes. Who knows, a warmer planet, when looked at from a balanced perspective, may be an overall net positive position to work toward and plan around. There are usually two sides to the coin, but the MSM and AGW proponents seem intent on pushing only one at the moment.

“Who knows, a warmer planet, when looked at from a balanced perspective, may be an overall net positive position to work toward and plan around.”

That may very well be correct diggs. I would qualify that by saying “slightly” warmer – say the Holocene average. The problem is two-fold: 0.2C/decade is way too fast for many species to adapt to and we’re already way above the Holocene average with a long way to go before our effect plateaus. If we’d eased into it at a controlled rate over a few thousand years…

What species?

0.2C/decade is way too fast for many species to adapt to

How do you know?

He doesn’t, he’s just desperate to change the subject.

“0.2C/decade is way too fast for many species to adapt to”

Perhaps, but perhaps not. First we would need to see solid long-term real-world data on that before it could be broadcast as another string to the alarmist bow. “Way too fast” / “many species” is simply language designed to scare. Of course that also assumes we actually see a future warming trend at that rate.

Almost all species already exist across ranges with 5 to 10C or more in temperature variance from north to south. The idea that they can’t adapt to a 0.2C/decade change is ludicrous.

(BTW, the actual temperature change is less than 1/4 the amount Loydo claims.)

.2°C/decade….Not even stupid.

1) The Holocene average is at least 3C warmer than today.

2) You insist on demonstrating your innumeracy. You can’t compare proxies that average temperatures over 100 to 1000 years with modern thermometer records that record on a day-to-day basis. That you insist on doing so indicates either your incompetence or your inability to argue in good faith. I’ll leave it up to the reader to decide which you are trying for this time.

1) The Holocene average is at least 3C warmer than today.

Make-it-up-Mark hard at it.

How come this sort of garbage goes unchallenged around here? It was not even 1°C warmer than the LIA. Today is WARMER than the Holocene, ffs.

http://andymaypetrophysicist.files.wordpress.com/2017/05/053117_1728_aholocenete1.png?w=700

Mark W

Past Holocene sea levels (1 to 2 m higher than today) support your assertions.

Freeman Dyson spoke about this a decade ago. The climate models are *not* climate models, they are temperature models. Dyson spoke of the need to evaluate climate on a holistic basis, not just based on one factor.

The growth in the green area on earth is a prime example of this. More green area implies several things, among these are an increased capability of removing CO2 from the atmosphere as well as an increased supply of food on a global basis.

Think about this for a moment. The climate alarmists keep claiming that the increased temperatures projected by the models will result in decreased food production and decreased lifespans. Yet we see a continued record growth in global food production over the past twenty years as well as increased lifespans even in the third world. Why is this? Is it because more CO2 in the atmosphere stimulates food growth? Or is it because maximum temperatures are not actually increasing (i.e. the average is going up because the minimum is going up)? Or is it a combination of these plus other factors, e.g. better seed? (note: the climate models predict an increase in average anomaly size from a baseline – and an *average* actually tells you nothing about what is really happening at the edges which is where the climate impact will actually be seen!)

The climate models alone are totally unable to address this holistically in any shape, form, or fashion. Gloomy predictions based on *one* output from models are no better than the predictions given by the zealot on the corner predicting the end of the world (e.g. Alexandria-Cortez).

p.s. if you don’t know if the average maximum is going up or the average minimum is going up then how do you predict anything? The climate models would only be useful if they gave average minimum and average maximum projections!
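The point that an average alone cannot say whether minima or maxima are moving can be sketched in a few lines. The baseline temperatures and the two scenarios below are invented for illustration, and the midpoint of minimum and maximum is assumed as the definition of the daily mean.

```python
def daily_mean(t_min, t_max):
    """Daily mean taken as the midpoint of the minimum and maximum."""
    return (t_min + t_max) / 2


# Hypothetical baseline, in degrees C (illustrative values only).
base_min, base_max = 10.0, 20.0
baseline = daily_mean(base_min, base_max)

# Two invented scenarios that raise the mean by the same amount.
warmer_nights = daily_mean(base_min + 1.0, base_max)  # only the minimum rises
warmer_days = daily_mean(base_min, base_max + 1.0)    # only the maximum rises

print("rise in mean, warmer nights:", warmer_nights - baseline)
print("rise in mean, warmer days:  ", warmer_days - baseline)
```

Both scenarios give the same change in the mean, so the mean by itself does not distinguish between them; separate minimum and maximum series would be needed for that.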

Increased greening impacts the climate in a number of ways.

More plants change the local albedo.

More plants change the total amount of water in the air, both through transpiration and by helping dew to form on cold mornings.

More plants change how rocks weather and soil erodes.

I’m sure others could come up with more examples.

Since not a single one of the so-called climate models predicted this greening, none of them are accounting for these changes that result from greening.

“Well, what are you waiting for?”

Anything and everything nature throws up but if you believe CO2 drives global warming show us the correlation with all the dooming sea level movements or desist with your nonsense and adapt. Coal fired power runs reverse cycle air-conditioning just fine you’ll find after you discover the truth for yourself.

OTOH you might possibly stumble upon the ideal global sea level for us all in your scientific endeavours and from that deduce the ideal global mean surface temperature level you’re aiming at with your global thermostat twiddlings to date. A true Nobel awaits.

FROM An Engineer’s Critique of Global Warming ‘Science’ The Challenge is Massive for the Alarmist

By Burt Rutan

http://rps3.com/Files/AGW/EngrCritique.AGW-Science.v4.3.pdf

To track and to forecast minuscule global-average temperature changes.

The temperature trend is so slight that, were the global average temperature change which has taken place during the 20th and 21st centuries to occur in an ordinary room, most of the people in the room would be unaware of it. The CO2 % in this room will increase more during this talk than the atmospheric CO2 % did in the last 100 years…

… and temperature can vary more than 6 or 8C from day to day but that is the difference between the Holocene and the depths of a glaciation.

It varies 19°C from dawn to mid-afternoon here in western Colorado. BFD

50C in the high dry deserts.

Most engineers are rooted in reality.

They do not have time for CAGW fairy tales.

Burt Rutan is sceptical of CAGW – as are most engineers!

George Orwell Animal Farm

Futile project to distract all & sundry from Socialist reality – Build a windmill

Climate Scientists Funny Farm

Futile project to distract all and sundry from Socialist reality – Build lots of windmills

In your home you may have around 20 °C. When you leave your home in wintertime, it may be -10 °C outside. Do you survive?

Nomads in Africa have no problem living in desert-like surroundings at 50 °C, maybe 60 °C in the sun.

Inuit have no problem living in regions below -20 to -30 °C.

So, what can you say about an ideal temperature?

Loydo, show me one animal, plant, or human living at that global mean temperature.

The global mean is a statistical mean, no more, no less.

There are cooling regions and there are warming regions, some more, others less.

So, where is the problem with an increase of 0.2 °C/decade globally? Point to it.

Apparently Loydo can’t handle 0.2 deg C per decade.

I’m searching what Loydo may be able to handle.

Why should such a study be done?

There isn’t a shred of evidence to support the belief that temperatures are going to rise by more than a few tenths of a degree.

Beyond that, it’s easy to demonstrate that individual sectors of the economy have no trouble at all dealing with temperature swings of several degrees or more from year to year.

Or is it the case that once again you are just whining and trying anything to divert attention away from the failings of your Gods?

I did a Google search on volcanic eruptions that happened in 2019; I found a site, https://www.thebigwobble.org/, that listed in its articles 15 volcanoes around the world that erupted at least once in 2019, and three in 2020, which has barely started. How do we differentiate between anthropogenic CO2 and CO2 released by these events? And, since anthropogenic CO2 emissions are dwarfed by those of volcanic eruptions, is there a point in even trying? Can our models predict with any amount of accuracy how much CO2 will be released by volcanic activity going forward? Besides, the results of the one study on increased CO2 in the air, where plants use less water, seem to indicate that more CO2 in the atmosphere will lead to decreased temperatures and increased aridity. Quo Vadis, climate models?

That is no basis to presume anything.

Is there a competition for dumbest regular poster on WUWT? Because I am definitely a contender for it. I don’t really understand much of the lead article or the comments arguing its merits. I just kind of blank out.

But listen, dumbbells like me are the folks you have to convince that this whole panic is in any way justified, and I am not convinced. This is because I live in Edmonton (where we’re all nice people, but none too bright), where there is nothing wrong with the climate, other than that the winters are too cold. Spring, summer and fall here are pleasant and temperate. When the seas rise (and they are now surging upwards at the rate of an inch every eight years) we’re not going to care, because the Rocky Mountains will make us a natural dyke. (If that is still a permissible term. I’m stupid, and I wouldn’t know.) That’s how we think out here.

I love those websites that counsel people on how to explain climate change to doubters. And the trick is, you have to make things really, really simple to convince us. I’m so stupid that even the determined simplicity of the climate change guys stumps me entirely. It’s just a damn shame that I’m allowed to vote.

Interesting posting by Lord M of B. We geologists simply look at sea level for the Earth’s temperature history (and project it forward for predictions). The sea level rise has been amazingly steady, and slight, during all of the proposed AGW, etc., events, as it recovers from the LIA. Persons with AGW views who comment here, like Mosher, Stokes, Loydo, and Griff (has left the building), cannot show any change in sea level movement, i.e., there is no AGW in the only actual Reality Check available to us.

I wonder how the models handled clouds, since the IPCC still can’t decide the sign of any effect…

Chris, while discussing CLIMATE models you might also want to gasp at the nonsense (IMHO) coming from Attenborough when, in his latest propaganda series, he claims that the changing CLIMATE is affecting WEATHER patterns. I was always of the belief that weather (times 30 years) affected climate, not the other way round.

Your belief is/was incorrect.

Weather cannot ‘create’ climate.

In the sense that climate means the overall energy in vs energy out balance.

Weather just moves energy about before it exits to space.

Climatologists refer to climate as energy available to create ‘weather’.

So, to remove the natural variability (NV) of weather, at least a 30-year average of weather data should be used to reveal the underlying climate trend.
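A 30-year average of this kind can be sketched as a simple trailing running mean. The series below is synthetic: an assumed 0.01 C/yr trend plus an alternating +/-0.3 C wiggle standing in for year-to-year variability. The `running_mean` helper and all the numbers are illustrative assumptions, not observed data or any particular agency's method.

```python
def running_mean(values, window=30):
    """Trailing moving average; returns one value per full window."""
    if window <= 0 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    total = sum(values[:window])
    out = [total / window]
    for i in range(window, len(values)):
        # Slide the window forward by one step: add the new value, drop the oldest.
        total += values[i] - values[i - window]
        out.append(total / window)
    return out


# Synthetic annual anomalies in degrees C (invented for illustration).
years = list(range(1900, 2020))
anomalies = [0.01 * (y - 1900) + (0.3 if y % 2 else -0.3) for y in years]

smoothed = running_mean(anomalies, window=30)
steps = [b - a for a, b in zip(smoothed, smoothed[1:])]

print("raw year-to-year range:", min(anomalies), "to", max(anomalies))
print("first and last 30-year means:", round(smoothed[0], 3), round(smoothed[-1], 3))
```

In this idealised series the wiggle cancels within each 30-year window, so the smoothed values rise by the assumed trend alone. Real variability is not this tidy, and some of it operates on time scales longer than the window.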