KEVIN KILTY

Introduction

A guest blogger recently¹ made an analysis of the twice-per-day sampling of maximum and minimum temperature and its relationship to the Nyquist rate, in an attempt to refute some common thinking. This blogger concluded the following:

(1) Fussing about regular samples of a few per day is theoretical only. Max/Min temperature recording is not sampling of the sort envisaged by Nyquist because it is not periodic, and has a different sort of validity because we do not know at what time the samples were taken.

(2) Errors in under-sampling a temperature signal are an interaction of sub-daily periods with the diurnal temperature cycle.

(3) Max/Min sampling is something else.

The purpose of the present contribution is to show that the first two conclusions are misleading without further qualification, and that the third conclusion could use fleshing out to explain in what sense Max/Min values are “something else”.

1. Admonitions about sampling abound

In the world of analog-to-digital conversion, admonitions to band-limit signals before conversion are easy to find. For example, consider this verbatim quotation from the manual for a common microprocessor regarding use of its analog-to-digital (A/D or ADC) peripheral. The italics are mine.

“…Signal components higher than the Nyquist frequency (fADC/2) should not be present to avoid distortion from unpredictable signal convolution. The user is advised to remove high frequency components with a low-pass filter before applying the signals as inputs to the ADC.”


Date: February 14, 2019.

¹ Nyquist, sampling, anomalies, and all that, Nick Stokes, January 25, 2019.

2. Distortion from signal convolution

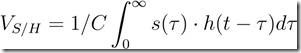

What does distortion from unpredictable signal convolution mean? Signal convolution is a mathematical operation. It describes how a linear system, like the sample-and-hold (S/H) capacitor of an A/D, attains a value from its input signal. As a specific instance, consider how a digital value would be obtained from an analog temperature sensor. The S/H circuit of an A/D accumulates charge from the temperature sensor input over a measurement interval, 0 → t, between successive A/D conversions.

$$v(t) = \int_{0}^{t} s(\tau)\, h(t - \tau)\, d\tau \qquad (1)$$

Equation 1 is a convolution integral. Distortion occurs when the signal s(t) contains rapid, short-lived changes in value which are incompatible with the rate of sampling of the S/H circuit. This sampling rate is part of the response function, h(t). For example, the S/H circuit of a typical A/D has small capacitance and small input impedance, and thus responds very rapidly to signals (has wide bandwidth, if you prefer); its response looks like an impulse function. The sampling rate, on the other hand, is typically far slower, perhaps one sample every few seconds or minutes, depending on the ultimate use of the data. In this case h(t) is a series of impulse functions separated by the sampling interval. If s(t) is a slowly varying signal, the convolution produces a nearly periodic output. In the frequency domain, the Fourier transform of h(t), the transfer function H(ω), is also periodic, but its periods are closely spaced; if the sample rate is too slow, below the Nyquist rate, the replicated spectra of the signal, S(ω), overlap and add to one another. This is aliasing, which the guest blogger covered in detail.
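To make the overlap concrete, here is a minimal Python sketch. The frequencies are hypothetical, chosen only for illustration: a 1.5 cycle/day component and a 0.5 cycle/day component, both sampled twice per day, produce identical sample sequences, so once the samples are taken nothing distinguishes one from the other.

```python
import math

def sample_cosine(freq_cpd, rate_spd, n_samples):
    """Sample cos(2*pi*f*t) at t = k / rate (f in cycles/day, rate in samples/day)."""
    return [math.cos(2 * math.pi * freq_cpd * k / rate_spd) for k in range(n_samples)]

# Hypothetical components, both sampled twice per day (Nyquist frequency = 1 cycle/day).
fast = sample_cosine(1.5, 2.0, 10)
slow = sample_cosine(0.5, 2.0, 10)

# The largest difference is only floating-point rounding: after sampling,
# the 1.5 cycle/day component masquerades as a 0.5 cycle/day one.
print(max(abs(a - b) for a, b in zip(fast, slow)))
```

This is the "sum" problem in miniature: given only the samples, there is no way to tell how much of what you see came from the slow component and how much from the fast one.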

From what I have just described, several things should be apparent. First, aliasing cannot be undone after the fact: it is not possible to recover the individual numbers making up a sum from the sum itself. Second, aliasing potentially applies to signals other than the daily temperature cycle. The problem is one of interaction between the bandwidth of the A/D process and the rate of sampling. It occurs even if the A/D process consists of a person reading analog records and recording them by pencil. Brief transient signals, even if not cyclic, will enter the digital record so long as they are within the passband of the measurement apparatus. This is why good engineering seeks to match the bandwidth of a measuring system to the bandwidth of the signal. A sufficiently narrow bandwidth improves the signal-to-noise ratio (S/N) and prevents spurious, unpredictable distortion.
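A small sketch of the bandwidth point, under stated assumptions: a hypothetical 1-minute record holds steady at −30 with one 20-minute cold dip of 10 degrees. Instantaneous (wide-bandwidth) hourly samples either catch the whole dip or miss it entirely depending on its timing, while averaging each hour before keeping a value (a crude narrow-bandwidth front end, standing in for a real analog filter) admits the dip in proportion to its duration, whatever its timing. The helper names and numbers are illustrative only.

```python
def transient_series(minutes_per_day=1440, start=300, duration=20, base=-30.0, dip=-10.0):
    """Hypothetical 1-minute record: constant base with one brief cold dip."""
    series = [base] * minutes_per_day
    for m in range(start, start + duration):
        series[m] += dip
    return series

def point_samples(series, step):
    """Instantaneous (wide-bandwidth) samples every `step` minutes."""
    return series[::step]

def block_averages(series, step):
    """Average each `step`-minute block before keeping one value (narrow bandwidth)."""
    return [sum(series[i:i + step]) / step for i in range(0, len(series), step)]

# Same dip, two timings: starting exactly on an hourly mark, or 10 minutes after it.
for start in (300, 310):
    s = transient_series(start=start)
    print(start, min(point_samples(s, 60)), round(min(block_averages(s, 60)), 2))
```

With point samples the recorded minimum swings between −40 and −30 on a 10-minute shift in timing; with block averages it is −33.33 either way.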

One other thing not made obvious in either my discussion or that of the guest blogger concerns the diurnal signal. While a diurnal signal is slow enough to be captured without aliasing by a twice-per-day measurement cycle, it would never be adequately defined by such sampling. One would be relatively ignorant of the phase and true amplitude of the diurnal cycle with twice-per-day sampling. For this reason most people sample at least as fast as two and one-half times the Nyquist rate to obtain usefully accurate phase and amplitude measurements of signals near the Nyquist frequency.
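A minimal sketch of that ambiguity, assuming a pure diurnal sinusoid of amplitude 10 (arbitrary units) sampled exactly twice per day. The apparent amplitude depends entirely on the phase at which sampling happens, and a wave of amplitude 10 at 45° of phase yields exactly the same samples as a wave of amplitude 10/√2 at zero phase.

```python
import math

def twice_daily_samples(amplitude, phase, days=3):
    """Sample A*cos(2*pi*t + phase), t in days, at t = 0, 0.5, 1.0, ... (2 samples/day)."""
    return [amplitude * math.cos(2 * math.pi * (k / 2) + phase) for k in range(2 * days)]

# Same true amplitude (10), three different sampling phases.
for phase_deg in (0, 45, 90):
    s = twice_daily_samples(10.0, math.radians(phase_deg))
    print(phase_deg, [round(v, 2) for v in s])
```

At 90° every sample lands on a zero crossing and the diurnal cycle vanishes from the record altogether; amplitude and phase cannot be separated from these samples alone.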

3. An example drawn from real data

![Figure 1. A portion of AWOS record.](https://wattsupwiththat.com/wp-content/uploads/2019/02/clip_image0054.jpg)

Figure 1. A portion of AWOS record.

As an example of distortion from unpredictable signal convolution, refer to Figure 1. This figure shows a portion of the temperature history from an AWOS station. Note that the hourly temperature records from 23:53 to 4:53 show temperatures, sampled on schedule, which vary from −29°F to −36°F, but the 6-hour records show a minimum temperature of −40°F.

Obviously the A/D system responded to and recorded a brief episode of very cold air which was missed completely in the periodic record, but which will enter the Max/Min record as the Min of the day. One might well wonder what other noisy events have distorted the temperature record. The Max/Min temperature records here are distorted in a manner just like aliasing: a brief, high-frequency event has made its way into the slow, twice-per-day Max/Min record. The distortion amounts to a difference of about 2°F between the Max/Min midpoint and the mean of the 24 hourly temperatures, a difference completely unanticipated at the relatively high sampling rate of once per hour if one accepts the blogger’s analysis uncritically. Just as obviously, had such an event coincided with one of the scheduled hourly measurements, it would have become part of the 24-samples-per-day spectrum, but at a frequency not reflective of its true duration. So there are two issues here: the first is the distortion from under-sampling, and the second is that transient signals may not be represented at all in some samples yet be quite prevalent in others.
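The arithmetic behind a Max/Min versus hourly-mean discrepancy can be sketched with a synthetic day. The record below is NOT the AWOS data of Figure 1; it is a hypothetical smooth diurnal cycle between −36°F and −24°F with an assumed 15-minute plunge to −40°F placed between two scheduled hourly readings, numbers chosen only to be of the same order as the figure.

```python
import math

# Synthetic 1-minute record for one day (NOT the Figure 1 data):
# a smooth diurnal cycle between -36 F (03:00) and -24 F (15:00) ...
record = [-30.0 + 6.0 * math.cos(2 * math.pi * (m - 900) / 1440) for m in range(1440)]

# ... plus a hypothetical 15-minute plunge to -40 F starting at 03:20,
# which falls between the 03:00 and 04:00 scheduled readings.
for m in range(200, 215):
    record[m] = -40.0

hourly = record[::60]                        # 24 scheduled readings, on the hour
hourly_mean = sum(hourly) / len(hourly)      # mean of the 24 hourly readings
midrange = (max(record) + min(record)) / 2   # what a Max/Min thermometer yields

print(f"min of hourly readings: {min(hourly):.1f} F")
print(f"Max/Min: {max(record):.1f} / {min(record):.1f} F")
print(f"hourly mean {hourly_mean:.2f} F vs midrange {midrange:.2f} F")
```

In this construction the hourly readings never see the plunge, so their mean stays at −30°F, while the midpoint of Max/Min drops to −32°F.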

In summary, while the Max/Min records are not the sort of uniform sampling that the Nyquist theorem envisions, they aren’t far from being such. They are like periodic measurements with a bad clock jitter. It is difficult to argue that distortion from unpredictable convolution does not have an impact on the spectrum resembling aliasing. Certainly, sampling at a rate commensurate with the brevity of events like that in Figure 1 would produce a more accurate daily “mean” than does the midpoint of the daily range; alternatively, one could use a filter to condition the signal ahead of the A/D circuit, just as the microprocessor manual suggests, and just as anti-aliasing via the Nyquist criterion or improvement of S/N would demand. Completely removing the impact of aliasing from digital records after the fact is impossible. The impact is not necessarily negligible, nor is it mainly an interaction with the diurnal cycle. This is not just a theoretical problem. Especially considering that Max/Min temperatures are expected to capture even brief temperature excursions, there isn’t any way to mitigate the problem in the Max/Min records themselves. This provides a segue into a discussion about the “something otherness” of Max/Min records.
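A discrete first-order (RC-style) low-pass filter, applied here in software to a hypothetical 1-minute record as a stand-in for analog conditioning ahead of the A/D, shows how the filter’s time constant governs how much of a brief plunge reaches the recorded Min. The record, the time constants, and the function name are assumptions for illustration, not the manual’s recommended design.

```python
def first_order_lowpass(samples, dt, tau):
    """Discrete RC-style low-pass: y[n] = y[n-1] + a*(x[n] - y[n-1]), a = dt/(tau + dt)."""
    a = dt / (tau + dt)
    y = samples[0]
    out = []
    for x in samples:
        y += a * (x - y)
        out.append(y)
    return out

# Hypothetical 1-minute record: steady -30 F with a 15-minute plunge to -40 F.
record = [-30.0] * 240
for m in range(100, 115):
    record[m] = -40.0

for tau in (1.0, 10.0, 60.0):  # time constants in minutes (assumed values)
    filtered = first_order_lowpass(record, dt=1.0, tau=tau)
    print(f"tau = {tau:>4} min: recorded Min = {min(filtered):.1f} F")
```

A short time constant passes nearly the full 10-degree plunge into Min; a one-hour time constant lets only about 2 degrees of it through. Choosing that time constant is exactly the bandwidth decision discussed above.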

4. Nature of the Midrange

The midpoint of the daily temperature range is a statistic. It belongs to a group known as order statistics, as it comes from data ordered from low to high value. It serves as a measure of central tendency of temperature measurements, a sort of average, but it is different from the more common mean, median, and mode statistics. To speak of the midpoint of the range as a daily mean temperature is simply wrong.
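As a small illustration of the distinction, assuming a hypothetical set of ten readings, the three statistics below are computed from the same data. Python’s `statistics` module supplies mean and median; the midrange helper is written out because it is simply the average of the two extreme order statistics.

```python
import statistics

def midrange(values):
    """Midpoint of the range: the average of the smallest and largest order statistics."""
    ordered = sorted(values)
    return (ordered[0] + ordered[-1]) / 2

# Hypothetical readings for one day, including one cold excursion.
readings = [-29, -30, -31, -33, -34, -36, -40, -35, -32, -30]

print("mean    ", statistics.mean(readings))
print("median  ", statistics.median(readings))
print("midrange", midrange(readings))
```

Only two of the ten values influence the midrange, so the single −40 reading moves it far more than it moves the mean.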

If we think of air temperature as a random variable following some probability distribution, possessing a mean along with a variance, then the midpoint of the range may serve as an estimator of the mean so long as the distribution is symmetric (skewness and the higher odd central moments are zero). It might also be an efficient or robust estimator if the distribution is confined between two hard limits, a form known as platykurtic for having little probability in the distribution tails. In such a case we could also estimate a monthly mean temperature using the midrange of the minimum and maximum temperatures of the month, or even an annual mean using the highest and lowest temperatures of the year.
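The efficiency claim can be checked with a quick Monte Carlo sketch. Assumptions: samples of 24 values, a uniform distribution standing in for the platykurtic case with hard limits, and a Laplace distribution (built as the difference of two exponentials) standing in for a heavy-tailed case. Both are symmetric, so both estimators are unbiased here and only their spread differs.

```python
import random
import statistics

def estimator_spread(draw, n=24, trials=4000, seed=1):
    """Return (stdev of sample mean, stdev of sample midrange) over many samples of size n."""
    rng = random.Random(seed)
    means, mids = [], []
    for _ in range(trials):
        xs = [draw(rng) for _ in range(n)]
        means.append(sum(xs) / n)
        mids.append((min(xs) + max(xs)) / 2)
    return statistics.stdev(means), statistics.stdev(mids)

def uniform_draw(rng):
    """Hard limits at -1 and +1: a platykurtic stand-in."""
    return rng.uniform(-1.0, 1.0)

def laplace_draw(rng):
    """Laplace(0, 1) via the difference of two unit exponentials: a heavy-tailed stand-in."""
    return rng.expovariate(1.0) - rng.expovariate(1.0)

for name, draw in (("uniform", uniform_draw), ("laplace", laplace_draw)):
    sd_mean, sd_mid = estimator_spread(draw)
    print(f"{name:8s} stdev(mean) = {sd_mean:.3f}  stdev(midrange) = {sd_mid:.3f}")
```

With hard limits the midrange scatters less than the mean; with heavy tails it scatters considerably more.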

In the case of the AWOS of Figure 1, the annual midpoint is some 20°F below the mean of daily midpoints, and even a monthly midpoint is typically 5°F below the mean of daily values. The midpoint is obviously not an efficient estimator at this station, although it could perhaps work well at tropical stations where the distribution of temperature is more nearly platykurtic.

The site from which the AWOS data in Figure 1 were taken is continental, and while this particular January had a minimum temperature of −40°F, it is not unusual to observe January days when the maximum temperature rises into the mid-60s. The weather in January often consists of a sequence of warm days ahead of a front, followed by a sequence of cold days. Thus the temperature distribution at this site is possibly multimodal, with very broad tails and without symmetry. In this situation the midrange is not an efficient estimator. It is not robust either, because it depends greatly on extreme events. Nor is it an unbiased estimator, as the temperature probability distribution is probably not symmetric. It is, however, what we are stuck with when seeking long-term surface temperature records.
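To illustrate the bias for an asymmetric distribution, here is a rough sketch assuming an exponential distribution (mean 1.0) as a stand-in for a skewed temperature distribution; nothing here models the real station. On average the sample mean lands close to the true mean, while the midrange is pulled toward the long tail.

```python
import random

def average_errors(n=30, trials=5000, seed=7):
    """Average (estimate - true mean) for the sample mean and the midrange, using samples
    of size n from an exponential distribution with rate 1 (true mean 1.0)."""
    rng = random.Random(seed)
    mean_err = mid_err = 0.0
    for _ in range(trials):
        xs = [rng.expovariate(1.0) for _ in range(n)]
        mean_err += sum(xs) / n - 1.0
        mid_err += (min(xs) + max(xs)) / 2 - 1.0
    return mean_err / trials, mid_err / trials

mean_bias, mid_bias = average_errors()
print(f"sample mean bias {mean_bias:+.3f}, midrange bias {mid_bias:+.3f}")
```

Averaging more midranges does not remove this offset; it only makes the biased answer more repeatable.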

One final point seems worth making. Averaging many midpoint values together probably will produce a mean midpoint that behaves like a normally distributed quantity, since all the elements needed to satisfy the central limit theorem seem present. However, people too often assume that averaging fixes all sorts of ills, in particular that averaging n values will automatically reduce the standard deviation of a statistic by the factor 1/√n (its variance by 1/n). This is strictly so only when samples are unbiased, independent, and identically distributed. The subject of data independence is beyond the scope of this paper, but here I have made a case that the probability distributions of the maximum and minimum values are not necessarily the same as one another, and may vary from place to place and time to time. I think precision estimates for “mean surface temperature” derived from the midpoint of range (Max/Min) are too optimistic.
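A minimal sketch of why the iid condition matters, assuming Gaussian reading noise and a true value of 0. Independent noise in the average shrinks roughly as 1/√n, but a bias shared by every reading passes straight through the average, no matter how large n becomes. The function name and parameter values are illustrative only.

```python
import random
import statistics

def average_error(n, bias, noise_sd=1.0, seed=3):
    """Mean of n readings, each = true value (0) + shared bias + independent Gaussian noise."""
    rng = random.Random(seed)
    return statistics.fmean(bias + rng.gauss(0.0, noise_sd) for _ in range(n))

for n in (10, 1_000, 100_000):
    print(n, round(average_error(n, bias=0.0), 3), round(average_error(n, bias=0.5), 3))
```

The unbiased column heads toward zero as n grows; the biased column heads toward 0.5, not zero. Correlated errors (not modeled here) behave in between, shrinking more slowly than 1/√n.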

“In summary, while the Max/Min records are not the sort of uniform sampling rate that the Nyquist theorem envisions, they aren’t far from being such. They are like periodic measurements with a bad clock jitter.”

precious.

With respect to your AWOS: post a link.

And say his name.

It’s Nick Stokes.

Eh? There is a link, and a name. Or did the author add these after posting?

I checked the edit log. The name and link were there from the get-go. It was just Steven Mosher playing out his role as drive-by hack again.

I still don’t understand his smug attitude. Is this just a character flaw or has the actor become stuck in a role?

There is no need for such arrogance, especially when being so painfully misguided.

Arrogance is a learned behavioral trait that becomes a permanently ingrained personality flaw of the pseudo-intellectual babbling class.

It is how they talk to each other about the great unwashed who have yet to be indoctrinated. It is an off-putting attempt to gain an advantage by talking down to others. They confuse arrogance with wit, and in truth they don’t really like each other very much once the others turn their backs.

Bill, that is exactly what I was thinking! Thanks for putting it into print.

I used to be that way for a brief stint in my 20s until I recognized how obnoxious and conceited it is.

Funny: Mosher pretends to be a real scientist who knows numbers and stuff, even though his entire background is in words and stuff.

http://www.populartechnology.net/2014/06/who-is-steven-mosher.html

So here, instead of agreeing/disagreeing with the numbers, and showing his work (as he demands, constantly, of others), he uses his Awesome English Skillz, “proofreads” a post, finds it lacking in something that is actually there, farts into the wind, then leaves.

Not exactly bringing the A game there…

I didn’t want this to look like an attack on Nick Stokes, himself, who I have regard for. I just wanted to fill in things I thought were vague, and correct what I saw as mistakes in his thinking.

Once again, Mosh only sees what his paycheck requires him to see.

The final sentence of the article:

“I think precision estimates for “mean surface temperature” derived from midpoint of range (Max/Min) are too optimistic.”

I don’t know if Mosh just stops reading when he sees something he can use to support his paycheck, or if he really isn’t able to understand these papers.

He only understands what his paycheck lets him understand, anything else he just sneers at.

The name and link were there the whole time. With respect to your drive-by: learn to read before posting.

Mosher

What percentage of all surface “data”

are actually not “sampled” at all —

they are numbers wild guessed

by government bureaucrats,

and calling them “infilled” data

does not change that fact.

Do you know the percentage ?

Do you even care ?

If you don’t care, then why not ?

I expected the usual silence from

Steven al-ways clue-less Mosher,

so I was not disappointed !

“Thus the temperature distribution at this site is possibly multimodal with very broad tails and without symmetry. In this situation the midrange is not an efficient estimator. It is not robust either, because it depends greatly on extreme events. It is also not an unbiased estimator as the temperature probability distribution is probably not symmetric. It is, however, what we are stuck with when seeking long-term surface temperature records.”

So what can be said of using such ‘averages’ for homogenizing temperatures over very wide areas?

I believe the technical term for what may be said regarding this is the ‘square root of Sweet Fanny Adams’.

Sadly, the whole of climate science (and it is prevalent on the ‘denier’ side as well as endemic on the ‘warmist’ side) is pervaded by people using tools and techniques whose applicability they do not understand, beyond their (and the tools’) spheres of competence….

What we really have is a flimsy structure of linear equations supported by savage extrapolation of inadequate and often false data, under constant revision, that purports to represent a complex nonlinear system for which the analysis is incomputable, and whose starting point cannot be established by empirical data anyway; a structure that, in the end, can’t even be forced to fit the clumsy and inadequate data we do have.

Frankly, those who think they can see meaningful patterns in it might as well engage in tasseography…

There are only two things that years of climate science have unequivocally revealed, about the climate, and they are firstly that whatever makes it change, CO2 is a bit player, as the correlation between CO2 and temperature change of the sort we allegedly can measure, is almost undetectable, and secondly that we don’t have any accurate or robust data sets for global temperature anyway.

Other things that it has revealed are the problems of science itself in a post truth world. What, after all, is a ‘fact’ ? If nobody hears or sees the tree fall has it in fact fallen? (Schrödinger etc).

Has the climate ‘got warmer’? By how much? What does it mean to say that? How do we know that it has? How reliable is that ‘knowledge’? Is some ‘knowledge’ more reliable than other ‘knowledge’? Is there any objective truth that is not already irrevocably relative to some predetermined assumption? I.e., is there such a thing as an objective, irrevocable inductive truth?

[ I.e. why when faced with a spiral grooved horn, some blood on the path and equine feces, do I assume that someone has dropped a narwhal horn, a fox has killed a pigeon and a pony has defecated there rather than assuming the forces of darkness killed a unicorn].

I think there are answers to these questions, but they will not, I fear, please either side in this debate.

Since both are redolent of the stench of sloppy one-dimensional thinking.

Chiefly important, ” There are only two things that years of climate science have unequivocally revealed, about the climate, and they are firstly that whatever makes it change, CO2 is a bit player, as the correlation between CO2 and temperature change of the sort we allegedly can measure, is almost undetectable, and secondly that we don’t have any accurate or robust data sets for global temperature anyway.”

Good comment.

Oh, I don’t know Leo we are able to measure the global temperatures to the tenth of a degree (doesn’t matter if it is C or F). I see this published all the time.

And better yet we can actually measure temperatures of vast areas of the Arctic and Antarctic with just a couple of thermometers to high precision. How impressive is that?

And let’s include knowing exactly what the temperature of the Pacific Ocean 1581 meters deep, 512 km east of Easter Island is to the tenth of a degree C!!

I mean with an internet connection, a super computer and a few thousand lines of code, we can do pretty much anything.

And of course we also know the temperature of the Pacific Ocean at 1581 meters deep, 512 km east of Easter Island, to a tenth of a degree... 50 years ago, so we can “prove” a warming trend.

“I think there are answers to these questions, but they will not, I fear, please either side in this debate.

Since both are redolent of the stench of sloppy one-dimensional thinking.”

Ouch!

I am not sure why you are concerned with ‘pleasing’ one side or the other. If there are answers to your questions, please share, and damn the torpedoes. I, for one, wish to be enlightened, not ‘pleased’.

In the meantime, Mr. Smith, please pardon the stench of our ‘sloppy, one-dimensional thinking’.

Pardon my ignorance, and this is off topic: is anyone measuring and monitoring the global heat content of the atmosphere? This seems a better parameter to follow than temperature when looking for a GHG signal.

In a word, no.

They could do but they won’t.

Due to the presence of water vapor, the enthalpy of the various volumes of air varies considerably. The heat content should be reported in kilojoules per kilogram. Take air in a 100% humidity bayou in Louisiana at 75F: it has twice the heat content of a similar volume of close-to-zero-humidity air in Arizona at 100F.

Averaging the intensive variable ‘air temperature’ is a physical nonsense. Claiming that infilling air temperatures makes any sense is a demonstration of ignorance or malfeasance.
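The bayou-versus-desert comparison can be checked with the standard psychrometric approximation for moist-air enthalpy, h ≈ 1.006·T + w·(2501 + 1.86·T) kJ per kg of dry air (T in °C, w the humidity ratio). The sketch below assumes sea-level pressure and a Magnus-type formula for saturation vapor pressure; both are approximations, and the function names are illustrative.

```python
import math

P_KPA = 101.325  # assumed sea-level pressure, kPa

def f_to_c(f):
    return (f - 32.0) * 5.0 / 9.0

def saturation_vapor_pressure_kpa(t_c):
    """Magnus-type approximation over liquid water (adequate near room temperature)."""
    return 0.61094 * math.exp(17.625 * t_c / (t_c + 243.04))

def moist_air_enthalpy(t_c, rel_humidity):
    """Approximate specific enthalpy, kJ per kg of dry air, referenced to 0 C."""
    e = rel_humidity * saturation_vapor_pressure_kpa(t_c)
    w = 0.622 * e / (P_KPA - e)  # humidity ratio, kg water vapor per kg dry air
    return 1.006 * t_c + w * (2501.0 + 1.86 * t_c)

bayou = moist_air_enthalpy(f_to_c(75.0), 1.0)    # saturated air at 75 F
desert = moist_air_enthalpy(f_to_c(100.0), 0.0)  # dry air at 100 F
print(f"bayou {bayou:.1f} kJ/kg, desert {desert:.1f} kJ/kg, ratio {bayou / desert:.2f}")
```

The ratio comes out a little under 2 on a per-kilogram basis, consistent with the "twice" figure, while the cooler air reads 25°F lower on a thermometer.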

“Averaging the intensive variable ‘air temperature’ is a physical nonsense.”

Well said!

The contents of this article are quite complex, but do point to potential difficulty with temperature records, particularly if they are not continuously sampled and then the data collected subjected to the correct processing (which might be called averaging, but this opens a whole can of worms). Strictly speaking this is not an alias problem addressed by Nyquist, which makes sampled frequencies above half the sampling frequency appear as low frequency data, but the simple question of “what is the average temperature”? Measurements at a normal weather station are sampled at a convenient high rate, but taking peak low and high readings is clearly not right to get a daily temperature record. Even averaging these numbers (or some other simple data reduction) will not give the same result as correct sampling of the temperature with the data low pass filtered before sampling. However the data from the A/D converter can be digitally filtered to give a true correctly sampled result, but this raw data is not normally available. The difference between averaged min and max numbers and correctly sampled data may not be large, but when one is looking for fractional degree changes may well be important. As usual the climate change data is not the same as simple weather, but often assumed to be the same. Note that satellite temperatures will suffer from a sampling problem as the record is once per orbit at each point, and again we do not know how this is processed as the “temperature” readings!

I would like to see a simple, cleverly illustrated explanation of how the temperature is measured and averaged. Having lived in seven climatic zones the differences between the max and min, as well as when these occur, as well as fluctuations have all been different. Even working out an average for the seven areas where I have lived is a major headache. How anyone can be so confident of the average temperature of our whole world, of the average increase, of the relationship of increases and decreases at various times in different areas and how this impacts on the world average, baffles me.

Of course, valid statistical methodology is key to measurement in detail. But on broad, long-term semi-millennial and geologic time-scales, climate patterns are sufficiently crude-and-gruff to distinguish 102-kiloyear Pleistocene glaciations (“Ice Ages”) from median 12,250-year interstadial remissions such as the Holocene Interglacial Epoch which ended 12,250+3,500-14,400 = AD 1350 with a 500-year Little Ice Age (LIA) through c. AD 1850/1890.

In this regard, aside from correcting egregiously skewed official data, recent literature makes two main points:

First: NASA’s recently developed Thermosphere Climate Index (TCI) “depicts how much heat nitric oxide (NO) molecules are dumping into space. During Solar Maximum, TCI is high (Hot); during Solar Minimum, it’s low (Cold). Right now, Earth’s TCI is … 10 times lower than during more active phases of the solar cycle,” as NASA compilers note.

“If current trends continue, Earth’s overall, non-seasonal temperature could set an 80-year record for cold,” says Martin Mlynczak of NASA’s Langley Research Center. “… (pending a 70-year Grand Solar Minimum), a prolonged and very severe chill-phase may begin in a matter of months.” [How does this guy still have a job?]

Second: Australian researcher Robert Holmes’ peer reviewed Molar Mass Version of the Ideal Gas Law (pub. December 2017) definitively refutes any possible CO2 connection to climate variations: where Temperature T = PM/(Rρ), any planet’s near-surface global Temperature T equates to its Atmospheric Pressure P times Mean Molar Mass M over its Gas Constant R times Atmospheric Density ρ.

Accordingly, any individual planet’s global atmospheric surface temperature (GAST) is proportional to PM/ρ, converted to an equation per its Gas Constant reciprocal = 1/R. Applying Holmes’ relation to all planets in Earth’s solar system, zero error-margins attest that there is no empirical or mathematical basis for any “forced” carbon-accumulation factor (CO2) affecting Planet Earth.

As the current 140-year “amplitude compression” rebound from 1890 terminates in 2030 amidst a 70+ year Grand Solar Minimum similar to that of 1645 – 1715, measurements’ “noise levels” will certainly reflect Earth’s ongoing reversion to continental glaciations covering 70% of habitable regions with ice sheets 2.5 miles thick [see New York City’s Central Park striations]. If statistical armamentaria fail to register this self-evident trend from c. AD 2100 and beyond, so much the worse for self-deluded researchers.

I see a definite problem with this formulation. While temperature is related to those parameters, without an external heat source (i.e. a star), all those theoretical (and actual) planets would very quickly approach the 4K of space. There has to be a term for the heating of the atmosphere by external radiation or the whole thing collapses.

OweninGa, your 4K of space

is https://www.google.com/search?client=ms-android-samsung&ei=28RnXNHZFa2FrwTih7rgDA&q=+space+min+temperature&oq=+space+min+temperature&gs_l=mobile-gws-wiz-serp.

Every planet IN THE UNIVERSE maintains its own VERY SPECIAL base temperature by

The air column above the ground floor generates heat by its own weight / pressure.

OweninGa,

the pressure / heat problem again:

The air column above the ground floor generates heat by its own weight / pressure.

Ever worked with air pressure operated machines?

You go into the machine hall in Bermuda shorts and Hawaiian shirts.

pressure –> compression

OweninGa,

the pressure / heat problem again:

The air column above the ground floor generates heat by its own weight / pressure.

Ever worked with air compression operated machines?

You go into the machine hall in Bermuda shorts and Hawaiian shirts.

And need lots of beverages during working hours.

My fault :

OweninGa, your 4K of space

is https://www.google.com/search?client=ms-android-samsung&ei=28RnXNHZFa2FrwTih7rgDA&q=+space+min+temperature&oq=+space+min+temperature&gs_l=mobile-gws-wiz-serp.

Every planet IN THE UNIVERSE maintains its own VERY SPECIAL base temperature by

UNIVERSE BASE TEMPERATURE +

heat generated by the weight / pressure of the planet’s own air column above the ground floor.

One analysis is here. It looks at Hadcrut and shows that it is simply awful.

https://judithcurry.com/2011/10/18/does-the-aliasing-beast-feed-the-uncertainty-monster/

Using a digital filter post sampling will not “give a true correctly sampled result”. The information has already been lost.

Fractional degree changes, yes, this has been the point that I have tried to emphasize on several occasions in my peon comments.

All this highly technical mathematical tooling seems ludicrous, with regard to seemingly tiny fractional differences that it produces, … in general and in relation to the precision of instrumental measurements.

You said the magic words: “identically distributed”. When considering a signal to be measured you need to consider if your tools are able to produce the necessary condition for analysis. For identically distributed data, each data point in the sample needs to have sufficiently less uncertainty compared to the variation of their nominal values (nominal being the 4 in say 4 +/- 0.5 units for example). Otherwise you cannot determine the distribution to sufficient resolution to achieve the conditions to meet the Central Limit Theorem, which underpins the Standard Error of the Mean.

The majority of textbook examples you see of this use populations with discrete sample elements, not individual measurements with their own intrinsic uncertainty. And if you read the scientific papers by people such as the Met Office, they take the same approach considering uncertainties in the data. They have to make assumptions about the sample measurement distribution. A wet finger in the air basically.

Bottom line: the derived temperature anomaly data is a hypothetical data set, since identical distributions cannot be established given the intrinsic tolerances of the measurement apparatus. The original measurement apparatuses (I’ll stick to English plurals) were never designed to give this level of uncertainty. From the get-go climate science was about creating supposition from data with little possibility of it ever being definitive.

And yet results from exercises are taken as real results applicable to the real world.

Make no mistake: climate science advocacy has very little to do with logic and fact, or ethics for that matter. Climate scientists who push their results as being indicative of Nature have no skin in the game and no accountability.

As I have said before, if they think their methods are sufficient then they should spend a month or two eating food and drinking water deemed safe for consumption under the same standards. Standards where the certification equipment has orders of magnitude more uncertainty than the variation of the impurities they are trying to minimise. And where a “safe” value is obtained by averaging many noisy measurements to somehow produce lower uncertainty.

I’m pretty sure physical reality would teach them some humility. They may even lose a few pounds.

I wonder why we don’t record WHEN the temperature changes!

Let’s say we record the time whenever the temperature changes by 1°C. That way it would be easy to follow what really happens.

Of course there will be BIG DATA to deal with, but …

Just an idea.
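The idea resembles what is sometimes called send-on-delta (event-based) sampling: keep a timestamped reading only when the value has moved by at least a set threshold since the last kept reading. A minimal sketch, assuming hypothetical 1-minute readings and a 1°C threshold; the function name is illustrative.

```python
def send_on_delta(times, temps, delta=1.0):
    """Keep (time, temp) only when temp has moved at least `delta` from the last kept value."""
    kept = [(times[0], temps[0])]
    for t, x in zip(times[1:], temps[1:]):
        if abs(x - kept[-1][1]) >= delta:
            kept.append((t, x))
    return kept

# Hypothetical 1-minute readings, deg C.
times = list(range(10))
temps = [10.0, 10.2, 10.4, 11.1, 11.3, 12.5, 12.4, 11.2, 11.0, 10.9]
print(send_on_delta(times, temps))
```

The record shrinks when the temperature is steady, but the threshold itself sets the resolution: excursions smaller than delta never appear, so the choice of delta plays the same role as the choice of bandwidth.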

?

The temperature is always changing. 24 hours a day.

Temperature changes constantly, from moment to moment. Historically, it was a manual process (a person would read the thermometer and jot down the results a handful of times a day) before it was automated. Today, in theory, modern equipment could make a near-continuous recording of temperature (limited by however fast the computer doing the recording is), but you’d quickly run out of storage space due to the sheer volume of data, making such continuous recording impractical for little to no gain. Somewhere between those two extremes is a happy medium. I leave it to others to figure out where that is.

Kevin,

Thank you for the very interesting post. I would like to think about it more and then discuss a few things with you. Nick Stokes’ essay that you refer to was a response to the essay I presented. I would like to calibrate with you – can you tell me if you read my post as well? It is located here:

https://wattsupwiththat.com/2019/01/14/a-condensed-version-of-a-paper-entitled-violating-nyquist-another-source-of-significant-error-in-the-instrumental-temperature-record/

If you have not, and you would like to read it, then I recommend the full version of the paper located here:

https://wattsupwiththat.com/wp-content/uploads/2019/01/Violating-Nyquist-Instrumental-Record-20190101-1Full.pdf

The full version covers some basics about sampling theory, which you clearly do not need – but there are some comments and points made that might be lost if you just read the short version published on WUWT. The full paper version is a superset of the WUWT post. Because I cover a few points in the full version not covered in the WUWT post I have a few differences in the conclusions as well. For example, in the full version I detail what frequencies in the signal are critical for aliasing and where they land in the sampled result. (For 2-samples/day, the spectral content at 1 and 3-cycles/day aliases and corrupts the daily-signal and spectral content near 2-cycles/day aliases and corrupts the long-term signal.)
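The folding described in the parenthetical can be sketched in a few lines: a sinusoid at frequency f sampled at rate fs appears at the frequency obtained by folding f into the band from 0 to fs/2. The function name is illustrative; units are cycles/day and samples/day.

```python
def alias_frequency(f, fs):
    """Apparent frequency after sampling a sinusoid of frequency f at rate fs,
    folded into the band [0, fs/2]."""
    r = f % fs
    return min(r, fs - r)

# Two samples per day.
for f in (0.1, 1.0, 1.9, 2.0, 3.0):
    print(f, "cycles/day ->", round(alias_frequency(f, 2.0), 3))
```

Content at 1 and 3 cycles/day lands on 1 cycle/day, while content at or near 2 cycles/day lands at or near 0, where it is indistinguishable from a long-term trend.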

In my paper I described the max and min samples, with their irregular periodic timing, as being related to clock jitter, practically speaking, although the size of the jitter is quite large compared to what you would see from an ADC clock. This was a point of much discussion between Nick and me. We also debated whether or not Tmax and Tmin were technically samples. My position was/is that Nyquist provides us with a transform for signals to go between the analog and digital domains. The goal is to make sure that the equivalency of the two domains is maintained. In the real world the signal we start with is in the analog domain. If we end up with discrete values related to that analog signal, then we have samples, and they must comply with Nyquist if the math operations on those samples are to have any (accurate) relevance to the original analog signal.

In the discussion there was also some misunderstanding that needed to be cleared up about just what the Nyquist frequency means: is it what nature demands, or what the engineer designs to? There were discussions about what was signal and what was noise, and whether that higher frequency content mattered. My position as an engineer is that the break frequency of the anti-aliasing filter needs to be decided, perhaps by the climate scientist. Then the sample rate has to be selected to satisfy Nyquist based upon the filter. Another key point was that sampling faster is good: it eases the filter requirements and lessens aliasing. Practically speaking, the rates required for an air temperature signal are glacial; all good commercial ADCs can handle them at no additional cost. The belief is that the cost of bandwidth and data storage is also relatively low, so if we sample adequately fast, accurate data can be obtained, and after sampling any DSP operation desired can be done on that data. Sample properly and the secrets of the signal are yours to play with.

Alias, and you have error that cannot be corrected post sampling. No undo button is available, despite many creative claims to the contrary.

Anyway, if you wouldn’t mind letting me know if you have read my paper (and which version), it will help me to communicate with you most efficiently. I’m pleased that this subject is getting more attention.

Thank you,

William

I’d be happy to cooperate on this topic. Beyond issues of sampling, I was hoping to raise some awareness about why Max/Min temperatures, by design, might not be well suited to the purpose of “mean surface temperature” calculated to the hundredth of a degree. Let me go read the papers you have referenced, which I am not familiar with. I’m not sure how to make contact.

Thanks for the reply Kevin – I appreciate it. Also, thanks for taking the time to read my paper. Let me know what you think.

I have some thoughts about your Figure 1 and the “missing” -40 reading.

You said: “Obviously the A/D system responded to and recorded a brief duration of very cold air which has been missed in the periodic record completely, but which will enter the Max/Min records as Min of the day.”

I agree with most of what you said in your paper, but if I’m understanding you correctly, I don’t agree with your assessment on this point. At this particular station, samples are taken far more frequently than at 1-hour intervals. Consulting the ASOS guides (thanks to Clyde and Kip for supplying them recently), we see that samples occur every 10 seconds, these are averaged every 1 minute, and the 1-minute data is further averaged to 5 minutes. (A strange practice, but this is what is done.) So from this data we can get the max and min values for a given period. The data is also presented in an “hourly sample package”, and I would not expect any of those hourly samples to contain the max/min values, except by luck. I don’t see this as a problem. I see the hourly packaging as the problem; well, maybe “problem” is too strong a description, but hourly packaging seems to be an unnecessary practice. We have more data, so just publish all of it and let the user of the data process it appropriately. I don’t think we have a loss of data in your example, just a presentation of a subset that doesn’t include the max/min. What do you think?
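A small sketch of the averaging chain described above (10 s → 1 min → 5 min, then one value per hour), using synthetic data. The diurnal shape, the noise level, and the choice of the first 5-minute value in each hour as the “hourly package” are all assumptions for illustration; this is not a reproduction of the actual ASOS processing or quality control.

```python
import numpy as np

# Synthetic one-day record at 10-second resolution (8640 samples). The shape and
# noise level are invented for illustration only.
rng = np.random.default_rng(42)
n_raw = 8640
t_days = np.arange(n_raw) / n_raw
raw_10s = -5.0 * np.cos(2 * np.pi * t_days) + rng.normal(0.0, 0.5, n_raw)

# Block averages as described in the comment: 10 s -> 1 min -> 5 min.
one_min = raw_10s.reshape(-1, 6).mean(axis=1)    # 1440 one-minute means
five_min = one_min.reshape(-1, 5).mean(axis=1)   # 288 five-minute means

# Hypothetical "hourly package": the first 5-minute value of each hour.
hourly = five_min[::12]                          # 24 values

for name, x in [("10 s", raw_10s), ("5 min", five_min), ("hourly", hourly)]:
    print(f"{name:>6}: n={x.size:4d}  max={x.max():6.2f}  min={x.min():6.2f}  mean={x.mean():6.3f}")
```

Equal-length block averages leave the overall mean unchanged, but each averaging step can only pull the extremes inward, and a one-per-hour subset will generally miss them.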

For any properly designed system, whatever the chosen Nyquist rate is, and assuming the anti-aliasing filter is implemented properly, we can use DSP to retrieve the actual “inter-sample peaks”. This is a common operation when mastering audio recordings as the levels are set for the final medium (CD, iTunes, Spotify, etc.). A device called a “look-ahead peak limiter” is used on the samples in the digital domain. Through proper filtering and upsampling, the “true-peak” values can be discovered, and the gains used in the limiting process can be set according to the actual true peak instead of the sample peak values. For a sinusoid, the actual true peak can be 3 dB higher in level than the samples show. For a square wave the true peak can be 6 dB or more above the samples! This can, of course, be observed by converting the samples properly back into the analog domain. However, the problem in audio is that the DAC (Digital to Analog Converter) has a maximum limit to the analog voltage it can generate. If the input samples are at the DAC’s maximum value but the actual true peak is 3 or 6 dB higher than the DAC’s limit, then the DAC “clips” and the result is an awful “pop” or “crackle” in the audio. The goal when mastering is to set levels with enough margin to handle the inter-sample peaks not visible in the samples, so that the DAC is never forced to clip.
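A minimal sketch of the 3 dB sinusoid case, assuming a periodic record containing a whole number of cycles so that FFT zero-padding gives exact band-limited interpolation. This FFT method is a stand-in chosen for brevity; it is not what a look-ahead limiter does internally (real true-peak meters typically use FIR oversampling). The helper name `fft_upsample` is hypothetical.

```python
import numpy as np


def fft_upsample(x, factor):
    """Band-limited interpolation of a periodic record by zero-padding its spectrum."""
    n = len(x)
    spectrum = np.fft.rfft(x)
    if n % 2 == 0:
        # Split the Nyquist bin so it is not doubled once it is no longer the edge bin.
        spectrum[-1] *= 0.5
    # irfft zero-pads the spectrum up to the longer length; rescale for the new length.
    return np.fft.irfft(spectrum, n * factor) * factor


n = 64
i = np.arange(n)
# A sinusoid at fs/4 with a 45-degree phase: every sample lands at +/-0.707 of the peak.
x = np.cos(2 * np.pi * 0.25 * i + np.pi / 4)

sample_peak = np.max(np.abs(x))
true_peak = np.max(np.abs(fft_upsample(x, 8)))
overshoot_db = 20 * np.log10(true_peak / sample_peak)
print(f"sample peak {sample_peak:.4f}, true peak {true_peak:.4f}, overshoot {overshoot_db:.2f} dB")  # roughly 3.01 dB
```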

In my paper (partly due to trying to keep the size of it under control) I do not mention inter-sample peaks or true-peak. In my analysis I simply select the highest value sample from the 5-minute samples and call this the max. This is because that is what NOAA does. A more accurate second pass analysis would actually show that the real “mean” error between 5-minute samples and max/min samples is even greater than I presented. The proper method would be to do DSP on the samples to integrate upsampled data (equivalent to analog integration of the reconstructed signal) and compare this to the retrieved inter-sample true-peaks. I suspect that analysis would yield even more error than I showed in my paper. This is because the full integrated mean would not change much as the energy in that content is small. But the max and min values could swing by several °C, more greatly influencing the midrange value between those 2 numbers.
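A rough sketch of the comparison described above, on an invented, asymmetric diurnal curve sampled every 5 minutes. Band-limited (FFT) upsampling stands in for “DSP on the samples”; the curve, the helper name `fft_upsample`, and the 16x factor are assumptions. With a curve this smooth the inter-sample shift in max/min is small. The sketch only shows that the mean is untouched by interpolation while (Tmax+Tmin)/2 sits well away from it.

```python
import numpy as np


def fft_upsample(x, factor):
    """Band-limited interpolation of a periodic record by zero-padding its spectrum."""
    n = len(x)
    spectrum = np.fft.rfft(x)
    if n % 2 == 0:
        spectrum[-1] *= 0.5  # split the Nyquist bin between +fs/2 and -fs/2
    return np.fft.irfft(spectrum, n * factor) * factor


# Hypothetical asymmetric diurnal curve, 288 samples/day (5-minute spacing).
theta = 2 * np.pi * np.arange(288) / 288
temps = 10.0 + 5.0 * np.cos(theta) + 2.0 * np.cos(2 * theta + 1.0)

fine = fft_upsample(temps, 16)  # reconstructed signal on a 16x finer grid

for label, x in [("5-min samples", temps), ("upsampled", fine)]:
    mean = x.mean()
    midrange = (x.max() + x.min()) / 2
    print(f"{label:>13}: mean={mean:.4f}  (Tmax+Tmin)/2={midrange:.4f}  diff={midrange - mean:+.4f}")
```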

What do you think?

William, good to see your response. Kevin, thanks for a most interesting post and your reply to William. I’ve learned much from both of your posts.

I particularly enjoyed your example of the six-hour min-max being different from the hourly. It made the issues clear.

Onwards,

w.

I missed a point, which is the low-pass filter cutoff frequency: it should obviously match the day length, in other words something close to 1 cycle per 24 hours. This will produce a true power-accumulated temperature reading, to be measured at the same time each day. We do not want short-period change readings for the climate data, and this filter will reduce all the high-frequency noise variations to essentially zero, so the result can be measured with great accuracy. Sampling noise can be reduced by averaging many samples taken over a time interval significantly shorter than a day, so the system should be capable of reproducing very accurate change data, if not absolute data, given the instrument’s inherent accuracy.

An analog filter with that low a cutoff frequency would be pretty hard to build. It’s simply not practical. It can be done in the digital domain by downsampling. The signal is first run through a digital low-pass filter and then resampled at a lower frequency. For example, to halve the number of data points, you apply a digital filter to the original data to remove all frequency components above 1/4 of the original sampling rate. You then decimate (resample) the data by dropping every other sample.
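A minimal sketch of the filter-then-decimate recipe, contrasted with dropping samples without filtering. The windowed-sinc FIR (Hamming window, 101 taps) and the test tones are assumptions chosen for illustration. `scipy.signal.decimate` is a ready-made alternative, but plain NumPy keeps each step visible. Frequencies are in cycles per original sample.

```python
import numpy as np


def lowpass_fir(num_taps, cutoff):
    """Windowed-sinc low-pass FIR; cutoff in cycles/sample (0 < cutoff < 0.5)."""
    m = np.arange(num_taps) - (num_taps - 1) / 2
    taps = 2 * cutoff * np.sinc(2 * cutoff * m) * np.hamming(num_taps)
    return taps / taps.sum()  # unity gain at DC


def decimate_by_2(x, num_taps=101):
    """Low-pass at 1/4 of the original sampling rate, then keep every other sample."""
    filtered = np.convolve(x, lowpass_fir(num_taps, 0.25), mode="same")
    return filtered[::2]


def rms(x):
    return np.sqrt(np.mean(x ** 2))


n = np.arange(4000)
slow = np.sin(2 * np.pi * 0.05 * n)  # well inside the new band
fast = np.sin(2 * np.pi * 0.45 * n)  # above the new Nyquist; would alias to 0.10 after decimation

trim = slice(100, -100)  # ignore filter edge effects
print("filtered, slow tone:", rms(decimate_by_2(slow)[trim]))
print("filtered, fast tone:", rms(decimate_by_2(fast)[trim]))
print("naive x[::2], fast :", rms(fast[::2][trim]))
```

The slow tone passes at full strength and the fast tone is suppressed. Without the filter, the fast tone survives decimation intact, disguised as a 0.1 cycles/sample component.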

Greg F

One approach is to design a collection system with a specific thermal inertia to dampen high-frequency impulses. Actually, Stevenson Screens already do that, but they may not be the optimal low-pass filtering for climatology.

Greg F

Decimating the sampled data set exacerbates the aliasing problem by making the sampling rate effectively a fraction of the original sampling rate. If the initial sampling rate meets the Nyquist criterion, capturing the highest frequency component(s), thereby preventing aliasing and assuring faithful reproduction of the signal, then and only then can digital low-pass filters be applied to suppress the high-frequency component(s).

Perhaps I didn’t explain it well enough as you appear to think I didn’t LP filter the raw data before decimating it.

analog signal → sampled data → digital filter (low pass) → decimate

I thought my example was simple and clear enough.

All temperature sensors have some thermal inertia. A/D converters and processing are cheap enough that adding thermal inertia to the sensor (to effectively low-pass filter the signal) is likely not the most economical solution.
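A rough sketch of how much filtering thermal inertia provides, assuming the sensor (or screen) behaves as a first-order lag with time constant τ. That is a simplification, and the 10-minute τ is a hypothetical value, not a measured one. The amplitude response of a first-order lag is 1/√(1 + (2πfτ)²).

```python
import math


def first_order_gain(freq_cycles_per_day, tau_minutes):
    """Amplitude response of a first-order lag: 1 / sqrt(1 + (2*pi*f*tau)^2)."""
    tau_days = tau_minutes / (24 * 60)
    return 1.0 / math.sqrt(1.0 + (2 * math.pi * freq_cycles_per_day * tau_days) ** 2)


tau = 10.0  # hypothetical sensor time constant, minutes
for label, f in [("diurnal (1/day)", 1.0), ("hourly wiggle (24/day)", 24.0), ("10-minute wiggle (144/day)", 144.0)]:
    print(f"{label:>27}: gain {first_order_gain(f, tau):.4f}")
```

A single-pole roll-off like this is gentle: it attenuates short wiggles but does not come close to removing everything above 1 cycle/day, which is one reason to prefer fast sampling plus a digital filter.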

David Stone,

I suggest that we should be careful to assess what is signal and what is noise. How do we know that higher-frequency information doesn’t tell us important things about climate? There is energy in those frequencies, and capturing them properly allows us to analyze it. I recommend erring on the side of designing the system to capture as wide a bandwidth as possible. If it is properly captured, then all options are at our disposal. We can filter away as much of it as we desire or as much as the science supports. Likewise, we can use as much as desired or as much as the science supports. If we don’t capture it, then the range of options is reduced. It is difficult to make a case that there are any benefits (economic or technical) to capturing less data up front. But we do agree that the system design must have integrity: the anti-aliasing filter and the sample rate must comply with the chosen Nyquist frequency. Standardized front-end circuits should also be used, with specifications for response times, drift, clock jitter, offsets, power supply accuracy, etc. And, of course, the siting of the instrument. I’m not advocating any specific formulation, just stating that these are important parameters that should be standardized so that we know each station is consistent on those items. The design should allow a station to be placed anywhere in the world and still capture data according to the design specifications.

Others have said correctly that there is a lot of hourly data out there and we should use it. I support that and an effort to understand how the way it was captured could contribute to inaccuracies. That data is likely a big improvement over the max/min data and method. There may or may not be much difference between that and a system engineered by even better standards. At this point I don’t know, but if any new designs are undertaken, then they should be undertaken with best engineering practices.

David Stone, note that satellites will suffer from sampling issues, as they sample the same spot on (or over) the earth once every 16 days, approximately twice per month.

You can read more here:

https://atrain.nasa.gov/publications/Aqua.pdf

Look at page one, ‘repeat cycle’.

You can see the list of other satellites within the A-train here:

https://www.nasa.gov/content/a-train

Obviously all of the instruments on board each satellite will pass over once per fortnight…

It is all very complex and clever, but the data is very ‘smeary’.

And of course there is that pervading idea from some climate scientists that they ‘know’ the average temperature of the world to hundredths of a degree centigrade!

” It describes how a linear system ” … and here lies a problem in climastrology, many times they use linear systems methodology that is not applicable to the non-linear system they study. Ex falso, quodlibet.

+100

Jim

Theoretically, the equations (“models”) of physics precisely and exactly represent only what we _believe_ is Reality.

In practice, observations tend to corroborate these models, or not. We are happy if our observations are approximately in agreement with the models, more or less.

For example, Shannon’s sampling theorem states that a band-limited signal may be perfectly reconstructed from its samples if the Nyquist limit is satisfied, in the same sense that a line may be perfectly reconstructed from two distinct points.

But we all know that bias and variance dominate our observations, which explains why draftsmen prefer to use at least three points to determine a line.

And using MinMax temps is a reasonable “sawtooth” basis for mean temp, if that is the only data you have.

Nick was replying to William’s article.

In William’s article is “Figure 1: NOAA USCRN Data for Cordova, AK Nov 11, 2017”. He does a numerical analysis and shows an error of about 0.2C comparing “5 minute sample mean vs. (Tmax+Tmin)/2”.

The point that (Tmax+Tmin)/2 produces an error is adequately demonstrated by William’s numerical analysis. The question is about how often you get a temperature profile like the one shown in Fig. 1. In my neck of the woods, within 25 miles of Lake Ontario, in the winter, anomalous temperature profiles are very common. In fact, the winter temperature profile looks nothing at all like a nice tame sine wave.

So, why does it matter whether the temperature profile looks like a sine wave? The simplest way to think about the Nyquist rate is to ask what waveform could be reconstructed from a data set that does not violate the Nyquist criterion. The answer is that you could reconstruct an unchanging sine wave whose frequency is just under half the sampling rate.

What happens when you don’t have a sine wave? The answer is that the wave form contains higher frequencies. The Nyquist rate applies to those and their frequencies go all the way to infinity. Thus, you have to set an acceptable error and set your sample rate based on that.
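A small sketch of “set an acceptable error and set your sample rate based on that”, using a unit square wave as a stand-in for a non-sinusoidal profile (an assumption, not real temperature data) and residual power above the kept harmonics as the error measure (one possible criterion among several). The square wave’s power is carried by odd harmonics k with amplitude 4/(πk).

```python
import math


def residual_power(max_harmonic):
    """Fraction of a unit square wave's power in harmonics above max_harmonic.

    A square wave of amplitude 1 has power 1, carried by odd harmonics k with
    amplitude 4/(pi*k), i.e. power 8/(pi^2 k^2) each.
    """
    kept = sum(8.0 / (math.pi ** 2 * k ** 2) for k in range(1, max_harmonic + 1, 2))
    return 1.0 - kept


def harmonics_needed(tolerance):
    """Smallest odd harmonic K such that the power above K is below tolerance."""
    k = 1
    while residual_power(k) >= tolerance:
        k += 2
    return k


fundamental = 1.0  # cycles/day
for tol in (0.05, 0.01, 0.001):
    k = harmonics_needed(tol)
    print(f"residual power < {tol}: keep up to harmonic {k}, sample faster than {2 * k * fundamental:g}/day")
```

The residual never reaches zero, so the choice of tolerance, and an anti-aliasing filter for what lies beyond it, sets the sampling rate.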

The daily temperature profile is nothing like a sine wave (where I live) and does not repeat. Nyquist is a red herring. William’s numerical analysis is sufficiently compelling to make the point that (Tmax+Tmin)/2 produces an error.

Oops.

Should be.

CommieBob

You said, “The Nyquist rate applies to those and their frequencies go all the way to infinity.” That is why it is necessary to pre-filter the analog signal before sampling. We can’t have a discretized sample with an infinite number of sinusoids.

Exactly so. Of course, when you do the LPF, you lose information and that produces an error.

Thank you commieBob.

That convolution discussion is very confusing.

I’m sure that what the author meant to say is true. But when he says, “In this case h(t) is a series of impulse functions separated by the sampling rate,” it sounds as though he wants us to convolve a signal consisting of a sequence of mutually time-offset impulses with the signal being sampled.

I haven’t spoken with experts on this stuff for a while, so I’m probably rusty. But what I think they said is that in this case the convolution occurs in the frequency domain, not in the time domain.

“… convolution occurs in the frequency domain, not in the time domain”

Actually, convolution is strictly a mathematical operation and attaches no specific physical interpretation to the integration variable.

Or perhaps you are referring to the so-called ‘convolution theorem’ in Fourier analysis, which states that the convolution of two functions in one domain is equal to the product of their Fourier transforms in the other domain.
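A quick numerical check of the convolution theorem in its discrete (DFT, circular) form, which is a finite stand-in for the continuous statement. It checks both directions: convolution in time becomes a product in frequency, and a product in time (what sampling does) becomes a convolution in frequency, scaled by 1/N. The random test vectors are arbitrary.

```python
import numpy as np


def circular_convolve(a, b):
    """Direct circular convolution: c[m] = sum_k a[k] * b[(m - k) mod N]."""
    n = len(a)
    return np.array([sum(a[k] * b[(m - k) % n] for k in range(n)) for m in range(n)])


rng = np.random.default_rng(0)
a = rng.normal(size=16)
b = rng.normal(size=16)

# Convolution in time <-> product in frequency.
conv_time = circular_convolve(a, b)
conv_via_fft = np.fft.ifft(np.fft.fft(a) * np.fft.fft(b)).real
print(np.allclose(conv_time, conv_via_fft))  # True

# Product in time <-> convolution in frequency, scaled by 1/N.
A, B = np.fft.fft(a), np.fft.fft(b)
print(np.allclose(np.fft.fft(a * b), circular_convolve(A, B) / len(a)))  # True
```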

That is indeed what I’m referring to. But if, as the author seems to say, h(t) is a sequence of periodically occurring impulses, then sampling is the product of h(t) and s(t), not their convolution. So this situation is unlike the typical one, in which h(t) is a system’s impulse response and convolution therefore occurs in the time domain, with multiplication occurring in the frequency domain. In this situation the frequency domain is where the convolution occurs.

As Jim Masterson pointed out below, convolving any function with the unit impulse yields the function itself.

But such ideal unit impulses do not exist in Nature. What we really have is a noisy, short interval in which a signal is present.

As I pointed out above the sampling theorem guarantees, in theory, perfect reconstruction of band-limited sampled signals, if the Nyquist limit is obeyed. In practice it means you can reconstruct a sampled signal with arbitrarily small error.

Any time function can be represented as a summation or integral of unit impulses as follows:

$$f(t) = \int_{-\infty}^{\infty} f(\tau)\, \delta(t - \tau)\, d\tau$$

The δ(t) function is the unit impulse. Of course, you must be dealing with a linear system where the principle of superposition holds.

Jim

Yes, the convolution of a function with the unit impulse is the function itself. But I don’t see how that makes sampling equivalent to convolving with a sequence of impulses.

http://nms.csail.mit.edu/spinal/shannonpaper.pdf

Equation 7 is the working version of the reconstruction part of the sampling theorem.

Proof is based on constructing Fourier series coefficients.
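A minimal sketch of the reconstruction (interpolation) formula in the form it is usually written, x(t) = Σ x[n] sinc(f_s t − n), using NumPy’s normalized `np.sinc`. The test tone and record length are assumptions. A finite record truncates the infinite sum, so accuracy is best away from the ends of the record.

```python
import numpy as np


def sinc_reconstruct(samples, fs, t):
    """Whittaker-Shannon interpolation: x(t) = sum_n x[n] * sinc(fs*t - n).

    Samples are taken at times n/fs, n = 0..N-1; np.sinc is the normalized sinc.
    A finite record truncates the infinite sum, so accuracy degrades near the ends.
    """
    n = np.arange(len(samples))
    return np.sum(samples[:, None] * np.sinc(fs * t[None, :] - n[:, None]), axis=0)


fs = 1.0   # samples per unit time
f0 = 0.1   # test tone, well below fs/2
n = np.arange(2000)
samples = np.cos(2 * np.pi * f0 * n / fs)

t = np.array([1000.25, 1000.5, 1000.75])  # between samples, mid-record
estimate = sinc_reconstruct(samples, fs, t)
exact = np.cos(2 * np.pi * f0 * t)
print(np.max(np.abs(estimate - exact)))
```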

Yes, yes, yes. All of Shannon’s papers are sitting in my summer home’s basement, so, although I can’t say I carry around all of his teachings in my head, you needn’t recite elementary signal-processing results. Doing so doesn’t address Mr. Kilty’s apparent belief that the output of a sampling operation is the result of convolving the signal to be sampled with a function h(t) that consists of a sequence of unit impulses.

I appreciate your trying to help, but you seem unable to grasp what the issue is.

The function above is not a convolution integral. It’s an identity. The identity can be used to solve convolution integrals. A convolution integral involves two signals as follows:

$$f_1(t) * f_2(t) = \int_{-\infty}^{\infty} f_1(\tau)\, f_2(t - \tau)\, d\tau$$

Essentially you are taking two signals, multiplying them together, and integrating the product. The second signal is flipped end-for-end, shifted, and multiplied with the first one, one impulse pair at a time. You start at minus infinity for both signals and go all the way to plus infinity. (Some convolution integrals start at zero.)

This is useful for finding the response of a network to a specific input. The network is reduced to its impulse response (f1 above), the time-domain counterpart of its transfer function. The input signal is f2. The convolution is the response of the network to the input signal. Using Laplace transforms, convolution becomes simply multiplying the transforms together. You then convert the resulting Laplace transform back to its time-domain equivalent. It takes some effort, but it’s a lot easier than doing everything in the time domain.
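A small worked example of that procedure, assuming a first-order RC low-pass network with a hypothetical time constant τ. Its impulse response is f1(t) = e^(−t/τ)/τ (transfer function 1/(1 + sτ)). A unit step input has transform 1/s, and the product of the transforms inverts to 1 − e^(−t/τ). The sketch approximates the convolution integral with a rectangle-rule sum and compares it against that closed form.

```python
import numpy as np

tau = 2.0                    # hypothetical time constant (hours)
dt = 0.001 * tau             # integration step
t = np.arange(0.0, 5 * tau, dt)

f1 = np.exp(-t / tau) / tau  # impulse response of a first-order low-pass (RC) network
f2 = np.ones_like(t)         # input: unit step applied at t = 0

# Discrete approximation of the convolution integral (rectangle rule).
response = np.convolve(f1, f2)[: len(t)] * dt
analytic = 1.0 - np.exp(-t / tau)  # inverse Laplace transform of 1/(s(1 + s*tau))

print(np.max(np.abs(response - analytic)))
```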

Jim

Again, I know what convolution is. I have for over half a century. The issue isn’t how convolution works. I know how it works.

The issue is Mr. Kilty’s apparent belief that sampling is equivalent to convolving with an h(t) (i.e., your f_2) that consists of a sequence of impulses that recur at the sampling rate.

I hope and trust that he doesn’t really believe that, but that’s how his post reads.

If you have something to say that’s relevant to that issue, I’m happy to discuss it. But I see no value in responding to further comments that merely recite rudimentary signal theory.

>>

But I see no value in responding to further comments that merely recite rudimentary signal theory.

<<

I don’t mean to insult you with rudimentary theories. But convolution requires linear systems. Weather, and by extension climate, are not linear systems. Therefore, convolution can’t be used for these systems. And as I and many others have said repeatedly, temperature is an intensive thermodynamic property. Intensive thermodynamic properties can’t be averaged to obtain a physically meaningful result. (However, you can average any series of numbers–it just may have no physical significance.)

After further consideration–your initial concern is a valid point.

Jim

Joe – I don’t get it either. Is it clumsy writing or clumsy understanding (or both)?

Just in the first paragraph of the top post:

“. . . . . . . . . . This sampling rate is part of the response function, h(t). For example the S/H circuit of a typical A/D has small capacitance and small input impedance, and thus has very rapid response to signals, or wide bandwidth if you prefer. It looks like an impulse function. . . . . .”

No – the Sample/Hold response is a rectangle in time. If that is h(t), you convolve a weighted (weighted by the signal samples) delta-train with the rectangle to get the S/H output (to hold still) prior to A/D conversion. But this is NOT what he says h(t) is a few lines lower!

“. . . . . . . . . . In this case h(t) is a series of impulse functions separated by the sampling rate. . . . . . . .”

As suggested, h(t) is a rectangle of width 1/fs. A “series of impulse functions separated by the sampling rate” (a frequency) would be a Dirac delta comb in frequency. That is the function with which the original spectrum is convolved (in frequency) to get the (periodic) spectrum of the sampled signal. But he says h is a function of time h(t)!

“. . . . . . . . . . If s(t) is a slowly varying signal, the convolution produces a nearly periodic output. . . . . . . . “

What convolution? Equation (1)? What domain? He must mean the periodic sampled spectrum. Why “nearly periodic”? Is it the sinc roll-off of the rectangle due to the S/H?
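A small sketch of the sample/hold picture described above, on a discrete fine grid standing in for continuous time (the sample values and grid density are arbitrary). Convolving a sample-weighted impulse train with a rectangle one sampling interval wide gives the staircase, and the rectangle’s magnitude response |sinc(f/f_s)| is the roll-off in question.

```python
import numpy as np

# Zero-order hold as convolution: a sample-weighted impulse train convolved with a
# rectangle one sampling interval wide gives the familiar staircase.
samples = np.array([0.0, 1.0, 0.5, -0.25, 0.8])
hold = 4                                   # fine-grid points per sampling interval
impulses = np.zeros(len(samples) * hold)
impulses[::hold] = samples                 # weighted "delta train" on the fine grid
staircase = np.convolve(impulses, np.ones(hold))[: len(impulses)]
print(np.allclose(staircase, np.repeat(samples, hold)))  # True


def zoh_gain(f, fs):
    """Magnitude response of a hold of width 1/fs: |sinc(f/fs)| (np.sinc is normalized)."""
    return np.abs(np.sinc(f / fs))


print(zoh_gain(0.5, 1.0), 20 * np.log10(zoh_gain(0.5, 1.0)))  # 2/pi, about -3.92 dB at fs/2
```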

“. . . . . . . . . . In the frequency domain, the Fourier transform of h(t), the transfer function (H(ω)), also is periodic, but its periods are closely spaced. . . . . . . .”

Why does he say “also is periodic?” What is ORIGINALLY periodic in this statement? In what sense are the periods “closely spaced”? It depends on fs.

The rest of the paragraph is correct.

Bernie

“Doing so doesn’t address Mr. Kilty’s apparent belief that the output of a sampling operation is the result of convolving the signal to be sampled “

It isn’t a convolution. Sampling multiplies the signal by the Dirac comb. This becomes convolution in the frequency domain, also with a Dirac comb (one comb transforms into another). As convolving with δ just regenerates the function, convolving with a comb generates an equally spaced set of copies of the spectrum, which then overlap. That overlapping is another way to see aliasing.
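A short numerical illustration of the comb picture at 2 samples/day, assuming ideal periodic sampling. Tones that differ by a multiple of the sampling rate give identical samples, and the spectrum of the sample sequence repeats every f_s. The phase, record length, and helper name `dtft` are arbitrary choices for the sketch.

```python
import numpy as np

fs = 2.0                 # samples per day
n = np.arange(40)
t = n / fs               # sampling instants in days
phase = 0.3

# Spectral copies sit at multiples of fs, so tones that differ by a multiple of fs
# produce identical samples.
slow = np.cos(2 * np.pi * 0.05 * t + phase)   # 0.05 cycles/day (long-term)
near2 = np.cos(2 * np.pi * 2.05 * t + phase)  # 2.05 cycles/day
daily = np.cos(2 * np.pi * 1.0 * t + phase)   # 1 cycle/day
three = np.cos(2 * np.pi * 3.0 * t + phase)   # 3 cycles/day
print(np.allclose(slow, near2), np.allclose(daily, three))  # True True


def dtft(x, f, fs):
    """Spectrum of the sample sequence x (taken at rate fs) at analog frequency f."""
    k = np.arange(len(x))
    return np.sum(x * np.exp(-2j * np.pi * f * k / fs))


# The sampled spectrum repeats every fs.
print(np.isclose(dtft(slow, 0.4, fs), dtft(slow, 0.4 + fs, fs)))  # True
```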

Sampling multiplies the signal by the Dirac comb. This becomes convolution in the frequency domain, also with a Dirac comb

Indeed, but only for strictly periodic sampling in the time domain. Otherwise, there’s no Nyquist frequency defined and there can be no periodic spectral folding in the frequency domain, a.k.a. aliasing.

Sadly, entire threads have been wasted here on egregious misconceptions.

1sky1,

You said: “Indeed, but only for strictly periodic sampling in the time domain. Otherwise, there’s no Nyquist frequency defined and there can be no periodic spectral folding in the frequency domain, a.k.a aliasing.”

Can you give us a definition of “strictly periodic”? Along those lines, can you explain how sampling works with semiconductor ADCs, which operate with jitter? I assume you agree that not one clock pulse in the history of clock pulses on planet Earth has triggered without some quantity of jitter. So how do you resolve your statement? What are the limits of jitter allowed for Nyquist to be defined? It sounds like you are saying the value is exactly 0 in every possible unit of time. If zero is the answer, then how do you explain the function of an ADC in the real world? What does an imperfect clock mean for the operation? Does aliasing (spectral folding) get eliminated in reality? What, then, is the cause of imperfect sampling when the clock frequency is < 2BW?

Responding to William at Feb 17, 2019 at 7:02 pm:

The suggestion that timing discrepancies due to the substantial ignorance of the actual times of Tmax and Tmin are analogous to the tiny “jitter” in familiar sampling situations (such as digital audio) is a stretch, and then some.

Might I suggest that you consider the practice of “oversampling” (OS) that is pretty much universal in digital audio. This, together with “noise shaping” (NS), allows one to trade expensive divisions in amplitude (more bits of resolution) for inexpensive additional divisions in time (smaller sampling intervals). [An OSNS CD player (one-bit D/A) can be purchased for less than the cost of even a single 16-bit D/A chip.] Note that digital audio uses a sampling rate of something like 44.1 kHz (a very low frequency compared to things like radio signals). For practical purposes we are limited in amplitude resolution, but we easily work at higher and higher data rates.

The timing jitter and amplitude quantization errors (round-off noise) are very similar effects, handled as random noise. This can be seen by considering that, for a band-limited signal, any sample might be taken slightly early or slightly late, perhaps with no error after quantization, and in any case the error is unlikely to be much more than the LSB. That is, given an error (noise), whether to assign it to timing jitter or to limited amplitude resolution is your choice. For the most part, you CAN arguably assume that the sampling grid is PERFECT and the signal slightly noisier.
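A rough simulation of the “jitter as noise” model for a 1 cycle/day sinusoid, assuming zero-mean Gaussian timing errors that are independent of the signal. That assumption does not describe Tmax/Tmin timing, which is signal-dependent, so this does not settle whether that case should be called jitter. For small σ the error RMS follows the linear (slope × Δt) model, A·2πfσ/√2. For timing errors of hours it falls below the linear prediction and follows A·√(1 − e^(−(2πfσ)²/2)), saturating as σ grows.

```python
import numpy as np


def jitter_error_rms(sigma_days, f=1.0, amplitude=1.0, n=200_000, seed=1):
    """RMS error from sampling A*sin(2*pi*f*t) at t + dt instead of t, dt ~ N(0, sigma)."""
    rng = np.random.default_rng(seed)
    t = rng.uniform(0.0, 1000.0, n)        # ideal sample times (days), random phase
    dt = rng.normal(0.0, sigma_days, n)    # timing error of each sample
    err = amplitude * (np.sin(2 * np.pi * f * (t + dt)) - np.sin(2 * np.pi * f * t))
    return np.sqrt(np.mean(err ** 2))


def linear_prediction(sigma_days, f=1.0, amplitude=1.0):
    """Small-jitter noise model: error ~ slope * dt, so RMS = A * 2*pi*f * sigma / sqrt(2)."""
    return amplitude * 2 * np.pi * f * sigma_days / np.sqrt(2)


def exact_expectation(sigma_days, f=1.0, amplitude=1.0):
    """Expected RMS for Gaussian dt of any size: A * sqrt(1 - exp(-(2*pi*f*sigma)^2 / 2))."""
    return amplitude * np.sqrt(1 - np.exp(-((2 * np.pi * f * sigma_days) ** 2) / 2))


for label, sigma in [("1 minute", 1 / 1440), ("1 hour", 1 / 24), ("3 hours", 3 / 24), ("6 hours", 6 / 24)]:
    print(f"{label:>8}: measured {jitter_error_rms(sigma):.4f}  "
          f"linear {linear_prediction(sigma):.4f}  exact {exact_expectation(sigma):.4f}")
```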

The point is that we have an IMMENSE amount of “wiggle room” with respect to precise timing of samples. Even a millisecond of error in weather data recording would be unusual. In contrast, the unknown times of Tmax and Tmin are hours.

Jitter seems a very poor analogy here.

Bernie

Hello Bernie,

Consider taking 5-minute samples and discarding all but the max and min. For this discussion we retain the timing of those samples.

Jitter is when a clock pulse deviates from its ideal time by some amount ∆t. Assuming the clock pulse happens inside of its intended period, what are the limits of ∆t before it is no longer jitter and becomes something else? And what is the something else?

Please be as succinct as possible because I think this should be answerable with brevity.

I’m open to being convinced otherwise. I know the amount of ∆t is large relative to the period as compared to modern ADC applications, but I could not find a limit so I went with it as an analogy. It has garnered a lot of attention. I’m not sure if it is or isn’t critical to anything I have said. So my intention at this point is to have some fun to see what we can learn on this.

My thinking so far is that the noise/error just scales with what I’m calling jitter. It’s larger than we normally encounter, but nothing else in the theory breaks down.

What do you think?

Replying to William Ward at February 17, 2019 at 11:11 pm

An explanatory model, or theory, usefully applies (or breaks down) according to overall circumstances, not to some threshold.

Jitter is small (perhaps 1% of a sampling interval), usually adequately modeled as random noise. For a substantial fraction of the sampling interval or greater, we need your “something-else”.

Jitter is when you show up for a party 5 minutes early or 5 minutes late (different watch settings, traffic, expected indifference). “Something-else” is when you show up the wrong evening!

Taking just two numbers (Tmax and Tmin), when much more and better data (with actual times included) is possible, is definitely a something-else.

Bernie

Hello Bernie,

I hope you are well.

You said: “An explanatory model, or theory, usefully applies (or breaks down) according to overall circumstances, not to some threshold.”

Ok, you are on record here, as you were in our previous discussions, with your opinion about the applicability of jitter. But you offer no empirical evidence, math, rationale, nor cite any source to refute it.

I’m open to being convinced otherwise, but so far my rationale has stood up to the critique. I will continue to use it until someone can show me how it breaks down.

I’m still not sure that it is worth arguing about. Two samples/day, whether poorly timed or not, don’t provide an accurate daily mean. Most people seem to think the trends are the more important issue. I have shown good evidence of the trend error that results as well. This trend error is small by my standards of measurement, but then again, so are the claimed warming trends. The fact that they (trend error and trends) are of similar magnitude is what is most important in my assessment. No one has yet shown that the trends Paramenter and I have presented are incorrect. There have been a lot of claims to that effect, and alternate forms of analysis that contradict it, but no findings of error in our analysis. And that is where we are with it.

The decision to measure max and min daily was probably an intuitive decision, not one based upon science or signal analysis. It is a fortunate thing that the spectrum of the temperature signal does allow for sampling at 2 samples/day without completely destructive effects. If there were more energy at 1, 2, and 3 cycles/day, then the result would be different. It turns out to work OK, but not so well that we can claim records to 0.1C or 0.01C or trends of 0.1C/decade.

For those with even a modicum of comprehension of DSP math, the rationale is perfectly clear: there’s a categorical difference between periodic, clock-DRIVEN sampling of the ordinates of a continuous signal and clock-INDEPENDENT recording of phenomenological features (e.g. zero-upcrossings, peaks, troughs) of the signal wave-form. The former may contain some random clock-jitter in practice (e.g., imprecise timing of thermometer readings at WMO synoptic times), whereas the latter is entirely signal-dependent (although some features may be missed in periodic sampling).

There’s simply no way that the highly asymmetric diurnal waveform that produces daily Min/Max readings at very irregular times, clustered near dawn and mid-afternoon, can be reasonably attributed to any clock-jitter of regular twice-daily sampling. Jitter smears, but does not change the average time between samples. Ward starts with a highly atypical diurnal wave in Figure 1 of his original posting and proceeds to insist, against all analytic reason, that kiwi-fruit are apples.

Thanks 1sky1 – you said: “Jitter smears, but does not change the average time between samples. . . . . . .” Good point.

On the “In search of the standard day” thread (Feb 16, 2019) I had a related observation (Feb 16 at 6:13 pm) while illustrating the problem of fitting a sinewave to min-max values that were not 12 hours apart! The illustration is here:

http://electronotes.netfirms.com/StandDay.jpg

Below at 5:41 pm I just minutes ago posted an interesting result of sampling a one-day cycle at two samples a day where aliasing is “undone” by well-known FFT interpolation.

Bernie

Jitter is defined simply as the deviation of an edge (sample) from where it should be.

Nothing more and nothing less.

Total Jitter can be decomposed into bounded (deterministic jitter) and unbounded (random jitter). Bounded jitter can be decomposed into bounded correlated and bounded uncorrelated jitter. Bounded correlated jitter can be decomposed into duty cycle distortion (DCD) and intersymbol interference (ISI). Bounded uncorrelated jitter can be decomposed into periodic jitter and “other” jitter. Other jitter – meaning anything else not described that explains a sample deviating from its correct time.

DCD fits well with our temperature signal max/min issue. Feel free to call it whatever you prefer – it will not change the results. We have sample values that deviate from where they need to be for Nyquist. There is no other mathematical basis for working with digital signals except Nyquist. There is no Phenomenological Sampling Theorem. If you are working with discrete values from an analog signal, then they have to comply with Nyquist.

It does not matter if you get the sample values through an ADC or through a max/min thermometer or a crystal ball. It does not matter what provides the timing to get the digital samples. **It doesn’t matter if we obtain or keep the timing information.**

Here is the reason. Nyquist requires perfect correspondence between the analog and digital domains. We can demonstrate this correspondence by reconstructing back to the analog domain. Nyquist insists upon the perfect timing and perfect values for reconstruction. Nyquist provides the timing by forcing it to the rate inferred by the samples. We provide the values of the samples obtained. Jitter in the conversion in either direction deviates from the “strict periodicity” required for perfect reconstruction. This is seen as quantization error when we compare the sampled signal to the original signal at the perfect sampling times.

If you want to find the actual analog signal you are working with digitally then you simply run the sample values through a DAC at the sample rate inferred by your samples.

Examples: Assume typical air temperature signal and ADC with anti-aliasing filter compatible with 288-samples/day.

1) We sample at 288-samples/day (5-minute samples) with no perceptible jitter. If we run the samples back through a DAC set at 288-samples/day we get the original signal.

2) We sample at 2-samples/day, clocked with no perceptible jitter. We must run the samples back through the DAC at 2-samples/day. The reconstructed signal will differ from the original as a function of the aliasing.

3) We sample at 2-samples/day, clocked with DCD (jitter). The samples happen to correspond to the timing and values of Tmax and Tmin. To reconstruct, Nyquist requires we run this back through the DAC with a clock rate of 2-samples/day. The reconstructed signal will differ from the original as a function of the aliasing *and* as a function of the quantization error resulting from measuring the analog signal at the wrong time compared to what Nyquist required. The reconstruction places the samples at the time location they should have come from originally if the sampling was “strictly periodic.” However, the sample values used in the reconstruction are not what they should have been for that time.

This is an egregious display of illogic developed by blind fixation upon ADC hardware operation. With Min/Max thermometry we are NOT “working with digital signals,” but with phenomenological features of the CONTINUOUS temperature signal. It’s a straightforward matter of waveform analysis (q.v.), no different than registering the elevation of crests and troughs of waves above prevailing sea level or the directed zero-crossings. The number of such features observed per unit time has nothing to do with the discrete sampling frequency and everything to do with the original signal.

If the tortured rationale that claims this to be a DSP problem were ever submitted to any IEEE-refereed publication, it would elicit only head-shaking and laughter.

William Ward at February 19, 2019 at 10:49 pm said:

“. . . . . . . . . . DCD fits well with our temperature signal max/min issue. . . . . . . . . “

It would seem logical, but you don’t know the duty-cycle nor is it constant, so what is gained as opposed to just saying you don’t know the two times? You just postulate one of the times and postulate the wait till the second time. Right?

“. . . . . . . . . . There is no other mathematical basis for working with digital signals except Nyquist. There is no Phenomenological Sampling Theorem. If you are working with discrete values from an analog signal, then they have to comply with Nyquist. . . . . . . . . . . .”

Of course there are – alternative signal models. For example, I curve-fitted a sinusoid of known frequency to non-uniform min-max. Four equations in four unknowns.
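As an illustration of the alternative-signal-model idea, here is a minimal sketch, not necessarily Bernie's formulation (he describes four equations in four unknowns; the unknowns here are an assumption). It fits T(t) = A + B cos(ωt) + C sin(ωt) with a known 1 cycle/day frequency to a Tmin and a Tmax at known, non-uniform times. Each extreme gives a value condition and a zero-slope condition, so there are four equations in three unknowns, solved by least squares. The function names are hypothetical.

```python
import numpy as np

OMEGA = 2 * np.pi  # rad/day: assumed known frequency of 1 cycle/day


def fit_diurnal_sinusoid(t_min, T_min, t_max, T_max):
    """Least-squares fit of A + B cos(wt) + C sin(wt) to two extremes.

    Times are in days. At each extreme the curve should pass through the
    reading and have zero slope: four equations in the unknowns A, B, C.
    """
    rows, rhs = [], []
    for t, T in ((t_min, T_min), (t_max, T_max)):
        c, s = np.cos(OMEGA * t), np.sin(OMEGA * t)
        rows.append([1.0, c, s])                # value condition
        rhs.append(T)
        rows.append([0.0, -OMEGA * s, OMEGA * c])  # zero-slope condition
        rhs.append(0.0)
    coeffs, *_ = np.linalg.lstsq(np.array(rows), np.array(rhs), rcond=None)
    return coeffs


def evaluate(coeffs, t):
    """Evaluate the fitted sinusoid at time(s) t in days."""
    A, B, C = coeffs
    return A + B * np.cos(OMEGA * t) + C * np.sin(OMEGA * t)


# Extremes exactly 12 hours apart (4 AM and 4 PM) are consistent with a
# sinusoid, so the fit passes through both; 7 AM / 4 PM gives a compromise.
coeffs = fit_diurnal_sinusoid(4 / 24, 50.0, 16 / 24, 80.0)
print(evaluate(coeffs, np.array([4 / 24, 16 / 24])))
```

When the two times are not 12 hours apart, no single sinusoid satisfies all four conditions and the least-squares answer splits the difference; that choice of residual weighting is a design assumption of this sketch.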

http://electronotes.netfirms.com/StandDay.jpg

“. . . . . . . . . . 3) We sample at 2-samples/day, clocked with DCD (jitter). The samples happen to correspond to the timing and values of Tmax and Tmin. . . . . . . . . . “

You have to describe this (happen to correspond) better. Is the temperature accommodating the physical clocking or the clocking accommodating the physical temperature max-min? How would you expect this to happen? Thanks.

Bernie

Bernie,

You said: “You have to describe this (happen to correspond) better. …”

I think you misunderstand my point. In the example (#3), I’m showing that the max and min values are not special. The ADC samples can hypothetically land on the max and min values, via some clocking jitter. Or they can land on other values similarly far from the ideal “strictly periodic” sample time. All that matters is the time deviation from the ideal, which results in quantization error for that sample.

Nyquist requires clocking to be strictly periodic when we convert from analog to digital and when we convert back to analog. Tmax and Tmin are periodic (2-samples/day) but not strictly periodic. But no sample is strictly periodic in reality. Every sample has some timing deviation from the ideal.

What happens when sampling analog at times that deviate from the ideal? (This is jitter by definition). We measure a value that is correct for the moment of sampling, but not correct for when Nyquist is expecting the sample to be taken. If we reconstruct, the DAC uses the correct sample-rate and sample-time (because Nyquist requires it) but with the wrong value. This is quantization error when we view any individual sample.

See this image for an illustration: https://imgur.com/KSgcDxm

In this example, the clock is supposed to trigger a sample at point #1, which corresponds to x=π/3. The correct value of the sample at x=π/3 is 0.866. But what if the clock pulse arrives early? Regardless of the cause, what if it arrives at point #2, which happens to correspond to π/4? The ADC samples and reads the correct value for x=π/4, which is 0.707, but not the correct value for what Nyquist expects (0.866). We have quantization error of 0.866 - 0.707 = 0.159 for this sample, due to the jitter. If reconstruction is performed, we get error compared to the original signal. The DAC will place the sample at x=π/3 but with the value from x=π/4. So reconstruction uses 0.707 instead of 0.866. Again, quantization error for that sample.
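The arithmetic of this example can be checked directly. This sketch assumes the waveform in the linked image is sin(x), which is what the quoted values 0.866 and 0.707 imply; the variable names are illustrative.

```python
import math

x_ideal = math.pi / 3  # point #1: where the clock should have triggered
x_early = math.pi / 4  # point #2: where the early clock pulse actually triggered

v_ideal = math.sin(x_ideal)  # value reconstruction expects at x_ideal
v_early = math.sin(x_early)  # value the ADC actually reads

# Reconstruction places v_early at x_ideal, so this is that sample's error
error = v_ideal - v_early
print(f"{v_ideal:.3f} {v_early:.3f} {error:.3f}")  # 0.866 0.707 0.159
```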

If we just sampled temperature twice a day (every 12-hours), we would have aliasing due to higher frequency components between 1 and 3-cycles/day, but no jitter-related quantization error. If we move the sample times (perhaps such that they line up with where max and min occur – just to make the point), then this increases jitter and now we add jitter-related quantization error.

Whether we know the times of these samples doesn’t change the fact that the reconstruction takes place where they were supposed to have happened. Digital audio is a good example. There is no sample timing information included with the sample values – just the overall sample rate required.

If you think some of your methods can be used to reconstruct the signal using max and min, then we have USCRN data you can use. The timing is available for these samples. You also have the 5-minute samples to use as your comparison. To test your results you can simply invert one of the signals and sum them. The closer to a null, the closer the match.
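The invert-and-sum null test can be written as a small helper. This is a minimal sketch, assuming both signals are already on the same time grid (for USCRN, the 5-minute grid); `null_residual` is a hypothetical name, and RMS is one reasonable way to score "how close to a null".

```python
import numpy as np


def null_residual(original, reconstructed):
    """Invert the reconstruction, sum it with the original, and score the result.

    Returns the residual array and its RMS; an RMS of 0 is a perfect null.
    """
    original = np.asarray(original, dtype=float)
    reconstructed = np.asarray(reconstructed, dtype=float)
    if original.shape != reconstructed.shape:
        raise ValueError("signals must be on the same time grid")
    residual = original + (-reconstructed)  # invert one signal and sum
    rms = float(np.sqrt(np.mean(residual ** 2)))
    return residual, rms
```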

The problem of unknown times corresponding to Tmax and Tmin can be approached by guessing likely times, perhaps 7 AM for a min and 4 PM for a max. Assuming these times will do, we still have non-uniform sampling – the times are not 12 hours apart. If they were equally spaced, VERY standard recovery (interpolation) would be achieved with sinc functions. Instead, different interpolation functions (due to Ron Bracewell) are necessary, but work in VERY much the same way the sincs do:

http://electronotes.netfirms.com/BunchedSamples.jpg
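For the equally spaced case, the "VERY standard" sinc recovery is the Whittaker-Shannon interpolation formula. A minimal sketch, assuming a finite record (so the ends are only approximate); `sinc_interpolate` is a hypothetical name. NumPy's `np.sinc(x)` is the normalized sin(πx)/(πx).

```python
import numpy as np


def sinc_interpolate(samples, T, t):
    """Whittaker-Shannon reconstruction from uniform samples spaced T apart.

    samples[n] is the value at time n*T; t holds the times to evaluate.
    Each sample contributes one sinc centred on its own sample time.
    """
    samples = np.asarray(samples, dtype=float)
    n = np.arange(len(samples))
    t = np.atleast_1d(np.asarray(t, dtype=float))
    # Rows: evaluation times; columns: sample indices
    return np.sinc((t[:, None] - n[None, :] * T) / T) @ samples


# Twelve-hourly samples (T = 0.5 day) evaluated on a 5-minute grid
T = 0.5
samples = np.array([50.0, 80.0, 52.0, 78.0, 49.0, 81.0])
fine_t = np.arange(0, len(samples) * T, 1 / 288)
curve = sinc_interpolate(samples, T, fine_t)
```

The curve passes exactly through every sample because each sinc is 1 at its own sample time and 0 at all the others.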

After trying a number of approaches with the FFT, I succeeded instead with the continuous-time case as shown here:

http://electronotes.netfirms.com/NonUniform.jpg

The top panel shows one day with Tmin=50 at 7 AM and Tmax=80 at 4 PM. The interpolated (black) curve goes exactly through both samples. In order to get a better idea as to what an ensemble of days might look like, this day is placed between two other days in the bottom panel. Again, the interpolation is exact. Note that all other days are Tmax=0, Tmin=0 by default (so avoid the ends!).
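A different route to an interpolation that is exact at non-uniform sample times is sketched below. This is a swapped-in technique, not Bracewell's bunched-sample functions: it assumes the signal is band-limited to 1 cycle/day and finds values on a hypothetical uniform 12-hour grid whose ordinary sinc interpolation passes through the 7 AM / 4 PM readings. The three days of readings are made up, and as with the panels above, the ends of a finite window are unreliable.

```python
import numpy as np

T = 0.5  # days: uniform grid spacing, matching an average of 2 samples/day


def fit_grid_values(t_samples, values):
    """Solve for uniform-grid values whose sinc interpolation hits the samples.

    One grid point per sample gives a square system M @ g = values,
    with M[i, j] = sinc((t_i - j*T) / T).
    """
    t_samples = np.asarray(t_samples, dtype=float)
    grid = np.arange(len(t_samples)) * T
    M = np.sinc((t_samples[:, None] - grid[None, :]) / T)
    return grid, np.linalg.solve(M, np.asarray(values, dtype=float))


def interpolate(grid, grid_values, t):
    """Ordinary sinc interpolation from the solved grid values."""
    t = np.atleast_1d(np.asarray(t, dtype=float))
    return np.sinc((t[:, None] - grid[None, :]) / T) @ grid_values


# Hypothetical readings: Tmin at 7 AM and Tmax at 4 PM on three days
t_samples = [d + h / 24 for d in range(3) for h in (7, 16)]
values = [50.0, 80.0, 52.0, 78.0, 49.0, 81.0]
grid, g = fit_grid_values(t_samples, values)
curve = interpolate(grid, g, np.arange(0, 3, 1 / 288))
```

As in the figure, the maxima and minima of the resulting curve need not coincide with the readings or their assumed times.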

The daily sinusoidal-like interpolated curve is evident, but the min and max of this black curve are not Tmin or Tmax, but the actual timing of these suggests better guesses for a second run (etc.) of an iterative program.

-Bernie

Moderator: I see I made one mistake by saying “variance reduced in a statistic by the factor 1/(sqrt n).” It should read (1/n). Also, why does the font switch here and there?
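The corrected factor is easy to check numerically: the variance of a mean of n readings falls by 1/n, while its standard deviation falls by 1/sqrt(n). This minimal sketch assumes the idealized case of independent, identically distributed normal errors; the values of sigma, n, and the trial count are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
sigma, n, trials = 2.0, 25, 20000

# Each row is one set of n independent readings; average each row
means = rng.normal(loc=0.0, scale=sigma, size=(trials, n)).mean(axis=1)

print(np.var(means), sigma**2 / n)         # variance shrinks by about 1/n
print(np.std(means), sigma / np.sqrt(n))   # standard deviation by about 1/sqrt(n)
```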

This is just one problem with the temperature databases.

1) Precision – For an example look at the BEST data. They take data from 1880 onward and somehow get precision out to one one-hundredth of a degree. In other words, when they average 50 and 51, they keep the answer of 50.5. This is adding precision that is not available in the real world. Any college professor in chemistry or physics would eat a student's lunch for not using the rules for significant digits when doing this. If recorded temperatures are in integer values, then every subsequent mathematical operation needs to end up with integer values!

2) The Central Limit Theorem and Uncertainty of the Mean have very specific criteria when using them to increase the accuracy of measurements. The measurements must be random, normally distributed, independent, and OF THE SAME THING. You simply can’t take measurements of different things, i.e. temperature at different times, average them and say you can increase the accuracy and precision because you can divide the errors by sqrt(N). They are different things and the measurement of one simply cannot affect the accuracy of the other.

3) Temperature is a continuous function. It is not a discrete function with only certain allowed values. Consequently, one must have sufficient sampling in order to accurately recreate the continuous function. As the author describes, what we have now is not something that accurately describes what the actual temperature function does.

Jim Gorman

+1

Excellent points.

Thank you Jim. I agree with Clyde, and will raise him one. +2 for your comments.

Jim, ++++10000. From an industrial chemist.

Macha

It's people like you who cause ‘Thumb Up’ inflation! 🙂

The best way to resolve the discussion is with a simulation.

You can create a made-up temperature history spanning a 100-year period, knowing the continuous function of each day as if you measured once every 5 seconds. Against this set of data you can apply whatever sampling methods you choose and evaluate the usefulness and reliability of each.
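A scaled-down version of that simulation fits in a few lines. This is a minimal sketch under several assumptions: each made-up day is a diurnal sinusoid plus a second harmonic (which makes the day asymmetric), sampled on a 5-minute grid rather than every 5 seconds, with random daily means and 365 days by default instead of 100 years. It compares (Tmax+Tmin)/2 with the mean of the 5-minute samples; all names and amplitudes are hypothetical.

```python
import numpy as np

SAMPLES_PER_DAY = 288  # 5-minute sampling


def synthetic_day(mean, amp1=8.0, amp2=3.0, phase2=0.5):
    """One made-up day: a diurnal sinusoid plus an asymmetric second harmonic."""
    theta = 2 * np.pi * np.arange(SAMPLES_PER_DAY) / SAMPLES_PER_DAY
    return mean + amp1 * np.sin(theta) + amp2 * np.sin(2 * theta + phase2)


def compare_methods(n_days=365, amp2=3.0, seed=0):
    """Average of (Tmax+Tmin)/2 minus the 5-minute daily mean, over n_days."""
    rng = np.random.default_rng(seed)
    diffs = []
    for daily_mean in rng.normal(10.0, 3.0, size=n_days):
        day = synthetic_day(daily_mean, amp2=amp2)
        midrange = (day.max() + day.min()) / 2
        diffs.append(midrange - day.mean())
    return float(np.mean(diffs))


print(compare_methods(amp2=0.0))  # pure sinusoid: essentially no difference
print(compare_methods())          # asymmetric day: a persistent offset
```

Other sampling schemes (fixed reading times, jittered times, different harmonic content) can be swapped into `compare_methods` to evaluate them against the same made-up record.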

Hello Steve O,

I did some of that in my paper:

https://wattsupwiththat.com/2019/01/14/a-condensed-version-of-a-paper-entitled-violating-nyquist-another-source-of-significant-error-in-the-instrumental-temperature-record/

Using USCRN data I was able to do it for up to 12 years using 5-minute sample data and compare it to the max/min data method. I presented the trend errors in a table at the end.

Here are some charts not presented in the paper, showing yearly offset and long term linear trend errors. Note, these graphs were never intended for publication so some labeling might be below my normal standards.

https://imgur.com/xA4hGSZ

https://imgur.com/cqCCzC1

https://imgur.com/IC7239t

https://imgur.com/SaGIgKL

What do you think?

Leo Smith sums things up pretty well IMO:

“What we have really is a flimsy structure of linear equations supported by savage extrapolation of inadequate and often false data, under constant revision, that purports to represent a complex non linear system for which the analysis is incomputable, and whose starting point cannot be established by empirical data anyway, that in the end can’t even be forced to fit the clumsy and inadequate data we do have”

The time aspect is missing from this analysis.

Even though any specific station’s day value can (and will) be way off, it is hard to see how this can create any lasting bias over longer time periods. The error will be random and stay within boundaries and hence not affect the overall long-term trend. Moreover, any possible bias should cancel out between multiple stations.
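The distinction at stake can be shown with a fitted slope. This minimal sketch uses a made-up 0.01 degC/year trend over 100 years of monthly values: zero-mean independent errors leave the fitted trend essentially unchanged, while an error that grows over time adds directly to it. It does not settle whether real Tmax/Tmin errors behave like the first case or the second, which is the point in dispute in this thread.

```python
import numpy as np

rng = np.random.default_rng(1)
years = np.arange(1200) / 12   # monthly values over 100 years
true = 0.01 * years            # made-up trend: 0.01 degC per year

random_err = rng.normal(0.0, 0.5, size=years.size)  # zero-mean, independent
drift_err = 0.005 * years                           # a slowly growing bias


def slope(y):
    """Least-squares linear trend in degC per year."""
    return np.polyfit(years, y, 1)[0]


print(slope(true + random_err))  # close to 0.01
print(slope(true + drift_err))   # about 0.015: the drift adds to the trend
```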

There is a much bigger problem how multiple station data records are aggregated to faithfully represent a larger area especially when locations and area coverage constantly changes. There you have the real “sampling error”.

MrZ

You said, “The error will be random and stay within boundaries and hence not affect the overall long term trend.”

Not strictly random. Most of the Tmins will be at night and most of the Tmaxes will be in the day time. That is over a 24-hour day, the bulk of the lows will be during the nominal 12-hour night and the bulk of the highs will be when the sun is shining.

Hi Clyde,

True, I cannot object to that, but how could that fact affect any average bias over time?

Are you saying this in the context of sampling theory or TOBS adjustments? I am asking because the mercury MIN/MAX is an almost perfect sample for those two points (the article discusses how relevant those are), but it very much depends on WHEN you read and reset.

Your error is your assumption that the error is truly random.

That has not been shown to be the case.

Indeed there is evidence that it is not the case.

OK MarkW,

If so, please take me through how that creates a consistent and growing bias across stations and time. My main point was simply that it does NOT affect the overall trend.

The next step, i.e. station aggregation, DOES affect those trends.

It may or may not create a trend. That’s the problem, the data is so bad that there is no way to tell for sure.

The reality is that it is absurd to claim that we can measure the temperature of the planet to within 0.01C today. It is several orders of magnitude more absurd to claim we can do it with the records from 1850.

Hi Mark!

Agree 0.001 precision is a joke.

The main error, however, does not originate from sampling; the aggregation of stations is the main source of error.

MrZ,