By William Ward, 1/01/2019

The 4,900-word paper can be downloaded here: https://wattsupwiththat.com/wp-content/uploads/2019/01/Violating-Nyquist-Instrumental-Record-20190112-1Full.pdf

The 169-year long instrumental temperature record is built upon 2 measurements taken daily at each monitoring station, specifically the maximum temperature (Tmax) and the minimum temperature (Tmin). These daily readings are then averaged to calculate the daily mean temperature as Tmean = (Tmax+Tmin)/2. Tmax and Tmin measurements are also used to calculate monthly and yearly mean temperatures. These mean temperatures are then used to determine warming or cooling trends. This “historical method” of using daily measured Tmax and Tmin values for mean and trend calculations is still used today. However, air temperature is a signal and measurement of signals must comply with the mathematical laws of signal processing. The Nyquist-Shannon Sampling Theorem tells us that we must sample a signal at a rate that is at least 2x the highest frequency component of the signal. This is called the Nyquist Rate. Sampling at a rate less than this introduces aliasing error into our measurement. The slower our sample rate is compared to Nyquist, the greater the error will be in our mean temperature and trend calculations. The Nyquist Sampling Theorem is essential science to every field of technology in use today. Digital audio, digital video, industrial process control, medical instrumentation, flight control systems, digital communications, etc., all rely on the essential math and physics of Nyquist.

NOAA, in their USCRN (US Climate Reference Network) has determined that it is necessary to sample at 4,320-samples/day to practically implement Nyquist. 4,320-samples/day equates to 1-sample every 20 seconds. This is the practical Nyquist sample rate. NOAA averages these 20-second samples to 1-sample every 5 minutes or 288-samples/day. NOAA only publishes the 288-sample/day data (not the 4,320-samples/day data), so to align with NOAA the rate will be referred to as “288-samples/day” (or “5-minute samples”). (Unfortunately, NOAA creates naming confusion with their process of averaging down to a slower rate. It should be understood that the actual rate is 4,320-samples/day.) This rate can only be achieved by automated sampling with electronic instruments. Most of the instrumental record consists of readings from mercury max/min thermometers, taken long before automation was an option. Today, despite the availability of automation, the instrumental record still uses Tmax and Tmin (effectively 2-samples/day) instead of Nyquist-compliant sampling. The reason for this is to maintain compatibility with the older historical record. However, with only 2-samples/day the instrumental record is highly aliased. It will be shown in this paper that the historical method introduces significant error to mean temperatures and long-term temperature trends.
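The rate arithmetic above can be checked with a few lines of Python. This is only a sketch of the unit conversion; the function name `samples_per_day` is made up for illustration and is not part of any NOAA tooling.

```python
SECONDS_PER_DAY = 24 * 60 * 60

def samples_per_day(interval_seconds: int) -> int:
    """Number of evenly spaced samples in one day for a given sampling interval."""
    return SECONDS_PER_DAY // interval_seconds

raw_rate = samples_per_day(20)          # one reading every 20 seconds
published_rate = samples_per_day(300)   # one averaged value every 5 minutes
readings_per_average = raw_rate // published_rate  # 20-second readings per 5-minute value

print(raw_rate, published_rate, readings_per_average)  # 4320 288 15
```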

NOAA’s USCRN is a small network that was completed in 2008 and it contributes very little to the overall instrumental record. However, the USCRN data provides us a special opportunity to compare a high-quality version of the historical method to a Nyquist compliant method. The Tmax and Tmin values are obtained by finding the highest and lowest values among the 288 samples for the 24-hour period of interest.

NOAA USCRN Examples to Illustrate the Effect of Violating Nyquist on Mean Temperature

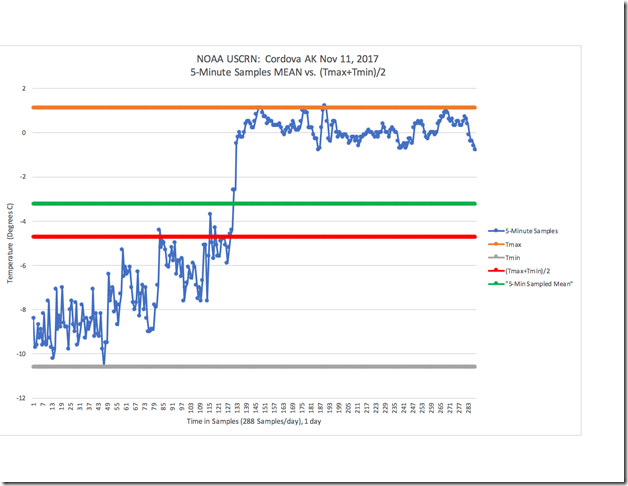

The following example will be used to illustrate how the amount of error in the mean temperature increases as the sample rate decreases. Figure 1 shows the temperature as measured at Cordova AK on Nov 11, 2017, using the NOAA USCRN 5-minute samples.

Figure 1: NOAA USCRN Data for Cordova, AK Nov 11, 2017

The blue line shows the 288 samples of temperature taken that day. It shows 24-hours of temperature data. The green line shows the correct and accurate daily mean temperature that is calculated by summing the value of each sample and then dividing the sum by the total number of samples. Temperature is not heat energy, but it is used as an approximation of heat energy. To that extent, the mean (green line) and the daily-signal (blue line) deliver the exact same amount of heat energy over the 24-hour period of the day. The correct mean is -3.3 °C. Tmax is represented by the orange line and Tmin by the grey line. These are obtained by finding the highest and lowest values among the 288 samples for the 24-hour period. The mean calculated from (Tmax+Tmin)/2 is shown by the red line. (Tmax+Tmin)/2 yields a mean of -4.7 °C, which is a 1.4 °C error compared to the correct mean.
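The two ways of computing a daily mean described above can be sketched in Python. The temperature curve here is synthetic (a flat baseline with a short cold dip), chosen only to show how an asymmetric day pulls (Tmax+Tmin)/2 away from the sample mean; it is not the Cordova data, and the function names are illustrative assumptions.

```python
import numpy as np

def true_mean(samples):
    """Mean of all samples: sum of the samples divided by their count."""
    return float(np.mean(samples))

def midrange_mean(samples):
    """Historical method: (Tmax + Tmin) / 2 taken from the same samples."""
    return (float(np.max(samples)) + float(np.min(samples))) / 2.0

# Synthetic day (assumption, not station data): 288 five-minute samples,
# a -3 °C baseline with a brief dip of about 4 °C centred at 30% of the day.
t = np.arange(288) / 288.0
temps = -3.0 - 4.0 * np.exp(-((t - 0.3) / 0.08) ** 2)

error = midrange_mean(temps) - true_mean(temps)
print(f"true mean {true_mean(temps):.2f}, midrange {midrange_mean(temps):.2f}, error {error:.2f}")
```

For a day that is symmetric about its mean the two values would agree; the sign and size of the difference depend on the shape of the day.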

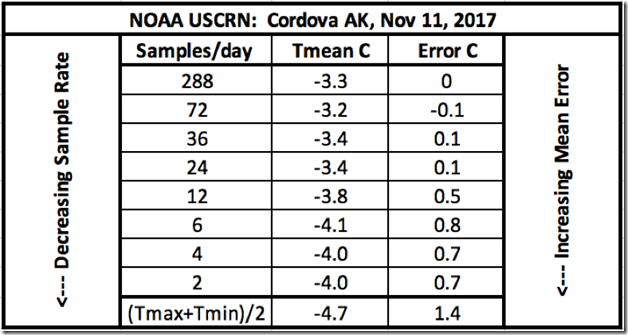

Using the same signal and data from Figure 1, Figure 2 shows the calculated temperature means obtained from progressively decreased sample rates. These decreased sample rates can be obtained by dividing down the 288-sample/day sample rate by a factor of 4, 8, 12, 24, 48, 72 and 144. Therefore, the sample rates will correspond to: 72, 36, 24, 12, 6, 4 and 2-samples/day respectively. By properly discarding the samples using this method of dividing down, the net effect is the same as having sampled at the reduced rate originally. The aliasing that results from the lower sample rates reveals itself in the table in Figure 2.

Figure 2: Table Showing Increasing Mean Error with Decreasing Sample Rate

It is clear from the data in Figure 2 that as the sample rate decreases below Nyquist, the corresponding error introduced from aliasing increases. It is also clear that 2, 4, 6 or 12-samples/day produce a very inaccurate result. 24-samples/day (1-sample/hr) up to 72-samples/day (3-samples/hr) may or may not yield accurate results. It depends upon the spectral content of the signal being sampled. NOAA has decided upon 288-samples/day (4,320-samples/day before averaging) so that will be considered the current benchmark standard. Sampling below a rate of 288-samples/day will be (and should be) considered a violation of Nyquist.
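The dividing-down procedure can be sketched as follows. This assumes plain decimation (keep every N-th sample, discard the rest, no filtering), which is how the text describes it; the input is a synthetic day rather than the Cordova record, and `decimated_mean` and `mean_error_table` are hypothetical helper names.

```python
import numpy as np

# Divide-down factors from the text; factor 1 is the 288-samples/day reference.
FACTORS = [1, 4, 8, 12, 24, 48, 72, 144]

def decimated_mean(samples, factor, offset=0):
    """Mean of every `factor`-th sample, starting at index `offset`."""
    return float(np.mean(samples[offset::factor]))

def mean_error_table(samples):
    """Return (samples_per_day, mean_error) pairs relative to the full-rate mean."""
    reference = decimated_mean(samples, 1)
    return [(len(samples) // f, decimated_mean(samples, f) - reference) for f in FACTORS]

# Synthetic day (assumption): flat baseline with a short cold dip.
t = np.arange(288) / 288.0
temps = -3.0 - 4.0 * np.exp(-((t - 0.3) / 0.08) ** 2)

for rate, err in mean_error_table(temps):
    print(f"{rate:4d} samples/day  mean error {err:+.3f} °C")
```

The `offset` argument selects which phase of the day the retained samples fall on; at the lowest rates the result can change noticeably with that choice.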

It is interesting to point out that what is listed in the table as 2-samples/day yields 0.7 °C error. But (Tmax+Tmin)/2 is also technically 2-samples/day with an error of 1.4°C as shown in the table. How can this be possible? It is possible because (Tmax+Tmin)/2 is a special case of 2-samples per day because these samples are not spaced evenly in time. The maximum and minimum temperatures happen whenever they happen. When we sample properly, we sample according to a “clock” – where the samples happen regularly at exactly the same time of day. The fact that Tmax and Tmin happen at irregular times during the day causes its own kind of sampling error. It is beyond the scope of this paper to fully explain, but this error is related to what is called “clock jitter”. It is a known problem in the field of signal analysis and data acquisition. 2-samples/day, regularly timed, would likely produce better results than finding the maximum and minimum temperatures from any given day. The instrumental temperature record uses the absolute worst method of sampling possible – resulting in maximum error.

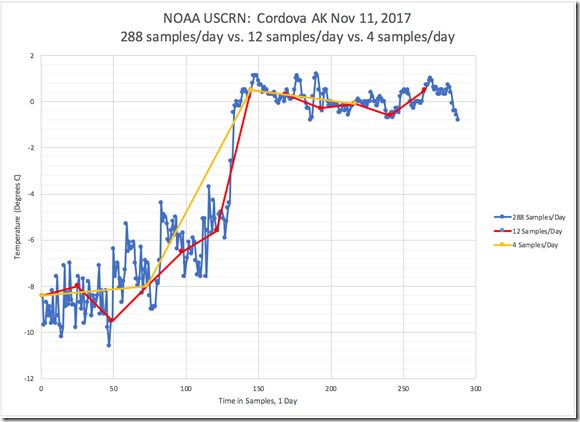

Figure 3 shows the same daily temperature signal as in Figure 1, represented by 288-samples/day (blue line). Also shown is the same daily temperature signal sampled with 12-samples/day (red line) and 4-samples/day (yellow line). From this figure, it is visually obvious that a lot of information from the original signal is lost by using only 12-samples/day, and even more is lost by going to 4-samples/day. This lost information is what causes the resulting mean to be incorrect. This figure graphically illustrates what we see in the corresponding table of Figure 2. Figure 3 explains the sampling error in the time-domain.

Figure 3: NOAA USCRN Data for Cordova, AK Nov 11, 2017: Decreased Detail from 12 and 4-Samples/Day Sample Rate – Time-Domain

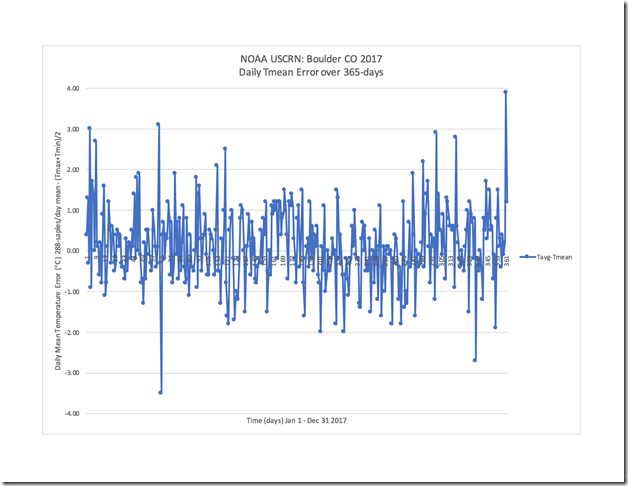

Figure 4 shows the daily mean error between the USCRN 288-samples/day method and the historical method, as measured over 365 days at the Boulder CO station in 2017. Each data point is the error for that particular day in the record. We can see from Figure 4 that (Tmax+Tmin)/2 yields daily errors of up to ± 4 °C. Calculating mean temperature with 2-samples/day rarely yields the correct mean.

Figure 4: NOAA USCRN Data for Boulder CO – Daily Mean Error Over 365 Days (2017)

Let’s look at another example, similar to the one presented in Figure 1, but over a longer period of time. Figure 5 shows (in blue) the 288-samples/day signal from Spokane WA, from Jan 13 – Jan 22, 2008. Tmax (avg) and Tmin (avg) are shown in orange and grey respectively. The (Tmax+Tmin)/2 mean is shown in red (-6.9 °C) and the correct mean calculated from the 5-minute sampled data is shown in green (-6.2 °C). The (Tmax+Tmin)/2 mean has an error of 0.7 °C over the 10-day period.

Figure 5: NOAA USCRN Data for Spokane, WA – Jan 13-22, 2008

The Effect of Violating Nyquist on Temperature Trends

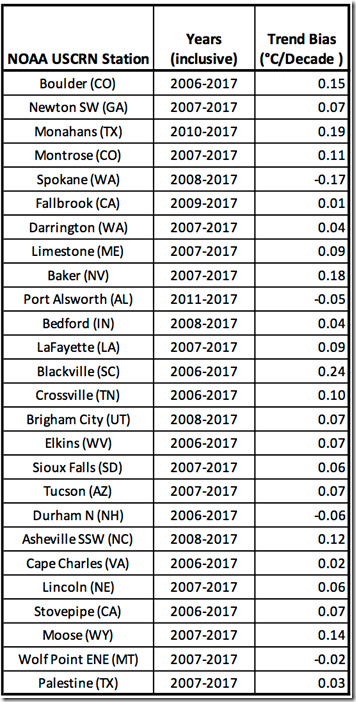

Finally, we need to look at the impact of violating Nyquist on temperature trends. In Figure 6, a comparison is made between the linear temperature trends obtained from the historical and Nyquist-compliant methods using NOAA USCRN data for Blackville SC, from Jan 2006 – Dec 2017. We see the trend derived from the historical method (orange line) starts approximately 0.2 °C warmer and has a 0.24 °C/decade warming bias compared to the Nyquist-compliant method (blue line). Figure 7 shows the trend bias or error (°C/decade) for 26 stations in the USCRN over a 7-12 year period. The 5-minute sample data gives us our reference trend. The trend bias is calculated by subtracting the reference from the (Tmaxavg+Tminavg)/2 derived trend. Almost every station exhibits a warming bias, with a few exhibiting a cooling bias. The largest warming bias is 0.24 °C/decade and the largest cooling bias is -0.17 °C/decade, with an average warming bias across all 26 stations of 0.06 °C/decade. According to Wikipedia, the calculated global average warming trend for the period 1880-2012 is 0.064 ± 0.015 °C per decade. If we look at the more recent period that contains the controversial “Global Warming Pause”, then using data from Wikipedia, we get the following warming trends depending upon which year is selected for the starting point of the “pause”:

1996: 0.14°C/decade

1997: 0.07°C/decade

1998: 0.05°C/decade

While no conclusions can be made by comparing the trends over 7-12 years from 26 stations in the USCRN to the currently accepted long-term or short term global average trends, it can be instructive. It is clear that using the historical method to calculate trends yields a trend error and this error can be of a similar magnitude to the claimed trends. Therefore, it is reasonable to call into question the validity of the trends. There is no way to know for certain, as the bulk of the instrumental record does not have a properly sampled alternate record to compare it to. But it is a mathematical certainty that every mean temperature and derived trend in the record contains significant error if it was calculated with 2-samples/day.
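The trend-bias calculation (historical trend minus reference trend) can be sketched with an ordinary least-squares line fit. The sketch assumes two equally long series of monthly means are already in hand; the series used here are made-up straight lines, not USCRN data, and the function names are illustrative.

```python
import numpy as np

MONTHS_PER_DECADE = 120

def trend_per_decade(monthly_means):
    """Least-squares linear trend of a monthly series, in units per decade."""
    months = np.arange(len(monthly_means))
    slope_per_month, _intercept = np.polyfit(months, monthly_means, 1)
    return float(slope_per_month * MONTHS_PER_DECADE)

def trend_bias(historical_monthly, reference_monthly):
    """Historical-method trend minus the reference (all-samples) trend."""
    return trend_per_decade(historical_monthly) - trend_per_decade(reference_monthly)

# Made-up example series covering 12 years (144 months).
months = np.arange(144)
reference = 15.0 + 0.001 * months           # 0.12 °C/decade
historical = reference + 0.2 + 0.002 * months  # offset plus an extra 0.24 °C/decade

print(f"bias: {trend_bias(historical, reference):+.2f} °C/decade")
```

A positive result corresponds to a warming bias in the sense used above, a negative one to a cooling bias.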

Figure 6: NOAA USCRN Data for Blackville, SC – Jan 2006-Dec 2017 – Monthly Mean Trendlines

Figure 7: Trend Bias (°C/Decade) for 26 Stations in USCRN

Conclusions

1. Air temperature is a signal and therefore it must be measured by sampling according to the mathematical laws governing signal processing. Sampling must be performed according to the Nyquist-Shannon Sampling Theorem.

2. The Nyquist-Shannon Sampling Theorem has been known for over 80 years and is essential science to every field of technology that involves signal processing. Violating Nyquist guarantees samples will be corrupted with aliasing error and the samples will not represent the signal being sampled. Aliasing cannot be corrected post-sampling.

3. The Nyquist-Shannon Sampling Theorem requires the sample rate to be greater than 2x the highest frequency component of the signal. Using automated electronic equipment and computers, NOAA USCRN samples at a rate of 4,320-samples/day (averaged to 288-samples/day) to practically apply Nyquist and avoid aliasing error.

4. The instrumental temperature record relies on the historical method of obtaining daily Tmax and Tmin values, essentially 2-samples/day. Therefore, the instrumental record violates the Nyquist-Shannon Sampling Theorem.

5. NOAA’s USCRN is a high-quality data acquisition network, capable of properly sampling a temperature signal. The USCRN is a small network that was completed in 2008 and it contributes very little to the overall instrumental record; however, the USCRN data provides us a special opportunity to compare analysis methods. A comparison can be made between temperature means and trends generated with Tmax and Tmin versus a properly sampled signal compliant with Nyquist.

6. Using a limited number of examples from the USCRN, it has been shown that using Tmax and Tmin as the source of data can yield the following error compared to a signal sampled according to Nyquist:

a. Mean error that varies station-to-station and day-to-day within a station.

b. Mean error that varies over time with a mathematical sign that may change (positive/negative).

c. Daily mean error that varies up to +/-4°C.

d. Long term trend error with a warming bias up to 0.24°C/decade and a cooling bias of up to 0.17°C/decade.

7. The full instrumental record does not have a properly sampled alternate record to use for comparison. More work is needed to determine if a theoretical upper limit can be calculated for mean and trend error resulting from use of the historical method.

8. The extent of the error observed, with its associated uncertain magnitude and sign, calls into question the scientific value of the instrumental record and the practice of using Tmax and Tmin to calculate mean values and long-term trends.

Reference section:

This USCRN data can be found at the following site: https://www.ncdc.noaa.gov/crn/qcdatasets.html

NOAA USCRN data for Figure 1 is obtained here:

NOAA USCRN data for Figure 4 is obtained here:

https://www1.ncdc.noaa.gov/pub/data/uscrn/products/daily01/2017/CRND0103-2017-AK_Cordova_14_ESE.txt

NOAA USCRN data for Figure 5 is obtained here:

NOAA USCRN data for Figure 6 is obtained here:

https://www1.ncdc.noaa.gov/pub/data/uscrn/products/monthly01/CRNM0102-SC_Blackville_3_W.txt

“NOAA, in their USCRN (US Climate Reference Network) has determined that it is necessary to sample at 4,320-samples/day to practically implement Nyquist. 4,320-samples/day equates to 1-sample every 20 seconds. This is the practical Nyquist sample rate. ”

How about a far simpler alternative: put a digital thermometer inside a 5 gallon bucket of water, housed in a Stevenson screen, then read the temperature just once a day at 2pm.

From where I am standing that bucket of water would have been frozen solid a couple of weeks ago. ;o)

Greg F: LOL! Maybe antifreeze needs to be added.

vukcevic: Interesting idea: using a specified thermal mass to integrate the heat energy. I’m not sure if this would be good or not, but it might be worth exploring. Of course, you would still need to determine what the maximum frequency content was for that situation and sample at the rate that complied with Nyquist. You still have a signal, but likely an even slower one. But what NOAA did with the USCRN is probably the way to go. We would need much better global coverage with stations of this quality and then we would need to process all of the 288 samples/day for each location. Not mentioned in the paper, but one of the next obvious failings of the way climate science is done, is the concept of “global average temperature”. What is the science – what is the thermodynamics that says averaging temperature signals from multiple locations has any meaning at all? In engineering, we feed signals into characteristic equations. What do we feed the “global average temperature” into? First, we don’t even end up with a signal. We end up with a number. This is a science fail. But what it is fed into is the Climate Alarmism machine and that’s about all.

Quoting from article: William Ward, 1/01/2019

The author, William Ward, is assuming things that are not based in fact or reality.

First of all, there is no per se “169-year long instrumental temperature record” that defines or portrays global, continental, national or regional near-surface air temperatures. But now there is, or is claimed to be, 1 or 2 or 3 or so, LOCAL +/- 169-year long instrumental temperature records whose contents covering their 1st 100 years have to be adjudged as highly questionable and of little importance other than use as “reference data/info”.

Secondly, the same as above is true for the per se, United States’ 148-year long instrumental temperature record.

And thirdly, in the early years of recording the US’s “temperature record” there were only 19 stations reporting temperatures, ……. all of them east of the Mississippi River …… and those temperatures were recorded twice per day, at specified hours, ……. which had absolutely nothing to do with the daily Tmax or Tmin temperatures.

Now just about everything you wanted to know about the US Temperature Record and/or the NWS, …… and maybe a few things you don’t want to know, …… can be found at/on the following web sites, ….. just as soon as the current “government shutdown” is resolved, 😊 😊 ….. to wit:

The beginning of the National Weather Service

http://www.nws.noaa.gov/pa/history/index.php

National Weather Service Timeline – October 1, 1890:

http://www.nws.noaa.gov/pa/history/timeline.php

History of the NWS – 1870 to present

http://www.nws.noaa.gov/pa/history/evolution.php

Thank you, Mr. Cogar, for commenting on the first sentence:

“The 169-year long instrumental temperature record …”

There is no 169-year real-time record of global temperatures!

The Southern Hemisphere had insufficient coverage until after World War II; an argument could be made that pre-World War II surface temperatures represent only the Northern Hemisphere!

How can we take this author seriously when his FIRST sentence is wrong!

In addition, the author seems obsessed with the sampling rate of surface thermometers.

There are MANY major problems with surface temperature “data” BEFORE we get to the sampling rate!

How about a MAJORITY of the data being infilled (wild guessed) by government bureaucrats with science degrees, for grids with no data, or incomplete data?

How about the CHARACTER of the people compiling the data: the same people who had predicted a lot of global warming, have a huge conflict of interest because they also get to compile the “actuals”, and they certainly want to show their predictions were right, so they make “adjustments” that push the actuals closer to their computer game predictions?

How about the fact that weather satellite data, with far less infilling, has been available since 1979, but are ignored simply because they show less warming than the surface numbers?

How about the fact that weather balloon data confirm that satellite data are right, but the surface data are “outliers”, yet the weather balloon data are also ignored?

The biggest puzzle of all is why one number, the global average temperature, is the right number to represent the “climate” of our planet. After all, not one person actually lives in the “average temperature”!

My climate science blog:

http://www.elOnionBloggle.Blogspot.com

Samuel and Richard,

Thanks for commenting here. I actually agree with some of what you said. However, I think maybe you judged my paper too quickly based upon your critiques about the instrumental record comments. My point here is to show problems with how climate science is conducted with the use of the data in the record. With a limited number of words for this post, I didn’t want to waste them on details that were not relevant to the core message. Please feel free to add details about the record history – that is helpful. And I’m not negating all of the other problems with temperature measurements you mention. In a post to someone else I listed out 12 things wrong with the “record”.

I have cataloged 12 significant “scientific” errors with the instrumental record:

1) Instruments not calibrated

2) Violating Nyquist

3) Reading error (parallax, meniscus, etc) – how many degrees wrong is each reading?

4) Quantization error – what do we call a reading that is between 2 digits?

5) Inflated precision – the addition of significant figures that are not in the original measurements.

6) Data infill – making up data or interpolating readings to get non-reported data.

7) UHI – ever encroaching thermal mass – giving a warming bias to nighttime temps.

8) Thermal corruption – radio power transmitters located in the Stevenson Screen under the thermistor or a station at the end of a runway blasted with jet exhaust.

9) Siting – general siting problems – may be combined with 7 and 8

10) Rural station dropout – loss of well situated stations.

11) Instrument changes – changing instruments that break with units that are not calibrated the same or instruments that are electronic where previous instruments were not. Response times likely increase adding greater likelihood to capture transients.

12) Data manipulation/alteration – special magic algorithms to fix decades old data.

This paper just focuses on 1 of them. Many others have already written about the other 11 here.

William Ward – January 16, 2019 at 1:31 pm

It is MLO that, the per se, US Historical Temperature Record (circa 1870-2018), …… that is controlled/maintained by the NWS and/or NOAA, …… is so corrupted that it is utterly useless for conducting any research or investigations of an actual, factual scientific nature.

William Ward, I would like to point out the fact that your …… “list of 12 things wrong with the record” ……. are only a minor part of “the problem”.

In actuality, there is NO per se, actual, factual US Historical Temperature Record prior to 1970’s, or possibly prior to 1980’s. And the reason I say that is, all temperature data recorded at specified locations by government employees and/or volunteers, from the early days in 1870/80’s, up thru the 1960’s/1970’s, was transmitted daily to the NWS where its ONLY use was for generating 3 to 7 day weather forecasts, ….. originally only for areas east of the Mississippi River.

“DUH”, the first 70+ years of the aforesaid “daily temperatures” were only applicable for 7 days max, and then of no value whatsoever, other than garbage reference data.

And that NWS archived near-surface temperature “data base” didn’t become the US Historical Temperature Record until the proponents of AGW/CAGW gave it its current name after adopting/hijacking “it” to prove their “junk science”.

/sarc is in short supply, to be used in moderation.

[Oh yes, we use /sarc all time in our secret moderator forum. Oh wait, you meant something else. Nevermind. -mod]

Because the constant lag time of your integrating scheme would produce a variable error, as the temperature derivative is never constant.

vukcevic – January 14, 2019 at 2:12 pm

“HA”, apparently no one liked that “idea” when I proposed a similar one 3 or 4 years ago ….. even though my proposal suggested using a one (1) gallon enclosed container (aluminum), ….. a -30F water-antifreeze mixture ……. and two (2) temperature sensors, ……. with said sensors connected to a radio transmitter located underneath or on top of the Stevenson screen ….. and which is programmable to transmit temperature data at whatever frequency desired.

The liquid in the above noted container …… NEGATES any further “averaging” of daily temperatures ….. because it is a 100% accurate real-time “average’er”.

Thank you Vuk – nailed it

Is it really beyond possibility that the observed temp changes are due to a decrease in the water content of the land/ground/Earth’s land surface?

Might explain an ever so gentle sea-level rise in places?

If you wanna measure Earth Surface Temperature – measure it.

Put a little datalogger running at 4 samples per day max, pop it in a plastic bag with some silica gel, put that inside an old jam-jar with a good fitting/sealing lid..

THEN: bury it under 18″ of dirt.

Return once per month to unload it and check its battery.

If you fancy an average of a wide area of ground, locate the underground stop-cock tap of your home’s water supply pipe and put the data-logger in there.

Saves digging a hole in your garden too.

If you wanna be *really* scientific, run a twin data-logger, in a classic style Stevenson Screen directly above or nearby.

Maybe then, get Really Hairy and put a *third* logger inside a nearby forest or densely wooded area.

A city slicking pent-house dwelling friend might be a handy thing to have for even greater excitement.

😀

Then you might realise that temp is maybe not the driver or the cause of Climate – temp is the symptom or the effect. As Vuk intimates by attempting to measure *energy* rather than temperature.

If anyone is being violated by Climate Science, it is in fact Boltzmann, Wien and Planck.

Nyquist is ‘a squirrel’. A distraction.

Peta => Nyquist is another way of demonstrating that the long-term historical surface temperature record is not fit for purpose when applied to create a global anomaly in the range of less than 1 or 2 degrees.

You are quite right that climate models particularly violate a lot of “more important” physical laws by using “false” (simplified) versions of non-computable non-linear equations.

If one considers the temperature record as a “signal” (which is one valid way of looking at it) then Nyquist applies if one expects an accurate and precise result. Since the annual GAST anomaly changes by only a fraction of a degree C, the errors and uncertainty resulting from the (necessary for historical records) violation of Nyquist are significant by comparison.

Maximum error is what the Warmistas want as it best fits the CAGW theory.

What if, instead of taking 2 samples per day we took 730 samples per year?

Randomly of course!

730 random samples would actually give a more reliable annual average.

Steve

Make it 732, then you would see some real aliasing.

Steve O – LOL!

Nonsense about Nyquist

“The Nyquist-Shannon Sampling Theorem tells us that we must sample a signal at a rate that is at least 2x the highest frequency component of the signal. This is called the Nyquist Rate. Sampling at a rate less than this introduces aliasing error into our measurement.”

The Theorem tells you that you can’t resolve frequencies beyond that limit. But we aren’t trying to resolve high frequencies. We are trying to get a monthly average. It isn’t a communications channel.

As for aliasing, there are no frequencies to alias. The sampling rate is locked to the diurnal frequency.

As for the examples, Fig 1 is just 1 day. 1 period. You can’t demonstrate Nyquist, aliasing or whatever with one period.

Fig 2 shows the effect of sampling at different resolution. And the differences will be due to the phase (unspecified) at which sampling was done. But the min/max is not part of that sequence. It occurs at times of day depending on the signal itself.

There is a well-known issue with min/max; it depends on when you end the 24 hour period. This is the origin of the TOBS adjustment in USHCN. I did a study here comparing different choices with hourly sampling for Boulder Colorado. There are differences, but nothing to do with Nyquist.

Fig 3 again is just one day. Again there is no way you can talk about Nyquist or aliasing for a single period.

If you miss fast moving changes in temperature then you can’t claim to have an accurate estimate of the daily average.

If you don’t have an accurate daily average then there’s no way to get an accurate monthly average.

If your monthly averages aren’t accurate, then there’s no way to claim that you have accurately plotted changes over time.

Let’s say your instruments could take continual measurements such that you capture every moment for a year and you compared that to my measurements, where I record the high and the low, each rounded to the nearest whole number, and divided by two.

Your computation of the average temperature would be a precise measurement, but how close do you suppose my average for the year would be to your average for the year?

Steve O,

You can do this with the USCRN data for a station. You can compare both methods as follows:

For the “historical method” [(Tmax+Tmin)/2]: Take the NOAA-published monthly averages for the year, add them up, and divide by 12. The data for each month is arrived at (by NOAA) by averaging Tmax for the month and averaging Tmin for the month. Then these 2 numbers are averaged to give you the monthly average/mean.

For Nyquist method: Add up every sample for the station over the year and divide by the number of samples. There are 105,120 samples for the year (288*365).

Try the Fallbrook CA station for example. You will find that each year from 2009 through 2017 the yearly mean error is between 1.25 °C and 1.5 °C.
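For anyone who wants to try this, the two procedures can be sketched in Python. The sketch assumes the year's 5-minute samples are already loaded into an array of shape (365, 288), one row per day; the data below is synthetic, not the Fallbrook record, and the function names are only illustrative.

```python
import numpy as np

DAYS_IN_MONTH = [31, 28, 31, 30, 31, 30, 31, 31, 30, 31, 30, 31]  # non-leap year

def yearly_mean_all_samples(samples):
    """Nyquist method: add up every sample and divide by the number of samples."""
    return float(samples.mean())

def yearly_mean_historical(samples):
    """Historical method: monthly (avg Tmax + avg Tmin) / 2, then average the 12 months."""
    daily_max = samples.max(axis=1)
    daily_min = samples.min(axis=1)
    monthly = []
    start = 0
    for n_days in DAYS_IN_MONTH:
        stop = start + n_days
        monthly.append((daily_max[start:stop].mean() + daily_min[start:stop].mean()) / 2.0)
        start = stop
    return float(np.mean(monthly))

# Synthetic year (assumption): a seasonal cycle plus the same cold dip every day.
days = np.arange(365)
t = np.arange(288) / 288.0
seasonal = 10.0 - 12.0 * np.cos(2 * np.pi * days / 365.0)
day_shape = -4.0 * np.exp(-((t - 0.3) / 0.08) ** 2)
samples = seasonal[:, None] + day_shape[None, :]

error = yearly_mean_historical(samples) - yearly_mean_all_samples(samples)
print(f"{samples.size} samples, yearly mean error {error:+.2f} °C")
```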

Some stations have more absolute mean error than others and some stations show more error in trends and less in means. Some show large error in both and some show little error in both.

The reason for the variance is explained in the frequency analysis. The daily temperature signal as seen by plotting the 288-samples/day varies quite a bit from day-to-day and station-to-station. The shape of the signal defines the frequency content. At 2 samples per day, you always have aliasing unless you have a purely sinusoidal signal. We never see this. The amount of mean error and trend error is defined by the amount of the aliasing, where it lands in frequency (daily signal or longer-term trend), and the phase of the aliasing compared to the phase of the signal at those critical frequencies.
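A toy example of the point about sinusoids, assuming two evenly spaced samples per day (not Tmax/Tmin) and a made-up signal: a pure once-per-day sinusoid averages out exactly, while a twice-per-day harmonic aliases onto the mean. The function names and amplitudes are illustrative only.

```python
import numpy as np

def daily_signal(t_days, mean=10.0, a1=5.0, a2=0.0, phase2=0.0):
    """Mean + 1 cycle/day sinusoid + optional 2 cycles/day harmonic (t in days)."""
    return (mean
            + a1 * np.cos(2 * np.pi * t_days)
            + a2 * np.cos(4 * np.pi * t_days + phase2))

def two_sample_mean(first_sample_time, **signal_kwargs):
    """Average of two samples taken exactly half a day apart."""
    times = np.array([first_sample_time, first_sample_time + 0.5])
    return float(np.mean(daily_signal(times, **signal_kwargs)))

# The true daily mean is 10.0 in both cases.
pure = two_sample_mean(0.1)                   # pure sinusoid: the two samples cancel around the mean
with_harmonic = two_sample_mean(0.1, a2=2.0)  # harmonic adds a2*cos(4*pi*t0 + phase2) to the result

print(f"pure sinusoid: {pure:.3f}, with 2nd harmonic: {with_harmonic:.3f}")
```

In this toy case the size and sign of the error depend on the harmonic's amplitude and on its phase relative to the sampling times, which is the dependence on phase described above.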

I encourage you and all readers to read the full paper if you want to learn more about the theory and mechanics of how aliasing manifests. I give simple graphical examples that explain this.

I’ll definitely need to read the full paper. Thanks.

We see above (in the post, with real data) the error can be 1.4 deg C; when we’re talking about a 1.5 deg C change being a calamity, that much error is, itself, a calamity.

Amen Shanghai Dan!

A published report of last week’s (month’s/year’s) average near-surface temperatures ….. doesn’t have as much “value” as does a copy of last week’s newspaper. 😊 😊

Yep and my fancy dancy RTD probe reacts a lot quicker than that good old mercury thermometer did, on the other hand mercury thermometers don’t drift over time RTDs do and all in the same direction to boot.

See:

https://www.nist.gov/sites/default/files/documents/calibrations/sp819.pdf

Where NIST describes why a liquid in glass thermometer needs re-calibration….

All measurement sensors require calibration.

Hey Steve,

“Your computation of the average temperature would be a precise measurement, but how close do you suppose my average for the year would be to your average for the year?”

Do you mean comparing the arithmetic mean for each year, calculated directly from the 5-min sampled data, with the ‘standard’ procedure of averaging, for each year, the monthly averages that in turn come from averaging daily midrange values (Tmin+Tmax)/2? I did a quick comparison for Boulder, Colorado 2006-2017: as expected, errors propagate upward from the daily errors; for instance, for 2006 the error is 0.3 °C; for 2010 the error is 0.35 °C; for 2012 the error is 0.25 °C. Full list below:

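| Year | Error (°C) |
|------|------------|
| 2006 | 0.31 |
| 2007 | 0.26 |
| 2008 | 0.26 |
| 2009 | 0.23 |
| 2010 | 0.35 |
| 2011 | 0.17 |
| 2012 | 0.25 |
| 2013 | 0.14 |
| 2014 | 0.07 |
| 2015 | 0.19 |
| 2016 | 0.15 |
| 2017 | 0.16 |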

Global warming is about energy, not temperature per se, which is acknowledged above as a proxy for energy. Without considering the humidity and air pressure as well as its temperature at the time the instruments are read, the energy has not been ascertained, merely a proxy for it.

Is this really so difficult to comprehend?

The claim is simple enough: human activities are heating the atmosphere. The heat energy in the atmosphere is not well represented by thousands of temperature measurements. If the humidity were zero or fixed, it could be, but it is not.

The whole world could “heat up” and the temperature could go down if there is a positive water vapour feedback (currently not observed).

“Climate scientists” are wandering in the realm of thermodynamicists as effectively as they wandered about in the realm of statisticians. They must hand out hats with those degrees because they are always talking through them.

Nyquist is fundamental to signal analysis, and temperature is a signal, as is humidity and air pressure. With those three the enthalpy of an air parcel can be determined accurately. If there are trends in the results at the surface, we might project them, whether up or down.
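To illustrate how temperature, humidity and pressure combine into an energy figure, here is a rough sketch using common psychrometric approximations (the Magnus formula for saturation vapour pressure and textbook heat-capacity constants). The constants and the function name are assumptions chosen for illustration, not anything specified in this thread.

```python
import math

def moist_air_enthalpy(temp_c, rel_humidity, pressure_hpa):
    """Approximate specific enthalpy of moist air, in kJ per kg of dry air."""
    # Magnus approximation: saturation vapour pressure over water, in hPa
    e_sat = 6.112 * math.exp(17.62 * temp_c / (243.12 + temp_c))
    e = rel_humidity * e_sat                 # actual vapour pressure, hPa
    w = 0.622 * e / (pressure_hpa - e)       # mixing ratio, kg water per kg dry air
    # Sensible heat of dry air plus latent and sensible heat of the vapour
    return 1.006 * temp_c + w * (2501.0 + 1.86 * temp_c)

dry_hot = moist_air_enthalpy(30.0, 0.0, 1013.25)     # 30 C, bone dry
humid_mild = moist_air_enthalpy(25.0, 0.8, 1013.25)  # 25 C, 80% relative humidity
print(f"dry 30 C: {dry_hot:.1f} kJ/kg, humid 25 C: {humid_mild:.1f} kJ/kg")
```

Under these assumptions the cooler, humid parcel carries roughly twice the enthalpy of the warmer, dry one, which is the sense in which temperature alone is only a proxy.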

The rest is, as that say, noise. Turn up the squelch and it disappears. Wouldn’t that be nice?

Crispin you nailed it!!! Everything you said hits the bullseye!

Crispin

What you say is true, except it is the temperature that extinguishes species and magnifies wildfires. Your argument may be inverted to “energy interesting, but temperature kills.”

There are no species being “extinguished” and there has been no increase in wildfires.

Fires in California have been tragic because Moonbeam and his cronies do not know how to manage forests.

You make a statement of fact that may or may not be true. Temperature for cold blooded animals and insects may be important but I suspect that it is different for different species. Same for warm blooded animals. For them I suspect humidity plays a role also, otherwise why do weather folks always dwell on “feels like” temperatures.

I think you are relying on too many studies that quote “climate change” as the reason for species population changes without doing the hard work to actually determine how temperatures changes actually affect a species. What studies have you read that have data that shows what higher temps and lower temps do to a species so that one can determine the “best range” of temperatures. Basically the ASSUMPTION is that higher temps are bad. You never see where lower temps are either good or bad.

How does a higher average annual global temperature magnify a local wildfire?

Gordon,

Also, apparently, because PG&E and its regulators do not understand how to manage and maintain electrical infrastructure.

“The whole world could “heat up” and the temperature could go down if there is a positive water vapour feedback…”

Crispin, as usual you are absolutely correct. But I really wish you hadn’t given the climate alarmists another angle from which to argue. Now I expect to see one or more papers published in Nature Climate Change using this excuse to explain the pause or some other nonsense.

The claim is NOT that human activity is heating anything other than in urban environments, which is quite a different thing from heating the surface fluids generally. The claim is that CO2 is causing the heating by retaining solar energy, not human activity energy. Possibly the CO2 comes from human activity.

Love it Crispin….especially the “wandering and hats” paragraph…..

It sort of works like Nick wants if the heat up and cool down behaviour is identical so it averages out (the first comment by vukcevic with the bucket is the same sort of idea).

Whether the behaviour up and down is symmetrical, I have no idea; has anyone measured it? What is amusing is they do put the sites in a white box, which inverts the argument … ask Nick why a white box 🙂

The point made however is correct they are trying to turn a temperature reading to a proxy for energy in the earth system balance which Nick does not address in his answer.

So I guess it comes down to are you trying to measure temperature for human use or trying to proxy energy .. make your choice.

As for Blackville SC, where the discrepancy between the daily average of 5-minute sampling and (Tmax+Tmin)/2 is greatest: the .24 degree/decade discrepancy is between 1.11 and 1.35 degrees C per decade. This is almost a 22% overstatement of warming at the station whose warming rate is most overstated (in degree/decade terms) by using (Tmax+Tmin)/2.

Notably, all other stations mentioned in the Figure 7 list have lesser error upward in degree/decade terms – and some of these have negative errors in degree/decade trends from using (Tmax+Tmin)/2 instead of average of more than 2 samples per day. The average of the ones listed in Figure 7 is .066 degree/decade, and I suspect these are a minority of the stations in the US Climate Reference Network.

And, isn’t USCRN supposed to be a set of pristine weather stations to be used in opposition to USHCN and US stations in GHCN?

Hi Donald,

If I understand your comment correctly, let me clarify. My apology in advance if I’m misunderstanding you.

What I show in Fig 7 is not critical of USCRN. What it shows, using USCRN data, is how 2 competing methods work to deliver accurate trends. The “Nyquist-compliant” method uses all of the 5-minute samples over the period, which may be 12 years in some cases. The linear trend is calculated from this data. I’m considering it the reference standard. If the “historical method” of using max and min values worked correctly, without error, then when the trend generated from this method is subtracted from the reference there should be no error – the result should be zero. It is not zero. I refer to error as “bias” in that figure. Each station shows a bias. A positive sign means the method gives a trend warmer than the reference. A negative sign means the method gives a trend that is cooler than the reference. But to be clear, it is the historical method that is being exposed and criticized, not USCRN or its data. Maybe I should say I’m criticizing how that data is used. We have the potential to get good results from USCRN but the method used gives results worse than the reference.
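The bias calculation described above can be sketched as follows. This assumes an ordinary least-squares slope and takes bias as the historical-method trend minus the reference trend, so that a positive value means the historical method reads warmer; the numbers are invented and are not USCRN data.

```python
def linear_trend(values):
    """Ordinary least-squares slope of values against their index."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den

def trend_bias(reference_means, historical_means):
    """Historical-method trend minus reference trend (positive = warmer)."""
    return linear_trend(historical_means) - linear_trend(reference_means)

# Assumption: made-up yearly means, in C, purely for illustration.
reference = [10.0, 10.1, 10.2, 10.3]    # means from all 5-minute samples
historical = [10.5, 10.7, 10.9, 11.1]   # means from (Tmax+Tmin)/2
bias = trend_bias(reference, historical)
print(f"bias: {bias:.2f} C per year")
```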

“I suspect these are a minority of the stations in the US Climate Reference Network.”

I calculated below all stations in ConUS with 10 years of data. The Min/max showed less warming than the integrated. But I think it was just chance. The variability of these 10 year trends is large.

Interesting Nick.

Anyone who believes you can get an accurate estimate of the daily average from just the high and the low, has never been outside.

“has never been outside”

You’d have to stay outside 24 hrs to get an accurate average. But the thing about min/max is that it is a measure. It might not be the measure one would ideally choose, but we have centuries of data in those terms. We have about 30 years of AWS data. So the question is not whether it is exactly the same as hourly integration, but whether it is an adequate measure for our needs.

Again, I looked at that over a period of three years for Boulder Colorado.

True but meaningless. We may have centuries of Tmin\Tmax temperature data, but we don’t have centuries of Global Tmin\Tmax temperature data. To suggest so is simply absurd. And even if we did, the Earth is 4.5 billion years old — a few centuries is nothing, and to make inferences from such a minuscule data record is unscientific and deliberately misleading.

Once again, Nick admits that the data they have isn’t fit for the purpose they are using it for, but they are going to go ahead and use it because it’s all they’ve got. As the article demonstrated, daily high and low is not adequate for the purpose of trying to tease a trend of only a few hundredths of a degree out of the record.

What a sad post to hang an entire ideology on.

The maximum and minimum temperature of the day is of interest, and are real values; but averaging those two values gives you a nonsense value that tells you nothing.

Greg

I agree that the high and low are of interest and probably of more value than the mid-range value calculated from them. The information content is actually reduced by averaging. We don’t know whether an increasing mid-range value is the result of increasing highs, lows, or both. If both, we don’t know what proportion each contributes, which may have a bearing on the mechanism or process causing the change.

But, if the public were made aware that the primary result of “Global Warming” was milder Winters, not unbearable Summer heat waves, they might not get very excited.

It would be easy enough to cover, Nick: just set up a couple of high-precision sites at existing temperature sites, integrate the energy into and out of the sites at millisecond resolution, and check the temperature-to-energy proxy. You could also do the same with sites near oceans etc. to get proper values for the land/ocean interface when you try and blend stuff.

Nick,

You allude to the problem. We have centuries of data and “we” feel compelled to use it, knowing it is bad. The question is often asked, “what are we supposed to do, nothing?”. As an engineer I can answer that question. Yes – do nothing with it. It is not suitable for any honest scientific work. No engineering company would ever commission a design, especially if public safety or corporate profit were at risk, with data as flawed as the instrumental record. If a bridge were to be designed with data this bad would you put your family under it while it is load tested? If an airplane was designed and built with data this flawed would you put your family on the first flight? We have known for a long time about a long list of problems with the record. Violating Nyquist is a pretty “hard” issue – not really something anyone can honestly dismiss. We have the theory. USCRN allows us to demonstrate the extent of the problem. The full range of error cannot be fully known but we are seeing magnitudes of error that stomp all over the claimed level of changes that are supposed to cause alarm.

Are there any engineers reading this who want to say they disagree? In other words, you think engineering projects are routinely undertaken with data as bad as the instrumental record.

As an engineer I can say with confidence that we use the data we have, not the data we’d wish for in a perfect world. We are constantly faced with the constraints of inadequate data for purposes of design.

We deal with imperfect data through several methods:

1) Statistical analysis that allows us to determine variability, and confidence limits of limited data sets

2) We use analysis of systems and components and materials to determine modeled performance under various conditions, such as “finite element analysis”, that are subject to digital modeling, including extensive analysis of identified failure modes

3) We use “safety factors”, or what some may call “fudge factors” to account for residual uncertainty that otherwise is not subject to direct analysis

4) We attempt to identify, quantify, and account for measurement errors

5) We use extensive peer review design administration processes to avoid design errors or blunders in assessing all of the above.

The bottom line is that engineering is primarily a matter of risk analysis that is built upon the notion of imperfect and insufficient data and how to deal with that while developing economical, efficient designs.

In the real world you never have sufficient or good enough data. Engineers are trained to design stuff anyway.

William, it is Tmin/Tmax data. It is not “bad data”. And it is NOT “2 samples a day”; it is a true Tmin and Tmax for each 24-hour period, recorded maybe an hour from each other; it has nothing to do with Nyquist (regular sampling). And if you demand nonexistent data, maybe you are not a good engineer. An engineer works with the best available data and methods. For example, a bridge designer may be highly interested in the minimum and maximum temperatures.

Duane,

Although you seem to counter my statement, I think you actually agree with it. I asked if any engineering project uses data as bad as the instrumental record. I didn’t say or suggest the data is perfect.

I didn’t hear you say you use uncalibrated instruments and get mail clerks to run them without training. You didn’t say you ignore quantization error and inflate precision.

You said you do examine the confidence limits of your data and add in guard bands and safety factors. You conduct design reviews and assess your risks. You model, emulate, simulate. Not stated, but I assume you build prototypes and do functional testing and reliability testing, etc.

I’m not sure why you took a counter position as I’m not saying anything different. Are you saying you think the instrumental record and the way climate science uses the record matches your processes?

If climate science used the “bad” data they have and bound the error and stopped making up data and changing it continually decade after decade then they could approach what engineers do. But the data is still aliased. Would you design with data aliased as bad as the instrumental record?

Curious George,

A very accurately measured Tmax and Tmin are bad for calculating mean temperature. I’m sorry if you object to the word “bad” but you need to show how it is “good” and what it is “good” for. Since mean temperatures and temperatures trends are what drives climate science you need to show they are “good” for this purpose.

“If a bridge were to be designed with data this bad would you put your family under it while it is load tested? ”

Apparently some people would, with the exception that they would test it with random strangers under it.

https://www.nbcmiami.com/news/local/Six-Updates-on-Bridge-Collapse-Investigation-492009331.html

William

“No engineering company would ever commission a design, especially if public safety or corporate profit were at risk, with data as flawed as the instrumental record.”

The engineering project, in this case, is to put a whole lot of CO2 in the air, and see what happens. We work that out with the data we have. By all means cancel the project if you think the data is inadequate.

Menicholas – Did I hear them say that the bridges collapsed due to too much anthropogenic CO2 reacting with their concrete mix?

@ Nick Stokes January 14, 2019 at 8:34 pm

Actually only about 2 parts per million per year.

Nick said: “The engineering project, in this case, is to put a whole lot of CO2 in the air, and see what happens. We work that out with the data we have. By all means cancel the project if you think the data is inadequate.”

I see no evidence to fear CO2. But pumping CO2 is not the project. The “project” is pumping countless billions of taxpayer dollars into the research world in a Faustian Bargain that clubs the public into a political and social agenda based upon bad science – all the while claiming to be the champions, protectors and sole wielders of science. I’d cancel that one.

Nick, I don’t believe for a nano-second that politicians actually believe in the nonsense they peddle. Or if they do they are completely incompetent. How much would it cost the developed governments of the world to blanket the world with USCRN type stations? This could have been started in the 1980s or 1990s for sure. With good data we can analyze both the Nyquist compliant way and the historical way. How much is the US spending on the new USS Gerald R Ford – next gen carrier with full task force and complement of F35s, submarines and Aegis destroyers? $40B? How about annually to maintain it? How much are we wasting on redundant social programs? How much are we spending on a network that allows us to collect better data globally? Why aren’t you and others in the field demanding better instruments and data? Why? Because the tall peg would get beaten down. No one wants to do anything except keep the money flowing and keep the nonsensical research pumping out day after day.

For example, a bridge designer may be highly interested in the minimum and maximum temperatures.

========

Correct. But the engineer would have almost no interest in (min+max)/2. Bridges don’t fail because of averages.

As usual Nick’s best effort amounts to a pathetic attempt to change the subject.

We do have data regarding what happens to the globe when CO2 levels increase.

In the past, CO2 levels have been 15 to 20 times above what we have now and nothing bad happened. Not only did nothing bad happen, life flourished.

William,

You asked if engineers use data as bad or as imperfect as the environmental temperature record. Civil engineers use exactly that environmental data as the basis for loads or effects on a wide variety of engineered systems. I’m a civil engineer. We use the existing environmental record for air and water temperatures, humidity, wind speeds and directions, rainfall (depths, durations, and frequency of storms), water flow rates and flood stages for rivers and channels and surface runoff, seismic data, aquifer depth data, water quality data, just to name a few of the very many types of environmental data routinely used just in civil engineering design.

Most of these environmental data have relatively short records of data (very frequently just a few decades worth), with frequently missing data, or questionable data.

Fortunately, a combination of government agencies like NOAA or USGS and private standards setting organizations like ASTM have invested many decades in data collection and analysis made readily available with pre-calculated statistical analyses.

So then we use all the various tools such as the ones I listed above to provide the safest yet still economical design we can produce.

If we refused to use or settle for “bad data” or incomplete data or questionable data, in many instances we’d have no data at all. The better the data we have, the better, as in safer and more economical, designs we can produce.

Duane,

You said: “You asked if engineers use data as bad or as imperfect as the environmental temperature record. Civil engineers use exactly that environmental data as the basis for loads or effects on a wide variety of engineered systems…”

Again, I really don’t see any disagreement with you… for some reason it feels like you have taken that position. Civil engineers have a lot of standards they adhere to and I doubt a 1C increase in global average temperature makes you rewrite your standards or run out and reinforce a dam. Right? Sure you use rainfall data to make decisions, but I’m sure there is a lot of careful margin built in. Right? But you would not specify a concrete slump or strength or size a critical beam with methods similar to those in the instrumental record would you? I won’t labor over this, but I think/hope we agree more than disagree. I suspect you took issue with the way I made my point and that is ok, but I’m not seeing anything of substance here that is in contention.

William Ward wrote: “I see no evidence to fear CO2.”

Obviously William knows nothing about radiative transfer of heat – the process that removes heat from our planet. An average of 390 W/m2 of thermal IR is leaving the surface of the planet, but only 240 W/m2 is reaching space. GHGs are responsible for this 150 W/m2 reduction and the current relative warmth of this planet. However, there is no doubt that rising GHGs will someday be slowing down radiative cooling by another 5 W/m2.

We don’t need a Nyquist-compliant temperature record – or any temperature record at all – to know that some danger exists and that it is potentially non-trivial. Energy that doesn’t escape to space as fast as it arrives from the sun – a radiative imbalance – must cause warming somewhere. That is simply the law of conservation of energy.

“But the thing about min/max is that it is a measure” Looking into goat entrails to ‘guess’ the future is also a measure. Really, Nick, you are funny. Really funny.

MarkW, you raise an important point that most people here seem to have missed, since the reliance on just two data points a day barely covers anything of the day itself.

For a recorded high of 102 degrees F at 4:40 pm doesn’t tell us how long it was over 100 degrees F, how long it was over 95 degrees F, how long it was over 90 degrees F.

A 100 degree F day can actually be hotter than a 102 degree day when it reached 90 degrees F two hours earlier and 95 degrees 1 1/2 hours earlier than the 102 degrees F day did.

It is the total amount of heat of the whole sunny day into the late evening that counts the most, not the daily high that might have lasted just 5 minutes when it was recorded.
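One way to put a number on this is degree-hours above a threshold. The sketch below uses two invented days of hourly readings (not real observations) and a hypothetical helper name; it only shows that a day with a lower peak can accumulate more heat above 90 degrees F than a day with a higher, briefer peak.

```python
def degree_hours_above(temps_f, threshold_f, step_hours):
    """Sum of (T - threshold) * dt over the samples where T exceeds threshold."""
    return sum(max(t - threshold_f, 0.0) for t in temps_f) * step_hours

# Assumption: made-up hourly afternoon readings, in degrees F.
day_102 = [85, 88, 90, 95, 102, 95, 90, 86]    # sharp, short peak at 102
day_100 = [88, 92, 96, 99, 100, 99, 97, 93]    # lower peak, heat persists

heat_102 = degree_hours_above(day_102, 90, 1.0)
heat_100 = degree_hours_above(day_100, 90, 1.0)
print(f"102 F day: {heat_102} degree-hours, 100 F day: {heat_100} degree-hours")
```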

It was a LOT hotter in the early 1990’s in my area than it is now, because the summer heat persisted long into the night. It used to stay over 90 degrees as late as 10:00 pm; now it never does that, and it often dips below 90 by around 8:30 or so. The average high is still about the same, and we even reached a RECORD high of 112 degrees F, with additional days of 110, 111 and a couple of 109, just 4 years ago.

The nights are no longer as hot as they used to be.

True. I live in a hot place, Phoenix, Arizona. Some years back I started recording and plotting temperature with a sample rate of 3 minutes. It is clear to see that the hottest day in terms of max T may not be the hottest day if one considers, for example, a day that is slightly cooler by say 2 deg F, but where the high is reached earlier in the day and persists for a longer time. It’s easy to see graphically. Many of the hot days peak and then cool off.

That is regional weather. Here, last summer, it stayed hot late very often. It was still near 100 at midnight more than a few times. Of course, things could have been different on the next block.

Sunsettommy:

Yep: it really is about the temperature-time product, if we are going to use temperature.

There is a difference between your pizza being in the 400F oven for 1 minute and 20 minutes.

Clyde: But I do agree with you. A better use of the record (although still not good) would be to work with the max and min temperatures independently (do not average them) and add error bars to each. When things like reading error, quantization error, UHI, etc are added in for an honest range, the problem is we get a range so wide that I doubt we can say we know what has or is happening. We just know things are happening in a large range.

When violating Nyquist is factored in, I don’t think we can even really say it has warmed since the end of the Little Ice Age. I’m NOT making the claim that it didn’t warm. I’m making no claims except that the data is so bad that we need to admit we really don’t know. We have anecdotes and they may be correct, but we don’t really have the science or data to back it up honestly.

This.

The other point is that as Curious George said the design of the Max/Min Thermometer was to derive the max and min readings per day, not measure the heat content, or the daily variations, just the max and min.

So in that respect it is NOT a 2 sample frequency from hundreds of samples.

It has worked well and did its job.

The introduction of continuously reading Electronics has introduced its own Errors, some of which are even worse.

As has been shown in the Australian BOM records, if the data is mishandled, i.e. not averaged over a long enough period, the new Electronic devices pick up transient peaks.

These peaks can have many causes but do not actually impact the overall temperature.

The other classic example of course is using Electronics at Airports where they don’t measure the Climate Temperature, but how many Jet Engines pass by.

“Clyde: But I do agree with you. A better use of the record (although still not good) would be to work with the max and min temperatures independently (do not average them) and add error bars to each.”

I think so, too. If we use TMax charts, the 1930’s show to be as warm or warmer than the 21st century. And this applies to all areas of the world.

TMax charts show the true global temperature profile: The 1930’s was as warm or warmer than subsequent years. Therefore, the Earth, in the 21st century is not experiencing unprecedented warming. It was as warm or warmer than today back in the 1930’s, worldwide.

The CAGW narrative is dead. There is no “hotter and hotter” if we go by Tmax charts.

Or, CAGW is also dead if we go by unmodified surface temperature charts, which also show the 1930’s as being as warm or warmer than subsequent years.

It also tells you that frequencies above half the sampling rate will fold back to a frequency below half the sampling rate. A frequency at .6 the sample rate will appear as a frequency at .4 the sample rate.
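A short numerical check of the folding described above, with the sample rate normalised to 1 (an assumption for convenience). The helper name is illustrative only.

```python
import math

def aliased_frequency(f, fs):
    """Apparent frequency of a tone at f when it is sampled at rate fs."""
    f_mod = f % fs
    return min(f_mod, fs - f_mod)   # fold back into the range 0..fs/2

fs = 1.0
apparent = aliased_frequency(0.6 * fs, fs)

# The sampled values of the two tones are identical at every sample instant.
original = [math.cos(2 * math.pi * 0.6 * n) for n in range(20)]
folded = [math.cos(2 * math.pi * 0.4 * n) for n in range(20)]
max_diff = max(abs(a - b) for a, b in zip(original, folded))
print(f"apparent frequency: {apparent:.1f} x fs, largest sample difference: {max_diff:.1e}")
```

Once the samples are taken, nothing in them distinguishes the 0.6 tone from a 0.4 tone.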

Irrelevant. It is sampled time series data.

“Irrelevant.”

Of course it is relevant. You have said that “A frequency at .6 the sample rate will appear as a frequency at .4 the sample rate.”. But the only result that matters is the monthly average. All these regular frequencies, aliased or not, make virtually zero contribution to that.

Average is a low pass filter in the frequency domain. If the signal is aliased the monthly average will be wrong.

All the frequencies in Nyquist talk are diurnal or more. Yes, monthly average is a low pass filter, and they will all be virtually obliterated, aliased or not.

Plus the effect of the locking of sampling to diurnal. What component do you think could be aliased to low frequency?
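For reference, the low-pass behaviour of a plain average can be sketched with the standard magnitude response of an n-point moving average. The choice of 60 points (30 days at 2 readings per day) is an assumption for illustration, and the function name is hypothetical.

```python
import math

def boxcar_gain(f, n):
    """Magnitude response of an n-point moving average at f cycles per sample."""
    x = math.sin(math.pi * f)
    if abs(x) < 1e-12:
        return 1.0   # whole number of cycles per sample: looks like a constant
    return abs(math.sin(math.pi * f * n) / (n * x))

n = 60  # assumption: 30 days x 2 readings per day
gain_dc = boxcar_gain(0.0, n)     # a constant offset passes unchanged
gain_mid = boxcar_gain(0.37, n)   # a mid-band component is strongly attenuated
gain_fs = boxcar_gain(1.0, n)     # a component at exactly the sample rate
print(f"gain at 0: {gain_dc:.3f}, at 0.37: {gain_mid:.3f}, at 1.0: {gain_fs:.3f}")
```

Under these assumptions the average removes most of any component that lands away from zero frequency, while a component that lands at zero, for example one at exactly the sampling rate, passes through at full strength.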

Play some music through that monthly low pass filter and the song Hey Jude would be a constant note of C. Is that useful?

“would be a constant note of C”

No, it would be silent, or a very soft rumble. There aren’t any low frequency processes there that we want to know about. But with temperature there are.

Sampling theory says the data is exactly correct for the bandwidth chosen, not approximately correct, if the sample rate is at least twice the highest frequency. Aliasing occurs only when the input signal isn’t bandwidth limited to fit the chosen sample rate.

I think one has to determine the instantaneous rate of temperature change to arrive at the optimum sample rate if one wants to come up with the most correct number. I suspect that rate varies considerably in different environments. I’m not sure that the calculated average will still be any more meaningful but the actual trend of that number over time should be more accurate, which seems to be the main point of this article.

The trend is the most important claim in today’s version of climate and the most important number in projecting a forecast of likely future results. However, it still seems pretty meaningless in view of what the real world actually seems to do.

Nick said: “But the only result that matters is the monthly average.”

Reply: The data is aliased upon sampling at too low of a rate. You can do all of the work you want after the sampling but the aliasing is in there. You can’t get it out later. I replied earlier but maybe you didn’t get it yet.

You can design your system and filter before sampling according to your design. You can do this if your system is electronic. You can’t filter the reading of a thermometer read “by-eye”.

” You can do all of the work you want after the sampling but the aliasing is in there. “

The process is linear and superposable. I set out the math here. Aliasing may shift one high frequency to another. But monthly averaging obliterates them all.

Thought the same, Nick. After all, it’s not about “real” temperatures but about determining the difference between yesterday’s measurement and today’s measurement.

Johann,

We do get quoted annual record temperatures don’t we? I hope you know now that not one of those record temperature years is correct.

Your comment to Nick is about trends. I address trends. They do not escape aliasing.

“I address trends.”

To no effect. You show that you get different results, sometimes higher, sometimes lower. But it’s all well within the expected variation. There is no evidence of systematic bias.

“There is no evidence of systematic bias. – Nick Stokes”

On the contrary, it is discussed in the literature that the bias between true monthly mean temperature (Td0) – defined as the integral of the continuous temperature measurements in a month – and the monthly average of Tmean (Td1) is very large in some places and cannot be ignored (Brooks, 1921; Conner and Foster, 2008; Jones et al., 1999).

The WMO(2018) say that the best statistical approximation of average daily temperature is based on the integration of continuous observations over a 24-hour period; the higher the frequency of observations, the more accurate the average; as the head post suggested!

“Td1 may exaggerate the spatial heterogeneities compared with Td0, because the impact of a variety of geographic (e.g. elevation) and transient (e.g. cloud cover) factors is greater on Tmax and Tmin (and hence in Td1) than that on the hourly averaged mean temperature, Td0 (Zeng and Wang, 2012).

Wang (2014) compared the multiyear averages of bias between Td1 and Td0 during cold seasons and warm seasons and found that the multi‐year mean bias during cold seasons in arid or semi‐arid regions could be as large as 1 °C.

WMO (1983) suggested that it is advisable to use a true mean or a corrected value to correspond to a mean of 24 observations a day. “Zeng and Wang (2012) argued from scientific, technological and historical perspectives that it is time to use the true monthly mean temperature in observations and model outputs.”

The WMO(2018) have suggested that Td1 is the least useful calculation available if attempting to improve the understanding of the climate of a particular country!

WMO Guide to Climatological Practices 1983, 2018 editions

Brooks C. 1921. True mean temperature. Mon. Weather Rev. 49: 226–229, doi: 10.1175/1520-0493(1921)492.0.CO;2.

Conner G, Foster S. 2008. Searching for the daily mean temperature. In 17th Conference on Applied Climatology, New Orleans, Louisiana.

Jones PD, New M, Parker DE, Martin S, Rigor IG. 1999. Surface air temperature and its changes over the past 150 years. Rev. Geophys. 37: 173–199, doi: 10.1029/1999RG900002.

Li, Z., K. Wang, C. Zhou, and L. Wang, 2016: Modelling the true monthly mean temperature from continuous measurements over global land. Int. J. Climatol., 36, 2103–2110, https://doi.org/10.1002/joc.4445.

Wang K. 2014. Sampling biases in datasets of historical mean air temperature over land. Sci. Rep. 4: 4637, doi: 10.1038/srep04637.

Zeng X, Wang A. 2012. What is monthly mean land surface air temperature? Eos Trans. AGU 93: 156, doi: 10.1029/2012EO150006.

” It is discussed in the literature that the bias between true monthly mean temperature (Td0)”

As is clear from context, I am saying that there is no systematic bias between trends. If you think there is, please say which way it goes.

“As is clear from context, I am saying that there is no systematic bias between trends. If you think there is, please say which way it goes. – Nick Stokes”

Thorne et al. (2016) found a consistent overestimation of temperature by the traditional method [(Tmax + Tmin)/2], for the CONUS:

It is interesting to note from that study that spatial patterns in the annually averaged differences between the temperature-averaging methods are readily apparent. The regions of greatest difference between the two methods resemble previously defined climatic zones in the CONUS.

What concerns me most, is that this study found that the shape of the daily temperature curve was changing, such that more hours per day were spent closer to Tmin than Tmax during the (2001-15) period versus the base period (1981-2010), which doesn’t bode well for a changeover to the hourly method because it will have the effect of masking the old errors and sexing up any warming! ;-(

*Thorne, P. W., and Coauthors, 2016: Reassessing changes in diurnal temperature range: A new data set and characterization of data biases. J. Geophys. Res. Atmos., 121, 5115–5137, https://doi.org/10.1002/2015JD024583.

“As is clear from context, I am saying that there is no systematic bias between trends. If you think there is, please say which way it goes. – Nick Stokes”

Wang (2014)* analyzed approximately 5600 weather stations globally from the NCDC and found an average difference between the two temperature-averaging methods of 0.2°C, with the traditional method overestimating the hourly average temperature. They found that asymmetry in the daily temperature curve resulted in a systematic bias, and also that Tmean introduced a more random sampling bias by undersampling weather events such as frontal passages.
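As a rough illustration of how an asymmetric daily curve can bias (Tmax+Tmin)/2, here is a minimal Python sketch. The curve shape, the 15:00 peak and the amplitudes are made-up assumptions for illustration, not values from Wang (2014); only the 288-samples/day rate comes from the discussion.

```python
import math

SAMPLES_PER_DAY = 288  # 5-minute samples, as in the USCRN data discussed here


def diurnal_temperature(hour, mean=15.0, amp1=5.0, amp2=0.5):
    """Hypothetical daily curve in deg C: a 1-cycle/day term plus a smaller
    2-cycles/day term that sharpens the peak and flattens the trough."""
    w = 2.0 * math.pi / 24.0
    phase = w * (hour - 15.0)  # assumed peak at 15:00
    return mean + amp1 * math.cos(phase) + amp2 * math.cos(2.0 * phase)


samples = [diurnal_temperature(i * 24.0 / SAMPLES_PER_DAY)
           for i in range(SAMPLES_PER_DAY)]

true_mean = sum(samples) / len(samples)            # mean of all 288 samples
midrange = (max(samples) + min(samples)) / 2.0     # traditional (Tmax+Tmin)/2
bias = midrange - true_mean

print(f"288-sample mean: {true_mean:.3f}")
print(f"(Tmax+Tmin)/2:   {midrange:.3f}")
print(f"bias:            {bias:+.3f}")
```

With these assumed numbers the curve spends more time near Tmin than near Tmax, so the traditional value comes out warmer than the 288-sample mean. The size of the bias here reflects the chosen amplitudes only and says nothing about real stations.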

Wang, K., 2014: Sampling biases in datasets of historical mean air temperature over land. Sci. Rep., 4, 4637, https://doi.org/10.1038/srep04637

Scott W Bennett January 15, 2019 at 7:02 pm

Scott, it seems you’re not discussing what Nick is discussing. He’s discussing trends, you’re discussing values.

The traditional method ((max+min)/2) definitely overestimates the true average value. However, as near as I can tell, it doesn’t make any difference in the trends. I ran the real and traditional trends on 30 US stations and found no significant difference. I also investigated the effect of adding random errors to the real data and found no difference in the trends.

I’m still looking, but haven’t found any evidence that there is a systematic effect on the trends.
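The distinction between a bias in values and a bias in trends can be sketched with synthetic numbers. The series below are invented for illustration (a 0.02 deg C/year trend, a 0.2 deg C offset, and a hypothetical offset drift of 0.005 deg C/year); they are not station data.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den


years = list(range(2000, 2020))

# Hypothetical "true" annual means with a linear trend of 0.02 deg C/year.
true_mean = [10.0 + 0.02 * (y - 2000) for y in years]

# Case 1: the traditional method reads a constant 0.2 deg C too warm.
constant_bias = [t + 0.2 for t in true_mean]

# Case 2: the offset itself drifts by an assumed 0.005 deg C/year,
# e.g. if the shape of the daily curve changes over time.
drifting_bias = [t + 0.2 + 0.005 * (y - 2000) for t, y in zip(true_mean, years)]

slope_true = ols_slope(years, true_mean)
slope_constant = ols_slope(years, constant_bias)
slope_drifting = ols_slope(years, drifting_bias)

print(f"true trend:            {slope_true:.4f} deg C/year")
print(f"constant-offset trend: {slope_constant:.4f} deg C/year")
print(f"drifting-offset trend: {slope_drifting:.4f} deg C/year")
```

A constant offset leaves the fitted slope unchanged, while an offset that drifts adds its own drift to the slope. Which case applies to real stations is exactly what is being argued in this thread.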

w.

@Scott W Bennett on January 15, 2019 at 7:02 pm:

Your quotes are actually from Bernhardt, et al, 2018.

Bernhardt, J., A.M. Carleton, and C. LaMagna, 2018: A Comparison of Daily Temperature-Averaging Methods: Spatial Variability and Recent Change for the CONUS. J. Climate, 31, 979–996, https://doi.org/10.1175/JCLI-D-17-0089.1

https://journals.ametsoc.org/doi/pdf/10.1175/JCLI-D-17-0089.1

Willis, I’m not sure if you read my post immediately below about Wang (2014):

“They found that asymmetry in the daily temperature curve resulted in a systematic bias.”

Now I’m confused because I’m not sure what you mean by “trends” here.

I thought it was clear that several studies found long term global and regional systematic bias.

Perhaps I might have obscured the point that trends will be biased because the shape of the daily temperature curve was found not just to be skewed but also changing.

My understanding is that this will create a spurious trend in either method, unless explicitly teased out (By comparison with changes in humidity):

“Stations in the southeast CONUS experience more time in the lowest quarter of their daily temperature distribution due to higher amounts of atmospheric moisture (e.g., given by the specific humidity) and the fact that moister air warms more slowly than drier air.*”

For example, Thorne et al. (2016) using half ASOS and half manual observations – found a considerable difference in the two temperature-averaging methods in the cold-season results between Wang’s – ASOS only – observations but only a negligible difference for the warm season.

That’s me for now, I’ve exhausted my contribution to this subject! 😉

*Thorne, P. W., and Coauthors, 2016: Reassessing changes in diurnal temperature range: A new data set and characterization of data biases. J. Geophys. Res. Atmos., 121, 5115–5137, https://doi.org/10.1002/2015JD024583.

Phil,

You are right! My mistake, referencing is not my best skill, despite appearances. In my defence there is a cross-reference that I’ve obviously confused. I have all these notes and ideas in my head and it is bloody hard to go back and remember where I got them all from. I know, I should be more rigorous but I was trying to get the ideas across as informally as possible. I figure in this day and age it is almost superfluous as any diligent reader will quickly google it.

cheers,

Scott

The trend difference is the simple accumulation of the error. As discussed in other posts, there is a nature of the error that has a quality approaching random. I don’t think it is random – but I’ll let someone more knowledgeable try to measure that and report. It still appears to me that we see the accumulated error in the trends.

Nick is right. This paper has fundamental conceptual errors.

It is obviously wrong to claim that averaging the daily high and the low is WORSE than averaging two samples 12 hours apart. The high and the low are based on a huge number of “samples.” To treat each one as if it’s a single sample is not valid.

Let me say this a different way. The sampling theorem does not apply to statistics not based on samples. A mercury max/min thermometer uses an effectively infinite number of samples. In fact, it’s analogue; there are no samples at all.

About as far from correct as one can be and still be speaking English.

No, a mercury max/min thermometer is not taking an infinite number of samples, it takes two samples, the high whenever that occurred and the low, whenever that occurred.

If it were taking an infinite number of samples you could reconstruct the temperature profile of the previous day, second by second.

The best you can say is that while it’s constantly taking samples, it only stores and reports two of those samples.

Let me repeat, “It is obviously wrong to claim that averaging the daily high and low is WORSE than averaging two samples 12 hours apart.”

Is this, or is it not, obvious to you?

Ever used the sampling theorem at work?

I have.

@Frederick Michael

You are ignoring the shape of the curve. The shape of the daily temperature curve varies from one day to the next and is what is causing the error in the daily “average.” As you know, the “average” of a time series is not an average: it is a smooth or filter. Information is being discarded by the max-min thermometer. Without knowing the shape of the curve (which implies knowing exactly when the max and the min happened), the real daily smooth cannot be known. It is known that a sine wave is a bad model to assume for the daily temperature curve.

Phil – The error you cite is real. That’s the problem with using (min+max)/2. It is not a perfect method.

But that has absolutely nothing to do with the sampling theorem or aliasing.

Nice dodge there.

From the rest of my comment it is obvious I was addressing your ridiculous claims that a high and a low reading is the result of an infinite number of samples.

Mercury minmax can well be considered as having a very large sampling frequency.

But in reality, very short pulses of changing temperature don’t have time to fully register in the reading. So an electronic device may react faster and show much more volatility in practice. How to mimic the mercury min/max thermometer with an electronic thermometer sampling the temperature is a hard question that has not had enough effort put into it.

Nick says the anomaly trend is maybe not affected. Well yeah, science is not about believing stuff, it is about proving it. For me it is clear that mercury minmax can’t be compared to different electronic devices, due to a number of reasons. The best reason is the most used thermometers are simply not planned to detect changes of 0.001 degrees during a year.

The record is contaminated every time something happens near the measuring point. It is contaminated by sheltering changes, both abrupt and slow. It is contaminated by some electronic jitter and smoothing protocols.

So it is far from certain, but then you can argue we should play safe. What exactly counts as playing safe is disputed, with notable opinions coming from Curry, Pielke Jr, and Lomborg.

Nick would be right if the daily profile of continuous sampling were the same each day. One day is not a sufficient exploration. 1st commenter vukevich had the best idea, which is to measure the heat in a bucket of water each day at, say, 2pm (or rather a fluid that doesn’t freeze). This might be automated by encapsulating the fluid with an air cupola on top fitted with a pressure gauge “thermometer”.

You have an average temperature but it bears no relationship to earth energy balance which you are trying to work out in climate science 🙂

To understand why, consider a cold rainy day: your temperature all day could have been 20 deg C, then the cloud clears for a brief spell and the temperature rises to 24 deg C, so that is your max. Your average will be (MAX+MIN)/2, so it inflates your average and you think there is a lot more energy that day than there is. Remember, at the end of the day what they use this temperature average for is to create a radiative forcing, so they are proxying earth energy budget.
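The arithmetic of that cloudy-day example can be checked with a short Python sketch. The 20 and 24 deg C values are from the comment; the 30-minute length of the sunny spell and its position in the day are assumptions.

```python
SAMPLES_PER_DAY = 288   # one sample every 5 minutes
BASE_TEMP = 20.0        # deg C for most of the day (from the example)
PEAK_TEMP = 24.0        # deg C during the brief sunny spell (from the example)
PEAK_SAMPLES = 6        # assumed: the spell lasts 30 minutes
PEAK_START = 180        # assumed: the spell starts at 15:00

temps = [BASE_TEMP] * SAMPLES_PER_DAY
for i in range(PEAK_START, PEAK_START + PEAK_SAMPLES):
    temps[i] = PEAK_TEMP

sample_mean = sum(temps) / len(temps)          # mean of all 288 samples
midrange = (max(temps) + min(temps)) / 2.0     # traditional (Tmax+Tmin)/2

print(f"288-sample mean: {sample_mean:.2f} deg C")
print(f"(Tmax+Tmin)/2:   {midrange:.2f} deg C")
```

Under these assumptions the 288-sample mean stays close to 20 deg C, while (Tmax+Tmin)/2 reports 22 deg C.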

I see this regularly where I live in upstate NY, especially on predominantly cloudy days: The weather report calls for a certain high temp and we get nowhere near it all day long. Then, late afternoon, the clouds break, and the temp moves up quickly to the predicted high, only to stay there for a short time before dropping back down as the sun sets.

We, in fact, hit the high temp called for, but we spent the majority of the day in noticeably cooler weather. The high temp for the day was almost meaningless, as the day was dominated by cooler temps.

Frederick Michael,

Please show the math or conceptual errors. Be advised you are fighting proven mathematics of signal analysis. Your challenge is to show that there is no energy at 1-cycle/day, 2-cycles/day or 3-cycles/day. Good luck showing there is no energy in the signal at 1-cycle/day. Or you need to show how 1 and 3-cycles/day do not alias to 1-cycle/day. Or you need to prove why Nyquist was wrong. Or show that every electronic device ever made that converts between analog and digital data and has followed Nyquist has done so needlessly.
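For readers who want to see the frequency folding being referred to, here is a small Python sketch. It assumes uniform sampling at exactly 2 samples/day, which is an idealization: max/min readings are not taken at evenly spaced times. The helper name `alias_frequency` is made up for this example.

```python
import math


def alias_frequency(f, fs):
    """Apparent frequency after uniform sampling at rate fs (same units as f)."""
    return abs(f - fs * round(f / fs))


fs = 2.0  # samples/day, assumed uniformly spaced

# Where content at 1, 2 and 3 cycles/day appears after sampling.
aliases = {f: alias_frequency(f, fs) for f in (1.0, 2.0, 3.0)}
for f, fa in aliases.items():
    print(f"{f:.0f} cycle(s)/day appears at {fa:.0f} cycle(s)/day")

# Direct check: at the sample instants, a 3-cycles/day cosine is
# indistinguishable from a 1-cycle/day cosine.
times = [k / fs for k in range(8)]  # sample times in days
three = [math.cos(2.0 * math.pi * 3.0 * t) for t in times]
one = [math.cos(2.0 * math.pi * 1.0 * t) for t in times]
max_diff = max(abs(a - b) for a, b in zip(three, one))
print(f"largest difference between the sampled cosines: {max_diff:.2e}")
```

In this idealized setting, content at 3 cycles/day lands on 1 cycle/day and content at 2 cycles/day lands on 0 cycles/day, i.e. on the mean.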

A mercury max/min thermometer only delivers 2-samples/day. If you think the number is infinite then you wouldn’t mind giving me an example where you get say … 3-samples/day. Give me the 3 values you get please. You will quickly see that you are mistaken. If you have a high quality, calibrated max/min thermometer next to a USCRN site and compare your 2 values (samples) after a full day (properly sampled with no TOBS), and compare it to the 288-samples that day, you will find the max and min extracted from the 288-samples match what your max/min device gives. Max/min thermometers give you 2 samples.

You can play with USCRN data for yourself and show by example that the 2 samples taken at midnight and noon (or any 2 times separated by 12 hours) will usually give a lower error mean than the average of max and min. With clock jitter you get into some strange effects. It depends upon when the samples land in time relative to the spectral content for the day.
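Pending a check against real USCRN files, the comparison can be sketched on a synthetic day in Python. The curve below (a 1-cycle/day term plus a smaller 2-cycles/day term, peaking at sample 180) is an assumption chosen for illustration, so the result shows how the comparison is done rather than what real stations give.

```python
import math

SAMPLES_PER_DAY = 288
HALF_DAY = SAMPLES_PER_DAY // 2  # 144 samples = 12 hours


def synthetic_temperature(index):
    """Hypothetical 5-minute temperature in deg C for sample `index` of the day."""
    theta = 2.0 * math.pi * (index - 180) / SAMPLES_PER_DAY
    return 15.0 + 5.0 * math.cos(theta) + 0.5 * math.cos(2.0 * theta)


day = [synthetic_temperature(i) for i in range(SAMPLES_PER_DAY)]
reference_mean = sum(day) / len(day)  # mean of all 288 samples

# Error of the traditional (Tmax+Tmin)/2 value.
midrange_error = abs((max(day) + min(day)) / 2.0 - reference_mean)

# Error of averaging two samples 12 hours apart, for every possible start time.
two_sample_errors = [
    abs((day[i] + day[i + HALF_DAY]) / 2.0 - reference_mean)
    for i in range(HALF_DAY)
]
worst_two_sample_error = max(two_sample_errors)
typical_two_sample_error = sum(two_sample_errors) / len(two_sample_errors)

print(f"(Tmax+Tmin)/2 error:          {midrange_error:.3f}")
print(f"two-sample error, worst case: {worst_two_sample_error:.3f}")
print(f"two-sample error, average:    {typical_two_sample_error:.3f}")
```

On this synthetic day the 1-cycle/day term cancels between samples 12 hours apart, so the two-sample mean is never worse than (Tmax+Tmin)/2 and is usually better. Real days contain more spectral content and may behave differently.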

Thanks for your response.

The conceptual error is describing the min and the max as samples. While the (min+max)/2 method is less than perfect, it’s the right approach, given older technology. Of course, it is worse than a true average.

I do not believe that there’s no energy at frequencies higher than 1 cycle per day. Of course there is. But we are interested in a low pass filtered result, so the “energy” at higher frequencies doesn’t somehow contribute to the average temperature we seek. The min & max act as low pass filters and are appropriate.

Still, your empirical point overrules any theoretical argument I can make. I will play with the USCRN data you link to above. If it shows greater error from averaging min and max than averaging two individual samples 12 hours apart, I will gladly concede,

after changing my underwear.

Dang. Looks like I have to wait for the shutdown to end before checking the data.

“The min & max act as low pass filters and are appropriate.”

Not even wrong.

This does NOT act as a low pass filter AT ALL. The maths of calculating the average from the full 288 samples is a low-pass filter, min and max are not.

“…the “energy” at higher frequencies doesn’t somehow contribute to the average temperature we seek.”

Yes it does – by aliasing, and that is the point.

Comparing USCRN stations’ trends with the others’ over the same time period would yield useful information.

Frederick Michael,

Your underwear?!! Now that is TMI (too much information)! LOL! Thanks for the laugh.

Here is what you are missing: once you sample you have to deal with what has aliased. So if you are interested in a low pass filtered result then you need to low-pass filter before you sample. Not after – unless you sample without aliasing. If you sample without aliasing then you have all the signal has to offer and you can process it in the digital domain just as you would process it in the analog domain before you sampled it. You can also (and this is going to drive Nick crazy!) convert the sample from digital back to analog without losing any of the original data!

When we look at a thermometer with our eyes we can not filter anything. Even a max/min thermometer. There is really no practical way to filter. Filtering has to be done electronically before sampling. Then the signal can be filtered before sampling and you can use 2 samples/day if you filter appropriately.

I show in my paper what happens to trends with aliasing. How do you explain the trend bias/error otherwise? If Nick were right that you can just “extract the monthly” data despite the aliasing then you would not have the trend error.

Some of you are really holding on tight to this, but do so at your own detriment. You need to show how Nyquist doesn’t apply. You need to show how the content at 1, 2 and 3 cycles/day doesn’t alias your signal trend and daily signal. I give you the graph (full paper). Just show me how it is wrong. Otherwise use it to make your work better. I didn’t invent it, I’m just pointing it out.

“Or you need to show how 1 and 3-cycles/day do not alias to 1-cycle/day.”

Well, they can’t. You get sum and difference frequencies.