Answers to comments from the original essay on WUWT, here.

By Christopher Monckton of Brenchley

I make no apology for returning to the topic of the striking error of physics unearthed by my team of professors, doctors and practitioners of climatology, control theory and statistics. Our discovery that climatology forgot the Sun is shining brings the global-warming scare to an unlamented end. My last article discussing our result attracted more than 800 comments. Here, I propose to answer some of the more frequently-occurring comments, which will be in bold face. Replies are in regular face.

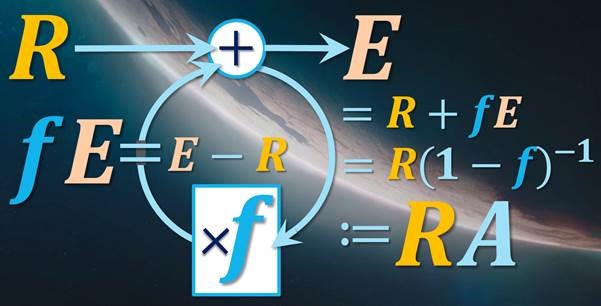

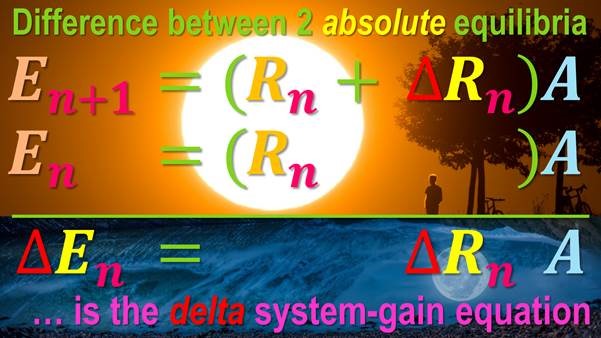

In a temperature feedback loop, the input signal is surface reference temperature R before feedback acts. The output signal is equilibrium temperature E after feedback has acted. The feedback factor f (= 1 – R / E) is the ratio of the feedback response fE (= E – R) to E. Then E = R + fE = R(1 – f)⁻¹. By definition, E = RA, where A, the system-gain factor or transfer function, is equal to (1 – f)⁻¹ and to E / R.
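The relations in this caption can be checked numerically. The following is a minimal sketch, not part of the original paper: the function names are invented for illustration, and the 250 K and 300 K inputs are hypothetical round numbers chosen only to make the arithmetic easy to follow.

```python
def feedback_factor(reference_k: float, equilibrium_k: float) -> float:
    """f = 1 - R / E: the share of E contributed by the feedback response."""
    return 1.0 - reference_k / equilibrium_k


def system_gain(f: float) -> float:
    """A = 1 / (1 - f); undefined when f equals 1."""
    if f == 1.0:
        raise ValueError("system gain is undefined for f = 1")
    return 1.0 / (1.0 - f)


# Hypothetical illustrative values, not taken from any dataset.
R = 250.0  # reference temperature before feedback, K
E = 300.0  # equilibrium temperature after feedback, K

f = feedback_factor(R, E)
A = system_gain(f)
feedback_response = f * E  # should equal E - R

print(f"f = {f:.4f}, A = {A:.4f}, E / R = {E / R:.4f}")
print(f"feedback response = {feedback_response:.2f} K, E - R = {E - R:.2f} K")
```

Both routes to the system-gain factor, (1 – f)⁻¹ and E / R, give the same number. A feedback factor of 0.75 passed to `system_gain` returns 4, the figure attributed to Lacis in the text.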


But your result is too complex. Please state it in simpler terms.

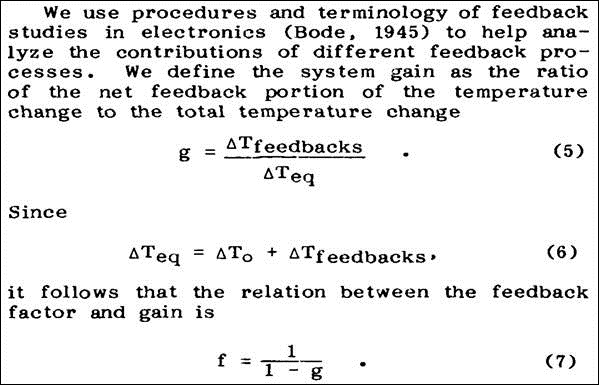

Erroneously, IPCC (2013, p. 1450) defines temperature feedback as responding only to changes in reference temperature. However, feedback also responds to the entire reference temperature. Climatology thus omits the sunshine from its sums and loses the opportunity to find, directly and reliably, the Holy Grail of climate-sensitivity studies – the system-gain factor.

Lacis+ (2010) imagined that in 1850 feedback response accounted for 75% of the equilibrium warming of ~44 K driven by the pre-industrial non-condensing greenhouse gases, implying a feedback factor 0.75, a system-gain factor 4 and an equilibrium sensitivity 4.2 K, i.e., 4 times reference sensitivity 1.04 K (Andrews 2012). Lacis misattributed to the non-condensing greenhouse gases the large feedback response to the emission temperature from the Sun.

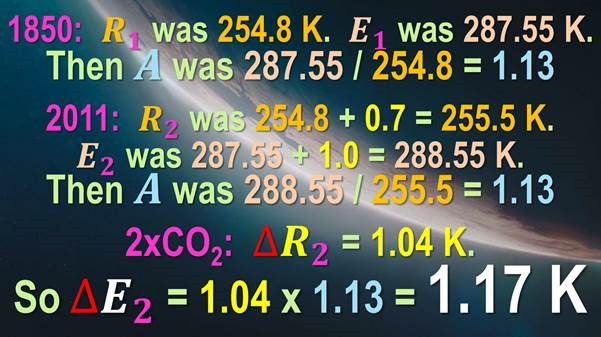

In reality, absolute emission temperature in 1850 with no non-condensing greenhouse gases would have been 243.3 K and the warming from those gases 11.5 K, giving a reference temperature of 254.8 K before feedback. The HadCRUT4 equilibrium temperature after feedback was 287.55 K. Thus, the system-gain factor, the ratio of equilibrium to reference temperature, was 287.55 / 254.8, or 1.13.

By 2011, if all warming since 1850 was anthropogenic, reference temperature had risen by 0.68 K to 255.48 K. Equilibrium temperature had risen by the sum of the 0.75 K observed warming (HadCRUT4) and 0.27 K to allow for delay in the emergence of manmade warming: thus, 287.55 + 1.02 = 288.57 K.

Climatology would thus calculate the system-gain factor as 1.02 / 0.68, or 1.5. Yet the models’ current mid-range estimate of 3.4 K warming per CO2 doubling implies an impossible 3.25.

In reality, the system-gain factor was 288.57 / 255.48, or 1.13, much as in 1850. It barely changed over the 161 years 1850-2011 because the 254.8 K reference temperature in 1850 was 375 times the manmade reference sensitivity of 0.68 K from 1850-2011. Sun big, man small: nonlinearities in feedback response are not an issue.

Given 1.04 K reference warming from doubled CO2, equilibrium warming from doubled CO2 is 1.04 x 1.13, or 1.17 K, not the 3.4 [2.1, 4.7] K imagined in the CMIP5 models (Andrews, op. cit.). And that, in just 350 words, is the end of the climate scare. There will be too little warming to cause harm.
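The arithmetic of the last few paragraphs can be reproduced in a few lines. This sketch uses only the figures quoted above; the variable names are chosen here for readability and do not come from the paper.

```python
# All input figures are those quoted in the text.
R_1850 = 243.3 + 11.5   # emission temperature + warming from non-condensing GHGs, K
E_1850 = 287.55         # HadCRUT4 equilibrium temperature in 1850, K

dR = 0.68               # reference warming 1850-2011, K
dE = 0.75 + 0.27        # observed warming + allowance for delayed warming, K

R_2011 = R_1850 + dR
E_2011 = E_1850 + dE

A_1850 = E_1850 / R_1850   # absolute-value system-gain factor, 1850
A_2011 = E_2011 / R_2011   # absolute-value system-gain factor, 2011
A_delta = dE / dR          # the ratio of changes used by climatology

ref_sens = 1.04            # reference sensitivity to doubled CO2, K
charney = ref_sens * A_2011

print(f"A (1850)  = {A_1850:.2f}")
print(f"A (2011)  = {A_2011:.2f}")
print(f"A (delta) = {A_delta:.2f}")
print(f"Equilibrium sensitivity to doubled CO2 = {charney:.2f} K")
```

Changing any of the inputs by a fraction of a Kelvin shows how little the two absolute-value ratios move, since the numerator and denominator are both hundreds of Kelvin.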

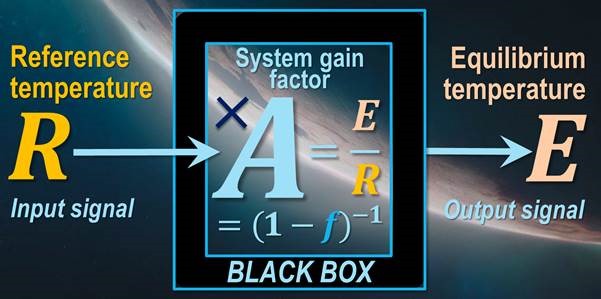

The feedback-loop diagram simplifies to this black-box block diagram

But your result is too simple. Bringing 122 years of climatology to an end in 350 words? It can’t be as simple as that. Really it can’t. It has to be complicated. Models take account of a dozen individual feedbacks and the interactions between them. IPCC (2013) mentions “feedback” more than 1000 times. Feedback accounts for 85% of the uncertainty in equilibrium sensitivity (Vial et al. 2013). You can’t just jump straight to the answer without even mentioning, let alone quantifying, even one individual feedback. Look, in climatology we just don’t do simple.

Inanimate feedback processes cannot “know” that they must not respond to the very large emission temperature but only to the comparatively small subsequent perturbations. Once it is accepted that feedback responds to the entire input signal, it becomes possible to derive the system-gain factor reliably and immediately. It is simply the ratio of equilibrium to reference temperature at any chosen time. Equilibrium sensitivity to doubled CO2 (after feedback has acted) is simply the product of the system-gain factor and the reference sensitivity to doubled CO2 (before feedback has acted). And that’s that. To find the system-gain factor, one does not need the value of any individual feedback. We can treat the transfer function between reference and equilibrium temperatures simply as a black box.

But each of the five Assessment Reports of the IPCC is thousands of pages long. You can’t just get the answer that has eluded the world’s experts in a few paragraphs.

To quote a former occupier of the office of President of the United States, “Yes We Can.” The “experts” had borrowed feedback math from control theory without understanding it. James Hansen of NASA first explicitly perpetrated the error of forgetting the sunshine in a lamentable paper of 1984. Michael Schlesinger perpetuated it in a confused paper of 1985. Thereafter, everyone in official climatology copied the mistake without checking it. Correcting the error makes it easy to constrain the system-gain factor and hence equilibrium sensitivity.

But climate sensitivity in models is what it is. The science is settled.

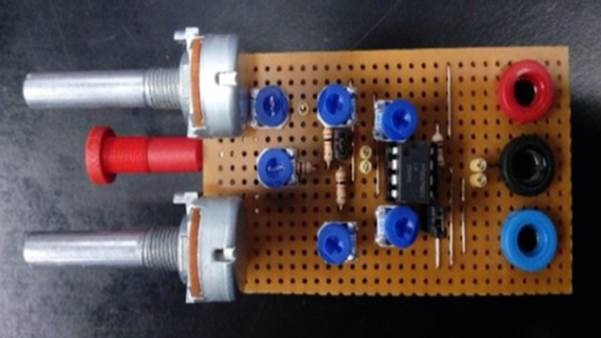

All honest experts in control theory will agree that feedback processes in dynamical systems respond to the entire input signal and not just to some arbitrary fraction of that signal. The math is the same for all feedback-moderated dynamical systems – electronic op-amp circuits, process-control systems, climate. Build a test rig. All you need is an input signal, a feedback loop and an output signal. Set the input signal and the feedback factor to any value you like. Now measure the output signal. The circuit doesn’t respond only to some fraction of the input signal. It responds to all of it. We checked by building our own test rig and then getting a government lab to build one for us and to measure the output under a variety of conditions.
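For readers without a soldering iron, a software stand-in for such a rig is easy to write. The sketch below is an assumption-laden toy, not the circuit built by the team or the lab: it simply feeds a fraction f of the output back to the input summing node and iterates until the output settles. The function name and the sample values are hypothetical.

```python
def run_loop(input_signal: float, f: float, steps: int = 200) -> float:
    """Iterate output = input + f * output until it settles (needs |f| < 1)."""
    output = 0.0
    for _ in range(steps):
        # The feedback block acts on the whole output, which in turn
        # contains the whole input signal, not just a perturbation of it.
        output = input_signal + f * output
    return output


# Hypothetical settings: any input and any feedback factor with |f| < 1 will do.
for signal, f in [(10.0, 0.5), (10.0, -0.5), (255.0, 0.1)]:
    settled = run_loop(signal, f)
    predicted = signal / (1.0 - f)
    print(f"input={signal}, f={f}: settled={settled:.4f}, input/(1-f)={predicted:.4f}")
```

In each case the settled output matches the input divided by (1 – f), which is the system-gain equation applied to the entire input signal.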

Feedback amplifier test circuit built and operated for us by a government lab

But the circuits you built are too simple. Any undergraduate could have built them. You didn’t need to go to a government lab.

We knew official climatology and its devotees would kick and scream and whinge and throw all their toys out of the stroller when they learned of our result. Trillions are at stake. So we checked what did not really need to be checked. Feedback theory has been around for 100 years. To borrow a phrase, it’s settled science. But we checked anyway. Oh, and we went right back to basics and proved the long-established feedback system-gain equation by two distinct methods.

But you didn’t need to prove the equation by two methods. All you needed to do was to prove it by linear algebra.

Yes, indeed. The proof by linear algebra is very simple. Since the feedback factor is the ratio of the feedback response in Kelvin to equilibrium temperature, the feedback response is the product of the feedback factor and equilibrium temperature. Then equilibrium temperature is the sum of reference temperature and the feedback response. With a little elementary algebraic manipulation, it follows that equilibrium temperature is the product of reference temperature and the reciprocal of (1 minus the feedback factor). That reciprocal is, by definition, the system-gain factor.
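Written out in symbols, with R for reference temperature, E for equilibrium temperature, f for the feedback factor and A for the system-gain factor, the steps just described are as follows (assuming f is not equal to 1):

```latex
\begin{aligned}
E &= R + fE \\
E - fE &= R \\
E\,(1 - f) &= R \\
E &= \frac{R}{1 - f} = R\,A, \qquad A = \frac{1}{1 - f} = \frac{E}{R}.
\end{aligned}
```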

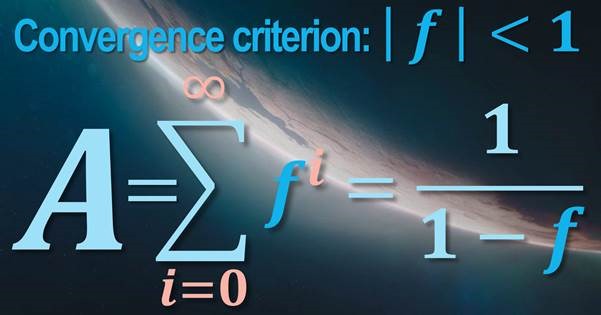

But we also obtained the system-gain factor as the sum of an infinite series of powers of the feedback factor. Under the convergence condition that the absolute value of the feedback factor is less than 1, the system-gain factor is the sum of the infinite series of powers of the feedback factor, which is the reciprocal of (1 minus the feedback factor), as before. We are guilty of double-checking. Get over it.
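The convergence is easy to watch numerically. The sketch below sums the first n powers of the feedback factor and compares the running total with the closed form; the function name is invented here, and f = 0.75 is used only because the text quotes it as the Lacis value.

```python
def partial_sum(f: float, n_terms: int) -> float:
    """Sum of f**0 + f**1 + ... + f**(n_terms - 1)."""
    return sum(f ** k for k in range(n_terms))


f = 0.75                        # must satisfy |f| < 1 for the series to converge
closed_form = 1.0 / (1.0 - f)   # the system-gain factor

for n in (1, 5, 20, 100):
    print(f"{n:>3} terms: {partial_sum(f, n):.6f}  (closed form {closed_form:.6f})")
```

With |f| at or above 1 the partial sums no longer settle, which is why the convergence condition is needed.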

Convergence upon the truth

But the equation you use is not derived from any known physical theory.

Yes, it is. See the above answer. But all you really need to know about feedback is that the system-gain factor is the ratio of equilibrium temperature (after feedback) to reference temperature (before feedback). For 1850 and for 2011, we know both temperatures to quite a small margin of error. So we know the system-gain factor, and from that we can derive equilibrium sensitivity to doubled CO2.

But climatology’s version of the system-gain equation is derived from the energy-balance equation via a Taylor-series expansion. It can’t be wrong.

It isn’t wrong. It’s just not useful, because there is much more uncertainty in the delta temperatures than in the well constrained absolute temperatures we use. Neither the energy-balance equation nor the leading-order term in the Taylor-series expansion reliably gives the system-gain factor. It is only when you remember the Sun is shining that you can find the value of that factor directly and reliably.

Climatology in the dark

But if you’re saying climatology isn’t wrong, why are you saying it’s wrong?

Climatology’s system-gain equation, using reference and equilibrium temperature changes rather than absolute temperatures, is a correct equation as far as it goes. It is the difference between two instances of the absolute-value equation. But climatology erroneously limits its definition of feedback as responding only to changes, effectively subtracting out the sunshine. Feedback also responds to the absolute input signal, making it easy to find the system-gain factor and thus equilibrium sensitivity.

But you’re starting your calculation from zero Kelvin. You’re literally Switching On The Sun.

No. We have looked out of the window and noticed that the Sun is already Switched On and shining (well, not in Scotland, obviously, but everywhere else). Our calculation starts not with zero Kelvin but with the reference temperature of 254.8 K in 1850. The feedback processes in the climate respond to that temperature and not to any other or lesser temperature. They neither know nor care whether or to what extent they may have existed at any other temperature. They neither know nor care how they might have responded to some other temperature. They respond as they are, and they respond only to the temperature they find. We know the magnitude of the response they engender, for we can measure the equilibrium temperature, calculate the reference temperature and deduct the latter from the former.

But the Earth exhibits bistability. It can have two different temperatures for the same forcing.

Given the variability of the climate, Earth can have several temperatures for a single forcing. But not in the short industrial era. The system-gain factors for 1850 and 2011 are close to identical, indicating that at present there is insufficient inherent instability to disturb our result.

The scrambled account of feedback math in Hansen (1984)

But the feedback system-gain equation is not appropriate for climate sensitivity studies.

Interesting how the true-believers abandon their “settled science” when it suits them. The system-gain equation is mentioned in Hansen (1984), Schlesinger (1985), Bony (2006), IPCC (2007, p. 631 fn.), Bates (2007, 2016), Roe (2009), Monckton of Brenchley (2015ab), etc., etc., etc. If feedback math were not applicable to the climate, there would be no excuse for trying to pretend that equilibrium sensitivity to doubled CO2 is anything like 2.1-4.7 K, still less the values up to 10 K in some extremist papers. As it is, all such values are nonsense anyway, as we have formally proven.

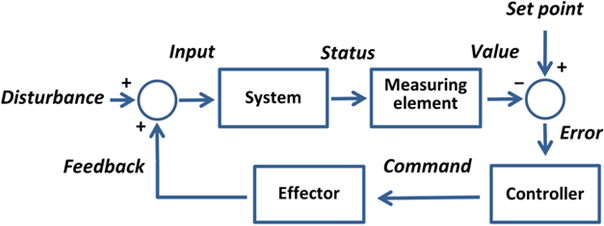

But Wikipedia shows the following feedback-loop block diagram, which proves that feedback only responds to changes, or “disturbances”, in the input signal and not to the whole signal –

A feedback loop diagram from the world’s chief source of fake news

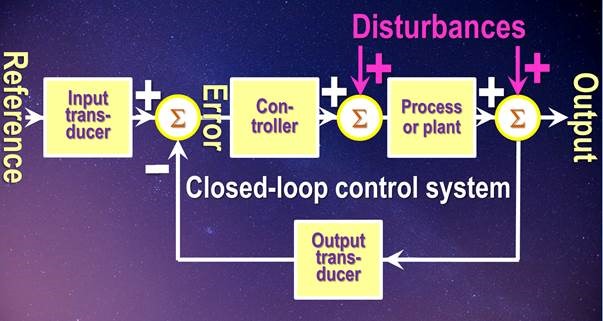

Our professor of control theory trumps the CreepyMedia diagram with the following diagram. And behold, the reference or input signal is at left; the perturbations (in pink) descend from above to their respective summative nodes; and the feedback block (here labeled the “output transducer”) acts on all of these inputs, specifically including the reference signal –

Mainstream block diagram for a control feedback loop

But the models don’t use the system-gain equation. They don’t even use the concept of feedback.

No, they don’t (not these days, at any rate, though until recently their outputs were fed into the system-gain equation to derive equilibrium sensitivity). However, we took some care to calibrate the models’ predicted [2.1, 4.7] K interval of Charney sensitivities using the system-gain equation, which produced exactly the same interval based on the excessive feedback factors derivable from Vial+ 2013. The system-gain equation is, therefore, directly relevant.

The models try valiantly to simulate the multitudinous microphysical processes, many of them at sub-grid scale, that give rise to feedback, as well as the complex interactions between them. But that is a highly uncertain and error-prone method – and even more prone to abuse by artful tweaking than the temperature records themselves: see e.g. Steffen+ (2018) for a deplorable recent example. Besides, no feedback can be quantified or distinguished from other feedbacks or even from the forcings that triggered it by any measurement or observation. The uncertainties are just too many and too large.

Our far simpler and more reliable black-box method proves that the models have, unsurprisingly, failed in their impossible task. By correcting climatology’s error of definition, we have cut the Gordian knot and found the correct equilibrium sensitivity directly and with very little uncertainty.

But you talk of reference and equilibrium temperature when radiative fluxes drive the climate.

Well, they’re called “temperature feedbacks”, denominated in Watts per square meter per Kelvin of the temperature that induced them. They are diagnosed from the models and summed. The feedback sum is multiplied by the Planck sensitivity parameter in Kelvin per Watt per square meter to give the feedback factor. Because the feedback factor is unitless, it makes no difference whether the loop calculation is done in flux densities or temperatures. Besides, our method requires no knowledge of individual feedbacks at all. We find the reference and equilibrium temperatures, whereupon the ratio of equilibrium to reference temperature is the feedback system-gain factor. Anyway, if you want to be pedantic it’s radiative flux densities in Watts per square meter, not fluxes in Watts, that are relevant.
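The unit bookkeeping can be sketched as follows. The two input values are hypothetical placeholders chosen purely for illustration, since the text gives no numbers at this point; only the way the units cancel is the point of the example.

```python
# Hypothetical illustrative inputs (assumed, not taken from the text).
feedback_sum = 1.5       # sum of individual feedbacks, W m^-2 K^-1
planck_parameter = 0.3   # Planck sensitivity parameter, K W^-1 m^2

# (W m^-2 K^-1) x (K W^-1 m^2) leaves a pure number.
f = feedback_sum * planck_parameter
A = 1.0 / (1.0 - f)

print(f"feedback factor f = {f:.2f} (unitless)")
print(f"system-gain factor A = {A:.2f} (unitless)")
```

Because f carries no units, the same gain A multiplies a reference quantity whether that quantity is expressed as a temperature or as a flux density.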

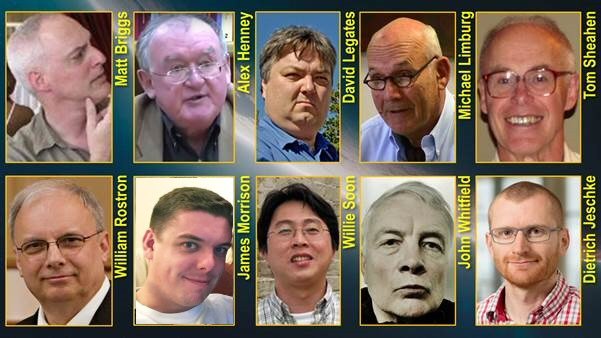

Ten handsome unpersons

But you’re not a scientist.

My co-authors include Professors of climatology, applied control theory and statistics. We also have an expert on the global electricity industry, a doctor of science from MIT, an environmental consultant, an award-winning solar astrophysicist, a nuclear engineer and two control engineers, to say nothing of our pre-submission reviewers, two of whom are the world’s most famous physicists.

But there’s a consensus of expert opinion. All those general-circulation model ensembles and scientific societies and intergovernmental agencies and governments just can’t be wrong.

Yes They Can. In suchlike bodies, totalitarianism prevails (though not for much longer). For them, the Party Line is all, and mightily profitable it is – at taxpayers’ and energy-users’ expense. But the trouble with adherence to the Party Line is that it is a narcotic substitute for independent, rational, scientific thought. The Party Line replaces the heady peril of mental exploration and the mounting excitement of the first glimmer of a discovery with a dull, passive, cringing, acquiescent uniformity.

Worse, since the totalitarians who have captured academe ruthlessly enforce the Party Line, they deter terrorized scientists from asking the very questions it is the purpose of scientists to ask. It is no accident that most of my distinguished co-authors now live and move and have their being furth of the dismal scientific establishment of today: for if we were prisoners of that grim, cheerless, regimented, unthinking, inflexible, totalitarian mindset we should not have been free to think the thinkworthy. For these malevolent entities, and the paid or unpaid trolls who mindlessly support them in comments here regardless of the objective truth, punish everyone who dares to think what is to them the utterly unthinkable and then to utter the utterly unutterable. Several of my co-authors have suffered at their hands. Nevertheless, we remain unbowed.

But no one agrees with you.

Here is one of many supportive emails we have had. I get ten supportive emails for every whinger –

“Hi and congratulations on what I believe may have the potential to put the final nail in the coffin of the anthropogenic global warming hysteria. The work of you and your team is very promising and I cannot wait to see how alarmists will go about to attack this. Bring out the popcorn, as we say. The application of feedback theory in this case is simple, physics-wise elegant, mathematically beautiful, and understandable to a wider audience. I am especially excited about how the equation grasps the whole feedback problem without having to deal with all the impossible little details of trying to distinguish which gas does what and without relying on hopelessly complex computer models. And that is why I think it will stick. I will be following this eagerly in the coming months and years and I am considering going to Porto [on 7-8 September: portoconference2018.org: b there or b2] to catch all the latest from others as well, even though your work is the current crown jewel of the anthropogenic global warming debate so far.”

But global temperature is rising as originally predicted.

No, it isn’t –

Our prediction is close to reality: official climatology’s predictions are far out

But you have averaged the two global-temperature datasets that show the least global warming.

Yes, we have. The other three longest-standing datasets – RSS, NOAA and GISS – have all been tampered with to such an extent that they are no longer reliable. They are a waste of taxpayers’ money. We consider the UAH and HadCRUT4 datasets to be less unreliable. IPCC uses the HadCRUT dataset as its normative record. Our result explains why the pause of 18 years 9 months in global warming occurred. Because the underlying anthropogenic warming rate is so small, when natural processes act to reduce warming it is possible for long periods without warming to occur. NOAA’s State of the Climate report in 2008 admitted that if there were no warming for 15 years or more the discrepancy between the models and reality would be significant. It is indeed significant, and now we know why it occurs.

But …

But me no buts. Here’s the end of the global warming scam in a single slide –

The tumult and the shouting dies: The captains and the kings depart …

Lo, all their pomp of yesterday Is one with Nineveh and Tyre

Finally an explanation that agrees with my four years of engineering math and 20+ years of designing and developing process control systems, and aligning, calibrating and tuning those systems for nuclear power plants. And my knowledge of process control theory was good enough that the final system needed minimal tuning during the startup phase of the plant. From all of the false theories I have read about this feedback/forcing BS associated with climate change, I was beginning to think I needed a refresher course in process control systems. I do not think any man has designed a process control system to date that can keep a nuclear power plant, spacecraft, airplane, or even an autonomous automobile as stable as the present inherent climate control system for global temperature.

usurbrain Yes Earth’s climate is operating well.

God originally stated it to be “very good”. Genesis 1:31

https://biblehub.com/genesis/1-31.htm

Earth’s climate has not always been so hospitable to life over the past four billion years. There have been many mass extinctions, and Snowball Earth episodes lasting hundreds of millions of years, in which average global temperature may have dropped to around -50 degrees C.

Extinction is a NATURAL part of the system. Nothing lasts for ever and the natural demise of one genus creates space for another. Irrespective of the actual cause of the demise. Time to send the Canutes back to school because they obviously were not paying attention the first time.

The problem with this lies here:

The so-called reference temperature, whatever the Earth would be without GHG feedbacks, is unknowable. The only way is to guesstimate what the Earth’s albedo would be in that state or know how strong the GHG warming is and work backwards.

It’s circular logic.

Why is it not possible to obtain a fairly good approximation from the temperature of Earth’s Moon and other moons? Especially when I hear celebrated “Scientists” claiming they can determine if there are humanoids on newly discovered planets from the presence of GHG in their atmosphere.

Please state which scientists have claimed that they can determine the presence of “humanoids” on other planets based upon GHGs in their atmospheres. This claim has escaped my notice.

Thanks!

On a Discovery Channel documentary about the discovery of planets a few years back (not more than 4 or 5). His logic absolutely flabbergasted me, as I was responsible for calibrating instruments to NBS-traceable requirements while in the military and understood the impossibility of this. His name and university were in the credits. I pulled up the university web page and wrote him a respectful letter asking how this was possible, given that you are dealing with single-digit parts per million in a device that does not have that accuracy, and all I got back was gobbledygook professor-speak, an I-am-smarter-than-you lecture. Millions have seen it, so millions believe it. I do not remember the title, only that it dealt with the discovery of exoplanets early on, when it was proven and verified.

Thanks. Couldn’t find it on YouTube.

Yes, I’m still in the evil clutches of Alphabet.

In response to Usurbrain, we have used a nifty wrinkle in geometric number theory (the spherical-surface areas of equialtitudinal spherical segments are equal) to derive the mean dayside temperature of the Moon: it is around 306 K. On the nightside, it is about 94 K. Mean lunar temperature is about 200 K, not the 270 K imagined by NASA, which has failed to allow for Hoelder’s inequalities between integrals.

Using a similar technique on Earth, the mean dayside temperature is 275 K and the nightside temperature around 246 K (based on a study of Earthlike aquaplanets by Merlis+ (2010), after allowing for the lower albedo in Merlis (0.38 against Lacis’ 0.42)). Thus, the mean terrestrial surface temperature in the absence of noncondensing greenhouse gases is about 260.5 K, and the reference temperature after adding 11.5 K of pre-industrial non-condensing greenhouse gases is about 272 K.

This would imply a system-gain factor of 287.55 / 271.95, or 1.06, implying Charney sensitivity 1.10 K. We noted this result in passing in our paper, but adhered to climatology’s erroneous method that does not allow for Hoelder’s inequalities, so as to obtain the system-gain factor 1.13, implying Charney sensitivity 1.17 K. Hope this helps.
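The chain of arithmetic in this reply can be laid out as a short sketch. It uses the rounded figures quoted in the comment (275 K, 246 K, 11.5 K, 287.55 K, 1.04 K), so the reference temperature comes out at 272 K rather than the 271.95 K quoted above; the variable names are chosen here for illustration.

```python
# Rounded figures quoted in the comment above.
dayside_k = 275.0       # mean dayside temperature without non-condensing GHGs, K
nightside_k = 246.0     # mean nightside temperature, K
ghg_warming_k = 11.5    # warming from pre-industrial non-condensing GHGs, K
equilibrium_k = 287.55  # HadCRUT4 equilibrium temperature in 1850, K
ref_sens_k = 1.04       # reference sensitivity to doubled CO2, K

emission_k = (dayside_k + nightside_k) / 2   # simple mean of the two hemispheres
reference_k = emission_k + ghg_warming_k
gain = equilibrium_k / reference_k
charney_k = ref_sens_k * gain

print(f"emission temperature  = {emission_k:.1f} K")
print(f"reference temperature = {reference_k:.1f} K")
print(f"system-gain factor    = {gain:.2f}")
print(f"Charney sensitivity   = {charney_k:.2f} K")
```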

Dear MoB, I greatly appreciate your efforts to de-fuse the climate bomb. Your comment above illustrates my main objection to the foundation of modern climate science. It is founded on several logical fallacies, primarily False Premise and Misplaced Precision.

To use your lunar temperature model as an example, it describes a day side temperature, a night side temperature, and a mean temperature as if these are real and not simply a mathematical abstraction. The mean exists nowhere and equilibrium between day and night exists nowhere.

Likewise the model for the Earth. There is no equilibrium, as the system is continually chasing its tail as it seeks an equilibrium that stays out of reach through the diurnal and seasonal cycles. This is actually perpetual Dis-equilibrium, and it pervades the entire atmospheric/hydrospheric system. I therefore propose in the spirit of truth in advertising that the term Equilibrium Temperature be henceforth changed to Disequilibrium Temperature.

It is also patently obvious after the Climategate capers that the HadCRUT database is absolutely corrupted by errors. It follows that the Reference Temperature is actually a wild-ass-guess. Thus the derivation of the term Climathemajics.

This from a biologist who gets my own local temperature readings from an old-fashioned mercury/glass high/low recording thermometer calibrated in two degrees of accuracy, not tenths or hundredths, and understands that a reading of 55 1/2 degrees is actually translated from: “Hmm, that looks like less than 56 and way more than 54, so let’s say, oh, call it 55 1/2.”

Thanks for so publicly fighting the good fight.

In response to Richard G, there is so much wrong with official climatology’s methods and data that one hardly knows where to start. However, we took the simple approach of accepting ad argumentum all of official climatology except what we could disprove, and then demonstrating what we could disprove.

The influence of the sunshine, once it is taken into account in feedback calculations, is such as to overwhelm small differences in estimates of surface temperature etc. The value of our method is that, within reasonable limits, one can vary all the input parameters without much affecting the final answer.

I’m really trying to understand the background of your calculations, and why you believe them to be better than others.

So you are deriving the lunar temperature through mathematics, and believe that is a better estimate than measuring it? Do you take into account the temperature of the craters?

You use a single study of “Earthlike aquaplanets,” compensating for nothing but different albedo, to estimate pre-industrial terrestrial surface temperature? Am I understanding that correctly?

Can you define Hoelder’s inequalities in layman’s terms, and why it should be applied to estimation of climate sensitivity?

Why do you call it “Charney sensitivity”? Was he not the one who first came up with the 1.5-4 C range – and shouldn’t THAT be called the “Charney sensitivity,” if anything?

Where in your calculations of system gain do you account for the heat absorbed by the oceans?

Thank you in advance for answering my questions.

Ms Silber asks several interesting questions.

First, the lunar temperature. Unfortunately, we were unable to find anything in the Lunar Diviner mission’s papers that stated the lunar global mean surface temperature. Accordingly, we were compelled to compute it. On the dayside, we performed latitudinal calculations using the fundamental equation of radiative transfer and integrated, to find a mean temperature of 306 K. On the nightside, we used the Lunar Diviner data (for the nightside temperature varies little, and it is relatively easy to deduce the mean nightside temperature). That was about 94 K. So the lunar mean surface temperature is about 200 K.

We did a similar dayside calculation for the Earth, again calculating the mean temperature at each latitude and then integrating, using a useful device from geometric number theory that reduced the problem from a double integral (lat. and long.) to a single integral (for the spherical-surface areas of equialtitudinal spherical segments are equal). We used Merlis only to gain an idea of the nightside temperature, which depends far more on the heat capacity of the first 7 m of the ocean, treated as a slab, than on anything else. On Earth, we found that the emission temperature in the absence of greenhouse gases would not be the 243.25 K obtainable by a single global application of the fundamental equation of radiative transfer but more like 260.4 K. This consideration would reduce the system-gain factor from our 1.13 to about 1.06, in turn reducing Charney sensitivity from 1.17 to 1.10 K. We mentioned this result only in passing, as a consideration worthy of further work and, eventually, of a separate paper.

As to Hoelder’s inequalities between integrals, note that on the Moon a single use of the fundamental equation of radiative transfer suggests a lunar mean surface temperature of 270 K. However, it is in fact about 200 K, because the fundamental equation of radiative transfer is a fourth-power relation and the sum of a series of fourth powers differs from the fourth power of the sum of a series.
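For the lunar case, the relation between the two kinds of average can be stated compactly. For any set of n non-negative local temperatures T_i, the power-mean inequality (a consequence of Hoelder's inequality) gives:

```latex
\frac{1}{n}\sum_{i=1}^{n} T_i
\;\le\;
\left(\frac{1}{n}\sum_{i=1}^{n} T_i^{4}\right)^{1/4},
```

with equality only when every T_i is the same. The left-hand side is the plain mean of the temperatures; the right-hand side is the single temperature that would radiate the mean flux. On a body with a very hot dayside and a very cold nightside the gap between the two is large, which is the difference between roughly 200 K and 270 K described here.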

On Earth, owing to the formidable heat capacity of the ocean, the error is in the opposite direction: the temperature correctly calculated as the sum of a series of fourth powers (one for each spherical segment) is greater than the incorrectly-calculated single global value obtained from that equation.

Equilibrium sensitivity to doubled CO2 concentration is known to climatologists as “Charney sensitivity”. It is the standard metric or yardstick in equilibrium-sensitivity studies.

In our system-gain calculation we do not need to make any allowance for any individual feedback. All we need to know is the reference and equilibrium temperature for any chosen date for which respectable data are available. The system-gain factor is then the ratio of the latter to the former.

To allow for the possibility of time-delay occasioned by the heat capacity of the oceans, we performed not one but two calculations – one for 1850 and the other for 2011. The system-gain factor was the same in both years, at just 1.13 (or 1.50 if one uses the delta system-gain equation rather than the absolute-value equation). Therefore, time delay is not making much difference.

I do hope that these answers help.

Monckton of Brenchley

But does this not fall to the “insolated, isolated, insulated flat grey body in space” fallacy?

(A grey flat body is assumed uniformly insolated at a uniform rate in a perfect vacuum, and must lose enough energy from one side of the flat body to come in thermal equilibrium with the inbound radiation.)

Should not the moon be calculated as a (near-uniform) sphere illuminated on one side, rotating every 28 days at the earth’s actual orbit and losing energy from its entire surface?

Yes, thermal near-equilibrium can at best only be assumed if the weight, density, thermal mass and thermal conduction of the first 1 meter of the moon’s surface is approximated/estimated/guessed to be uniform. But the result would be 10 degree bands that can be proved useably correct by the Apollo instruments left on the surface.

If anything, the vacuum of the moon and slow rotation of the spherical surface would make a thermal radiation model of the moon easy for any of the GSM models to work.

In response to Mr Cook, we took ten billion spherical segments on the dayside hemisphere and derived the temperature of each by a calculation based on the zenith angle at the centerline of each segment. Then we took the average. Answer: 306 K. For the nightside, we averaged the Diviner measurements. Answer: 94 K. The mean of the two gives about 200 K.
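A rough sketch of this kind of dayside calculation follows. It is not the team's code: it assumes each segment is in instantaneous radiative equilibrium with the sunlight it receives, unit emissivity, no heat storage, a solar constant of 1361 W/m² and a lunar albedo of 0.11, none of which is stated in the text. It also uses 100,000 segments rather than ten billion so that it runs in a moment.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def dayside_mean_temperature(solar_constant: float, albedo: float,
                             n_segments: int = 100_000) -> float:
    """Mean local radiative-equilibrium temperature over the sunlit hemisphere.

    The hemisphere is cut into n_segments bands of equal height along the
    Sun-body axis. Bands of equal height have equal area, so a plain average
    over the bands is already area-weighted.
    """
    total = 0.0
    for i in range(n_segments):
        mu = (i + 0.5) / n_segments            # cos(zenith angle) at band centre
        absorbed = solar_constant * (1.0 - albedo) * mu
        total += (absorbed / SIGMA) ** 0.25    # emissivity assumed to be 1
    return total / n_segments


# Assumed inputs (not from the text): S = 1361 W/m^2, albedo = 0.11.
mean_dayside = dayside_mean_temperature(1361.0, 0.11)
subsolar = (1361.0 * (1.0 - 0.11) / SIGMA) ** 0.25

print(f"subsolar temperature   = {subsolar:.1f} K")
print(f"mean dayside temperature = {mean_dayside:.1f} K")
```

Under these assumptions the mean works out to four-fifths of the subsolar temperature, because the average of the fourth root of cos(zenith angle) over equal-area bands is 4/5. With the assumed inputs the result lands close to the 306 K quoted above.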

Thank you.

Did you account for the varying albedo? If so, how was the albedo assessed at each point?

See:

Hi Christopher,

I don’t think my comments detract from your overall theory and presentation, but I must again take issue with the day, night and average temperatures of the moon that you are using. In doing so I will try and use clear language so others can perhaps see where this is going in terms of definitions.

The Diviner dataset provides detailed lunar temperature profiles from pole-to-pole and every 1 hour in longitude. I have taken the published Diviner dataset profiles and digitized them. I have then performed an integration over the lunar surface and also looked at running 12-hour windows to find the minimum and maximum hemisphere averages. The results of various ways of computing the average are:

1. Entire lunar surface and naively averaging the temperatures gives 180 K

2. Entire lunar surface averaged as T^4 gives 253 K

3. Entire lunar surface averaged as T^4 and weighted by area gives 270 K

To obtain an “average” which relates to the mean energy flux we must obviously use method (3). This agrees with NASA.

The coldest hemisphere lunar surface average (T^4 with area weights) gives 103 K

The hottest hemisphere lunar surface average (T^4 with area weights) gives 320 K

(The two hemispheres do not overlap in the above calculation – just to be clear!)

The naïve average of the hot and cold hemispheres is (103+320)/2 = 211 K

The T^4 average of these two numbers is 270 K – the same answer as integrating over the whole surface, exactly as we would expect.

These numbers and calculations are not in doubt but perhaps the meaning and utility of them is in dispute. If we want to state a single average temperature that summarises the average flux being radiated by the whole moon at any time the correct answer is 270 K. This is rooted in physics. If we want to state what the average thermometer reading on the surface of the moon is we would be using a number closer to 211 K, or your 200 K.

The T^4*area calculation is the one that relates the physical quantity temperature to the physical quantity energy flux in a meaningful way.
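As a quick check on the arithmetic, the sketch below recomputes the naive and T^4 averages of the two hemisphere figures given above. It assumes only that the two hemispheres have equal area, so equal weights apply; the function names are illustrative.

```python
def naive_mean(temps_k):
    """Plain arithmetic mean of temperatures in kelvin."""
    return sum(temps_k) / len(temps_k)


def flux_mean(temps_k, weights=None):
    """Temperature whose blackbody flux equals the weighted mean flux:
    the fourth root of the weighted mean of T^4."""
    if weights is None:
        weights = [1.0] * len(temps_k)
    total = sum(weights)
    mean_t4 = sum(w * t ** 4 for w, t in zip(weights, temps_k)) / total
    return mean_t4 ** 0.25


hemispheres_k = [103.0, 320.0]   # coldest and hottest hemisphere averages

naive_k = naive_mean(hemispheres_k)
flux_k = flux_mean(hemispheres_k)
print(f"naive mean = {naive_k:.1f} K, T^4 mean = {flux_k:.1f} K")
```

The naive mean is 211.5 K and the T^4 mean rounds to 270 K, matching the numbers above.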

Hope that helps everyone. I don’t think this is really of any direct relevance to your theory.

For others wanting to know about models with different physical properties of the atmosphere excluded I would suggest they refer back to Manabe & Strickler (1964) “Thermal Equilibrium of the Atmosphere with a Convective Adjustment”, J. Atmospheric Sci. 21 pp 361-385. There you will discover that the temperature with GHG but without weather would be +40 – +60 degC, contradicting the GHG = +33 meme completely. It’s the big effects of evaporation and convection that keep us cool.

Regards,

TS

TS, I fear that both Lord Monckton and you (along with Manabe & Strickler) have greatly underestimated the complexity of estimating the earth’s blackbody temperature. See here for Dr. Robert Brown’s clear examination of the problems involved in that calculation.

w.

Mr Eschenbach is quite right that the problem of deriving an emission temperature in the absence (and still more in the presence) of greenhouse gases is not easy. Our approach has been to accept official climatology’s method ad argumentum, but to note that if one performs one of the earliest steps recommended by the ever-interesting Dr Brown – namely a T^4 calculation at each point on the sphere – one comes closer to the true value both on the Moon (where the mean temperature is some 70 K less than using official climatology’s single, naive calculation) and on the Earth (where the error appears to be 10-20 K in the opposite direction). Our conclusion is that further work needs to be done on this question, which has little implication for equilibrium sensitivity obtained by our method (it reduces our 1.17 K mid-range estimate to about 1.10 K) but probably has major implications for official climatology’s method.

In the case of the Earth, the problem is more acute since the Earth is nothing like a blackbody with all the thermal inertia and lags.

Further, most energy is not absorbed at the surface. In the oceans, which cover about 70% of the planet, energy is absorbed several metres below the surface, and some of it is carried to depth by oceanic overturning. In the atmosphere, energy is absorbed at cloud height and along the height profile of water vapour. In tropical rain forests little sunlight reaches the surface: solar energy is absorbed at canopy height and is then converted, powering photosynthesis and tree growth.

Yet further, energy absorbed in one place is often reradiated in another place (e.g., as a consequence of oceanic currents), and there is the problem caused by latent energy in evaporation, ice melt etc.

This is a 3D system where the incoming watts are simply not absorbed at one uniform height.

Mr Verney rightly points out some of the numerous complexities in reaching an estimate of global mean emission temperature. However, these complexities have a very small influence compared with the very large influence of official climatology’s failure to make allowance for Hoelder’s inequalities between integrals in arriving at its estimates of emission temperature.
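For reference, the inequality at issue can be written down for the surface temperature field. The notation here is an assumption made for illustration: T is the local temperature and S the surface over which it is averaged. The area-mean temperature never exceeds the temperature derived from the area-mean of T^4, with equality only when T is uniform:

```latex
\frac{1}{S}\int_S T \,\mathrm{d}S
\;\le\;
\left( \frac{1}{S}\int_S T^{4} \,\mathrm{d}S \right)^{1/4}
```

The gap between the two sides grows with the spread of temperatures across the surface, which is why it is large on the Moon.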

In response to Greg, the values for albedo and reference temperature in the absence of non-condensing greenhouse gases were derived from a GCM by Lacis+ (2010). Assuming today’s insolation and Lacis’ albedo, the emission temperature in the absence of those gases was derived using the fundamental equation of radiative transfer in the usual way.
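A minimal sketch of that kind of calculation follows, assuming that "the usual way" means the standard Stefan-Boltzmann balance between absorbed sunlight and emitted radiation. The insolation of 1361 W/m² and the albedo of 0.3 are textbook illustrations, not the values taken from Lacis; the second function simply inverts the balance to show what albedo a given emission temperature would imply under the same assumed insolation.

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4


def emission_temperature(insolation: float, albedo: float,
                         emissivity: float = 1.0) -> float:
    """Emission temperature from S(1 - albedo)/4 = emissivity * sigma * T^4."""
    absorbed = insolation * (1.0 - albedo) / 4.0
    return (absorbed / (emissivity * SIGMA)) ** 0.25


def implied_albedo(insolation: float, temperature_k: float,
                   emissivity: float = 1.0) -> float:
    """Albedo that yields the given emission temperature (inverse of the above)."""
    emitted = emissivity * SIGMA * temperature_k ** 4
    return 1.0 - 4.0 * emitted / insolation


ASSUMED_INSOLATION = 1361.0   # W m^-2, illustrative
TEXTBOOK_ALBEDO = 0.3         # illustrative, not Lacis' value

textbook_k = emission_temperature(ASSUMED_INSOLATION, TEXTBOOK_ALBEDO)
albedo_for_243 = implied_albedo(ASSUMED_INSOLATION, 243.25)
print(f"T = {textbook_k:.1f} K; albedo implied by 243.25 K = {albedo_for_243:.3f}")
```

A higher albedo gives a lower emission temperature, which is why a value well below the familiar 255 K can arise without any change in insolation.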

In practice, it makes very little difference what the reference temperature is: it can vary quite widely without much influencing equilibrium sensitivities.

Why do you use Lacis+ GCM, rather than another?

“In practice, it makes very little difference what the reference temperature is: it can vary quite widely without much influencing equilibrium sensitivities.”

What does “quite widely” mean here? Seems strange that your calculations are quite insensitive to actual conditions.

How did you pick the years (just two!) to estimate system gain?

“Lacis misattributed to the non-condensing greenhouse gases the large feedback response to the emission temperature from the Sun.”

It seems to me that once you bring the Sun into the equation, you are creating an open loop. The Sun’s emission is independent of any feedbacks, and that invalidates control theory.

If you are looking at the top-of-atmosphere energy balance, the input is the sun’s energy, and the output is the radiation from the planet into space. This cannot be described by control theory.

If you are looking at the temperature on the surface of the Earth, the actual emission temperature of the Sun is to some extent irrelevant since its energy is greatly modified by the time it hits the surface, and is dependent on some of the same controls that come into play in the feedbacks (clouds, albedo, etc.).

Where am I wrong here? Really, I want to know and understand, and I’d appreciate it if any response is in layman’s terms and refers to actual climate parameters rather than engineering/electronics models.

Ms Silber asks some sensible questions. I am happy to answer them.

We used Lacis+ 2010 as our starting point at the suggestion of Dr Mojib Latif, whom I had the pleasure of meeting at a climate conference organized by the City Government of Moscow last year. The virtue of using Lacis is that the co-authors are known to take a rather extreme position on global warming: few, therefore, would argue with their findings, though we are able to demonstrate that the feedback factor they imagine is absurdly high.

The reason why reference temperature R(1) may vary quite widely is that the influence of the Sun overwhelms the comparatively small influence from greenhouse gases. We allowed R(0), the emission temperature in the absence of greenhouse gases, to vary by 5% up or down on Lacis’ 243.25 K. We then conducted a 30,000-trial Monte Carlo simulation and derived the uncertainty interval of about 0.08 K either side of our mid-range estimate of 1.17 K equilibrium sensitivity to doubled CO2.

We selected 1850 as the start-point for our calculations because there had been little if any anthropogenic influence before that date and it was at that date that the first global-temperature measurement was conducted, albeit with an uncertainty of some 0.35 K either side of the mid-range estimate.

We selected 2011 as the end-point because that was the year to which IPCC and its contributors updated their data and methods in time for the most recent Assessment Report.

However, we also conducted an empirical campaign based on ten separate estimates of net anthropogenic forcing to various dates, four from IPCC’s reports and six from mainstream, peer-reviewed sources. In all cases the equilibrium sensitivity to doubled CO2 was found to be 1.17 K.

It is incorrect to state that including the emission temperature “creates an open loop”. It does no such thing. Build a test rig (or, if engineering is not your thing, just set up the Bode system-gain equation). Set the gain block to unity. Set the input signal to represent the 243.25 K emission temperature. Set the feedback block to any nonzero value. Measure the output. It is not 243.25 K. The entire difference between the input and output signal, where the gain block has been set to unity, must come from feedback. Case closed.
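For those without a test rig to hand, the thought experiment can be sketched numerically. This is an idealized loop, not a climate model: the output is fed back through a feedback block and summed with the input until it settles, and the feedback fraction of 0.1 is an arbitrary nonzero value chosen for illustration.

```python
def closed_loop_output(input_signal: float, gain: float = 1.0,
                       feedback_fraction: float = 0.1,
                       tol: float = 1e-9, max_iter: int = 10000) -> float:
    """Iterate output = gain * (input + feedback_fraction * output) to a fixed point.

    For |gain * feedback_fraction| < 1 this converges to the closed-form
    result input * gain / (1 - gain * feedback_fraction).
    """
    if abs(gain * feedback_fraction) >= 1.0:
        raise ValueError("loop gain must be below unity for the loop to settle")
    output = gain * input_signal
    for _ in range(max_iter):
        new_output = gain * (input_signal + feedback_fraction * output)
        if abs(new_output - output) < tol:
            return new_output
        output = new_output
    raise RuntimeError("loop did not converge")


EMISSION_TEMPERATURE_K = 243.25   # input signal from the text
FEEDBACK_FRACTION = 0.1           # arbitrary nonzero value (assumption)

settled_k = closed_loop_output(EMISSION_TEMPERATURE_K, gain=1.0,
                               feedback_fraction=FEEDBACK_FRACTION)
closed_form_k = EMISSION_TEMPERATURE_K / (1.0 - FEEDBACK_FRACTION)
print(f"output = {settled_k:.2f} K, closed form = {closed_form_k:.2f} K")
```

With the gain block at unity, the settled output exceeds the 243.25 K input, and the whole of the difference comes from the feedback block.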

If Ms Silber thinks control theory is inapplicable to climate, she makes our case for us a fortiori: for in that event there is no basis for imagining that temperature feedbacks operate. Control theory is feedback theory.

As to the complications caused by the multiplicity of forcings and feedbacks, including water vapor, albedo and cloud feedbacks, our black-box method does not need to take them individually into account. All we need to know is the reference and equilibrium temperature for a given year, whereupon the system-gain factor is simply the ratio of the latter to the former.

I do hope these answers help.

Monckton of Brenchley said:

What? Clear proof of Russian climate collusion, notify Robert Mueller’s team immediately!

w.

Guilty as charged! Where do I collect my money?

I’m not convinced that feedback analysis is appropriate in the first place.

What CM et al have done is to accept, for sake of argument, Hansen’s feedback analysis but to demand that it be done correctly. Absolutely brilliant.

The only way CO2 will produce catastrophic warming is if there is positive feedback. Take that away, as CM et al have done and there is no Catastrophic Anthropogenic Global Warming (CAGW).

cB,

Pretty sure the feedback is still considered positive…it’s just much much smaller than “consensus” science thinks…

rip

… much much smaller … by around an order of magnitude. 0.08 vs 0.67 or 0.75 link

Given the lack of precision and accuracy of the data, the feedback might as well be zero.

Hahn. Agreed. Although, upon consideration, CM’s analysis seems to rest on all other things being equal. Just something to keep in mind, I guess. Not that I truly think insolation changes or orbital fluxes would impact this materially, but just sayin.

rip

Ripshin makes the fair point that we are not stating that we know so much about the climate that we are sure that Charney sensitivity is 1.17 K. We are saying that, accepting ad argumentum all of official climatology except what we can prove to be in error, and after correcting the error we can prove, Charney sensitivity is 1.17 K plus or minus 0.08 K, to 95.4% certainty.

Understood and agreed!

And, I’ll note two things in passing.

First, I’m exceedingly grateful that you are narrow in your conclusions. This is how science (and, as an aside, the law) should be conducted. Conclusions (and verdicts) should be narrowly constructed, giving due consideration to the limitations that produced them (whether, in the case of science it was an experiment, or in the case of law it was the trial/arguments). Broad, sweeping conclusions are almost always filled with errors and fallacies. Your careful description of your conclusion, taking in the caveats, is much appreciated. That a significant portion of “research” of an entire branch of science is, in one fell stroke, invalidated by such a simple and narrow conclusion such as yours is as much a kudos to you and your team as it is a (or should be) a censure to the ringleaders who’ve perpetuated this fallacy. [For some reason, I really wanted to write “farcical aquatic ceremony” there instead of “fallacy”: https://youtu.be/dt-a6sovg_k?t=1m41s ]

Secondly, I found your YouTube presentation to be quite charming. You did a nice job, but I have to be frank here: I’m not sure I totally bought the cowboy hat and bolo combo. Once you started speaking, I still knew you were a villain (https://www.thecut.com/2017/01/why-so-many-movie-villains-have-british-accents.html).

At any rate, thanks again for keeping our community here updated and for the constant engagement to answer questions and comments.

Sincerely,

Brian Lindauer (rip)

Mr Lindauer’s comments are most kind and helpful. He has entirely understood both the limitations and (precisely because of the limitations) the scope and power of our result.

As to the Stetson with its associated gear, that was presented to me by the Republican Party of Montana as a thank-you for making the keynote speech at a fund-raiser for Mr Trump before the Presidential election. I wore it at Camp Constitution because the sunlight was very bright, and it provides better shade even than the excellent Stetson leather baseball cap that I bought in Cannes some years ago, and I have recently had a couple of operations on my eyes, which are more sensitive than usual at present. Also, the schoolkids who were my audience liked it. It is, of course, incongruous when talking of scientific matters, but I shall be giving a highly-focused 20-minute presentation on our result at a high-level scientific conference in Porto next month. That will be professionally filmed and posted up on YouTube, and I shall be soberly suited and looking serious.

Math tells me that it has to exceed all Negative Feedback to go into runaway warming. Do not think there is enough for that and geological history also shows no runaway. With levels of CO2 at 7,000 PPM the earth’s temperature was only 15 °C warmer than now. Thus there is a negative feedback. My guess is H2O.

I believe you are right. The water cycle is a net negative feedback. We live in a swamp cooler atmosphere.

Many thanks to Commiebob for his comments. It does seem clear from our analysis that net-positive feedback is acting, but that the effect is small.

Always been my position. WHERE is this highly positive feedback we hear of re CO2 – H2O? It seems to me ‘they’ recognised there’s a trifling amount of human CO2 and any GHE it produces (?) is overwhelmed by natural H2O, so they had to shoe-horn in an ‘explanation’ for it. I’ve never once bought into it.

I am very grateful to usurbrain for his kind comment.

If anyone would like a simple account for high-school students, go to:

Monckton of Brenchley

Chris, that blew me away.

Whilst I barely understood a word, nor do I suspect did the attendees, I understand why you had to go into the detail you did, for the benefit of your sceptics.

Nor should there be any doubt, the sceptics’ boot is now on the other foot.

We ‘sceptics’ are in the ascendancy and are rapidly becoming the mainstream of climate change opinion. It is a bull market for us, invest!

No one believes in the concept of Catastrophic Anthropogenic Climate Change any longer other than the deluded hardliners who are being exposed as totalitarian elitists, determined to use CAGW to further their despicable ends, change the political world order and impose global governance.

I’ll write to the Swiss Embassy in the UK irrespective of my late submission and I await their reply with interest.

And when I retire back to Scotland in the next 4 or 5 years, I will turn up on your doorstep with a bottle of our finest to toast your good health, even if you’re not there.

Lang may yer lum reek Chris.

Thank you,

HotScot.

Most grateful to HotScot for his very kind comment, and I look forward to sharing a dram with him one day. The math was a bit too much for the high-school students, but the value of spelling it out is that the far larger audience on YouTube can watch the presentation and get some idea of the actually quite simple result.

If we are right, this is the end of the climate scare, which is no doubt why the IPCC has not complied with its own error-reporting protocol to the extent of acknowledging my report of its error.

‘If we are right, this is the end of the climate scare,’ Sad truth, it won’t be. As long as some people want Governance X, CO2 will be the villain.

Well, I am not a quitter. If we have succeeded in demonstrating a major error in official climatology’s math, and if we are right that in consequence of that error the equilibrium sensitivity to doubled CO2 is of order 1.2 K rather than 3.4 K, then that is indeed the end of the scare. One should not underestimate the power of a mathematical proof.

I expect we will not see the final end of the current climate hysteria until after they roll out the next hysteria to blame on modern industry and use as an excuse for a power grab.

Sad, but true. They have already gone from “global cooling” to “global warming,” in BOTH cases blaming human industry and in BOTH cases proposing the same “solution” to the non-existent “problem” – limits/controls on energy use. The fact is what they’re after has always been control of energy use, through which they can gain control of *everything.*

The True Believers have stated their positions so forcefully, for so long, that they are unable to reverse themselves. They will take their positions to the grave. How could they stand the humiliation of admitting they were wrong? Their brains won’t allow the possibility.

Steve O is right that the true-believers in the New Religion – or, rather, the New Superstition – are not going to be willing to abandon the Party Line without a struggle. However, our result does make it quite plain that equilibrium sensitivity is between one-third and one-half of their mid-range predictions – enough of a reduction to bring the global-warming scare to an end.

The first stage will be to see whether we can get our paper past peer review. There will be a lot of snapping and snarling by true-believing reviewers – indeed, there already has been – but, if the generally feeble opposition to our result from the true-believers here is anything to go by, there is nothing much for us to worry about.

Once our paper has been peer-reviewed and published, it will be up to the wider scientific establishment to see if it can find any significant holes in our argument. That may prove a great deal more difficult than some of them may think.

LMOB, I have much faith in the work of you and your team, but unfortunately little faith that the “journal” gatekeepers will allow your work to be published, because they prefer to refuse to publish anything that would kill the “golden goose.”

In response to AGW is not Science, we know it will be difficult to persuade the journals that the game is up and the scare is over, but there will come a point where, unless they can produce valid reasons to reject our actually quite simple argument, they will have to publish or face prosecution for fraud.

You and me both Scottie!

I’m quite partial to older versions of Bunnahabhain.

“Likewise, Science has as its end and object the truth in the physical world…Truth is the objective of science, and objective Truth is the objective of Science.”

Wonderful!

Thank you, sycomputing, for having said how much you enjoyed my little excursus into the philosophy of science and of religion. For what is the scientist but a seeker after truth, as al-Haytham used to say.

“I do not think any man has designed a process control system to date that can keep a Nuclear power plant, Spacecraft, Airplane, even autonomous automobile as stable as the present inherent climate control system for global temperature”

With respect to your experience with control systems, the climate is very stable simply because of the huge thermal mass of the Oceans (caused by the huge volume of the Oceans).

No feedback(s) necessary (or present). It takes a very long time for the temperature of the Ocean to change, and then the other things that respond faster (CO2, polar ice) simply go along for the ride.

Cheers, KevinK.

KevinK appears to imagine that there are no feedbacks present in the climate. However, it is readily demonstrable that there are. It has been demonstrated. A mere assertion to the contrary, unsupported by any evidence, does not constitute an effective refutation.

I understand very little of this, but that prediction-observation -graph looks a bit dishonest to me, seeing how it misses the last 6 years.

but it doesn’t miss all the years the IPCC, models, etc were wrong…and that’s the point

Not dishonest. Just reusing an old graphic.

Extending the graph to July 2018 would only slightly raise the angle of the blue line. It still wouldn’t make it up to the lower MoE line, ie into the IPCC’s yellow prediction zone.

Theo is correct. It is only if one uses the much-tampered-with GISS data to 2018 that the trend-line barely makes it into the very bottom of IPCC’s prediction region.

The 1998 El Nino raised the global average temperature above the model predictions, the 2015/16 El Nino raised it to the average of the models (and they cheered that they were accurately modelling global average temp), but since then it has cooled whereas the model predictions continue to warm. They will be lucky if the next major El Nino reaches their lowest forecast models.

Mr Turner makes an excellent point. It does seem clear that a large fraction of the observed warming in most datasets arises from adjustments (whether legitimate or otherwise).

In response to Meh, we shall of course update our analysis once up-to-date data from IPCC are to hand: but we chose 2011 as our end date because that was the date to which IPCC had derived its predictions and data.

However, we also ran an empirical campaign studying ten distinct estimates of net anthropogenic forcing over various periods, together with the observed industrial-era warming since 1850 for each period. In every case, the Charney sensitivity was found to be 1.17 K.

Dishonest?

Meh did something magical happen in the last six years?

http://www.drroyspencer.com/wp-content/uploads/UAH_LT_1979_thru_February_2018_v6.jpg

If there was anything ‘dishonest’ about 1850-2011 this thread would have gone into alarmist meltdown.

“I understand very little of this, but that prediction-observation -graph looks a bit dishonest to me”

Omission of recent warming is just one of the problems. The FAR century trend was, as usual, based on scenarios. Naturally this plot uses only Scenario A, which is for the highest estimate of GHG increase, which is not what happened 1990-2011. The scenarios are similar to Hansen’s. I have plotted below here the graph with data to date, proper surface data as postulated by IPCC (and TLT for those who like that sort of thing), all three FAR scenario century trends, and the MoB green line.

Omission of 2011-18 means not only omission of warming but also of cooling.

Surely you’ve noticed that the world has cooled since the totally natural warming associated with the super El Nino of 2015-16.

Mr Stokes continues to quibble. Since there have been no reductions in the rate of CO2 concentration growth, it is the business-as-usual scenario that is relevant. On that scenario, the mid-range prediction of medium-term warming made by IPCC in 1990 was 2.8 K or 3.3 K, depending on which version of the medium-term prediction one relies upon. I chose the lesser of the two, so as to be kind to IPCC.

Mr Stokes maintains, disingenuously, that the forcings imagined by IPCC in 1990 have not come to pass. They have, however, but IPCC, realizing that they were not producing the desired warming rate, introduced a very large fudge-factor in the form of the negative aerosol forcings.

and this is what happens MoB when a scholar attempts to discuss logic with a fanatic: they will resort to obfuscation and self-deception to insulate their ego. If we plummeted into another LIA in the next ten years… Mr. Stokes would still blame CO2.

Thanks again sir, you rock!

Nick, you can continue kicking them

“and this is what happens MoB when a scholar attempts to discuss logic”

A scholar would at least tell you that there were scenarios involved, and that he was choosing the most extreme in terms of GHG growth. And then give some positive justification as to why that choice was justified by the events that unfolded. MoB has given no detail about the scenarios at all.

The accident-prone Mr Stokes should perhaps check to see whether the emissions growth to 2011 was below or above the IPCC’s business-as-usual scenario in 1990. Hint: it was above. IPCC’s prediction, therefore, was way off beam. It has realized this and has approximately halved its medium-term prediction since then – yet, unaccountably and inconsistently, it has left its longer-term predictions unaltered.

Monckton of Brenchley,

I seem to remember a few years back you were claiming that the increase in CO2 was well below what the IPCC predicted. Have you changed your mind since then?

As the ever-tiresome and unconstructive Bellhop will have realized by now, in climatology it is necessary to adapt one’s position as the data and the science change. If Bellman were to provide a reference to what I said, for I do not recollect having said any such thing, I should be able to recall the circumstances and provide a more detailed answer.

From February 2009,

“It is important to draw the distinction between the increase in CO2 emission, which has been at the high end of the IPCC’s projections, and the corresponding increase in CO2 concentration, which has recently been very near linear, and is running well below the least of the exponential rates of increase projected by the IPCC.

On the current, linear observed trend, CO2 concentration in 2100 will be just 575 ppmv (IPCC central estimate 836 ppmv), requiring the IPCC’s central projection of temperature increase to 2100 to be halved from 3.9 to a harmless 1.9 C°.”

Global Warming is Not Happening

At the hearing of March 25 2009: “Carbon dioxide is accumulating in the air at less than half the rate that the United Nations had imagined. This century we may warm the world by just half a Fahrenheit degree, if that.”

Excellent. The CO2 concentration is still heading for about 575 ppmv by 2100, and that alone requires IPCC’s prediction of global warming to be reduced. The emissions, however, are – like it or not – above the business-as-usual scenario in IPCC (1990). The IPCC is, therefore, wrong on the following counts:

1. Despite decades of rhetoric and annual bilious climate conferences, IPCC, UNFCCC, UN et hoc genus omne have utterly failed in their primary mission of bullying the West into making heavy enough cuts in emissions to bring the emissions growth rate even down to its high-end or business-as-usual case.

2. Notwithstanding that emissions are running above the business-as-usual scenario envisioned by IPCC in 1990, CO2 concentrations are – as I had said they would – running at well below IPCC’s then mid-range estimate.

3. As our present result shows, equilibrium sensitivity is approximately one-half to one-third of IPCC’s mid-range estimate.

Now, put these telling facts together and it is indeed perfectly possible that the anthropogenic component in the global warming of the 21st century will be only 0.5 K. However, our present result concentrates solely on the question of equilibrium sensitivity to doubled CO2. Bearing in mind that consideration alone, we should expect the anthropogenic component in global warming this century to be of order 1.2 K.

However, if one were to bear in mind that IPCC was also wrong about the relationship between emissions and concentrations, one might well conclude that the anthropogenic component in 21st-century warming will be as little as 0.5 K. But that step, though relevant to my testimony before Congress, was not relevant to our present paper, which is narrowly focused.

“Excellent. The CO2 concentration is still heading for about 575 ppmv by 2100, and that alone requires IPCC’s prediction of global warming to be reduced.”

I’m probably missing something here, but I thought that was the whole point of Nick Stokes argument. CO2 is not rising in accord with the original IPCC business as usual scenario, and therefore you would not expect as much warming as they predicted.

“Now, put these telling facts together and it is indeed perfectly possible that the anthropogenic component in the global warming of the 21st century will be only 0.5 K.”

Yet you accept temperatures are currently rising three times faster than that.

Also, you predicted 0.5°F, not K – though a few minutes later you suggest it could be as much as 2°F.

Bellman continues to be deliberately obtuse. The CO2 emissions, and those from other greenhouse gases, are rising at somewhat above the IPCC’s business-as-usual rate as predicted in 1990. Yet the concentration is not rising as fast as IPCC had predicted. This is one of IPCC’s many mistakes.

However, our paper concentrates only on one mistake: the erroneous definition of “temperature feedback” in IPCC’s reports.

At present, temperatures are rising at 1.6 K/century equivalent on the HadCRUT dataset, less on the RSS dataset and more on the others. However, there has recently been a large el Nino, a naturally-occurring event, which has pushed up the warming rate. Before the el Nino, there had been no warming at all on the satellite datasets for 18 years 9 months, and none on the terrestrial datasets for about 15 years until they were tampered with (for whatever reason) in a fashion calculated to raise the apparent warming rate of recent decades compared with the original measurements.

Since most of the current century has yet to happen, it is interesting that Bellman – who perhaps lacks the statistical knowledge to understand that shorter-term trends tend to fluctuate more than longer-term trends – assumes, on no evidence, that the current rate of warming will persist throughout the century.

As I have already explained twice (and one does understand that Bellman is calculatedly slow on the uptake, for it is paid to be so), it remains possible that the rate of warming this century will be as little as 0.5 K. However, for present purposes our argument takes no account of IPCC’s predictive failure that led it to assume a far greater concentration of greenhouse gases as a function of the emission rate than has actually occurred. That is why our paper, which is confined to the question how much global warming will occur after temperature feedbacks have acted, finds that equilibrium sensitivity to doubled CO2 will be 1.2 K.

“Since most of the current century has yet to happen, it is interesting that Bellman – who perhaps lacks the statistical knowledge to understand that shorter-term trends tend to fluctuate more than longer-term trends – assumes, on no evidence, that the current rate of warming will persist throughout the century.”

That’s patent nonsense. I’ve argued here on numerous occasions that short term trends will fluctuate and cannot be used to predict future changes.

“it remains possible that the rate of warming this century will be as little as 0.5 K.”

Anything’s possible. We might plunge into a new ice-age by the end of the century, or warming might accelerate in line with the 1990 IPCC estimates. The problem is you claim the period from 1990 – 2011 verifies your prediction of 1.2°C / century. If it does that it doesn’t provide evidence for your 0.5°C warming, let alone your 0.5°F projections.

The furtively pseudonymous coward Bellman continues, pointlessly, to pick nits and to lie. It is contemptible. And, when caught out in one of the lies in which it specializes – for that is what cowards do when they are caught out in their bottomless ignorance time and again – it doubles down with further lies.

For the reasons I have explained, our analysis is confined to quantifying the effect of the error of definition on which official climatology has hitherto foolishly relied. Taking that matter on its own, one would expect about 1.2 K of global warming this century, on the assumption that the net centennial warming from all anthropogenic sources is approximately equivalent to the equilibrium warming in response to doubled CO2.

However, there remains the fact that IPCC’s original business-as-usual prediction of CO2 emissions growth is the track currently being followed by inferred CO2 emissions (see e.g. Le Quere’s annual papers), and yet the CO2 concentration growth is well below IPCC’s original business-as-usual prediction. It is for that reason, combined with official climatology’s error of definition, that I consider it possible that anthropogenic global warming this century may prove to be as little as 0.5 K. However, for the purposes of our present paper, 1.2 K this century is enough. If the warming turns out to be 1.6 K – the current HadCRUT4 trend – then our prediction will still be very much closer to reality than IPCC’s original business-as-usual prediction of about 4 K warming over the 21st century.

If one considers IPCC’s medium-term predictions rather than its centennial predictions, then our 1.2 K would be considerably closer to a centennial warming of 1.6 K than IPCC’s 2.8 K/century equivalent or 3.3 K/century equivalent medium-term business-as-usual predictions.

“it doubles down with further lies.”

It would be a real help if you could quote a specific lie. I’m sure I make mistakes and correct them when pointed out, but to be accused of making non-specific lies makes it impossible for me to respond.

“on the assumption that the net centennial warming from all anthropogenic sources is approximately equivalent to the equilibrium warming in response to doubled CO2.”

And that’s one of my problems: where does that assumption come from? Logically it should depend on factors such as how much CO2 increases. Even if a sensitivity can be translated into a rate of warming over the 21st century, there is no reason to suppose this would be the same over a shorter period.

“However, there remains the fact that IPCC’s original business-as-usual prediction of CO2 emissions growth is the track currently being followed by inferred CO2 emissions (see e.g. Le Quere’s annual papers), and yet the CO2 concentration growth is well below IPCC’s original business-as-usual prediction. It is for that reason, combined with official climatology’s error of definition, that I consider it possible that anthropogenic global warming this century may prove to be as little as 0.5 K.”

I still don’t follow the logic of this. You appear to be confusing predicted rates of warming with climate sensitivity. If your argument is that we may only see 0.5°C warming over the 21st century because there will be less of an increase in CO2 over that period, you are not saying that climate sensitivity is only 0.5°C.

“then our prediction will still be very much closer to reality than IPCC’s original business-as-usual prediction of about 4 K warming over the 21st century.”

I assume that’s a typo, the IPCC predicted 3 °C over the 21st century.

“If one considers IPCC’s medium-term predictions rather than its centennial predictions, then our 1.2 K would be considerably closer to a centennial warming of 1.6 K than IPCC’s 2.8 K/century equivalent or 3.3 K/century equivalent medium-term business-as-usual predictions.”

Again, the IPCC never predicted 3.3 K / century mid-term warming.

But the main issue is that, as you so rightly said elsewhere, you cannot project a short-term warming trend over the next century. Your argument is that if warming over the next century is only 1.6 °C, the IPCC will have been wrong and you will have been less wrong. If.

Here are just a few of Bellman’s lies, uttered from behind its cloak of cowardly anonymity.

1. IPCC predicted 3 K, not 4 K, business-as-usual warming over the 21st century. Yet the business-as-usual diagram quite clearly shows 4 K warming.

2. IPCC never predicted 3.3 K mid-term business-as-usual warming. This lie is repeated twice. Yet IPCC did predict 3.3 K mid-term business-as-usual warming.

3. Bellman, having lied and lied again about IPCC’s predictions, then says it is not interested in IPCC’s predictions. It should at least make some attempt to make its lies self-consistent.

The 4K warming is the warming since pre-industrial times, not the warming over the 21st century.

You can assert they did predict it ad infinitum, it doesn’t make it true. I’ve tried to explain why I think you are wrong. You have ignored what I say and just repeat your claim. The facts are that the IPCC FAR do state a figure of 3.3K / century up to 2030. They do not state there will be 1.35°C warming between 1990 and 2030. They did predict, as a crude estimate, 1°C warming up to 2025. Their graph does not show short term warming at a rate of 3.3°C / century.

If I’m wrong then Lord Monckton only has to point to the passage stating that and I’ll withdraw my assertion, but up to now all he has done is make some suppositions based on unrelated parts of the IPCC report to derive his own figure.

If I said I wasn’t interested in IPCC’s predictions it was in the context of thinking them irrelevant in confirming your predictions. Of course I’m interested in IPCC predictions, I just think their crude predictions from 28 years ago are less interesting than their current predictions.

I am glad that Bellman now concedes, contrary to his previous assertions, that IPCC do state a figure of 3.3 K/century up to 2030: or, rather, they state that there will be 1.8 K warming compared with pre-industrial. Now, there had been 0.45 K warming to 1990, as IPCC knew at the time. Therefore, it was predicting 1.35 K warming from 1990-2030, which is equivalent to at least 3.3 K/century. It is pleasing that we are now agreed on this.
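The arithmetic behind the 3.3 K/century figure in the reply above can be laid out as a short sketch. The inputs (1.8 K above pre-industrial by 2030, and 0.45 K already realized by 1990) are the values stated in that reply and are not independently checked here against the IPCC report; the function name is hypothetical.

```python
def centennial_rate(warming_k: float, start_year: int, end_year: int) -> float:
    """Convert warming over an interval into a K/century-equivalent rate."""
    return warming_k / (end_year - start_year) * 100.0


# Values as stated in the reply (assumed, not re-derived from the IPCC report).
predicted_by_2030_k = 1.8   # warming relative to pre-industrial
realized_by_1990_k = 0.45   # warming already observed by 1990

# Warming left to occur between 1990 and 2030 under those figures.
remaining_k = predicted_by_2030_k - realized_by_1990_k
rate = centennial_rate(remaining_k, 1990, 2030)

print(f"Remaining warming 1990-2030: {remaining_k:.2f} K")
print(f"Equivalent rate: {rate:.3f} K/century")
```

Whether the two inputs may legitimately be combined in this way is exactly the point in dispute in this thread; the sketch only shows that, given them, the rate comes out slightly above 3.3 K/century.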

And Bellman did indeed spend a lot of time citing IPCC’s predictions, in the hope of demonstrating some imagined inconsistency or another in the head posting. Only when it realized that I knew it had misstated IPCC’s position did it suddenly and falsely pretend that it was not, after all, interested in IPCC’s predictions. It is deliberate falsehoods like these that Bellman should work on avoiding, for they undermine whatever case it conceives it is supporting in its equivocations and mendacities here, delivered from behind a coward’s cloak of anonymity.

I’ve answered this below,

https://wattsupwiththat.com/2018/08/15/climatologys-startling-error-of-physics-answers-to-comments/#comment-2433583

but for the record my “concession” is the result of missing the word “not” from the above comment. The offending sentence should read “The facts are that the IPCC FAR do not state a figure of 3.3K / century up to 2030.”

The HadCRU books have also been cooked, but maybe just not extra crisply like the Karlmelized GISS.

Another good point from Theo. If we had confined ourselves to the UAH data, our trend-line would have coincided with the observed trend-line almost exactly.

I fear that this debate is no longer one that can be influenced by science and facts. It has become a religion and a handy dog whistle for politicians looking for an excuse to either bring in some sort of social program or to explain how it was not their neglect that is responsible for their city currently being underwater.

And we have an entire generation that has been indoctrinated at a faith and belief level. It will take a lot more than math and science they don’t understand to convince them. The Great Lakes freezing solid and staying frozen through the summer might not be enough.

Hey, haven’t you heard? The Great Lakes freezing over all summer is EXACTLY what climate models have predicted will happen.

Ask Dr John Holdren

Sadly, you are right, except that there never was a debate, just a shouting match.

The alarmists have done their best to indoctrinate the younger generation but half the population remain unconvinced. Trump has been elected and proved you do not need to kow-tow to the priests of the cult of AGW to get elected.

He has cut off funding to the UN climate green slush fund and hopefully at some stage will live up to his promise to pull the USA out of the Paris “agreement”.

Academics have completely blown their image of fair-minded, objective experts and have mostly come out as dishonest, bigoted political activists. And that, folks, is about all we get for our money.

Peter Schell should not underestimate the power of a formal mathematical demonstration of the actually rather elementary error of physics perpetrated by climatology in recent decades. We have demonstrated that global warming in response to doubled CO2, after correcting the error, will only be about 1.2 K. On any view, that is not enough to be regarded as catastrophic.

No doubt the journals will continue to resist publishing our result. But, in the end, they cannot be seen to participate in a fraud. If we are correct, then our result deserves to be published and, if it is not published, questions will arise. If we are wrong, then at least we tried.

Dear Monckton of Brenchley,

What would the curve look like for CO2 doubling/warming, i.e. from 10 ppm to 20 ppm, 20 ppm to 40 ppm, 50 ppm to 100 ppm, 100 ppm to 200 ppm, 500 ppm to 1000 ppm etc., all other things being equal [which they wouldn’t be but..]

Basically what is the nature of the curve ?

In the lab, it’s a logarithmic curve, with about 1.1 to 1.2 degrees C per doubling. If you start at one ppm, we’re working on the ninth doubling.
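The "ninth doubling" remark can be checked with a base-2 logarithm. This is a minimal sketch: the present-day concentration of roughly 410 ppm is an assumption introduced here for illustration (the comment does not state one), and the function name is hypothetical.

```python
import math


def doublings_since(start_ppm: float, current_ppm: float) -> float:
    """Number of doublings needed to go from start_ppm to current_ppm."""
    return math.log2(current_ppm / start_ppm)


# Assumed present-day CO2 concentration; not a figure from the comment.
ASSUMED_CURRENT_PPM = 410.0

n = doublings_since(1.0, ASSUMED_CURRENT_PPM)
completed = math.floor(n)

print(f"Doublings from 1 ppm to {ASSUMED_CURRENT_PPM:.0f} ppm: {n:.2f}")
print(f"Completed doublings: {completed}; doubling in progress: number {completed + 1}")
```

Under that assumption eight doublings are complete (2^8 = 256 ppm) and the ninth (to 512 ppm) is under way, which matches the comment.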

In the real, complex climate system, with positive and negative feedback effects, it’s still probably not much different from that value. IPCC however imagines that the ECS range is 1.5 to 4.5 degrees C per doubling.

In response to GregK’s excellent question, from 50 ppmv to 1000 ppmv the CO2 feedback curve is approximately logarithmic. The latest value of the CO2 radiative forcing is approximately 5 times the natural logarithm of the proportionate change in CO2 concentration: thus, for every doubling of CO2 concentration within the interval [50, 1000] ppmv, the forcing is 5 ln 2, or 3.466 W/m^2. The product of this value and the current Planck sensitivity parameter 0.299 K/W/m^2 is the reference sensitivity to doubled CO2: i.e., 1.0363 K, which I have rounded to 1.04 K in the head posting. Previous values of the coefficient in the forcing function were 5.35 (Myhre et al., 1998, cited in IPCC, 2001) and 6.3 (IPCC up to 1995).
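The calculation in this reply can be reproduced in a few lines. This is a sketch using only the coefficient (5) and the Planck sensitivity parameter (0.299 K/W/m^2) quoted above; the function and constant names are hypothetical, and the logarithmic form is taken from the reply as valid only within the stated interval of 50 to 1000 ppmv.

```python
import math

FORCING_COEFFICIENT = 5.0   # W/m^2 per unit ln(C1/C0), as quoted in the reply
PLANCK_PARAMETER = 0.299    # K per W/m^2, as quoted in the reply


def co2_forcing(c0_ppmv: float, c1_ppmv: float,
                coefficient: float = FORCING_COEFFICIENT) -> float:
    """Radiative forcing (W/m^2) for a change in concentration from c0 to c1."""
    return coefficient * math.log(c1_ppmv / c0_ppmv)


def reference_sensitivity(c0_ppmv: float, c1_ppmv: float) -> float:
    """Warming before feedback (K): Planck parameter times forcing."""
    return PLANCK_PARAMETER * co2_forcing(c0_ppmv, c1_ppmv)


# Doublings from GregK's question that lie within the stated interval.
for c0, c1 in [(50, 100), (100, 200), (500, 1000)]:
    forcing = co2_forcing(c0, c1)
    warming = reference_sensitivity(c0, c1)
    print(f"{c0:>4} -> {c1:>4} ppmv: forcing {forcing:.3f} W/m^2, "
          f"reference sensitivity {warming:.4f} K")
```

Because the forcing depends only on the ratio of concentrations, every doubling in the interval yields the same forcing and the same reference sensitivity. Passing `coefficient=5.35` to `co2_forcing` gives the forcing under the earlier Myhre et al. coefficient mentioned above.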

I fear you are right Peter. Especially that between 1 and 2 generations have received AGW, almost intravenously. We have schoolbooks in my country (revised in 2016) where it says that the Arctic ice would disappear in 2013.

How can you have books that were actually revised in 2016 and still they claim that the ice should disappear by 2013? It tells a lot about how big this “climate hysteria” really is.

But now it’s all about fighting; we must get this out. Especially we who live in non-English-speaking countries. The climate deception is possibly more incorporated here than many English speakers believe. The biggest battle is most likely to be in Europe.

Thank you, Monckton of Brenchley, thank you so much for never giving up.

I am most grateful to Helge Ankjaer for a most generous comment. Once we have succeeded in persuading a learned journal to publish our result, we expect that it will receive quite a bit of publicity.

I am so looking forward to UEA’s response! Thank you for the insight, focus, dedication and fearlessness you have shown in putting this together.

Many thanks to RichardW for his generous comment. We shall plug away at this until either we are given a credible explanation showing that we are wrong or we are published.

At an earlier stage in our research, a reviewer broke the scientific code of conduct by sending a copy of our paper to UEA with a request that they should assist him in refuting it. The paper found its way to the vice-chancellor, Professor David Richardson, who, in August 2017, called all 65 members of the environmental sciences faculty together and yelled at them: “This is a catastrophe. If Monckton’s paper is ever published, there will be hell to pay.” He ordered everyone to drop everything they were doing and work on refuting our paper. He has subsequently denied that any such meeting took place, but we received our information from one who was present.

Good post. Assuming the same things that produced the 1850 temperature still apply today is a difficult assumption to challenge.

Many thanks to Mr Halla for his kind comment. In fact, we did not assume that the system-gain factors in 1850 and in 2011 were the same: we demonstrated it.

I have been looking at the scale of mother nature.

To fill all the rivers and lakes, and to dump all the snow on the mountain ranges around the world, requires roughly 1350 cubic kilometres of water to be evaporated daily. As a 2 meter deep swimming pool, that would cover an area the size of France. This evaporation, together with the rotation of the earth, creates vast ocean currents pulling vast heat from the tropics towards the poles. So much heat that it can warm entire continents such as Europe. The scale is enormous, which you would expect from a process that regulates the temperature of a planet. The effect of CO2 is like a fart in the wind. CO2 is insignificant in the grand scheme of things. The slightest change in energy from the sun would swamp any effect by CO2.

Another scam by the loony left. As our media pushes this bull shine, what other lies do they tell us? Do the media ever tell us the truth? The news is just some weird form of show business.
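The swimming-pool comparison is a simple volume-to-area conversion, sketched below. The 1350 cubic kilometres per day and the 2 metre depth are the commenter's figures, taken as given; the function name is hypothetical, and France's area is not stated in the comment, so it is not hard-coded here.

```python
def pool_area_km2(volume_km3: float, depth_m: float) -> float:
    """Area (km^2) covered by a given volume spread to a uniform depth."""
    depth_km = depth_m / 1000.0
    return volume_km3 / depth_km


# Commenter's figures, taken as given.
daily_evaporation_km3 = 1350.0
pool_depth_m = 2.0

area = pool_area_km2(daily_evaporation_km3, pool_depth_m)
print(f"Area of a {pool_depth_m:.0f} m deep pool: {area:,.0f} km^2")
```

The result is 675,000 square kilometres, which is the figure to set against the area of France when judging the comparison.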