I enjoy surprises in data, especially when they might make alarmists unhappy.

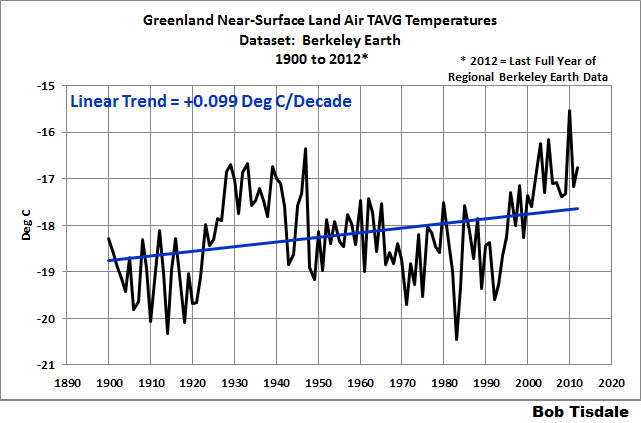

Willis Eschenbach’s post Greenland Is Way Cool at WattsUpWithThat prompted me to take a look at the Berkeley Earth edition of the Greenland TAVG temperature data. See Figure 1, which presents the graph of the annual Berkeley Earth TAVG temperature (not anomaly) data for Greenland from 1900 to 2012, which is the last full year of the regional Berkeley Earth data.

Note: I used the monthly conversion factors listed on the Berkeley Earth data page for Greenland to return the monthly anomaly data to their original (not anomaly) form and then determined the annual averages. [End note.]
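The conversion described in the note can be sketched in a few lines of Python. This is only a minimal illustration, not the steps actually used to build Figure 1. The function names are hypothetical, the twelve climatology values are made-up placeholders rather than the factors listed on the Berkeley Earth page, and the sketch assumes that only years with all 12 months are averaged.

```python
from collections import defaultdict

def anomalies_to_absolute(monthly_anomalies, monthly_climatology):
    """Add the calendar-month climatology back onto each monthly anomaly.

    monthly_anomalies: iterable of (year, month, anomaly_deg_c), month in 1..12
    monthly_climatology: 12 values (Jan..Dec) in deg C for the base period
    """
    return [(year, month, anomaly + monthly_climatology[month - 1])
            for year, month, anomaly in monthly_anomalies]

def annual_means(monthly_values):
    """Average monthly values by year, keeping only years with all 12 months."""
    by_year = defaultdict(list)
    for year, _month, value in monthly_values:
        by_year[year].append(value)
    return {year: sum(values) / len(values)
            for year, values in sorted(by_year.items())
            if len(values) == 12}

# Placeholder climatology (deg C), NOT the values from the Berkeley Earth file.
climatology = [-27.0, -28.0, -26.0, -20.0, -10.0, -3.0,
               0.0, -1.0, -7.0, -15.0, -21.0, -25.0]

# Made-up anomalies: a full year at +0.5 deg C, then one month of a partial year.
anomalies = [(2000, m, 0.5) for m in range(1, 13)] + [(2001, 1, 1.0)]

absolute = anomalies_to_absolute(anomalies, climatology)
annual = annual_means(absolute)
print(annual)
```

Because the same twelve offsets are added every year, the conversion shifts the annual series up or down without changing its shape or its trend.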

Figure 1

With the relatively large multidecadal variations in the data, it seemed as though I was taking the trend of one and a half cycles of a sine wave. That prompted me to start the graph in January 1925 and check the linear trend again. See Figure 2. Imagine that: a flat linear trend of Greenland land surface TAVG temperatures from 1925 to 2012.
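The sensitivity of a linear trend to its start year can be illustrated with a short Python sketch. The series below is synthetic: a pure oscillation with an assumed 70-year period that starts at a trough in 1900, chosen only for illustration and not fitted to the Greenland data. The function names are hypothetical.

```python
import math

def linear_trend_per_decade(years, values):
    """Ordinary least-squares slope of values against years, per decade."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    sxx = sum((x - mean_x) ** 2 for x in years)
    return 10.0 * sxy / sxx

def trend_from(start_year, years, values):
    """Trend computed only over the years at or after start_year."""
    pairs = [(x, y) for x, y in zip(years, values) if x >= start_year]
    xs, ys = zip(*pairs)
    return linear_trend_per_decade(xs, ys)

# Synthetic, trendless oscillation (assumed 70-year period, trough in 1900).
years = list(range(1900, 2013))
values = [-math.cos(2.0 * math.pi * (y - 1900) / 70.0) for y in years]

for start in (1900, 1925):
    print(start, trend_from(start, years, values))
```

With these assumed parameters the fit from 1900 runs from a trough to near a peak and so slopes upward, while the fit from 1925 runs roughly from peak to peak and is much closer to flat, even though the series has no underlying trend at all.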

Figure 2

MODEL-DATA COMPARISON

Are you wondering if the climate models stored in the CMIP5 archive (which was used by the IPCC for their 5th Assessment Report) showed a similar not-warming trend for the same time period? Wonder no longer. See Figure 3.

The climate model outputs, the CMIP5 multi-model mean, are available from the KNMI Climate Explorer. For Greenland, I used the coordinates of 59.78N-83.63N, 73.26W-11.31W, which are listed on the Berkeley Earth data page for Greenland, under the heading of “The current region is characterized by…”. The simulations presented use historic forcings through 2005 and RCP8.5 forcings thereafter. I use the model mean, because it represents the consensus (better said, group-think) of the climate modeling groups who provided climate model outputs to the CMIP5 archive, for use by the IPCC for their 5th Assessment Report.

Figure 3

For those who would prefer to see the full spread of the model outputs in a model-data comparison, you may want to think again. See Figure 4. It’s a graph created by the KNMI Climate Explorer of the 81 ensemble members of the climate models submitted to the CMIP5 archive, with historic and RCP8.5 forcings, for Greenland land surface temperatures, based on the coordinates listed above. There appears to be roughly a 7- to 8-deg C spread from coolest to warmest model. Well, that narrows it down. It’s just another example of how the climate models used by the IPCC for their long-term prognostications of global warming are not simulating Earth’s Climate. Each time I plot a model-data comparison, I find it remarkable (and not in a good way) that anyone would find climate model simulations of Earth’s climate, and their simulations of future climate based on bogus crystal-ball-like prognostications of future forcings, to be credible.

Figure 4

Back to the multi-model mean: Figure 5 presents the observed and climate-model-simulated multidecadal (30-year) trends in Greenland near-surface land air temperatures, from 1900 to 2012. Once again, the models are clearly not simulating Earth’s climate.
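For readers who want to reproduce this kind of comparison, a running 30-year trend can be sketched as below. This is a minimal illustration under stated assumptions: the function names are hypothetical, the input series is made up (a steady 0.1 deg C/decade rise), and each window is labelled by its final year, which is an assumption about Figure 5 rather than something stated in the post.

```python
def slope_per_decade(xs, ys):
    """Ordinary least-squares slope of ys against xs, per decade."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return 10.0 * sxy / sxx

def running_trends(years, values, window=30):
    """Return {end_year: trend per decade} for every trailing window."""
    trends = {}
    for end in range(window, len(years) + 1):
        xs = years[end - window:end]
        ys = values[end - window:end]
        trends[xs[-1]] = slope_per_decade(xs, ys)
    return trends

# Made-up annual series warming at a constant 0.1 deg C per decade.
years = list(range(1900, 2013))
values = [0.01 * (y - 1900) for y in years]

trends = running_trends(years, values)
print(min(trends), max(trends), len(trends))
```

The same function would be applied separately to the observed series and to the multi-model mean before plotting the two sets of trends together. With annual data starting in 1900 and windows labelled by end year, the first 30-year value falls at 1929.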

Figure 5

I’ll let you comment about that. We’ve already discussed and illustrated back in 2012 how climate models do not (cannot) properly simulate polar amplification. See the post Polar Amplification: Observations Versus IPCC Climate Models. The WattsUpWithThat cross post is here.

That’s it for this post. Enjoy the rest of your day and have fun in the comments.

STANDARD CLOSING REQUEST

Please purchase my recently published ebooks. As many of you know, this year I published 2 ebooks that are available through Amazon in Kindle format:

- Dad, Why Are You A Global Warming Denier? (For an overview, the blog post that introduced it is here.)

- Dad, Is Climate Getting Worse in the United States? (See the blog post here for an overview.)

And please purchase Climate Change: The Facts – 2017, by Anthony Watts et al.

To those of you who have purchased them, thank you. To those of you who will purchase them, thank you, too.

Regards,

“See Figure 1, which presents the graph of the annual Berkeley Earth TAVG temperature (not anomaly) data for Greenland from 1900 to 2012”

It is anomaly data, and it is the spatial average of anomalies. Fig 1 just plots it with a shift to the y axis (as the note says).

And your point is, Nick?

“…And your point is, Nick?…”

He runs a red herring farm.

Nick is the original strawman but with global warming going to reverse to global cooling by 2030, he will run out of straw.

A herring farm with pervasive endemic whirling disease.

A red herring farm operated by strawmen.

Fishing for any strikes he can get!

Nick, it is NOT as you wrote “the spatial average of anomalies”. You’re once again thinking of GISS, NCEI and HADCRUT data or you’re attempting to intentionally mislead the readers here.

Steve Mosher of Berkeley Earth was very specific in his comment here that Berkeley Earth does NOT average anomalies:

https://wattsupwiththat.com/2018/11/29/examples-of-how-and-why-the-use-of-a-climate-model-mean-and-the-use-of-anomalies-can-be-misleading/#comment-2536683

“All other methods use station temperatures and then they construct station anomalies and then they combine those anomalies.

“Our approach is different. we use kriging…”

And my note in this post was very specific when I stated:

Note: I used the monthly conversion factors listed on the Berkeley Earth data page for Greenland to return the monthly anomaly data to their original (not anomaly) form and then determined the annual averages. [End note.]

Good-bye, Nick. You waste my time, and I don’t like having my time wasted.

Sincerely,

Bob

Bob,

“Nick, it is NOT as you wrote “the spatial average of anomalies”.”

It is. The data file you link is specific (my bold):

“Temperatures are in Celsius and reported as anomalies relative to the Jan 1951-Dec 1980 average.”

…

“% Note that all results reported here are derived from the full field analysis and will in general include information from many additional stations that border the current region and not just those that lie within this region. In general, the temperature anomaly field has significant correlations extending over greater than 1000 km, which allows even distant stations to provide some insight at times when local coverage may be lacking.”

…

“% For each month, we report the estimated land-surface anomaly for that month and its uncertainty.”

And once again, Nick, it does not state in the quote you provided that they are derived from anomalies. You boldfaced an “In general” comment, not a discussion of methodology.

Maybe you should read and try to understand their methods paper, without your willful misinterpretations.

Good-bye, Nick.

“Our approach is different. we use kriging”

But what do they use kriging on? Their methods paper explains clearly why they apply it to anomalies (my bold):

“One approach to construct the interpolated field would be to use Kriging directly on the station data to define T(x,t). Although outwardly attractive, this simple approach has several problems. The assumption that all the points contributing to the Kriging interpolation have the same mean is not satisfied with the raw data. To address this, we introduce a baseline temperature bᵢ for every temperature station i; this baseline temperature is calculated in our optimization routine and then subtracted from each station prior to Kriging. This converts the temperature observations to a set of anomaly observations with an expected mean of zero. This baseline parameter is essential our representation for C(xᵢ). But because the baseline temperatures are calculated solutions to the procedure, and yet are needed to estimate the Kriging coefficients, the approach must be iterative.”

My apologies, Nick. It appears that Mr. Mosher wasn’t complete in that comment, which he concluded with, “The Temp we give you is the absolute T. the source is the observations.”

The Berkeley Earth “methods” paper continues beyond what you quoted:

“In our approach, we derive not only the Earth’s average temperature record T_j^avg, but also the best values for the station baseline temperatures bᵢ. Note, however, that there is an ambiguity; we could arbitrarily add a number to all the T_j^avg values as long as we subtracted the same number from all the baseline temperatures bᵢ. To remove this ambiguity, in addition to minimizing the weather function W(x,t), we also minimize the integral of the square of the core climate term G(x) that appears in Equation (8). To do this involves modifying the functions that describe latitude and elevation effects, and that means adjusting the 18 parameters that define λ and h, as described in the supplement. This process does not affect in any way our calculations of the temperature anomaly, that is, temperature differences compared to those in a base period (e.g. 1950 to 1980). It does, however, allow us to calculate from the fit the absolute temperature of the Earth land average at any given time, a value that is not determined by methods that work only with anomalies by initially normalizing all data to a baseline period.”

So, in layman’s terms, it appears Berkeley Earth takes the temperature data in absolute form, converts it to anomalies, works its magic on them, then converts them back to absolute temperatures.

In some respects, we’re both correct.

Adios

Okay Nick, anomalies were involved. So what is your point?

Thank you, Alan! Made me laugh.

As I wrote in the post, “Note: I used the monthly conversion factors listed on the Berkeley Earth data page for Greenland to return the monthly anomaly data to their original (not anomaly) form and then determined the annual averages. [End note.]”

Cheers, Bob

Thanks, again, Bob.

I suggest people visit Bob on his site – Climate Observations – get his free stuff, buy a couple of his books and donate to him. He does a helluva lot of work and receives scant appreciation.

+100

And to think I included “(not anomaly)” in the text so that I could avoid using the term “absolute”, which other visitors here complain about, even with my qualifying the term as commonly used by the climate science industry.

Maybe I’ll use both next time to tweak them all.

Cheers!

Nitpickers gotta pick dem nits. You can’t win, Bob!

Dave Fair, THANK YOU for your comment.

Cheers,

Bob

Is it possible in today’s world to obtain actual facts, without the use of “Models”?

MJE

A very important question.

And the shocking answer is that no, it isn’t. But….

Even something as simple as taking the temperature of a pond with a thermometer, relies on a model we have of the world in which ponds, thermometers and temperatures actually in some sense exist.

The thing that will probably arouse the most ire in all the hard scientists with no background in philosophy of science, is that the notion of ‘temperature’ is a model – a categorisation of experience into a concept that is not directly accessible to the consciousness which conceives of it.

This is an important idea. Most people casually assume that ‘gravity’ is as real as the world they inhabit. It is not. It is an abstraction derived from it, or rather a proposition that seems to explain it. We never experience ‘gravity’, just the ‘forces’ that it exerts on ‘bodies’.

The world is not turtles all the way down, it is, as far as our rational selves are concerned, models all the way down.

And MOST of the more flagrant mistakes we make are confusing our ideas about the world, with the world, in itself.

Models are idealised simplified mental constructs that represent the world.

We say ‘there is next door’s black cat’ without realising just how many assumptions and simplifications, and how much downright anthropocentric bigotry, are contained in that statement.

Information theory would show that even if it were possible to define an exact boundary around the ‘cat’ that contained only the ‘catness’, so that its smells and its fishy breath and so on were excluded from it, and ignoring the fleas – are they part of ‘the cat’? – we are still left with a massive amount of information to represent the ‘cat’ at just a single moment in time, let alone in some kind of temporal persistence. Examination of its spectral output with respect to reflected visible light would reveal a complex and transient set of spectral emissions of which ‘black’ would be a gross and inelegant approximation.

As far as its ownership goes, to say that it ‘belongs to’ ‘next door’ implies massive anthropocentric and cultural ideas of ownership and tribal relationships that surely are completely irrelevant to the creature.

Those who are familiar with IT will realise that ‘next door’s black cat’ is essentially metadata – a pointer to a far far richer and more complex data set that is the experience of the cat in its raw form.

And that is the problem. Before we can do physics, we need metaphysics. Metaphysics to tell us what the facts are, that we are using as data to confirm or disprove our theories. BUT the metaphysics is in itself arbitrary. And facts are in some sense an unknown and unknowable Reality transformed – perhaps convoluted is a better term, by applying a subconscious metaphysical process to it.

The term a posteriori was used to distinguish all the knowledge we have that applies to a (presumed) external world. What we know of the world is at first hand raw experience. Part of the process of becoming an adult is to acquire the ability to structure and categorise it and develop a sense of time and place. Yet even time and place are not raw sensation. They are internalised models that we use to categorise experience into metadata – ‘entities’ that can have temporal persistence.

This is the metaphysics of the classical world that we think we live in. A world of objects in space time, all of which have relation to each other via laws of Nature which are in essence partial differential equations of behaviour (causality) expressed with respect to time.

This model is of course in the limit known to be wrong and totally inadequate. Even classical physics of atoms shows that ‘point masses’ do not exist…

Approximations are OK up to a point (sic!)… BUT it is important to realise that they are approximations.

This view of the world and science as a hierarchy of models, some of which are more basic (metaphysical) than others, is, I think, a valid approach: It is on those to whom all knowledge is level and one dimensional that the greatest frauds may be perpetrated.

Those who cannot distinguish the warmth on their skin (sensation) from temperature (a notion) and therefore think that the hypothetical entities of science live in the same space as the more basic entities of the facts of their daily lives, and who utterly fail to realise that even those facts are the product not just of some presumed reality out there, but also of our means of interpreting it into a coherent collection of approximate entities in an approximate time and space matrix, are infinitely gullible.

It is models all the way down, BUT they come in layers. And the reason why we can say such things as ‘climate science is refuted by the facts’ is that climate science and its refutation lie above and purport to be about a set of agreed ‘facts’ which in this context are the notions that such a thing as a global average temperature – or even a series of local temperatures – exists and has meaning and is measurable.

Insofar as these things may be said to exist and have meaning and can be measured we can then say ‘these idealised notions that we have nonetheless measured reasonably accurately, refute the notion that carbon emissions are the biggest influence on them, at this point in time’.

That is, our basic ‘facts’ at this level are derived from metaphysical models of the world in which such abstract entities as ‘temperature’ and ‘carbon dioxide’ have been given meaning.

That is, what we call facts are simply the product of models deeper towards the metaphysical end of the spectrum that we agree to treat as facts, because they accord reasonably well with our mutual experience of the world.

That last bit is extremely important. As scientists we can partially accept the New Left’s insistence that Truth is relative to culture, in that the way we interpret the world does indeed vary from place to place and from one time to another, BUT the product of that culture has to be a worldview that is not inconsistent with experience.

In this context climate change has sought to make itself a metaphysical assumption. True because it was believed, and used as a metric to judge the world. Unfortunately it depends on a testable proposition, namely that the world is in fact getting warmer, and warmer in lockstep with rises in CO2 concentrations in the atmosphere. And that all these entities are as we who ‘deny’ climate change understand them to be. So we measure the temperature and Lo! it has not risen in accordance with predictions.

Climate change is inconsistent with the ‘facts’ it purports to represent. That these ‘facts’ are in fact (sic!) the output of other metaphysical models, is not relevant in this precise case. Climate science sets out to propose a hypothesis based on certain ‘facts’. If those very same facts refute it, then those promoting it have only themselves to blame.

Hmm.

Dogs may have owners.

Cats have servants.

Or am I delving too deeply?

Or not deeply enough?

If ‘the cat that habituates next-door’ scratches me when I go to pick it up, is that some sort of a model?

Probably I am one-dimensional; certainly, I am deplorable, and, likely, a ‘swivel-eyed loon’, too.

I have read – and re-read – but obviously not understood Leo’s contribution. Sorry.

Auto

I have read – and re-read – but obviously not understood Leo’s contribution.

It goes something like this: “There is no true statement except this one, that there are no true statements.” A proposition that contradicts itself on its very face.

Is Leo’s neighbor’s catness the Mockingjay?

A simple time-tested model, such as a thermometer that models temperature based on the expansion of mercury, is a far cry from a recent computer model that attempts to model complex and chaotic processes like climate based on the limited understanding of its programmer. The programmer is forced to make educated guesses about complex relationships that are not well known and leaves out influences that, as yet, are completely unknown. It requires hundreds, if not thousands, of years to time-test such a model to verify whether or not the educated guesses are correct or have missed the mark completely. Many useful idiots assume that computers are “smart” and can supply all missing information that humans do not yet understand. They are misinformed. Computers are slaves to their masters. They only do what their programmers tell them to do and reflect the biases of their programmers.

So do I . I really do.

But we should fight it.

And take the data as it is.

At this point in climate hustle, the modelling groups’ continued modelling with their Climate Cargo Cultist outputs simply demonstrates that they are merely jobs programs for over-produced climate PhDs and their associated supercomputer centers.

The only way forward to sanity and a return to rationality is to cut government funding, both extramural and intramural, to climate modeling research activities by at least 90%. Then hold that reduced funding level for at least 4 FY budget cycles to clean out the climate hustle government-industry cabal and then try to start over with just 2 NSF-funded groups in the US.

You had me at “…cut government funding.” We should do this and stop there.

It’s clear Gore was brilliant when he banked on the late boomers buying his spiel: temps trended down while they were children and then trended up as they got out of school (“V” trend, the larger trend irrelevant). I remember well how CO2 was going to save us all from GW in the late 70’s…the spiel was executed well and effectively around 20 yrs before Gore lost his election. 20 years is the period that the propaganda folks plan/execute by, the last generation will be irrelevant and the next ignorant but in the “know” aka MSM cool aka OcrzyO…will she takes on male circumcision in the USA…seems more relevant than GW?

Is it not fascinating that they use historical data and tack on RCP8.5 speculations to induce the public to think the inflection represents an “acceleration”?

Anyone who checks the outcome of speculations with lesser copies of RCP’s will soon find curves of lesser value, both as thermal catastrophes and eye candy.

What value does RCP8.5 bring to the present or the future? Perhaps it has a place as a target of late show TV ridicule – its only net present value.

Even Science of Doom is dismissive of RCP8.5 – which he calls the “extremely high emissions scenario”. It seems that ‘RCP8.5’ was formulated for sensitivity analysis.

https://scienceofdoom.com/2019/01/01/opinions-and-perspectives-3-5-follow-up-to-how-much-co2-will-there-be/

I stopped looking at Science of Doom when I realized that he was only a copy and paste blog who knew nothing about climate science and who is secretly an alarmist even though he doesn’t subscribe to RCP8.5.

He is definitely a warmist – which is why I said “*even* Science of Doom dismisses RCP8.5”.

I think these are his credentials here (judge for yourself) https://www.rider.edu/faculty/steven-carson

Even Science of Doom is dismissive of RCP8.5 – which he calls the “extremely high emissions scenario”. It seems that ‘RCP8.5’ was formulated for sensitivity analysis …

=================================================

The CO2 emissions per year are tracking closest to RCP 8.5 (see Pathways for the 21st Century):

https://www.carbonbrief.org/analysis-four-years-left-one-point-five-carbon-budget

There seems to be backtracking going on amongst some IPCC apologists who are beginning to realise that the temperature projections associated with the ‘business as usual’ scenario are failing to materialise and are attempting to rationalise the fact.

Yes RCP 8.5 is for sensitivity analysis.

Don’t expect non modelers to get what this is.

Mundanely used in project financial analysis. Not so rarified as you think.

If it’s only used for sensitivity analysis, why do they always present it as the outcome that is going to happen?

But the rent-seekers use it for other purposes, Mr. Mosher.

Whenever you look at results from BEST, consider the station numbers and distances they use in their kriging. Note that there were between 1 and 5 regional stations prior to WWII. There is also a huge drop in the last two years. For year 2000, about 20 stations in the region were kriged with another 30 stations outside the region to 500 km, and another 40 stations at 500-1000 km. Regional smearing at best. GIGO. For Greenland from their site:

http://berkeleyearth.lbl.gov/auto/Regional/TAVG/Figures/greenland-TAVG-Counts.png

“We’ve already discussed and illustrated back in 2012 how climate models do not (cannot) properly simulate polar amplification.”

They have it backwards, an increase in climate forcing cools the AMO and Arctic. There were AMO and Arctic cold anomalies in the mid 1970’s, mid 1980’s, and early 1990’s, when the solar wind was the strongest. And a warming AMO and Arctic from the mid 1990’s from when the solar wind weakened.

https://www.linkedin.com/pulse/association-between-sunspot-cycles-amo-ulric-lyons

Special thanks for the link to the data!

Anomalies means the trend at a station. Global average of anomaly presents the average trend. This is not affected by the magnitude of station temperature. Temperature averages are dominated by the Sun’s movement and local climate system effect.

Global average — temperature anomaly is appropriate

Station average and homogeneous zone [article under discussion represent this] — temperature magnitude is appropriate.

Dr. S. Jeevananda Reddy

The models also grossly underpredict ice loss by a factor of 2-3, hence they are using the wrong albedo. They would be running even hotter if they accounted for the proper level of ice loss.

Figure 5. “from 1925? To 2012.

Thank you for the interesting post

Bob, I compared your plot in Figure 1 to the historical annual mean temperature plot of Reykjavik in Iceland (see link below). Probably unsurprisingly (see Ulric Lyons’ comment above), the shape is very similar.

https://data.giss.nasa.gov/cgi-bin/gistemp/show_station.cgi?id=620040300000&dt=1&ds=1

Thanks for all the work you do and for posting here for all to see.

Cheers.

See a Tony Heller video about NOAA and/or NASA faking the Reykjavik temperature data.

Reykjavik

http://berkeleyearth.lbl.gov/stations/155459

adjusted down

1. NASA gets its data from NOAA; NOAA (NCDC) adjusts Reykjavik using PHA.

2. Reykjavik and other high northern latitude stations offer some unique challenges to automated debiasing with PHA.

3. That has led to a change in the PHA program. Heller would not know this since he doesn’t read the documentation of the debiasing code.

For a history of some of the changes ( bug fixes, stations that were wrongly adjusted etc)

ftp://ftp.ncdc.noaa.gov/pub/data/ghcn/v3/techreports/Technical%20Report%20NCDC%20No12-02-3.2.0-29Aug12.pdf

ftp://ftp.ncdc.noaa.gov/pub/data/ghcn/v3/techreports/Technical%20Report%20GHCNM%20No15-01.pdf

In Version 4 (now beta), the known issues with high latitude stations (> 60N) will be addressed.

For the hi northern Latitude issue, see the ATBD

ftp://ftp.ncdc.noaa.gov/pub/data/ghcn/v4/documentation/CDRP-ATBD-0859%20Rev%201%20GHCN-M%20Mean%20Temperature-v4.pdf

in the final version of GHCN v4, The hi latitude stations are not adjusted via the algorithm.

Looking at the beta for this station, the adjustment adds about 10% to the trend. That differs from Berkeley, where we decrease the trend a little.

“in the final version of GHCN v4, The hi latitude stations are not adjusted via the algorithm.”

That is good because the data that is presented GHCN is supposedly debiased by the Icelandic Met Office.

So in GHCN v4 it should be identical to the original data then.

Would you like to show that using Icelandic data from say the 1990s?

But why adjust it?

Why not retrofit it with the same type of thermometer/enclosure as was used in the 1930s/1940s and then observe temperatures using the same TOB as used in the 1930s/1940s?

Then there would be no need to make any adjustments, just compare RAW modern day measurements with past historic RAW measurements that were obtained at that station.

In that way, we would know whether at that station there had been any significant change from the temperatures that were observed at that station in the 1930s/1940s.

Comparison in this manner would be very helpful since more than 95% of all manmade CO2 emissions have taken place since the 1930s.

The figures show factual data. What these don’t show is causation of the ups and downs in the data.

As far as climate models go, do any show futuristic ups and downs? If I remember they all show up, up, up and no up/down variations. This I would question and bring me to have little to no trust in them.

The “models” do not have elevation, i.e.. the height of land above the geoid and all the effects that wind fields – pressure and temperature – and GRAVITY bring. The “model” domain is a 2-d “sheet” — a numerical array, no z-dimension. Talk about the REAL flat-earth society! There it is!

Ha ha

“The “model” domain is a 2-d “sheet” — a numerical array, no z-dimension.”

Complete nonsense. The models are 3D and have typically 32-40 vertical layers of cells. They take proper account of altitude, using terrain-following coordinates.

This looks like another chart showing the 1930’s as being as warm or warmer than subsequent years.

We are not experiencing unprecedented warmth today as the CAGW Alarmists claim, it was as warm or warmer in the recent past.

Unmodified regional charts from around the world show this same temperature profile.

If in fact this is a cyclical effect, the next year or two should be a plateau and then within at most five years we should see definite significant cooling.

Imagine that, a falsifiable hypothesis! Check back in 2025.

I notice that Nick avoids any comment on a point that is relevant to this point.

Tom, you’ve misread the graph in Figure 5, which you linked in your comment.

Look at the units of the y-axis. The units are Deg C/decade, not Deg C. Thus the title block reads, “Model-Data 30-Year Trend Comparison.”

So it is the warming rates for the 30-year periods ending around 1940 (not the temperatures themselves) that are comparable to the warming rates in more recent 30-year periods.

Regards,

Bob

Thanks for that correction, Bob.

Just so I don’t look like a complete fool, here are a couple of surface temperature charts from the same area that do show the warmth of the 1930’s.

These charts show the unadjusted data and show how NASA/NOAA took this data and changed it to make it appear cooler in the past and hotter in the present in their efforts to promote the CAGW narrative that things have been getting hotter and hotter for decade after decade. It’s just not so.

Unmodified charts from around the world show the 1930’s to be as warm or warmer than subsequent years. Unfortunately, I chose a bad example above with Bob’s chart, but there are plenty of others that make the point.

It seems to me if it can be shown that the 1930’s were as warm as today, then that destroys the CAGW story. I think it is a pretty good bet that just about all unmodified local and regional surface temperature records show this same temperature profile with the 1930’s being as warm or warmer than subsequent years. We ought to take up a collection, and we should probably begin guarding this data. I would also bet that none of those unmodified local and regional surface temperature charts would look like a “hotter and hotter” Hockey Stick chart.

The Hockey Stick is a lie meant to promote the CAGW speculation. Historical evidence shows the Hockey Stick does not represent the real surface temperature profile. The historical evidence shows there is no unprecedented warming in the 21st century. The Hockey Stick is not evidence of anything but fraud.

So the 30 year warming rate ending 2012, as projected by the models, is around 0.5C per decade *slower* than that seen in the observations?

With a Super El Nino at the end, yep, DRW 54.

According to fig. 5 the 30 year warming trend has been a lot higher than the modeled one for a long time prior to the 2015/16 el Nino.

In fact the data used stops in 2012, still in the second part of a double-dip La Nina.

Bob, how does this compare to Vinther’s 2006 long instrumental record of Greenland? This is a very long study over 200 years and co-authors are prominent alarmist UK scientists Dr Jones and Dr Briffa.

Looking at temps over this long period we find that much earlier decades are warmer than the last few decades and they even hold up well against some of the decades over one hundred years ago, back in the 1800s. See TABLE 8.

So what will be their excuse when the AMO changes to the cool phase, perhaps sometime in the 2020s? Or has it started already? Who knows?

https://crudata.uea.ac.uk/cru/data/greenland/vintheretal2006.pdf See TABLE 8 from the study comparing decades.

Bob, you’ve also found something else with this graph. The shape of this curve faithfully follows the real temperature record of the US, Canada, Europe, South Africa, Paraguay, Bolivia , etc (ie, the world) before the clime syndicate pushed the 20th Century’s high temperatures of 1930s -40s down, which also took out the 35yr deep cooling period that had the same clique panicking about an ice age in the making.

What is the significance of this? It means in actuality essentially all the global warming we’ve had took place between 1850 and 1940 with no CO2 rise! THAT is the reason for the criminal destruction of the temperature record. The actual record is a slam dunk falsification of our post modern climate theory. The “big” warming from 1979 to the end of the century is in actuality all a warming up from the deep cooling. The 1998 El Nino was not a new temperature record at the time. This is when Hansen’s GISS went to work, and like T Karl, had to do the major overhaul just before his retirement in 2007.

The 20th Century high warming period, with a suitable lag from the ocean warming likely resulted in outgassing of CO2 on a significant scale after 1950.

Gary

You said, “… likely resulted in outgassing of CO2 on a significant scale after 1950.”

I completely agree with you.

“we’ve had took place between 1850 and 1940 with no CO2 rise! ”

Err, no. There is an important rise in CO2.

Don’t forget the CO2 effect is logarithmic, ln().

Between 1845 and 1939 (Law Dome) you have 286 to 309 ppm of CO2.

That’s 5.35 * ln(309/286), or 0.41 W/m².

CO2 alone (not counting CH4) added 0.41 W/m² of forcing.

So from 1850 to 1940, ~0.35C from CO2 alone.

Anomaly in 1940 .05 ( +- .09)

Anomaly in 1850 -.55 ( +-.14)

Difference = .6C

CO2 explains about .35C of this rise

The balance .6C-.35C is .25C

Wanna know what explains this residual?
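For anyone who wants to check the arithmetic above, here is a minimal Python sketch. It uses only the numbers quoted in the comment (the 5.35 coefficient, the Law Dome concentrations, the two anomalies and the ~0.35C attribution). The variable names are mine, and the "implied response" is simply the ratio of the quoted warming to the computed forcing, which is an inference rather than a value stated in the comment.

```python
import math

def co2_forcing(c_ppm, c0_ppm, alpha=5.35):
    """Simplified logarithmic CO2 forcing, alpha * ln(C / C0), in W/m^2."""
    return alpha * math.log(c_ppm / c0_ppm)

# Law Dome concentrations quoted in the comment (ppm).
c_start, c_end = 286.0, 309.0
forcing = co2_forcing(c_end, c_start)

# Anomalies quoted in the comment (deg C); the quoted uncertainties are ignored here.
anom_1850, anom_1940 = -0.55, 0.05
observed_rise = anom_1940 - anom_1850

# Warming attributed to CO2 in the comment (deg C).
co2_share = 0.35
residual = observed_rise - co2_share

# Inferred, not stated in the comment: deg C of warming per W/m^2 of forcing.
implied_response = co2_share / forcing

print(f"forcing:          {forcing:.2f} W/m^2")
print(f"observed rise:    {observed_rise:.2f} C")
print(f"residual:         {residual:.2f} C")
print(f"implied response: {implied_response:.2f} C per W/m^2")
```

The implied response is the figure the "what sensitivity" question is really asking about; the sketch does not say whether that ratio is appropriate, only what the quoted numbers imply.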

What sensitivity are you using to get 0.41 watts from 25 ppm of CO2?

Steven, my point still substantially survives. Outgassing was probably a significant factor in CO2 rise after 1850 with the LIA warm-up, and probably is a measure of the CO2 drawdown by the LIA from similar high CO2 of the MWP a thousand years ago. Until the goal posts were moved from 1950 to 1850 only a couple of years ago, 1950 was considered the beginning of “human influence”. World population was one third of today’s in 1950, and per capita energy use was quite a bit lower. World population in 1850 was about a fifth of today’s, and our per capita energy use was close to negligible. We largely burned sustainable wood and fired our transportation with sustainable oats and hay.

Bob

You said, “There appears to be roughly a 7- to 8-deg C spread from coolest to warmest model.”

In other words, the uncertainty of the predictions is about +/- 4 deg C for a mean temperature commonly reported with more precision than 1 deg.

“Figure 5 presents the observed and climate-model-simulated multidecadal (30-year) trends in Greenland near-surface land air temperatures, from 1900 to 2012″.

This looks like a mistake. I look at Figure 5 and see data starting in 1928 or 1929.

(unless each point represents the year and the previous 30 years… ok, got it).
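The reading in that parenthetical can be sketched in Python as follows. This assumes each point in Figure 5 is a least-squares trend over a trailing 30-year window plotted at the window's end year, which is my guess at the method and not something the post confirms. The series below is a made-up straight line, not Berkeley Earth data, and the function names are hypothetical.

```python
def linear_trend(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx

def trailing_trends(years, values, window=30):
    """Return (end_year, trend in deg C per decade) for each trailing window."""
    results = []
    for end in range(window - 1, len(years)):
        xs = years[end - window + 1 : end + 1]
        ys = values[end - window + 1 : end + 1]
        results.append((years[end], 10.0 * linear_trend(xs, ys)))
    return results

# Annual series from 1900 to 2012, matching the span quoted from the post.
years = list(range(1900, 2013))
# Hypothetical placeholder data: a steady 0.01 deg C per year rise.
values = [0.01 * (y - 1900) for y in years]

trends = trailing_trends(years, values)
print("first point plotted at:", trends[0][0])
print("last point plotted at: ", trends[-1][0])
```

With data beginning in 1900, the first full 30-year window ends in 1929, which would explain why the curve appears to start around 1928 or 1929 even though the caption says 1900 to 2012.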

If you want to see a detailed view of models versus Berkeley,

this has been there for years

http://berkeleyearth.org/graphics/model-performance-against-berkeley-earth-data-set/

lumping the models into one pile hides a lot of interesting detail.

This:

https://mashable.com/2018/02/26/arctic-heat-wave-north-pole-february-sea-ice/?europe=true

Such cases where an unusually strong low pressure area penetrates to the high Arctic have always happened occasionally.

Peter Freuchen describes such an event that happened around 1900. The snow in North Greenland partially melted and then froze again. The ice layer prevented the caribou from getting at any food, and they died out over most of northern Greenland.

We can learn from the media that the Arctic region warms at double the speed of global warming. Where does that come from?

The tropics of course.

That is the only part of the Earth with a heat surplus. This gets exported to higher latitudes where it radiates away.

tty: the graph shows that there is no warming in the polar region at all.

+100

Espoo Finland

The question is…

“How many angels can dance on the head of a pin?”

As many as God wants to… And in this case, the CAGW crowd says “god” is the IPCC.

Griff: I do not know about eskimos but we in Finland including definitely the Sami people (the Lapps) do not call the temperatures between minus 20 and minus 10 degrees a heat wave.