Guest Post by Willis Eschenbach

I see that Zeke Hausfather and others are claiming that 2018 is the warmest year on record for the ocean down to a depth of 2,000 metres. Here’s Zeke’s claim:

Figure 1. Change in ocean heat content, 1955 – 2018. Data available from Institute of Atmospheric Physics (IAP).

When I saw that graph in Zeke’s tweet, my bad-number detector started flashing bright red. What I found suspicious was that the confidence intervals seemed far too small. Not only that, but the graph is measured in a unit that is meaningless to most everyone. Hmmm …

Now, the units in this graph are “zettajoules”, abbreviated ZJ. A zettajoule is a billion trillion joules, or 1E+21 joules. I wanted to convert this to a more familiar number, which is degrees Celsius (°C). So I had to calculate how many zettajoules it takes to raise the temperature of the top two kilometres of the ocean by 1°C.

I go over the math in the endnotes, but suffice it to say at this point that it takes about twenty-six hundred zettajoules to raise the temperature of the top two kilometres of the ocean by 1°C. 2,600 ZJ per degree.

Now, look at Figure 1 again. They claim that their error back in 1955 is plus or minus ninety-five zettajoules … and that converts to ± 0.04°C. Four hundredths of one degree Celsius … right …

Call me crazy, but I do NOT believe that we know the 1955 temperature of the top two kilometres of the ocean to within plus or minus four hundredths of one degree.

It gets worse. By the year 2018, they are claiming that the error bar is on the order of plus or minus nine zettajoules … which is three thousandths of one degree C. That’s 0.003°C. Get real! Ask any process engineer—determining the average temperature of a typical swimming pool to within three thousandths of a degree would require a dozen thermometers or more …

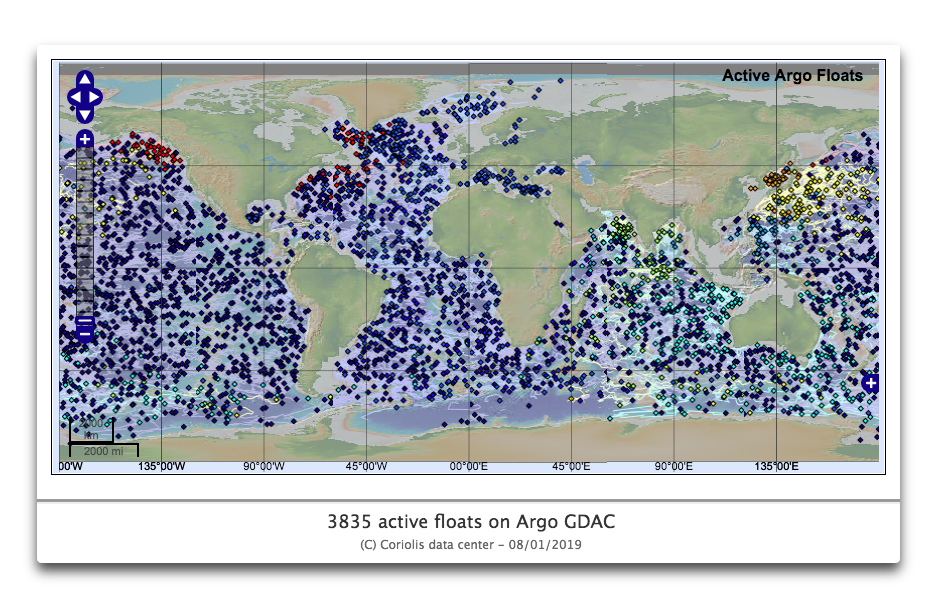

The claim is that they can achieve this degree of accuracy because of the ARGO floats. These are floats that drift down deep in the ocean. Every ten days they rise slowly to the surface, sampling temperatures as they go. At present, well, three days ago, there were 3,835 Argo floats in operation.

Figure 2. Distribution of all Argo floats which were active as of January 8, 2019.

Looks pretty dense-packed in this graphic, doesn’t it? Maybe not a couple dozen thermometers per swimming pool, but dense … however, in fact, that’s only one Argo float for every 93,500 square km (36,000 square miles) of ocean. That’s a box that’s 300 km (190 miles) on a side and two km (1.2 miles) deep … containing one thermometer.
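As a rough check on the geometry, here is a minimal R sketch that starts from the figures quoted above (93,500 square km per float, 3,835 floats, 2 km depth). The variable names are illustrative, and the ocean area is simply the one implied by those two figures, not an independently sourced value.

```r
# Figures quoted in the text above
area_per_float_km2 <- 93500   # ocean area per Argo float, square km
n_floats <- 3835              # active floats quoted in the post
depth_km <- 2                 # depth of the sampled layer, km

# Side of a square box with that area
side_km <- sqrt(area_per_float_km2)

# Ocean area implied by "one float per 93,500 square km"
implied_ocean_area_km2 <- area_per_float_km2 * n_floats

# Volume of water that each float has to represent
volume_per_float_km3 <- area_per_float_km2 * depth_km

print(paste(round(side_km), "km on a side"))
print(paste(signif(implied_ocean_area_km2, 3), "square km of ocean implied"))
print(paste(volume_per_float_km3, "cubic km of water per float"))
```

The side length comes out slightly above the rounded 300 km used in the text.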

Here’s the underlying problem with their error estimate. As the number of observations goes up, the error bar shrinks in proportion to one divided by the square root of the number of observations. And that means if we want to get one more decimal place in our error, we have to have a hundred times the number of data points.

For example, if we get an error of say a tenth of a degree C from ten observations, then if we want to reduce the error to a hundredth of a degree C we need one thousand observations …

And the same is true in reverse. So let’s assume that their error estimate of ± 0.003°C for 2018 data is correct, and it’s due to the excellent coverage of the 3,835 Argo floats.

That would mean that we would have an error of ten times that, ± 0.03°C if there were only 38 ARGO floats …
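The square-root scaling used in this argument can be sketched in a few lines of R. This assumes independent observations with a common spread, which is the premise of the argument rather than an established property of the Argo network. The "implied sigma" is only a value backed out from the claimed error for illustration.

```r
# Standard error of a mean of n independent observations: sigma / sqrt(n)
standard_error <- function(sigma, n) sigma / sqrt(n)

claimed_error_2018 <- 0.003   # deg C, from the conversion in the text
n_full <- 3835                # floats quoted in the post
n_small <- 38                 # roughly one hundredth as many floats

# Per-observation spread implied by the claimed error (illustrative only)
implied_sigma <- claimed_error_2018 * sqrt(n_full)

# Error expected with the smaller network under the same assumptions
error_small <- standard_error(implied_sigma, n_small)

print(paste(round(error_small, 3), "deg C with", n_small, "floats"))
print(paste(round(error_small / claimed_error_2018, 1), "times the 2018 figure"))
```

Cutting the number of observations by a factor of a hundred raises the error by a factor of about ten, which is where the ± 0.03°C figure comes from.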

Sorry. Not believing it. Thirty-eight thermometers, each taking three vertical temperature profiles per month, to measure the temperature of the top two kilometers of the entire global ocean to plus or minus three hundredths of a degree?

My bad number detector was still going off. So I decided to do a type of “Monte Carlo” analysis. Named after the famous casino, a Monte Carlo analysis implies that you are using random data in an analysis to see if your answer is reasonable.

In this case, what I did was to get gridded 1° latitude by 1° longitude data for ocean temperatures at various depths down to 2000 metres from the Levitus World Ocean Atlas. It contains the long-term monthly averages at each depth for each gridcell for each month. Then I calculated the global monthly average for each month from the surface down to 2000 metres.

Now, there are 33,713 1°x1° gridcells with ocean data. (I excluded the areas poleward of the Arctic/Antarctic Circles, as there are almost no Argo floats there.) And there are 3,825 Argo floats. On average some 5% of them are in a common gridcell. So the Argo floats are sampling on the order of ten percent of the gridcells … meaning that despite having lots of Argo floats, still at any given time, 90% of the 1°x1° ocean gridcells are not sampled. Just sayin …
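The coverage figure follows from simple arithmetic, sketched below in R. It reads "5% of them are in a common gridcell" as meaning that about 5% of floats add no new gridcell, which is an interpretation of the sentence above rather than something the post states exactly.

```r
n_cells <- 33713          # 1x1 degree ocean gridcells with data
n_floats <- 3825          # Argo floats used in the analysis
shared_fraction <- 0.05   # assumed share of floats that add no new gridcell

# Approximate number of distinct gridcells holding at least one float
occupied_cells <- n_floats * (1 - shared_fraction)

coverage <- occupied_cells / n_cells
print(paste(round(100 * coverage), "percent of gridcells sampled"))
print(paste(round(100 * (1 - coverage)), "percent of gridcells not sampled"))
```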

To see what difference this might make, I did repeated runs by choosing 3,825 ocean gridcells at random. I then ran the same analysis as before—get the averages at depth, and then calculate the global average temperature month by month for just those gridcells. Here’s a map of typical random locations for simulated Argo locations for one run.

Figure 3. Typical simulated distribution of Argo floats for one run of Monte Carlo Analysis.
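For readers who want to try the idea, here is a minimal R sketch of this kind of random-subsampling test. It is not the code used for the figures in this post. The temperature field is a made-up stand-in (a smooth latitude gradient plus noise, with no land mask, depth levels or months), so its numbers say nothing about the real atlas. Only the procedure is the point: average everything, average a random subset of cells, repeat, and look at the spread.

```r
set.seed(42)  # reproducible illustration

# Hypothetical stand-in for the gridded atlas: one temperature value per
# 1x1 degree cell, warmer near the equator, with random cell-to-cell noise.
lat <- seq(-65.5, 65.5, by = 1)
lon <- seq(-179.5, 179.5, by = 1)
cells <- expand.grid(lon = lon, lat = lat)
cells$temp <- 2 + 6 * cos(cells$lat * pi / 180)^2 + rnorm(nrow(cells), sd = 1)
cells$weight <- cos(cells$lat * pi / 180)  # cell area shrinks with latitude

# "True" area-weighted average using every cell
full_mean <- weighted.mean(cells$temp, cells$weight)

# One run: pick n_floats cells at random and average only those
sampled_mean <- function(n_floats) {
  idx <- sample(nrow(cells), n_floats)
  weighted.mean(cells$temp[idx], cells$weight[idx])
}

# Repeat many times and summarise the sampling error
n_runs <- 1000
errors <- replicate(n_runs, sampled_mean(3825)) - full_mean
ci95 <- quantile(errors, c(0.025, 0.975))
print(round(ci95, 3))
```

Swapping the synthetic `cells$temp` for real gridded values, and repeating the calculation by month, would bring the sketch closer to the analysis described above.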

And in the event, I found what I suspected I’d find. Their claimed accuracy is not borne out by experiment. Figure 4 shows the results of a typical run. The 95% confidence interval for the results varied from 0.05°C to 0.1°C.

Figure 4. Typical run, average global ocean temperature 0-2,000 metres depth, from Levitus World Ocean Atlas (red dots) and from 3,825 simulated Argo locations. White “whisker” lines show the 95% confidence interval (95%CI). For this run, the 95%CI was 0.07°C. Small white whisker line at bottom center shows the claimed 2018 95%CI of ± 0.003°C.

As you can see, using the simulated Argo locations gives an answer that is quite close to the actual temperature average. Monthly averages are within a tenth of a degree of the actual average … but because the Argo floats only measure about 10% of the 1°x1° ocean gridcells, that is still more than an order of magnitude larger than the claimed 2018 95% confidence interval for the IAP data shown in Figure 1.

So I guess my bad number detector must still be working …

Finally, Zeke says that the ocean temperature in 2018 exceeds that in 2017 by “a comfortable margin”. But in fact, it is warmer by only 8 zettajoules … which is less than the claimed 2018 error. So no, that is not a “comfortable margin”. It’s well within even their unbelievably small claimed error, which they say is ± 9 zettajoules for 2018.

In closing, please don’t rag on Zeke about this. He’s one of the good guys, and all of us are wrong at times. As I myself have proven more often than I care to think about, the American scientist Lewis Thomas was totally correct when he said, “We are built to make mistakes, coded for error” …

Best regards to everyone,

w.

PS—when commenting please quote the exact words that you are discussing. That way we can all understand both who and what you are referring to.

Math Notes: Here is the calculation of the conversion of zettajoules to degrees of warming of the top two km of the ocean. I work in the computer language R, and these are the actual calculations. Everything after a hashmark (#) in a line is a comment.

    library(marelac) # assumed source of sw_cp(); its pressure argument p is in bar
    library(gsw)     # provides gsw_rho(); its pressure argument is in dbar

    heatcapacity=sw_cp(t=4,p=100) # heat capacity, with temperature and pressure at 1000 m depth
    print(paste(round(heatcapacity), "joules/kg/°C"))
    # [1] "3958 joules/kg/°C"

    seadensity=gsw_rho(35,4,1000) # density, with temperature and pressure at 1000 m depth
    print(paste(round(seadensity), "kg/cubic metre"))
    # [1] "1032 kg/cubic metre"

    seavolume=1.4e9*1e9 # cubic km * 1e9 to convert to cubic metres
    print(paste(round(seavolume), "cubic metres, per levitus"))
    # [1] "1.4e+18 cubic metres, per levitus"

    fractionto2000m=0.46 # fraction of ocean above 2000 m depth per Levitus
    zjoulesperdeg=seavolume*fractionto2000m*seadensity*heatcapacity/1e21
    print(paste(round(zjoulesperdeg), "zettajoules to heat 2 km seawater by 1°C"))
    # [1] "2631 zettajoules to heat 2 km seawater by 1°C"

    z1955error = 95 # 1955 error in ZJ
    print(paste(round(z1955error/zjoulesperdeg,2),"°C 1955 error"))
    # [1] "0.04 °C 1955 error"

    z2018error = 9 # 2018 error in ZJ
    print(paste(round(z2018error/zjoulesperdeg,3),"°C 2018 error"))
    # [1] "0.003 °C 2018 error"

    yr2018change = 8 # 2017 to 2018 change in ZJ
    print(paste(round(yr2018change/zjoulesperdeg,3),"°C change 2017 - 2018"))
    # [1] "0.003 °C change 2017 - 2018"

The first chart shows me that the CO2 concentration is driven by the ocean temperature. There is a terrible correlation between the CO2 concentration and the temperature of the atmosphere, but there is a very good correlation with the ocean temperature. As the oceans contained a huge amount of CO2, what else could we expect, based on sound knowledge of vapour pressure?

The simplest explanation for that remarkable correlation is that as the oceans heat up, the CO2 out-gases and the atmospheric concentration increases.

Because a mechanism for heating the oceans using atmospheric CO2 as a driver is missing, we are left with the obvious: as the sun heats the oceans (net) the CO2 emerges, as expected. End of short story.

” a mechanism for heating the oceans using atmospheric CO2 as a driver is missing”

…

False.

…

The mechanism is clear and obvious.

…

The sun heats the ocean during the day.

…

CO2 retards the cooling at night.

…

Net effect is the oceans warm.

Please provide links to papers that show how and how much “CO2 retards the cooling at night”.

Thanks

https://www.nature.com/articles/nature14240

…

Please don’t ask me to explain to you how down welling IR works, as it is basic radiative physics.

You linked to a paywalled article. Bad form. Since I can’t read it nothing is proven, but just from the abstract I would say, 1) insufficient data sample, and 2) correlation does not prove causation! Try again? Furthermore, a closer look at that “correlation” would reveal that changes in atmospheric CO2 lag changes in temperature by ~9-10 months, and the future can’t cause the past, so not even a correlation to brag about!

Don’t demand things on a golden plate. The rest of us (Willis included, I guess) do take the GHE as a fact, and are bored to death by people demanding to be hand-fed ‘proof’ of something they’re not going to accept anyway. It’s just a waste of everybody’s time.

Red94ViperRT10 January 11, 2019 at 8:00 pm

You can get most any paywalled article from Sci-Hub …

w.

@Hugs January 11, 2019 at 8:24 pm

I do take the GHE as a fact, effected by ALL the greenhouse gasses. Out of all those, there has never been an experiment to show which one controls that temperature rise, if any. It might be like my insurance premiums, where either my children are covered or they’re not, I can’t have it part-ways. And there has been no proof of the TCS or ECS from a change in the concentration of atmospheric CO2, I believe there is one, but it could vary all the way to <0, once all the feedbacks have their feedback.

@Willis, BTW, thanks for the Sci-Hub link, I'll bookmark it!

We are all, or mostly adults here, and should be able to do our own homework.

It is not advancing the conversation to demand that everyone justify every statement, when most of these are things we discuss at length over and over again.

I agree it is tiresome, although it is also quite annoying that some people act like the long discussions we all just had on the same topic, several times in the past month, never happened.

Steve Heins and Steve O, this means you.

But that is how warmistas and their apologists work.

Just pretend like all previous discussions never happened.

They want to wear us down by repetition.

Enough to just link to one of the many recent articles and long discussion on the topic.

“You linked to a paywalled article”

No it’s not …

http://asl.umbc.edu/pub/chepplew/journals/nature14240_v519_Feldman_CO2.pdf

“Basic radiative physics” LOL

It is unfortunate that the real world and real physics cannot fit into climate alarmists’ models or heads.

1. Earth is not a blackbody, but has albedo at surface, upper and lower faces of tropospheric clouds, and both faces of stratospheric clouds. If you can’t model clouds, you can’t model climate.

2. Core mechanism of GHE conjecture and CAGW alarmism is a violation of known physics. After actual scientists repeatedly pointed out photons cannot be trapped, climate “scientists” invented the novel physical phenomenon of “back radiation,” which is the downward-biased re-radiation of upwelling LWIR from the surface due to CO2 absorption and re-emission in the troposphere. Back radiation only appears in models, not in actual validated observations.

3. Photons absorbed by any gas molecule are a billion times more likely to be thermalized into rotational, vibrational, and translational degrees of freedom whose phonon energy quanta are quickly exchanged via super-elastic collisions with other molecules long before they can be re-radiated.

4. Quantum dynamics dictates that any re-emission of energy as a photon is omni-directional, with no bias toward the surface.

5. Convection and conduction rates in the atmosphere are high enough to easily carry away upward any mysterious energy bloom that might appear at any altitude in the troposphere. The actual equations physicists and meteorologists use for gas energy transfer ignore radiation because its contribution is insignificant.

“Basic physics” is the antidote to “climate science” Lysenkoism.

Your reference examines the Southern Great Plains and the North Slope of Alaska, i.e., it’s a land-based study, and dirt doesn’t circulate.

In oceans, some of the warmed water will mix somewhat during the course of a day. Thus, at night, IR radiation from the ocean will leave not just from the immediate surface, but from the warmed layer, and so will have to traverse water, in the denser fluid rather than gaseous phase. The IR spectrum is likely to be different compared with ground-based emissions.

The IR release mechanism, and the “basic radiation physics”, is different enough to call the relevance of your reference into question. Perhaps you can enlighten us by citing a more appropriate ocean-based study.

So I looked at the paper in your link (I hope Anthony Barton linked to the same paper you referenced)… I’m still scratching my head. First off, it doesn’t really read like a research paper as compiled by post-doctoral researchers, or even a doctoral researcher, more like a report compiled by a technician or salesperson attempting to demonstrate his piece of equipment makes the measurements he claims it can make. Nonetheless, best I can gather through the jargon, the researchers collected one “… sample clear-sky measured AERI spectrum…” from 2001 (apparently this was the paper’s closest brush with actual data, but they managed to evade it, man that was close!), constructed and applied filters to eliminate all other effects except the CO2, then used another model, not even constructed by them (I don’t think), to estimate what the radiative forcing should be from that point through 2010 starting from that 2001 sample reading, given the changes in CO2 as reported by “…CarbonTracker 2011 (CT2011)20, which is a greenhouse gas assimilation system based on measurements and modelled [sic] emission and transport…”, doesn’t say where the “measurements” were taken nor when, but not an actual reading taken in 2010/11 at the exact same location as the 2001 radiation reading, nor do we have a 2001 CO2 reading to compare it against, not in the paper anyway. And I don’t see a 2010 radiation reading taken at the same location as the 2001 reading. And never at any time does the paper ever even make an attempt to connect the changes in CO2 or the (modeled) changes in radiative forcing to the actual temperature. All I can see is models all the way down. And even from that sample reading, I’m guessing you’re effectively measuring the temperature of the air. 
(The only way I can picture to measure the insulation value of air would be to place an emitter on the ground (or in space) that emits all the known wavelengths that can transport heat, and a sensor in space (or vice versa) and take a clear-sky nighttime measurement and see how much emission doesn’t make it to the sensor. That would be a valid experiment!)

Ike Kiefer

Partially true – but you overstate your case. R Essenhigh includes the small contribution to gas absorption/radiation in atmospheric lapse rate.

Essenhigh, R.H., 2006. Prediction of the standard atmosphere profiles of temperature, pressure, and density with height for the lower atmosphere by solution of the (S− S) integral equations of transfer and evaluation of the potential for profile perturbation by combustion emissions. Energy & fuels, 20(3), pp.1057-1067.

Ferenc Miskolczi exhaustively analyses molecular absorption/radiation for all greenhouse species across most frequencies with the line-by-line model HARTCODE

eg see The Greenhouse Effect and the Infrared Radiative Structure of the Earth’s Atmosphere

In my January 11, 2019 at 9:25 pm comment, rather than “…the TCS or ECS … could vary all the way to <0…" I meant to say …could fall in a range all the way to <0…

Ike Kiefer – January 12, 2019 at 7:59 am

A fact of science, …… but a fact that the proponents of AGW/CAGW climate change REFUSE to consider or admit to because it is CONTRARY to their inferred claims that all IR energy emitted from the earth’s surface to GHGs in the atmosphere, …… is either “trapped” in said atmospheric GHGs ….. or is emitted directly back to the earth’s surface.

“Downwelling IR”

Has never been documented, or replicated. Don’t state conjecture as facts.

Strike one.

IR emissivity is a 360 sphere. What percentage “downwells” and what prevents “upwelling”? Your search for the answer will disprove your beliefs.

Strike two.

Since a greenhouse works by preventing convection, generally with a glass barrier, and “downwelling IR” does not prevent convection, please explain the [non-existent] mechanism by which a trace gas will prevent atmospheric convection?

(It doesn’t).

Strike three.

The last point is very, very important to understand.

For example, I suggest you go to Nevada, and visit the desert during the day when it is 90 degrees F, then stay there when the sun goes down to see how cold it gets.

…

After you do that go to Atlanta GA, during the day when it’s 90 degrees F, then stay there when the sun goes down to see how cold it gets.

…

You’ll learn to appreciate the green-house gas effect of H2O

…

Same thing happens with CO2 to a lesser extent.

Good point.

The driest desert is Antarctica.

No warming.

Case closed.

NEXT!

Mr. Hein: Thanks for keeping it so very simple. So while you were in the desert, without the interference of water vapor, you were able to precisely observe and measure the GHG effect of CO2? Can you provide the results? We’d all be very keen to have you describe your method, too! Surely you did this before walking to Atlanta (surely you didn’t burn fossil fuel for travel?) for stage 2 of your experiment? You know, where you were able to observe and measure the “lesser extent” you mention. Being a curious sort, you just had to know the numbers on this “extent”, right? Lookin’ fwd to seeing your data, so we can put this all to rest.

“So while you were in the desert, without the interference of water vapor, you were able to precisely observe and measure the GHG effect of CO2? Can you provide the results?”

He did.

In the paper he linked up-thread ……

http://asl.umbc.edu/pub/chepplew/journals/nature14240_v519_Feldman_CO2.pdf

Anthony

your paper is supported by which actual measurements that you made yourself?

How much warming is there in your own backyard? Did you check and can you give me a figure [that I can verify]?

like I said,

there is no warming here, man made, or otherwise,

click on my name to read my reports on that.

@P.C.

If someone wishes to challenge a reviewed and published scientific paper, that person is expected to publish his/her own paper, giving reasons such as experimental data that contradict the original paper, demonstrating error in mathematical calculations, or showing obvious error in logic. Saying “I don’t agree with what you say and don’t believe in your conclusion” is woefully insufficient in science.

“like I said,

there is no warming here, man made, or otherwise,”

If you say so Henry.

However the study observationally says otherwise.

And I don’t have to do it myself to verify.

I’m not a conspiracy theorist and believe researchers at their word.

Anthony,

it is rather foolish to trust the results of others. Not very scientific. That is why the gap between sceptics and ‘believers’ is getting bigger and bigger. At the very least, you could have done some verification?

e.g. how much warmer did it get in the place where you stay over the past 40 years? Let me know what result you got.

Click on my name and follow the links to figure out how you can easily determine the trend in your own neighborhood.

“If someone wishes to challenge a reviewed and published scientific paper, that person is expected to publish his/her own paper, giving reasons such as experimental data that contradict the original paper, demonstrating error in mathematical calculations, or showing obvious error in logic.”

Hey Don, I suggest you ask Dr. Soon, not to mention anyone else who has failed to go along with the warmista orthodoxy, just exactly what happens when someone tries to do just that?

Are you naïve or a liar or both?

I was taught in the sixth grade that the reason a 90 degree day in a humid climate zone is followed by a warmer night than would be the case in a dry climate is that the dew point of humid air is higher than the dew point of dry air. On a 90 degree day in Atlanta with a relative humidity of 57% the dew point would be 72.5 degrees. At this temperature the water vapor in the air will begin to condense, thus releasing the latent heat of evaporation contained in the water vapor. This slows the rate that temperature can fall relative to that which is seen in a dry climate.

In Death Valley, a 90 degree day at a relative humidity of 10% will have a dew point of 26.2 degrees, and will not “benefit” from the latent heat of the water vapor condensation until the temperature falls to that level.

With all other things equal (or ignored) (UHI cloud cover etc), the location with the lower dew point will experience the coolest night.

Is this no longer true?
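The two dew points quoted in the comment above can be checked with the Magnus approximation. The R sketch below uses one commonly cited pair of Magnus coefficients (17.62 and 243.12 °C). The commenter does not say which formula was used, so the function name and coefficients here are assumptions, and small differences in the last digit are expected.

```r
# Magnus approximation for dew point, with Fahrenheit in and out.
# The coefficients are one commonly used set, not taken from the comment.
dew_point_f <- function(temp_f, rel_humidity_pct) {
  b_coef <- 17.62
  c_coef <- 243.12                      # deg C
  temp_c <- (temp_f - 32) * 5 / 9       # convert to Celsius
  gamma <- log(rel_humidity_pct / 100) + b_coef * temp_c / (c_coef + temp_c)
  dew_c <- c_coef * gamma / (b_coef - gamma)
  dew_c * 9 / 5 + 32                    # convert back to Fahrenheit
}

print(round(dew_point_f(90, 57), 1))  # humid case, Atlanta example
print(round(dew_point_f(90, 10), 1))  # dry case, Death Valley example
```

Both results land within a few tenths of a degree of the 72.5 and 26.2 degree figures given in the comment.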

You’re conflating latent surface heat with gasses acting as a “greenhouse gas”, a barrier, when they actually conduct & convect rather than trap.

That desert cools faster due to the surrounding environment being unable to hold latent surface heat. Comparing that to a massive concrete & asphalt complex with heat generating motors, cars, people, et cetera, it is incorrect to explain why it “stays warmer” by invoking water vapor “trapping heat”.

The greatest con pulled on humanity is unassailable belief in “greenhouse gasses”.

And do not forget to specifically address how the 402 molecules of CO₂ out of every ten thousand atmospheric molecules are somehow more powerful or efficient within their minuscule infrared range than water vapor is over its vastly greater atmospheric levels and a very broad range of interactive infrared frequencies.

Actually, I believe there are currently 4 molecules of CO2 per ten thousand (0.04%), not 402. This merely reinforces your point, of course.

You are, of course, absolutely accurate, Graemethecat!

My error in not correcting the calculation when rewriting from parts per million to parts per ten thousand.

Thank you for correcting my oversight and misstatement!

“CO2 retards the cooling at night”

This is the famous “radiative forcing” myth which is coded into all of the computer models. The ones that don’t actually work, predicting 2x actual warming, not predicting the pause, etc. The atmosphere actually pumps heat about through convection, as Willis has discussed many times.

If you want to see the effect of back radiation, it is easy to calculate…

Place a 1 sq cm of foil on a substrate such as Styrofoam, facing the sky at night.

Place another, with Styrofoam on both sides, at the same height.

Measure the temperature difference between them.

Guess what? The one facing the sky will be COOLER, because it is radiating away from billions of molecules, while only a few ‘photons’ from CO2 will affect that cooling. If you can’t measure it, it ain’t there.

The obvious point is that the entire earth’s surface is radiating at all times, but more during the day. The ‘back radiation’ cannot affect daytime temperatures because it is overwhelmed by the sun. Night time radiation is at a lower rate than daytime radiation, but it is STILL dependent on the surface temperature. If the surface temperature is somehow ‘heated’ by back radiation, the RATE of radiation would increase to eliminate it. It is affected by the 4th power of temperature – an almost unbelievable thermostat. You cannot trap heat – if you try, radiation will increase to offset it.

@John Shotsky January 12, 2019 at 6:24 am

No, actually I don’t see the effect of back radiation. I see the effect of insulation. But mostly I don’t see the effect of “back radiation” because it doesn’t exist, it’s a figment of Mr. Trenberth’s fevered imagination.

Most ocean cooling is via evaporation and convection.

What is your scientific definition of “most” ??

…

Is it 95%?

…

Is it 80%?

…

or maybe 73%?

…

PS, convection and evaporation does not remove ocean heat into space, all it does is move the heat from point A to point B, and not off-planet.

See Trenberth et al 2009.

Most is usually more than 50 per cent.

Looking at how badly water freezes up, but how seldom it rises above 30C, I really think the thermostat is two ended. At the high end, evaporation and cloud formation stop further warming. At the low end, latent heat of freezing, and insulating, floating ice prevents the Earth becoming a total snowball.

However, the air above water may become really cold, and much of the measured global warming is due to less intensive winter night cold above the frozen ground. Is that bad? Maybe, but not as bad as the cold itself.

@Steve Heins

Whenever considering ocean temperature and behaviour, one should always have in mind the below plot:

(a) is the night-time temperature, and (b) is the daytime temperature.

As you will note, the top half millimetre of the ocean is always cooler, both day and night. This is notwithstanding that 95% of all DWLWIR is fully absorbed in the top 10 MICRONS (may be even less given the omni-directional nature of DWLWIR) of the ocean.

Why is the top half millimetre cooler? The obvious answer is evaporation. Lick your hand and blow on it and you will feel the cooling effect of evaporation.

One can see that there is no heating of the ocean by DWLWIR since at night the only source of energy in is DWLWIR and the ocean temperature is constant between about the top half millimetre and about 5 metres.

Contrast that with the daytime where one can see solar energy at work. Solar is not absorbed in the top MICRONS, and one can see that the top half millimetre (where solar begins to get absorbed) is warmer than the 5 metre level and the heat is getting less and less as one descends from the top half millimetre to 5 metres.

Night-time temperatures above the ocean are determined by the temperature of the ocean itself. If there is any impeding of temperature loss, it would appear that this is due to water vapour immediately above the oceans, and not by CO2.

This planet is a water world and it is water that dominates everything.

“What is your scientific definition of “most” ”

What is your definition of a scientific definition, as opposed to say…oh, I don’t know, a dictionary definition?

I wonder, do you use the phrase “scientific fact” much?

Funny how someone can point out how water behaves in the air in deserts, but then forget all of that and make up different selective attention fact set a minute later when talking about the ocean.

richard:

“One can see that there is no heating of the ocean by DWLWIR …..”

The process of ocean warming is via reduction of cooling ….

http://images.remss.com/papers/rsspubs/Gentemann_JGR_2008_thermal_variability.pdf

An explanation here from Nick Stokes ….

https://moyhu.blogspot.com/2010/10/can-downwelling-infrared-warm-ocean.html

The skin layer is still warmer than without the (extra) GHE and the transference of heat to the atmosphere is via that layer. As heat flow depends on DeltaT the warmer water just below does not do that as well with a warmer skin.

“convection and evaporation does not remove ocean heat into space, all it does is move the heat from point A to point B, and not off-planet.”

The sun heats the ocean. The warm water evaporates and warms the air.

The warm moist air rises, not rises, shoots up, 10 to 15 km, where the water vapor condenses and releases heat energy which is either conducted to adjacent gas molecules or is radiated.

More than half of the radiation is into space because the altitude at which it occurs is well above the surface, and because the cloud formation creates a high albedo surface underneath the cloud top. When you stand under the cloud and look up it is dark. When you fly over the cloud it is light. The colored graphic displays of satellite infrared images of cloud tops show the big high ones as red, because they are warmer than the surrounding air.

The effects are dramatic, we call them storms.

The red clouds are warmer than surrounding air that they rose through, or they would not have risen to where they are.

But it is incorrect that the reds are the warmest…those are the coldest cloud tops…colder because they are higher, and all air cools as it rises.

The clouds are radiating far more than the surrounding air though…even though if the air comprising the clouds is no longer rising, it is because they have reached a level where they rise no more since they are the same temp…because the water vapor and ice crystals in clouds actively radiate in many wavelengths, and the surrounding dry air does not. So the clouds and the air around them are the same temp, but the dry air is transparent and invisible and the clouds are not.

Air can only rise if it is warmer than the air it is rising into. Once that condition no longer is the case, it stops rising, with the exception of air which has moved rapidly and acquired enough momentum to overshoot.

Here is a satellite picture with a temperature scale…note the scale is reversed from the way such scales are often presented…colder, and redder, is on the right:

http://www.recmod.com/hurricane/hurricane2005/katrina/satellite/irloop-8-28b.jpg

BTW, Walter, I am not disagreeing with the point you are making, just this detail.

I do not think it undermines your main point though

Menicholas: Thank you. You are correct. My main point is that the heat energy those cloud particles have given up has been radiated towards outer space.

http://applet-magic.com/cloudblanket.htm

Clouds overwhelm the Downward Infrared Radiation (DWIR) produced by CO2. At night with and without clouds, the temperature difference can be as much as 11C. The amount of warming provided by DWIR from CO2 is negligible but is a real quantity. We give this as the average amount of DWIR due to CO2 and H2O or some other cause of the DWIR. Now we can convert it to a temperature increase and call this Tcdiox. The pyrgeometers assume an emission coefficient of 1 for CO2. CO2 is NOT a blackbody. Clouds contribute 85% of the DWIR. GHGs contribute 15%. See the analysis in the link. The IR that hits clouds does not get absorbed. Instead it gets reflected. When IR gets absorbed by GHGs it gets reemitted either on its own or via collisions with N2 and O2. In both cases, the emitted IR is weaker than the absorbed IR. Don’t forget that the IR from reradiated CO2 is emitted in all directions. Therefore a little less than 50% of the absorbed IR by the CO2 gets reemitted downward to the earth surface. Since CO2 is not transitory like clouds or water vapour, it remains well mixed at all times. Therefore since the earth is always giving off IR (probably a maximum at 5 pm every day), the so called greenhouse effect (not really but the term is always used) is always present and there will always be some backward downward IR from the atmosphere.

When there aren’t clouds, there is still DWIR, which causes a slight warming. We have an indication of what this is because of the measured temperature increase of 0.65 C from 1950 to 2018. This slight warming is for reasons other than just clouds, therefore it is happening all the time. Therefore on a particular night that has the maximum effect, you have 11 C + Tcdiox. We can put a number to Tcdiox. It may change over the years as CO2 increases in the atmosphere. At the present time with 409 ppm CO2, the global temperature is now 0.65 C higher than it was in 1950, the year when mankind started to put significant amounts of CO2 into the air. So at a maximum Tcdiox = 0.65C. We don’t know the exact cause of Tcdiox, whether it is all H2O caused or both H2O and CO2 or the sun or something else, but we do know the rate of warming. This analysis will assume that CO2 and H2O are the only possible causes. That assumption will pacify the alarmists because they say there is no other cause worth mentioning. They like to forget about water vapour but in any average local temperature calculation you can’t forget about water vapour unless it is a desert.

A proper calculation of the mean physical temperature of a spherical body requires an explicit integration of the Stefan-Boltzmann equation over the entire planet surface. This means first taking the 4th root of the absorbed solar flux at every point on the planet and then doing the same thing for the outgoing flux at Top of atmosphere from each of these points that you measured from the solar side and subtract each point flux and then turn each point result into a temperature field and then average the resulting temperature field across the entire globe. This gets around the Holder inequality problem when calculating temperatures from fluxes on a global spherical body. However in this analysis we are simply taking averages applied to one local situation because we are not after the exact effect of CO2 but only its maximum effect.

In any case Tcdiox represents the real temperature increase over last 68 years. You have to add Tcdiox to the overall temp difference of 11 to get the maximum temperature difference of clouds, H2O and CO2 . So the maximum effect of any temperature changes caused by clouds, water vapour, or CO2 on a cloudy night is 11.65C. We will ignore methane and any other GHG except water vapour.

So from the above URL link clouds represent 85% of the total temperature effect, so clouds have a maximum temperature effect of .85 * 11.65 C = 9.90 C. That leaves 1.75 C for the water vapour and CO2. CO2 will have relatively more of an effect in deserts than it will in wet areas but still can never go beyond this 1.75 C. Since the desert areas are 33% of 30% (land vs oceans) = 10% of earth’s surface, the CO2 has a maximum effect of 10% of 1.75 + 90% of Twet. We define Twet as the CO2 temperature effect over all the world’s oceans and the non-desert areas of land. There is an argument for less IR being radiated from the world’s oceans than from land but we will ignore that for the purpose of maximizing the effect of CO2 to keep the alarmists happy for now. So CO2 has a maximum effect of 0.175 C + (.9 * Twet).

So all we have to do is calculate Twet.

Reflected IR from clouds is not weaker. Water vapour is in the air and in clouds. Even without clouds, water vapour is in the air. No one knows the ratio of the amount of water vapour that has now condensed to water/ice in the clouds compared to the total amount of water vapour/H2O in the atmosphere but the ratio can’t be very large. Even though clouds cover on average 60% of the lower layers of the troposphere, since the troposphere is approximately 8.14 x 10^18 m^3 in volume, the total cloud volume in relation must be small. Certainly not more than 5%. H2O is a GHG. Water vapour outnumbers CO2 by a factor of 25 to 1 assuming 1% water vapour. So of the original 15% contribution by GHGs to the DWIR, we have .15 x .04 = 0.006 or 0.6% to account for CO2. Now we have to apply an adjustment factor to account for the fact that some water vapour at any one time is condensed into the clouds. So add 5% onto the 0.006 and we get 0.0063 or 0.63%. CO2 therefore contributes 0.63% of the DWIR in non-deserts. We will neglect the fact that the IR emitted downward from the CO2 is a little weaker than the IR that is reflected by the clouds. Since, as in the above, a cloudy night can make the temperature 11C warmer than a clear sky night, CO2 or Twet contributes a maximum of 0.0063 * 1.75 C = 0.011 C.

Therefore, since Twet = 0.011 C, we have in the above equation CO2 max effect = 0.175 C + (.9 * 0.011 C) = ~0.185 C. As I said before, this will increase as the level of CO2 increases, but we have had 68 years of heavy fossil fuel burning and this is the absolute maximum of the effect of CO2 on global temperature.

So how would any average global temperature increase by 7C or even 2C, if the maximum temperature warming effect of CO2 today from DWIR is only 0.185 C? This means that the effect of clouds = 85%, the effect of water vapour = 13.5 % and the effect of CO2 = 1.5%.

Sure, if we quadruple the CO2 in the air which at the present rate of increase would take 278 years, we would increase the effect of CO2 (if it is a linear effect) to 4 X 0.185C = 0.74 C Whoopedy doo!!!!!!!!!!!!!!!!!!!!!!!!!!
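The arithmetic in the comment above can be retraced step by step. The sketch below only reproduces the commenter's own numbers in the order given; it does not check whether any of the assumed shares or temperature figures hold. The function and variable names are made up for illustration.

```python
# Retraces the comment's arithmetic. Every input is the commenter's stated
# assumption, not a measured or verified quantity.

def co2_max_effect():
    cloudy_night_delta_c = 11.0   # claimed clear-vs-cloudy night difference
    t_cdiox_c = 0.65              # claimed warming from 1950 to 2018
    cloud_share = 0.85            # claimed cloud share of DWIR
    ghg_share = 0.15              # claimed GHG share of DWIR
    co2_per_h2o = 0.04            # 1 part CO2 per 25 parts water vapour
    condensate_adjustment = 1.05  # the "add 5%" step for water held in clouds
    desert_fraction = 0.10        # 33% of the 30% land area, rounded

    total_c = cloudy_night_delta_c + t_cdiox_c           # 11.65 C
    cloud_effect_c = cloud_share * total_c               # about 9.90 C
    # The comment rounds the remainder to 1.75 C before continuing.
    remainder_c = round(total_c - cloud_effect_c, 2)

    co2_share_wet = ghg_share * co2_per_h2o * condensate_adjustment  # 0.0063
    t_wet_c = co2_share_wet * remainder_c                            # about 0.011 C

    co2_max_c = desert_fraction * remainder_c + (1 - desert_fraction) * t_wet_c
    return {
        "cloud_effect": cloud_effect_c,
        "remainder": remainder_c,
        "t_wet": t_wet_c,
        "co2_max": co2_max_c,
        "quadrupled": 4 * co2_max_c,  # the comment's linear-scaling assumption
    }

result = co2_max_effect()
for name, value in result.items():
    print(f"{name}: {value:.4f} C")
```

Laying the steps out this way makes it easy to see which inputs drive the final figure: the 0.175 C desert term dominates, so the result depends almost entirely on the assumed 10% desert fraction and the 1.75 C remainder.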

Alan,

My focus is on IR exchange to and from the ‘bottom’ of clouds. I have spent considerable time pondering this. Maybe you can help me out here.

With regards to your quote “… The IR that hits clouds does not get absorbed. Instead it gets reflected. When IR gets absorbed by GHG’s it gets reemitted either on its own or via collisions with N2 and O2. In both cases, the emitted IR is weaker than the absorbed IR. …”

My reasoning is that up-welling IR from the ground (consider for discussion that the ground is warmer than the cloud base) will be absorbed and a portion re-emitted downwards at a lower emission temperature and thus energy level. Now the cloud base is not like a thin sheet of foil or micro boundary layer but is in fact a ‘spongy’ semi-transparent boundary layer of considerable thickness (possibly a couple of hundred feet thick). Some IR coming up from below may not be captured and re-emitted until well into the depth of this boundary layer, at which point the re-emission could send it deeper into the boundary layer, or if sent downward it could be captured by the water condensate that it missed on the way up and its travel redirected yet again, with some continuing deeper (upwards) into the cloud. This leads me to believe that clouds are absorbing some significant portion of the up-welling IR. Also, if the general cloud emission temperature is less than the ground emission temperature, it would seem reasonable to think that some energy was retained in the cloud even by the IR that was re-emitted downwards and back to the ground.

In the real world, where I can climb or drive up a mountain into the fog, the density of the fog increases over an elevation change of a few hundred feet until visibility becomes very poor. I would assume that cloud bases normally behave the same, but I don’t have a balloon to prove my point.

The thermal mass of the oceans is in the region of 1100 times the thermal mass of the atmosphere…maybe you could explain how an increase from 350ppm to 400ppm in CO2 in the atmosphere could cause a detectable increase in ocean temperatures?

I know it’s only basic physics…not your advanced ‘downwelling radiation’ science…but it would be fascinating to hear your attempt at the math.

Thanks.

….thermal mass!? How much does water expand when going from liquid to vapor?…

One mole of any gas at STP occupies a volume of 22.4 liters.

STP is one atmosphere of pressure and 273K, or about 1013 millibars and 0.0 C

Liquid water occupies roughly 1 cubic centimeter per gram, so one mole of water (18 grams) occupies about 18 cc.

18 cubic centimeters is 0.018 liters.
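The figures above answer the expansion question directly. As a rough sketch, treating the vapor as an ideal gas at STP (an idealization, since water vapor at one atmosphere and 0 C would actually condense), the ratio works out as follows:

```python
# Idealized liquid-to-vapor expansion ratio for water, using the
# two molar volumes quoted in the comment.
MOLAR_VOLUME_GAS_L = 22.4      # one mole of ideal gas at STP, in liters
MOLAR_VOLUME_LIQUID_L = 0.018  # one mole of liquid water (18 cc), in liters

expansion_ratio = MOLAR_VOLUME_GAS_L / MOLAR_VOLUME_LIQUID_L
print(f"Volume increases by a factor of about {expansion_ratio:.0f}")
```

So on these numbers a given mass of water takes up on the order of twelve hundred times more volume as vapor than as liquid.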

Every day in the tropics the sun heats the ocean water by several (or more) degrees triggering emergent phenomena called clouds and thunderstorms. Every day, these emergent responses leave the water cooler than the triggering temperature. Cooling continues overnight. The warmer the water, the earlier in the day the clouds and thunderstorms form (clouds block sunlight and reflect it into space…storms drive humid air into high altitudes where it condenses radiating heat into space — CO2 assists in this high altitude radiative escape). This daily energy transfer activity is several orders of magnitude greater than the local effects of CO2.

And it is not entirely clear how much warming is done at ocean surfaces from downwelling IR. Only the upper few hundred microns absorb this IR…which immediately causes some surface evaporative cooling. So downwelling IR might actually cool the oceans.

Things are not nearly as simple as your Nature article would have it.

Climate science is not rocket science…IT’S AT LEAST AN ORDER OF MAGNITUDE MORE COMPLICATED than rocket science.

Steve Heins, Clouds retard the cooling at night.

What’s your point?

CO2 and clouds do the same thing.

That climate science is not nearly as simple as most AGW scientists admit publicly.

It is certainly not simple enough to model accurately without at least 3 orders of magnitude more (good) DATA and 2 orders of magnitude more spatial resolution to the data and a whole lot more (who knows how much) computing power.

You do not know that downwelling IR heats the water…at all. Evaporation requires lots of energy. IR penetrates water hardly at all (most is reflected…mirror like). Lots of energy into a little bit of water causes evaporation…which causes cooling of the underlying body.

Broad spectrum Sunlight certainly penetrates and heats water…orders of magnitude more than the downwelling IR does. So, Things that block sunlight have way more effect on ocean temperatures than downwelling IR.

IT’S NOT AS SIMPLE AS CALCULATING JOULES OF DOWNWELLING IR HEATING UP WATER LIKE A STOVETOP BURNER.

In models, emergent phenomena like clouds and storms are treated as averaged linear events and these phenomena are FAR FROM THAT. They respond to the temperature of the water and act as heat engines producing powerful negative feedbacks.

None of the climate models can or do “model” these ubiquitous emergent phenomena. CO2 effects are real of course but puny compared to these (and many other) governing emergent phenomena.

Doc,

Water absorbs both in the UV and the IR. There might be some IR warming going down up to a meter or so, but the effect of the UV is much more pronounced as it warms the top of the surface easily to boiling point, causing evaporation, mostly. The subsequent condensation of water vapor is what keeps the temperature on earth so much more equal; be happy about that!

Hence, the increase or decrease in UV coming to earth is what is causing warming or cooling, respectively; do the measurement!!

[sorry I don’t have the equipment]

Over the Intertropical oceans there are virtually no clouds overnight. “SO THERE”.

AGAIN…my point was that climate is NOT simple enough to point to one factor (like ocean heating from downwelling radiation) and say “SO THERE…end of discussion”.

IR doesn’t heat the ocean and neither does the atmosphere; the atmosphere is heated by the ocean.

If the air above the ocean is warmer than the water, heat will flow from the air into the water.

Yes, by conduction. Gases are quite bad conductors, so the flow will be minimal, even for a large temperature differential.

Yes, air warmer than the ocean will cause the ocean to warm – a little. But the opposite is also true, and that happens daily, monthly, yearly. But the ocean will also be warmed by radiation from the sun, and it will radiate more due to that warming. So, what is the point of the comment? Heat goes in, heat goes out, and it has always been that way, and always will be. There is nothing of interest in what has always been, and always will be. Unless one does not understand that is how it is.

Global average air temperature is lower than global average water temperature. So besides the fact that very little heat transfers from the atmosphere to the ocean, what does is lowering the temperature on average.

If a given area of ocean emits 20 units of energy in the IR band, and the atmosphere returns 1 unit of energy via down welling IR, the net emission rate of the ocean is 19 units. ……..Down welling IR retards cooling.

That’s a big if…there are billions of radiating surface molecules for EVERY radiating CO2 molecule that is earth directed. Half are not earth-directed. ALL earth radiating molecules are space-bound. People think of CO2 molecules as if they were all street lights with shields to prevent outward radiation. Think of it as ½ the Co2 concentration is earth directed…yep…200ppm…

So John, what you are saying is that there is an effective IR mirror of 200 ppm, that reflects all outbound IR back towards the surface.

…

Thank you, you’ve just confirmed that the CO2 retards cooling.

In general, air by itself is a pretty good insulator, if it’s static, and you don’t need to invoke any mythical DWIR or any other such mumbo jumbo. That’s why you fill a wall cavity with insulation, to keep the air as static as possible, keep it from moving around (the insulation, if you remove all the air from it, is not a very good insulator by itself). The atmosphere is NOT static, its specific composition notwithstanding!!! Case closed.

If all of the increase in atmospheric co2 could be explained by man made co2, then the retained additional warming can not exceed 0.00012 provided that co2 is a perfect insulator . Do you really expect that to warm the oceans?

Steve, you do know that nitrogen is a green house gas too. It has a heat value as well. Do you know what the difference is between the heat retaining value of nitrogen as opposed to co2? The amount of nitrogen is 78% compared to 0.042%. At 300 K, the specific heat of N is 1.040 and of co2 is 0.846. How much co2 do you think it would take to overcome the specific heat of nitrogen? You’re thinking there is this enormous amount of co2 and there’s not. If there is any warming by co2, it is extremely small. That is, if all the increase in atmospheric co2 is man made, which I have my doubts… strong ones.

There is no correlation whatsoever shown in the first graph. The illusion comes from scaling the ocean heat flux. To tell if there is a correlation you generate a graph of CO2 in one axis to heat flux in the other axis and generate a least square trend. The first graph is Powerpoint bullshit, not science.
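The test described above, plotting CO2 on one axis against ocean heat on the other and fitting a least-squares line, can be sketched in a few lines. The function below is a generic ordinary least-squares fit; the sample numbers in the usage lines are placeholders for illustration only, not CO2 or ocean heat observations.

```python
from math import sqrt

def least_squares_fit(xs, ys):
    """Ordinary least-squares line y = slope * x + intercept, plus Pearson r."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    if sxx == 0 or syy == 0:
        raise ValueError("a constant series has no defined correlation")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / sqrt(sxx * syy)
    return slope, intercept, r

# Placeholder values only: substitute paired annual CO2 and heat-content series.
placeholder_x = [1.0, 2.0, 3.0, 4.0, 5.0]
placeholder_y = [2.1, 3.9, 6.2, 7.8, 10.1]
slope, intercept, r = least_squares_fit(placeholder_x, placeholder_y)
print(f"slope={slope:.3f} intercept={intercept:.3f} r={r:.3f}")
```

Unlike two curves overlaid on independently scaled axes, the slope and r from a fit like this do not change when either axis is rescaled for display. Note that two series which both trend upward over time will show a high r regardless of any causal link.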

Agreed.

If one wanted to show something else the scale could be changed and alter the appearance completely.

Zeke is the Jim Acosta of climate.

I agree. Willis is far too kind to call him one of the good guys. He’s just another obfuscating, fear mongering, climate con artist and the tweet referenced in Willis’ article proves it. It’s his latest attempt to intentionally mislead and frighten the general public about the climate. To me, he’s part of the global warming deep state.

Louis Hooffstetter

I’m going with your comment on this guy Zeke (whom I’d never heard of before). The only rationale for picking an odd unit of measure and even ignoring your own error bars is because you could not generate the wanted conclusion and alarm otherwise.

agree

100%

A large part of the problem is trying to play nice with people who have as an end game some very nasty outcomes.

If the greenies, globalists, and other factions of the warmista cabal ever get control of our economy, governments, and lives, the state of the entire world will make the darkest days of the Soviet union look like a sunny beach side bikini babe barbecue.

Things are already getting pretty bad in some places, but nothing like what they have in mind.

How many times this claim has been presented, and nope, it’s not relevant. CO2 ticks up by a quantity much smaller than human emissions. The ocean is taking in and sinking a large portion of human-made CO2. In fact, it is changing gasses quickly to both directions but the balance is much on the sink side.

That Zeke posted this was pretty cheap of him. CO2 should not be scaled to correlate with OHC, as they by the best knowledge, are not real time correlated.

“CO2 ticks up by a quantity much smaller than human emissions. The ocean is taking in and sinking a large portion of human-made CO2. In fact, it is changing gasses quickly to both directions but the balance is much on the sink side.”

WRONG! WRONG! WRONG!

Even climate “scientists” agree that the amount of CO2 contributed to our atmosphere by natural sources dwarfs the human contribution. The last ice age began ~2.6 million years ago, began to end ~15,000 years ago, and we are still coming out of that ice age today. 20,000 years ago the CO2 concentration in the atmosphere was only ~190 ppm (ice core data), which indicates the frigid oceans removed a huge amount of CO2 from the atmosphere. Climate “scientists” also constantly tell us that the oceans are getting warmer and warmer every day. So if the oceans reached equilibrium with the atmosphere (with regards to CO2) during the last ice age, there is no possible way they can absorb more than a gnat’s ass of CO2 from the atmosphere today. They’re supersaturated with respect to CO2, and have to off-gas CO2 as they warm. That’s why the first chart shows the CO2 concentration (in the atmosphere) is driven by ocean temperature.

”Even climate “scientists” agree that the amount of CO2 contributed to our atmosphere by natural sources dwarfs the human contribution. ”

I kind of agree, but your fact is a totally irrelevant factoid.

‘there is no possible way they can absorb more than a gnat’s ass of CO2 from the atmosphere today’

And this is just bollocks. The growing partial pressure does the trick. Good for us, otherwise we’d be doomed.

I respectfully disagree:

* At the peak of the last Ice Age (~20,000 years ago), the concentration of CO2 in our atmosphere was only ~190 ppm (from ice core data).

* The minimum concentration necessary for woody plants to survive is ~150 ppm. If the concentration falls below 150 ppm, all plants die.

* The concentration at which plants begin to die is ~225 ppm. So at the peak of the last Ice Age plants were suffering. Exposed areas near glaciers were barren deserts (as evidenced by large deposits of Loess, a wind-swept, flour-like soil dust).

*~10,000 years ago, the concentration of CO2 in our atmosphere was only ~280 ppm (from ice core data).

* By 1880, (when CO2 measurements began) level of CO2 in our atmosphere was only ~283 ppm.

* We are currently at 406.58 ppm (most recent Mauna Loa observatory reading I could find). But prior to the most recent cycle of Ice Ages (that started about 3.5 million years ago) the concentration of CO2 in our atmosphere was around 1000 ppm.

* Greenhouse experiments also show that when the level of CO2 falls below ~500 ppm, plant growth is severely retarded. So as far as plants are concerned, the current level of CO2 in our atmosphere is dangerously low.

* These same experiments also show that optimum plant growth occurs between 900 to 1,200 ppm. So commercial greenhouses add CO2 to the air to maintain levels within this range.

*Why do plants grow best when the atmosphere contains 900 to 1,200 ppm CO2? The reason is because our atmosphere contained 900 to 1,200 ppm CO2 when plants evolved.

* So our atmosphere is depleted in CO2 relative to when plants evolved, and still below the ‘comfort’ level for plants (500 ppm). And for most of the Earth’s geologic history, the levels of CO2 in the atmosphere have been much higher than today (without resulting in computer modeled disaster).

Louis,

Sorry for some foul language.

Your logic is very convoluted and only well refutable by detailed balance calculations. For now I just point a problem in your claim. If the atmospheric portion growth were spontaneous, what would make it happen just the same time as the industrial emissions? Why was the CO2 still low during the holocene climatic optimum?

If you claim substantial outgassing, this time should be much warmer than the medieval and holocene climate optimum, and outgassing would also require significant ocean temp rise. We are not seeing that. We see CO2 rising steadily over the industrial period. The rise corresponds roughly to emissions. Not all of the human emissions are in the atmosphere, so they needed to go somewhere. If you say they didn’t sink into the ocean, but the ocean was /also/ net outgassing, you need to find a huge land-based sink that took all human emissions and some ocean CO2 as well.

There’s no such sink, and isotope analyses put a severe limit on where the CO2 goes. Without me being a scientist at the area, which, I admit, is a serious weakness from my side, I regard your idea a fancy Salby like crackpot idea.

With respect, there is only so much you can claim without a darn good full explanation. I think you didn’t really answer the partial pressure question. When the partial pressure grows, CO2 starts sinking in even if the surface is a bit warmer. This is the case now.

Thanks for a civil answer, though. That’s more than I can do.

Louis Hooffstetter

January 12, 2019 at 6:54 am

Louis, regarding your comment below. A good summary of the importance of CO2. I think greenhouses often pump in around 1200ppm. However, my understanding is that CO2 was last at 1000ppm in the Cretaceous…is there data available that indicates it was at this level as recently as 3.5MYA?

Climate scientists are spending lots of time and money to determine what the exact CO2 concentration and temp was at every interval over the past 50 million years, and beyond, to prove their notions of CO2 being the thermostat of the atmosphere.

After all, who could dispute that if they are perfectly correlated in previous ages, that there is some cause and effect relationship?

Haha…just kidding!

They have not spent one penny or one breath studying, researching, or talking about this, since it was pointed out that the ice cores actually refute the notion of CO2 causing the surface to warm.

Because anyone, even jackass warmistas, can easily see the other side of this logical coin: If such careful studies were done, and showed that there is no cause and effect correlation, that there is no tipping point, or runaway effect due to increasing water vapor once CO2 gets above a certain level, that the entire hypothesis will be dead and buried, conclusively and completely, once and for all.

But these are the kinds of studies and research that can and have been easily done by geologists and others even many decades ago, and could surely be done in far greater detail and with more certainty and less margin for error with modern instrumentation and methods.

So with all the humongous mountains of our money being carelessly spent on ultra-repetitive nonsense which amounts to circular reasoning and confirmation of prior assumptions, is it not about time to ask…WTF?

Hugs: No problem with the foul language. “Bollocks” is relatively mild compared to the language I use on occasion.

When I think about the ocean/atmosphere system of Earth, I imagine an unopened 2 liter bottle of soda. It’s not a perfect model, but it makes sense to me. The mass of the oceans is ~270 X the mass of the atmosphere, so in my model, the soda represents the oceans and the void space at the top is the atmosphere. The exchange of gasses between the two is controlled by temperature and pressure, but in our actual ocean/atmosphere system, pressure has less influence than it does in my unopened soda bottle model. And since the mass of the soda (oceans) is so much greater than the atmosphere, it’s the temperature of the soda (oceans) that dominates the process. As the soda warms, gasses diffuse into the atmosphere. When the soda cools, gasses are removed from the atmosphere. This can be illustrated with two unopened bottles of soda. Place one in the freezer for ~45 minutes, and place the other one in the sun. When the two are opened side by side, the cool bottle of soda will have some pressure (fizz), but the warm bottle may have enough to cause soda to foam out of the top. The reason the CO2 curve in the ice core data lags the temperature curve is because it takes a while for the oceans to warm relative to the atmosphere, and so there is a lag time before they warm enough to begin to degas.

Alastair: I have seen the 3.5 mya reference in several places (that I can’t recall) but here is one: https://www.ucdavis.edu/news/what-ancient-co2-record-may-mean-future-climate-change/

Hugs, what happens with CO2 in or out of the ocean is determined both by the temperature of the water and the concentration of CO2 in the air. The higher the temp, the higher the outgassing – think a hot can of coca cola. Opposing this, the higher the atmospheric content of CO2, the greater the dissolution into the ocean. It isn’t a one-or-the-other type of thing. One must know what the concentration of the gas in the ocean is, what the concentration of the gas in the atmosphere is, and what the temperature of the ocean is doing (going up or down) to decide whether CO2 is going net in or out. In general, given no changes in these factors for a period, CO2 in the ocean is in equilibrium with that in the atmosphere. Were you to cool the ocean down, it would dissolve more; warm it up and it would outgas.

The picture is complicated by living sea organisms that take CO2 from the water, allowing more to be dissolved. However, major changes in the main physical parameters (amount of CO2 in the atmosphere, or water temperatures up or down) decide which way the CO2 goes.
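The two competing effects described above, temperature and partial pressure, can be put into a toy calculation with Henry's law. This is a minimal sketch for pure water only: the constant of roughly 0.034 mol/(L·atm) at 298.15 K and the temperature coefficient of about 2400 K are approximate textbook values, and seawater carbonate chemistry, salinity and biology are all ignored. The function names are hypothetical.

```python
from math import exp

KH_REF = 0.034       # approx. Henry's constant for CO2 in pure water, mol/(L*atm)
T_REF_K = 298.15     # reference temperature for KH_REF
TEMP_COEFF_K = 2400  # approx. van 't Hoff temperature coefficient for CO2, in K

def henry_constant(temp_k):
    """Solubility constant at temp_k; larger in colder water."""
    return KH_REF * exp(TEMP_COEFF_K * (1.0 / temp_k - 1.0 / T_REF_K))

def equilibrium_co2(temp_k, pco2_atm):
    """Dissolved CO2 (mol/L) in equilibrium with a given partial pressure."""
    return henry_constant(temp_k) * pco2_atm

def net_flux_direction(dissolved_mol_per_l, temp_k, pco2_atm):
    """Direction of net exchange for water holding a given dissolved amount."""
    target = equilibrium_co2(temp_k, pco2_atm)
    if dissolved_mol_per_l < target:
        return "into water"
    if dissolved_mol_per_l > target:
        return "out of water"
    return "equilibrium"

# Water equilibrated at 280 ppm and 15 C, then exposed to 400 ppm at 16 C.
start = equilibrium_co2(288.15, 280e-6)
print(net_flux_direction(start, 289.15, 400e-6))
```

In this toy setup the direction depends on which change is larger in relative terms: the rise in partial pressure or the loss of solubility from warming. It says nothing about the real ocean's buffering chemistry or mixing times.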

A missing mechanism….

Willis, why the blip?

JF

Well then, Nobel Laureate Albert Gore made popular the 800 year lag of CO2 behind temperature. If your hypothesis is correct, paleoclimatological data would show the world started warming 800 years ago. Is that the case or am I missing something?

Yes bjorn,

I think you are missing the obvious.

First, the CO2 you are referring to is the concentration, which depends solely on the CO2 emission flux…where the proper lag of CO2 is to CO2… 🙂

Second, when considering the lag there, the 800 year one, it consist with a climatic variation either of temps or CO2 concentration in regard to time, in a very slow path.

Jumping 1.2C in 300 years as versus the case of 800 year lag period (1.2C in 3000years) , means that the lag time will be shorter…but it will be.

The CO2 flux is very much connected, related and dependent on the thermal flux…

So faster the “positive” variation in the “coupled” flux, shorter the lag time of CO2 concentration.

There still is a lag there of CO2 lag to temp increase, meaning that atmosphere started to warm before CO2 concentration starting to increase. In consideration of time this is ~100 years.

What is even more interesting about the lag, is that actually it relates far much more to temp variation than time perse.

The data for the last 300 years shows a lag of CO2 concentration to ~0.4C variation.

The modern data also shows that there is no detectable lag of CO2 flux response to the thermal flux… no such detectable response, as yet, in the form of any CO2 concentration acceleration during the present thermal stagnation of the atmosphere in the last 20 years…even when the increase of CO2 concentration is still high.

In this context, the lag, when considered in both, time and temps values, it means that in a slow long time path, the max climate thermal swing can not be more than 4C related….no more than 2C variation up or down from the mean. (~0.4C per ~800 years).

Hopefully this helps with your question.

cheers

Crispin in Waterloo but really in Beijing January 11, 2019 at 6:15 pm

Exactly correct you are, Crispin in Waterloo

And in reference to what the author stated, to wit:

I agree with the “non-belief” stated in the above statement, …… as well as the fact that I do NOT believe that we knew what the “ocean heat content” (temperature) of the top 2 km (6,562 feet) of the ocean was during the “1955-1990” time period as denoted on the included graphic, ….. Figure 1. Change in ocean heat content, ….. simply because that portion of the graph appears to me to be “random guesstimates”.

What could possibly cause the “heat content” (temperature) of the top 2 kilometers (6,562 feet) of the ocean waters to change (increase/decrease) as quickly as denoted on said graph?

But anyway, I kinda really like the above noted graph …… simply because it shows a direct correlation (as per defined by Henry’s Law) between the 60 year increase in average ocean water temperature ….. and ….. the 60 year increase in average atmospheric CO2 ppm quantities.

The CAGW claim that the greening/rotting of the NH biomass is the “driver” of average biyearly/yearly atmospheric CO2 ppm quantities is nothing more than “junk science” agitprop.

as a kid i noticed when you let a soda get to room temperature it went “flat” meaning as it warmed it released its co2, because warmer water holds LESS co2.

100%

good observation

which is also the very reason why there is correlation with rising global T and rising CO2…

but rising CO2 does not cause rising global T

if all of us could just agree on that? that would be good…

the historic records shows clearly the temperature rises and then co2 rises……the global warming caused by humans crowd are claiming the cause comes AFTER the effect

true.

It will go flat once you open it even without getting to room temperature because the concentration of CO2 is higher than in the air above the surface.

Ever notice all those little CO2 bubbles in soda pop? They form as soon as you open the bottle, no matter how cold the pop is.

Yes, but the temperature is indeed a factor. I did a contract job in Tanzania in the 1980s and, looking for bottled water to drink in the local area, I had to settle for soda water and we had no refrigeration. When you were taking the cap off a bottle, you had to wait patiently letting the gas escape slowly. To snap the cap off, you would lose 90% of your water in an explosion of gas. I only absentmindedly did this once!

TY Gary, i grow tired of seeing my accurate information “corrected” here when it was already accurate and needed no correction.

Is the real question not how 1 measurement is representative of a 300 km X 300 km X 2 km volume of water, rather than how you determine the math error-wise in 4000 measurements?

How accurate is one measurement of the volume? +/- 0.5C? Each reading 200 km apart? I’m thinking of the SST maps. Regional hot to what fineness?
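The 300 km figure above is close to what a back-of-envelope division gives. The sketch below assumes a global ocean area of about 361 million square kilometers and spreads the article's 3,835 floats evenly over it, which real floats are not; it is only meant to show the scale of water each float stands in for.

```python
from math import sqrt

OCEAN_AREA_KM2 = 3.61e8  # approximate global ocean surface area
N_FLOATS = 3835          # Argo float count quoted in the article
DEPTH_KM = 2.0           # depth of the layer under discussion

# Evenly spaced floats are an assumption; actual coverage is patchy.
area_per_float_km2 = OCEAN_AREA_KM2 / N_FLOATS
side_km = sqrt(area_per_float_km2)
volume_per_float_km3 = area_per_float_km2 * DEPTH_KM

print(f"Area per float:   {area_per_float_km2:,.0f} km^2")
print(f"Square side:      {side_km:.0f} km")
print(f"Volume per float: {volume_per_float_km3:,.0f} km^3")
```

On these assumptions each float represents a column a little over 300 km on a side, sampled once every ten days.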

I am not a scientist, but I have experience in industry that tells me that the oceans cannot be getting warmer and more acidic at the same time as the ‘warmists’ argue, because of what you correctly say about the off gassing of CO2 as the oceans warm up.

The solubility of CO2 in salt water is more complicated than solubility in pure water. As well as temperature it depends on pH, partial pressure, absolute pressure and salinity.

Not easy to sell bullshit to Willis.

Yet another ocean heat content issue in the context of agw is the use of circular reasoning. Pls see

https://tambonthongchai.com/2018/10/06/ohc/

Chaamjamal,

Thank you for this very interesting link!

Very interesting video of the undersea volcano.

Fascinating really.

Is that clip part of a longer video, documentary, or discussion?

I would love to watch much more of this if there is more.

Perhaps a link?

Thank you in advance.

Nick M

The problem I have with it is. “If this were true”. If we go by NASA’s sea level rise which has been pretty flat for the past 3 years and all the heat is going to the ocean. Then where is the warming expansion of the oceans happening? Is it getting balanced by massive ice sheet builds on Greenland and Antarctica? The math doesn’t work out to me. Simple logic shows something isn’t right.

Forgot website: https://climate.nasa.gov/vital-signs/sea-level/

it shouldn’t be easy or even possible to sell this bullshit to any adult on the planet. i know willis asked not to rag on zeke ,but given he has displayed the fact he is an intelligent and articulate adult numerous times i find it very hard to believe these claims are “mistakes”.

i have huge respect for the time and effort willis puts into these analyses. here in scotland the most apt response to the claims by zeke would be “aye right, ya walloper”.

that would suffice, because no one with an iq above 90 should swallow that we know the ocean heat content down to 2000m to the degree of accuracy claimed here , either 50 years ago or today. anyone claiming so is either stupid or a liar that knows no one of any consequence in the political or sci-political arena will ever call them out for selling the snake oil they are paid to sell.

I agree with you bit chilly.

Sorry Zeke ….HORSEFEATHERS !

A mistake is getting your answer wrong by a factor of two or three.

Getting the error bars wrong by 2 or 3 orders of magnitude falls into an entirely different category.

Is this yet another “peeer reviewed” error-strewn study? Peers ain’t what they used to be.

No, it’s a freaking marketing meme.

+1

“Peers ain’t what they used to be.”

Zeke’s peer reviewers are fellow climate “scientists” who also don’t follow the scientific method or know how to use significant figures.

As always, average temperature is a meaningless value. So just lead with that.

Avg T of water is not meaningless, but Zeke was using ZJ, not temperature.

Chaamjamal January 11, 2019 at 6:17 pm

True sometimes … but in fact, the easiest person to fool is yourself, and I’m no more immune to that than anyone else …

w.

Enjoy all your posts sir particularly the data analysis ones. Thank you.

(easiest ?}

Thanks, fixed.

w.

Willis,

The easiest person too!

*I know how you hate typos*

BTW…good observations here.

I recall the first time I heard about this zettajoules crap and in two minutes had looked up the volume of the ocean and done the math.

It took me longer to find out what the resolution of the Argo thermometers was, but I knew instantly that the result was BS of a very egregious nature.

Zettajoules my eye!

At the very least they could use a more easily relatable unit…like Hiroshimas!
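For anyone who wants to redo that arithmetic, here is a minimal sketch. It takes the head post's figure of roughly 2,600 ZJ per degree C for the top two kilometres of ocean as given (it is not re-derived here), and the function name is just an illustration.

```python
# Conversion factor quoted in the head post: about 2,600 ZJ warms the
# top 2 km of the ocean by 1 deg C. Taken as given, not re-derived.
ZJ_PER_DEG_C = 2600.0

def zj_to_deg_c(zettajoules):
    """Convert an ocean heat content change in ZJ to an average temperature change in deg C."""
    return zettajoules / ZJ_PER_DEG_C

# Error bars read off Figure 1 in the head post.
err_1955 = zj_to_deg_c(95.0)  # claimed +/- 95 ZJ in 1955
err_2018 = zj_to_deg_c(9.0)   # claimed +/- 9 ZJ in 2018

print(f"1955: +/- {err_1955:.3f} deg C")
print(f"2018: +/- {err_2018:.4f} deg C")
```

These round to the ± 0.04°C and ± 0.003°C quoted in the head post.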

I agree. No way was the 1955 measurement that precise, or the current ARGO measurements either.

Didn’t we have to adjust the Argo surface float data upward because it didn’t align with WWII ship intake water temperature data?

Yes, yes they did!

That was how they Karlized away the pause a few years back.

As sleazy and disingenuous as anything could be.

Once again, we have results specified with much greater accuracy and precision than that provided by the test instruments.

The assumption that a ton of data gains you accuracy and precision is only true under specific circumstances. From experience, I would say that data gathered from nature almost never fulfills those criteria. I’m not a mathematician and I do wish that someone would come along and produce a theoretically sound proof. I can provide examples but that’s not the same.

0.001 C and probably 0.01 C measurements are measuring the microcurrents along the buoy skin and not the ocean and are probably influenced by heat from the buoy electronics, battery, and motors. Measuring at a finer resolution does not mean you are measuring the same thing more accurately; it also means you are likely measuring other phenomena.

Measuring 0.001 C ocean microeddies and microfluxes is not the same as measuring world ocean currents in bulk.

ARGO floats can measure temperature to 1/1000th of a degree C with accuracy?

Is that really possible? Now my BS alarm is going off.

Argo site says +/- 0.002 C. That is the precision of the instrumentation. How accurately it reads a real temperature is up for debate. To be fairly certain, I would recalibrate every month. That doesn’t happen.

I wouldn’t be betting any part of my anatomy on it.

“The temperatures in the Argo profiles are accurate to ± 0.002°C and pressures are accurate to ± 2.4dbar. ”

http://www.argo.ucsd.edu/Data_FAQ.html#accurate

To claim that the resolution limit of the device is the same as the accuracy of the measurement is complete nonsense.

To do so is to claim that there are no errors from any source and the unit is constantly and perfectly performing at its theoretical design limitations.

Last month I got interested in how good of a thermometer I could get, so I went searching (online of course). Even after I asked for a “laboratory thermometer” the best I could find was ±0.25°C. If anyone can build a thermometer that actually reads to ±0.002°C, where do I buy one, and how much will it cost me? And how often will I have to get it calibrated? And how quickly can it give me that reading? Do I have to stand in one place for 20 minutes waiting for it to acclimate so I can actually read it?

I believe that the people who wrote the Argo document stating 0.002 K “accuracy” have confused accuracy and precision. You determine precision by measuring the same thing multiple times and determining the variation in the readings. Accuracy is determined by measuring a known temperature multiple times. Known temperatures are the melting and boiling points of various substances. This can be done in a laboratory, but not very easily under 2,000 m of ocean water.

There are other factors which affect accuracy beyond the characteristics of the RTD sensor. The sensor’s resistance must be measured to convert to a temperature. This means that the voltage and current need to be measured with extremely high precision to match the RTD sensor precision. Electronic components are impacted by temperature changes, therefore I wonder if the Argo electronic components are in an isothermal enclosure. I find it hard to believe that the Argo temperature measurements are within several orders of magnitude of the 0.002 K that they claim on the Argo website.

Almost, Donald;

My only quibble is the microcurrents.

There is another curious caveat that leaves ocean temperature measurement begging:

From the same source:

A caveat that leaves one wondering why “ocean temperature” is not further defined with a relevant accuracy?

That thermal conducting buoy masks the micro-currents, otherwise I would agree with you.

* NDBC 0.08°C claimed accuracy,

* Continual growth of marine life on the buoy,

* Buoy sampling throughout a column of water,

* i) i.e. sampling different layers of water that are often drastically different temperatures,

* ii) Where a slight change in water layer thickness could drastically affect any averaged temperatures,

* iii) That difference in water layers means each buoy is not measuring the same thing. Even the same buoy, with currents changing the column of water is not likely to be measuring the same thing.

* iv) Meaning, ocean buoys are another situation where averages are an improper reflection of what is being measured.

The continual growth of marine life, along with the minor fact that buoys attract marine life obscures that claimed accuracy more as time passes.

Nor are all of the buoys Willis shows under USA control. Even within the USA, drifting buoys are spread amongst different owners and programs.

This image shows the 1,684 United States Argos drifting buoys over the last six months.

The lines are the tracks of the buoys. I am also puzzled by tracks without buoys.

Different programs, different owners, different equipment, different instruments, different maintenance teams, different maintenance schedules, practices and procedures are all ignored in the 0.08°C accuracy claimed by NDBC above.

Even if they were measuring the results from fixed buoys, they couldn’t increase accuracy the way they claimed.

And fixed buoys at least have the advantage of measuring the same patch of ocean each time.

The ARGO buoys are free floating, so the place being measured is different each time.

Fixed buoys are measuring the constantly changing ocean moving through their fixed position.

Many measurements by many floating buoys producing a better idea of average temperature within a volume of ocean certainly makes some sense but surely the maximum accuracy of that average cannot exceed the individual accuracy of the measurements.

To get accuracy increasing with the square root of N, the samples must be independent and unbiased.
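A minimal sketch of the "unbiased" half of that condition, using made-up numbers: every value below (true temperature, scatter, shared offset) is a hypothetical chosen for illustration, not an Argo specification. Random scatter averages down as N grows, but an offset common to every reading does not.

```python
import random
import statistics

TRUE_TEMP = 10.0   # hypothetical true water temperature, deg C
SIGMA = 0.5        # hypothetical random scatter of a single reading, deg C
SHARED_BIAS = 0.1  # hypothetical offset common to every reading, deg C

def error_of_mean(n, bias, rng):
    """Return (mean of n simulated readings) minus the true temperature."""
    readings = [TRUE_TEMP + bias + rng.gauss(0.0, SIGMA) for _ in range(n)]
    return statistics.fmean(readings) - TRUE_TEMP

rng = random.Random(0)
for n in (100, 10_000, 100_000):
    unbiased = error_of_mean(n, 0.0, rng)
    biased = error_of_mean(n, SHARED_BIAS, rng)
    print(f"N={n:>7}: unbiased error {unbiased:+.4f}, biased error {biased:+.4f}")
```

In this toy setup the unbiased error shrinks toward zero as N grows, while the biased error settles near the shared offset however many readings are averaged.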

Are two dimensions (time and space) of separation enough for you to conclude independence? If you claim “bias” you will have to provide conclusive proof of it.

Other way around, the burden of proof is on the assertion that the measurements are unbiased.

White noise is the most *optimistic* noise spectrum for a measurement, and this means the null hypothesis should be that the noise of sampling and measurement is correlated (red noise), and one must prove that it’s not correlated in order to use N^(1/2).

(strictly speaking blue noise has negative correlation and improves better than N^(1/2) but blue noise is rare in nature)

Also, since we are comparing two time periods, we need to be able to conclude that the *space* is not correlated.

So let me ask you, is the temperature measurement of 1×1 area of the Earth’s surface strongly correlated with the cell next to it? The answer is quite probably yes. Therefore likely red noise. And thus N^(something less than 1/2) improvement with sample size.
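The white versus red noise point can be checked with a small simulation. This is only a sketch: it uses an AR(1) process as a stand-in for red noise, and the correlation of 0.9, the sample size and the trial count are arbitrary assumptions. In this simple model the penalty shows up as a much smaller effective sample size rather than a different exponent; long-memory noise would be needed to change the exponent itself.

```python
import math
import random
import statistics

def ar1_series(n, rho, rng):
    """Unit-variance AR(1) series of length n; rho = 0 gives white noise."""
    x = rng.gauss(0.0, 1.0)
    out = [x]
    scale = math.sqrt(1.0 - rho * rho)  # keeps the variance at 1 for any rho
    for _ in range(n - 1):
        x = rho * x + scale * rng.gauss(0.0, 1.0)
        out.append(x)
    return out

def std_of_mean(n, rho, trials, rng):
    """Empirical standard deviation of the sample mean over many trials."""
    means = [statistics.fmean(ar1_series(n, rho, rng)) for _ in range(trials)]
    return statistics.pstdev(means)

rng = random.Random(42)
n, trials = 400, 1000
white = std_of_mean(n, 0.0, trials, rng)  # independent samples
red = std_of_mean(n, 0.9, trials, rng)    # neighbouring samples correlated

print(f"1/sqrt(N) prediction: {1.0 / math.sqrt(n):.4f}")
print(f"white noise:          {white:.4f}")
print(f"red noise (rho=0.9):  {red:.4f}")
```

With independent samples the error of the mean should land close to 1/sqrt(N); with strongly correlated samples it should come out several times larger for the same N.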

They also have to be identically distributed. So you need to determine that samples all follow the same shape and characteristics. This is impossible without decent signal to noise ratio.

What Zeke has missed is the basic part of the scientific method. The tools (method) should provide you with sufficiently low uncertainty in your data to be able to see variations predicted by your hypothesis.

If you don’t have this you can still assume it does. But your work carries much less relevance to the real world.

MHC,

Thank you for this common sense comment. Geoff.

This also assumes that the sampling is uniform: each buoy is measuring the same volume of water. I.e.: the mean is sum(temperature x volume of water) /N to get the heat content. W.E. demolishes this pretty effectively with a Monte-Carlo simulation.
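A toy example of why the volume weighting matters, using two hypothetical water parcels (the temperatures and volumes are invented for illustration). The weighted version here divides by total volume, which is the usual form of a weighted mean.

```python
def simple_mean(temps):
    """Plain average: every reading counts equally."""
    return sum(temps) / len(temps)

def volume_weighted_mean(temps, volumes):
    """Average in which each reading counts in proportion to the water volume it represents."""
    return sum(t * v for t, v in zip(temps, volumes)) / sum(volumes)

# Hypothetical parcels: a thin warm surface layer and a thick cold deep layer.
temps = [20.0, 4.0]   # deg C
volumes = [1.0, 9.0]  # arbitrary volume units

plain = simple_mean(temps)
weighted = volume_weighted_mean(temps, volumes)
print(f"simple mean:          {plain:.1f} deg C")
print(f"volume-weighted mean: {weighted:.1f} deg C")
```

The two answers differ by several degrees in this made-up case, so treating every reading as if it stood for the same volume of water is not a harmless assumption.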

I am continually astonished by some of the basic measurement / interpretation errors in climate science. If they were engineers, they might have learnt these things the hard way.

The sun is the object that heats up the Earth’s oceans. CO2 in the air and the air itself would have a hard time heating up the oceans which have a heat content 1200 times that of the atmosphere. The oceans heat the air not vice-versa. Hotter oceans expel more CO2 to be counted at Mauna Loa, the World’s largest volcano.

Earth oceans heated by the sun, geothermal heat flux of the ocean floor, undersea rift system volcanics, subduction volcanics and friction, heat from biodegradation in the sea floor sediment. Chemical reactions in the ocean also have endothermic and exothermic results, depending on the reaction.

Zeke is clearly allied with all the usual propaganda-pushers. What makes him “one of the good guys”?

Not necessarily allied. But sometimes a bit blinded. He’s good, because he doesn’t call names, sneer, and he has meaningful discourse with other, disagreeing people, unlike, say, Michael E Mann.

Hugs,

Thanks for the reply. Perhaps Willis was calling for civility. That’s always a good idea.

Even worse, the way I understand it, the Argo floats do exactly that – they float around and don’t even measure each time the same part of the ocean.

You can get a sense of buoy repeatability by taking multiple buoys that occur in a certain grid spacing and comparing their outputs. You can compare repeatability to grid size sampling as well. You can also test repeatability vs ocean depth and latitude. You can compare buoy to ship data and coastal station data. None of that was done.

You can also make sure everyone involved is honest, agenda-free, and non-biased.

Which we know is true, right?

“Scuse me, I gotta wipe the laugh tears off my face…

Specifically, what part of Donald Kasper’s post do you disagree with?

Specifically, which part of what I wrote gave you the idea I think he is “involved” with the Argo project?

VERY good suggestion; it’s always good to examine the basics.

There could always be systematic variations during the course of an ascent, that differ from one rise to the next. The bit about the precision improving as root N holds only for normal processes. There’s no reason to reject the possibility of “long tail” statistics outright.
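A sketch of what "long tail" statistics do to averaging, under the assumption that a standard Cauchy distribution is a fair extreme example (strictly, the root-N rule needs finite variance rather than exact normality). The sample sizes and trial count are arbitrary.

```python
import math
import random
import statistics

def draw_gauss(rng):
    """One sample from a standard normal distribution."""
    return rng.gauss(0.0, 1.0)

def draw_cauchy(rng):
    """One sample from a standard Cauchy distribution (very heavy tails)."""
    return math.tan(math.pi * (rng.random() - 0.5))

def iqr_of_means(draw, n, trials, rng):
    """Interquartile range of the sample mean of n draws, over many trials."""
    means = [statistics.fmean([draw(rng) for _ in range(n)]) for _ in range(trials)]
    q1, _, q3 = statistics.quantiles(means, n=4)
    return q3 - q1

rng = random.Random(1)
trials = 400
gauss_10 = iqr_of_means(draw_gauss, 10, trials, rng)
gauss_1000 = iqr_of_means(draw_gauss, 1000, trials, rng)
cauchy_10 = iqr_of_means(draw_cauchy, 10, trials, rng)
cauchy_1000 = iqr_of_means(draw_cauchy, 1000, trials, rng)

print(f"normal: spread of mean, N=10 {gauss_10:.3f}, N=1000 {gauss_1000:.3f}")
print(f"Cauchy: spread of mean, N=10 {cauchy_10:.3f}, N=1000 {cauchy_1000:.3f}")
```

For the normal draws the spread of the mean should shrink by about a factor of ten between N=10 and N=1000; for the Cauchy draws it should barely move, because the average of Cauchy samples is as widely scattered as a single sample.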

The whole ocean is floating around. The buoys go with it, so, to some extent the buoy IS measuring the same part of the ocean, which rather makes nonsense of grid measurements.

Simply brilliant Willis. Thanks.