Jennifer Marohasy

If maximum temperatures are the same, whether measured by platinum resistance probes or mercury thermometers, why does the Bureau make an adjustment of 0.5 °C in the homogenisation of temperatures from Cape Otway Lighthouse?

On Friday (3rd February 2023), I will be appearing as an expert witness in the Administrative Appeals Tribunal in Brisbane. The hearing is about a Freedom of Information (FOI) request that has been denied my husband, Dr John Abbot, for over three years. The information, I will argue, needs to be made public, to enable some assessment of the reliability, or otherwise, of temperatures as recorded at official Australian Bureau of Meteorology weather stations.

Claims that we are facing a climate catastrophe because temperatures will soon be exceeding 1.5 °C are driving the closure of coal mines, caps on the price of gas and a mental health epidemic amongst children who increasingly fear global warming.

Temperature measurements as published by the Australian Bureau of Meteorology, and other such weather bureaus around the world, are at the heart of the concern, because they purportedly show an unprecedented increase, particularly over recent years.

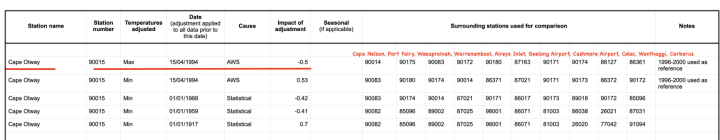

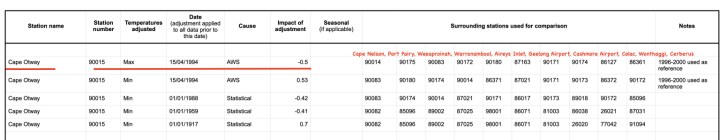

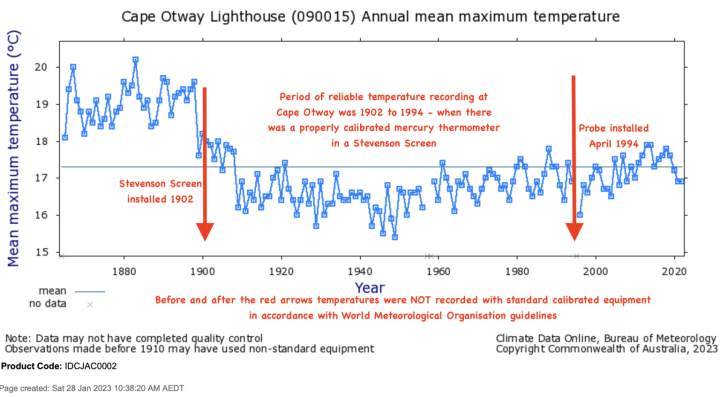

On the one hand, the Bureau claims temperature measurements from the platinum resistance probes, which now replace mercury thermometers at most official recording stations, are equivalent, and therefore there is no need for scrutiny of the parallel measurements that exist for 34 weather stations. On the other hand, the Bureau has changed official temperatures as recorded at Cape Otway Lighthouse, theoretically by 0.5 °C, for the 84 years from 1910 to 1994 based on this instrument change. According to the ACORN-SAT Station Adjustment Summary, temperatures have been dropped down by 0.5 °C from 15th April 1994 back to 1st January 1910, citing installation of an automatic weather station (AWS) that involves a change from mercury thermometer to probe.

It is noteworthy that the direction of the adjustment contradicts the expected effect of changing to a probe, which would be that the probe might measure warmer than the mercury. This is stated in the Bureau’s own Research Report No. 032, The Australian Climate Observations Reference Network – Surface Air Temperature (ACORN-SAT) Version 2 (October 2018), by Blair Trewin:

“In the absence of any other influences, an instrument with a faster response time [a probe] will tend to record higher maximum and lower minimum temperatures than an instrument with a slower response time [a mercury thermometer]. This is most clearly manifested as an increase in the mean diurnal range. At most locations (particularly in arid regions), it will also result in a slight increase in mean temperatures, as short-term fluctuations of temperature are generally larger during the day than overnight.” (Page 21)

Because of the difficulty in achieving consistency between temperature recordings from the probes and those from traditional mercury thermometers, the Indonesian Bureau of Meteorology (BMKG) records and archives measurements from both devices, with a policy of having both types of equipment in the same Stevenson Screen at all its official weather recording stations.

It is important to note that by cooling past temperatures at Cape Otway by 0.5 °C, rather than, for example, increasing the value recorded from the probe each day, the Bureau has been theoretically under-reporting the temperatures from Cape Otway lighthouse by 0.5 °C since 15th April 1994.

This difference of 0.5 °C – that is one third of the dread 1.5 °C that is driving the closure of coal mines, caps on the price of gas and a mental health epidemic amongst children who increasingly fear global warming – is only an estimate by the Bureau.

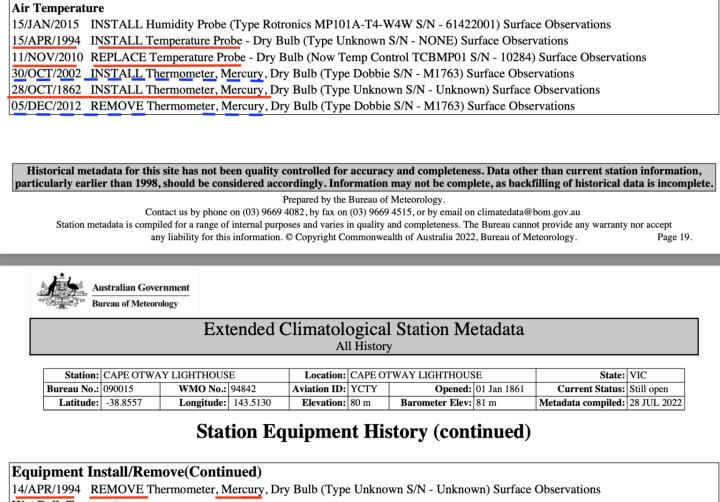

The Bureau cannot know the exact amount because the mercury thermometer was removed at the same time the probe was installed at the weather station at Cape Otway lighthouse. The mercury thermometer that was removed on 15th April 1994 had been faithfully used to measure maximum temperatures at Cape Otway lighthouse since 1865 – for 129 years. Information on equipment changes can be found in the Metadata for Cape Otway, available online.

The Australian Bureau has a policy of maintaining mercury thermometers with probes in the same Stevenson Screen for a period of at least three years when there is a changeover. This policy, however, is not implemented and was completely ignored on 15th April 1994 at Cape Otway.

While the mercury thermometer had been reliably recording temperatures at Cape Otway for 129 years, the probe needed replacing after just 16 years, on 11th November 2010 according to the Metadata for Cape Otway.

According to the ACORN-SAT Station Adjustment Summary no additional changes were made to the official historical temperatures following the installation of the second probe.

It is therefore reasonable to assume that temperatures at Cape Otway lighthouse, temperatures that are incorporated into global databases used to calculate global warming, are still reliably 0.5 °C cooler than the ‘real temperature’, in other words the temperature as it would be recorded by a mercury thermometer.

If we add this 0.5 °C to 1.47 °C, which is how much the Bureau estimates Australia has warmed since 1910, then the tipping point of 1.5 °C has already been exceeded!

This should be of immense concern to climate activists. They should be screaming for an audit of temperature measurements.

The only way we can know for sure whether the tipping point of 1.5 °C has been exceeded, or not, is if the parallel data from the 34 weather stations where the Bureau claims to be recording temperatures from mercury thermometers and temperature probes in the same Stevenson Screen is made public. Then we can know if the probes really do record 0.5 °C cooler than the mercury thermometers that were historically used to measure temperatures.

This is the essence of the FOI request that has been refused: that the parallel data be released.

The Bureau has variously claimed that it cannot make this parallel data public because it would be too onerous to scan all the A8 Forms that have the maximum temperatures recorded manually (written by hand onto paper) from the mercury thermometer each day, next to the digitally recorded temperature from the probe. An example of an A8 Form is provided in Part 2 of this series.

More recently the Bureau has claimed that it cannot make this information public because it does not exist, or variously that an A8 Form is not a document and therefore not subject to John Abbot’s FOI request. All the while the Bureau’s director, Andrew Johnson, writes to me that the measurements from the probes are equivalent to the measurements from the mercury.

Following the intervention of then Environment and Energy Minister Josh Frydenberg back in October 2017, I was provided with over 10 681 scanned A8 pages for the period from 1 January 1989 to 31st January 2015 for the official weather station at Mildura. With the assistance of colleagues, I manually transcribed over 4 000 of these A8 Forms beginning 1 November 1996.

Analysis of this data indicates that the first probe at Mildura did indeed record temperatures too cool relative to the mercury thermometer, but the third probe that is now in place records temperatures too hot, sometimes by as much as 0.4 °C. If this is indeed the case more generally at weather stations across Australia, then it is possible that the tipping point of 1.5 °C may have already been exceeded, with total warming of more than two whole degrees Celsius, specifically 2.37 °C (1.47 + 0.4 + 0.5).
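The sums above can be replayed in a few lines of Python. This is only a sketch of the article's own arithmetic, not a validated estimate of warming: the constant and function names are illustrative, and the step of adding station-level offsets to the Bureau's national figure is the assumption made in the text.

```python
# Figures quoted in the article (all in degrees Celsius).
BUREAU_WARMING_SINCE_1910 = 1.47   # Bureau's estimate of Australian warming
CAPE_OTWAY_ADJUSTMENT = 0.5        # offset applied to pre-1994 Cape Otway maxima
MILDURA_THIRD_PROBE_EXCESS = 0.4   # largest excess reported for Mildura's third probe
THRESHOLD = 1.5                    # the 1.5 degree "tipping point"


def total_and_margin(*components):
    """Return (sum of the components, amount by which that sum exceeds THRESHOLD)."""
    total = sum(components)
    return round(total, 2), round(total - THRESHOLD, 2)


# Assumption from the article: the offsets can simply be added to the national figure.
otway_only = total_and_margin(BUREAU_WARMING_SINCE_1910, CAPE_OTWAY_ADJUSTMENT)
otway_and_mildura = total_and_margin(
    BUREAU_WARMING_SINCE_1910, CAPE_OTWAY_ADJUSTMENT, MILDURA_THIRD_PROBE_EXCESS
)

print("Cape Otway offset only (total, margin over 1.5):", otway_only)
print("Cape Otway plus Mildura (total, margin over 1.5):", otway_and_mildura)
```

The first total corresponds to the 1.47 + 0.5 sum and the second to the 1.47 + 0.4 + 0.5 sum; the margin is how far each total sits above 1.5 °C.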

We can only know if the parallel data is made public, facilitating a more accurate assessment of the change in temperatures across the landmass of Australia since at least 1910.

________

SUPPLEMENTARY NOTES/ CLARIFICATIONS

The 1.5 °C tipping point is usually understood to be a mean temperature which is the average of the minimum as well as the maximum. In the above post I have compared it relative to just the maximum temperatures at Cape Otway as this blog series focuses on maximum temperatures. Of note, a problem comparing with the mean is that the alcohol thermometer, which measures minimum temperature, was also removed from the Cape Otway weather station in 1994. The Bureau only seemed to replace the maximum in 2002 and for a period of just ten years.

While the 2014 ACORN-SAT Station Adjustment Summary claims that the only change to Cape Otway maximum temperatures for the period before 15 April 1994 is a dropping-down of 0.5 °C, in reality, scrutiny of the ACORN-SAT data relative to the original measurements shows the changes to be more complex than that. Chris Gillham who owns WAClimate.net has emailed me:

Each day is treated differently by the algorithm within ACORN-SAT.

For example, Cape Otway …

6 January 1985

ACORN 2.3 : 15.4C / RAW 16.0C

7 January 1985

ACORN 2.3 : 17.6C / RAW 18.0C

8 January 1985

ACORN 2.3 : 18.3C / RAW 18.5C

9 January 1985

ACORN 2.3 : 15.9C / RAW 16.5C

15 April 1994

ACORN 2.3 : 17.0C / RAW 17.2C

ACORN cooling those five days by 0.4C on average.

Alternatively …

6 August 1931

ACORN 2.3 : 11.0C / RAW 10.8C

7 August 1931

ACORN 2.3 : 11.0C / RAW 10.8C

8 August 1931

ACORN 2.3 : 11.9C / RAW 12.3C

9 August 1931

ACORN 2.3 : 12.9C / RAW 12.8C

10 August 1931

ACORN 2.3 : 14.1C / RAW 14.3C

Both 12.2C on average.

Mind you, ACORN v RAW dailies never make sense.

All Cape Otway dailies from 1 Jan 1910 to 13 April 1994 average 16.7C in ACORN 2.3 and 16.6C in RAW.

All Cape Otway dailies from 15 April 1994 to 31 December 2022 average 17.2C in ACORN 2.3 and 17.1C in RAW.

[end Note from Chris]
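The averages in the note can be checked directly from the ten daily values quoted in it. The Python below is a minimal sketch: the variable and function names are illustrative, and the data are only the values quoted above, not a download from the Bureau's ADAM or ACORN-SAT archives.

```python
# Daily maxima (deg C) as quoted in the note: (date, ACORN-SAT 2.3, RAW).
SAMPLE_1985_1994 = [
    ("6 January 1985", 15.4, 16.0),
    ("7 January 1985", 17.6, 18.0),
    ("8 January 1985", 18.3, 18.5),
    ("9 January 1985", 15.9, 16.5),
    ("15 April 1994", 17.0, 17.2),
]

SAMPLE_AUGUST_1931 = [
    ("6 August 1931", 11.0, 10.8),
    ("7 August 1931", 11.0, 10.8),
    ("8 August 1931", 11.9, 12.3),
    ("9 August 1931", 12.9, 12.8),
    ("10 August 1931", 14.1, 14.3),
]


def summarise(rows):
    """Return (mean ACORN, mean RAW, mean of the daily ACORN minus RAW differences)."""
    n = len(rows)
    mean_acorn = sum(acorn for _, acorn, _ in rows) / n
    mean_raw = sum(raw for _, _, raw in rows) / n
    mean_diff = sum(acorn - raw for _, acorn, raw in rows) / n
    return mean_acorn, mean_raw, mean_diff


for label, rows in (("1985/1994 sample", SAMPLE_1985_1994),
                    ("August 1931 sample", SAMPLE_AUGUST_1931)):
    mean_acorn, mean_raw, mean_diff = summarise(rows)
    print(f"{label}: ACORN {mean_acorn:.1f}C, RAW {mean_raw:.1f}C, "
          f"mean difference {mean_diff:+.2f}C")
```

For the first sample the daily differences range from 0.2C to 0.6C, so even within five days the adjustment is not a single fixed offset.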

I show the effect of the different versions of ACORN-SAT on the original ADAM values in my interactive table unique to this website, and specifically for Cape Otway here: https://jennifermarohasy.com/wp-content/uploads/2022/03/Tmax_090015.png

The ADAM values can be found here: http://www.bom.gov.au/climate/data/

The ACORN-SAT values can be found here: http://www.bom.gov.au/climate/data/acorn-sat/#tabs=Data-and-networks

Metadata for Cape Otway Lighthouse including instrument changes can be found here: http://www.bom.gov.au/clim_data/cdio/metadata/pdf/siteinfo/IDCJMD0040.090015.SiteInfo.pdf

In the following tables I annotate the relevant information in the Metadata document and the ACORN-SAT Adjustment Summary.

Table 1.

Table 2.

The ACORN-SAT Station Adjustment Summary, as provided to me in 2014 can be downloaded here.

The feature image at the top of this blog post is of Cape Otway lighthouse. Cape Otway Lighthouse is the oldest surviving lighthouse on mainland Australia. Built in 1848, the lighthouse sits 90 metres above the ocean of Bass Strait.


Comments

John & Suzanne Smeed says January 28, 2023 at 4:43 pm

Good on Ya, Jen, I am sure you will give them hell! Happy New Year, fond regards, JOHN

Sam says January 28, 2023 at 5:24 pm

Jennifer, how many instances have the BOM ‘increased’ historic temperatures?

Patrick Donnelly says January 29, 2023 at 1:34 am

Well done! Exposing lies and obfuscation is tiring. I admire your energy. Stay well!

richard bennett says January 29, 2023 at 7:47 am

The BOM has a real problem with reporting actual measured data when it does not agree with the political narrative on climate. People around the world now recognise that the climate debate has become a toxic narrative on how to be a corrupt public sector in one easy step.

“If maximum temperatures are the same, whether measured by platinum resistance probes or mercury thermometers, why does the Bureau make an adjustment of 0.5 °C in the homogenisation of temperatures from Cape Otway Lighthouse?”

They are not the same, and no-one says they are. So they make an adjustment for the difference. The direct way to estimate the difference is to operate the two sensors together for a period of time. Other ways are to use differences measured by the same equipment pairing in other places, or the regular pairwise homogenisation where you observe the change in max relative to other stations. But you have to work out the adjustment somehow, and they do.

At the very least, time constants of these measurement devices need to be quantified and corrected for. Application of a difference offset is simplistic and inappropriate.

Averaging of temperatures is simplistic and inappropriate altogether.

The reality / rationality of this statement is borne out by the volume of point & counter-point debate that goes on about how many of these 3-decimal-point fairies can dance on the head of a mercury thermometer or a probe.

“Application of a difference offset is simplistic and inappropriate.”

I think the numbers Chris Gillham has given above show that they are using some type of daily linear regression comparison, within the same month. The numbers come close to the same Y = mX + b type relationship for the month of January 1985 etc.

I am reminded of the old adage, “Picking fly specks out of the pepper”

Don’t the current BoM Pt resistance probes have a customisation to provide thermal mass to closely match the response times of the previous LiG instruments? Or was that a later enhancement?

It’s interesting that both the LiG and Pt thermometers would have been subject to the same calibration but still read differently. Manufacturing differences in the LiG thermometers could easily have led to inhomogeneity, but one would expect a high degree of consistency in the Pt probes.

Re the comparison of readings and adjustment, do you know if these are blanket adjustments using a single offset, or is a more granular approach used?

If a more granular approach is used, do you know if the adjustments have been applied to the daily min & max readings for each site and the various averages recalculated? It should be a simple enough exercise with all of the readings available in digitised form.

“match the response times of the previous LiG instruments? Or was that a later enhancement?”

It is pleasing to see you asking the right questions. Nice to see the penny dropping. Yes the calibration that matters is the time constant with physical positioning and reading method also being suitably equivalent. It is a dynamic matching calibration rather than the stable state absolute accuracy.

The following are quotes from the BoM at the link below.

“The aim of the design was to mimic the time constant of the MiG thermometers to within a tolerance of ±5 seconds.”

Wind speed varies thermal time constants as roughly Y = 1 over the square root of X. Where X is the wind speed and Y is the time constant. 5 seconds is a large percentage of the error at an average wind speed but at high wind speeds it is even more. The range Dr Johnson quotes as 40 to 80 seconds of “integration” seems narrow.
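To put rough numbers on that scaling, here is a minimal Python sketch. It assumes the inverse-square-root relationship stated above and a purely hypothetical reference point (a 60-second time constant at 1 m/s); neither the reference values nor the function name come from the BoM document.

```python
import math


def time_constant(wind_speed, tau_ref=60.0, wind_ref=1.0):
    """Time constant (s) scaled as 1/sqrt(wind speed) from a hypothetical reference point."""
    return tau_ref * math.sqrt(wind_ref / wind_speed)


TOLERANCE_S = 5.0  # the +/-5 second design tolerance quoted above

for wind in (0.5, 1.0, 2.0, 4.0):
    tau = time_constant(wind)
    share = TOLERANCE_S / tau
    print(f"wind {wind:.1f} m/s: time constant ~{tau:.1f} s, "
          f"5 s tolerance is {share:.0%} of it")
```

Under these assumptions the fixed 5-second tolerance becomes a larger fraction of the time constant as wind speed rises, which is the point being made.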

The glass thermometers were mounted near horizontal and the bulb is short but the probes are long and vertical. The airflow can’t be the same.

“Four versions of the probe were produced before the design of the sensor stabilised and three are shown in Figure 6; all four varieties of these probes are in use across the observing network. The initial probe had a short thick steel probe but resulted in an extended time constant to that of the MiG thermometers.”

This longer initial time constant agrees with Jennifer’s analysis showing an initial lower maximum at Mildura.

Page 16 of document or 22 of PDF here.

http://www.bom.gov.au/climate/data/acorn-sat/documents/ACORN-SAT_O

I have a vague recollection that the louvred slats on a Stevenson screen are supposed to keep the wind out as far as possible while still allowing air exchange with the outside.

The louvres are intended to keep sunlight out while they encourage the free passage of air. For windless days to encourage the free passage of air and prevent thermal time constants that are too long, the bottom of the Stevenson screen has open slats and the ceiling has holes in it to allow vertical air flow up and out through into the roof cavity. The BoM have damaged this plan by using a grey steel pole instead of the old four white painted legs. The grey pole will obviously solar heat and in turn pre heat the rising air.

You can see the open floor slats and holes in the ceiling here.

Thanks. As I said, it was a vague memory. I had an idea that it acted as a still air chamber as well, but it was a long time ago.

Well, I think common sense says you are correct. It will do that to some extent, but it would not be desirable because heat will be exchanged between the box and thermometer when what is needed is only between the outside air and the thermometer.

You might enjoy reading this abstract.

https://rmets.onlinelibrary.wiley.com/doi/pdf/10.1002/qj.537

Thanks for the link!

here’s one for you:

https://iopscience.iop.org/article/10.1088/2515-7620/ac0d0b

“Insufficient time response damps recording of temperature extremes, potentially also influencing climatological means.”

This study compared two different measurement stations in close proximity with approximately the same microclimate. Whether the adjustment protocol they developed is adequate for different stations in different microclimates is difficult to judge. My guess is no. As Hubbard and Lin found, adjustments need to be done on a station-by-station basis.

What are you talking about Lance?

The time constant makes hardly any difference, perhaps at the second decimal place, which is irrelevant to a thermo vs. PRT comparison. The important point is that the sampling interval exceeds the time constant.

By now you should know that the myth that PRT values are instantaneous is just that – a myth. You also know that an instantaneous measurement would require rapid sampling and a very short response time (as short as the sampling interval or less would be ideal).

Your idea that calibration “is a dynamic matching calibration rather than the stable state absolute accuracy” is also entirely in your head. Calibration is a static quadratic relationship between resistance and the output signal, which is T.

Windflow through a Stevenson screen is also restricted by the louvers as determined by the pressure difference on the approach and lee side. Taken together, the effect of all this basically cancels out. We end up simply with an integrated value which is the same (+/- uncertainty) regardless of instrument.

All the best,

Bill

“The time constant makes hardly any difference, perhaps at the second decimal place, which is irrelevant to a thermo vs. PRT comparison.”

This just isn’t true. The hysteresis of an LIG is on the order of minutes for a stable reading. I have attached a page from the NOAA ASOS manual that shows how electronic temperature thermometers work in the U.S. One of the reasons for the averaging is to be able to simulate the hysteresis of an LIG. It is unfortunate this decision was made because it would have forced the closing of an LIG station record at the time of changing to an electronic thermometer. Homogenization of old records would be less of an issue.

Thanks Jim,

(I’m running out of steam with this post.)

The response time (the time for an LIG instrument to stabilise) in a water-bath or ice bath is less than 1-minute, more like several seconds. Although I’ve never measured it, take a thermometer out of an ice bath, and it rapidly regains room temperature.

Because ambience is not a ‘steady-state’ condition, the response time to a change in ambient conditions is not comparable. Wind-speed within a screen is rarely 5m/second for very long for instance, and other changes and ‘thermal mass’ factors also come into play.

Even a well-trained eyeball cannot read a met-thermometer consistently to within 0.2 degC.

While lab thermometers can be more accurate, Aust met-thermometers have an index mark every 0.5 degC. Instrument uncertainty is therefore 0.25 degC, which rounds up to 0.3 degC. The BoM estimates PRT uncertainty as 0.25 degC, but that depends how it is measured (whether it is uncertainty of a single observation, or repeated observations under controlled conditions, or repeated field observations compared instantaneously with a standard).

You can even place 5 Tmin or Tmax met-thermometers in a screen and two-people read them every morning for a week, and there will be variation. But is it the eyeball, placement in the screen, the thermometers …?

However, whichever way you may cut and dice an experiment, it makes no material difference: instrument variation is far less than that of the medium being sensed. Having an instrument with a response time that flattens instantaneous variation (which does not necessarily reflect the airmass being measured) is the challenge.

In Australia PRT probes have a response time longer than half the discrete 1-minute time-interval defining the sample. End of minute observations are therefore not instantaneous but represent a time-averaged value. In my view as a former observer, a 0.1 or 0.2 degC difference compared with a Lig thermometer (theoretically) observed to 1-decimal place, but having an inherent instrument uncertainty of 0.25 degC is neither here nor there.

Variance and precision are also related. Hence the conundrum between response time, precision (how many decimal places), the sampling rate, and hysteresis as well. I vaguely remember doing such experiments in physics 1.01 in 1965.

When we are measuring the weather, is it more important having an accurate (in decimal places) instrument, or a well maintained site (glossy, painted screen, no wasp nests, well-mowed grass), for example?

Yours sincerely,

Bill Johnston

“End of minute observations are therefore not instantaneous but represent a time-averaged value.”

You cannot get a time-averaged value using response times. Response times do not average anything. If the temperature changes faster than the response time of the sensor then you miss getting data that you need in order to calculate an average.

It would be like trying to calculate the average speed, the max speed, and the min speed of a race car going around an oval track using one stop watch at the start and a second one halfway around the track. You have no idea what happened on the second half of the track.

Nothing like calculating average speed Tim.

In a reply to a commentator to a WUWT post on 15 January: (https://wattsupwiththat.com/2023/01/15/the-on-going-case-for-abandoning-homogenization-of-australian-temperature-data/) I said:

The story about the BoM’s temperature probes is not that straightforward. It is true that Max and Min values for a particular day are 1-second readings; however, as explained in this paper: https://www.publish.csiro.au/es/pdf/ES19032, each 1-second reading is logged at the end of a discrete 1-minute sampling cycle. I know it sounds like splitting-hairs, but the Bureau argues that because the time-constant of the instrument (the response time to a particular signal-pulse) is longer than 59 seconds, it is in fact a dampened value and that as-such it makes no difference.

In the study, which you can access, they did collect all 1-Hz values – a sample for every second. They concluded that each 1-second measurement was NOT an instantaneous value and therefore that the Bureau’s measurement protocols were within the WMO’s sampling requirements.

Although it is an update on a previous study (https://www.publish.csiro.au/es/pdf/ES19010), neither paper discusses ‘spikes’ and out-of-range data resulting from the conditions under which temperature is observed, which in my view is the elephant in the room.

Regardless of what Lance may think, it would be fair to conclude that the papers present the ‘best available evidence’ about the behaviour and performance of the Bureau’s PRT probes.

Cheers,

Bill

“Nothing like calculating average speed Tim.”

Please! It’s exactly the same. If you don’t have all the data then how do you calculate an average?

” it is in fact a dampened value and that as-such it makes no difference.”

A damped value is *NOT* an average.

“They concluded that each 1-second measurement was NOT an instantaneous value and therefore that the Bureau’s measurement protocols were within the WMO’s sampling requirements.”

So what? 1-second readings of anything with a slower response time than the signal won’t tell you anything about the average.

All the response time does is damp out noise – but it also damps out valuable data.

Once again, focusing on the *probes* is a red herring. It is the response time of the measurement station that is important. The probe is just part of that overall system! It doesn’t matter if the probe is a LIG thermometer or a PRT probe.

Dear Tim,

Average T in Australia is calculated as (Tmin + Tmax)/2, which is not an integrated value, but by definition that is the daily average.

A long response time does integrate across the sampling interval – which is discrete, not continuous. Therefore they don’t have to use running averages or whatever to detect the highest/lowest value in 24 hours. They have samples instead.

I have also never worked for the BoM and I am also critical of their methods, but this particular issue has been thrashed to death and having read the papers and background reports, which are all available, I don’t think there is much more juice to be extracted.

To be clear, although uncertainty of estimating a single T value is obviously an issue, Marohasy’s series of posts is about comparing two values each day, not about how those values were derived. She has strayed off-course by mixing Mildura and Otway, and also by insisting they are Bureau sites (bound by their protocols) when they were not. She seems to have started off with the misconception there would be overlap-data for Otway, but she got her thermometers mixed-up or something. At the very best, there might be some 9am dry-bulb comparisons but that is all. Dry-bulb thermos do not remember Max or Min.

She also will not discuss the methods by which she has done her comparisons and thereby derived a measure of significance. The paired t-test is simply invalid for time-ordered autocorrelated data.

Now I have another life so I’m taking a break. Not because I’m running away, but because I have other things to do. I may check back later.

All the best,

Bill Johnston

You forgot the page.

Even averaging is a kludge. A response time constant does not “average” anything. It is purely just how fast something can respond. It’s not an integration or an average. If the temperature goes up and then comes back down faster than a sensor can respond you don’t *know* what the average is nor can you do an integration since you won’t know the entire curve to be integrated.

What the time constant *can* do is remove “noise” that is shorter than the response time from the recorded values. But that comes at a price: you lose actual valuable data.

In the context of comparing thermometers with probes and what is going on with the weather, the data may not actually be valuable.

Cheers,

Bill

“In the context of comparing thermometers with probes and what is going on with the weather, the data may not actually be valuable.”

When you are trying to infer temperature averages out to the hundredths or thousandths digit *all* of the data is important.

Now if you want to say that the uncertainty in the measurements is great enough to overwhelm calculations out to that number of significant figures then I’ll agree wholeheartedly.

But that also kind of makes the conversion from LIG to PRT probes pretty much a waste of money and time!

“Your idea that calibration “is a dynamic matching calibration rather than the stable state absolute accuracy” is also entirely in your head. Calibration is a static quadratic relationship between resistance and the output signal, which is T.”

Not strictly true when you are considering a time constant for the probe. Some people call it hysteresis. The probe will read differently when the temperature is falling than when it is rising because of the time constant involved in a dynamic temperature curve. The probe will read low when the temperature curve is going up and will read high when the temperature curve is going down, because it can’t keep up with the dynamics. That’s the same problem with LIG thermometers. You never know if you got the exact maximum or minimum because the measuring device can’t read the temperature that fast. It doesn’t have anything to do with sampling time but with response time of the device.
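The lag described here can be illustrated with a simple first-order sensor model, in which the reading relaxes exponentially toward the air temperature. The Python below is a sketch under stated assumptions: the 30-second, 1 °C excursion and the two time constants (10 s and 60 s) are hypothetical, and the model is a textbook idealisation, not the Bureau's probe or a real mercury thermometer.

```python
import math


def first_order_response(air_temps, tau_s, dt_s=1.0):
    """Simulate a sensor that relaxes toward air temperature with time constant tau_s."""
    alpha = 1.0 - math.exp(-dt_s / tau_s)  # fraction of the gap closed in each time step
    reading = air_temps[0]
    readings = []
    for air in air_temps:
        reading += alpha * (air - reading)
        readings.append(reading)
    return readings


# Hypothetical air temperature at 1-second steps:
# steady 20.0 C, a 30-second excursion to 21.0 C, then back to 20.0 C.
air = [20.0] * 60 + [21.0] * 30 + [20.0] * 120

fast = first_order_response(air, tau_s=10.0)  # hypothetical fast-responding sensor
slow = first_order_response(air, tau_s=60.0)  # hypothetical slow-responding sensor

print(f"true maximum: {max(air):.2f} C")
print(f"fast sensor maximum: {max(fast):.2f} C")
print(f"slow sensor maximum: {max(slow):.2f} C")
print(f"slow sensor 5 s after the excursion ends: {slow[95]:.2f} C (air is {air[95]:.2f} C)")
```

In this idealised case both sensors read below the air temperature while it is rising and above it once it falls, and the slower sensor records a lower maximum for the same excursion.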

When compared to the measurement uncertainty of the entire station, it’s pretty much mox nix. You’ll never know it that closely anyway.

Thanks Tim,

I mentioned this in my previous response and also in Jennifer’s previous post in this series where I gave a couple of references addressing the issues. As you say, it’s pretty much mox nix (interesting mix of words).

In my view it borders on harassment where people don’t read stuff, or they continue to claim end-of-minute spot measurements are instantaneous, when they are not, or claim immaterial differences between PRT and Lig are important. In my view there are bigger things to talk about.

A classic, is the spraying-out of ground-cover around where temperatures are measured at Amberley, Richmond RAAF, Bridgetown (WA) and many other places; gravel-mulching at Woomera, lack of maintenance of the screen at Rutherglen, scalping of topsoil around the site at Marble Bar, grading around the site at Bourke etc.

Such activities have gone-hand-in-hand with the change to sites now staffed by automatic weather stations.

All the best,

Bill Johnston

Compare the Bill nonsense to sensible instruction:

1) Bill says “Thanks Jim,

(I’m running out of steam with this post.)

The response time (the time for an LIG instrument to stabilise) in a water-bath or ice bath is less than 1-minute, more like several seconds.”

2) JMA says “Leave the thermometer in the ice for more than 10 minutes before reading the indication, and push aside ice so that the 0°C mark is visible without moving the thermometer.”

1.2.5.1 Liquid-in-glass Thermometers. Here

https://www.jma.go.jp/jma/jma-eng/jma-center/ric/Our%20activities/International/CP2-Temperature.pdf

How many times have you observed the weather, Lance, or filled out an A8 form? The A8 is a field book, not the register, which is the official document. Notice on the attached that they tick off (i.e., check) the values that go into the Register. Although we routinely did so, there was also no imperative for A8 forms to be retained.

I’ve also often done ice-bath comparisons Lance and also water-bath comparisons between a met-thermometer that seemed to be off-scale, or just as a check, and a lab-grade thermometer. You don’t need 10-minutes to equilibrate.

Accepting that everybody has probably gone to sleep by now, the attached jpg of the A8 form shown by Jennifer further down the list, which although this post is about Otway, is actually for Mildura, shows:

9am dry bulb 16.4

Max reset 16.4

Min reset 16.4

DB thermo 16.5

Max reset under “Muslin” 16.7

Min reset ditto 16.4

So which value is the ‘true’ value and why the difference?

All the best,

Bill

Even the “Quadratic” buzzword used for the static calibration is a bit out of date, 1930. One of the manufacturers the BoM uses explains it here.

https://www.wika.cn/upload/DS_IN0029_en_co_59667.pdf

Nope.

In the absence of any other influences, an instrument with a faster response time [a probe] will tend to record higher maximum and lower minimum temperatures than an instrument with a slower response time [a mercury thermometer].

So that does not excuse BOM from adjusting data prior to the installation of new measuring equipment just because it has a faster response time. Totally inappropriate.

Pairing two sensors in a different location? How far apart? The BOM has been caught homogenising (fudging) temperatures at sites 1,200 km apart. Let’s not call this any sort of science and admit that all the BOM is doing is creating propaganda for public consumption.

I think it was assuming the LiG & Pt instrument deltas at one site would be very close to those from a similar site rather than comparing the LiG at one site with Pt at another.

They are listed in Table 2. All in Victoria.

Yeah Colac has had as many shipwrecks as Cape Otway.

Those windjammers were going so fast they managed to hit the rocks at Cape Otway and then plough 57 kms inland to their final place of rest in the main street of Colac.

And the first thing the survivors did was check the temperature there and found that it was exactly the same as they read at Cape Otway just before the salt water spray in their faces turned to plains dust.

“Yeah Colac has had as many shipwrecks as Cape Otway.”

Maybe fewer. But not none.

To think – these lads came all the way from Ireland, only to discover that the middle of a big inland lake is not the place where you go to go shooting.

(I think as well as the monument, they were awarded a Darwin Award?)

Table 2 is interesting.

There’s a mismatch between the stations used for the minimum and maximum adjustments on 15/4/1994.

90175, 90172, 87163, 86127 and 86361 were used for the max adjustment, but not min.

There are mismatches between the stations used in the previous min adjustments as well, which is hardly surprising given the number of years between the changes being applied.

Also, does anybody commenting here know if the 4 min adjustments over the years were cumulative, or were the previous adjustments reversed before applying the new adjustments?

BOM also says the homogenisation process does not overwrite the original temperatures, which are kept and available to the public. It’s supposed to be only for analysing temperature trends.

Doesn’t seem to work out this way.

http://www.bom.gov.au/climate/data/acorn-sat/

How do you get that from your link, which just describes ACORN? The original readings are available. Here is the search page. You can find, for example, daily data for Cape Otway, back to 1864. They are having some technical problems at the moment.

Nick,

They have been having technical problems for several months now. Here is an email exchange from 23rd Nov 2022.

Geoff S

……………………

For some days now, I have been unable to access data on the BOM web site that has provided service for more than a decade.

This message appears when I try to visit this site:

http://www.bom.gov.au/climate/data/

We are currently experiencing technical issues with this service and are working to resolve them as quickly as possible. Please visit http://www.bom.gov.au/climate/data-services for alternate ways to obtain data.

Is this service closed or is it about to close?

Which alternative URL is recommended by the Bureau for access to similar “raw” data to that formerly made available?

Regards

Geoff Sherrington

Geoff,

It will all be on GHCN Daily. That page is very slow to load, so I recommend the portal here

Monthly averages are more conveniently got on GHCN V2 Monthly.

Nick,

Diversions again.

Why do you point me to a USA site whose data might or might not be altered from that at the original BOM Climate Data Online site?

That BOM site has operated for a decade or more. It is Australian data from the body appointed to curate it.

Why is the BOM making it hard to access data that used to be easy?

Is that allowed in the BOM charter?

I look forward to the day when you finally admit to the scope, nature and purpose of these shenanigans by our BOM.

Geoff S

Irrelevant Nick.

Should the data be made available to Dr John Abbot or not?

The BoM has a case.

The AAT will decide.

The BoM has no case. They have no right to withhold this public information from the public.

The next question is why would they want to hide this temperature data from the public? What harm would be caused by releasing this data? The only harm I can see is to the reputation of those who work at the BoM. And that’s why they won’t release the data to the public. The BoM should be happy I’m not judging their case.

If they have to resort to claiming that “an A8 Form is not a document” then their case is feeble.

It may be far worse than feeble. Our National archives in Australia stores the raw data from many old records as these observer forms. It is a major crime to alter these records. The National archives is a different department. So the BoM can’t change this history so easily. Those seeking to rewrite history could be attempting to alter the laws protecting the raw data on the A8 forms.

So they would need the public to be confused about what raw data is to devalue the A8 form. So other things would be called “raw” that are not. An example of calling things raw that are not can unfortunately be seen at the top of this page.

Some lighthouse keepers’ log books for Cape Otway are online from the National Archives of Australia. Log books show they observed wind direction and force, air pressure in bars and thermometer readings (degF) at 6am, Noon, 6pm and midnight – no Tmax and no Tmin.

Unless they ran a separate register, I suspect (but don’t know for sure) that Tmax in the BoM’s database in those early years is the midday DB observation, or possibly 6pm. Perhaps there is another source of info that either I have not tracked down or is not in the public domain. I don’t know where the lighthouse service’s records ended up (possibly with State archives).

Evidence suggests they moved the screen to the vicinity of the now cafe in 1959 or 1960, but the effect is masked somewhat by missing data. Simon Torok’s adjustment file noted “entry/observer/instrument problems” in 1977.

I’m perplexed as to why there is no mention in any metadata about the site moving to the vicinity of the cafe. Metadata also does not note when the 60-litre screen was installed, which would have been after it was specified in 1973.

I’ve attached a picture of the 60-litre screen outside the cafe and the AWS in the distance that I took in 2015. The ‘cafe’ screen had a cable attached to its support indicating it was equipped with an electronic-probe.

ACORN-SAT says “A small site move (less than 20 metres), which had no significant impact on temperatures, occurred in 2010–11. A short period of parallel observations took place but those were not used in ACORN-SAT”. However, I think the move was more like 50m and when I went up to the AWS, I found it is in an updraft zone from the south, that may affect measurements (especially rainfall).

Last time I analysed Cape Otway was in August 2020; perhaps 2 more years of data may confirm (or not) previous findings.

All the best,

Bill Johnston

http://www.bomwatch.com.au

“So other things would be called “raw” that are not. An example of calling things raw that are not can unfortunately be seen at the top of this page.”

Good point. Not everything called “raw” is really raw.

The temperature data mannipulators are sneaky little critters.

You didn’t answer the question, Nick

Dear Jen,

The 2014 adjustment summary for Cape Otway was available for those who searched. But Jennifer if you don’t search, you won’t find. Also, as a PDF, they are not much use. The ACORN-SAT V2.3 adjustment summary is also available directly from the Bureau. I develop these into Workbooks and lookup tables to ID all sites used for adjustments.

Also, Jen, if you don’t employ robust, replicable analysis protocols, how would you know if trends in data were caused by the climate, the weather or site and instrument changes? In Cape Otway’s case multiple site changes affected maximum (Tmax) temperature data. These were in 1899, 1909, 1943, 1951, 1960 and 2009. While not all can be reconciled with metadata, some can be inferred from other sources.

While you published a paper claiming cooling in Cape Otway and other datasets including Deniliquin (https://doi.org/10.1016/B978-0-12-804588-6.00005-7), as we have discussed before, control charts are not intended to determine shifts in the mean due to site change effects or other inhomogeneities, so you missed them. Your conclusions in relation to Cape Otway cooling were therefore spurious.

ACORN-SAT always adjusts past T-values relative to the present. They also only make adjustments that suit their purpose of maintaining a trend that agrees with models.

Trewin also said on p.22 of the referenced document:

“Given the relatively small proportion of the network which is affected, it is estimated that the overall effect on national maximum temperatures is in the order of +0.01 °C, and on minimum temperature, between zero and -0.01 °C. In the context of overall Australian temperature change and variability, these network-wide impacts are negligible, whilst even at the worst-affected stations, the size of the impact falls well below the 0.3 °C minimum threshold normally applied for station-specific adjustments in the ACORN-SAT dataset. No specific adjustment for this change was therefore made in version 2 of ACORN-SAT”.

I don’t see the point of splitting hairs over this, particularly if you constantly disregard important issues such as the uncertainty of comparing two values, use of paired t-tests on time-correlated data, your lack of transparent statistical protocols, and that you have not undertaken careful site research, which I have discussed with you before. You could check the work I’ve done on data homogenisation at http://www.bomwatch.com.au. Just the other day I released a study on Potshot/Learmonth.

In relation to Cape Otway, Simon Torok noted in his PhD thesis:

07/1898 “Alterations to thermometer shed now complete”.

1900 Stevenson screen supplied at least before 1902

03/1908 New screen supplied

10/1938 First correspondence (between BoM & Cape Otway staff)

07/1954 New screen replaces very poor one

09/1966 Screen moved slightly to better exposure

So, the Cape Otway dataset was not fantastic at all. The screen may not have been installed in 1902. If it was, it could have been of a different design to a standard 230-litre screen. It seems from the data that the 1908 screen was of a more consistent variety. Also, there is an up-step in 1959/60 following a break in the record. From 1877, numbers of observations hovered around 300/yr; in 1907 there were only 252 observations, and in 1996 there were 281. While I have written R-code that processes large datasets in the blink of an eye, you seem not to have a protocol for assessing basic data quality.

By the way, Otway was not a Bureau-run site; a dry-bulb thermometer is not for measuring maximum or minimum and it’s clear from site-summary metadata that maximum and minimum thermometers were removed on 15 April 1994, which is the day the temperature probe (type unknown) was installed. They ran a dry-bulb wet-bulb until 14 April 1994, which is when they installed a wet-bulb probe (but not a humidity sensor, which was not installed until 15 January 2015).

So, while there should be 9AM dry-bulb temperatures available, good luck with finding overlap maximum thermometer vs. Tmax calculated from AWS data.

Oh, wait, here is picture from the 1940s. It shows the power-house and down near the lighthouse, the office, and somewhere near there would have been the Stevenson screen. I believe the radio room was in the building now used as the cafe.

Kind regards,

Bill Johnston

scientist@bomwatch.com.au

Comparing two measurement devices from time T0 going forward does *NOT* provide an offset for the older device from time T0 going backward. You simply do not know what the calibration drift for the older device was over time. You can only determine an offset going forward from time T0 for the older device – and even that assumes the newer device is 100% accurate, which is probably not justified over a time period of three years.

What *should* be done is that the old data set should be ended and a new data set started for the new device. The old data set should not be “adjusted” since the actual adjustments can not be known for the past – unless you have a time machine!

It’s remarkably fluid, Nick. I found a very old BoM site-list that I archived on 4 December 2014, which is not long after I started discussing data homogenisation with Jennifer. The original site at Otway was listed as Latitude -38.8583 Longitude 143.5125, which before the widespread adoption of accurate GPS was probably estimated from a 4-mile to the inch military map. The coordinates put the site in the sea slightly off the coast from the lighthouse – in other words down there near where I thought it was, before it migrated up the hill at some unknown date.

I also just updated my adjustment file using info from the ACORN-SAT Catalogue. The AcV1 adjustment to Tmax of -0.5 degC on 15 April 1994 (AWS) is alas no more in AcV2.

Considering just Tmax, in the most recent iteration of ACORN-SAT (AcV2.3), there is a ‘Move’ 1 January 2011 (adjustment +0.33 degC), another for 1 January 1995 (statistical -0.54 degC) and a third ‘statistical’ applied 1 January 1985 +0.39 degC. So one single adjustment in 1994 has now morphed into three, whatever that means in practice.
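To see what the three AcV2.3 Tmax adjustments listed above would add up to, here is a minimal sketch. It rests on two assumptions that the thread does not confirm: that the adjustments are cumulative, and that each listed value is added to all data before its date. The names `ADJUSTMENTS` and `cumulative_offset` are hypothetical.

```python
from datetime import date

# Dates and values as quoted in the comment above (AcV2.3, Tmax only).
ADJUSTMENTS = [
    (date(2011, 1, 1), +0.33),  # 'Move'
    (date(1995, 1, 1), -0.54),  # 'statistical'
    (date(1985, 1, 1), +0.39),  # 'statistical'
]

def cumulative_offset(obs_date):
    """Net offset (degC) for an observation, ASSUMING each adjustment is
    added to every value recorded before its date and that they stack."""
    return round(sum(adj for when, adj in ADJUSTMENTS if obs_date < when), 2)

for year in (1980, 1990, 2000, 2015):
    print(year, cumulative_offset(date(year, 6, 1)))
```

Under those assumptions the net offset differs by period rather than being a single step, which is the practical difference from one adjustment in 1994. If the adjustments are not cumulative, or the sign convention is the other way round, the numbers change accordingly.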

A bit up here, a bit down there, a bit more up – a bit dodgy don’t you think?? Or is it the way policy-driven “science” works in the CSIRO/BoM climate industrial complex. (Don’t answer, I already know).

All the best,

Bill Johnston

http://www.bomwatch.com.au

“So one single adjustment in 1994 has now morphed into three, whatever that means in practice.”

As I mentioned elsewhere in the thread, you can either base an adjustment on fundamentals – in this case, whatever you know about change from LiG to probe – or on pairwise comparison, which estimates solely based on identifying a change which didn’t happen with nearby stations. If you are doing pairwise comparison anyway, as they are, you need to avoid double counting. In this case it seems the 1994 change showed up in pairwise comparison, so the direct -0.5 should be omitted. As often, the pairwise gets the amount about right, but is not as precise about date.

Thanks Nick,

Except that is not what they do. They use a reference series comprised of up to 20 neighbors, selected from the pool of sites on the basis each is highly correlated with the target site. The correlation test (Pearson’s linear) is based on first differences. As no Australian datasets are homogeneous (all have been affected by station moves and changes), the selection criteria effectively mean they choose faulty data to correct faults in ACORN-SAT.
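The selection step described above (Pearson correlation on first differences, keep the best-correlated neighbours) can be sketched as follows. This is not the Bureau's code: the station names and series are invented for illustration, and the function names are hypothetical.

```python
def first_differences(series):
    """Change from one value to the next."""
    return [b - a for a, b in zip(series, series[1:])]

def pearson(x, y):
    """Pearson linear correlation coefficient of two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)

def rank_neighbours(target, neighbours, top_n=20):
    """Rank neighbours by correlation of first differences with the target."""
    dt = first_differences(target)
    scores = {name: pearson(dt, first_differences(series))
              for name, series in neighbours.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:top_n]

# Invented annual values (degC); not real station data.
target = [20.1, 20.4, 19.8, 20.9, 20.3, 21.0, 20.2, 20.7]
neighbours = {
    # Same variability as the target, but with a +0.5 step halfway through.
    "A_with_step": [t + 1.0 for t in target[:4]] + [t + 1.5 for t in target[4:]],
    # Unrelated variability.
    "B_unrelated": [18.0, 17.5, 18.2, 17.9, 17.4, 18.3, 18.1, 17.6],
}

for name, r in rank_neighbours(target, neighbours):
    print(f"{name}: r = {r:.2f}")
```

In this toy example the neighbour containing a step change still ranks first, because a single step alters only one first difference. Whether that matters in practice depends on how the reference series is then built and used.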

I’ve covered this in several recent downloadable reports on homogenisation at http://www.bomwatch.com.au, and also with respect to Rutherglen, Charleville and other sites where I’ve looked closely at the quality of data used to construct reference series.

As an example of policy driven science, the ACORN-SAT project is a fallacy designed to create trends in homogenised data that are unrelated to the climate.

Homogenisation has never been objective (see my most recent report on Potshot where they decided not to adjust when they should have). Trewin does no post-hoc evaluation of the product, which I’ve been doing in this latest series. I hope to get the number of ACORN-SAT sites that I’ve analysed up to ten.

I’ve collected material on Darwin, Alice Springs, Deniliquin, Cape Otway, Port Hedland, Onslow, Adelaide, Launceston, Mildura, and a bunch in NSW, like Wagga Wagga, Dubbo and Coffs Harbour. Do you have any personal preferences?

If there is no real trend across all the datasets I’ve thus-far examined (including Cape Otway), and subsidiary sites, then the models (otherwise known as the science) are wrong. Australians have been dudded by organisations we should be able to trust.

Bomwatch protocols are outlined using Parafield as the case study, and you are welcome to grab the data or data-packs for each of the studies (and earlier ones too), and analyse the same data for yourself.

Yours sincerely,

Dr Bill Johnston

(You can contact me at scientist@bomwatch.com.au)

Hubbard and Lin showed almost twenty years ago that adjustments to temperature measurement stations need to be done on a station-by-station basis because of the variances in microclimate for different stations. It doesn’t matter if you do pairwise comparisons or regional averaging, all you do with the homogenizing is spread the measurement uncertainties around to other stations.

Anthony’s studies of station sites clearly demonstrate the varieties of environmental effects on a station’s microclimate. Trying to homogenize based on this information clearly demonstrates how futile it is from a MEASUREMENT standpoint.

Hubbard and Lin clearly showed that one must CALIBRATE EACH STATION on an individual basis because of varying conditions at individual stations.

It makes the whole process an adventure in futility. Measurement uncertainty can only compound when “homogenizing” various stations.

Allow me to hype the maximum temperature just outside of Boulder, Colorado today.

The high was 8F and the wife just yelled at me, turning down an offer to go out for dinner because she didn’t want to go outside and is tired of the cold. Her parents moved to Florida long ago. Funny how “global warming” is driving people to move to the South.

Contrast this to the same date in 1931 when the maximum was 70F. In my decades in Colorado, this January has been the coldest hands down (and in gloves). Reaching over 60 or 70F for at least a couple of days in January is common. Not this year.

A weatherman the other day said that our average this month has been 15 degrees lower than normal. With this cold spell it’s probably closer to 20.

Taking variance into account, “global warming” is a fart in the wind.

For sure man. The same thing goes for Utahns; tomorrow we’re going to wake up only to experience a high of 21 degrees. Despite a short relief forecasted for early February, the models are implying that it is short lived and the west will go back to being cold by Feb 10.

This January will also be one of the warmest on record for CONUS, and the media will most likely shove climate change in our faces once more while ignoring the cold snowy winter out west.

The only reason this month was above average for the western half was because of the atmospheric river. Joe Bastardi predicted it before it happened. https://twitter.com/BigJoeBastardi/status/1607068166148206595

Aside from his cold bias, he’s been spot on with the nature of this winter; a true meteorologist.

“This January will also be one of the warmest on record for CONUS [Continental United States]”

The reason for this is the jet stream has been bringing in mild Pacific Ocean air across most of the U.S during January:

https://earth.nullschool.net/#current/wind/isobaric/500hPa/orthographic=-106.61,25.03,264

Also, the coldest air in the arctic at the moment (marked) is hovering over central Canada and trying to sink to lower latitudes:

https://earth.nullschool.net/#current/wind/isobaric/500hPa/overlay=temp/orthographic=-106.61,25.03,264/loc=-78.738,69.089

Here is the official maximum temperature series from the Government of Canada archive, last updated 2020, for Canada’s largest city. In Canada, it is warming twice as fast as the rest of the world due to our northerly latitude.

https://www.canada.ca/en/environment-climate-change/services/climate-change/science-research-data/climate-trends-variability/adjusted-homogenized-canadian-data/surface-air-temperature.html

Here is for Canada’s capital city, home of the nation’s lawmakers.

And another showing no unprecedented warming today.

Here is from the heart of the country, in the prairies.

Here is from the far east coast

here is from the far west coast

“Here is the official maximum temperature series from the Government of Canada archive, last updated 2020, for Canada’s largest city.”

Something is wrong with these graphs. The y-axis is presumably in degrees F. It says the average max in Toronto is about 33F, or 1C. That is far too cold. Wiki says the average max is 12.9C, or 55.2F. Even the average min is 5.9C.

Might be right for the winter max.

In Canada we are civilized and use units of degrees centigrade, or Celsius.

Commendable, but that puts Toronto at 33°C average Max. A bit on the warm side of civilized.

the charts are labelled annual maximum daily temperature.

So it’s the one hottest day in each year? You might have told us. And units on the axes do help.

the moving 30 year climatology of annual maximum daily temperature is indicated with the grey line.

The graph is very clear it is the homogenized maximum temperature, Toronto can be very hot and humid in the summer months, not counting the hot air generated by Queen’s Park.

Another temperature chart showing it was just as warm in the Early Twentieth Century as it is today, despite CO2 levels increasing in the Earth’s atmosphere.

There is no unprecedented warming today. This is a lie told by temperature data mannipulators.

Every person not cooperating with a legal request should be fired on the spot.

Reply to petty little bureaucrat:

Apologies but I’m finding it ‘a little onerous’ paying your salary this month.

I also find it onerous complying with your global warming diktats – even if I could onerate myself into believing you even exist any more.

Thank you for your kind attentions to date, they’re no longer required.

Goodbye.

This great article encouraged me to write a climate rap (article) on my climate science blog today, which is the main symptom of a great article. They make you think. I’ll spare you the whole rap (at the link below) and present the conclusion: A second Sunday climate change rap — a new personal record for one day for Ye Editor (honestclimatescience.blogspot.com)

The average surface temperatures are whatever government bureaucrats want to tell you they are, and those bureaucrats can’t be trusted.

The Climate Howler predictions of climate doom would continue even if historical temperatures were perfectly accurate.

If leftists did not scaremonger to gain political power and control with climate change, they would scaremonger with something else to gain political power and control.

“Climate change” (CAGW) and the Nut Zero panic response to CAGW, are not about science and engineering. They are about leftist politics and power.

The leaders of the climate change religion give no indication they really believe a climate emergency is coming, besides words spoken. They fly on private jets and have huge personal carbon dioxide footprints. They buy two mansions on the ocean (the Obamas). Almost all do absolutely nothing to demonstrate they are true believers in the climate junk science they spout like trained parrots.

*********************************************************************************

Hyping Daily Maximum Temperatures (Part 1) – Jennifer Marohasy

Hyping Maximum Daily Temperatures (Part 2) – Jennifer Marohasy

Part 1 and Part 2 of this series, at the links above, and Part 3, were all previously recommended on my daily list of the best climate science articles I have read: Honest Climate Science and Energy

Jennifer Marohasy has the best Australian climate website, IMHO

Jennifer Marohasy – Scientist, Author and Speaker

The best Australian climate science authors I’ve found are Jennifer Marohasy, Rafe Champion, Geoff Sherrington and Bill Johnston

Bill Johnston

http://www.BomWatch.com.au | Keeping Watch on Australia’s Bureau of Meteorology

Richard Greene,

Thank you for the compliment at the bottom.

However, I have problems with many of the assertions that you make on blogs because they are not adequately backed by measurement and observation. Therefore, I do not place much value in your compliment. Tips: tidy up your act, go for quality rather than volume, deal with what is important and always quote the source of data.

Geoff S

This is lifted directly from the BOM website — My bold emphasis.

State of the Climate 2022: Bureau of Meteorology (bom.gov.au)

—————————————

Observations, reconstructions of past climate and climate modelling continue to provide a consistent picture of ongoing, long-term climate change interacting with underlying natural variability. Associated changes in weather and climate extremes—such as extreme heat, heavy rainfall and coastal inundation, fire weather and drought—have a large impact on the health and wellbeing of our communities and ecosystems. These changes are happening at an increased pace—the past decade has seen record-breaking extremes leading to natural disasters that are exacerbated by anthropogenic (human-caused) climate change. These changes have a growing impact on the lives and livelihoods of all Australians. Australia needs to plan for, and adapt to, the changing nature of climate risk now and in the decades ahead. The severity of impacts on Australians and our environment will depend on the speed at which global greenhouse gas emissions can be reduced.

—————————————–

Taxpayer funded alarmism. — Let’s blow up the BoM..

Yeah, if you read that and believed it, you would think the Earth is racing towards disaster.

And it’s all a BIG LIE! created by temperature data mannipulators.

They could not prove one claim they made above. They are making unsubstantiated assertions and presenting them as facts to the public. They are lying, and their lies are causing GREAT HARM to the people they are supposed to be serving.

Steve G,

One can contradict assertions in that BOM statement.

For example, heatwaves becoming more intense recently.

Without proof, I can imagine a person thinking that because temperatures over Australia have been increasing at about 1.5C per century (which I dispute) then heatwave intensity must also be on the increase.

This does not seem to be the case.

Ambient temperatures at a station are not easy to mathematically relate to heatwave mechanisms. Melbourne heatwaves originate 1,000 km or more to the N-W, in the hot centre desert of Australia. The summer weather pattern can include a N-W wind that brings hot air from the centre to Melbourne. It is the metrology and meteorology around Alice Springs that affects how hot the Melbourne heatwave is.

This can be shown from heatwaves on our east and southern coasts.

The table shows the average temperature of heatwaves in 4 coastal cities.

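| City | km S of Brisbane | Av 3-day heatwave T (deg C) | Av 5-day heatwave T (deg C) | Av 10-day heatwave T (deg C) |
|---|---|---|---|---|
| Brisbane | 0 | 36 | 34.8 | 33.8 |
| Sydney | 666 | 35.8 | 33.3 | 30.8 |
| Melbourne | 1110 | 39.8 | 37.5 | 33.7 |
| Hobart | 1665 | 33.3 | 30.4 | 27.1 |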

The heatwaves do not always get cooler with greater distance from the Equator as intuition would expect. Their “hotness” is mainly influenced by weather patterns shifting hot air from the centre to the coast, at various speeds and various preferred directions. More southerly Melbourne (and Adelaide, not shown) usually have hotter heatwaves than Brisbane or Sydney, which are much closer to the Equator. Intuition fails.
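The point about the four-city heatwave table can be checked mechanically. The sketch below uses only the figures quoted in the table; the variable and function names are hypothetical.

```python
# (city, km south of Brisbane, av 3-day, av 5-day, av 10-day heatwave T in deg C)
heatwaves = [
    ("Brisbane", 0, 36.0, 34.8, 33.8),
    ("Sydney", 666, 35.8, 33.3, 30.8),
    ("Melbourne", 1110, 39.8, 37.5, 33.7),
    ("Hobart", 1665, 33.3, 30.4, 27.1),
]

def cools_with_distance(rows, col):
    """True only if the chosen column falls strictly as distance south grows."""
    ordered = sorted(rows, key=lambda r: r[1])
    values = [r[col] for r in ordered]
    return all(a > b for a, b in zip(values, values[1:]))

for label, col in (("3-day", 2), ("5-day", 3), ("10-day", 4)):
    hottest = max(heatwaves, key=lambda r: r[col])[0]
    print(f"{label}: cools steadily southward? {cools_with_distance(heatwaves, col)};"
          f" hottest city: {hottest}")
```

For all three averaging periods the values do not fall steadily with distance south, and Melbourne has the hottest 3-day and 5-day averages of the four cities listed.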

…………………

This is but one way to show that analysis of heatwaves is difficult. You must not believe all that you read. I have yet to see a paper about Australian heatwaves that does not leave out some important factor, so the conclusions are questionable.

Geoff S

I would also recommend that the variance in the data be examined.

A mean or an arithmetic average is not a measurement! It is a statistical calculation to obtain the central tendency value of a distribution of data. As such one must also use other statistical parameters to describe the data being analyzed. Parameters such as variance, skewness, and kurtosis are the usual parameters.

I am beginning a close look at the variance in temperature data and am amazed no one ever discusses it. Starting with daily data, the diurnal temperature excursions result in a large statistical variance. What does that tell us? That the mean is a very inaccurate value when used to describe the mean of two temperature data points. Be my guest, put a Tmax and Tmin into one of the online statistical calculators and see what you get!

Large variances carry through to monthly station averages. Even using NIST TN1900 for determining the uncertainty in the estimated means of Tmax and Tmin, I am seeing a consistent variance in the range of 3 – 5 °F (1.7 – 2.8 °C). This far exceeds the values climate science portrays that it can ascertain via averaging.
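A short sketch of the arithmetic in the two paragraphs above. It shows the spread statistics for a single Tmax/Tmin pair and the conversion of a 3 to 5 °F interval to °C. The Tmax and Tmin values are made up for illustration, and the quoted 3 to 5 °F figure is treated as a temperature interval, as the comment's own conversion does.

```python
import statistics

def f_interval_to_c(delta_f):
    """Convert a temperature DIFFERENCE (not a reading) from °F to °C.
    No 32-degree offset applies to differences."""
    return delta_f * 5.0 / 9.0

# Hypothetical daily readings in °C, for illustration only.
tmax, tmin = 28.1, 14.3
pair = [tmax, tmin]

daily_mean = statistics.mean(pair)
sample_sd = statistics.stdev(pair)        # n - 1 in the denominator
sample_var = statistics.variance(pair)

print(f"mean {daily_mean:.2f} °C, sample SD {sample_sd:.2f} °C, "
      f"sample variance {sample_var:.2f} °C²")
print(f"3 °F interval = {f_interval_to_c(3):.1f} °C, "
      f"5 °F interval = {f_interval_to_c(5):.1f} °C")
```

With only two points the sample standard deviation is simply the difference divided by the square root of two, so a large diurnal range translates directly into a large spread around the daily mean.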

This is where the terminology differences between different fields come into play.

I was soundly put in my place recently for having the temerity to suggest that “mean” could have any meaning other than the expected value of a probability distribution as per https://www.gwern.net/docs/statistics/probability/1957-feller-anintroductiontoprobabilitytheoryanditsapplications.pdf, IX.2, p 221

btw, I quite agree that

Using this definition then of what use is standard deviation? If the data converges to a finite expectation then standard deviation is meaningless.

The issue here is whether you are a statistician or a physical scientist/engineer/craftsman.

The “expected value” of a series of measurements may be calculated to any number of digits by a statistician. That does *NOT* mean that you can use that “expected value” for anything in the physical world.

Temperatures are used to describe the physical world, they are measurements and, as such, do not have *exact* values, not even an *exact* mean value or “expected value”.

Math, even statistics, is only useful in so far as it describes the real world. You can’t roll a 3.5 on a six-sided die no matter what the “expected value” converges to.

This is why I’m convinced that some very capable people are arguing past each other by using the same terms for different things, and becoming very frustrated because the others “don’t get it”

The copy/paste didn’t work very well, so best to go to the page in the PDF.

It’s essentially the sigma (x sub n times P(x sub n)) expected value
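As a worked instance of that definition, the sum of each value times its probability can be computed for the six-sided die mentioned earlier in the thread. This is a minimal sketch; the function name `expected_value` is hypothetical.

```python
from fractions import Fraction

def expected_value(outcomes):
    """Sum of x * P(x) over (value, probability) pairs."""
    return sum(x * p for x, p in outcomes)

# A fair six-sided die: faces 1..6, each with probability 1/6.
die = [(face, Fraction(1, 6)) for face in range(1, 7)]

ev = expected_value(die)
print(ev, float(ev))  # prints: 7/2 3.5
```

The expected value is 3.5, which is not itself a possible roll. That is the distinction being drawn between a statistical quantity and an individual physical outcome.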

That is a perfectly good statistical definition for a probability function of a process that can be DEFINED, such that repeatable values of probability occur. Think of counting various discrete probability occurrences for flipping a coin, rolling a die, answering a multiple choice question, etc. Even a continuous process can have a defined probability function. Think about a sine wave.

Temperatures, well not so much. Especially when we only have two samples per day to work with. It would be nice if each day of the week, or month, or year had a defined probability of a certain temperature occurring. Alas, that isn’t going to happen. The best we can do is deal with limited data and question those who insist that statistics can give us answers to the thousandths of a degree.

The NIST TN1900 document provides a firm basis for evaluating the uncertainty of a mean of an average temperature for a time period. Uncertainties with values in the units digit give credence to the claim that anomalies in the thousandths digits are not statistically significant and can not be justified. Even an increase of 2 °C would probably not be significant, although I have more work to do to validate that.

As you said, temperatures not so much.

The challenge seems to be to get everybody using the same meanings. People seem to be coming at it from different directions, and with different definitions.

It is of real concern to me that so many faux scientists, both sceptics and alarmists, are uninterested in the evidence, specifically the parallel data. These are the real temperature measurements as manually recorded onto the A8 forms as a permanent record for that location for that day.

They are uninterested because this evidence does not accord with the narratives that have developed on both sides.

This is also why it is so difficult for newspapers, and other bloggers, to report on any of this: the evidence is difficult to fathom. In the end it shows the absolute incompetence of the Australian Bureau of Meteorology and perhaps, we will eventually find, of other such institutions across the western world.

Yet the established narratives do not generally include incompetence, rather they focus on malfeasance.

Nick Stokes acknowledges that the temperature measurements from the platinum resistance probes and mercury thermometers are not the same. Bill Johnston quoting Blair Trewin falsely suggests that the difference is minuscule. Meanwhile the director of the Bureau, Andrew Johnson, tells me the measurements are the same.

We can see from the A8 Forms that I have acquired for Mildura that the measurements are very different, but not necessarily in the direction expected. Who would have thought the first probes would record too cool!

The purpose of this blog post for me was to draw attention to the fact that the Australian Bureau/Blair Trewin has dropped down temperatures at Cape Otway lighthouse not by some small amount, but by 0.5 degrees, to coincide with the installation of a probe replacing a mercury thermometer on 15th April 1994. Because the first probes did indeed record too cool.

There is no parallel data available for Cape Otway lighthouse because the Bureau removed the mercury at the same time it installed the probe. This is in direct contradiction of its own policies.

I have, however, been able to get parallel data for Mildura. And I have shown, in earlier posts in this series, how the data from the probe and mercury in the same shelter at the same location is not equivalent. Specifically that the first probe recorded too cold, and the third too hot, relative to the mercury.

And I will attempt to post here an actual A8 Form from Mildura, showing the probe recording 0.5 degrees C too cold, which matches exactly the amount the BOM estimated the effect of the instrumental change to be at Cape Otway. Specifically in the attached, the probe recorded 27.6 and the mercury 28.1. I will make an explanation of this the focus of my next blog post in this series.

For those of us interested in the truth we must not be blinded by established narratives but instead take solace from the words often repeated by Anthony Watts quoting Andrew Breitbart: “Walk towards the fire. Don’t worry about what they call you. All those things said against you because they want to stop you in your tracks.”

And for those nearby to Brisbane, wanting to attend, the AAT hearing will be this Friday 3rd February. You may need to register in advance. Details are:

APPLICANT: John William Abbot

RESPONDENT: Director of Meteorology

This application has been listed as shown below:

Hearing

Date: Friday, 3 February 2023

Time: 10:00AM (Qld time)

Location: Please proceed to Level 6 Reception

Address: 295 Ann St, BRISBANE QLD 4000

Contact Officer: Jessica S

I am planning to show some A8 Forms, and hopefully someone from the BoM will be present to confirm numbers. It is the case that the Director of Meteorology, Andrew Johnson, lives in Brisbane and has his main office in the BoM’s Brisbane office. But will he attend?

Dear Jennifer,

You say: “These are the real temperature measurements as manually recorded onto the A8 forms as a permanent record for that location for that day”. But they are not. They are just numbers and you can’t inherently know which is the more accurate, the more “real” and which is not.

I’ve consistently encouraged you to discuss/understand these differences in terms of uncertainties, not in terms of your impression that one is right and the other wrong, or that minuscule differences matter.

You also say that: “Bill Johnston quoting Blair Trewin falsely suggests that the difference is minuscule. Meanwhile the director of the Bureau, Andrew Johnson, tells me the measurements are the same.” Blair Trewin provided evidence that differences were minuscule. You should also know as an expert witness that differences between observations that fall within each other’s uncertainty bands ARE the same. Statistical fact.

Otherwise explain to all us dunderheads why not. I know why you will not explain, which is because you can’t.

I can’t imagine why Andrew Johnson would be there on Tuesday. Furthermore, if I were you I would steer right clear of the kind of conversation happening here. After all, they may bring in a few of their experts from their metrology lab who know this stuff backwards.

All the best,

Bill

Hey Bill,

Blair Trewin analysed the parallel maximum temperature data for Mildura for the period December 1997 to May 2000 and found a difference of minus 0.22. He did not comment on its statistical significance or otherwise. If he had, he would have had to conclude that the probe and the mercury are not equivalent.

I undertook the same analysis and got the same difference, minus 0.22, after transcribing a heap of numbers myself.

As far as I know, Blair and I are the only ones who have access to the A8 Forms with this data, and the only ones who have attempted any analysis of it.

Further I tested this difference between the temperatures from this first probe and the mercury for statistical significance and found it to be so. There is a statistically significant difference in the temperature measurements for the period 1997 to 2000. For you to suggest otherwise is dishonest.
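For readers wondering what such a test might look like, one common choice for two instruments read on the same days in the same shelter is a paired t-test on the daily differences. The sketch below is only that, a sketch: the comment above does not say which test was actually used, the readings are invented, and the function name is hypothetical. It also ignores day-to-day autocorrelation in the differences and says nothing about the stated uncertainty of either instrument.

```python
import math
import statistics


def paired_t_statistic(probe, mercury):
    """Return (mean difference, standard error, t statistic, degrees of freedom)."""
    if len(probe) != len(mercury):
        raise ValueError("both series must cover the same days")
    diffs = [p - m for p, m in zip(probe, mercury)]   # probe minus mercury
    n = len(diffs)
    mean_diff = statistics.fmean(diffs)
    std_err = statistics.stdev(diffs) / math.sqrt(n)  # standard error of the mean difference
    return mean_diff, std_err, mean_diff / std_err, n - 1


# Hypothetical same-day maxima in °C, invented for illustration only.
probe_tmax = [27.6, 30.1, 25.4, 33.0, 28.7, 31.2]
mercury_tmax = [28.1, 30.3, 25.5, 33.4, 28.8, 31.5]

mean_diff, std_err, t_stat, dof = paired_t_statistic(probe_tmax, mercury_tmax)

# Two-sided 5 % critical value of Student's t for 5 degrees of freedom.
T_CRIT_DF5 = 2.571
print(f"mean difference = {mean_diff:.2f} °C, t = {t_stat:.2f}, df = {dof}")
print("significant at 5 %" if abs(t_stat) > T_CRIT_DF5 else "not significant at 5 %")
```

The test asks only whether the mean of the daily differences is distinguishable from zero given their scatter. Whether a difference of that size matters relative to instrument tolerance is a separate question.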

Blair Trewin stopped his analysis here. He has not undertaken any analysis of the Mildura data after May 2000. He has not considered the equivalence or otherwise of the second probe installed on 3 May 2000 or the third probe, installed on 27 June 2012.

I went on and analysed the data for the period 3 May 2000 to 27 June 2012 and found this second probe drifted relative to the mercury by the equivalent of nearly 1 °C over 100 years.
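A drift figure of this kind can be obtained by fitting a straight line to the probe-minus-mercury differences against time and scaling the slope to a century. The sketch below shows that calculation with an ordinary least-squares slope. The numbers are invented to give a round answer and are not the Mildura data, the function name is hypothetical, and the actual method used for the figure above is not stated.

```python
def drift_per_century(decimal_years, differences):
    """Least-squares slope of differences against time, scaled to °C per 100 years."""
    n = len(decimal_years)
    mean_x = sum(decimal_years) / n
    mean_y = sum(differences) / n
    sxx = sum((x - mean_x) ** 2 for x in decimal_years)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(decimal_years, differences))
    return 100.0 * sxy / sxx


# Hypothetical mean probe-minus-mercury differences (°C) at three-year intervals.
years = [2000.5, 2003.5, 2006.5, 2009.5, 2012.5]
mean_diffs = [-0.05, -0.02, 0.01, 0.04, 0.07]

drift = drift_per_century(years, mean_diffs)
print(f"drift = {drift:.2f} °C per century")
```

Note that a change of only about a tenth of a degree over twelve years is enough to give a slope of this size once it is extrapolated to 100 years.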

For you to have an opinion on this data, and my analysis, given you have no experience of either is dishonest. You have never requested the same from me. Instead you email various commentators, white anting. That is what you specialise in.

Continuing to transcribe the data from the A8 forms, I went on further, and analysed the data for the period after the 27 June 2012 and found this new third probe recorded hotter than the mercury, often by plus 0.4 °C.

I could not make any determination as to the statistical significance of the mean value because the data from the mercury after the third probe was installed is not normally distributed, because they were not recording from the mercury on the hottest days after June 2012. I suspect the difference was so large they hesitated to record it.

Given you have no access to this data, have not requested it from me or Blair Trewin at the BoM, I suggest you desist from having an opinion.

Cheers,

Thanks Jennifer,

Do you mean Table 3 on page 20 of his AcV2 Report, where he says from Dec 1997 to May 2000 there is a difference (I read a difference between maximum temperature averages) over 2.5 years of 0.22 degC?

In relation to that he said, “Furthermore, at two of the sites (Mildura and Cape Byron) where the differences are more than 0.1 °C, these differences align closely with the results of tolerance checks on the automated probe, indicating that the differences are primarily accounted for by the probe being slightly out of calibration (something which should affect maximum and minimum temperature equally)”.

And what test do you use?

And how can you claim a difference is significant if it is within the error (uncertainty) of both instruments?

It is a subject I am interested in, and I’m not in any way dishonest. (I don’t believe you are dishonest either.)

Kind regards,

Bill

“(something which should affect maximum and minimum temperature equally)”

Is it the probe being out of calibration or the entire measurement station? PRT probes may have a very linear response curve but that doesn’t mean the entire measurement station does. Far too many people tend to only look at the sensor and not the entire measurement station.

Dear Tim,

I quoted Trewin’s words without making any assertions about his words.

In reality, PRT probes rely on a quadratic calibration, which is resolved internally. Probes can be out of calibration at one end, and within tolerance limits at the other. In other words, while generally robust, over-ranging may be a problem.

Cheers,

Bill Johnston
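For those unfamiliar with the quadratic relationship mentioned above, the sketch below uses the standard Callendar-Van Dusen form for a platinum resistance thermometer at or above 0 °C, R(T) = R0(1 + AT + BT²), with the IEC 60751 coefficients for a nominal 100-ohm element. Whether the Bureau's probes use exactly these coefficients, or how their electronics resolve the conversion internally, is not stated in this thread, so treat the constants and function names as assumptions for illustration.

```python
import math

# IEC 60751 coefficients for a standard platinum element (valid for T >= 0 °C).
A = 3.9083e-3      # per °C
B = -5.775e-7      # per °C squared
R0 = 100.0         # ohms at 0 °C; a nominal Pt100 element is assumed here


def resistance_from_temperature(temp_c):
    """Element resistance in ohms at temp_c (°C), for temp_c >= 0."""
    return R0 * (1.0 + A * temp_c + B * temp_c ** 2)


def temperature_from_resistance(resistance):
    """Invert the quadratic to recover temperature (°C) from resistance (ohms)."""
    discriminant = A ** 2 - 4.0 * B * (1.0 - resistance / R0)
    return (-A + math.sqrt(discriminant)) / (2.0 * B)


r = resistance_from_temperature(28.1)
print(f"R at 28.1 °C = {r:.3f} ohm")
print(f"T recovered  = {temperature_from_resistance(r):.3f} °C")
```

Because the curve is not a straight line, an error in the assumed coefficients or in the resistance measurement need not produce the same temperature error at every point in the range.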

Over-ranging of the sensor may be a problem but it still doesn’t address the fact that it is the overall measurement station that determines the overall calibration. The probe is just one of any number of contributing factors that affects the station calibration.

I disagree, Tim. The instrument itself is measuring what is happening at a station. For instance, kill the grass with Roundup and the instrument will report an increase in Tmax. Change the screen size, and something else will happen; a leaf in the tipping bucket rain gauge will instantly reduce the rainfall.

Calibration of the instrument (which is assumed to be unbiased etc) is a stand-alone issue. If a thermometer is suspected to be out, one would do an ice check, or test it using an oil bath (don’t try boiling water!), or run another thermometer (say a more precise lab-thermometer) in parallel. Check the TBRG (tipping bucket rain gauge) by pouring a set volume of water through the funnel and counting the tips; check the level etc.

Nevertheless, sites themselves need to be maintained in a consistent way, according to stated standards – mow the grass, paint the screen, clean-up debris etc. There are even set standards for cleaning-up after a dust storm!

Thanks for your interest.

Bill Johnston

You are missing the whole point. Is that on purpose?

If Station 1 has a 3″ fescue cover under the station and Station 2 has pea gravel you simply can’t calibrate Station 1 using Station 2 readings. The same thing applies if the grass turns brown under one station because of poor soil moisture while it stays green under the other. The *microclimate* becomes different for each.

If Station 1 has a mud dauber nest in its air intake and Station 2 does not, then trying to homogenize the temperatures using a pairwise comparison is doomed to fail.

Hubbard did a detailed analysis of uncertainties in stations using PRT probes and found that the components in the electronics can have different drift characteristics between two different stations, causing different calibration drift between the stations.

These are all what is known as systematic error, and systematic error cannot be corrected through statistical analysis of either a single station or among multiple stations.

Using a more “precise” lab-thermometer doesn’t guarantee accuracy. Precision and accuracy are *not* the same thing.
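A small simulation illustrates the distinction. Below, each reading is the true temperature plus a fixed offset (systematic error) plus random noise. Averaging more readings shrinks the random part but leaves the offset untouched. All values are invented, the fixed seed is only there to make the run repeatable, and the function name is hypothetical.

```python
import random
import statistics


def simulate_readings(true_temp, bias, noise_sd, n, seed=0):
    """Return n readings of true_temp with a constant bias and Gaussian noise."""
    rng = random.Random(seed)
    return [true_temp + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]


TRUE_TEMP = 25.0   # °C, hypothetical true value
BIAS = 0.4         # °C, hypothetical systematic offset of the station
NOISE_SD = 0.3     # °C, hypothetical random scatter of a single reading

for n in (10, 1000, 100000):
    readings = simulate_readings(TRUE_TEMP, BIAS, NOISE_SD, n)
    error = statistics.fmean(readings) - TRUE_TEMP
    print(f"n = {n:>6}: error of the mean = {error:+.3f} °C")
```

However many readings are averaged, the error of the mean settles near the offset rather than near zero. The result is precise but not accurate.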

How many field measuring stations get calibrated on a quarterly or even an annual basis using an ice bath and a heat bath?

We aren’t talking rainfall measurement, that’s just a red herring.

I’ll repeat, how many field stations get repainted annually? How many get regular mowing? How many actually have grass underneath them? How often does someone go out to clean ice and snow out of the air intake after a blizzard?

This isn’t an issue of what *should* be done but an issue of what actually *gets* done.

Unless you can ensure that the microclimate is *exactly* the same for two or more stations, trying to do homogenization using their readings only spreads the uncertainties of each station around to the others.

Think about it. Temperatures depend on lots of things like elevation, humidity, terrain, geography, distance, latitude, etc. How many homogenization routines actually quantify all of these and apply appropriate adjustments for them before trying to homogenize readings from one station with a different station 10 miles away (where one is near a pond and the other is near a corn field, as an example)?

Sorry, Yes I did miss the point.

Depending on how comparator series are developed, I agree that inter-site comparisons as used in T-data homogenisation introduce another layer of potential bias.

In the case of ACORN-SAT they regularly use sites from other climate zones to form reference series. In the case of Rutherglen, which is in the temperate zone of SE Victoria, they included Menindee, a semi-arid place out in the central west of NSW; Portland, on the southern coast over near South Australia; and Melbourne.

(Do you have a reference to Hubbard?)

All the best,

Bill

“In the case of ACORN-SAT they regularly use sites from other climate zones to form reference series.”

You still don’t seem to be getting it. There is no such thing as a “reference series”. Unless the stations are identical with identical microclimates and identical systematic biases there can be no “reference” that means anything. When I read things like this it is usually from statisticians that ignore physical measurement uncertainty, assume all stated values are 100% accurate, and the spread of the stated values can be used for statistical analysis out to any number of significant digits.

It would be like me measuring the crankshaft journal wear in a diesel engine in Chicago and saying that it can be used as a reference for the crankshaft wear in a similar diesel engine in Paris, France.

I didn’t even mention that the variance of the temperature measurements changes with climate zone. Colder zones see a wider variance in temperatures than warmer zones. How then can the temperatures in one zone be used as a reference for a different zone?

See: “Reexamination of instrument change effects in the U.S. Historical Climatology Network”, Hubbard, Lin