March 3, 2022 By jennifer

The Australian Bureau of Meteorology has now admitted, as I surmised in a blog post on 10th February, that the reference value for 2021 was not actually included in its calculation of the amount of warming as published in the 2021 Annual Climate Statement.

In short, we have a 2021 Annual Climate Statement that does not include the new 2021 value in its calculations.

This omission allowed the Bureau to announce an amount of warming of 1.47 °C which might seem respectable during a cooler La Nina year.

If the 2021 reference value of 0.56 °C* had been included, the amount of warming could have been rounded to 1.5 °C placing Australia at the dreaded tipping point.

A meeting of Intergovernmental Panel on Climate Change (IPCC) scientists held in early February stated the amount of global warming is 1.1 °C, and the consequences if ‘global heating’ passes the tipping point of 1.5 °C will be catastrophic.

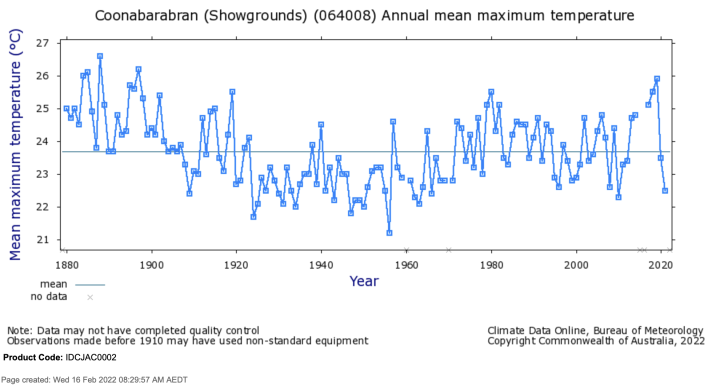

The last two years have been particularly cool as evident from maximum temperatures as recorded at Coonabarabran in New South Wales, Chart 1. This is one of the few remaining long temperature series where maximum temperatures are still recorded with a mercury thermometer.

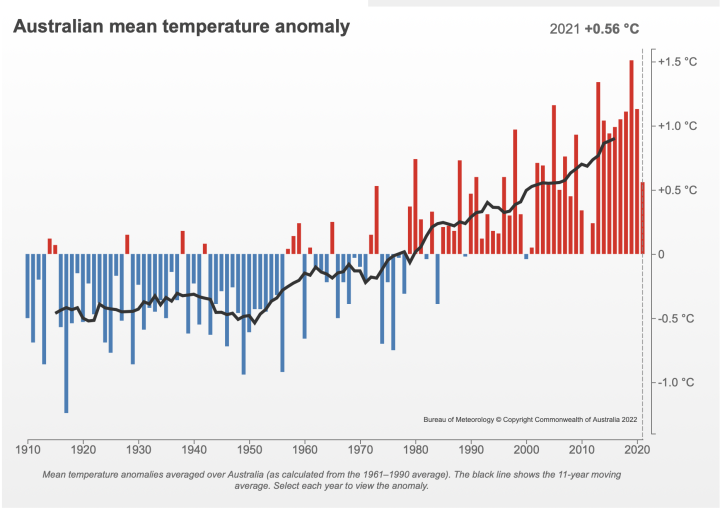

Over the last twenty years remodelling of the historical temperature data has stripped away the cycles, so even cool years now add warming to the official trend, see Chart 2.

Other artefacts that generate more warming for the same weather include the transition to electronic probes and the addition of data from weather stations in warmer locations for more recent years.

The true amount of warming across the land mass of Australia since 1910 is closer to 0.7 °C, which is the amount of warming at Coonabarabran since 1910, see Chart 3 (scroll to end).

At the beginning of each year the Australian Bureau of Meteorology (BoM) publishes The Annual Climate Statement that reports on warming trends in the previous year. This year the 2021 Annual Climate Statement included comment that:

Australia’s national mean temperature was 0.56 °C warmer than the 1961–1990 average, making 2021 the 19th-warmest year on record …

Based on the ACORN-SAT dataset, Australia’s climate has warmed on average by 1.47 ± 0.24 °C …

The headline in the Sydney Morning Herald was:

Despite the La Nina weather pattern and other climate drivers bringing rain and the coolest temperatures for the past decade, the heating trend under climate change continued during the past year.

When I downloaded the figures from the Bureau’s website for the period 1910 to 2021, I calculated the amount of warming for the period 1910 to 2021 (inclusive) as 1.49 ± 0.36 °C.

Curious as to why I was getting a different value, albeit only 0.2 °C different, I emailed the Bureau. Nearly two weeks later I received this reply:

Homogeneous ACORN-SAT data to the end of 2021 was not available at the time of writing the Annual Climate Statement. This is because data for the current year are appended to ACORN-SAT until they are subsequently analysed in the following year. The primary purpose of the Annual Statement is not to report on trends, but rather report on the events of the calendar year. The State of the Climate Report provides a biennial update of trends in Australia’s climate.

Using the methodology applied for both the State of the Climate 2020 and the 2021 Annual Climate Statement, the Bureau estimates Australia’s warming for 1910 to 2021 to be 1.48 ± 0.24 °C.

To be clear, the Bureau is now admitting it published an ‘Annual Climate Statement for 2021’ that did not include data for the year 2021 in its calculation of the amount of warming. It claims it was only reporting on ‘events’ for that calendar year. Yet curiously it included the value of 0.56 °C for 2021 in the downloadable dataset.

Which begs the questions:

- Why was the value of 0.56 °C included for 2021, if the data was not actually available to the end of 2021?

- How was the value of 0.56 °C as reported in the media release and The Annual Climate Statement generated? (Appreciating that this number was not actually used to calculate the amount of warming.)

- When will the new amount of warming of 1.48°C be announced?

- How will the new amount of warming be reconciled with the announcement accompanying the 2021 Annual Climate Statement that warming was 1.47°C?

The Australian Broadcasting Corporation (ABC) headline on 6th January, based on the Bureau’s media release, was:

The 2021 numbers are in

Within that article is a chart with a link in the caption to ‘get the data’ that includes the 0.56 °C value for 2021.

There is commentary that ‘2021 was almost 0.4 degrees cooler than the average of the last decade,’ Dr Bettio said.

“That doesn’t necessarily mean that it was ‘cool,’” she emphasised.

Lynette Bettio is a senior climatologist in the Climate Monitoring team at the Bureau of Meteorology.

A key to making sense of all of this is perhaps understanding that The Annual Climate Statement as published by the Bureau is not based on actual recorded temperatures. But rather on homogenised values that are by their very definition remodelled.

Australians incorrectly assumed that temperatures measured at official recording stations with mercury thermometers – by their very nature of being in the past – cannot be changed. But in climate science numbers are continually changed. It is the remodelling of maximum and minimum temperature series before they are combined to calculate the mean, and then added all together, to generate an overall annual average temperature for Australia, that most affects the amount of warming announced at the beginning of each year by the Australian Bureau of Meteorology.

It is the remodelling that makes the dataset homogeneous. This remodelling has a very subjective component. Past temperatures are cooled, with more cooling the further back in time.

David Stockwell and Ken Stewart in a paper published in the peer-reviewed journal Energy & Environment (https://doi.org/10.1260/0958-305X.23.8.1273) in 2012 showed how the transition to the first homogenised dataset known as the High Quality Network (HQN) added 31% to the average temperature trend which they calculated to be 0.7 °C in the historical unhomogenised data for Australia.

Merrick Thomson back in 2014 calculated the transition from HQN to ACORN-SAT added 0.42 °C to annual average temperatures just by removing 57 stations from its calculations, and replacing them with 36 on-average hotter stations – independently of any actual real change in temperature.

The Bureau acknowledged in its transition from ACORN-SAT version 1 to version 2 in 2018 that further remodelling added 23 % to the warming trend.

The Annual Climate Statements always give the impression that temperatures are increasing, and in a linear fashion. This is what homogenisation, and the addition of hotter stations later in the record, has done – the remodelling has stripped away the cycles, so even cool years now add warming to the trend.

If we consider the chart as published in the 2021 Annual Climate Statement, we see the last two years of data (the last two bars) show temperatures are coming down but are still above the long-term average. So, they will add warming to the overall trend. If we consider just the last two data points in the Coonabarabran series, we see the same last two years are cooler than the long-term average, so they would cool the overall trend.

Could it be that with all the remodelling there is now too much warming in the official homogenised ACORN-SAT temperature series?

If the Bureau had announced an amount of warming of 1.49 °C based on the 0.56 °C value for 2021, it could reasonably have been rounded to 1.5 °C, indicating warming that exceeded the tipping point. This could have been perceived as overreach in its remodelling of historical temperatures, especially during a cooler than usual La Nina period. Instead, the Bureau just omitted the last value, 0.56 °C, from its calculations.

This is part 3 of the series, Australia’s Broken Temperature Record.

It is possible to see the extent of the remodelling for all 104 ACORN-SAT temperature series in an interactive table using maximum and minimum annual series. This table was constructed in 2019, is yet to be updated with the latest values to 2021, and does not include the latest ACORN-SAT series, that is version 2.2. Click across and see all 104 historical versus homogenised series here: https://jennifermarohasy.com/acorn-sat-v1-vs-v2/

* 0.56 °C is also known as the anomaly value, which describes the amount by which temperatures in 2021 exceeded the reference period from 1961 to 1990. The anomaly value in 2019, for example, was 1.51 °C. The anomaly/reference values for the last seven years as published by the Bureau from the ACORN-SAT Version 2.2 dataset on 6th January 2022 are:

2015 0.94

2016 0.99

2017 1.05

2018 1.11

2019 1.51

2020 1.13

2021 0.56
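To illustrate how much a fitted trend can depend on whether the final year is included, here is a minimal sketch that uses only the seven values listed above. It assumes an ordinary least-squares straight-line fit, which may not be the method the Bureau uses, and a seven-year window says nothing about the 1910 to 2021 figure; the function name `ols_slope` is just an illustrative helper.

```python
# Anomaly values (°C) for 2015-2021, exactly as listed above.
years = [2015, 2016, 2017, 2018, 2019, 2020, 2021]
anomalies = [0.94, 0.99, 1.05, 1.11, 1.51, 1.13, 0.56]


def ols_slope(x, y):
    """Ordinary least-squares slope of y against x (here: °C per year)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx


# Fit once with the final year and once with it left out.
slope_with_2021 = ols_slope(years, anomalies)
slope_without_2021 = ols_slope(years[:-1], anomalies[:-1])

print(f"2015-2021 slope: {slope_with_2021:+.3f} °C/yr")
print(f"2015-2020 slope: {slope_without_2021:+.3f} °C/yr")
```

Over this short window the two fits differ in sign, which is why it matters to state clearly which years went into any published trend.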

About as bad as GISS.

Drawing a straight line fit through a century of data, as in Chart 3, obfuscates the 1 to 3 year “big jumps” up or down that are likely caused by cloud cover changes as low and high pressure zones traverse the Southern Hemisphere. Four big coriolis effect cyclones one summer versus, say seven the next, make a big difference to average surface temp. over a large area.

More sunlight over a continent at ground level = higher temp…..more sunlight over ocean= warmer until the clouds develop

Sat view today

https://epic.gsfc.nasa.gov/

I agree that a straight-line is not appropriate. Except to calculate an amount of warming assuming continuous warming, which is the current paradigm. Much more appropriate to acknowledge the inter-decadal variability and also that for most rural/regional temperature series, particularly for inland regions in northern and eastern Australia, there was cooling to 1950/1960 and then warming since.

This whole business of country-wide average temperatures is just a total nonsense. What exactly is an average temperature in surroundings that stretch from +40 to -10 deg?

Obviously the Australian Bureau of Meteorology is run by political hacks that only employ bureaucratic drones that just follow their orders.

They should all agree to let these temperature records be audited by a panel of recognized and unbiased experts, if such a group could be found.

Hi Tom.1

I don’t have any confidence any more in panels. They have been variously set up. There was one back in 2014. I coordinated a heap of the submissions, including helping write the submissions from Merrick Thomson, who was a neighbour at the time. They are here: https://jennifermarohasy.com/temperatures/submissions-to-the-panel/

If you download a few of them you will see that many are long and very insightful. Find the one by Ken Stewart, for example.

Each of those submissions represents a lot of time. Each is unique. All of it was self-funded. None of us were employed to do that work. I was between jobs back then.

Do you know, not one of those submissions has ever even been acknowledged.

There was a final report from the Panel, and it concluded, after its own one-day of deliberation following presentations from a couple of Bureau staff, that everything was fine. Nothing to see here.

They wouldn’t let me in the door.

Sad to say you will never get a fair assessment or hearing.

I once spoke with the ex Minister responsible and said Mr Hunt you really should get an objective view of the so called science around what you are making policy on and he replied that he was happy with the science as presented by the BoM.

It is all too hard to publicly disagree as they will cancel you as soon as look at you.

The majority of Australia’s historic temperature records come from coastal cities and towns, where temperatures are controlled by the thermal capacity of the surrounding oceans, but further inland other natural variability parameters come into play.

Solar activity, in particular the solar magnetic cycles, comes to prominence. The geomagnetic response varies with the polarity of the sunspot cycles, depending on whether the solar magnetic half-cycles are in phase with Earth’s field or of opposite polarity to it.

The attached graph shows temperature for Echuca, a small town well inland north of Melbourne, which apparently no one bothered to ‘correct’.

(note : the graph was produced nearly a decade ago and needs updating)

Here is the temperature. The raw data is available from the BoM site. It is the homogenised record that makes the news cycle.

Rick,

Great chart, and it shows the last two cooler years. But they aren’t as cool as I would have thought. But the chart is of Tmax. Be interesting to calculate the mean.

I’ve just checked the metadata for that site (you can find the link on the right-hand side, halfway down the first CDO BOM page, and then follow the links to here: http://www.bom.gov.au/clim_data/cdio/metadata/pdf/siteinfo/IDCJMD0040.080015.SiteInfo.pdf), and it indicates they are still using liquid-in-glass at that location. Yah!

Echuca has a wonderful long record. It is also one of the few that when I last looked, some time ago now, was still being recorded with liquid-in-glass thermometers. (Too many others are now recorded with electronic probes calibrated to record warmer.) Echuca moves more-or-less in unison with Deniliquin, Mildura, and other long records for that region.

These two charts that you show are extraordinary. So good! I hadn’t seen them before. It would be wonderful if they could be updated to the present. It would also be great if you could get in touch with me directly, via my gmail address: jennifermarohasy@gmail.com

Thank you for finding time to reply to my comment. Will do and will do, eventually.

If they altered data like this in a real science, like cancer research or maybe bridge design, they would be in jail. In politicized science like global warming and covid data it is applauded and rewarded.

This is what my thoughts are exactly. Certainly in engineering you would be up before a professional ethics committee.

The BoM have not only adjusted past temps but even recent data.

If you check the BoM’s climate reports it will tell you that 2001 and 2011 had Australian mean temps BELOW average. However these have been adjusted so that neither year is now. Most of the mean temps from at least 2000 to 2017 have been adjusted upwards. For those interested, check the following. First the climate summaries.

http://www.bom.gov.au/climate/current/statement_archives.shtml?region=aus&period=annual

Now the time series graph.

http://www.bom.gov.au/climate/change/#tabs=Tracker&tracker=timeseries

ACORN2 has been the driver of this – artificially adjusting raw data upwards to achieve another 0.1C-0.2C to support their meme.

“albeit only 0.2 °C different”

Actually, it’s 0.02°C different. The BoM is cooling the present! But really, why does any of this matter?

“Merrick Thomson back in 2014 calculated the transition from HQN to ACORN-SAT added 0.42 °C to annual average temperatures just by removing 57 stations from its calculations, and replacing them with 36 on-average hotter stations – independently of any actual real change in temperature.”

BoM calculates annual average anomalies only. It doesn’t matter if there is a drift toward warmer places, as they always first subtract the long-term mean. That is a major reason for using anomalies.

In data anomalies there are always positive and negative outliers which have a disproportionate effect on the final average. Reject the negative, keep the positive and you get the desired result. It’s a matter of statistical approach, a decorative ‘numerical origami’.

Outliers are not rejected in this calculation.

‘Well, they would say that, wouldn’t they’

Vuk, It is also interesting that these anomaly values are all worked up from daily values. With the transition to electronic probes from mercury thermometers the daily data has become much more spiky and recording hotter for the same weather. https://jennifermarohasy.com/2018/02/bom-blast-dubious-record-hot-day/

Hi Nick, Thanks for this. I’m not sure you are correct in dismissing the addition of the hotter stations because the Bureau uses anomalies. Vuk makes a good point. There is also the problem that these new warmer series don’t begin in 1910. Oodnadatta only began recording temperatures in 1940, and is added in to ACORN-SAT Version 2.2 from 1941. Wilcannia is only added in from 1957, yet there is data from the late 1800s. So in ACORN-SAT Version 2.2 we have a time series that begins in 1910 and runs to 2021, a reference period calculated from 1961 to 1990, and warmer stations variously added in from about 1941 onwards.

PS And while the time series runs from 1910 to 2021, and the Bureau provides an anomaly value for 2021 (0.56), they chose not to use the value of 0.56 (apparently they didn’t use any value for 2021) in the calculation of the amount of warming in the 2021 Annual Climate Statement.

“Vuk makes a good point.”

I don’t think you or Vuk really appreciates what anomalies are. And it’s true that it is not a well chosen word. But it simply means that they subtract the base mean before proceeding. It doesn’t say anything about outliers.

Thanks Nick.

I understand that by ‘base mean’ you are referring to the period 1961 to 1990, those 30 years. To be clear, I understand the bureau does not subtract the mean of all the years from 1910 to 2021, which would arguably be a better approach. But there are other issues.

To reiterate, the bureau:

1. Uses a reference mean for the period 1961 to 1990 only,

2. Adds data from hotter locations sequentially from about 1941, and

3. Compiles the annual anomaly values sequentially after subtracting from the ‘base mean’ for all values from 1910 to 2021.

So, as far as I can see too many assumptions are violated for the time series to be mathematically meaningful.

Also, think about this: there was a transition from the Della-Marta et al. (2004) dataset to ACORN-SAT Version 1 in 2011. As part of this transition 56 stations were removed from the dataset used to calculate the base mean and 36 on-average hotter stations were added variously. For example, Oodnadatta, which holds the official record for the hottest temperature in Australia, only became part of the mix of stations used to calculate the ‘base mean’ from 2012, and from 1941 within the mix.

Yet we still have this same value of 21.81 as the mean for the reference period. It has been the ‘base mean’ value since the Bureau started publishing an Annual Climate Statement back in 1996.

Jennifer

“only became part of the mix of stations used to calculate the ‘base mean’ from 2012”

You really are not understanding how anomalies are calculated and used. They don’t calculate a ‘base mean’ for the group of stations. They calculate one for each station individually, and subtract each month’s base average (for the station) from each year’s monthly mean. This is done before any averaging across stations. If they bring in a warmer station, the average for that place is immediately subtracted, so it isn’t warmer any more.
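The arithmetic described in this comment can be sketched with a toy network. Everything below is hypothetical: the two stations, their long-term means, the years and the zig-zag departures are made up, and the sketch assumes both stations share the same year-to-year departures. It is not the Bureau's code, and it does not address what happens if a newly added station has a different trend.

```python
# Hypothetical two-station network; all numbers are invented for illustration.
BASE_YEARS = range(1961, 1991)  # 1961-1990 reference period

stations = {
    "cool_site": {"normal": 15.0, "first_year": 1931},
    "hot_site": {"normal": 30.0, "first_year": 1941},  # joins the network later
}


def departure(year):
    """Shared year-to-year departure: a trendless zig-zag of +/-0.3 °C."""
    return 0.3 if year % 2 == 0 else -0.3


def reading(site, year):
    """Annual temperature a station would report in a given year."""
    return site["normal"] + departure(year)


def base_mean(site):
    """The station's own mean over the reference period."""
    values = [reading(site, y) for y in BASE_YEARS]
    return sum(values) / len(values)


def network_means(year):
    """Return (mean of absolute temperatures, mean of per-station anomalies)."""
    active = [s for s in stations.values() if year >= s["first_year"]]
    absolute = sum(reading(s, year) for s in active) / len(active)
    anomaly = sum(reading(s, year) - base_mean(s) for s in active) / len(active)
    return absolute, anomaly


abs_1940, anom_1940 = network_means(1940)  # before the hot station joins
abs_1942, anom_1942 = network_means(1942)  # after the hot station joins

print(f"absolute mean: {abs_1940:.2f} -> {abs_1942:.2f}")
print(f"anomaly mean:  {anom_1940:.2f} -> {anom_1942:.2f}")
```

Under these assumptions the average of absolute temperatures jumps when the hot station joins, while the average of per-station anomalies does not. Whether real stations behave this way is exactly what is in dispute in this thread.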

Nick,

I think you are becoming disingenuous.

Oodnadatta only became one of the stations used to calculate the annual average (one of the 104 in ACORN-SAT) in 2012.

Before 2012 a different mix of stations was used.

If you click across to this link you will see where you can download the annual values (derived from daily, not monthly values) and you will see that the anomaly value is listed as 21.8 http://www.bom.gov.au/climate/change/#tabs=Tracker&tracker=timeseries

It would be possible to work something up as you suggest, but this is not how ACORN-SAT has evolved.

Seems they could make the same basic argument for Global Cooling using their current methods. Maybe that is the way to balance the debate?

Hey Nick, what’s wrong with simply observing and recording weather observations. Oh, that’s right, they don’t match the narrative.

Quite right, aussiecol. Check NSW mean max temp for Jan, 2022. BoM claims the mean was +0.72. Yet of the 130 NSW sites only 20 odd are above average. When averaged it shows -1.0C so homogenising and shading of temps have pushed it up.

One big anomaly is Cobar, where the AP registers -0.7C but the MO registers +1.6C. That’s over 2.0C in the one town.

http://www.bom.gov.au/climate/current/month/nsw/archive/202201.summary.shtml

Scroll down for individual site temps.

Methinks you have picked the wrong person to insult Nick.

“..I don’t think you or Vuk really appreciates what anomalies are…”.

If you were truly around the subject you would know that the good Dr appreciates exactly what anomalies are and exactly what the BoM is up to!

May I suggest that you expand your horizons.

Do you know what anomalies are?

Hey Nick

For it to be a proper anomaly it needs to be derived from the actual mean of all the values, or at least a representative subset.

But for the moment, accepting the Bureau’s method…

What you call the ‘reference mean’ is always 21.81, from which the new anomaly value of 0.56 for 2021 would have been derived, after homogenisation of individual series.

So the actual mean temperature for Australia last year, using their data as published on 6th January 2022, should be 22.37 (0.56 plus 21.81).

Do we agree?

What I call the reference mean is the mean for each station for a given month. I don’t know where you get the figure 21.81 from, but it is not part of the calculation of the anomaly average, or of any figures that the BoM promotes. If you look at the 2021 climate statement you linked, it starts:

That is a difference in the anomaly average (and yes, they should have said so). No figures (like 21.81) for the national temperature are mentioned. Not even in the temperature section, where the featured graphs are at last properly labelled as anomaly averages.

Hi Nick

So the annual means are calculated from daily values. Monthly values are not used.

Where you can download the annual values for ACORN-SAT version 2.2, at that part of the Bureau’s website it gives you the value of 21.8 (various reports will give you 21.81), so you can find all of this here: http://www.bom.gov.au/climate/change/#tabs=Tracker&tracker=timeseries

I see that 21.8 is written as a note in the heading area. But it is not in the downloaded data, or on the graphs themselves. Nor is it mentioned in the Annual Climate Reports.

This is an old story. Temperatures themselves are inhomogeneous, and are very hard to average. As you’ve suggested, you have to get the right mix of cold and warm places. Consequently that figure of 21.8 is very uncertain, and rarely advanced as data.

Anomalies, OTOH, are much more homogeneous; their mean is approximately zero. So you don’t have to worry about getting the mix of stations exactly right. The anomaly average is known to much more accuracy than the actual average temperature. That is why it is universally the one quoted. The BoM is doing the same as the rest of the world.

“So the annual means are calculated from daily values. Monthly values are not used.”

The daily values for each station are just used to get the monthly average for that station. Here is the BoM:

“Starting with the daily timeseries, monthly averages of station temperature are calculated. If more than 10 days of data are missing in a given month, that monthly average is deemed to be missing, and is not used in subsequent calculations. Monthly normal values (1981–2010 averages) calculated for each station are subtracted from the monthly station temperature data. The resulting monthly temperature anomalies (departures from the normal value) are interpolated to the 5 km spatial grid using the Barnes successive correction techniques to obtain the monthly temperature anomalies for all of Australia.”

They actually use 1981-2010 as the base period; as they explain, they later renormalise to 1961-90. This is easily done once you have done the spatial averaging, which is the critical stage.
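The first steps in the quoted description, plus the renormalisation mentioned just above, can be sketched as follows. This is a minimal illustration under stated assumptions, not the Bureau's code: the function names are hypothetical, `None` is used to mark a missing day, the gridding step (Barnes successive correction) is left out entirely, and `rebaseline` assumes that changing the base period amounts to subtracting the series' mean over the new period.

```python
MAX_MISSING_DAYS = 10  # quoted rule: more than 10 missing days -> month is missing


def monthly_mean(daily_values):
    """Mean of one station-month of daily values; None marks a missing day."""
    present = [v for v in daily_values if v is not None]
    missing = len(daily_values) - len(present)
    if missing > MAX_MISSING_DAYS or not present:
        return None  # month deemed missing, not used further
    return sum(present) / len(present)


def monthly_anomaly(daily_values, station_month_normal):
    """Monthly mean minus that station's own normal for the same calendar month."""
    mean = monthly_mean(daily_values)
    if mean is None:
        return None
    return mean - station_month_normal


def rebaseline(anomaly_by_year, new_base_years):
    """Re-express an anomaly series relative to a different base period."""
    base = [anomaly_by_year[y] for y in new_base_years]
    offset = sum(base) / len(base)
    return {year: value - offset for year, value in anomaly_by_year.items()}


# Made-up January data: 21 days recorded, 10 missing -> still usable.
usable_month = [30.0] * 21 + [None] * 10
# 20 days recorded, 11 missing -> month is dropped.
dropped_month = [30.0] * 20 + [None] * 11

print(monthly_anomaly(usable_month, station_month_normal=29.0))
print(monthly_anomaly(dropped_month, station_month_normal=29.0))
print(rebaseline({1961: 0.2, 1962: 0.4, 2021: 1.3}, [1961, 1962]))
```

Note that the normal being subtracted is per station and per calendar month, which is the point being argued in this exchange.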

Hi Nick

Thanks for these insights. It is good to understand how and why we disagree.

BTW I agree that what the BoM is doing is the same as for the rest of the world. I also understand that it is considered ‘World’s Best Practice’. That doesn’t make it right.

The deteriorating skill of the BoM’s seasonal rainfall forecasts is reason to be concerned about lots of different aspects of climate science.

Was it statistician Edward Wegman (past chairman of the Committee on Applied and Theoretical Statistics of the National Academy of Sciences) who concluded that there is a real need for more professional statisticians to be working alongside meteorologists at the WMO?

Anyway, as regards how anomalies are calculated by the BOM, we might be disagreeing about what is done at which time scales to the Australian data and to the rest of the world’s.

I might be incorrectly extrapolating from the homogenisation system/methodology to the calculation of anomalies and also weightings.

If you could clarify a few things, I’ve added numbers to make it simpler, if you have time to respond I would really appreciate that:

1. The ACORN-SAT dataset is homogenised at a daily timescale for most stations?

2. Weightings, after the anomaly value is derived for that station at the monthly time scale, are applied at the monthly time scale?

3. Please confirm this is the same (daily for homogenisation, monthly for weightings and anomalies) for the HADCRUT and other datasets?

4. The complexity of all of this means historical annual means keep changing?

For example, I noted that this year’s media release (January 6, 2022) included comment that:

“In 2021, Australia’s mean temperature was 0.56 °C above the 1961 to 1990 climate reference period. It was the 19th warmest year since national records began in 1910, but also the coolest year since 2012. [end quote]

5.Using the reference value (21.8) at the BoM website where the anomaly value (0.56) can be downloaded, I calculate that last year’s mean average temperature for Australia was 22.37 (0.56 plus 21.81). Is this the correct value?

6. More recently the Bureau have indicated that 0.56 is not the correct anomaly value, see my quote from the Bureau in above blog post. Indeed, that is why the 2021 anomaly value was not included in the 2021 trend calculations; that at the time of the Annual Climate Statement the correct value for 2021 was still being homogenised. So, what is the correct anomaly value and reference value for 2021 year?

Putting all of this in some context, when the 2012 Climate Statement was released, it was stated that 2012 was 0.11 °C above the reference period. Using the 21.81 °C reference value, I calculate that year (2012) had an official mean temperature for Australia of 21.92 °C (21.81 + 0.11).

I see the amount above the reference period/the anomaly as published on January 6, 2022, is now given for that year (2012) in ACORN-SAT Ver2.2 as 0.24 °C, which would give an official mean temperature for Australia in 2012 of 22.05 °C?

7. What was the annual mean temperature for Australia in 2012?

Thanks for reading this far!
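The additions in points 5 and 6 above can be laid out as a short sketch. It simply adds each quoted anomaly to the 21.81 °C reference value cited in this thread; this is the commenter's arithmetic, not a figure the Bureau publishes, and whether such a sum is meaningful is the question being put. The dictionary keys are illustrative labels only.

```python
REFERENCE_MEAN = 21.81  # °C, 1961-1990 reference value cited in this thread

# (year, source) -> anomaly in °C, as quoted in the comment above.
quoted_anomalies = {
    ("2021", "ACORN-SAT v2.2, 6 Jan 2022"): 0.56,
    ("2012", "2012 Climate Statement"): 0.11,
    ("2012", "ACORN-SAT v2.2, 6 Jan 2022"): 0.24,
}

# Implied absolute mean = reference value + anomaly.
implied_means = {
    key: round(REFERENCE_MEAN + anomaly, 2)
    for key, anomaly in quoted_anomalies.items()
}

# How much the 2012 anomaly changed between the two publications.
revision_2012 = round(
    quoted_anomalies[("2012", "ACORN-SAT v2.2, 6 Jan 2022")]
    - quoted_anomalies[("2012", "2012 Climate Statement")],
    2,
)

for (year, source), value in implied_means.items():
    print(f"{year} ({source}): {value:.2f} °C")
print(f"2012 anomaly revision: {revision_2012:+.2f} °C")
```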

Hi Jennifer,

There is not much connection between anomalies and homogenisation and weightings.

Homogenisation is done to correct a station time series.

Anomalies are used primarily in spatial averaging, which happens after you have the time series right. You can calculate anomalies either for adjusted or unadjusted data, and in the same way. I do both with TempLS; it is the same arithmetic on different time series.

By weighting, I assume you mean the weighting used in averaging over area. This is a function of the geometry of the station network, not of the temperatures you measure (or anomalise or homogenise).

I don’t think homogenisation is really done daily. It is based on identifying changes that should be treated as non-climatic (because they are suspiciously sudden, and not corroborated by other stations nearby). So you need months of data either side, and couldn’t identify the change to the nearest day. I think ACORN is unusual in publishing daily adjusted values, but they would work them out by calculation from formulae over longer time scales.

Yes, I agree that annual means keep changing. I think that happens more than it should, and although it doesn’t matter much, I think it should be fixed. I have written about “flutter” here.

https://moyhu.blogspot.com/2017/02/spatial-distribution-of-flutter-in-ghcn.html

“I calculate that last year’s mean average temperature for Australia was 22.37 (0.56 plus 21.81). Is this the correct value?”

It’s your calculation, not theirs. BoM never offers a time series of absolute temperatures with figures like 21.81. They should not. The confusing thing here is that they talk about the temperature being 0.56 above average, so it’s natural to ask, what is the average, then (and so, why not 22.37?). It comes back to that issue that you can calculate accurately the average anomaly, but not the average temperature – even the long term 21.81 is very inexactly known. What the 0.56 means is that you know how much each station is different to its own average, so you can average those differences to justify the statement that Australia was 0.56 above average. It should all be more carefully spelt out, but that would be a mouthful. Their inexact language does convey the right meaning.

“So, what is the correct anomaly value and reference value for 2021 year?”

Almost certainly 0.56. Well, that does have other uncertainty, but homogenisation is unlikely to change it. Homogenisation has little effect on modern data (because of modern equipment etc). It is important in correcting past data to overcome changes made there.

An example of modern change is when they moved the Melbourne station to Olympic Park in about 2017. They had instrumentation in both places for at least a couple of years, so they had a good measure of the difference between the locations. Then when a time series for Melbourne was quoted, it incorporated a correction for that change.

“What was the annual mean temperature for Australia in 2012? “

The Bureau isn’t saying. It will tell you the anomaly. That actually contains the information you want to know. Saying the average was 21.8 doesn’t tell you about where you are. But saying the anomaly was 0.56 says it was warmer than historic, and so it is likely warmer where you are too.

“It comes back to that issue that you can calculate accurately the average anomaly, but not the average temperature – even the long term 21.81 is very inexactly known.”

Don’t look now, I just pulled a rabbit out of my ***.

An accurate average temperature can’t be calculated, yet that is exactly what you do to calculate a baseline when determining an anomaly.

Someone explain to me how an accurate average anomaly is calculated when an average baseline temperature used in determining it is considered inaccurate.

Normally, inaccuracies carry through all subsequent calculations. Why don’t they show up in anomalies?

“when an average baseline temperature used in determining it is considered inaccurate”

For the umpteenth time, you don’t calculate an average baseline temperature. It has no role. Individual station averages are subtracted from the individual records, and the residuals are then averaged.

This is what you said. Even the long term is very inexact. That means the short term is also inaccurate!

Are you saying you don’t calculate a temperature average for each station? Why is averaging temperatures in one situation inaccurate and in another it is accurate.

If an average of temperatures is inaccurate, it is inaccurate, no running around the bush.

“Why is averaging temperatures in one situation inaccurate and in another it is accurate.”

There is nothing special about temperatures here. The issue is homogeneity. You can average over a month at a station because temperatures are taken in the same place with the same equipment. Sometimes that is not true, and a correction is needed. But mostly it is. Spatial averaging mixes different places, latitudes, altitudes and equipment. That is very inhomogeneous for temperature. But most of those differences subtract out with the local month mean, leaving a much more homogeneous anomaly.
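The homogeneity point in this comment can be illustrated with a toy calculation. The four stations and all their numbers below are hypothetical, and the sketch assumes that this year's departures from normal are similar across stations. It does not address measurement error in the station means themselves, which is the other commenter's question.

```python
from itertools import combinations

# Hypothetical stations: (long-term mean for the month, this year's reading), °C.
stations = {
    "alpine": (12.0, 12.5),
    "coastal": (18.0, 18.6),
    "inland": (24.0, 24.4),
    "tropical": (30.0, 30.5),
}


def spread(values):
    """Range between the largest and smallest value."""
    return max(values) - min(values)


subset_mean_temps = []
subset_mean_anoms = []

# Average every possible pair of stations, as if only two had been sampled.
for pair in combinations(stations.values(), 2):
    temps = [reading for _normal, reading in pair]
    anoms = [reading - normal for normal, reading in pair]
    subset_mean_temps.append(sum(temps) / len(temps))
    subset_mean_anoms.append(sum(anoms) / len(anoms))

temp_spread = spread(subset_mean_temps)
anom_spread = spread(subset_mean_anoms)

print(f"spread of mean temperature across subsets: {temp_spread:.2f} °C")
print(f"spread of mean anomaly across subsets:     {anom_spread:.2f} °C")
```

With these made-up numbers the average temperature depends heavily on which stations are picked, while the average anomaly barely moves. That outcome follows directly from the assumption of similar departures, so the sketch shows the claim rather than proving it for real data.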

You are dancing around the bush and not answering the issue you brought up.

“but not the average temperature – even the long term 21.81 is very inexactly known.”

How can even the monthly average be exact when short term averages are inaccurate, as you implied?

You have also not given any evidence that averages of anomalies are more exact than the inexact temperatures they are derived from.

You are caught in a conundrum here. If temperatures and their averages are inexact then the anomalies that are calculated from them MUST also have similar inaccuracies. You can not remove inaccuracies by subtracting a constant regardless of how that constant was determined.

“the anomalies that are calculated from them”

Wearily, the anomalies are not calculated from them. They are calculated as I described (many times).

“You can not remove inaccuracies by subtracting a constant”

You are not subtracting a constant. Let me say it yet again – from each station’s monthly averages (or daily reading if you prefer), you subtract the mean for that month at that station. That takes out most of the variability due to known factors (latitude, altitude etc). They affect the readings and the subtracted means equally.
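For readers following the argument, the procedure described in this comment can be sketched in a few lines. This is only an illustration under stated assumptions: the two stations, their values, the three-year stand-in for the 1961-1990 baseline and the unweighted final average are all made up here, and the function names are hypothetical. It is not the Bureau's code or data.

```python
from statistics import mean

# Hypothetical January means (deg C) for two made-up stations; NOT BoM data.
records = {
    "coastal": {1961: 24.0, 1962: 24.4, 1963: 23.6, 2021: 24.9},
    "alpine": {1961: 12.0, 1962: 12.2, 1963: 11.8, 2021: 12.7},
}
BASELINE_YEARS = (1961, 1962, 1963)  # short stand-in for a 1961-1990 period


def station_anomalies(series, baseline_years):
    """Subtract the station's own baseline mean for this month from each value."""
    baseline = mean(series[year] for year in baseline_years)
    return {year: value - baseline for year, value in series.items()}


def network_anomaly(all_records, baseline_years, year):
    """Average the per-station residuals for one year (unweighted, an assumption)."""
    return mean(
        station_anomalies(series, baseline_years)[year]
        for series in all_records.values()
    )


anomaly_2021 = network_anomaly(records, BASELINE_YEARS, 2021)
print(round(anomaly_2021, 2))
```

In this toy case the two stations differ by about 12 degrees in absolute terms, yet their residuals for 2021 are similar in size, which is the point being argued over. Whether the per-station baselines themselves are reliable is the separate question raised in the replies.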

The mean of that month at that station. That is the argument that you are so carefully avoiding.

I’ll post what you said again.

That very much implies that not only the long term averages are inexact, but the short term averages also.

In other words, the averages calculated for each station and used to determine that station’s anomalies are short term averages. Are they not the short term anomalies you refer to? How do you deal with the inaccuracies caused by creating a station’s baseline from its own temperature readings?

The items you mention, such as altitude, would seem to allow a bias to sneak in by giving lower altitude stations that are closer to the surface (i.e., with larger temperature variations) an automatically higher weighting. Or how about stations close to an ocean or large body of water, with a smaller variation in temperature, having a smaller weight? It looks like warming is being baked into the output.

Hi Nick

Thanks for your response. And for confirming a few things for me, and for keeping the conversation going.

The Bureau does repeatedly (but not always) quote that 21.8C or 21.81C figure. But it will take me time to find my archived ‘Annual Climate Statements’ and ‘State of the Environment Reports’ to ‘prove’ this.

Of relevance, you can still now see that value (21.8C) published at their website, http://www.bom.gov.au/climate/change/#tabs=Tracker&tracker=timeseries

It has been there for as long as I have been downloading values, which became often from about 2011. (After the flooding of Brisbane in January 2011 I started using Artificial Neural Networks for rainfall forecasting, using BOM data.)

What is more important is you have confirmed, I think, that this value/the reference period mean/the 1961-1990 average must change. This is a crucial point.

But it is not as your opening statement suggests:

‘There is not much connection between anomalies and homogenisation and weightings.’

These are the three variables that the BoM and other institutions can vary to arrive at the amount of warming/the anomaly value for each year for particular locations, nationally and also globally.

That is my working hypothesis.

On the issue of anomalies. I am going to try and attach a worksheet that I created just now. It shows how the reference mean must change as hotter stations are added, and that if the reference period mean is not changed, the anomaly will obviously be much greater for a warmer station.
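The worksheet itself is not reproduced in this thread. As a rough stand-in, the arithmetic being described can be sketched as below. Everything in it is an assumption made for illustration: the three stations, their values, and the premise that a single network-wide reference mean is subtracted from a network-wide average. It is not the Bureau's method and uses no Bureau data.

```python
from statistics import mean

# Hypothetical annual means (deg C); made-up numbers, NOT BoM data.
reference_period = {"A": 20.0, "B": 22.0}          # original two-station network
current_year = {"A": 20.5, "B": 22.5, "C": 27.5}   # hotter station C added later
reference_c = 27.0  # C's own reference-period mean, assumed known

# Case 1: the reference mean is left at its original two-station value.
fixed_reference = mean(reference_period.values())
anomaly_fixed = mean(current_year.values()) - fixed_reference

# Case 2: the reference mean is recomputed with station C included.
updated_reference = mean([*reference_period.values(), reference_c])
anomaly_updated = mean(current_year.values()) - updated_reference

print(round(anomaly_fixed, 2), round(anomaly_updated, 2))  # prints: 2.5 0.5
```

Each station here is 0.5 degrees above its own reference value, yet the network anomaly comes out at 2.5 when the reference mean is not updated for the added station, and at 0.5 when it is.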

Do you?

I don’t think you or Vuk really appreciates what anomalies are.

See my reply at March 4, 2022 12:01 am

It appears that the BOM is not fit for purpose if accuracy and honesty is the purpose.

Rule #1 in winning hearts and minds to accept your position is to NOT argue the minutiae of your opponents’ argument points.

As Rud says –

RIDICULE is the best response.

Thanks Mr. When I drafted this blog post it was in my mind that I should just point out that the Bureau have admitted you can’t get the warming amount of 1.47 degrees Celsius with the dataset provided. But then it could be seen that I am arguing over ‘minutiae’ if my value is 1.49, and the difference only 0.02. Furthermore, 1.49 would be more in accordance with the catastrophist’s thesis. So, I’ve written a lot, to try and lay out some of the many problems with the entire approach that the Bureau takes to working up this official historical temperature reconstruction. Of course, it is so similar to the approach taken in the US, UK, etc.

Jennifer, please don’t get me wrong – I value and admire your relentless work and expertise in revealing the perfidy of the AGW “team” (or “cause” as M. Mann called it).

However, in a world where the great unwashed and youngsters just don’t read details about anything much any more, let alone comprehend what they do struggle to read, the only way they engage now is through pictures, videos, sound-bites, social media circles, most of which have to be “entertaining”.

Alinsky’s “Rules For Radicals” have to be re-fashioned and used by AGW sceptics as the basis of producing and broadcasting easily-shared entertaining vignettes that ridicule the whole notion of mankind controlling the planetary systems, especially at a time when our “leaders” are challenged to tie their own shoelaces.

Hi Mr.

I’m sure there are those amongst us who are good at what you desire: to ridicule and entertain.

And there is a role for all of that. But I am not the person to do that. I already fear that my work and criticism of the institutions means there is less and less respect for what I love most, which is science and the scientific method and the value of critical thinking and knowing something about statistics.

What would be good, is if our side/which must be the side of truth, had people who could work with me and others to extend the absurdity, for example, of the Bureau not including the 2021 value in its 2021 report! This is truly absurd.

We need comedians.

What I really value at this blog at ‘wattsupwiththat.com’ is that it is possible to have meaningful discussion about the things I care most about, with others who have a capacity for critical thinking and also some training and expertise in mathematics and science.

How can this be legal? It is straight up falsifying government data.

Hi Bob, I agree. I have been pointing out these issues/falsification in a very public way since 2014. I tried to give up in 2018 and move on to other things, but with the publication of every Annual Climate Statement here in Australia I get upset all over again.

Impulse, or short duration events, sometimes longer in a human frame of reference, are convenient to spike handmade tales, but inconvenient “burdens” for inclusion in geological records.

Good work, as usual, by Jennifer. If I wanted to add another Science Degree to my collection, instead of another “Philosophy of Science” class it looks like “Torturing and Adjusting Data For Fun and Profit” would be required.

With “Climate Alarmism For Dummies” as required reading for this course Ron?

“…Dummies…” is required reading, especially the Chapter “How to Heat UP a Funding Proposal to Get Climate change Money”.

The non-linear net warming over the past century for the continent of Australia may be around half a degree C plus or minus half a degree C, no-one knows for sure because of data corruption by activist BoM staff.

Hi Chris

Thanks for your comment. You may be correct that it has been about 0.5 for the entire continent. Very little if any across much of the north.

The amount is going to depend on the starting date. If we start in 1910, because that is when the BOM does, and thinking about the many different datasets I’ve worked with, including from my rainfall forecasting, I would guess it is about 1 degree for southern western Australia, about 0.5 for the Northern Territory (at most, much less if we begin in say 1880), maybe 0.8 for south eastern Australia, maybe 0.6 or 0.7 for much of the east coast.

I’ve now got some resources to begin an ‘alternative’ historical reconstruction and I would be interested in hearing from statisticians and mathematicians interested in working with me on this. I have some funding.

My best email address is jennifermarohasy@gmail.com

Minor typo:

“This table was constructed in 2019 and is yet to be undated with the latest values to 2021″.

‘Updated’ is possibly your intent but, with Oz current weather, ‘inundated’ could be more appropriate.

The continent of Australia is about 5% of the planet area and therefore pretty irrelevant ‘globalwise’, apart from the fact that alarmists use the BoM corrupted data to scare the local population into futile unproductive and expensive ‘save the planet’ measures that only benefit rent-seekers.

“Polar amplification is the phenomenon that any change in the net radiation balance (for example greenhouse intensification) tends to produce a larger change in temperature near the poles than in the planetary average” (Wiki).

In the past century there has been no net warming in the Arctic.

Maybe it’s just me, but that graph of maximum temperatures for Coonabarabran looks like it’s showing a slight _downwards_ trend over the timeframe it covers.

Given that it shows a relatively steep decrease followed by a fairly gentle increase (to my inexpert eye), doesn’t it cover too short a period of time to conclude which direction the longer term trend points?

Oh, and why would they start the graph for the mean temperature from 1910 (which looks to be a cool point in time) when the record goes back to ~1880 (when it appears to have been a fair bit warmer)? Or have I just answered my own question?

See any pattern in the wet years in our piddling record for a southern hemisphere continent?

Australia’s record-breaking floods can be traced back to one thing, experts say (msn.com)

But we do have a lot more rooves roads and agricultural land nowadays to contribute to the runoff when the wet years come.

Because what will happen now is we’ll go into drought or bushfire or another natural disaster, and we do forget. So it’s actually really important, if we can, to take stock a little bit

Sage advice although some might take the hint from Chicago-

Chicago Was Raised Over Four Feet in the 19th Century to Build Its Sewer (gizmodo.com)

although there was a local adaptation on the theme-

Queenslander (architecture) – Wikipedia

I grew up in Darwin in ‘houses on stilts’ before airconditioning-

Canberra-born man’s 25-year fascination with Darwin’s elevated homes – ABC News

The older you get the smarter you realize your parents were although Cyclone Tracy would modify the National Construction Code as we learn by doing.

At last the chickens are coming home to roost.

They have added so much warming that they are about to trip themselves up with their own “tipping points”.

I reckon there would be emails flying about saying slow down lads we are about to be exposed as alarmist zealots when nothing bad has really happened.

Hey Nankerphelge!

It makes me so happy to read those few words from you!

I was thinking I was going to get slammed here for suggesting that, using their values, it really was 1.5 but the Bureau was hesitating on this. So, it was with some trepidation I saw that Charles had reposted and then opened this comments thread earlier today.

It was with some trepidation because when I ran this reasoning (that they now have too much warming) by a couple of friends, one of them an engineer, well, he thought it laughable that the Bureau would be worrying about getting to 1.5 so quickly.

He also thought my surmise that the Bureau had just not used 2021 to calculate the amount of warming for the 2021 Annual Climate Statement a bit far fetched.

It is the case that you couldn’t make this stuff up. Modern climate science is absurd much of the time.

But what most upset a couple of other people that I ran my idea past (as I waited for the response from the Bureau) was that I was going to put their value out there as 1.49, which is almost 1.50, which seems like a lot.

Our side is always wanting to offer up a smaller number than the Bureau, for warming. And here I was proposing something even higher than the Bureau had published.

I always just go with what the calculation says.

But I knew I needed a story/a reason, or that 0.02 difference would seem like I was just nitpicking. So I wrote what I thought was the reason: that they have added so much warming they are tripping themselves up, to use your wonderful words.

So we agree!

That makes me happy.

Following is the text of an email from a friend who is a bit cranky with me. He sent it this morning to me.

“I’m sorry to be disagreeable Jen, but re:

“A meeting of Intergovernmental Panel on Climate Change (IPCC) scientists held in early February stated the amount of global warming is 1.1 °C, and the consequences if ‘global heating’ passes the tipping point of 1.5 °C will be catastrophic.

“That is for global average incineration, which includes >70% oceans.

“It appears that the IPCC probably employ Hadley-CRU methodology as suggested below.

“The alleged Oz 1.47 C excludes oceans and can perhaps be compared with NH Land-only. The Brits appear to allege a preindustrial warming of the NH Land-only of 1.8 C, which is not a tipping point for the IPCC.

“It seems that rather than use a curvilinear trend (perhaps a poly 6?), they’ve gone for a centred smoothing. I’m not sure if it’s a centred 5, 6 or 7-years because the thick line ends by eyeball at 2 or sometime 3 years short at each end. It’s also a lot smoother than is obtained in Excel’s equivalent PMA smoothing and I’m lost.

“I can’t see why the BoM should be worried about exceeding 1.5 C. [end quote]

*****

He is assuming the Bureau guys think like engineers, but they don’t. They are very political and have not been thinking far enough ahead.

Cheers and thank you.

“A meeting of Intergovernmental Panel on Climate Change (IPCC) scientists held in early February stated the amount of global warming is 1.1 °C, and the consequences if ‘global heating’ passes the tipping point of 1.5 °C will be catastrophic.”

This is wrong, unless the February referenced is February 2016.

February 2016 was the warmest year in the satellite era (1979 to the present). This was the time period when the alarmists claimed the globe had reached 1.1C above their average.

Today, the global temperatures are 0.7C cooler than the 2016 highpoint.

The globe is currently getting farther and farther away from that so-called 1.5C tipping point.

These temperature data mannipulators can’t keep their lies straight.

Hi Tom,

Following is the URL, and text, from The Australian newspaper published on 14th February 2022.

https://www.theaustralian.com.au/world/ipcc-report-to-focus-on-living-with-climate-change/news-story/b83bc194f1321d61ec3d6a66ed8dd756

14 February 2022

Nearly 200 nations kicked off a virtual UN meeting late on Monday to finalise what is sure to be a harrowing catalogue of climate change impacts – past, present and future.

Species extinction, ecosystem collapse, mosquito-borne disease, deadly heat, water shortages, and reduced crop yields are already measurably worse due to global heating.

Just in the past year, the world has seen a cascade of unprecedented floods, heatwaves and bushfires across four continents.

All these impacts will accelerate in the coming decades even if the carbon pollution driving climate change is rapidly brought to heel, the Intergovernmental Panel on Climate Change report is likely to warn.

A crucial, 40-page summary – distilling underlying chapters totalling thousands of pages, and reviewed line-by-line – will be made public on February 28.

“This is a real moment of reckoning,” said Rachel Cleetus, the climate and energy policy director at the Union of Concerned Scientists.

“This is not just more scientific projections about the future, this is about extreme events and slow-onset disasters that people are experiencing right now.”

The report will also underscore the urgent need for “adaptation” – climate-speak that means preparing for devastating consequences that can no longer be avoided, according to an early draft last year.

In some cases this means that adapting to intolerably hot days, flash flooding and storm surges has become a matter of life and death.

“Even if we find solutions for reducing carbon emissions, we will still need solutions to help us adapt,” said Alexandre Magnan, a researcher at the Institute for Sustainable Development and International Relations in Paris and a co-author of the report.

IPCC assessments – this will be the sixth since 1990 – are divided into three sections, each with its own volunteer “working group” of hundreds of scientists.

Last August the first instalment on physical science found that global heating was virtually certain to pass 1.5C, probably within a decade.

Earth’s surface has warmed 1.1C since the 19th century. The 2015 Paris deal calls for capping global warming at “well below” 2C, and ideally 1.5C.

“Earth’s surface has warmed 1.1C since the 19th century”

This is obfuscation by the alarmists. The implication is a steady warming since the 19th century. That is not the case.

It has warmed about 2.0C from 1910 to 1940, then it cooled by 2.0C from 1940 to 1980, then it warmed by 2.0C from 1980 to the present day, and now, it has cooled by 0.7C (headed towards 2.0C cooling in the next decade?).

Currently, it has warmed 0.4C since the 19th century. The highpoint occurred in 2016. We are now 0.7C cooler than 2016. Citing the 1.1C figure is not telling the whole picture. It makes things appear warmer than they really are.

U.S. surface chart (Hansen 1999)

The years 1998 and 2016 are tied statistically for the warmest temperature in the satellite era. This is the only times the Earth has been 1.1C above their average. We are currently 0.7C cooler than the 1.1C figure.

The alarmists are living in the past and want us to live in the past with them. Not me.

Tom

Thanks for this. You do realise that 1998 and 2016 are 18 years apart? There is an 18.6 year lunar declination cycle. 1998 and 2016 correspond with years of minimum lunar declination. These are also years of super El Niño, almost certainly caused by changes in the gravitational pull of the moon on ocean currents. IMO.

Yes, there are a lot of variables involved in determining the Earth’s climate. 🙂

Look at the U.S. chart. There is about an 18-year period between the warmest decade, the 1930’s, and the 1950’s, which were similarly warm, although the 1950’s didn’t quite get to the levels of the 1930’s. The two 18-year periods look similar to me.

And then after the warming in the 1950’s, the cooling began again and continued all the way to the late 1970’s, and that’s what I think is happening now. We are following a similar trend. We have warmed 2.0C to the highpoint of 2016, and now we are going to cool by about 2.0C, if history is any guide, and we have three historical periods of similar warming and cooling since the Little Ice Age.

If we cool off to the levels of the late 1970’s, that would pretty much put the stake in the heart of the alarmist argument, since CO2 would be continually increasing during all that time that the temperatures were cooling. CO2 would be shown to be a minor player in determining the Earth’s temperatures.

The alarmists’ Doomsday 10 years from now may turn into a Liberation Day as it is seen that CO2 is not overheating the atmosphere.

That’s my guess anyway.

And there is still snow on the ski fields.

Jennifer

How was there a thermometer at the Echuca aerodrome before the invention of planes?

Coordinates and opening dates of weather stations on the BOM website don’t reconcile.

The Melbourne Regional Office station appears to have gone from Flagstaff Gardens to the Botanical Gardens, then back to Victoria Parade. BUT the website shows it as one location.

Hi Waza, you are probably trying to work from ACORN-SAT series which are amalgamations. If you want integrity in your datasets you really need to work from the CDO data, which is here: http://www.bom.gov.au/climate/data/

Jennifer

The CDO data provides data in 1881 for Echuca aerodrome.

There was no such thing as an aerodrome in 1881.

Maybe the weather station was somewhere else in the town, the post office perhaps?

The Melbourne regional office is listed as being in the same location for 150 years. That is not possible; this station moved multiple times.

But of course it was in the same spot, it was always in Melbourne! Precision is a matter of taste.

Waza

You make a good point about use of the word ‘aerodrome’ and chronologies! When did the Wright Brothers make that first flight? 1903!

I will dig around and see if I can find something regarding Echuca location moves, changes.

Regarding Melbourne, Tom Quirk wrote an entire chapter for the book I edited, Climate Change: The Facts 2017.

I’m not sure if any of the following is of interest, a few extracts from that chapter.

Melbourne, on the south-east tip of the Australian continent, was settled in 1835, and in 1851 it became the capital of the independent colony of Victoria. The temperature measurement record extends from 1856 to the present day, and is one of Australia’s longest. Temperatures were first recorded on Flagstaff Hill, then at the Melbourne Observatory when it was established in 1863 near the Botanical Gardens.

The city was the gateway to the gold rush of the 1850s. Consequently, by 1860 the colony of Victoria was able to afford its own observatory, which became the home of the Great Melbourne Telescope. This was the largest telescope in the Southern Hemisphere and second largest in the world. The astronomers were interested in looking at the stars and observing the movements of the planets, as well as measuring temperatures, rainfall and humidity, and making weather forecasts.

In 1902, the government astronomer and meteorologist Pietro Baracchi, who was my great-uncle, supported the move to have the observatory relieved of this duty, and instead the meteorological work, carried out by astronomers, was placed under Commonwealth control. On 1 January 1908, with the establishment of the Bureau of Meteorology, the weather station was moved to the north-eastern edge of the junction of Victoria and La Trobe Streets in the central business district (CBD), as shown in Figure 7.1. This was a move of about three kilometres from the old Melbourne Observatory.

In 1908, the La Trobe Street site had been on the fringe of the least developed part of the city. But by the 1990s there were fifteen-storey buildings less than 100 m from the site, as shown in Figure 7.1.

Measurements were taken at this site until it was closed in 2015 – and the Stevenson Screen removed. Since 2013, measurements have been made at Olympic Park – a site two kilometres south and back towards the original observatory site.

So, there have been four measurement sites (weather recording stations) in a city that grew from a population of about 100,000 in 1854 to nearly four million by 2010.

***

Until 1964, temperatures were recorded at midnight, and then the thermometers reset for the next 24 hours. From 1964 to 1996 the temperatures were read and reset at 9 am and 3 pm. Until 1996, maximum temperatures were recorded using a mercury thermometer, while minimum temperatures were measured using an alcohol thermometer.

In 1986, an automatic weather station (AWS) was installed at the La Trobe Street site. It measured temperatures alongside the mercury thermometer until 1 November 1996, when the AWS became the primary instrument replacing the thermometers. AWSs sometimes have a quite different housing to the weather station they replace, but in the case of the La Trobe Street weather station it was also housed in the Stevenson Screen, as shown in Figure 7.1.

When temperatures are measured using an AWS, the temperature is derived from a temperature-sensitive electrical resistance, known as a thermistor. The great advantage in using an AWS is that the recorded temperatures can be remotely measured with wireless communication, and the values read at any time.

For Melbourne, it is possible to download from the Bureau website daily minimum and maximum temperatures, and also the temperatures as recorded every 30 minutes through the 24-hour day. Figure 7.2 shows the average 30-minute frequency readings for June and December 2012 from the Melbourne La Trobe Street AWS. The minimum temperature occurs in the early morning, at about daybreak, while the maximum temperature is reached in the mid-afternoon.

The different temperatures that occur at such very different times of the day are from an atmosphere that is in very different states. The minimum temperature is subject to anomalies in the surface boundary layer of the atmosphere, which reflects local influences. The maximum temperature is from a large spatial volume of air and the presence of solar radiation that results in convective mixing of the atmosphere.

Interestingly, the mean maximum and minimum temperatures, which are generally used to calculate long-term climatic trends, give a different value to that calculated from the 48 readings taken every 30 minutes (weighted mean). The difference for December, a summer month, is 0.77 +/− 0.22 °C; while for June, a winter month, the difference is 0.37 +/− 0.09 °C. As minimum and maximum temperature records are averaged, it could be concluded that the Melbourne mean temperature is now overestimated by some 0.5 °C.
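The two ways of arriving at a daily mean can be compared on a toy example. The sketch below does not use Melbourne data: the temperature curve is synthetic (flat overnight with a half-sine daytime bump, an assumed shape), and the helper name `synthetic_temp` is hypothetical. It only shows that the midpoint of the maximum and minimum need not equal the mean of 48 half-hourly readings when the daily curve is asymmetric.

```python
import math


def synthetic_temp(hour):
    """Assumed shape: 12 deg C overnight, half-sine bump peaking at 22 deg C at noon."""
    if 6 <= hour <= 18:
        return 12.0 + 10.0 * math.sin(math.pi * (hour - 6) / 12)
    return 12.0


# 48 readings at 00:00, 00:30, ... 23:30 (synthetic, NOT observations)
readings = [synthetic_temp(i / 2) for i in range(48)]

midrange = (max(readings) + min(readings)) / 2  # conventional (Tmax + Tmin) / 2
mean_48 = sum(readings) / len(readings)         # mean of all 48 readings

print(round(midrange, 2), round(mean_48, 2), round(midrange - mean_48, 2))
```

With this particular shape the midpoint sits above the 48-reading mean. The size of the gap depends entirely on the assumed curve, so the numbers printed here say nothing about the 0.77 and 0.37 °C figures quoted in the chapter.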

*********

In order to calculate the UHI effect on Melbourne’s temperatures, they can be compared with temperatures at Laverton, about 20 km to the south-west of the city centre. Temperatures have only been recorded at Laverton since 1944.

The raw maximum temperatures and the trends of maximum temperature in Melbourne are similar to that at Laverton, as shown in Figure 7.5. The two temperature series are strongly correlated with a correlation coefficient of 85%. This can be seen in Figure 7.5, where the two records reflect the same temperature variations. However, the raw minimum temperatures as measured at Melbourne show a greater increase compared to Laverton from 1944 to the present. The increase at Melbourne, of about 2 °C compared to an increase of about 1 °C at Laverton, is most likely an indication of the much larger UHI effect in Melbourne.

The comparative increases, and the annual, summer and winter Melbourne–Laverton differences for the annual temperatures, are shown in Table 7.1. The minimum differences show that the UHI effect is detectable throughout the year. However, the maximum temperature shows a significant difference between summer and winter. The maximum temperature winter increase of 0.08 +/− 0.02 °C per decade is consistent with the winter maximum increase for the Melbourne measurement of 0.14 +/− 0.04 °C per decade.

Clearly there is a UHI effect in Melbourne, most clearly detectable in the minimum temperature measurements, of 0.2 °C per decade or 1 °C over a period of 50 years. That amounts to 2 °C per century.

****

If Melbourne’s temperature as measured at the weather stations is to be used as an indication of long-term global change, then the maximum temperature in summer would give the best indication. This is because local temperature anomalies, such as the UHI, are not significantly affecting the record – at least not until 1996 at the La Trobe Street site. This is perhaps because there is opportunity for adequate mixing of the atmosphere during the longer summer daylight hours even over a large city such as Melbourne.

I calculate a rise in the summer maximum temperature at Melbourne of 0.03 +/− 0.02 °C per decade for the period from 1856 to 2015, which is equivalent to a rise of 0.3 °C per century. This rate of change is based on the Flagstaff Hill, Botanical Gardens, and La Trobe Street site data until 1996. I use the data from Olympic Park for the period 1996 to 2015.

This trend of 0.3 °C per century compares favourably with trends at nearby sites unaffected by UHI. For example, in a study of maximum temperatures at Cape Otway and Wilsons Promontory lighthouses, Jennifer Marohasy and John Abbot also found an overall annual change of 0.3 °C per century (Marohasy and Abbot 2015).

Jennifer

Thanks for the excellent response.

I live in Melbourne and have walked around these sites many times.

My sprinter daughter often trains at Olympic Park.

Olympic Park, the Botanical Gardens and the old Victoria/La Trobe site are all within walking distance, but often have different temperature and rainfall. That’s Melbourne.

Nice analysis! BTW, aerodromes were used for dirigibles also. Don’t know about there though.

I’m surprised they even used data. I sent them an email on the 28th Feb pleading with them not to try and say Perth had its warmest summer on record. I gave the average temperature value (calculated from their website) for 1977/8 as 26.1 (26.13111) and 2021/2 as 26.0 (26.00056).

Sure enough, 2 days later their press release says “The Bureau of Meteorology’s latest summer climate summary shows Western Australia saw its 7th warmest summer since records began in 1910, with Perth experiencing its hottest summer on record.”

Liars!

Absolutely. The Perth measuring site has been moved twice, from the original cool hilltop overlooking Perth, down into the CBD where it experienced the cool breezes off the river, then north of the CBD into a warmer location. For a time it seems that the temps from Perth Airport were used, out on the hot eastern plains. So any temperature range should only go back to 1995, the year when the current site commenced measurements.

This whole business of country wide average temperatures is just a total nonsense. What exactly is an average temperature in surroundings that stretch from +40 to -10 deg?

Nick Stokes says:

“I don’t think you or Vuk really appreciates what anomalies are.”

You may think so, and you are welcome to think so.

For many decades the UK’s MetOffice was calculating the CET’s annual anomaly.

And guess what, Mr Stokes? MetOffice was using incorrect procedures.

Comes along ‘Vuk’ and points at the errors.

And guess what, Mr Stokes? MetOffice abandoned their method within just four weeks and adopted the procedure suggested by ‘Vuk’.

The whole matter was discussed on this blog a few years ago; if you go through the archives you may be able to find reference to it.

Have a nice day.

The real problem with anomalies is two fold.

The need for “long” records incentivises the manipulation of data by creating new information to replace measured and recorded data. Nowhere in science is this done. If data is not fit for purpose, it is discarded, not bastardized by changing it.

Anomalies hide so much information vital to determining what is really going on. Are Tmax or Tmin or both increasing? Is winter or summer or both warming that increases the average? Are we having longer springs and autumns that can raise the average? What regions are warming and what regions are not?

As a result, you see all kinds of scientists just quoting a “warming” environment about all kinds of things. They never examine what local and regional temperatures are doing, they just take it as gospel that the entire earth at all locations are burning up!

Mr. Vuk: There is one anomaly I appreciate, his name is Stokes. What we see here has been described as “racehorsing” (h/t Steve McIntyre), where a commenter tries to obfuscate by jumping to another subject and claiming that the problem is your lack of understanding of the new subject (anomalies here). You have jumped ahead of him, thank you.

thanks

“tries to obfuscate by jumping to another subject”

I didn’t jump to another subject. I responded to Vuk’s comment on anomalies,

“In data anomalies there are always positive and negative outliers which have disproportionate effect on the final average.”

which are also mangled in the head post. Anomalies are just differences from the long term mean for that time and place. They do not, as Vuk seems to think, refer to outliers.
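As a rough illustration of the definition given here (an anomaly as the difference from the long-term mean for that time and place), the following is a minimal sketch for a single station. The function names, the record layout, the base period and the temperature values are all hypothetical, chosen only to show the arithmetic; they are not the Bureau's or the MetOffice's procedure.

```python
from statistics import mean

def monthly_climatology(records, base_start, base_end):
    """Long-term mean per calendar month over an assumed base period.

    records: list of (year, month, temp_c) tuples for ONE station.
    """
    by_month = {}
    for year, month, temp in records:
        if base_start <= year <= base_end:
            by_month.setdefault(month, []).append(temp)
    return {month: mean(values) for month, values in by_month.items()}

def anomalies(records, climatology):
    """Difference of each reading from that month's long-term mean."""
    return [
        (year, month, temp - climatology[month])
        for year, month, temp in records
        if month in climatology
    ]

# Hypothetical January readings for one station (not real data).
station = [(1961, 1, 30.0), (1962, 1, 32.0), (2021, 1, 31.5)]
january_normals = monthly_climatology(station, 1961, 1962)
station_anomalies = anomalies(station, january_normals)
```

Here the January base-period mean is 31.0, so the 2021 reading of 31.5 becomes an anomaly of +0.5. Whether any one station's anomaly is an outlier among other stations is a separate question from how the anomaly itself is defined.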

No, I do not think so; you are at it again, and you are wrong again.

You calculate the anomaly for each station, and take the average of those values. A station might, for any number of reasons, be they true or false, produce an outlier value, which can then be included or rejected.

Since temperature is a reflection of thermal energy and that relationship is not linear, there might be a good case for calculating the geometric instead of the arithmetic average.

That might not be acceptable to your crowd since it would reduce anomalies to a fraction of the arithmetic values.
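For anyone wanting to try the comparison suggested above, here is a minimal sketch that computes both averages for the same set of readings. A geometric mean is only defined for positive numbers, so the sketch converts to kelvin first; that conversion, the function names and the sample values are assumptions made for illustration, not something specified in the comment.

```python
from statistics import geometric_mean, mean

KELVIN_OFFSET = 273.15  # 0 deg C expressed in kelvin

def arithmetic_mean_c(temps_c):
    """Ordinary arithmetic average of temperatures in deg C."""
    return mean(temps_c)

def geometric_mean_c(temps_c):
    """Geometric average taken on the kelvin scale, reported in deg C.

    The kelvin conversion is an assumption of this sketch: it keeps
    every value positive, which the geometric mean requires.
    """
    kelvin = [t + KELVIN_OFFSET for t in temps_c]
    return geometric_mean(kelvin) - KELVIN_OFFSET

# Hypothetical readings spanning the range mentioned in the thread.
sample = [-10.0, 15.0, 40.0]
arith = arithmetic_mean_c(sample)
geom = geometric_mean_c(sample)
difference = arith - geom
```

The geometric mean can never exceed the arithmetic mean of the same positive values, so `difference` is never negative; how large it is depends on the spread of the readings and on the scale chosen.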

Thanks Vuk.

I will go back through the archive and try and find your suggested method, etc.

I’m wanting to do an Australia-wide historical temperature reconstruction using the BOM method, and also a completely different one. So, I’m particularly interested in any advice you have regarding anomalies.

Hi

No need to search the archives; the comment was meant to pull Mr. Stokes down off his perch, at least for a few moments.

The suggestion was very simple: they were adding the 12 individual monthly values and then dividing by 12 to get the annual temperature anomaly. This gave each February day more weight than, e.g., each January day (January being about 10% longer), etc.

The suggestion was to add the daily temps and divide by 365 (/366).

If only monthly temperatures are available, multiply each by the number of days in the month, add them all and again divide by 365 (/366).

Correction was minor but it was more accurate.

with kind regards

vuk

Here is another suggestion. Begin the year at the start of summer on the SH (start of winter in the NH) so temps in a season don’t get split between years.

Why are calendar years used instead of seasons to determine climate? If TOBS is a thing, splitting the last season of a calendar year between years is no better.
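One way to read the suggestion above is to group months into years that run from December to November, so that the December to February season stays together. The sketch below does that; the function names, the record layout and the values are hypothetical, and the December start is just one possible choice of season boundary.

```python
from collections import defaultdict

def season_year(year, month):
    """Label for a year that runs from December through November.

    December is counted with the following calendar year, so the
    December to February season is never split between two years.
    """
    return year + 1 if month == 12 else year

def mean_by_season_year(records):
    """Average monthly values per December-to-November year.

    records: list of (year, month, value). Incomplete years are dropped.
    """
    groups = defaultdict(list)
    for year, month, value in records:
        groups[season_year(year, month)].append(value)
    return {
        label: sum(values) / len(values)
        for label, values in groups.items()
        if len(values) == 12
    }

# Hypothetical monthly values from December 2020 to December 2021.
records = [(2020, 12, 20.0)] + [(2021, m, 20.0) for m in range(1, 13)]
season_means = mean_by_season_year(records)
```

In this example December 2020 is counted with 2021, while December 2021 belongs to an incomplete 2022 year and is left out.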

I did the above calculations and sent the result to the MetOffice; they saw that the actual annual temperature anomalies went up a fraction of a degree and they bought it, I hope for accuracy reasons.

For Australia, February being a hot month, the anomalies might be pulled down a bit.

Well, I’m no scientist but moving the thermometers around to warmer areas doesn’t sound very scientific. No less deceptive than recording data over time, in fixed locations in many urban areas. There is a heat sink effect in urban areas where the temps rise as these locations grow larger: more paving and buildings cause more stored thermal energy. This would account for much of the rise in urban locations, where the measurements have historically been taken. It’s all BS and poppycock unless there is a standard parameter for locations and equipment. I will continue to believe my lying eyes. When I look at the greening of our planet, consider the volcanic activity, and sun fluctuations, even 1.0 C would be meaningless. Unless the data showed a year over year increase, then we would see a steady climb without all the changing and massaging of the data. Moving the thermometers around, and not using a globally standardized, modern measuring device and SOP when we are looking for small changes, delegitimizes their conclusions. The fantasy of a global disaster or mitigation, that conveniently steals our freedoms and costs mountains of money, that’s going directly to the interests of those preaching their half truths and manufactured data, seems more like a religion than science. Again, historic data informs me we have always had “climate change” and change is what it does. Show me any sustained period of time since the arrival of our species where the weather or climate wasn’t changing. I understand that CO2 was much higher than current levels during the Jurassic period. Then we had a climate change that brought us to another ice age. Yep, weather and climates change. Learn to adapt or move. That’s what our ancestors have been doing all along. Until I see the global warming crowd stop driving, flying or using electricity in any form, I will believe my lying eyes. If they really believe it, then show us by your own actions.

Hi Jon

They didn’t move the thermometers in the example I’ve given. Worse, they just stopped using the temperatures from some locations, and started using temperatures from warmer locations. There have been no published criteria for inclusion or otherwise of particular temperature series.

There is the issue of how an anomaly is calculated and its relevance. There is also the related issue of how many mathematical assumptions are violated when trends are calculated from annual averages that have been calculated from non-equivalent series.

I used interpolation methods in my doctoral research (on decision making) to overcome a handful of missing survey data. In my stats training on how to do this using measures from other S’s as predictors, I was warned by the very experienced stats lecturer that to do this for more than a handful of measures (some say 5% some 10%) was to create a distorted picture that bore little relevance to reality.

Does anyone know what the ratio is between interpolated data (i.e. data modelled on other sites’ data) and real data (i.e. measured empirical site data) in the Australian (and indeed global) context?

I would guess, given the patchy coverage in both, that interpolated data would outweigh empirical data.
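If a series carried a per-value flag saying whether each value was measured or infilled, the share asked about above could be checked along these lines. This is only a sketch: the flag list and function names are hypothetical, and the 5% and 10% limits are the rules of thumb quoted in the comment, not an official standard.

```python
def interpolated_fraction(is_interpolated):
    """Fraction of values in a series flagged as interpolated.

    is_interpolated: list of booleans, True where a value was modelled
    from other sites rather than measured at the site itself.
    """
    if not is_interpolated:
        raise ValueError("series is empty")
    return sum(1 for flag in is_interpolated if flag) / len(is_interpolated)

def exceeds_rule_of_thumb(is_interpolated, limit=0.05):
    """True if the interpolated share is above the chosen limit."""
    return interpolated_fraction(is_interpolated) > limit

# Hypothetical series: 18 measured values and 2 infilled ones.
flags = [False] * 18 + [True] * 2
share = interpolated_fraction(flags)
over_five_percent = exceeds_rule_of_thumb(flags, limit=0.05)
over_ten_percent = exceeds_rule_of_thumb(flags, limit=0.10)
```

The ratio of interpolated to measured values, as opposed to the fraction of the total, would be `share / (1 - share)` whenever at least one value is measured.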