by Ross McKitrick

I recently published an op-ed in the Financial Post describing the findings of the new JGR paper by NOAA’s Zou et al. NOAA’s STAR series of the MSU satellite-based tropospheric temperatures used to show more warming than UAH or RSS in the mid-troposphere. Zou et al. recently rebuilt their dataset and now STAR has a slightly lower trend than UAH. This is a big deal because it adds to the evidence that GCMs are warming too much compared to observations, which suggests problems with their climate sensitivity (ECS) values.

In the ensuing discussion on Twitter and elsewhere, a few people criticized me for not drawing attention to Zou et al.’s observation that the warming rate appears to be accelerating, with the post-2001 trend about double that of the series as a whole. Apart from the limits of space in an op-ed, there are two reasons why I didn’t discuss that topic.

First, not too long ago many skeptics were pointing to the warming slowdown after 2001 as evidence that models were unreliable. The stock response on the part of modelers was to insist that we have to look at the whole data set to get the long-term picture right. Over short sub-intervals, so the argument goes, natural variability can cause departures above or below the trend, but this doesn’t take away from the reality of a long-term steady warming rate. Presumably the same logic still applies. This is especially relevant here, since a pair of strong El Nino events after 2015 pushed average tropospheric temperatures above trend. Of course, you will get an apparent acceleration if you compare a short sub-interval ending in 2020 with a trend using an earlier sample.

Second, if we want to test whether the warming rate has changed, it needs to be done properly. Zou et al. presented some suggestive calculations but not formal statistical tests. Suppose we have a data series Y(t) and we want to test if the trend changes after a chosen date which I’ll call g. The statistical question can be posed by using a regression model of the following form:

Y(t) = a0 + a1 × D(g) + a2 × T + a3 × D(g)T + e(t)

where a0 to a3 are regression coefficients, Y(t) is the temperature data series we are interested in, T is a time trend, D(g) is a dummy (binary indicator) variable taking the value 0 if the date is prior to the comparison point g and 1 if it is on or after g, and e(t) are the error terms. For instance, Zou et al. imposed a break point at 2001, so D(g) would be a vector consisting of zeroes up to December 2000 and ones thereafter. Note that D(g)T is a vector consisting of zeroes before date g and the values of the time trend from g onward.
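As a minimal sketch in Python, the regressors for a monthly series running 1979:1 to 2022:12 with the break at 2001:1 could be built as below. The function and variable names, and the coding of T as 1, 2, …, n, are illustrative assumptions rather than anything taken from Zou et al.

```python
def month_index(year, month, start_year=1979, start_month=1):
    """0-based position of year:month in a monthly series starting at start_year:start_month."""
    return (year - start_year) * 12 + (month - start_month)

def build_regressors(n, break_idx):
    """Columns for Y(t) = a0 + a1*D(g) + a2*T + a3*D(g)T + e(t); D(g) = 1 from break_idx onward."""
    const = [1.0] * n                                         # intercept column (a0)
    trend = [float(t + 1) for t in range(n)]                  # T = 1, 2, ..., n (assumed coding)
    dummy = [1.0 if t >= break_idx else 0.0 for t in range(n)]  # D(g)
    interaction = [d * tr for d, tr in zip(dummy, trend)]     # D(g)T: 0 before g, T from g on
    return const, dummy, trend, interaction

n = month_index(2022, 12) + 1        # 528 monthly observations
g = month_index(2001, 1)             # break at January 2001
const, D, T, DT = build_regressors(n, g)
print(n, g, int(sum(D)))             # 528 264 264
```

Stacked side by side, these four columns form the design matrix for the regression.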

This form of the regression allows us to test if the trend changes after the date g. In the pre-g interval D(g) = 0, so the intercept is given by a0 and the trend is given by the coefficient a2. In the post-g interval D(g) = 1, so the intercept is given by the sum a0 + a1 and the trend is given by the sum a2 + a3. Thus a1 measures whether there was a step change and a3 measures whether there was a change in trend between the pre- and post-g periods. To test if there was a significant change in the trend we use an F (or t) test of the restriction a3 = 0. If the p-value on this test is above 0.05 we do not reject the null model, and we conclude there was no significant acceleration (or deceleration). Note that this allows for the alternative possibility that there was a step change at g but no change in the trend.
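Substituting the two values of the dummy into the regression (and taking the expected value of e(t) as zero) makes the two regimes explicit; the null hypothesis of no change in trend is a single restriction on a3:

```latex
E[Y(t)] =
\begin{cases}
a_0 + a_2\,T, & t < g \quad (D(g) = 0) \\
(a_0 + a_1) + (a_2 + a_3)\,T, & t \ge g \quad (D(g) = 1)
\end{cases}
\qquad\qquad H_0\colon\ a_3 = 0
```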

In order to do the test properly two further issues need to be addressed, only one of which I will deal with here. First, the error terms e(t) are autocorrelated, so an autocorrelation-consistent variance estimator is needed. It is common in climate applications to use an AR(1) model, but I will be using monthly data and this is likely inadequate. Instead I will use the Newey-West HAC (heteroskedasticity- and autocorrelation-consistent) method. If you aren’t familiar with it, think of it as being like AR(1) but more flexible, so that it remains valid in large samples even if the autocorrelation process has more than just one lag.
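For reference, a textbook Bartlett-kernel form of the Newey-West estimator is sketched below, with x_t = (1, D(g), T, D(g)T)' the regressors at month t, ê_t the OLS residuals, n observations and L lags. The lag length, and whether any prewhitening or small-sample adjustment is applied, are choices this sketch leaves open; software defaults (for example in R) may differ. The test of a3 = 0 uses the fourth diagonal element of the resulting covariance matrix:

```latex
\hat V_{NW} = (X'X)^{-1}\,\hat S\,(X'X)^{-1}, \qquad
\hat S = \sum_{t=1}^{n} \hat e_t^{2}\, x_t x_t'
  + \sum_{l=1}^{L} w_l \sum_{t=l+1}^{n} \hat e_t\, \hat e_{t-l}\,\bigl(x_t x_{t-l}' + x_{t-l} x_t'\bigr),
\qquad w_l = 1 - \frac{l}{L+1}

W = \frac{\hat a_3^{2}}{[\hat V_{NW}]_{44}}
\quad \text{compared with } F(1,\, n-4) \text{ or, for large } n,\ \chi^2(1)
```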

[Digression: This further assumes that Y(t) is stationary around a deterministic trend. If this assumption is true then that raises large problems for attribution studies since GHG forcings are nonstationary and do not cointegrate. If that means nothing to you, never mind. I will have much more to say on this at a later date.]

The second issue concerns how g is chosen. It is tempting to treat it as an unknown parameter and estimate it using an informal grid search. You can do that, but then you need to take account of the fact that you estimated it. It is common to experiment with values of g based on looking at the data series itself. Here is the total tropospheric temperature (TTT)-Global series from Zou et al.

If you insert the break at various points to see what happens, this amounts to treating g as an unknown parameter to be estimated, but then inference regarding the other parameters is conditional on the value of g. If we perform the usual F test without taking account of the fact that g has been estimated, we will use incorrect critical values and overstate the significance of the test score. Tim Vogelsang and I discussed this issue in a 2014 paper in Environmetrics.
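To illustrate why this matters, here is a small Monte Carlo sketch in Python (NumPy assumed available). It simulates monthly series with a steady trend and no break at all, scans the break date month by month over a 1990:1 to 2012:12 window, and records the largest |t|-statistic on a3. To keep it short it assumes independent errors and classical OLS standard errors rather than Newey-West, and the trend slope and noise level are made-up values, so it shows the direction of the bias rather than the correct critical value for the NOAA series.

```python
import numpy as np

n = 528                                   # 1979:1 .. 2022:12, monthly
breaks = np.arange(132, 408)              # g from 1990:1 (index 132) to 2012:12 (index 407)
t = np.arange(1, n + 1, dtype=float)

# One design matrix per candidate break: columns 1, D(g), T, D(g)T -> shape (G, n, 4).
D = (np.arange(n)[None, :] >= breaks[:, None]).astype(float)
X = np.stack([np.ones_like(D), D, np.broadcast_to(t, D.shape), D * t], axis=-1)
Xt = np.transpose(X, (0, 2, 1))
XtX_inv = np.linalg.inv(Xt @ X)           # (G, 4, 4)
P = XtX_inv @ Xt                          # (G, 4, n): maps y to OLS coefficients

def max_abs_z(y):
    """Largest |t-statistic| on a3 across all candidate break dates (iid-error OLS)."""
    coef = P @ y                                              # (G, 4)
    resid = y[None, :] - np.einsum("gnk,gk->gn", X, coef)     # (G, n)
    s2 = (resid ** 2).sum(axis=1) / (n - 4)
    z = coef[:, 3] / np.sqrt(s2 * XtX_inv[:, 3, 3])
    return float(np.max(np.abs(z)))

rng = np.random.default_rng(0)
reps = 200
stats = np.array([
    max_abs_z(0.0015 * t + rng.normal(0.0, 0.2, n))           # trend + white noise, no break
    for _ in range(reps)
])
rate = float(np.mean(stats > 1.959964))   # same as "smallest p-value < 0.05"
crit = float(np.quantile(stats, 0.95))    # simulated cutoff that accounts for the search
print(f"false 'acceleration' rate at nominal 5%: {rate:.2f}; simulated 5% cutoff for max|z|: {crit:.2f}")
```

If searching over g had no effect, the printed rate would be close to 0.05; it should come out above that, with a simulated cutoff above 1.96. Taking the 95th percentile of the simulated maximum is one crude way to build a search-adjusted critical value, valid only under the simplifying assumptions stated above.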

I’m going to ignore this issue for now but I’ll remark on it as we go along. I obtained from John Christy the new NOAA data showing the monthly TTT-Global and TTT-Tropics data (TTT = Total Tropospheric Temperature) from 1979:1 to 2022:12. I allowed g to vary month-by-month from 1990:1 to 2012:12, thus always allowing at least a decade before and after the break. For each placement of the break, I estimated the regression model described above and computed an F-test of the restriction a3 = 0 using the linearHypothesis command in R with a Newey-West variance-covariance matrix.
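The month-by-month procedure can be sketched in Python with NumPy as below. This is an illustrative reimplementation, not the R code used for the results here: the Newey-West matrix is the plain Bartlett-kernel form with no prewhitening or small-sample adjustment, the lag length follows a common rule of thumb (an assumption), the p-value comes from the asymptotic χ²(1) distribution of the one-restriction Wald statistic rather than F(1, n−4) (nearly identical at n = 528), and the input is a made-up autocorrelated series standing in for the NOAA data.

```python
import math
import numpy as np

def newey_west_cov(X, resid, lags):
    """Newey-West (Bartlett kernel) HAC covariance of OLS coefficients.
    Plain textbook form: no prewhitening and no small-sample adjustment."""
    XtX_inv = np.linalg.inv(X.T @ X)
    u = X * resid[:, None]                   # rows are x_t * e_t
    S = u.T @ u                              # lag-0 term
    for l in range(1, lags + 1):
        w = 1.0 - l / (lags + 1.0)           # Bartlett weight
        gamma = u[l:].T @ u[:-l]             # sum over t of e_t e_{t-l} x_t x_{t-l}'
        S += w * (gamma + gamma.T)
    return XtX_inv @ S @ XtX_inv

def trend_change_pvalue(y, g, lags):
    """HAC Wald test of a3 = 0 in Y = a0 + a1*D(g) + a2*T + a3*D(g)T + e, D(g) = 1 from index g on."""
    n = len(y)
    t = np.arange(1, n + 1, dtype=float)
    d = (np.arange(n) >= g).astype(float)
    X = np.column_stack([np.ones(n), d, t, d * t])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    V = newey_west_cov(X, y - X @ coef, lags)
    wald = coef[3] ** 2 / V[3, 3]
    return math.erfc(math.sqrt(wald / 2.0))  # P(chi2(1) > wald)

def month_index(year, month):
    """0-based index of year:month in a monthly series starting 1979:1."""
    return (year - 1979) * 12 + (month - 1)

# Placeholder input: a made-up AR(1) series around a linear trend, NOT the NOAA data.
rng = np.random.default_rng(1)
n = month_index(2022, 12) + 1                # 528 months
e = np.zeros(n)
for i in range(1, n):
    e[i] = 0.6 * e[i - 1] + rng.normal(0.0, 0.1)
y = 0.0015 * np.arange(n) + e

lags = int(4 * (n / 100) ** (2 / 9))         # rule-of-thumb lag length (an assumption)
breaks = range(month_index(1990, 1), month_index(2012, 12) + 1)
pvals = {g: trend_change_pvalue(y, g, lags) for g in breaks}
rejected = [g for g, p in pvals.items() if p < 0.05]
print(f"{len(pvals)} break dates tried, lag length {lags}, {len(rejected)} with p < 0.05")
```

With a real TTT series in place of `y`, plotting `pvals` against the break date together with a horizontal line at 0.05 gives the kind of p-value chart discussed in this post.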

The chart below shows the p-values of the test plotted over the assumed break date. The dotted line denotes the 5% significance level. Whenever the green or red line is below the dotted line we reject the hypothesis of no acceleration; in other words we have evidence of a significant change in the trend conditional on that break date.

Looking at the global series (green), for most placements of the break there is no evidence of acceleration, but there is a brief interval, from late 2005 through mid-2006, where placing the break lets you claim evidence of a significant acceleration in warming. But after that the tests go back into the non-rejection region, which means the apparent acceleration is temporary. Also, we need to take account of the fact that we cherry-picked the date, and since the p-value is so close to 0.05, any upward adjustment to the critical values will mean the rejection is no longer robust. Overall, the TTT-Global series does not support the claim of an accelerating trend. Further evidence is provided by the red line. If global warming in the troposphere were accelerating, then this presumably would also show up over the tropics. However, looking at the red line we see very clearly no evidence for acceleration regardless of the chosen break date.

The next figure shows the same results for the global LT (lower troposphere) and MT (mid troposphere) series. There is a bit more evidence of acceleration especially in the MT layer. But once again you have to cherry-pick the break date, and even then the tests move back into the non-rejection region if it is placed after mid-2011. Thus for most of the record we would conclude there is no robust evidence of a trend acceleration, even without adjusting the critical values for the fact that g is unknown.

With regard to the mid-troposphere series we can say there is preliminary evidence of an acceleration, but the test scores reverse after 2010 so it is not yet a permanent feature of the data. Here is the time series of the global MT from NOAA v5.0. The linear trend is 0.092 degrees C per decade. Visually it looks like the record jumped in 2015 and has been returning to the trend ever since. It will take another 5 years or more of data to identify a permanent acceleration.
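For readers wondering how a per-decade figure like this is obtained from monthly anomalies: the OLS slope against time in months is multiplied by 120. A minimal pure-Python sketch follows; the function name is illustrative, and the example series is a synthetic straight line, not the NOAA MT data.

```python
def trend_per_decade(monthly):
    """OLS slope of a monthly series on time (in months), rescaled to units per decade."""
    n = len(monthly)
    t_mean = (n - 1) / 2.0
    y_mean = sum(monthly) / n
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(monthly))
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    return 120.0 * sxy / sxx      # 120 months per decade

# Synthetic check: a perfect line rising 0.092 C per decade over 528 months.
line = [0.092 / 120.0 * t for t in range(528)]
print(round(trend_per_decade(line), 3))   # 0.092
```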

In sum, based on a preliminary analysis the new NOAA data do not support a claim that warming in the troposphere has undergone a statistically significant change in trend. The Global and Tropical TTT series show no support for the claim. The Global MT series appears to show support, but only if the break date is placed in a specific interval in the early part of the last decade, and more recently the tests do not support acceleration. Finally, all of these results are biased towards finding evidence of a trend break due to the treatment of g. Robust critical values could be generated, which I might get to someday if no one else does it first.

How would the graph (lower trop) look if you removed all ENSO events between 2001 and present?

If you stretch out the tracks of a roller coaster, then you get a flat straight line, but that ain’t no fun.

Sorry, off topic but now you know … https://www.facebook.com/carsieblanton/videos/888759708782417/?__cft__%5B0%5D=AZXj3wz0rsGMhxBs9QQ2oDE3Ff7LxqG57gCqY7d2G6Xz-9Nyads6rTTrVol-lFLlTUisuJUN_GEbPX8plB3vRtytreQsKbadYd69Hpip16ux8HAxo9VLtqigBE2vF9CS__E&__tn__=-UK-R

The satellite data shows a big increase after the 2014 PDO phase change. It peaked during the following El Nino in 2016. It then has fallen back due to the recent La Nina events. If you put trend lines in you see all of the warming occurred in 2014-15. That’s hardly an acceleration.

https://woodfortrees.org/plot/uah6/from:1997/to/plot/uah6/from:1997/to:2014.5/trend/plot/uah6/from:2016.08/to/trend/plot/uah6/from:2014.5/to:2016.08/trend

Once the AMO returns to its negative phase we should see whether there’s been any real warming or not.

Every time I look at that graph, I literally just see 3 stairs. If people think that CO2 is the main driver only for the graph to look like stairs, they are either trolling with you or have down syndrome.

Another example of thoughtful statistics work by Ross McKitrick. I’m not ashamed to admit that I had to pull my old regression text off the shelf in order to recall how his set-up allowed for both the slope and the intercept to change, depending on the value of D(g).

Something else that caught my attention was his ‘digression’ that broached the subject of ‘cointegration’. I recall a paper by Beenstock et al. from a while ago that had the alarmists’ underwear in a knot at the time because it showed that temperature change and CO2 concentration weren’t cointegrated, hence couldn’t be causally linked together. Would love to see an update…

Interesting post, even if the math is beyond me.

But the conclusions are fairly apparent: the climate crisis emperor just lost another clothing item.

Typo: (second sentence just below the Zhou “TTT Global…” graph)

” I[f] we perform the usual F test without taking account of …”

During the Little Ice Age, there was a heat spot in the North Atlantic below Greenland.

Significance

Vikings occupied Greenland from 985 CE to the mid-15th century. Hypotheses regarding their disappearance include combinations of environmental change, social unrest, and economic disruption. Occupation coincided with a transition from the Medieval Warm Period to the Little Ice Age and Southern Greenland Ice Sheet advance. We demonstrate using geophysical modeling that this advance would have (counterintuitively) driven local sea-level rise of ~3 m (when combined with a long-term regional trend) and inundation of 204 km². This largely overlooked process led to the abandonment of some sites and pervasive flooding. Progressive sea-level rise impacted the entire settlement and may have acted in tandem with social and environmental factors to drive Viking abandonment of Greenland.

https://www.pnas.org/doi/10.1073/pnas.2209615120

Local sea level rise of 3 meters is hard to believe. This would show up in tide gauges and the geologic record. Is there evidence of this beyond the model? Can the model be tested on other locations where this would have occurred?

I’ll take your word for it, but don’t think I wouldn’t go back to school and get my masters in statistics to check your work if it looked fishy.

Or better yet ask ChatGPT so it would walk me through more math that’s over my head

“Or better yet ask ChatGPT so it would walk me through more math that’s over my head”

How can anyone have any confidence in ChatGPT to give the right answers? ChatGPT has already been caught making things up out of thin air.

All the information ChatGPT provides needs to be fact checked before use. Otherwise, how do you know whether it is lying or not?

What strikes me is that all these numbers are very small. Aren’t we supposed to be in a Rapidly Warming Planet? Wake me when it starts to happen

The Warmists gained a bit of momentum with their fairly recent invention of the “Tipping Point”. This is the temperature point at which catastrophic events will occur. At first this was +2 C. However, this turned out to be more than a generation in the future, too far away to panic. Now, it has been lowered to +1.5 C, placing it within the present generation. This means that it’s time for panic NOW!

Unfortunately now, for the Warmists, by some measurements, we’re already there. Yet, this morning, the sun rose, Spring is still in the air, Miami hasn’t flooded, and the Gulf of Mexico isn’t boiling. We shall survive this, too. The numbers are, indeed, “very small”.

“This means that it’s time for panic NOW!”

So, Doctor of Theology Thunberg was right! 🙂

you must have missed Al Gore’s announcement that the oceans are boiling

“Very small” is the key point. Shout it to the rafters. Very small will not equate to “crisis.” Everybody in the UN, the environmental amen corner, even in the so-called “scientific community” accepts that there is a “real” crisis. To quote Edgar Bergen, “Mortimer, How can you be so stupid?”

The problem with making any claims about the Troposphere is quite simple. It varies from 4 to 12 miles in height (roughly polar to equatorial, but it oscillates with the seasons), starts literally at ground level, and is regulated ONLY by gravitational pull; that height fluctuates in bulges and rollers daily.

Because it is HEAVILY mixed by daily heat input it is not consistent enough to even be given an accurate daily average temperature. If you’re going to claim mathematically detectable changes in it based entirely off maybe 60 global altitude data points in the lower 30% and ground level thermal sensors you’d have to live in a fantasy world where becoming a PhD only requires an attendance ribbon. There are also rather drastic effects upon it caused by mountains.

A bigger question is why they aren’t trying to model based on the different maximum effective altitudes of the various “epeen house gasses” that I prefer to call thermal trans-emissive gasses (variations to that). Do you think there’s any methane at 20,000 feet over the north pole? They track these sources then ignore their maximum altitudes and create a gestalt moron-dele’ that somehow ignores the law of thermodynamics even tho it’s moving at a minimum of 12mph and entirely regulated by gravity… which means that the amount of energy at any given fixed altitude in a mechanical volume is going to stay almost the same no matter what the temperature of it is because that’s how specific energy works.

Stratospheric Circulation

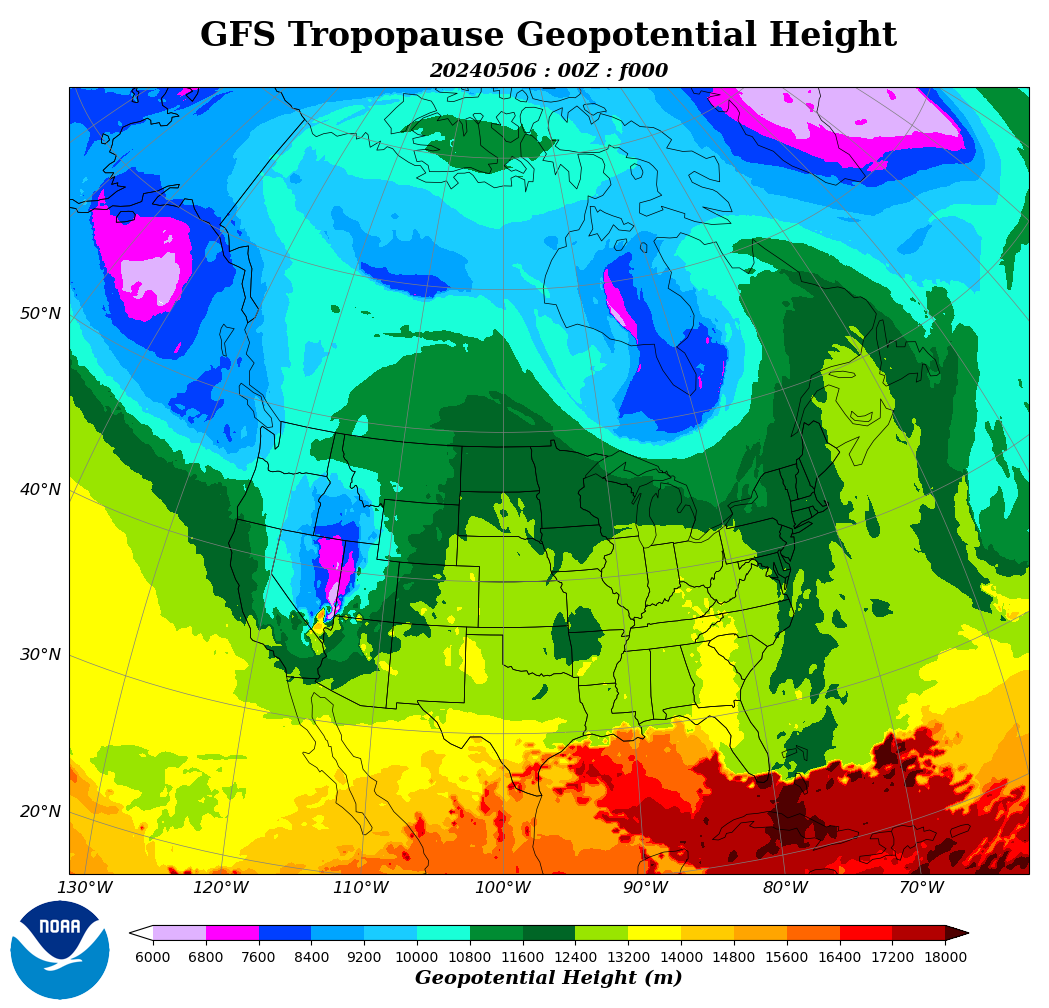

Tropopause Height

There are many temperature data sets that show no warming. How can the entire contiguous US show no warming but the entire globe be warming? Do the laws of physics cease to exist in the US? I’ve posted more graphics in the Open Thread.