Reposted from the Cliff Mass Weather Blog

During the next few weeks, leadership in NOAA (the National Oceanic and Atmospheric Administration) and the National Weather Service (NWS) will make a key decision regarding the future organization of U.S. numerical weather prediction, a decision that will determine whether U.S. weather forecasting will remain third rate or advance to world leadership. It is that important.

Specifically, they will define the nature of a new center for the development of U.S. numerical weather prediction systems in a formal solicitation of proposals (using something called an RFP, or Request for Proposals).

This blog will describe what I believe to be the essential flaws in the way NOAA has developed its weather prediction models: how the U.S. came to be third-rate in this area, why this is a particularly critical time with unique opportunities, and how the wrong approach will lead to continued mediocrity.

I will explain that only profound reorganization of how NOAA develops, tests, and shares its models will be effective. It will be a relatively long blog and, at times, somewhat technical, but there is no way around that considering the topic. I should note that I have written extensively on this topic over the past several decades (including many blogs and an article in the peer-reviewed literature), given dozens of presentations on it at professional meetings, testified about it in Congress, and served on a number of NOAA/NWS advisory committees and National Academy panels dealing with these issues.

The Obvious Problems

As described in several of my previous blogs, U.S. numerical weather prediction, the cornerstone of all U.S. weather prediction, is behind other nations and far behind the state-of-the-art. Our global model, the GFS, is usually third or fourth ranked, behind the European Center and the UK Met Office, and often tied with the Canadians.

We know the main reason for this inferiority: the U.S. global data assimilation system is not as good as those of leading centers. (Data assimilation is the step of using all available observations to produce a comprehensive, physically consistent description of the atmosphere.)
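To make the idea concrete, here is a minimal sketch of the simplest possible assimilation step: blending one model first guess with one observation, each weighted by its error variance. This is a textbook scalar illustration under stated assumptions, not the scheme used in the GFS or at any operational center; the function name `assimilate` and the temperature values are hypothetical.

```python
def assimilate(background, background_var, observation, observation_var):
    """Blend a model first guess with one observation.

    Each input is weighted by its error variance: the less certain the
    first guess, the more the analysis is pulled toward the observation.
    """
    gain = background_var / (background_var + observation_var)
    analysis = background + gain * (observation - background)
    analysis_var = (1.0 - gain) * background_var
    return analysis, analysis_var


# Hypothetical numbers: a 280 K first guess (error variance 4 K^2)
# and a 282 K observation (error variance 1 K^2).
analysis, analysis_var = assimilate(280.0, 4.0, 282.0, 1.0)
print(f"analysis: {analysis:.2f} K, variance: {analysis_var:.2f} K^2")
```

The analysis lands closer to the observation because the observation is assumed to be more accurate, and its variance is smaller than that of either input. Operational systems apply the same logic to millions of observations and model variables at once, which is where the difficulty and the computing cost come from.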

The U.S. seasonal model, the CFSv2, is less skillful than the European Model and is aging, while the U.S. is running a number of poorly performing legacy modeling systems (e.g., the NAM and Short-Range Ensemble System). Furthermore, our global ensemble system has too few members and lacks sufficient resolution. The physics used in our modeling systems are generally not state-of-the-art, and the U.S. lacks a large, high-resolution ensemble system capable of simulating convection and other small-scale phenomena. Finally, operational statistical post-processing, the critical last step in weather prediction, is behind that of the private sector, like weather.com or accuweather.com.

The latest global statistics for upper air forecast skill at 5 days show the U.S. in third place.

There is one area where U.S. numerical weather prediction is doing well: high-resolution rapid refresh weather prediction. As we will see, there is a reason for this positive outlier.

The generally inferior U.S. weather modeling is made much worse by NOAA’s lack of computer resources. NOAA probably has 1/100th of what it really needs, crippling NOAA’s modeling research as well as its ability to run state-of-the-science modeling systems.

Half-way Steps Are Not Enough

Although known to the professional weather community for decades, the inferiority of U.S. weather prediction became obvious to the media and the general U.S. population during Hurricane Sandy (2012), when the European Center model provided a skillful forecast days ahead of the U.S. GFS. After a number of media stories and congressional inquiries, topped off by a segment on the NBC nightly news about the abysmal state of U.S. weather prediction (see picture below), NOAA/NWS leadership began to take steps that were funded by special congressional budget supplements.

New computers were ordered (the U.S. operational weather prediction effort previously possessed only 1/10th the computer resources of the Europeans), an improved hurricane model was developed, and NOAA/NWS began an effort to replace the aging U.S. global model, the GFS. The latter effort, known as the Next Generation Global Prediction System (NGGPS), included funds to develop a new global model and to support applicable research in the outside community.

During the past 8 years, there has been a lot of activity in NOAA/NWS with the goal of improving U.S. weather prediction, and some of it has been beneficial:

- NOAA management has accepted the need to have one unified modeling system for all scales, rather than the multitude of models they had been running.

- NOAA management has accepted the idea that the U.S. operational system must be a community system, available to and used by the vast U.S. weather community.

- NOAA management has increased funding for outside research, although they have not done this in an effective way.

- NOAA has replaced the aging GFS global modeling system with the more modern FV-3 model.

- NOAA has made some improvements to its data assimilation systems, making better use of ensemble techniques.

- Antagonistic relationships within NOAA, particularly between the Earth System Research Lab (ESRL) and the NWS Environmental Modeling Center (EMC), have greatly lessened.

But with all of these changes and improvements in approach, U.S. operational weather prediction run by NOAA/NWS has not advanced compared to other nations or against the state of the science. We are still third or fourth in global prediction, with the vaunted European Center maintaining its lead. A large number of inferior legacy systems are still being run (e.g., NAM and SREF), computer resources are still inadequate, and the NOAA/NWS modeling system is being run by very few outside of the agency.

This is not success. This is stagnation.

But why? Something is very wrong.

As I will explain, the key problems holding back NOAA weather modeling can be addressed (and quickly), but only if NOAA and Congress are willing to follow a different path. The problem is not money, and it is not the quality of NOAA’s scientists and technologists (they are motivated and competent). It is about organization.

Let me repeat this. It is all about ineffective organization.

With visionary leadership now at NOAA and the potential for a new center for model development, these deficiencies could be fixed. Rapidly.

The REAL Problems Must be Addressed

So with substantial resources available, the acute need for better numerical weather prediction in the U.S., and the acknowledged necessity for improvement, why is U.S. numerical weather prediction stagnating? There are several reasons:

1. No one individual or entity is responsible for success

Responsibility for U.S. numerical weather prediction is divided over too many individuals or groups, so in the end no one is responsible. To illustrate:

- The group responsible for running the models, the NWS Environmental Modeling Center (EMC), does not control most of the folks that develop new models (located OUTSIDE of the NWS, in NOAA's ESRL and other NOAA labs).

- Financial responsibility for modeling systems is divided among several groups including OSTI (Office of Science and Technology Integration) and OWAQ (NOAA Office of Weather and Air Quality), and a whole slew of administrators at various levels (head of the National Weather Service, head of NCEP, head of EMC, NOAA Administrator, and many more).

U.S. weather prediction is not the best? No one is responsible and fingers are pointed in all directions.

2. The research community is mainly using other models, and thus not contributing to the national operational models.

The U.S. weather research community is the largest and best in the world, but in general they are NOT using NOAA weather models. Thus, research innovations are not effectively transferred to the operational system.

The National Center for Atmospheric Research in Boulder, Colorado

Most American weather researchers use the weather modeling systems developed at the National Center for Atmospheric Research (NCAR), such as the WRF and MPAS systems. They are well documented, easy to use, and supported by NCAR staff and a large user community, with tutorials and annual workshops. Time after time, NOAA has rejected using NCAR models and decided to go with in-house creations, which has led to a separation of the operational and research communities. It was a huge and historic mistake that has left several at NCAR reluctant to work with NOAA again.

There is one exception to this depressing story: the NOAA ESRL group took on WRF as the core of its Rapid Refresh modeling systems (RAP and HRRR). These modeling systems, not surprisingly, have been unusual examples of great success and state-of-science work in NOAA.

3. Computer resources are totally inadequate to produce a world-leading numerical weather prediction modeling system.

NOAA currently has roughly 1/10th to 1/100th of the computer resources necessary for success. Proven technologies (like 4DVAR and high-resolution ensembles) are avoided, ensembles (running the models many times to secure uncertainty information) are low resolution and small, and insufficient computer resources are available for research and testing.
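As a rough illustration of why ensembles matter and why they are expensive, the sketch below runs a toy chaotic system many times from slightly different starting points and tracks how the spread among members grows with lead time. The logistic map stands in for a real weather model purely as an assumption for illustration; the function names, member count, and perturbation size are all hypothetical.

```python
import random
import statistics


def toy_model_step(x, r=3.9):
    """One step of the logistic map, a toy chaotic stand-in for a forecast model."""
    return r * x * (1.0 - x)


def run_ensemble(initial_state, members=20, steps=50, perturbation=1e-6, seed=0):
    """Run the toy model from many slightly perturbed starting states.

    Returns the ensemble spread (population standard deviation) at each
    lead time, beginning with the initial time.
    """
    rng = random.Random(seed)
    states = [initial_state + rng.gauss(0.0, perturbation) for _ in range(members)]
    spreads = [statistics.pstdev(states)]
    for _ in range(steps):
        states = [toy_model_step(x) for x in states]
        spreads.append(statistics.pstdev(states))
    return spreads


spreads = run_ensemble(0.4)
print(f"initial spread: {spreads[0]:.2e}, final spread: {spreads[-1]:.2e}")
```

Tiny initial differences grow until the members disagree substantially, and that growing spread is the uncertainty information a single forecast cannot provide. The cost scales with the number of members times the cost of one run, which is why a large, high-resolution ensemble demands far more computing than a single deterministic forecast.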

Even worse, NOAA computer resources are very difficult for visitors to use because of security and bureaucracy issues: gaining access can take the better part of a year, if visitors are ever allowed on at all.

There is a lot of talk about using cloud computing, but there is still the issue of paying for it, and cloud computing has issues (e.g., great expense) for operational computing that requires constant, uninterruptible large resources.

With responsibility for U.S. numerical weather prediction diffused over many individuals and groups, no one has put together a coherent strategic plan for U.S. weather computing or made the case for additional resources. Recently, I asked key NWS personnel to share a document describing the availability and use of NWS computer resources for weather prediction: no such document appears to exist.

4. There is a lack of careful, organized strategic planning.

NOAA/NWS lacks a detailed, actionable strategic plan on how it will advance U.S. numerical weather prediction. Such a plan would lay out how modeling systems will advance over the next decade, including detailed plans for coordinated research and computer acquisition. Major groups, such as the European Center and the UK Met Office, have such plans. We don’t. Such plans are hard to make when no one is really responsible for success.

NOAA has tried to deal with the lack of planning by asking U.S. researchers to join committees pulling together a Strategic Implementation Plan (SIP), but these groups have been of uneven quality, have tended to produce long laundry lists, and their recommendations do not have a clear road to implementation.

5. The most innovative U.S. model development talent is avoiding NOAA/NWS and going to the private sector and other opportunities.

U.S. operational weather prediction cannot be the best when the best talent coming out of our universities doesn’t want to be employed there. Unfortunately, that is the case now. Many of the best U.S. graduate students do not want to work for NOAA/NWS; they want to do cutting edge work in a location that is intellectually exciting.

EPIC: The Earth Prediction Innovation Center

Congress and others have slowly but surely realized that U.S. numerical weather prediction is still in trouble and want to deal with this problem. To address the issue, Congress passed recent legislation (The National Integrated Drought Information System Reauthorization Act of 2018), which instructs NOAA to establish the Earth Prediction Innovation Center (EPIC) to accelerate community-developed scientific and technological enhancements into operational applications for numerical weather prediction (NWP). Later appropriation legislation provided funding.

Last summer, NOAA held a community workshop regarding EPIC and asked for input on the new center. There was strong support, with most participants favoring a new center outside of NOAA. The general consensus: it will take real change in approach to result in real change in outcome. They are right.

Two Visions for EPIC

There are two visions of EPIC and the essential question is which NOAA will propose in its request for proposals to be released during the next month.

A Center Outside of NOAA with Substantial Autonomy and Independence

In this vision, EPIC will be an independent center outside of NOAA. It will be responsible for producing the best unified modeling system in the world, supplying the one point of responsibility that has been missing for decades.

This EPIC center would maintain advisory committees that would directly couple to model developers, and should have sufficient computer resources for development and testing. It would build and support a community modeling system, including comprehensive documentation, online support, tutorials, and workshops.

Such a center should be in a location attractive to visitors and should entrain groups at NCAR and UCAR (like the Developmental Testbed Center). It will maintain a vibrant lecture series and employ some of the leading model and physics scientists in the nation.

EPIC should be led by a scientific leader of the field, with a strong core staff in data assimilation and physics. This EPIC center will be able to secure resources from entities outside of NOAA (although NOAA funding will provide the core support).

Such an EPIC center might well end up in Boulder, Colorado, the intellectual center of U.S. weather research (with NCAR, NOAA ESRL lab, University of Colorado, Joint Center for Satellite Data Assimilation, and more), and there is hope that UCAR (the University Corporation for Atmospheric Research) might bid on the new center. If it did so and won the contract, substantial progress could be made in reducing the yawning divide between the U.S. research and operational numerical weather prediction communities.

The Alternative: A Virtual Center Without Independence Or Responsibility

There are some in NOAA who would prefer that the EPIC center simply be a contractor to NOAA that supplies certain services. It would not have responsibility for providing the best modeling systems in the world, but would accomplish NOAA-specified tasks like external support for the unified modeling system and fostering the use of cloud computing. It is doubtful that UCAR would bid on such a center, but it might be attractive to some “beltway bandit” entity. This would be a status-quo solution.

The Bottom Line

From all my experience in dealing with this issue, I am convinced that an independent EPIC, responsible for producing the best weather prediction system in the world, might well succeed. It is the breakthrough that we have been waiting for.

Why? Because it can simultaneously solve the key issues that have been crippling U.S. operational numerical weather prediction centered in NOAA: a lack of single point responsibility, the complex array of too many players and decision makers, and the separation of the research and operational communities, to name only a few.

A NOAA-dependent virtual center, which does not address the key issues of responsibility and organization, will almost surely fail.

And let me stress: the problems noted above are the result of poor organization and management. NOAA and NWS employees are not the problem. If anything, they have been the victims of a deficient organization, working hard to keep a sinking ship afloat.

The Stars are Aligned

This is the best opportunity to fix U.S. NWP I have seen in decades. We have an extraordinary NOAA administrator (Neil Jacobs) whose top priority is fixing this problem (and he is an expert in numerical weather prediction as well). The nation (including Congress) knows about the problem and wants it fixed. The President’s Science Advisor (Kelvin Droegemeier) is also a weather modeler and wants to help. There is bipartisan support in Congress.

During the next month, the RFP (request for proposals) for EPIC will be released by NOAA. We will then know NOAA’s vision for EPIC, and thus we will know whether this country will reorganize its approach and potentially achieve a breakthrough success, or fall back upon the structure that failed us in the past.

HT/Yooper

Do we really need NWS anymore? NOAA?

Replace the NWS with TWC?

No thanks!

The problem has been that they’ve become bureaucracies, that is, organizations with no accountability. No one in the top tiers is held personally responsible for doing poorly. They are too often rewarded regardless of the reliability of the product they produce.

The problem with any government run or even funded agency is all the bureaucracy and regulations. It is more important to push for social justice than to actually meet a scientific goal, for example. The levels of management become, in a word, unmanageable. People who have NO COMPETENCY in the field end up running the bureaucracy – getting there through politics and not because they are great scientists. I don’t know how many times I have seen this in our government and related agencies.

You can start all over with a new agency and make a lot of progress – for about 10 to 15 years – and then it all starts over again. The politicians rise through the ranks, the rules and regulations focus on the wrong goals, and the new agency becomes once again, incompetent.

This can ONLY be fixed by keeping these entities private. Have 2 or 3 competing private entities at any given time and keep paying the winner bonuses while changing out the want-to-be’s. Remember to keep all code and data public domain. Competition will keep these companies trim and competent (at least relative to a government agency).

As an aside – Norman (OK) *would* be a good place for such a weather center – there are a lot of still competent weather scientists found there, and lots of engineers. They are a less elite-style of people, more down to Earth workers.

NASA is a good example of a once proud agency that now is mostly incompetent. They are more concerned with diversity and gender promotions than with actually performing competent science and engineering. When social justice becomes the driving force, it will be the only goal met.

Now just think of all health care becoming government paid for and controlled, and then try to sleep…

None of my farmer friends rely on the government for weather forecasting. They all pay for the accuracy provided by the private sector.

But any private sector company has to buy in model product!

They number crunch the world’s weather …. which is what is needed even on a regional scale for a period longer than a day.

A private company will just make it more user friendly.

That is why it is unfair.

Organisations such as the UKMO actively give info to METEOGroup …. who took away their BBC contract. Because it was cheaper.

Well of course it was.

MeteoG don’t have to run and maintain state-of-the-art supercomputers just for a start.

Can you explain why UKMO is giving away their product at below cost?

They are all using major global and regional models paid for by taxpayers, John. They provide next to nothing original, they interpret and reformat then represent the data from the taxpayer funded models. Private does not work on that scale beyond that sort of piggy-back WX service model (which is not to belittle what they do or the value of it).

Cliff. Excellent overview. I echo much of what you say when, during my talks about weather, I always get the question … “why are we so bad” compared to the European model? It’s eye-opening to see the politics behind the science — but that’s usually the problem with anything — especially climate change. Anyway, this recent article helps explain some of the data issues …

https://qz.com/1769842/how-meteorologists-are-using-data-to-improve-hurricane-forecasts/

Don’t concentrate just on models. Data feed is equally important. How do models handle satellite data? Data from ships, airplanes, and ARGO buoys?

And enough spot ‘truthing’ sensors to calibrate global input data on the fly.

the U.S. global data assimilation system is not as good as those of leading centers.

Prb’ly. In addition, Joe Bastardi says the GFS model has way too much feedback in its parameters & thus chronically misses cold air in the longer forecasts.

http://www.weatherbell.com/premium

The state-of-the-art is in very long range solar based prediction of annular mode anomalies.

” … solar based prediction of annular mode anomalies.”

—

I agree about prediction of annular mode anomalies, but who’s doing such a solar-based prediction?

It is very likely that NOAA is simply overburdened with activism by its management and rank and file bureaucrats.

Producing Climate Crusader tools like this.

https://www.climatecentral.org/news/noaas-new-cool-tool-puts-climate-on-view-for-all-16703

It’s just another deep state operating without consequences.

Remove “politics” from political science and what’s left?

Something that doesn’t pretend to understand everything, but it wants to.

Something that isn’t always right and often wrong, but it’s honestly trying to get it right.

How do they define “accurate”?

I was watching the weather radar for my area a couple weeks back when we were expecting snow. The “future” radar kind of went haywire about 4 hours ahead, at least that’s the way it looked to me. After those 4 hours, the current radar sort of resembled the earlier “future” radar (actual cloud cover and snowfall was not nearly as widespread), but it’s unclear to me how accurate I can expect it to be, or how accurate it can be.

I’ve often wondered about the “future radar” stuff.

What I’ve never seen is a split screen of the “future radar” side by side with the later actual radar.

I wouldn’t expect it to be an exact match but I would like to see unbiased comparisons.

re: ” “future radar” stuff.”

Conceptualization/presentation created for the public from the “chance of precipitation” number cranked out by models; it works better for people’s minds to SEE what is meant by that abstract number, “chance of precipitation” than just hear it “voiced” or flashed on the screen.

Notice also the colored “zones” over a geographical area depicting expected precipitation amounts shown by some stations in advance of a storm system; those can also be used to ‘give life’ to that “chance of precipitation” number.

DCE said: “…All using new computers will get you is the wrong answer, faster…..” Not entirely true but close to it. More likely it means “being able to run more scenarios for AGW until we get one that supports the narrative”. Trying to tame a chaotic system with computers is a fool’s errand. It’s like trying to continually cut something in half to get to zero.

“…All using new computers will get you is the wrong answer, faster…..”

Ignorant nonsense of the worst kind. With improved speed comes better coding and more data and higher resolution, you get a forecast system that’s more accurate more often for longer. WX forecast models have zip to do with AGW, what are you talking about? Even chaotic systems are physics and described in math, and have a degree of predictability that can be leveraged and teased out by research and experiment. It’s anti-science absurdity to pretend they don’t – it’s also observably wrong.

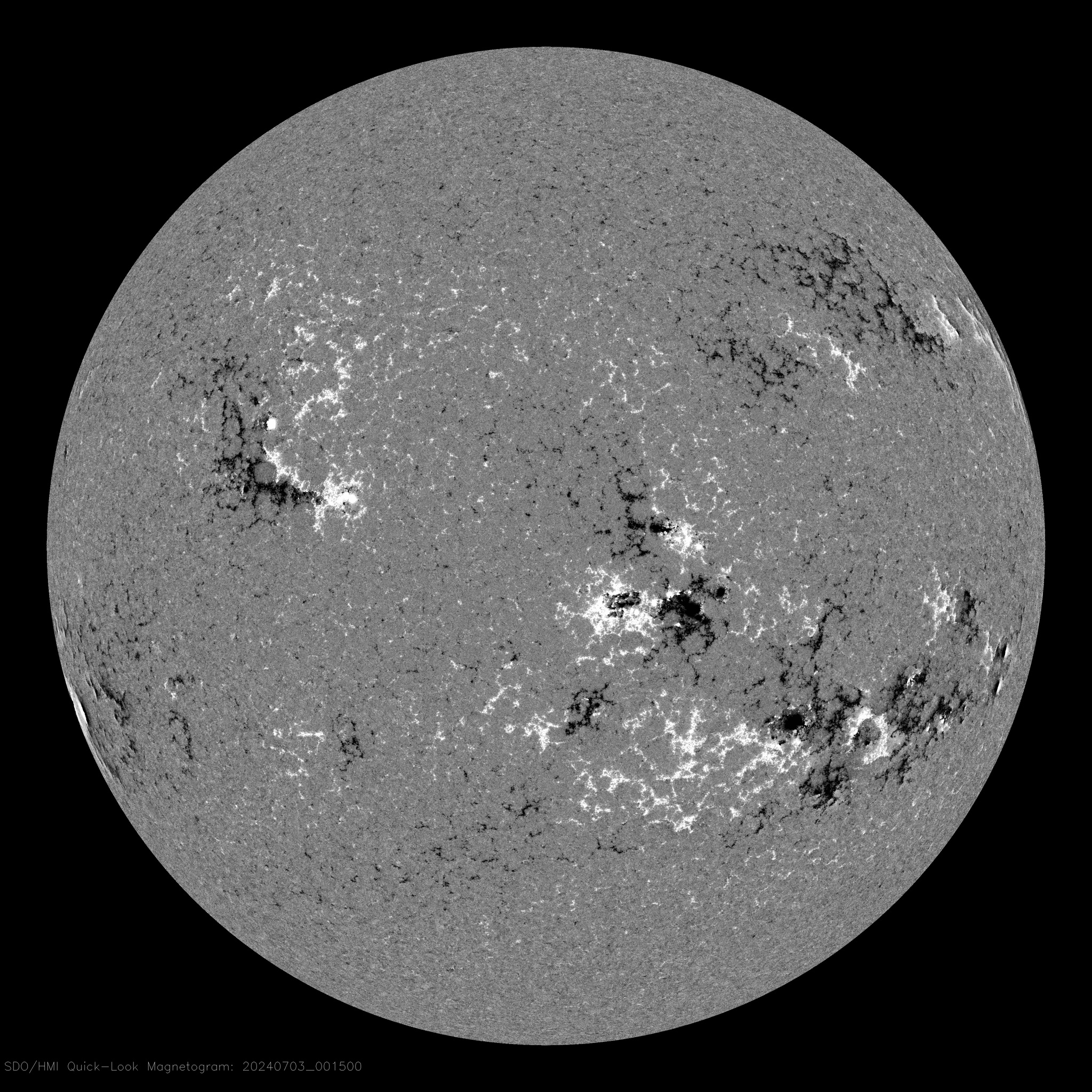

NOAA does a lot of space weather prediction, and they do a good job as far as I can judge, while I think that the NOAA/NASA co-chaired international panel forecast of the SC25 max is on the high side:

https://www.swpc.noaa.gov/news/solar-cycle-25-forecast-update

Today’s solar magnetogram has two sunspots, one in the NH is from the old SC24 while one in the SH is from the new SC25 cycle.

January up to date had 16 spotless days while monthly SSN count points to 5 or 6.

With NOAA, NCAR, ESRL, UCAR, NWS, EMC, OSTI, OWAQ, plus independent military systems we have too many offices.

The National Weather Service should collect data and supply it to users. The National Climate Atmospheric Research unit stands alone and should become the National Climate Research unit- this should be where weather modelling research should be done.

Earth Systems Research probably can stand alone in coordination with other labs.

University Corporation for Atmospheric Research should be handled by limited term fellowships at government labs or by using only university funds with no Federal money or offsets included.

Environmental Modeling Center becomes a part of ESRL

Office of Scientific and Technical Information combines with the National Technical Reports Library

Office of Weather and Air Quality- Air Quality is moved to the EPA. Office of Weather(Quality) is folded into the ESRL.

That eliminates five separate duplications of effort and puts the functions together in complementary units.

Of course all the various units will fight like hell and NOAA will be the worst. Parts of NOAA should go back to being the National Air and Space Authority where all the international politics and oversight belongs.

Weather forecasts with probabilistic degrees of continuity.

The weather will probably be predicted by a poll

Democracy works until overridden for special, peculiar, and politically congruent interests.

CLASSIC 1980’s weather forecast using hand-drawn maps AND a visible-light satellite image-

Intro starts with venerable KXAS ch 5 Dallas / Ft. Worth Texas weatherman Harold Taft going through some of the earlier ‘tools of the trade’ used when forecasting wasn’t such an exact science:

Prediction implies surmounting a discontinuity in time, space, knowledge, or skill. Prediction is precluded by chaos (i.e. incomplete or insufficient characterization and computationally unwieldy). Forecasts, however, will improve in a system that is noteworthy for its semistable processes.

There is an interesting concept in storm forecasting that has been thrown up recently, and that is the coincidence in timing between the acceleration point of any large storm and solar ‘Ap’ impacts.

Some outline discussion is given in:

https://howtheatmosphereworks.wordpress.com/about/solar-activity-and-surface-climate/storm-analysis/

with further discussion on the ‘Electromagnetic Theory’ section on the same site.

If you have access to the raw data, try a cross reference between any large storm acceleration point and the relevant ‘Ap’ impact records.

An example may be that of “The Perfect Storm” of 1st November 1991 cross referenced to Carrington Rotation – CR1848;- ’Ap’ impacts October 27 to November 02, peaking at ‘Ap = 128’ on October 29.

As with all things in nature, nothing is ever 100%; however, if we observe any large storm which suddenly accelerates from, say, Cat:3 to Cat:5, the relationship to a sudden ‘Ap’ impact is far too common to be pure coincidence.

A good area for further research as it may imply the ability to predict such events a few days in advance.

Links in first principles? (i.e., what are the links in the first principle laws in physics; Ampere’s Law, Gauss’s Law, Newton’s Law, Maxwell etc.)

Existing models already predict cyclonic storm category changes with very good skill levels, in time, space and location with reference only to accurate and sufficient atmospheric met data to predict trends, so why bother with external observations.

Occam’s Razor.

For those advocating switching public forecasting and warning duties to the private sector, who will take on the liability issues of issuing warnings for public safety? Will the public have to pay for services that have historically been provided by taxpayer funds…a nominal few cents per household annually? In addition, private entities will be financially unable to sustain a profit if they are forced to collect and process the data needed for numerical weather forecast models. Thus, the government would be relegated to a data collection and processing entity. But, the point brought up by others about national security is exceedingly salient. The cost-to-benefit ratio in paying for government weather offices with meteorologists analyzing and disseminating forecasts, and especially warnings, is net positive to society, as evidenced by numerous academic studies throughout the years (I leave it to the readers as a research task to search). But conflict-of-interest issues have historically been a major factor in keeping weather forecasting and warning in the public arena. How can society be assured that a forecast concern sponsored by an umbrella company, or a raincoat maker, or other weather-dependent companies, will not be beholden to its sponsors and will issue scientifically correct and neutral products? I also would strongly advise AGAINST basing any new model organization at Boulder…it seems talent there has been misused.