Guest Post by Willis Eschenbach [see update at the end]

How much is a “Whole Little”? Well, it’s like a whole lot, only much, much smaller.

There’s a new paper out. As usual, it has a whole bunch of authors, fourteen to be precise. My rule of thumb is that “The quality of research varies inversely with the square of the number of authors” … but I digress.

In this case, they’re mostly Chinese, plus some familiar western hemisphere names like Kevin Trenberth and Michael Mann. Not sure why they’re along for the ride, but it’s all good. The paper is “Record-Setting Ocean Warmth Continued in 2019“. Here’s their money graph:

Now, that would be fairly informative … except that it’s in zettajoules. I renew my protest against the use of zettajoules for displaying or communicating this kind of ocean analysis. It’s not that they are not accurate, they are. It’s that nobody has any idea what that actually means.

So I went to get the data. In the paper, they say:

The data are available at http://159.226.119.60/cheng/ and www.mecp.org.cn/

The second link is in Chinese, and despite translating it, I couldn’t find the data. At the first link, Dr. Cheng’s web page, as far as I could see the data is not there either, but it says:

When I went to that link, it says “Get Data (external)” … which leads to another page, which in turn has a link … back to Dr. Cheng’s web page where I started.

Ouroboros wept.

At that point, I tossed up my hands and decided to just digitize Figure 1 above. The data may certainly be available somewhere between those three sites, but digitizing is incredibly accurate. Figure 2 below is my emulation of their Figure 1. However, I’ve converted it to degrees of temperature change, rather than zettajoules, because it’s a unit we’re all familiar with.

So here’s the hot news. According to these folks, over the last sixty years, the ocean has warmed a little over a tenth of one measly degree … now you can understand why they put it in zettajoules—it’s far more alarming that way.

Next, I’m sorry, but the idea that we can measure the temperature of the top two kilometers of the ocean with an uncertainty of ±0.003°C (three-thousandths of one degree) is simply not believable. For a discussion of their uncertainty calculations, they refer us to an earlier paper here, which says:

When the global ocean is divided into a monthly 1°-by-1° grid, the monthly data coverage is <10% before 1960, <20% from 1960 to 2003, and <30% from 2004 to 2015 (see Materials and Methods for data information and Fig. 1). Coverage is still <30% during the Argo period for a 1°-by-1° grid because the original design specification of the Argo network was to achieve 3°-by-3° near-global coverage (42).

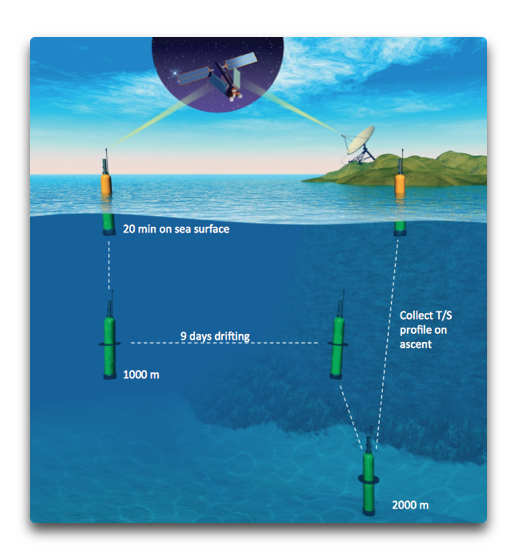

The “Argo” floating buoy system for measuring ocean temperatures was put into operation in 2005. It’s the most widespread and accurate source of ocean temperature data. The floats sleep for nine days down at 1,000 metres, and then wake up, sink down to 2,000 metres, float to the surface measuring temperature and salinity along the way, call home to report the data, and sink back down to 1,000 metres again. The cycle is shown below.

It’s a marvelous system, and there are currently just under 4,000 Argo floats actively measuring the ocean … but the ocean is huge beyond imagining, so despite the Argo floats, more than two-thirds of their global ocean gridded monthly data contains exactly zero observations.

And based on that scanty amount of data, which is missing two-thirds of the monthly temperature data from the surface down, we’re supposed to believe that they can measure the top 651,000,000,000,000,000 cubic metres of the ocean to within ±0.003°C … yeah, that’s totally legit.

Here’s one way to look at it. In general, if we increase the number of measurements we reduce the uncertainty of their average. But the reduction only goes by the square root of the number of measurements. This means that if we want to reduce our uncertainty by a factor of ten (one decimal place), say from ±0.03°C to ±0.003°C, we need a hundred times the number of measurements.

And this works in reverse as well. If we have an uncertainty of ±0.003°C and we only want an uncertainty of ±0.03°C, we can use one-hundredth of the number of measurements.

This means that IF we can measure the ocean temperature with an uncertainty of ±0.003°C with 4,000 Argo floats, we could measure it with ten times that uncertainty, ±0.03°C, using a hundredth of that number: forty floats.
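A minimal sketch of the square-root rule in Python. It assumes independent, identically distributed measurement errors, which is the idealised textbook case the argument above is testing; the function names are illustrative, not taken from the paper.

```python
import math

def scaled_uncertainty(sigma_ref, n_ref, n_new):
    """Standard error of a mean scales as 1/sqrt(N), assuming independent,
    identically distributed errors."""
    return sigma_ref * math.sqrt(n_ref / n_new)

def floats_needed(sigma_ref, n_ref, sigma_target):
    """Invert the 1/sqrt(N) rule: N_new = N_ref * (sigma_ref / sigma_target) ** 2."""
    return n_ref * (sigma_ref / sigma_target) ** 2

claimed = 0.003  # °C, the claimed uncertainty with roughly 4,000 floats
print(scaled_uncertainty(claimed, 4000, 40))  # about 0.03 °C with forty floats
print(floats_needed(claimed, 4000, 0.03))     # about 40 floats
```

If the errors are correlated (for example, neighbouring floats drifting in the same water mass), the real reduction is smaller than 1/sqrt(N), so this is the best case.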

Does anyone think that’s possible? Just forty Argo floats, that’s about one for each area the size of the United States … measuring the ocean temperature of that area down 2,000 metres to within plus or minus three-hundredths of one degree C? Really?

Heck, even with 4,000 floats, that’s one for each area the size of Portugal and two kilometers deep. And call me crazy, but I’m not seeing one thermometer in Portugal telling us a whole lot about the temperature of the entire country … and this is much more complex than just measuring the surface temperature, because the temperature varies vertically in an unpredictable manner as you go down into the ocean.
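A quick check of the float-density comparisons, assuming a global ocean surface area of about 361 million km² (a value not given in the post). For reference, the United States covers roughly 9.8 million km² and Portugal roughly 92,000 km².

```python
OCEAN_AREA_KM2 = 361e6  # assumed global ocean surface area, km²

def area_per_float_km2(n_floats):
    """Average ocean surface area each float has to represent."""
    return OCEAN_AREA_KM2 / n_floats

for n in (40, 4000):
    print(f"{n:>5} floats: about {area_per_float_km2(n):,.0f} km² each")
```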

Perhaps there are some process engineers out there who’ve been tasked with keeping a large water bath at some given temperature, and who can tell us how many thermometers it would take to measure the average bath temperature to ±0.03°C.

Let me close by saying that with a warming of a bit more than a tenth of a degree Celsius over sixty years it will take about five centuries to warm the upper ocean by one degree C …

Now to be conservative, we could note that the warming seems to have sped up since 1985. But even using that higher recent rate of warming, it will still take three centuries to warm the ocean by one degree Celsius.
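The five-century figure is a straight-line extrapolation. A sketch, assuming a total rise of 0.12 °C over 60 years as a reading of “a bit more than a tenth of a degree”. The post doesn’t give the post-1985 rate as a number, so the second function simply inverts the three-century figure to show the rate it implies.

```python
def years_to_warm(total_rise_c, years_observed, target_c=1.0):
    """Years to reach target_c of warming, extrapolating the observed rate linearly."""
    rate_c_per_year = total_rise_c / years_observed
    return target_c / rate_c_per_year

def implied_rate(years_to_one_degree):
    """Warming rate (°C per year) implied by a given time to warm by 1 °C."""
    return 1.0 / years_to_one_degree

print(round(years_to_warm(0.12, 60)))  # about 500 years at the full-record rate
print(implied_rate(300))               # about 0.0033 °C per year for "three centuries"
```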

So despite the alarmist study title about “RECORD-SETTING OCEAN WARMTH”, we can relax. Thermageddon isn’t around the corner.

Finally, to return to the theme of a “whole little”, I’ve written before about how, to me, the amazing thing about the climate is not how much it changes. What has always impressed me is the amazing stability of the climate despite the huge annual energy flows. In this case, the ocean absorbs about 2,015 zettajoules (one zettajoule is 10^21 joules) of energy per year. That’s an almost unimaginably immense amount of energy. By comparison, the entire human energy usage from all sources, fossil and nuclear and hydro and all the rest, is about 0.6 zettajoules per year …

And of course, the ocean loses almost exactly that much energy as well—if it didn’t, soon we’d either boil or freeze.

So how large is the imbalance between the energy entering and leaving the ocean? Well, over the period of record, the average annual change in ocean heat content per Cheng et al. is 5.5 zettajoules per year … which is about one-third of one percent (0.3%) of the energy entering and leaving the ocean. As I said … amazing stability.
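The percentages above are simple ratios of the post’s own figures. A short sketch:

```python
OCEAN_THROUGHPUT_ZJ_PER_YR = 2015.0  # energy absorbed (and lost) by the ocean each year, per the post
HUMAN_ENERGY_ZJ_PER_YR = 0.6         # all human energy use, per the post
OHC_CHANGE_ZJ_PER_YR = 5.5           # mean annual change in ocean heat content, per Cheng et al.

def percent_of(part, whole):
    return 100.0 * part / whole

print(f"OHC imbalance:    {percent_of(OHC_CHANGE_ZJ_PER_YR, OCEAN_THROUGHPUT_ZJ_PER_YR):.2f}% of throughput")
print(f"Human energy use: {percent_of(HUMAN_ENERGY_ZJ_PER_YR, OCEAN_THROUGHPUT_ZJ_PER_YR):.3f}% of throughput")
```

The imbalance comes out near 0.27%, which rounds to the 0.3% quoted above.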

And as a result, the curiously hubristic claim that such a trivial imbalance somehow perforce has to be due to human activities, rather than being a tenth of a percent change due to variations in cloud numbers or timing, or in El Nino frequency, or in the number of thunderstorms, or a tiny change in anything else in the immensely complex climate system, simply cannot be sustained.

Regards to everyone,

w.

h/t to Steve Milloy for giving me a preprint embargoed copy of the paper.

PS: As is my habit, I politely ask that when you comment you quote the exact words you are discussing. Misunderstanding is easy on the intarwebs, but by being specific we can avoid much of it.

[UPDATE] An alert reader in the comments pointed out that the Cheng annual data is here, and the monthly data is here. This, inter alia, is why I do love writing for the web.

This has given me the opportunity to demonstrate how accurate hand digitization actually is. Here’s a scatterplot of the Cheng actual data versus my hand digitized version.

The RMS error of the hand digitized version is 1.13 ZJ, and the mean error is 0.1 ZJ.
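The post doesn’t show how these two statistics were computed; the usual definitions are sketched below. The toy series is hypothetical and is not the Cheng data. The mean error measures systematic bias, while the RMS error measures the typical size of a miss.

```python
import math

def errors(actual, digitized):
    if len(actual) != len(digitized):
        raise ValueError("series must be the same length")
    return [d - a for a, d in zip(actual, digitized)]

def mean_error(actual, digitized):
    """Average signed difference: reveals any systematic bias in the digitizing."""
    e = errors(actual, digitized)
    return sum(e) / len(e)

def rms_error(actual, digitized):
    """Root-mean-square difference: the typical size of a miss, bias included."""
    e = errors(actual, digitized)
    return math.sqrt(sum(x * x for x in e) / len(e))

# Hypothetical toy values in ZJ, NOT the Cheng et al. data
actual = [10.0, 20.0, 30.0, 40.0]
digitized = [11.0, 19.0, 30.5, 39.5]
print(mean_error(actual, digitized), rms_error(actual, digitized))
```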

Hi Willis,

Could you please explain how you converted the energy change to a temperature change?

David, the conversion is possible because the “specific heat” of seawater is about 4 megajoules per tonne per degree C. In other words, it takes about 4 megajoules to warm a tonne of seawater by 1°C.

w.
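A sketch of that conversion in Python. The volume is the post’s figure for the top 2,000 metres and the specific heat is the 4 MJ per tonne per °C given in the reply above; the seawater density of 1.025 tonnes per cubic metre is an assumption, and the post doesn’t say exactly which constants were used for Figure 2.

```python
VOLUME_M3 = 6.51e17              # top 2,000 m of the ocean, from the post
DENSITY_T_PER_M3 = 1.025         # assumed seawater density, tonnes per cubic metre
SPECIFIC_HEAT_J_PER_T_C = 4e6    # about 4 MJ per tonne per °C

def zj_to_degrees(zettajoules):
    """Convert a heat-content change in ZJ into a mean temperature change in °C."""
    joules = zettajoules * 1e21
    mass_tonnes = VOLUME_M3 * DENSITY_T_PER_M3
    return joules / (mass_tonnes * SPECIFIC_HEAT_J_PER_T_C)

print(zj_to_degrees(1.0))   # about 0.00037 °C per ZJ
print(zj_to_degrees(1.13))  # the digitizing RMS error, expressed in °C
```

On these assumptions one zettajoule is roughly 0.0004 °C, so the 1.13 ZJ digitizing error is also about 0.0004 °C, and a change of a few hundred ZJ comes to a little over a tenth of a degree.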

“The RMS error of the hand digitized version is 1.13 ZJ, and the mean error is 0.1 ZJ.”

Hey Willis, can we have that in degrees please 😉

seriously, nice work.

“Not sure why they’re along for the ride, but it’s all good. ”

How do you expect them to get a hockey stick without having Mann on board to present incompatible data from a variety of data sources in the same colour and pretend it’s a trend?

Where would any search for missing heat be without the bona fides of Trenberth?

You knocked the Puck outa that post!

Trenberth and Mann have ‘prestige’ in the right circles to get the best coverage for the ‘oceans are boiling’ story. What with them now being acid too, count me out from having a paddle.

Then changes in salinity alter the specific heat by more than the paper’s claimed “accuracy” allows for.

12 cheeseburgers. Your comment is talking about 12 cheeseburgers ± a few pickles.

Maybe 12 pickle molecules.

All their claims are pickled, or they are all pixelated.

Excellent commentary on the error bars, yet certainly there are other factors that increase the absurdity of their claims…

The floats are not fixed or tethered to one location! They all move!

Finally, making a WAG that they are right: as the atmosphere warms far quicker, the difference between the ocean T and atmospheric T increases, so over time the ocean’s ability to counter the atmospheric warming increases.

Yeah, but how many sesame seeds per bun?

They should use scarier units, such as electron-volts. 1 ZJ = 6.24E39 eV! Now THAT’S some scary stuff!

zetta = 10²¹

J/eV = 1.6×10⁻¹⁹, so do 1/x inversion

eV/J = 6.25×10¹⁸

eV/ZJ = 6.25×10¹⁸ × 10²¹ → 6.25×10³⁹!

Yay.

Math.
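The same conversion using the exact SI value of the electron-volt (1.602176634×10⁻¹⁹ J) gives the 6.24×10³⁹ figure; the 6.25×10³⁹ comes from rounding to 1.6×10⁻¹⁹.

```python
EV_IN_JOULES = 1.602176634e-19  # exact SI definition of the electron-volt
JOULES_PER_ZJ = 1e21             # zetta = 10^21

def zj_to_ev(zettajoules):
    return zettajoules * JOULES_PER_ZJ / EV_IN_JOULES

print(f"1 ZJ = {zj_to_ev(1):.3e} eV")  # 6.242e+39
```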

Thanks, Goatguy. My go-to resource on conversions is Unit Juggler, q.v.

w.

Maths – FIFY

Excellent article. Would there be any other reason (other than leveraging alarmism) that the original paper would use zettajoules as a measurement? Were they trying to illustrate something else?

Will Mann & co. tell us ARGO is measuring zettajoules?

The hysteria surrounding this is unbelievable … here’s CNN:

Oceans are warming at the same rate as if five Hiroshima bombs were dropped in every second

People truly don’t realize how big the energy flows in the climate system actually are. Five Hiroshima bombs per second is the same as 0.6 watts per square metre … and downwelling energy at the surface is about half a kilowatt per square metre.

w.
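The five-bombs-per-second figure can be checked roughly. The yield (15 kilotons), the 4.184×10¹² J per kiloton of TNT convention and the Earth’s surface area (5.1×10¹⁴ m²) are assumptions, not figures from the post.

```python
J_PER_KILOTON_TNT = 4.184e12   # joules per kiloton of TNT (standard convention)
HIROSHIMA_YIELD_KT = 15.0      # assumed yield of the Hiroshima bomb
EARTH_SURFACE_M2 = 5.1e14      # assumed total surface area of the Earth

def bombs_per_second_to_w_m2(bombs_per_second, area_m2=EARTH_SURFACE_M2):
    """Convert a rate of Hiroshima-bomb equivalents into an average flux in W/m²."""
    watts = bombs_per_second * HIROSHIMA_YIELD_KT * J_PER_KILOTON_TNT
    return watts / area_m2

print(f"{bombs_per_second_to_w_m2(5):.2f} W/m²")  # about 0.62 over the whole Earth
```

Spreading the same power over the ocean area alone (about 3.6×10¹⁴ m²) gives closer to 0.9 W/m², so the result depends on which area the flux is averaged over.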

YES. And what is more, for every kilogram of water evaporated from the oceans some 694 watt-hours are removed from the surface and dissipated into the clouds and beyond to space. This is why the oceans never seem to get above 35°C, even after tens of thousands of years of these bombs being dropped every second.
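The 694 watt-hour figure follows from the latent heat of vaporisation. A sketch, assuming 2.5 MJ/kg (roughly the value near 0 °C; it is nearer 2.45 MJ/kg at 20 °C):

```python
J_PER_WATT_HOUR = 3600.0

def watt_hours_per_kg(latent_heat_j_per_kg):
    """Energy removed by evaporating 1 kg of water, in watt-hours."""
    return latent_heat_j_per_kg / J_PER_WATT_HOUR

print(watt_hours_per_kg(2.5e6))   # about 694 Wh near 0 °C
print(watt_hours_per_kg(2.45e6))  # about 681 Wh near 20 °C
```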

A watched kettle never boils it appears.

“A watched kettle never boils it appears.”

+1

Willis

Nice article. The British press are talking about a surge in warming, with oceanic apocalypse around the corner.

One of the problems is context, such as your pertinent comment about the huge amount of energy entering the ocean, of which the human contribution is actually minuscule, which somehow never makes it into the media.

The other problem is that most people have problems with numbers. It would be useful if numbers less than one could be expressed in words, for example “one hundredth of a degree centigrade” rather than the figure. The smaller the number, such as 0.001, the less likely it is that the average person will understand it.

Tonyb

TonyB

Because many people have problems with numbers, it behooves us to translate oddball metrics into things people can understand. Willis did exactly that.

Something else we can do is explain in short sentences that a claim for a detected change that is smaller than the uncertainty about that change has to be accompanied by a “certainty” number.

Mmm. It is not that we can’t calculate some average value from a host of instruments and readings. It is just that propagating the uncertainties by adding in quadrature to get the “quality” of the average (the mean) means getting a number with a pretty large uncertainty.

Until we know the number of readings and the number of instruments we can’t say exactly what the uncertainty is, but it is certainly more than 1.5 degrees C.

Suppose the claimed change is 0.1 degrees ±1.5, for example. We have to consider what certainty claim should accompany the 0.1. Suppose the errors in readings were Normally distributed (a reasonable assumption). Given a one-sigma uncertainty of ±1.5 C, we can say the true average value is going to be within 1.5 degrees 68% of the time (were we to repeat the experiment). To say it is within 0.1 degrees is quite possible, provided we admit there is, for example, only a 2% chance that this is true.

The public does not consider the implications of claims for small detected changes with a large uncertainty. If the public were all educated and sharp-eared consumers of information they would insist that the purveyors of calamity and disaster state the claims properly. Clearly, scientists are not going to do this unprovoked.

The reason I said “2%” is because there is 98% chance that the true answer lies outside the little range within which the “0.1 degrees” lies. That’s just how it is folks.
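A sketch of the two calculations this comment leans on: combining independent uncertainties in quadrature, and the probability that a Normal error falls inside a given window. The 1.5 °C sigma is the commenter’s hypothetical. The “2%” corresponds to reading the range as a window 0.1 °C wide (about 2.7%); a window of ±0.1 °C gives about 5.3%.

```python
import math

def combine_in_quadrature(*sigmas):
    """Combined standard uncertainty of independent error sources: sqrt of the sum of squares."""
    return math.sqrt(sum(s * s for s in sigmas))

def prob_within(half_width, sigma):
    """P(|error| < half_width) for a zero-mean Normal error with standard deviation sigma."""
    return math.erf(half_width / (sigma * math.sqrt(2.0)))

sigma = 1.5  # the commenter's hypothetical one-sigma uncertainty, °C
print(f"{prob_within(sigma, sigma):.3f}")  # 0.683, the familiar one-sigma 68%
print(f"{prob_within(0.05, sigma):.3f}")   # about 0.027 for a window 0.1 °C wide
print(f"{prob_within(0.1, sigma):.3f}")    # about 0.053 for a window of ±0.1 °C
print(combine_in_quadrature(0.3, 0.4))     # two illustrative sources, about 0.5
```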

The British press – notably the Guardian, chief doomsayer among them all!

Carbon 500

Is that the Manchester Grauniad?

cheers

Mike

Willis, since the very outset of the AGW hysteria, I’ve regarded the leftist media as the most culpable “dealer” in the whole supply chain of charlatans who contrive to benefit themselves from this perfidy:

1. the media is addicted to ‘click-bait’ stories;

2. dodgy academics know the media will publish every alarmist press release they put out;

3. the media knows that politicians will shamelessly jump aboard any issue that can garner them votes;

4. the circle of perfidy is completed when university administrators work on their academics to produce research that will pressure politicians and bureaucrats to direct grant funding to those projects that they can claim are “doing something”.

And so it goes on and on and on.

Hopefully, in the not too distant future, there will be another “Enlightenment” event that will end the current auto-da-fé inquisition being inflicted on climate data.

Willis,

Not the Hiroshima bombs again!

This old chestnut was discredited years ago, I thought.

I remember when this bogeyman was being pushed and it was claimed the earth was subjected to 5 Hiroshima bombs per second by global warming, someone pointed out that the Sun was bombarding the earth’s atmosphere with 1700 Hiroshima bombs a second.

Did another 5 really matter?

It does once it is translated into Manhattans of ice melted away in the Arctic!

Nicholas,

As distinct from the Antarctic gaining Manhattans of Polar Ice!

I prefer to measure in ham sandwiches. The oceans are warming by 85 million ham sandwiches a second. Don’t tell AOC or she will say this is unfair to the vegan fish.

Impossible to melt Manhattan islands worth of ice with 5 Hiroshima bombs.

Leaving your Manhattans and ice reference as the ice in a few shallow Manhattan drinks.

Try cutting back.

Interesting. The result of the von Schuckmann paper using Argo float data to 2012 was 0.62 W/m² (± around 0.1 W/m²). This is from memory. It might be 0.64 ± 0.09, but it’s close. Also, she did the same thing in 2010, when the float deployment wasn’t quite complete, and got 0.72 W/m².

Her reference to 0.003°C accuracy was for the precision of the thermometers on the Argo floats themselves, not the overall accuracy of the gridded result, which involves…models. As you can see from the above, her error is ~1/6 of the result, and that error translates directly into the temperature conversion because the relevant water mass and the specific heat capacity of water are known constants.

Von Schuckmann seemed to be the go-to authority around 2012. I haven’t followed OHC in any detail since then, though.

Note that “device resolution” is not at all the same concept as “device precision”, and neither is equivalent to what is known in the scientific world (as opposed to the fantasy world of climate science) as “accuracy”.

In fact these are all quite distinct concepts, not to mention different in how they help to try to determine exactly what has been measured and how anyone should have confidence that the result given is meaningful and properly expressed.

Metrology is an entire discipline in and of itself…as is statistical analysis.

Neither of these fields of study has ever been discovered to exist by any of the alarmists, let alone incorporated into the malarkey they (seemingly reflexively) spewed forth.

My frequent thoughts, exactly, but you are much more eloquent than I could ever hope to be.

In looking at Willis’ error bars in his digitised graph, you can eyeball the 2010 error bar and see that it’s roughly 1/6 of the full reading. This is in keeping with the von Schuckmann 2010 and 2012 ± errors as stated in my comment above.

So it also bears out my point that the 0.003°C is related to the precision of the Argo float thermometers and not the accuracy of the modelled sum of gridded areas. The precision of the Argo float thermometer would’ve been calibrated in the laboratory before deployment. This would explain such fine precision as being credible whereas 0.003°C is indeed not credible for the OHC or its ocean temperature derivative that Willis derived.

Any measurement, as well as any calculation derived from any measurement, can only legitimately be reported to the same number of significant figures as the least certain element of the calculation.

People that work in labs know how difficult it is to accurately measure even a small vessel of water to within one tenth of a degree.

The resolution of the device simply gives the maximum theoretical precision, and the calibration standard the maximum theoretical possible accuracy.

These guys think measuring random places in the ocean a few times a month lets them translate this theoretical value (if one wants to be generous and assume that the manufacturer’s supplied info is true without fail and in every case) of the sensor in the ARGO float, to the accuracy of their calculation for the heat content of the entire ocean and how this is changing over the years.

No explanation for how they have the same size error bar in the year 2000, prior to a single ARGO float being deployed, as they show in 2010, when the project had only recently reached an operational number of devices deployed.

And not much different (in absolute terms) than decades prior to that when virtually no measurement of deep water had ever been made, and electronic temperature sensors had not even been invented yet.

On top of that…it needs to be mentioned in every discussion, that all of the results they get are at several stages adjusted and “corrected”, and made to match the measured TOA energy imbalances between upwelling energy and incoming solar energy.

Just what is the “right” temp for the oceans? We are in an ice age so I would guess that we are running a little cold.

I would like things to be a little warmer as our governor here in NY is working hard to destroy our energy infrastructure and I’ll be freezing to death if the climate doesn’t warm a bit.

I’d like to know how many HBPS (Hiroshima bombs per second) are “going off” when the Fleet of Elon’s Teslas are charging/discharging every day.

Need some balancing perspective here.

How many Tesla cars have been sold in the US so far? 2012-2020 over 890,000. Compare to just Ford F-series pickup truck sales per year:

2019 1,000,000 or so…

2018 909,330

2017 896,764

2016 820,799

2015 780,354

2014 753,851

2013 763,402

2012 645,316

The Ford F-Series outsells all makes and models of EVs combined in the US by a wide margin.

Why is the market capitalization of Tesla greater than Ford and GM combined? Market expectations for Tesla must include not only huge growth in car/truck sales but also other things not yet identified. Or maybe Tesla stock is just overpriced.

“Oceans are warming at the same rate as if five Hiroshima bombs were dropped in every second”

Jan 2014 Skeptical Science:

“… in 2013 ocean warming rapidly escalated, rising to a rate in excess of 12 Hiroshima bombs per second”

https://skepticalscience.com/The-Oceans-Warmed-up-Sharply-in-2013-We-are-Going-to-Need-a-Bigger-Graph.html

Well that’s the first thing you said that’s not true. It is quite believable the hysteria surrounding this.

Oh we forgot !!!!

SUB-SEA VOLCANOES

Shhh! Don’t introduce nasty old facts……

All those Hiroshimas seem to be causing nuclear winter in BC.

Every second day there’s a fresh layer of fallout needing to be plowed and shovelled.

It’s just about time to see a travel agent about a trip to somewhere warmer. Maybe Montreal.

I just received the Hiroshima bomb analogy from the ‘scholarly’ Sigma Xi Smart Briefs, relying on a second hand source. Who in academia are teaching that second-hand sources are authorities? Thought that we were relying on climate scientists who rely on second-hand data?

https://edition.cnn.com/2020/01/13/world/climate-change-oceans-heat-intl/index.html

everybody signs on cause it’s publish or perish…and then when one of the others does a paper…the others jump on it too…

…only problem I have with Argo…each one floats around in the same glob of water

Argo in situ calibration experiments reveal measurement errors of about ±0.6 C.

Hadfield, et al., (2007), J. Geophys. Res., 112, C01009, doi:10.1029/2006JC003825

At WUWT a few years ago, usurbrain posted a very comprehensive criticism of the accuracy of argo floats.

The entire paper is grounded in false precision.

Just like the rest of consensus climatology. It’s all a continuing and massive scandal.

Thanks, Pat, always good to hear from you. I hadn’t seen that study. From the abstract:

Don’t know whether to laugh or cry …

w.

Which means they don’t know if the oceans have warmed or cooled, period.

The way I look at it, Jeff, given atmospheric temperatures are generally increasing, a process that is influenced minimally by increased levels of CO2, it seems safe to extrapolate that the upper levels of the oceans are warmer than before and thus injecting massive amounts of heat into the atmosphere.

Could be Chad. But the paper reviewed by Willis doesn’t demonstrate it.

I don’t think we really know how much “the Earth has warmed” in any given time frame.

Thanks, Willis. It’s always a pleasure to read your work. It’s never short of analytically sound and creative.

“Don’t know whether to laugh or cry …”

I am gonna stick with anger, personally…tempered with an overwhelming and deep-seated fatalism, and rounded over time by a raging river of humor.

“Argo in situ calibration experiments reveal measurement errors of about ±0.6 C.”

….that’s all of global warming

Exactly right, Latitude. And the land-station data are no better.

Except for the CRN data, which are of limited coverage (the US) and date only from 2003.

Yes, the USCRN is gold standard.

And the CONUS trend based on USCRN is 0.12 C/decade higher than that of ClimDiv (a very significant divergence).

I couldn’t find any reference to that figure in that paper?

I did find this “The temperatures in the Argo profiles are accurate to ± 0.002°C”

http://www.argo.ucsd.edu/FAQ.html

Believe me, I’m not a warmist, but where did you get that figure from??

From this and Figure 2, we conclude that the Argo float measured increase in global ocean temperature is 0.08C +/- 0.6C (face palm)

‘Science’ by Kevin Trenberth and Michael Mann……

I see, thanks for that!

I knew a university type that wrote a paper with a long title.

Then the title was changed, and a bit more, and the thing was published in a different journal. Repeat. Again, and again.

At an end-of-year party the grad students gave each of the faculty a “funny” sort of gift. One person was given rose-colored glasses.

The “change-the-title” person was given an expanded resume with each of his publication titles permutated in every manner possible.

This made for a large document.

I, of course, had nothing to do with any of this.

@Willis

http://159.226.119.60/cheng/images_files/OHC2000m_annual_timeseries.txt

Thanks, Krishna, appreciated. Curiously, that one goes back to 1940 but the one in their study starts in 1958 …

w.

@Willis

their study starts in 1958 …

So, not all data have an ARGO origin…

Ah, splicing. That’s where Dr. Mann shines.

I can hardly believe they can measure it either, but then we have to explain this regular increase. It should be a mess.

Exactly my thoughts. A surprisingly noiseless plot, even for 100% coverage of a uniform ocean. Surely an El Nino year affects the average temperature by a hundredth of a degree, let alone the average of the poor coverage, or the extremely poor pre-Argo coverage.

SST has a very small effect when you are covering down to 2km.

There are changes to deeper currents. The Humboldt Current is affected down to 600m. There is a half-degree effect at the surface, which would be bigger than the plotted change for the average down to 2000m. My comment is more about the effect on limited sampling, even if the actual average remained the same, e.g. a shift of warmer water (0.01°C) to where it is sampled.

Excellent!

I am always amazed they think numbers like 0.003C is an accurate variable range when the equipment used to gather data doesn’t even remotely reach that level of accuracy in the first place.

Interesting, because in one paper the accuracy requirement for the Argo floats is stated to be 0.005 C, which is larger than the 0.003 C they claim to measure year over year…

https://www.terrapub.co.jp/journals/JO/pdf/6002/60020253.pdf

I can totally see how they could convince themselves that, by using the power of averaging, they could produce such accuracies. The technique works well in some circumstances, in the presence of truly random noise. The problem is that nature usually does not throw truly random noise at us. Nature likes to throw red noise at us.

Red noise has decreasing energy as frequency increases. White (truly random) noise has equal energy at all frequencies. That means the energy of white noise is infinite, clearly impossible.

Because of the low frequencies of red noise, it tends to look like a slow drift. For that reason, averaging a signal containing red noise does not, at all, improve accuracy.

The problem with statistics is that most scientists do not understand the assumptions they are making when they apply statistics. I have a hint for them: the ocean is not remotely similar to a vat of Guinness.
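A small simulation of the red-noise point, using only Python’s standard library. A random walk stands in for red noise here, which is an assumption: it is the extreme (Brownian) case. Milder, stationary red noise does still average down, but it needs far more samples than white noise for the same reduction.

```python
import random
import statistics

def spread_of_means(n, trials, red, rng):
    """Standard deviation, across trials, of the average of n noisy readings.

    red=False: each reading is an independent N(0, 1) draw (white noise).
    red=True: readings follow a random walk, a running sum of N(0, 1) steps,
    used as a simple stand-in for low-frequency "drift" noise.
    """
    means = []
    for _ in range(trials):
        level, total = 0.0, 0.0
        for _ in range(n):
            step = rng.gauss(0.0, 1.0)
            level = level + step if red else step
            total += level
        means.append(total / n)
    return statistics.stdev(means)

rng = random.Random(42)  # fixed seed so the run is repeatable
for n in (10, 100, 1000):
    white_sd = spread_of_means(n, 300, red=False, rng=rng)
    red_sd = spread_of_means(n, 300, red=True, rng=rng)
    print(f"n={n:>4}  white: {white_sd:.3f}  red: {red_sd:.3f}")
```

The white-noise spread should fall by roughly a factor of ten between n=10 and n=1000, while the random-walk spread grows as more readings are averaged.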

Ha! Averaging works just fine when I’m grinding a crankshaft. I just use a wooden meter stick and measure 50K times... all the accuracy I want, great tolerances.

(Three econometricians) encounter a deer, and the first econometrician takes his shot and misses one meter to the left. Then the second takes his shot and misses one meter to the right, whereupon the third begins jumping up and down and calls out excitedly, “We got it! We got it!”

You must be the guy who did the last overhaul on my Jaguar E-Type.

You don’t mention if that was good or bad.

There is one overhaul item that is different from 99.9% of other cars: valve lash.

Of all the car servicing disasters I have heard, the worst was for the Jag E-Type. It seems that there are overpowering temptations to take shortcuts that don’t turn out well.

I’m guessing Ron isn’t a satisfied customer.

The power of the Central Limit Theorem!!

Even if it was white noise, that would only matter if they were repeatedly measuring the same piece of water. Measuring a second piece of water, hundreds of miles away, tells you nothing new about the piece of water right in front of you.

Hi Willis! Why so many names on the paper? They’re in it to get a paper count: it’s like beach-bums showing off their pecs: it’s a confirmation- in their eyes- that they are the best. I have a thing, never believe the 5-star on Amazon.

It is an LPU (Least Publishable Unit) exemplar. i.e. a confected, sexed up document aimed at a) publicity, b) some rationale for funding and c) free sexed up content bribes to the backside sniffers in the msm.

@Willis PS

monthly

http://159.226.119.60/cheng/images_files/OHC2000m_monthly_timeseries.txt

Outstanding, thanks.

w.

Willis,

Go to Le Quéré et al. 2018, the annual ‘bible’ paper on the Global Carbon Budget, which I have been studying, particularly to gauge the error margin for the oceans.

There are 76 Co-authors (!) and it must be the holy grail for mainstream climate scientists.

Thank you Willis. Great conversion to reality mode.

Highly related, also, thanks Anthony et al., for getting the ENSO meter back on the sidebar.

Brilliant. Thank you. I saw this splashed all over the front page of the Grauniad (no, I didn’t buy it) and found it hard to tie up with the recent peer-reviewed publications reproduced over at Pierre Gosselin’s brilliant site (No Tricks Zone). You have clarified the situation.

http://www.woodfortrees.org/plot/hadsst3gl/from:1964/plot/hadsst3nh/from:1964/plot/hadsst3sh/from:1964/plot/hadsst3gl/from:1964/trend

Willis, thanks for the post. I agree with your comments about their report. There are some of us who think the reason for the difference between NH SST and SH SST is pollution.

The reviewers need to be disqualified from any future vetting.

Willis thanks for another lesson!

The magnitude of our oceans still challenges my little brain.

Mac

I hope I’m alive when the world wakes up to the ginormous scientific fraud that is being perpetrated by Michael “Piltdown” Mann et al.

Thanks for putting this massively hyped paper into context. It’s all over the broadsheets in the U.K.

Perhaps you could clarify one thing that bothers me on OHC? The common claim in the press releases for papers like this is that “90% of warming due to increases in GHG is in the oceans”, yet the oceans only represent ca. 70% of the earth’s surface.

At the equator this rises to ca. 79%, and the DLW, due to higher air temps, will be greater there than at other latitudes. Is that sufficient to support the ‘90%’ claim, or is the figure simply alarmist padding?

James, we don’t actually know how much “warming due to increases in GHG” there is. It might actually be zero. The claim that 90% of it is “in the oceans” is simply not supportable.

w.

They say 90%; that is Trenberth’s “missing heat.”

They “know” the heat is there because their (failed) models say it must be. They cannot find it in the surface record, so they hide it in the deep ocean where no one can check their work.

In reality the missing heat is in their heads. That is why they keep exploding.

If 90% of the heat is in the oceans, and the result is they have warmed by a tenth of a degree in sixty years, can we call it a day and cancel the ‘climate crisis’?

Seems reasonable to me.

Obviously you haven’t heard… “60% of the time, it works every time….”

90% of statistics are wrong, and the other half are mostly just made up.

So here’s the hot news. According to these folks, over the last sixty years, the ocean has warmed a little over a tenth of one measly degree.

I know you’re not trying to be funny, but worrying about a + 0.12 K change since 1960 kinda makes a joke of worrying about the “hidden” warming.

“I know you’re not trying to be funny, but worrying about a + 0.12 K change since 1960 kinda makes a joke of worrying about the “hidden” warming.”

Temperature isn’t heat content.

Mass and specific heat come into it.

Try working out what that 0.12 K delta would look like if it were applied to the atmosphere.

You’ll need the fact that the oceans have a mass 250x that of the atmosphere and that the specific heat of water is 4x that of air.
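The arithmetic the comment asks for is a one-line energy balance. Here is a minimal sketch using only the commenter's round ratios (ocean mass 250x the atmosphere's, water's specific heat 4x air's). Those ratios describe the whole ocean, while the post's 0.12 K refers to the upper 2000 m, so treat the result as a rough illustration rather than a precise figure.

```python
# Energy balance: m_ocean * c_water * dT_ocean = m_air * c_air * dT_air,
# so dT_air = dT_ocean * (m_ocean / m_air) * (c_water / c_air).
# Both ratios are the round figures from the comment, not precise values.
OCEAN_TO_AIR_MASS_RATIO = 250.0
WATER_TO_AIR_SPECIFIC_HEAT_RATIO = 4.0


def atmospheric_equivalent(delta_t_ocean_k: float,
                           mass_ratio: float = OCEAN_TO_AIR_MASS_RATIO,
                           heat_ratio: float = WATER_TO_AIR_SPECIFIC_HEAT_RATIO) -> float:
    """Temperature change (K) the atmosphere would see from the same energy."""
    return delta_t_ocean_k * mass_ratio * heat_ratio


print(f"0.12 K in the ocean ~ {atmospheric_equivalent(0.12):.0f} K in the air")
# prints: 0.12 K in the ocean ~ 120 K in the air
```

The same calculation also shows the flip side raised further down the thread: because the ocean's heat capacity is so large, a big energy change corresponds to only a small temperature change in the water itself.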

I was thinking that one could make quite a bit of money by betting people that they could not tell which bowl of water sitting in front of them was warmer… iffen the difference was even 1°, let alone one tenth of that amount.

How many people could tell when the room they were sitting in had warmed by a tenth of a degree, or even one degree?

Typically a room has to change by about that amount (~1°C) before a wall thermostat kicks on or off, simply to avoid short cycling of the air conditioning or heating equipment being regulated.

Put another way… even in a room that is climate-controlled by a properly operating thermostat, the air temperature will vary by at least one or two degrees F (roughly 1°C) between when the thing kicks on and when it kicks off.
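The deadband idea above can be sketched as a toy simulation. All numbers here (a 20°C setpoint, the deadband widths, and the per-step heating and cooling rates) are hypothetical; the point is only that a wider deadband means fewer on/off switches and a correspondingly wider temperature swing.

```python
def simulate_thermostat(setpoint_c=20.0, deadband_c=1.0, start_c=19.0,
                        heat_rate=0.1, loss_rate=0.05, steps=200):
    """Toy on/off thermostat with hysteresis (a deadband).

    Returns (temperatures, number_of_switches). All parameters are
    hypothetical illustration values, not measurements of any real room.
    """
    low = setpoint_c - deadband_c / 2   # heating switches on at or below this
    high = setpoint_c + deadband_c / 2  # heating switches off at or above this
    temp, heater_on, switches = start_c, False, 0
    temps = []
    for _ in range(steps):
        if not heater_on and temp <= low:
            heater_on, switches = True, switches + 1
        elif heater_on and temp >= high:
            heater_on, switches = False, switches + 1
        # Heater on: room gains heat_rate per step; off: loses loss_rate per step.
        temp += heat_rate if heater_on else -loss_rate
        temps.append(temp)
    return temps, switches


for band in (0.2, 1.0):
    temps, switches = simulate_thermostat(deadband_c=band)
    settled = temps[50:]  # skip the initial warm-up
    print(f"deadband {band} C: swing {max(settled) - min(settled):.2f} C, "
          f"{switches} switches")
```

Shrinking the deadband keeps the room closer to the setpoint but makes the equipment switch far more often, which is the short cycling the comment mentions.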

This is the whole reason for reporting ocean warming in the ridiculous unit of the zettajoule to begin with, and why published MSM accounts of such a study are then helpfully translated into the readily relatable (to the average person in one’s daily life) unit known as one Hiroshima.

They could relate in terms of units such as “the amount of energy delivered by the Sun to the Earth in a day”…but that would make the number appear as meaninglessly tiny as it really is.
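For scale, here's a back-of-the-envelope sketch of both units. The constants are assumed round values, not from the paper: a solar constant of about 1361 W/m², Earth's mean radius of 6371 km, and a Hiroshima yield of about 15 kilotons of TNT at 4.184e12 J per kiloton. On those assumptions, one day of sunlight intercepted by the Earth comes to roughly 15 ZJ. As an example, it converts the 228 ZJ anomaly quoted from the paper further down the thread.

```python
import math

# Assumed round physical constants (not taken from the paper):
SOLAR_CONSTANT_W_M2 = 1361.0   # total solar irradiance at top of atmosphere
EARTH_RADIUS_M = 6.371e6       # mean Earth radius
SECONDS_PER_DAY = 86_400
HIROSHIMA_J = 15 * 4.184e12    # ~15 kilotons of TNT, 4.184e12 J per kiloton
ZJ = 1e21                      # one zettajoule in joules

# Sunlight intercepted by Earth's cross-section (pi * R^2) in one day.
sun_day_j = SOLAR_CONSTANT_W_M2 * math.pi * EARTH_RADIUS_M ** 2 * SECONDS_PER_DAY


def describe(energy_zj: float) -> str:
    """Express an energy in ZJ as Hiroshimas and as days of sunlight."""
    energy_j = energy_zj * ZJ
    return (f"{energy_zj} ZJ = {energy_j / HIROSHIMA_J:.2e} Hiroshimas "
            f"= {energy_j / sun_day_j:.1f} days of sunlight")


print(f"One day of sunlight is about {sun_day_j / ZJ:.1f} ZJ")
print(describe(228))
```

On these assumptions, 228 ZJ comes to a few billion Hiroshimas but only about two weeks of the sunlight the Earth intercepts.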

Try working out what that 0.12 K delta would look like if it were applied to the atmosphere.

I don’t care whether the 0.12 K delta occurred in a million gigatons of mass or in a flea; the temperature rose by, guess what, 0.12 K. You were trying to make some kind of “point”, and you blew it.

And to add, all your “point” demonstrates is the obvious — the oceans have a huge thermal inertia and can absorb/release large amounts of energy with only small temperature changes. That’s a very good thing because it greatly decreases temp changes due to varying energy inputs.

Thanks to Krishna, I can now demonstrate just how accurate my hand digitization of the data graph actually was. Here’s the comparison …

RMS error of the digitizing is 1.1 ZJ.
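For anyone wanting to run the same check, here's a minimal sketch of how an RMS error between a digitized series and the published one is typically computed. It assumes the two series are already aligned point by point (same years). The numbers are made up for illustration, not the paper's data or the digitized values.

```python
import math


def rms_error(digitized, published):
    """Root-mean-square difference between two aligned, equal-length series."""
    if len(digitized) != len(published) or not digitized:
        raise ValueError("series must be non-empty and the same length")
    squared = [(d - p) ** 2 for d, p in zip(digitized, published)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical values for illustration only (ZJ):
digitized = [-20.0, -5.5, 10.2, 30.8]
published = [-21.0, -5.0, 11.0, 30.0]
print(f"RMS error: {rms_error(digitized, published):.2f} ZJ")
```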

w.

Very nice work, Willis!

Do you use WebPlotDigitizer?

Or some other tool?

Or do you just pull up the image in MS Paint or similar, and enter the pixel values in a spreadsheet, and then convert them yourself?
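Whatever the tool (GraphClick, WebPlotDigitizer or a spreadsheet), the core step for a linear axis is the same: calibrate two known tick marks, then map every clicked pixel linearly between them. A minimal sketch; the pixel positions and tick values below are hypothetical, and this is not any particular tool's code.

```python
def make_axis(pixel_a, value_a, pixel_b, value_b):
    """Return a function mapping a pixel coordinate to a data value.

    Assumes a linear axis calibrated from two reference ticks
    (pixel_a -> value_a, pixel_b -> value_b).
    """
    scale = (value_b - value_a) / (pixel_b - pixel_a)
    return lambda pixel: value_a + (pixel - pixel_a) * scale


# Hypothetical calibration: x pixel 100 is 1960, x pixel 700 is 2020;
# y pixel 500 is -200 ZJ, y pixel 100 is +200 ZJ (image y grows downward).
to_year = make_axis(100, 1960, 700, 2020)
to_zj = make_axis(500, -200, 100, 200)

print(to_year(400), to_zj(300))
```

Because the image's y axis runs downward, the y calibration simply gets a negative scale; no special handling is needed.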

I’m running a Mac, and I use “GraphClick” for digitizing.

w.

You’re right Willis it’s nonsense.

The fact that the atmosphere cannot heat the ocean deserves a mention, in my opinion. Heat flows from the ocean to the atmosphere and is then lost to space, never the other way round.

It’s the sun, stupid 😀 😀

As always said 😀

Good comment, John.

“the atmosphere cannot heat the ocean …”

True; however, it can, and does, slow its cooling.

Just like it does over land.

It’s called the GHE, caused by GHGs.

But we don’t know if any warming or cooling is human caused. Their margin of error means they don’t even know if the oceans are warming or cooling.

Jeff Alberts

Their margin of error for the data shown in the first chart in Willis’s post (their Fig. 1) is stated as “… 228 ± 9 ZJ above the 1981–2010 average.” Their best estimate far exceeds the error margin.
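To put that margin in the same units as the head post, here's a rough conversion from ZJ to a temperature change of the upper 2000 m. Every physical constant is an assumed round value, not taken from the paper: ocean area 3.6e14 m², seawater density 1025 kg/m³ and specific heat 3990 J/(kg·K). It also treats the whole ocean as at least 2000 m deep, which overstates the layer's mass and so somewhat understates the temperature change.

```python
# Assumed round values (not taken from the paper):
OCEAN_AREA_M2 = 3.6e14        # approximate global ocean surface area
LAYER_DEPTH_M = 2000.0        # the paper's upper-2000 m layer
SEAWATER_DENSITY = 1025.0     # kg/m^3
SEAWATER_CP = 3990.0          # J/(kg K), approximate specific heat of seawater

# Crude layer mass: treats all of the ocean as at least 2000 m deep,
# which overstates the mass (shelves and shallow seas are shallower).
layer_mass_kg = OCEAN_AREA_M2 * LAYER_DEPTH_M * SEAWATER_DENSITY


def zj_to_kelvin(energy_zj):
    """Mean temperature change of the layer for a given energy in ZJ."""
    return energy_zj * 1e21 / (layer_mass_kg * SEAWATER_CP)


anomaly_zj, margin_zj = 228.0, 9.0   # figures quoted from the paper above
print(f"{zj_to_kelvin(anomaly_zj):.3f} K +/- {zj_to_kelvin(margin_zj):.3f} K")
print(f"estimate / margin = {anomaly_zj / margin_zj:.1f}")
```

On these assumptions the anomaly works out to roughly 0.08 K, with the ±9 ZJ margin about one twenty-fifth of the estimate.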

“Their margin of error means they don’t even know if the oceans are warming or cooling.”

I think this is the most important point to come out of this article. The alarmists are making exaggerated claims based on what? Based on a margin of error in their measurements of 0.6C!

See Connolly and Connolly radiosonde data. Ain’t no greenhouse effect bro.

I don’t believe that heat flow from the atmosphere to the oceans can be ruled out, but the issue here is the vast difference in the thermal capacity of air and water. If there was a situation where the atmosphere was warmer than the oceans, so little heat would flow that its effect on the ocean temperature would be very small.

Why not?

Uncertainty is one of those concepts that alarmists can’t understand, for if they did, they would know with absolute certainty that they can only be wrong. The most obvious example is calling an ECS with +/- 50% uncertainty ‘settled’ where even the lower bound is larger than COE can reasonably support.

Great article, as usual! I look forward to your down-to-earth explanations and analysis for those of us who have some science and/or engineering background, but are not experts in the field of weather or climate and have had reservations about the “certainty” some have on how the complex systems of our planet work.

I was fascinated with the whole Argo project when it started up years ago, but noticed that when its data didn’t immediately confirm rapid “global warming” it dropped out of the news. Thanks again for giving us some perspective on the actual magnitude of trends in our ocean systems.

Willis,

At their provided link:

http://159.226.119.60/cheng/

I did find this data in .txt tabular form here:

http://159.226.119.60/cheng/images_files/OHC2000m_annual_timeseries.txt

http://159.226.119.60/cheng/images_files/OHC2000m_monthly_timeseries.txt
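If you want to pull those files into a script, here's a minimal parsing sketch. The files' layout hasn't been verified here, so it assumes whitespace-separated columns with time in the first column and the OHC value in the second, and it simply skips any line that doesn't start with two numbers. If the files are laid out differently, the column indices will need changing.

```python
import urllib.request


def parse_timeseries(text):
    """Parse whitespace-separated rows into (time, value) pairs.

    Assumption: column 1 is the time, column 2 the OHC value; any line
    whose first two fields are not numbers (headers, comments) is skipped.
    """
    rows = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 2:
            continue
        try:
            rows.append((float(fields[0]), float(fields[1])))
        except ValueError:
            continue  # header or comment line
    return rows


def fetch_timeseries(url):
    """Download a text file and parse it with parse_timeseries."""
    with urllib.request.urlopen(url, timeout=30) as resp:
        return parse_timeseries(resp.read().decode("utf-8", errors="replace"))
```

For example, `fetch_timeseries("http://159.226.119.60/cheng/images_files/OHC2000m_annual_timeseries.txt")` would download and parse the annual file, provided the server is still reachable and the layout matches the assumption above.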

============================

My comments:

Their paper states, “The OHC values (for the upper 2000 m) were obtained from the Institute of Atmospheric Physics (IAP) ocean analysis (see “Data and methods” section, below), which uses a relatively new method to treat data sparseness ….”

IOW, they made up a lot of fake data to infill as they liked.

To wit, from their Methods: “Model simulations were used to guide the gap-filling method from point measurements to the grid, while sampling error was estimated by sub-sampling the Argo data at the locations of the earlier observations (a full description of the method can be found in Cheng et al., 2017).”
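The quoted idea of estimating sampling error by sub-sampling a well-observed period at the locations of earlier observations can be illustrated with a toy example. This is a minimal sketch on synthetic random data, not the IAP method: real sub-sampling uses the actual historical observation locations and gridded fields, whereas here the "locations" are random grid cells and the "field" is Gaussian noise.

```python
import random
import statistics


def subsampling_error(field, location_sets):
    """RMS error of a sparse-sample mean relative to the full-field mean.

    field: values on a dense grid, standing in for well-sampled (Argo-era) data.
    location_sets: lists of grid indices, standing in for where earlier,
    sparser observations existed.
    """
    full_mean = statistics.fmean(field)
    errors = [statistics.fmean(field[i] for i in locs) - full_mean
              for locs in location_sets]
    return statistics.fmean(e * e for e in errors) ** 0.5


# Synthetic example: a random "ocean" of 1000 cells, sampled 200 times
# at 50 randomly chosen cells each time.
random.seed(0)
field = [random.gauss(0.0, 1.0) for _ in range(1000)]
sparse_sets = [random.sample(range(1000), 50) for _ in range(200)]
print(f"toy sampling error: {subsampling_error(field, sparse_sets):.3f}")
```

The sparser the sampling pattern, the larger the resulting error; how well this carries over to the real ocean depends on how representative the sub-sampled period is of earlier decades.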

Mann and Trenberth likely were recruited and brought on board during manuscript drafting by Dr. Fasullo. Mann was listed as senior author, but that was just more pandering to help get the paper published in a high-impact Western journal. They might as well have put Chinese President Xi as senior author.

What you have to love about these lying perps is the way they ended the manuscript:

“It is important to note that ocean warming will continue even if the global mean surface air temperature can be stabilized at or below 2°C (the key policy target of the Paris Agreement) in the 21st century (Cheng et al., 2019a; IPCC, 2019), due to the long-term commitment of ocean changes driven by GHGs. Here, the term “commitment” means that the ocean (and some other components in the Earth system, such as the large ice sheets) are slow to respond and equilibrate, and will continue to change even after radiative forcing stabilizes (Abram et al., 2019). However, the rates and magnitudes of ocean warming and the associated risks will be smaller with lower GHG emissions (Cheng et al., 2019a; IPCC, 2019). Hence, the rate of increase can be reduced by appropriate human actions that lead to rapid reductions in GHG emissions (Cheng et al., 2019a; IPCC, 2019), thereby reducing the risks to humans and other life on Earth.”

What a stinkin’, heapin’ load of dog feces. “Reducing risks to humans and other life?” They might as well ask for offerings to volcano gods and conjure up voodoo incantations and spells. They have to reveal an agenda and appeal to the IPCC to infill their conclusions with junk science claims.

Maybe someone should point out to Mann, Trenberth, and Fasullo that this Chinese-origin paper (sponsored by the “Chinese Academy of Sciences”, the “State Key Laboratory of Satellite Ocean Environment Dynamics, Second Institute of Oceanography, Hangzhou”, and the “Ministry of Natural Resources of China, Beijing”) is from the largest global anthro-CO2 emitter, a nation with no reduction INDCs under Paris COP21, which makes this a laughable piece of propaganda: “reduced by appropriate human actions that lead to rapid reductions in GHG emissions.” The Chinese have no intention of making “rapid reductions”, and those 3 TDS-afflicted stooges know that.

These 3 Stooges (Mann, Trenberth, Fasullo) just let themselves be the useful idiots for the Chinese Communist Party and their economic war on the West and the UN’s dedicated drive for global socialism.

Thanks, Joel. Formatting fixed.

w.

Voodoo incantations are more reliable than the fantasy of measuring temperature to three decimal places of accuracy when the measuring device only measures two decimal places. At least voodoo might be correct occasionally.

Here, the term “commitment” means that the ocean (and some other components in the Earth system, such as the large ice sheets) are slow to respond and equilibrate, and will continue to change even after radiative forcing stabilizes (Abram et al., 2019).

Without any “forcing” (i.e. radiative imbalance) the massive heat reservoir of the oceans will continue to warm.

Wow, they have officially abandoned one of the axioms of physics: the conservation of energy.

Now that’s what I call “missing heat” !!

greg

Amazing that they’ve fallen for the naïve error of believing in thermal inertia, in the same way that a heavy rolling object has kinetic inertia. There is no thermal inertia. Heat input stops, heating stops. Thermal “inertia” is used as a metaphor for massive heat capacity of oceans, but it indeed does not exist.

Now they’re on record as believing in magic.

Useful (well compensated) idiots?

Damn you and your facts Willis, a whole lot of time and money went into making that graph look scary.

Yes, conversion to reality mode is much appreciated.

I’m quite sure CNN and LAT will be telling us what a zettajoule is any time now. Not.

The fact that they go back 60 years to get such a small result is indicative of the problem with Ocean Heat Content. Before ARGO the data was laughably unreliable: canvas buckets, engine cooling water intakes at depths anywhere from two to ten meters, and almost nothing from the entire Southern Hemisphere, where most of the ocean is found. ARGO data itself has been adjusted as well.

Just Bad Science…

“Just Bad Science…”

The data isn’t “bad”. It is just data. Bad is the use of it without keeping the limitations in mind.

Thanks for the expose’, Willis!

RE: “….Kevin Trenberth and Michael Mann. Not sure why they’re along for the ride…”

There seems to be a persistent correlation between these ‘authors’ and deliberate attempts to mislead and scare people into participation in their zeta-deceits whilst masking their +/-0.001 truth content.