Guest post by Kevin Kilty

Introduction

This short essay was prompted by a recent article regarding improvements to uncertainty in a global mean temperature estimate.[1] However, much bandwidth has been spilt lately on the related topic of error propagation [2, 3, 4], and so a small portion of this essay, in its concluding remarks, is devoted to it as well.

Manufacturing engineers work to improve product design, make products easier to manufacture, lower costs, and maintain or improve product quality. Among the tools they use to accomplish this, many are statistical in nature, and these have pertinence to the topic of the surface temperature record and its interpretation in the light of climate model projections. One tool I plan to present here is statistical process control (SPC).[5]

1. Ever Present Variation

Manufactured items cannot be made identically. Even in mass production under the control of machines, there are influences such as wear of the machine, variations in settings, skill of operators, incoming material property variations and so forth, which lead to variation in a final product. All precision manufacturing begins with an examination of two things. First, there is the customer specification. This includes all the important product parameters and the limits that these parameters must stay within. Functionality of a product suffers if these quality measures do not stay within limits. Second is the process capability. Any manufacturer worth the title will know how the process used to make products for a customer varies when it is in control. This leads the manufacturer to an estimate of how many products in a run will be outside tolerance, how many might be reworked and so forth. It is not possible to estimate costs and profits without knowing capability.

2. Process Capability and Control

If a manufacturer’s process can produce routinely within the specifications, perhaps only one in a hundred items, or one in a thousand or three in a million (six sigma) outside of it, whatever is cost effective and achievable, then the process is capable. If it proves not capable one might ask what cost in new machinery would make it capable, and if the answer is not cost effective one might pass on the manufacturing opportunity or have someone more capable handle it. When a process is in control, it is operating as well as is humanly possible considering one’s capability. A process in control is an important concept to our discussion.
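To put numbers on phrases like "one in a hundred" or "three in a million", here is a minimal Python sketch. It assumes the measured attribute is normally distributed, which nothing above guarantees, and the function names and example limits are illustrative only, not taken from the handbook.

```python
import math


def fraction_out_of_spec(mean, sigma, lower_spec, upper_spec):
    """Expected fraction of items outside [lower_spec, upper_spec].

    Assumption (not from the essay): output is normally distributed
    with the given mean and standard deviation.
    """
    def normal_cdf(x):
        return 0.5 * (1.0 + math.erf((x - mean) / (sigma * math.sqrt(2.0))))

    below = normal_cdf(lower_spec)
    above = 1.0 - normal_cdf(upper_spec)
    return below + above


def capability_index(mean, sigma, lower_spec, upper_spec):
    """Cpk: distance from the mean to the nearer spec limit, in units of 3 sigma."""
    return min(upper_spec - mean, mean - lower_spec) / (3.0 * sigma)
```

For a centered process with specification limits three standard deviations either side of the mean, `fraction_out_of_spec(0, 1, -3, 3)` gives roughly 0.27%. The familiar six-sigma figure of about 3.4 per million corresponds, by convention, to limits at six standard deviations with the mean allowed to drift by 1.5 standard deviations, i.e. `fraction_out_of_spec(1.5, 1, -6, 6)`.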

3. Statistical Process Control

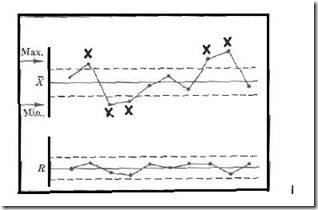

Statistical process control (SPC) is mainly a process of charting and interpreting measurements in real time. Various SPC charts become a tool through which an operator, potentially someone of modest training, can monitor a process and adjust it or stop it if indications are that it is drifting out of control. There are many different possible control charts, but a common one is the X-bar chart, so named because the parameter being monitored and recorded on the chart is the mean attribute of a sample of manufactured items. Often it is paired with an R chart, which shows the range within the same measurements. R is often used in manufacturing because it is capable of showing the same information about variation as, say, standard deviation, but with much less calculation. Let’s discuss the X-bar chart. Figure 1 shows an example of a paired set of charts.[5]

Figure 1. A pair of control charts for X-bar and range. The X-bar chart shows measurements exceeding control limits above and below, while the range shows no increase in variability. We conclude an operator is unnecessarily changing machine settings. Source [5].

The chart begins with its construction. First, there is a specified target value for the process. A process is then designed to achieve this target. Then some number of measurements are taken from this process while it is known to be operating as well as is humanly possible – i.e. in control. Measurements are gathered into consecutive groups of fixed number, N (five and seven are common); the mean and range of each group are calculated, and from these the mean of the group means and the average range. Dead center horizontally across the chart is the target value; horizontal lines are then placed above and below it at some multiple of the process variation, measured by range or standard deviation. These are known as the process control limits (upper and lower control limits, UCL and LCL respectively).
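The construction just described can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it uses the usual textbook convention of averaging the within-group ranges and scaling by the tabulated Shewhart constants A2, D3 and D4 (given here only for N = 5 and N = 7), and the function name and return layout are hypothetical.

```python
# Tabulated Shewhart chart constants for subgroup sizes 5 and 7.
CHART_CONSTANTS = {
    5: {"A2": 0.577, "D3": 0.0, "D4": 2.114},
    7: {"A2": 0.419, "D3": 0.076, "D4": 1.924},
}


def control_limits(subgroups, target=None):
    """Return (LCL, center, UCL) for the X-bar chart and for the R chart.

    subgroups: list of equal-length lists of in-control measurements.
    target:    optional specified target; if omitted, the mean of the
               group means is used as the center line.
    """
    n = len(subgroups[0])
    if any(len(group) != n for group in subgroups):
        raise ValueError("all subgroups must have the same size")
    constants = CHART_CONSTANTS[n]  # KeyError for sizes not tabulated above

    means = [sum(group) / n for group in subgroups]
    ranges = [max(group) - min(group) for group in subgroups]
    grand_mean = sum(means) / len(means)
    mean_range = sum(ranges) / len(ranges)

    center = grand_mean if target is None else target
    return {
        "xbar": (center - constants["A2"] * mean_range,
                 center,
                 center + constants["A2"] * mean_range),
        "range": (constants["D3"] * mean_range,
                  mean_range,
                  constants["D4"] * mean_range),
    }
```

Using the range rather than the standard deviation keeps the arithmetic simple enough to do by hand on the shop floor, which is the reason given above for its popularity.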

At this point one uses the chart to monitor an ongoing process. Think of charting as recording a continuing sequence of experiments. On a schedule our fixed number of manufactured items (N) are removed from production. The mean and range of some important attribute are calculated for this sample and the results plotted on their respective charts. The null hypothesis in each experiment is that the process continues to run just as it did during the chart creation period. As work proceeds the sequence of measured and plotted samples shows either a pattern that is expected of a process in control, or a pattern of unexpected variations which suggests a process with problems. Observation by an operator of an unlikely pattern, such as cycles, drift across the chart, too many points plotting outside control limits, or hugging one side of the chart, is evidence of a process out of control. An out of control process can be stopped temporarily while the process engineer or maintenance find and rectify the problem. One thing worth emphasizing is that SPC is a highly successful tool for handling variation in processes and identifying problems.
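Two of the unlikely patterns mentioned above, points outside the control limits and hugging one side of the chart, are easy to test mechanically. The sketch below is a simplified illustration, not the full set of handbook rules; the function names are hypothetical, and the default run length of eight consecutive points on one side of the center line follows the commonly quoted Western Electric rule.

```python
def points_outside(values, lcl, ucl):
    """Indices of plotted points beyond either control limit."""
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


def longest_one_sided_run(values, center):
    """Length of the longest run of consecutive points on one side of center."""
    longest = 0
    current = 0
    previous_side = 0
    for v in values:
        side = (v > center) - (v < center)  # +1 above, -1 below, 0 on the line
        if side != 0 and side == previous_side:
            current += 1
        elif side != 0:
            current = 1
        else:
            current = 0
        previous_side = side
        longest = max(longest, current)
    return longest


def out_of_control_signals(values, lcl, center, ucl, run_length=8):
    """List the simple signals present in a sequence of plotted sample means."""
    signals = []
    if points_outside(values, lcl, ucl):
        signals.append("point beyond control limits")
    if longest_one_sided_run(values, center) >= run_length:
        signals.append("run on one side of center line")
    return signals
```

An empty list means only that these two checks found nothing; cycles and slow drift need their own tests or an operator's eye.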

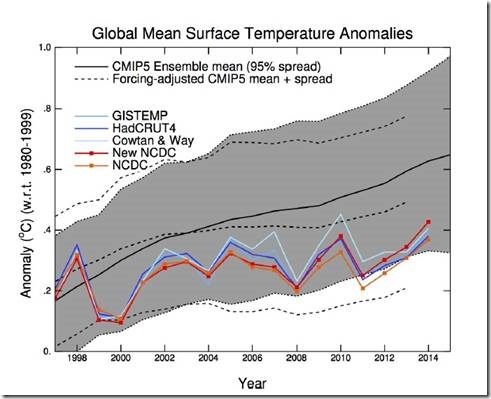

Figure 2. “…Comparison of a large set of climate model runs (CMIP5) with several observational temperature estimates. The thick black line is the mean of all model runs. The grey region is its model spread. The dotted lines show the model mean and spread with new estimates of the climate forcings. The coloured lines are 5 different estimates of the global mean annual temperature from weather stations and sea surface temperature observations….” Figures and description: Gavin Schmidt[6].

4. Ensemble of Models

Let’s turn attention to the subject of climate. The oft cited ensemble of model projections is something like a control chart. It represents a spread of model projections carefully initiated to represent what we believe is a future path of mean earth temperature with credible additions of CO2. It is not a plot of the full variation that climate models might conceivably produce, but rather more controlled variation of our expectations given what we know of climate and the differential equations representing it when it is in control. It is this in-control concept that makes the process control chart and the projection ensemble similar to one another. The resemblance is even more complete with an overlay of observed temperature.

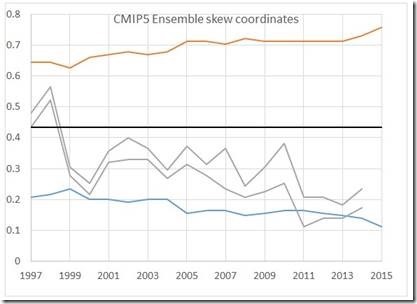

Figure 3. The grey 95% bounds of Figure 2 redrawn in skewed coordinates (blue/orange) to look more like a control chart. The grey lines indicate the envelope of observations. Black line is target.

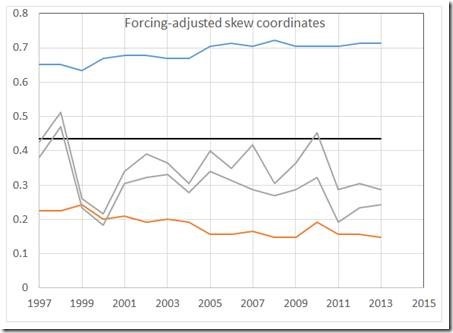

This ensemble became controversial once people began placing observed temperatures on it. Schmidt produced one in a blog post in 2015.[6] Figure 2 shows it. What the comparison between observed and projected temperature showed, initially, was a trend of observed temperature across the ensemble. Some versions of similar graphs have observed temperatures departing from projections entirely.[7] Figures 3 and 4 show Figure 2 rotated into skewed coordinates to look more like a control chart monitoring a process. Schmidt states that Earth temperatures are well contained within the ensemble – especially so after accounting for some extraneous factors (Figure 4). Yet, this misses an important point. The measurements in Figure 3 trend in an unlikely way across the ensemble, and have gone to running along the lower limit. After eliminating the trend in Figure 4 the comparison still shows observed temperatures hugging the lower end of the projections. Despite being told often that the departure of observations from the center of the ensemble is a non-issue, with each new comparison some unlikely feature remains to fuel doubt. It is difficult to avoid concluding that what is wrong is one of the following.

(1) The models do run too hot. They overestimate warming from increasing CO2, possibly because of a flawed parameterization of clouds or some other factor.

(2) The observations are running too cool. What I mean is there are factors external to the models which are suppressing temperature in the real world. The models are not complete. Figure 2 from Realclimate.org takes exogenous factors into account. Yet, note that while the inclusion of these factors reduces the improbable trend across the diagram, it leaves the improbable tendency to cling to the lower half of the diagram, which suggests item 1 in this list again.

(3) The models and observations are of slightly different things. The observations mix unrelated things together, or contain corrections and processing not duplicated in the models.
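A rough way to quantify how improbable it is for observations to cling to the lower half of the diagram is a sign test: under the null hypothesis each point is equally likely to fall above or below the center line. The sketch below assumes the points are independent, which annual temperatures are not (they are autocorrelated), so the probabilities it returns understate how often such a run would occur by chance. The function name and the example counts are hypothetical and are not read off the figures.

```python
from math import comb


def prob_at_least_k_below(n, k, p=0.5):
    """Probability that at least k of n independent points fall below center.

    p is the chance that any single point falls below the center line
    under the null hypothesis (0.5 for a symmetric, in-control process).
    """
    return sum(comb(n, j) * p**j * (1.0 - p) ** (n - j) for j in range(k, n + 1))
```

Ten points out of ten below center, for example, would have probability `0.5**10`, about one in a thousand, under these idealized assumptions.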

Figure 4. The dashed (forced) 95% bounds of Figure 2 redrawn in skewed coordinates (blue/orange) to look more like a control chart. The grey lines indicate the envelope of observations. Black line is target.

These charts present data only through 2014, but while observed temperatures rose into the target region of the chart with the recent El Nino, they have more lately settled back to the lower part of the chart. It takes an extraordinary event to push observations toward the target region. One more observation about these graphs seems pertinent. If, as Lenssen et al. [1] claim, the 95% uncertainty bounds of the global mean temperatures are truly as small as 0.05 °C, then the spread in the various observations is, at times, unlikely itself.
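The last point can be made concrete with a small calculation. The sketch below asks how probable a given gap between two temperature estimates would be if each carried a 95% bound of the stated size. It rests on assumptions not made in the text: that the bound is a half-width, that errors are normally distributed, and that the two estimates err independently. Since the observational series share much of their input data, their errors are likely positively correlated, which would make a large gap even less probable than this returns. The function name and arguments are illustrative.

```python
import math


def two_sided_p_for_difference(diff, half_width_95, z95=1.96):
    """Two-sided probability of a gap at least |diff| between two estimates.

    Assumes each estimate has independent, normally distributed error
    whose 95% bound is +/- half_width_95.
    """
    sigma_each = half_width_95 / z95
    sigma_diff = math.sqrt(2.0) * sigma_each  # independent errors add in quadrature
    z = abs(diff) / sigma_diff
    return math.erfc(z / math.sqrt(2.0))
```

With bounds of 0.05 °C, a gap of 0.1 °C between two series would have a probability well under one percent on these assumptions.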

Our little experiment here cannot settle the question of whether models run too hot, but two of our three possibilities suggest they do. It ought to be important to figure out whether this is so.

5. Conclusion

The first draft of this essay concluded with the previous section. However, in the past few weeks there has been a lengthy discussion at WUWT about propagation of error, or what one could call propagation of uncertainty. The ensemble of model results is, in one point of view, an important monitor (like SPC) of health of our planet. If we believe that the assumptions going into production of the models are a true representation of how the Earth works, and if we are certain that our measurements represent the same thing the ensemble represents, then we arrive at the following: A trend across our control chart toward higher values suggests a worrying problem; a trend across the chart toward lower values suggests otherwise. But without some credible measure of bounds and resolution, no such use is reasonable.

One response of the climate science community to the apparent divergence of observations from models is to argue that there really is no divergence because the ensemble bounds could be widened to show true variability of the climate, and once this is done the ensemble limits will happily enclose observations. Or, they argue, there is no divergence if one takes into account exogenous factors ex post. But in my view arguing this way makes modeling pointless because it removes one’s ability to test anything. There is certainly a conflict between the desire to make uncertainties small, thus making a definitive scientific statement, and a desire to make the bounds larger to include the correct answer. The same point Vasquez and Whiting make here [8] …

“…Usually, it is assumed that the scientist has reduced the systematic error to a minimum, but there are always irreducible residual systematic errors. On the other hand, there is a psychological perception that reporting estimates of systematic errors decreases the quality and credibility of the experimental measurements, which explains why bias error estimates are hardly ever found in literature data sources….”

is what Henrion and Fischhoff [9] found to be so in the measurement of physical constants over 30 years ago. Propagation of error plays an important role in the interpretation of the bounds and resolution of models and data. It is more than just initiation errors being damped out in a GCM. But to discuss its pertinence would make this post too long. Perhaps we’ll return in a week or two when that topic cools off.

6. Notes:

(1) Nathan J. L. Lenssen, et al., (2019) Improvements in the GISTEMP Uncertainty Model. JGR Atmospheres, 124, 6307-6326.

(2) Pat Frank https://wattsupwiththat.com/2019/09/19/emulation4-w-m-long-wave-cloud-forcing-error-and-meaning/

(3) R.C. Spencer, https://wattsupwiththat.com/2019/09/13/a-stovetop-analogy-to-climate-models/

(4) Nick Stokes, https://wattsupwiththat.com/2019/09/16/how-errorpropagation-works-with-differential-equations-and-gcms/

(5) AT&T Statistical Quality Control Handbook, Western Electric Co. Inc., 1985 Ed.

(6) RealClimate, NOAA temperature record updates and the ‘hiatus’ 4 June 2015, Accessed September 18, 2019.

(7) Ken Gregory, Epic Failure of the Canadian Climate Model, https://wattsupwiththat.com/2013/10/24/epic-failure-of-the-canadianclimate-model/

(8) Victor R. Vasquez and Wallace B. Whiting, 2005, Accounting for Both Random Errors and Systematic Errors in Uncertainty Propagation Analysis of Computer Models Involving Experimental Measurements with Monte Carlo Methods, Risk Analysis, Volume 25, Issue 6, Pages 1669-1681.

(9) Henrion, M., & Fischhoff, B. (1986). Assessing uncertainty in physical constants. American Journal of Physics, 54(9), 791–798.

News Flash!!! The models will always run hot because they model a direct and near-linear relationship with CO2. CO2 demonstrates a near-linear uptrend established 12 years ago with the ending of the ice age. Additionally, the ground measurements are impacted by the Urban Heat Island Effect and “adjusted” to show a near-linear warming trend. The data is being adjusted to reduce the models from warming hot. Y=mX+b guarantees that they need to make Y (Temp) more linear to adjust for the X (CO2). They are even adjusting the RSS data to make it more linear.

To prove this is all pure nonsense, simply go to NASA: https://data.giss.nasa.gov/gistemp/station_data_v3/

Identify the ground stations with 0 to 10 BI that existed prior to 1902 (when the Hockeystick dog-legs) and download the “UNADJUSTED” data. You will see that there has been Zero, Nada, Zip warming over the past 116 years. None of the stations that I’ve examined show anything close to a linear increase over that time. Many if not most show temperatures recently below those of the 1902 level.

Excellent graph, especially the coverage graph showing zilch for Southern Hemisphere pre 1900. And yet we are supposed to believe we know the temperatures for 1/2 the globe before 1900. Only in lala land.

???

Why the bias?

The graphs, used as described by CO2isLife, do not pretend or claim to know the temperature for 1/2 the globe before 1900.

In fact, CO2isLife explains;

That temperature measurements taken from long term temperature stations do not demonstrate warming.

Making your whine about 1/2 of the globe a red herring logical fallacy.

So with 10% coverage of half the globe in 1880, we are supposed to have confidence in any numbers?

I don’t whine. Just don’t understand the logic of imputing any numbers with such a gap in our knowledge.

GIGO

well of course…..when you adjust past temps to show a faster rate of warming….to fit an agenda

and then tune the models to that….they reproduce that same fake rate of warming

when you put this in…..

you get this out…. http://wattsupwiththat.files.wordpress.com/2013/06/cmip5-73-models-vs-obs-20n-20s-mt-5-yr-means11.png

CO2 says:

News Flash!!! The models will always run hot because they model a direct and near-linear relationship with CO2. CO2 demonstrates a near-linear uptrend established 12 years ago with the ending of the ice age. Additionally, the ground measurements are impacted by the Urban Heat Island Effect and “adjusted” to show a near-linear warming trend.

You & I know the billion-dollar models can be ridiculously simplified to a single linear equation of beefed-up (supposed H2O feedback) CO2 warming. That simplified linear equation has been shown here & other websites (climateauditdotcom for ex) numerous times in the past. Think of how many needy people could’ve been helped w/that money — instead laundered to the liberal elite apparatchiks (so-called climate-scientists)

The “ice age recovery” and “urban heat island” arguments are starting to wear a bit thin. UHI might be a factor in comparison with early 20th century temperatures but has had less influence on trend since the 1970s. Remember UAH, which is unaffected by UHI, also shows a 0.5 deg increase since 1979. Similarly, while it’s reasonable to assume that some of the warming since 1850 is due to a “recovery”, the warming has continued into the 21st century even when natural factors such as ocean oscillations & solar activity have been operating in their ‘cool’ cycles.

Also your point about the number of stations is irrelevant unless you are trying to argue that the climate was warmer in earlier centuries. Station coverage has been more than adequate since the 1940s.

As CO2 accumulates in the upper atmosphere it is likely that the earth will warm. Whether that warming will be excessive or even harmful in any way is another matter. Most responsible sceptics (Lindzen, Curry, Spencer, Jack Barrett etc) know that this, i.e. climate sensitivity, is the only area of uncertainty.

The earth is warming. The earth will continue to warm. Get used to it. Continuing to deny it simply destroys the credibility of the sceptic cause.

It’s amazing. Everything you just said is wrong.

By all means tell me what it is I’ve got wrong. If I’ve got it wrong then many of the most qualified leading sceptics have it wrong.

I’ve been closely following the climate change debate for over 15 years. I challenged Michael Mann on the ‘hide the decline’ issue 5 years before Climategate. I’m not an alarmist but I do understand what the data is telling us. We are warming.

What is warming is the AVERAGE! What does a warming average tell you? Does it tell you if maximum temperatures are going up? Does it tell you if minimum temperatures are going up?

There are lots of places where maximums are actually going down, e.g. the entire central US. Global warming holes are being identified all over the globe, e.g. in Siberia.

What is wrong is using an increasing average global temperature to forecast anything for the globe. If maximum temps are moderating and minimum temps are as well we will be headed for a bright future with longer growing seasons around the globe, more food, less starvation, and fewer deaths from cold weather. All this can happen while the global average temperature is RISING!

This is definitely the case. I have heard before on a skeptic site that the temperature record is not notably changed by investigating issues with individual sites like the urban heat effect.

The trademarked 97% consensus among scientists is accurate, including almost all skeptics, except in the way that what that consensus actually is is implied to be. (High warming with high costs)

The 97% study doesn’t just theoretically include skeptics, it specifically included papers by well known skeptics. The parameters for agreeing with the consensus are a belief that it has warmed up since ~1850 and that some amount of that warming is CO2 and otherwise human caused.

Skepticism is about how large the CO2 effect is likely to be, what the costs and benefits of that warming are likely to be, and what the most reasonable policies are in response.

You are including the volcanoes which cooled the surface considerably, so it is disingenuous to claim UAH shows the earth warmed by .5C since 1979.

Also, according to the GHE “theory”, the highest rate of temperature increase should be in the tropical troposphere which UAH definitely does not support.

So here’s a simple task for you; show that a small change in cloud cover has been ruled out by the scientific method.

It’s quite simple you know

https://youtu.be/EYPapE-3FRw

I remember you used to hang out at Solar Cycle 24 and continually got your ears pinned back for such incorrect statements and error by omission.

….”it is likely that the earth will warm.”

Likely 😉

“U.N. Predicts Disaster if Global Warming Not Checked

PETER JAMES SPIELMANN

June 29, 1989

UNITED NATIONS (AP) _ A senior U.N. environmental official says entire nations could be wiped off the face of the Earth by rising sea levels if the global warming trend is not reversed by the year 2000…”

https://www.apnews.com/bd45c372caf118ec99964ea547880cd0

When air pressure gets low enough, CO2 actually helps heat to escape.

As the co2 accumulates in the atmosphere I likely get older. So what!

A serious question: has the planet rebounded from the Little Ice Age before such time that it reaches the temperature that it had before the descent into the Little Ice Age?

There is a logical argument that the rebound from and out of the LIA is not complete until such time as we see temperatures that were seen in the Medieval Warm Period.

No one knows precisely how warm it was in the MWP, but there is plenty of evidence to suggest that it was warmer than today. Thus there is a strong argument that the rebound from and out of the LIA is not yet complete, and the 20th century warming was simply part of the rebound.

Yes, the LIA is just as inconvenient for the believers as the MWP.

Recently I was looking at a paper by a consensus scientist. It mentioned in passing that the LIA cooling was one degree C. And how much warming has occurred since the end of the LIA? One degree C.

It’s difficult to think of a simpler and more effective argument against extreme AGW. That’s why Mann’s fraudulent hockeystick was so important: it got rid of the LIA as well as the MWP.

Chris

No. It has not.

That silly person above was cherry-picking the decade that was the coldest that the earth had been since the 1800s, after nearly 4 decades of dramatic cooling and at the HEIGHT of the global cooling scare for comparison.

The 1930s were hotter than EVERY DECADE SINCE. Every. Single. One. And it still wasn’t as warm as the medieval warm period.

The only graphs that don’t show this are those that “adjust” the 1930s cooler–in the US as well as global data–and generally also warm up the global cooling scare so that the temp looks more like a gradual rise instead of a spike, a crash, a slow rise to PARTIAL recovery, and a weak flat wobble since then.

To buy the global warming nonsense, you have to throw out actual recorded history as bunkum. The Dust Bowl did not happen. The Krakatoa volcanic winter? Now not really a big thing anymore! Year Without a Summer? What Year Without a Summer? The Medieval Warm Period and the Minoan Warm Period are erased and the Little Ice Age smoothed out.

So let’s talk evidence, actual physical things that you can see and touch.

1.) AGW must insist that the world is as hot as it has ever been in more than 1000 years.

2.) ALL climate science declares that the high latitudes heat up faster than tropical latitudes.

3.) AGW proponents go further and insist that the northern high latitudes are, have been, and always will be heating up the fastest of any place on earth–much more than in the southern latitudes. Greenland is supposed to be ESPECIALLY hot.

4.) We have tree roots grown through Viking bones from the first settlements in Greenland. We also have incontrovertible evidence that Vikings grew and harvested barley in Greenland.

5.) We cannot grow trees in Greenland right now. It is too cold. We cannot grow and harvest even the most cold-resistant barley in Greenland right now. It is too cold.

The conclusion from this, if you buy AGW, is that Greenland is hotter than it used to be, and the Medieval Warm Period was just a mistake, and LALALALALALAALAALALA I DONT HEAR YOU WHAT BARLEY????

You have TWO choices. You either throw out all known history and physical evidence as potentially debunkable by AGW, or you realize that the model the AGW consortium is relying on and all of their massive “adjustments” must be wrong.

I’m one of those that agree (a lot) that models do produce more warming than the temperature that is observed in the real world. However, it is not a good idea to show graphics with data that ends in 2013 and 2014. If you show the latest data, it will not detract from this idea because most of the recent warming is a remnant of the latest El Nino event and I’ll bet that things will go back to a flat trend. Showing data only until 2013 exposes this article to critics.

I thought I had responded to this comment earlier. Your point is well taken. However, I did look at the post 2015 data, and commented on its effect on the graphs. A more important consideration for me was finding a graph produced by a well-known climate scientist that also included an explanation for the departure between models and observations. I wanted to show that this explanation missed the important idea about looking at data from the standpoint of “does the chart show something improbable?”

It was a calculated judgement on my part. I knew someone would point this out.

Fair Kevin, and for me it’s perfectly fine as you pointed it. However you realize that alarmists will cling to it tooth and nail to dismiss the idea. That’s why I always prefer to use all the available data and, even so, point out the fragilities of the model based alarmist point of view.

“However, I did look at the post 2015 data, and commented on its effect on the graphs. “

Well, you said

“but while observed temperatures rose into the target region of the chart with the recent El Nino, they have more lately settled back to the lower part of the chart.”

But in fact, in July 2019, relative to that 1980-1999 base, GISS was at 0.62°C, NCDC at 0.58, HADCRUT at 0.52. These seem pretty close to mid-range.

Here, from here, is Gavin’s plot updated to end 2018. It went over mid-range in 2016 and then dropped below, but not much. 2019 will be very close to midrange.

Now Nick, the central question – in which one do you believe most? The Fig2 presented by Kevin or the ones presented by Gavin? Thanks for the links for Gavin’s graphs. It’s so in “the mouche” at all time that I guess that not even Gavin believes in those… We must be, at least, a little bit intellectually honest to be credible I guess. There will be a “before” and an “after” those Gavin’s graphs for sure 🙂

Gavin seems to have found the trick to hide the decline for sure. He seems to recenter the “0” in the y axis to make things match. Just a thought… One thing is for sure, one of the graphs (Kevin’s or Gavin’s) is wrong because they do not match either in data or in Y axis “0” position.

Nick, why did you pick up the graph with CMIP3 (circa 2004) when you could pick up the CMIP5 (circa 2011), that also was updated by Gavin with data from 2019 (http://www.realclimate.org/images//cmp_cmip5_sat_ann-2.png)

In this case, 2019 is going “dangerously” close to the lower range of the “ensemble”. Are you “cherry picking?” 🙂

JN,

“The Fig2 presented by Kevin or the ones presented by Gavin?”

According to the author, Fig 2 is the one presented by Gavin.

” He seems to recenter the “0” in the y axis”

He’s using an updated reference period, 1980-2019. That changes the numbers on the axis, but not the relative positions of the curves.

“why did you pick up the graph with CMIP3 (circa 2004)”

It was the one I found, and didn’t notice it was CMIP3. But there is a reason for CMIP3. The forecast period is twice as long (since 2004).

WHAT?! I work with both scenarios and now that is new to me!

“why did you pick up the graph with CMIP3 (circa 2004)”

It was the one I found, and didn’t notice it was CMIP3. But there is a reason for CMIP3. The forecast period is twice as long (since 2004).

Nick, sorry to say but it seems that you have noticed that you made a misjudgement and now are trying to use very funny arguments to not admit it. CMIP3 and CMIP5 do not have different periods of observation. CMIP5 has more models, most models are more advanced and it is the one that was used primarily for the 5th assessment report from the IPCC. I could elaborate a lot more about what these two are and the main differences but I guess that is only a question of you googling it. In this case you can try to “twist” things to justify why you used the graph that was on the same page as one that was more adequate but did not fit your preconception. Are you able, at least, to admit that, in the CMIP5 graph, real data is more frequently below modeled average and 2019 is approaching the lower limit? I really like your comments and posts, usually with views rather based on logical arguments but, in this case, you are almost behaving like a child who is unable to admit something it did wrong…

Models do produce more warming than real world observations. I know that, you know that and most people from the IPCC also know that.

CMIP3. Lame. From Schmidt.

“Are you able, at least, to admit that, in the CMIP5 graph, real data is more frequently below modeled average and 2019 is approaching the lower limit”

Yes, observations have been on the low side of the average of models.

No, 2019 will not be approaching the lower limit. It will probably be the second warmest year on record, and fairly close (on the low side) to mid-range of the forecasts.

Neither.

Models run on empty.

(There’s nothing there to run.)

What “models”?

Is the author referring to the computer games, used by government bureaucrats with science degrees, to express their personal consensus opinions on what causes climate change, in a way that is very complex and appears to be “real science”, but the climate predictions are always wrong ?

Or is he referring to these models:

A gentle off topic rant on the state of reference materials and today’s culture:

I was reminded by that ATT handbook of my copy given me as a new Western Electric employee in 1963.

I did a Google search to refresh my recollections and was dismayed to find nowhere was there mention of the author, a true pioneer of industrial Quality Control, Walter Shewhart.

Dr. Shewhart wrote the manual for the Hawthorne (Chicago) plant in 1924, and the manual I got in the 60’s was virtually unchanged. However the best any reference in Google would report was that the manual was assembled “by a committee” in 1956.

The “history of Now” generation no longer feel it necessary to honor giants of the past.

The worst example was the remake of the 1950s best selling book and movie “Cheaper by the Dozen”. Dr Frank Gilbreth was a pioneer in the technology of Industrial Engineering who with his wife Lillian had 13 children. The entertaining part of the story was a very large family being raised by Industrial Engineering rules. But the real heart of the book was that Frank died early with much of his work still incomplete, and Lillian while mother of 13, picked up the mantle and established herself as an important figure in the technology.

So what does Hollywood do? They hire Steve Martin for the remake lead, but decide Industrial Engineering is too boring so they change the historical characters name and change his job to football coach. Toss Frank and Lillian into the dustbin.

Rant over; please resume.

Deming was sent to Japan after WWII to help Japan rebuild their manufacturing. He taught them SPC.

I thought about listing Shewhart’s book, now published by Dover, as a potential reference to learn about process control, but didn’t do so in the final draft. I work in the same engineering department that Edwards Deming graduated from in 1921. Everyone around here knows of him. No one, for some reason has heard of Shewhart.

Bell Labs and Western Electric employed an unbelievable number of talented scientists and engineers, Nyquist, Shannon, Shewhart, Pierce, Brattain, Holden, Bardeen, Shockley, Penzias, Wilson….quite a few Nobel Prize winners. What places they must have been to work at.

Kevin Kilty

Thank you for the essay. I would never have thought to use Shewhart and climate science in the same paragraph. There are numerous references to Shewhart on the web, beginning with ASQ.ORG.

Kevin: Great article. I graduated college with a math (statistics and probability) degree but didn’t learn much useful until I got a job in SPC. Then I was introduced to Shewhart, Deming and Juran among others. I was a member of ASQC for many years and knew Hi Pitt. Western Electric’s rules were our guiding light in SPC. One of the great lessons of this education was that data can easily mislead and one must always be on the lookout for confirmation bias, particularly when QC folks are talking to production or marketing. 😉

That’s Hy Pitt.

Is the title still in question? Really?

I don’t think so.

Jeff

The models only look hot when they’re compared to the actual temperature data (note: “actual temp data” is becoming less and less “actual”).

/sarc off

Purely speculation but I think heat tends to escape earth in ways we don’t fully understand and are difficult to model. That and possible negative cloud feedback can make models run hot. Time will tell. Another 10 years and we will start to have good data. It is very important to keep model source code around to test after time has passed. Is the source code available so that modelers can’t change it? We need to save all these graphs.

Heat leaves the Earth in a well defined, quantifiable, manner. This is as photons, emitted by the surface, emitted by clouds, emitted by particulates or re-emitted by atmospheric GHG’s.

People get confused by the complexity at the boundary between the surface and the atmosphere, but that arose from Trenberth inappropriately conflating the energy transported by photons (BB emissions of the surface, clouds, particulates and re-emissions by GHG’s) with the energy transported by matter (latent heat and convection). The reason this is invalid is that only the energy transported by photons can leave the planet.

Any energy transported into the atmosphere from the surface by matter can only be returned to the surface. To the extent that this matter emits photons, the matter is also absorbing photons and in the steady state is absorbing the same as it’s emitting. All the transport of energy by matter can do is redistribute existing energy around the planet.

Too simple.

Potential Heat Energy, as energy of vaporisation, can be carried quite high in the atmosphere by convection, especially by thunderstorms….to several tens of thousands of feet, above much of the atmosphere, in terms of % of the molecules in the whole atmosphere. CO2 is dense relative to O2 and N2, thus relatively more concentrated in the lower atmosphere. This potential heat energy of vaporisation can be carried to heights above much of the CO2, and then released by condensation, and then is radiated in all directions….some downwelling, but about half directed upwards. This heat energy, as long wave IR, relatively escapes the CO2 greenhouse effect compared with the usual calculation of IR created near ground or sea level.

This effect has been mentioned on WUWT, by Willis E., I think. I do not think the models can account well for this, because the magnitude of this effect and its change as sea temps and hence evaporation rise, are not well known. For a sense of magnitude of the effect, we know that ocean surface temps drop a couple of degrees as a hurricane passes. Indeed, the more that heat is trapped by greenhouse gasses as upwelling IR from near the surface, the more important the convection process of heat escape may become, relatively.

Much talk of cloud cover’s complex effects has also clarified that the models are in their infancy, especially in their subcomponents related to positive and negative feedbacks.

The relative stability of the Earth’s climate over time indicates it is a complex system dominated by negative feedback. Until the models get the feedback part right, they will not predict climate change accurately.

kwinterkorn,

It’s not too simple if it predicts the data, which it does better than any other model I’ve seen, so why add unnecessary complexity when its effect is already embodied by the mean? I’m a proponent of Occam’s Razor, so simpler is always better, especially when it works.

I get that most people’s gut instinct is to worry about what isn’t understood. Frankly, I think this is the motivation for adding so much excess complexity in the first place. Excess complexity provides the wiggle room to feign support for what the laws of physics cannot.

To be fair, my model has an additional constraint on cloud coverage that causes them to converge properly. That constraint is that the system desires to maintain a constant average ratio between the RADIANT emissions by the surface and the emissions at TOA. This ratio can be measured and its average is about 1.62 W/m^2 of radiant surface emissions per W/m^2 of radiant forcing from the Sun. Moreover, this average is converged to very rapidly by the actions of clouds and is demonstrably consistent from pole to pole. When clouds are present, the ratio between surface emissions and planet emissions increases and under clear skies, the ratio between surface emissions and planet emissions decreases. It takes the perfect average ratios of cloudy to clear skies from pole to pole in order to maintain this relatively constant emissions ratio.

These 2 plots demonstrate the relatively constant average ratio of surface emissions to emissions at TOA across slices of latitude.

surface emissions Y, planet emissions X:

http://www.palisad.com/co2/sens/po/se.png

Surface temperature Y, planet emissions X (green line is ideal constant ratio):

http://www.palisad.com/co2/tp/fig1.png

This next plot shows the bizarre measured behavior of clouds that results in the maintenance of this relatively constant ratio.

Fraction of clouds Y, planet emissions X:

http://www.palisad.com/co2/sens/po/ca.png

Note that every dot in each plot corresponds to another dot on every other plot. More info about these plots and many other similar plots can be found here:

http://www.palisad.com/co2/sens

One of 2 things must be true. A really bizarre hemispheric specific (blue vs. green dots) relationship between clouds and the planet’s emissions coincidentally results in a relatively constant ratio of surface emissions to planet emissions OR the climate system goal is a constant ratio of surface emissions to planet emissions and clouds adapt to achieve this goal. Again, I’ll invoke Occam’s Razor.

Whether or not the desired ratio is the golden mean is still up in the air, but since the measured value is within a percent of the golden mean, the climate is chaotically self organized by clouds, and the golden mean shows up in other chaotically self organized systems …

The above topic is relevant and in that vein I would suggest that climate science does not adequately address the uncertainty of measurements themselves, such as would be done by Gage R&R investigations.

There is no climate crisis as far as its behavior is concerned. Whether short term trends are being influenced by human CO2 emissions or not, nothing outside of normal variability is being observed.

It ought to impress people that through Gage R&R one can separate the influences that operators, measuring instruments, machines and even incoming materials have on variations in products or processes. But I have also taught enough introductory statistics courses to know that the average person struggles just to understand descriptive calculations — inferences and meaning are far beyond them.

Lots of good could come from people thinking just a little more like the ideal manufacturing engineer — they’d be a bit more skeptical, even about their own biases, and less inclined to “believe the magic”.

The kind of “tricks” being used by climate scientists are pervasive and it would be good for these kinds of things to be addressed in statistics and philosophy courses, so just as you say people are a bit more skeptical. It would help people from being scammed even if only from robo calls and email phishing.

I took a logic course in college and there was a section on the most common fallacies. Learning to recognize these has been invaluable to me.

They say they “found” the missing heat going into the oceans. (Covered under exogenous factors I assume)

If the ocean heat is a measurable factor it can be included in the analysis. But I don’t know if it can be relied on. Are sea temperatures reliably recorded for a long enough period of time to be able to dismiss the apparent bias we should have that the models are hot?

I think it was Trenberth who said “I’ve found the missing heat”, and before that that it was embarrassing that they couldn’t account for the “missing heat”.

It’s obvious from this that the mainstream climate modelers have been biased to the accuracy of their models over the observed temperatures, which is why I have a feeling the ocean heat is a too good to be true (for their models) factor that isn’t well verified.

My assumptions are that the ocean heat could explain the models but it’s not something that could be called consensus science given how recently it was ‘found’ and the obvious bias to accepting it without a second thought. Secondly, if the ocean is absorbing the proposed extra heat this is evidently a new change in the climate system that we don’t know the mechanics of and could go on for centuries. In geological timescales it would be completely unsurprising if this effect goes on long after effectively carbon free energy sources are developed. So there should still be a reasonable presumption given the data that the models are hot, and that the climate system may have also changed to dampen surface temperatures down from the not so alarming projections we currently have.

The UN projects warming costs of 2 to 4% of GDP in 2100. It’s obvious that the cost analysis has been very biased towards finding potential costs rather than benefits. It’s also likely that the projected temperatures are high either because the models are hot or because the ocean is now absorbing more heat than before 2000.

In short, the least scientific analysis is to be alarmist given current data, and that’s even if you were to go with the UN’s own projection of costs which assume the models are correct and which have an obvious bias to assuming higher costs than benefits.

Yes I agree that if this heat is going into the deep oceans then the ocean provides a buffer of perhaps hundreds of years and warming of atmosphere then will not be a problem at all. Oceans have a massive capacity to store heat.

Ahh. But then they will scare you with the horror of melting methane ices, which are an 84X more potent “greenhouse” gas than CO2 (short term).

How does the heat get into the deep ocean without first transiting the ocean surface? Wouldn’t the ocean surface warm first? Or is there some kind of Star Trek transporter somewhere sending the heat directly into the deep ocean?

I don’t know, and people don’t seem to talk that much about the ‘missing heat’. At least I recently read a RealClimate discussion of the satellite temperature data that concluded that the models are probably running a little hot in sensitivity (feedback effects), while assuming the basic forcings were accurate. They didn’t say a word about ocean heat.

As I recall the reason it was only found about halfway into the surface temperature pause was that sensors for the deeper ocean were not widespread before that so there was basically no data.

As I am sure you know, about 70% of the Earth’s surface is ocean…therefore the majority of heat energy from sunshine first heats the ocean surface. We know that the convection of heat in the ocean is not just around the globe at the surface via currents, but also up and down, from surface to the depths, through vertical components of the currents. So yes, the heat is first at the surface, not counting volcanic sources, and then either rises via evaporation or IR emission upward into the atmosphere or distributes downward due to the movement of the water. My understanding is that the flow of heat up and down occurs on a timescale of years and decades, but it is nevertheless significant.

“As I am sure you know, about 70% of the Earth’s surface is ocean…therefore the majority of heat energy from sunshine first heats the ocean surface.”

That’s *exactly* what I said. The problem is that, according to NASA at least, the ocean surface has been cooling since 2003. So how did the “excess” heat since 2003 get into the deep oceans? Via a Star Trek transporter somewhere?

The claim that the global warming “hiatus” happened because the heat since the early 2000’s is hiding in the deep ocean is ludicrous unless someone can explain how a cooling ocean surface temperature is consistent with conducting heat into the deep ocean!

“(2) The observations are running too cool. ”

If there is something in the real world that is cooling temperatures, but this something is not included in the models, then this is a variation of (1), the models are running hot. (If there is something missing from the models, this is a flaw in the models.)

The only time observations could be running cool is if there is some error in how observations are being taken that make the recorded temperatures lower than the actual temperatures.

At first blush it does seem that 2) is just the contrapositive of 1), but upon further reflection you might see that 2) refers to what we might call “wrong model” bias. 1) represents too much CO2 gain in models, while 2) leaves out real negative feedbacks and other influences. In any event some people in the climate science field make a distinction between 1) and 2), so I do as well.

Seems to me that defining 2) this way is a good way to hide half of the model failures.

If we include too much warming, it’s a model failure.

If we don’t include enough cooling it’s a problem with the data.

The people in the climate science field do many things that aren’t justifiable, this appears to be another one of them.

I know Joe Bastardi has mentioned constantly that at least the American weather model forecast runs notoriously hot and cannot “see” cold air a week or more in the future. Telling that it can’t see cold but sees plenty of heat. Just a coincidence huh…..

Met office forecasts are the same, they have stopped releasing the long range forecasts as they got embarrassed by them being so wrong, normally on the hot side.

There is the chance that the satellite temperature measurements accurately reflect physical reality. All the statistics in the world can’t confirm that or repudiate it.

“A trend across our control chart toward higher values suggests a worrying problem; a trend across the chart toward lower values suggests otherwise.”

But not in the case of Climate, down is disaster whereas up is getting back to normal.

I have come to the conclusion that few folks involved in climate science are indoctrinated in physical experimental science. They have become mathematicians, statisticians, and programmers that are removed from the real world and consequently their bias lies toward getting the (unreal) answer they want from the models rather than getting a real and repeatable projection of the world as it truly is.

I have mentioned on a few earlier threads that my university began teaching a probability and statistics course for new hires in engineering and the sciences because we found that many people were coming out of graduate school poorly grounded in experimental methods–ironically, those people better prepared turned out to be from the college of education because they had taken a research methods class or two. I suppose the thinking was that engineers and scientists should be able to teach research methods to themselves, but what actually happened is that research methods became an “unknown unknown”. They had become focused on teaching the theoretical aspects of their discipline. It is difficult to decide to teach yourself about something you are utterly unaware of.

Jim Gorman

Yes, the oft repeated claim that GCMs are based on physics is fluff. The parameterization and tuning turns them into curve-fitting exercises that match the adjusted (increasing slope) temperature histories.

You can have a PhD in Engineering but not qualify for a license to practice engineering. If your undergrad degree wasn’t in Engineering, you’ll be missing basic skills and knowledge and that will lead you into grave errors.

What we’re seeing is scientists applying statistical tools (eg. from Matlab etc.) without understanding the basics. That means they don’t even know what assumptions they are making.

There should be a rule that, if your paper has any statistical analysis, you must have a statistician as a co-author.

commieBob says, “There should be a rule that, if your paper has any statistical analysis, you must have a statistician as a co-author.”

I second this emotion, and would apply it rigorously to epidemiology and drug studies as well.

Michael Mann has a math degree.

You’d have a hard time, if not an impossible one, getting a PhD in engineering in the US without satisfying the equivalency of ABET accreditation requirements. Most universities won’t grant a masters in engineering without some demonstration of equivalency. You shouldn’t be “missing basic skills and knowledge.” There are biannual continuing education requirements for license renewal which differ by state as well.

Beyond education, you must pass an 8-hr exam to become an engineering intern, acquire 4 yrs of relevant experience under a licensed professional engineer, and then pass an 8-hr licensure exam specific to your field of engineering as part of the process.

You also won’t be signing and sealing any drawings, calculations, or reports not related to your expertise or competence level (at least not without putting your license on the line and risking suspensions, fines, cancellations, and/or jail time), so there should not be any issues with “missing basic skills and knowledge.” And even if you tried…QA/QC processes at engineering firms tend to be rigorous. Sure, there are some cowboys out there, but usually old-timers who have enough experience that they are not “missing basic skills and knowledge” in their practice.

I’m well aware of the requirements necessary to become a professional engineer.

I have known folks with a PhD in engineering who didn’t have an undergrad engineering degree and who therefore would not qualify to become professional engineers.

My point was that it is possible to pick up advanced certifications while still lacking basic knowledge.

The other thing that gets up my nose is the folks with advanced certifications who just assume they understand stuff about which they demonstrably have no clue.

My institution actually had that rule, for a while. I was in the Division that was supposed to provide the statisticians. It was, IMO, a disaster. The authors were often at cross purposes, and it was rarely the statisticians who were right.

There’s a big problem communicating between silos.

It’s like the problem communicating between Engineering and Manufacturing, and nobody around who could speak the two languages well enough to translate.

It’s more than just knowledge of statistics and how they are applied. It’s a lack of hands-on working experience with real, and I do mean real, physical measurements. Having to actually measure something and then try to repeat it. To deal with skewed data and find out the reasons for it. To really understand why and how measurements are adjusted and how it might affect your conclusions.

I’ll give you an example. We know data from various recording sites have been adjusted in order to make them “more compatible” for modeling. However, is the adjusted data for a single site correct to use in a small region for, let’s say, an insect study where temperature is important for reproduction?

I’m an old timer. How many folks today have tried to set up an analog meter to read a voltage? First you physically adjust the meter to read zero while using the mirror to eliminate parallax. Then you turn it on for a warm up time and then electrically “zero” the bridge again using the mirror. Finally you use the mirror to try to eliminate parallax when reading the measured value.

All these make you very aware of error in both reading and calibration. Digital instruments are nice but mislead newbies into believing they are exact and perfectly calibrated. Same thing with statisticians. A measurement is a number that is perfect and how it fits into a population of other numbers is the real question.

“All these make you very aware of error in both reading and calibration. Digital instruments are nice but mislead newbies into believing they are exact and perfectly calibrated. Same thing with statisticians. A measurement is a number that is perfect and how it fits into a population of other numbers is the real question.”

To expand further: If multiple measurements are made then the errors in the multiple measurements will be random and the central limit theorem can be used to determine a mean. The problem is that there is also an uncertainty factor associated with the instrument itself. That mean of a normal distribution may or may not be accurate. It may be precise but you don’t know the accuracy because the true value can be anywhere in the uncertainty interval. The uncertainty interval is not random and can’t be reduced by the central limit theorem. It can only be reduced by comparing the measurement results with an external calibration standard. And even then some uncertainty remains. In a digital meter the oscillator driving the counter window can drift with age, temperature, humidity, etc. always leaving some uncertainty behind even after being recently calibrated against a standard (the standard being the physical world and not a computer model). When that residual uncertainty is used in a sequential manner, e.g. the first measurement stage drives a second measurement stage, etc, the uncertainty adds, it doesn’t stay static and it doesn’t reduce.
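The distinction drawn above between random reading error and a fixed instrument offset can be illustrated with a small simulation. This is only a sketch under stated assumptions: the true value, the offset, and the noise level are hypothetical numbers, and the instrument's uncertainty is modeled as one unknown constant bias, which is a simple reading of the comment and not a full uncertainty analysis.

```python
import random
import statistics

def simulate_readings(true_value, offset, noise_sd, n, seed=0):
    """Return n readings: true value + fixed instrument offset + random noise."""
    rng = random.Random(seed)
    return [true_value + offset + rng.gauss(0.0, noise_sd) for _ in range(n)]

# Hypothetical numbers, chosen only for illustration.
TRUE_VALUE = 20.0   # the quantity being measured
OFFSET = 0.3        # fixed calibration bias, unknown to the operator
NOISE_SD = 0.5      # random reading error (standard deviation)

for n in (10, 1000, 100000):
    readings = simulate_readings(TRUE_VALUE, OFFSET, NOISE_SD, n)
    mean = statistics.fmean(readings)
    standard_error = NOISE_SD / n ** 0.5   # shrinks as n grows
    print(f"n={n:6d}  mean={mean:.4f}  standard error={standard_error:.4f}  "
          f"distance from true value={mean - TRUE_VALUE:+.4f}")
```

Under these assumptions the mean of many readings settles near the true value plus the offset. Averaging shrinks the random scatter, but the offset can only be found by comparison with an external standard.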

As a statistician and data scientist I am very aware that there is little evidence of statistical understanding in climate science. Michael Mann is not unusual in using statistical methods out of a computer program with nil understanding of what they actually involve and of the basic assumptions behind them. In particular there is no understanding amongst climate modellers of the basic rule that the more you overfit a statistical model (aka tuning, aka taking advantage of chance variation in the sample) the less likely the model will generalise to other samples (ie the future). Mathematicians and physicists are NOT the same as statisticians.

The starting point in SPC is that you know what the dimension you are measuring is supposed to be. We do not know what the correct temperature is supposed to be.

True. In real process control we have at our disposal a real process to test and build a chart upon; and then we test exactly the same process sample by sample. In climate science we have only models to build the chart and compare them to observations which are different, of course–yet, the two seem similar in important ways.

The irony is that the warmists think they are forecasting temperature.

But all they are doing is forecasting the rise in CO2 concentration.

I want to open a can of worms, here.

But is it real?

The unspoken assumption here is that the data is Gaussian. What this means is the data set has a central tendency, or a mean value, or a tendency more or less steadily increasing or decreasing. Very important is the fact that deviations from said core values are “random” or “randomly distributed” or “Gaussian”. Then we base all our calculations on Gaussian statistics, including mean, standard deviation, 95% confidence intervals, and the revered “wee p values”. And so on.

But what happens when the data distribution is not “random” or “Gaussian”? What if there are well known influences on the measured parameter that are absolutely *not* random? Like perhaps a train of El Nino – La Nina cycles. A huge Super El Nino right in the middle of the record. A weird aborted El Nino – Pacific Hot Pool – real El Nino at the end of the record. Not predicted, not necessarily well understood, but *not* Gaussian distribution.

Now what can we say about the chances of this, or of that, or in control, or out of control, or the chance of 0.5 degree difference?

Statisticians: Have at it!

Well, you do open a can of worms. Petr Beckmann once called the tendency to over use the normal distribution to calculate uncertainty limits and other characteristics as “the Gaussian disease”. Let’s try to quantify things…

Assume mean Earth temperature is known as well as 0.05C to 95% confidence. Presume these researchers are using Student’s t because no one in their right mind would use a Z-value — the variance is actually unknown and has to be calculated from the sample. Then the probability that two independent measures of mean temperature would vary by the 0.12C shown in Figure 2 in year 2010 is less than 50 in 1,000. It’s improbable, which is what SPC focuses on as an indicator of trouble.

Because we are already using a “fat tailed” distribution in our adoption of Student’s t, then what else should we consider? We might look at using statistics which arise from a 1/f process, but in this case the mean value depends on the time span chosen for observations and one has to use a Monte-Carlo method to find expected deviations, like doing things in financial engineering.
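The arithmetic behind the 0.12C comparison above can be sketched in a few lines. The comment does not give the degrees of freedom for Student's t, so this sketch substitutes a normal approximation (a labeled assumption); a t distribution with few degrees of freedom has fatter tails and would likely give a larger probability, which is consistent with the looser bound of 50 in 1,000 quoted above.

```python
import math

def two_sided_normal_tail(z):
    """Probability that a standard normal variable exceeds |z| in magnitude."""
    return math.erfc(abs(z) / math.sqrt(2.0))

# Values taken from the comment above.
half_width_95 = 0.05      # 95% uncertainty of one global mean estimate, in C
observed_gap = 0.12       # difference between the two estimates, in C

# Assumption: normal errors, so the 95% half-width is about 1.96 sigma.
sigma_one = half_width_95 / 1.96
# The difference of two independent estimates has sqrt(2) times the sigma.
sigma_diff = math.sqrt(2.0) * sigma_one

z = observed_gap / sigma_diff
p = two_sided_normal_tail(z)
print(f"gap is {z:.2f} sigma of the difference, two-sided probability {p:.5f}")
```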

Thanks for the cogent and interesting reply.

The beauty of using x-bar charts is that they are averages. And the average of even extremely non-Gaussian distributions rapidly tends toward Gaussian, thereby allowing these techniques to be quite useful still. As a Senior Member of the American Society for Quality (the ASQ.org mentioned above) and Certified Quality Engineer (CQE), I have spent a lot of time using Dr Shewhart’s work. He was one of the founders of the society.
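For readers who have not built one, the x-bar chart mentioned above can be sketched as follows. This is a minimal illustration and not anything from the essay: the function name and the sample data are hypothetical, and it assumes subgroups of exactly five measurements so that the standard tabulated constant A2 = 0.577 applies.

```python
import statistics

A2_N5 = 0.577  # standard x-bar chart constant for subgroups of size 5

def xbar_limits(subgroups):
    """Return (lower limit, center line, upper limit) for an x-bar chart."""
    if any(len(g) != 5 for g in subgroups):
        raise ValueError("this sketch assumes subgroups of exactly 5 points")
    means = [statistics.fmean(g) for g in subgroups]
    ranges = [max(g) - min(g) for g in subgroups]
    center = statistics.fmean(means)        # grand mean of subgroup averages
    mean_range = statistics.fmean(ranges)   # average within-subgroup range
    return center - A2_N5 * mean_range, center, center + A2_N5 * mean_range

# Hypothetical in-control data: four subgroups of five measurements each.
samples = [
    [10.1, 9.9, 10.0, 10.2, 9.8],
    [10.0, 10.1, 9.9, 10.0, 10.0],
    [9.9, 10.2, 10.1, 9.8, 10.0],
    [10.0, 9.9, 10.1, 10.0, 10.1],
]
lcl, center, ucl = xbar_limits(samples)
print(f"LCL={lcl:.3f}  center={center:.3f}  UCL={ucl:.3f}")
```

A later subgroup average falling outside the two limits would be the kind of improbable event a control chart is meant to flag.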

“The beauty of using x-bar charts is that they are averages. And the average of even extremely non-Gaussian distributions rapidly tends toward Gaussian, thereby allowing these techniques to be quite useful still.”

How can the average of a uniform distribution rapidly trend toward a Gaussian distribution? Every point in a uniform distribution has an equal probability making an average somewhat meaningless.

“How can the average of a uniform distribution rapidly trend toward a Gaussian distribution?”

The average of points taken from a uniform distribution tends rapidly towards Gaussian. The average of two is a triangular distribution. The average of n is just the uniform distribution convolved with itself n times, or the B-spline distribution.

Here is the average of four – a Parzen window shape. Already pretty close to Gaussian.

“The average of points taken from a uniform distribution tends rapidly towards Gaussian. ”

Huh? The average of points taken from a uniform distribution gives a median value but it doesn’t change the uniform distribution into a Gaussian distribution. The median of a Gaussian distribution is the point with the highest probability of occurrence. The median of a uniform distribution has the same probability of occurrence as all other points in the distribution. They are not the same!

“The average of two is a triangular distribution. The average of n is just the uniform distribution convolved with itself n times, or the B-spline distribution.”

Exactly! You have to *do* something (i.e. convolve two rectangular windows) to convert a rectangular window into a triangle window! Taking an average and finding the median value on the x axis is *not* convolving anything!

In essence what you have thrown out is nothing more than a red herring argument. Finding a median value and convolving two windows are *not* the same thing.

This is a pertinent discussion because it leads into the use of averages. Averages (or means) hide information. It is also a difference between a mathematician and an engineer that deals with the physical world.

Is a mean of 3.5 when throwing a die meaningful in the real world? I can’t think of a reason that the mean describes anything physical about a roll of the die. One can’t even adequately assume the probability of throwing a given number from only this information. How about within one sigma? That encompasses 2, 3, 4, 5. What about 1 and 6? How about two sigmas? That encompasses all the possible values. What about the other 5%? Can you assume this is a uniform distribution from the mean and standard deviation?

Ok, I make doors. They are nominally 80 inches and I advertise them that way. I take measurements and show that the mean is 80 inches so I’m not lying. However, the doors are in reality 79 inches, 80 inches or 81 inches. What does the mean tell you? What about 1 sigma? 2 sigmas?

Information disappearing is one of the primary arguments about using Tmax and Tmin to calculate an average, just like the examples above. Does an average tell you about how many cooling days are involved? Does an average tell you about how many heating days are involved? This is important. If cooling days are going down, then Tmax is decreasing. If heating days are going up, then Tmin is going down. This is all information that is lost when performing statistical calculations.
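The single-die numbers quoted in the comment above can be checked directly. The short sketch below assumes a fair six-sided die and computes the population mean and standard deviation, then lists which faces fall within one and two sigma of the mean.

```python
import math

faces = [1, 2, 3, 4, 5, 6]   # a fair die: each face has probability 1/6

mean = sum(faces) / len(faces)                                # 3.5
variance = sum((f - mean) ** 2 for f in faces) / len(faces)   # 35/12
sigma = math.sqrt(variance)                                   # about 1.71

def faces_within(k):
    """Faces lying within k standard deviations of the mean."""
    return [f for f in faces if abs(f - mean) <= k * sigma]

print(f"mean={mean}, sigma={sigma:.3f}")
print("within 1 sigma:", faces_within(1))
print("within 2 sigma:", faces_within(2))
```

One sigma covers four of the six faces, and two sigma covers every face. The Gaussian rule of thumb of roughly 95% within two sigma does not carry over to a single die, which is the point the comment raises about what a mean and standard deviation alone can reveal.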

Nick’s got it. For a specific example, consider tossing dice. One die has an even chance of 1 to 6. Two dice yields a triangular distribution centered at 3.5 average. It doesn’t take many more to get very close to Gaussian. It’s easy to try for yourself.
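The dice example can be worked out exactly, without any simulation, by repeated convolution of the single-die distribution with itself. The sketch below assumes fair six-sided dice; the helper names are made up for illustration.

```python
from fractions import Fraction

def convolve(p, q):
    """Distribution of the sum of two independent variables with pmfs p and q."""
    out = [Fraction(0)] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            out[i + j] += a * b
    return out

def dice_sum_pmf(n):
    """Exact pmf of the sum of n fair dice; entry k is P(sum == n + k)."""
    die = [Fraction(1, 6)] * 6
    pmf = die
    for _ in range(n - 1):
        pmf = convolve(pmf, die)
    return pmf

for n in (1, 2, 4):
    pmf = dice_sum_pmf(n)
    # Report each outcome as an average (sum / n) with its probability.
    row = ", ".join(f"{(n + k) / n:.2f}: {float(pr):.3f}" for k, pr in enumerate(pmf))
    print(f"{n} dice -> {row}")
```

One die gives a flat distribution, two dice give a triangle peaked at an average of 3.5, and four dice already give a bell-like shape. The individual throws stay uniform throughout; it is the distribution of the average that changes shape.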

Nick has nothing! There is no tendency for a uniform distribution to all of a sudden become a triangle distribution or Gaussian distribution! You had to *add* a second dice in order to make that happen!

You have to convolve two rectangular windows (i.e. the same uniform distribution with itself) to get a triangle distribution. As the first window slides across the second one the area that is under both goes from zero when they first intersect to a maximum as the two windows become congruent and then decreases to zero as the two windows no longer intersect.

How is this a tendency for a uniform distribution to become Gaussian? An uncertainty interval with a uniform distribution doesn’t magically become a Gaussian distribution where the median value is the highest probability. That’s just magical thinking useful only as a rationale to try and dismiss Pat Frank’s uncertainty analysis!

“You had to *add* a second dice”

No, you can throw the same dice twice. I’ve no idea what you are on about here. The central limit proposition is simple. If you add numbers taken from any distribution, or even a mixture, the result becomes more like a normal distribution with the number in the sample. Averaging just scales by 1/N.

“No, you can throw the same dice twice. I’ve no idea what you are on about here. The central limit proposition is simple. If you add numbers taken from any distribution, or even a mixture, the result becomes more like a normal distribution with the number in the sample. Averaging just scales by 1/N.”

UNCERTAINTY IS NOT RANDOM AND IT IS NOT ERROR. The central limit theorem only works with a random distribution where values vary – i.e. measurement error of a single sample with measurements taken multiple times. The uncertainty propagation is not like throwing a dice multiple times. Have you read nothing that Dr. Frank has posted?

Why do you continue to try and troll this argument? The average of n …. infinity is 1 if n=1. You can add as many 1’s as you want or extract as many 1’s as you want but the distribution will never become more like a normal distribution! No amount of scaling will change that fact.

Tim: You are obviously completely unfamiliar with the Central Limit Theorem, which you should have learned in the first couple of weeks of an introductory statistics class.

You seem to be missing Geoff’s point completely. These charts plot a set of averages of N points each. It is indeed true, by the CLT, that regardless of the underlying distribution, the distribution of a set of the average of N (independent) samples tends toward Gaussian. (Technically, in the limit as N -> infinity, you get a Gaussian distribution.)

If you want to think of it in terms of your convolutions, keep convolving your resulting distribution with another square wave, and your result will get closer and closer to Gaussian.
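A rough way to see the repeated convolution at work, sketched in Python under my own assumptions: the helper names are hypothetical, and "closeness" is measured simply as the largest gap between the exact distribution of the sum of `n_dice` dice and a normal density with the same mean and variance.

```python
import math

def convolve(p, q):
    """Discrete convolution of two probability lists."""
    out = [0.0] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            out[i + j] += a * b
    return out

def pmf_of_sum(n_dice):
    """Exact distribution of the sum of n_dice fair dice (index k is sum n_dice + k)."""
    die = [1 / 6] * 6
    pmf = die
    for _ in range(n_dice - 1):
        pmf = convolve(pmf, die)  # convolve with the square wave once more
    return pmf

def max_gap_to_normal(n_dice):
    """Largest absolute gap between the exact pmf and a matched normal density."""
    mean = 3.5 * n_dice
    var = n_dice * 35 / 12  # variance of one fair die is 35/12
    gap = 0.0
    for k, p in enumerate(pmf_of_sum(n_dice)):
        x = n_dice + k
        density = math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)
        gap = max(gap, abs(p - density))
    return gap

if __name__ == "__main__":
    for n in (1, 2, 5, 10):
        print(n, round(max_gap_to_normal(n), 5))
```

The gap shrinks as more dice are added. This is a numerical illustration for one particular starting distribution, not a proof.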

Do you really believe that if you plotted the results of a set of averages of 100 dice tosses each, you would not get a Gaussian distribution???
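The experiment in that question is easy to simulate. The following is a minimal sketch with assumed settings (5000 averages of 100 tosses each, a fixed seed, and my own function names). It compares the spread of the averages with the value expected from a single die's standard deviation, sqrt(35/12) divided by sqrt(100).

```python
import math
import random
import statistics

def sample_means(n_per_mean=100, n_means=5000, seed=1):
    """Return n_means averages, each taken over n_per_mean fair die tosses."""
    rng = random.Random(seed)
    return [
        statistics.fmean([rng.randint(1, 6) for _ in range(n_per_mean)])
        for _ in range(n_means)
    ]

def fraction_within_one_sd(means, n_per_mean=100):
    """Share of averages within one theoretical standard deviation of 3.5."""
    sd_theory = math.sqrt(35 / 12) / math.sqrt(n_per_mean)
    return sum(abs(m - 3.5) <= sd_theory for m in means) / len(means)

if __name__ == "__main__":
    means = sample_means()
    print("mean of averages:", statistics.fmean(means))
    print("std dev of averages:", statistics.stdev(means))
    print("fraction within one sd:", fraction_within_one_sd(means))
```

For an exactly Gaussian variable the last figure would be about 68%. The individual tosses stay uniform; it is the averages that cluster around 3.5.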

“You are obviously completely unfamiliar with the Central Limit Theorem, which you should have learned in the first couple of weeks of an introductory statistics class.”

When you have to resort to an ad hominem attack you have already lost the argument.

“These charts plot a set of averages of N points each. It is indeed true, by the CLT, that regardless of the underlying distribution, the distribution of a set of the average of N (independent) samples tends toward Gaussian. (Technically, in the limit as N -> infinity, you get a Gaussian distribution.)”

Not for a uniform distribution. No matter how many samples you take of a population with a uniform distribution your mean will always be the same. The average of those means will always be the same. If the uniformity is normalized to a value of 1 then any single sample will also be one. The average of any group of samples will always be one. The average of any number of sample groups will always be one. The standard deviation will always be zero. No matter what you do you will be unable to come up with a normal, i.e. Gaussian, distribution of values.

“Do you really believe that if you plotted the results of a set of averages of 100 dice tosses each, you would not get a Gaussian distribution???”

Of course you would. The issue is that each individual dice roll gives a random value. This applies to a distribution of errors as well. But this is all a red herring when it comes to calculating uncertainty. UNCERTAINTY IS NOT A MEASURE OF ERROR. It doesn’t have a random distribution of any kind. It tells you the interval in which the truth lies, not what the truth is.

PS – convolution of a uniform distribution was not my idea, it was Nick’s. And it doesn’t apply either in the case of uncertainty.

Tim – You say: “When you have to resort to an ad hominem attack you have already lost the argument.”

No, it was a logical deduction based on your arguments. And your subsequent arguments only confirm the deduction to a higher degree.

If you objected to Geoff’s very valid point, you cannot really understand the CLT and its applicability.

You may have misunderstood the point Geoff was trying to make. Like Geoff, I have done many of these charts over the years, and his point as far as it goes is completely valid. In objecting to it for whatever reason, you make yourself look very foolish.

“No, it was a logical deduction based on your arguments. And your subsequent arguments only confirm the deduction to a higher degree.

If you objected to Geoff’s very valid point, you cannot really understand the CLT and its applicability.”

I note that you did not actually refute my assertions about 1. the uniform distribution and 2. the difference between error and uncertainty.

“You may have misunderstood the point Geoff was trying to make. Like Geoff, I have done many of these charts over the years, and his point as far as it goes is completely valid. In objecting to it for whatever reason, you make yourself look very foolish.”

The point (as far as it goes) is 1. wrong and 2. inapplicable in this case, it is a red herring. Again, uncertainty is not error. The only one here that is looking foolish is you because you can’t refute any assertion I have made.

What Tim is trying to explain is a distribution that looks like a square wave. In other words, every value in the interval has the same frequency of occurring, i.e., “1”. If you plot the frequency of occurrence of each number of a single die, you will find they tend toward equal frequencies and therefore a flat distribution.

That is a perfect illustration of uncertainty. That is, any value in the interval is equally possible. Pick any integer number from the die, any number, and tell why it is more likely to occur than any other number.

Using statistical calculations to attempt to come up with descriptions of the distribution won’t tell you a thing. First, you’re dealing with integers and the mean is not an integer – meaningless.

That is what overthinking statistics will do.

If you end up with a distribution like this in SPC you need to evaluate what you are measuring and with what. You won’t be able to recognize a process approaching out of control until all of a sudden it is.

What would help here is to plot the distribution of the propagation of errors. Not bound it. That’s already been done. Bounds without a distribution are not helping me. And if it is a propagation of errors, follow through and give us a distribution or at least a typical distribution. Or, say that no distribution of propagated errors can exist.

Tim – Wow, just wow! Not only did I refute your point, you AGREED that I refuted it. (Although you apparently didn’t — and still don’t — understand this.)

Let’s review. Geoff made the very simple observation that one of the nice things about these SPC charts is that, by using averages consisting of multiple individual samples each, these averages tend toward Gaussian distributions, even if the underlying distribution is NOT Gaussian.

While a side issue to the main point of the original post, this is an absolutely correct observation that anyone who has understood a very basic statistics course will recognize — it is a simple illustration of the Central Limit Theorem.

After you objected to Geoff’s assertion, Nick and I explained it more carefully. I brought up the example of dice toss results, where the distribution of each individual toss is uniform, but the distribution of sets of N tosses is not.

I specifically used the example of sets of N=100 averages, asking if the distribution of these averages would be Gaussian. You answered: “Of course you would.”

That is all that Geoff, Nick and I have been arguing. That’s it! And you agree!

None of us made the argument that the mean would change, as you seem to think we have.

None of us made any assertion about error versus uncertainty, as you seem to think we have.

I’m sorry, but you remain completely confused about a very basic statistical concept.

“Let’s review. Geoff made the very simple observation that one of the nice things about these SPC charts is that, by using averages consisting of multiple individual samples each, these averages tend toward Gaussian distributions, even if the underlying distribution is NOT Gaussian.”

As Jim Gorman tried to explain to you this just isn’t the case. If you roll a die 1000 times the probability for each side of the die coming up is 1/6. The probability for a one is 1/6. The probability for a 6 is 1/6. So you have 1000 rolls. You can take 100 groups of 100 samples out of those 1000 rolls, all equal to 1/6, average each group to get [100*(1/6)]/100 = 1/6, and then average the average of each group. You will wind up with 1/6, a constant, not a Gaussian or even near-Gaussian distribution.

“While a side issue to the main point of the original post, this is an absolutely correct observation that anyone who has understood a very basic statistics course will recognize — it is a simple illustration of the Central Limit Theorem.”

It is *NOT* simple at all. As I just showed you. In order to use the central limit theorem to generate a near-Gaussian distribution you must have a random distribution of some kind to begin with! If you have a uniform distribution no amount of sampling and averaging will give you anything but the same uniform distribution!

“After you objected to Geoff’s assertion, Nick and I explained it more carefully. I brought up the example of dice toss results, where the distribution of each individual toss is uniform, but the distribution of sets of N tosses is not.”

You claimed this but you never proved it. As I show above no amount of sampling and averaging will change a uniform distribution into anything other than a uniform distribution. Show me how my math is wrong in the above example!

“None of us made the argument that the mean would change, as you seem to think we have.”

First, I haven’t agreed with anything you or Nick have said on this subject. Second, there is *NO* mean in a uniform distribution!

“None of us made any assertion about error versus uncertainty, as you seem to think we have”

Then what is the purpose of trying to confuse the issue? Are you just trolling the thread to see what kind of confusion you can sow?

“I’m sorry, but you remain completely confused about a very basic statistical concept.”

I’m sorry. The only one confused here is you. A uniform population has no random distribution that can be projected into a Gaussian distribution using the central limit theorem. That would mean that some of the population would have to have a different probability of occurrence than the other members, which is exactly what a uniform distribution is *NOT*.

Actually, to a mathematician, the result of averaging a uniform distribution is a limiting case of a Gaussian distribution. As the variation in the distribution reduces, so does the standard deviation. The result of averaging 3 samples of a uniform distribution with members having the value of “10” would be a Gaussian distribution with a mean of 10 and a standard deviation of “0”.

Geoff,

A standard deviation of zero is not a Gaussian distribution. It is a uniform distribution.

Tim — I cannot believe that you are seriously arguing these points!

You say: “In order to use the central limit theorem to generate a near-Gaussian distribution you must have a random distribution of some kind to begin with! If you have a uniform distribution no amount of sampling and averaging will give you anything but the same uniform distribution!”

You are arguing that random distributions and uniform distributions are mutually exclusive. But the very example at hand — that of the toss of a die — is both random (you don’t know what value you are going to get on any particular toss) and uniform (you have an equal chance of each possible value).

In fact, it is THE textbook example of such a distribution, as anyone with even a passing acquaintance with basic statistics would know.

Then you say: “no amount of sampling and averaging will change a uniform distribution into anything other than a uniform distribution. Show me how my math is wrong in the above example!”

OK, I’ll be glad to help! Let’s take the case of N=2 dice tosses. You have 6 times the chance of rolling a 7 (average 3.5) as you do of rolling a 2 (average 1.0) or a 12 (average 6.0). The distribution has changed from uniform (flat) for N=1 to triangular for N=2, as we keep explaining to you. Most seven-year-olds who play dice games realize this, at least qualitatively, but it is beyond you!
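The six-to-one ratio can be confirmed by listing all 36 equally likely outcomes of two tosses. This is a small sketch; the function name `two_dice_counts` is mine.

```python
from collections import Counter
from itertools import product

def two_dice_counts():
    """Count how many of the 36 equally likely outcomes give each sum."""
    return Counter(a + b for a, b in product(range(1, 7), repeat=2))

if __name__ == "__main__":
    counts = two_dice_counts()
    for total in sorted(counts):
        # The average of the two tosses is total / 2.
        print(total, total / 2, counts[total])
```

A sum of 7 (average 3.5) arises in six ways, while 2 and 12 arise in one way each.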

And you say: “there is *NO* mean in a uniform distribution!”

Hogwash! There IS a mean to any distribution. In the case of a die toss, the mean is (1+2+3+4+5+6)/6 = 3.5. Elementary school math.

These are basic, basic points, but you are completely unable to grasp them.

Ed,

“You are arguing that random distributions and uniform distributions are mutually exclusive”

That is *not* what I am saying at all! The chance of rolling any specific integer on the die is random, but the probability of all the integers being rolled is equal. No standard deviation at all, it’s ZERO. All probabilities are equal to 1/6. When you graph the number rolled on the x-axis and the probability on the y-axis you get a rectangle. You can select as many samples of arbitrary size out of the population as you would like, average each sample and then average the averages. You will wind up with the very same rectangle. There will be no standard deviation at all.

“OK, I’ll be glad to help! Let’s take the case of N=2 dice tosses. You have 6 times the chance of rolling a 7 (average 3.5) as you do of rolling a 2 (average 1.0) or a 12 (average 6.0).”

You just proved my point. You generated a non-uniform distribution by using two dice and taking their sum!

“The distribution has changed from uniform (flat) for N=1 to triangular for N=2, as we keep explaining to you”

ROFL!!! No, that is *not* what you’ve been asserting. You have been asserting that a uniform distribution can be changed into a Gaussian distribution using the central limit theorem! Then you try to prove it by generating a non-uniform distribution to work with!

“Hogwash! There IS a mean to any distribution. In the case of a die toss, the mean is (1+2+3+4+5+6)/6 = 3.5. Elementary school math.”

How do you roll a 3.5 on a die? Do you cut off the corners so it can land halfway in between two numbers? If the mean value is an impossibility then is it actually a mean? You’ve just demonstrated the main difference between a mathematician or computer programmer and an engineer! The mathematician/computer programmer doesn’t care if what they calculate has any relationship to the real world! By Jiminy they calculate an answer and it is precise and accurate and that is the end of it!

Now, instead of trying to prove me wrong about a uniform distribution by starting with a non-uniform distribution why don’t you stop and think about it for a minute?