Reposted from DrRoySpencer.com

August 2nd, 2019 by Roy W. Spencer, Ph. D.

July 2019 was probably the 4th warmest of the last 41 years. Global “reanalysis” datasets need to start being used for monitoring of global surface temperatures.

We are now seeing news reports (e.g. CNN, BBC, Reuters) that July 2019 was the hottest month on record for global average surface air temperatures.

One would think that the very best data would be used to make this assessment. After all, it comes from official government sources (such as NOAA, and the World Meteorological Organization [WMO]).

But current official pronouncements of global temperature records come from a fairly limited and error-prone array of thermometers which were never intended to measure global temperature trends. The global surface thermometer network has three major problems when it comes to getting global-average temperatures:

(1) The urban heat island (UHI) effect has caused a gradual warming of most land thermometer sites due to encroachment of buildings, parking lots, air conditioning units, vehicles, etc. These effects are localized, not indicative of most of the global land surface (which remains mostly rural), and not caused by increasing carbon dioxide in the atmosphere. Because UHI warming “looks like” global warming, it is difficult to remove from the data. In fact, NOAA’s efforts to make UHI-contaminated data look like rural data seem to have had the opposite effect. The best strategy would be to simply use only the best (most rural) sited thermometers. This is currently not done.

(2) Ocean temperatures are notoriously uncertain due to changing temperature measurement technologies (canvas buckets thrown overboard to get a sea surface temperature sample long ago, ship engine water intake temperatures more recently, buoys, satellite measurements only since about 1983, etc.)

(3) Both land and ocean temperatures are notoriously incomplete geographically. How does one estimate temperatures in a 1 million square mile area where no measurements exist?

There’s a better way.

A more complete picture: Global Reanalysis datasets

(If you want to ignore my explanation of why reanalysis estimates of monthly global temperatures should be trusted over official government pronouncements, skip to the next section.)

Various weather forecast centers around the world have experts who take a wide variety of data from many sources and figure out which ones have information about the weather and which ones don’t.

But, how can they know the difference? Because good data produce good weather forecasts; bad data don’t.

The data sources include surface thermometers, buoys, and ships (as do the “official” global temperature calculations), but they also add in weather balloons, commercial aircraft data, and a wide variety of satellite data sources.

Why would one use non-surface data to get better surface temperature measurements? Since surface weather affects weather conditions higher in the atmosphere (and vice versa), one can get a better estimate of global average surface temperature if you have satellite measurements of upper air temperatures on a global basis and in regions where no surface data exist. Knowing whether there is a warm or cold airmass there from satellite data is better than knowing nothing at all.

Furthermore, weather systems move. And this is the beauty of reanalysis datasets: Because all of the various data sources have been thoroughly researched to see what mixture of them provides the best weather forecasts (including adjustments for possible instrumental biases and drifts over time), we know that the physical consistency of the various data inputs was also optimized.

Part of this process is making forecasts to get “data” where no data exists. Because weather systems continuously move around the world, the equations of motion, thermodynamics, and moisture can be used to estimate temperatures where no data exists by doing a “physics extrapolation” using data observed on one day in one area, then watching how those atmospheric characteristics are carried into an area with no data on the next day. This is how we knew there were going to be some exceedingly hot days in France recently: a hot Saharan air layer was forecast to move from the Sahara desert into western Europe.
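As a rough illustration of the “physics extrapolation” idea, here is a toy sketch of one-dimensional advection using a first-order upwind scheme. It is not how any forecast center actually works: real systems solve the full equations of motion, thermodynamics, and moisture in three dimensions. The function name `advect_upwind`, the grid spacing, the wind speed, and the temperatures are all hypothetical values chosen for the example.

```python
def advect_upwind(temps, wind_speed, dx, dt, steps):
    """Carry a 1D temperature field downwind with a first-order upwind scheme.

    Assumes wind_speed > 0 (flow toward increasing index) and holds the
    upwind boundary cell fixed. Stable when wind_speed * dt / dx <= 1.
    """
    courant = wind_speed * dt / dx
    if not 0 < courant <= 1:
        raise ValueError("need 0 < wind_speed * dt / dx <= 1")
    field = list(temps)
    for _ in range(steps):
        prev = field[:]
        for i in range(1, len(field)):
            # Each cell relaxes toward the value of its upwind neighbour.
            field[i] = prev[i] - courant * (prev[i] - prev[i - 1])
    return field


# Hypothetical setup: hot air observed in cells 0-2, no observations beyond,
# where a cooler first guess of 20 C is assumed.
initial = [35.0, 35.0, 35.0, 20.0, 20.0, 20.0, 20.0, 20.0]

# 10 m/s wind, 100 km cells, 10,000 s steps: the air moves one cell per step.
later = advect_upwind(initial, wind_speed=10.0, dx=100_000.0, dt=10_000.0, steps=3)
print(later)
```

With these made-up numbers the hot air mass reaches three cells farther downwind after three steps, which is the sense in which observations in one area can inform estimates in a neighbouring area that has none.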

This kind of physics-based extrapolation (which is what weather forecasting is) is much more realistic than (for example) using land surface temperatures in July around the Arctic Ocean to simply guess temperatures out over the cold ocean water and ice where summer temperatures seldom rise much above freezing. This is actually one of the questionable techniques used (by NASA GISS) to get temperature estimates where no data exists.

If you think the reanalysis technique sounds suspect, once again I point out it is used for your daily weather forecast. We like to make fun of how poor some weather forecasts can be, but the objective evidence is that forecasts out 2-3 days are pretty accurate, and continue to improve over time.

The Reanalysis picture for July 2019

The only reanalysis data I am aware of that is available in near real time to the public is from WeatherBell.com, and comes from NOAA’s Climate Forecast System Version 2 (CFSv2).

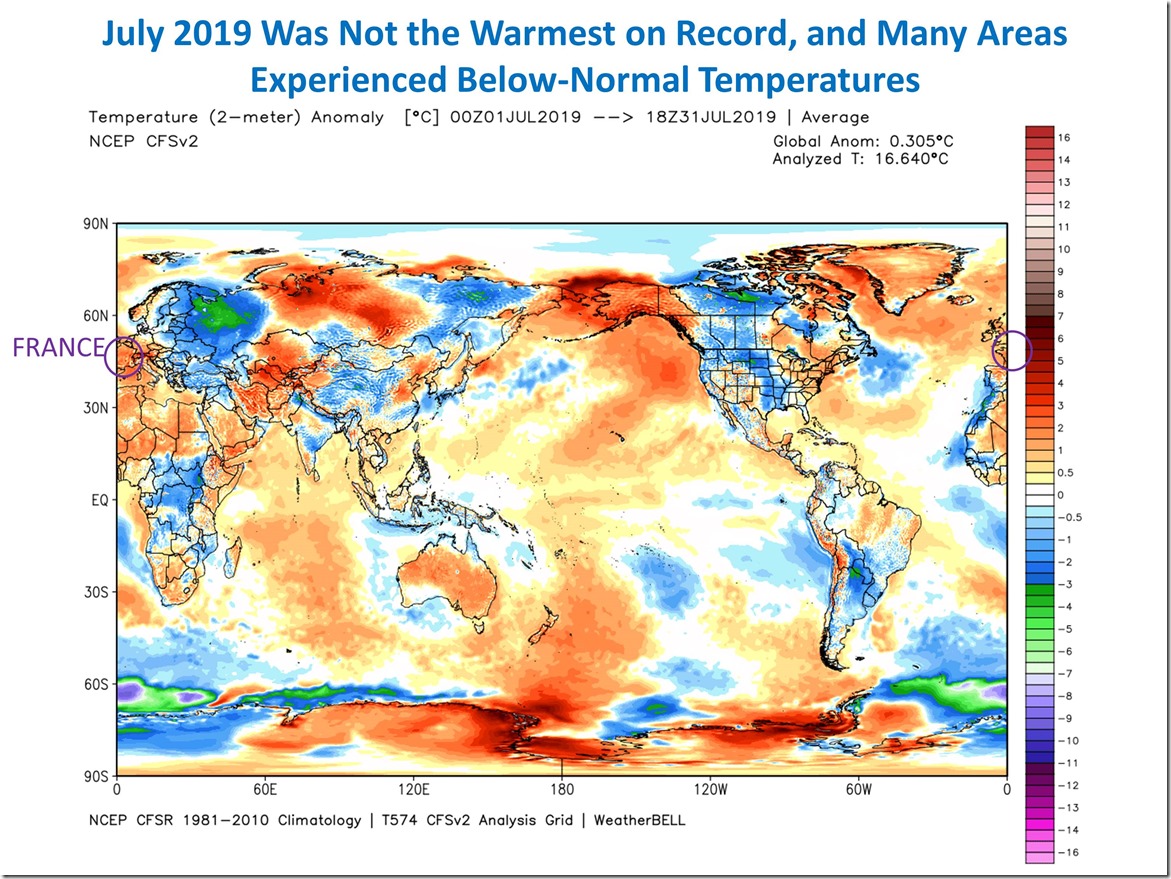

The plot of surface temperature departures from the 1981-2010 mean for July 2019 shows a global average warmth of just over 0.3 C (0.5 deg. F) above normal:

Note from that figure how distorted the news reporting was concerning the temporary hot spells in France, which the media reports said contributed to global-average warmth. Yes, it was unusually warm in France in July. But look at the cold in Eastern Europe and western Russia. Where was the reporting on that? How about the fact that the U.S. was, on average, below normal?

The CFSv2 reanalysis dataset goes back to only 1979, and from it we find that July 2019 was actually cooler than three other Julys: 2016, 2002, and 2017, and so was 4th warmest in 41 years. And being only 0.5 deg. F above average is not terribly alarming.
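The Celsius and Fahrenheit figures quoted here are anomalies (differences from a baseline), so they convert with the 9/5 scale factor only, without the 32-degree offset used for absolute readings. A minimal sketch, with function names that are purely illustrative:

```python
def c_to_f_absolute(temp_c: float) -> float:
    """Convert an absolute temperature reading from Celsius to Fahrenheit."""
    return temp_c * 9.0 / 5.0 + 32.0


def c_to_f_difference(delta_c: float) -> float:
    """Convert a temperature difference (an anomaly); no 32-degree offset applies."""
    return delta_c * 9.0 / 5.0


# The article's "just over 0.3 C" is a departure from the 1981-2010 mean.
anomaly_f = c_to_f_difference(0.3)
print(f"0.3 C anomaly = {anomaly_f:.2f} F")
```

A departure of 0.3 C therefore corresponds to roughly half a degree Fahrenheit, consistent with the 0.5 deg. F quoted above.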

Our UAH lower tropospheric temperature measurements had July 2019 as the third warmest, behind 1998 and 2016, at +0.38 C above normal.

Why don’t the people who track global temperatures use the reanalysis datasets?

The main limitation with the reanalysis datasets is that most only go back to 1979, though I believe at least one goes back to the 1950s. Since people who monitor global temperature trends want data as far back as possible (at least 1900 or before) they can legitimately say they want to construct their own datasets from the longest record of data: from surface thermometers.

But most warming has (arguably) occurred in the last 50 years, and if one is trying to tie global temperature to greenhouse gas emissions, the period since 1979 (the last 40+ years) seems sufficient since that is the period with the greatest greenhouse gas emissions and so when the most warming should be observed.

So, I suggest that the global reanalysis datasets be used to give a more accurate estimate of changes in global temperature for the purposes of monitoring warming trends over the last 40 years, and going forward in time. They are clearly the most physically-based datasets, having been optimized to produce the best weather forecasts, and are less prone to ad hoc fiddling with adjustments to get what the dataset provider thinks should be the answer, rather than letting the physics of the atmosphere decide.

The alarmist spin machine has lost no time.

“July was hottest month EVER, EVER, EVER, recorded on Earth…”

https://www.independent.co.uk/environment/july-weather-hottest-month-ever-climate-change-heatwave-global-warming-wmo-a9035356.html

Complete with parched earth and bleached animal bones.

”Complete with parched earth and bleached animal bones.”

And fish bones no less!…which means it’s probably the driest, droughtiest, most desiccating, river destroying month too!

I think it’s easier to convince young people that warming is a threat because they have a short “temperature memory.” I, being 56 years old, and having been a competitive runner when young, recall very clearly how hot or cool it was in the past.

Utter nonsense. UAH multiply adjusted troposphere temps show nothing reliable. this from a politically motivated observer.

An area of Siberia the size of Belgium is on fire, despite floods across the region… still lowest arctic sea ice extent, Greenland record melt and record temps across a wide area of western Europe, in a second heatwave for the summer.

And of course Berkeley Earth long since proved that UHI is not distorting the global temp records.

(You should refrain from attacking a scientist without evidence. You do this again, it will be moderated) SUNMOD

Now tell us about the many cold records that were broken across the globe this summer. That’s what a meridional jet stream does: hot air moves north and cold air moves south.

ok, tell us. (hint: none)

Several low temperature records were broken near where I live.

Here are a few. This doesn’t even cover Europe.

US

Oklahoma City, OK — new record 59F, old record 61F

Lawton, OK — new record 58F, old record 60F

McAlester, OK — new record 59F, old record 63F

Abilene, TX — new record 62F, old record 65F

San Angelo, TX — new record 57F, old record 58F

Lufkin, TX — new record 50F, old record 60F

Atlas, OK — new record 58F

Decatur, AL — new record 58F

Salina, KS — new record 58F

Anderson, SC — new record 61F

North Little Rock, AR — new record 64F

A new record all-time daily low temperature was set in International Falls on Tuesday, July 30 — the mercury dipped to 2.8C (37F), busting the previous record of 3.3C (38F) set way back in 1898.

With a low of 14.4C (58F) on Thursday, July 25, the Austin-Bergstrom International Airport thermometer smashed its previous all-time monthly low of 15.6C (60F) set in 2013, making it Austin’s coldest July temperature on record in books dating back to World War II.

Camp Mabry observed 17.2C (63F) which comfortably surpassed the previous daily record low of 18.3C (65F) from 1924.

Residents of San Angelo, a city on the Concho River, welcomed the summer-respite as the cold front dropped temperatures to a sweater-grabbing 13.9C (57F) — the coolest July 24 observation on record, narrowly busting the previous record low of 14.4C (58F) set in 1915 (solar minimum of cycle 14).

In addition, nearby Abilene also broke its previous all-time record for July 24, of 18.3C (65F) from 1989, with Wednesday morning’s low of 16.7C (62F).

Canada

https://pbs.twimg.com/media/EAqFNHpX4AATyhj?format=jpg&name=small

Russia

On July 24, in Susuman, a record breaking -4.1C (24.6F) was observed — busting the previous daily record of -3.5C set back in 1973.

In Seimchan, -2.9C (26.8F) beat the previous record for the date of -2.4C (27.7F) from 1991.

In Brokhovo, the new record low for July 24 is now 4C (39.2F), which surpasses the 4.6C (40.3F) from 1973.

The -1.4C (29.5F) in Talon comfortably ousted the -0.6C (30.9F) from 1973.

Tompo’s -0.3C (31.5F) bumped-off the previous all-time record 0.3C (32.5F) from 1977.

While in Zyryanka, the mercury fell to 2.7C (36.9F), busting 1956’s 2C (35.6F).

You’re comparing individual daily temperatures associated with transient events (e.g. a strong midsummer cold front) to mean monthly temperatures. Smh.

Are you shaking your head about the few days of transient hot weather in France and Greenland, too?

“Are you shaking your head about the few days of transient hot weather in France and Greenland, too?”

Good comeback! 🙂

We haven’t had much of a summer in Washington either

Here’s some more

Connecticut – June 4, 2019

In Danbury, the mercury plunged to 4.4C (40F) — smashing the city’s previous all-time June 04 low of 15C (59F) set back in 2003.

Bradley Int. Airport toppled its previous cold record with 10C (50F).

New Haven’s 10.6C (51F) set a new all-time daily record low for the city.

Stratford’s measurement of 12.2C (54F) was also a new record.

Brasil

Urupema, a municipality in the state of Santa Catarina in southern Brazil, recorded its coldest ever temperature on the morning of July 07. The mercury plunged to -9.2C (15.4F) at the Epagri-Ciram weather station, making it the lowest temperature ever recorded there, comfortably busting the -8.8C (16.2F) set on June 28, 2011.

Slovakia

The mercury in Poprad, a city located at the foot of Slovakia’s High Tatras Mountains, dropped to -1C (30.2F) at a height of 5cm above ground level on Wednesday morning. “Two metres above the ground, the temperature [was] 3.7C (38.7F) in Poprad and 6.3C (43.3F) in Kamenica nad Cirochou,” reported the SHMÚ.

Those readings marked the coldest July morning for both weather stations since records began.

More Russia

In Petrozavodsk, the temperature dropped to 3.2C (37.8F) last Friday — comfortably busting the city’s previous July record low of 3.6C (38.5F).

Cherepovets, a city in Vologda Oblast, set a new July low of 1.8C (35.2F) — annihilating the previous record of 5C (41F) set in 1968.

In Tikhvin, a town in Leningrad Oblast, the 2.4C (36.3F) measured last Friday smashed the previous record of 5.3C (41.5F) set way back in 1958.

While the 5.4C (41.7F) observed in the riverside city of Kostroma just pipped the previous 1968 record of 5.5C (41.9F).

Netherlands

At the former Twente airbase, the temperature plunged to -1.6C (29F) on July 04, which, according to Weerplaza weather agency, was a new all-time record-low for the month of July, for the east of the country. The previous low was -1.5C (29.3F) measured in Gilze and Rijen on July 01, 1984 (solar minimum of cycle 21).

Meaningless.

What is the ratio of hot records to cold? How has that ratio changed over the last 100 years?

The first graph assumes a normal probability distribution for temperatures. That is an unsupported assumption. In addition what should be looked at is record high minimums, not just record low minimums. Much of the globe is seeing higher minimum cold temperatures but fewer maximum high temperatures. That alone argues against the use of a normal distribution for temperatures. Remember, your second graph shows that we are seeing fewer record high temperatures as well as fewer record cold temperatures.

The global average is going up because of higher minimums and not because of higher maximums. That is a *good* thing! It leads to longer growing seasons and higher crop yields.

Your deflection is meaningless. Climate alarmists do this all of the time. In fact they’re doing it now claiming the few days of hot weather in France and Greenland mean anything pertaining to climate.

griff:

You know I usually support your right to have your say, but I have to tell you, you went over the top.

What are you going on about? UAH data has been adjusted a couple of times for things like orbital drift compensation. Adjustments have been open and transparent and amount to a few hundredths of a degree. Try to defend NASA practice of seemingly random rewrites of their whole data set going all the way back to the beginning. These changes keep going on, and there is no explanation for any of it.

Did you just defame one of the best climate scientists in the field? Did you just accuse an outstanding researcher of falsifying his results to satisfy his political agenda?

This is a viciously inflammatory statement you have made. It was no little “throw away” smear.

You now have a choice to make.

1) Provide hard, fast proof that Dr. Spencer has corrupted his results.

2) Publicly retract that statement

griff, one or the other.

Your move.

On the weather forecasts here in the U.K. during the winter even the BBC state that the temperatures are for the towns and that rural areas will be 1-2 degrees Celsius colder. Although the difference may be a couple of degrees it makes the difference between frost and no frost in winter, but on a hot summer’s day the difference is around 7% and so the effect is reduced. However, for absolute measurements the difference between 35 and 33 becomes significant.

“…the objective evidence is that forecasts out 2-3 days are pretty accurate, and continue to improve over time.”

It depends on what your use is. If you want to know whether to wear shorts or long pants, carry an umbrella or not, or similar, then current weather forecasts are just fine.

But, look at any site like weather.com or accuweather.com and note the expected high for two days from now. Then sit back and watch how this number changes almost by the hour, sometimes by quite a bit over the two days. That doesn’t make it bad for most uses. But… for Climate analysis, don’t make me laugh.

TonyL – My initial reading of griff’s comment (“UAH multiply adjusted troposphere temps show nothing reliable. this from a politically motivated observer.”) interpreted “this” as referring to the first part. IOW a politically motivated observer (ie, griff) was expressing the opinion that UAH temps show nothing reliable. Even on re-reading, I think my interpretation seems right, because (a) Roy did not say UAH temps are unreliable and (b) griff is clearly politically motivated.

griff is of course entitled to express an opinion, but when it is self-acknowledged as politically motivated and has no supporting evidence or argument it seems pretty pointless to take it seriously.

Yeah, no. That is not what griff meant, and that is not how English is read. It is also not the first time Geoff has used such innuendo against Dr. Spencer. Don’t try to cover for him.

TonyL

Griff never has publicly apologized for saying that Susan Crockford is not a scientist. I doubt that he has the integrity to take responsibility for his ‘over the top’ behavior.

“2) Publicly retract that statement griff,”

I think that is a good idea. But don’t expect Griff to retract. He didn’t retract his smear of Dr. Susan Crockford so I doubt we will hear from him on this smear, either.

Dang! My post above appeared in the comments immediately after I posted it! And it’s not even the top of the hour! What’s going on? Is this a comment software fix or a fluke?

I don’t think I have had a post appear immediately in many, many months.

Wow, Griff, what a lot of comments you got in before leaving the building. Berkeley Earth did not prove UHI is not distorting the global temp records. Maybe they thought they did, and it connected to your thought process, but it is clear UHI produces higher recorded temperatures than same setting rural sites. I think I will get my comments from Dr. Roy, thank you.

Two points. When I drive from the city center, a city of about 700,000, to the rural golf course about 15 minutes away (same altitude), the temperature is almost always 3-5 degrees cooler. I’ve been noting this for years.

Our area in the Western US has experienced a notably cool summer. Only a couple of days over 100F, when normally there are 20 or more.

I was listening to a Tulsa weather report yesterday and the meteorologist reported that the record high temperature in Tulsa for Aug. 4, was 111F set in 1923. It was about 89F in Tulsa yesterday.

“An area of Siberia the size of Belgium is on fire”

And the central plains of the US are seeing highs in the 80’s for most of July and in the low 80’s for the first two days of August. At least 10-15degF lower than usual for the dog days of summer for the central plains.

“An area of Siberia the size of Belgium is on fire”

Griff’s childish imagination overshadows any reasonable thought one would normally expect from someone his/her age. To wit:

11,849 mi² = size of Belgium

93,628 mi² = size of Great Britain

163,696 mi² = size of California

3,797,000 mi² = size of United States

5,100,000 mi² = size of Siberia
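The comparison implied by this list can be made explicit with a quick ratio. The sketch below only reuses the areas quoted in the comment; the dictionary and helper names are illustrative.

```python
# Areas in square miles, copied from the list in the comment above.
AREAS_SQ_MI = {
    "Belgium": 11_849,
    "Great Britain": 93_628,
    "California": 163_696,
    "United States": 3_797_000,
    "Siberia": 5_100_000,
}


def share_of(region: str, whole: str) -> float:
    """Return the area of `region` as a fraction of the area of `whole`."""
    return AREAS_SQ_MI[region] / AREAS_SQ_MI[whole]


belgium_share = share_of("Belgium", "Siberia")
print(f"Belgium is {belgium_share:.2%} of Siberia's area")
```

On these figures, an area the size of Belgium is a bit under a quarter of one percent of Siberia.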

Sadly, due to metrication, the Wales unit of area is losing currency and is gradually being replaced with the Belgium. Because of the sheer number of statistics these days, it may be useful to know that one Belgium is worth 1.47 Waleses.

https://www.theguardian.com/notesandqueries/query/0,5753,-4587,00.html

H/T Tom Sutch, West Malling

griff, the EPA has done UHI studies on most major US cities…..it’s online

The EPA says UHI runs ~10-15 degrees average…and often much more

Berkeley claims that UHI is less than 5 degrees….and they adjust down for UHI 2-3 degrees

…that allows them to claim 2 things

Their claim is they adjust for UHI….and the adjustments lower the temperature

I posted this at Roy’s site in reply to David Appell, who made an irrational reply to what I wrote:

“Gee David, you still fall for their baloney. I experienced a HUGE UHI effect just two days ago.

I left the city (250,000 Metro) around 10:00 pm, going north for about 9 miles, the temperature dropped rapidly from 84 to 72 as I drove out of the city into the sparsely populated rural area, with negligible buildings and concrete.

Then after showing my two teen daughters the much darker sky for stars, double stars and such, drove home around 11:45 pm, the change in temperature was just as rapid going back up to 82 from 71.

I see the obvious UHI effect every time I drive, the range is usually around 2 degrees when I drive through the river delta.

The UHI is real and often significant. I tire of the lies Berkeley and other groups promote.”

http://www.drroyspencer.com/2019/08/july-2019-was-not-the-warmest-on-record/#comment-370482

=============================

He is so fixated on the BEST UHI baloney, that I wonder if he realizes that if UHI was properly accounted for, most of the warming would vanish, statistically.

At Roy’s site, Joseph D’Aleo made this post showing the OBVIOUS strong UHI effects of the recent European heatwave:

Joseph S. D’Aleo says:

August 2, 2019 at 12:06 PM

https://www.jpl.nasa.gov/news/news.php?feature=7445&fbclid=IwAR06X9kqUS6NWWaGRqvCQcMisTjI54eXun1BhyL3TdAmrOkMYWEHacZIrZs

NASA JPL clearly shows a large UHI contamination in the European heat wave.

====================================

The FOUR cities in the link are satellite images

Geoff,

You still have provided zero hard evidence that said wildfires, floods etc have any measured, repeatable connection to atmospheric CO2 levels.

You can stretch an old, failed hypothesis only so far before it snaps. Geoff S

That “Mankini” doesn’t look good once snapped!

Berkeley Earth proved nothing of the sort.

The Berkeley Earth dataset has been well and thoroughly criticized for its many flaws and mistakes. One of the biggest being the utterly ridiculous method it used to decide rural vs. urban stations.

Oh wow, something’s on fire. Since nothing like that has ever happened before in the history of the world, it must be caused by too much CO2.

Greenland’s melt isn’t a record, and Greenland has been overall gaining mass for the last few years. And the Arctic has been gaining ice for the last 6 or 7 years.

Yes, there were record high temperatures in a handful of locations. At the same time there were record cold temperatures in many other locations. It’s called weather. Perhaps you should try learning the difference.

BTW, I just love how you decide that anyone who disagrees with the conclusions of your religious elders, must be politically motivated.

“Is on fire” 😀 Griff you are an idiot of the highest order.

Meanwhile tell me why it was bloody COLD here in Finland in July.

Oh I get it, it was weather here in Finland, that made July cold but it was CO2 that made Siberia warm.. is that what you think? 😀

griff,

A lot of unsupported claims, as usual! If you want to be taken seriously, you should provide citations for your claims. It also helps to put the claims in context. That is, your claim about the fires in Siberia says nothing about past fire sizes and has no statistical data to indicate that it is actually abnormal behavior. That is, is the area burning something like 3 sigma larger than the past 30 years? If not, then you are just engaging in hand waving.

I love the last part of your comment where you threaten to “moderate”, i.e. forbid your opponent to speak.

Shows your real colors … Just like the German regime in the 40s!!!

“The only reanalysis data I am aware of that is available in near real time to the public”

I download NCEP/NCAR V1 daily, with about 2 days delay, and post the daily figures for global surface average here, along with monthly totals, maps etc. In that index July was second warmest since 1994 (after 2016). Earlier years are patchy. This index is a long-standing one, and has lower resolution, but that doesn’t matter for calculating a global average.

The plus point for reanalysis that it is used for forecast only works for near current years. Pre 1994, say, it has been back-calculated from whatever data is available.

“If you think the reanalysis technique sounds suspect”

I don’t but some here will, because it is effectively to a GCM (or NWP equivalent) which ingests current data. I think that is fine for its use, but there is one problem, which is homogeneity. Data sources change over the years, and the mix drifts. This isn’t a problem for forecasting, but is a problem for comparing results years apart. I don’t use reanalysis to calculate records, because I don’t think even 2016 and 2019 are really comparable. But it is great for tracking daily progress during a month. Much beyond that, I would always go back to an index calculated with known, if imperfect, instruments, rather than an unknown mix.

I must say that I think the accounts of “hottest month ever” are regrettable. They rely on superimposing the anomalies on the seasonal cycle, and given the preponderance of land in the NH, NH summer is always going to be the hottest on that measure. Anomalies are there for a purpose and are meaningful; this is not. July 2019 is nowhere near the highest anomaly.
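The distinction drawn here, between the month with the highest absolute temperature and the month with the largest anomaly, can be sketched with a small example. The numbers below are invented purely for illustration and are NOT real data: a global-mean seasonal cycle that peaks in Northern Hemisphere summer, plus one year of monthly anomalies.

```python
MONTHS = ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"]

# Invented illustrative values in deg C (not observations).
climatology = [12.0, 12.1, 12.7, 13.7, 14.8, 15.5,
               15.8, 15.6, 15.0, 14.0, 12.9, 12.2]
anomalies = [0.45, 0.50, 0.60, 0.55, 0.40, 0.45,
             0.40, 0.35, 0.40, 0.45, 0.50, 0.55]

# Absolute temperature is the seasonal baseline plus the anomaly.
absolute = [c + a for c, a in zip(climatology, anomalies)]

hottest_absolute = MONTHS[absolute.index(max(absolute))]
largest_anomaly = MONTHS[anomalies.index(max(anomalies))]

print("Hottest month in absolute terms:", hottest_absolute)
print("Month with the largest anomaly:", largest_anomaly)
```

In this made-up year July is the hottest month in absolute terms simply because the seasonal cycle peaks then, even though its anomaly is among the smaller ones.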

That a reanalysis “ingests current data” is quite a significant feature.

“Effectively to a GCM (or NWP equivalent)” but over shorter timeframes, with smaller timesteps, at a higher spatial resolution, and with a demonstrated accuracy over local and regional scales…in other words, nothing like long-term GCM results.

Nick,

Thank you for your response here.

When comparing daily temperatures at 2 locations, what rule-of-thumb error envelope do you use to be sure that there is a statistically real difference?

Geoff S

“what rule-of-thumb error envelope do you use to be sure that there is a statistically real difference?”

I don’t. “Statistically real” is relevant only if you are trying to deduce some rule from that difference that you expect would be true in such pairings in the future. We had a cold day last week. It wasn’t statistically significant; cold days happen in winter. But it was a cold day.

Nick,

Then what error number do you use for a run of the mill, daily T max temperature record of the type typically appearing in ACORN-SAT data sets? Geoff.

Geoff,

I don’t use one at all, because I am not trying to deduce anything from it. It is just an observation. It reached 15°C here yesterday; quite warm for August. I don’t need an error number to make that observation.

When you put a whole lot of numbers into an average, you might well wonder whether movements in the average are significant. That is, if July was hot globally, say, does this change our expectation about what Julys should be in future? And there are many things that might have caused this July reading to be high that won’t apply in the future. One is that the world’s thermometers just happened to be read high that month – instrument (or observer) error. Another is just the vagaries of weather. These are all lumped into a single uncertainty figure.

But it is the weather vagaries that dominate. They cause much more variability in July averages than the chance that everyone was getting a too high reading on their thermometers.

July might not have been but June was.

January 3rd warmest,

February 5th,

March 2nd,

April 2nd,

May 4th,

June was the warmest.

Judging by the “physics of the atmosphere” 2019 will probably be the warmest year too.

You’re not qualified to judge a cane toad race mate.

Probably not. Things seem to be aligning for cooler temps.

* NASA is predicting a Dalton-level minimum for SC25

* NOAA’s CFSv2 forecast shows La Nina conditions now into 2020

* Many cold records have been broken in 2019 so far

I’m loving the cool Summer here in the Midwest USA.

The Winter was warmer than usual, which is good, but Spring was too cold and rainy.

Overall, Climate Change has been good at moderating the temperatures in the Midwest, but crops don’t like this colder, wetter climate.

Yep. Beautiful summer in Wisconsin, now that the cold and heavy rains have ceased. Very cool start to summer in Washington state has transitioned to a totally normal summer now. Travels across the Dakotas, Montana, Idaho, and Iowa all demonstrated similar conditions. Cool, late, wet start to summer…..

A cool summer in the US is just weather. A hot summer in parts of Europe is proof that CO2 is gonna kill us all.

Once again Loydo demonstrates his impenetrable innumeracy.

1) The record claimed is by a thousandth of a degree or so.

2) The sensors being used are only accurate to a tenth of a degree.

3) We would need somewhere between 1000 and 10,000 more sensors to come even close to adequately monitoring the entire planet.

4) The system is so poorly maintained that individual stations often have biases of multiple degrees.

“July the 4th warmest”

If it’s good enough for Roy it’s good enough for me.

France’s record was from a low class 3 station next to a vast lorry park covered in black asphalt.

Germany’s recent record was also a defective station probably shown to be about 3 deg C above accurate readings.

Both were “validated” by the respective Met agencies.

The big lie continues even within the official records.

And the UK record came from a compromised site

I often have a look at Climate Reanalyzer. It is interesting to see how weather patterns are supposed to evolve. The choice for different variables can be made as well as for specific regions. An interesting starting point is https://climatereanalyzer.org/wx/fcst/#gfs.world-ced.t2anom Using the slide gives a good view on patterns to develop.

Maps, timeseries and correlations can be found under ‘Climate’.

Data used: https://climatereanalyzer.org/about/datasets.php

Whoever looks at the ever changing patterns realizes that ‘static views’ and simple explanations are far from real Earth’s reality. It’s all dynamics, trying to stabilize the ever changing reality of every moment, a reality that is changing daily, seasonally, over periods of years and longer. No impulse into the system – neither human nor natural – has a simple linear effect. After some days, all effects of any initial (!) change are unpredictable.

Super yachts are causing global warming and contribute to the sea level rise (‘Eureka is that lunch ready yet’ shouted Archimedes at his missus)

Mr Gates, accompanied by his wife, Melinda, and three children, came to Montenegro by his super-yacht ‘Lady S’ docked in front of Kotor and Risan until 28 July, when the Gates family decided to visit Sveti Stefan and then Porto Montenegro. They left the country on Thursday.

Putting that super yacht into the ocean probably caused more sea level rise than CO2 has.

https://www.youtube.com/watch?v=9mjOmsqIibk&list=WL&index=7&t=0s

What sea level rise?

Ryan Maue tweeted that July was warmest July on record according to JRA-55 (and thus the warmest month in absolute temp), but it was only 0.01 C warmer than July 2016

https://twitter.com/RyanMaue/status/1157318343482888192

ERA5, with 29 days of 31 in, seems to go the same way..

https://www.bbc.com/news/science-environment-49165476

Whenever I see temperature stated out to one hundredth of a degree with no statement of any error budget values I simply shudder. This is only done by statistical manipulation performed by mathematicians who have had no experience in real physical measurements.

I suspect the correct value would be something like

0.01 +/- 0.1 degrees or even worse!

Jim

You can easily calculate the uncertainty for an average of a set of temperatures. You have to know the resolution and uncertainty for any reading. Suppose the readings are made with PT100 resistance temperature devices (RTD’s). The quality needed for air temperature measurement has an accuracy (when mated to an expensive set of electronics) of 0.001 and an uncertainty of ±0.001.

How many measurements are averaged? How many reporting stations? Suppose there are 15,000 reporting stations and they make 24 readings per day for the 31 days of July.

15,000 x 24 x 31 = 11,160,000

The uncertainty propagated from the readings to the average of the anomaly (in many reports) is:

Sqrt(the sum of all error measurement values^2 x n)

Where n is the number of measurements.

So 0.001^2 x 11,160,000 = 11.16

And Sqrt (11.16) = 3.34

The uncertainty about the reported average is ±3.34 deg C. That means the true average has a 68% probability of being within that range.

One could argue that the average uses just 31 readings, but in reality the daily average is created by a process of averaging so the same rule applies. All measurements have an associated uncertainty and performing calculations using measurements requires that error propagation be considered.

The uncertainties increase in quadrature during each calculation step. The uncertainty about the global average surface temperature is far larger than any measurable difference that might be encountered. You could use a much smaller number of readings but then you’d have sampling issues.

The calculation of the anomaly that Nick likes so much requires that the new value ±3.34 be subtracted from the longer term average ±3.34 to create the anomaly. The same calculation applies: it is the square root of the sum of the squares of the uncertainties.

The final answer for a calculated anomaly in this case is ±4.72 C.
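The arithmetic in this comment can be reproduced with a short Python sketch. It follows the comment's own recipe (root-sum-square of the per-reading uncertainties, then two such values combined in quadrature for the anomaly). The station count, reading frequency and ±0.001 per-reading uncertainty are the commenter's assumed figures, and the variable names are made up for illustration. It only checks the numbers, not whether this recipe is the right one for an average.

```python
import math

# Figures assumed in the comment above (not measured values).
stations = 15_000
readings_per_day = 24
days_in_july = 31
u_reading = 0.001  # stated per-reading uncertainty, deg C

n = stations * readings_per_day * days_in_july

# Root-sum-square of n equal uncertainties: sqrt(u^2 * n).
u_rss = math.sqrt(u_reading**2 * n)

# Two such values combined in quadrature, as described for the anomaly.
u_anomaly = math.sqrt(u_rss**2 + u_rss**2)

print(n, round(u_rss, 2), round(u_anomaly, 2))  # prints: 11160000 3.34 4.72
```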

For 15,000 readings, the uncertainty of the anomaly is ±7.42 K and it is only in that range 68% of the time.

Crispin,

1. The resolution of the measuring element is *not* the error bar for the measurement device itself. First, if you will study the measuring element it has to be calibrated in order to be accurate. Over time even the best measurement element with the best resolution will lose accuracy. It is the error bar for the accuracy that is of importance, not the resolution. Second, the accuracy of the element is not the accuracy of the overall device itself. The device has to be situated correctly and designed correctly. Both of these affect the overall accuracy of the device regardless of the ability of the actual measuring element itself.

2. You are trying to use the theory of large numbers to decrease the error bar. The theory of large numbers only works when you make multiple measurements of the same thing using the same measurement device. That is not the case with temperature measurements from different measuring devices measuring different things. In that case the error bars propagate forward unchanged. Think of 1000 steel girders used in building a bridge. It does no good to average the errors in length when designing fish plates used to connect the girders together. The fish plates have to be designed to handle the maximum and minimum errors in length. Otherwise you will end up with fish plates that won’t work. The theory of large numbers simply isn’t applicable; the error bar remains. It isn’t applicable with temperature measurement either.

Tim Gorman,

+1

“Where n is the number of measurements.

So 0.001^2 x 11,160,000 = 11.16

And Sqrt (11.16) = 3.34

The uncertainty about the reported average is ±3.34 deg C.”

No, that is the uncertainty of the sum. To get the average, the sum is divided by n, and so is the uncertainty. Result, 3.34/11160000, quite small.

Of course, that is the uncertainty due to measurement error only, and it may be larger if they are correlated. But not large enough to notice, for that average.
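The distinction drawn in this reply, uncertainty of a sum versus uncertainty of an average, can be sketched in Python as follows. It assumes, as the reply does, that the per-reading errors are independent and of equal size. The numbers are the ones quoted above and the names are illustrative only.

```python
import math

n = 11_160_000      # number of readings quoted above
u_reading = 0.001   # per-reading uncertainty quoted above, deg C

# Independent errors add in quadrature, so the SUM of n readings
# has uncertainty u * sqrt(n).
u_sum = u_reading * math.sqrt(n)

# The mean is the sum divided by n, so its uncertainty is u_sum / n,
# which is the same as u / sqrt(n).
u_mean = u_sum / n

print(f"sum: {u_sum:.2f}  mean: {u_mean:.2e}")
```

If the errors are correlated, the 1/sqrt(n) reduction no longer holds, which is the caveat the reply itself raises.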

You are misapplying the theory of large numbers in the same manner as Crispin. That theory simply doesn’t apply when you have different measuring devices measuring different things. It doesn’t even apply when you have the same measurement device measuring different things.

Like I said, if you design girder fish plates based on the statistical mean of the measurement of the girders you will wind up with a lot of fish plates that won’t work to connect the girders. The fish plates must be designed to handle both the negative and positive ends of the error bars associated with the girders, i.e. two girders that are both too long, both too short, one short and one long, or both of proper nominal length.

Temperature measurements are no different. If the error bar of the measuring devices is +/- 0.1deg then that carries over into the error of the mean. I.e. you can’t get 0.01deg accuracy in the mean by averaging all the readings. The error bar remains +/- 0.1deg.

“You are misapplying the theory of large numbers in the same manner as Crispin.”

But getting a very different answer.

“That theory simply doesn’t apply when you have different measuring devices measuring different things.”

It does. It’s just the mathematics of random variables, of whatever origin. The theory says nothing about devices. There is a requirement for independence, which is important. It’s something you have to figure out. No blanket statements can be made.

“It does. It’s just the mathematics of random variables, of whatever origin. The theory says nothing about devices. There is a requirement for independence, which is important. It’s something you have to figure out. No blanket statements can be made.”

Sorry, this just isn’t true. Using one device to measure one thing a large number of times generates a random distribution of error. You can use the theory of large numbers to gain accuracy. Think of someone using a ruler marked in sixteenths of an inch to measure a rod. Take a lot of measurements, which will have a random variation, and you can gain accuracy through statistical methods. The error bar shrinks from what it is for each individual measurement.

That just doesn’t happen when you have multiple devices measuring different things. If I have a yardstick and my friend has a different yardstick and I measure the height of my rose trellis while he measures the height of my tomato cage no amount of averaging or statistical analysis is going to gain any accuracy in either measurement. You can add as many friends as you want, each with their own yardstick, and have them measure as many things as you want, the height of my garage or the height of my radio tower or the height of my oak tree, and no amount of statistical analysis will make their measurements any more accurate. Their error bars remain the error inherent in using a yardstick to measure.

The same thing happens with multiple thermometers measuring different temperatures at different locations. Averaging the reading at site A with the reading at site B simply doesn’t increase the accuracy of either reading. If the accuracy of each is +/- 0.1deg then the average will have an error bar of +/- 0.1deg as well. The errors are simply not random, they are individual for each device. You simply can’t cancel the errors out. It’s like trying to cancel out the errors in the lengths of steel girders by taking an average of their lengths. It simply doesn’t work. No amount of averaging will change the overall error bar that has to be allowed for in designing whatever structure is going to use the girders. Temperatures taken at different sites using different devices are exactly the same. Anything you do with them has to take into account the overall error bar for each device. You can’t just wish those error bars away with statistics.

Do the math. If I measure 2deg then the actual temperature could be 1.9deg to 2.1deg (assuming a +/- 0.1deg error bar). If my neighbor measures 2.1deg then his actual temperature could be 2deg to 2.2deg. You simply don’t know if the temp at my site is 2deg and his is 2.1deg because of accuracy or if it is because of actual variation in the temperature. No amount of averaging can determine this. You simply don’t know what the actual temp is. Even if you assume that the temperatures *are* the same at both sites you don’t know if the actual temperature is 2deg or 2.1deg since both are within the error bar. Adding sites doesn’t change this at all. If the error bar of the devices is +/- 0.1deg then the error bar of the average will be +/- 0.1deg.
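The disagreement in this exchange comes down to what kind of error the devices have. A minimal Python simulation, under invented assumptions, shows the two limiting cases: errors that are independent from device to device, and an error that every device shares. The function name, the 10,000 sites and the 0.1 deg figures are hypothetical; which case real thermometer networks resemble is exactly the point in dispute, and the sketch does not settle it.

```python
import random

def mean_error(n_sites, random_sigma, shared_bias, seed=0):
    """Error of the average reading over n_sites hypothetical thermometers.

    Each reading error = shared_bias (identical for every device)
                       + an independent Gaussian error with std random_sigma.
    """
    rng = random.Random(seed)
    errors = [shared_bias + rng.gauss(0.0, random_sigma) for _ in range(n_sites)]
    return sum(errors) / n_sites

# Case 1: purely independent errors of about 0.1 deg per device.
independent_only = mean_error(10_000, random_sigma=0.1, shared_bias=0.0)

# Case 2: every device reads 0.1 deg too warm, with no random scatter.
shared_only = mean_error(10_000, random_sigma=0.0, shared_bias=0.1)

print(f"independent errors: {independent_only:+.4f}")
print(f"shared bias:        {shared_only:+.4f}")
```

In the first case the error of the average is far smaller than 0.1 deg; in the second it stays at 0.1 deg no matter how many sites are added.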

Reporting on last week’s advection of a Saharan air-mass north across western Europe, the news stated (without any hint of understanding) that our UK peak temperatures were now higher than those in Dubai. Talking of Dubai, the coconut butter completely melted inside the jar in my kitchen cupboard during this heat storm. The last time I saw liquid coconut oil was when visiting the Dubai souk in 1974.

And then on Tuesday 30th July things cooled down and we had the torrential rain storm.

Notice the curious coincidence with the afternoon thunderstorms in the Ahaggar Mountains, Tamanrasset, Algeria, deep in the central Sahara, almost exactly due south of the British Isles. Because the previously advected Saharan air had moved north over Europe, this teleconnection allowed moist West African monsoonal air to move north into the central Sahara, bringing afternoon thunderstorms to the Ahaggar Mountains on 30th July.

I have still got to wash the Saharan dust off my car.

Do it again, ….. and again, … and again, ….. and the Sahara will “bloom again”.

Samuel,

I first became aware of this process of natural climate change associated with the northern movement of the West African Monsoon in 2007.

https://www.eumetsat.int/website/home/Images/ImageLibrary/DAT_IL_07_08_08.html?lang=EN

Afternoon rainstorms are also occurring this summer in the Algerian Atlas Mountains.

https://www.ventusky.com/?p=34.9;9.3;5&l=rain-3h&t=20190802/1500

https://eumetview.eumetsat.int/static-images/MSG/IMAGERY/IR039/BW/WESTERNAFRICA/IMAGESDisplay/vUzFqm4NBJ3pX

https://www.dzmeteo.com/meteo-djelfa.dz

If the West African Monsoon goes north over the Sahara, then there is less energy going west over the Atlantic. This has implications for hurricane formation.

So here we are 12 years on from 2007 (one sunspot cycle) and the West African Monsoon is once again showing signs of making its way north across the Sahara to the Atlas Mountains in August.

https://www.ventusky.com/?p=25.2;-5.2;4&l=rain-3h&t=20190807/1800

I recommend a close watch on this over the next 30 days.

Of course, this has nothing to do with the Sun /sarc.

Right you are, Philip, ….. the western Sahara/Africa is the per se “breeding ground” for a majority of Atlantic hurricanes.

The earth is too big for puny humans to measure accurately everywhere all at once, with the instruments changing all the time. There’s that, too.

No one motivated by politics is likely to admit being “puny.” They’d rather act like the two fleas in the Crocodile Dundee joke, arguing over which one owns the dog.

Until the last few decades, records were only made of daily highs and lows.

Trying to construct a daily average out of that is a fool’s errand.
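How far a high/low midpoint can sit from a true daily mean is easy to illustrate. The Python sketch below uses an invented set of 24 hourly temperatures (not real station data) with a long cool night and a short warm afternoon, and compares the midpoint of the daily high and low with the mean of all 24 readings.

```python
# Hypothetical hourly temperatures (deg C) for one day, midnight to 23:00.
# The shape is invented: a long cool night and a short warm afternoon.
hourly = [12, 11, 11, 10, 10, 10, 11, 13, 15, 17, 19, 21,
          22, 23, 24, 24, 23, 21, 19, 17, 15, 14, 13, 12]

# What a max/min thermometer record allows: the midpoint of high and low.
midrange = (max(hourly) + min(hourly)) / 2

# What 24 hourly readings allow: the arithmetic mean over the day.
hourly_mean = sum(hourly) / len(hourly)

print(midrange, hourly_mean, midrange - hourly_mean)  # prints: 17.0 16.125 0.875
```

The size and sign of the gap depend entirely on the shape of the day's temperature curve, so the 0.875 deg here is a property of the made-up numbers, not an estimate for any real site.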

Unfortunately the incomes for a lot of fools depend on such nonsense.

I love the argument that reanalysis models are better than surface temperature records.

That’s why reanalysis guys check their product against the surface products: to find their mistakes.

This is hilarious

“But, how can they know the difference? Because good data produce good weather forecasts; bad data don’t.”

“The data sources include surface thermometers, buoys, and ships (as do the “official” global temperature calculations), but they also add in weather balloons, commercial aircraft data, and a wide variety of satellite data sources.”

I suspect Roy never looked at the data sources reanalysis uses.

1. Thermometer data taken from state DOTs. That’s right, temperatures taken along highways and roads.

2. Thermometer data taken by railway companies along their tracks.

3. Thermometer data taken by high school students.

4. Specific urban network data; these are data networks set up in cities to measure UHI.

This data is unavailable to folks like BEST and GISS because it is proprietary.

Roy should probably work on publishing his code, publishing his raw data, and ALL of his adjustment code.

Should the same requirement be applied to any study, past, present, or future that is ultimately funded by taxpayer money?

I don’t think that Dr Roy realises that not a single reanalysis, radiosonde or satellite dataset supports the low trend of UAH v6 in the AMSU-era (from 1998 and on).

Here is my collection of current datasets (a few others have been discontinued, but they also disagree with UAH v6):

https://pbs.twimg.com/media/EAPM-iKWsAAXNIJ?format=png&name=medium

So any one of the reanalysis datasets is probably a more reliable choice than Roy’s own product (but I prefer the most advanced ones, ERA5 and JRA-55).

PBS twitter? Goodness.

What about IGRA? https://www.researchgate.net/publication/323644914_Examination_of_space-based_bulk_atmospheric_temperatures_used_in_climate_research

“PBS Twitter” is just a way to store and display images on the internet. There are other services as well…

IGRA raw data? I assume that the result would be similar to Raobcore raw and Ratpac B raw included in my graph, i.e. not supporting Dr Roy’s dataset.

Wrap up Mosher.

You’re not a scientist, you have a qualification in English.

You have an ego the size of the planet because your employers handed you the title ‘scientist’ which is, if not fraudulent, it should be, otherwise I also claim the right to call myself a scientist. And the fact is, I have a far more analytical mind than you do because that was my training.

You don’t understand what Roy Spencer is talking about but deem yourself sufficiently qualified to criticise him.

You’re a fraud Mosher.

I love how predictable Mosher’s response is to anything that challenges BEST’s unsavory global data sausage, wherein intact temperature records are chopped into pieces and poured into a rotten casing of geophysically unreasonable constraints. Not content with serving as a carny barker for his patently over-ambitious employer, he also peripatetically fishes for free code. The wholly predictable M.O. is hilarious!

Mosh has been referring to UAH as UHA for over a decade. The usual “messages are garbled because I use text-to-speech” excuse makes that mistake even more ridiculous. How can such a simple thing be failed time-and-time again?

“…Roy should probably work on publishing his code, publishing his raw data, and ALL of his adjustment code…”

https://climateaudit.org/2016/01/05/update-of-model-observation-comparisons/#comment-765741

CAPS for emphasis:

Stephen Mosher Posted Jan 6, 2016 at 10:30 AM

“…IF YOU REVIEW UHA CODE ( AVAILABLE FINALLY) you’ll be amazed.. It would be nice if RSS made code available…”

So you saw the UAH code over 3.5 yrs ago and were amazed. It was “finally available.” Did you somehow forget that in all of your amazement?

Yeah, if you put it on the internet, it’s forever! 🙂

Mosher

You said, “Because good data produce good weather forecasts; bad data don’t.” Based on my experience, weather forecasters need better data!

“2. Thermometer data taken by railway companies along their tracks.”

That’s interesting. So someone is actually using thermometer data from the railroads.

I used to work for a couple of railroads in an earlier part of my life, and railroads measure and record the temperature and weather conditions four times a day, for every little railroad station up and down the line, and have been doing so for several hundred years.

I always thought the railroad temperature readings would be valuable and now it seems someone else does, too. I wonder how far back the railroad temperature records they are using go? The temperature readings were written down on “trainsheets” which kept track of daily railroad operations, and those trainsheets should still be available.

Some may question the accuracy of the readings, but one good thing about the railroad readings is you could check other railroad stations nearby to see if they had similar readings.

Katy Railroad! The best little railroad in the nation. At one time it was the second busiest single-track, trainorder railroad in the United States. We ran more trains in 24 hours than anyone.

Then the UP railroad bought out the Katy and destroyed it by turning it into a part of an unwieldy bureaucratic railroad. The Katy could run circles around the UP any day.

That’s what you get when you go from a streamlined 2,500 person company to a bureaucratic 25,000 person company, where the left hand doesn’t know what the right hand is doing. I’m amazed the UP can even make a profit. That they do, just shows you how easy it is to make a profit out of the railroad business even while operating in an incoherent way.

Average global temperatures will continue to cool for the next several months – here is why and how much:

https://www.ospo.noaa.gov/Products/ocean/index.html

Go to Sea Surface Temperatures; SST Anomaly Charts; Pacific:

Note the “cold tongue” of Sea Surface Temperatures moving west into the equatorial Pacific:

5. UAH LT Global Temperatures can be predicted ~4 months in the future with just two parameters:

UAHLT (+4 months) = 0.2*Nino34Anomaly + 0.15 – 5*SatoGlobalAerosolOpticalDepth

Source: https://wattsupwiththat.com/2019/06/15/co2-global-warming-climate-and-energy-2/

Figures 5a and 5b prove the accuracy of this predictive equation.

In the absence of century-scale volcanoes (like El Chichon 1982 and Mt. Pinatubo 1991+), this equation will suffice:

UAHLT (+4 months) = 0.2*Nino34Anomaly + 0.15
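For anyone who wants to try the numbers, the equation quoted above can be written as a small Python function. This is only a transcription of the formula as stated in the comment; the function name, argument names and sample inputs are invented for illustration, and the sample inputs are not actual Nino 3.4 or aerosol values.

```python
def predict_uahlt(nino34_anomaly, aerosol_optical_depth=0.0):
    """UAH LT anomaly (deg C) about four months ahead, per the equation
    quoted in the comment above.

    nino34_anomaly: Nino 3.4 SST anomaly (deg C) for the current month.
    aerosol_optical_depth: Sato global aerosol optical depth; leaving it
        at 0.0 gives the simplified no-volcano form of the equation.
    """
    return 0.2 * nino34_anomaly + 0.15 - 5.0 * aerosol_optical_depth

# Illustrative inputs only, not actual index values.
print(predict_uahlt(0.5))
print(predict_uahlt(0.5, aerosol_optical_depth=0.01))
```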

Nino SST’s are here:

https://www.cpc.ncep.noaa.gov/data/indices/sstoi.indices

“several months”

How many is several?

“here is why and how much”

It says neither.

How much by when?

Loydo:

All the information you need to calculate four months into the future is in the paper – but based on your previous comments, you are probably innumerate.

Also, the predictive Nino 34 SST cooling process will probably continue for a while longer, as will the global atmospheric cooling.

Good that you’re willing to put your hypothesis publicly to the test. If your prediction: “Average global temperatures (I presume according to UAH) will continue to cool for the next several months” (four?) does not come to pass would that not invalidate your hypothesis? (My brackets)

There is one thing that doesn’t quite gel – the only “continued” cooling according to UAH is in the last month ie from June to July, June was warmer than May and the 13 month running mean is not cooling either. Can you explain?

It doesn’t matter.

All the anomalous heat in the northern and southern oceans above 60N and below 60S comes from the tropics, which have been in an El Niño state for a few years. All this heat will go into space over the next couple of years and with the tropics now moving to a colder phase, there will be no warm water to replace it.

There will be a mad scramble to keep adjusting the temperature records to keep the global warming missive alive, but sooner or later the house of cards will collapse.

The Earth’s temperature cycles up and down. It’s currently on its way down and the Chicken Littles won’t be able to hide this fact.

Slightly warmer is the current trend. Records go back around 150 years, while any climate change beyond a cyclical trend is measured in millennia. I’m taking away nothing more.

Here is the USCRN surface temperature data since ~2004 – Not much warming, probably none of significance.

http://icecap.us/images/uploads/p6.png

ALLAN MACRAE

“Here is the USCRN surface temperature data since ~2004”

What the heck does that have to do with the Globe Roy Spencer is talking about?

If part of the globe is *not* warming then the entire AGW theory of GLOBAL WARMING is disproved. It should be titled REGIONAL warming instead. That would then allow regional cooling to also be specified.

“If part of the globe is *not* warming then the entire AGW theory of GLOBAL WARMING is disproved.”

Yes, and it’s been cooling in the United States since the 1930’s. There goes the theory! 🙂

Bindi – you figure it out.

Good post Roy!

So you are saying that the climate is getting cooler. Sweater weather! Excellent …

“Part of this process is making forecasts to get “data” where no data exists. ”

And this is one reason I cannot tolerate “Climate Science” thinking. They think they can “make up” data and mix it with real observations and then canonize the data set as if it were pure. When you “get” data where no data exists, you can make up whatever you want – your bias WILL affect the outcome. If you need data about the arctic, then setup monitoring stations and collect real observational data.

“but the objective evidence is that forecasts out 2-3 days are pretty accurate, and continue to improve over time.”

And this is why climate models are worthless. Everyone seems to agree that climate is just the average weather over a period of at least 30 years. We can’t predict the weather accurately for 4 days? So you have to just hope, to just believe, that all the errors will cancel out. Models are iterative, so they use their own output as the next iteration’s input. This means any error is compounded (possibly exponentially) as the iterations are run. So modelers often build in data fences (barriers) to keep certain data from straying too far from an expected value. This by itself means the model is WRONG, but it’s often too complicated to figure out why the data is wandering off course, so the easier path is taken, forgotten about, and becomes enshrined in the behavior of the model.
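The point about an iterative calculation feeding its own output back in, and small errors growing as it runs, can be illustrated with a toy example in Python. The logistic map used here is a standard textbook system chosen purely as an assumption for illustration; it is not a weather or climate model, and the starting values are arbitrary. It shows error growth in one simple iterated rule and says nothing by itself about whether long-term averages of a system are predictable.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate x -> r*x*(1-x), feeding each output back in as the next input."""
    xs = []
    x = x0
    for _ in range(steps):
        x = r * x * (1.0 - x)
        xs.append(x)
    return xs

# Two runs whose starting values differ by one part in ten billion.
run_a = logistic_trajectory(0.2, 60)
run_b = logistic_trajectory(0.2 + 1e-10, 60)

gaps = [abs(a - b) for a, b in zip(run_a, run_b)]
print(f"gap after 5 steps: {gaps[4]:.1e}")
print(f"largest gap in 60 steps: {max(gaps):.2f}")
```

The two runs are indistinguishable for the first few steps and bear no resemblance to each other by the end.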

Compare building a climate model to predicting the path of a hurricane. The hurricane’s path should be thousands of times easier to get right, but when looking at the output of several models they have it moving all over the place. It really becomes obvious when there is a divergence point – the conditions are ripe for a big change in the path of the hurricane, but it could go either way. Run enough models and ONE is likely to get the path somewhat right, but this isn’t the same as understanding – it’s just guess matching. Predicting climate will be just like that – with enough models one will likely come close, but probably NOT for the right reasons.

The more I study this idea of a global temperature, the more I realize how plain WRONG it is as a useful measurement. It cannot be accurately gauged, it has no predictive power for anywhere specific on the globe, and hides all the important details. Predicting local climates, well now that would be helpful – but that’s really really hard to do – you can’t just mush everything together and predict things like “some places will be hotter and some will be colder…”.

Natural warming is a fact – there is simply no denying it happens. Natural based warming will warm the poles the fastest. Places in the arctic tundra today have evidence of large forests – so we KNOW in the past it was warmer there. There simply is no scientific process that yet exists to separate natural warming from any man-caused component. Unless and until you can thoroughly explain natural warming – which is a FACT – you cannot hope to predict future climate or how man is affecting it. No data set helps, because there is no understanding of process.

Robert of Texas, ……. a good read ……. and just thought I would ask, you do know the one (1) and only difference between Climatology (Climate Science) and Phrenology, …….. don’t you.

For those who don’t know, …… then I will tell them.

Phrenology quickly lost out in Academia simply because its “Cash Cow” status was valued at the “little end of nothing”, ……. whereas government funding for Climatology studies would make Scrooge McDuck jealous.

Ok, so I spent the last 30 years doing “predictive analysis” for the US DoD and IC. Your premise is incorrect, therefore what follows is counterfactual (logically TRUE, meaningfully irrelevant).

So as you dismiss “global temperature … plain WRONG … as a useful measurement”, I’m going to search for real estate that is (a) at least 100 m above sea level, and (b) north of the Mason-Dixon Line.

Note: this is for my grandchildren (2). I’m too old (67) to worry, other than dying of heat stroke while walking to my car from my air conditioned health club. 🙂

cheers, or something

The hot weather in France and other places is just a couple of data points.

I live in Chicago. We had some hot days this summer, but I have lived through hotter Chicago summers. One in the late 80s and one in the early 90s Chicago had days when it hit at least 99 degrees F. Also the Chicago summer in 2005 was hotter than this summer.

Bears repeating that where I live, summers were much, MUCH warmer 70 and more years ago. Not going to say where that is, given that this forum is one of the few places on the Internet that is trying to stand between a very large number of ruthless amoral scumbags and $100 trillion, but it is what Environment Canada tells me every day.

Isn’t the entire premise of the global warming theory that more heat is being trapped in the atmosphere?

Air temperature measurements are just that. They do not measure the amount of heat! To know the amount of heat contained in the air you must know the relative humidity!
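To put rough numbers on the difference between temperature and heat content, here is a Python sketch of the specific enthalpy of moist air. It uses common textbook approximations (a Magnus-type formula for saturation vapour pressure and the usual psychrometric enthalpy expression); the function name, the default sea-level pressure and the 30 C example values are assumptions for illustration, not anything from the article.

```python
import math

def moist_air_enthalpy(temp_c, rel_humidity_pct, pressure_hpa=1013.25):
    """Approximate specific enthalpy of moist air, kJ per kg of dry air."""
    # Saturation vapour pressure (hPa) from a Magnus-type approximation.
    e_sat = 6.112 * math.exp(17.62 * temp_c / (243.12 + temp_c))
    e = e_sat * rel_humidity_pct / 100.0
    # Mixing ratio: kg of water vapour per kg of dry air.
    w = 0.622 * e / (pressure_hpa - e)
    # Sensible heat of dry air plus latent and sensible heat of the vapour.
    return 1.006 * temp_c + w * (2501.0 + 1.86 * temp_c)

dry = moist_air_enthalpy(30.0, 20.0)
humid = moist_air_enthalpy(30.0, 80.0)
print(f"30 C at 20% RH: {dry:.1f} kJ/kg; at 80% RH: {humid:.1f} kJ/kg")
```

Under these approximations, air at the same thermometer reading holds roughly twice the energy per kilogram when it is humid as when it is dry.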

To me, this certainly sounds like a bit of a “slam dunk” from Dr. Spencer! You mean, we can use the techniques that meteorologists use, to get more accurate global temperature averages, starting from 1979 onwards? Who’d ‘a thought!

Here in the UK there is a ‘much ado about nothing’ of the July temperatures ‘nearly’ highest ever, from the BBC, Met office, newspapers, tv, climate change pontificators, etc. etc.

So let’s take look: http://www.vukcevic.co.uk/Jul19.htm

not even exceptional.

The problem is using these scary looking “anomaly” pictures. Looking at the picture in the article you’d think Alaska is on fire. It’s not, it’s relatively cool compared to other parts of the globe. It’s just wrong to use anomaly pictures. The “0” floats based on the average, and it really doesn’t tell u squat. There could be a boiling hot anomaly where the average is -40C, and the reading is -35C …. firehouse red on an anomaly map, but still freezing its butt off compared to the tropics.
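The anomaly arithmetic in this comment is simple enough to spell out in Python. The polar figures are the ones given above; the tropical figures and all names are made up for comparison.

```python
def anomaly(reading_c, baseline_c):
    """Departure of a reading from the local long-term average, deg C."""
    return reading_c - baseline_c

# Hypothetical values: the polar pair matches the example in the comment.
polar_reading, polar_baseline = -35.0, -40.0
tropical_reading, tropical_baseline = 27.0, 27.0

print(anomaly(polar_reading, polar_baseline))        # 5.0
print(anomaly(tropical_reading, tropical_baseline))  # 0.0
print(tropical_reading - polar_reading)              # 62.0
```

An anomaly map would colour the polar site warm and the tropical site neutral, even though the tropical site is 62 degrees warmer in absolute terms.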

Aside from that point, I don’t believe we r capable of accurately measuring the “global” temp. That makes it perfect for propaganda meant to deceive people.

Dr Deanster

1. “There could be a boiling hot anomaly where the average is -40C, and the reading is -35C …. firehouse red on an anomaly map, but still freezing its butt off compared to the tropics.”

The problem with people like you is that

– while they cry ‘Wooooaaaah’ when Cotton, Minnesota gets an anomaly of -10 °C in January/February 2019,

– they would say “All is well!” when somewhere in the Arctic the anomalies move from -20 °C up to 0 °C.

2. “Aside from that point, I don’t believe we r capable of accurately measuring the “global” temp. ”

How do you know that?

Why are for example Roy Spencer’s UAH anomalies for the lower troposphere at 5 km above land surfaces so similar to those measured by surface stations?

You deal in hyperbole much?

I haven’t said whooaaaa to an anomaly in Cotton, MN, and I’ve yet to see an arctic anomaly of +20C. Looking at the DMI Arctic map, I have noticed it rarely if ever goes above the red line in summer, and the “warm” anomalies of winter are still sufficient to freeze your nuts off.

As for Roy, if his metric was so accurate, why the new versions with adjustments? Not saying Dr Spencer doesn’t do a fine job, nor saying that his product is not relatively the best …. I prefer UAH over all others …. BUT, when we are talking a (global) anomaly average in the hundredths of a degree, I disagree we have the technology to MEASURE, not calculate, but MEASURE the global temp at that level of accuracy.

Just sayin

Dr Deanster

Probably the way the data should be displayed is with a 2 1/2 D display. That is, show the temperature with a color, and show the anomaly with an apparent height above a plane or the geoid. But then, it might be more difficult to convince the public that the Arctic is burning up.

From the article: “But most warming has (arguably) occurred in the last 50 years, and if one is trying to tie global temperature to greenhouse gas emissions, the period since 1979 (the last 40+ years) seems sufficient since that is the period with the greatest greenhouse gas emissions and so when the most warming should be observed.”

That doesn’t apply to the United States. In the United States, the 1930’s were warmer than any year in the 21st century. Hansen said 1934 was 0.5C warmer than 1998, and that would make 1934 0.4C warmer than 2016, if going by the UAH chart which shows 1998 as being 0.1C cooler than 2016.

I would say it is arguable that the 1930’s were just as warm worldwide as the temperatures today based on unaltered surface temperature records from the past. I see no basis for claiming current warming is unprecedented, and if the temperatures were just as warm in the 1930’s with Mother Nature being the cause, then there is no reason to believe that Mother Nature is not the cause of the current, equal warming today.

At least in the United States which seems to be a special case versus the rest of the world. How can the U.S. temperature profile look completely different from the Hockey Stick global temperature profile?

The answer is that the U.S. is a special case because it has the most and best documented temperature data in the world, which makes it very difficult for the Climategate Charlatans to blatantly change the U.S. record, so in the past they just settled on bastardizing the temperature records for the rest of the world. But in recent years they are even trying to bastardize the U.S. surface temperature record and even state temperature records.

But they didn’t erase the old regional historic temperature records which show the 1930’s to be as warm as today, and they haven’t erased the Tmax data which also shows the 1930’s to be as warm as current-day temperatures. They’ve been busy little bees but they can only do so much defrauding in a day so they left us the clues.

Given all the above I don’t see how one can say “But most warming has (arguably) occurred in the last 50 years”. I don’t think the available data correlates with that.

A greenhouse gas theory is not data or evidence of anything.

Mother Nature is in charge of the Earth’s weather and climate until there is evidence to show otherwise. In the United States, Mother Nature was in charge of the weather and climate in the 1930’s and it appears to be in charge today under very similar circumstances. No CO2 required.

Let me ask this question here: Would you agree that it is a possibility that CO2 adds NO net heat to the Earth’s atmosphere?

+1

Tom Abbott

Can you provide some proof the planet was warmer in the 30’s. This seems to be what you are hanging your hat on. I have never seen anything that says that is remotely true. The Trump driven EPA doesn’t even think the US was warmer as you seem to faithfully believe….

https://www.epa.gov/climate-indicators/climate-change-indicators-us-and-global-temperature

“Tom Abbott

Can you provide some proof the planet was warmer in the 30’s. This seems to be what you are hanging your hat on. I have never seen anything that says that is remotely true.”

Well, here’s the U.S. surface temperature chart (Hansen 1999) which shows the 1930’s were warmer than today. Note that this link shows the Hansen 1999 U.S. surface temperature chart next to a bogus, bastardized Hockey Stick chart which erased the significance of the 1930’s in order to enable the promoters of the CAGW fraud to make claims like “hotter and hotter” and “Hottest Year Evah!”. The Hansen 1999 chart is the true representative of the global temperature profile. The bogus Hockey Stick chart is an invention of criminals, meant to defraud the people of the world.

http://www.giss.nasa.gov/research/briefs/hansen_07/

And here are some Tmax charts for various nations that show it was just as warm in the past as it is today.

Tmax for the U.S.:

Tmax for China:

India:

There are others in the southern hemisphere that show the same temperature profile, i.e., that the recent past was just as warm as today. I’ll try to dig those out.

I also have unmodified charts from around the world that show the same temperature profile of the past being just as warm as today, before the Climategate Charlatans got hold of them and changed them into bogus, bastardized Hockey Stick charts.