By William Ward, 1/01/2019

The 4,900-word paper can be downloaded here: https://wattsupwiththat.com/wp-content/uploads/2019/01/Violating-Nyquist-Instrumental-Record-20190112-1Full.pdf

The 169-year long instrumental temperature record is built upon 2 measurements taken daily at each monitoring station, specifically the maximum temperature (Tmax) and the minimum temperature (Tmin). These daily readings are then averaged to calculate the daily mean temperature as Tmean = (Tmax+Tmin)/2. Tmax and Tmin measurements are also used to calculate monthly and yearly mean temperatures. These mean temperatures are then used to determine warming or cooling trends. This “historical method” of using daily measured Tmax and Tmin values for mean and trend calculations is still used today. However, air temperature is a signal and measurement of signals must comply with the mathematical laws of signal processing. The Nyquist-Shannon Sampling Theorem tells us that we must sample a signal at a rate that is at least 2x the highest frequency component of the signal. This is called the Nyquist Rate. Sampling at a rate less than this introduces aliasing error into our measurement. The slower our sample rate is compared to Nyquist, the greater the error will be in our mean temperature and trend calculations. The Nyquist Sampling Theorem is essential science to every field of technology in use today. Digital audio, digital video, industrial process control, medical instrumentation, flight control systems, digital communications, etc., all rely on the essential math and physics of Nyquist.

NOAA, in its USCRN (US Climate Reference Network), has determined that it is necessary to sample at 4,320-samples/day to practically implement Nyquist. 4,320-samples/day equates to 1-sample every 20 seconds. This is the practical Nyquist sample rate. NOAA averages these 20-second samples to 1-sample every 5 minutes or 288-samples/day. NOAA only publishes the 288-sample/day data (not the 4,320-samples/day data), so to align with NOAA the rate will be referred to as “288-samples/day” (or “5-minute samples”). (Unfortunately, NOAA creates naming confusion with their process of averaging down to a slower rate. It should be understood that the actual rate is 4,320-samples/day.) This rate can only be achieved by automated sampling with electronic instruments. Most of the instrumental record consists of readings from mercury max/min thermometers, taken long before automation was an option. Today, despite the availability of automation, the instrumental record still uses Tmax and Tmin (effectively 2-samples/day) instead of Nyquist compliant sampling. The reason for this is to maintain compatibility with the older historical record. However, with only 2-samples/day the instrumental record is highly aliased. It will be shown in this paper that the historical method introduces significant error to mean temperatures and long-term temperature trends.
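The rate arithmetic above is easy to check. What follows is a minimal Python sketch, not NOAA's processing code: the function names are invented for illustration, and the averaging step assumes simple non-overlapping block means of the 20-second readings.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def samples_per_day(interval_seconds):
    """Number of evenly spaced samples in one day for a given interval."""
    return SECONDS_PER_DAY // interval_seconds


def block_average(samples, block_size):
    """Average consecutive, non-overlapping blocks of block_size samples."""
    if len(samples) % block_size != 0:
        raise ValueError("sample count must be a multiple of block_size")
    return [
        sum(samples[i:i + block_size]) / block_size
        for i in range(0, len(samples), block_size)
    ]


raw_rate = samples_per_day(20)            # 4320 (one sample every 20 seconds)
published_rate = samples_per_day(5 * 60)  # 288 (one value every 5 minutes)
block_size = raw_rate // published_rate   # 15 raw samples per published value

# Placeholder readings (a constant 10.0), only to show the change in length.
five_minute_values = block_average([10.0] * raw_rate, block_size)
print(raw_rate, published_rate, block_size, len(five_minute_values))
```

Under these assumptions, each published 5-minute value is the mean of 15 consecutive 20-second readings.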

NOAA’s USCRN is a small network that was completed in 2008 and it contributes very little to the overall instrumental record. However, the USCRN data provides us a special opportunity to compare a high-quality version of the historical method to a Nyquist compliant method. The Tmax and Tmin values are obtained by finding the highest and lowest values among the 288 samples for the 24-hour period of interest.

NOAA USCRN Examples to Illustrate the Effect of Violating Nyquist on Mean Temperature

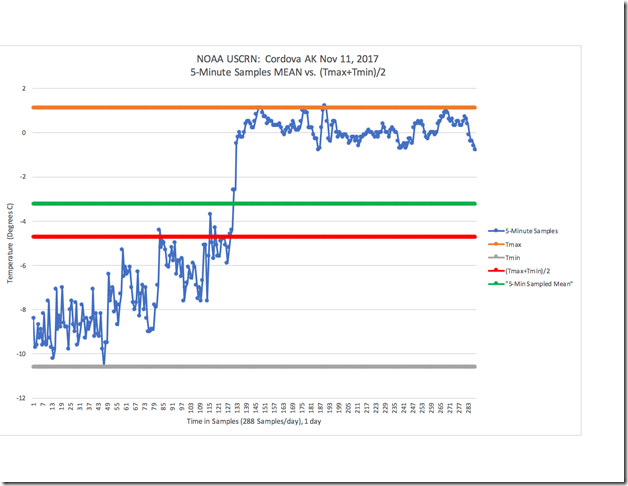

The following example will be used to illustrate how the amount of error in the mean temperature increases as the sample rate decreases. Figure 1 shows the temperature as measured at Cordova AK on Nov 11, 2017, using the NOAA USCRN 5-minute samples.

Figure 1: NOAA USCRN Data for Cordova, AK Nov 11, 2017

The blue line shows the 288 samples of temperature taken that day. It shows 24-hours of temperature data. The green line shows the correct and accurate daily mean temperature that is calculated by summing the value of each sample and then dividing the sum by the total number of samples. Temperature is not heat energy, but it is used as an approximation of heat energy. To that extent, the mean (green line) and the daily-signal (blue line) deliver the exact same amount of heat energy over the 24-hour period of the day. The correct mean is -3.3 °C. Tmax is represented by the orange line and Tmin by the grey line. These are obtained by finding the highest and lowest values among the 288 samples for the 24-hour period. The mean calculated from (Tmax+Tmin)/2 is shown by the red line. (Tmax+Tmin)/2 yields a mean of -4.7 °C, which is a 1.4 °C error compared to the correct mean.
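The two calculations compared in Figure 1 can be sketched in a few lines of Python. This does not use the Cordova data: `synthetic_day` is a made-up, deliberately asymmetric signal (an offset plus 1 and 2 cycle/day cosines), chosen only so the difference between the two methods is easy to see.

```python
import math


def synthetic_day(n=288, offset=-3.0, a1=4.0, a2=2.0):
    """Made-up asymmetric day: offset plus 1 and 2 cycle/day cosines."""
    return [
        offset
        + a1 * math.cos(2 * math.pi * k / n)
        + a2 * math.cos(4 * math.pi * k / n)
        for k in range(n)
    ]


def sample_mean(samples):
    """Sum every sample and divide by the number of samples."""
    return sum(samples) / len(samples)


def midrange(samples):
    """The historical method: (Tmax + Tmin) / 2."""
    return (max(samples) + min(samples)) / 2


day = synthetic_day()
error = midrange(day) - sample_mean(day)
print(f"mean={sample_mean(day):.2f} midrange={midrange(day):.2f} error={error:.2f}")
```

For this invented signal the midrange lands 1.5 °C above the sample mean. The size and sign of the difference depend entirely on the shape of the day, which is why real stations show errors that vary from day to day.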

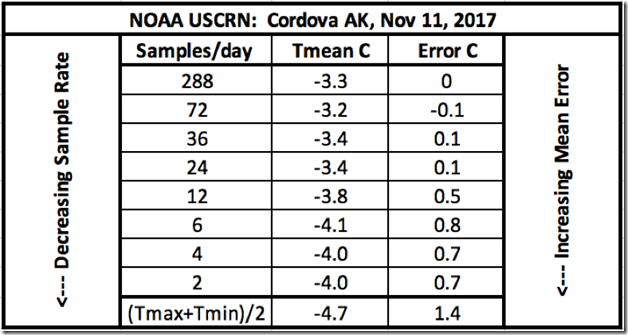

Using the same signal and data from Figure 1, Figure 2 shows the calculated temperature means obtained from progressively decreased sample rates. These decreased sample rates can be obtained by dividing down the 288-sample/day sample rate by a factor of 4, 8, 12, 24, 48, 72 and 144. Therefore, the sample rates will correspond to: 72, 36, 24, 12, 6, 4 and 2-samples/day respectively. By properly discarding the samples using this method of dividing down, the net effect is the same as having sampled at the reduced rate originally. The corresponding aliasing that results from the lower sample rates, reveals itself as shown in the table in Figure 2.
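The divide-down procedure can be sketched as follows. This is an illustration under stated assumptions, not the calculation behind Figure 2: the signal is synthetic (zero mean, with only 1 and 2 cycle/day components), and the `start` parameter is a hypothetical knob for choosing which time of day the kept samples fall on.

```python
import math

FACTORS = [4, 8, 12, 24, 48, 72, 144]


def synthetic_day(n=288):
    """Made-up day with zero mean: 1 and 2 cycle/day cosine components."""
    return [
        4.0 * math.cos(2 * math.pi * k / n) + 2.0 * math.cos(4 * math.pi * k / n)
        for k in range(n)
    ]


def decimated_mean(samples, factor, start=0):
    """Keep every factor-th sample beginning at index start, then average."""
    kept = samples[start::factor]
    return sum(kept) / len(kept)


def error_table(samples, factors=FACTORS):
    """Return (samples_per_day, mean_error) for each divide-down factor."""
    reference = sum(samples) / len(samples)
    return [
        (len(samples) // factor, decimated_mean(samples, factor) - reference)
        for factor in factors
    ]


for rate, err in error_table(synthetic_day()):
    print(f"{rate:3d} samples/day: mean error {err:+.2f}")
```

With this toy signal only the 2-samples/day row shows an error, because the 2 cycle/day component coincides with the sample rate and folds into the mean. Shifting `start` changes that error (and can flip its sign), so the result depends on when in the day the samples are taken. Real temperature data have a much broader spectrum, so errors can appear at higher sample rates as well.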

Figure 2: Table Showing Increasing Mean Error with Decreasing Sample Rate

It is clear from the data in Figure 2, that as the sample rate decreases below Nyquist, the corresponding error introduced from aliasing increases. It is also clear that 2, 4, 6 or 12-samples/day produces a very inaccurate result. 24-samples/day (1-sample/hr) up to 72-samples/day (3-samples/hr) may or may not yield accurate results. It depends upon the spectral content of the signal being sampled. NOAA has decided upon 288-samples/day (4,320-samples/day before averaging) so that will be considered the current benchmark standard. Sampling below a rate of 288-samples/day will be (and should be) considered a violation of Nyquist.

It is interesting to point out that what is listed in the table as 2-samples/day yields 0.7 °C error. But (Tmax+Tmin)/2 is also technically 2-samples/day with an error of 1.4°C as shown in the table. How can this be possible? It is possible because (Tmax+Tmin)/2 is a special case of 2-samples per day because these samples are not spaced evenly in time. The maximum and minimum temperatures happen whenever they happen. When we sample properly, we sample according to a “clock” – where the samples happen regularly at exactly the same time of day. The fact that Tmax and Tmin happen at irregular times during the day causes its own kind of sampling error. It is beyond the scope of this paper to fully explain, but this error is related to what is called “clock jitter”. It is a known problem in the field of signal analysis and data acquisition. 2-samples/day, regularly timed, would likely produce better results than finding the maximum and minimum temperatures from any given day. The instrumental temperature record uses the absolute worst method of sampling possible – resulting in maximum error.

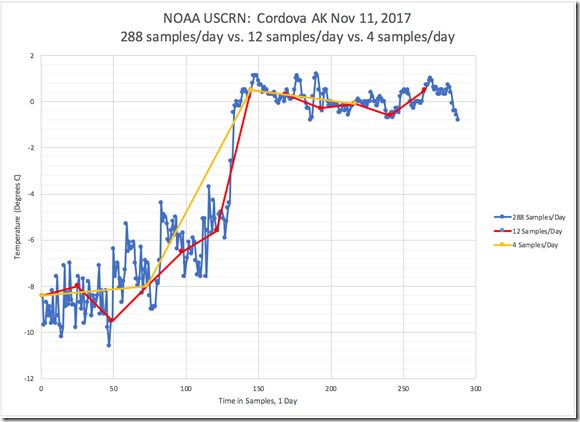

Figure 3 shows the same daily temperature signal as in Figure 1, represented by 288-samples/day (blue line). Also shown is the same daily temperature signal sampled with 12-samples/day (red line) and 4-samples/day (yellow line). From this figure, it is visually obvious that a lot of information from the original signal is lost by using only 12-samples/day, and even more is lost by going to 4-samples/day. This lost information is what causes the resulting mean to be incorrect. This figure graphically illustrates what we see in the corresponding table of Figure 2. Figure 3 explains the sampling error in the time-domain.

Figure 3: NOAA USCRN Data for Cordova, AK Nov 11, 2017: Decreased Detail from 12 and 4-Samples/Day Sample Rate – Time-Domain

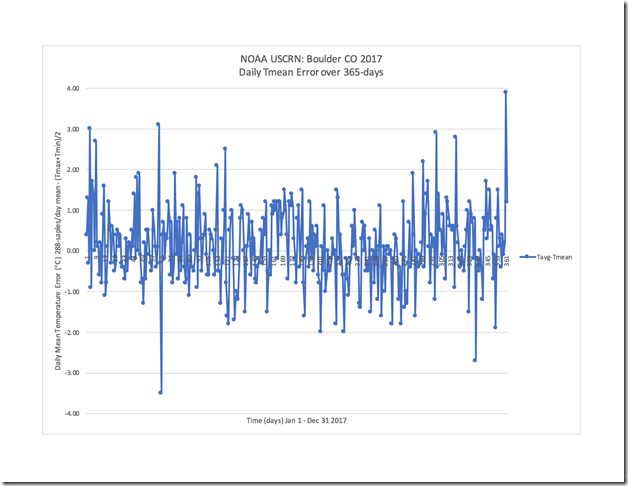

Figure 4 shows the daily mean error between the USCRN 288-samples/day method and the historical method, as measured over 365 days at the Boulder CO station in 2017. Each data point is the error for that particular day in the record. We can see from Figure 4 that (Tmax+Tmin)/2 yields daily errors of up to ± 4 °C. Calculating mean temperature with 2-samples/day rarely yields the correct mean.

Figure 4: NOAA USCRN Data for Boulder CO – Daily Mean Error Over 365 Days (2017)

Let’s look at another example, similar to the one presented in Figure 1, but over a longer period of time. Figure 5 shows (in blue) the 288-samples/day signal from Spokane WA, from Jan 13 – Jan 22, 2008. Tmax (avg) and Tmin (avg) are shown in orange and grey respectively. The (Tmax+Tmin)/2 mean is shown in red (-6.9 °C) and the correct mean calculated from the 5-minute sampled data is shown in green (-6.2 °C). The (Tmax+Tmin)/2 mean has an error of 0.7 °C over the 10-day period.

Figure 5: NOAA USCRN Data for Spokane, WA – Jan13-22, 2008

The Effect of Violating Nyquist on Temperature Trends

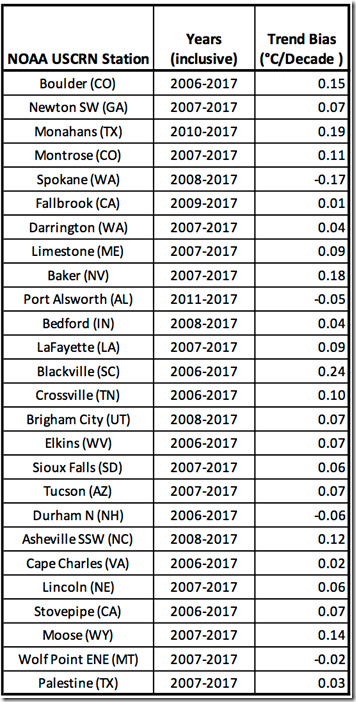

Finally, we need to look at the impact of violating Nyquist on temperature trends. In Figure 6, a comparison is made between the linear temperature trends obtained from the historical and Nyquist compliant methods using NOAA USCRN data for Blackville SC, from Jan 2006 – Dec 2017. We see the trend derived from the historical method (orange line) starts approximately 0.2 °C warmer and has a 0.24 °C/decade warming bias compared to the Nyquist compliant method (blue line). Figure 7 shows the trend bias or error (°C/Decade) for 26 stations in the USCRN over a 7-12 year period. The 5-minute samples data gives us our reference trend. The trend bias is calculated by subtracting the reference from the (Tmaxavg+Tminavg)/2 derived trend. Almost every station exhibits a warming bias, with a few exhibiting a cooling bias. The largest warming bias is 0.24 °C/decade and the largest cooling bias is -0.17 °C/decade, with an average warming bias across all 26 stations of 0.06 °C/decade. According to Wikipedia, the calculated global average warming trend for the period 1880-2012 is 0.064 ± 0.015 °C per decade. If we look at the more recent period that contains the controversial “Global Warming Pause”, then using data from Wikipedia, we get the following warming trends depending upon which year is selected for the starting point of the “pause”:

1996: 0.14°C/decade

1997: 0.07°C/decade

1998: 0.05°C/decade

While no conclusions can be made by comparing the trends over 7-12 years from 26 stations in the USCRN to the currently accepted long-term or short term global average trends, it can be instructive. It is clear that using the historical method to calculate trends yields a trend error and this error can be of a similar magnitude to the claimed trends. Therefore, it is reasonable to call into question the validity of the trends. There is no way to know for certain, as the bulk of the instrumental record does not have a properly sampled alternate record to compare it to. But it is a mathematical certainty that every mean temperature and derived trend in the record contains significant error if it was calculated with 2-samples/day.

Figure 6: NOAA USCRN Data for Blackville, SC – Jan 2006-Dec 2017 – Monthly Mean Trendlines

Figure 7: Trend Bias (°C/Decade) for 26 Stations in USCRN
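The trend bias described in this section (historical-method trend minus reference trend) can be sketched as an ordinary least-squares fit to monthly means. This is a minimal illustration, assuming evenly spaced monthly values with no gaps; the function names and the two series are invented, and the numbers are not USCRN data.

```python
def trend_per_decade(monthly_means):
    """Ordinary least-squares slope of evenly spaced monthly values, per decade."""
    n = len(monthly_means)
    if n < 2:
        raise ValueError("need at least two monthly values")
    x_mean = (n - 1) / 2
    y_mean = sum(monthly_means) / n
    sxy = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(monthly_means))
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    return (sxy / sxx) * 120  # 120 months per decade


def trend_bias(historical_monthly, reference_monthly):
    """Historical-method trend minus reference trend, in degrees per decade."""
    return trend_per_decade(historical_monthly) - trend_per_decade(reference_monthly)


# Made-up 12-year series (144 months), purely to exercise the functions.
reference = [15.0 + 0.001 * m for m in range(144)]
historical = [15.2 + 0.002 * m for m in range(144)]
print(round(trend_bias(historical, reference), 3))  # 0.12
```

A constant offset between the two series (0.2 here) does not affect the bias; only the difference in slope does.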

Conclusions

1. Air temperature is a signal and therefore, it must be measured by sampling according to the mathematical laws governing signal processing. Sampling must be performed according to the Nyquist-Shannon Sampling Theorem.

2. The Nyquist-Shannon Sampling Theorem has been known for over 80 years and is essential science to every field of technology that involves signal processing. Violating Nyquist guarantees samples will be corrupted with aliasing error and the samples will not represent the signal being sampled. Aliasing cannot be corrected post-sampling.

3. The Nyquist-Shannon Sampling Theorem requires the sample rate to be greater than 2x the highest frequency component of the signal. Using automated electronic equipment and computers, NOAA USCRN samples at a rate of 4,320-samples/day (averaged to 288-samples/day) to practically apply Nyquist and avoid aliasing error.

4. The instrumental temperature record relies on the historical method of obtaining daily Tmax and Tmin values, essentially 2-samples/day. Therefore, the instrumental record violates the Nyquist-Shannon Sampling Theorem.

5. NOAA’s USCRN is a high-quality data acquisition network, capable of properly sampling a temperature signal. The USCRN is a small network that was completed in 2008 and it contributes very little to the overall instrumental record; however, the USCRN data provides us a special opportunity to compare analysis methods. A comparison can be made between temperature means and trends generated with Tmax and Tmin versus a properly sampled signal compliant with Nyquist.

6. Using a limited number of examples from the USCRN, it has been shown that using Tmax and Tmin as the source of data can yield the following error compared to a signal sampled according to Nyquist:

a. Mean error that varies station-to-station and day-to-day within a station.

b. Mean error that varies over time with a mathematical sign that may change (positive/negative).

c. Daily mean error that varies up to +/-4°C.

d. Long term trend error with a warming bias up to 0.24°C/decade and a cooling bias of up to 0.17°C/decade.

7. The full instrumental record does not have a properly sampled alternate record to use for comparison. More work is needed to determine if a theoretical upper limit can be calculated for mean and trend error resulting from use of the historical method.

8. The extent of the error observed, with its associated uncertain magnitude and sign, calls into question the scientific value of the instrumental record and the practice of using Tmax and Tmin to calculate mean values and long-term trends.

Reference section:

This USCRN data can be found at the following site: https://www.ncdc.noaa.gov/crn/qcdatasets.html

NOAA USCRN data for Figure 1 is obtained here:

NOAA USCRN data for Figure 4 is obtained here:

https://www1.ncdc.noaa.gov/pub/data/uscrn/products/daily01/2017/CRND0103-2017-AK_Cordova_14_ESE.txt

NOAA USCRN data for Figure 5 is obtained here:

NOAA USCRN data for Figure 6 is obtained here:

https://www1.ncdc.noaa.gov/pub/data/uscrn/products/monthly01/CRNM0102-SC_Blackville_3_W.txt

“Beware of averages. The average person has one breast and one testicle.” Dixie Lee Ray

The average of 49 & 51 is 50 and the average of 1 and 99 is also 50.

First they take the daily min and max and compute a daily average. Then they take the daily averages and average them up for the year, and then those annuals are averaged up from the equator to the poles and they pretend it actually means something.

Based on all that they are going to legislate policy to do what? Tell people to eat tofu, ride the bus and show up at the euthanization center on their 65th birthday?

Then there is the case of the statistician who drowned crossing a river with average depth of one foot.

Ha! Good one.

+1000 to both of you.

I would disagree, for a couple of reasons.

First, the definition of the Nyquist limit:

The first problem with this is that climate contains signals at just about every frequency, with periods from milliseconds to megayears. What is the “Nyquist Rate” for such a signal?

Actually, the Nyquist theorem states that we must sample a signal at a rate that is at least 2x the highest frequency component OF INTEREST in the signal. For example, if we are only interested in yearly temperature data, there is no need to sample every millisecond. Monthly data will be more than adequate.

And if we are interested in daily temperature, as his Figure 2 clearly shows, hourly data is adequate.

This brings up an interesting question—regarding temperature data, what is the highest frequency (shortest period) of interest?

To investigate this, I took a year’s worth of 5-minute data and averaged it minute by minute to give me an average day. Then I repeated this average day a number of times and ran a periodogram of that dataset. Here’s the result:

As you can see, there are significant cycles at 24, 12, and 8 hours … but very little with a shorter period (higher frequency) than that. I repeated the experiment with a number of datasets, and it is the same in all of them. Cycles down to eight hours, nothing of interest shorter than that.

As a result, since we’re interested in daily values, hourly observations seem quite adequate.
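For readers who want to try the average-day experiment, here is a rough Python sketch of the idea. It is not the code used for the analysis above: it takes the harmonic amplitudes of a single average day directly with a plain DFT instead of running a periodogram on the repeated day, and the record is made up, with 24, 12 and 8 hour cycles built in.

```python
import cmath
import math


def average_day(samples, per_day):
    """Average each time-of-day slot across all whole days in the record."""
    days = len(samples) // per_day
    return [
        sum(samples[d * per_day + k] for d in range(days)) / days
        for k in range(per_day)
    ]


def harmonic_amplitudes(day, max_harmonic):
    """Map period in hours -> amplitude of that harmonic of the 24-hour day."""
    n = len(day)
    amplitudes = {}
    for m in range(1, max_harmonic + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * m * k / n) for k, x in enumerate(day))
        amplitudes[24.0 / m] = 2 * abs(coeff) / n
    return amplitudes


# Made-up record: 3 days of hourly values with 24, 12 and 8 hour cycles.
record = [
    15.0
    + 3.0 * math.cos(2 * math.pi * h / 24)
    + 1.5 * math.cos(2 * math.pi * h / 12)
    + 0.5 * math.cos(2 * math.pi * h / 8)
    for h in range(72)
]
for period, amp in harmonic_amplitudes(average_day(record, 24), 6).items():
    print(f"{period:5.1f} h: amplitude {amp:.2f}")
```

The amplitude scaling used here holds for harmonics below half the number of samples per day, so `max_harmonic` should stay under that limit.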

The problem he points to is actually located elsewhere in the data. The problem is that for a chaotic signal, (max + min)/2 is going to be a poor estimator of the actual mean of the period. Which means we need to sample a number of times during any period to get close to the actual mean.

And as his table shows, an hourly recording of the temperature gives only a 0.1°C error with respect to a 5-minute recording … which is clear evidence that an hourly signal is NOT a violation of the Nyquist limit.

So I’d say that he’s correct, that the average of the max and min values is not a very robust indicator of the actual mean … but for examining that question, hourly data is more than adequate.

Best to all,

w.

Another way to look at it is to try to estimate the area under the curve of daily temperature. The original model of a sine wave is clearly invalid. There is a distortion of a sine wave every day. Hourly data can approximate this distortion a lot better than the min-max model. 5 minute data can improve upon that, but at the expense of diminishing returns. Nyquist or no Nyquist, the min-max practice assumes a perfect sine wave as a model of the daily temperature curve and this model is invalid. In short, I agree that hourly data is much better than min-max thermometers.
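One way to put the area-under-the-curve idea into practice is a trapezoid-rule mean, which also copes with readings that are not evenly spaced in time. The sketch below is only an illustration: the function name is invented and the five readings are made up.

```python
def time_weighted_mean(hours, temps):
    """Area under the piecewise-linear temperature curve divided by elapsed time."""
    if len(hours) != len(temps) or len(hours) < 2:
        raise ValueError("need at least two matching (hour, temperature) pairs")
    area = 0.0
    for i in range(1, len(hours)):
        width = hours[i] - hours[i - 1]
        area += width * (temps[i] + temps[i - 1]) / 2
    return area / (hours[-1] - hours[0])


# Made-up readings (degrees F) at 0, 6, 12, 18 and 24 hours.
hours = [0, 6, 12, 18, 24]
temps = [58, 70, 88, 84, 60]
print(time_weighted_mean(hours, temps), (max(temps) + min(temps)) / 2)
```

For these invented readings the time-weighted mean is 75.25 while the min-max midpoint is 73, because the day spends more time near its high than near its low.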

Salute Phil!

A great point and I would like to see that sort of record used versus simple hi-lo average.

The daily temperature is not a pure sine wave, and at my mountain cabin a simple hi-lo average is extremely misleading, especially when trying to grow certain veggies or sprouting flower seeds.

Seems to this old engineer and weatherwise gardener that we should use some sort of “area under the curve” method. Perhaps assign a simple hi-lo average to defined intervals, maybe 20 minutes, and then use the number of increments above the daily “low” in some manner to reflect the actual daily average.

I can tellya that up at my altitude that during the summer it warms up very fast from very low temperatures in the morning and then stays comfortable until after sunset. I hear that the same thing happens in the desert. So a weighted interval method would show a higher daily average during the summer when days are long and a lower average during the shorter winter days. This past summer’s data from the 4th of July was a good example I found from a nearby airfield. The high was 88 and low was 58 and average was published as 73. But the actual day was toastie! 13 hours were above the “average”, and 10 of those hours were above 80 degrees. The exact reverse happens in the winter, and at the same site earlier this month we saw 23 for low and 62 for a high. 42 average. It was unseasonably warm, but we still had 13 hours below the average and only 11 hours above.

Gums sends…

w. ==> Quite right …. In a pragmatic sense, we have the historic Min/Max records and the newer 5-minute (averages) record. So our choice is really between the two methods — and the 5-min record is obviously superior in light of Nyquist. Where 5-min records exist, they should be used and where only Min/Max records exist — they can be useful if they are properly acknowledged to be error-prone and acknowledged with proper uncertainty bars….

Min-Max records do not produce an accurate and precise result suitable for discerning the small changes in National or Global temperatures.

Hey Willis,

As a result, since we’re interested in daily values, hourly observations seem quite adequate.

Agreed. I’ve run some comparisons between 5-min and 1-hr sampled daily signal – they yield very similar averages. But here we’re talking about 2 samples per day, or effectively just one sample (daily midrange value).

Also, Nyquist only applies to regularly spaced periodic sampling … but min/max sampling happens at irregular times.

I see it a bit differently: irregular sampling makes a problem worse. If irregular sampling could alleviate Nyquist limitations that would be very cheap win: just sample any signal sparsely and irregularly and no need to worry about Shannon! Well, regardless of sampling method such method must obey Shannon in order to replicate a signal.

As you can see, there are significant cycles at 24, 12, and 8 hours … but very little with a shorter period (higher frequency) than that.

I’ve got also smaller frequency peaks around 6 and 3 hours. Still most of the signal energy resides in the yearly and daily cycles (and around).

Lots of one and two degree errors in there … the only good news is that the error distribution is normal Gaussian.

Thanks for sharing – have you run any ‘normality tests’ to determine that or in this case eyeballing is perfectly sufficient?

Paramenter said: “If irregular sampling could alleviate Nyquist limitations that would be very cheap win: just sample any signal sparsely and irregularly and no need to worry about Shannon! Well, regardless of sampling method such method must obey Shannon in order to replicate a signal.”

+1E+10

I noticed in Fig 6 that the trends for Blackville, SC were very large. The regularly sampled trend is 9.6°C/Cen, and the min/max is higher. It would be interesting to see the other USCRN trends, not just the differences. The period is only 11 years.

I did my own check on Blackville trends. I got slightly higher trend results (my record is missing Nov,Dec 2017).

Average 11.8±17.8 °C/Cen

Min/Max 16.5±18.4 °C/Cen

These are 1 sd errors, which obviously dwarf the difference. It is statistically insignificant, and doesn’t show that the different method made a difference.

Anecdotal evidence is somehow scientific? And be honest, Nick, if the station showed significant cooling it would be thrown out or “corrected” by the likes of you.

It is WW’s choice, not mine.

The whole exercise is itself a biased argument. The error factor [Table] is relative to 288 points of 5 minute average. Here the writer has not taken into account the drag factor in the continuous record. The drag is season specific and instrument specific. On these aspects several studies were made before accepting average as maximum plus minimum by two.

Dr. S. Jeevananda Reddy

I would have thought that scientists were always looking for consistency in results when comparing the present with even the near past, let alone back to the earliest times of recording temperature. I suppose that it never occurred to anyone at the time, that when the new AWS measuring systems came on line that it might be opportune to conduct a lengthy (10 -20 year) experiment by continuing the old process alongside the new. In this way, perhaps coming up with a way to convert the old to match the new. Then again, if you didn’t know that there was this kind of issue, that thought most likely wouldn’t have crossed your mind!

I was thinking of the process used by Leif Svalgaard and colleagues when trying to tie all of our historical sun spot observations together into a cohesive and more meaningful manner. Could this be done for the temperature method to everyone’s satisfaction?

Changing our methodology due to improvements in technology doesn’t seem to always alert us to the possibility that the ‘new’ results are actually different to the old ones. Yet, from what I have read here that most certainly is the case.

Here is another example of how technology has dramatically changed the way we record natural phenomena. I have been an active auroral observer in New Zealand for just over 40 years. Up until this latest Solar Max for SSC 24 our observations were written representations of our visual impressions. This tied easily into the historical record for everywhere that the aurorae can be seen. Photographs were a bonus, and sometimes considered a poor second to the ‘actual’ visual impression of the display!

At the peak of SSC 23 the internet first played its part in both alerting people that something was happening, and therefore increasing the number & spread of observers worldwide, but also in teaching people about what they were seeing. As SSC 23 faded the sensitivity involved in Digital SLR cameras seemed to rise exponentially. This led to the strange situation as SSC 24 matured that people who had never seen aurorae were recording displays that couldn’t be seen with the naked eye! This unfortunately coincided with the least active SSC for at least 100 years. This manifested itself among the ‘newbies’ as a belief that aurorae are hard to see, and even when you can see them, only the camera can record the colour! The ease with which people can record the aurorae has led to a massive increase in ‘observers,’ and I use that term loosely, who very rarely record their visual impressions, mostly because they don’t appreciate how important those impressions are as a way to keep the connection with the past going.

If there is a fundamental disconnect in the way we now record temperature compared to the past, then this should be addressed sooner rather than later!

Mr. Ward is quite correct.

The proper way to prevent aliasing is to apply a low pass filter that discards all frequencies above 1/2 your sample rate. This is standard practice in electronics work. Sometimes the sensor (ie a microphone) performs this function for you.

Problem with the (max+min)/2 method is that there is no proper low pass filter that rejects “quick” spikes of extreme temps. The response time of the sensor is set by the thermal diffusivity of the mercury. This could allow a 5 minute hot blast of air from a tarmac to skew the reading.

An electronic temperature sensor (say a platinum RTD) can respond in milliseconds and normally filtering it so it cannot respond faster than 1 minute would be necessary to sample it at a 30 second rate.
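To make the filtering point concrete, here is a hedged Python sketch of a single-pole (RC-style) low-pass filter applied before slow sampling. It is a crude stand-in for a real anti-aliasing filter or a sensor's thermal lag: the 60-second time constant, the 1-second raw rate, the 20-second output rate and the 4-second ripple are all made-up illustration values.

```python
import math


def first_order_lowpass(samples, dt_seconds, tau_seconds):
    """Discrete single-pole low-pass filter with time constant tau_seconds."""
    alpha = dt_seconds / (tau_seconds + dt_seconds)
    y = samples[0]
    filtered = []
    for x in samples:
        y += alpha * (x - y)
        filtered.append(y)
    return filtered


# Made-up input: 20 C baseline plus a fast 4-second ripple, one value per second.
raw = [20.0 + math.cos(math.pi * k / 2) for k in range(1200)]

unfiltered_20s = raw[::20]  # every 20th value hits the ripple crest
filtered_20s = first_order_lowpass(raw, dt_seconds=1.0, tau_seconds=60.0)[::20]

print(sum(unfiltered_20s) / len(unfiltered_20s))        # biased toward 21
print(sum(filtered_20s[30:]) / len(filtered_20s[30:]))  # close to 20 after settling
```

Without the filter, the fast ripple aliases straight into the average as a 1 degree offset. With it, the slowly sampled values settle near the true 20 degree baseline. The first 30 filtered values are skipped only to let the filter's start-up transient die out.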

An even larger problem with the official “temperature” record is the “RMS” versus “True RMS” problem. That would be a whole discussion on its own. The average of a max and a min temperature is NOT representative of the heat content in the atmosphere at a location.

So here we are prepared to tear down and rebuild the world’s energy supply based on a terrible temperature record that is so corrupt it is not fit for purpose at all.

Cheers, Kevin

A platinum resistance thermometer has a time constant in the range of a few seconds based on its diameter and the fluid. The fluid in this case was either flowing water at 3 feet per second or flowing air at 20 feet per second. The situation in a Stevenson screen is much slower air flow, so the time constant is much slower. I’ll do a measurement or two tomorrow with a 0.125″ diameter PRT when I get to the lab of the time constant in relatively still air. A thermistor would have a faster time constant due to the lower mass of the sensor and its sheath. Thermistors are also notorious for drifting, so unless you calibrate it often, the temperatures aren’t ready for this application.

Loren, if you investigate the “newer” surface mount chip Platinum RTDs you see they have response times down into the 100 millisecond range. The small volume (ie thermal capacity) of the sensor does allow those response times. I may have “stretched” things a bit, but any value from 1 millisecond to 999 milliseconds is “a millisecond response time”.

Cheers, Kevin

1/8″ diameter SS sheathed PRT from Omega – still air time constant on the order of 80 seconds. I did not find the specs on the ones used in the USCRN weather stations. Are they thin film, IR, or a more traditional sheathed PRT?

This is an inept rehash of an earlier guest blog by someone who fails to understand that:

1) Shannon’s sampling theorem applies to strictly periodic (fixed delta t) discrete sampling of a continuous signal

2) It doesn’t apply to the daily determination of Tmax and Tmin from the continuous, not the discretely-sampled record

3) While (Tmax + Tmin)/2 certainly differs from the true daily mean, it does so not because of any aliasing, but because of the typical asymmetry of the diurnal cycle, which incorporates phase-locked, higher-order harmonics.
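Point 3 can be illustrated with a small worked example. The two-harmonic waveform below is an assumed toy model of an asymmetric diurnal cycle, not something fitted to station data; the symbols T_0, A_1 and A_2 are introduced here purely for illustration.

```latex
% Toy diurnal cycle: fundamental plus a phase-locked second harmonic,
% with 0 < A_1 \le 4 A_2 and \theta = 2\pi t / (24\ \text{h}).
T(\theta) = T_0 + A_1 \cos\theta + A_2 \cos 2\theta

% The true daily mean is the constant term:
\bar{T} = \frac{1}{2\pi} \int_0^{2\pi} T(\theta)\, d\theta = T_0

% Extrema (using \cos 2\theta = 2\cos^2\theta - 1):
T_{\max} = T_0 + A_1 + A_2 \quad \text{at } \cos\theta = 1
T_{\min} = T_0 - A_2 - \frac{A_1^2}{8 A_2} \quad \text{at } \cos\theta = -\frac{A_1}{4 A_2}

% Midrange minus true mean:
\frac{T_{\max} + T_{\min}}{2} - \bar{T} = \frac{A_1}{2} - \frac{A_1^2}{16 A_2}
```

With A_1 = 4 and A_2 = 2, for instance, the midrange sits 1.5 degrees above the true mean even though the extrema themselves are measured exactly.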

1sky1 says: “1) Shannon’s sampling theorem applies to strictly periodic (fixed delta t) discrete sampling of a continuous signal”

Reply: max and min are samples, period = 1/2 day and with much jitter. Jitter exists on every clock. Nyquist does not carve out jitter exceptions nor limit their magnitude. Any temperature signal is continuous. You need to address and invalidate these points or your comments are not correct.

1sky1 says: “3) While (Tmax + Tmin)/2 certainly differs from the true daily mean, it does so not because of any aliasing, but because of the typical asymmetry of the diurnal cycle, which incorporates phase-locked, higher-order harmonics.”

Reply: “…because of the typical asymmetry of the diurnal cycle…” this is frequency content. Content that aliases at 2-samples/day. “…which incorporates phase-locked, higher-order harmonics.” So “phase locked higher order harmonics” gets you a waiver from Mr. Nyquist?!? 35 years – many industries – many texts – never heard of such a thing. Orchestras are “phase locked” to the conductor, does that mean I can sample music sans Nyquist?!? Of course not.

Your failure to recognize that the daily measurement of extrema doesn’t remotely involve any clock-driven sampling (with or without “jitter”) speaks volumes. Not only are those extrema NOT equally spaced in time (the fundamental requirement for proper discrete sampling), but what triggers their irregular occurrence and recording has everything to do with asymmetric waveform of the diurnal cycle, not any clock per se.

Nyquist thus is irrelevant to the data at hand, which are NOT samples in ANY sense, but direct and exhaustive measurements of daily extrema of the thermometric signal. Pray tell, where in “35 years – many industries – many texts” did you pick up the mistaken notion that Nyquist applies to the extreme values of CONTINUOUS signals?

The movement of the Earth is the clock. The fact that we can get the same max min values from a Max/Min thermometer and a USCRN 288-sample/day system proves they are samples. Once you have the values it doesn’t matter what their source is. The result is indistinguishable from the process. Nyquist doesn’t somehow stop applying because the sample happens to be the “extreme values”. Once you bring the sampled data into your DSP system for processing how does your algorithm know if the data came from a Max/Min thermometer or an ADC with a clock with jitter? If you were to convert back to analog would the DAC know where the values came from? Would the DAC care that you don’t care about converting the signal back to analog and only intend to average it? No. You get the same result. They are samples, they are periodic. Nyquist applies. You are free to take the samples and use them as if they are not samples. Fortunately there are no signal analysis police to give you a citation.

1sky, if you decide to reply then the last word will be yours. Best wishes.

Bass-ackwards logic! All this proves is that sufficiently frequently sampled USCRN digital systems will reveal nearly identical daily extrema as true max/min thermometers sensing the continuous temperature signal. There’s no logical way that the latter can be affected by aliasing, which is solely an artifact of discrete, equi-spaced, clock-driven sampling.

All the purely pragmatic arguments about DSP algorithms being blind to the difference between the obtained sequence of daily extrema and properly sampled time-series do not alter that intrinsic difference. In fact, the imperviousness of the extrema to aliasing and clock-jitter effects is what makes the daily mid-range value (Tmin + Tmax)/2 preferable to the four-synoptic-time average used in much of the non-Anglophone world for reporting the daily “mean” temperature to the WMO.

My primary concern from a Nyquist standpoint is the rate of change of the reading in relationship to the sampling rate. If the rate of change is less than 2X the sampling rate then you meet the criteria. However, that is for reconstruction of the original signal, and not for a true averaging of the system at hand. In many control systems the sampling rate is much higher than the rate of change, many times 10X or more. This is because the average over a period needs more data than the minimum in Nyquist requirements. In refrigeration we oversample at well over 20 times to get sufficient data to rule out noise, dropped signals, etc. We know the tau of the sensor and how it reacts to temperature change, so this allows us to accurately detect and respond to changes in temperature.

I would be interested in knowing what the response curve is for the sensor being used. That would give you sufficient data to determine how the system reacts to change. I have always thought that using the midpoint between max and min temps to be a very poor measurement of average temperature. Maybe someday I will do that study.

R Percifield,

Sampling far over Nyquist is done for many reasons. If you have noise as you say, then in fact your bandwidth is higher and technically your Nyquist rate is also higher. Most often sample rates are much higher because 1) for control applications the ADCs are capable of running so much faster than the application needs and memory and processing are cheap, so why not do it; and 2) sampling faster relaxes your anti-aliasing filter requirements. You can use a lower cost filter and set your breakpoint farther up in frequency such that your filter doesn’t give any phase issues with your sampling. Audio is the perfect example. CD audio is sampled at 44.1ksps. Audio is considered to be 20Hz to 20kHz. Sampling at 44.1ksps means that anything above 22.05kHz will alias. So your filter has to work hard between 20kHz and 22.05kHz. If you are an audiophile you don’t want filters to play with the phase of your audio, so this is another big issue. Enter sampling at 96ksps or 192ksps. Filters can be much farther up in frequency and your audio can be up to 30kHz, etc. There is a titanic argument in the audio world about whether or not we “experience” sound above 20kHz. I won’t digress further…
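To put numbers on the CD example, here is a minimal Python sketch of the standard frequency-folding rule for an ideal sampler with no anti-aliasing filter in front of it. The function name `alias_frequency` is just an illustrative choice, and the test tones are arbitrary.

```python
def alias_frequency(f_hz: float, fs_hz: float) -> float:
    """Apparent frequency of a pure tone at f_hz after ideal sampling at fs_hz."""
    f_mod = f_hz % fs_hz               # the sampled spectrum repeats every fs
    return min(f_mod, fs_hz - f_mod)   # fold the result into the band 0..fs/2


if __name__ == "__main__":
    # Arbitrary test tones, sampled at the CD rate and at 96 ksps.
    for fs in (44_100.0, 96_000.0):
        for tone in (20_000.0, 25_000.0, 30_000.0):
            print(f"fs={fs:.0f}  tone={tone:.0f}  appears at {alias_frequency(tone, fs):.0f} Hz")
```

At 44.1ksps an unfiltered 25kHz tone folds down to 19.1kHz, inside the audio band, which is why the filter has to do its work between 20kHz and 22.05kHz. At 96ksps the same tone stays where it is.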

There is the Theory of Nyquist and then the application. In the real world there are no bandwidth limited signals. Anti-aliasing filters are always needed. Some aliasing always happens. It is just small and does not affect performance if the system is designed properly.

In a well designed system – audio is a good example – you should be able to convert from analog to digital and back to analog for many generations (iterations) without any audible degradation. Of course this requires a studio with many tens – perhaps hundreds of thousands of dollars of equipment – but it is possible.

“1) Shannon’s sampling theorem applies to strictly periodic (fixed delta t) discrete sampling of a continuous signal”

Seems to me measuring the temperature once a day is the definition of a strictly periodic discrete sampling of a continuous signal. Sample period is 24 hours (frequency is about 1.16e-5 Hz).

“2) It doesn’t apply to the daily determination of Tmax and Tmin from the continuous, not the discretely-sampled record”

See my first comment above

“3) While (Tmax + Tmin)/2 certainly differs from the true daily mean, it does so not because of any aliasing, but because of the typical asymmetry of the diurnal cycle, which incorporates phase-locked, higher-order harmonics.”

That would be the “RMS” versus “True-RMS” error source in the official temperature guesstimates…..

Cheers, Kevin

If the temperature measurement took place at exactly the same time each day, then you would have strictly periodic sampling and great aliasing. But that’s not what is done by Max/Min thermometers that track the continuous temperature all day and register the extrema no matter at what time they occur. Those times are far from being strictly periodic in situ.

1SKY1 wrote;

“If the temperature measurement took place at exactly the same time each day, then you would have strictly periodic sampling and great aliasing.”

You are referring to the phase of the periodic sampling, even more complicated. The periodic sampling takes place once every 24 hours. The Min/Max selection adds phase to the data, just another error source.

Cheers, Kevin

When people who lack even Wiki-level grasp of concept start parsing technical words, you get the above nonsense about what constitutes strictly periodic discrete sampling in signal analysis. The plain mathematical requirement is that delta t needs to be constant in such sampling. Daily extrema simply don’t occur on any FIXED hour! The attempt to characterize that empirical fact as “Min/Max selection adds phase to the data, just another error source” is akin to claiming error for the Pythagorean Theorem–when applied to obtuse triangles.

1sky1 said: “The plain mathematical requirement is that delta t needs to be constant in such sampling. ”

Reply: Agreed, low jitter clocks are what we want for accurate sampling. Show me the text and rules of Nyquist that limit jitter. What are the limits – just how much jitter before Nyquist gets to have the day off? How can any ADC work in the real world and meet Nyquist? Do you have the datasheet for the jitterless ADC? What is its cost in industrial temp, 1M/yr quantities?

This is all BS. The temperature at any place on earth is more associated with the wind direction than anything else. Southerly winds warm you up here in the northern hemisphere, and northerly winds cool you down. What it most certainly has NOTHING to do with is the local concentration of CO2.

The entire radiation approach is a scam. The unbalances that may occur are absorbed into the many energy sinks that exist here on earth. For sure, they will completely mask any 0.1C change in temperature globally.

http://applet-magic.com/cloudblanket.htm

Clouds overwhelm the Downward Infrared Radiation (DWIR) produced by CO2. At night with and without clouds, the temperature difference can be as much as 11C. The amount of warming provided by DWIR from CO2 is negligible but is a real quantity. We give this as the average amount of DWIR due to CO2 and H2O or some other cause of the DWIR. Now we can convert it to a temperature increase and call this Tcdiox. The pyrgeometers assume emission coeff of 1 for CO2. CO2 is NOT a blackbody. Clouds contribute 85% of the DWIR. GHG’s contribute 15%. See the analysis in link. The IR that hits clouds does not get absorbed. Instead it gets reflected. When IR gets absorbed by GHG’s it gets reemitted either on its own or via collisions with N2 and O2. In both cases, the emitted IR is weaker than the absorbed IR. Don’t forget that the IR from reradiated CO2 is emitted in all directions. Therefore a little less than 50% of the absorbed IR by the CO2 gets reemitted downward to the earth surface. Since CO2 is not transitory like clouds or water vapour, it remains well mixed at all times. Therefore since the earth is always giving off IR (probably a maximum at 5 pm everyday), the so called greenhouse effect (not really but the term is always used) is always present and there will always be some backward downward IR from the atmosphere.

When there aren’t clouds, there is still DWIR which causes a slight warming. We have an indication of what this is because of the measured temperature increase of 0.65 from 1950 to 2018. This slight warming is for reasons other than just clouds, therefore it is happening all the time. Therefore in a particular night that has the maximum effect, you have 11 C + Tcdiox. We can put a number to Tcdiox. It may change over the years as CO2 increases in the atmosphere. At the present time with 409 ppm CO2, the global temperature is now 0.65 C higher than it was in 1950, the year when mankind started to put significant amounts of CO2 into the air. So at a maximum Tcdiox = 0.65C. We don’t know the exact cause of Tcdiox whether it is all H2O caused or both H2O and CO2 or the sun or something else but we do know the rate of warming. This analysis will assume that CO2 and H2O are the only possible causes. That assumption will pacify the alarmists because they say there is no other cause worth mentioning. They like to forget about water vapour but in any average local temperature calculation you can’t forget about water vapour unless it is a desert.

A proper calculation of the mean physical temperature of a spherical body requires an explicit integration of the Stefan-Boltzmann equation over the entire planet surface. This means first taking the 4th root of the absorbed solar flux at every point on the planet and then doing the same thing for the outgoing flux at Top of atmosphere from each of these points that you measured from the solar side and subtract each point flux and then turn each point result into a temperature field and then average the resulting temperature field across the entire globe. This gets around the Holder inequality problem when calculating temperatures from fluxes on a global spherical body. However in this analysis we are simply taking averages applied to one local situation because we are not after the exact effect of CO2 but only its maximum effect.

In any case Tcdiox represents the real temperature increase over last 68 years. You have to add Tcdiox to the overall temp difference of 11 to get the maximum temperature difference of clouds, H2O and CO2 . So the maximum effect of any temperature changes caused by clouds, water vapour, or CO2 on a cloudy night is 11.65C. We will ignore methane and any other GHG except water vapour.

So from the above URL link clouds represent 85% of the total temperature effect, so clouds have a maximum temperature effect of .85 * 11.65 C = 9.90 C. That leaves 1.75 C for the water vapour and CO2. CO2 will have relatively more of an effect in deserts than it will in wet areas but still can never go beyond this 1.75 C. Since the desert areas are 33% of 30% (land vs oceans) = 10% of earth’s surface, then the CO2 has a maximum effect of 10% of 1.75 + 90% of Twet. We define Twet as the CO2 temperature effect over all the world’s oceans and the non desert areas of land. There is an argument for less IR being radiated from the world’s oceans than from land but we will ignore that for the purpose of maximizing the effect of CO2 to keep the alarmists happy for now. So CO2 has a maximum effect of 0.175 C + (.9 * Twet).

So all we have to do is calculate Twet.

Reflected IR from clouds is not weaker. Water vapour is in the air and in clouds. Even without clouds, water vapour is in the air. No one knows the ratio of the amount of water vapour that has now condensed to water/ice in the clouds compared to the total amount of water vapour/H2O in the atmosphere but the ratio can’t be very large. Even though clouds cover on average 60 % of the lower layers of the troposphere, since the troposphere is approximately 8.14 x 10^18 m^3 in volume, the total cloud volume in relation must be small. Certainly not more than 5%. H2O is a GHG. Water vapour outnumbers CO2 by a factor of 25 to 1 assuming 1% water vapour. So of the original 15% contribution by GHG’s of the DWIR, we have .15 x .04 = 0.006 or 0.6% to account for CO2. Now we have to apply an adjustment factor to account for the fact that some water vapour at any one time is condensed into the clouds. So add 5% onto the 0.006 and we get 0.0063 or 0.63 %. CO2 therefore contributes 0.63 % of the DWIR in non deserts. We will neglect the fact that the IR emitted downward from the CO2 is a little weaker than the IR that is reflected by the clouds. Since, as in the above, a cloudy night can make the temperature 11C warmer than a clear sky night, CO2 or Twet contributes a maximum of 0.0063 * 1.75 C = 0.011 C.

Therefore, since Twet = 0.011 C, we have in the above equation CO2 max effect = 0.175 C + (.9 * 0.011 C ) = ~ 0.185 C. As I said before, this will increase as the level of CO2 increases, but we have had 68 years of heavy fossil fuel burning and this is the absolute maximum of the effect of CO2 on global temperature.
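For anyone who wants to retrace the arithmetic in this comment, the steps can be laid out as a short Python sketch. It only reproduces the commenter's own numbers (11 C, 0.65 C, the 85%/15% split, the 25-to-1 ratio, the 5% adjustment and the 10% desert fraction); it does not test the physical assumptions behind them. The function and variable names are illustrative.

```python
def retrace_co2_arithmetic():
    """Re-run the comment's arithmetic without intermediate rounding."""
    cloudy_vs_clear_c = 11.0        # night-time difference quoted in the comment
    warming_since_1950_c = 0.65     # the comment's upper bound for "Tcdiox"
    total_c = cloudy_vs_clear_c + warming_since_1950_c        # 11.65

    clouds_c = 0.85 * total_c                                 # about 9.90
    ghg_c = total_c - clouds_c                                # about 1.75

    desert_fraction = 0.10          # the comment rounds 33% of 30% to 10%
    co2_share_non_desert = 0.15 * 0.04 * 1.05                 # about 0.0063
    t_wet_c = co2_share_non_desert * ghg_c                    # about 0.011

    co2_max_c = desert_fraction * ghg_c + (1.0 - desert_fraction) * t_wet_c
    return {
        "clouds_c": clouds_c,
        "ghg_c": ghg_c,
        "t_wet_c": t_wet_c,
        "co2_max_c": co2_max_c,
        "quadrupled_c": 4.0 * co2_max_c,   # the comment's linear extrapolation
    }


if __name__ == "__main__":
    for name, value in retrace_co2_arithmetic().items():
        print(f"{name}: {value:.4f}")
```

Carrying full precision through gives roughly the same figures as the comment's rounded ones, so the arithmetic is internally consistent; whether the inputs are right is a separate question.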

So how would any average global temperature increase by 7C or even 2C, if the maximum temperature warming effect of CO2 today from DWIR is only 0.185 C? This means that the effect of clouds = 85%, the effect of water vapour = 13.5 % and the effect of CO2 = 1.5%.

Sure, if we quadruple the CO2 in the air which at the present rate of increase would take 278 years, we would increase the effect of CO2 (if it is a linear effect) to 4 X 0.185C = 0.74 C Whoopedy doo!!!!!!!!!!!!!!!!!!!!!!!!!!

As ever, if asked for measured data as to whether our weather is warming or cooling the answer appears to be do not know. Could not say for sure.

What time frame?

What data ?

What margin of error?

Is “Climatology” as practised a science or an art?

A religion- Al Gore’s Church of Climatology

William Ward,

Thank you for this timely essay.

Can you please correct me if these notions are wrong?

1. Nyquist Shannon sampling was derived from signals with cyclicity, which might be likened to a sine wave, typical of audio/radio signal carrier waves. Is the temperature data in this cyclicity category? Is it sufficiently like sine waves to allow application of Nyquist?

2. Can your analysis be extended from the use of actual thermometer values (confusingly named ‘absolutes’) to partially detrended signal (aka ‘anomaly values’)? There is a notion afoot that processing the former to give the latter somehow improves the variance of the data.

3. How can error estimates from Nyquist work be combined with other error sources, such as change from reading in F to C, UHI, the cooling effect of rain, the readability of divisions on a LIG thermometer, rounding errors, etc. Is it correct to use a square root of sum of squares of errors method?

4. In Australia, the BOM (Bureau of Meteorology) seems to now be using 1-second readings. Here is part of an email by the BOM:

“Firstly, we receive AWS data every minute. There are 3 temperature values:

1. Most recent one second measurement

2. Highest one second measurement (for the previous 60 secs)

3. Lowest one second measurement (for the previous 60 secs)”

It is more fully discussed at https://kenskingdom.wordpress.com/2017/03/01/how-temperature-is-measured-in-australia-part-1/

At first blush, would you consider this methodology to alter your error conclusions in the head post?
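On question 3 above, the root-sum-of-squares rule is the usual way to combine error terms only when they are independent and random. Systematic offsets (a UHI bias, for instance) do not average out and are normally carried separately. A minimal Python sketch of that distinction follows; the function names are illustrative and the numbers in the usage lines are placeholders, not measured values.

```python
import math


def combine_independent(uncertainties):
    """Root-sum-of-squares of independent, zero-mean random error terms."""
    return math.sqrt(sum(u * u for u in uncertainties))


def combine_with_bias(random_uncertainties, systematic_biases):
    """Return (net bias, random uncertainty).

    Systematic biases add linearly with their signs; only the random
    terms are combined in quadrature.
    """
    return sum(systematic_biases), combine_independent(random_uncertainties)


if __name__ == "__main__":
    # Placeholder magnitudes in deg C, purely for illustration.
    readability, rounding = 0.25, 0.29
    print(combine_independent([readability, rounding]))
    print(combine_with_bias([readability, rounding], [0.3, -0.1]))
```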

Geoff

The Sampling Theorem is a mathematical requirement that applies to strictly periodic, discrete sampling of all continuous signals (periodic, transient, or random). It involves the misidentification in spectrum analysis of undersampled high frequencies beyond Nyquist as lower frequencies in the baseband. That should not be confused with any sampling “error” in the customary statistical sense.

Geoff,

You asked, “Is the temperature data in this cyclicity category?”

The utility of the Fourier Transform is that ANY varying signal can be decomposed into a series of sinusoids of differing phases and amplitudes. The more rapidly a signal changes (steep slope) the more the signal contains high frequency components. This is where the Nyquist limit comes in. To properly capture the transient (high frequency) components, a high sampling rate is necessary. With a low sampling rate, a vertical shoulder on a pulse might instead be rendered as a 45 degree slope, or missed entirely. So, the answer to your question is, “Yes, temperature data for Earth is represented by a time-series that is composed of a wide range of ‘cyclicity.'”

https://en.wikipedia.org/wiki/Fourier_transform
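The point about steep slopes carrying high-frequency content can be checked with a small Python sketch. It uses a plain discrete Fourier transform on one synthetic "day" of 288 samples and compares a smooth sinusoid with a square wave. Both waveforms are made up for illustration and the helper names are hypothetical.

```python
import cmath
import math

N = 288  # samples in one synthetic day (5-minute spacing)


def dft_magnitudes(samples):
    """One-sided DFT magnitudes, bin k = k cycles per record length."""
    n = len(samples)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(samples))
        mags.append(abs(acc) / n)
    return mags


def fraction_above_fundamental(samples):
    """Share of the non-DC power that sits above 1 cycle per record."""
    power = [m * m for m in dft_magnitudes(samples)[1:]]  # drop the DC bin
    return sum(power[1:]) / sum(power)


sine_day = [math.sin(2 * math.pi * i / N) for i in range(N)]
square_day = [1.0 if i < N // 2 else -1.0 for i in range(N)]

if __name__ == "__main__":
    print("sine:  ", fraction_above_fundamental(sine_day))
    print("square:", fraction_above_fundamental(square_day))
```

The sinusoid has essentially all of its varying power at 1 cycle/day, while the square wave, with its vertical edges, puts close to a fifth of it into higher harmonics.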

A substance neutral but IMO important process comment about this guest post.

ctm earlier sent this to me and one other VERY well known sciency guest WUWT poster for posting advice. Sort of (not really) like peer review. He got back a split opinion, I said let her rip, he said nope based on some preliminary sciency reasons.

ctm chose to let her rip. IMO that is important, signalling a WUWT direction out of skeptical echo chambers and toward a newly improved utility. Following is a summary of my ctm recommendation thinking, for all to critique as AW evolves his justly famous blog, as he promised beginning of this year.

1. Interesting serious effort, with references and clear methods. A big PLUS (unlike Mann’s hockey stick), because enables ‘replication’ or ‘disproof’. Essence of science right there.

2. Whether right or wrong, the paper introduces a fresh perspective not previously encountered elsewhere (maybe because just silly wrong, maybe because ‘climate scientists’ are so generally unskilled…like Mann). The Nyquist Sampling theorem is a fundamental of information theory, governing (early) wireline telephone voice quality (Nyquist developed his information theory at Bell Labs), the later music CD digital spec (humans cannot hear much over 18000 Hz, although dogs can, hence literal ultrasonic dog whistles with pitch from ~24000 up to ~53000Hz, so the CD sampling rate spec was set at 44100, meaning (with error) about 22000Hz, also proving that all analog audiophiles DO NOT KNOW/believe the Nyquist sampling theorem), and now much more. So in the worst case WUWT readers might learn about basic information theory they may not have previously known.

3. The wide and deep WUWT readership will sort out this potential guest post’s validity in short order, no different than McIntyre eventually did to Mann’s hockey stick nonsense. Collective intelligence based on reality decides in the end, not peer reviewers, not AGW or skeptical consensus (granted, there is too little of the latter), and surely not ctm, me, or the other frequent WUWT poster. We all have to own up to too many basic goofs.

Just my WUWT perspective offered to ctm.

Thanks, Rud. I was the other person who reviewed it. I thought that the Nyquist argument was flawed for a couple of reasons that I listed above.

However, I thought his discussion of the errors in the max/min average versus the true average was very good and worth publishing.

I stand by that. As I said above, Nyquist does NOT mean that you have to sample at 2X the highest frequency in your data, just at 2X the highest frequency of interest. Which is important because climate contains signals at all frequencies. And since our interest is in days, and the only large cycles up there are 24, 12, and 8 hours in length, sampling hourly is well above the highest frequency (aka the shortest period) of interest.

Also, Nyquist only applies to regularly spaced periodic sampling … but min/max sampling happens at irregular times. Generally the day is warmest around 3 PM, but in fact maxes can occur at any time of day. This makes the sampling totally irregular. Here are the max and min sampling times for San Diego:

You can see how widely both the min and max temperature times can vary.

All of that is why I said, leave the Nyquist question alone, and talk about the very real errors. Here are the errors for San Diego for the period shown above. This is the difference on a daily basis of the true average and the max/min average …

Lots of one and two degree errors in there … the only good news is that the error distribution is normal Gaussian. But that will still affect the trends, because the presence of even symmetrical errors generally will mean that the measured trends will be too large.
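The kind of max/min-versus-true-mean error described here can be reproduced on a synthetic day. The Python sketch below is not the San Diego data; it builds a hypothetical diurnal cycle from a fundamental plus a phase-locked second harmonic (amplitudes chosen arbitrarily) and compares (Tmax+Tmin)/2 with the mean of all 288 samples.

```python
import math

SAMPLES_PER_DAY = 288  # 5-minute samples


def synthetic_day(mean_c=15.0, a1=5.0, a2=1.0, peak_hour=15.0):
    """Hypothetical day: fundamental plus phase-locked 2nd harmonic, in deg C."""
    day = []
    for i in range(SAMPLES_PER_DAY):
        hour = 24.0 * i / SAMPLES_PER_DAY
        theta = 2 * math.pi * (hour - peak_hour) / 24.0
        day.append(mean_c + a1 * math.cos(theta) + a2 * math.cos(2 * theta))
    return day


def midrange_error(day):
    """(Tmax + Tmin)/2 minus the mean of all samples."""
    true_mean = sum(day) / len(day)
    midrange = (max(day) + min(day)) / 2.0
    return midrange - true_mean


if __name__ == "__main__":
    print("symmetric day :", midrange_error(synthetic_day(a2=0.0)))
    print("asymmetric day:", midrange_error(synthetic_day(a2=1.0)))
```

With no second harmonic the midrange equals the mean. With the assumed 1 C second harmonic the midrange is off by that same amount, which is in the range of the one and two degree daily errors mentioned above.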

Best to all,

w.

Willis,

I have been at this for 8 hours tonight. Enjoying it. But I’m out of gas, have to get up early and a long day. I’ll try to come back to you tomorrow as you deserve it. But let me park this comment: You don’t know what you are talking about here.

Willis said: “As I said above, Nyquist does NOT mean that you have to sample at 2X the highest frequency in your data, just at 2X the highest frequency of interest. ”

Willis – this is fundamental signal analysis 101, first day of class mistake you are making here. I suggest you go read up on this before misinforming people. I know you have a strong voice here and are respected – as you should be and as I respect you for your many informative posts. For this reason I’m being very direct, and maybe not very diplomatic. I mean no rudeness.

Nyquist does not say what you say. I’ll quote myself, but please consult an engineering text book:

According to the Nyquist-Shannon Sampling Theorem, we must sample the signal at a rate that is at least 2 times the highest frequency component of the signal.

fs > 2B

Where fs is the sample rate or Nyquist frequency and B is the bandwidth or highest frequency component of the signal being sampled. If we sample at the Nyquist Rate, we have captured all of the information available in the signal and it can be fully reconstructed. If we sample below the Nyquist Rate, the consequence is that our samples will contain error. As our sample rate continues to decrease below the Nyquist Rate, the error in our measurement increases.

If you have “interest” in a subset of the available frequencies in the signal then you MUST FIRST filter out frequencies above that. It’s called an anti-aliasing filter. Then you can sample at a rate that corresponds to the bandwidth of your filtered signal.
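A small Python sketch can show why the filtering has to come first. It assumes a synthetic day with a slow 1-cycle/day component plus a fast 24-cycle/day ripple (both invented for illustration), then reduces 288 samples/day to 24 samples/day two ways: by simply keeping every 12th sample, and by block-averaging each hour first. A block average is only a crude low-pass filter, used here as a stand-in for a real anti-aliasing filter.

```python
import math

SAMPLES_PER_DAY = 288  # 5-minute samples


def synthetic_day(fast_cycles_per_day=24, fast_amplitude=0.5):
    """Hypothetical signal: 1 cycle/day sine plus a fast cosine ripple."""
    day = []
    for i in range(SAMPLES_PER_DAY):
        t = i / SAMPLES_PER_DAY  # time in days
        slow = math.sin(2 * math.pi * t)
        fast = fast_amplitude * math.cos(2 * math.pi * fast_cycles_per_day * t)
        day.append(slow + fast)
    return day


def decimate(samples, step):
    """Keep every step-th sample with no filtering."""
    return samples[::step]


def block_average(samples, step):
    """Average each block of `step` samples, then keep one value per block."""
    return [sum(samples[i:i + step]) / step
            for i in range(0, len(samples), step)]


def mean(values):
    return sum(values) / len(values)


if __name__ == "__main__":
    day = synthetic_day()
    print("mean of all 288 samples :", mean(day))
    print("mean, decimated only    :", mean(decimate(day, 12)))
    print("mean, averaged first    :", mean(block_average(day, 12)))
```

Without filtering, the 24-cycle/day ripple lands exactly on the new sample rate and shows up as a constant offset in the hourly data. Averaging first removes it before the rate is reduced.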

Did Charles send you my reply to your comments last night? Your analysis is way off the mark. I’m sure you are busy so I’m not surprised if you just gave a cursory read of the WUWT short paper. If you read the full paper you will be better off. I didn’t do a deep dive into clock jitter but mention it and it explains what happens when we deal with the timing of daily Tmax and Tmin. I also briefly discuss how real world application of Nyquist addresses non-bandlimited signals – which is all real world signals! This is in my full paper – not the abbreviated one. My paper for WUWT states briefly what I cover in the first 12 pages in my full paper. Those 12 pages are but a snippet of what you get in 4 semesters of engineering signal analysis and 30 years of applying it to bring hundreds of millions of devices to market that are based upon Nyquist.

If you are interested I suggest you take a look at and challenge the particular examples I present or statements I make. I welcome the challenge, but I warn you not to mystify this. I’m presenting very basic signal analysis. It applies to every signal. What I am presenting here is not novel or revolutionary – nor should it be controversial. This paper has been reviewed by a few of my colleagues – experts in the industry in signal analysis and data acquisition. So bring your challenge but please digest the full paper and absorb it first. What is astonishing is that it is not known and standard fare for dealing with atmospheric temperature signals.

More tomorrow night – probably after 8 EST.

Thanks kindly for your answer, William.

To start with, as I said, in a chaotic system like the daily temperature record there are signals all the way down to seconds in length. The sun goes behind a cloud for a couple of seconds. Almost instantly the temperature drops and comes up again.

So is it your claim that Nyquist means we need to sample at milliseconds?

Next, suppose that our interest is in the yearly temperature signal. Are you saying that we need to take 288 samples per day to get an accurate number?

Next, the Nyquist limit only applies to band-limited signals which have no frequencies above a certain limit … is it your claim that temperature data fits this, and if so, what is the upper limit?

I ask in part because my periodogram shows that there is very little in the way of stable frequencies with periods shorter than eight hours … which would indicate that we could reconstruct the daily signal by sampling six times per day.

Next, the main problem with the max/min method has nothing to do with Nyquist—it is that we do not know the time of the max or the min. Knowing that would allow us to do a much better job reconstructing the daily signal … and again, that problem has nothing to do with Nyquist.

So let me ask a couple of questions

First, at what rate do we have to sample if our interest is the annual average temperature?

Second, you indicate that 288 samples per day satisfies the Nyquist limit … but I haven’t seen any mathematical discussion in your paper as to why you think this is the case. What am I missing here?

All the best, and thanks for an interesting discussion,

w.

Hi Willis,

I’m hitting these out of order… and I think it would be best to focus on the long post I just made to you where I focus on the basics that may be hanging us up. If we can dialog about that it would be really good because so much is based upon the fundamentals. But on this (your comment about chaotic system behavior) a few comments of reply.

First, I think there are those more informed than me who may correct me or add to this but the word “chaotic” has a mathematical definition and I’m not sure if we really mean that here or not… Can I treat that word to mean highly variable?

The “shuttering” effect of cloud cover is a good one. One I mention in my full paper briefly. These effects are not synchronized strictly to anything else. Their effects can last a few seconds to hours or days. But the shuttering effect of cloud cover is a signal and could be properly sampled and studied. Cloud cover with its variability and shuttering or modulation effect on the air temperature signal will move energy around in the frequency domain. Fast passing clouds with lots of thin spots or openings over the station should result in much higher frequency components. What is the frequency and amplitude exactly? We don’t know unless we study it. It is also possible that a heavy cloud cover moving slowly and lasting days can have content below 1-cycle/day. I think this is intuitive. Let me know if you disagree. But from a fundamental perspective, sampling faster is always better. I was tempted to write that in caps. There is never a downside, except that you waste memory, waste some space on a HDD and perhaps waste some processing power to handle the data. I say who cares about that. Remember, the fate of humanity depends upon understanding the climate so we can afford a bit of waste to get all of the data. Sampling faster gives us a better chance of getting all of the information in a Nyquist-compliant way. With all of the money flowing into climate science I’m sure we can hire a small army of people to study this and determine what the system really needs. I said on another post that we really need standardized systems. We need to specify the temp sensor sensitivity, linearity, drift, thermal mass, response time, mechanical enclosure, wiring material, electrical front-end with its associated input impedance, linearity, offset, drift, sample rate, converter specs, anti-aliasing filter design, power supply voltage, accuracy, ripple, etc., etc. USCRN seems to be really good. I just don’t know how much of that is specified and if it was specified was it based upon research or a guess.

After writing the above, and being a practical kind of guy, I realized that discussing theoretical limits might not be the best way to settle the question. So I looked again at the Chatham Wisconsin 5-minute temperature data. I calculated the change in error in the daily mean temperature at a wide range of sampling rates. The following figure shows those results.

If I understand you, you claim that sampling every hour violates the Nyquist limit. But there is no visible break in the data until we get up to about 8 hours.

More to the point, sampling once an hour only results in an error in the mean daily temperature of four-hundredths of a degree (0.04°C). And while that might make a difference in the lab, in climate science that error is meaningless.

So whether or not hourly samples violate the Nyquist limit is what I call “a difference that doesn’t make a difference” …
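The experiment described here, recomputing the daily mean at progressively slower sampling rates and comparing against the 288-sample mean, is easy to sketch in Python. The version below does not use the Chatham data; it assumes a hypothetical day built from only the 24-, 12- and 8-hour cycles, with arbitrary amplitudes and phases, so the numbers it prints say nothing about a real station.

```python
import math


def synthetic_temperature(hour):
    """Hypothetical diurnal cycle in deg C: harmonics 1, 2 and 3 of a day."""
    theta = 2 * math.pi * hour / 24.0
    return (15.0
            + 5.0 * math.cos(theta - 3.9)
            + 1.5 * math.cos(2 * theta - 1.0)
            + 0.5 * math.cos(3 * theta - 0.3))


def daily_mean(samples_per_day):
    """Mean of evenly spaced samples starting at midnight."""
    step_h = 24.0 / samples_per_day
    values = [synthetic_temperature(k * step_h) for k in range(samples_per_day)]
    return sum(values) / len(values)


def mean_error_by_rate(rates=(288, 24, 12, 6, 4, 3, 2)):
    """Error in the daily mean relative to the 288-sample reference."""
    reference = daily_mean(288)
    return {n: daily_mean(n) - reference for n in rates}


if __name__ == "__main__":
    for n, err in mean_error_by_rate().items():
        print(f"{n:3d} samples/day ({24.0 / n:5.2f} h apart): error {err:+.4f} C")
```

For this idealized signal the mean stays essentially exact until the sample spacing reaches 8 hours, at which point the 8-hour cycle aliases onto the mean. A real record also contains broadband, non-harmonic content, so its errors at faster rates would be small but not zero.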

Again, thanks for a most thought-provoking discussion,

w.

Good Evening Willis!

This is just a quick ping back to you at 9:15 PM EST on 1/15. I’m just catching up with all of the great posts from the day and now I will come back to you to engage on the points you brought up. I have spent the day pretending to be half my age, installing a retaining wall in Georgia red clay. It is amazing how many joules of energy 1 cubic yard of clay can consume! Give me a few minutes (not sure how long…) and I’ll provide some thoughtful things so we can go back and forth to see if we can agree. There are so many good comments and questions from many people, but I fear I cannot keep up with them all… So, I’ll focus on your comments first as I think your comments capture much of what others said – and many people will want to hear your thoughts I’m sure.

I re-read my post to you from late last night and I’m glad that it doesn’t sound as grumpy as I feared it might when I thought about it this morning while swinging the mattock.

Back in a bit with more…

WW

Willis,

I propose we cover some basics to see where we deviate. Hope this is ok with you. I think/hope good for others reading as well. I need to say that those who are good at using statistical analysis will find themselves frustrated and out of their element. There is absolutely no need for statistical analysis before, during or after proper sampling. However, once a signal is properly sampled you can do anything you want with it mathematically – including statistical analysis.

Signals: After looking at and working with signals for tens of thousands of hours over 35 years, I assume it is completely intuitive to everyone – but this is a very, very bad assumption I see! A signal is (simply put) any parameter that you can measure that varies with time. Anything you can see on the screen of an oscilloscope is a signal. Anything that you can convert to an electrical signal through a transducer is a signal. Example: Take a microphone and connect its output to an amplifier input and then the amplifier output to an oscilloscope. The microphone will convert the air pressure (sound) it experiences at its capsule to an electrical voltage. You see what that voltage looks like on the screen of the scope. You could take a thermistor and with an electronic circuit convert the temperature to a voltage. That voltage can be viewed on the oscilloscope screen. So, there should be no question in anyone’s mind. Atmospheric air temperature is a signal.

Sampling: Sampling is measuring over and over at a periodic rate. Signals must be sampled because by definition signals are always changing. So how often must we sample? Harry Nyquist answers this: At a rate that is > 2x the highest frequency component of the signal being sampled. The frequency content of a signal can be measured with a spectrum analyzer.

Why Sample: Analog signals can be processed mathematically while in the analog domain, via Analog Signal Processing (ASP). We can add, subtract, divide, multiply, integrate and differentiate analog signals. This used to be the way control systems worked. (Some still do…) But when microprocessors and memory chips came along there was an opportunity to do things digitally: to store signals and manipulate them mathematically all you want, and easily. Analog signals can be stored on magnetic tape but getting to the information you want has to be done by going through the tape to get to the point needed. It was not “random access”. To get analog signals to the digital domain we need to sample.

Why sample according to Nyquist? If we sample according to Nyquist, then we have captured *all* of the information available in the signal. Even if every peak of the analog signal is not visible in the samples it is there and can be extracted digitally. No need to sample faster than Nyquist to capture the absolute peaks (some wrote to claim this was needed – it’s not). This is part of the “magic” of sampling according to Nyquist. If we sample even much faster, it does not (repeat does not) get us any additional information. There *are* other reasons to sample much faster but I won’t digress into that here. It has to do with relaxing the requirements of the anti-aliasing filter that is used in front of all real world Analog-to-Digital Converters (ADCs). If we sample according to Nyquist, then we can convert from digital back to analog and have the exact same signal back again. With high quality ADCs and Digital-to-Analog Converters (DACs) we can go back and forth with multiple generations of conversion before we start to lose information because of converter introduced error. Not that going back and forth is necessarily the goal. The main point here is that with proper sampling you have captured what was going on in the analog domain and now your Digital Signal Processing (DSP) algorithms can be run on the data and you know it is as good as processing the analog signal itself.

How sampling works: When we sample, we create spectral images of our signal and these images are located at the sample rate in the frequency domain. The goal is to sample such that the image does not overlap the signal content. Overlap is aliasing. It is the addition of energy to our samples that doesn’t belong there.

Properly sampled: https://imgur.com/L7Wc393

Violating Nyquist: https://imgur.com/hPgub33

With an aliased sample you are working with data that does not represent the signal you sampled – at least to the extent that it is aliased.
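As a small illustration of the point above, here is a sketch in which two different tones produce exactly the same samples. The 4-samples/day rate and the 1 and 3-cycle/day tones are assumed values picked so the overlap is easy to see.

```python
import numpy as np

fs = 4.0                 # samples/day (assumed for illustration)
n = np.arange(40)        # 10 days of samples
t = n / fs               # sample times in days

slow = np.cos(2 * np.pi * 1.0 * t)   # 1 cycle/day, below fs/2
fast = np.cos(2 * np.pi * 3.0 * t)   # 3 cycles/day, above fs/2

# The two tones cannot be told apart from their samples alone
identical = bool(np.allclose(slow, fast))
print("samples identical:", identical)
```

Once the samples are taken, energy from the 3-cycle/day tone is indistinguishable from energy at 1-cycle/day, which is the sense in which it "doesn't belong there".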

Air temperature signals: Engineers usually work in units of Hz (Hertz), but it is more intuitive to use cycles/day here. Are air temperature signals 1-cycle/day sinusoids shifted up with “DC” content from lower frequency portions of the signal (annual, multi-annual components)? No, they are not sinusoids. Some days look like sinusoids, but distorted sinusoids. This “distortion” is higher frequency components. Some days look almost like square waves and square waves have much higher frequency components. Some days have strange “messy” patterns. These come from higher frequency components. The sun rises and sets each day, and this is 1-cycle/day, but depending upon your latitude and time of year you have a different duty cycle between night and day – this adds higher frequency components. Clouds passing overhead act as shutters, modulating the daily signal. This adds higher frequency components. Nyquist requires the sample rate to be greater than twice the highest frequency component of the signal. If we had a temp signal of 1-cycle/day we would still need to sample at more than 2x/day. [I’m ignoring the case where the sampling frequency can *equal* 2x the highest frequency component for a reason…]. So, can we agree that at 2-samples/day, done periodically, Nyquist is violated?
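A rough sketch of the duty-cycle remark above, using an idealized on/off (rectangular) daily wave. Real temperature days are not rectangular; the wave shape, the 288-samples/day grid and the helper name `harmonic_magnitudes` are assumptions for illustration only.

```python
import numpy as np

def harmonic_magnitudes(duty, n_harmonics=5, samples_per_day=288):
    """Magnitudes of harmonics 1..n_harmonics (in cycles/day) of a
    rectangular wave that is 'on' for the fraction `duty` of each day."""
    t = np.arange(samples_per_day) / samples_per_day
    wave = np.where(t < duty, 1.0, 0.0)
    spectrum = np.abs(np.fft.rfft(wave)) / samples_per_day
    return spectrum[1:n_harmonics + 1]

equal_day_night = harmonic_magnitudes(0.5)   # 12 h on, 12 h off
short_day = harmonic_magnitudes(0.3)         # on for 30% of the day

for k in range(5):
    print(f"{k + 1} cycles/day: 50% duty {equal_day_night[k]:.4f}, "
          f"30% duty {short_day[k]:.4f}")
```

At a 50% duty cycle the even harmonics (2, 4 cycles/day) vanish; moving away from 50% brings them in, so a change in day length alone changes the content above 1-cycle/day.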

What about this “periodic” requirement? To my frustration, many here have “gone off” with an amazing amount of conviction about this and obviously need to be educated. I’d rather light a candle than curse their darkness! Nyquist requires the sampling to be periodic, but what does that mean and imply? Does it imply perfection of the clock? No, it does not!!!! No real world ADC clock is perfect!!!!! (sorry, I don’t mean to be rude – but I want people to hear this.) Every real world ADC clock has what is called jitter. Jitter is variation in the length of each period of the clock. In modern electronics the jitter is usually quite low, but it is there. Jitter adds error – a different kind of error. Mercifully, I won’t do a dissertation on it here. So, does Nyquist limit just how much jitter can be on the clock? No! You just have to account for the jitter in your design and calculate the potential error if need be. Does jitter invalidate Nyquist? No! So 2 measurements of temperature that correspond to max and min ARE PERIODIC! They happen 2-times/day. This is the period. There is just a lot of jitter on the clock. So max and min are 2 periodic samples and the sample rate is 2-samples/day. Furthermore, if we look at the 288-samples taken each day in USCRN, we see that 2 of the 288 become our max and min. Are the 288 samples actually samples? Yes. Are the 2 that become our max and min samples? Yes. If we throw away all of the samples but the max and min are they still 2 samples? Yes! Throwing them away is no different than if the clock just happened to only fire the ADC at the times the max and min happened. So, let’s put this to rest please! Max and min are samples and they are periodic, and they fit into Nyquist.

Can we just sample at the frequency of interest? No! Any content above half of our sample rate will alias. See the figures showing overlap. By definition this is not legal per the theorem. Does Nick Stokes’ magic monthly averaging diurnal blah blah blah allow you to recover the correct monthly average? NO!!! Once you alias you can’t get it out! Go look at USCRN Monthly data for any station, any month. I can get a file to someone to load for all to share if need be. We don’t have to do any more than look at what NOAA provides us. They give us the monthly “averages”, “means” or whatever the hell we are supposed to call them, for both methods every month! Just let your eyes do the walking. Fallbrook CA is one of my favorites because each and every month is off by 1.0-1.5C. But the trends for this station do well. Other stations don’t have quite as large a difference monthly, but the trends do worse. In real world applications of Nyquist there is always an anti-alias filter in front of the ADC and the sample rate is set much higher than needed to make sure the spectral images are spaced far apart. This ensures much less aliasing and it relaxes the requirements on the filter. I don’t know if USCRN employs a filter. I hope so.

CRITICAL POINT ALERT: More on Aliasing of daily and trend signals: You need to consult my Full paper on this for more info. Any content at 1 and 3-cycles/day will come down and alias the daily signal. Any content at/near 2-cycles/day will alias the long term trends.
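The folding described above can be sketched with the standard alias-frequency formula. This assumes perfectly uniform sampling at 2-samples/day, which max/min readings only approximate; the helper name `aliased_frequency` is hypothetical.

```python
def aliased_frequency(f, fs):
    """Apparent frequency of a tone at f after uniform sampling at fs.

    f and fs must share units (cycles/day here). The result always
    lies between 0 and fs / 2.
    """
    f_mod = f % fs
    return min(f_mod, fs - f_mod)

fs = 2.0  # samples/day
for f in (1.0, 1.9, 2.0, 2.1, 3.0):
    print(f"{f} cycles/day appears at {aliased_frequency(f, fs):.2f} cycles/day")
```

Under this assumption, 3-cycles/day lands on 1-cycle/day (the daily signal), and content at or near 2-cycles/day lands at or near 0 cycles/day, where it is mixed in with the long-term trend.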

Can we just look at the spectrum of the signal and determine “by eye” what the aliasing impact will be? I don’t think so. We need to know the phase relationship between the signal and aliasing components. It is best to see how the effect manifests in the time domain by looking at mean variation over time and trends. There is no other explanation for the mean and trend errors except for Nyquist violation. Give me your science if you want to disagree. Note: I’m not factoring in my list of the other 11 problems with the record (calibration, siting, etc.). Anecdote from mixing audio signals and setting levels: You can have levels set properly to not clip the converters and then if you do an EQ change to REDUCE a bass signal you can get clipping! How can a signal level reduction give you clipping??? Well, if you have a large higher frequency transient (like a cymbal crash) that happens at the trough of a low bass note and then you reduce the bass note, then the positive going high frequency transient will be higher and clip! We should resolve the impact of aliasing in the time domain.
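The audio anecdote above can be checked with a toy mix. All amplitudes, the pulse shape and the clip level below are made-up values for illustration, and `peak_level` is a hypothetical helper.

```python
import numpy as np

CLIP_LEVEL = 1.0                                   # assumed converter full scale
t = np.linspace(0.0, 1.0, 1000, endpoint=False)    # one bass cycle

def peak_level(bass_amplitude):
    """Peak absolute level of a bass tone plus a short positive
    transient placed at the trough of the bass cycle."""
    bass = bass_amplitude * np.sin(2 * np.pi * t)            # trough at t = 0.75
    transient = 1.6 * np.exp(-((t - 0.75) / 0.005) ** 2)     # narrow positive pulse
    return float(np.max(np.abs(bass + transient)))

peak_before = peak_level(0.8)   # original bass level
peak_after = peak_level(0.4)    # bass REDUCED by half

print("peak before EQ cut:", peak_before)
print("peak after EQ cut: ", peak_after)
print("clips after cut:   ", peak_after > CLIP_LEVEL)
```

The magnitude spectrum of each component says nothing about where the transient falls relative to the bass trough; the clipping only shows up once the phase relationship is taken into account in the time domain.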

What is “THE” Nyquist rate of an atmospheric air temperature signal? Answer: I don’t have that exact answer, but going from what NOAA does, 288-samples/day appears to be close. Why they do the average of 20-sec samples I don’t know. Would 20-sec samples be better than averaging to 5-min? I don’t know. The calculation of mean seems to converge above 72-samples/day. Is that because they implement an anti-aliasing filter in agreement with 20-sec samples? I don’t know. Do we seem to get much better results from 288-samples than 2-samples? Hell yes. Who cares about a few hundredths or tenths of a degree C trend over a decade? Apparently climate scientists do, hence my use of Nyquist to call them on the carpet.

Darn Willis – I went and wrote a book. Jeez I don’t know how to do this with any more brevity. Many are so confused on this I hope this brings people along or at least helps to frame the debate better. I hate to “credential drop” because I know it is bad form, but I didn’t just read a wiki article and decide to write this essay. I have 35 years of practical application in the real world with real signals, working for the premier converter companies making converter solutions for the premier consumer electronics companies worldwide. Hundreds of millions of boxes and billions of channels of conversion. You most likely have some of them in your car or home. Feynman says science is the belief in the ignorance of the experts. Yes – I think we all subscribe to this. But how do I ask people to take a step back and for a moment think about what they can learn vs reaffirming what they already know? Nyquist compliant sampling is boring, daily everyday stuff for all of the technology world except climate science. The only novel thing here is the application to climate.

Hey Willis,

I just sent a long reply with the hope of seeing if we can align on some basics. But to comment here briefly on your comments about the Chatham WI USCRN data: The important thing to know is that there is a broad range in spectral patterns from station to station. I think the system needs to be designed for the full range of what we see in the world. I can find stations that behave very badly when aliased and other stations that seem to behave well despite aliasing. To be more clear, they seem to behave well for trends over 10 years. All stations I have studied seem to have significant error in means and absolute starting and ending points. The wide variation of impact comes from the wide variation of spectral content at a location – this can be seen visually by looking at a plot of the time domain signal for different days at different stations. Of course also over multiple days, months etc. It just makes itself more apparent over 1 day. Also, frustrating but true, what we see doesn’t always translate to the means and trends. The phase relationship of the aliased components plays a big role as well as the magnitude.

An example to make the point. Most audio amplifiers can sound different to trained ears if reference recordings are used and played back on mastering grade systems in an acoustically treated mastering studio. I mean sound REALLY different. I have taken outputs from various amplifiers and sampled them and studied them in the time domain and frequency domain. Sometimes I see slight differences – sometimes not. But I hear them, and this is done in a blind study setting and is repeatable. The point here is that resolving the complete impact of some effect might take multiple ways of looking at it. Yes, on some spectral plots you might not think there is significant energy at some frequency, but its magnitude combined with its interaction with other components can yield an impact that is larger than expected or obvious.

It would be something to consider studying for anyone interested. Examine data from various USCRN stations, compare visually what we see daily, compare what spectrum exists, compare the impact to mean and trend and then try to connect it to latitude, season, cloud cover, precipitation, etc. I already have some ideas from what I have examined but it is not thorough enough to disseminate.

Sampling at a higher rate, like USCRN 288-samples/day seems to allow us to take a major step forward. With proper sampling then we can hopefully, someday, get to the point where we feed the digital signals (samples) into characteristic equations that explain weather and climate. I’m amazed that all this effort takes place to distill the work down to a number. Utilizing full properly sampled signals and running them into mathematical functions to yield output signals that mean something – now we are starting to look like science in the 21st century.

Ps – I’m not hung up on 288-samples/day. Just utilizing what USCRN offers and it appears that we get good results from a variety of stations. If the number turns out to be 72 then fine – but my overall point is not changed – just the number.

What do you think?

William, you did “write a book” … but nowhere in it did you answer my questions. So I’ll ask them again:

I also posted an analysis showing that hourly sampling only has an RMS error of 0.04°C with respect to a 5-minute sampling … and it was my understanding that you think hourly sampling violates Nyquist.

Next, I pointed out that a chaotic analog signal like temperature has frequencies with periods from seconds to millennia … since your claim is that we have to sample at twice the “highest frequency” to avoid aliasing, what are you claiming that “highest frequency” is, and how have you determined it?

Next, you seem to be overlooking the fact that Nyquist gives the limit for COMPLETELY RECONSTRUCTING a signal … but that’s not what we’re doing. We are just trying to determine a reasonably precise average. It doesn’t even have to be all that accurate, because we’re interested in trends more than the exact value of the average.

Finally, you still haven’t grasped the nettle—the problem with (max+min)/2 has NOTHING to do with Nyquist. Pick any chaotic signal. If you want to, filter out the high frequencies as you’d do for a true analysis. Sample it once every millisecond. Then pick any interval, take the highest and lowest values of the interval, and average them.

Will you get the mean of the interval? NO, and I don’t care if you sample it every nanosecond. The problem is NOT with the sampling rate. It’s with the procedure—(max+min)/2 is a lousy and biased estimator of the true mean, REGARDLESS of the sampling rate, above Nyquist or not.

Best regards,

w.
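Willis’s (max+min)/2 point above can be illustrated with a toy signal. The two-harmonic daily shape below is synthetic and chosen only because it is asymmetric; because its highest component is 2-cycles/day, both sample rates tried here are well above Nyquist for it. The helper name `midrange_bias` is hypothetical.

```python
import numpy as np

def midrange_bias(samples_per_day):
    """(max + min) / 2 minus the true mean for one day of an asymmetric,
    band-limited synthetic signal sampled uniformly."""
    t = np.arange(samples_per_day) / samples_per_day
    temp = 10.0 + 5.0 * np.cos(2 * np.pi * t) + 2.0 * np.cos(4 * np.pi * t)
    midrange = (temp.max() + temp.min()) / 2.0
    return float(midrange - temp.mean())

bias_288 = midrange_bias(288)        # 5-minute sampling
bias_28800 = midrange_bias(28800)    # 3-second sampling

print("bias at 288 samples/day:  ", bias_288)
print("bias at 28800 samples/day:", bias_28800)
```

For this particular shape the midrange sits well above the mean, and sampling 100 times faster does not move it. Whether that offset should be described as aliasing or as estimator bias is exactly the disagreement in this thread; the sketch only shows that the offset itself does not shrink with sample rate.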

Willis,

At some point posts no longer offer the option to reply directly below, so I’m not sure where this is going to land in-line. This is in reply to your post where you say

“William, you did “write a book” … but nowhere in it did you answer my questions. So I’ll ask them again:”

I sent you a few more posts that do address your questions. I’ll give you time to read those and respond. Did you read my “book”? Sorry, it seems like we need to find out where we disconnect on the fundamentals. Some of your questions are answered in those posts. For your new questions…