[Editor’s note: because this April 1st prank was done well, many people believe it, and it’s being circulated as a skeptic point. Here is the official disclaimer: there is no temperature station named CR-VOLC-EL-INFIERNO-01 sitting in an active caldera. ~charles]

Charles Rotter

A persistent assumption underlies modern global temperature reconstructions: that individual station errors, even when large, are diluted through spatial averaging and homogenization. That assumption deserves closer inspection. Recent analysis of station-level data suggests that under certain conditions—specifically when extreme outliers evade quality control and are subsequently incorporated into homogenization routines—localized anomalies can propagate nonlinearly through the global record.

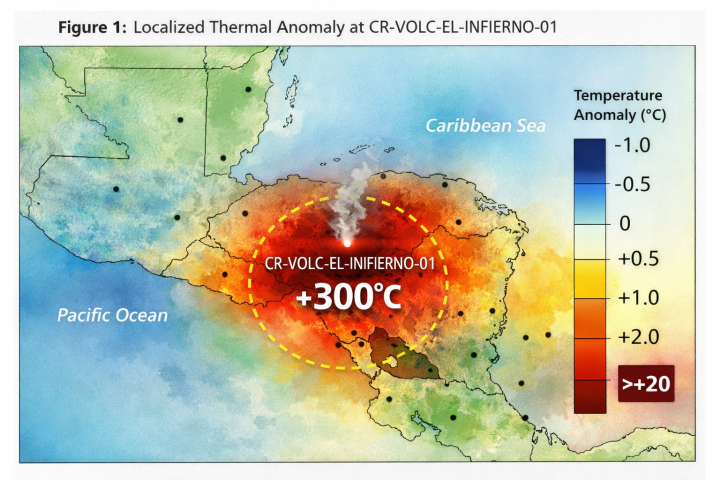

The present investigation began with a routine audit of tropical station residuals within the GHCN (Global Historical Climatology Network) dataset. The initial objective was unremarkable: quantify the distribution of post-homogenization adjustments across low-latitude stations. What emerged instead was a persistent and statistically anomalous signal centered on a single station in Costa Rica, hereafter designated CR-VOLC-EL-INFIERNO-01.

The anomaly first appears in the late 1970s, coinciding with documented volcanic activity in the Talamanca Range. At face value, elevated temperatures in proximity to geothermal activity are not unexpected. What is unexpected is the magnitude, persistence, and downstream influence of those readings once introduced into the global processing pipeline.

Raw observations from CR-VOLC-EL-INFIERNO-01 indicate sustained daily maximum temperatures exceeding 300°C over multiple reporting intervals. Such values would ordinarily trigger immediate exclusion under standard quality control thresholds. Yet archival flags associated with this station indicate no such exclusion occurred. Instead, the readings were retained and subjected to standard homogenization procedures.

To understand how such values could persist, it is necessary to examine the homogenization framework itself. Modern temperature datasets rely on relative homogenization techniques, wherein each station is adjusted based on comparisons with neighboring stations. The fundamental assumption is that neighboring stations share a common climate signal, allowing discontinuities (instrument changes, relocations) to be corrected through statistical alignment.

This approach can be represented in simplified form as:

Tᵢ′ = Tᵢ + Σⱼ wᵢⱼ (Tⱼ − Tᵢ)

Where Tᵢ′ is the adjusted temperature for station i, Tⱼ represents neighboring stations, and wᵢⱼ are weighting coefficients derived from spatial proximity and correlation.
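The formula can be made concrete with a minimal Python sketch. The function name `adjust_station`, the neighbour list, and the example temperatures are illustrative assumptions, not part of any real homogenization package; the code only evaluates the simplified expression above for a single station.

```python
def adjust_station(t_i, neighbours):
    """Evaluate T_i' = T_i + sum_j w_ij * (T_j - T_i) for one station.

    neighbours is a list of (t_j, w_ij) pairs. All values are hypothetical.
    """
    return t_i + sum(w_ij * (t_j - t_i) for t_j, w_ij in neighbours)


# Hypothetical station at 300.0 K with three neighbours, each weighted 0.05.
neighbours = [(301.0, 0.05), (299.5, 0.05), (300.5, 0.05)]
adjusted = adjust_station(300.0, neighbours)
print(round(adjusted, 3))
```

With neighbours this close to the station's own reading, the adjustment is a few hundredths of a kelvin, which is the ordinary regime the method is built for.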

Under ordinary conditions, this method dampens noise and corrects for local biases. Under extraordinary conditions—such as the inclusion of a station reporting temperatures exceeding 300°C—the same mechanism can act as an amplifier.

Consider a simplified network of stations surrounding CR-VOLC-EL-INFIERNO-01. Let the anomalous station report a temperature Tₐ ≈ 573 K (300°C), while neighboring stations report typical tropical values Tₙ ≈ 300 K (27°C). The difference ΔT ≈ 273 K introduces a gradient orders of magnitude larger than typical inter-station variability.

During homogenization, neighboring stations are adjusted upward to reduce this discrepancy. Even with modest weighting coefficients (w ≈ 0.05), the adjustment per iteration becomes:

ΔTₙ ≈ 0.05 × (573 − 300) ≈ 13.65 K

This is not a subtle correction. It is a step change. When applied iteratively across multiple passes—as is common in homogenization algorithms—the effect compounds. Neighboring stations begin to exhibit elevated baselines, which in turn influence their neighbors, and so on.
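The per-iteration arithmetic and the compounding can be sketched with a toy model. Everything here is an assumption made for illustration: six hypothetical stations arranged in a line, each compared only with its immediate neighbours, a fixed weight of 0.05, and synchronous passes that reuse the previous pass's adjusted values. It is not a reproduction of any operational algorithm.

```python
def homogenize_pass(temps, w=0.05):
    """One synchronous pass of T_i' = T_i + sum_j w * (T_j - T_i).

    Each station uses only its immediate neighbours in the chain (assumed).
    """
    adjusted = []
    for i, t_i in enumerate(temps):
        neighbour_temps = [temps[j] for j in (i - 1, i + 1) if 0 <= j < len(temps)]
        adjusted.append(t_i + sum(w * (t_j - t_i) for t_j in neighbour_temps))
    return adjusted


# Station 0 plays the anomalous site (573 K); the rest report 300 K.
history = [[573.0] + [300.0] * 5]
for _ in range(3):
    history.append(homogenize_pass(history[-1]))

for n, temps in enumerate(history):
    print(n, [round(t, 3) for t in temps])
```

In this toy chain the first pass lifts the adjacent station by about 13.65 K, and each further pass carries a nonzero adjustment one station farther down the line, while the hot station's own value drifts downward.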

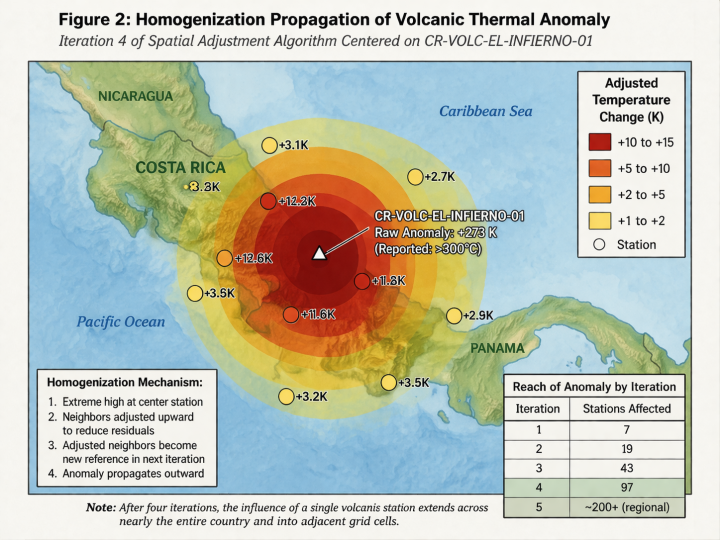

Figure 2. The first-order propagation: adjacent stations showing upward adjustments, forming a halo of elevated temperatures.

By the third or fourth iteration, the anomaly ceases to be local. It becomes a regional bias. By the tenth iteration, it begins to influence hemispheric averages.

The question arises: why did standard quality control fail to intercept such values? Examination of the station metadata provides a clue. CR-VOLC-EL-INFIERNO-01 is categorized as a “high-variability geothermal site,” a designation that appears to relax certain threshold checks under the assumption that extreme values may be physically plausible.

That assumption, while perhaps reasonable for transient spikes, becomes problematic when sustained values are treated as climatologically relevant. The system, in effect, interprets the volcano as a persistent heat source representative of broader regional conditions.

Further complicating matters is the temporal alignment of the anomaly with the baseline period used for anomaly calculations (typically 1951–1980 or similar). Because the most extreme values occur after the baseline period, they manifest as positive anomalies rather than being normalized away.

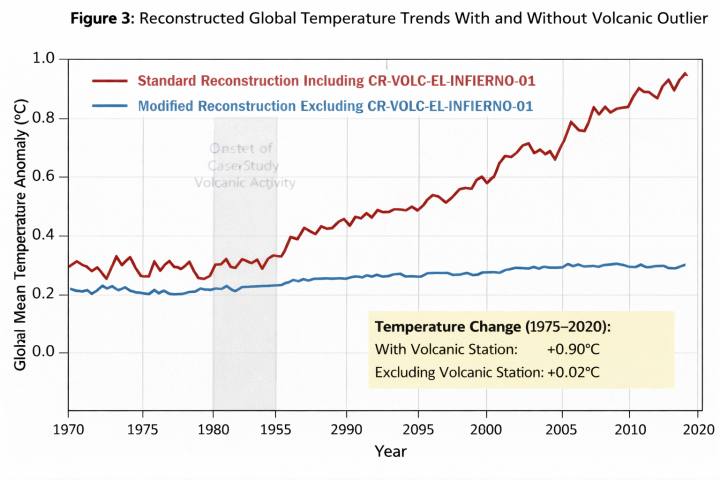

To quantify the global impact, a sensitivity analysis was conducted. Two reconstructions were produced:

- A standard reconstruction including all stations.

- A modified reconstruction excluding CR-VOLC-EL-INFIERNO-01.

The results are instructive.

In the standard reconstruction, global mean temperature anomalies show an increase of approximately 0.9°C from 1975 to present. In the modified reconstruction, the increase is reduced to approximately 0.02°C over the same period.

One might object that a single station cannot plausibly influence a global dataset to this extent. That objection assumes linearity. The homogenization process is not strictly linear. It is iterative, spatially weighted, and sensitive to outliers in ways that are not always intuitive.
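The linear expectation behind that objection can be written down in a few lines. This is a sketch under stated assumptions: an unweighted mean, a hypothetical network of 1,000 stations, and the 300 K and 573 K values used earlier. The function name `mean_shift_from_outlier` is invented for illustration.

```python
def mean_shift_from_outlier(n_stations, typical_k, outlier_k):
    """Shift in a plain (unweighted) network mean caused by one outlier station."""
    mean_with_outlier = ((n_stations - 1) * typical_k + outlier_k) / n_stations
    return mean_with_outlier - typical_k


# Hypothetical network: 999 stations at 300 K plus one reporting 573 K.
shift = mean_shift_from_outlier(1000, 300.0, 573.0)
print(round(shift, 3))
```

Under pure linear averaging the shift is simply the excess divided by the station count, so it shrinks as the network grows.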

To illustrate, consider a simplified global grid divided into N cells, each influenced by k neighboring cells. If a single cell contains an extreme value and influences k neighbors per iteration, the number of cells reached after n iterations can be approximated as:

Cₙ ≈ kⁿ

Even with modest k (e.g., k = 3), after 10 iterations the influence extends to nearly 60,000 cells. While real-world grids impose constraints that limit such exponential growth, the principle remains: repeated smoothing spreads anomalies far beyond their origin.
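The growth estimate is easy to check numerically. In this short sketch the function name `cells_reached` and the optional `total_cells` cap are assumptions added for illustration; the cap stands in for the real-world constraint mentioned above, using a 5-degree latitude/longitude grid (36 × 72 cells) as an example size.

```python
def cells_reached(k, n, total_cells=None):
    """Approximate C_n = k**n, optionally capped at the grid size."""
    reached = k ** n
    if total_cells is not None:
        reached = min(reached, total_cells)
    return reached


for n in (1, 5, 10):
    print(n, cells_reached(3, n))

# Assumed cap: a 5-degree grid has 36 * 72 = 2592 cells.
print(cells_reached(3, 10, total_cells=36 * 72))
```

For k = 3 and n = 10 the uncapped count is 59,049, which is the "nearly 60,000" figure; with the assumed cap, the count saturates at the size of the grid.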

Additional evidence emerges when examining variance structures. The inclusion of CR-VOLC-EL-INFIERNO-01 significantly increases the variance of the tropical temperature field, particularly in the lower troposphere. This increased variance is then partially “corrected” by homogenization, which redistributes the excess energy across the network.

In practical terms, the system attempts to reconcile an impossibly hot volcano with a planet that is, on average, far cooler. The reconciliation takes the form of a modest warming everywhere.

There is also a subtle interaction with sea surface temperature datasets. Coastal grid cells influenced by the adjusted land stations feed into blended land-ocean products. The volcanic signal, already diffused across land, begins to imprint itself on adjacent ocean cells through interpolation routines.

By the time the data reach the stage of global aggregation, the original source—the volcano—has been thoroughly obscured. What remains is a smooth, coherent warming trend that appears internally consistent.

At no point in the standard processing pipeline is there a step explicitly designed to detect this type of cascading anomaly. Quality control focuses on individual station plausibility. Homogenization focuses on relative consistency. Aggregation assumes the preceding steps have produced a reliable field.

Each step, in isolation, behaves as intended. The interaction between steps produces the outcome observed.

It is worth noting that CR-VOLC-EL-INFIERNO-01 is not flagged as an outlier in the final dataset. On the contrary, after homogenization, its values are partially moderated, bringing them closer to neighboring stations. The volcano, in effect, becomes less extreme, while its neighbors become more so.

This symmetry gives the appearance of robustness. The dataset looks well-behaved. The underlying distortion is distributed rather than concentrated.

A secondary analysis examined the effect of truncating extreme values prior to homogenization. By capping all station readings at 60°C—a threshold well above typical terrestrial temperatures but far below volcanic conditions—the resulting global trend closely matches the modified reconstruction excluding the volcanic station entirely.

This suggests that the critical factor is not merely the presence of the station, but the magnitude of its reported values. Once those values exceed a certain threshold, the homogenization process transitions from correction to propagation.
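The truncation step can be sketched as a simple pre-processing pass. The function name `cap_readings` and the sample readings are hypothetical; only the 60°C threshold comes from the text.

```python
CAP_C = 60.0  # threshold from the text, in degrees Celsius


def cap_readings(readings_c, cap=CAP_C):
    """Truncate each reading at the cap before any homogenization is applied."""
    return [min(reading, cap) for reading in readings_c]


# Hypothetical daily maxima: two ordinary tropical values and one volcanic one.
raw = [27.0, 300.0, 31.5]
capped = cap_readings(raw)
print(capped)
```

Readings below the threshold pass through unchanged, so the cap only alters values that are already far outside the atmospheric range.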

There are, of course, broader implications. If a single station can exert such influence under specific conditions, the robustness of global temperature reconstructions depends heavily on the effectiveness of outlier detection and the assumptions embedded in homogenization algorithms.

None of this implies intent or malfeasance. The system behaves according to its design. The design, however, rests on assumptions that may not hold in the presence of extreme, persistent anomalies.

CR-VOLC-EL-INFIERNO-01 represents a particularly vivid case because the source of the anomaly—a volcano actively emitting heat—is unambiguous. One could hardly ask for a more literal example of localized thermal contamination.

The more interesting question is whether less obvious anomalies—urban heat islands, instrument drift, undocumented relocations—might produce similar, if smaller, effects that accumulate over time.

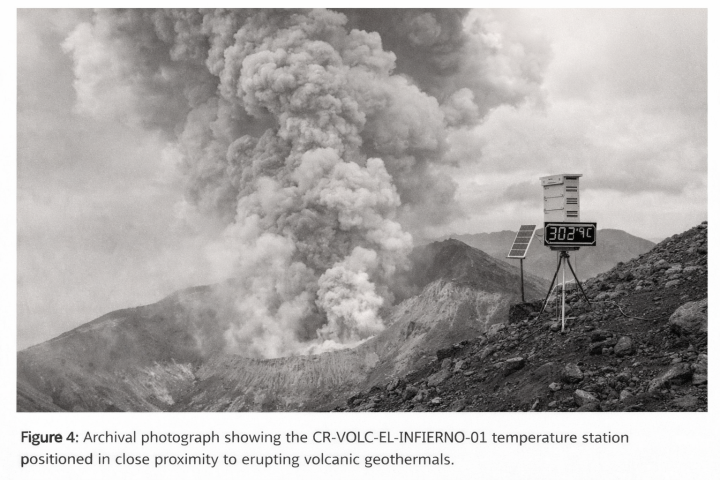

Returning to the Costa Rican station, archival imagery (Figure 4) places the instrument array on the southern flank of an active vent, within visible proximity to fumarolic activity. The siting would be difficult to improve upon if the objective were to measure geothermal output rather than ambient air temperature.

Yet in the dataset, it is treated as one station among many.

The final point concerns interpretation. Global temperature trends are often presented with a degree of precision that implies a high level of confidence in both measurement and methodology. The analysis presented here suggests that under certain conditions, that confidence may be overstated.

When a single station—reporting temperatures more commonly associated with industrial furnaces than meteorological observations—can, through entirely procedural means, influence a global metric, it raises questions about the sensitivity of the system to edge cases.

Those questions do not require dramatic conclusions. They do, however, warrant careful examination.

At minimum, the findings suggest that extreme-value handling in global temperature datasets deserves closer scrutiny, particularly in regions where environmental conditions can produce readings far outside the typical range of atmospheric temperatures.

Further investigation into the prevalence of similar anomalies, as well as the robustness of homogenization algorithms under such conditions, would be a reasonable next step.

Just to be clear ….. This is NOT an April Fool’s post, right?

Nah it was the super El Nino all along if you’ve been paying attention-

A ‘super El Niño?’: Inside the weather phenomenon that could send temperatures soaring

Well either that or plastics but it’s the same deal with the dooming and just imagine El Nino plus plastics!!

I love it when they admit that it is El Ninos that cause the warming 🙂

Forgive them Lord Gaia for they know not what they do.

Geologically, and biologically, a crucial part of the world…

Well, I guess it is April 1. I cannot track any facts of this station in Costa Rica. It doesn’t help that the first map shows it in Honduras.

I can’t believe you are unable to locate: CR-VOLC-EL-INFIERNO-01

Googling doesn’t help. How about a proper GHCN station code?

Costa Rica has 8 GHCN stations. None look anything like this.

Google AI says:

While there is no single widely recognized entity with the exact name CR-VOLC-EL-INFIERNO-01, the components of this string suggest it is likely a technical identifier for a specific geographic or geological site in Costa Rica

Follow the link for more.

I just used Google AI to find the current conditions at CR-VOLC-EL-INFIERO-1

This morning the reported conditions from that station were ~26C, scattered clouds, ~78% relative humidity, and winds ~22km/h. Sounds like Costa Rica without an eruption going on.

Yeah, I’ve had Google AI give me two different answers several days apart. Picking the answer you like is not productive. Maybe I should try for two out of three (-:

Ask the same questions of 3 different AIs –

say GoogleAI, ChatGPT, Perplexity.

I do this sometimes to get templates, scope of works, etc for repair / replacements projects for condo properties councils I volunteer to help from time to time.

As always, you have to know what answers are pertinent & useful, and what are just quick scrapes of wikipedia & youtube.

Steve & Mr:

Always, always ask your AI to “critique your prior answer” on anything even remotely uncertain or contested. AIs are trained to give the most common consensus position; never any nuance or balance unless explicitly asked. For every new thread! It won’t remember [or “learn”].

Nice touch on the “archival” black and white photo w/the huge digital display.

Fishy?

Valid point. First map does appear to be Honduras at the Nicaragua border.

It would have helped had the volcano name been listed.

Best guess is the Arenal Volcano, NW of San Jose.

I had a student who was totally innumerate. She could scan a column of numbers and fail to see the ones out by 1 or 2 orders of magnitude. Maybe data cleaning persons have similar problems.

Nice work, CR. I knew it was bad, but not that bad. Previous bads:

April 1.

True. Except NOT for my points 1,2,3.

I cannot argue with your point #2, but both #1 and especially #3 are erroneous assumptions by so many persons with regard to airport weather stations. Airport weather stations are properly sited for their intended purpose of aviation safety with respect to #1. And why do so many highly intelligent, math-competent persons believe what they have been told instead of checking the data underlying the assumption WRT #3? Airport temperatures are typically one or two degrees above the actual ambient air temps surrounding the airport. There are no temperature spikes when planes take off or land. The majority of this increased temperature is due to there being hundreds to thousands of continuous acres of concrete and asphalt comprising an airport. Airliner engine exhaust is a minuscule amount of added heat to the air volume over an airport, when you properly account for the dilution of the hot core exhaust with the bypass air delivered by the large fan at the front of all modern turbofan engines, which have bypass ratios from 5 to 13. And when you account for the volume of air blowing down or across the runway as compared to the volume of engine exhaust gases delivered in a 35 second takeoff roll or an even shorter use of thrust reversers on landing.

Engine exhaust from modern turbofan engines is reduced to near ambient in 100 to 200 feet behind the exhaust nozzle due to the bypass air, the dilution of the wind-driven air volume mixing with the exhaust air, and entrainment of nearby air from the exhaust and bypass thrust. Just look at the videos of idiots standing behind jets taking off from Princess Juliana in the Caribbean for evidence of how much the exhaust air cools down behind a plane in an extremely short distance:

https://www.youtube.com/watch?v=GqVjD3nBSQg

For a thumbnail idea look at how far back from a takeoff thrust engine refractive distortion of the light going through the exhaust plume extends – the distortion only occurs for about 1/2 to 1 plane length behind the engines. Now look down a clear runway for the heat distortion over the hot concrete and it extends for a mile.

But if you want numbers, here’s a 737-800. Its engines deliver 3780 m³ of core exhaust at ~500C for a 35 second takeoff roll. The bypass air surrounding this core exhaust is 23940 m³, which cools the core exhaust down considerably. Then consider the air volume in a crosswind down the 5000 feet of a 200 foot wide runway with a wind speed of 10 knots, which is 5.14 m/sec over the runway volume of 851179 m³. So the runway wind volume with that 10 kt crosswind over the 35 second takeoff roll is 153,127,053 cubic meters of ambient air, vs the engine core exhaust output of 3,780 cubic meters, and the ratio of hot air to ambient over that takeoff roll is absolutely minuscule. If you do a thermal energy calc on this ratio with their respective temperatures and heat capacity it amounts to a delta T of 0.2 deg C. (I used 10 m high above the runway for the volume calcs.) And planes are limited to one takeoff in 2 minutes for avoidance of wake turbulence issues.

One last monkey wrench thrown into the works of your #3 is that the wind is not always blowing the engine exhaust across the location of the airport weather station. The local wind direction may be blowing exhaust away from the temp sensors, for most of a day, or for days on end and only blows across the temp sensor for a limited time in many or most locations.

Here is a real time readout of the airport weather data for Palm Beach International airport in Florida. The readings are done once per hour, unless the weather is rapidly changing and then special METARs occur more frequently. Can you discern any increase in temp readings during high air traffic periods from this data? The honest answer is NO. (It does read a degree or two higher than nearby ambient air, due to having 2,120 acres of land of which 60-70% is concrete or asphalt. That is why it reads a bit high, not because of jet traffic.)

https://aviationweather.gov/data/metar/?decoded=1&ids=KPBI&hours=48

So good for pilots & airports traffic controllers, but not good for locality weather reportage?

Makes some sense.

Even more sense would be if official weather forecasting bureaux only used non-built-infrastructure-affected, rural weather stations.

How easy & inexpensive would this change of approach be –

less work, less criticism.

Of course a brand-new records database would be required, populated ONLY with pristine readings from Class #1 rural stations.

No “adjustments” or “homogenizing”.

Just the facts, ma’am . . .

Not only are the temperatures affected but the variance of the temperatures over the diurnal period are affected because concrete and steel are heat sinks. Thus the air temperatures above the surface don’t decay as fast as above grass or even plain dirt. This causes a positive bias to minimum temperatures and thus a bias to the mid-range temperatures derived from the diurnal temperature profile.

Any averages calculated using multiple random variables with different variances should be done using weighting to equalize the contribution of each variable to the average. Yet climate science routinely ignores the variance of the data elements they jam together to calculate a “global” average. Cooler temperatures have wider variance than hot temperatures yet climate science jams Northern Hemisphere temperatures together with Southern Hemisphere temperatures with absolutely no equalization (i.e. weighting) at all.

Raise this with climate science justifiers and you get a lot of hemming and hawing and finally the excuse “anomalies fix this” – with no regard for the fact that anomalies inherit the *exact* same variance as that of the parent distributions! Anomalies simply don’t affect variance at all – meaning the anomalies should also be weighted just as the absolute values should be weighted.

And if the temperature sensor is taking measurements once per second (doable) or once per minute (records)?

If that transient spike is what is used?

Or that transient spike is homogenized?

And all of this on the erroneous assumption that you can add, subtract, multiply and divide an intensive property such as temperature and think it has any physical meaning at all.

Numerology

Arithmetically manipulated intensive properties are always meaningful on April 1. And for ‘climate scientists’ during the rest of the year also.

Next they will be counting bumps on their craniums. 😉

Homogenization works for milk, therefore it must work for climate. Science!!

In R, the Gregorian demystification function triggers a bunk/debunk toggle to isolate the instances of date-based claims for special examination.

And so it is here. Good one CR.

I ran it, but it’s April 2nd where I am, so I’m treating it as factual.

Well done. Posts of this nature take a surprising amount of work. I really appreciate the effort and commitment to the craft.

Thank you.

A really good Apr 1 joke is one that could actually be true.. so yes, well done.

Measurements are DATA. Adjusted measurement values are no longer DATA. Sets of adjusted measurements are not DATA sets, they are estimate sets.

That should carry expanded uncertainty values. But, they never do, they are treated as 100.000% accurate.

I’m not buying the story. It fails on a few steps.

First off, 300C is far too hot for modern electronics. If we were able to deliver consumer grade electronics which could operate at 300C, we could send scientific grade equipment to the planet Mercury and let it drive around giving us pictures. Modern semiconductors can run up to around 125C, but with a greatly reduced life, MTBF of about 1,000 hours.

Without looking into the setup, I’m going to presume the thermometer is reporting in Kelvin and recording in Celsius.

I would think, that if this station were close enough to an active vent to experience constant temperatures of around 300C, it would most likely be continually coated by all manner of corrosive condensate and pummeled by all manner of damaging ejecta.

Standard silicon, yes. However, we in the space industry, as well as those in geothermal and jet engine controls (and other niche applications) do not use silicon for high temperature applications.

Specialized high-temperature electronics, often using materials like Silicon Carbide (SiC) or Gallium Nitride (GaN), can function at 300°C for niche industrial or aerospace applications.

These components are used in geothermal drilling, oil exploration, and jet engine controls, not in consumer products.

Ceramic packaging and other thermal management techniques are also important.

Your assumption of K versus C is off base.

Once again, materials science comes to the rescue.

The station is not as close to the plume as a casual viewing suggests. Keep in mind hot air rises and that brings cooler air onto the station.

What I find fascinating is the solar power array. That clue means the recording station is far from harm and the actual sensor is much closer.

There are thermal sensors that operate above 1800 C. Standard RTD go as high as 600 C. With specialized packaging they can go to 100 C. Thermocouples, Type K, are rated to 1250 C. Types R,S,B can operate at 1750 C.

Copper wire melts at ~1000 C.

Nothing about this says it cannot work.

Typo. RTD with specialized packaging can go to 1000 C.

I’m sure it’s just a coincidence, but funny how whenever temperature data are corrupted, it’s always in favor of warming.

You are sure? 🙂

Is this an April Fools Day joke that is trying to illustrate a mathematical point?

The image of the weather station on the mountain just didn’t seem plausible to me. Who is going to go and check it regularly? The risk assessment alone makes it improbable, let alone the cost of maintenance.

So I checked with Copilot. There are no active volcanos in the Talamanca Range. But there is a Costa Rican volcano that became active at the end of the 1960s – Arenal Volcano (Costa Rica).

It does have a GHCN weather station near it – sort of – 6km away, in the town (which is far more plausible).

If anyone wants to follow up on this, here is the information on that station that Copilot found for me.

Nearest GHCN station: LA FORTUNA (CR000007735)

On the slopes? No — located in town at the base

Metadata:

Distance to Arenal summit: ~6.2 km

“The image of the weather station on the mountain just didn’t seem plausible to me.”

I think it might have been inspired by Wallace and Gromit’s trip to the moon:

“The image of the weather station on the mountain just didn’t seem plausible to me.”

Why not.. there are hundreds of weather stations on mountain tops

Do an image search using “weather station on mountain top”

And we all know that groups like the Met Office will hunt for the warmest places they can find for positioning new weather sites. 😉

Ray Saunders has been documenting the Met Office’s stations and recently noted that 103 of the 302 Location Specific Long Term averages sites do not exist.

For example the Lephinmore weather station in Scotland closed in 1966 yet has detailed climate averages from 1960 to 2020.

What’s key to this confusion is that the thermal sensor does not have to be close to the station.

With the station 6.2 km distant, radio communication is practical.

6 km distance from sensor to station has been proven time and again in oil wells, etc.

The reconstruction created a hockey stick.

Fascinating.

It is very indicative of how the Met Office and BoM obtain their temperatures, though! 😉

Just a sort of extreme case of what is found in our host’s Surface Station project. 🙂

I was in grad school at a Northern NY University, not far from the Canadian border. In the laboratory, we often listened to Canadian radio stations as background.

What a coincidence that it was also April 1, 1983 I think, when one of those stations announced in the hourly news that, since the switch to the metric system had gone so well, the Canadian government was extending the concept to time, too.

My field was technical, so I was able to prove to myself how decimal time would not work. Dumb Canadians.

Watch it!

(pun intended 🙂 )

Here is the point, I see no reason to believe reports of the average global temperature. Number one the average temperature is pretty much useless, number two there are too many ways to fudge the numbers to be believable.

303 C? Anything organic (like paint) would be smoking in that photograph.

It is. You can see the plume.

Does not look like much in the way of foliage in the picture.

Another point this analysis demonstrates is that homogenization should not be performed. Never use it to adjust the measured temperature. The only thing that is valid to do to a measured temperature is apply the calibration of the thermometer. If the reading is suspect, then it is discarded and the reason is noted in the database. I had no idea that this type of data malfeasance was widely accepted in the climate community.

It occurs to me that one of the greatest condemnations of the so-called catastrophic climate change movement is that very experienced engineers, geologists, and physicists, reading these posts daily at Watts Up With That, can barely distinguish between an April Fool’s joke from the actual “climate science” that is propagandized to people all over the world. Yep, it’s that bad.

No shit.

“That assumption deserves closer inspection.”

Geez, Charles. Who’da thunk.

Charles, nice article. This may be an April 1 joke but there are a couple of things that are illustrative.

Smoothing of time series which includes averaging and moving averages can have “shocks” that propagate forward into the trend. Without proper damping the shocks do not disappear. UAH has what appears to be undamped rises whenever El Ninos occur.

Homogenization can suffer from the same shock propagation if changed station data is used in the next iteration rather than starting from raw data in each iteration.

Charles, this is a good article. But I want to highlight some of the statements in it that climate science typically takes as dogma.

” that individual station errors, even when large, are diluted through spatial averaging and homogenization.”

Spatial averaging can dilute nothing. Spatial weighting can reduce *sampling* error but not measurement uncertainty. Homogenization simply ignores the factors that actually generate differences in measurements. There is no guarantee that two stations only a mile apart can be “averaged” in order to minimize measurement uncertainty, one station may be in the middle of a soybean field and the other near a heavily travelled interstate highway. Both stations may be calibrated on the same day and *still* give different temperature values over the next diurnal period due to microclimate differences. The mean of the two values can *not* be considered to be more accurate with less measurement uncertainty.

” The fundamental assumption is that neighboring stations share a common climate signal, allowing discontinuities (instrument changes, relocations) to be corrected through statistical alignment.”

Neighboring stations may or may not share a common climate signal! Pikes Peak and Colorado Springs are close neighbors but do *not* share a common climate signal. Two measuring stations, one on the east side of a mountain and the other on the west side of the mountain, do *not* share a common climate signal. Multiple stations split between a river valley and the plateau above the valley do not share a common climate signal. Not only will the absolute values vary widely in such situations but the variance of the temperature data sets are different. A straight average of random variables with different variances is misleading; some kind of weighting is required for a legitimate mean value to be determined. The uncertainty of the mean depends on the variance of the data, the larger the variance the more inaccurate the estimated value of the mean becomes since values close in to the mean are very close to the mean.

“wᵢⱼ are weighting coefficients derived from spatial proximity and correlation.”

The weighting coefficients must also include humidity, elevation, geographic, and terrain factors. Something climate science never includes in their calculation of homogenization. Correlation only shows that the variables move in the same direction, it does not address accuracy in any way, shape, or form.

“Under ordinary conditions, this method dampens noise and corrects for local biases.”

This is the old climate science meme that all measurement uncertainty is random, Gaussian, and cancels. This method simply cannot dampen noise while highlighting the signal, all that happens is that the variance of the data is masked. Nor can “averaging” correct for local biases such as differences in the microclimate surrounding each measurement station. The only bias that can actually be corrected is calibration bias – and this requires a calibration to be done before making the measurement – which is *never* done for either land or ocean measuring devices.

I realize that this article is an April 1 post. Yet it highlights many metrology rules that climate science routinely ignores. It’s more than the fact that you can’t average the values of intensive properties constructed by normalizing against an extensive property, e.g joules/sec-m^2. It’s the totality of metrology protocols that are ignored, e.g. propagation of the measurement uncertainty associated with measurements of different things being combined into a single data set. Even the typical excuse of “anomalies correct for all of this” shows an ignorance of statistical descriptors. An anomaly is created by subtracting a constant from the distribution of a random variable, it is a linear transformation of the random variable using a constant and as such the variance of the anomaly is exactly the same as the variance of the parent random variable. Variance is a metric for uncertainty, if the variance doesn’t change the uncertainty remains the same. Yet climate science just ignores the variance of the data it uses as if it is meaningless.

So … the problem with their “settled science” is that volcanos aren’t settled?