We publish this here, not to confirm that it is correct, but to stimulate the debate needed to determine whether or not it is correct or if it’s simply an exercise in curve fitting. ~ctm

George White, August 2017

Climate science is the most controversial science of the modern era. One reason the controversy has been so persistent is that those who accept the IPCC as the arbiter of climate science fail to recognize that a controversy even exists. Their rationalization is that the IPCC’s conclusions are presented as the result of a scientific consensus; therefore, the threshold for overturning them is so high that it can’t be met, especially by anyone whose peer-reviewed work isn’t published in a mainstream climate science journal. Their universal reaction when presented with contrary evidence is that there’s no way it can be true; therefore, it deserves no consideration and whoever brought it up can be ignored. This catch-22 makes it almost impossible to get contrary evidence into any mainstream journal.

This prejudice is not limited to those with a limited understanding of the science, but is widespread among those who think they understand and even quite prevalent among notable scientists in the field. Anyone who has ever engaged in communications with an individual who has accepted the consensus conclusions has likely observed this bias, often accompanied by demeaning language presented with extreme self-righteous indignation that you would dare question the ‘settled science’ of the consensus.

The Fix

Correcting broken science that’s been settled by a consensus is made more difficult by its support from recursive logic where the errors justify themselves by defining what the consensus believes. The best way forward is to establish a new consensus. This means not just falsifying beliefs that support the status quo, but more importantly, replacing those beliefs with something more definitively settled.

Since politics has taken sides, climate science has become driven by the rules of politics rather than the rules of science. Taking a page from how a political consensus arises, the two sides must first understand and acknowledge what they have in common before they can address where they differ.

Alarmists and deniers alike believe that CO2 is a greenhouse gas, that greenhouse gases contribute to making the surface warmer than it would be otherwise, that man is putting CO2 into the atmosphere and that the climate changes. The denier label used by alarmists applies to anyone who doesn’t accept everything the consensus believes, with the implication being that truths supported by real science are also being denied. Surely, if one believes that CO2 isn’t a greenhouse gas, that man isn’t putting CO2 into the atmosphere, that GHG’s don’t contribute to surface warmth, that the climate isn’t changing or that the laws of physics don’t apply, they would be in denial, but few skeptics are that uninformed.

Most skeptics would agree that if there was significant anthropogenic warming, we should take steps to prepare for any consequences. This means applying rational risk management, where all influences of increased CO2 and a warming climate must be considered. Increased atmospheric CO2 means more raw materials for photosynthesis, which at the base of the food chain is the sustaining foundation for nearly all life on Earth. Greenhouse operators routinely increase CO2 concentrations to be much higher than ambient because it’s good for the plants and does no harm to people. Warmer temperatures also have benefits. If you ask anyone who’s not a winter sports enthusiast what their favorite season is, it will probably not be winter. If you have sufficient food and water, you can survive indefinitely in the warmest outdoor temperatures found on the planet. This isn’t true in the coldest places where at a minimum you also need clothes, fire, fuel and shelter.

While the differences between sides seem irreconcilable, there’s only one factor they disagree about and this is the basis for all other differences. While this disagreement is still insurmountable, narrowing the scope makes it easier to address. The controversy is about the size of the incremental effect atmospheric CO2 has on the surface temperature, which is a function of the size of the incremental effect solar energy has. This parameter is referred to as the climate sensitivity factor. What makes it so controversial is that the consensus accepts a sensitivity presumed by the IPCC, while the possible range theorized, calculated and measured by skeptics has little to no overlap with the range accepted by the consensus. The differences are so large that only one side can be right and the other must be irreconcilably wrong, which makes compromise impossible, perpetuating the controversy.

The IPCC’s sensitivity has never been validated by first principles physics or direct measurements. Its most widely touted support comes from models, but it seems that as they add degrees of freedom to curve fit the past, the predictions of the future get alarmingly worse. Its support from measurements comes from extrapolating trends arising from manipulated data where the adjustments are poorly documented and the fudge factors always push results in one direction. This introduces even less certain unknowns, which are how much of the trend is a component of natural variability, how much is due to adjustments and how much is due to CO2. This seems counterproductive since the climate sensitivity should be relatively easy to predict using the settled laws of physics and even easier to measure with satellite observations, so what’s the point in the obfuscation by introducing unnecessary levels of indirection, additional unknowns and imaginary complexity?

Quantifying the Relationships

To quantify the sensitivity, we must start from a baseline that everyone can agree upon. This would be the analysis for a body like the Moon which has no atmosphere and that can be trivially modeled as an ideal black body. While not rocket science, an analysis similar to this was done prior to exploring the Moon in order to establish the required operational limits for lunar hardware. The Moon is a good place to start since it receives the same amount of solar energy as Earth and its inorganic composition is the same. Unless the Moon’s degenerate climate system can be accurately modeled, there’s no chance that a more complex system like the Earth can ever be understood.

To derive the sensitivity of the Moon, construct a behavioral model by formalizing the requirements of Conservation Of Energy as equation 1).

1) Pi(t) = Po(t) + ∂E(t)/∂t

Consider the virtual surface of matter in equilibrium with the Sun, which for the Moon is the same as its solid surface. Pi(t) is the instantaneous solar power absorbed by this surface, Po(t) is the instantaneous power emitted by it and E(t) is the solar energy stored by it. If Po(t) is instantaneously greater than Pi(t), ∂E(t)/∂t is negative and E(t) decreases until Po(t) becomes equal to Pi(t). If Po(t) is less than Pi(t), ∂E(t)/∂t is positive and E(t) increases until again Po(t) is equal to Pi(t). This equation quantifies more than just an ideal black body. COE dictates that it must be satisfied by the macroscopic behavior of any thermodynamic system that lacks an internal source of power, since changes in E(t) affect Po(t) enough to offset ∂E(t)/∂t. What differs between modeled systems is the nature of the matter in equilibrium with its energy source, the complexity of E(t) and the specific relationship between E(t) and Po(t). An astute observer will recognize that if an amount of time, τ, is defined such that all of E is emitted at the rate Po, the result becomes Pi = E/τ + ∂E/∂t which is the same form as the differential equation describing the charging and discharging of a capacitor which is another COE derived model of a physical system whose solutions are very well known where τ is the RC time constant.

For an ideal black body like the Moon, E(t) is the net solar energy stored by the top layer of its surface. From this, we can establish the precise relationship between E(t) and Po(t) by first establishing the relationship between the temperature, T(t) and E(t) as shown by equation 2).

2) T(t) = κE(t)

The temperature of matter and the energy stored by it are linearly dependent on each other through a proportionality constant, κ, which is a function of the heat capacity and equivalent mass of the matter in direct equilibrium with the Sun. Next, equation 3) quantifies the relationship between T(t) and Po(t).

3) Po(t) = εσT(t)⁴

This is just the Stefan-Boltzmann Law, where σ is the Stefan-Boltzmann constant, equal to about 5.67E-8 W/m2 per K⁴, and for the Moon, the emissivity of the surface, ε, is approximately equal to 1.

Pi(t) can be expressed as a function of Solar energy, Psun(t), and the albedo, α, as shown in equation 4).

4) Pi(t) = Psun(t)(1 – α)

Going forward, all of the variables will be considered implicit functions of time. The model now has 4 equations and 8 variables, Psun, Pi, Po, T, E, α, κ and ε. Psun is known for all points in time and space across the Moon’s surface. The albedo α and the proportionality constant κ are mostly uniform across the surface and ε is almost exactly 1. To the extent that Psun, α, κ and ε are known, we can reduce the problem to 4 equations and 4 unknowns, Pi, T, Po and E, whose time varying values can be calculated for any point on the surface by solving a simple differential equation applied to an equal area gridded representation whose accuracy is limited only by the accuracy of α, κ and ε per cell. Any model that conforms to equations 1) through 4) will be referred to as a Physical Model.
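As a rough illustration of what solving equations 1) through 4) for a single grid cell might look like, here is a minimal Python sketch using simple Euler time stepping. The value of κ, the peak solar input of 1361 W/m2, the 24 hour day and the half-cosine day/night profile are all illustrative assumptions and are not taken from the analysis above.

```python
import math

SIGMA = 5.67e-8  # Stefan-Boltzmann constant, W/m2 per K^4


def simulate_cell(psun_peak=1361.0, albedo=0.12, emissivity=1.0,
                  kappa=1.0e-6, day_seconds=86400.0, n_days=50, dt=60.0):
    """Euler-integrate equations 1) through 4) for one equatorial cell.

    kappa (K per J/m2), psun_peak and the insolation profile are assumed
    values chosen only for illustration.
    Returns (mean Pi, mean Po, mean T) over the final simulated day.
    """
    energy = 270.0 / kappa  # start near 270 K, using T = kappa * E
    steps_per_day = int(round(day_seconds / dt))
    total_steps = steps_per_day * n_days
    sum_pi = sum_po = sum_t = 0.0
    for step in range(total_steps):
        t = step * dt
        # Assumed insolation: half-cosine during the day, zero at night.
        psun = psun_peak * max(0.0, math.cos(2.0 * math.pi * t / day_seconds))
        p_in = psun * (1.0 - albedo)             # equation 4)
        temp = kappa * energy                    # equation 2)
        p_out = emissivity * SIGMA * temp ** 4   # equation 3)
        energy += (p_in - p_out) * dt            # equation 1)
        if step >= total_steps - steps_per_day:  # accumulate the last day only
            sum_pi += p_in
            sum_po += p_out
            sum_t += temp
    return (sum_pi / steps_per_day,
            sum_po / steps_per_day,
            sum_t / steps_per_day)


mean_pi, mean_po, mean_t = simulate_cell()
print(f"mean Pi = {mean_pi:.1f} W/m2, mean Po = {mean_po:.1f} W/m2, "
      f"mean T = {mean_t:.1f} K")
```

Once the initial transient has decayed, the daily averages of Pi and Po in this sketch should agree closely, which is the steady state condition that equation 1) implies.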

Quantifying the Sensitivity

Starting from a Physical Model, the Moon’s sensitivity can be easily calculated. The ∂E/∂t term is what the IPCC calls ‘forcing’, which is the instantaneous difference between Pi and Po at the top of the atmosphere (TOA) and/or the top of the troposphere (TOT). For the Moon, TOT and TOA are coincident with the solid surface defining the virtual surface in direct equilibrium with the Sun. The IPCC defines forcing like this so that an increase in Pi owing to a decrease in albedo or increase in solar output can be made equivalent to a decrease in Po from a decrease in power passing through the transparent spectrum of the atmosphere that would arise from increased GHG concentrations. This definition is ambiguous since Pi is independent of E, while Po is highly dependent on E. Thus, a change in Pi is not equivalent to the same change in Po: both change E, but only Po changes in response to changes in E, which initiates further changes in E and Po. The only proper characterization of forcing is a change in Pi and this is what will be used here.

While ∂E/∂t is the instantaneous difference between Pi and Po and conforms to the IPCC definition of forcing, the IPCC representation of the sensitivity assumes that ∂T/∂t is linearly proportional to ∂E/∂t, or at least approximately so. This is incorrect because of the T⁴ relationship between T and Po. The approximately linear assumption is valid over a small temperature range around average, but is definitely not valid over the range of all possible temperatures.

To calculate the Long Term Equilibrium (LTE) sensitivity, we must consider that in the steady state, the temporal average of Pi is equal to the temporal average of Po, thus the integral over time of ∂E/∂t will be zero. Given that in LTE, Pi is equal to Po, and the Moon certainly is in an LTE steady state, we can write the LTE balance equation as,

5) Pi = Po = εσT⁴

To calculate the LTE sensitivity, simply differentiate and invert the above equation which gives us,

6) ∂T/∂Pi = ∂T/∂Po = 1/(4εσT³)

This derivation does make an assumption, which is that ∂T/∂Pi = ∂T/∂Po, since we’re really calculating ∂T/∂Po. For the Moon this is true, but for a planet with a semi-transparent atmosphere between the energy source and the surface in equilibrium with it, the two are not equal, for the same reason that the IPCC’s metric of forcing is ambiguous. Nonetheless, what makes them different can be quantified and the quantification can be tested. But for the Moon, which will serve as the baseline, it doesn’t matter.

Define the average temperature of the Moon as the equivalent temperature of a black body where each square meter of surface is emitting the same amount of power such that when summed across all square meters, it adds up to the actual emissions. Normalizing to an average rate per m2 is a meaningful metric since all Joules are equivalent and the average of incoming and outgoing rates of Joules is meaningful for quantifying the effects one has on the other; moreover, a rate of energy per m2 can be trivially interchanged with an equivalent temperature. This same kind of average is widely applied to the Earth’s surface when calculating its average temperature from satellite data, where the resulting surface emissions are converted to an equivalent temperature using the Stefan-Boltzmann Law.

If the average temperature of the Moon was 255K, equation 6) tells us that ∂T/∂Pi is about 0.27C per W/m2. If it was 288K like the Earth, the sensitivity would be about 0.18C per W/m2. Notice that owing to the 1/T³ dependence of the sensitivity on temperature, as the temperature increases, the sensitivity decreases as the inverse cube of the temperature. The average albedo of the Moon is about 0.12, leading to an average Pi and Po of about 300 W/m2, corresponding to an equivalent average temperature of about 270K and an average sensitivity of about 0.22C per W/m2.
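The arithmetic behind these figures can be checked with a short Python sketch of equations 5) and 6). The solar constant of 1361 W/m2 and the division by 4 to spread it over a sphere are assumptions added here to reproduce the roughly 300 W/m2 average input; the function names are only illustrative.

```python
SIGMA = 5.67e-8          # Stefan-Boltzmann constant, W/m2 per K^4
SOLAR_CONSTANT = 1361.0  # W/m2 at 1 AU; an assumed value, not from the text


def lte_sensitivity(temp_k, emissivity=1.0):
    """Equation 6): dT/dP = 1 / (4 * emissivity * sigma * T^3), in C per W/m2."""
    return 1.0 / (4.0 * emissivity * SIGMA * temp_k ** 3)


def equivalent_temperature(power, emissivity=1.0):
    """Invert equation 5): the temperature whose emissions equal `power`."""
    return (power / (emissivity * SIGMA)) ** 0.25


# Average absorbed power for an albedo of 0.12, spread over the whole sphere.
moon_pi = SOLAR_CONSTANT / 4.0 * (1.0 - 0.12)
moon_t = equivalent_temperature(moon_pi)

for temp in (255.0, moon_t, 288.0):
    print(f"T = {temp:6.1f} K  ->  {lte_sensitivity(temp):.3f} C per W/m2")
```

Under these assumptions the sketch gives an average input near 300 W/m2 and an equivalent temperature near 270K, consistent with the values quoted above.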

As far as the Moon is concerned, this analysis is based on nothing but first principles physics and the undeniable, deterministic average sensitivity that results is about 0.22C per W/m2. This is based on indisputable science; moreover, the predictions of Lunar temperatures using models like this have been well validated by measurements.

The 270K average temperature of the Moon would be the Earth’s average temperature if there were no GHG’s since this also means no liquid water, ice or clouds, resulting in an Earth albedo of 0.12 just like the Moon. This contradicts the often repeated claim that GHG’s increase the temperature of Earth from 255K to 288K, or about 33C, where 255K is the equivalent temperature of the 240 W/m2 average power arriving at the planet after reflection. This is only half the story and it’s equally important to understand that water also cools the planet by about 15K owing to the albedo of clouds and ice, which can’t be separated from the warming effect of water vapor, making the net warming of the Earth from all effects about 18C and not 33C. Water vapor accounts for about 2/3 of the 33 degrees of warming, leaving about 11C arising from all other GHG’s and clouds. The other GHG’s have no corresponding cooling effect, thus the net warming due to water is about 7C (33*2/3 – 15) while the net warming from all other sources combined is about 11C, where only a fraction of this arises from CO2 alone.

Making It More Complex

Differences arise as the system gets more complex. At a level of complexity representative of the Earth’s climate system, the consensus asserts that the sensitivity increases all the way up to 0.8C per W/m2, which is nearly 4 times the sensitivity of a comparable system without GHG’s. Skeptics maintain that the sensitivity isn’t changing by anywhere near that much and remains close to where it started from without GHG’s and if anything, net negative feedback might make it even smaller.

Let’s consider the complexity in an incremental manner, starting with the length of the day. For longer period rotations, the same point on the surface is exposed to the heat of the Sun and the cold of deep space for much longer periods of time. As the rotational speed increases, the difference between the minimum and maximum temperature decreases, but given the same amount of total incident power, the average emissions and equivalent average temperature will remain exactly the same. At very slow rotation rates, the dark side can emit all of the energy it ever absorbed from the Sun and the surface emissions will approach those corresponding to its internal temperature, which does affect the result.

The sensitivity we care about is relevant to how the LTE averages change. The average emissions and corresponding average temperature are locked to an invariant amount of incident solar energy while the rotation rate has only a small effect on the average sensitivity related to the T⁻³ relationship between temperature and the sensitivity. Longer days and nights mean that local sensitivities will span a wider range owing to a wider temperature range. Since higher temperatures require a larger portion of the total energy budget, as the rotation rate slows, the average sensitivity decreases. To normalize this to Earth, consider a Moon with a 24 hour day where this effect is relatively small.

The next complication is to add an atmosphere. Start with an Earth like atmosphere of N2, O2, and Ar except without water or other GHG’s. On the Moon, gravity is less, so it will take more atmosphere to achieve Earth like atmospheric pressures. To normalize this, consider a Moon the size of the Earth and with Earth like gravity.

An atmosphere devoid of GHG’s and clouds will also reduce the difference between high and low extremes, but not by much since dry air can’t hold and transfer much heat, nor will there be much of a difference between ∂T/∂Pi and ∂T/∂Po. Since O2, N2 and Ar are mostly transparent to both incoming visible light and outgoing LWIR radiation, this atmosphere has little impact on the temperature, the energy balance or the sensitivity of the surface temperature to forcing.

At this point, we have a Physical Model representative of an Earth like planet with an Earth like atmosphere, except that it contains no GHG’s, clouds, liquid or solid water, the average temperature is 270K and the average sensitivity is 0.22C per W/m2. It’s safe to say that up until this point in the analysis, the Physical Model is based on nothing but well settled physics. There’s still an ocean and a small percentage of the atmosphere to account for, comprised mostly of water and trace gases like CO2, CH4 and O3.

The Fun Starts Here

The consensus contends that the Earth’s climate system is far too complex to be represented with something as deterministic as a Physical Model, even as this model works perfectly well for an Earth like planet missing only water and a few trace gases. They arm wave complexities like GHG’s, clouds, coupling between the land, oceans and atmosphere, model predictions, latent heat, thermals, non linearities, chaos, feedback and interactions between these factors as contributing to making the climate too complex to model in such a trivial way. Moreover, what about Venus? Each of these issues will be examined by itself to see what effects it might have on the surface temperature, planet emissions and the sensitivity as quantified by the Physical Model, including how this model explains Venus.

Greenhouse Gases

When GHG’s other than water vapor are added to the Physical Model, the effect on the surface temperature can be readily quantified. If some fraction of the energy emitted by the surface is captured by GHG molecules, some fraction of what was absorbed by those molecules is ultimately returned to the surface making it warmer while the remaining fraction is ultimately emitted into space manifesting the energy balance. This is relatively easy to add to the model equations as a decrease in the effective emissivity of a surface at some temperature relative to the emissions of a planet. If Ps is the surface emissions corresponding to T, Fa is the fraction of Ps that’s captured by GHG’s and Fr is the fraction of the captured power returned to the surface, we can express this in equations 7) and 8).

7) Ps = εxσT⁴

8) Po = (1 – Fa)Ps + FaPs(1 – Fr)

The first term in equation 8) is the power passing through the atmosphere that’s not intercepted by GHG’s and the second term is the fraction of what was captured and ultimately emitted into space. Solving equation 8) for Po/Ps, we get equation 9),

9) Po/Ps = 1 – FaFr

Now, we can combine equation 9) with equation 7) to rewrite equation 3) as equation 3a).

3a) Po = (1 – FaFr)εxσT⁴

Here, εx is the emissivity of the surface itself, which like the surface of the Moon without GHG’s is also approximately 1, where (1 – FaFr) is the effective emissivity contributed by the semi-transparent atmosphere. This can be double checked by calculating Psi, which is the power incident to the surface and by recognizing that Psi – Ps is equal to ∂E/∂t and Pi – Po.

10) Psi = Pi + PsFaFr

11) Psi – Ps = Pi – Po

Solving 11) for Psi and substituting into 10), we get equation 12), solving for Po results in 13) which after substituting 7) for Ps is yet another way to arrive at equation 3a).

12) Ps – Po = PsFaFr

13) Po = (1 – FaFr)Ps

The result is that adding GHG’s modifies the effective emissivity of the planet from 1 for an ideal black body surface to a smaller value as the atmosphere absorbs some fraction of surface emissions, making the planet’s emissions, relative to its surface temperature, appear gray from space. The effective emissivity of this gray body emitter, ε’, is given exactly by equation 3a) as ε’ = (1 – FaFr)εx.
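As a quick sanity check on the algebra, the following Python sketch evaluates equation 8) directly and compares it with the reduced form in equations 9) and 13). The sample values of Ps, Fa and Fr in the loop are arbitrary numbers chosen for illustration, and the function names are hypothetical.

```python
def planet_emissions_eq8(ps, fa, fr):
    """Equation 8): power passing straight through plus the captured
    power that is ultimately emitted into space."""
    return (1.0 - fa) * ps + fa * ps * (1.0 - fr)


def effective_emissivity(fa, fr, surface_emissivity=1.0):
    """Gray body emissivity from equation 3a): (1 - Fa*Fr) * surface emissivity."""
    return (1.0 - fa * fr) * surface_emissivity


# Arbitrary illustrative inputs: (Ps in W/m2, Fa, Fr).
for ps, fa, fr in [(390.0, 0.3, 0.5), (390.0, 0.6, 0.5), (300.0, 0.9, 0.4)]:
    direct = planet_emissions_eq8(ps, fa, fr)
    reduced = effective_emissivity(fa, fr) * ps  # equation 13)
    print(f"Fa={fa:.1f} Fr={fr:.1f}: eq 8 -> {direct:.2f}, eq 13 -> {reduced:.2f}")
```

The two columns should match for any choice of inputs, since equation 13) is just equation 8) with the terms collected.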

Clouds

Clouds are the most enigmatic of the complications, but nonetheless can easily fit within the Physical Model. The way to model clouds is to characterize them by the fraction of surface covered by them, apply the Physical Model with values of α, κ and ε specific to average clear and average cloudy skies, and then weight the results based on the specific proportions of each.

Consider the Pi term, where if ρ is the fraction of the surface covered by clouds, αc is the average albedo of cloudy skies and αs is the average albedo of clear skies, α can be calculated as equation 14).

14) α = ραc + (1 – ρ)αs

Now, consider the Po term, which can be similarly calculated as equation 15) where Ps and Pc are the emissions of the surface and clouds at their average temperatures, εs is the equivalent emissivity characterizing the clear atmosphere and εc is the equivalent emissivity characterizing clouds.

15) Po = ρεsεcPc + ρ(1 – εc)εsPs + (1 – ρ)εsPs

The first term is the power emitted by clouds, the second term is the surface power passing through clouds and the last term is the power emitted by the surface and passing through the clear sky. GHG’s can be accounted for by identifying the value of εs corresponding to the average absorption characteristics between the surface and space and between clouds and space. By considering Pc as some fraction of Ps and calling this Fx, equation 15) can be rearranged to calculate Po/Ps which is the same as the ε’ derived from equation 3a). The result is equation 16).

16) ε’ = Po/Ps = ρεs εcFx + ρεs (1 – εc) + (1 – ρ)εs

The variables εc, Fx and ρ can all be extracted from the ISCCP cloud data, as can αc and αs; moreover, the data supports a very linear relationship between Pc and Ps. The average value of ρ is 0.66, the average value of αc is 0.37 and αs is 0.16, resulting in a value for α of about 0.30, which is exactly equal to the accepted value. The average value of εc is about 0.72 and Fx is measured to be about 0.68. Considering εs to be 1, the effective ε’ is calculated to be about 0.85.

From line by line simulations of a standard atmosphere, the fraction of surface and cloud emissions absorbed by GHG’s, Fa, is about 0.58, the value of Fr as constrained by geometry is 0.5 and is measured to be about 0.51. From equation 13), the equivalent εs becomes 0.71. The new ε’ becomes 0.85 * 0.71 = 0.60, which is well within the margin of error for the expected value of Po/Ps, which is 240/395 = 0.61, and even closer to the measured value from the ISCCP data of 238/396 = 0.60. When the same analysis is performed one hemisphere at a time, or even on individual slices of latitude, the predicted ratios of Po/Ps match the measurements once the net transfer of energy from the equator to the poles and between hemispheres is properly accounted for.
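The numbers quoted for clouds and GHG’s can be reproduced with a few lines of Python implementing equations 14), 16) and 9). The coefficient values are the ones stated in the text; the function and variable names are illustrative only.

```python
def mixed_albedo(rho, alpha_cloud, alpha_clear):
    """Equation 14): cloud-fraction weighted albedo."""
    return rho * alpha_cloud + (1.0 - rho) * alpha_clear


def effective_emissivity(rho, eps_s, eps_c, fx):
    """Equation 16): ratio Po/Ps for a partly cloudy planet."""
    return (rho * eps_s * eps_c * fx          # emitted by clouds
            + rho * eps_s * (1.0 - eps_c)     # surface power passing through clouds
            + (1.0 - rho) * eps_s)            # surface power through clear sky


rho, alpha_c, alpha_s = 0.66, 0.37, 0.16   # cloud fraction and albedos
eps_c, fx = 0.72, 0.68                     # cloud emissivity and Pc/Ps
fa, fr = 0.58, 0.5                         # GHG absorption and return fractions

alpha = mixed_albedo(rho, alpha_c, alpha_s)
eps_clouds_only = effective_emissivity(rho, 1.0, eps_c, fx)  # eps_s taken as 1
eps_s = 1.0 - fa * fr                                        # equation 9)
eps_total = effective_emissivity(rho, eps_s, eps_c, fx)

print(f"albedo          = {alpha:.3f}")
print(f"eps' (eps_s=1)  = {eps_clouds_only:.3f}")
print(f"eps_s from GHGs = {eps_s:.3f}")
print(f"eps' combined   = {eps_total:.3f}")
```

Because εs is a common factor in every term of equation 16), the combined value is simply the clouds-only value multiplied by εs.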

At this point, we have a Physical Model that accounts for GHG’s and clouds and accurately predicts the ratio between the BB surface emissions at its average temperature and the average emissions of the planet, spanning the entire range of temperatures found on the surface.

The applicability of the Physical Model to the Earth’s climate system is a hypothesis derived from first principles, which still must be tested. The first test, predicting the ratio of the planet’s emissions to surface emissions, got the right answer, but this is a simple test and, while questioning the method is to deny physical laws, surely some will question the coefficients that led to this result. While the coefficients aren’t constant, they do vary around a mean and it’s the mean value that’s relevant to the LTE sensitivity. A more powerful testable prediction is that of the planet’s emissions as a function of surface temperature. The LTE relationship predicted by equation 3a) is that if Po are the emissions of the planet and T is the surface temperature, the relationship between them is that of a gray body whose temperature is T and whose emissivity is ε’, which is calculated to be about 0.61. The results of this test will be presented a little later along with justification for the coefficients used for the first test.

Complex Coupling

In the context of equation 1), complex couplings are modeled as individual storage pools of E that exchange energy among themselves. We’re only concerned about the LTE sensitivity, so by definition, the net exchange of energy among all pools contributing to the temperature must be zero. Otherwise, parts of the system will either heat up or cool down without bound. LTE is defined when the average ∂E/∂t is zero, thus the rate of change for the sum of its components must also be zero.

Not all pools of E necessarily contribute to the surface temperature. For example, some amount of E is consumed by photosynthesis and more is consumed to perform the work of weather. If we quantify E as two pools, one storing the energy that contributes to the surface temperature Es, and the energy stored in all other pools as Eo, we can rewrite equations 1) and 2) as,

1) Pi = Po + ∂Es/∂t + ∂Eo/∂t

1a) ∂E/∂t = ∂Es/∂t + ∂Eo/∂t

2a) T = κ(Es – Eo)

If Eo is a small percentage of Es, an equivalent κ’ can be calculated such that κ’E = κ(Es – Eo) and the Physical Model is still representative of the system as a whole and the value of κ’ will not deviate much from its theoretical value. Measurements from the ISCCP data suggest an average of about 1.8 +/- 0.5 W/m2 of the 240 W/m2 of the average incident solar energy is not contributing to heating the planet nor must it be emitted for the planet to be in a thermodynamic steady state.

Thus far, GHG’s, clouds and the coupling between the surface, oceans and atmosphere can all be accommodated with the Physical Model by simply adjusting α, κ and ε. There can be no question that the Physical Model is capable of modeling the Earth’s climate and that per equation 6), the upper bound on the sensitivity is less than the 0.4C per W/m2 lower bound suggested by the IPCC. The rest of this discussion will address why the objections raised against this model are invalid, demonstrate tests whose results support predictions of the Physical Model and show other tests that falsify a high sensitivity.

Models

The results of climate models are frequently cited as supporting an ‘emergent’ high sensitivity; however, these models tend to include errors and assumptions that favor a high sensitivity. Many even dial in a presumed sensitivity indirectly. The underlying issue is that the GCM’s used for climate modeling have a very large number of coefficients whose values are unknown, so they are set based on ‘educated’ guesses and it’s this that leads to bias as objectivity is replaced with subjectivity.

In order to match the past, simulated annealing like algorithms are applied to vary these coefficients around their expected mean until the past is best matched. If there are any errors in the presumed mean values or any fundamental algorithmic flaws, the effects of these errors accumulate, making predictions of both the future and the more distant past worse. This modeling failure is clearly demonstrated by the physics defying predictions so commonly made by these models.

Consider a sine wave with a gradually increasing period. If the model used to represent it is a fixed period sine wave and the period of the model is matched to the average period of a few observed cycles, the model will deviate from what’s being modeled both before and after the range over which the model was calibrated. If the measurements span less than a full period, both a long period sine wave and a linear trend can fit the data, but when looking for a linear trend, the long period sine wave becomes invisible. Consider seasonal variability, which is nearly perfectly sinusoidal. If you measure the average linear trend from June to July and extrapolate, the model will definitely fail in the past and the future and the further out in time you go, the worse it will get. Notice that only sinusoidal and exponential functions of E work as solutions for equation 1), since only sinusoids and exponentials have a derivative whose form is the same as itself, given that Po is a function of E. Note that the theoretical and actual variability in Pi can be expressed as the sum of sinusoids and exponentials and that this leads to the linear property of superposition when behavior is modeled in the energy in, energy out domain, rather than in the energy in, temperature out domain preferred by the IPCC.
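The seasonal example can be made concrete with a short Python sketch that fits a straight line to roughly two months of a sinusoid and then extrapolates. The unit amplitude signal, the 365.25 day period, the 61 day fitting window and the extrapolation days are all illustrative assumptions rather than measured data.

```python
import math

PERIOD_DAYS = 365.25  # assumed length of the seasonal cycle


def seasonal_signal(day):
    """Idealized, unit amplitude seasonal cycle (an assumption for illustration)."""
    return math.sin(2.0 * math.pi * day / PERIOD_DAYS)


def fit_line(xs, ys):
    """Ordinary least squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Calibrate on a 61 day window where the signal is rising.
fit_days = list(range(0, 61))
slope, intercept = fit_line(fit_days, [seasonal_signal(d) for d in fit_days])

# Extrapolate progressively further beyond the calibration window.
errors = []
for day in (90, 180, 365):
    predicted = slope * day + intercept
    actual = seasonal_signal(day)
    errors.append(abs(predicted - actual))
    print(f"day {day:3d}: linear trend {predicted:6.2f}, actual {actual:6.2f}")
```

Within the calibration window the line tracks the signal reasonably well; the mismatch grows steadily as the extrapolation moves further from that window.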

The way to make GCM’s more accurate is to ensure that the macroscopic behavior of the system being modeled conforms to the constraints of the Physical Model. Clearly this is not being done; otherwise, the modeled sensitivity would be closer to 0.22C per W/m2 and nowhere near the 0.8C per W/m2 presumed by the consensus and supported by the erroneous models.

Non Radiant Energy

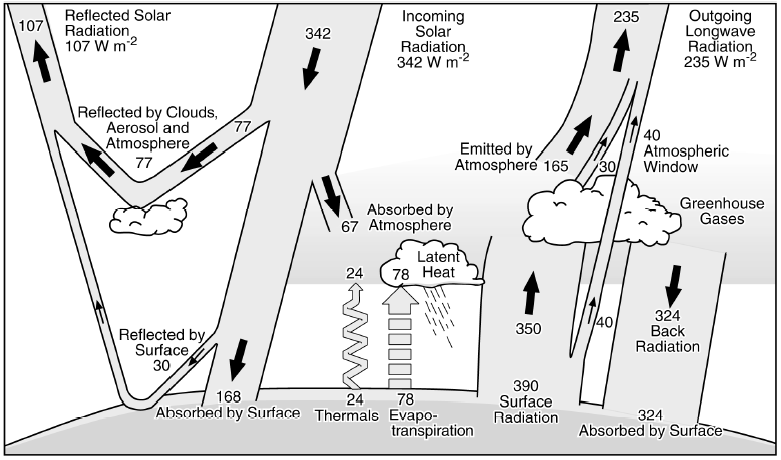

Adding non radiant energy transports to the mix adds yet another level of obfuscation. This arises from Trenberth’s energy balance which includes latent heat and thermals transporting energy into the atmosphere along with the 390 W/m2 of radiant energy arising from an ideal black body surface at 288K. Trenberth returns the non radiant energy to the surface as part of the ‘back radiation’ term, but its inclusion gets in the way of understanding how the energy balance relates to the sensitivity, especially since most of the return of this energy is not in the form of radiation, but in the form of air and water returning that energy back to the surface.

The reason is that neither latent heat, thermals nor any other energy transported by matter into the atmosphere has any effect on the surface temperature, input flux or emissions of the planet beyond the effect it is already having on these variables, and whatever effects they have are bundled into the equivalent values of α, κ and ε. The controversy is about the sensitivity, which is the relationship between changes in Pi and changes in T. The Physical Model ascribed with equivalent values of α, κ and ε dictates exactly what the sensitivity must be. Since Pi, Po and T are all measurable values, it is very testable, with very repeatable results, that the net results of these non radiative transports are already accounted for by the relative relationships of measurable variables and that these relationships conform to the Physical Model.

Chaos and Non Linearities

Chaos and non linearities are a common complication used to dismiss the requirement that the macroscopic climate system behavior must obey the macroscopic laws of physics. Chaos is primarily an attribute of the path the climate system takes from one equilibrium state to another and is also called weather, which of course, is not the climate. Relative to the LTE response of the system and its corresponding LTE sensitivity, chaos averages out since the new equilibrium state itself is invariant and driven by the incident energy and its conservation. Even quasi-stable states like those associated with ENSO cycles and other natural variability average out relative to the LTE state.

Chaos may result in over shooting the desired equilibrium, in which case it will eventually migrate back to where it wants to be, but what’s more likely, is that the system never reaches its new steady state equilibrium because some factor will change what that new steady state will be. Consider seasonal variability, where the days start getting shorter or longer before the surface reaches the maximum or minimum temperature it could achieve if the day length was consistently long or short.

Non linearities are another of these red herrings and the most significant non linearity in the system as modeled by the IPCC is the relationship between emissions and temperature. By keeping the analysis in the energy domain and converting to equivalent temperatures at the end, the non linearities all but disappear.

Feedback

Large positive feedback is used to justify how 1 W/m2 of forcing can be amplified into the 4.3 W/m2 of surface emissions required in order to sustain a surface temperature 0.8C higher than the current average of 288K. This is ridiculous considering that the 240 W/m2 of accumulated forcing (Pi) currently results in 390 W/m2 of radiant emissions from the surface (Ps) and that each W/m2 of input results in only 1.6 W/m2 of surface emissions. This means that the last W/m2 of forcing from the Sun resulted in about 1.6 W/m2 of surface emissions, the idea that the next one would result in 4.3 W/m2 is so absurd it defies all possible logic. This represents such an obviously fatal flaw in consensus climate science that either the claimed sensitivity was never subject to peer review or the veracity of climate science peer review is nil, either of which deprecates the entire body of climate science publishing.
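The arithmetic in this paragraph can be checked with a few lines of Python. This is only a sketch: it assumes an ideal black body surface (emissivity of 1) and the standard value of the Stefan-Boltzmann constant, and the function and variable names are illustrative rather than taken from the original analysis.

```python
# Stefan-Boltzmann constant in W/m^2/K^4.
SIGMA = 5.670374419e-8


def surface_emissions(temp_k: float) -> float:
    """Emissions of an ideal black body surface (assumed emissivity of 1)."""
    return SIGMA * temp_k ** 4


# Average gain: surface emissions per W/m^2 of accumulated solar forcing.
P_I = 240.0                     # W/m^2, accumulated forcing quoted in the text
P_S = surface_emissions(288.0)  # W/m^2, surface emissions at 288K
average_gain = P_S / P_I

# Extra surface emissions needed to sustain a 0.8C rise above 288K.
extra_emissions = surface_emissions(288.8) - P_S

print(f"Ps at 288K: {P_S:.1f} W/m^2")
print(f"average gain: {average_gain:.2f}")
print(f"emissions increase for +0.8C: {extra_emissions:.2f} W/m^2")
```

The two printed ratios are the average gain (about 1.6) and the incremental gain implied by a 0.8C per W/m2 sensitivity (about 4.3) that the paragraph compares.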

The feedback related errors were first made by Hansen, reinforced by Schlesinger and have been cast in stone since AR1 and more recently, they’ve been echoed by Roe. Bode developed an analysis technique for linear feedback amplifiers and this analysis was improperly applied to quantify climate system feedback. Bode’s model has two non negotiable preconditions that were not met by the application of his analysis to the climate. These are specified in the first couple of paragraphs in the book referenced by both Hansen and Schlesinger as the theoretical foundation for climate feedback. First is the assumption of strict linearity. This means that if the input changes by 1 and the output changes by 2, then, if the input changes by 2, the output must change by 4. By using a delta Pi as the input to the model and a delta T as the output, this linearity constraint was violated since power and temperature are not linearly related, but power is related to T^4. Second is the requirement for an implicit source of Joules to power the gain. This can’t be the Sun, as solar energy is already accounted for as the forcing input to the model and you can’t count it twice.

To grasp the implications of nonlinearity, consider an audio amplifier with a gain of 100. If 1 V goes in and 100 V comes out before the amplifier starts to clip, increasing the input to 2V will not change the output value and the gain, which was 100 for inputs from 0V to 1V is reduced to 50 at 2V of input. Bode’s analysis requires the gain, which climate science calls the sensitivity, to be constant and independent of the input forcing. Once an amplifier goes non linear and starts to clip, Bode’s analysis no longer applies.
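The clipping example can be written out as a short sketch. The hard clip at 100 V and the function names are assumptions made for illustration; a real amplifier saturates more gradually.

```python
def clipped_amplifier(v_in: float, gain: float = 100.0, v_clip: float = 100.0) -> float:
    """Ideal amplifier whose output is hard limited to +/- v_clip volts (assumed model)."""
    v_out = gain * v_in
    return max(-v_clip, min(v_clip, v_out))


def effective_gain(v_in: float) -> float:
    """Output divided by input, which is what Bode's analysis requires to be constant."""
    return clipped_amplifier(v_in) / v_in


for v_in in (0.5, 1.0, 2.0):
    print(f"{v_in:.1f} V in -> {clipped_amplifier(v_in):.0f} V out, gain {effective_gain(v_in):.0f}")
```

Below the clipping point the gain stays at 100, while at 2 V of input the output is stuck at 100 V and the effective gain falls to 50.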

Bode defines forcing as the stimulus and defines sensitivity as the change in the dimensionless gain consequential to the change in some other parameter, which is also a dimensionless ratio. What climate science calls forcing is an over generalization of the concept and what they call sensitivity is actually the incremental gain. Moreover, they’ve voided the ability to use Bode’s analysis by choosing a non linear metric of gain. For the linear systems modeled by Bode, the incremental gain is always equal to the absolute gain as this is the basic requirement that defines linearity. The consensus makes the false claim that the incremental gain can be many times larger than the absolute gain, which is a non sequitur relative to the analysis used. Furthermore, given the T^-3 dependence of the sensitivity on the temperature, the sensitivity quantified as a temperature change per W/m2 of forcing must decrease as T increases, while the consensus quantification of the sensitivity requires the exact opposite.
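The temperature dependence mentioned here follows from differentiating the Stefan-Boltzmann Law, P = εσT^4, which gives dT/dP = 1/(4εσT^3). The sketch below evaluates that slope at a few temperatures; the function name and the default emissivity of 1 are assumptions for illustration.

```python
# Stefan-Boltzmann constant in W/m^2/K^4.
SIGMA = 5.670374419e-8


def sensitivity(temp_k: float, emissivity: float = 1.0) -> float:
    """dT/dP in C per W/m^2 for an emitter obeying P = emissivity * SIGMA * T^4."""
    return 1.0 / (4.0 * emissivity * SIGMA * temp_k ** 3)


for temp_k in (255.0, 288.0, 300.0):
    print(f"T = {temp_k:.0f}K -> {sensitivity(temp_k):.3f} C per W/m^2")
```

Because the slope scales as T^-3, the printed value shrinks as the temperature rises.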

At the measured value of 1.6 W/m2 of surface emissions per W/m2 of accumulated solar forcing, the extra 0.6 W/m2 above and beyond the initial W/m2 of forcing is all that can be attributed to what climate science refers to as feedback. The hypothesis of a high sensitivity requires 3.3 W/m2 of feedback to arise from only 1 W/m2 of forcing. This is 330% of the forcing and any system whose positive feedback exceeds 100% of the input will be unconditionally unstable. The climate system is certainly stable and always recovers after catastrophic natural events that can do far more damage to the Earth and its ecosystems than man could ever do in millions of years of trying. Even the lower limit claimed by the IPCC of 0.4C per W/m2 requires more than 100% positive feedback, falsifying the entire range they assert.
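The feedback accounting used in this paragraph (surface emissions gained per W/m2 of forcing, minus the 1 W/m2 of forcing itself) can be sketched as follows. The code assumes an ideal black body surface at 288K, and the function names are hypothetical.

```python
# Stefan-Boltzmann constant in W/m^2/K^4.
SIGMA = 5.670374419e-8
T_SURFACE = 288.0  # K, average surface temperature quoted in the text


def emissions_increase(sensitivity_c_per_w: float, temp_k: float = T_SURFACE) -> float:
    """Increase in black body surface emissions caused by 1 W/m^2 of forcing."""
    warmed = temp_k + sensitivity_c_per_w  # temperature after 1 W/m^2 of forcing
    return SIGMA * (warmed ** 4 - temp_k ** 4)


def feedback_fraction(sensitivity_c_per_w: float) -> float:
    """Emissions increase beyond the forcing itself, as a fraction of the forcing."""
    return emissions_increase(sensitivity_c_per_w) - 1.0


measured_feedback = 1.6 - 1.0  # from the measured 1.6 W/m^2 per W/m^2 of forcing

print(f"measured: {measured_feedback:.0%}")
print(f"at 0.8C per W/m^2: {feedback_fraction(0.8):.0%}")
print(f"at 0.4C per W/m^2: {feedback_fraction(0.4):.0%}")
```

Under this accounting both the nominal 0.8C and the lower 0.4C per W/m2 come out above 100%, which is the comparison the paragraph draws.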

An irony is that consensus climate science relies on an oversimplified feedback model that makes explicit assumptions that don’t apply to the climate system in order to support the hypothesis of a high sensitivity arising from large positive feedback, yet their biggest complaint about the applicability of the Physical Model is that the climate is too complicated to be represented with such a simple and undeniably deterministic model.

Venus

Venus is something else that climate alarmists like to bring up. However, if you consider Venus in the context of the Physical Model, the proper surface in direct equilibrium with the Sun is not the solid surface of the planet, but a virtual surface high up in its clouds. Unlike Earth, where the lapse rate is negative from the surface in equilibrium with the Sun and up into the atmosphere, the Venusian lapse rate is positive from its surface in equilibrium with the Sun down to the solid surface below. Even if the Venusian atmosphere was 90 ATM of N2, the surface would still be about as hot as it is now.

Venus is a case of runaway clouds and not runaway GHG’s as often claimed. The thermodynamics of Earth’s clouds are tightly coupled to that of its surface through evaporation and precipitation, thus cloud temperatures are a direct function of the surface temperature below and not the Sun. While the water in clouds does absorb some solar energy, owing to the tight coupling between clouds and the oceans, the LTE effect is the same as if the oceans had absorbed that energy directly. This isn’t the case for Venus, where the thermodynamics of its clouds are independent from that of its surface enabling clouds to arrive at a steady state with incoming energy by themselves.

Even for Earth, the surface in direct equilibrium with the Sun is not the solid surface, as it is for the Moon, but is a virtual surface comprised of the top of the oceans and the bits of land that poke through. Most of the solid surface is beneath the oceans and its nearly 0C temperature is a function of the temperature/density profile of the ocean above. The dense CO2 atmosphere of Venus, whose mass is comparable to the mass of Earth’s oceans, acts more like Earth’s oceans than it does Earth’s atmosphere thus Venusian cloud tops above a CO2 ocean is a good analogy for the surface of Earth and will be at about the same average temperature and atmospheric pressure.

Testing Predictions

The Physical Model makes predictions about how Pi, Po and the surface temperature will behave relative to each other. The first test was a prediction of the ratio between surface emissions and planet emissions based on measurable physical parameters and this calculation was nearly exact. The values of αc, αs, ρ, and εc in equations 14) and 16) were extracted as the average values reported or derived from the ISCCP cloud data set provided by GISS while εs arose from line by line simulations.

Figures 1, 2, 3 and 4 illustrate the origins of αc, αs, ρ, and εc, where the dotted line in each plot represents the measured LTE average value for that parameter. Those values were rounded to 2 significant digits for the purpose of checking the predictions of equations 14) and 16).

The absolute accuracy of ISCCP surface temperatures suffers from a 2001 change to a new generation of polar orbiters combined with discontinuous polar orbiter coverage which the algorithms depended on for consistent cross satellite calibration. This can be seen more dramatically in Figure 5, which is a plot of the global monthly average surface temperature derived from the gridded temperatures reported in the ISCCP. While this makes the data useless for establishing trends, it doesn’t materially affect the use of this data for establishing the average coefficients related to the sensitivity.

Figure 5 demonstrates something even more interesting, which is that the two hemispheres don’t exactly cancel and the peak to peak variability in the global monthly average is about 5C. The Northern hemisphere has significantly more seasonal p-p temperature variability than the Southern hemisphere owing to a larger fraction of land, resulting in a global sum whose minimum and maximum are 180 degrees out of phase with what you would expect from the seasonal position of perihelion. To the extent that the consensus assumes the effects of perihelion average out across the planet, the 5C p-p seasonal variability in the planet’s average temperature represents the minimum amount of natural variability to expect given the same amount of incident energy. In about 10K years when perihelion is aligned with the Northern hemisphere summer, the p-p differences between hemispheres will become much larger, which is a likely trigger for the next ice age. The asymmetric response of the hemispheres is something that consensus climate science has not wrapped its collective heads around, largely because the anomaly analysis they depend on smooths out seasonal variability, obfuscating the importance of understanding how and why this variability arises, how quickly the planet responds to seasonal forcing and how the asymmetry contributes to the ebb and flow of ice ages.

While Pi is trivially calculated as reflectance applied to solar energy, both of which are relatively accurately known, Po is trickier to arrive at. Satellites only measure LWIR emissions in 1 or 2 narrow bands in the transparent regions of the emission spectrum and in an even narrower band whose magnitude indicates how much water vapor absorption is taking place. These narrow band emissions are converted to a surface temperature by applying a radiative model to a varying temperature until the emissions leaving the radiative model in the bands measured by the satellite are matched and then the results are aligned to surface measurements. Equation 15) was used to calculate Po, which was based on reported surface temperatures, cloud temperatures and cloud emissivity applied to a reverse engineered radiative model to determine how much power leaves the top of the atmosphere across all bands. This is done for both cloudy and clear skies across each equal area grid cell and the total emissions are a sum weighted by the fraction of clouds modified by the cloud emissivity. To cross check this calculation, ∂E(t)/∂t can be calculated as the difference between Pi and the derived Po. If the long term average of this is close to zero, then COE is not violated by the calculated Po. Figure 6 shows this and indeed, the average ∂E(t)/∂t is approximately zero within the accuracy of the data. The 1.8 W/m2 difference could be a small data error, but seems to be the solar power that’s not actually heating the surface but powering photosynthesis and driving the weather and that need not be emitted for balance to arise. Note that ∂E/∂t per hemisphere is about 200 W/m^2 p-p and that the ratio between the global ∂E/∂t and the global ∂T/∂t implies a transient sensitivity of only about 0.12 C per W/m^2.

Figure 7 shows another way to validate the predictions as a scatter plot of the relative relationship between monthly averages of Pi and Po for constant latitude. Each little dot is the average for 1 month of data and the larger dots are the per slice averages across 3 decades of measurements. The magenta line represents Pi == Po. Where the two curves intersect defines the steady state which at 239 W/m2 is well within the margin of error of the accepted value. Note that the tilt in the measured relationships represents the net transfer of energy from tropical latitudes on the right to polar latitudes on the left.

The next test is of the prediction that the relationship between the average temperature of the surface and the planet’s emissions should correspond to a gray body emitter whose equivalent emissivity is about 0.61, which was the predicted and measured ratio between the planet’s emissions and that of the surface.

Figure 8 shows the relationship between the surface temperature and both Pi and Po, again for constant latitude slices of the planet. Constant latitude slices provide visibility to the sensitivity as the most significant difference between adjacent slices is Pi, where a change in Pi is forcing per the IPCC definition. The change in the surface temperature of adjacent slices divided by the change in Pi quantifies the sensitivity of that slice per the IPCC definition. The slope of the measured relationship around the steady state is the short line shown in green. The larger green line is a curve of the Stefan-Boltzmann Law predicting the complete relationship between the temperature and emissions based on the measured and calculated equivalent emissivity of 0.61. The monthly average relationship between Po and the surface temperature is measured to be almost exactly what was predicted by the Physical Model. The magenta line is the prediction of the relationship between Pi and the surface temperature based on the requirement that the surface is approximately an ideal black body emitter and again, the prediction is matched by the data almost exactly.
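As a rough numerical illustration of the gray body relationship described above, the following sketch applies the Stefan-Boltzmann Law with the equivalent emissivity of 0.61. It is not the code behind Figure 8; the function names are hypothetical and the only inputs are the emissivity and temperatures quoted in the text.

```python
# Stefan-Boltzmann constant in W/m^2/K^4.
SIGMA = 5.670374419e-8
EPSILON = 0.61  # equivalent emissivity quoted in the text


def planet_emissions(surface_temp_k: float) -> float:
    """Planet emissions Po for a gray body with the equivalent emissivity."""
    return EPSILON * SIGMA * surface_temp_k ** 4


def surface_temperature(p_o: float) -> float:
    """Invert the gray body relationship to get the surface temperature from Po."""
    return (p_o / (EPSILON * SIGMA)) ** 0.25


p_o = planet_emissions(288.0)
# Local slope near the steady state: change in T for 1 W/m^2 more emissions.
slope = surface_temperature(240.0) - surface_temperature(239.0)

print(f"Po at 288K: {p_o:.0f} W/m^2")
print(f"T at Po = 239 W/m^2: {surface_temperature(239.0):.1f}K")
print(f"slope near steady state: {slope:.2f} C per W/m^2")
```

With these inputs a 288K surface corresponds to roughly 238 W/m2 of planet emissions, close to the 239 W/m2 steady state read from Figure 7.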

For reference, Figure 9 shows how little the effective emissivity, ε’ varies on a monthly basis with a max deviation from nominal of only about +/- 3%. Figure 10 shows how the fraction of the power absorbed by the atmosphere and returned to the surface also varies in a relatively small range around 0.51. In fact, the monthly averages for all of the coefficients used to calculate the sensitivity with equation 16) vary over relatively narrow ranges.

The hypothesized high sensitivity also makes predictions. The stated nominal sensitivity is 0.8C per W/m2 of forcing and if the surface temperature increases by 0.8C from 288K to 288.8K, 390.1 W/m2 of surface emissions increases to 394.4 W/m2 for a 4.3 W/m2 increase that must arise from only 1 W/m2 of forcing. Since the data shows that 1 W/m2 of forcing from the Sun increases the surface emissions by only 1 W/m2, the extra 3.3 W/m2 required by the consensus has no identifiable origin thus falsifies the possibility of a sensitivity as high as claimed. The only possible origin is the presumed internal power supply that Hansen and Schlesinger incorrectly introduced to the quantification of climate feedback.

Joules are Joules and are interchangeable with each other. If the next W/m2 of forcing will increase the surface emissions by 4.3 W/m2, each of the accumulated 239 W/m2 of solar forcing must be increasing the surface emissions by the same amount. If the claimed sensitivity was true, the surface would be emitting 1028 W/m2, which corresponds to an average surface temperature of 367K, which is about 94C and close to the boiling point of water. Clearly it’s not, once again falsifying a high sensitivity.
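The numbers in this paragraph can be reproduced with a short calculation. The sketch assumes an ideal black body surface and simply applies the 4.3 W/m2 gain to every W/m2 of the 239 W/m2 of forcing, as the paragraph does; the variable names are illustrative.

```python
# Stefan-Boltzmann constant in W/m^2/K^4.
SIGMA = 5.670374419e-8

FORCING = 239.0    # W/m^2, accumulated solar forcing
CLAIMED_GAIN = 4.3 # W/m^2 of surface emissions per W/m^2 of forcing

# Surface emissions if every W/m^2 of forcing were amplified by the claimed gain.
implied_emissions = FORCING * CLAIMED_GAIN

# Black body temperature that would be needed to emit that much power.
implied_temp_k = (implied_emissions / SIGMA) ** 0.25
implied_temp_c = implied_temp_k - 273.15

print(f"implied surface emissions: {implied_emissions:.0f} W/m^2")
print(f"implied surface temperature: {implied_temp_k:.0f}K ({implied_temp_c:.0f}C)")
```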

Conclusion

Each of the many complexities cited to defuse a simple analysis based on the immutable laws of physics has been shown to be equivalent to variability in the α, κ and ε coefficients quantifying the Physical Model. Another complaint is that the many complexities interact with each other. To the extent they do and each by itself is equivalent to changes in α, κ and ε, any interactions can be similarly represented as equivalent changes to α, κ and ε. It’s equally important to remember that unlike GCMs, this model has no degrees of freedom to tweak its behavior, other than the values of α, κ and ε, all of which can be measured, and that no possible combination of coefficients within factors of 2 of the measured values will result in a sensitivity anywhere close to what’s claimed by the consensus. The only possible way for any Physical Model to support the high sensitivity claimed by the IPCC is to violate Conservation Of Energy and/or the Stefan-Boltzmann Law, which is clearly impossible.

Predictions made by the Physical Model have been confirmed with repeatable measurements while the predictions arising from a high sensitivity consistently fail. In any other field of science, this is unambiguous proof that the model whose predictions are consistently confirmed is far closer to reality than a model whose predictions consistently fail, yet the ‘consensus’ only accepts the failing model. This is because the IPCC, which has become the arbiter of what is and what is not climate science, needs the broken model to supply its moral grounds for a massive redistribution of wealth under the guise of climate reparations. It’s an insult to all of science that the scientific method has been superseded by a demonstrably false narrative used to support an otherwise unsupportable agenda and this must not be allowed to continue.

Here’s a challenge to those who still accept the flawed science supporting the IPCC’s transparently repressive agenda. First, make a good faith effort to understand how the Physical Model is relevant, rather than just dismiss it out of hand. If you need more convincing after that, try to derive the sensitivity claimed by the IPCC using nothing but the laws of physics. Alternatively, try to falsify any prediction made by the Physical Model, again, relying only on the settled laws of physics. Another thing to try is to come up with a better explanation for the data, especially the measured relationships between Pi, Po and the surface temperature, all of which are repeatably deterministic and conform to the Physical Model. If you have access to a GCM, see if its outputs conform to the Physical Model and once you understand why they don’t, you will no doubt have uncovered serious errors in the GCM.

If the high sensitivity claimed by the IPCC can be falsified, it must be rejected. If the broadly testable Physical Model produces the measured results and can’t be falsified, it must be accepted. Falsifying a high sensitivity is definitive and unless and until something like the Physical Model is accepted by a new consensus, climate science will remain controversial since no amount of alarmist rhetoric can change the laws of physics or supplant the scientific method.

References

1) IPCC reports, definition of forcing, AR5, figure 8.1

AR5 Glossary, ‘climate sensitivity parameter’

2) Kevin E. Trenberth, John T. Fasullo, and Jeffrey Kiehl, 2009: Earth’s Global Energy Budget. Bull. Amer. Meteor. Soc., 90, 311–323.

3) Bode, H., Network Analysis and Feedback Amplifier Design

assumption of external power supply and active gain, 31 section 3.2

gain equation, 32 equation 3-3

real definition of sensitivity, 52-57 (sensitivity of gain to component drift)

3a) effects of consuming input power, 56, section 4.10

impedance assumptions, 66-71, section 5.2 – 5.6

a passive circuit is always stable, 108

definition of input (forcing) 31

4) Jouzel, J., et al. 2007: EPICA Dome C Ice Core 800KYr Deuterium Data and Temperature Estimates.

5) ISCCP Cloud Data Products: Rossow, W.B., and Schiffer, R.A., 1999: Advances in Understanding Clouds from ISCCP. Bull. Amer. Meteor. Soc., 80, 2261-2288.

6) Hansen, J., A. Lacis, D. Rind, G. Russell, P. Stone, I. Fung, R. Ruedy, and J. Lerner, 1984: Climate sensitivity: Analysis of feedback mechanisms. In Climate Processes and Climate Sensitivity, AGU Geophysical Monograph 29, Maurice Ewing Vol. 5. J.E. Hansen, and T. Takahashi, Eds. American Geophysical Union, 130-163.

7) M. E. Schlesinger (ed.), Physically-Based Modeling and Simulations of Climate and Climatic Change – Part II, 653-735

8) Michael E. Schlesinger. Physically-based Modelling and Simulation of Climate and Climatic Change (NATO Advanced Study Institute on Physical-Based Modelling ed.). Springer. p. 627. ISBN 90-277-2789-9

9) Gerard Roe. Feedbacks Timescales and Seeing Red, Annual Review of Earth Planet Science 2009, 37:93-115

10) Stefan, J. (1879), “Über die Beziehung zwischen der Wärmestrahlung und der Temperatur” [On the relationship between heat radiation and temperature] (PDF), 79: 391–428

11) Boltzmann, L. (1884), “Ableitung des Stefan’schen Gesetzes, betreffend die Abhängigkeit der Wärmestrahlung von der Temperatur aus der electromagnetischen Lichttheorie” 258 (6): 291–294

Even 1% of positive feedback will render a system unstable if that is truly the total feedback of the system and not just one of many.

The problem is that when consensus climatologists talk about positive f/b or even net +ve f/b , they don’t mean net +ve f/b they mean ” net +ve f/b ( except for the biggest feedback in the system, which is negative). ”

The Planck f/b dominates ALL other feedbacks and any positive feedbacks just make it a little less negative. Thus the system remains stable as we know it has to from the geological record.

So if climate modellers suggest that the water vapour f/b doubles the effect of CO2 forcing they are suggesting that WV is a +ve f/b which slightly counters the Planck f/b making the true net f/b less negative. This means that new equilibrium temp will be higher than without WV but still bounded by the strong and non linear Planck feedback.

CTM asked me back door last week whether this guest post should be published at WUWT. I recommended no, and gave general rather than specific reasons. CTM did commendably publish with his very good reasons (post publication peer review), forcing me to put my money where my mouth was.

Background clarification. I spent my college years basically learning how to build applied math models, in any course available inside or outside my economics concentration. For example, in a mathematical biology course, proved the equivalency of a Markov chain probability model (yup, learned from taking advanced probability theory in the math department that same semester) to the standard differential equation form of the classical predator prey equations. You know, rabbits multiply because few foxes. More rabbits leads to more foxes. Soon too many foxes eat most rabbits. Rabbit population crashes, then fox population crashes from starvation. Cycle repeats. In differential equations, mess with rabbit and fox reproduction rates (dP/dT) produces different cycle timings. Same in equivalently formulated Markov chain probability distributions even without applying Bayes theorem. So think am competent to comment on this apparently technical mathematical guest post.

George Box, a famous statistician, said ‘all models are wrong but some are useful’. The question to be addressed is whether the Physical model presented in this guest post is useful. The short answer is, for the Moon yes but for the Earth no. This comment aspires to prove that conclusion without undue mathematical baggage. Apologies if it is longer than some of my previous WUWT guest posts. Have not had the comment time to make a longish thought simple and short.

In any mathematical model, there are two fundamental sources of error (assuming the math itself is not goofed up, and in this guest post after several hours of study it isn’t): 1. faulty assumptions behind an equation derivation; 2. erroneous equation inputs. This comment will provide examples of both, pointing to specific guest post text. It will also highlight some of the graphical ‘proofs’ that actually cannot be. If wrong, I welcome specific factually detailed corrections by the guest poster or any others. This is not intended to be an exhaustive critique; it suffices as illustrative only.

Basics

To understand this guest post, I had some initial difficulty translating from conventional climate sensitivity (ECS, effective or equilibrium climate sensitivity to a doubling of CO2—varying only in longish time frames) in degrees C per doubling of CO2 (AR4=3, CMIP5 median=3.2, observational energy budget models [e.g. Lewis and Curry 2014] ~ 1.65) to the guest post framework of lambda per W/m^2. Here is that decoder ring.

An alternative way to define ECS is ΔT=λΔF. The canonical IPCC consensus λ=0.8 (for F in W/m2)=3C/CO2 doubling. The post figure 8 (more below) ‘derives’ a max λ of 0.39 and a min λ of 0.19 compared to the Moon at a λ of 0.22. Reasonable?

ΔF is without argument (post figure 7 label) =5.35*ln(C1/C0) W/m2, which for any standard doubling (the IPCC definition of ECS) is 5.35*ln(2)=3.7 W/m2.
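The decoder ring above amounts to two one-line calculations, sketched here with the 5.35 coefficient from the forcing expression and the consensus λ of 0.8. The function name is hypothetical.

```python
import math


def co2_forcing(c1: float, c0: float) -> float:
    """Forcing in W/m^2 from a CO2 change, using the 5.35*ln(C1/C0) expression."""
    return 5.35 * math.log(c1 / c0)


doubling_forcing = co2_forcing(2.0, 1.0)  # any doubling gives the same value
consensus_lambda = 0.8                    # C per W/m^2
ecs = consensus_lambda * doubling_forcing # C per doubling of CO2

print(f"forcing per doubling: {doubling_forcing:.1f} W/m^2")
print(f"ECS at lambda = 0.8: {ecs:.1f} C per doubling")
```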

The Moon

I can find no fault in the post that derives the Moon equations from basic physics (through equation 6). I do not doubt that the moon sensitivity is λ~0.22.

The Earth

Well, unlike the Moon, the Earth has an atmosphere. Now I also have no doubt that if there were no oceans, and the atmosphere was just N2, O2, and Ar, it would be similar to the Moon. But it isn’t, because Earth has oceans covering 71% of the planet, therefore water vapor, therefore clouds, and even some CO2.

And this complexity is where the guest posted Physical model goes awry. It argues similarity. I shall point out crucial dis-similarities.

A first logic only example is the last paragraph before the section heading “Making it more complex”. The paragraph says that the water vapor positive feedback cannot be distinguished from the cloud/ice negative albedo feedback, so the water net effect is 18C rather than the canonical consensus 33C. This is silly. Water in clouds and ice is not in the vapor state; it is a liquid or a solid. And in the guest post, Albedo is separately treated. The paragraph is just nonsense.

A second logic plus math formulation error is in the Clouds section. It derives equation parameters for clear sky versus cloudy sky using ISCCP. Well and good, but wrong, since clouds are not created equal. All cirrus warms (cause ice is transparent to visible light but opaque to infrared). And the rest depend on cloud type, cloud altitude, and entrained condensed water (both optical density and inherent precipitation). No such ‘constant’ can be derived from general ISCCP data because it does not have that level of granularity. Check for yourselves.

In the complex coupling section, it is asserted that an analysis of ISCCP data says the amount of radiation reaching the surface only calculates 1.8 W/M2 of nonwarming insolation (e.g. biological energy forming processes). I have examined ISCCP carefully today, and can imagine no way this calculation can be made as asserted from the data publicly available. Some facts. Careful measurements over years of the Sulawesi national rain forest in Indonesia say ~1% of insolation is converted to biomass. That would be 2.4 W/M2 using the guest post’s figures. The loss is mainly leaf shadowing. The average for properly planted temperate crops during the growing season is 4-8% depending on crop. So divide by ~2 for temperate and you are >2% for cultured land. Oceans average >2% because in the euphotic (biologically active photosynthetic upper tens of meters), there is little to no shadowing. Simply too dilute phytoplankton. So the asserted low E0 which provides Physical model complex coupling equivalency to the Moon simply is not true observationally. How much of an error this wrong physical assumption introduces, dunno. Did not bother to follow its math consequences further.

‘Physical equation proofs’ in the charts.

We will highlight just 3.

Figure 3, cloud fraction ~0.66. Two problems, one mentioned above: all clouds are not created equal. Second, specifically relevant to the Physical model critique. Nowhere in the described Physical model is the cloud fraction derived. It is an input, not an output. Curve matching at a ridiculously illogical level.

Figure 7. I can understand what was done. The labeled resultant Po is 1.7 W/M2 versus the 5.35ln(2) input of 3.7W/M2. Well, that works out to an implicit λ=1.7/3.7= 0.46, which is well within the believable observational energy budget range of roughly ½ the IPCC ECS— but contradicts the guest post central thesis.

Figure 8. I cannot understand, let alone reproduce it as latitudinal slices from ISCCP. Code? The X axis is at best confusingly labeled, unless someone smarter than myself can enlighten. And, the apparently calculated from equation 6 (my assumption) max and min ECS still include the water vapor phase state error discussed above. Since I could not understand the X axis, did not bother to redo the math. The graphic is impressive on the surface, perhaps meaningless when fully deconstructed. Dunno, don’t care.

You’ve obviously spent a lot longer looking at it than I have. I don’t want to comment on most of what you say because I’ll need to think about it. Just a couple of points.

It is not unreasonable to consider both of these effects together since both are a consequence of adding water to a waterless model. The fact that water can be in different states does not seem particularly relevant. I don’t find the use of words like “silly” and “nonsense” persuasive.

With regard to your critique about clouds that “not all clouds are created equal”; every model must involve simplifying assumptions. What reason do you have to think that the particular simplifying assumption of treating clouds as an average over all species of cloud is invalid as a first approximation. The link between the fraction of each cloud type and climate is poorly understood. What more reasonable assumption could one make in the absence of a deeper understanding of clouds.

In the absence of a deeper understanding of clouds than one size fits all, the reasonable assumption is that if you don’t know the basics you will NOT get a useful model.

My basic reason for that opinion has two inputs. First, a series of papers suggesting net cloud feedback is neutral or slightly negative, as opposed to positive as Dessler falsely ‘showed’. Delineated in climate chapter of ebook The Arts of Truth, and again partly in essay Cloudy Clouds in ebook Blowing Smoke. Second, when Lindzen’s proven adaptive cirrus iris ( via Tstorms, BAMs 1991) is put into a climate model, sensitivity is almost halved. See Bjorn Stevens 2014. Double commented by Judith Curry and myself in back to back posts at the time at her Climate Etc. Read those both before returning here.

ristvan,

Let me address your points.

On the basics, the metric of forcing used by the IPCC is misleading owing to its highly non linear nature and the T^-3 dependence of the sensitivity on the temperature. A sensitivity quantified as W/m^2 of surface emissions per W/m^2 of forcing is linear and works over all temperatures found on any planet. W/m^2 of emissions are a valid way to equivalently express a temperature which also allows expressing the sensitivity (gain) as the dimensionless ratio used by Bode in which case the many errors mapping Bode to the climate become far more obvious. For example, the basic requirement of linearity is that the same sensitivity (gain) must apply uniformly to each of the 240 W/m^2 of total forcing and that the idea that the incremental gain is 3-4 times larger than the average gain is preposterous.

I stand by my assertion that you can’t separate the effects of water vapor from the effects of liquid and solid water. Focusing on only the water vapor distracts from the larger picture where water has more than just a GHG effect. To some extent, this is a bias introduced by the IPCC’s metric of forcing, which is a change in solar input AFTER reflection by albedo. If not for the influences of water, what causes the emissions of the planet to drop from about 303 W/m^2 (270K) without water or GHG’s to 240 W/m^2 (255K) with them? The point being that the ‘cooling’ is a negative-feedback-like effect consequential to water that is widely discounted in order to lend plausibility to the idea of massive amplification by water vapor feedback.

You are correct that clouds are not all equal, but when their properties are averaged, the averages do become representative of the whole. The reason is that all of the attributes in the model are related to energy and, since the climate system is very linear in the energy domain, superposition applies and averages are relevant. The ISCCP data reports the IR optical depth of clouds (a nonlinear property), which can be trivially converted into the cloud’s IR emissivity, which, as a property that acts linearly on energy, can be geometrically averaged, and the results are a meaningful proxy for the whole. This same analysis has been performed at a more detailed level and works even down to individual pixels, where the differences you are concerned about are differentiated based on ISCCP adjustments to the optical depth, so the averages already account for these differences. I originally developed this model to predict missing pixels in the DX data and it worked so well, it inspired me to turn it into a climate model. Determining the reflectivity of clouds from the D2 data was trickier owing to the differences between ice and water in clouds, but I also have the DX data, which I used to validate the cloud reflectivity I extracted from the D2 data. There are still some small deviations, but the average is correct and, relative to the LTE sensitivity, how averages change is all that matters.

The 1.8 W/m^2 average dE/dt is the sum of two larger 180 degree out of phase signals with an average p-p variability of about 190 W/m^2, so we are talking about 1% here and the data isn’t any better than that. The error in the 1.8 value is at least +/- 1.8 W/m^2. I should point out that I applied simulated-annealing-like algorithms to the coefficients to see if I could make this difference go away and I couldn’t, although it did get minimized to about 1.7 W/m^2.

Related to the cloud fraction, it can be computed from the other measured attributes, but it is itself a primary product of the ISCCP data. It’s not curve matched to anything, it’s a measured value, and given the other variables, there’s only one value that works. Calculating what it needs to be by orthogonal methods is far more difficult, although I have made significant progress along those lines.

You are not understanding figure 7. The magenta line is the line where Pi == Po. The data shows that 3.7 W/m^2 of Pi (forcing) increases Po by only 1.7 W/m^2, which corresponds to a surface emissions increase of 1/0.61 * 1.7 = 2.8 W/m^2 and a temperature increase from 288K to 288.5K. This indicates that doubling CO2 increases the temperature by 0.5K, which is a lower sensitivity than I predict from the equations. However, dPo/dPi, which is the slope of the relationship in figure 7, is distorted by energy transferred from the equator (on the right) to the poles (on the left), but can never exceed the average limits of the magenta line. The point here was to show how dPi/dt is less than dPo/dt and, while the equations assumed they were equal, the direction that they are unequal in only decreases the sensitivity.
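For anyone who wants to check that arithmetic, here is a minimal sketch. It assumes the surface radiates as an ideal black body at 288K and that the 0.61 ratio converts planet emissions into surface emissions; the variable and function names are just for illustration.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/m^2/K^4
EMISSIVITY = 0.61       # ratio of planet emissions to surface emissions (from the comment)
T0 = 288.0              # starting surface temperature in K (from the comment)
DELTA_PO = 1.7          # increase in planet emissions in W/m^2 (from the comment)


def surface_emissions(t_kelvin):
    """Black body surface emissions in W/m^2 at temperature t_kelvin."""
    return SIGMA * t_kelvin ** 4


def temperature_from_emissions(power):
    """Invert the Stefan-Boltzmann law for an ideal black body."""
    return (power / SIGMA) ** 0.25


delta_surface = DELTA_PO / EMISSIVITY
t1 = temperature_from_emissions(surface_emissions(T0) + delta_surface)
delta_t = t1 - T0

print(f"surface emissions increase: {delta_surface:.2f} W/m^2")
print(f"temperature increase: {delta_t:.2f} K")
```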

BTW, my central thesis is that the climate system must obey the laws of physics and I don’t see how this contradicts it.

Regarding figure 8. The X axis is power density in W/m^2 and the Y axis is the surface temperature. Both the relationships between Po (in yellow) and the surface temperature, T, and Pi (in red) and the surface temperature are plotted to the same scale as both Po and Pi are measured in W/m^2. BTW, when drawn to the same scale, where they intersect defines the steady state average and is where the theoretical Pi (green line) and theoretical Pi (magenta line) also intersect. The sensitivity per the IPCC definition is dT/dPi, which is about 0.19C per W/m^2, while the sensitivity along the output path of the planet is about 0.3C per W/m^2. I assert that the true sensitivity is somewhere between these two limits.

Yes. But I have already explained why I think you are wrong. Some specific examples. Clouds are not homogeneous, and the database you rely on provides no granularity. Your comment assumes inhomogeneity averages out. Now prove it.

E0 ‘annealing algorithms’. Post them for critique, cause I cannot figure out how that can be done from any ISCCP data. I posted observational counters. So show your biological ‘annealing algorithms’ from ISCCP for scrutiny.

As for figure 8, it contradicts your figure 7. You have not countered my simple interpretation of your own figure 7 labels. I just read them and converted the label arithmetic. Cannot misunderstand your own specific labeled values. Just is.

As for your central thesis that climate must obey only the laws of physics, let me point out again that Earth is a biologically active planet where the laws of physics are not exclusively determinative, unlike the Moon (or Venus). The laws of physics do not explain thick limestones or fossil fuel deposits or biologically sourced terpene, isoprene, and dimethyl sulfide aerosol cloud nucleators that influence cloud fraction and so albedo.

Finally, your very low ECS conclusions are refuted by all recent observational energy budget analyses of ECS. My comment cited my personal favorite paper amongst several with similar conclusions, Lewis and Curry 2014. Please credibly reconcile your physical model conclusions to those observational results.

Look, GE, we are actually both on the skeptical rather than warmunist side of this great controversy. But I seek rock solid, simple, incontrovertible arguments to use against warmunists. Equating grey dry atmosphereless Moon to blue water world atmospheric Earth does not pass that PR sniff test. And never will. Even if you were right, which I have shown in several different ways you likely are not.

Ristvan,

Why are you opposed to average cloud properties as being representative of the whole? Equivalent modelling is a very powerful concept where you can arrive at a simpler system that from its external behavior (in this case, Pi, Po and T) is indistinguishable from the more complex system manifesting the behavior being modelled. Since sensitivity as defined by the IPCC is dT/dPi, if we can quantify the relationships between Pi, Po and T, we can quantify the sensitivity and this is all that I’m doing.

Yes, there are many different kinds of clouds, which is why an average is useful. The ISCCP data does differentiate based on cloud type and the basic analysis works for any cloud type, so there’s no reason it wouldn’t work for an equivalent average. As I said, it works for individual pixels, but also works for constant latitude slices of pixels of any width up to complete hemispheres and the planet as a whole. It just works far too well as a predictor of the seasonal response to varying solar input.

The annealing processes I tried to get rid of the 1.8 W/m^2 were not used for any of the data I presented. But it was a rather simple approach of just varying the coefficients in an effort to minimize the difference.

How does fig 8 contradict fig 7? In fig 7, X and Y are W/m^2 and it plots the relative relationship between Pi (the energy arriving at the planet) and Po (the emissions leaving the planet, not the surface which is about 1/e times Po). Figure 8 plots both Pi and Po against the surface temperature and it’s the exact same Pi and Po values plotted against each other in fig 7.

As I have pointed out, many are confused by all the apparent complexity, but it’s like trying to understand an internal combustion engine from inside the combustion chamber. We exist inside the combustion chamber of the climate (the atmosphere) and this biases how we think about the climate system. Instead of trying to understand what is happening within the atmosphere, we should simply understand what happens at its two boundaries, one with space and another with the surface.

How would you suggest we modify the model to account for the tiny fraction missing from the Earth without GHG’s or water? Incrementally add 1 ppm at a time and at what point does the result stop conforming to SB and COE?

BTW, my sensitivity range of 0.2 to 0.3 C per W/m^2 is equivalent to 0.74 – 1.11 C for doubling CO2, with closer to 1.11 being more likely than 0.74, and this is only slightly less than the estimates in the papers you cite, which BTW still use a variety of likely suspect estimates of forcings and uptake from AR5.
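The conversion is a single multiplication, sketched here assuming the 3.7 W/m^2 per doubling figure used throughout this thread. The function name is only illustrative.

```python
FORCING_PER_DOUBLING = 3.7  # W/m^2, the doubling forcing quoted in this thread


def warming_per_doubling(sensitivity):
    """Convert a sensitivity in C per W/m^2 into C per CO2 doubling."""
    return sensitivity * FORCING_PER_DOUBLING


low = warming_per_doubling(0.2)
high = warming_per_doubling(0.3)
print(f"{low:.2f} C to {high:.2f} C per doubling")
```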

GE, a simple rather than detailed reasoned answer. Cause on all evidence I think you are wrong by a factor of ~2, and have already commented how and why. If you converged on observational ECS, well and good. You don’t. You extend your valid physics Moon model to Earth using unvalidatable assertions and assumptions about oceans, water vapor, and biology. Fail.

ristvan,

You haven’t offered a better alternative to explain the demonstrable fact that the relationship between the emissions of the planet and the surface temperature follows the SB LAW with an emissivity of about 0.61 and that the ratio between planet emissions and surface emissions is the same 0.61. This was my hypothesis (actually my hypothesis was that the Earth obeys the laws of physics) and the data only confirms it. Unless you can find data that contradicts this relationship and/or supports different physics, or can come up with a better explanation for the data, the reasons you think I could be wrong must be invalid, although I’ve already explained why I think they are invalid.

Relative to the scientific method, I’ve held up my part, which is to offer a testable hypothesis and a few tests that could falsify it, but instead support it. Find an experiment that falsifies my hypothesis and only then will you have sufficient grounds to claim my hypothesis is incorrect.

ristvan,

Here’s a question for you. Which of equations 1) through 4) do you believe is not representative of how the Earth climate responds to forcing provided the proper average coefficients are chosen. These are the only equations that define the model I assert describes how the macroscopic properties of the Earth’s climate system react to forcing. The other equations simply decompose the variables in equations 1) through 4) into more primitive constituents that I can measure in order to calculate the effective emissivity by means other than simply dividing planet emissions by surface emissions.

ΔF is without argument (post figure 7 label) =5.35*ln(C1/C0) W/m^2, which for any standard doubling (the IPCC definition of ECS) is 5.35*ln(2)=3.7 W/m^2.

I have an argument. In reproducing the original research study, I did not get the same formula but 3.12*ln(C1/C0).

Link: https://wattsupwiththat.com/2017/03/17/on-the-reproducibility-of-the-ipccs-climate-sensitivity/

Yes, that “without argument” statement raised a red flag with me, also. Even though I couldn’t calculate the figure for myself, Clive Best also performed the calculation, and HE got 5.6 watts per square meter for a doubling of CO2 from 300 to 600 ppm.

http://clivebest.com/blog/?p=4265

So there are at least three different calculations with three different results, showing there IS an argument. They’re all within a dex of about 0.26, though.
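To compare the three figures side by side, here is a rough sketch. It assumes both logarithmic formulas are evaluated for a 300 to 600 ppm doubling, takes the 5.6 W/m^2 value as quoted rather than recomputing it, and treats the spread in dex as the absolute log10 of the ratio between two values. That last definition is an assumption about what was meant.

```python
import math
from itertools import combinations


def log_forcing(coefficient, c1, c0):
    """Forcing in W/m^2 from a formula of the form coefficient * ln(C1/C0)."""
    return coefficient * math.log(c1 / c0)


# Doubling from 300 to 600 ppm; the third value is taken as quoted, not recomputed.
estimates = {
    "5.35*ln(C1/C0)": log_forcing(5.35, 600.0, 300.0),
    "3.12*ln(C1/C0)": log_forcing(3.12, 600.0, 300.0),
    "Clive Best (as quoted)": 5.6,
}

for name, value in estimates.items():
    print(f"{name}: {value:.2f} W/m^2")

# Pairwise spread, expressed as |log10(ratio)| (assumed meaning of 'dex').
for (name_a, value_a), (name_b, value_b) in combinations(estimates.items(), 2):
    spread = abs(math.log10(value_a / value_b))
    print(f"{name_a} vs {name_b}: {spread:.2f} dex")
```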

“The Moon

I can find no fault in the post that derives the Moon equations from basic physics (through equation 6). I do not doubt that the moon sensitivity is λ~0.22.

The Earth

Well, unlike the Moon, the Earth has an atmosphere. Now I also have no doubt that if there were no oceans, and the atmosphere was just N2, O2, and Ar, it would be similar to the Moon.” ristvan

“The 270K average temperature of the Moon would be the Earth’s average temperature if there were no GHG’s since this also means no liquid water, ice or clouds resulting in an Earth albedo of 0.12 just like the Moon.” GW

The mean at the equator of the moon is 220K. From dawn to dusk, it’s about 340K. The issue is that you can’t treat the moon like a superconductor (or just a big ball of copper) rather than a BB. Each square km is at the temperature required for emission to equal absorption, independent of the rest of the moon.

The mean of T on Earth should be higher if the mean of T^4 was the same as the moon just because of the lower spread of temperatures. No need for a GHE, just the atmosphere and oceans spread the heat around.
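A small numeric sketch of that point, assuming a two-point temperature distribution for simplicity. The 93K and 340K values echo figures quoted in this thread, while the 270K value and the helper names are purely illustrative.

```python
def mean(values):
    """Arithmetic mean of a list of numbers."""
    return sum(values) / len(values)


def effective_temperature(temps):
    """Temperature of a uniform body with the same mean T^4 as temps."""
    return mean([t ** 4 for t in temps]) ** 0.25


def pair_with_same_mean_t4(t_eff, t_low):
    """Return (t_low, t_high) whose mean T^4 equals t_eff^4."""
    t_high = (2.0 * t_eff ** 4 - t_low ** 4) ** 0.25
    return [t_low, t_high]


wide = [93.0, 340.0]                          # large spread, as on the moon
t_eff = effective_temperature(wide)
narrow = pair_with_same_mean_t4(t_eff, 270.0)  # small spread, same mean T^4

print(f"wide spread:   mean T = {mean(wide):.1f} K")
print(f"narrow spread: mean T = {mean(narrow):.1f} K")
print(f"both have effective temperature {t_eff:.1f} K")
```

Both distributions emit the same average power, but the one with the smaller spread has the higher mean temperature, and neither mean can exceed the effective temperature.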

Then there is the ignored rotation. The dark side of the moon cools to 93K while the Earth might cool to only 120K (temp for the first 12 hours of night on the moon) in the much shorter night but warm up just as quickly to 340K daytime mean if everything else was equal to the moon. That’s an average of 240K compared to the moon’s 220K.

What you should have done instead is to try and sort out the points of contention with George – directly or enlisting ctm’s diplomatic services – encouraging him to publish an improved version.

CTM definitely made the right decision to publish.

Whilst I am one of the critics of one of the fundamental assumptions, namely that one can make a useful comparison between the Moon and the Earth, which assumption I consider to be fundamentally misconceived, the post and the comments are very interesting.

One can learn a great deal from things which are not correct, or are partly correct, even if they only reveal a way of looking at a problem from a different angle.

It would have been quite wrong for this article not to have been circulated to a wider audience just because ristvan has issues with it.