By Andy May

Frank Stefani, of the Helmholtz-Zentrum Dresden-Rossendorf, Institute of Fluid Dynamics, has published a very interesting new paper that compares the solar “aa” index and CO2 emissions to global SST (sea surface temperatures using the HadSST4.2 dataset) and finds a CO2 sensitivity (TCR or the “Transient Climate Response”) of 1.1 to 1.4K. This is at the low end of the IPCC TCR range of 1.2 to 2.4K (IPCC, 2021, p. 93), but quite close to the values calculated by Lewis and Curry and Nicola Scafetta (Lewis & Curry, 2018), (Scafetta, 2023), and (Lewis, 2023). Scafetta found a plausible range of TCR (versus HadSST4.2) of 1.0K to 1.2K and Lewis & Curry report a range of 0.9K to 1.7K for TCR versus HadCRUT4. The estimates are compared in Table 1.

Table 1. Estimates of TCR, or Transient Climate Response to a doubling of CO2. Sources: (Stefani, 2026), (Lewis & Curry, 2018), (Scafetta, 2023), and (IPCC, 2021, p. 93).

TCR Estimates

| Author | Best Estimate (K) | Range: Low End (K) | Range: High End (K) | Note |
| --- | --- | --- | --- | --- |
| Stefani | 1.26 | 1.1 | 1.4 | 2026 |
| Lewis & Curry | 1.2 | 0.9 | 1.7 | 2018 |
| Scafetta | 1.1 | 1.0 | 1.2 | 2023 |
| IPCC AR6 | 1.8 | 1.2 | 2.4 | p. 93 |

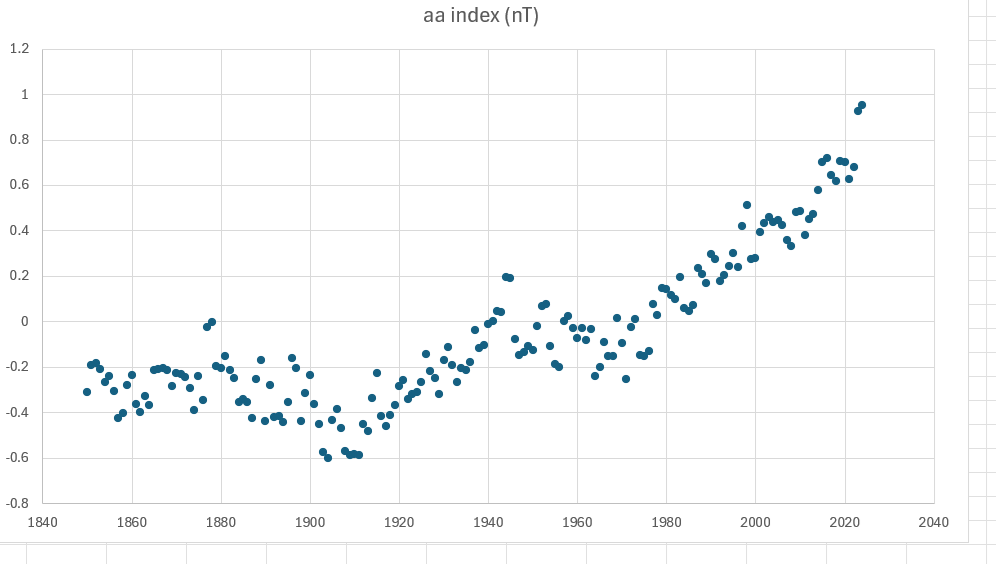

All the estimates in Table 1 are based on regression models of varying complexity and all attempt to account for the influence of solar variability. The Lewis and Curry estimate does not incorporate a solar activity proxy directly but does use the Atlantic Multidecadal Oscillation (AMO) as an indicator of natural climate variability, which is, in large part, solar variability. Stefani uses the aa index of geomagnetic activity, essentially how disturbed Earth’s magnetic field is by the sun. It is measured in nanoteslas (nT).

Nearly every observable form of solar variability is a magnetic phenomenon at its core, including sunspots, flares, and solar wind variability. Thus, the aa index is a good indicator of changes in the sun’s state and output. The aa index as a measure of solar-geomagnetic coupling has been measured consistently since 1868.

Table 1 shows that Stefani’s aa index + CO2 model compares well to Lewis and Curry’s AMO + CO2 model and Scafetta’s solar proxies + CO2 model. Scafetta uses three estimates of total solar irradiance (TSI) in his study, although he acknowledges that variations in TSI may be only ~20% of the sun’s total influence on Earth’s climate. None of these observation-based models support the high-end IPCC TCR estimate of 2.4K per doubling of CO2 or their best estimate of 1.8K, but all are near the lower end of the IPCC range. The Lewis (2023) correction to the Sherwood (2020) assessment of ECS and TCR relied upon in AR6 is not included in the table. However, after changing Sherwood’s subjective Bayesian assessment of multiple estimates of TCR to an objective Bayesian assessment, Lewis calculated a TCR of 1.37 to 1.4 depending upon the assumptions made. This is still close to the other observation-based objective estimates in Table 1.

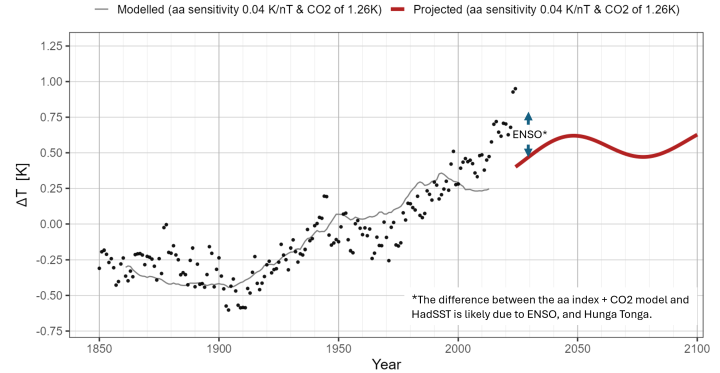

Stefani found that the solar aa index alone can successfully predict HadSST4.2 up to about 1990-2000. After that, the role of CO2 in the regression increases significantly. In Figure 1, Stefani’s robust aa index weight of 0.04 K/nT (that is, the change in the HadSST4.2 temperature anomaly in K per nanotesla of the aa index) plus CO2 with a sensitivity of 1.26K per doubling is shown as a thin dark gray line. It is compared to the HadSST4.2 anomaly (shown as black dots). His projection to 2100 of the aa index plus CO2 function, at 1.26K per doubling, is shown as a red line. The maximum departure in 2023 and 2024 looks startling, but we need to remember that this follows two strong El Niños (2018-19 & 2023-24) and the Hunga Tonga volcanic eruption. In particular, the El Niño from June 2023 to May 2024 was one of the strongest on record. The HadSST4.2 global anomaly has been falling since September 2024. Thus, a portion of the difference between the aa index plus CO2 function and HadSST4.2 may just be ENSO, the Hunga Tonga eruption, and weather.
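For readers who want to see the general shape of such a two-term model, here is a minimal Python sketch. It is not Stefani's code. It assumes the aa term is linear and the CO2 term is logarithmic (which is what "per doubling" normally implies), and it ignores any smoothing or lag the paper may apply. The function name, the reference values `aa_ref_nt` and `co2_ref_ppm`, and the `offset_k` intercept are hypothetical; only the two coefficients come from the text above.

```python
import math

AA_WEIGHT_K_PER_NT = 0.04   # aa index weight quoted in the post
TCR_K_PER_DOUBLING = 1.26   # CO2 sensitivity quoted in the post


def sst_anomaly(aa_nt, co2_ppm, aa_ref_nt, co2_ref_ppm, offset_k=0.0):
    """Toy SST anomaly (K): linear aa term plus logarithmic CO2 term.

    The reference values and the offset are illustrative assumptions,
    not values taken from the paper.
    """
    solar_term = AA_WEIGHT_K_PER_NT * (aa_nt - aa_ref_nt)
    co2_term = TCR_K_PER_DOUBLING * math.log2(co2_ppm / co2_ref_ppm)
    return offset_k + solar_term + co2_term


# Doubling CO2 at an unchanged aa index returns the sensitivity itself.
print(round(sst_anomaly(20.0, 560.0, 20.0, 280.0), 2))
```

In this form, a 5 nT rise in the aa index at constant CO2 contributes 0.2 K, which gives a feel for the relative size of the two terms.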

Stefani’s optimal model projection to 2100 assumes constant emissions of 30-50 Gt of CO2 per year (roughly current levels) plus a simple linear carbon sink model and predicts a global SST increase of 0.6°C (1.1°F) compared to the standard HadSST4.2 reference period of 1961-1990. Using pessimistic parameters (high CO2 emissions, a low sensitivity to the aa index, and a high sensitivity to CO2) yields a 2100 temperature increase of ~1K over 1961-1990. This is still a benign result.

A word on Transient Climate Response

The transient climate response is the modeled warming due to increasing the atmospheric CO2 concentration by 1% each year until it doubles, which would take about 70 years. This is different from the commonly cited value of ECS, which is the equilibrium climate sensitivity, or the final warming due to a sudden doubling of CO2. ECS is an untestable number, since it would take over 1,000 years for the atmosphere to completely come to equilibrium after CO2 suddenly doubles. Given the implausible scenario, ECS can never be tested, except in a climate model. Thus, it is not a scientific quantity per Karl Popper (Popper, 1962). TCR on the other hand is very realistic and could be tested given enough time and effort.
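The "about 70 years" figure follows directly from compound growth. A short Python check, with a hypothetical helper name:

```python
import math


def years_to_double(annual_rate):
    """Years for a quantity growing at a compound annual rate to double."""
    return math.log(2) / math.log(1 + annual_rate)


# 1% per year compound growth
print(round(years_to_double(0.01), 1))  # about 69.7
```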

Conclusions

The paper is a welcome addition to the growing group of observation-based estimates of climate sensitivity to CO2. It is appropriate that Stefani chose to create his model around HadSST4.2. SSTs are more stable than air temperatures on land and they respond mainly to changes in insolation, whether due to cloud cover changes or changes in the sun itself. The changes in SST due to the greenhouse effect are smaller for the reasons discussed in my previous post. I recommend the paper; it is interesting, significant, and a good read.

Works Cited

IPCC. (2021). Climate Change 2021: The Physical Science Basis. In V. Masson-Delmotte, P. Zhai, A. Pirani, S. L. Connors, C. Péan, S. Berger, . . . B. Zhou (Eds.), WG1. Retrieved from https://www.ipcc.ch/report/ar6/wg1/

Lewis, N. (2023, May). Objectively combining climate sensitivity evidence. Climate Dynamics, 60, 3139-3165. https://doi.org/10.1007/s00382-022-06468-x

Lewis, N., & Curry, J. (2018). The Impact of Recent Forcing and Ocean Heat Uptake Data on Estimates of Climate Sensitivity. Journal of Climate, 31, 6051-6071. https://doi.org/10.1175/JCLI-D-17-0667.1

Popper, K. R. (1962). Conjectures and Refutations, The Growth of Scientific Knowledge. New York: Basic Books. Retrieved from http://ninthstreetcenter.org/Popper.pdf

Scafetta, N. (2023). Empirical assessment of the role of the Sun in climate change using balanced multi-proxy solar records. Geoscience Frontiers, 14(6). Retrieved from https://www.sciencedirect.com/science/article/pii/S1674987123001172

Sherwood, S. C., Webb, M. J., Annan, J. D., Armour, K. C., Forster, P. M., Hargreaves, J. C., . . . Knutti, R. (2020, July 22). An Assessment of Earth’s Climate Sensitivity Using Multiple Lines of Evidence. Reviews of Geophysics, 58. https://doi.org/10.1029/2019RG000678

Stefani, F. (2026). Solar and Anthropogenic Climate Drivers: An Updated Regression Model and Refined Forecast. Atmosphere, 17(3). https://doi.org/10.3390/atmos17030252

Hindcasting for fun and profit.

Andy,

The most obvious failing of your comparison is that whatever sensitivity Stefani has calculated, it is for SST, not air temperatures. Most of the estimates you compare it with were calculated for air temperatures.

But he hasn’t calculated TCR at all. There is no attempt to look at an increase of 1% per year over 70 years, as you explain. All he has is a regression coefficient.

And it isn’t a proper regression coefficient, as he rather remarkably admits:

“Although this procedure may appear to be a “statistical sin”, I consider its outcome to be better justified than that of a “correct”, albeit somewhat naive double regression. That way, the CO2 sensitivity was narrowed down from the ample range of 0.6–1.6 K derived in [39] to 1.1–1.4 K. The alternative double regression would not only have resulted in a moderately enhanced CO2 sensitivity value of 1.74 K, but also in a dramatically reduced aa sensitivity of 0.011 K/nT. Faced with the choice of either accepting an unreasonably huge reduction in the aa sensitivity over the last two decades (compared with its stable long-term average) or attributing some of the recent steep warming to factors other than CO2 or the sun, I opted for the latter.”

Weird. It is a sin. He knows how it should be done (double regression), but that gave too high a result (1.74). So he rejected that because he thought it too high, and hacked together a home made procedure which gave a result he liked better.

And Nicky knows about “statistical sin”. 😉

All true, but I did not consider it a failing.

First, the distinction between SST and air temperatures is valid, but it’s not a showstopper here. Stefani’s focus on SST aligns with how ocean heat content drives much of the climate system—air temps often lag or amplify SST changes. Many sensitivity estimates (e.g., from ARGO data or satellite records) use SST as a proxy because it’s more directly measurable over long periods. If anything, this makes his approach conservative, as SST warming tends to be slower than surface air temps in most datasets like HadCRUT or GISS.

He estimated TCR, while including a proxy for the sun’s effect on Earth’s climate. The aa index is arguably a better proxy than TSI (total solar irradiance) for the effect because all or nearly all the changes in the sun are related to changes in its magnetic field. TSI is a side effect, not the cause. The aa index (geomagnetic activity) is indeed a stronger correlate for solar influence than TSI alone, as it captures magnetic field variations that affect cosmic rays, cloud formation, and potentially albedo—mechanisms TSI misses. Studies like those from Svensmark or Shaviv support this; TSI is more of a byproduct of magnetic cycles, not the primary driver.

Statistically what Stefani did was a sin, but how many statistical sins has the consensus committed? I couldn’t even count them. I mention Sherwood in the post as an example. Climate science is riddled with similar adjustments. Sherwood et al. (2020) on hot spot amplification relied on reanalysis tweaks that inflated sensitivities, and AR6’s narrowing of ECS (1.5–4.5 K) involved downweighting high-end models post-hoc. Or take Mann’s hockey stick: principal component centering was a “sin” that exaggerated recent warming. If we’re calling out sins, consistency demands we apply the same standard across the board. Given that you are part of the consensus, you don’t want to go there.

Both ECS and TCR are model fantasies. Useful heuristics, but they’re model-derived abstractions that assume CO2 dominance and linear forcings. They ignore nonlinear feedbacks like solar-ocean coupling or multidecadal oscillations (AMO/PDO). Real-world CO2 growth isn’t exactly 1% per year, but from ~280 ppm in 1850 to 425 ppm today is about 52% total increase over 176 years (using NOAA data), averaging ~0.3% per year. Over the last 50 years (1974–2024), it’s closer to 0.5–0.6% annually. Stefani, Lewis, and Scafetta all work in this observational ballpark, yielding lower sensitivities (1–2 K) that better match the ~1.1°C warming since 1850 without invoking massive positive feedbacks. If solar forcing via aa explains even 20–30% of that, CO2’s role shrinks accordingly.

That is Stefani’s very valid point.
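The growth-rate arithmetic in the comment above can be checked in a few lines of Python. This sketch uses only the figures quoted there (280 ppm, 425 ppm, 176 years); the function name is hypothetical. It reports both the simple average rate and the compound annual rate, since the two differ noticeably over long periods.

```python
def co2_growth_rates(start_ppm, end_ppm, years):
    """Return (total % increase, simple %/yr, compound %/yr)."""
    ratio = end_ppm / start_ppm
    total_pct = (ratio - 1.0) * 100.0
    simple_pct_per_year = total_pct / years
    compound_pct_per_year = (ratio ** (1.0 / years) - 1.0) * 100.0
    return total_pct, simple_pct_per_year, compound_pct_per_year


total, simple, compound = co2_growth_rates(280.0, 425.0, 176)
print(f"total {total:.1f}%, simple {simple:.2f}%/yr, compound {compound:.2f}%/yr")
```

The quoted ~0.3% per year matches the simple average; the compound rate, which is the one comparable to the 1% per year in the TCR definition, comes out a little lower. Either way, the historical rate is well below 1% per year.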

Andy,

On your first point, you say it yourself:

” SST warming tends to be slower than surface air temps in most datasets like HadCRUT or GISS”

Yes, and so Stefani’s SST “sensitivity” would necessarily be less than an air sensitivity. The IPCC would have quoted lower numbers for SST.

Second point: you listed the requirements for TCR. They are specific – 1% per year, 70 years. They appear nowhere in Stefani’s calculation.

Your third is the typical WUWT defence of last resort. OK, our paper is rubbish, but what about Mann, or Sherwood, or whatever? The relevant point is that our paper is rubbish.

“surface air temps”

This is typical climate science cognitive dissonance. We live *on* the surface, not in the air. Soil temperatures are more of a major factor in climate than air temperature six feet above the soil. That’s not to say they are not related but it is a complicated functional relationship and not the simplistic assumption that air temperature is surface temperature.

This is why it was agricultural science that found the increase in growing seasons, not climate science. It was agricultural science that forecast larger food production because of this, not climate science. Climate science has predicted, and still predicts, LOWER food production from higher air temperatures. It was remote sensing science that first found the “greening” of the earth, not climate science. Climate science only predicted *less* green as higher temperatures destroyed plant life.

Freeman Dyson’s main criticism of climate science, and especially the climate models, was that climate science is not holistic and it requires a holistic approach to classify climate and its impacts.

The truly sad fact is that even after 50 years of intense study, climate science can’t even accurately predict the air temperature anywhere let alone globally. That’s not likely to change unless climate science moves away from using simplified “averages” that are inaccurate and moves toward developing holistic algorithms for the biosphere.

“This is typical climate science cognitive dissonance. We live *on* the surface, not in the air.”

But we don’t live in the sea.

“Soil temperatures are more of a major factor in climate than air temperature six feet above the soil.”

SST is sea surface temperature, not soil temperature.

70% of the Earth’s surface is covered by water.

And what percentage of people live in that 70%?

What percentage of people depend on harvests extracted from the sea?

Not relevant.

“Not relevant.”

It is if you are claiming we live there.

Nailed it!

But much of what we harvest from the sea DOES live in the sea, just like much of our land harvests live in the soil!

And I know what SST is. But as usual you don’t seem to understand the REAL world significance.

Typical deflection. Why can you just not admit you misunderstood what SST means?

You said it was more important to measure soil temperature than air temperature because we don’t live in the air. Now you switch to talking about fishing. Of course 100% of what we harvest from the sea comes from the sea, just as 100% of what we harvest from the land comes from the land. It’s got nothing to do with comparing sea temperature with global temperature.

It sure does have something to do with global temperature.

Attribution for one. There are three pieces being dealt with, atmosphere, land, and ocean. Averaging them without appropriate weighting is incorrect.

Land and ocean are heat sinks. They “store” some amount of heat for a period of time that is not available for heating the atmosphere. If that storage amount and storage time is different in land and ocean, the air temperatures will not be comparable. (Not that they are anyway due to enthalpy.)

Land insolation peaks about noon local time, yet air Tmax doesn’t peak until 2 – 3 hours later. Why is that?

What has any of this got to do with the comment I was responding to? It’s just the usual Gorman distraction technique.

“What has any of this got to do with the comment I was responding to? It’s just the usual Gorman distraction technique.”

In other words, “I have no answer”.

It has to do with your comment, not the one you were responding to. I even quoted you as follows.

Answer the question I asked.

“It has to do with your comment”

Then address that comment. Why do you think it’s appropriate to ignore the 30% of the globe that is land in order to determine the global TCR?

“Land insolation peaks about noon local time, yet air Tmax doesn’t peak until 2 – 3 hours later. Why is that?”

If you don’t know, why not just do an internet search? But it should be pretty obvious that heat builds during the day. That even as solar radiation decreases after noon, the air is getting more radiation than it is emitting. I’m really not sure why this confuses you.

But again, all of this is a distraction from the claim that we should ignore land temperatures.

No sh*t, Sherlock.

Why is the question! What are the intermediate processes that are occurring? Can these processes be the cause of the radiation imbalance? Why does climate science try to connect insolation directly to air temperature?

The point is that soil and water are heat sinks but they are never treated as such. They are treated like black bodies, what comes in immediately goes out.

You’ll never get an answer to this from bellman. He has absolutely no knowledge of the real world – including thermal inertia.

“ Why do you think it’s appropriate to ignore the 30% of the globe that is land in order to determine the global TCR?”

As Andy has already pointed out, TCR is a non-physical product determined from a model, not from measurements. A model can ignore whatever the modeler wants to ignore.

” But it should be pretty obvious that heat builds during the day.”

That is *NOT* an answer as to why heat builds during the day instead of changing instantaneously.

“That even as solar radiation decreases after noon, the air is getting more radiation than it is emitting.”

What determines the amount of heat the surface is emitting? Go look up the term “thermal inertia”. And what determines the amount of heat the “air” is emitting? Again, look up the term “thermal inertia”.

This is all part of why it is inappropriate to classify the Earth as a black body with an emissivity when determining instantaneous radiation flux balance.

“But again, all of this is a distraction from the claim that we should ignore land temperatures.”

Determining how the oceans react is *NOT* ignoring land temperatures. You are still showing your lack of reading comprehension skills.

” A model can ignore whatever the modeler wants to ignore. ”

Not if you are claiming that TCR is less just because you’ve ignored the faster warming parts of the Earth. That’s like removing the fastest-rising prices from your inflation calculation and then claiming you’ve reduced inflation.

“That is *NOT* an answer as to why heat builds during the day instead of changing instantaneously. ”

It’s not a question of it changing instantaneously. It’s the fact that the temperature doesn’t instantly rise to a point where energy out equals energy in. Until it reaches that point the temperature will continue to rise even as energy in decreases. As I say, if you really don’t understand this I’m sure there are sources on the web that can explain it better than I can.
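The behaviour being argued over here can be illustrated with a toy model. The sketch below is not a model of any real surface: it assumes a single lumped heat capacity, a daytime forcing that peaks at noon, and a heat loss proportional to temperature. All parameter values and names are hypothetical and in arbitrary units. It steps the balance forward in time and reports how many hours after noon the temperature peaks.

```python
import math


def peak_lag_hours(heat_capacity=5.0, loss_coeff=1.0, dt=0.01, days=10):
    """Hours after noon at which a toy lumped body reaches its daily maximum.

    Integrates dT/dt = (forcing - loss_coeff * T) / heat_capacity with
    forward Euler. Forcing is a half-cosine peaking at hour 12 and zero at
    night. Only the final simulated day is examined, after spin-up.
    """
    steps_per_day = round(24 / dt)
    temp = 0.0
    peak_temp, peak_hour = -math.inf, 0.0
    for step in range(days * steps_per_day):
        hour = (step % steps_per_day) * dt
        forcing = max(0.0, math.cos(2.0 * math.pi * (hour - 12.0) / 24.0))
        temp += dt * (forcing - loss_coeff * temp) / heat_capacity
        if step >= (days - 1) * steps_per_day and temp > peak_temp:
            peak_temp, peak_hour = temp, hour + dt
    return peak_hour - 12.0


print(f"temperature peaks {peak_lag_hours():.1f} h after the forcing peak")
```

In this toy setup the temperature keeps rising after noon for as long as the input exceeds the loss, and the size of the lag is set by the ratio of heat capacity to loss coefficient, so the two descriptions in this exchange (energy in versus energy out, and thermal inertia) are describing the same behaviour.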

“What determines the amount of heat the surface is emitting?”

Its temperature.

“Go look up the term “thermal inertia”.”

See, you do understand it. But that’s just a phrase for what I’m trying to explain. It takes time for a body to reach the equilibrium temperature.

“Determining how the oceans react is *NOT* ignoring land temperatures.”

It’s what you’ve been arguing whether you understand it or not. You said at the start the model can ignore anything you want. In this case, by only using SST the modeller is ignoring land.

You can’t seem to get it into your head that TCR is derived FROM A MODEL!

Are you *really* trying to say that the model has included *ALL* physical factors?

The question you were asked is WHY IS THAT? Not “what is happening”. You were told what is happening.

It’s not due to energy in and energy out, at least not totally. It’s mostly due to the thermal inertia of the surface material, be it water or soil. The specific heat and thermal heat conductivity are crucial in understanding surface and sub-surface physical processes for both land and water.

The TCR estimate is done for “over water”. You are doing nothing but whining that Stefani didn’t find a composite TCR for the land/water combination. You can’t find the composite until you find each component.

Stop whining.

Again, you have *NO* understanding of physical processes. Your grasp of reality is tenuous at best. You didn’t bother to go look up “thermal inertia” at all, did you?

If you pulse heat into the heat sink on a computer main processor (perhaps from hitting the spreadsheet calculate key), the temperature of that heat sink will continue to rise for a period of time EVEN AFTER THE PULSE HAS ENDED. That process has nothing to do with energy in equaling energy out at any point in time. It has to do with the thermal inertia of the heat sink.

This basically all boils down to the fact that you, as well as climate science, try to equate temperature with heat (joules). THEY ARE NOT THE SAME THING. 10 joules in for one second will equal 1 joule out for 10 seconds as far as heat is concerned – and the temperature of the object being heated is not dependent on the temperature of the object doing the heating. Since the earth radiates heat 24 hours per day while the sun only inputs heat for about half that period of time, the temperature of the earth doesn’t have to rise to maximum based on the energy being input. If it did the earth would become a frozen ball at night!

And it’s not just this either. If the energy in equals the energy out and together they determine the temperature of the earth then how does AGW happen?

You don’t understand it at all! Equilibrium with what? Again, as a heat sink and not a black body, the maximum temperature of the earth is not driven by the instantaneous energy input. The temperature of that heat sink will be determined by its thermal inertia. Objects with high thermal inertia heat up and cool down slowly, their temperature tends to be more stable than objects with low thermal inertia. Water, sandy soil, loam, rock, etc all have varying specific heat capacities and thermal conductivities – meaning that they all reach different maximum temperatures from a specific heat input because of their thermal inertia.

“It’s what you’ve been arguing whether you understand it or not. You said at the start the model can ignore anything you want. In this case, by only using SST the modeller is ignoring land.”

SO WHAT? Again, you have to understand the components before you can understand the composite of the components. You are just whining that someone is working with a component instead of the composite. Again, SO WHAT?

Again, you have to understand the components before you can understand the composite of the components.

Here is what CoPilot gives after summarizing several published studies:

Your “lag” is basically the system’s thermal inertia showing up: some of the net radiation is going into soil heat storage (via G), so the surface and near‑surface air don’t warm instantaneously with insolation.

Flux is time dependent. You can’t just add them as if they represent an energy value. To actually find the energy balance you need to integrate each term and then add them. Which, in turn, means you have to know the functional relationship to time for each component.
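As a concrete illustration of integrating a time-dependent flux, here is a minimal Python sketch using the trapezoidal rule on sampled values. The function name and the sample data are hypothetical; the only point is that a flux in W/m² becomes an energy per unit area in J/m² once it is integrated over time.

```python
def integrate_flux(times_s, flux_w_m2):
    """Trapezoidal integral of a sampled flux (W/m^2) over time (s), in J/m^2."""
    if len(times_s) != len(flux_w_m2):
        raise ValueError("times and flux samples must have the same length")
    energy_j_m2 = 0.0
    for i in range(1, len(times_s)):
        dt = times_s[i] - times_s[i - 1]
        energy_j_m2 += 0.5 * (flux_w_m2[i] + flux_w_m2[i - 1]) * dt
    return energy_j_m2


# A steady 100 W/m^2 for one hour delivers 360,000 J/m^2.
print(integrate_flux([0, 1800, 3600], [100.0, 100.0, 100.0]))
```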

I’m not sure it is wise to take advice concerning what you can and can’t do with radiant exitance fluxes (in W.m-2) from a guy who vigorously defends the notion that they are extensive properties.

You have no room to criticize. If you can’t show that fluxes need to be normal to a point, then you can’t prove they are additive. This is difficult to do with what is absorbed on a sphere. Similarly, the fundamental units are (Joules/sec·m²). S/B is not a panacea for determining the heat from a flux unless you are dealing with a black body.

Q = mcₚΔT is the definition of heat. Or ΔT = Q / (mcₚ). Note the use of ΔT. Heat is defined by the difference in temperature. It is not an equation that you can use to determine a temperature unless you already know the heat that has been added. Q’s units are joules. That is, the total joules needed to cause a rise in temperature. In other words, an integrated flux over time.
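A short Python sketch of that relationship, with a hypothetical function name. The specific heat of liquid water used in the example (about 4186 J per kg per K) is an approximate textbook value, not a number from this thread.

```python
def temperature_rise_k(heat_j, mass_kg, specific_heat_j_per_kg_k):
    """Temperature change (K) from adding heat_j joules: dT = Q / (m * c_p)."""
    return heat_j / (mass_kg * specific_heat_j_per_kg_k)


# About 4186 J warms 1 kg of liquid water by roughly 1 K.
print(temperature_rise_k(4186.0, 1.0, 4186.0))
```

The function returns only a change in temperature; the starting temperature has to come from somewhere else.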

Don’t confuse the earth with a black body, it is not. Ideal theory is an important place to start but one needs to move into the real world at some point. Read Planck about a pure reflection of radiation from a body. See what he says about how those fluxes add to make the body warmer. Show the math that refutes that.

Read Planck’s Heat Treatise and his lectures to Columbia University. Entropy is a real bitch. I can’t beat it, you can’t beat it, up to this point, no one has ever beaten it or we would have perpetual motion machines. Cold will never warm hot. I don’t care how much flux you add together.

Of course I have room to criticize. Radiant exitance fluxes (W.m-2) are intensive properties because they don’t depend on the size of the system. If you have a 1 m^2 surface emitting homogeneously then the right half will have the same radiant exitance as the left half and as the whole. That’s what makes it intensive. You can mock me all you want. It’s still an intensive property.

You’re not going to see me adding radiant exitance fluxes that’s for sure.

“…Stefani didn’t find a composite TCR for the land/water combination.”

That’s the problem. The TCR is not the temperature just over water. It’s defined in relation to the globally averaged surface temperature. You can’t just ignore temperature change over 30% of the globe and say you have determined the TCR. You can derive an estimate for how much change there will be in sea surface temperature – but that is not the TCR. And whatever you call it you cannot do what May does, and say it’s at the low end of the IPCC TCR range, because you are comparing two different things.

A completely nonsense, unmeasurable quantity.

Bellman *still* thinks that an average of measurements is a measurement as well.

Yep. As you keep pointing out, he has no experience in the real world.

The GUM says it is. For example see [JCGM GUM-6:2020] section 5.7 and E.2.2 for explicit statements that measurands can be averages.

And of course the GUM has many references and examples of averaging. Section 11.10.4 even discusses a measurement model that is an average of other measurement models.

Granted…the GUM does contain examples of averaging intensive properties (including temperature) which you vehemently reject so I have my doubts whether you will be persuaded but it is worth a shot.

Do you have a clue about fitness of purpose? Let’s look at [JCGM GUM-6:2020] Section 5.7

The conformity assessment can encompass an average mass of a batch of objects. It is used to check the dispersion of a manufacturing process, not to determine a single measurand. Think tolerance. Think quality control.

Here is what ANSI has for a definition. StandardsAlliance-Handbook-2022-SECTION5.pdf

What is conformity assessment? Conformity assessment is the term given to techniques and activities that ensure a product, process, service, management system, person or organization fulfils specified requirements.

It has nothing to do with averaging measurements to determine a single measurand.

Now let’s look at your E.2.2 reference.

As usual, you miss entirely the need for measuring the SAME THING. This is not measuring 5 different strings and averaging the values to get one measurand. It is measuring ONE string at five points approximately equally spaced along its length using a caliper.

Lastly, maybe you read 5.6 also. What it says is important.

5.6 When building a measurement model that is fit for purpose, all effects known to affect the measurement result should be considered. The omission of a contribution can lead to an unrealistically small uncertainty associated with a value of the measurand [156], and even to a wrong value of the measurand. Considerations when building a measurement model are given in 9.3. Also see JCGM 100:2008, 3.4.

See that [156] reference. It goes to THOMPSON, M., AND ELLISON, S. L. R. Dark uncertainty. Accred. Qual. Assur. 16 (2011), 483–487.

Here is the abstract from the paper.

Reproducibility deviation is an item on an uncertainty budget. Read JCGM 100-2008; B.2.16, F.1.1.2, and H.6.

Now read NIST TN 1900 EX. 2 and see if this applies when measurement uncertainty is negligible and the difference among samples is used to calculate the repeatability uncertainty.

There are online classes in metrology that you can take. I suggest you do so.

Nothing in your post invalidates the GUM’s statement that a measurand can be an average of something.

Great response, but it is wrong. Maybe you have difficulty in understanding what I posted.

Read this again.

These two parts of conformity testing go together. The mean and the dispersion around that mean. Look up what six sigma means in terms of quality control.

You can’t simply ignore that averaging a batch of objects to determine whether the batch average is within a specified limit is a legitimate purpose of quality control. You also can’t ignore that the dispersion (standard deviation) of that batch is important too.

It unequivocally says a measurand can be an average mass of a batch of objects.

This is why it is so difficult having a conversation with you. Bellman or I will point out that the GUM, NIST, etc says A. You then cite B from the same source and insinuate that it invalidates A.

I don’t even know why you even bother citing the GUM, NIST, etc. anyway since they have examples of averaging intensive properties which you vehemently reject as being meaningful and useful.

Global average air temperatures are a meaningless statistic that cannot represent “the climate”.

Cue the usual “buh, buh, but WUWT publishes them!”

Neither bdgwx or bellman seem to be able to differentiate between:

You *can* measure the temperature of a single object multiple times and average the readings to get a “best estimate” of the temperature (an intensive property) for that single measurand.

You can *NOT* measure the temperature of multiple objects and average the readings to get a “best estimate” of the intensive property of the multiple objects.

You can only get a physical average for a property you can add physically. E.g. mass. You can *not* get a physical average for a property you can’t add physically.

1g + 1g = 2g

80F + 70F ≠ 150F

Statisticians and computer programmers think you can average anything and get a physically meaningful value. Engineers and physical scientists know that the *real* world doesn’t work that way.

“Neither bdgwx or bellman seem to be able to differentiate between…”

This differentiation has been put in front of them innumerable times, and they invariably avoid the inconvenience. At this point I have to acknowledge the possibility they do understand the difference but are forced to ignore it to keep the fiction of climatology trendology alive.

A Type A uncertainty evaluation is done on each input quantity separately and then combined. Each input quantity has multiple single observations of the same thing that are considered entries in a random variable. The mean and standard deviation of the random variable make up the estimates of that input quantity.

You can declare a monthly average as your measurand and use daily temps as the observations. However a Type A evaluation requires those observations to form a random variable X1 (one input quantity) of which the mean and standard deviation are the estimates of the measurand. Inherent in this is the need for the functional relationship to have only one variable. That is, f(x) = (X1).

Each data point has uncertainty that contributes to the combined uncertainty AND the dispersion of the values must also be added to the combined uncertainty.

This is what NIST TN 1900 does. They declare the measurement uncertainty of each observation to be negligible and then calculate the dispersion of the values.

I do have a problem with using an expanded SDOM rather than the SD. However, ±1.8°C versus ±4.1°C are both reasonable.
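To make the two figures above concrete, here is a minimal sketch of the calculation as described: the standard deviation (SD) of the observations, the standard deviation of the mean (SDOM), and an expanded SDOM. The function name `type_a_summary` is hypothetical, and the sample size n = 22 and coverage factor k = 2.08 are assumptions (recalled from the NIST example, not stated in this thread).

```python
import math
import statistics

def type_a_summary(observations, coverage_factor):
    """Return (mean, sd, sdom, expanded) for a list of repeated observations."""
    n = len(observations)
    mean = statistics.fmean(observations)
    sd = statistics.stdev(observations)   # sample standard deviation (n - 1 divisor)
    sdom = sd / math.sqrt(n)              # standard deviation of the mean
    expanded = coverage_factor * sdom     # expanded uncertainty of the mean
    return mean, sd, sdom, expanded

# Check using only the summary numbers quoted above.
# ASSUMPTIONS: n = 22 readings and k = 2.08 are not given in the thread.
s, n, k = 4.1, 22, 2.08
print(round(k * s / math.sqrt(n), 1))  # 1.8
```

Under those assumptions the ±4.1°C figure is the SD itself and the ±1.8°C figure is the expanded SDOM; which one is the appropriate uncertainty is exactly what the comment disputes.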

“Neither bdgwx or bellman seem to be able to differentiate between…”

Stop lying. I’m sure I’ve been over the difference with you multiple times. It doesn’t alter the fact that an average of multiple measurements of the same thing and the average of multiple different things are both useful and can both be considered measurements if you wish.

You just need to be clear what the measurand is. In the first case it’s the specific quantity you are measuring and in the second it’s the mean of the population you are sampling.

“You *can* measure the temperature of a single object multiple times and average the readings to get a “best estimate” of the temperature (an intensive property) for that single measurand.”

Of course you can, but your entire logic for not averaging intensive properties was that it would be meaningless. In both cases you have to add up intensive properties, which is meaningless. If I measure a rock 20 times and get a sum of 400°C, what does that temperature mean, and how would it be different if I added the temperatures of 20 different rocks and got the same 400°C? You either have to accept that having a meaningless part of an equation does not necessarily invalidate the final result, or you need to come up with a different excuse for not wanting to consider average temperatures.

“You can only get a physical average for a property you can add physically. E.g. mass.”

As I keep trying to explain, averaging a sample is just a way of estimating a population mean, and in the case of an intensive property the population mean is really the mean of some implicit extensive property. If you want the average temperature over an area then the extensive property is temperature times area. The sum is the integral of temperature with respect to area, and the average is just that integral divided by total area. The same applies if you are averaging over time: the units are temperature times time, e.g. degree days.
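The area-average described in this comment can be sketched as a discrete version of "integral of temperature over area, divided by total area". The function name `area_weighted_mean` and the cell values are hypothetical, chosen only for illustration.

```python
def area_weighted_mean(temperatures, areas):
    """Discrete form of (integral of T dA) / (total area)."""
    if len(temperatures) != len(areas):
        raise ValueError("temperatures and areas must have the same length")
    total_area = sum(areas)
    if total_area <= 0:
        raise ValueError("total area must be positive")
    # Each product t * a has units of temperature times area.
    weighted_sum = sum(t * a for t, a in zip(temperatures, areas))
    return weighted_sum / total_area

# Hypothetical cells: temperatures in kelvin, areas in km^2.
temps = [280.0, 290.0, 300.0]
areas = [1.0, 1.0, 2.0]
print(area_weighted_mean(temps, areas))  # 292.5
```

Replacing `areas` with time intervals gives the time-average version, where the weighted sum has units of temperature times time (degree days, for example).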

You say you understand and then make a statement like this?

Again, if I put a rock of 80F in your bare hand and then add a second rock at 70F do you have a total of 150F in your hand? PLEASE ANSWER!

The “average” is only useful if you do have 150F in your hand. If you don’t then you simply cannot calculate an average because you can’t add the temperatures.

PLEASE ANSWER! Stop ignoring the question!

An average is *NOT* a measurement. It is a statistical descriptor. An average is ONLY useful in finding a “best estimate” of the value of a property of a measurand – it is *NOT* a measurement of that property however, it is just a method for finding a “best estimate” of the value. And even then it *has* to meet restrictive requirements for the distribution of the actual measurements – requirements that are just ignored by climate science (and YOU).

You have to be averaging measurements of the same measurand in order to determine a best estimate OR be measuring an extensive property for multiple measurands. You say you understand this but then just ignore this simple distinction. YOU HAVE TO BE ABLE TO ADD THE MEASUREMENTS TO GET A PHYSICAL TOTAL FOR MULTIPLE MEASURANDS. You cannot add the temperatures of two different rocks and get a PHYSICAL sum. Only a blackboard statistician will say “it doesn’t matter”. Only a blackboard statistician will say “you can add any two numbers and get a physically meaningful total”.

There is *NO* mean of a distribution of an intensive property of multiple objects. To get a physically meaningful mean of a distribution you *have* to be able to add the component values and get a physically meaningful total. You can’t do that with intensive properties.

You are a blackboard statistician with no concern for physical science requirements. To you, any two numbers can be averaged and provide a “meaningful” mean on the blackboard. One of the numbers could be the maximum height of an adobe building and the other the maximum height of a steel building and *YOU* would see the mean of the two values as “meaningful”. It wouldn’t matter what the physical limitations of each building material is.

You can’t even distinguish when you are trying to find the best estimate for the property of a single measurand as opposed to finding a mean value of an intensive property of multiple measurands.

Let me repeat:

—————————–

“Neither bdgwx or bellman seem to be able to differentiate between

——————————

In the first case you are *NOT* averaging DIFFERENT intensive properties. You are averaging the measurements of an intensive property FOR A SINGLE MEASURAND. In essence, you are performing a protocol process meant to account for measurement uncertainty in finding a best estimate of the property of that single measurand.

In the second case you are actually measuring DIFFERENT THINGS, not just different measurands but different properties! Averaging the values is *not* accounting for measurement uncertainty in any way, shape, or form. It is *NOT* finding a “best estimate” for the value of A property.

Climate science should *not* be a blackboard statistical exercise with no physical meaning. It *should* be a physical science discipline meeting physical science requirements.

“PLEASE ANSWER! Stop ignoring the question!”

Please stop ignoring my answers. You keep asking the same ignorant questions over and over, and just pretend I haven’t answered.

You cannot add two temperatures together and get a meaningful result. Temperature is an intensive property. That’s my answer.

Even if you could add them it would make no sense to use Fahrenheit as it’s not an absolute scale.

Why do you keep talking about sums when the discussion is about averages? The sum of two intensive properties is meaningless, the average can be meaningful. This is true whether adding different measurements of the same thing or of different things.

Answer this – if you have a rock with a temperature of 300K, and you take its temperature 10 times, do you now have a rock with a temperature of 3000K? If not, can you still divide that 3000K to get an average measurement?

If you can’t add them then you can’t average them either. Averaging requires adding the temperatures.

“Why do you keep talking about sums when the discussion is about averages?”

The average of two values is: (Value1 + Value2)/2

Value1 + Value 2 IS A SUM!

If you cannot add Value1 and Value2 then you can’t find an average either.

Not in the physical world. You do not understand physical science at all.

What you are *really* doing is trying to define a gradient map between two points, not finding an “average”. A gradient map in the physical world does *NOT* have to be a direct linear functional relationship. The mid-point between two points does *NOT* equate to an average.

This is the same issue with trying to define a diurnal temperature “average” as the mid-point between the maximum and minimum value. The temperature profile is *NOT* linear, part of it is sinusoidal and part is exponential, and that is AT BEST! Each and every diurnal temperature profile deviates from these distributions because of daily weather variation!
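The difference between the (Tmax + Tmin)/2 mid-point and a time-averaged temperature can be illustrated with a short sketch. The profile below is entirely synthetic: a sine segment during the day and an exponential decay at night, with hypothetical parameters (`t_min`, `t_max`, `sunrise`, `sunset`, `decay_hours`). It is not a model of any real station.

```python
import math

def synthetic_profile(t_min=10.0, t_max=25.0, sunrise=6, sunset=18, decay_hours=4.0):
    """Return 24 hourly temperatures: sine by day, exponential decay by night."""
    amplitude = t_max - t_min
    # Daytime sine peaks at hour 14 and is still elevated at sunset.
    sunset_excess = amplitude * math.sin(math.pi * (sunset - sunrise) / 16.0)
    temps = []
    for hour in range(24):
        if sunrise <= hour <= sunset:
            temps.append(t_min + amplitude * math.sin(math.pi * (hour - sunrise) / 16.0))
        else:
            hours_after_sunset = (hour - sunset) % 24
            temps.append(t_min + sunset_excess * math.exp(-hours_after_sunset / decay_hours))
    return temps

def midrange(temps):
    """Mid-point between the daily maximum and minimum."""
    return (max(temps) + min(temps)) / 2.0

def time_mean(temps):
    """Mean of equally spaced hourly readings (a discrete time integral)."""
    return sum(temps) / len(temps)

profile = synthetic_profile()
print(midrange(profile), time_mean(profile))
```

For this particular synthetic shape the two numbers differ by a few tenths of a degree; the size and sign of the gap depend on the shape of the profile, which is the point being argued.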

I have a set of MEASUREMENTS of a property for a single object. I can average those measurements to get a “best estimate” for the property of that single object. That requires summing all the measurements and then dividing by the number of objects. IF THE DISTRIBUTION OF THE MEASUREMENTS MEETS THE REQUIREMENTS OF BEING RANDOM AND GAUSSIAN.

That best estimate for the value of an intensive property of a single measurand has no physical relationship to the best estimate for the value of an intensive property of a second measurand. You cannot find a physically meaningful average for that intensive property.

Can you understand the difference? If I have ten rocks with a single measurement each for the temperature of each, can I sum those temperatures to get a total that is physically meaningful?

The ten measurements of a single object ARE related, the ten measurements of ten different objects are *NOT* related, at least if what is being measured is an intensive property.

Give me one reference from the internet that says you can average intensive properties of different objects.

Open up your favorite AI and type this in: “can you find any reference on the internet that says you can average the values of an intensive property from multiple objects”

Let us all know what you get for an answer.

“If you can’t add them then you can’t average them either. Averaging requires adding the temperatures.”

It’s the magic of dividing by the magic number N!

Like OLR, averaging is just a model. (Tmax + Tmin)/2 is also just a model, a very poor one to be sure.

Good point! I swear that anyone supporting the current climate science discipline has never lived a single day in reality. It’s all blackboard crap done by using implicit assumptions that are never stated let alone understood.

E.g. “all measurement uncertainty is random and Gaussian”. “The average is the true value”. “Error and uncertainty are the same thing”, “multiple measurements of the same thing is equivalent to single measurements of multiple things”, “intrinsic properties can be summed and averaged across different measurands”, “averages have meaning all by themselves without knowing the standard deviation”. “linear transformation of a distribution by a constant decreases the standard deviation”. “the entire globe is a flat, homogenous object so you can infill temperature data without regard to any confounding variables”. “you don’t need to use weighted averages based on the variances of the component data”, “temperature = heat”, “radiant heat is not time dependent, i.e. radiant flux is a constant”. “the mid-point diurnal temperature is an “average” value”, “temperature gradients are always linear”, “CO2 is an insulator”, “increasing CO2 density doesn’t increase total radiative flux”

Jeesh, I need to stop. I could fill a whole post with the idiocy.

“What you are *really* doing is trying to define a gradient map between two points, not finding an “average”.”

I’ve no idea what you want to do. All I’m doing is talking about an average. If you have two rocks of different temperature there is no gradient between them. If on the other hand you are talking about temperature over time or area, there will be a gradient, but there is no reason to suppose it will be linear.

“The mid-point between two points does *NOT* equate to an average.”

Well it sort of does. As you say, the mean of two values is (value 1 + value 2) / 2. That’s the mid point between the two values.

“I have a set of MEASUREMENTS of a property for a single object. I can average those measurements to get a “best estimate” for the property of that single object. ”

Again, not the question I asked, which was about the sum of those measurements.

“That requires summing all the measurements and then dividing by the number of objects.”

And the question is whether that sum is physically meaningful. It’s obvious you will just keep dodging the question, because you know it demonstrates your logical inconsistency.

“IF THE DISTRIBUTION OF THE MEASUREMENTS MEETS THE REQUIREMENTS OF BEING RANDOM AND GAUSSIAN.”

There is no such requirement – which is why you have to write in all caps. I keep warning you that it’s a tell.

“If I have ten rocks with a single measurement each for the temperature of each, can I sum those temperatures to get a total that is physically meaningful?”

No, because as I keep explaining the sum of intensive properties is not meaningful. This is true if you are measuring different rocks and true if you are measuring the same rock multiple times. You are the one insisting the sum has to be physically meaningful in order for the average to be meaningful. I’m telling you it does not.

“The ten measurements of a single object ARE related, the ten measurements of ten different objects are *NOT* related”

So now you switch arguments and say it’s to do with relatedness, not with summing intensive properties. It’s almost as if you are scrambling to find any argument which will justify your claim. By this logic, the average mass of 10 rocks is not valid if the rocks are not “related” even though mass is an extensive property.

But this is all meaningless unless you explain why the rocks are not related, and that gets back to the question of why you are averaging the rocks in the first place. If you are doing it to estimate the mean of a population, then the rocks are related by being part of the same population.

“the ten measurements of ten different objects are *NOT* related, at least if what is being measured is an intensive property.”

What does intensive have to do with it? Why do the 10 rocks have unrelated temperatures and densities, but related masses and volumes?

“Open up your favorite AI and type”

If we are using argument ad AI as if it was a good way of determining truth, why not just ask it “can you average an intensive property”

Or just “can you average temperatures”

But let’s ask the random word generator your exact question

and concludes

Intensive properties can be averaged mathematically. Thermodynamics uses weighted averages to compute meaningful combined-system properties. Physics and climate science use spatial or temporal averages of intensive fields as statistical descriptors. The arithmetic mean of an intensive property is not itself a thermodynamic state variable.

“ only one of them corresponds to a real thermodynamic outcome.”

“not a thermodynamic property of a combined system”

“ intensive fields”

“The arithmetic mean of an intensive property is not itself a thermodynamic state variable.”

Once again we see your lack of reading comprehension skills. These few pieces tells you all you need to know!

An intensive FIELD is *not* the same as the intensive property of an object. An object is *NOT* a field.

You provide NOTHING showing that you can average the intensive properties of different objects and get anything meaningful physically.

“Intensive properties can be averaged mathematically” does *NOT* mean that the average is physically meaningful.

“These few pieces tells you all you need to know!”

Yes, that the “ai” statistical model that you seem to regard as authoritative produced words that disagree with what you claim. It is possible to average intensive properties. What meaning and how you do it depends on the context.

I do find it interesting that the people here who insist that statistics tell you nothing about the real world, are quite happy to accept the output of a statistical model as an authority.

Statistical descriptors tell you about your data! If the data doesn’t represent the real world then neither will the statistical descriptors.

Like I told you about my son studying microbiology but not statistics. And using a math major to analyze the data but not knowing anything about biology. The blind leading the blind.

It’s EXACTLY the same with you. You know nothing about physical science so you have no way to judge if your statistical descriptors actually tell you anything about the real world.

Trying to defend averaging intensive properties gives you away – the blind leading the blind.

“ It is possible to average intensive properties. What meaning and how you do it depends on the context.”

“The key is that intensive properties don’t add the way extensive properties do”

“not a thermodynamic property of a combined system”

What in Pete’s name do you think temperature is?

You are using the same kind of argument as: “you *can* calculate the number of angels on the head of a pin – it just depends on the context”.

“What in Pete’s name do you think temperature is?”

We are not talking about a temperature, but an average of temperatures. You need to know why you want the average of temperature, and that depends on the context.

“We are not talking about a temperature, but an average of temperatures. You need to know why you want the average of temperature, and that depends on the context.”

you can’t average an intensive property of different things to get an average temperature. Context doesn’t matter.

The truth is that the average of intensive properties of different objects may be mathematically valid but physically meaningless. That average will tell you nothing about the thermodynamics of the system of objects. It won’t tell you the temperature of any individual object, it won’t tell you the temperature of the total system, and it won’t tell you an equilibrium temperature.

In essence all you are arguing is that the mean of the intensive properties of multiple objects exists mathematically and that is all you require. You simply don’t care if it is of any use at all in the physical world. Neither does climate science.

“you can’t average an intensive property of different things to get an average temperature. ”

The fallacy of argument by repetition. You still need to come up with a logically coherent reason why you can’t do it. This is difficult given the countless examples of it actually being done.

“That average will tell you nothing about the thermodynamics of the system of objects.”

Why do you think it has to tell you that?

“It won’t tell you the temperature of any individual object,”

If you want to know the temperature of an individual object then you just measure that object. Again, it’s not the point of the average.

Nothing you say here has anything to do with intensive properties. You can say exactly the same about any extensive property. What does the average mass of a rock tell you about the mass of an individual rock?

That is *NOT* all you are doing. WHERE does the average value of the intrinsic properties of two objects exist? If you are talking about temperature the *only* place it can exist is somewhere between the two objects – which is defined by the temperature gradient between the two objects. Temperature gradients do *NOT* have to be linear so the average doesn’t have to be at the 3D mid-point between the two objects.

You just contradicted yourself in two consecutive sentences. “there is no gradient” and “there is a gradient”.

If the temperature gradient over time isn’t linear then why does climate science (and you) assume the mid-point of the time gradient is the “average”?

You just said that the gradient doesn’t have to be linear. If the gradient isn’t linear then how can the mid-point be the average?

So what? You *STILL* can’t seem to understand the difference between multiple measurements of the same thing and single measurements of different things.

“And the question is whether that sum is physically meaningful.”

The sum of ten measurements of the same thing is *NOT* physically meaningful. The distribution of those measurements *IS* physically meaningful. The average of those ten measurements is *NOT* physically meaningful unless the standard deviation is also provided. Why do you (and climate science) ALWAYS omit including standard deviation when speaking to an average value? And the physical meaning of the average is as a BEST ESTIMATE and not a true value. You have yet to get the “true value/error” meme out of your brain!

Pure malarky! The measurement distribution of the intensive property of a single measurand IS A FUNCTION OF THE MEASURING DEVICE. The average of the measurements is a BEST ESTIMATE of the value of the property. The measurement distribution of the intensive property of multiple measurands IS A FUNCTION OF BOTH THE MEASURING DEVICES AND THE MEASURANDS.

Can you tell the difference?

“You are the one insisting the sum has to be physically meaningful in order for the average to be meaningful. I’m telling you it does not.”

This is because you can’t differentiate between multiple measurements of the same thing where the distribution of values is a function of the measuring device and single measurements of different things where the distribution of values is a function of both the measuring devices and the measurands.

In one case you are averaging the measurements. In the other case you are averaging the intensive property. They are *NOT* the same. Open your eyes!

Of course there is! If the distribution of measurements is not Gaussian then the average is not the most likely value, the mode is. Why would you use the average as the “BEST ESTIMATE” for the value of the property being measured if the distribution is skewed?

You are making NO SENSE at all. Intensive properties of different objects are simply not summable. You admit that having a rock at 80F and one at 70F in your bare hand does *NOT* add to having 150F in your hand. Yet you keep arguing that you can average something you can’t even add.

You have yet to answer my query about the rocks.

———————————–

If I stick a thermometer into the pile of rock pieces what will it read? 80F? 70F? 60F? 75F? 140F? 560F?

If it reads 70F then what is the average temperature? 70F/8 = 8.8F?

If it reads 80F then what is the average temperature? 80F/8 = 10F?

If it reads 60F then what is the average temperature? 60F/8 = 7.5F?

You’ll only get an AVERAGE temperature of 70F if the thermometer reads 560F!

Wanna bet on whether the thermometer will read 560F?

If you don’t put the rock pieces in a pile then what does the “average value” mean physically?

————————————

It’s obvious that you simply *can’t* answer. You have absolutely no understanding of the real world.

“You just contradicted yourself in two consecutive sentences. ”

That’s your reading problem. I’m describing two different situations. The clue was when I said “on the other hand”.

“You just said that the gradient doesn’t have to be linear.”

What do you think mid-point means? What is the mid point between 20 and 30?

“So what?”

The “what” is how telling it is that you simply fail to address the logical contradiction. If you argue that the average of an intensive property is meaningless just because its sum is meaningless then you would have to apply the same logic to the sum of multiple measurements of the same thing. Just yelling that they are different isn’t an argument.

You *still* can’t recognize the difference between averaging measurements of the same thing in order to obtain a “best estimate” and averaging single measurements of different things in order to identify a property.

You can’t find an average of an INTENSIVE PROPERTY of different objects. You *can* find an average for the measurements taken of the same thing in order to evaluate the intensive property OF THAT SINGLE object.

Wake up and join the real world! Burn your blackboard!

Just repeating your claims in capitals does not make your argument any sounder.

You need to explain why you cannot average an intensive property in a way that doesn’t also invalidate the average for the property of a single object. Just saying the sum is meaningless is not that explanation.

You aren’t giving answers that have any backup resources. It means you have not studied metrology of physical measurements sufficiently.

Your compatriot bdgwx has been referencing different JCGM documents. Here are two references from JCGM_GUM_6_2020. Check out Sections 11.9.8 and 11.7.3, they are pertinent to temperature.

11.9.8 discusses using ARIMA for auto-correlated time series, which temperature data is. I have been using SARIMA that also removes seasonal correlations in longer term data streams.

This whole concentration on averages of averages of averages is misapplied. Averaging is a smoothing process. The calculation removes variation which is important for proper uncertainty analysis.

You need to read through W. M. Briggs classes. Read this one about trends. Class 77: Trendy Trends In Time Series – William M. Briggs. Here is another that is pertinent to smoothing, which averaging does. (4) How Smoothing Time Series Generates Massive Over-Certainty

“It means you have not studied metrology of physical measurements sufficiently.”

If you want to demonstrate you understand metrology rather than just studied it, try to explain the concepts in your own words rather than just repeating text.

“11.9.8 discusses using ARIMA for auto-correlated time series”

Stop changing the subject. This discussion was about whether a mean can be considered a measurement, not about linear regression.

“I have been using SARIMA that also removes seasonal correlations in longer term data streams.”

You’ve been going on about all the time series analysis you keep doing for the last 5 years, yet so far have not shown any of your work.

“The calculation removes variation which is important for proper uncertainty analysis.”

What uncertainty? If you insist an average is not a measurement then by definition it cannot have a measurement uncertainty.

“You need to read through W. M. Briggs classes”

I really do not. If you have learnt anything relevant from his numerous articles, just say what it is rather than expecting everyone else to plough through his verbiage.

“Read this one about trends.”

Isn’t that the same nonsense you gave me before? I pointed out that his example is just wrong. He keeps claiming his short term trends are statistically significant when they clearly aren’t.

“Here is another that is pertinent to smoothing, which averaging does.”

Well yes, don’t do regression on smoothed data, especially if you don’t correct for auto-correlation. But then he states nonsense like “There is no earthly reason to smooth actual observations.” which just ignores all the reasons why smoothing real data can be useful.
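The caution about regressing on smoothed data can be illustrated with a small sketch: a moving average applied to uncorrelated noise produces a series whose neighbouring values are strongly correlated. The helper names (`moving_average`, `lag1_autocorrelation`), the window length, and the random data are all hypothetical choices for illustration.

```python
import random

def moving_average(values, window):
    """Simple unweighted moving average; output is shorter than the input."""
    return [sum(values[i:i + window]) / window
            for i in range(len(values) - window + 1)]

def lag1_autocorrelation(values):
    """Sample autocorrelation between each value and its successor."""
    n = len(values)
    mean = sum(values) / n
    numerator = sum((values[i] - mean) * (values[i + 1] - mean) for i in range(n - 1))
    denominator = sum((v - mean) ** 2 for v in values)
    return numerator / denominator

random.seed(42)  # fixed seed so the run is repeatable
noise = [random.gauss(0.0, 1.0) for _ in range(5000)]
smoothed = moving_average(noise, 5)

print(lag1_autocorrelation(noise))     # close to zero for independent noise
print(lag1_autocorrelation(smoothed))  # large, because adjacent windows overlap
```

Any significance test that assumes independent residuals would therefore overstate its confidence on the smoothed series unless the autocorrelation is accounted for.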

It has been pointed out to you ad infinitum that the measurement uncertainty of the average is the propagated uncertainty of the components of the average.

The average is *NOT* a measurement. It is, however, a “best estimate” for the value of the property being measured. As an ESTIMATE, it is *not* a true value, it has an uncertainty. That uncertainty is defined by the measurement uncertainty of the components used to derive the estimate.

Can be useful for WHAT PURPOSE?

So it can be used in subsequent averaging?

The most common reason for smoothing by blackboard statisticians is to “eliminate noise”. The problem is that natural variation is *NOT* noise. That natural variation is a prime component of the uncertainty associated with the average. Since it is *the* defining component of the standard deviation of the data it is absolutely necessary to carry it forward in any subsequent analysis. Unless, of course, you are doing climate “science”.

“It has been pointed out to you ad infinitum that the measurement uncertainty of the average is the propagated uncertainty of the components of the average.”

Ranting is not pointing out. What you say makes no sense given the definition you use for measurement uncertainty. By definition measurement uncertainty requires a measurand. Propagating measurement uncertainty requires a function that gives you a measurand. Propagating the components of an average implies the average is a measurand.

“The average is *NOT* a measurement. It is, however, a “best estimate” for the value of the property being measured.”

How is that not measuring? You’ve literally said the property is being measured.

“As an ESTIMATE, it is *not* a true value, it has an uncertainty.”

No measurement is a true value. That’s why you have measurement uncertainty.

“Can be useful for WHAT PURPOSE?”

Better understanding of the data for one. Identifying a signal from the noise.

https://www.investopedia.com/terms/d/data-smoothing.asp

The paper in this article, the one you keep defending, uses smoothing of the aa index to remove the 11 year solar cycle.
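As a rough illustration of how smoothing can remove a periodic component, the sketch below applies a centered 11-point running mean to a synthetic series made of a linear trend plus an exact 11-year sinusoid. This is an assumption for illustration only: the filter actually used in the paper is not described in this thread, and the real solar cycle is not exactly 11 years long.

```python
import math

def centered_running_mean(values, window):
    """Centered running mean; `window` must be odd. Edges are dropped."""
    half = window // 2
    return [sum(values[i - half:i + half + 1]) / window
            for i in range(half, len(values) - half)]

# Hypothetical annual series: slow linear trend plus an 11-year cycle.
years = list(range(1900, 1966))
trend = [0.01 * (y - 1900) for y in years]
cycle = [math.sin(2.0 * math.pi * (y - 1900) / 11.0) for y in years]
series = [t + c for t, c in zip(trend, cycle)]

smoothed = centered_running_mean(series, 11)
# Compare with the trend at the same (center) years.
residual = max(abs(s - t) for s, t in zip(smoothed, trend[5:-5]))
print(residual < 1e-9)  # True
```

The cycle cancels because each window spans exactly one full period, while the linear trend passes through unchanged at the window center.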

Malarky!

You can’t even distinguish between Type A measurement uncertainty and Type B measurement uncertainty so how would you know if the definition of measurement uncertainty makes sense?

You can’t distinguish between averaging multiple measurements of the same thing and averaging single measurements of different things so how would you know if the definitions make sense or not?

Propagating measurement uncertainty does *NOT* require a function that gives you a measurand. MEASURING a measurand gives you a measurement uncertainty.

Propagating the components of an average DOES *NOT* imply the average is a measurand. The average is a statistical descriptor of the data – NO MEASURING INVOLVED!

Propagating the measurement uncertainty of the components of an average is, ITSELF, a statistical process, not a measurement process. It’s no different than Variance_total = Variance1 + Variance2.
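The variance-addition rule mentioned above can be sketched as follows, assuming uncorrelated components. The function name `combined_standard_uncertainty` is hypothetical. The optional sensitivity coefficients follow the general first-order propagation formula, in which each component's uncertainty is scaled by the partial derivative of the function with respect to that component; leaving them at 1 reproduces plain Variance_total = Variance1 + Variance2.

```python
import math

def combined_standard_uncertainty(uncertainties, sensitivities=None):
    """Root-sum-square of (c_i * u_i), assuming uncorrelated components."""
    if sensitivities is None:
        # Coefficients of 1 correspond to a plain sum of the components.
        sensitivities = [1.0] * len(uncertainties)
    if len(sensitivities) != len(uncertainties):
        raise ValueError("need one sensitivity coefficient per uncertainty")
    total_variance = sum((c * u) ** 2 for c, u in zip(sensitivities, uncertainties))
    return math.sqrt(total_variance)

# Two hypothetical components with standard uncertainties 3 and 4.
print(combined_standard_uncertainty([3.0, 4.0]))  # 5.0
```

Which coefficients apply to a given quantity is part of what is being disputed in this thread; the sketch only shows the arithmetic once they are chosen.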

You apparently can’t even tell the difference between “x_i” and “X_i” in the GUM so how can you speak to definitions?

Judas H. Priest! Averaging measurements is *NOT* making a measurement! It is used to estimate the value of the property being measured as determined from actual measurements. It is *NOT* a measurement of the property. And it *only* applies if the distribution of the actual measurements meets the requirements needed to make the average the “best estimate”. If the distribution of the measurements is skewed, for instance, the mode is a better estimate for the value of the property being measured.

If you take ten measurements of the length of a board the average of those measurements is not itself a measurement. Why is that so hard to understand? It is a statistical descriptor of the actual measurements and the standard deviation of those measurements is needed for a complete statistical description – and that is assuming that the measurements form a Gaussian distribution. If the measurements do not form a Gaussian distribution then the 5-number statistical descriptor is a far better method for analyzing the data – and the average is *NOT* part of the 5-number statistical descriptor.
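For reference, the five-number summary mentioned here (minimum, first quartile, median, third quartile, maximum) can be computed with Python's standard library. The helper name `five_number_summary` and the skewed sample are hypothetical, and quartile conventions vary; this sketch uses the "inclusive" method of `statistics.quantiles`.

```python
import statistics

def five_number_summary(values):
    """Return (min, Q1, median, Q3, max) using inclusive quartiles."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return min(values), q1, median, q3, max(values)

# Hypothetical right-skewed sample of repeated readings.
sample = [1, 1, 1, 2, 2, 3, 4, 6, 10]

print(five_number_summary(sample))
print(statistics.mode(sample), statistics.median(sample), statistics.fmean(sample))
```

For this skewed sample the mode, median, and mean are all different, with the mean pulled toward the long tail, which is the situation the comment describes.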

Do you understand what the old saying “the map is not the territory” is trying to get across? It’s not apparent that you do. You *are* trying to say that the average (a map) *is* the territory (the measurand). It’s just wrong.

“Malarky!”

You know you’ve lost the argument when you say that. You do it every time.

“You can’t even distinguish between Type A measurement uncertainty and Type B measurement uncertainty…”

Deflection. The definition is the same.

“Propagating measurement uncertainty does *NOT* require a function that gives you a measurand”

Citation required. Please give an example of propagating uncertainty without a function that gives you a measurand.

“The average is a statistical descriptor of the data – NO MEASURING INVOLVED!”

I’ll ask again. Just point to your definition of “measurement”.

Note that the GUM specifically says (1.3)

“Deflection. The definition is the same.”

The definition of a Type A and a Type B measurement uncertainty *IS* different. They are both specified as standard deviations but the definition *is* different.

Type A: 4.2.1 In most cases, the best available estimate of the expectation or expected value μ_q of a quantity q that varies randomly [a random variable (C.2.2)], and for which n independent observations q_k have been obtained under the same conditions of measurement (see B.2.15), is the arithmetic mean or average q̄ (C.2.19) of the n observations: (bolding mine, tpg)

Type B: 4.3.1 For an estimate x_i of an input quantity X_i that has not been obtained from repeated observations, the associated estimated variance u²(x_i) or the standard uncertainty u(x_i) is evaluated by scientific judgement based on all of the available information on the possible variability of X_i. (bolding mine, tpg)

For Pete’s sake – READ THE GUM FOR MEANING AND CONTEXT SOMETIME! It might take you a year based on your lack of reading comprehension skills but it would be time well spent.

“The definition of a Type A and a Type B measurement uncertainty *IS* different. ”

Then tell me what your definition is. It’s obviously not the one given in the GUM or by NIST.

Nothing you quote here is the definition of measurement uncertainty.

And stop with these petty insults if you want me to take your argument seriously.

You are averaging MEASUREMENTS of a single property, not properties of different things.

Because you are averaging different properties, not different measurements of the same property!

I don’t know why this is so hard to understand. The temperature of rock A is a totally different property from the temperature of rock B. Adding the two temperature properties, rockA temp + rockB temp, together does *NOT* provide any physically meaningful value.

Take rockA at 4g and 80F. Break it in half. Suddenly the average mass becomes 2g, not 4g. But the average temperature remains 80F. Now break each half in half again. The average mass is now 1g but the average temperature is still 80F.

Now do the same for rockB at 8g and 60F. Then shove all the pieces of rocks together in a pile. What do you get?

rockA: 4 pieces at 1g each and 80F each

rockB: 4 pieces at 2g each and 60F each

If I put all 8 pieces on a scale I’ll get a total of 12g. An average mass of 1.5g.

If I stick a thermometer into the pile of rock pieces what will it read? 80F? 70F? 60F? 75F? 140F? 560F?

If it reads 70F then what is the average temperature? 70F/8 = 8.8F?

If it reads 80F then what is the average temperature? 80F/8 = 10F?

If it reads 60F then what is the average temperature? 60F/8 = 7.5F?

You’ll only get an AVERAGE temperature of 70F if the thermometer reads 560F!

Wanna bet on whether the thermometer will read 560F?
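The arithmetic in the rock example can be laid out as a short sketch. The masses and temperatures come from the example above. The final mass-weighted figure is an added assumption, not something stated in the comment: it supposes an isolated pile, equal specific heat for both rocks, and no heat exchange with the surroundings.

```python
# Each piece is (mass in grams, temperature in deg F), taken from the example above.
pieces = [(1.0, 80.0)] * 4 + [(2.0, 60.0)] * 4

# Mass is extensive: the total is physically the mass of the pile.
total_mass = sum(m for m, _ in pieces)
mean_mass = total_mass / len(pieces)

# Temperature is intensive: the plain sum is just a number on paper.
sum_of_temps = sum(t for _, t in pieces)
mean_of_temps = sum_of_temps / len(pieces)

# ASSUMPTION (not in the comment): idealized equilibrium of an isolated pile
# with equal specific heats, i.e. a mass-weighted mean of the temperatures.
mixing_temp = sum(m * t for m, t in pieces) / total_mass

print(total_mass, mean_mass)        # total and mean mass of the pieces
print(sum_of_temps, mean_of_temps)  # arithmetic sum and mean of temperatures
print(round(mixing_temp, 2))        # idealized equilibrium under the assumption
```

Under these assumptions a thermometer reading and the arithmetic mean of the piece temperatures are separate quantities, and neither requires dividing a single reading by the number of pieces.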

If you don’t put the rock pieces in a pile then what does the “average value” mean physically?

Put all the pieces spaced equally around a circle, alternating between 80F and 60F. Is the average temperature going to be found at the center of the circle? Between adjacent pieces along the circle circumference? Somewhere outside the circle? Exactly what will the average temperature be? 80F? 70F? 60F? 75F? 140F?

Temperature is *NOT* an extensive property, either implicitly or explicitly. The mean of a set of intensive properties is *NOT* an extensive property.

This kind of logic means you could create two piles of rock made from rockA + rockB, and rockC + rockD. And the average value of the temperature of the two piles could be found from the average value of each pile.

But if you can’t define the average temperature of each pile then how do you find the average value of the two piles?

Stop trying to define physical science when you have absolutely no idea of how it works.

How do you find the average temperature over an area? You don’t even understand the implicit and unstated assumptions necessary to find the average temperature over an area. Atmospheric temperature has many independent variables including elevation, wind, humidity, cloudiness, terrain, geography, ground cover, etc. Any variation in any of the variables means a different temperature profile between locations.

Climate science, and you apparently, just assume a flat earth with a homogeneous atmosphere and surface with no variation in cloudiness, humidity, terrain, geography, etc. Blackboard statisticians and computer programmers do it this way!

Average temperature times area is *NOT* meaningful unless you can define the average temperature. Since temperature is an intensive property finding an “average” temperature is doomed to failure.

Unless you can define the 2D temperature profile functional relationship then what are you going to integrate? Besides, this should be a 3D temperature profile since elevation is an independent variable in the temperature functional relationship.

The functional relationship you would get would be a GRADIENT MAP for temperature. And the gradient between two points can’t be assumed to be linear. Have you ever laid out a long distance hike using a topographical map? Not all elevation changes are 45deg slopes. For temperature the mid-point temperature between two locations is *not* always an average value.
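The point about non-linear gradients can be illustrated with a small sketch. The exponential profile below is purely hypothetical (it is not derived from any station data); it only shows that, for a curved profile, the temperature at the midpoint between two locations need not equal the average of the two endpoint temperatures.

```python
import math


def temperature_at(x_km):
    """HYPOTHETICAL profile: 80F at x = 0, decaying toward 60F with distance."""
    return 60.0 + 20.0 * math.exp(-x_km / 10.0)


t_start = temperature_at(0.0)
t_end = temperature_at(100.0)

# Linear-interpolation guess for the midpoint
endpoint_average = (t_start + t_end) / 2.0

# What the assumed profile actually gives at the midpoint
t_midpoint = temperature_at(50.0)

print(f"endpoint average: {endpoint_average:.2f}F")
print(f"profile midpoint: {t_midpoint:.2f}F")
```

With a straight-line profile the two numbers would coincide. The gap here comes entirely from the assumed curvature.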

“You are averaging MEASUREMENTS of a single property, not properties of different things.”

That’s not an answer to my question.

“Because you are averaging different properties, not different measurements of the same property!”

Again not an answer. And I specifically asked about the sum, not the average.

“Adding the two temperature properties, rockA temp + rockB temp, together does *NOT* provide any physically meaningful value.”

Again, what physically meaningful value do you get if you add two measurements of the same rock?

Now you are just being a troll.

“That’s not an answer to my question.”

Of course it is. Multiple measurements of the same thing *ARE* different from single measurements of different things.

“Again not an answer. And I specifically asked about the sum, not the average.”

Just because you can add numbers on a blackboard does *NOT* make the sum physically meaningful.

You are stuck in blackboard statistical world. Join the rest of us in the real world someday.

You have already admitted that you can’t add the values of the intensive property of multiple objects. If you can’t add them then you can’t find an average.

“Again, what physically meaningful value do you get if you add two measurements of the same rock?”

Again, for the umpteenth time: multiple measurements of the same thing is *NOT* the same as single measurements of different things. Neither you nor bdgwx seem to be able to grasp the difference.

Averaging ten measurements of the crankshaft journal of an engine is *NOT* the same thing as averaging ten single measurements of ten different crankshaft journals on ten different engines.

Averaging the ten measurements of the same thing can be used as a “best estimate” for the property of the SINGLE MEASURAND (if all requirements for doing so are met).

Averaging the ten measurements of ten different things is *NOT* the best estimate of anything!

Averaging extensive properties works because the property scales with system size: combining two separate systems is additive. Intensive properties do *not* sum with system size, so you cannot get the value for a combined system by adding the values of the two separate systems. 1 gram + 1 gram = 2 grams. T1 K combined with T2 K is *NOT EQUAL* to (T1 + T2) K.

Degree-days are *NOT* an average over time. An average would be degrees/time, not degree-time. Degree-days are a *SUM* over time. Degree-days are the AREA under the temperature profile, not an average value of the temperature profile. And the sum is for ONE measurand, not for multiple measurands.

As I said, stop trying to lecture on physical science. You can’t even differentiate between degree-time and degrees/time.

“Degree-days are *NOT* an average over time.”

I didn’t say they were. What I said is they are an extensive property. That’s why you can add them, and get something meaningful.

“Degree-days are the AREA under the temperature profile”

That’s what I said.

“And the sum is for ONE measurand, not for multiple measurands.”

So the sum of all daily degree days over a year is a single measurand. Good. Now what do you get when you divide that single measurand by the number of days?

Really? You didn’t say: “The same if you are averaging over time, units are temperature times time. e.g. degree days.”

It sure looks like you are saying “averaging” is temperature times time.

Averaging would *NOT* be temperature times time, it would be temperature divided by time.

T * time ≠ T/time

No, it isn’t. You tried to equate “averaging” temperature with calculating degree-days.

You just can’t get this into your head, can you?

Multiple measurements of the same thing is *NOT* the same as single measurements of different things.

If you are summing the area under the temperature profile FOR A SINGLE MEASURAND (i.e. measurement station), then degree-year is a valid value.

That is *NOT* the same thing as creating a temperature profile using single temperature measurements from 365 different measuring stations measuring different measurands.

You *could* find the degree-year values for each of the different measuring stations and sum them to get a total degree-day value for the entire system. Ask yourself why climate science doesn’t do this to get a total global degree-year value and use that to track “climate change”. The data needed to do this has been available for over 40 years. Why not use it?

“Really? You didn’t say: “The same if you are averaging over time, units are temperature times time. e.g. degree days.””

Sorry if I keep overestimating your ability to read. That sentence has to be read in the context of the previous point. Hence why I say “the same”. I am describing averaging over time by using units of temperature × time, not that temperature × time is the average.

“Averaging would *NOT* be temperature times time, it would be temperature divided by time.”

Try some dimensional analysis. What are the units of the average? If the average is temperature divided by time you have units of temperature divided by time. What would that mean?
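As a minimal sketch of the dimensional analysis being discussed, assume a continuous temperature profile T(t) at one station over an interval Δt. The symbols D and T̄ are introduced here only for illustration, and no base temperature is subtracted as it would be for heating or cooling degree-days.

```latex
D = \int_{t_0}^{t_0+\Delta t} T(t)\,dt
\quad [\text{degree}\cdot\text{day}],
\qquad
\bar{T} = \frac{D}{\Delta t}
\quad \left[\frac{\text{degree}\cdot\text{day}}{\text{day}} = \text{degree}\right]
```

On this reading the sum carries units of temperature × time, and dividing it by the elapsed time returns plain temperature units, not temperature per unit time.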

“Multiple measurements of the same thing is *NOT* the same as single measurements of different things. ”

I’m asking if you think the sum of all degree days over the year is a single measurand, or the combination of multiple measurands.

My answer would be it’s both. Just as the average of all max temperatures in May can be an average of multiple measurands and a single measurand.

“If you are summing the area under the temperature profile FOR A SINGLE MEASURAND (i.e. measurement station),…”

We’ve been here before, but a measurement station is not a measurand. A measurand is a specific quantity you are measuring, not the instrument used to measure it. And the measurand in this case is either a specific temperature, or the average temperature over a period of time, or the total temperature times time.

“That is *NOT* the same thing as creating a temperature profile using single temperature measurements from 365 different measuring stations measuring different measurands.”

So you are saying average temperature over time is OK, but not average temperature over area? I really think you need some self reflection. Try to consider what you want to say and then ask yourself if it is logically consistent.

“You *could* find the degree-year values for each of the different measuring stations and sum them to get a total degree-day value for the entire system.”

That wouldn’t make sense. You need to average the stations, preferably area weighted. Otherwise you are saying you can increase the total value for the system just by increasing the number of stations.
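The claim about station count can be checked with a small sketch. The station values and cell areas below are hypothetical, and `area_weighted_mean` is an illustrative helper, not a function from any climate dataset's tooling. The sketch compares a plain sum across stations with an area-weighted mean when the same region is sampled by twice as many stations.

```python
def area_weighted_mean(values, areas):
    """Mean of station values weighted by the area each station represents."""
    total_area = sum(areas)
    if total_area <= 0:
        raise ValueError("total area must be positive")
    return sum(v * a for v, a in zip(values, areas)) / total_area


# HYPOTHETICAL region: two stations, each standing in for a cell of given area
values = [60.0, 80.0]
areas = [3.0, 1.0]
base_sum = sum(values)
base_mean = area_weighted_mean(values, areas)

# Same region, but each cell is now covered by two stations reporting the
# same value, each representing half of the original cell area.
dense_values = [60.0, 60.0, 80.0, 80.0]
dense_areas = [1.5, 1.5, 0.5, 0.5]
dense_sum = sum(dense_values)
dense_mean = area_weighted_mean(dense_values, dense_areas)

print(base_sum, dense_sum)    # the plain sum changes with station count
print(base_mean, dense_mean)  # the area-weighted mean does not
```

In this toy case the sum doubles while the weighted mean is unchanged. Whether the weighted mean is a physically meaningful quantity is the separate question argued in the thread.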

I gave you the dimensional analysis. degree-time is *NOT* the same dimension as degree/time.

How can you be so dense?

If Day1 has a 10,000 degree-day value and Day2 has a degree-day value of 20,000 what do you think you are doing by adding the two values?

IT IS THE SAME THING AS ADDING UP ALL THE DEGREES OVER 48 HOURS INSTEAD OF 24 HOURS.

It’s the area under the temperature profile curve over an interval of 48 hours. Not divided by 48 hours. Just a straight sum of the degrees. Not an average. (degree-days + degree-days) is *NOT* degree-days/days
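A minimal sketch of the 48-hour example, assuming hourly readings at a single station and approximating the area under the profile with a simple hourly sum. The temperatures are hypothetical and `degree_days` is an illustrative helper. No base temperature is subtracted, which matches how the term is used in this thread rather than the heating/cooling degree-day convention.

```python
def degree_days(hourly_temps):
    """Approximate area under the temperature profile, in degree-days.

    Each hourly reading is treated as holding for 1/24 of a day.
    """
    return sum(hourly_temps) / 24.0


# HYPOTHETICAL hourly profiles for two consecutive days at one station
day1 = [50.0] * 12 + [70.0] * 12
day2 = [55.0] * 12 + [75.0] * 12

dd_day1 = degree_days(day1)
dd_day2 = degree_days(day2)

# Area under the combined 48-hour profile equals the sum of the daily areas
dd_48h = degree_days(day1 + day2)

# Dividing the 48-hour area by the elapsed time (2 days) gives plain degrees
mean_temp_48h = dd_48h / 2.0

print(dd_day1, dd_day2, dd_48h, mean_temp_48h)
```

The sketch keeps the two operations separate: adding daily degree-day values extends the area to a longer interval, while dividing by the interval length is a distinct step with different units.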

The sum of all degree-day values over a year is a SINGLE VALUE. It is *NOT* a measurement. It is the sum of a set of measurements. Why is this so hard for you to understand? Yes, it will be a large number. SO WHAT? It would *not* be an average, just a sum. In principle it would be no different than adding up all the masses of objects in the asteroid belt in our solar system. It would *NOT* be an average, it would be a sum. And you could compare that sum to the sum of an asteroid belt in a different solar system.

“My answer would be it’s both. Just as the average of all max temperatures in May can be an average of multiple measurands and a single measurand.”

You still haven’t properly internalized TN1900! “all max temperatures in May” is MULTIPLE MEASUREMENTS OF THE SAME THING – i.e. a single measurand – multiple measurements. It is *not* multiple measurands.

Like I keep saying, neither you nor bdgwx can differentiate between multiple measurements of a single measurand and single measurements of multiple measurands.

You are kidding, right? What do you think a measurement station is measuring?

As usual, you are trying to expound on physical science with absolutely no understanding of physical science at all!

If you take 10 temperature measurements of a water bath you can average those readings to get a “best estimate” of the temperature of the water bath. MULTIPLE READINGS OF A SINGLE MEASURAND. Multiple measurements of an intensive property for a single measurand.

Temperature measurements “over an area” are SINGLE MEASUREMENTS OF MULTIPLE MEASURANDS. And to make it worse it’s single measurements of an intensive value which can’t be added to find an average, be it an average over an area or an average over time!

Why do you need to average the stations? You say you need to but you give no reason for why.

There is no reason why I can’t find the degree-day value for yesterday from my measurement station and compare it to the degree-day value for yesterday at a measurement station a mile down the road. I don’t need to use “area” at all. Thus no “area weighting” needed.

You have been seduced by the idiocy of climate science assuming that temperature, an intensive property, is not only correlated between locations but is also EQUAL – thus you can infill temperatures at StationA with values from StationB whether the stations are 10 feet apart or 400 miles apart! It’s based on assuming that the average value of temperature will be the same over an area. It *is* idiocy.