Guest essay by Michael Cochrane

Of the many important issues clamoring for the attention of world policy-makers and government officials, global climate change is among the most controversial. Its existence, likely effects on the environment, probable causes, and possible solutions have all been highly politicized. Many on the political left consider it the defining issue of our time, while many on the political right are highly critical of what they perceive as “junk” science undergirding the assertion of dangerous anthropogenic (human caused) global warming (AGW).

Policy solutions undertaken to deal with AGW, on the premise that it is dangerous (DAGW), will have massive monetary and non-monetary costs to society. Such high costs demand a correspondingly high degree of certainty regarding the likelihood of possible future scenarios. In particular, the scientific community must be able to show, with a very high level of confidence, that reducing or ceasing human activity associated with greenhouse gas emissions will stop or reverse global warming. If this is not possible, policymakers should assume that DAGW is essentially unstoppable and consider defensive measures (adaptation) as the most prudent policy approach.

One way to cut through the noise on climate change and help policy makers think clearly and rationally about governmental responses to the risks of global warming is to develop a risk or decision-scenario model. This can help senior policy makers explore alternative scenarios and decision outcomes before actually taking steps to commit national resources or enter into agreements with lasting economic effects.

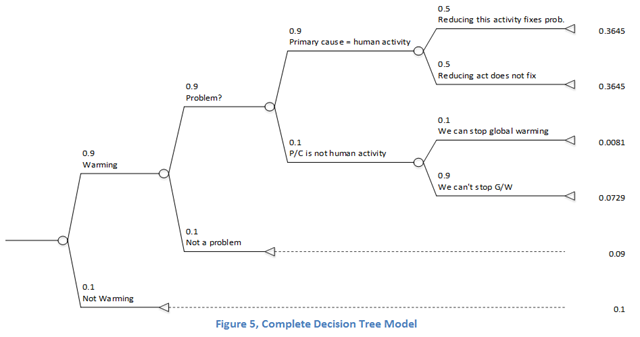

Probability trees are valuable tools for modeling the potential outcomes of a succession of events. Each path along the tree from the origin to a terminal node—from root to tip of branches—describes one of potentially dozens of possible outcomes. Each node in the tree describes a possible event with two (or more) outcomes having a given probability of occurrence. A scenario is described by tracing a sequence of events along a path from the origin to a terminal node. The likelihood or probability associated with each path or scenario is found by multiplying the probabilities of all the event nodes along that path. The total probability for all possible paths described on the tree should sum to one.
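The multiplication rule described above can be sketched in a few lines of Python. The tree here is a hypothetical two-event example, not the essay's model; the path names and branch probabilities are assumptions chosen only to illustrate the arithmetic.

```python
from math import prod

# Hypothetical two-event tree (illustrative values, not from the essay).
# Each path lists the branch probabilities met from the origin to a terminal node.
paths = {
    "A then C": [0.7, 0.6],
    "A then not C": [0.7, 0.4],
    "not A": [0.3],
}

def path_probability(branch_probs):
    """Multiply the branch probabilities along a single path."""
    return prod(branch_probs)

scenario_probs = {name: path_probability(p) for name, p in paths.items()}
total = sum(scenario_probs.values())

for name, p in scenario_probs.items():
    print(f"{name:14s} {p:.2f}")
print(f"{'total':14s} {total:.2f}")
```

If the branch probabilities at every node sum to one, the scenario probabilities do too, which makes the total a quick sanity check on any tree.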

Building a Climate Change Policy Decision Model

The following climate change policy model is designed to help us explore a range of scenarios associated with different answers to a sequence of questions fundamental to the issue of global warming and that must be addressed prior to proposing policy solutions. The questions are:

1. Is the earth actually warming? A summary of modern climate history suggests that from 1979 to the present there has been “a large disparity between surface thermometers, which show a fairly strong warming, and independent temperature readings of satellites and balloons, which show little warming trend” (Singer & Avery, 2007, p. xv). Rigorous data analysis (Heller, 2016) and application of statistical methods that control for heteroskedasticity and autocorrelation (McKitrick & Vogelsang, 2014) suggest that apart from a step-wise change in global average temperature (GAT) around 1977 there has been no statistically significant warming trend since ~1958 or earlier.

2. If the earth is warming, is this actually a problem? There is some disagreement over this question, with global warming activists citing the potential for rising sea levels, more extreme weather events, famines and other catastrophes. Other scientists, however, argue that rising atmospheric CO2 levels and warming attributable to it may actually have net beneficial effects such as longer growing seasons, expanded growing ranges, increased plant growth, increased food production, and reduced morbidity and mortality from cold snaps. (Davis, 2005) (Singer & Avery, 2007)

3. If the earth is warming, and this is a problem, to what extent is human activity causing the warming? This question gets at the heart of the issue. Many environmental activists think human activity (i.e., burning of fossil fuels and other activities that generate CO2 and other so-called “greenhouse gases”) is the primary cause of global warming, while others believe warming is largely or wholly a natural, cyclical phenomenon primarily caused by solar cycles, ocean current cycles, and the eccentricities of the earth’s axial tilt and orbit. (Singer & Avery, 2007)

4. If human activity is the primary cause of global warming, will reducing this activity also reduce global warming? The assumption undergirding environmental policies such as the (now obsolete) Kyoto Protocol, the “Clean Power Plan” in the U.S., and the global climate agreement negotiated in Paris in late 2015 is that if anthropogenic CO2 causes warming, then reducing the output of anthropogenic CO2 will retard the warming trend. Many scientists reject the deterministic view of the relationship of anthropogenic CO2 and climate change assumed by such policies, arguing that there is a high degree of uncertainty associated with understanding the effects of changes in atmospheric CO2. (Posmentier & Soon, 2005)

5. If human activity is not the primary cause of global warming, is it still possible to stop it? Posing this question acknowledges the existence and the problem of climate change, but forces consideration of alternative solutions.

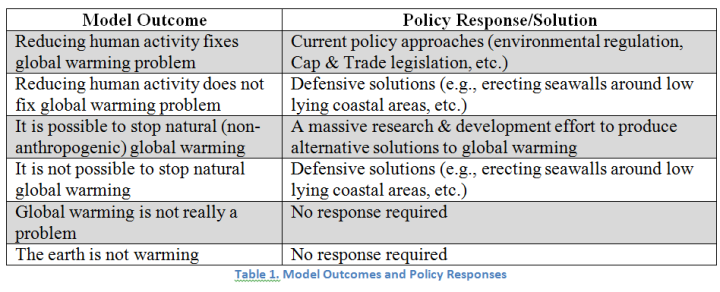

Each of the five questions is modeled in the probability tree (Figure 1) by an event node with two possible outcomes. There are six unique paths through the tree resulting in a total of six outcomes. Four of the outcomes require some type of policy response or proposed solution. Table 1 lists the model outcomes along with a suggested high-level policy approach or solution strategy.

The next step in preparing the probability tree model is to assign probabilities to each of the five events in the tree. This might best be done by eliciting estimates from subject matter experts, who should be able to ground their estimates in an understanding of the current state of knowledge in their field and offer persuasive empirical evidence for any hypotheses or theories underlying their judgments. However, the beauty of a decision model is that one can still learn a great deal about appropriate policy responses by experimenting with a range of different probabilities and observing the degree to which the outcome values are sensitive to changes in the event probabilities.

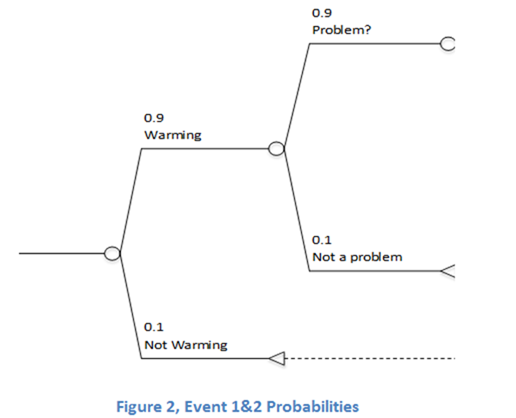

Figure 2 shows the probabilities associated with the first two questions. Satellite and radiosonde data show no statistically significant warming over the last twenty years, and warming since ~1960 may be limited largely to a stepwise rise around 1977 with no long-term trend either before or after (McKitrick & Vogelsang, 2014). Even so, much of the scientific community argues that the earth has generally been warming over the last several decades. We have, therefore, elected to assign a probability of 0.9 (near certainty) that the earth is warming.

There is no broad consensus regarding the degree to which global warming will be a problem for humanity, and palaeoclimatologists and palaeoecologists such as Hubert H. Lamb have presented evidence that warmer periods were healthier for all kinds of life on Earth, including humans (Lamb, 1965). Nevertheless, the prevailing opinion among environmentalists and climate activists today seems to be that, on balance, a warmer environment would be a net negative outcome for the world. So again, to be conservative in our estimates, we have assigned a probability of 0.9 that global warming is a problem. Figure 2 now shows events one and two in sequence, with their associated assumed probabilities.

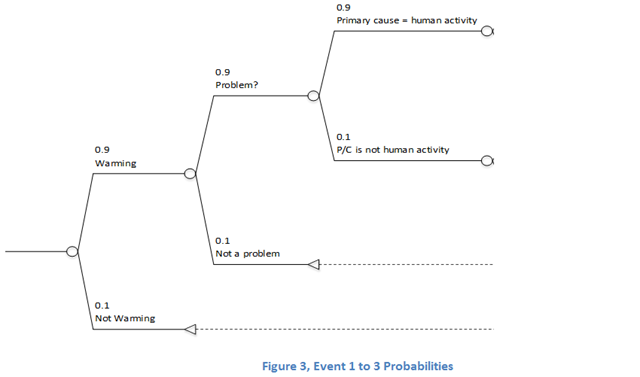

The issue of whether global warming is blamed on what is called anthropogenic carbon dioxide forcing (i.e., human activities such as burning of fossil fuels) seems to be more of a political than a scientific one. Most scientists appear to acknowledge that human-based greenhouse gas emissions contribute something to climate change, but the key question is whether anthropogenic CO2 forcing is the primary cause. There is debate over whether there is a strong scientific consensus on this question. However, for purposes of this initial modeling effort, we will give the benefit of the doubt to anthropogenic CO2 forcing and assign it a high probability of 0.9. Figure 3 shows the first three events in the probability tree.

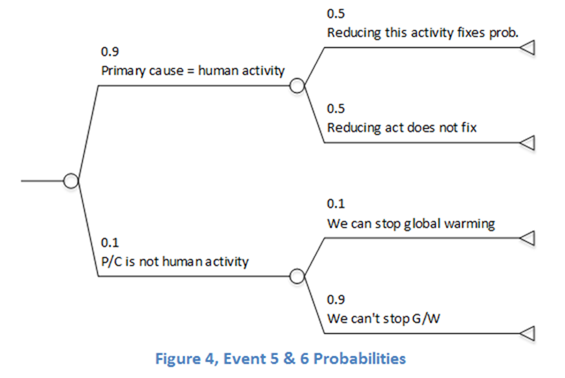

If human activity is the primary cause of global warming, the next to last event models the question, “Does reducing the level of human induced greenhouse gas emissions retard or reverse the advance of global warming?” If human activity is not the primary cause of global warming, the final event models the question, “Is it still possible to stop global warming?” Figure 4 illustrates how we would model these questions in our decision tree.

We assign a probability of 0.5 to event four to reflect the level of uncertainty and indifference in the scientific and policy community about whether this is actually possible. We will eventually perform a sensitivity analysis on this probability distribution.

If the primary causes of global warming have nothing to do with human activity, it is highly likely we will be unable to intervene effectively in the complex climate system to reverse a warming trend. Therefore we assign a very low probability of 0.1 to event five.

In the completed model, we multiply the probabilities for each event along each of the six paths through the tree.[1] The resulting probability distribution at the six triangular terminal nodes reflects the relative significance of each of the outcomes.
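As a rough check on the completed model, the six path probabilities can be reproduced from the five event probabilities assigned above. This is a minimal sketch: the tree structure is inferred from the five questions, and the outcome labels are shorthand introduced here, not the wording of Figure 1 or Table 1.

```python
# Event probabilities as assigned in the essay.
P_WARMING = 0.9       # event one: the earth is warming
P_PROBLEM = 0.9       # event two: warming is a problem
P_HUMAN = 0.9         # event three: human activity is the primary cause
P_REDUCE_WORKS = 0.5  # event four: reducing human activity reduces warming
P_CAN_STOP = 0.1      # event five: non-human-caused warming can still be stopped

def outcome_probabilities(p1, p2, p3, p4, p5):
    """Multiply conditional probabilities along each of the six paths."""
    return {
        "not warming": 1 - p1,
        "warming, not a problem": p1 * (1 - p2),
        "human-caused, reduction works": p1 * p2 * p3 * p4,
        "human-caused, reduction fails": p1 * p2 * p3 * (1 - p4),
        "not human-caused, can be stopped": p1 * p2 * (1 - p3) * p5,
        "not human-caused, cannot be stopped": p1 * p2 * (1 - p3) * (1 - p5),
    }

outcomes = outcome_probabilities(
    P_WARMING, P_PROBLEM, P_HUMAN, P_REDUCE_WORKS, P_CAN_STOP
)
for name, p in outcomes.items():
    print(f"{name:36s} {p:.4f}")
print(f"{'total':36s} {sum(outcomes.values()):.4f}")
```

The two human-caused outcomes each come to about 0.36, and the six values sum to one, as a complete tree should.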

Interpreting Model Results

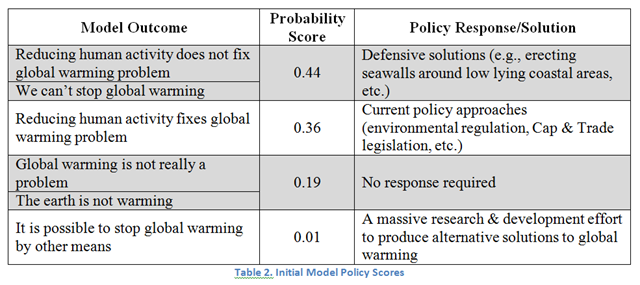

Because we have already associated likely policy solutions with four of the six model outcomes, we can now associate the relative probabilities (or likelihood of occurrence) with the policy responses. In Table 2, we can see that, although current policy approaches and defensive measures both score the same if human activity is the primary cause of global warming, defensive measures are also an important response to the relatively small probability that we cannot stop global warming (given that it is not caused by human activity). Consequently, we combine these two scores for the Defensive Solutions policy response for a probability of 0.44. The most appropriate policy solutions given this particular model would be those that direct national resources toward measures to defend the country against potentially devastating effects of climate change, e.g., rising sea levels or more frequent or severe droughts.
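The combined score can be checked with a short calculation. This sketch assumes, following the paragraph above, that current policy approaches map to the outcome where reducing human activity works, and that defensive solutions map to both outcomes where warming cannot be stopped. The variable names are illustrative.

```python
# Event probabilities from the essay (events one through five).
p1, p2, p3, p4, p5 = 0.9, 0.9, 0.9, 0.5, 0.1

# Terminal-node probabilities for the three outcomes that matter here.
human_reduction_works = p1 * p2 * p3 * p4
human_reduction_fails = p1 * p2 * p3 * (1 - p4)
not_human_unstoppable = p1 * p2 * (1 - p3) * (1 - p5)

# Policy scores: defensive solutions cover both "cannot be stopped" outcomes.
current_policy_score = human_reduction_works
defensive_score = human_reduction_fails + not_human_unstoppable

print(f"current policy approaches: {current_policy_score:.4f}")
print(f"defensive solutions:       {defensive_score:.4f}")
```

With these inputs the scores come to roughly 0.36 and 0.44, matching the combined figure quoted in the text.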

[1] The compound event probability distribution generated by the probability tree is based on the assumption that each of the event probabilities following the initial one is a conditional probability based on the prior event outcome.

Sensitivity Analysis

The probability scores for the top two policy responses are fairly close. So one of the first questions we must ask is, “How would the event probabilities in the model have to change for these scores to be equal?” Since the model is formulated in Microsoft© Excel™ we can use the “goal seek” function in the data tools menu to explore this possible outcome. We set the terminal probability score for “current policy approaches” to 0.4 and let the goal seek algorithm find the corresponding probability distribution for event four (reducing human activity). The model outcome “reducing human activity fixes global warming problem” must have an assigned probability of 0.55 or higher for current policy approaches to equal or exceed the scores for defensive solutions (assuming all other probabilities in the model are held constant). This is clearly visible in Figure 6, which shows that these two general policy approaches are highly sensitive to changes in the probability that reducing carbon-dioxide-emitting human activities also reduces global warming.
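For readers without a spreadsheet, the same break-even point can be found with a simple bisection search. This is a sketch that stands in for Excel's goal seek rather than reproducing it; the function names are hypothetical, and all probabilities other than event four are held at the essay's values.

```python
def scores(p4, p1=0.9, p2=0.9, p3=0.9, p5=0.1):
    """Return (current policy score, defensive score) for a given event-four probability."""
    current = p1 * p2 * p3 * p4
    defensive = p1 * p2 * p3 * (1 - p4) + p1 * p2 * (1 - p3) * (1 - p5)
    return current, defensive

def break_even_p4(lo=0.0, hi=1.0, tol=1e-9):
    """Bisection on the score gap: find p4 where the two scores are equal."""
    while hi - lo > tol:
        mid = (lo + hi) / 2
        current, defensive = scores(mid)
        if current < defensive:
            lo = mid   # current policy still trails, so the crossover lies higher
        else:
            hi = mid
    return (lo + hi) / 2

p4_star = break_even_p4()
current_at_star, defensive_at_star = scores(p4_star)
print(f"break-even p4: {p4_star:.4f}")
print(f"scores there:  {current_at_star:.4f} vs {defensive_at_star:.4f}")
```

The search converges on an event-four probability of about 0.55, where both scores are close to 0.40, consistent with the goal seek result described above.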

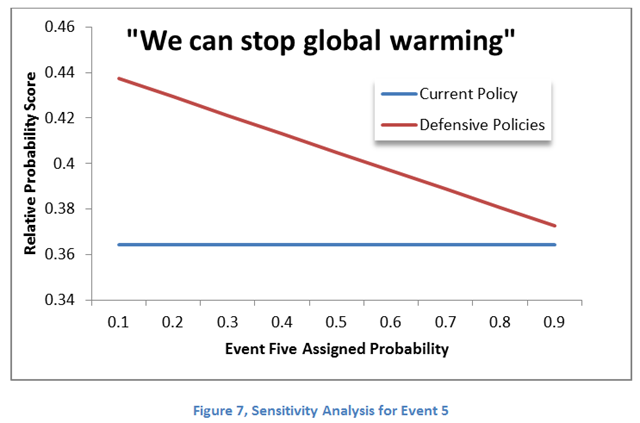

Figure 7 shows the degree of sensitivity of the two major policy approaches to changes in the probabilities for event five. There is no point across the range of probabilities for this event at which the probability score for defensive solutions drops below that of current policy approaches. This is because the defensive solutions policy is also the appropriate response for the outcome in which global warming cannot be reversed given that human activity is not a primary contributor.
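The event-five sweep is easy to reproduce. The sketch below steps the event-five probability from 0 to 1 while holding the other probabilities at the essay's values; the function name and the step size of 0.1 are choices made here for illustration.

```python
def scores_for_p5(p5, p1=0.9, p2=0.9, p3=0.9, p4=0.5):
    """Return (current policy score, defensive score) for a given event-five probability."""
    current = p1 * p2 * p3 * p4
    defensive = p1 * p2 * p3 * (1 - p4) + p1 * p2 * (1 - p3) * (1 - p5)
    return current, defensive

# Sweep event five across its full range in steps of 0.1.
sweep = [(i / 10, *scores_for_p5(i / 10)) for i in range(11)]
for p5, current, defensive in sweep:
    print(f"p5={p5:.1f}  current={current:.4f}  defensive={defensive:.4f}")

never_below = all(defensive >= current for _, current, defensive in sweep)
print("defensive never below current:", never_below)
```

The current policy score does not depend on event five at all, so the defensive score can only approach it from above, with the two meeting when event five is certain.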

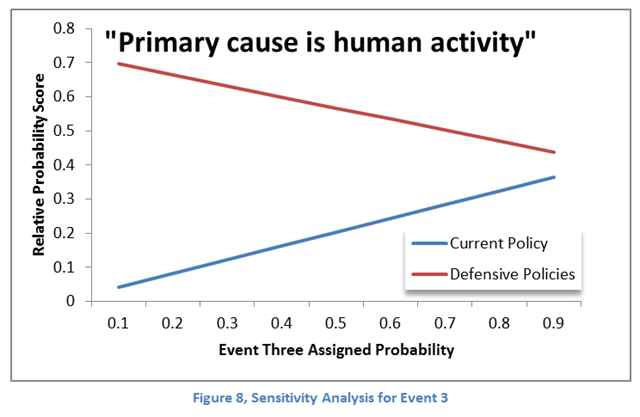

Figure 8 addresses model sensitivity to the probability associated with event three: whether human activity is the primary cause of global warming. As with event five, the model is insensitive to changes in event probability across the range of possible probability settings (again assuming all other event probabilities are held constant).
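A similar sweep over event three shows why the ranking does not change. In this sketch, which again holds the other probabilities at the essay's values and uses a hypothetical function name, the gap between the two scores is tracked directly.

```python
def scores_for_p3(p3, p1=0.9, p2=0.9, p4=0.5, p5=0.1):
    """Return (current policy score, defensive score) for a given event-three probability."""
    current = p1 * p2 * p3 * p4
    defensive = p1 * p2 * p3 * (1 - p4) + p1 * p2 * (1 - p3) * (1 - p5)
    return current, defensive

gaps = []
for i in range(11):
    p3 = i / 10
    current, defensive = scores_for_p3(p3)
    gaps.append(defensive - current)
    print(f"p3={p3:.1f}  current={current:.4f}  defensive={defensive:.4f}")

ranking_unchanged = all(gap >= 0 for gap in gaps)
print("defensive never below current:", ranking_unchanged)
```

With event four at 0.5 the two human-caused terms cancel, so the gap reduces to the probability of the "not human-caused, cannot be stopped" outcome, which is never negative.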

Varying the probabilities of events one and two has no bearing on the relative movement of the outcome probabilities scores we have been observing. This is because any change in these probabilities is applied uniformly across all the event probabilities downstream from these nodes.
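The uniform scaling can be seen by factoring the first two probabilities out of both policy scores. The sketch below uses a hypothetical helper and a few arbitrary upstream values (assumptions for illustration) to show that the ratio of the two scores stays fixed.

```python
def policy_scores(p1, p2, p3=0.9, p4=0.5, p5=0.1):
    """Return (current policy score, defensive score); p1 * p2 is a common factor."""
    upstream = p1 * p2
    current = upstream * p3 * p4
    defensive = upstream * (p3 * (1 - p4) + (1 - p3) * (1 - p5))
    return current, defensive

# Arbitrary upstream settings, chosen only to illustrate the invariance.
ratios = []
for p1, p2 in [(0.9, 0.9), (0.5, 0.9), (0.9, 0.3), (0.2, 0.2)]:
    current, defensive = policy_scores(p1, p2)
    ratios.append(defensive / current)
    print(f"p1={p1}, p2={p2}: current={current:.4f}, "
          f"defensive={defensive:.4f}, ratio={defensive / current:.3f}")
```

Both scores shrink or grow together, so the defensive score stays about 1.2 times the current policy score regardless of the values chosen for events one and two.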

Conclusions

The degree to which reducing anthropogenic CO2 forcing correspondingly retards global warming is the major driver of policy solutions. Our model shows that it does not matter whether human activity is actually the primary cause of global warming (90% probability). The currently advocated global environmental policies exemplified by the Kyoto Protocol, Cap and Trade laws, and the recently negotiated Paris climate agreement assume a deterministic relationship between global warming and anthropogenic CO2 forcing that operates in both directions. In other words, if humans caused it by their activity, they can “un-cause” it by reducing or ceasing that activity. This may not be the case. Our modeling effort suggests that we must be at least 55% certain that reducing human activity will cause a corresponding reduction in global warming before we even consider the current regulatory policies advocated by the environmental movement.

It should also not be overlooked that, even assuming a model heavily weighted toward problematic global warming caused by human activity, there is still an almost twenty percent probability that warming either is not happening or won’t be a significant problem if it is.

The cost of policy solutions undertaken to deal with the threat of climate change will be massive and will entail both monetary and non-monetary costs to society. Any benefit to society from such policies must be weighed against those costs. Not wanting to overly complicate an initial policy model, we intend to explore such a cost/benefit analysis in future research.

The likely high costs of climate change mitigation policies demand a correspondingly high degree of certainty regarding the likelihood of possible future scenarios. In particular, the scientific community must be able to show, with a very high level of confidence, that reducing or ceasing human activity associated with greenhouse gas emissions will reverse global warming. If this is not possible, policymakers should assume that global warming is essentially unstoppable and consider defensive measures as the most prudent policy approach.

Michael Cochrane, Ph.D., Engineering Management and Systems Engineering, is Founder of Value Function Analytics, a consulting firm that helps clients achieve their objectives by helping them to think about values. Also a writer with World News Group, he has expertise in statistical modeling and analysis.

Works Cited

Davis, R.E. (2005). Climate Change and Human Health. In P.J. Michaels, Shattered Consensus: The True State of Global Warming (pp. 183–209). Lanham, MD: Rowman & Littlefield.

Heller, T. (2016). Evaluating the Integrity of Official Climate Records. Paper presented at the annual meeting of Doctors for Disaster Preparedness, online at http://realclimatescience.com/wp-content/uploads/2016/07/Evaluating-The-Integrity-Of-Official-Climate-Records-4.pdf.

McKitrick, R.R., & Vogelsang, T.J. (2014). HAC robust trend comparisons among climate series with possible level shifts. Environmetrics 25(7): 528–547.

Posmentier, E.S., & Soon, W. (2005). Limitations of Computer Predictions of the Effects of Carbon Dioxide on Global Climate. In P.J. Michaels, Shattered Consensus: The True State of Global Warming (pp. 241–281). Lanham, MD: Rowman & Littlefield.

Singer, S.F., & Avery, D.T. (2007). Unstoppable Global Warming: Every 1,500 Years. Lanham, MD: Rowman & Littlefield.

You have 10 unknowns that can only be filled in with guesses and opinions. Doesn’t tell you much.

Looks like a runaway growth to me.

The NCAA seems to work in the opposite direction.

This is much like the famous “Drake Equation” to estimate the probability of extraterrestrial life elsewhere in the Universe. Lots of terms that seem to give a precise final answer. However, every single term in the Drake equation is no better than a “WAG” wild — guess.

It’s UNFCCC policy based.

We should have asked King Cnut.

Actually, the King Cnut story has been reversed to advance anti-aristocratic sentiments. In the real story Cnut was tired of brown-nosing sycophants and stood in front of the rising tide to demonstrate that even a great king has little to no power over the great forces of nature.

But the sanctimonious Collectivists of the political Left wanted you to think that it shows just how arrogant the (old) aristocracy was – while being completely oblivious to the fact that they are the arrogant (new) aristocracy who can commit crimes that the media will hush up (illegal mail servers, passing thousands of classified documents including above-Top Secret programs outside secure systems to unvetted people, blatantly lying to Congress and the FBI with impunity, treasonous actions of giving weapons to Al Qaeda in Syria and overthrowing Libya and turning over Egypt to the Muslim Brotherhood, etc etc etc).

In fact, the real lesson of Cnut was he was a leader who had wisdom and demonstrated to the hapless bureaucracy of lesser men just how clueless their model of sea-level rise really was.

I disagree with DAV. This approach tells you a lot.

Unless you can stop time, climate change is an issue where you are forced to make a decision. Doing nothing is a decision, even if not a conscious one. This decision tree approach forces you to state your assumptions and choose a course that is consistent with those assumptions. Further, it lets you explore the sensitivity of different variables to understand how far you would have to stretch your assumptions before you would make a different decision.

If the correct model has “10 unknowns that can only be filled in with guesses and opinions”, then any alternative decision making process, including ‘gut feel’, has those same 10 unknowns. You’re making guesses in either case, so why not be systematic and transparent about it?

If the “10 unknowns” version is not the correct model, then this decision tree approach ensures that someone else can intelligently comment on your model and why it should be 8 variables or 12 and what the likely values for the unknowns should be.

Conditional probability is not intuitive. Uncertainty is hard, but that’s life. Pretending humans are able to weigh these complexities without some form of decision analysis approach is foolish.

Great post Mr. Cochrane.

I quite agree as well. One of the most important outcomes of an effort like this is that it highlights the difference between people’s opinions, because as noted by DAV, the probabilities are ultimately subjective (even if based on deep amounts of research).

It is upon ideas like this that I feel DAGW can be challenged.

I had training in this approach when I got my PMP credentials, so I certainly see the value it provides. I have often pushed the idea of using Nelson Rules of Process control to measure the success of models. The weakness in that is that I can’t provide an appropriate time interval. One year seems too short, but 5 is too long. I think 2 or 3 works, but that is just a hunch.

https://en.wikipedia.org/wiki/Nelson_rules

Note that Rule #2 is the one models are breaking.

What?! Using a dart board not good enough for you? If all you can do is plug in guesses you haven’t done any better than the dart board.

Not to mention the problems with the presented tree.

The primary one: it’s not a decision tree. It’s computing the probabilities of an action or event occurring. So what? What would that probability mean (even if it’s given you had accurate values all along the tree)? You would take an action because it’s probable? Really? Isn’t that a bit like casting your vote for whoever is leading the polls just because they are leading? That’s how you make your decisions?

A decision tree should weigh something meaningful like costs and benefits and use the probabilities to reach expected values. Then you at least have a basis for a decision. I don’t see that here. Unfortunately that would then mean 30 unknowns for the depicted tree. More ways to fool yourself into thinking you’ve done anything meaningful because (hey!) you CALCULATED it!

Re: DAV @7:42pm

Granted, this was not a decision tree. The original post only alluded to this in the text and perhaps I read too much into the author’s intent. I assumed a more fulsome formulation would do so and this was simplified for a blog post. For example, being consistent with the post, a new first order branch would be decisions to implement a policy approach from table 2. [although I would not have formulated it that way myself – my original comment was more on the general utility of the approach, not the specific formulation presented in the post]. The utility could be expressed as NPV and an expected value of the different decisions could be computed. Of course, the decisions presented in table 2 aren’t mutually exclusive / exhaustive, and need some work, but I think we may be of similar minds on this aspect.

Regarding guesses and dart boards I would agree with you if the guesses were pure guesses. My assumption is that although there is a lot of uncertainty most informed people would be doing more than pure guessing at the value. They would have some basis for estimating their personal guess other than randomness. Further, even if their estimate was highly uncertain, they can provide not only a central estimate but a range. That range then can be used for sensitivity analysis to understand how multiple models influence the utility of different decisions. If the guesses, and particularly the ranges, start to approach pure guesses the expected values would start to show overlap in range and less difference between the decisions. A proper interpretation of which would be to accept that decision analysis cannot be used to ‘compute’ a decision in this situation if uncertainty cannot be reduced. If indeed it would be possible to reduce uncertainty, the decision analysis approach would highlight where effort would best be applied.

Your estimate of 30 more unknowns is probably reasonable. Unfortunately we are in the situation today where various groups are advocating full steam ahead on a specific decision without even listing the relevant variables, let alone taking an honest look at the unknowns. In this case I think the value of using a decision analysis approach is not in making the decision but in providing a useful framework to explore the uncertainty and interdependencies (conditional probabilities, etc). With authentic/honest participants (I know, a stretch on this topic), decision analysis forces them to be very specific about what they disagree on. It moves the argument from an untenable ‘I’m right, you’re wrong’ to more specific bite size arguments where meaningful discourse might actually be possible.

Thanks for the conversation so far.

See the link to hypernumeracy regarding assigning numeric values just because one can. Level two of the tree, “Is it a problem,” is an example. Something that begs opinion and revolves endlessly. You only need to look around to see it. Assigning a numeric value to opinion is just plain silly.

I fully agree with you that “is it a problem” is poorly formulated. How are the words ‘it’ and particularly ‘problem’ defined, and so on? But let’s assume we have a decision tree that is properly framed and characterized.

I generally agree with the hypernumeracy post. Particularly for arbitrary scales or for exercises where the numbers are just a translation for translation’s sake. However, I don’t think the main message of the post precludes the assignment of numbers to opinion as a means to an end, just that the numbers are not the end themselves.

If you’ll permit the indulgence, let’s switch the problem to something a bit simpler than climate change. Let’s assume we are directors for a company pondering an increase in production capacity for a product we already produce or investing in a production line for a new product. Some part of our decision will include a forecast of future price for the two products. We may have lots of reports from think tanks, pundits, economists, etc to help inform that price forecast or we may be in a novel/volatile market and left largely to opinion. We may both agree that it is likely the price of product A will stay the same but there is some chance it will increase or decrease. This is where assigning numbers becomes helpful. You may rate likelihood of a stable price as 6 out of 7, and I may rate it as 5 out of 7; that is interesting and potentially relevant. The potential relevance is how sensitive the decision is to the price of product A. We would only bother exploring that difference of opinion if it was relevant.

However, I would never suggest using a single point estimate or any arbitrary scale (e.g. 1 to 7). Instead we are much better off expressing our opinion on future prices in terms of a probability distribution* of future prices. Given our previously stated opinion that price is likely to stay the same, both of our distributions would be centered near equal price, but yours may be skewed higher, lower, or wider than mine. We then use that probability distribution in the decision analysis to understand the expected value for investing in increased production or a new product line. We consider not only the overall expected value for each decision, but also the range of expected values given each future price probability (e.g. maximum upside and downside risk), and combine that with the company’s overall risk appetite, portfolio of other holdings and relative risk, current cash position, and so on to make a decision.

To get back to our climate change example, if the problem can be properly characterized (i.e. defined terms, agreed structure, valuation, etc), then assigning probability, particularly probability distributions, to given outcomes can be a meaningful way to explore and inform the decision. As a start, the decision analysis approach can be used to eliminate proposed actions that have low expected value given all possible probabilities. Given that most advocates seem to be arguing on emotion and not logic at present, this alone would significantly advance the conversation.

Apologies for the long reply, brevity is not my strong suit.

*Full probability distributions are often not needed and unrealistic to ask a person to draw. If you can get a high, low, and best estimate the expected value result is very close to the same as full simulation of a complete distribution.

Just to be clear, I’m not saying there aren’t situations where something like this would be appropriate. I am saying this situation is not one of them. It’s nothing more than an attempt to put numbers to gut feel and then declare it something more than gut feel. Quantifying the unquantifiable. As pointed out above, it is strongly similar to the Drake equation. The results offer no increase in precision or accuracy; in fact, they are meaningless: a pointless exercise.

A timely and relevant post on Hypernumeracy

http://wmbriggs.com/post/19747/

All Government Policies should be based on REALITY, not an ever failing Socialist Agenda!

There is another arm: Is greenhouse gas emission reduction the best solution?

For example, some are worried about the impact of climate change on malaria. Others develop a malaria vaccine.

..and others ponder why malaria is no longer endemic in northern Sweden and Finland

Malaria was endemic in Stockholm. Further north, it was episodic.

Excellent post. Finally a systems engineering approach. Need more engineering thinking and decision making for defining any future mitigation strategies and spending.

“Excellent Post”

I agree 100%,

I have used similar tools with a plot to help management make a decision as to whether they should repair a potential problem during a plant shutdown, which would extend the shutdown if repaired now, or risk an unscheduled outage. This used data on the loss of production caused by an unscheduled shutdown, the probability range of a forced shutdown with the potential problem, the duration to repair during an unscheduled shutdown, etc. It takes emotion out of the decision.

Management’s original simple position posed to the engineers was “I don’t want an unexpected shutdown”; however, when presented with the costs based on a realistic range of probabilities, it was clear that taking the risk of an unscheduled shutdown was the right economic decision.

If the CAGW folks were forced to assign probabilities and consequences to their claims using the tree in this posting, it would be clear that the current policies are likely not the correct approach. These tools are used every day in engineering and business to weed out the alarms in failure analysis, risk/consequence, and other decisions. Why not in climate science? Those who go along out of fear of reprisal would be forced to admit the probabilities and consequences are not significant.

And that’s with bending over backwards to give the warmists the benefit of every doubt.

Using more realistic probability estimates, the justification for massive intervention shrinks even more.

+1

The subdivision of systems engineering disappeared from my former place of employment years ago. Produced numerous valuable real-world assessments. Some of these were a little “uncomfortable”, eg a vessel in a GBR sea lane was 35 times more likely to be involved in an “incident” if there was a pilot on board.

Glad to see that the discipline survives elsewhere.

Good luck with that! This is all a political toolkit to produce other objectives. The politicians don’t even care about solving problems, only the leverage they can get from them. The left and the Greens combine their failed religions and run a three-legged race to dystopia! All in the name of saving the planet so it’s hard to call b.s.- even though that’s exactly what it is!

Missing from the probability calculations is the probability that the green left can seize absolute power using noble cause policies. Even at sub-50% probability, the policies are worth pursuing in the absence of better ones (for the green left), because the prize is so great.

rms,

While I agree with you on the systems engineering approach, politics and rational thinking just don’t mix well. There is very little political value in actually fixing problems. As H. L. Mencken said: “The whole aim of practical politics is to keep the populace alarmed (and hence clamorous to be led to safety) by menacing it with an endless series of hobgoblins, all of them imaginary.”

Even if the world can be convinced that cagw is hokum, it will still be beneficial to spend money on protective measures. Obviously though, if the world is bankrupted by measures to stop cagw, then there won’t be the cash available to stop the devastation of another Katrina.

How is it beneficial to borrow money to spend on useless endeavours? That is Lefty thinking, like digging holes and filling them in to create jobs. Don’t use equipment! Pick and shovel means more jobs! Good grief!

Weather will happen anyway. There will always be another Katrina, even if AGW is not real.

That’s the finding of the article.

Mitigation is required anyway. So the extra costs of fighting AGW are (near certainly) not required.

This is why the precautionary principle is misguided.

The worst scenario, and the one to avoid, is where we bankrupt ourselves (and de-industrialize) in a failed attempt to mitigate damage, but because CO2 is not the driver of change, the climate continues to warm (due to natural variation), this warming causes real measurable damage, and we no longer have the financial wherewithal to adapt.

Adaption is always the most sensible policy, especially as global warming is not global and consequences are felt locally, not globally.

Adaption works whether warming is caused by CO2, or if due to natural variation.

Adaption works because it is targeted as and where needed; not all regions may warm and change may not always, if ever, be bad.

If warming is net beneficial, why would we wish to spend money in an attempt to stop it? Much better to adapt as necessary if and only if there is a problem that needs to be addressed.

Mitigation is a very poor response, especially since it is far from certain that mitigation can work, its costs are prohibitive and far outweigh the potential harm of some warming, and the science is so poor and the climate system so poorly understood.

“especially as global warming is not global and consequences are felt locally, not globally.”

“not all regions may warm and change may not always, if ever, be bad”

Interesting facts for politicians. As adaptation to eventual climate change is far cheaper, ending the ‘Prevent Climate Change Program’ and spending 10% to 20% of that amount on an ‘Adaptation Program’ for wherever in the world it is needed will give a profit for everyone. And the most profit for those who really need it.

(Here in Holland we are having a wonderful September month with really nice temperatures. I suppose 90% if not all of the Dutch prefer to have such a September again next year and all years after this one. And in October, November, December, February, March and April we would like to have these temperatures as well. Only in January I think we would like to have some cold weather to enjoy some snow and some skating. But that’s enough for most of us.

P.S. 3 million of the 17 million Dutch flee the country in the warmest part of the year to visit the hottest part of Europe: Spain, Portugal, Italy, Greece, Turkey. It still seems to be far too cold in Holland, even in summertime and even already experiencing a lot of the recent warming ….)

We don’t need a “climate change policy”. Mostly, the type of policies we are talking about have little, if anything, to do with climate. They mostly have to do with weather – things such as flooding or droughts. Like anything, there is a cost/benefit analysis to do. That is all. We don’t know where the climate is headed – up, down, or sideways.

FEMA says (in print) that ANYWHERE where it can rain, it can flood.

Seems a bit far fetched to me. But when they are pushing their “National Flood Insurance Program”, one can easily see where they can force a tax on everybody to bail out those people who continue to build, and then rebuild, in places that are below sea level; like N’orlins.

And let’s not forget the hollyweird compounds that are built hanging on the side of mountains, that either burn to the ground in the fire season, or wash down the cliff during the following mud season.

Insurance does not seem like a National imperative to me. But in this era of free stuff, well why put any restraints on the stupid things people are aloud to do on their neighbor’s nickel?

G

I snicker. I live on a coastal rise of land that is probably a hundred feet above the Puget Sound (on one side) and a similar height above the Kent Valley (on the other side). If it rained bad enough for my house to be flooded, in the evening news variety of flood, it would be raining bad enough for Noah to have a second mission in the Ark. (Once upon a time, it did rain hard enough that the local water table rose to fill my crawl space.)

“stupid things people are aloud to do ”

I have noticed that stupid people often are pretty loud.

They love to sell the insurance. What they hide is that there are restrictions on collecting. The fact that there was a heavy rain and your basement rec room and media room got flooded means nothing. That, they claim, could be simply because the rain washed down the street and into your house. It is not an insured flood unless your neighbors get flooded also; otherwise you get nothing.

Michael J

People within 10 miles of my house have been flooded in recent years even though they aren’t on a floodplain or anywhere historically flooded… victims of surface water run off due to intense rainfall (Louisiana style) or groundwater flooding – where even though miles from a river the water table rises up.

Here in the UK we have seen an increase in intense rain and winter and summer flooding as a result since 2000.

“The degree to which reducing anthropogenic CO2 forcing correspondingly retards global warming is the major driver of policy solutions.”

TOTAL B.S.

the ‘decision tree’ is completely linear:

http://imgur.com/a/M2ppW

There is another general solution, as with prostate cancer: watchful waiting. The world hasn’t appreciably warmed since 2000 except for a now rapidly cooling 2015 El Nino blip. During that period, about 35% of the Keeling curve CO2 increase since 1958 occurred. Plainly CO2 is not causing AGW as modeled. Observational EBM ECS is ~1.65, not 3.2 as modeled. NPP shows the planet is greening; that means biological sinks are increasing. Despite frantic searches for them, no possible tipping points are in evidence; SLR is not accelerating, and when properly estimated using 2013’s observational GIA, Antarctica is not losing ice mass and (Zwally IceSat) may be gaining.

Watchful waiting means implementing sensible no-regrets policies (soot, PM2.5, ocean overfishing, sea protection for subsiding cities like New Orleans and Bangkok), but otherwise doing nothing until we better understand how climate operates, especially the all-important TCR and ECS. Gain a clearer picture of whether eventual adaptation or mitigation is a more prudent course–if needed at all. Massive implementation now of intermittent renewables was and remains a fool’s errand motivated by subsidy farmers.

There is nothing sensible about the PM2.5 standards, and if a city is already below sea level and still sinking, it’s a lost cause. Better to spend that money moving the people and allow the sea to reclaim that land. Otherwise you are sinking good money after bad year after year forever.

“Better to spend that money moving the people and allow the sea to reclaim that land.” Not necessarily. Try telling that to the Dutch.

Agree with Ristvan

This line of thinking is not far removed from Bjorn Lomborg, who, although a believer in man-made climate change, thinks the danger is greatly overstated and that, instead of rushing into half-baked policies that will be very costly for only marginal impact, we should invest in:

A. monitoring of climate

B. R&D in reducing the costs and improving the effectiveness of non-CO2-emitting energy

For his pains he has been regularly called a denier and banned from Australian universities by those academic-freedom-loving academics.

+1 Ristvan

Move slowly, try a few different things. Watch, learn, assess, evaluate. Get better over time.

I would also add that the cost of the solution should largely be borne by the source of the problem. I think we would all agree that pollution is bad, and air pollution is worse in LA/Beijing vs rural Africa. Poor people in Africa should not be penalized to fix LA’s pollution problem.

There is quite a bit of evidence to suggest that temperatures today are no warmer than they were in the late 1930s/mid 1940s.

Indeed, in the late 1970s NASA/NOAA were claiming that the globe had cooled by up to 0.5degC between 1940 and 1975. According to the satellite data, it has warmed by about 0.4degC from 1979 to date.

The US temp data also backs that up, and of course, this was the problem that M@nn found with his tree rings which were not showing any significant warming and were not showing a response similar to the highly adjusted land based thermometer record, and the lack of warming in the tree ring data post 1940s prompted M@nn to do his nature trIck

Of course over the past 20 years the land based temperature record has been repeatedly adjusted to get rid of this period of cooling and replace it with a pause.

If global temperatures are today about the same as they were in 1940, it follows that not less than about 90% of manmade CO2 emissions have occurred and there has been no measurable warming (taking into account errors in the measuring system and resulting data series). This suggests that Climate Sensitivity to CO2 could be around zero, or so close thereto.

The inescapable fact is that on no time scale do temperatures and CO2 correlate and to the extent that there are some similarities, there is evidence to suggest that changes in CO2 are a response to temperature change, not a driver of temperature change.

Ristvan’s approach is sensible, logical, and, IMHO, exactly what we should be doing.

….perhaps add to it: carry out ongoing nuclear power research/advances as an emergency option backup.

They are of course correct. Can they hijack international politics by cradle-to-grave indoctrination with false scientific claims? Can their sympathisers in academia and the media throw science under a bus in order to promote their political agenda with false scientific authority?

For them it is a defining issue of the generation.

Greg

dead on

And they are very exposed to losing the long game and their last shreds of credibility. The AG thing. The data manipulation thing. The fact that China and India won’t play along. The fact that renewables still need large subsidies and are still intermittent. The fact that many past predictions are past their expiration dates and are shown to be flat wrong; Arctic ice has not disappeared and sea level rise has not accelerated.

+1

China and India are playing along – China is building 2 wind turbines every hour, India is heading for 175GW of renewable energy capacity by 2022.

Arctic ice hit 2nd lowest in the record this year and it was poor weather for melting.

The data has been checked by the skeptic funded Berkeley Earth project. Yes, surface temp data checks out.

Really, for most of the world, e.g. all those governments that signed up in Paris, this is an ever more real thing, based on secure scientific observation.

This blog here – this is a tiny fringe and mostly a US based one at that…

Griff, now you are lying.

China is building 2 wind turbines every hour to sell them to us.

175GW of renewable energy capacity by 2022 is a very small part of what is needed by India then.

Berkeley Earth isn’t skeptic funded.

There is no scientific observation, let alone a secure one, of… err, what did you say was observed?

Now Griff is lying????

The leader of the Berkeley project claimed that he was a skeptic; however, a quick review of his statements over the previous couple of years showed that he was a big believer in catastrophic global warming.

Speaking as lefty, with insider knowledge, the key issue of our time is inequality. Both locally and globally.

Imagine a world where one person has all the wealth – would that be the most efficient? It has happened in the past (Hawaii on a small scale, the Mongol Horde on a larger scale).

The rich are getting richer. We are heading back to the semi-stable but inefficient feudal distribution of wealth and power.

This has nothing to do with the heat distribution in the atmosphere and oceans.

Funny thing, the bigger government gets, the more unequal the distribution of wealth is.

Always been the case, always will be the case.

Dangerous Anthropogenic Global Warming DAGW

or

Dangerous Anthropogenic Warming of the Globe DAWG

Careful…It may bite

DAWG–I love it!

Question 4 should be two questions: Will Western emissions reductions inspire reductions in the rest of the world? Will reductions in the rest of the world reduce temperature increases? Since 4 is unlikely, the 0.44 score is cut in half.
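To make the arithmetic behind that point explicit, here is a minimal sketch in Python. It assumes, as the article describes, that a path's probability is the product of the probabilities of its nodes. The 0.5 used for the added question is only an illustrative stand-in for "unlikely", not a figure from the article, and the function name is made up for this example.

```python
def split_question(path_probability, extra_node_probability):
    """Return the path probability after inserting one more event node.

    Assumes path probability = product of node probabilities, so adding
    a node simply multiplies by that node's probability.
    """
    if not 0.0 <= extra_node_probability <= 1.0:
        raise ValueError("a probability must lie between 0 and 1")
    return path_probability * extra_node_probability


# 0.44 is the score quoted in the comment above; 0.5 is an assumed
# placeholder for "will the rest of the world follow the West?"
halved = split_question(0.44, 0.5)
print(round(halved, 2))
```

Any extra question with a probability below one can only shrink the end-of-path score, which is why splitting a compound question tends to weaken the case for the branch it sits on.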

Given the natural warm burst rates in the temperature record, i.e., 1860 to 1880 and 1910 to 1940, which are nearly identical to 1975 to 1998, I cannot be convinced that there can be ANY human attribution, other than our bias.

Phil Jones was asked about this. He did not deny its existence in his temperature record. That interview was in Feb 2010:

http://news.bbc.co.uk/2/hi/8511670.stm

Then there are further uncertainties. The Climategate emails discuss manipulations to cool the 1940 blip. There are uncertainties from station dropping and homogenization.

Given this, how can the author above attribute warming to humans? Try as I might, I cannot do it. I see only natural variation. The hiatus after 2000 reinforces my view.

To me, doing nothing, other than the usual flood preparation, etc, is the best policy.

I’ve pressed a number of warmists on this point. Once you can get them to admit that the temperature actually has risen without the help of CO2, they usually take the tack that while we don’t know what caused earlier temperature increases, we know that the current increases are caused by CO2, the models have proven it.

My usual reply is that I have a model running on my computer where human beings can fly and punch down buildings. An unvalidated computer model is just a video game. They say “but ours has physics built in” and I respond that so does the video game: developers spend years getting the motion to look right by applying physics to polygons – it still doesn’t mean I can fly and punch down a building.

… and/or they say “regardless, reducing our dependence on fossil fuels can only be a good thing.”

but we do know the causes of earlier temp rises – e.g. Milankovitch cycles, solar activity, etc.

When you examine the temp record to see if those are in play, you find: no they aren’t.

Yet the temperatures are rising.

The only known temp driver left which can account for the magnitude of increase is CO2, and we can see that the amount in the atmosphere has risen and (isotopically) that it is human produced CO2.

There’s a clear chain of evidence here…

Griff, there’s a clear chain of evidence here of an argument from ignorance.

Griff, do you ever get tired of lying?

Are you really trying to claim that the Minoan, Roman and Medieval warm periods were caused by the Milankovitch cycles?

As for solar cycles, you warmistas have been quite vocal in declaring that the sun has only a trivial, if any, impact on climate.

Griff..

Please explain how the warm bursts I listed (and that Phil Jones was asked about) were caused by Milankovitch cycles etc.

Phil Jones and Trenberth, who he discussed natural cycles with in those Climategate emails (they will kill us if it turns out to be just a natural cycle), must be stupid not to have your knowledge, so please do explain it.

This may be a useful approach to articulating and refining a strategy. But it is incomplete without a financial cost profile and some kind of impact assessment attached to each outcome. Similarly given that any strategy is based upon a simple risk assessment, the costs of futility need to be evaluated.

Whether warming is human induced or not, expenditure on mitigation will not be wasted. Costs of reducing emissions may prove to be futile if the human component is proved limited.

The cost of mitigation actions is likely to be spread over several decades as infrastructure is upgraded, building and other codes updated etc. Agriculture, and arguably the environment may largely simply adapt as it has done in the past, albeit over decadal rather than centennial time frames.

If as the science improves it is confidently demonstrated that the human component of warming is material, mitigation actions already taken will generally not have been wasted.

Actually this well thought-out tree allows for the preparation of a focused financial cost analysis/preparation since this modified Risk / Benefit Analysis limits arms that need to be assessed by throwing out the illogical approaches. Kudos.

The cost curve bends both ways. The global economy is a coupled, non-linear dynamic system that, unlike the earth’s climate, has demonstrated “tipping points” in the past. It is foolhardy to risk a global economic meltdown in pursuit of a climate optimum that may or may not exist, especially in light of recent peer-reviewed research pointing to reduced sensitivity and shorter uncertainty tails that drastically reduce the probability of a climate catastrophe even if human emissions are the culprit.

We have ample time for an orderly, market based transition to a non-carbon based global economy over the next century or two without government coercion, without wasting trillions and without sentencing those in the developing world to a life of poverty.

This is truly outstanding. I have a new presentation to prepare. Thank you.

A good review of what has to be actually researched, and the choices that have to be made, but all the probabilities in the article are POOMA, in a field with much too much imaginary statistics.

Certainly a structured approach to the problem and a rational one, but the weakness isn’t removed. There is no compelling evidence to support the probabilities assigned. As long as that condition persists it’s a toy model that can’t be used. For example, you write the decision to assign a probability of 0.9 to anthropogenic CO2 as a cause occurs in an environment where “There is debate over whether there is a strong scientific consensus on this question”, which isn’t correct in any way other than political. There is no scientific consensus on the subject, only political consensus. There can’t be scientific consensus without repeated verifiable experiments, and none exist. So there’s no data to support your assignment, it’s simply a concession to an increasingly unpopular opinion that happens to sell magazines and newspapers.

Absent data, this is all opinion and conjecture. Laying a skin of engineering discipline over a complete lack of empirical support just obfuscates the issue and promotes ongoing pseudo-scientific justifications for political ends that have no scientific basis. That problem can’t be fixed in the way you’ve proposed. No data is no data.

A logical next step prior to assessing a focused cost analysis, great point Bartleby

The probabilities presented here are simply a SWAG

they always are.

Surely the point was, even if you apply the high level of certainty (e.g. the 0.9s) the outcome is not convincing. In my experience, Ristvan’s “watchful waiting” has often been the appropriate advice. Trouble is, regulatory agencies very often stop watching after a while. I recall a prediction of mine (from modelling development trends) that amounted to “I can’t currently (1999) tell you WHERE the shortfall (in sewage processing) will occur first, but by 2005-6 the location WILL be apparent”. Result? No action taken, system overflow, sewage flowing into storm water drains, short-term very expensive pumping and truck removal required, much embarrassment, LOL.

On the other hand, improved technology came to the rescue; by the time the overflow occurred, pressurised trunk mains had become more affordable and provided a long-term better solution than augmenting the local plant.

Maybe it is insurance companies that ought to enforce competent assessment and risk recording? I recall occasions when they used to help by funding the research for this, but all too often these days, they are away with the fairies. Locally, I am aware of one insurance company that will provide flood insurance for coastal properties less than 1.9m above msl, but refuse it for properties at 5 to 50m 🙂

this is the exact same decision tree used for policy decisions allocating funds to mitigate the invasion of invisible elephants.

The biggest flaw in this analysis is that the branches are offered as mutually exclusive options, but it is highly likely that the effects under consideration have multiple causes.

For example, if global warming exists, it is likely to be due to both natural causes and man-made causes.

“1. Is the earth actually warming? A summary of modern climate history suggests that from 1979 to the present there has been “a large disparity between surface thermometers, which show a fairly strong warming, and independent temperature readings of satellites and balloons, which show little warming trend” (Singer & Avery, 2007, p. xv). Rigorous data analysis (Heller, 2016) and application of statistical methods that control for heteroskedasticity and autocorrelation (McKitrick & Vogelsang, 2014) suggest that apart from a step-wise change in global average temperature (GAT) around 1977 there has been no statistically significant warming trend since ~1958 or earlier.”

Seriously. You start by citing Goddard.

I had held some hope out for this approach ( we did similar things in OR and war planning ), BUT then you start by citing GARBAGE

Second, there is no large disparity.

Satellites diverge from the LAND RECORD ( 30% of the earth) starting in MAY 1998, when people switched sensors ( from MSU to AMSU ), and further, the GREATEST discrepancy is in the areas where the land is covered by snow.. well used to be covered by snow..

Since satellite methods ASSUME a constant emissivity for the ground ( haha) you can see what the problem is if snow cover changes over time.

Another drive by Mosh.

What do you disagree with on the logic or sensitivity assessment approach?

You can argue quotes or references and deflect from the substance of the issue or you can try to address the actual issue presented.

Boils down to, current approach is wasting a lot of money. Diverting resources away from other programs that would have real benefit.

E.g. people die every day for lack of clean water and sanitation; cheap reliable power would largely fix this. Instead time and money are being directed into unicorn farming.

Too funny

“Further the GREATEST discrepancy is in the areas where the land is covered by snow.. well used to be covered by snow.. since satellite methods ASSUME a constant emissivity for the ground ( haha) you can see what the problem is if snow cover changes over time”.

===============================================

Not much of a trend apparent in the NH:

http://www.climate4you.com/images/NHemisphereSnowCoverSince1972.gif

Northern hemisphere weekly snow cover since January 1972 according to Rutgers University Global Snow Laboratory (Climate4you).

So how come there is good correlation between the weather balloon datasets and the satellite ones post-1998? (See Christy’s graphic on the mid-troposphere comparison of 104 IPCC models with satellites and weather balloons.)

Regarding Christy’s famous “all models are wrong” graphics, aka “too little warming in the troposphere”, there are a few tricks at work that create the discrepancy:

-The use of too short temperature series that start with a relatively warm period around 1980.

-The use of datasets with poor vertical resolution, which masks the troposphere warming by blending it with stratosphere that cools faster than expected by models.

Using a radiosonde dataset with longer time span and vertical resolution gives this alternative view:

http://m.imgur.com/c1BY01n

The observed warming in the troposphere follows the models relatively well.

The stratosphere cools much faster than expected by the models, but hey, isn’t stratospheric cooling a sign of increased greenhouse effect?

Since RSS TLT v3.3 is no longer endorsed by its producers due to drifts, there is only ONE dataset, an unpublished satellite beta product, supporting the “pause”.

No other dataset; surface, radiosonde, satellite, reanalysis, SST, whatever, suggest a pause. Here is a pick from the free troposphere:

http://m.imgur.com/tiOeMnx

I don’t know if the AMSU sensors are sensitive to (declining) snow or ice..

IMO, the tricks needed to get a pause are to use a poor diurnal drift correction, and to use the rogue AMSU-5 sensor onboard NOAA-15 as much as possible..

Steven Mosher,

“Seriously. You start by citing Goddard.”

Perhaps Mr. Mosher could enlighten us. Which of the three papers quoted was written by Goddard? Singer & Avery, 2007, Heller, 2016 or McKitrick & Vogelsang, 2014?

My only quibble with an interesting approach is the assumption that all AGW (if it exists) is caused by CO2.

This illustrates the uselessness of these decision trees. A law firm in Seattle once suggested that I use one in plotting lawsuit strategy. I told them it was all BS, and to use our best judgment.

Proof that decision trees are useless is that you once told a law firm that they are BS.

Is that really the line you want to go with?

+1

They are useful in guiding best use of time and resources.

All well and good. As someone has noted, we have no database from which to draw estimated probabilities.

Which points up the fundamental problem of this approach: it is based on the popular myth that probability is a concept that can be applied to unique events. It can’t. Probability is only a description of what can be expected from an ENSEMBLE of events (or measurements). Talk of probability in the above context is literally empty. (This is also partly why the Drake Equation is mostly mathematical finger-painting.)

Now, to be sure, some otherwise very intelligent people go along with this. I see it all the time at my big aerospace employer, where we go through the fantasies of assessing the Probability of Win or the Probability of Award in evaluating our competitive standing. As long as everyone understands this is only a subjective guessing game and we are dealing with betting quantities, no particular harm is done.

This approach (i.e., the Probability Tree) does have a compelling advantage over SWAG’n for a solution to a perceived problem. It forces you into a more logical analysis rather than the purely emotional one that comes with all the surrounding emotional, social and political baggage. Sure, the number of variables is nothing but guesswork, but in having to state them you begin to realize how much you actually know about the subject. The probabilities at each junction are nothing more than a guess, but it requires that you collect your thoughts and think them out. Basically the Probability Tree leads you into an expanded ‘Fermi’ approach to the problem (re: https://en.wikipedia.org/wiki/Fermi_problem).
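For anyone who wants to try the "state your guesses and see what falls out" exercise, the following is a rough Python sketch, not the article's actual tree. The three questions and their yes-probabilities are hypothetical placeholders, and it makes the simplifying assumptions that every question is asked on every path and that each guess is independent of the earlier answers.

```python
from itertools import product
from math import isclose, prod

# Hypothetical yes-probabilities for three yes/no questions.
# These are placeholders for illustration, not the article's numbers.
NODES = {
    "warming is real": 0.9,
    "warming is man-made": 0.5,
    "cuts would stop it": 0.3,
}


def enumerate_scenarios(nodes):
    """Yield (answers, probability) for every root-to-tip path."""
    names = list(nodes)
    for answers in product((True, False), repeat=len(names)):
        # Multiply P(yes) or P(no) = 1 - P(yes) along the path.
        p = prod(nodes[n] if a else 1.0 - nodes[n]
                 for n, a in zip(names, answers))
        yield dict(zip(names, answers)), p


scenarios = list(enumerate_scenarios(NODES))

# Sanity check: the probabilities of all paths must sum to one.
assert isclose(sum(p for _, p in scenarios), 1.0)

for answers, p in sorted(scenarios, key=lambda s: -s[1]):
    label = ", ".join(f"{q}: {'yes' if a else 'no'}"
                      for q, a in answers.items())
    print(f"{p:.3f}  {label}")
```

Writing the guesses down as a table like `NODES` is the whole point of the exercise: anyone who disagrees can change one number and rerun it, rather than arguing about the conclusion.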

Where do things like this fit in?

http://www.co2science.org/education/reports/co2benefits/MonetaryBenefitsofRisingCO2onGlobalFoodProduction.pdf

Trillions in agricultural productivity. You have plenty of unknowns, such as how much of the warming is/will be from humans, how much it will cost to take actions and what will be the results of those actions.

However, photosynthesis is not a speculative theory and there is 100% certainty that increasing CO2 will continue to increase plant growth and crop yields/world food production (despite biased studies that have all been wrong, claiming that climate change will offset CO2 fertilization).

So is 10% more food for humans worth a 1 inch/decade of sea level rise and 5% more high end flooding events from the warmer atmosphere that holds more moisture?

What if it turns out to be 2″/decade sea level increase and 10% more high end flooding events?

I am only including sea level increase and excessive rain events, not only to simplify things but because, based on what actually happens with global warming, these two are the main threats with the highest probabilities of occurring (when we decrease the meridional temp gradient, which is what happens when you warm the coldest places the most).

One should not underestimate the tremendous value of world food production. To appreciate it, what if we suddenly pulled the CO2 rug out and went back to 280 ppm, with global temperatures around 1 deg. C cooler than today.

The negative impact would cause a horrific shortage of almost all crops and hard to imagine price shock.

Face it, humans are DEPENDENT on, what has been the best 4 decades of weather/climate and CO2 levels for growing crops, since at least the Medieval Warm Period.

Appreciating this more allows one to properly assess what damages, from rising sea levels and more excessive rain events for instance, one should be willing to suffer in order to “break even”.

If the user of this information was objective, they could calculate the increase in food production based on the many known studies:

http://www.co2science.org/data/plant_growth/dry/dry_subject.php

Throw out the speculative, not-happening future crop adversity scenarios and just weigh these benefits to world food production from increasing CO2 against the speculative damage from flooding and sea level increase.

In a rational world this would make sense.

“IF” global climate models were improved and displayed a reasonable amount of skill/confidence in projecting future warming from increasing CO2, we could actually determine a reasonable point, at which the increasing food production, would be offset by sea levels accelerating higher, along with large scale flooding damage from high end rain events.

One can complicate this and dial in other reasonable expectations, like less heating demand in Winter, more cooling demand in Summer and surely a dozen other “speculative” consequences…………less polar bears, more mosquitoes (-:

Then it just goes back to one of the biggest problems that skeptics have……global climate model predictions.

Until there is evidence in the observational world (that catches up with the models warm bias) that the known benefits of increasing CO2 are losing ground to the realistically considered consequences, the rational choice is to let the CO2 go higher.

This is the type of modelling that engineers do intuitively every day – it is a part of their job. Probably too hard for alarmists to understand.

no.

no self respecting engineer does anything like this.

it’s pure fantasy about phantasms.

gnomish, as an engineer I can tell you I do this type of scenario modelling frequently. It largely drives the direction to the best ROI. The high vs low probability sensitivity analysis is where the money is.
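As a rough illustration of what a high vs low sensitivity pass can look like, here is a small Python sketch. It is one common one-at-a-time form of the idea, not necessarily the commenter's own method. The node names, baseline guesses and low/high ranges are all invented for the example.

```python
from math import prod


def path_probability(node_probs):
    """Probability of one path: the product of its node probabilities."""
    return prod(node_probs.values())


def sensitivity(baseline, ranges):
    """Swing each node between its low and high guess, one at a time,
    holding the others at baseline. Returns {node: (low, high)} results."""
    results = {}
    for name, (low, high) in ranges.items():
        lo = path_probability({**baseline, name: low})
        hi = path_probability({**baseline, name: high})
        results[name] = (lo, hi)
    return results


# Hypothetical baseline guesses and low/high bounds for three nodes.
baseline = {"A": 0.9, "B": 0.5, "C": 0.3}
ranges = {"A": (0.8, 1.0), "B": (0.2, 0.8), "C": (0.1, 0.5)}

swings = sensitivity(baseline, ranges)

# List the nodes with the widest swing first: those are the guesses
# worth spending effort to pin down.
for name, (lo, hi) in sorted(swings.items(),
                             key=lambda kv: kv[1][1] - kv[1][0],
                             reverse=True):
    print(f"{name}: {lo:.3f} to {hi:.3f} (swing {hi - lo:.3f})")
```

The nodes at the top of that list are where better data pays off; a node whose swing barely moves the result can be left as a rough guess.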

well, i’m ready to learn something if you care to explain how probabilities figure into engineering

(i would want to be persuaded that calling it engineering is not done in the way that dictatorships are soi-disant ‘democratic republics of’ or ‘social sciences’ – i think you get the idea)

i’ll be happy to listen and i’ll acknowledge whatever i can construe as valid

cuz, lenny- all day long, every day- i do cad and code and cut metal and invent things and make stuff.

tonight i’m doing an injection mold for something that goes on a machine i just finished making 10 of –

and the only time i come close to anything ‘statistical’ is looking at a MTBF spec for some electronic part.

this is my life and i never ever use statistics – i don’t budget for failure- i do tightrope without a net all the time.

i can’t gamble.

well, i’ll get on the injection mold after i finish testing the control board i made 21 of for the lasers that go on the machines so i can have 750W pulses. i’m using the o-scope to see the waveforms to choose the right r/c values to get the optimal pulse width for the system.

it’s as far as one can get from playing the odds. and when i used to live in reno- i had 100% sure thing of not losing cuz not playing.

so yeah- what do you do that is really engineering and yet is based on statistical analysis?

Failure analysis uses decision trees.

Besides, Marketing almost invariably chooses the “what to do”.

Engineering is simply faced with doing what marketing demands.

The “Decision Tree” in some organizations I have worked for goes about like this:

Some “fine art” student in marketing designs a nice cardboard box with elegant pictorials on the outside of it. “This should really get customers to grab these off the shelf as fast as we can restock them,” says the sales department representative.

Management asks the R&D manager: “What could we build that we could fit in this box for sale?”

R&D agrees to come up with some new finger toy to go in the Wunderbox for sale.

Manufacturing draws up a printed circuit board that fits nicely into the elegant 3D printed plastic package that the marketing arts department came up with.

Mechanical engineering devises some bullet proof mounting hardware to mount the board in the plastic package and consumes only 35% of the board space.

The PC board Arts department designs a neat PC board part number label to place on all four edges on both sides of the board, taking up only 25% of the board space.

The QC department recommends putting some blue LEDs around the part number labels, to illuminate them even in total darkness, for safety precautions.

The IC department designs a new integrated circuit and built-in heat sink to drive the blue LEDs and to emit an audible warning if any of the LEDs ever burns out; it only uses 10% of the board space.

The job is almost done, and there is still about two square inches of space, right over there in that corner. It’s in a sort of a ZEE fold shape thusly /\/\/\ .

So they bring the board with most of the components loaded on to it, over to me.

“Here you go, George, we left this space over here for the Optics. Can you come up with a small ten X zoom lens to go in this space, so we can advertise a built-in selfie camera?”

Optics is a couple of lines of fundamental physics (Snell’s Law, Newton’s formula etc), and the rest is just GEOMETRY.

I have NO CONTROL over the fundamental Physics.

And Geometry takes up just the right amount of space to get the optical task done.

Not all Optical tasks can be solved in …. /\/\/\ …

G

I use one of those beautiful cardboard boxes to throw the scrunched up pages of designs that can’t fit into ….. /\/\/\ …. into !!

George,

My experience wasn’t quite so bad but I think this pretty well covers both: http://www.projectcartoon.com/cartoon/1111 Of course that cartoon has been expanded a bit since its origin some 40 years ago.

Since 1960, CO2 levels have risen by about 80 ppm and global temperature has risen by about 0.5 deg C according to HadCRUT4.

If instead this were 1940, and CO2 had risen by about 30 ppm since 1910 and global temperature had risen by 0.5 deg C according to HadCRUT3, and we had a fear of catastrophic climate change and decided to save the planet, what would our global state of economic development be like now, 75 years later?

What stronger case is there for business as usual now: deal with whatever issues arise, and plan building and development with flooding in mind, which we haven’t done very well since 1940?

In her Senate testimony, Judith Curry offers other alternatives.

In other words, do things that will help no matter whether CAGW is real or not.

help what? there is no problem except ppl crying doom over a nonproblem

There are a bunch of actual problems that we could be working on. We could work on them if it weren’t for the money we’re wasting on CAGW non-solutions.

k- gotcha. tnx