by Willis Eschenbach

People keep saying “Yes, the Climategate scientists behaved badly. But that doesn’t mean the data is bad. That doesn’t mean the earth is not warming.”

Darwin Airport – by Dominic Perrin via Panoramio

Let me start with the second objection first. The earth has generally been warming since the Little Ice Age, around 1650. There is general agreement that the earth has warmed since then. See e.g. Akasofu. Climategate doesn’t affect that.

The other question, the integrity of the data, is different. People say “Yes, they destroyed emails, and hid from Freedom of Information Acts, and messed with proxies, and fought to keep other scientists’ papers out of the journals … but that doesn’t affect the data, the data is still good.” Which sounds reasonable.

There are three main global temperature datasets. One is at the CRU, the Climatic Research Unit of the University of East Anglia, where we’ve been trying to get access to the raw numbers. One is at NOAA/GHCN, the Global Historical Climatology Network. The final one is at NASA/GISS, the Goddard Institute for Space Studies. The three groups take raw data, and they “homogenize” it to remove things like when a station was moved to a warmer location and there’s a 2C jump in the temperature. The three global temperature records are usually called CRU, GISS, and GHCN. Both GISS and CRU, however, get almost all of their raw data from GHCN. All three produce very similar global historical temperature records from the raw data.

So I’m still on my multi-year quest to understand the climate data. You never know where this data chase will lead. This time, it has ended me up in Australia. I got to thinking about Professor Wibjorn Karlen’s statement about Australia that I quoted here:

Another example is Australia. NASA [GHCN] only presents 3 stations covering the period 1897-1992. What kind of data is the IPCC Australia diagram based on?

If any trend it is a slight cooling. However, if a shorter period (1949-2005) is used, the temperature has increased substantially. The Australians have many stations and have published more detailed maps of changes and trends.

The folks at CRU told Wibjorn that he was just plain wrong. Here’s what they said is right, the record that Wibjorn was talking about, Fig. 9.12 in the UN IPCC Fourth Assessment Report, showing Northern Australia:

Figure 1. Temperature trends and model results in Northern Australia. Black line is observations (from Fig. 9.12 of the UN IPCC Fourth Assessment Report). Covers the area from 110E to 155E, and from 30S to 11S. Based on the CRU land temperature data.

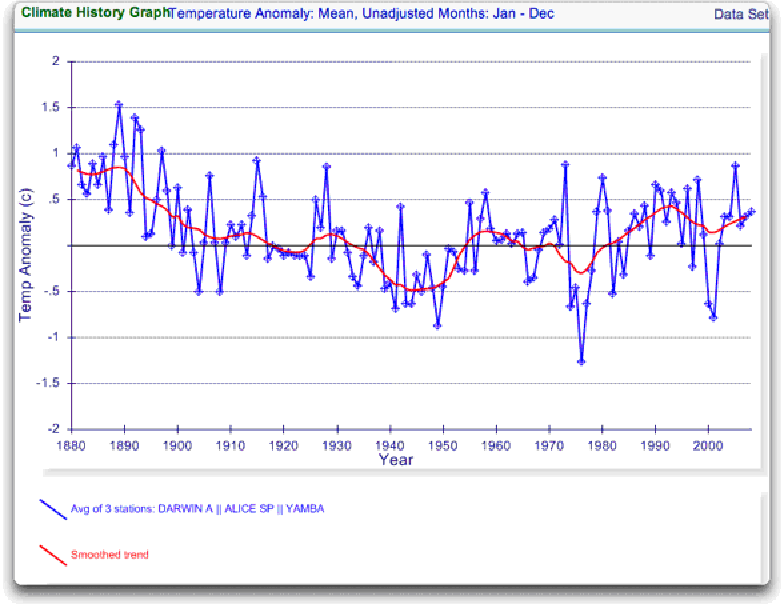

One of the things that was revealed in the released CRU emails is that the CRU basically uses the Global Historical Climatology Network (GHCN) dataset for its raw data. So I looked at the GHCN dataset. There, I find three stations in North Australia as Wibjorn had said, and nine stations in all of Australia, that cover the period 1900-2000. Here is the average of the GHCN unadjusted data for those three Northern stations, from AIS:

Figure 2. GHCN Raw Data, All 100-yr stations in IPCC area above.

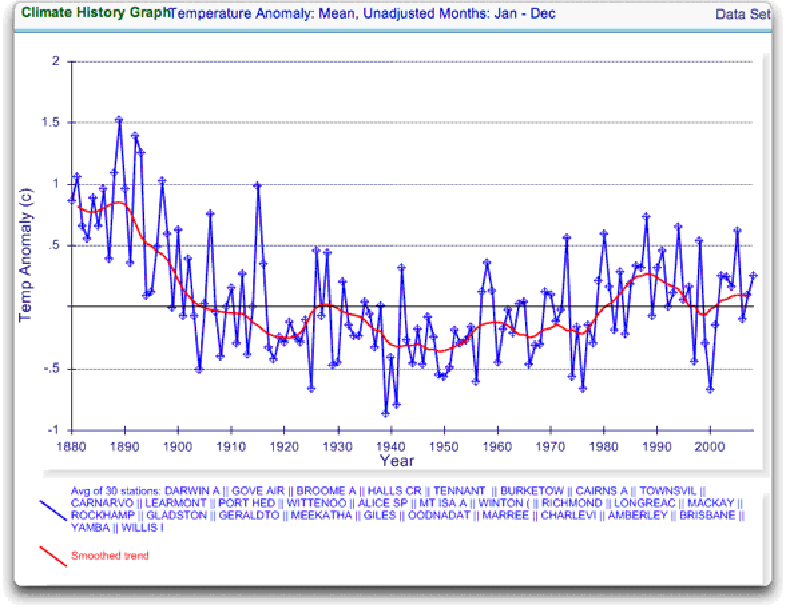

So once again Wibjorn is correct, this looks nothing like the corresponding IPCC temperature record for Australia. But it’s too soon to tell. Professor Karlen is only showing 3 stations. Three is not a lot of stations, but that’s all of the century-long Australian records we have in the IPCC specified region. OK, we’ve seen the longest station records, so let’s throw more records into the mix. Here’s every station in the UN IPCC specified region which contains temperature records that extend up to the year 2000 no matter when they started, which is 30 stations.

Figure 3. GHCN Raw Data, All stations extending to 2000 in IPCC area above.

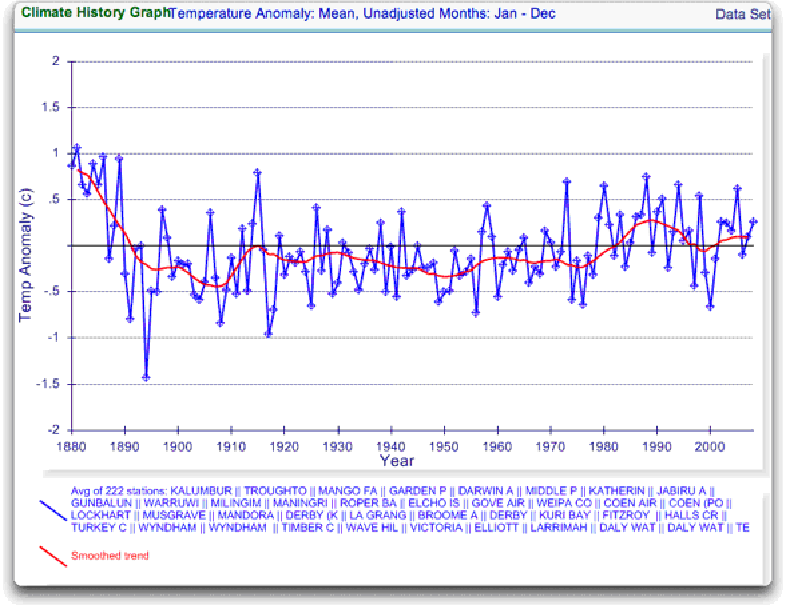

Still no similarity with IPCC. So I looked at every station in the area. That’s 222 stations. Here’s that result:

Figure 4. GHCN Raw Data, All stations in IPCC area above.

So you can see why Wibjorn was concerned. This looks nothing like the UN IPCC data, which came from the CRU, which was based on the GHCN data. Why the difference?

The answer is, these graphs all use the raw GHCN data. But the IPCC uses the “adjusted” data. GHCN adjusts the data to remove what it calls “inhomogeneities”. So on a whim I thought I’d take a look at the first station on the list, Darwin Airport, so I could see what an inhomogeneity might look like when it was at home. And I could find out how large the GHCN adjustment for Darwin inhomogeneities was.

First, what is an “inhomogeneity”? I can do no better than quote from GHCN:

Most long-term climate stations have undergone changes that make a time series of their observations inhomogeneous. There are many causes for the discontinuities, including changes in instruments, shelters, the environment around the shelter, the location of the station, the time of observation, and the method used to calculate mean temperature. Often several of these occur at the same time, as is often the case with the introduction of automatic weather stations that is occurring in many parts of the world. Before one can reliably use such climate data for analysis of longterm climate change, adjustments are needed to compensate for the nonclimatic discontinuities.

That makes sense. The raw data will have jumps from station moves and the like. We don’t want to think it’s warming just because the thermometer was moved to a warmer location. Unpleasant as it may seem, we have to adjust for those as best we can.

I always like to start with the rawest data, so I can understand the adjustments. At Darwin there are five separate individual station records that are combined to make up the final Darwin record. These are the individual records of stations in the area, which are numbered from zero to four:

Figure 5. Five individual temperature records for Darwin, plus station count (green line). This raw data is downloaded from GISS, but GISS use the GHCN raw data as the starting point for their analysis.

Darwin does have a few advantages over other stations with multiple records. There is a continuous record from 1941 to the present (Station 1). There is also a continuous record covering a century. Finally, the stations are in very close agreement over the entire period of the record. In fact, where there are multiple stations in operation they are so close that you can’t see the records behind Station Zero.

This is an ideal station, because it also illustrates many of the problems with the raw temperature station data.

- There is no one record that covers the whole period.

- The shortest record is only nine years long.

- There are gaps of a month and more in almost all of the records.

- It looks like there are problems with the data at around 1941.

- Most of the datasets are missing months.

- For most of the period there are few nearby stations.

- There is no one year covered by all five records.

- The temperature dropped over a six year period, from a high in 1936 to a low in 1941. The station did move in 1941 … but what happened in the previous six years?

In resolving station records, it’s a judgment call. First off, you have to decide if what you are looking at needs any changes at all. In Darwin’s case, it’s a close call. The record seems to be screwed up around 1941, but not in the year of the move.

Also, although the 1941 temperature shift seems large, I see a similar sized shift from 1992 to 1999. Looking at the whole picture, I think I’d vote to leave it as it is, that’s always the best option when you don’t have other evidence. First do no harm.

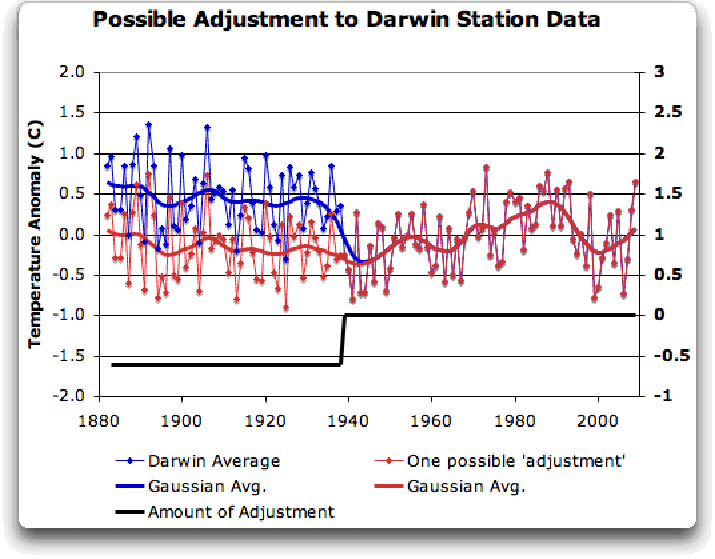

However, there’s a case to be made for adjusting it, particularly given the 1941 station move. If I decided to adjust Darwin, I’d do it like this:

Figure 6 A possible adjustment for Darwin. Black line shows the total amount of the adjustment, on the right scale, and shows the timing of the change.

I shifted the pre-1941 data down by about 0.6C. We end up with little change end to end in my “adjusted” data (shown in red), it’s neither warming nor cooling. However, it reduces the apparent cooling in the raw data. Post-1941, where the other records overlap, they are very close, so I wouldn’t adjust them in any way. Why should we adjust those, they all show exactly the same thing.

OK, so that’s how I’d homogenize the data if I had to, but I vote against adjusting it at all. It only changes one station record (Darwin Zero), and the rest are left untouched.
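The single-step adjustment described above can be sketched in a few lines. This is only an illustration, not the code behind Figure 6: the function name `apply_step_adjustment` and the toy numbers are assumptions made up for the example, and the offset is the "about 0.6C" mentioned in the text.

```python
def apply_step_adjustment(years, temps, break_year, offset):
    """Shift every value before break_year by offset; leave the rest untouched."""
    return [t + offset if y < break_year else t
            for y, t in zip(years, temps)]

# Toy annual means (made-up numbers, NOT the Darwin record).
years = [1938, 1939, 1940, 1941, 1942, 1943]
temps = [28.9, 28.8, 28.7, 28.2, 28.1, 28.2]

# Move the pre-1941 segment down by 0.6C, as in the hand adjustment above.
adjusted = apply_step_adjustment(years, temps, break_year=1941, offset=-0.6)
print(adjusted)
```

The point of writing it this way is that the whole adjustment is one number and one date, both of which can be checked against the station history.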

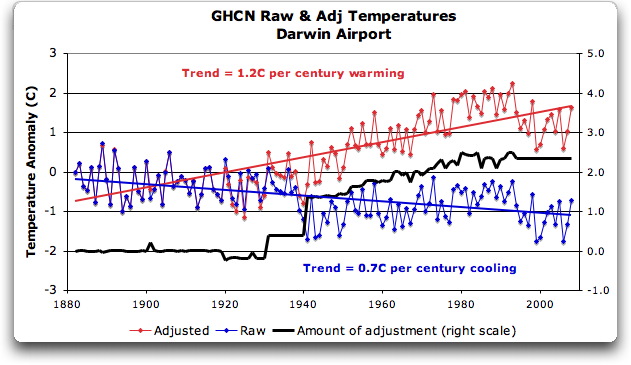

Then I went to look at what happens when the GHCN removes the “in-homogeneities” to “adjust” the data. Of the five raw datasets, the GHCN discards two, likely because they are short and duplicate existing longer records. The three remaining records are first “homogenized” and then averaged to give the “GHCN Adjusted” temperature record for Darwin.

To my great surprise, here’s what I found. To explain the full effect, I am showing this with both datasets starting at the same point (rather than ending at the same point as they are often shown).

Figure 7. GHCN homogeneity adjustments to Darwin Airport combined record

YIKES! Before getting homogenized, temperatures in Darwin were falling at 0.7 Celsius per century … but after the homogenization, they were warming at 1.2 Celsius per century. And the adjustment that they made was over two degrees per century … when those guys “adjust”, they don’t mess around. And the adjustment is an odd shape, with the adjustment first going stepwise, then climbing roughly to stop at 2.4C.
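For readers who want to check trend figures like these themselves, here is a minimal sketch of a least-squares trend in degrees per century. It assumes annual means in two plain lists; `trend_per_century` is a name made up for this example, and the two series are synthetic, built only to carry the two trends quoted above. The two quoted trends differ by 1.9C per century, while 2.4C is where the adjustment itself ends up.

```python
def trend_per_century(years, temps):
    """Ordinary least-squares slope of temps against years, in degrees per 100 years."""
    n = len(years)
    mean_y = sum(years) / n
    mean_t = sum(temps) / n
    sxy = sum((y - mean_y) * (t - mean_t) for y, t in zip(years, temps))
    sxx = sum((y - mean_y) ** 2 for y in years)
    return 100.0 * sxy / sxx

# Synthetic series (not real data) constructed to have the quoted trends.
years = list(range(1900, 2001))
raw = [29.0 - 0.007 * (y - 1900) for y in years]       # falling 0.7 C/century
adjusted = [29.0 + 0.012 * (y - 1900) for y in years]  # rising 1.2 C/century

raw_trend = trend_per_century(years, raw)
adj_trend = trend_per_century(years, adjusted)
print(round(raw_trend, 2), round(adj_trend, 2), round(adj_trend - raw_trend, 2))
```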

Of course, that led me to look at exactly how the GHCN “adjusts” the temperature data. Here’s what they say:

GHCN temperature data include two different datasets: the original data and a homogeneity-adjusted dataset. All homogeneity testing was done on annual time series. The homogeneity-adjustment technique used two steps.

The first step was creating a homogeneous reference series for each station (Peterson and Easterling 1994). Building a completely homogeneous reference series using data with unknown inhomogeneities may be impossible, but we used several techniques to minimize any potential inhomogeneities in the reference series.

…

In creating each year’s first difference reference series, we used the five most highly correlated neighboring stations that had enough data to accurately model the candidate station.

…

The final technique we used to minimize inhomogeneities in the reference series used the mean of the central three values (of the five neighboring station values) to create the first difference reference series.

Fair enough, that all sounds good. They pick five neighboring stations, and average them. Then they compare the average to the station in question. If it looks wonky compared to the average of the reference five, they check any historical records for changes, and if necessary, they homogenize the poor data mercilessly. I have some problems with what they do to homogenize it, but that’s how they identify the inhomogeneous stations.
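The quoted description (first differences from five neighbors, then the mean of the central three values) can be sketched as below. This is a simplified reading of the quoted text, not GHCN’s code: it assumes exactly five complete annual series, skips the step of choosing the most highly correlated neighbors, and the function names and station numbers are invented for the example.

```python
def first_differences(series):
    """Year-to-year changes: series[i + 1] - series[i]."""
    return [b - a for a, b in zip(series, series[1:])]

def reference_first_differences(neighbors):
    """For each year, average the central three of the five neighbor
    first-difference values (the highest and the lowest are dropped)."""
    if len(neighbors) != 5:
        raise ValueError("this sketch expects exactly five neighbor series")
    diffs = [first_differences(s) for s in neighbors]
    reference = []
    for values in zip(*diffs):
        central = sorted(values)[1:4]
        reference.append(sum(central) / 3.0)
    return reference

# Made-up annual means for five hypothetical neighbors, three years each.
neighbors = [
    [27.0, 27.2, 27.1],
    [26.5, 26.6, 26.6],
    [28.0, 28.3, 28.1],
    [27.5, 27.6, 29.0],  # a suspicious jump in the last year
    [26.0, 26.1, 26.0],
]
reference = reference_first_differences(neighbors)
print(reference)
```

Dropping the highest and lowest value each year is what keeps the one suspicious jump in the fourth series from leaking into the reference.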

OK … but given the scarcity of stations in Australia, I wondered how they would find five “neighboring stations” in 1941 …

So I looked it up. The nearest station that covers the year 1941 is 500 km away from Darwin. Not only is it 500 km away, it is the only station within 750 km of Darwin that covers the 1941 time period. (It’s also a pub, Daly Waters Pub to be exact, but hey, it’s Australia, good on ya.) So there simply aren’t five stations to make a “reference series” out of to check the 1936-1941 drop at Darwin.
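A distance check like this one is easy to reproduce. The sketch below uses the haversine great-circle formula with a spherical earth of radius 6371 km. The coordinates are approximate values assumed for illustration, the third station is purely hypothetical, and a real check would use the coordinates in the GHCN station inventory.

```python
import math

EARTH_RADIUS_KM = 6371.0

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def stations_within(center, stations, radius_km):
    """Return (name, distance_km) for every station within radius_km of center."""
    found = []
    for name, (lat, lon) in stations.items():
        d = haversine_km(center[0], center[1], lat, lon)
        if d <= radius_km:
            found.append((name, d))
    return sorted(found, key=lambda item: item[1])

darwin = (-12.42, 130.89)  # approximate, assumed for this example
stations = {
    "Daly Waters": (-16.25, 133.37),               # approximate, assumed
    "hypothetical far station": (-23.70, 133.88),  # invented for the example
}
nearby = stations_within(darwin, stations, radius_km=750)
print(nearby)
```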

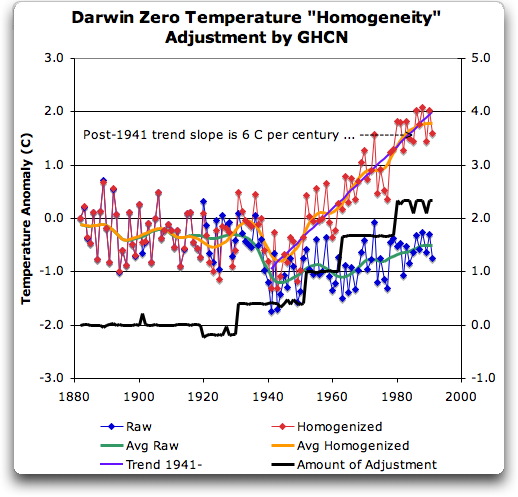

Intrigued by the curious shape of the average of the homogenized Darwin records, I then went to see how they had homogenized each of the individual station records. What made up that strange average shown in Fig. 7? I started at zero with the earliest record. Here is Station Zero at Darwin, showing the raw and the homogenized versions.

Figure 8 Darwin Zero Homogeneity Adjustments. Black line shows amount and timing of adjustments.

Yikes again, double yikes! What on earth justifies that adjustment? How can they do that? We have five different records covering Darwin from 1941 on. They all agree almost exactly. Why adjust them at all? They’ve just added a huge artificial totally imaginary trend to the last half of the raw data! Now it looks like the IPCC diagram in Figure 1, all right … but a six degree per century trend? And in the shape of a regular stepped pyramid climbing to heaven? What’s up with that?

Those, dear friends, are the clumsy fingerprints of someone messing with the data Egyptian style … they are indisputable evidence that the “homogenized” data has been changed to fit someone’s preconceptions about whether the earth is warming.

One thing is clear from this. People who say that “Climategate was only about scientists behaving badly, but the data is OK” are wrong. At least one part of the data is bad, too. The Smoking Gun for that statement is at Darwin Zero.

So once again, I’m left with an unsolved mystery. How and why did the GHCN “adjust” Darwin’s historical temperature to show radical warming? Why did they adjust it stepwise? Do Phil Jones and the CRU folks use the “adjusted” or the raw GHCN dataset? My guess is the adjusted one since it shows warming, but of course we still don’t know … because despite all of this, the CRU still hasn’t released the list of data that they actually use, just the station list.

Another odd fact, the GHCN adjusted Station 1 to match Darwin Zero’s strange adjustment, but they left Station 2 (which covers much of the same period, and as per Fig. 5 is in excellent agreement with Station Zero and Station 1) totally untouched. They only homogenized two of the three. Then they averaged them.

That way, you get an average that looks kinda real, I guess, it “hides the decline”.

Oh, and for what it’s worth, care to know the way that GISS deals with this problem? Well, they only use the Darwin data after 1963, a fine way of neatly avoiding the question … and also a fine way to throw away all of the inconveniently colder data prior to 1941. It’s likely a better choice than the GHCN monstrosity, but it’s a hard one to justify.

Now, I want to be clear here. The blatantly bogus GHCN adjustment for this one station does NOT mean that the earth is not warming. It also does NOT mean that the three records (CRU, GISS, and GHCN) are generally wrong either. This may be an isolated incident, we don’t know. But every time the data gets revised and homogenized, the trends keep increasing. Now GISS does their own adjustments. However, as they keep telling us, they get the same answer as GHCN gets … which makes their numbers suspicious as well.

And CRU? Who knows what they use? We’re still waiting on that one, no data yet …

What this does show is that there is at least one temperature station where the trend has been artificially increased to give a false warming where the raw data shows cooling. In addition, the average raw data for Northern Australia is quite different from the adjusted, so there must be a number of … mmm … let me say “interesting” adjustments in Northern Australia other than just Darwin.

And with the Latin saying “Falsus in uno, falsus in omnibus” (false in one, false in all) as our guide, until all of the station “adjustments” are examined, adjustments of CRU, GHCN, and GISS alike, we can’t trust anyone using homogenized numbers.

Regards to all, keep fighting the good fight,

w.

FURTHER READING:

My previous post on this subject.

The late and much missed John Daly, irrepressible as always.

More on Darwin history, it wasn’t Stevenson Screens.

NOTE: Figures 7 and 8 updated to fix a typo in the titles. 8:30PM PST 12/8 – Anthony

Willis Eschenbach (17:19:16) :

Please see my post:

bill (17:11:19) :

For “reasoned” changes suggested for the raw data

1941 +0.8C

1994 +0.8C

Note that both these steps are across a missing month’s data – time to set up and calibrate?

The June 1994 step, I can find no reason for on the web (digital sensors perhaps?)

CC (17:52:13) :

“I think in this case I prefer the blind algorithm, even if it does occasionally spit out an odd result!”

I’d still like to find out exactly how blind the algorithm really is.

GISS 1977 step @ Oenpelli (Gunbalunya) (Station 14042; 230 km east of Darwin) +0.5 C ± ~0.3 C.

Not blind if it was an ‘AlGorhythm’.

Wow, no one has responded to Lambert’s criticism. Still. The issue is not whether the codes are released – that’s just stonewalling/ ad hominem attacks. The real issue is that no one has ever given a reason why the adjustments were not justified.

Before making accusations of “bogus” adjustments, the burden of proof is to show why the adjustments should not have been made.

JJ et al:

I agree that one data series like this is not conclusive. I think most people would agree with that. Why can’t folks do exactly this kind of analysis showing the adjustment to see if this happens a lot? If a random sample of say 50 equivalent stations were run and all or nearly all of them had significant warming adjustments made like Darwin Zero (which I think everyone agrees looks fishy – even though it might not be) then I think we’d all agree that maybe there is some sort of bias built into the adjustment method. If they all pretty much average out (and I think that we could pretty easily determine what a statistically significant result would be) then that tells us that while Darwin Zero may be adjusted wrong, it’s not indicative of a trend.

One way a bias could be introduced is that (if I understand correctly) this kind of adjustment isn’t run in the United States because we have the meta data. By treating the United States differently, if the US data is used as “neighboring” by the adjustment software it could introduce a trend that could replicate. It’s also not out of the question that the adjustment code could use adjusted data at each location instead of raw data so you could also wind up with a runaway trend there as well.

If some of the stats wiz folks here could come up with the number of stations that would be statistically significant and then someone come up with a procedure for picking that number of stations at random and then we see the graphs, wouldn’t that tell us something?
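One simple way to do what is proposed here is a seeded random draw followed by a sign test. The sketch below is only one possible procedure, not an established protocol: the station IDs are placeholders, the function names are invented, and the test assumes each station’s adjustment is independent and counts only stations whose trend was actually changed.

```python
import math
import random

def pick_random_stations(station_ids, n, seed=0):
    """Draw n distinct stations; the fixed seed lets anyone reproduce the draw."""
    rng = random.Random(seed)
    return rng.sample(station_ids, n)

def sign_test_p_value(n_warming, n_total):
    """Two-sided binomial p-value for seeing n_warming warming adjustments out of
    n_total, if warming and cooling adjustments were equally likely."""
    def pmf(k):
        return math.comb(n_total, k) * 0.5 ** n_total
    lower = sum(pmf(k) for k in range(0, n_warming + 1))
    upper = sum(pmf(k) for k in range(n_warming, n_total + 1))
    return min(1.0, 2.0 * min(lower, upper))

station_ids = [f"station_{i:04d}" for i in range(1000)]  # placeholder IDs
sample = pick_random_stations(station_ids, 50, seed=42)

p_even = sign_test_p_value(25, 50)    # adjustments split evenly
p_skewed = sign_test_p_value(40, 50)  # 40 of 50 adjusted warmer
print(len(sample), p_even, p_skewed)
```

A small p-value would only say that the direction of the adjustments is lopsided in the sample. It would not say whether the adjustments are justified.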

Well, for the US, there are 1221 NCDC/USHCN1 stations.

Weighting them all equally (quick and dirty, but nonetheless informative):

Raw Data: +0.14C per century

Adjusted Data (FILNET): +0.59C per century

That’s a pretty big adjustment. Roughly twice as many trends are adjusted warmer as cooler.

(I suspect this is much the same for the rest of the world. We’ll find out, soon enough!)

Hello Willis,

I commend the effort you have expended here, it truly takes someone dedicated to go through all of the trouble. However, I think you have misled your audience in not quoting some of the more important parts of the article you cite (the quote below Figure 7). From the article (pp. 2844–2845), they: (1) state the error on their “second minimizing technique” of p=0.01 from a Multivariate Randomized Block Permutation (thus allowing the reader to determine whether they think that is reasonable); (2) they go on to explain their discontinuity adjustments as being based on tests at every annual data point (95% confidence being the cutoff for adjustment, using a nonparametric technique not subject to distributional biases); and (3) that their goal is to create historic data that is homogenized to current record keeping.

A couple comments (and here I am assuming that what they did is what they said they did; a replication could determine whether that was the case). First, as an effort to remove bias from all periods, their statistical method seems sound. One can always argue with whether they should have picked a 99% confidence level for the annual discontinuity adjustments, and one could re-estimate their results accordingly. But 95% is the usual, baseline standard and the analysis can always be repeated. Second, as your black line shows, the adjustments are sometimes in the negative direction, but the great majority are positive. Each of the jumps, of course, is a point at which the discontinuity analysis found, with 95% confidence, that there was a positive *or* negative jump. This is the source of their adjustment: statistical estimation. Any one of the adjustments has a 5% chance of being an error, but as you know, the chance of just 5 being wrong simultaneously is very, very small. Still, of course, you could re-estimate the models and apply a stricter confidence interval. Third, there seems to be some assumption in the above discussion that what is being measured is the temperature in Darwin. I’m not a climate scientist (or a geographer), but I don’t believe that is correct. What they are trying to estimate is a temperature for the geographic area, what they *call* Darwin, which is why the distance to and from each point doesn’t matter much in terms of trying to get a historic baseline (assuming, of course, they aren’t ignoring other valid data points within that region). Finally, a purely graphical approach may not be able to get to the bottom of their statistical techniques. Unless you can see in dimensions > 3 (I certainly can’t), then projecting multiple station moves, etc., onto a 2D surface may be misleading.
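The arithmetic behind the “5% chance” remark can be made explicit. The sketch below assumes the five tests are statistically independent, which the comment does not establish. The variable names are illustrative only.

```python
alpha = 0.05        # per-test false-positive rate at 95% confidence
n_adjustments = 5   # number of adjustments considered

# Chance that ALL five are false positives, ASSUMING independent tests.
p_all_wrong = alpha ** n_adjustments

# Chance that AT LEAST ONE of the five is a false positive, same assumption.
p_any_wrong = 1 - (1 - alpha) ** n_adjustments

print(p_all_wrong, p_any_wrong)
```

Under that assumption all five being wrong is indeed very unlikely (about 3 in 10 million), while at least one being wrong is not unlikely at all (about 23%).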

Best,

~JWM

This is exciting. Thanks.

I hope this leads to some actions. I mean, I would like to see more answers on high level to all these “An Inconvenient Truths”

CC (14:30:52) :

“Seeing as how you seem to know what you’re talking about, and are good at explaining stuff for laymen, I have a question. What’s the purpose of the GHCN2 homogenization? I am struggling to see what use it has.”

Thank you. I will preface by caveating myself. I do quite a lot of environmental monitoring and a fair bit of modeling, so am familiar with the territory that these homogenization schemes operate in, and I am comfortable with much of the math. I am not an expert in homogenization theory, however. I can probably pull off a decent explanation for the layman, but my own understanding doesnt necessarily extend much deeper.

The general idea behind homogenization is to remove non-climate signals from the data. These include various observational errors as well as instrument changes, siting changes, etc. The two methods that GHCN uses to accomplish that are :

1) a metadata approach. You look at detailed station records for indications of non-climate effects, and you apply a specific correction for each effect.

2) a relative approach. This begins with the assumption that climate is fundamentally regional, and that all stations within a particular region should show the same climate pattern. Individual sites within a region may vary from one another in scale (one may be warmer or cooler than another on average) and there will of course be random sampling effects. Other than that, sites within the same region are assumed to have the same pattern and the same variability about the pattern, and thus should be highly correlated with one another. Thus, if you compare sites in the same region and you see a relatively minor difference in pattern between otherwise highly correlated sites, that minor difference is assumed to be a non climate signal that can be adjusted out.

GHCN uses 1) for US sites, and 2) outside the country. There are benefits and drawbacks to both. Good quality metadata isnt available everywhere. Even where it is, coming up with an adjustment to apply for each non climate effect can be challenging. It’s often an exercise in modeling itself – and carries with it all of the error inherent in that process. Often, the adjustment applied to an effect discovered by the metadata approach is derived using the relative approach.

With the relative approach, you dont need metadata. Even where there is good metadata, the relative approach can pick up things that arent recorded. On the other hand, the relative approach is founded on some broad assumptions. The adjustments that you choose to make and the magnitude of those adjustments are not based on site specific knowledge about what happened there, but what you infer should have happened there, based on what you see happening elsewhere in the ‘neighborhood’. You are relying on your assumptions holding frequently enough that the results are close enough to the truth often enough to improve the aggregate results vs what you would have got using the raw data.

There are statistical methods that can be used to make those assumptions, and sometimes to check those assumptions, but they arent perfect arbiters even in theory. And as we have seen with the statistics bound up in the Tree Ring Circus, sometimes the theory and/or the proper application of complex statistics is above the heads of many researchers for whom statistics is not their primary field. The maths often dont operate intuitively, and spurious results are often difficult or effectively impossible to detect. I dont know that there is anything amiss in the area of homogenization schemes, but it is a concern.

“Presumably the reference series must be known to be more reliable than the series being adjusted, otherwise the adjustment would have no justification.”

Rather the opposite. The reliability of the reference series is not known, it is assumed. That is part of the fundamental assumption that climate is regional in pattern. That assumption is the weakness of the relative approach. Is climate truly regional in pattern? Everywhere and always? Good questions.

“But if we have a reliable reference, why use the less reliable series at all?”

The series being adjusted is not necessarily less reliable than any of the individual series that are aggregated to comprise the reference series. In fact, it might be more reliable overall than any of them. The assumption is that it is unlikely that several stations will all show exactly the same non climate effect at the same time, and that the group can correct the individual.

Say station #1 moves to the airport in 1963. It is unlikely that stations #2 and #3 will also move the same year to a place that engenders the same average temp change as the move by station #1, so stations #2 and #3 will expose the change at station #1 in 1963. Meanwhile, station #2 might get a Stevenson screen in 1930, move to the park in 1950, and change over to MMT sensor in 1980. It can contribute to making station #1 more reliable, though it is itself generally less reliable.
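The station #1 example can be sketched as a search for the largest jump in the difference between a candidate station and a reference. This is a bare-bones illustration under made-up numbers, with an invented function name. A real homogenization scheme would also test whether the jump is statistically significant before adjusting anything.

```python
def largest_step(candidate, reference):
    """Return (index, shift): the split point where the mean of
    (candidate - reference) changes the most, and the size of that change."""
    diff = [c - r for c, r in zip(candidate, reference)]
    best_index, best_shift = None, 0.0
    for i in range(1, len(diff)):
        before = sum(diff[:i]) / i
        after = sum(diff[i:]) / (len(diff) - i)
        shift = after - before
        if abs(shift) > abs(best_shift):
            best_index, best_shift = i, shift
    return best_index, best_shift

years = list(range(1958, 1968))
# Made-up reference series standing in for the neighboring stations.
reference = [25.0, 25.1, 24.9, 25.0, 25.2, 25.1, 25.0, 24.9, 25.1, 25.0]
# The candidate tracks the reference, then reads 0.5 higher from 1963 on.
candidate = [r + (0.5 if y >= 1963 else 0.0) for y, r in zip(years, reference)]

index, shift = largest_step(candidate, reference)
print(years[index], round(shift, 2))
```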

“I assume the reference must be some distance away, and we are just trying to get broader coverage.”

For me, it is an interesting question as to exactly where the balance lies between the influence of greater spatial coverage and the influence of the regional homogeneity assumption on the overall results.

“If there is a non-negligible geographic distance between the reference and the other series which are adjusted to it, why is it justified to adjust the other series to the reference? Might the differences not be due to local climate variations?”

Under the fundamental assumption of the relative approach, no. At least, there wont be enough of that to affect the overall result significantly. So they say.

“Also, if a series such as the Darwin series is adjusted so much that it no longer bears any resemblance to the actual temperature at Darwin, which the authors seem to admit might potentially happen, then how is it useful? Isn’t the whole point to work out what the average temperature of an area is? How is this helped by moving some of the raw data away from its correct temperature?”

If that happens, it is evidence that the fundamental assumption has been broken, for some time, at that site.

That is why Willis’ work here is interesting. If correct, it exposes a fairly significant bust at this site. It isnt a ‘smoking gun’ all by itself, however, as the methods are understood to produce these errors. And allegedly, these sort of errors happen infrequently enough that the overall result is still improved vs not applying the method.

That assertion, along with all of the method assumptions, certainly deserves a thorough going over, IMO.

Also, note that even if they are operating perfectly according to plan, these methods dont necessarily pick up all non climate signals in the data. The relative method keys in on discontinuities (jumps) in the data. A non climate signal that presents as a continuous trend change will likely not be identified by . UHI is suspected to

I like the idea of focusing on a single fishy-looking case and asking climate scientists and data-adjusters to explain it in full detail. Even if the result is inconclusive after this length of time due to various uncertainties, it would still establish a range for the unknowns and shed light on the issues involved (amount of subjectivity in making adjustments, amount of automation in the adjustments, etc.) in the larger controversy.

Even if it’s not much progress, it’s some — and we all should take what we can get and be happy for that.

I posted this over at the Accuweather site:

Mark,

You should check out the actual sites, before you state, “Global warming contrarians often don’t understand why it might be necessary to adjust raw temperature data, so they create in their minds theories involving misconduct and fabrication.”

It is obvious it is you who are creating things in your mind, for it is obvious you have failed to do the hard work of double-checking the adjustments made by these frauds.

The GHCN data of Darwin, Australia shows four .5 degree upwards adjustments. A single .5 degree adjustment would raise eyebrows, but four (for a total of two degrees) is just plain absurd.

You are quite correct when you state adjustments have been made for urban heating, but have you ever gone to see what those adjustments actually are? In some cases they are an upward adjustment!!!

More and more people are double checking the three main data sets graphed above. They just go look at the raw data from a local weather station, and compare it with the “adjusted” data. Over and over these people, (foot soldiers in the battle for Truth,) report the raw data shows little rise, or a slow recovery from the Little Ice Age, and see nothing like the sharp rise shown in the three graphs shown above.

I am afraid, Mark, that you have been hoodwinked. Your trust has been abused. You sit there and parrot the talking points you read at Alarmist sites, but never get down to the dirty details of actually checking the individual weather stations.

The three major data sets are compiled by three closely associated groups of quasi-scientists who are constantly cross-checking with each other. They remind me of three school boys sitting in the waiting room of the headmaster, desperately whispering to each other, attempting to get their alibis straight.

However, when the headmaster is Truth, (as is the case with strict science,) all alibis unravel.

I don’t know if you’ve ever been in the position of questioning fibbing school boys, but they resemble Climate Scientists. When asked to produce proof, they often resort to stating, “I lost it,” (much like UEA, concerning raw data.) Also they often point at the other guy, and state “he did it too,” which is what is being said when you stress “the three data sets agree with each other.”

If one data set is shown to be rotten, it does not make the other two look better. Quite the opposite. It makes them look worse.

What is needed is full exposure. Show the raw data. Show each adjustment. Explain each adjustment. Only then can this “new and improved” data be trusted.

If you trust without verification, you are likely to find yourself in the uncomfortable position of waking up one day and finding you have been a sucker and a chump.

If you are too busy (or lazy) to do the verifying yourself, at least you should appreciate the efforts of those who are doing the hard work, station by station, all over the world.

JJ (23:48:48) :

That’s all very interesting. I am a long time (PhD) consulting environmental scientist myself with a fair bit of modeling experience, e.g. catchment hydrology, mine site hydrology etc. So I understand reasonably well this ‘territory’ you have so succinctly summarized. But it still doesn’t help me understand the logic behind something such as this:

http://thedogatemydata.blogspot.com/2009/12/raw-v-adjusted-ghcn-data.html

For example, how can a relative method be expected to satisfactorily ‘key in’, as you put it, on discontinuities (jumps) in the data associated with (in particular) widely separated coastal sites, when each coastal site is at the edge of an expanse of open ocean intent on creating natural discontinuities with decadal or longer term warm/cold current or prevailing wind shifts?

Similar difficulties can be envisioned for sites separated by mountain chains, major rivers, etc., etc.

I suspect there are plenty of ‘busts’ out there.

@wobble

“I’m fine dropping the accusations of wrongdoing. But let’s be realistic about the chances of a replication exercise yielding any fruit at all.”

That is no attitude to take. The little objections you are bringing up are easily surmounted. You don’t know what ‘neighboring’ means? Then do a spatial correlation test for yourself, to see how correlation drops off with distance. You’ll find out. Or, as jj recommends, keep reading through the paper tree to get a full idea. These papers look pretty detailed to me; don’t ask to be spoon-fed.
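The spatial correlation test suggested here could be sketched roughly as follows. This is only an illustration, not anything taken from the GHCN papers: the function names, the use of first differences of annual means, and the `min_overlap` cutoff are all assumptions made for the example.

```python
import math
from itertools import combinations


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two points given in degrees."""
    r = 6371.0  # mean Earth radius in km
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def pearson(xs, ys):
    """Plain Pearson correlation; returns NaN if either series is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / math.sqrt(sxx * syy)


def first_differences(series):
    """series: dict year -> annual mean. Keeps only years whose previous year exists."""
    return {y: series[y] - series[y - 1] for y in series if (y - 1) in series}


def correlation_vs_distance(stations, min_overlap=10):
    """stations: dict name -> (lat, lon, series).

    Returns a list of (distance_km, correlation, name_a, name_b) sorted by
    distance, skipping pairs with fewer than min_overlap common years.
    """
    results = []
    for a, b in combinations(sorted(stations), 2):
        lat_a, lon_a, series_a = stations[a]
        lat_b, lon_b, series_b = stations[b]
        da, db = first_differences(series_a), first_differences(series_b)
        common = sorted(set(da) & set(db))
        if len(common) < min_overlap:
            continue
        r = pearson([da[y] for y in common], [db[y] for y in common])
        results.append((haversine_km(lat_a, lon_a, lat_b, lon_b), r, a, b))
    return sorted(results)
```

Plotting the first two columns of the result against each other shows how quickly the correlation falls off with distance, which is one way to decide how far out “neighboring” can reasonably go.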

Willis:

“Thanks for the question. Sure, it has occurred to me. But I must confess, I’ve given up asking. I’ve asked for so much data, and filed FOI requests for so much data, and been blown off every time, that I’ve grown tired of being ignored.”

Willis, I’m saying this earnestly. You’ve made rather strong accusations in this post, without doing nearly enough work to back them up. Your credibility is at stake when you do such things. What will you do if the Australians dig out the site history, and show a bunch of things about this site that require adjustments? Where does that leave you?

The least you could have done was to politely ask the Australians first, with a good faith question. If they don’t respond, then so be it.

“And those adjustments are the GHCN adjustments, not the Australian adjustments. I was writing about the GHCN. I haven’t a clue what the Aussies do, there’s only so many hours in a day.”

Yes, but again, the GHCN adjustments should correspond to some physical thing. So if you don’t have the time to repeat the GHCN method for yourself, asking the Aussies for the site history would help.

I can’t emphasize this enough: if you think you have the time to make accusations of fraud, then you have to take the time to do the required legwork. If you don’t have that time, then all you can do is make a post saying “I don’t understand these adjustments; does anybody know what’s going on here?”

“So what adjustment are you proposing we make? A tenth of a degree? Half a degree? A degree and a half? Show your work.”

For a rough start, just do whatever you showed in Fig 6; it’d be better than doing nothing. I agree that a rigorous adjustment is difficult using only the data you have above; perhaps the sites overlapped by a bit – it’d be worth checking the Aussie site.

“Your statement of nearby stations in the sixties doesn’t help at all with the adjustments that were done in 1920, 1930, and 1941.”

Then expand your definition of nearby. See how far out you can go and still have a correlation.

“And while it is true that GISS, CRU, and the Aussies all do their own homogenization and they all roughly agree, that does not comfort my soul in the slightest … if you don’t understand why, read the CRU emails.”

I don’t know that they do all agree in all ways. The picture I see on GISS leaves out the mess at 1941. It’d be useful to put them all up, especially the CRU set since that’s what’s used to make the IPCC diagrams at the top of your post. And finally, you can’t just use ‘oh, read the emails’ as an excuse to not do the work to back up your claims. Not everybody thinks the emails are as dastardly as the regulars at this site.

Steve Short

Yes, there are lots of other nearby stations, mostly with fairly short records, but covering some time in all. I posted some analysis on the other thread which may be relevant, and I’ll repeat it here:

I did a test on a block of stations in the v2.temperature.inv listing, which are in north NT. I noted that wherever there was an adjustment, the most recent reading was unchanged. So I listed the adjustment (down) that was made to the first (oldest) reading in the sequence. Many stations, with shorter records, did not appear in the _adj file – no adjustment had been calculated. That is indicated by “None” in the list – as opposed to a calculated 0.0. Darwin’s 2.9 is certainly the exception.

In this listing, the station number is followed by the name, and the adjustment.

50194117000 MANGO FARM None

50194119000 GARDEN POINT None

50194120000 DARWIN AIRPOR 2.9

50194124000 MIDDLE POINT 0.0

50194132000 KATHERINE AER 0.0

50194137000 JABIRU AIRPOR None

50194138000 GUNBALUNYA None

50194139000 WARRUWI None

50194140000 MILINGIMBI AW None

50194142000 MANINGRIDA None

50194144000 ROPER BAR STO None

50194146000 ELCHO ISLAND 0.7

50194150000 GOVE AIRPORT None
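For anyone who wants to tally the listing above, here is a minimal sketch that parses it as plain text and separates stations with no entry in the _adj file (“None”) from those with a calculated adjustment. The parsing rule (first token is the station number, last token is the adjustment) is an assumption based on how the lines are laid out in this comment, not on the format of the GHCN files themselves.

```python
LISTING = """\
50194117000 MANGO FARM None
50194119000 GARDEN POINT None
50194120000 DARWIN AIRPOR 2.9
50194124000 MIDDLE POINT 0.0
50194132000 KATHERINE AER 0.0
50194137000 JABIRU AIRPOR None
50194138000 GUNBALUNYA None
50194139000 WARRUWI None
50194140000 MILINGIMBI AW None
50194142000 MANINGRIDA None
50194144000 ROPER BAR STO None
50194146000 ELCHO ISLAND 0.7
50194150000 GOVE AIRPORT None
"""


def parse_listing(text):
    """Return a list of (station_id, name, adjustment); adjustment is None or a float."""
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        station_id, rest = line.split(maxsplit=1)
        name, adj = rest.rsplit(maxsplit=1)
        rows.append((station_id, name, None if adj == "None" else float(adj)))
    return rows


rows = parse_listing(LISTING)
not_in_adj_file = [name for _, name, adj in rows if adj is None]
zero_adjustment = [name for _, name, adj in rows if adj == 0.0]
nonzero_adjustment = [(name, adj) for _, name, adj in rows if adj]

print(len(rows), "stations listed")
print(len(not_in_adj_file), "with no adjustment calculated")
print("calculated 0.0:", zero_adjustment)
print("non-zero:", nonzero_adjustment)
```

Keeping “None” distinct from 0.0 matters here, since the first means the station never made it into the adjusted file and the second means an adjustment was calculated and came out as zero.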

Here is a comment from a scientist who has been involved in adjustments to the Australian data sets. Here’s what he says about Darwin in particular:

No, it’s an algorithm. I think they describe what they do, even if they’re not showing you the algorithm in detail. IIRC, they’re taking the first difference of each of the stations’ annual records, and then using “nearby” stations to resolve step changes (a unidirectional spike in the first difference data) in a single station. If all the stations show that step change, then there’s nothing to resolve, or at least no way to resolve it.

I haven’t actually attempted this, but I’ve done some of this kind of data analysis WRT other, unrelated applications.

It’s probably not all that simple, because if for some reason there are holes in the station data record, you’re going to have to toss out some data, so you have to code for those eventualities.
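A rough sketch of the idea described above, as I read it: take first differences of each annual record, build a reference from the “nearby” stations, and flag years where the target station jumps but the reference does not. To be clear, this is not the GHCN code. The median reference, the fixed `threshold` in degrees (standing in for a proper significance test), and the `min_neighbors` rule are all assumptions made for illustration. Missing years are simply absent from the dicts, so any difference that would span a hole is dropped.

```python
from statistics import median


def first_differences(series):
    """series: dict year -> annual mean. Differences spanning a missing year are dropped."""
    return {y: series[y] - series[y - 1] for y in series if (y - 1) in series}


def flag_step_changes(target, neighbors, threshold=1.0, min_neighbors=2):
    """Flag years where the target's year-on-year change departs from its neighbors'.

    target:    dict year -> annual mean for the station being checked
    neighbors: list of such dicts for nearby stations
    Returns dict year -> residual (target difference minus median neighbor difference).
    """
    d_target = first_differences(target)
    d_neighbors = [first_differences(n) for n in neighbors]
    flagged = {}
    for year in sorted(d_target):
        reference = [d[year] for d in d_neighbors if year in d]
        if len(reference) < min_neighbors:
            continue  # too few neighbors reporting this year to judge
        residual = d_target[year] - median(reference)
        if abs(residual) > threshold:
            flagged[year] = residual
    return flagged
```

If every station shows the same jump in the same year, the residual is near zero and nothing is flagged, which matches the point that there is then nothing to resolve.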

Interesting that the just published EPA “Endangerment Finding” responds to surface station criticisms by referencing a 2006 study by Peterson which concluded that the adjustments result in no bias to long term trends. This looks like another case of the fox guarding the hen house.

http://www.epa.gov/climatechange/endangerment/downloads/RTC%20Volume%202.pdf

I wonder how the subset of sites selected for Peterson’s study was selected.

“And CRU? Who knows what they use? We’re still waiting on that one, no data yet …”

It might appear that the only way to get the CRU data is for someone to “steal” it. Wait, isn’t that what Robin Hood did?

I’m trying to understand JWM’s (21:34:47) explanation.

So, if for instance ‘large’ drops in temperature tend to occur suddenly (within a year), but increases in temperature occur gradually, then only the drops will be cancelled out automatically because they fulfill the 95% discontinuity test level?

And if the confidence level is fixed at 99.9% instead then the global average adjusted temperature will likely match the unadjusted?

… I accidentally hit the ‘Submit’ button while typing last night, and cut myself off. Probably wasn’t a bad thing, given how long that post was 🙂

I’ll finish the truncated thought by stating that UHI effects are expected to often present as continuous trends, so these homogenization schemes that do search and destroy on discontinuities will miss such UHI effects.
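A toy illustration of that point, using made-up numbers rather than any real station: two synthetic series both end up 1.0 degree above a flat reference, one through a single step and one through a slow drift. A detector that only looks at year-on-year jumps relative to the reference sees the step but not the drift. The 0.5 degree cutoff and all of the values below are hypothetical.

```python
def first_differences(series):
    """series: dict year -> annual mean. Keeps only years whose previous year exists."""
    return {y: series[y] - series[y - 1] for y in series if (y - 1) in series}


def largest_relative_jump(target, reference):
    """Largest absolute year-on-year change in target relative to reference."""
    dt = first_differences(target)
    dr = first_differences(reference)
    return max(abs(dt[y] - dr[y]) for y in dt if y in dr)


years = range(1950, 1971)
reference = {y: 20.0 for y in years}                                   # flat neighbor average
drift = {y: 20.0 + 0.05 * (y - 1950) for y in years}                   # slow, UHI-like creep
step = {y: 20.0 + (1.0 if y >= 1960 else 0.0) for y in years}          # one-off station change

cutoff = 0.5  # hypothetical detection threshold in degrees
print("step detected: ", largest_relative_jump(step, reference) > cutoff)
print("drift detected:", largest_relative_jump(drift, reference) > cutoff)
print("final offsets: ", step[1970] - reference[1970], drift[1970] - reference[1970])
```

Both series carry the same final offset, but only the step ever produces a single-year jump large enough to be caught.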

I’ll round out the general discussion of homogenization schemes by noting that Willis’ analysis of Darwin appears to establish that an incorrect ‘correction’ of something more than a degree C can be applied by the GHCN homogenization scheme. If there are busts in the assumptions of that scheme, particularly in the assumption that there is no bias in the errors, that 0.6F trend in the effect of the adjustments on the global result could be largely error.

Wobble,

You cannot possibly have worked your way thru that paper and its antecedents with sufficient attention to detail to make that statement.

“Please. Here’s a quick example.”

Please? That’s not a response. Did you in fact work your way thru that paper and its antecedents before posting? I will bet a six-pack not.

The two ‘missing facts’ you identify (definition of ‘neighboring’ and the t-Test significance cut off for calling a discontinuity) are almost certainly given in the papers cited, with (Peterson and Easterling 1994) being the most likely candidate. I’d wager a pizza on that one.

Please respond promptly. It’s Friday and I’ve got a cheap date planned 🙂

@carrot eater.

I think you are going overboard. From what we have now been told by the Aussies the temperature monitoring was re-invented three times and then moved so often they might as well have strapped the damn thing to a 4×4 and driven all over the outback.

To claim that any scientifically useful data could be drawn from such a piece of equipment is itself fraudulent, and begs the question “Why?”.

JWM (21:34:47) :

“From the article (pp. 2844–2845), they: (1) state the error on their “second minimizing technique” of p=0.01 from a Multivariate Randomized Block Permutation (thus allowing the reader to determine whether they think that is reasonable); (2) they go on to explain their discontinuity adjustments as being based on tests at every annual data point (95% confidence being the cutoff for adjustment, using a nonparametric technique not subject to distributional biases)”

I just want to make sure that everyone understands this properly.

These aren’t confidence levels associated with the actual discontinuity adjustments. These confidence levels are associated with the establishment of the reference series and the IDENTIFICATION of actual discontinuities.

There is no definitive statement regarding the confidence level associated with the actual adjustment applied to the discontinuity.

“Finally, a purely graphical approach may not be able to get to the bottom of their statistical techniques. Unless you can see in dimensions > 3 (I certainly can’t), then projecting multiple station moves, etc., onto a 2D surface may be misleading.”

I think this is a fair point. However, it’s now obvious that discontinuities – as defined by GHCN (in other words – strictly as compared to reference series) – exist in data sets which present a smooth 2D graph and have no associated metadata.

So we should be looking for these counter-intuitive GHCN defined discontinuities in which no algorithmic adjustments were applied.