by Willis Eschenbach

People keep saying “Yes, the Climategate scientists behaved badly. But that doesn’t mean the data is bad. That doesn’t mean the earth is not warming.”

Darwin Airport – by Dominic Perrin via Panoramio

Let me start with the second objection first. The earth has generally been warming since the Little Ice Age, around 1650. There is general agreement that the earth has warmed since then. See e.g. Akasofu. Climategate doesn’t affect that.

The first question, the integrity of the data, is different. People say “Yes, they destroyed emails, and hid from Freedom of Information Act requests, and messed with proxies, and fought to keep other scientists’ papers out of the journals … but that doesn’t affect the data, the data is still good.” Which sounds reasonable.

There are three main global temperature datasets. One is at the CRU, the Climatic Research Unit of the University of East Anglia, where we’ve been trying to get access to the raw numbers. One is at NOAA/GHCN, the Global Historical Climatology Network. The final one is at NASA/GISS, the Goddard Institute for Space Studies. The three groups take raw data, and they “homogenize” it to remove things like when a station was moved to a warmer location and there’s a 2C jump in the temperature. The three global temperature records are usually called CRU, GISS, and GHCN. Both GISS and CRU, however, get almost all of their raw data from GHCN. All three produce very similar global historical temperature records from the raw data.

So I’m still on my multi-year quest to understand the climate data. You never know where this data chase will lead. This time, it has landed me in Australia. I got to thinking about Professor Wibjorn Karlen’s statement about Australia that I quoted here:

Another example is Australia. NASA [GHCN] only presents 3 stations covering the period 1897-1992. What kind of data is the IPCC Australia diagram based on?

If any trend it is a slight cooling. However, if a shorter period (1949-2005) is used, the temperature has increased substantially. The Australians have many stations and have published more detailed maps of changes and trends.

The folks at CRU told Wibjorn that he was just plain wrong. Here’s what they said is right, the record that Wibjorn was talking about, Fig. 9.12 in the UN IPCC Fourth Assessment Report, showing Northern Australia:

Figure 1. Temperature trends and model results in Northern Australia. Black line is observations (from Fig. 9.12 of the UN IPCC Fourth Assessment Report). Covers the area from 110E to 155E, and from 30S to 11S. Based on the CRU land temperature. Data from the CRU.

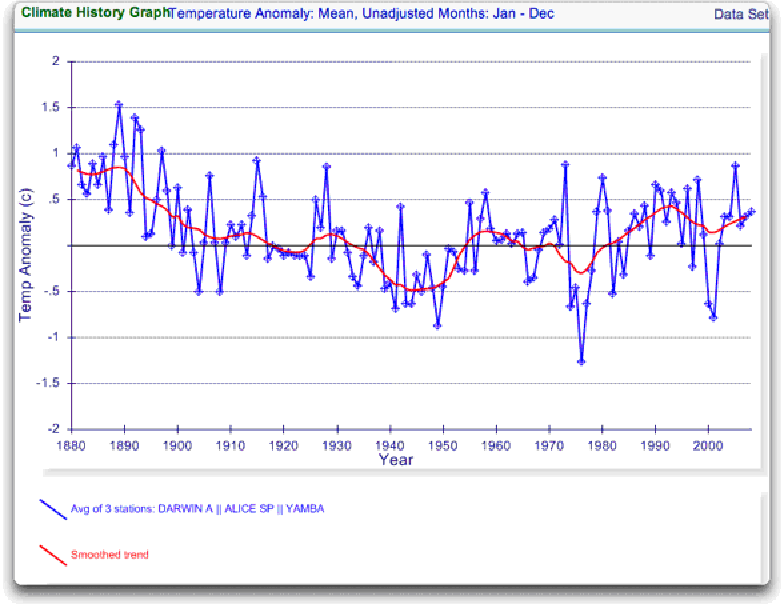

One of the things that was revealed in the released CRU emails is that the CRU basically uses the Global Historical Climatology Network (GHCN) dataset for its raw data. So I looked at the GHCN dataset. There, I find three stations in North Australia as Wibjorn had said, and nine stations in all of Australia, that cover the period 1900-2000. Here is the average of the GHCN unadjusted data for those three Northern stations, from AIS:

Figure 2. GHCN Raw Data, All 100-yr stations in IPCC area above.

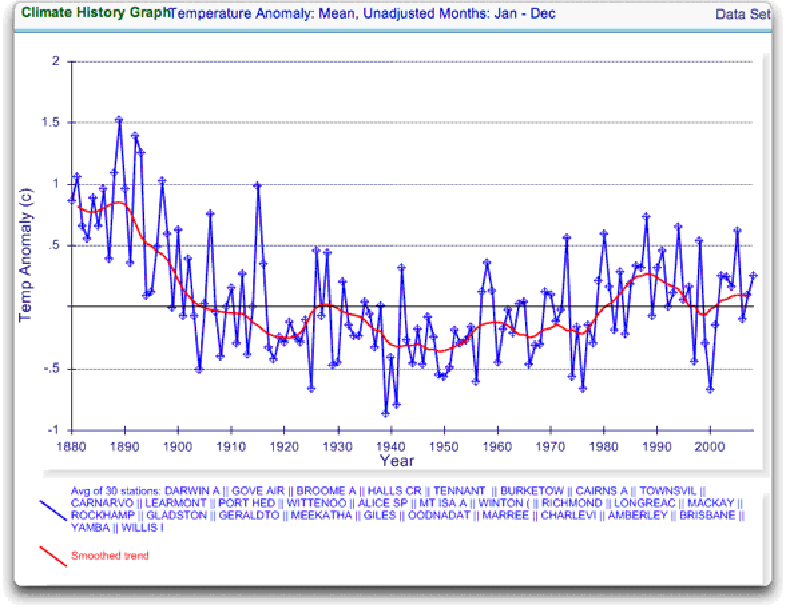

So once again Wibjorn is correct, this looks nothing like the corresponding IPCC temperature record for Australia. But it’s too soon to tell. Professor Karlen is only showing 3 stations. Three is not a lot of stations, but that’s all of the century-long Australian records we have in the IPCC specified region. OK, we’ve seen the longest station records, so let’s throw more records into the mix. Here’s every station in the UN IPCC specified region which contains temperature records that extend up to the year 2000 no matter when they started, which is 30 stations.

Figure 3. GHCN Raw Data, All stations extending to 2000 in IPCC area above.

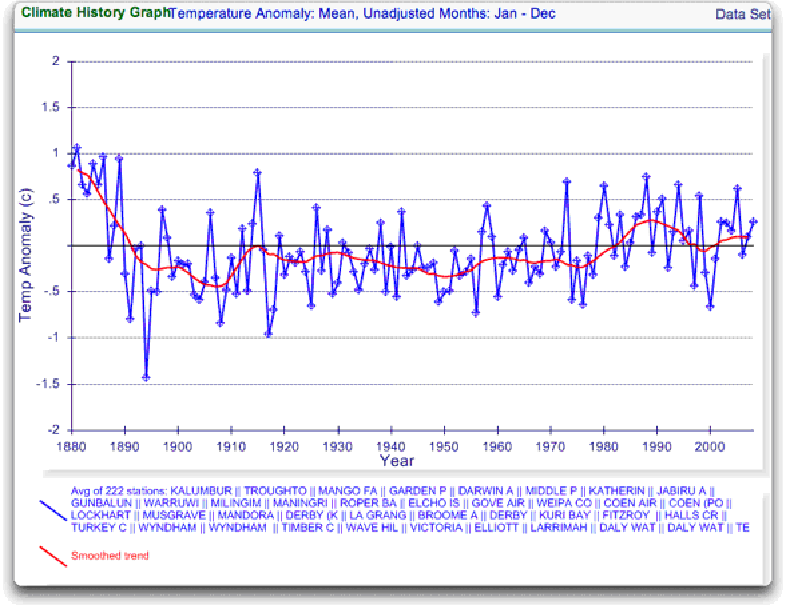

Still no similarity with IPCC. So I looked at every station in the area. That’s 222 stations. Here’s that result:

Figure 4. GHCN Raw Data, All stations in IPCC area above.

So you can see why Wibjorn was concerned. This looks nothing like the UN IPCC data, which came from the CRU, which was based on the GHCN data. Why the difference?

The answer is, these graphs all use the raw GHCN data. But the IPCC uses the “adjusted” data. GHCN adjusts the data to remove what it calls “inhomogeneities”. So on a whim I thought I’d take a look at the first station on the list, Darwin Airport, so I could see what an inhomogeneity might look like when it was at home. And I could find out how large the GHCN adjustment for Darwin inhomogeneities was.

First, what is an “inhomogeneity”? I can do no better than quote from GHCN:

Most long-term climate stations have undergone changes that make a time series of their observations inhomogeneous. There are many causes for the discontinuities, including changes in instruments, shelters, the environment around the shelter, the location of the station, the time of observation, and the method used to calculate mean temperature. Often several of these occur at the same time, as is often the case with the introduction of automatic weather stations that is occurring in many parts of the world. Before one can reliably use such climate data for analysis of longterm climate change, adjustments are needed to compensate for the nonclimatic discontinuities.

That makes sense. The raw data will have jumps from station moves and the like. We don’t want to think it’s warming just because the thermometer was moved to a warmer location. Unpleasant as it may seem, we have to adjust for those as best we can.

I always like to start with the rawest data, so I can understand the adjustments. At Darwin there are five separate individual station records that are combined to make up the final Darwin record. These are the individual records of stations in the area, which are numbered from zero to four:

Figure 5. Five individual temperature records for Darwin, plus station count (green line). This raw data is downloaded from GISS, but GISS use the GHCN raw data as the starting point for their analysis.

Darwin does have a few advantages over other stations with multiple records. There is a continuous record from 1941 to the present (Station 1). There is also a continuous record covering a century. Finally, the stations are in very close agreement over the entire period of the record. In fact, where there are multiple stations in operation they are so close that you can’t see the records behind Station Zero.

This is an ideal station, because it also illustrates many of the problems with the raw temperature station data.

- There is no one record that covers the whole period.

- The shortest record is only nine years long.

- There are gaps of a month and more in almost all of the records.

- It looks like there are problems with the data at around 1941.

- Most of the datasets are missing months.

- For most of the period there are few nearby stations.

- There is no one year covered by all five records.

- The temperature dropped over a six year period, from a high in 1936 to a low in 1941. The station did move in 1941 … but what happened in the previous six years?

In resolving station records, it’s a judgment call. First off, you have to decide if what you are looking at needs any changes at all. In Darwin’s case, it’s a close call. The record seems to be screwed up around 1941, but not in the year of the move.

Also, although the 1941 temperature shift seems large, I see a similar sized shift from 1992 to 1999. Looking at the whole picture, I think I’d vote to leave it as it is. That’s always the best option when you don’t have other evidence. First do no harm.

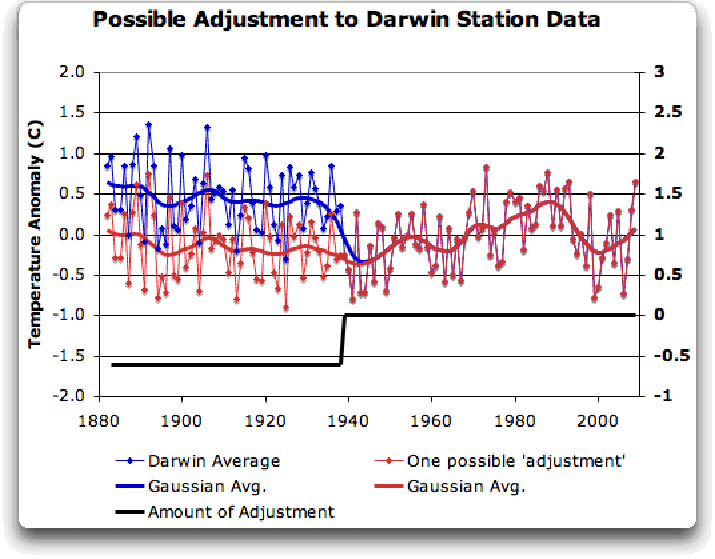

However, there’s a case to be made for adjusting it, particularly given the 1941 station move. If I decided to adjust Darwin, I’d do it like this:

Figure 6. A possible adjustment for Darwin. Black line shows the total amount of the adjustment, on the right scale, and shows the timing of the change.

I shifted the pre-1941 data down by about 0.6C. We end up with little change end to end in my “adjusted” data (shown in red), it’s neither warming nor cooling. However, it reduces the apparent cooling in the raw data. Post-1941, where the other records overlap, they are very close, so I wouldn’t adjust them in any way. Why should we adjust those? They all show exactly the same thing.
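A minimal sketch of that kind of single-step adjustment, assuming annual mean temperatures held in a dict keyed by year. The function name `shift_before` and the sample values are made up for illustration; they are not the actual Darwin record or the spreadsheet I used.

```python
def shift_before(series, break_year, offset):
    """Return a copy of {year: temp} with `offset` added to every year before break_year."""
    return {
        year: (temp + offset if year < break_year else temp)
        for year, temp in series.items()
    }

# Made-up annual means (deg C), NOT the real Darwin data.
raw = {1939: 29.0, 1940: 28.9, 1941: 28.3, 1942: 28.4}

# Shift the pre-1941 values down by 0.6 C; 1941 onward is left untouched.
adjusted = shift_before(raw, break_year=1941, offset=-0.6)
```

The point of the design is that one step at one date is the whole adjustment: everything after the break year comes back unchanged.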

OK, so that’s how I’d homogenize the data if I had to, but I vote against adjusting it at all. It only changes one station record (Darwin Zero), and the rest are left untouched.

Then I went to look at what happens when the GHCN removes the “inhomogeneities” to “adjust” the data. Of the five raw datasets, the GHCN discards two, likely because they are short and duplicate existing longer records. The three remaining records are first “homogenized” and then averaged to give the “GHCN Adjusted” temperature record for Darwin.

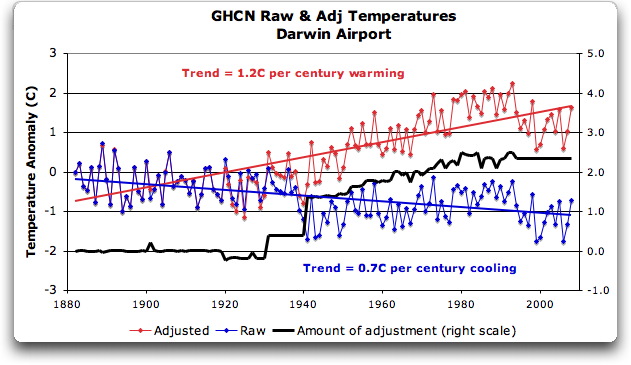

To my great surprise, here’s what I found. To explain the full effect, I am showing this with both datasets starting at the same point (rather than ending at the same point as they are often shown).

Figure 7. GHCN homogeneity adjustments to Darwin Airport combined record

YIKES! Before getting homogenized, temperatures in Darwin were falling at 0.7 Celsius per century … but after the homogenization, they were warming at 1.2 Celsius per century. And the adjustment that they made was over two degrees per century … when those guys “adjust”, they don’t mess around. And the adjustment is an odd shape, with the adjustment first going stepwise, then climbing roughly to stop at 2.4C.
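For anyone who wants to check trends like these, here is a hedged sketch of an ordinary least-squares trend expressed in degrees per century. The two series are synthetic straight lines built to have the slopes quoted above; they are not the Darwin data, and `trend_per_century` is just an illustrative name.

```python
def trend_per_century(years, temps):
    """Ordinary least-squares slope of temps against years, scaled to deg C per century."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(temps) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, temps))
    sxx = sum((x - mean_x) ** 2 for x in years)
    return 100.0 * sxy / sxx

# Synthetic straight lines, NOT the real records.
years = list(range(1900, 2000))
raw = [29.0 - 0.007 * (y - 1900) for y in years]       # falls 0.7 C per century
adjusted = [29.0 + 0.012 * (y - 1900) for y in years]  # rises 1.2 C per century

raw_trend = trend_per_century(years, raw)
adj_trend = trend_per_century(years, adjusted)
trend_difference = adj_trend - raw_trend  # change in fitted trend for these two lines
```

Note that the difference between two fitted trends is not the same thing as the size of the adjustment at its end point, since a stepwise adjustment is not a straight line.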

Of course, that led me to look at exactly how the GHCN “adjusts” the temperature data. Here’s what they say:

GHCN temperature data include two different datasets: the original data and a homogeneity-adjusted dataset. All homogeneity testing was done on annual time series. The homogeneity-adjustment technique used two steps.

The first step was creating a homogeneous reference series for each station (Peterson and Easterling 1994). Building a completely homogeneous reference series using data with unknown inhomogeneities may be impossible, but we used several techniques to minimize any potential inhomogeneities in the reference series.

…

In creating each year’s first difference reference series, we used the five most highly correlated neighboring stations that had enough data to accurately model the candidate station.

…

The final technique we used to minimize inhomogeneities in the reference series used the mean of the central three values (of the five neighboring station values) to create the first difference reference series.

Fair enough, that all sounds good. They pick five neighboring stations, and average them. Then they compare the average to the station in question. If it looks wonky compared to the average of the reference five, they check any historical records for changes, and if necessary, they homogenize the poor data mercilessly. I have some problems with what they do to homogenize it, but that’s how they identify the inhomogeneous stations.
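The “mean of the central three values” step in the quote can be sketched like this. It assumes exactly five neighbors with complete annual data, which the real procedure does not require; the neighbor selection by correlation is not reproduced, and the function names and numbers are hypothetical.

```python
def first_differences(series):
    """Year-over-year changes of a list of annual values."""
    return [b - a for a, b in zip(series, series[1:])]

def reference_first_differences(neighbors):
    """For each year, sort the five neighbor first differences and average the central three."""
    diffs = [first_differences(s) for s in neighbors]
    reference = []
    for values in zip(*diffs):
        central = sorted(values)[1:-1]  # drop the highest and the lowest of the five
        reference.append(sum(central) / len(central))
    return reference

# Made-up neighbor records; the fourth has a spurious jump in its last year.
neighbors = [
    [1, 2, 3, 4],
    [3, 4, 5, 6],
    [2, 3, 4, 5],
    [0, 1, 2, 9],
    [5, 6, 7, 8],
]
reference = reference_first_differences(neighbors)
```

Dropping the extremes is what keeps one bad neighbor (the jump to 9 here) from contaminating the reference series.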

OK … but given the scarcity of stations in Australia, I wondered how they would find five “neighboring stations” in 1941 …

So I looked it up. The nearest station that covers the year 1941 is 500 km away from Darwin. Not only is it 500 km away, it is the only station within 750 km of Darwin that covers the 1941 time period. (It’s also a pub, Daly Waters Pub to be exact, but hey, it’s Australia, good on ya.) So there simply aren’t five stations to make a “reference series” out of to check the 1936-1941 drop at Darwin.
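A distance check like this can be sketched with the haversine formula. The coordinates below are hypothetical placeholders, not values from the GHCN station inventory, and the helper names are mine.

```python
import math

def haversine_km(lat1, lon1, lat2, lon2, radius_km=6371.0):
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * radius_km * math.asin(math.sqrt(a))

def stations_within(target, stations, max_km):
    """Names of stations within max_km of target; each station is (name, lat, lon)."""
    _, tlat, tlon = target
    return [
        name for name, lat, lon in stations
        if haversine_km(tlat, tlon, lat, lon) <= max_km
    ]

# Hypothetical coordinates for illustration only.
target = ("target", -12.0, 131.0)
stations = [("near", -13.0, 131.0), ("far", -20.0, 131.0)]
nearby = stations_within(target, stations, max_km=750)
```

To repeat the check in the post you would also need to filter the station list to records that actually cover 1941 before counting neighbors.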

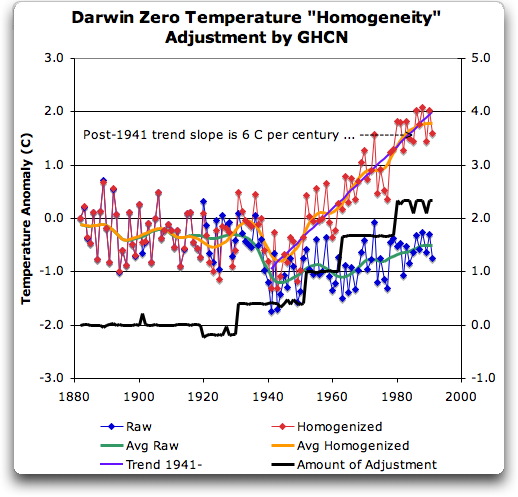

Intrigued by the curious shape of the average of the homogenized Darwin records, I then went to see how they had homogenized each of the individual station records. What made up that strange average shown in Fig. 7? I started at zero with the earliest record. Here is Station Zero at Darwin, showing the raw and the homogenized versions.

Figure 8. Darwin Zero Homogeneity Adjustments. Black line shows amount and timing of adjustments.

Yikes again, double yikes! What on earth justifies that adjustment? How can they do that? We have five different records covering Darwin from 1941 on. They all agree almost exactly. Why adjust them at all? They’ve just added a huge artificial totally imaginary trend to the last half of the raw data! Now it looks like the IPCC diagram in Figure 1, all right … but a six degree per century trend? And in the shape of a regular stepped pyramid climbing to heaven? What’s up with that?

Those, dear friends, are the clumsy fingerprints of someone messing with the data Egyptian style … they are indisputable evidence that the “homogenized” data has been changed to fit someone’s preconceptions about whether the earth is warming.

One thing is clear from this. People who say that “Climategate was only about scientists behaving badly, but the data is OK” are wrong. At least one part of the data is bad, too. The Smoking Gun for that statement is at Darwin Zero.

So once again, I’m left with an unsolved mystery. How and why did the GHCN “adjust” Darwin’s historical temperature to show radical warming? Why did they adjust it stepwise? Do Phil Jones and the CRU folks use the “adjusted” or the raw GHCN dataset? My guess is the adjusted one since it shows warming, but of course we still don’t know … because despite all of this, the CRU still hasn’t released the list of data that they actually use, just the station list.

Another odd fact, the GHCN adjusted Station 1 to match Darwin Zero’s strange adjustment, but they left Station 2 (which covers much of the same period, and as per Fig. 5 is in excellent agreement with Station Zero and Station 1) totally untouched. They only homogenized two of the three. Then they averaged them.

That way, you get an average that looks kinda real, I guess, it “hides the decline”.

Oh, and for what it’s worth, care to know the way that GISS deals with this problem? Well, they only use the Darwin data after 1963, a fine way of neatly avoiding the question … and also a fine way to throw away all of the inconveniently colder data prior to 1941. It’s likely a better choice than the GHCN monstrosity, but it’s a hard one to justify.

Now, I want to be clear here. The blatantly bogus GHCN adjustment for this one station does NOT mean that the earth is not warming. It also does NOT mean that the three records (CRU, GISS, and GHCN) are generally wrong either. This may be an isolated incident, we don’t know. But every time the data gets revised and homogenized, the trends keep increasing. Now GISS does their own adjustments. However, as they keep telling us, they get the same answer as GHCN gets … which makes their numbers suspicious as well.

And CRU? Who knows what they use? We’re still waiting on that one, no data yet …

What this does show is that there is at least one temperature station where the trend has been artificially increased to give a false warming where the raw data shows cooling. In addition, the average raw data for Northern Australia is quite different from the adjusted, so there must be a number of … mmm … let me say “interesting” adjustments in Northern Australia other than just Darwin.

And with the Latin saying “Falsus in uno, falsus in omnibus” (false in one, false in all) as our guide, until all of the station “adjustments” are examined, adjustments of CRU, GHCN, and GISS alike, we can’t trust anyone using homogenized numbers.

Regards to all, keep fighting the good fight,

w.

FURTHER READING:

My previous post on this subject.

The late and much missed John Daly, irrepressible as always.

More on Darwin history, it wasn’t Stevenson Screens.

NOTE: Figures 7 and 8 updated to fix a typo in the titles. 8:30PM PST 12/8 – Anthony

@wobble: “Are you claiming that the GHCN2 homogenization can alter data at stations at which nothing has occurred to warrant such alterations – other than observed divergence from a reference series?”

I think this is mostly correct, except insofar as it could be read to suggest that there is just one reference series against which all weather stations are measured, which is _not_ the case. My understanding of what NOAA is doing is comparing the data for each station to the other nearby weather stations with which that station’s data is otherwise most highly correlated.

To provide a very bad example using completely made up numbers, assume the following weather stations show the following temperature readings:

A: 1,2,3,4,3,2,1

B: 3,4,5,6,5,4,3

C: 1,2,3,4,7,6,5

If you calculate the period-over-period differences in temperature readings:

A: +1,+1,+1,-1,-1,-1

B: +1,+1,+1,-1,-1,-1

C: +1,+1,+1,+3,-1,-1

C is highly correlated with A and B except for one outlier (the +3 rather than -1). If that difference is statistically significant, an adjustment will be applied to C.
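The made-up example above can be reproduced in a few lines. This is only a sketch: it uses the plain mean of A and B as the reference and flags any nonzero departure, whereas a real procedure would apply a statistical significance test.

```python
def first_differences(series):
    """Period-over-period changes of a list of readings."""
    return [b - a for a, b in zip(series, series[1:])]

stations = {
    "A": [1, 2, 3, 4, 3, 2, 1],
    "B": [3, 4, 5, 6, 5, 4, 3],
    "C": [1, 2, 3, 4, 7, 6, 5],
}
diffs = {name: first_differences(vals) for name, vals in stations.items()}

# Compare C's first differences with the mean of A and B at each step.
reference = [(a + b) / 2 for a, b in zip(diffs["A"], diffs["B"])]
departures = [c - r for c, r in zip(diffs["C"], reference)]

# Index 3 is the change from the fourth reading to the fifth.
flagged = [i for i, d in enumerate(departures) if d != 0]
```

Working in first differences means a single step change at C shows up as one outlier rather than as an offset in every later reading.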

Now, to get to what I take your central point to be: could this adjustment be applied even if there is no historical information available that might explain what might have happened to temperature readings at C between year 4 (when the reading was 4) and year 5 (when the reading was 7)? YES.

Why do that? Here’s my best understanding:

1) This statistical method can be mechanically applied to the data set without having researchers make subjective assessments of what impact particular historical changes at particular stations might have on data collection at those stations.

2) Outside of certain stations in the US, there is not good historical information about station conditions (“metadata”) that would enable such subjective assessments to be made.

3) In some cases, historical information wouldn’t be helpful in reconstructing the reasons for oddities in the data (e.g., historical operator and transcription errors).

4) The adjusted data is recommended by NOAA only for use in regional analysis. (If you are doing a global calculation, errors in unadjusted will hopefully mostly cancel each other out. If you are looking at one individual particular station’s data for some reason, you’re better off doing what you can with the metadata you have.)

Wobble,

“Are you claiming that the GHCN2 homogenization can alter data at stations at which nothing has occurred to warrant such alterations – other than observed divergence from a reference series?”

Yes.

Now that you have grasped that part, please go back and read the rest of my posts on the subject, and endeavor to understand the other parts as well. Please do this before replying again …

The short version is that the GHCN2 methods document that Willis quotes from says:

You may see enormous adjustments applied to some stations. These adjustments may not track well with the temperatures local to that station. That is OK. While the sometimes goofy looking adjusted data should probably not be used for local analyses, we think it actually gives more reliable results when applied to long term global trend analysis, and here’s why (cites paper).

To which Willis artfully replies:

AHA!!! I have found enormous adjustments to this one station!!!!! And these adjustments do not track well with the temperatures local to that station!!!!!! You guys are all a bunch of crooks and liars!!!

To which Anthony adds:

Smoking Gun!!!!!!

Calm down folks.

There may be something up here, but you certainly haven’t found it yet. In fact, all you’ve done so far is confirm what they already told you.

Carbon dioxide cause or effect?

We should take the climate change seriously because these changes have created and destroyed huge empires within the history of mankind. However, it is a shame that billion dollar decisions in Copenhagen may be based on tuned temperature data and wrong conclusions.

The climate activists believe that the CO2 has been the main reason for the climate changes within the last million of years. However, the chemical calculations prove that the reason is the temperature changes of the oceans. Warm seawater dissolves much less CO2 than the cold seawater. See details from:

http://www.antti-roine.com/viewtopic.php?f=10&t=73

This means that CO2 content of the atmosphere will automatically increase, if the sea surface temperature increases for ANY reason. Most likely, carbon dioxide contributes to global warming, but it is hardly the primary reason for climate change.

The magnitudes of CO2 and water vapor emissivity and absorptivity are the same; however, the concentration of CO2 (0.04%) is much lower than that of water (1%) in the atmosphere. This means that the first assumption is that the effect of water vapor on climate change must be much larger than that of CO2.

If it is true that:

1. CRU adjusted and selected the data according to their mission,

2. The town heating effect has nearly been neglected,

3. Original data has been deleted,

4. The climate warming cannot be seen from country side weather station data, then real scientist should be a little bit worried 🙂

Climate models which correlate with the CRU data cannot validate the methods of CRU, because all these models have been calibrated and fitted with the CRU data. So it is not a miracle that they fit the CRU results. Another problem is that they do not take into account the effect of the oceans.

Kyoto-type agreements transfer emissions and jobs to those countries which do not care about environmental issues. They also channel emissions trading funds to population growth and increased welfare, which both increase CO2 emissions. New post-Kyoto agreements should channel the funds directly to the development of our own sustainable technology and especially to solar technology.

I don’t quite get the point of all this: you start the post correctly saying that weather station data need to be adjusted, then you take one station out of 7000 and show that it was, indeed, adjusted. How this is a smoking gun, I don’t understand.

I understand you are not willing or able to reproduce the exact same procedure that GHCN used but what is the point? I mean, I can also cook pasta here at home, claim that I am cooking pasta the way you do and then say “YIKES! You see! This pasta tastes like crap ERGO JJ cannot cook”. This is beyond human logic, come on.

More on Time of Observation from the warmist site I do battle on. Any comment here Willis?

“I had time to read a little bit about the Time of Observation Bias, and it’s fascinating. As I understand it, before November 1994 Australia was calculating its daily mean temperature as the average of the daily maximum and minimum, as USA presently does. I don’t know what studies were done on TOB effects in Australia, but the study by Karl et al (1986) for the USA showed that bias can be as high as 2C, and that the difference in bias, say between late afternoon and around sunrise, can be even greater. The average of 7 years of data showed significant areas of northern Texas, Oklahoma and southern Kansas have a change in TOB of 2.0C or more in March if time of observation changes from 1700 to 0700.

Before 1940 in the US, 75% of the cooperative network observers were making the observation in the evening, but by 1986 only 45% were doing this, and one of the most frequent observation times had become 0700. But apparently the need for adjustments of such magnitude in cases like this is “nonsense”.”

After viewing many Australian sites on the GISS records (and not knowing what is adjusted or not) I cannot see how anyone in a sane mind can make any sense out of it at all!…….

The records are in the main disjointed with gaps and short periods shown, the trends are different for adjoining stations, about 1/3rd of the sites go up, 1/3rd go down and 1/3 show random ups and downs with no clear trend at all.

In the very main the only sites showing any real significant trends up are the large city urban warming BOM sites, and some of their nearby BOM airport stations do not show this upward trend at all (even though there are tarmac heat issues there as well)…….

Leaving one to believe strongly in urban warming distortions, And I note also that these sites are the only ones that have been updated to 2009.

IMO the surface record should be scrapped after viewing this mess, how anyone can make adjustments and fill in gaps and fit that all together and say anything meaningful at all about long term temperature trends is beyond me! “An unbelievable mess!”(Paraphrased…….As the leaked email scandal programmer stated in his notes on Australian stations!)

wobble (11:03:08) and also @JJ (11:38:10) :

“Are you claiming that the GHCN2 homogenization can alter data at stations at which nothing has occurred to warrant such alterations – other than observed divergence from a reference series?”

At this point, I must rather disagree with JJ. The GHCN uses statistical methods to produce corrections. These are described in the literature in great detail, and the basic method should be reproducible. But the corrections are not imaginary nor unrelated to reality; they should correspond to something that actually happened at that station. Willis quotes the NCDC above on what sorts of changes might have happened. If you had access to the historical data about the station (the metadata), you would likely see the reason for those corrections.

In fact, the Australian BoM does have access to that historical metadata; it’s their station, after all. They perform their own homogenisation, independent of GHCN. They use both statistical methods and the metadata (knowledge of site changes, etc).

There is a nice example of this in Torok (1996), which is cited by the Australian BoM. They use the stats to sniff out changes at some station, and as it turns out in that example, all of the corrections but one actually correspond to some known change at the site: a station move, a new screen, some problem with the screen, and so on. One correction in 1890 was not explained by the historical notes.

tallbloke

I haven’t accessed the ‘warmist site’ you refer to but have these people never ever heard of maximum and minimum thermometers (much less how to reset them)?

While most determinations of maximum and minimum daily temperatures are now done electronically, the Maximum Minimum Thermometer also known as Six’s thermometer, was invented by James Six in 1782.

This TOB stuff sounds like another amazing warmist ‘cover up’ to me.

First, I showed in the original post that (in contradiction to the quote you cite) the Stevenson Screen was in use at Darwin at the turn of the century. So it can’t be that. Check out the “Recommended Reading”.

Second, we have five unexplained adjustments in the Darwin Zero record. Even if one of them were for a Stevenson Screen (it’s not, but we can play “what if”), what are the other four for?

w.

Here’s a nice data set for viewing.

http://www.bom.gov.au/climate/change/amtemp.shtml

JJ,

I just honored your request and read through all your comments on this thread.

I certainly see your point, but the details of your comments don’t square with Willis’ claims.

He seems to claim that homogenization adjustments of the raw data are done on a case by case basis.

However, you seem to be claiming one of two things:

1) that these homogenization adjustments were automatically applied according to quantitative rules put in place without any analysis of Darwin’s actual temperature data; or

2) that these homogenization adjustments were made specific to Darwin independent of any preestablished quantitative rules. And that the qualitative reasons for the specific adjustments don’t necessarily rely on documented events.

If you are claiming #1, then I’d like to see the apparent disagreement with Willis hashed out.

If you are claiming #2, then we’re back to disputing the justifications for adjustments which may or may not be provided. However, I do agree that it’s better to merely question and leave any definitive accusations out of the post. The definitive accusations are a liability without serving much of a “simple message” purpose.

It’s not the “need” for the adjustments that is “nonsense” but it is the WAY in which the adjustments are made that is unknown. By your own admission, it is known since 1986 that up to 2C can be attributed to TOB but we are expected to believe that the “adjustments” are accurate? While at the same time outfits such as CRU refuse to release their data and refuse to proffer a rationale for their adjustments and then when we do get a glimpse of the information from them in FOIA information that was released a bit earlier than they expected we see enormous fudge factors and no explanation as to how they came to these “adjusted” data sets other than what amounts to “take our word for it, we’re the scientists and we know what we’re doing”. Is that science? And would you get on an airplane if that was the paradigm used in its design?

JJ (11:38:10) :

I’m sorry, but that explanation doesn’t hold water. There were adjustments back as far as 1930. In the thirties Darwin was the only station within 500 km. So the changes could not have been based on a five station “reference series” as you claim. There were no stations near to Darwin then, much less five stations.

this is good work, terrific imho, and many of the comments too.

alkali (11:35:33) :

“I think this is mostly correct, except insofar as it could be read to suggest that there is just one reference series against which all weather stations are measured, which is _not_ the case. My understanding of what NOAA is doing is comparing the data for each station to the other nearby weather stations with which that station’s data is otherwise most highly correlated.”

Yes, it seems as if this is the case. Have you taken a look at the documents which supposedly outline the algorithm? I’ll take a look, but I’ll be very surprised if subjective inputs (open to the questions that Willis is asking) are a requirement for replicating the adjustments to the raw data (making replication impossible).

So what we may be left with is claims of, “the temperatures were all properly adjusted according to our published and universal algorithm” despite the fact that the published algorithm allows significant, subjective input.

We will see, and I am very willing to back away from any accusations until we answer these questions definitively.

Sorry, above I meant, “I’ll be very surprised if subjective inputs … are NOT a requirement for replicating the adjustments…”

http://scienceblogs.com/deltoid/2009/12/willis_eschenbach_caught_lying.php#more

Wobble,

“I just honored your request and read through all your comments on this thread.”

Thank you.

“I certainly see your point, but the details of your comments don’t square with Willis’ claims.”

They do, however, appear to square with the methods document that Willis quotes from 🙂

“However, you seem to be claiming one of two things:”

To be clear, my claims are only as to my understanding of the content of the methods document. Basil and alkali both give decent exposition of those methods above.

“1) that these homogenization adjustments were automatically applied according to quantitative rules put in place without any analysis of Darwin’s actual temperature data; ”

If I understand it right, that is probably the closest.

It appears that the GHCN2 homogenization method for non US stations adjusts a station’s data based on first difference comparison of those data to a reference series. That is an analysis of the Darwin station data – it is a search for and correction of apparent discontinuities within the Darwin data, using a reference series created from a nearby station both to locate the discontinuity as well as to provide the correction. It does not rely on documented events for either.
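As a rough illustration of correcting a discontinuity against a reference series, here is a sketch that assumes the break location is already known and that the earlier segment is shifted to line up with the later one. Both are assumptions for the example; how GHCN2 actually locates and sizes breaks is not reproduced, and the names and numbers are hypothetical.

```python
def step_correction(candidate, reference, break_index):
    """Estimate the step in (candidate - reference) at break_index and remove it
    by shifting the earlier segment of the candidate."""
    offsets = [c - r for c, r in zip(candidate, reference)]
    before = offsets[:break_index]
    after = offsets[break_index:]
    step = sum(after) / len(after) - sum(before) / len(before)
    corrected = [
        value + step if i < break_index else value
        for i, value in enumerate(candidate)
    ]
    return step, corrected

# Made-up series: the candidate jumps by 4 relative to the reference at index 4.
reference = [1.0, 2.0, 3.0, 4.0, 3.0, 2.0, 1.0]
candidate = [1.0, 2.0, 3.0, 4.0, 7.0, 6.0, 5.0]
step, corrected = step_correction(candidate, reference, break_index=4)
```

Nothing in this calculation consults station history: the reference series alone supplies both the location test and the size of the correction, which is the point under discussion.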

“If you are claiming #1, then I’d like to see the apparent disagreement with Willis hashed out.”

Me too!

Town of Oenpelli in West Arnhem Land founded around 1900

“The Oenpelli Mission began in 1925, when the Church of England Missionary Society accepted an offer from the Northern Territory Administration to take over the area, which had been operated as a dairy farm. The Oenpelli Mission operated for 50 years.”

Oenpelli weather station records:

http://weather.ninemsn.com.au/climate/station.jsp?lt=site&lc=14042

Station commenced 1910

Situated 230 km due east of Darwin

Willis: “Even if one of them were for a Stevenson Screen (it’s not, but we can play “what if”), what are the other four for?”

Has it occurred to you to ask the Australian BoM in private correspondence? They’re the ones who have the historical metadata for the station.

Taking your graph at face value, most of the adjustments are after the site move in 1941 (which I still don’t understand why you don’t want to adjust for). So for recent adjustments, the reference network comes into play. If the statistical method used by the GHCN is working well here, then those adjustments should line up with something in the metadata (the GHCN says they don’t have or use metadata for non-US stations).

You should also note that GISS, CRU and the Australians all do their own homogenisation, and the IPCC used the CRU, not the GHCN homog. Why not show all four sets of adjusted results, so we can see how they compare?

Don’t know what happened to this the first time:

weschenbach (12:25:33) :

From what I’ve seen we have E.M. Smith’s work, A.J. Strata, a site called CCC, Steve McIntyre and Jean S over at CA, and I’m sure various others, and of course your good self, all working in various ways on the various datasets.

Can a way be found to get you all together, plus interested parties willing to do some work (like me), to really work on this and produce a single temperature record, but rather than rehash CRU’s, GHCN’s or GISS’s code in something like R, actually come up with a new set of rules for adjusting temperatures?

I’m available. I’ve been planning to ‘re-hack’ GIStemp to make “Smith Temp” by de-buggering it; but I’d be just as happy to point out what they do that is reasonable, and what they do that is borken 😉 and have the thing re-done right, from scratch.

The biggest problem I see is to get ahead of NCDC and GHCN into the full planetary data series and avoid their “cooking by deletion” in the last 20 years.

So last night I went and registered surfacetemps.org. I envision a site with a single page for each station. Each page would have all available raw data for that station. These would be displayed in graphic form, and be available for download. In addition there would be a historical timeline of station moves, copies of historical documents, and most importantly, a discussion page.

Works for me. I’ve bookmarked it.

I do not think our purpose should be to create a new dataset, although that may be a by-product. I think our purpose should be to serve as a model for data transparency and availability. For each page, we should end up with a recommended set of adjustments, clearly marked, shown, and discussed. But if someone wants to use one of the adjustments but feels the others are not justified, fine.

Given the selective thermometer deletions in GHCN, if you just do a “don’t hack it like GIStemp or CRU do” you are missing the third leg of this stool…

The first and most pernicious “fudge” is the removal of recent cold records from the Global Historical Climate Network data set. If all you do is ratify that as “OK”, then you will get very similar results to all the other folks once you start splicing, homogenizing, and trend plotting. You can get around that by leaving OUT all the recently deleted records from the entire past, but then you have a very small and very biased set of records to work with (hot stations mostly).

So you have to get in front of NCDC and GHCN, and that means in some way making your own dataset. Be it a ‘by product’ or goal…

On the main page, we should have a graphing/mapping interface that will allow anyone to grab a subset of the data (e.g. rural stations with a population under 100,000 in a particular area that cover 1940 to 1960).
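As a rough sketch of what such a subset query might look like, assuming each station carries simple metadata (the `Station` fields, the `select_stations` function, and the sample stations below are all hypothetical, made up purely for illustration):

```python
from dataclasses import dataclass


@dataclass
class Station:
    name: str
    lat: float
    lon: float
    population: int   # population of the nearest settlement (assumed field)
    first_year: int   # first year with data
    last_year: int    # last year with data


def select_stations(stations, max_population, bbox, start_year, end_year):
    """Return stations under a population cap, inside a lat/lon box,
    whose record covers the whole start_year..end_year period."""
    south, north, west, east = bbox
    return [
        s for s in stations
        if s.population < max_population
        and south <= s.lat <= north
        and west <= s.lon <= east
        and s.first_year <= start_year
        and s.last_year >= end_year
    ]


# Invented stations, not real records.
stations = [
    Station("Station A", -13.0, 131.5, 2_000, 1910, 1990),
    Station("Station B", -12.5, 130.9, 250_000, 1900, 2000),  # too populous
    Station("Station C", -14.0, 132.0, 500, 1950, 2000),      # starts too late
]

selected = select_stations(
    stations,
    max_population=100_000,
    bbox=(-15.0, -11.0, 129.0, 134.0),  # south, north, west, east
    start_year=1940,
    end_year=1960,
)
```

Whatever the real interface ends up being, the filter is only as good as the metadata behind it.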

How will you get “rural stations” when GHCN has 90+% of surviving stations at airports? (And many of the rest in cities). That is why you have to get ahead of GHCN and make a data set of raw(er) data.

For the USA, you can get USHCN, but even that has been “value added” (YUCK! I’m coming to hate those words…) by NCDC in unknown ways…

I would suggest that we do our work in R.

Yet Another Language to learn?… SIGH…. OK, I’ll look at it. But the lag time of learning YAL, and the time consumed to do it, is not attractive.

Oh, yeah. There’s more to come on the GHCN question, I’ve uncovered a very curious and pervasive error. Stay tuned for my next post.

And where will this post appear? Here one hopes…

Oops, my apologies.

I have just discovered that all temperature data for Oenpelli (230 km east of Darwin) prior to December 1963 appears to have been lost, according to BOM records.

The full rainfall record back to 1910 was not lost.

A very interesting blog. I commend the comments of wobble, alkali and JJ from whom I am learning a lot. Could do with less name calling though (jrshipley) – doesn’t help, just pisses people off.

It seems to me though, that these temperature records are such a tangled mess, I can’t see how even the sharpest minds can extract any useful knowledge from them. They should all be binned and replaced with proxy records.

This is quite striking.

I would like to replicate your results. Please provide URLs for the data and the source code.

Thanks in advance.