by Willis Eschenbach

People keep saying “Yes, the Climategate scientists behaved badly. But that doesn’t mean the data is bad. That doesn’t mean the earth is not warming.”

Darwin Airport – by Dominic Perrin via Panoramio

Let me start with the second objection first. The earth has generally been warming since the Little Ice Age, around 1650. There is general agreement that the earth has warmed since then. See e.g. Akasofu . Climategate doesn’t affect that.

The first question, the integrity of the data, is different. People say “Yes, they destroyed emails, and hid from Freedom of Information Acts, and messed with proxies, and fought to keep other scientists’ papers out of the journals … but that doesn’t affect the data, the data is still good.” Which sounds reasonable.

There are three main global temperature datasets. One is at the CRU, the Climatic Research Unit of the University of East Anglia, where we’ve been trying to get access to the raw numbers. One is at NOAA/GHCN, the Global Historical Climatology Network. The final one is at NASA/GISS, the Goddard Institute for Space Studies. The three groups take raw data, and they “homogenize” it to remove things like when a station was moved to a warmer location and there’s a 2C jump in the temperature. The three global temperature records are usually called CRU, GISS, and GHCN. Both GISS and CRU, however, get almost all of their raw data from GHCN. All three produce very similar global historical temperature records from the raw data.

So I’m still on my multi-year quest to understand the climate data. You never know where this data chase will lead. This time, it has ended me up in Australia. I got to thinking about Professor Wibjorn Karlen’s statement about Australia that I quoted here:

Another example is Australia. NASA [GHCN] only presents 3 stations covering the period 1897-1992. What kind of data is the IPCC Australia diagram based on?

If any trend it is a slight cooling. However, if a shorter period (1949-2005) is used, the temperature has increased substantially. The Australians have many stations and have published more detailed maps of changes and trends.

The folks at CRU told Wibjorn that he was just plain wrong. Here’s what they said is right, the record that Wibjorn was talking about, Fig. 9.12 in the UN IPCC Fourth Assessment Report, showing Northern Australia:

Figure 1. Temperature trends and model results in Northern Australia. Black line is observations (from Fig. 9.12 of the UN IPCC Fourth Assessment Report). Covers the area from 110E to 155E, and from 30S to 11S. Based on the CRU land temperature data.

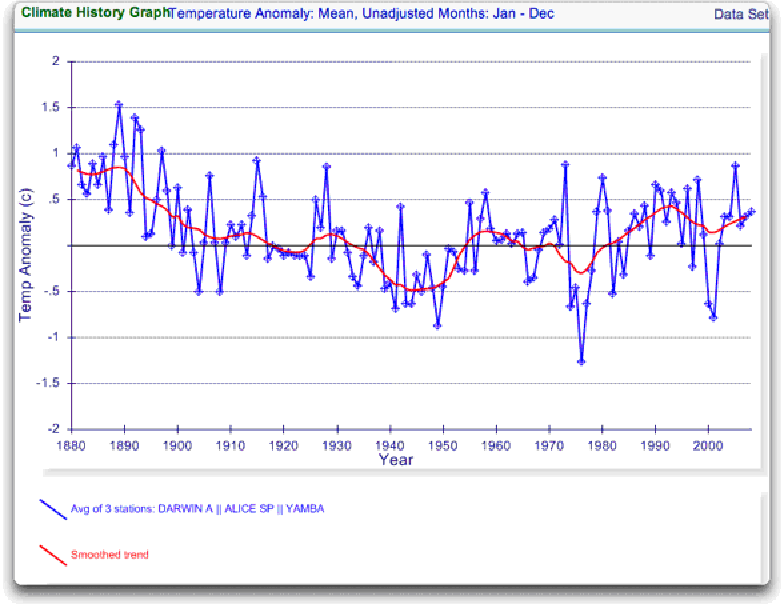

One of the things that was revealed in the released CRU emails is that the CRU basically uses the Global Historical Climatology Network (GHCN) dataset for its raw data. So I looked at the GHCN dataset. There, I found three stations in North Australia as Wibjorn had said, and nine stations in all of Australia, that cover the period 1900-2000. Here is the average of the GHCN unadjusted data for those three Northern stations, from AIS:

Figure 2. GHCN Raw Data, All 100-yr stations in IPCC area above.

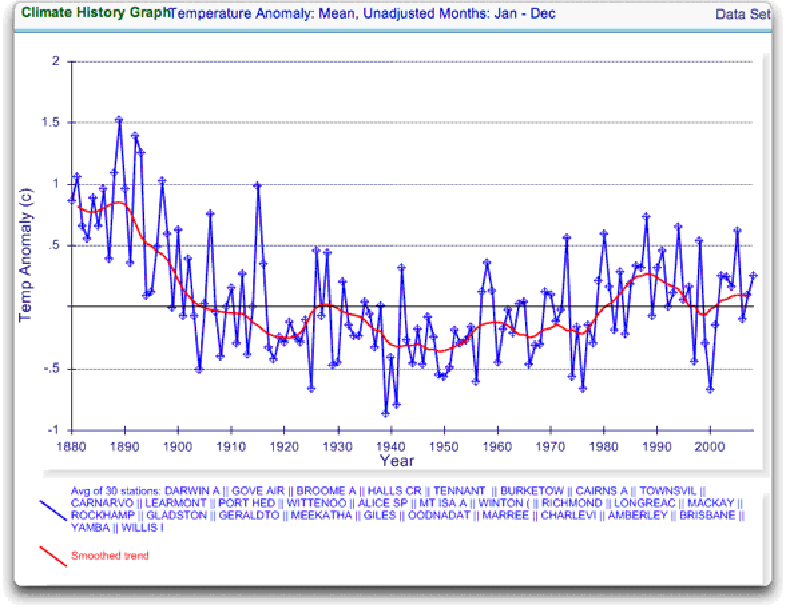

So once again Wibjorn is correct, this looks nothing like the corresponding IPCC temperature record for Australia. But it’s too soon to tell. Professor Karlen is only showing 3 stations. Three is not a lot of stations, but that’s all of the century-long Australian records we have in the IPCC specified region. OK, we’ve seen the longest station records, so let’s throw more records into the mix. Here’s every station in the UN IPCC specified region which contains temperature records that extend up to the year 2000 no matter when they started, which is 30 stations.

Figure 3. GHCN Raw Data, All stations extending to 2000 in IPCC area above.

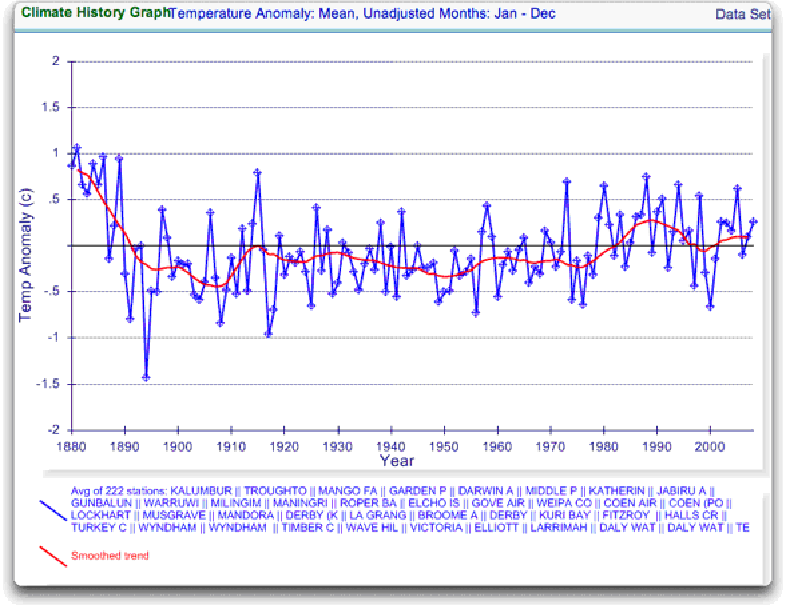
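For anyone who wants to try this kind of station averaging themselves, here is a minimal sketch in Python. It assumes each station record is a simple mapping from year to temperature anomaly and that a year's average is just the mean of whichever stations report that year. That is my simplification for illustration, not necessarily how the figures above were built, and the function name `yearly_average` is made up.

```python
import math

def yearly_average(stations):
    """Average several station records year by year.

    `stations` is a list of dicts mapping year -> anomaly in deg C.
    Years where a station has no value (or NaN) are simply skipped
    for that station, so records may start and end at different times.
    """
    years = sorted(set().union(*(s.keys() for s in stations)))
    result = {}
    for year in years:
        values = [s[year] for s in stations
                  if year in s and not math.isnan(s[year])]
        if values:  # leave the year out entirely if nobody reported
            result[year] = sum(values) / len(values)
    return result
```

Note that a plain average like this changes its station mix over time, which is one reason the number of contributing stations is worth plotting alongside the result.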

Still no similarity with IPCC. So I looked at every station in the area. That’s 222 stations. Here’s that result:

Figure 4. GHCN Raw Data, All stations in IPCC area above.

So you can see why Wibjorn was concerned. This looks nothing like the UN IPCC data, which came from the CRU, which was based on the GHCN data. Why the difference?

The answer is, these graphs all use the raw GHCN data. But the IPCC uses the “adjusted” data. GHCN adjusts the data to remove what it calls “inhomogeneities”. So on a whim I thought I’d take a look at the first station on the list, Darwin Airport, so I could see what an inhomogeneity might look like when it was at home. And I could find out how large the GHCN adjustment for Darwin inhomogeneities was.

First, what is an “inhomogeneity”? I can do no better than quote from GHCN:

Most long-term climate stations have undergone changes that make a time series of their observations inhomogeneous. There are many causes for the discontinuities, including changes in instruments, shelters, the environment around the shelter, the location of the station, the time of observation, and the method used to calculate mean temperature. Often several of these occur at the same time, as is often the case with the introduction of automatic weather stations that is occurring in many parts of the world. Before one can reliably use such climate data for analysis of longterm climate change, adjustments are needed to compensate for the nonclimatic discontinuities.

That makes sense. The raw data will have jumps from station moves and the like. We don’t want to think it’s warming just because the thermometer was moved to a warmer location. Unpleasant as it may seem, we have to adjust for those as best we can.

I always like to start with the rawest data, so I can understand the adjustments. At Darwin there are five separate individual station records that are combined to make up the final Darwin record. These are the individual records of stations in the area, which are numbered from zero to four:

Figure 5. Five individual temperature records for Darwin, plus station count (green line). This raw data is downloaded from GISS, but GISS use the GHCN raw data as the starting point for their analysis.

Darwin does have a few advantages over other stations with multiple records. There is a continuous record from 1941 to the present (Station 1). There is also a continuous record covering a century. Finally, the stations are in very close agreement over the entire period of the record. In fact, where there are multiple stations in operation they are so close that you can’t see the records behind Station Zero.

This is an ideal station, because it also illustrates many of the problems with the raw temperature station data.

- There is no one record that covers the whole period.

- The shortest record is only nine years long.

- There are gaps of a month and more in almost all of the records.

- It looks like there are problems with the data at around 1941.

- Most of the datasets are missing months.

- For most of the period there are few nearby stations.

- There is no one year covered by all five records.

- The temperature dropped over a six year period, from a high in 1936 to a low in 1941. The station did move in 1941 … but what happened in the previous six years?

In resolving station records, it’s a judgment call. First off, you have to decide if what you are looking at needs any changes at all. In Darwin’s case, it’s a close call. The record seems to be screwed up around 1941, but not in the year of the move.

Also, although the 1941 temperature shift seems large, I see a similar sized shift from 1992 to 1999. Looking at the whole picture, I think I’d vote to leave it as it is. That’s always the best option when you don’t have other evidence. First do no harm.

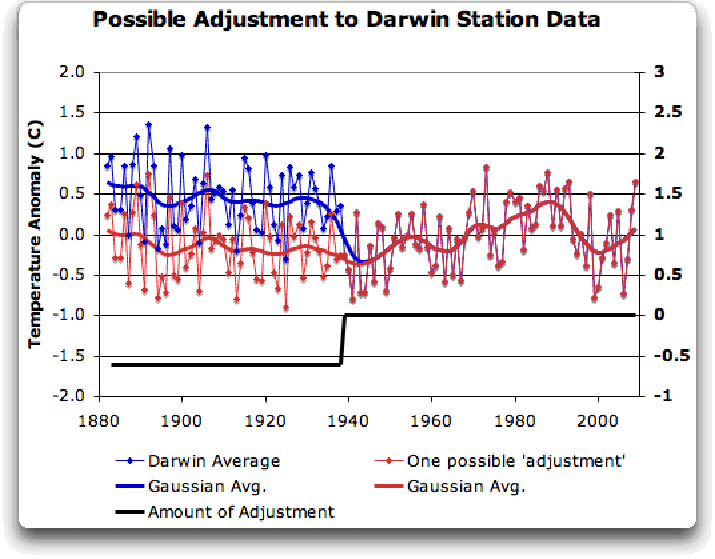

However, there’s a case to be made for adjusting it, particularly given the 1941 station move. If I decided to adjust Darwin, I’d do it like this:

Figure 6 A possible adjustment for Darwin. Black line shows the total amount of the adjustment, on the right scale, and shows the timing of the change.

I shifted the pre-1941 data down by about 0.6C. We end up with little change end to end in my “adjusted” data (shown in red), it’s neither warming nor cooling. However, it reduces the apparent cooling in the raw data. Post-1941, where the other records overlap, they are very close, so I wouldn’t adjust them in any way. Why should we adjust those? They all show exactly the same thing.
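A single step adjustment of this kind is easy to write down. The sketch below is illustrative only: the function name `shift_before` is hypothetical and the temperatures are toy values, not real Darwin data. It just applies one constant offset to every year before the break year.

```python
def shift_before(record, break_year, offset):
    """Return a copy of `record` with `offset` added to every year
    strictly before `break_year`; later years are left untouched."""
    return {year: (temp + offset if year < break_year else temp)
            for year, temp in record.items()}

# Toy values for illustration only, NOT real Darwin temperatures.
raw = {1939: 29.0, 1940: 28.9, 1941: 28.3, 1942: 28.4}

# Shift the pre-1941 data down by 0.6 C, as described above.
adjusted = shift_before(raw, break_year=1941, offset=-0.6)
```

The point of writing it this way is that the adjustment has exactly two free choices, the break year and the offset, and both are visible.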

OK, so that’s how I’d homogenize the data if I had to, but I vote against adjusting it at all. It only changes one station record (Darwin Zero), and the rest are left untouched.

Then I went to look at what happens when the GHCN removes the “in-homogeneities” to “adjust” the data. Of the five raw datasets, the GHCN discards two, likely because they are short and duplicate existing longer records. The three remaining records are first “homogenized” and then averaged to give the “GHCN Adjusted” temperature record for Darwin.

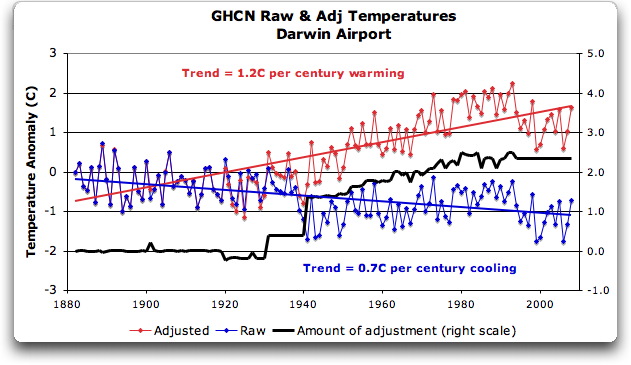

To my great surprise, here’s what I found. To explain the full effect, I am showing this with both datasets starting at the same point (rather than ending at the same point as they are often shown).

Figure 7. GHCN homogeneity adjustments to Darwin Airport combined record

YIKES! Before getting homogenized, temperatures in Darwin were falling at 0.7 Celsius per century … but after the homogenization, they were warming at 1.2 Celsius per century. And the adjustment that they made was over two degrees per century … when those guys “adjust”, they don’t mess around. And the adjustment is an odd shape, with the adjustment first going stepwise, then climbing roughly to stop at 2.4C.
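For readers wondering how a “degrees per century” figure is obtained, one common approach is an ordinary least-squares line through the annual values, with the slope scaled to 100 years. I am assuming that approach here; the function name `trend_per_century` is my own, and the sketch uses only the standard library.

```python
def trend_per_century(years, temps):
    """Least-squares linear trend of `temps` against `years`,
    expressed in degrees per 100 years."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(temps) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, temps))
    sxx = sum((x - mean_x) ** 2 for x in years)
    return 100.0 * sxy / sxx  # slope per year, scaled to a century
```

Feed it the raw and the homogenized annual series separately and you get the two trends to compare.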

Of course, that led me to look at exactly how the GHCN “adjusts” the temperature data. Here’s what they say:

GHCN temperature data include two different datasets: the original data and a homogeneity- adjusted dataset. All homogeneity testing was done on annual time series. The homogeneity- adjustment technique used two steps.

The first step was creating a homogeneous reference series for each station (Peterson and Easterling 1994). Building a completely homogeneous reference series using data with unknown inhomogeneities may be impossible, but we used several techniques to minimize any potential inhomogeneities in the reference series.

…

In creating each year’s first difference reference series, we used the five most highly correlated neighboring stations that had enough data to accurately model the candidate station.

…

The final technique we used to minimize inhomogeneities in the reference series used the mean of the central three values (of the five neighboring station values) to create the first difference reference series.

Fair enough, that all sounds good. They pick five neighboring stations, and average them. Then they compare the average to the station in question. If it looks wonky compared to the average of the reference five, they check any historical records for changes, and if necessary, they homogenize the poor data mercilessly. I have some problems with what they do to homogenize it, but that’s how they identify the inhomogeneous stations.
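To make the quoted description concrete, here is a rough sketch of a first difference reference series built from the mean of the central three of five neighbor values. It is a simplification under my own assumptions: all five neighbors have complete annual data over the same years, and the correlation-based selection of neighbors is skipped entirely. The function names are hypothetical and this is not the GHCN code.

```python
def first_differences(series):
    """Year-to-year changes: value[t+1] - value[t]."""
    return [b - a for a, b in zip(series, series[1:])]

def reference_first_differences(neighbors):
    """For each year, take the five neighbors' first differences,
    drop the highest and lowest, and average the central three."""
    if len(neighbors) != 5:
        raise ValueError("this sketch expects exactly five neighbors")
    diffs = [first_differences(s) for s in neighbors]
    reference = []
    for year_values in zip(*diffs):
        central = sorted(year_values)[1:-1]  # middle three of five
        reference.append(sum(central) / len(central))
    return reference

def rebuild_series(first_diffs, start=0.0):
    """Cumulatively sum first differences back into a series."""
    series = [start]
    for d in first_diffs:
        series.append(series[-1] + d)
    return series
```

Dropping the two extreme values each year means a single wild neighbor cannot drag the reference around, which is presumably the motivation for using the central three.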

OK … but given the scarcity of stations in Australia, I wondered how they would find five “neighboring stations” in 1941 …

So I looked it up. The nearest station that covers the year 1941 is 500 km away from Darwin. Not only is it 500 km away, it is the only station within 750 km of Darwin that covers the 1941 time period. (It’s also a pub, Daly Waters Pub to be exact, but hey, it’s Australia, good on ya.) So there simply aren’t five stations to make a “reference series” out of to check the 1936-1941 drop at Darwin.
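If you want to check station distances like this yourself, the great-circle (haversine) formula does the job. In the sketch below the coordinates for Darwin and Daly Waters are approximate values I have assumed for illustration, the Earth radius is the usual mean value, and the function names are my own.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance in km between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def stations_within(origin, stations, radius_km):
    """Names of stations no farther than `radius_km` from `origin`."""
    return [name for name, (lat, lon) in stations.items()
            if great_circle_km(origin[0], origin[1], lat, lon) <= radius_km]

# Approximate coordinates, assumed for illustration only.
DARWIN = (-12.4, 130.9)
STATIONS = {"Daly Waters": (-16.3, 133.4)}
```

With those rough coordinates, `great_circle_km(*DARWIN, *STATIONS["Daly Waters"])` comes out at roughly 500 km, in line with the figure above.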

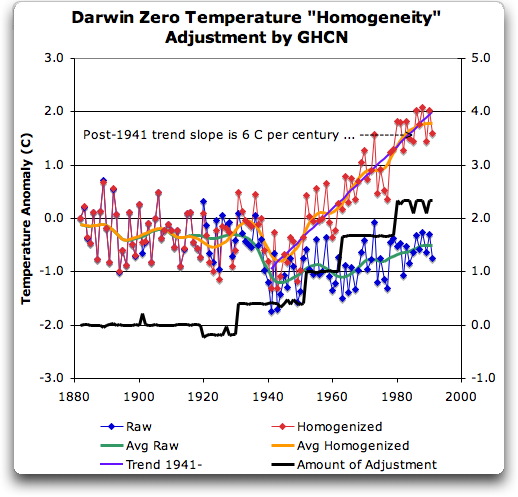

Intrigued by the curious shape of the average of the homogenized Darwin records, I then went to see how they had homogenized each of the individual station records. What made up that strange average shown in Fig. 7? I started at zero with the earliest record. Here is Station Zero at Darwin, showing the raw and the homogenized versions.

Figure 8 Darwin Zero Homogeneity Adjustments. Black line shows amount and timing of adjustments.

Yikes again, double yikes! What on earth justifies that adjustment? How can they do that? We have five different records covering Darwin from 1941 on. They all agree almost exactly. Why adjust them at all? They’ve just added a huge artificial totally imaginary trend to the last half of the raw data! Now it looks like the IPCC diagram in Figure 1, all right … but a six degree per century trend? And in the shape of a regular stepped pyramid climbing to heaven? What’s up with that?

Those, dear friends, are the clumsy fingerprints of someone messing with the data Egyptian style … they are indisputable evidence that the “homogenized” data has been changed to fit someone’s preconceptions about whether the earth is warming.

One thing is clear from this. People who say that “Climategate was only about scientists behaving badly, but the data is OK” are wrong. At least one part of the data is bad, too. The Smoking Gun for that statement is at Darwin Zero.

So once again, I’m left with an unsolved mystery. How and why did the GHCN “adjust” Darwin’s historical temperature to show radical warming? Why did they adjust it stepwise? Do Phil Jones and the CRU folks use the “adjusted” or the raw GHCN dataset? My guess is the adjusted one since it shows warming, but of course we still don’t know … because despite all of this, the CRU still hasn’t released the list of data that they actually use, just the station list.

Another odd fact, the GHCN adjusted Station 1 to match Darwin Zero’s strange adjustment, but they left Station 2 (which covers much of the same period, and as per Fig. 5 is in excellent agreement with Station Zero and Station 1) totally untouched. They only homogenized two of the three. Then they averaged them.

That way, you get an average that looks kinda real, I guess, it “hides the decline”.

Oh, and for what it’s worth, care to know the way that GISS deals with this problem? Well, they only use the Darwin data after 1963, a fine way of neatly avoiding the question … and also a fine way to throw away all of the inconveniently colder data prior to 1941. It’s likely a better choice than the GHCN monstrosity, but it’s a hard one to justify.

Now, I want to be clear here. The blatantly bogus GHCN adjustment for this one station does NOT mean that the earth is not warming. It also does NOT mean that the three records (CRU, GISS, and GHCN) are generally wrong either. This may be an isolated incident, we don’t know. But every time the data gets revised and homogenized, the trends keep increasing. Now GISS does their own adjustments. However, as they keep telling us, they get the same answer as GHCN gets … which makes their numbers suspicious as well.

And CRU? Who knows what they use? We’re still waiting on that one, no data yet …

What this does show is that there is at least one temperature station where the trend has been artificially increased to give a false warming where the raw data shows cooling. In addition, the average raw data for Northern Australia is quite different from the adjusted, so there must be a number of … mmm … let me say “interesting” adjustments in Northern Australia other than just Darwin.

And with the Latin saying “Falsus in uno, falsus in omnibus” (false in one, false in all) as our guide, until all of the station “adjustments” are examined, adjustments of CRU, GHCN, and GISS alike, we can’t trust anyone using homogenized numbers.

Regards to all, keep fighting the good fight,

w.

FURTHER READING:

My previous post on this subject.

The late and much missed John Daly, irrepressible as always.

More on Darwin history, it wasn’t Stevenson Screens.

NOTE: Figures 7 and 8 updated to fix a typo in the titles. 8:30PM PST 12/8 – Anthony

Willis,

“I’m sorry, but that explanation doesn’t hold water.”

Yeah, it still does.

“There were adjustments back as far as 1930. In the thirties Darwin was the only station within 500 km. So the changes could not have been based on a five station “reference series” as you claim. There were no stations near to Darwin then, much less five stations”

I have been unable to find a reference to a 500 km limit in the methods document. Given the method’s focus on ‘regional and larger’ scales, they may have used five stations from farther away. If that is the case, that might be a productive line of investigation for you to pursue, in the context of critiquing the claims about the method’s applicability to global average long term trends. Keep in mind if you go that route that some aggregate maths can be very robust to such extreme examples, in ways that are not intuitive.

It remains that there is no proof that the results you are seeing are anything other than artifacts as predicted by the documented methodology.

Speculating some: in addition to using five sites, some or all of which may be farther than 500km from Darwin, it is also possible that a modification of the methods was applied – using fewer than five sites, for example. In my experience, that sort of thing wouldn’t be that unusual. If such departures from the methods occurred, that would be important.

You have the data and the methods. Thus, you have the means to perform a Steve M style audit, attempting to replicate results. Use the five stations nearest to Darwin that meet the method’s criteria. See what you get. That would be an excellent follow up to your current work. You can do that, while you wait for NOAA to respond to the questions you asked of them.

You did ask NOAA those important questions, didn’t you?

🙂

Willis

Following up from this may help you:

http://www.bom.gov.au/climate/averages/tables/cw_014042_All.shtml

AFAIK Oenpelli is the oldest station within 500 km of Darwin with the longest temperature records. For personal reasons I know the C of E mission kept fairly reliable (max/min) temperature records from 1925 until relinquished around 1975. A Stevenson Screen was certainly in place in WWII. Why all pre-1993 temperature data should now be missing from BOM records is a mystery to me.

IMHO, logically, if “…an analysis of the Darwin station data …. and correction of apparent discontinuities within the Darwin data, using a reference series created from a nearby station both to locate the discontinuity as well as to provide the correction.” occurred it would have most likely used data from this weather station (which was not subject to coastline effects etc.).

Therefore, presumably, all corrections to the Darwin Station data occurred from 1964?

JJ,

Seeing as how you seem to know what you’re talking about, and are good at explaining stuff for laymen, I have a question. What’s the purpose of the GHCN2 homogenization? I am struggling to see what use it has.

Presumably the reference series must be known to be more reliable than the series being adjusted, otherwise the adjustment would have no justification. But if we have a reliable reference, why use the less reliable series at all? I assume the reference must be some distance away, and we are just trying to get broader coverage.

If there is a non-negligible geographic distance between the reference and the other series which are adjusted to it, why is it justified to adjust the other series to the reference? Might the differences not be due to local climate variations?

Also, if a series such as the Darwin series is adjusted so much that it no longer bears any resemblance to the actual temperature at Darwin, which the authors seem to admit might potentially happen, then how is it useful? Isn’t the whole point to work out what the average temperature of an area is? How is this helped by moving some of the raw data away from its correct temperature?

I’m not a skeptic trying to pick holes in everything, I’m just genuinely interested in the methodology used here.

Vincent (13:34:52) :

It seems to me though, that these temperature records are such a tangled mess, I can’t see how even the sharpest minds can extract any useful knowledge from them. They should all be binned and replaced with proxy records.

Lol. Which sort of proxy did you have in mind?

This was written in a 2000 Aust. Federal Govt. agency report (Office of the Supervising Scientist based in Jabiru).

“Climate records in the Alligator Rivers Region and neighbouring regions are relatively limited. Following common practice, weather stations have typically been associated with human habitations and consequently the monitoring sites have records limited by the relatively short history of townships in the region. For example, Jabiru has a climate record extending back to 1982. Within the region, the longest climate record is approximately 80 years, from Gunbalunya (Oenpelli). Longer-term climate records are available for Darwin.

Climate conditions have been described by a range of authors, including the Australian Bureau of Meteorology (1961), McAlpine (1969), Christian and Aldrick (1977), Hegerl et al (1979), Woodroffe et al (1986), Nanson et al (1990), Riley (1991), Wasson (1992), Butterworth (1995), Russell-Smith et al (1995), McQuade et al (1996) and Bureau of Meteorology (1999). On the basis of these authors’ works a general description of the climate can be made.”

Quite apart from the other adjustment-related issues discussed in the most recent posts above, how are we to explain that of the ~80 years of temperature records for Oenpelli (Gunbalunya) to 2000 i.e. since ~1920 (most prob. ~1925) which BOM saw fit to review in 1961 and academic authors in 1969, 1977 etc ., the raw (or even summary) temperature records for Oenpelli prior to calendar 1964 are now not available from BOM?

IMHO that station is the primary (perhaps only suitable?) candidate for an intra-regional basis for adjustment of the Darwin temperature record prior to ~1980 back to 1941 and earlier!

carrot eater,

This is a direct quote from the paper that Lambert claims documents the methodology ABM used to do their adjustments.

According to the paper, an objective method to detect a discontinuity required suitable reference stations with five years of overlap prior to the discontinuity, five years of overlap following the discontinuity, within eight degrees of latitude, within eight degrees of longitude, and within 500 meters of elevation. I’ll have to check the lat/longs and temperature records to see if this requirement was met.
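Those criteria are mechanical enough to check in a few lines. Here is a rough sketch, with my own assumptions clearly flagged: each station's record is treated as continuous between its first and last year, “five years of overlap” is read as the five calendar years on each side of the break, and the `Station` class and function name are hypothetical, not anything from the paper.

```python
from dataclasses import dataclass

@dataclass
class Station:
    name: str
    lat: float          # degrees
    lon: float          # degrees
    elevation_m: float
    first_year: int     # record assumed continuous from here...
    last_year: int      # ...to here (an assumption of this sketch)

def is_suitable_reference(candidate, reference, break_year,
                          overlap_years=5, max_deg=8.0, max_elev_m=500.0):
    """Apply the stated criteria to one possible reference station.

    Years before the break: break_year - overlap_years .. break_year - 1.
    Years after the break:  break_year .. break_year + overlap_years - 1.
    """
    def covers(station):
        return (station.first_year <= break_year - overlap_years
                and station.last_year >= break_year + overlap_years - 1)

    return (covers(candidate) and covers(reference)
            and abs(candidate.lat - reference.lat) <= max_deg
            and abs(candidate.lon - reference.lon) <= max_deg
            and abs(candidate.elevation_m - reference.elevation_m) <= max_elev_m)
```

Running every station in the region through a check like this for a given break year would show quickly whether the objective method could have been applied at all.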

Then we learn:

““Where a discontinuity was evident from the reference series or DTR test, but not detected by the objective method, the magnitude of adjustment was estimated.””

So the subjective (visual) reference series test and the subjective (visual) DTR test were used to determine the existence of a discontinuity if the requirements of the objective method weren’t met. And if the requirements for the objective method weren’t met, then we now know that the magnitude of the “adjustment was estimated.”

Got that? It was estimated, and the paper doesn’t disclose an estimation methodology.

The paper also admits:

“The decision of whether or not to correct for a potential inhomogeneity is often a subjective one.”

And:

“The subjectivity inherently involved in the homogeneity process means that two different adjustment schemes will not necessarily result in the same homogeneity adjustments being calculated for individual records.”

In fact, this paper (Marta and Collins – 2003) couldn’t even replicate Torok and Nicholls (1996) despite their best efforts.

I’m thinking that it’s a bit disingenuous for anyone to be assuming that the level of subjectivity used by ABM can be replicated.

JJ, do you have a link to the GHCN2 methodology that you just reviewed?

Wobble,

I am unclear as to the relevance of ABM methods to the GHCN dataset.

GHCN methods are here:

http://www.ncdc.noaa.gov/oa/climate/ghcn-monthly/images/ghcn_temp_overview.pdf

There does not appear to be a lot of room for subjectivity in this method, as described. Decisions are based on numeric cut offs, significance test values, etc.

@wobble: “Have you taken a look at the documents which supposedly outline the algorithm? I’ll take a look, but I’ll be very surprised if subjective inputs (open to the questions that Willis is asking) are NOT a requirement for replicating the adjustments to the raw data (making replication impossible).”

I think the motivation for using that procedure is to avoid that kind of subjectivity. The GHCN methodology is described here.

Note that the GHCN (US; NOAA) adjusted set is different from the ABM (Australian) adjusted set. The GHCN set does not try to give weight to historical information about station conditions (“metadata”); the ABM set does.

@cc: “Also, if a series such as the Darwin series is adjusted so much that it no longer bears any resemblance to the actual temperature at Darwin, which the authors seem to admit might potentially happen, then how is it useful?”

The same document linked above states at p.11:

“A great deal of effort went into the homogeneity adjustments. Yet the effects of the homogeneity adjustments on global average temperature trends are minor (Easterling and Peterson 1995b). However, on scales of half a continent or smaller, the homogeneity adjustments can have an impact. On an individual time series, the effects of the adjustments can be enormous. These adjustments are the best we could do given the paucity of historical station history metadata on a global scale. But using an approach based on a reference series created from surrounding stations means that the adjusted station’s data is more indicative of regional climate change and less representative of local microclimatic change than an individual station not needing adjustments. Therefore, the best use for homogeneity-adjusted data is regional analyses of long-term climate trends (Easterling et al. 1996b). Though the homogeneity-adjusted data are more reliable for long-term trend analysis, the original data are also available in GHCN and may be preferred for most other uses given the higher density of the network.”

One could cynically argue that no historical temperature data could ever be useful: if you use raw data, you can’t be sure that some adjustment isn’t warranted for changes in station conditions over time; if you adjust using historical information, you introduce subjectivity and potential bias; if you adjust using some kind of algorithm, hey, you aren’t taking into account historical information. I don’t think that Catch-22 quite works: the challenge is to make a fair evaluation of the considerable amount of very good data that we do have.

Willis,

While there is no reference to 500 km in the documents, they keep talking about “nearby” stations, and they say they use five stations. In 1930 there were no stations within 750 km of Darwin, which is hardly “nearby” in anyone’s books.

It is possible that as you say these are “artifacts” of the homogenization process. If so, it is the responsibility of those running the database to remove such artifacts, and they have not done so.

More as time permits.

A small correction: I should have said that the GHCN set does not try to give weight to historical information about station conditions (“metadata”) for non-US sites. The GHCN site _does_ take metadata into account in adjusting for a substantial number of US sites (I suppose because NOAA trusts its own metadata).

JJ (16:06:35) :

“”GHCN methods are here:””

What? Nothing can be replicated using that “Overview.” It doesn’t provide nearly enough detail to generate code or even perform simple adjustments.

In fact, one can’t even get a sense of the objectivity versus subjectivity of that methodology from that.

“”I am unclear as to the relevance of ABM methods to the GHCN dataset.””

Concur. It’s not relevant. Maybe Lambert and carrot eater will figure that out, too.

Willis,

“While there is no reference to 500 km in the documents, they keep talking about “nearby” stations, and they say they use five stations.”

In point of fact, they don’t say ‘nearby’. They say ‘neighboring’.

“In 1930 there were no stations within 750 km of Darwin, which is hardly “nearby” in anyone’s books.”

They may be in the ‘neighborhood’, especially considering (once again) the scale that their intended use assumes: regional and larger. The assertion is that these methods are valid for large area, long term trend analysis. Supporting research is provided for that assertion.

“It is possible that as you say these are “artifacts” of the homogenization process. If so, it is the responsibility of those running the database to remove such artifacts, and they have not done so.”

Nonsense. There is no specific ‘responsibility’ to remove artifacts from any dataset, only to account for their effect on the intended use of the adjusted data. They have done so.

The two primary claims about the validity of the homogenization method are the rocks against which your current criticism crashes. You cannot succeed without first refuting those claims, and they cannot be refuted with handwaving.

wobble: GHCN and ABoM are producing independent products here, using similar but different methods. I’ve already pointed out (if not here, elsewhere, I forget) there is some subjectivity in the BoM method; I read the same things you did. But you should still get similar results, if you do what they said. Then again, they have and use the historical meta-data; so far as I can tell, that information is not online.

The GHCN does not use historical metadata for non-US stations. I’m just saying that the corrections that come up using the GHCN method should correspond to actual physical events in the historical metadata, IF you obtained that data from the ABoM. Time of observation changes, changes in equipment, etc.

I think the algorithm described by Peterson for GHCN in multiple publications is more than concise enough to use. If anybody cares all that much, they should go ahead and try it. Reproducing somebody’s results isn’t about taking their code, hitting ‘run’, and getting the same number. It’s far better to implement the procedure for yourself. See how neighboring stations correlate with each other; see how far away they can be and still correlate. Get some idea for that, then build your own reference network, and so on.
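As a starting point for that kind of do-it-yourself check, here is a small sketch that correlates two stations on their year-to-year changes (first differences) rather than on the raw values. Using first differences and plain Pearson correlation is my assumption about a sensible first pass, not a claim about the exact GHCN procedure, and the function names are made up.

```python
import math

def first_differences(series):
    """Year-to-year changes: value[t+1] - value[t]."""
    return [b - a for a, b in zip(series, series[1:])]

def pearson(x, y):
    """Plain Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def neighbor_correlation(station_a, station_b):
    """Correlation of two stations' first differences over the same years."""
    return pearson(first_differences(station_a),
                   first_differences(station_b))
```

Working on first differences means a constant offset between two stations, say from a difference in elevation, does not affect the result; only whether they move up and down together from year to year.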

I agree with jj that it’s incumbent upon Willis to go this step further. All Willis has done is shown that there are adjustments. But we knew that. The question is whether those adjustments are based on a defensible use of statistics, or did the algorithm choke at Darwin and send up spurious results?

Again, we’ve got BoM, GHCN, CRU and GISS. All take the same raw data, and homogenise them in their way. Willis has only put up one of these. It’d be useful if he or somebody else put up all of them. At a glance, it looks like GISS only starts at 1963 – perhaps using their algorithm, it wasn’t possible to homogenise before that point, due to lack of neighbors? I don’t know.

And I’m still curious what the baseline period is for the anomalies above. It seems pretty clear that afterwards, they’ve been shifted up or down so they start at zero; I don’t understand why that’s necessary. Just let them start wherever they start. There’s no need for them to start at zero.
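To illustrate the difference between the two conventions, here is a short sketch: one function computes anomalies against the mean of a chosen baseline period, the other shifts a series so that its first year is zero. The function names and the sample values are made up for illustration. Either way the whole series just moves up or down by a constant, so the trend is unaffected.

```python
def anomalies(record, base_start, base_end):
    """Subtract the mean over the baseline years (inclusive) from every value."""
    base = [t for y, t in record.items() if base_start <= y <= base_end]
    baseline = sum(base) / len(base)
    return {y: t - baseline for y, t in record.items()}

def shifted_to_start_at_zero(record):
    """Subtract the first year's value so the series starts at zero."""
    first_year = min(record)
    return {y: t - record[first_year] for y, t in record.items()}

# Made-up values for illustration only.
record = {1900: 28.0, 1901: 28.5, 1902: 29.0}
by_baseline = anomalies(record, 1900, 1902)
from_zero = shifted_to_start_at_zero(record)
```

The two versions differ by the same constant in every year, which is why the choice changes how plots line up against each other but not the slopes.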

Posted on the stick thread:

Willis, looking at the unadjusted plots leads me to suspect that there are 2 major changes in measurement methods/location.

These occur in January 1941 and June 1994. The 1941 one is well known (PO to airport move). I can find no connection for the 1994 shift.

These plots show the 2 periods each giving a shift of 0.8C

http://img33.imageshack.us/img33/2505/darwincorrectionpoints.png

The red line shows the effect of a suggested correction

This plot compares the GHCN corrected curve (green) to that suggested by me (red).

The difference between the 2 is approx 1C compared to the 2.5 you quote as the “cheat”.

http://img37.imageshack.us/img37/4617/ghcnsuggestedcorrection.png

Any comments?
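The kind of correction suggested above (a fixed offset at each known breakpoint) can be sketched in a few lines. This is only an illustration under stated assumptions: the function name is hypothetical, the convention of shifting everything before the breakpoint and leaving the latest segment untouched is a choice made here, and the numbers are invented rather than taken from the Darwin record.

```python
def remove_steps(series, steps):
    """Adjust a monthly series so that known step changes disappear.

    series: list of ((year, month), temp) in time order.
    steps:  list of ((year, month), size), where `size` is the jump
            (new level minus old level) that appears at that date.
    Every value BEFORE a step date is shifted by that step's size, so the
    most recent segment is left as measured (an assumed convention).
    """
    adjusted = []
    for date, temp in series:
        # Tuples compare as (year, month), so date < step_date means "earlier".
        offset = sum(size for step_date, size in steps if date < step_date)
        adjusted.append((date, temp + offset))
    return adjusted

# Invented values with a 0.8 C drop appearing in January 1941.
raw = [((1940, 11), 29.0), ((1940, 12), 29.0),
       ((1941, 1), 28.2), ((1941, 2), 28.2)]
fixed = remove_steps(raw, [((1941, 1), -0.8)])
print(fixed)
```

A second breakpoint, such as the June 1994 one, would simply be another entry in `steps`; the offsets accumulate for dates that precede both.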

“What? Nothing can be replicated using that “Overview.” It doesn’t provide nearly enough detail to generate code or even perform simple adjustments.”

You cannot possibly have worked your way thru that paper and its antecedents with sufficient attention to detail to make that statement.

The methodology looks very straightforward to me. Further details in the half a dozen specific methodology papers cited. If there is anything missing, you would need to scour the paper tree to know that, and be very specific as to what you need.

“In fact, one can’t even get a sense of the objectivity versus subjectivity of that methodology from that.”

Where’s the subjective process step? You provided an example from ABM. Where’s the window here?

Willis

I strongly support what you are doing but your statement:

“While there is no reference to 500 km in the documents, they keep talking about “nearby” stations, and they say they use five stations. In 1930 there were no stations within 750 km of Darwin, which is hardly “nearby” in anyone’s books.”

is simply untrue, as I’ve just discussed here in several posts reporting on my efforts to locate the data for Oenpelli (Gunbalunya), only 230 km due east of Darwin. There is plenty of evidence (personal contacts in the region, plus academic references, plus a BOM report) that temperature (and rainfall etc.) records have been taken at Oenpelli since at least 1925, when the CofE mission was established. However, as I’ve pointed out, full temperature records prior to calendar 1964 (some back to January 1957) cannot be sourced from BOM (although rainfall records go back to 1910).

FYI, not counting Oenpelli, there was at least one other Northern Territory mission-based weather station within 750 km of Darwin probably also in existence in 1930 (Warruwi). The temperature records for Warruwi before January 1957 also appear to have been lost by BOM. The funny thing is that BOM actually refers to those records in a report in 1961!

Therefore we must conclude that the adjustments at Darwin from 1940 through 1960 (say) were made on a wider regional basis. This brings in other, much more widely separated locations such as Daly Waters, Alice Springs, Port Moresby, Jackson Airfield, Broome etc.

alkali (16:17:02) :

“”I think the motivation for using that procedure is to avoid that kind of subjectivity. The GHCN methodology is described here.””

and JJ

“”You cannot possibly have worked your way thru that paper and its antecedents with sufficient attention to detail to make that statement.””

Please. Here’s a quick example.

Unless “neighboring” is defined then this methodology is excessively vague.

“”The first of these sought the most highly correlated neighboring station, from which a correlation analysis was performed on the first difference series: FD1 = (T2 – T1).””

It’s impossible to determine which neighboring station is the most highly correlated without knowing which stations are candidates and which are not.

Without knowing this, it’s impossible to complete Step 1.
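For reference, the first-difference correlation step itself is simple once a candidate list is given. The sketch below takes the candidates as an input precisely because the quoted description does not say how they are chosen. The station names and temperatures are made up, and the code assumes complete, aligned annual series of equal length, which real station records rarely are.

```python
from math import sqrt

def first_differences(temps):
    """Year-to-year changes: FD = T[i+1] - T[i]."""
    return [b - a for a, b in zip(temps, temps[1:])]

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("a constant series has no defined correlation")
    return sxy / sqrt(sxx * syy)

def best_neighbor(target, candidates):
    """Return the candidate whose first differences correlate best with the target's."""
    fd_target = first_differences(target)
    scores = {name: pearson(fd_target, first_differences(temps))
              for name, temps in candidates.items()}
    return max(scores, key=scores.get), scores

# Made-up annual means (deg C); "A" and "B" are hypothetical stations.
target = [27.0, 27.4, 26.9, 27.6, 27.2, 27.8]
candidates = {
    "A": [25.1, 25.4, 25.0, 25.8, 25.3, 25.9],
    "B": [30.0, 29.8, 30.3, 30.1, 30.4, 30.2],
}
best, scores = best_neighbor(target, candidates)
print(best, scores)
```

Working on first differences means a station that is consistently cooler or warmer than the target can still score well, since only the year-to-year changes are compared.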

Here’s another quick example:

“”the discontinuity was evaluated using Student’s t-test. If the discontinuity was determined to be significant, the time series was subdivided into two at that year.””

Significance is not defined. Given that the Darwin data contained many small adjustments in later years, you can bet your a$$ that the determination of significance of a discontinuity is germane.
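To show where the undefined threshold enters, here is a minimal sketch of a t-test across a candidate break year. It uses Welch's unequal-variance form rather than the classic Student's test named in the quote, and the critical value is a choice made here for illustration (the standard two-sided 5% value for 8 degrees of freedom), not something taken from the GHCN papers. The difference series is invented.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(before, after):
    """Welch's t statistic and approximate degrees of freedom."""
    n1, n2 = len(before), len(after)
    v1, v2 = variance(before) / n1, variance(after) / n2
    t = (mean(after) - mean(before)) / sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df

# Invented station-minus-reference differences (deg C) with a jump after year 5.
diffs = [0.1, -0.1, 0.0, 0.1, -0.1, 0.9, 1.1, 1.0, 0.9, 1.1]
break_index = 5  # hypothetical candidate break point
before, after = diffs[:break_index], diffs[break_index:]

t, df = welch_t(before, after)

# ASSUMPTION: 5% two-sided significance; 2.306 is the table value for 8 d.o.f.
T_CRIT = 2.306
is_break = abs(t) > T_CRIT
print(t, df, is_break)
```

Tightening or loosening `T_CRIT` changes how many small discontinuities get flagged, which is the commenter's point about the threshold mattering.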

carrot eater (13:26:38) :

Thanks for the question. Sure, it has occurred to me. But I must confess, I’ve given up asking. I’ve asked for so much data, and filed FOI requests for so much data, and been blown off every time, that I’ve grown tired of being ignored.

And those adjustments are the GHCN adjustments, not the Australian adjustments. I was writing about the GHCN. I haven’t a clue what the Aussies do, there’s only so many hours in a day.

I’m happy to adjust for the site move … but there was no abnormal change in the record from 1940 to 1941, and the move was in January 1941. So what adjustment are you proposing we make? A tenth of a degree? Half a degree? A degree and a half? Show your work.

Your statement of nearby stations in the sixties doesn’t help at all with the adjustments that were done in 1920, 1930, and 1941.

And while it is true that GISS, CRU, and the Aussies all do their own homogenization and they all roughly agree, that does not comfort my soul in the slightest … if you don’t understand why, read the CRU emails.

Nice work Bill.

Been doubting the warmists for years. Also have been following Lomborg’s lead when I teach children. It’s more important to use our gifts to improve the present status of the less fortunate than to pursue questionable and overzealous policies. Perhaps the next step in your data “forensics” is: What CO2 producing activity were they trying to mimic with their “adjustments”?

Keep up the good work.

carrot eater (16:51:36) :

“”I’ve already pointed out (if not here, elsewhere, I forget) there is some subjectivity in the BoM method; I read the same things you did. But you should still get similar results, if you do what they said. “”

That’s completely unrealistic. How can I “do what they said” when they merely tell me to estimate the “magnitude of adjustment” based on visual findings of discontinuity?

“”It’s far better to implement the procedure for yourself. See how neighboring stations correlate with each other; see how far away they can be and still correlate. Get some idea for that, then build your own reference network, and so on.””

I agree that this must be done prior to making any accusations. However, I doubt the effort will yield any results. I have a feeling that such a replication exercise will be a complete time sink as one attempts to continuously tweak and turn dozens of assumption knobs. In the event of a replication failure, no accusations can be made and the failure would simply be greeted with “well you didn’t make the proper assumptions because you’re not a climate professional.”

I’m fine dropping the accusations of wrongdoing. But let’s be realistic about the chances of a replication exercise yielding any fruit at all.

Steve Short,

Is this the data set you’re proposing?

http://data.giss.nasa.gov/cgi-bin/gistemp/gistemp_station.py?id=501941380000&data_set=0&num_neighbors=1

alkali,

“One could cynically argue that no historical temperature data could ever be useful: if you use raw data, you can’t be sure that some adjustment isn’t warranted for changes in station conditions over time; if you adjust using historical information, you introduce subjectivity and potential bias; if you adjust using some kind of algorithm, hey, you aren’t taking into account historical information. I don’t think that Catch-22 quite works: the challenge is to make a fair evaluation of the considerable amount of very good data that we do have.”

Yes I suppose you’re right… and given all the hoo-ha about the in-group mentality exposed in the CRU emails and its potential to increase subconscious bias, I think in this case I prefer the blind algorithm, even if it does occasionally spit out an odd result!

Proposing (?) the wrong word.

That certainly is the (BOM-sourced) dataset from GISS for Oenpelli for the period from 1964. Naturally it tells us nothing about the missing 1925 – 1963 Oenpelli record and hence casts no light on Willis’s concerns over the 1941 adjustment.

As you can see there is some sort of ‘step shift’ in the Oenpelli record around 1977 – 1978. Is this a reasonable step shift (i.e. due to a position change etc.) or yet another obscure ‘step up’ BOM or GISS adjustment? Did you spot any related metadata?

In any event, it certainly provides us with a very long-standing inland site (quite rare in the Northern Territory) only 230 km east of Darwin to compare with Darwin.

However, Bill seems to have identified a step adjustment to the Darwin record having occurred in June 1994.

It certainly is a worry that these step shifts always seem to be up and seem mostly to occur from about the late 1970s…….

I must confess I inhaled (way back then 😉