by Willis Eschenbach

People keep saying “Yes, the Climategate scientists behaved badly. But that doesn’t mean the data is bad. That doesn’t mean the earth is not warming.”

Darwin Airport – by Dominic Perrin via Panoramio

Let me start with the second objection first. The earth has generally been warming since the Little Ice Age, around 1650. There is general agreement that the earth has warmed since then. See e.g. Akasofu. Climategate doesn’t affect that.

The first objection, about the integrity of the data, is different. People say “Yes, they destroyed emails, and hid from Freedom of Information Acts, and messed with proxies, and fought to keep other scientists’ papers out of the journals … but that doesn’t affect the data, the data is still good.” Which sounds reasonable.

There are three main global temperature datasets. One is at the CRU, the Climatic Research Unit of the University of East Anglia, where we’ve been trying to get access to the raw numbers. One is at NOAA/GHCN, the Global Historical Climatology Network. The final one is at NASA/GISS, the Goddard Institute for Space Studies. The three groups take raw data, and they “homogenize” it to remove things like the 2C jump in temperature that appears when a station is moved to a warmer location. The three global temperature records are usually called CRU, GISS, and GHCN. Both GISS and CRU, however, get almost all of their raw data from GHCN. All three produce very similar global historical temperature records from the raw data.

So I’m still on my multi-year quest to understand the climate data. You never know where this data chase will lead. This time, I have ended up in Australia. I got to thinking about Professor Wibjorn Karlen’s statement about Australia that I quoted here:

Another example is Australia. NASA [GHCN] only presents 3 stations covering the period 1897-1992. What kind of data is the IPCC Australia diagram based on?

If any trend it is a slight cooling. However, if a shorter period (1949-2005) is used, the temperature has increased substantially. The Australians have many stations and have published more detailed maps of changes and trends.

The folks at CRU told Wibjorn that he was just plain wrong. Here’s what they said is right, the record that Wibjorn was talking about, Fig. 9.12 in the UN IPCC Fourth Assessment Report, showing Northern Australia:

Figure 1. Temperature trends and model results in Northern Australia. Black line is observations (from Fig. 9.12 of the UN IPCC Fourth Assessment Report). Covers the area from 110E to 155E, and from 30S to 11S. Based on the CRU land temperature. Data from the CRU.

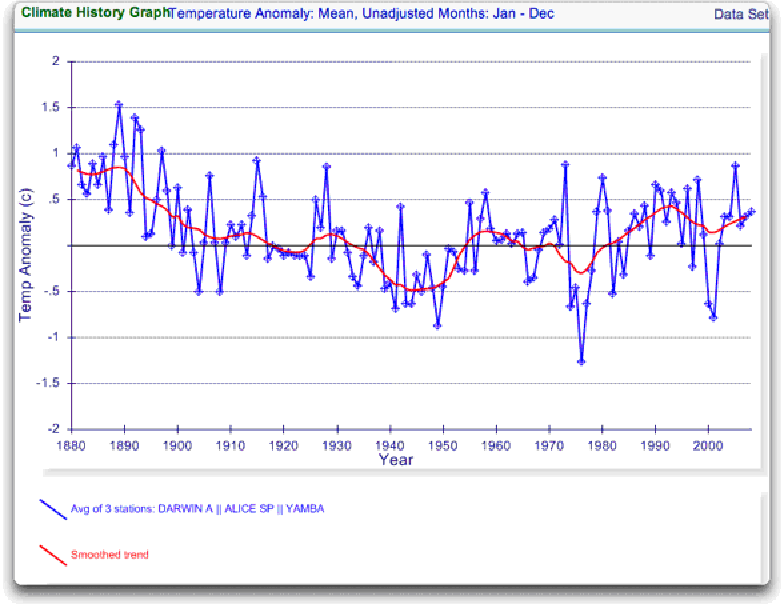

One of the things that was revealed in the released CRU emails is that the CRU basically uses the Global Historical Climatology Network (GHCN) dataset for its raw data. So I looked at the GHCN dataset. There, I found three stations in North Australia as Wibjorn had said, and nine stations in all of Australia, that cover the period 1900-2000. Here is the average of the GHCN unadjusted data for those three Northern stations, from AIS:

Figure 2. GHCN Raw Data, All 100-yr stations in IPCC area above.

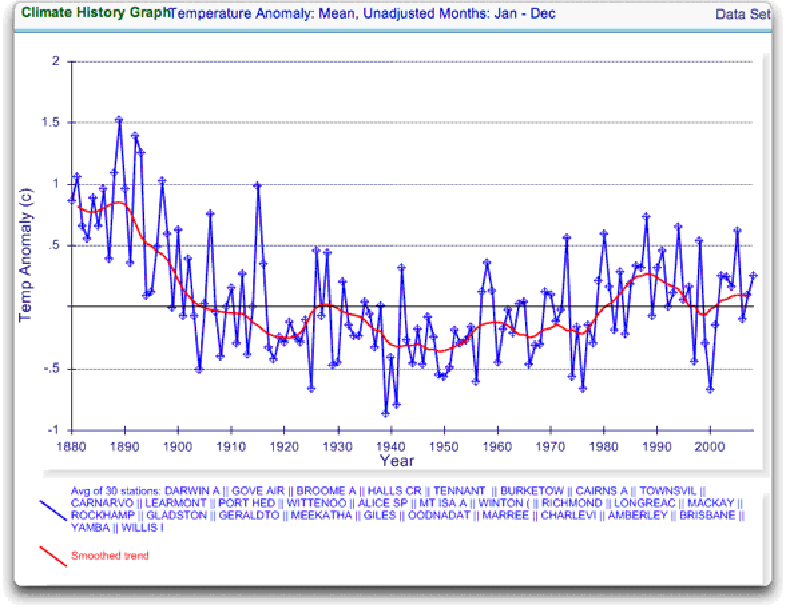
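For readers who want to try this kind of averaging themselves, here is a minimal sketch. The post does not say exactly how the station average was computed, so everything here is an assumption: the function names `to_anomalies` and `average_anomalies` are hypothetical, the base period is arbitrary, and the numbers are invented. Each station is first converted to anomalies against its own base-period mean, so that a warm coastal station and a cooler inland one can be combined without a station dropping in or out causing a jump.

```python
def to_anomalies(series, base_start, base_end):
    """Subtract the station's own base-period mean from every value.

    series: dict mapping year -> annual mean temperature (deg C).
    Returns None when the station has no data in the base period.
    """
    base = [t for y, t in series.items() if base_start <= y <= base_end]
    if not base:
        return None
    base_mean = sum(base) / len(base)
    return {y: t - base_mean for y, t in series.items()}


def average_anomalies(stations, base_start, base_end):
    """Unweighted mean anomaly per year across all stations with data."""
    sums, counts = {}, {}
    for series in stations:
        anomalies = to_anomalies(series, base_start, base_end)
        if anomalies is None:
            continue  # station cannot be placed on the common baseline
        for year, value in anomalies.items():
            sums[year] = sums.get(year, 0.0) + value
            counts[year] = counts.get(year, 0) + 1
    return {year: sums[year] / counts[year] for year in sorted(sums)}


# Invented numbers, for illustration only (not GHCN data).
station_a = {1900: 28.0, 1901: 29.0}
station_b = {1900: 20.0, 1901: 21.0, 1902: 22.0}
regional = average_anomalies([station_a, station_b], 1900, 1901)
```

An unweighted mean is the simplest choice; a gridded or area-weighted average would be a different, more involved calculation.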

So once again Wibjorn is correct, this looks nothing like the corresponding IPCC temperature record for Australia. But it’s too soon to tell. Professor Karlen is only showing 3 stations. Three is not a lot of stations, but that’s all of the century-long Australian records we have in the IPCC specified region. OK, we’ve seen the longest station records, so let’s throw more records into the mix. Here’s every station in the UN IPCC specified region which contains temperature records that extend up to the year 2000 no matter when they started, which is 30 stations.

Figure 3. GHCN Raw Data, All stations extending to 2000 in IPCC area above.

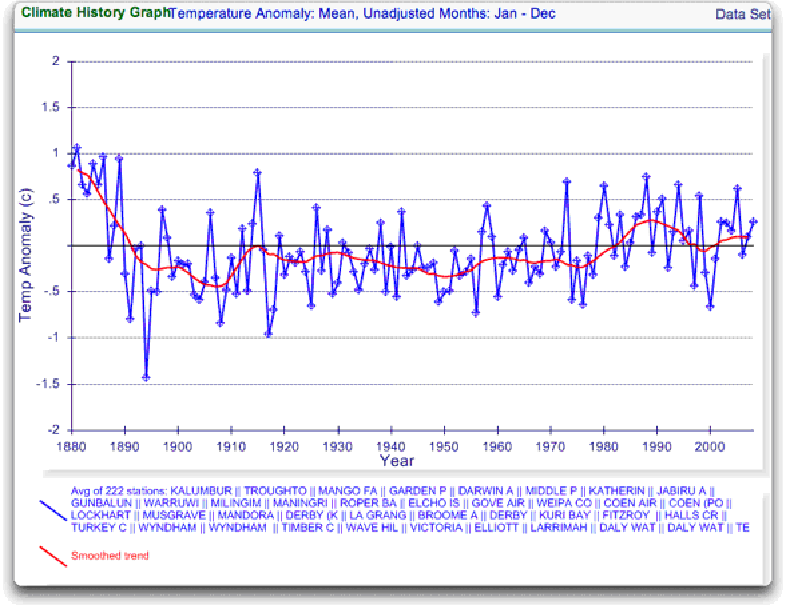

Still no similarity with IPCC. So I looked at every station in the area. That’s 222 stations. Here’s that result:

Figure 4. GHCN Raw Data, All stations in IPCC area above.

So you can see why Wibjorn was concerned. This looks nothing like the UN IPCC data, which came from the CRU, which was based on the GHCN data. Why the difference?

The answer is, these graphs all use the raw GHCN data. But the IPCC uses the “adjusted” data. GHCN adjusts the data to remove what it calls “inhomogeneities”. So on a whim I thought I’d take a look at the first station on the list, Darwin Airport, so I could see what an inhomogeneity might look like when it was at home. And I could find out how large the GHCN adjustment for Darwin inhomogeneities was.

First, what is an “inhomogeneity”? I can do no better than quote from GHCN:

Most long-term climate stations have undergone changes that make a time series of their observations inhomogeneous. There are many causes for the discontinuities, including changes in instruments, shelters, the environment around the shelter, the location of the station, the time of observation, and the method used to calculate mean temperature. Often several of these occur at the same time, as is often the case with the introduction of automatic weather stations that is occurring in many parts of the world. Before one can reliably use such climate data for analysis of longterm climate change, adjustments are needed to compensate for the nonclimatic discontinuities.

That makes sense. The raw data will have jumps from station moves and the like. We don’t want to think it’s warming just because the thermometer was moved to a warmer location. Unpleasant as it may seem, we have to adjust for those as best we can.

I always like to start with the rawest data, so I can understand the adjustments. At Darwin there are five separate individual station records that are combined to make up the final Darwin record. These are the individual records of stations in the area, which are numbered from zero to four:

Figure 5. Five individual temperature records for Darwin, plus station count (green line). This raw data is downloaded from GISS, but GISS use the GHCN raw data as the starting point for their analysis.

Darwin does have a few advantages over other stations with multiple records. There is a continuous record from 1941 to the present (Station 1). There is also a continuous record covering a century. Finally, the stations are in very close agreement over the entire period of the record. In fact, where there are multiple stations in operation they are so close that you can’t see the records behind Station Zero.

This is an ideal station, because it also illustrates many of the problems with the raw temperature station data.

- There is no one record that covers the whole period.

- The shortest record is only nine years long.

- There are gaps of a month and more in almost all of the records.

- It looks like there are problems with the data at around 1941.

- Most of the datasets are missing months.

- For most of the period there are few nearby stations.

- There is no one year covered by all five records.

- The temperature dropped over a six year period, from a high in 1936 to a low in 1941. The station did move in 1941 … but what happened in the previous six years?

In resolving station records, it’s a judgment call. First off, you have to decide if what you are looking at needs any changes at all. In Darwin’s case, it’s a close call. The record seems to be screwed up around 1941, but not in the year of the move.

Also, although the 1941 temperature shift seems large, I see a similar sized shift from 1992 to 1999. Looking at the whole picture, I think I’d vote to leave it as it is, that’s always the best option when you don’t have other evidence. First do no harm.

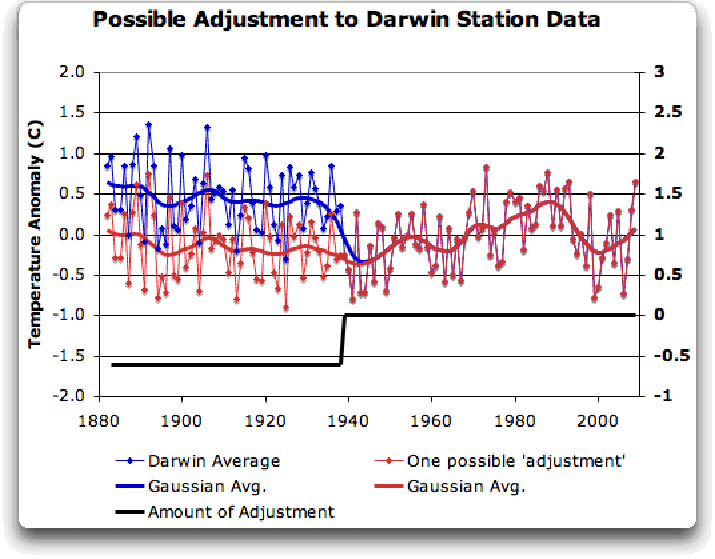

However, there’s a case to be made for adjusting it, particularly given the 1941 station move. If I decided to adjust Darwin, I’d do it like this:

Figure 6 A possible adjustment for Darwin. Black line shows the total amount of the adjustment, on the right scale, and shows the timing of the change.

I shifted the pre-1941 data down by about 0.6C. We end up with little change end to end in my “adjusted” data (shown in red), it’s neither warming nor cooling. However, it reduces the apparent cooling in the raw data. Post-1941, where the other records overlap, they are very close, so I wouldn’t adjust them in any way. Why should we adjust those, they all show exactly the same thing.

OK, so that’s how I’d homogenize the data if I had to, but I vote against adjusting it at all. It only changes one station record (Darwin Zero), and the rest are left untouched.
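The single-step adjustment described above is simple enough to write down. This is only a sketch of the idea: the function name `apply_step_adjustment` is hypothetical, the temperatures are invented rather than taken from the Darwin record, and "pre-1941" is assumed to mean every year strictly before 1941.

```python
def apply_step_adjustment(series, break_year, offset):
    """Shift every value before break_year by offset (deg C).

    series: dict mapping year -> annual mean temperature.
    Values from break_year onward are returned unchanged.
    """
    return {
        year: (temp + offset if year < break_year else temp)
        for year, temp in series.items()
    }


# Invented values, for illustration only.
raw = {1939: 29.1, 1940: 29.0, 1941: 28.4, 1942: 28.5}
adjusted = apply_step_adjustment(raw, break_year=1941, offset=-0.6)
```

One offset and one break year are the whole adjustment, which makes it easy to state what was changed and why.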

Then I went to look at what happens when the GHCN removes the “in-homogeneities” to “adjust” the data. Of the five raw datasets, the GHCN discards two, likely because they are short and duplicate existing longer records. The three remaining records are first “homogenized” and then averaged to give the “GHCN Adjusted” temperature record for Darwin.

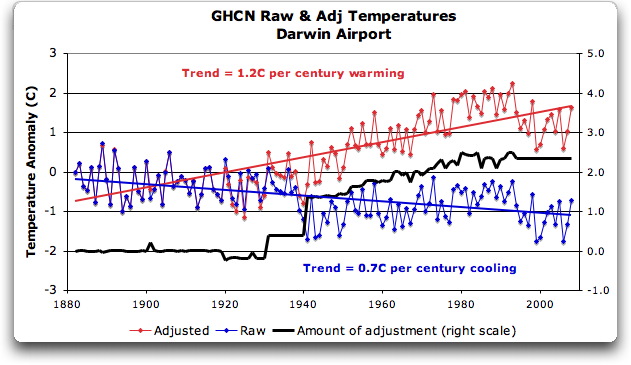

To my great surprise, here’s what I found. To explain the full effect, I am showing this with both datasets starting at the same point (rather than ending at the same point as they are often shown).

Figure 7. GHCN homogeneity adjustments to Darwin Airport combined record

YIKES! Before getting homogenized, temperatures in Darwin were falling at 0.7 Celsius per century … but after the homogenization, they were warming at 1.2 Celsius per century. And the adjustment that they made was over two degrees per century … when those guys “adjust”, they don’t mess around. And the adjustment is an odd shape, with the adjustment first going stepwise, then climbing roughly to stop at 2.4C.
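Trend figures like these are usually least-squares slopes scaled to a century. The sketch below assumes that convention (the post does not state how its trends were fitted) and uses synthetic straight-line series whose slopes were chosen to mirror the two numbers quoted above. The function name `trend_per_century` is hypothetical.

```python
def trend_per_century(years, temps):
    """Ordinary least-squares slope of temps against years, in deg C per 100 years."""
    n = len(years)
    mean_year = sum(years) / n
    mean_temp = sum(temps) / n
    sxx = sum((y - mean_year) ** 2 for y in years)
    sxy = sum((y - mean_year) * (t - mean_temp) for y, t in zip(years, temps))
    return 100.0 * sxy / sxx


# Synthetic series, not real Darwin data.
years = list(range(1900, 2000))
raw = [29.0 - 0.007 * (y - 1900) for y in years]        # falling 0.7 C/century
adjusted = [28.0 + 0.012 * (y - 1900) for y in years]   # rising 1.2 C/century
adjustment = [a - r for a, r in zip(adjusted, raw)]

raw_trend = trend_per_century(years, raw)
adjusted_trend = trend_per_century(years, adjusted)
adjustment_trend = trend_per_century(years, adjustment)
```

Because the least-squares slope is linear in the data, the trend of the adjustment series equals the adjusted trend minus the raw trend, so the three numbers can be checked against one another.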

Of course, that led me to look at exactly how the GHCN “adjusts” the temperature data. Here’s what they say:

GHCN temperature data include two different datasets: the original data and a homogeneity-adjusted dataset. All homogeneity testing was done on annual time series. The homogeneity-adjustment technique used two steps.

The first step was creating a homogeneous reference series for each station (Peterson and Easterling 1994). Building a completely homogeneous reference series using data with unknown inhomogeneities may be impossible, but we used several techniques to minimize any potential inhomogeneities in the reference series.

…

In creating each year’s first difference reference series, we used the five most highly correlated neighboring stations that had enough data to accurately model the candidate station.

…

The final technique we used to minimize inhomogeneities in the reference series used the mean of the central three values (of the five neighboring station values) to create the first difference reference series.

Fair enough, that all sounds good. They pick five neighboring stations, and average them. Then they compare the average to the station in question. If it looks wonky compared to the average of the reference five, they check any historical records for changes, and if necessary, they homogenize the poor data mercilessly. I have some problems with what they do to homogenize it, but that’s how they identify the inhomogeneous stations.
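The "central three of five" step in the quoted description can be sketched as follows. This is a simplified reading of the quoted text, not GHCN's code: it assumes the five neighbors have already been chosen, that all five series cover the same years with no gaps, and it leaves out the correlation-based selection and everything that happens after the reference series is built. The function names are hypothetical and the numbers are invented.

```python
def first_differences(series):
    """Year-to-year changes: value[i + 1] - value[i]."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


def reference_first_differences(neighbors):
    """Build a first-difference reference series from five neighbor series.

    For each year, the five neighbor differences are sorted, the lowest and
    highest are dropped, and the remaining three are averaged.
    """
    if len(neighbors) != 5:
        raise ValueError("this sketch expects exactly five neighbor series")
    diffs = [first_differences(s) for s in neighbors]
    reference = []
    for year_values in zip(*diffs):
        central_three = sorted(year_values)[1:-1]
        reference.append(sum(central_three) / 3.0)
    return reference


# Invented annual means for five neighbors over three years.
neighbors = [
    [10.0, 10.5, 10.0],
    [12.0, 12.4, 12.1],
    [8.0, 8.6, 8.0],
    [15.0, 15.5, 18.0],   # a 2.5 C jump in the last year, e.g. a station move
    [20.0, 20.1, 19.9],
]
reference = reference_first_differences(neighbors)
```

Dropping the extremes means a single neighbor with a jump of its own, like the fourth series here, does not leak into the reference.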

OK … but given the scarcity of stations in Australia, I wondered how they would find five “neighboring stations” in 1941 …

So I looked it up. The nearest station that covers the year 1941 is 500 km away from Darwin. Not only is it 500 km away, it is the only station within 750 km of Darwin that covers the 1941 time period. (It’s also a pub, Daly Waters Pub to be exact, but hey, it’s Australia, good on ya.) So there simply aren’t five stations to make a “reference series” out of to check the 1936-1941 drop at Darwin.
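Anyone wanting to repeat the distance check can use the standard haversine formula for great-circle distance. The sketch below assumes a spherical earth of radius 6371 km, and the coordinates are approximate values supplied here for illustration, not taken from the GHCN station inventory.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius, spherical-earth assumption


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


# Approximate coordinates (assumed, south latitudes are negative).
darwin = (-12.4, 130.9)
daly_waters = (-16.25, 133.37)
distance = haversine_km(*darwin, *daly_waters)
```

Running the same function over a full station inventory, filtered to stations with data in 1941, would give the list of candidates within any chosen radius.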

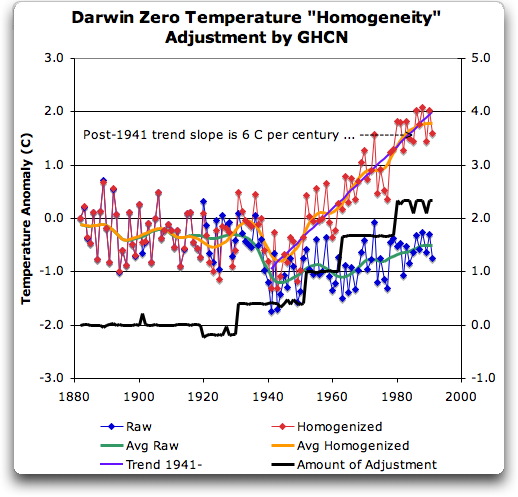

Intrigued by the curious shape of the average of the homogenized Darwin records, I then went to see how they had homogenized each of the individual station records. What made up that strange average shown in Fig. 7? I started at zero with the earliest record. Here is Station Zero at Darwin, showing the raw and the homogenized versions.

Figure 8 Darwin Zero Homogeneity Adjustments. Black line shows amount and timing of adjustments.

Yikes again, double yikes! What on earth justifies that adjustment? How can they do that? We have five different records covering Darwin from 1941 on. They all agree almost exactly. Why adjust them at all? They’ve just added a huge artificial totally imaginary trend to the last half of the raw data! Now it looks like the IPCC diagram in Figure 1, all right … but a six degree per century trend? And in the shape of a regular stepped pyramid climbing to heaven? What’s up with that?

Those, dear friends, are the clumsy fingerprints of someone messing with the data Egyptian style … they are indisputable evidence that the “homogenized” data has been changed to fit someone’s preconceptions about whether the earth is warming.

One thing is clear from this. People who say that “Climategate was only about scientists behaving badly, but the data is OK” are wrong. At least one part of the data is bad, too. The Smoking Gun for that statement is at Darwin Zero.

So once again, I’m left with an unsolved mystery. How and why did the GHCN “adjust” Darwin’s historical temperature to show radical warming? Why did they adjust it stepwise? Do Phil Jones and the CRU folks use the “adjusted” or the raw GHCN dataset? My guess is the adjusted one since it shows warming, but of course we still don’t know … because despite all of this, the CRU still hasn’t released the list of data that they actually use, just the station list.

Another odd fact, the GHCN adjusted Station 1 to match Darwin Zero’s strange adjustment, but they left Station 2 (which covers much of the same period, and as per Fig. 5 is in excellent agreement with Station Zero and Station 1) totally untouched. They only homogenized two of the three. Then they averaged them.

That way, you get an average that looks kinda real, I guess, it “hides the decline”.

Oh, and for what it’s worth, care to know the way that GISS deals with this problem? Well, they only use the Darwin data after 1963, a fine way of neatly avoiding the question … and also a fine way to throw away all of the inconveniently colder data prior to 1941. It’s likely a better choice than the GHCN monstrosity, but it’s a hard one to justify.

Now, I want to be clear here. The blatantly bogus GHCN adjustment for this one station does NOT mean that the earth is not warming. It also does NOT mean that the three records (CRU, GISS, and GHCN) are generally wrong either. This may be an isolated incident, we don’t know. But every time the data gets revised and homogenized, the trends keep increasing. Now GISS does their own adjustments. However, as they keep telling us, they get the same answer as GHCN gets … which makes their numbers suspicious as well.

And CRU? Who knows what they use? We’re still waiting on that one, no data yet …

What this does show is that there is at least one temperature station where the trend has been artificially increased to give a false warming where the raw data shows cooling. In addition, the average raw data for Northern Australia is quite different from the adjusted, so there must be a number of … mmm … let me say “interesting” adjustments in Northern Australia other than just Darwin.

And with the Latin saying “Falsus in uno, falsus in omnibus” (false in one, false in all) as our guide, until all of the station “adjustments” are examined, adjustments of CRU, GHCN, and GISS alike, we can’t trust anyone using homogenized numbers.

Regards to all, keep fighting the good fight,

w.

FURTHER READING:

My previous post on this subject.

The late and much missed John Daly, irrepressible as always.

More on Darwin history, it wasn’t Stevenson Screens.

NOTE: Figures 7 and 8 updated to fix a typo in the titles. 8:30PM PST 12/8 – Anthony

carrot eater (03:50:07) :

“You don’t know what ‘neighboring’ means? Then do a spatial correlation test for yourself, to see how correlation drops off with distance. You’ll find out.”

OK. So tell me this. If a station 350 miles away correlates well, a station 500 miles away correlates better, a station 800 miles away correlates best, and a station 1,000 miles away correlates perfectly then which station is the most highly correlated neighboring station?

carrot eater (04:31:18) :

“Yes, but again, the GHCN adjustments should correspond to some physical thing.”

I think many of us have concluded that this isn’t the case. GHCN adjustments (outside the US) are supposedly algorithmically done based purely on discontinuities with reference data. These adjustments supposedly occur whether or not there was any physical change with the station sensor or any other explanation for the defined discontinuity.

Nick Stokes (05:11:33) :

“In the specific case of Darwin, while we haven’t done the updated analysis yet, I am expecting to find that the required adjustment between the PO and the airport is greater in the dry season than the wet season, and greater on cool nights than on warm ones. The reason for this is fairly simple – in the dry season Darwin is in more or less permanent southeasterlies, and because the old PO was on the end of a peninsula southeasterlies pass over water (which on dry-season nights is warmer than land) before reaching the site.”

If the southeasterlies are more or less permanent, then shouldn’t there also be pre-1941 adjustments?

Slartibartfast (05:15:40) :

“No, it’s an algorithm. I think they describe what they do, even if they’re not showing you the algorithm in detail.”

“I haven’t actually attempted this, but I’ve done some of this kind of data analysis WRT other, unrelated applications.”

OK. Well, I’ll leave the ABM replication to you. I think it is more important to focus on GHCN.

A few thoughts:

1) The purpose of collecting and adjusting this data is not solely to use it for climate change study (although that’s important). There are all kinds of commercial, agricultural, and scientific uses for historical climate data. You might want to have good local and regional data even if the adjustments come out in the wash when you’re using it to look at global temperature.

2) For climate change study purposes, I’m not sure how much it matters whether the adjustments are overall positive, negative, or zero if the important thing is the historical trend in the data. If _old_ temperature data are adjusted upward, that will tend to reduce any warming trend (or will reinforce a cooling trend).

3) Suppose the adjusted data shows a greater overall warming trend (or cooling trend). On the one hand, that could mean an indicator of bias. On the other hand, maybe that’s just what happens when you take the noise out of data. (Hypothetical: Suppose I ask a bad typist to transcribe a George Carlin monologue. Then I give it to a copy editor. After copy editing, the ratio of dirty words in the text goes up. Does that mean the copy editor’s mind is in the gutter, or does it mean that the corrected text is a better reflection of the actual performance?) I’d want to think about this a bit because it seems like a hard question.

JJ (06:49:46) :

“The two ‘missing facts’ you identify (definition of ‘neighboring’ and the t-Test significance cut off for calling a discontinuity) are almost certainly given in the papers cited, with (Peterson and Easterling 1994) being the most likely candidate.”

I still haven’t found Peterson. I’ll search more thoroughly this weekend. Don’t keep your cheap date waiting.

@carrot eater: “[T]he GHCN adjustments should correspond to some physical thing.”

@wobble: “I think many of us have concluded that this isn’t the case. GHCN adjustments (outside the US) are supposedly algorithmically done based purely on discontinuities with reference data. These adjustments supposedly occur whether or not there was any physical change with the station sensor or any other explanation for the defined discontinuity.”

Ideally, if the algorithm were very cleverly designed, it would make adjustments at the “right” places, that is to say, the adjustments made by the algorithm would correspond to the adjustments an unbiased researcher with access to perfect historical information would make based on changes in station conditions. This algorithm is certainly not that good, but it would not surprise me if in many cases the GHCN algorithmic adjustments do roughly correspond with historical events that are the basis for the ABM’s adjustments based on metadata.

wobble (07:21:33) :

“These adjustments supposedly occur whether or not there was any physical change with the station sensor or any other explanation for the defined discontinuity.”

I think I’ve been clear about this. Yes, the GHCN statistical method detects the adjustments to be made without using the historical metadata (knowledge of specific things that happened at that site).

However, if you were able to obtain a list of site changes (the Aussies would have that info), you might be able to match up the GHCN statistically detected adjustments with actual events on the ground. You might not be able to find a match for all of them, but at least some of them.

Hence, getting that data from the Australian BoM would be of interest, as well as looking at their own adjustments, which apparently use both the statistical methods and knowledge of the historical metadata.

alkali (07:57:21) :

You have expressed better than I have the point I am trying to make. If you had access to the historical metadata, you should see some correspondence between the GHCN adjustments and the metadata.

You wouldn’t be able to put a label on every single adjustment as Willis is demanding, but you’d have some idea as to what’s going on, physically.

There won’t be perfect matches either way, as there are limits to both the GHCN statistical method and the record-keeping of the metadata, but it’d be a useful exercise, I think.

Nick Stokes (05:11:33) :

Here is a comment from a scientist who has been involved in adjustments to the Australian data sets. Here’s what he says about Darwin in particular:

The post-1941 adjustments (all small) at Darwin Airport relate to a number of site moves within the airport boundary. These days it’s on the opposite side to the terminal, not too far from the Stuart Highway.

All small?

totalling 2C??

Is he a BOM scientist?

carrot eater and alkali,

I agree with both your points. Non-US GHCN adjustments probably match up often to actual physical events on the ground.

But how many instances does it really take to bias the entire average towards warming?

Even if the Peterson and Easterling methodology is sound, how difficult would it be to program a slight bias in the code which performs the adjustments. And how often was human intervention required to address a discontinuity because the code wasn’t robust enough to handle a minority of the situations?

Having studied this issue more carefully (with the help of many of you), it’s now obvious how bad the world’s temperature records are, that the worst data is adjusted by data merely considered to be less bad, and that the whole issue is completely vulnerable to personal biases.

alkali,

“2) For climate change study purposes, I’m not sure how much it matters whether the adjustments are overall positive, negative, or zero …”

&

“3) Suppose the adjusted data shows a greater overall warming trend (or cooling trend). On the one hand, that could mean an indicator of bias.”

I do have concerns over the net effect of the adjustments as reported by NOAA. I would of course not expect an overall effect of zero – no point in an adjustment if it doesn’t change anything. It is not at all clear to me why the effect would be almost universally positive, let alone why it would be increasing in magnitude leading to a trend line with … that particular shape.

Why would the non climate effects have that distribution?

Hmmmmmm ….

Excellent Job. You do some fantastically thorough reporting. This is the second time I have read one of your posts, and was more impressed than the first. I have added this post to my ‘Recent Reading’.

Truth, I’d like another helping…

Nathan R. Jessup

http://www.the-raw-deal.com

wobble (08:32:14) :

I think you’re reaching unfounded conclusions, at least on the basis of what you’ve seen so far. I’d suggest spending much more time reading the literature, studying the statistics and looking at several examples before reaching any broad conclusions over how robust the methods are, or how poor the quality control is, or whether there are any systematic biases.

I haven’t done that, so I don’t have any personal opinion on the matter. For all I know, there could be a handful of stations where one of the analyses (GHCN, GISS, CRU, the national met bureau) might send up a weird result that hasn’t been noticed by their quality control methods. I don’t know. Maybe Darwin is such a spot, though Willis hasn’t done the work necessary to actually demonstrate that. But I find it unlikely that they’re all so incompetent at statistics that the overall results are way off.

JJ (23:48:48) :

Thanks for answering my questions. These blog comment threads can be a really useful source of information once all the cheerleading has died down!

The method they use seems to be a reasonable approach, but I agree that if one dodgy homogenization is found, there’s no obvious reason why it can be simply written off as unrepresentative without further investigation of the other data sets.

Nice detective work.

carrot eater (08:58:46) :

“I’d suggest spending much more time reading the literature, studying the statistics and looking at several examples before reaching any broad conclusions over how robust the methods are, or how poor the quality control is, or whether there are any systematic biases.”

I didn’t conclude that the quality control was bad or that there are systematic biases.

I stated that the raw data is bad and that the quality control efforts are vulnerable to biases.

WAG (20:49:23):

“…the burden of proof is to show why the adjustments should not have been made.” Fixed.

Smokey: If you’re going to explicitly accuse somebody of fraud, as Willis is doing here, then the burden is on him to actually show that.

If he would have phrased it as “here is a station with some large adjustments, can somebody help me understand why these adjustments are made?”, that’s another matter.

But Willis didn’t say that. He went straight to “indisputable evidence” that somebody was fiddling the numbers. If he’s going to say that, the burden is on him to actually demonstrate that. He didn’t. He just showed that some adjustments were made. Well, we know that adjustments are made, and at a few stations here and there, those adjustments can be pretty big. To support his statement, Willis has to show that the adjustments are not only unjustified, but intentionally so. I think he has rather more work to do.

Nick Stokes (05:11:33) :

All very interesting but here’s a few facts for you to consider:

(1) Apparently (Bill’s analysis of GHCN) two major adjustments at Darwin – one in 1941 (seems reasonable to me), but only one major shift June 1994 – doesn’t look to me like many minor adjustments as your BOM? informant suggests.

(2) Oenpelli (NT). BOM ‘loses’ all records prior to 1963 (or is it 1957 – even their own modern metadata is contradictory on that point) going back to 1920 – 1925 despite apparently having the earlier records in 1961 (and researchers accessing it over the following 1 – 2 decades).

(3) GISS Oenpelli record (back to 1964). One major shift only (1977) of the order of 0.5±0.3 C (record finishes in the 1990s so I can’t be more precise).

(4) Brisbane GHCN record. Major upward shift(s) by comparison with the raw BOM record. We therefore have two Australian state capital sites, both major, longstanding airports, neither likely to be subject to UHI, both subject to (at least cumulatively) major upward shifts in the post-AGW ‘realisation’ era.

Good on Ya.

If you go to the UK Met Office they have several data sets comprising the annual ‘anomalies’, sample errors and biased data. What hit me was the insertion of a value of -1.000 for ‘missing data’ throughout the data. It occurs widely in the early years when negative values of anomaly are common but much less so in recent years where the number of readings has increased. These latter readings have a tendency to be positive, but the effect of the -1.000 seems to amplify the time series differences, especially as the actual values fall between -.787 and +.581. Is there any justification for this? I only ask as a half smart schmuck who feels that the wool is being pulled over our eyes. Your site is like an oasis in a desert. Many Thanks
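Whether a placeholder value matters depends on whether it is filtered out before any averaging. The sketch below assumes, as the comment above describes, that -1.000 marks missing data; that is taken from the comment, not from any file specification, and the anomaly values are invented. The function name `mean_ignoring_missing` is hypothetical.

```python
MISSING = -1.0  # assumed sentinel, taken from the comment above


def mean_ignoring_missing(values, missing=MISSING):
    """Average only the entries that are not the missing-data sentinel."""
    valid = [v for v in values if v != missing]
    return sum(valid) / len(valid) if valid else None


# Invented anomalies with two placeholder entries.
values = [0.2, MISSING, 0.4, MISSING]
naive_mean = sum(values) / len(values)        # treats placeholders as real data
masked_mean = mean_ignoring_missing(values)   # drops placeholders first
```

If the placeholders are masked before use they change nothing; they only bias a result when they are averaged in as if they were measurements.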

As we know, more climate info is openly available than most unpaid people can read. FOI requests for public domain stuff are disingenuous at best and sabotage at worst.

Knowing this I checked your allegations about the Darwin weather station. It is not the lonely site you claim. Australia shows 88 weather stations, 17 of them within your 500 km. radius. Your post is meaningless.

Sorry. You may be smarter than me, but I’m not as stupid as you think.

carrot eater (11:16:49),

You are turning the scientific method on its head. Those putting forth a new hypothesis are obligated to fully cooperate with skeptical scientists [the only honest kind of scientist], by providing all of the methods and data that have any bearing on their hypothetical conclusions. Without that cooperation, the result is an unsupported opinion; a conjecture, as opposed to a legitimate hypothesis.

As we can see, the purveyors of the CO2=CAGW hypothesis continue to stonewall those requesting cooperation. See Steve Short’s example above.

To bring you up to speed on the difference between a scientific Law, Theory, Hypothesis and Conjecture, see here: click.

For the umpteenth time: the burden of the AGW hypothesis is on those promoting it — not on those questioning it.

Why does the alarmist crowd run like scared girls from a garter snake when they are asked for their raw and massaged data and their methodologies? Here’s Richard Feynman on peer review and the scientific method:

Until the relatively small clique of stonewalling CRU scientists and their U.S. and UN counterparts start following the scientific method, they are only making unfalsifiable, untestable conjectures. Deliberately hiding their data and methods from those who pay for their work product doesn’t pass the smell test. And neither do their flimsy retroactive excuses.

Has anyone already tried summing all the GHCN adjustments to see if the result is close to zero?

Given the nearly universal positive values for GHCN (US) Final-Raw, and the strong, increasing, positive trend (i.e. Hockey anyone?), that hardly seems possible.

http://www.ncdc.noaa.gov/img/climate/research/ushcn/ts.ushcn_anom25_diffs_urb-raw_pg.gif

I would like to see a similar graph for the non US stations, and for the globe.

Anybody know how to find those?
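One way to approach the question is to subtract raw from adjusted for every station-year where both exist and average the differences by year. The sketch below assumes the two datasets have already been parsed into nested dictionaries; the layout, the function name `mean_adjustment_by_year`, and the numbers are all hypothetical.

```python
def mean_adjustment_by_year(raw, adjusted):
    """Average (adjusted - raw) per year over stations present in both datasets.

    raw, adjusted: dict mapping station id -> dict mapping year -> temperature.
    """
    sums, counts = {}, {}
    for station_id, raw_series in raw.items():
        adjusted_series = adjusted.get(station_id, {})
        for year, raw_temp in raw_series.items():
            if year in adjusted_series:
                sums[year] = sums.get(year, 0.0) + (adjusted_series[year] - raw_temp)
                counts[year] = counts.get(year, 0) + 1
    return {year: sums[year] / counts[year] for year in sorted(sums)}


# Invented values: one station adjusted down in 1950, one adjusted up.
raw = {"A": {1950: 10.0, 1990: 11.0}, "B": {1950: 20.0, 1990: 20.5}}
adjusted = {"A": {1950: 9.5, 1990: 11.0}, "B": {1950: 20.5, 1990: 20.5}}
net = mean_adjustment_by_year(raw, adjusted)
```

A net adjustment near zero in every year would mean the individual changes cancel; a series that drifts with time would mean they add a trend.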