by Ross McKitrick

- Optimal fingerprinting is a statistical method that estimates the effect of greenhouse gases (GHGs) on the climate in the form of a regression slope coefficient.

- The larger the coefficient associated with GHGs, the bigger the implied effect on the climate system.

- In 2003 Myles Allen and Peter Stott published an influential paper in Climate Dynamics recommending the use of a method called Total Least Squares (TLS) in optimal fingerprinting regression to correct a potential downward bias associated with Ordinary Least Squares (OLS).

- The problem is that in most cases TLS replaces the downward bias in OLS with an upward bias that can be as large or larger.

- Under special conditions TLS will yield unbiased estimates, but you can’t test if they hold.

- Econometricians never use TLS because another method (Instrumental Variables) is a better solution to the problem.

Introduction

The method of “optimal fingerprinting” works by regressing a vector of climate observations on a set of climate model-generated analogues (called “signals”) which selectively include or exclude GHG forcing. According to the theory behind the methodology, the coefficient associated with the GHG signal indicates the size of the effect of GHGs on the real climate. If the coefficient is greater than zero then the signal is “detected”. The larger the coefficient value, the larger is the implied effect on the real climate.

The seminal method of optimal fingerprinting was presented in a 1999 Climate Dynamics paper by Myles Allen and Simon Tett. With some modifications it has been widely used by climate scientists ever since. Last year I published a paper in Climate Dynamics showing that the basis for believing the method yields unbiased and significant findings was flawed. This website provides links to my paper, as well as to the Allen and Tett (1999) paper I critiqued, a non-technical summary of my argument, Myles Allen’s reply and my response, and a comment by Richard Tol.

One of the arguments Allen made in response was that the issue is now moot because the method he co-authored has been replaced by newer ones (emphasis added):

“The original framework of AT99 was superseded by the Total Least Squares approach of Allen and Stott (2003), and that in turn has been largely superseded by the regularised regression or likelihood-maximising approaches, developed entirely independently. To be a little light-hearted, it feels a bit like someone suggesting we should all stop driving because a new issue has been identified with the Model-T Ford.”

Ha ha, Model T Ford; we all drive Teslas now, aka Total Least Squares. But in 20 years of usage did any climate scientists check if TLS actually solves the problem? A few statisticians looked at it over the years and have expressed significant doubts about TLS. But once it was adopted by climatologists that was that; with few exceptions no one asked any questions.

I have just published a new paper in Climate Dynamics critiquing the use of TLS in fingerprinting applications. TLS was intended to correct a potential downward bias in OLS coefficient estimates which could understate the influence of GHGs on the climate. While there is a legitimate argument that OLS can be biased downward, the problem is that in typical usage TLS is biased upwards; in other words, it overstates the influence of GHGs. There is a special case in which TLS gives unbiased results, but a user cannot know if a data set matches those conditions. Moreover, TLS is specifically unsuitable for testing the null hypothesis in signal detection and its results ought to be confirmed using OLS.

The Errors-in-Variables Problem and the Weakness of TLS

OLS models assume that the explanatory variables in a regression are accurately measured, so the “errors” separating the dependent variable from the regression line are entirely due to randomness in the dependent variable. If the explanatory variables also contain randomness, for instance due to measurement error, OLS will typically yield biased slope estimators. In a simple model with one explanatory (x) variable and one dependent (y) variable the bias will be downward, which is called “attenuation bias.” David Giles has a nice explanation of the problem here, and you can also look at econometrics texts like Wooldridge or Davidson and MacKinnon.
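Attenuation bias is easy to reproduce numerically. The following is a minimal sketch, not taken from the paper: it uses one regressor, and the slope, noise levels, sample size and variable names are all illustrative assumptions. In this textbook setup the OLS slope on the noisy regressor converges to the true slope multiplied by var(x) / (var(x) + var(noise)).

```python
import random


def ols_slope(x, y):
    """OLS slope from regressing y on x (intercept included)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    return s_xy / s_xx


# Illustrative values (assumptions, not taken from the paper).
BETA = 1.0        # true slope
SD_SIGNAL = 1.0   # std. dev. of the true, unobserved x
SD_X_NOISE = 0.5  # std. dev. of the measurement error added to x
SD_Y_NOISE = 0.5  # std. dev. of the noise in y
N = 20000

rng = random.Random(0)
x_true = [rng.gauss(0.0, SD_SIGNAL) for _ in range(N)]
w = [x + rng.gauss(0.0, SD_X_NOISE) for x in x_true]   # noisy regressor
y = [BETA * x + rng.gauss(0.0, SD_Y_NOISE) for x in x_true]

ols_clean = ols_slope(x_true, y)  # regressor measured without error
ols_noisy = ols_slope(w, y)       # regressor measured with error

# Large-sample attenuation factor for the single-regressor case.
attenuation = SD_SIGNAL**2 / (SD_SIGNAL**2 + SD_X_NOISE**2)

print(f"OLS on clean x: {ols_clean:.3f}")
print(f"OLS on noisy w: {ols_noisy:.3f}")
print(f"beta * attenuation factor: {BETA * attenuation:.3f}")
```

Because the bias is a multiplicative factor, it vanishes when the true slope is zero, a point that matters for hypothesis testing later in the post.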

The measurement problem is referred to as errors-in-variables or EIV. Since climate models yield noisy or uncertain estimates of the true climate “signals” Allen and Stott (2003) suggested the TLS method as a remedy. This is not how econometrics deals with the issue. In every econometrics textbook of which I am aware, the recommended treatment for EIV is Instrumental Variables estimation, which can be shown to yield unbiased and consistent coefficient estimates. I have never seen TLS covered in any econometrics textbook, ever. Nor have I ever seen it used in economics, or anywhere else outside of climatology except in the small literature looking at the properties of TLS estimators, primarily a 1987 book by Wayne Fuller, a 1981 article in the Annals of Statistics by Leon Gleser and a 1996 article in The American Statistician by RJ Carroll and David Ruppert.

Both Fuller and Gleser discuss the difficulty of proving that TLS (or orthogonal regression as it is more commonly called) yields unbiased and consistent estimates. The problem, as explained by Carroll and Ruppert, is that the method requires estimating more parameters than there are “sufficient statistics” in the data: or in other words, more parameters than the data can identify. Implementation of TLS therefore requires arbitrarily choosing the value of one of the parameters. Both y and x have error terms with variances needing to be estimated, and the assumption in practice is that they are equal, so only one needs to be estimated. If they happen to be equal, Gleser shows that the TLS estimate is consistent (meaning any bias goes to zero as the sample goes to infinity). If not, consistency cannot be guaranteed. In the signal detection application this means that unless model-generated signals contain random errors with exactly the same variance as the random errors in the observed climate (or if they can be rescaled to make them equal), TLS cannot be shown to yield unbiased slope coefficients.
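The role of the equal-variance assumption can be seen in a small sketch. It uses the closed-form orthogonal regression slope for a single regressor, which is a simplification assumed here for illustration (fingerprinting applications use several signals), and all numerical values are invented. When the noise on x and on y has the same variance, the estimate lands near the true slope; when the noise on y is larger than assumed, it overshoots.

```python
import math
import random


def tls_slope(x, y):
    """Orthogonal-regression (TLS) slope for one regressor.

    Minimises squared perpendicular distances to the line, which
    implicitly assumes equal error variances in x and y.
    Undefined when the sample covariance of x and y is exactly zero.
    """
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    s_xx = sum((a - mean_x) ** 2 for a in x) / n
    s_yy = sum((b - mean_y) ** 2 for b in y) / n
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    d = s_yy - s_xx
    return (d + math.sqrt(d * d + 4.0 * s_xy * s_xy)) / (2.0 * s_xy)


def draw(beta, sd_x_noise, sd_y_noise, n, rng):
    """Simulate a noisy regressor w and a response y (hypothetical setup)."""
    x_true = [rng.gauss(0.0, 1.0) for _ in range(n)]
    w = [x + rng.gauss(0.0, sd_x_noise) for x in x_true]
    y = [beta * x + rng.gauss(0.0, sd_y_noise) for x in x_true]
    return w, y


BETA = 1.0  # true slope (illustrative)
rng = random.Random(1)

# Case 1: equal noise variances, the condition under which TLS is consistent.
w, y = draw(BETA, sd_x_noise=0.5, sd_y_noise=0.5, n=20000, rng=rng)
tls_equal = tls_slope(w, y)

# Case 2: noise on y is larger than noise on x, violating the assumption.
w, y = draw(BETA, sd_x_noise=0.5, sd_y_noise=1.0, n=20000, rng=rng)
tls_unequal = tls_slope(w, y)

print(f"TLS, equal variances:   {tls_equal:.3f}")
print(f"TLS, unequal variances: {tls_unequal:.3f}")
```

In real data neither variance is observed directly, so a researcher cannot tell which of the two cases applies.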

Carroll and Ruppert also point out that TLS depends on the assumption that the regression model itself is correctly specified, in other words the regression model includes everything that explains variations in the dependent variable. OLS assumes this as well, but it is more robust to model errors. If the model omits one or more variables but they are uncorrelated with the included variables then OLS coefficients will not be biased, but if any of the omitted variables are correlated with the included variable OLS will be biased up or down depending on the sign of the correlation. With TLS, bias arises either way, whether the omitted variable is correlated with the included variables or not, but the bias is always upwards. Unless you happen to have a regression model that fully explains the dependent variable, so that in the absence of random noise every observation would lie exactly on the regression line, the default assumption should be that TLS overestimates the parameter values.
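The effect of an uncorrelated omitted variable can be sketched in symbols for the simplest case of one noisy regressor. This is a simplification assumed for illustration, and the notation is not from the paper: the true signal x* has variance σ², the observed signal is w = x* + u, and y = b x* + q + v, where the omitted variable q, the noise terms u and v, and x* are mutually uncorrelated. In large samples the sample moments and the orthogonal regression slope are:

```latex
\begin{aligned}
s_{ww} &\to \sigma^2 + \sigma_u^2, \qquad
s_{yy} \to b^2\sigma^2 + \sigma_q^2 + \sigma_v^2, \qquad
s_{wy} \to b\,\sigma^2, \\[4pt]
\hat{b}_{OLS} &= \frac{s_{wy}}{s_{ww}} \to b\,\frac{\sigma^2}{\sigma^2 + \sigma_u^2}, \\[4pt]
\hat{b}_{TLS} &= \frac{(s_{yy} - s_{ww}) + \sqrt{(s_{yy} - s_{ww})^2 + 4\,s_{wy}^2}}{2\,s_{wy}} .
\end{aligned}
```

If σ_q² = 0 and σ_v² = σ_u², then s_yy − s_ww tends to (b² − 1)σ² and the TLS expression reduces to b. Any σ_q² > 0 raises s_yy − s_ww, and for b > 0 the TLS expression is increasing in that difference, so its limit exceeds b. The OLS limit does not involve σ_q² at all, which is why an uncorrelated omitted variable leaves OLS where it was.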

Thus TLS can, in principle, yield unbiased signal detection coefficients, but only if the climate model that generates the signals includes everything that explains the observed climate, and adds random noise to the signals with precisely the same variance as the randomness in the observed climate. Of course, if those claims were true we wouldn’t need to do signal detection regressions in the first place. If we wanted to know how GHGs influence the climate, we could just look inside the model. Signal detection regressions are motivated by the fact that climate models are neither perfect nor complete, yet the claim that the results are unbiased presumes that they are both.

Comparing TLS and OLS in practice

To investigate how these issues affect signal detection regressions I ran simulated regressions as follows. Imagine a sample of surface temperature trends (y) from a sample of 200 locations stretching from the North Pole to the South Pole. I constructed two uncorrelated explanatory variables X1 and X2. X1 can be thought of as 200 simulated trends (or “signals”) for those locations from a model forced with anthropogenic greenhouse gases and X2 comes from a model with only natural forcings. Then I added some random noise to the X’s yielding the random variables W1 and W2. Since every regression model potentially omits at least one relevant explanatory variable I also generated two additional variables Q1 and Q2. Q1 is just an uncorrelated set of random numbers. Q2 is a set of random numbers partially correlated with X1.

Then I generated 9 versions of the dependent variable y:

Y1 = bX1 + X2/2 + v where b was set equal to 0.0, 0.5 or 1.0 and v is white noise;

YQ1 = bX1 + X2/2 + Q1 + v

and

YQ2 = bX1 + X2/2 + Q2 + v;

and in each of the latter two b was again allowed to be 0.0, 0.5 or 1.0.

I regressed each version of y on W1 and W2:

Y1 = b1 W1 + b2 W2 + e;

YQ1 = b1 W1 + b2 W2 + e

and

YQ2 = b1 W1 + b2 W2 + e.

Each time I estimated the coefficients b1 and b2 using both OLS and TLS. By construction b2 should always equal 0.5 and I didn’t focus on it. Instead I focused on b1, which should equal 0.0, 0.5 or 1.0 depending on the simulation.

The important thing to bear in mind is that a researcher doesn’t know which dependent variable he or she has used. If we assume it’s Y1 then we are assuming the regression model is correctly specified, the only problem is W1 is a noisy version of X1. If we used YQ1 that means we assume the regression model omits an uncorrelated explanatory variable and if we assume we used YQ2 that means the regression model omits a correlated explanatory variable. There is no reason to assume we only ever use Y1 in practice: wouldn’t that be nice.

I ran these 20,000 times each and looked at the distributions of b1 under OLS and TLS. I then added a couple of other wrinkles. First I reduced the variance on the noise term on the X’s, which is analogous to improving the signal-noise ratio in X. I also ran a version in which the X’s are slightly negatively correlated, to correspond to the situation in signal detection applications where the anthropogenic and natural signals are negatively correlated.
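The structure of this experiment can be sketched in a few lines. What follows is a simplified stand-in, not the code behind the paper: it uses a single noisy regressor instead of W1 and W2, 300 replications instead of 20,000, and every noise level and the correlation between Q2 and X1 are invented for illustration. The noise on W and on y is set equal, which is the most favourable configuration for TLS.

```python
import math
import random
from statistics import mean


def slopes(w, y):
    """Return (OLS slope, TLS slope) for one regressor with an intercept."""
    n = len(w)
    mean_w, mean_y = sum(w) / n, sum(y) / n
    s_ww = sum((a - mean_w) ** 2 for a in w) / n
    s_yy = sum((b - mean_y) ** 2 for b in y) / n
    s_wy = sum((a - mean_w) * (b - mean_y) for a, b in zip(w, y)) / n
    ols = s_wy / s_ww
    d = s_yy - s_ww
    tls = (d + math.sqrt(d * d + 4.0 * s_wy * s_wy)) / (2.0 * s_wy)
    return ols, tls


def run(beta, reps=300, n=200, sd_u=0.5, sd_v=0.5,
        sd_q1=0.7, rho=0.5, sd_e=0.6, seed=42):
    """Mean OLS and TLS slope estimates under three data generating processes.

    All parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    results = {"Y1": [], "YQ1": [], "YQ2": []}
    for _ in range(reps):
        x = [rng.gauss(0.0, 1.0) for _ in range(n)]       # true signal
        w = [xi + rng.gauss(0.0, sd_u) for xi in x]        # noisy signal
        v = [rng.gauss(0.0, sd_v) for _ in range(n)]       # white noise in y
        q1 = [rng.gauss(0.0, sd_q1) for _ in range(n)]     # uncorrelated omitted variable
        q2 = [rho * xi + rng.gauss(0.0, sd_e) for xi in x] # omitted variable correlated with x
        ys = {
            "Y1": [beta * xi + vi for xi, vi in zip(x, v)],
            "YQ1": [beta * xi + qi + vi for xi, qi, vi in zip(x, q1, v)],
            "YQ2": [beta * xi + qi + vi for xi, qi, vi in zip(x, q2, v)],
        }
        for name, y in ys.items():
            results[name].append(slopes(w, y))
    return {
        name: (mean(o for o, _ in pairs), mean(t for _, t in pairs))
        for name, pairs in results.items()
    }


summary = run(beta=1.0)
for name, (ols_mean, tls_mean) in summary.items():
    print(f"{name}: mean OLS b1 = {ols_mean:.3f}, mean TLS b1 = {tls_mean:.3f}")
```

With these settings the printed means should show OLS sitting below the true slope of 1.0 for Y1 and YQ1, and TLS centered near 1.0 only for Y1. The exact numbers depend on the assumed noise levels.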

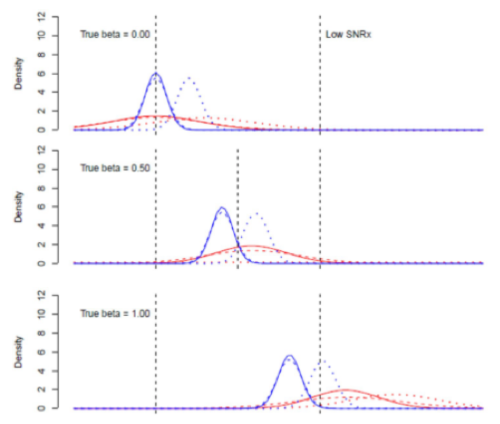

The working assumption in the signal detection field is that the OLS estimates of b1 are biased low but the TLS estimates are unbiased. In the first set of results the distributions of b1 were as follows.

OLS is in blue and TLS is in red. A solid line means the dependent variable was Y1, a dashed line means it was YQ1 and a dotted line means it was YQ2. Looking at the OLS results, attenuation bias is multiplicative so when the true value of b is zero OLS is unbiased. It remains unbiased if the model omits an independent explanatory variable (dashed line), but if the omitted variable is correlated with X1 (dotted line) the OLS estimate is biased upward. As the true value of b rises the OLS estimate becomes centered below the true value. In the bottom panel the attenuation bias and the omitted variable bias roughly cancel each other out (dotted line), but this is just a fluke, not a general rule.

The TLS results are different. First of all the distribution is much wider because TLS is less efficient. When the true value of b is zero and there are no omitted variables the distribution is centered on zero. As the true value of b goes up, all three versions of the TLS regression yield positively-biased estimates.

Positive bias matters not only because of the risk of false positives but because the coefficient magnitude itself feeds into “carbon budget” calculations. The higher the coefficient value the smaller the “allowable” carbon budget when estimating the point at which the world crosses a certain climate target. These are important calculations with very large global macroeconomic consequences so I find it disconcerting that the problem of positive bias in TLS-based fingerprinting regression results hasn’t been examined before.

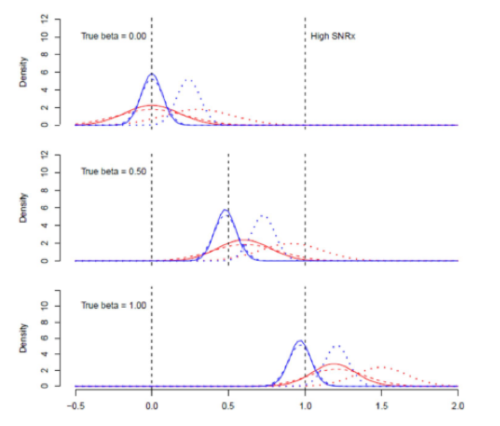

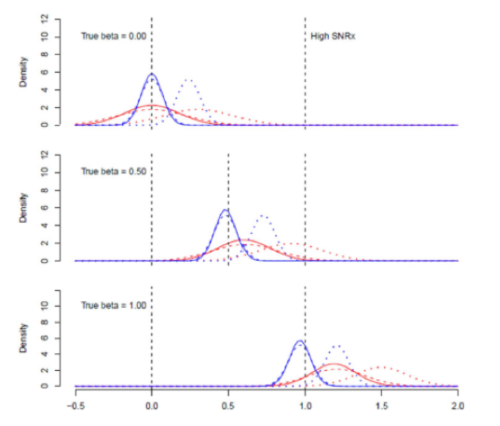

For the next batch of estimates I reduced the variance of the noise on the X’s, which I call the high SNRx case.

Now OLS moves towards the true value when there is no correlated omitted variable, which makes sense because as the noise on X goes to zero we approach the case where OLS is known to be unbiased. But TLS does not have the same tendency, indeed the positive bias gets slightly worse in the omitted variables case. This is not a good property of an estimator: as an important noise component shrinks you’d expect it to converge on the true value.

Next I looked at the case when the noise on the X’s and on y is the same magnitude, which is the optimal configuration for TLS because the assumed variance ratio in the computation algorithm corresponds to the actual unobservable variance ratio. If the regression model is correctly specified TLS is unbiased. But if a variable is omitted, even an uncorrelated one, and the true value of b is greater than zero, TLS has an upward bias. OLS has a downward bias except when Q2 is omitted, in which case its net bias is upward.

I examined numerous other configurations of the simulation model and discussed the question of which estimator should be preferred. The differences in results do not reflect methodological choices; they reflect different assumptions about the underlying data generating processes. If the researcher has no idea which one best describes the data set at hand, OLS is more often the preferred option than TLS, notwithstanding its known biases. Yes, OLS sometimes yields a coefficient biased towards zero, but it is a known bias. TLS will typically yield a coefficient with a positive bias, and the size of the bias is difficult to predict in part because of the large variance.

Interestingly, as the true value of b goes to zero, the estimator preference unambiguously goes toward OLS because the attenuation bias goes to zero and the TLS estimator becomes undefined. This means that if we are testing the null hypothesis that b=0, in other words greenhouse forcing does not explain observed climate changes, we shouldn’t rely on TLS since if the null is true we wouldn’t use TLS, we would use OLS. Or, put another way, if a significant signal detection result depends on using TLS rather than OLS, it is not a robust result.

Next Steps

I have another study under review in which I explore in some detail the consequences of allowing the X’s to be correlated with each other. I included a preliminary look at this case in the present paper. I found that when the signals are correlated OLS still exhibits attenuation bias even when the true value of b = 0 and TLS exhibits a positive bias, but in this case the TLS bias gets large enough to risk false positives: namely an apparently “significant” value of b even when the true value is zero.

In sum I conclude that in general TLS over-corrects for attenuation bias, thereby yielding signal coefficients that are too large. It also yields extremely unstable estimates with large variances. Researchers should not rely on TLS for signal detection inferences, unless they have done the required testing (as discussed in my paper) that establishes that TLS is appropriate for the context.

Also, climate scientists should consider using Instrumental Variables as a remedy for the EIV problem, since it can be shown to yield unbiased and consistent results.
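A minimal sketch of the Instrumental Variables idea, under assumptions that go beyond this post: the instrument z is taken to be a second, independently noisy measurement of the same underlying signal, which is a textbook instrument for measurement error. Whether a suitable instrument exists in a given fingerprinting application is not addressed here, and all values and names below are hypothetical.

```python
import random


def cov(a, b):
    """Sample covariance of two equal-length lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    return sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / n


BETA = 1.0  # true slope (illustrative)
N = 20000

rng = random.Random(7)
x_true = [rng.gauss(0.0, 1.0) for _ in range(N)]
w = [x + rng.gauss(0.0, 0.5) for x in x_true]    # noisy regressor
z = [x + rng.gauss(0.0, 0.5) for x in x_true]    # hypothetical instrument: an
                                                 # independent noisy measurement
y = [BETA * x + rng.gauss(0.0, 0.5) for x in x_true]

b_ols = cov(w, y) / cov(w, w)  # attenuated by the noise in w
b_iv = cov(z, y) / cov(z, w)   # simple IV estimator with one instrument

print(f"OLS slope: {b_ols:.3f}")
print(f"IV slope:  {b_iv:.3f}")
```

The IV estimator works here because the noise in z is unrelated to the noise in w and in y, so the covariances in its numerator and denominator pick up only the shared true signal.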

Note: when I did the page proofs the main results tables as rendered on screen looked OK, but the print version is messed up. The 1st, 7th and 13th rows should each be shifted down one row from where they are. Arrgh.

Sounds pretty comprehensive!

However, I have a much simpler model to measure attribution bias for extreme weather events.

The alarmists claim that every dangerous weather event is made worse by global warming.

I will take them at their word and reduce every event by one category level.

For instance, when a Cat 5 hurricane strikes the U.S., I predict at least one major news source will quote a scientist as saying that hurricane was made worse by global warming. I now toss that storm into the “Cat 4” bin.

Using my model, I predict we will not have a single true Cat 5 storm over the next 20 years that was not elevated by the odious influence of CO2.

That would easily be the longest stretch in the modern record.

I predict my model will likewise discover attribution bias in the record of major hurricanes and in the record of strong to violent (F3+) tornados in the U.S.

Given the weight of all the additional carbon dioxide in the atmosphere, I am sure we will also see a significant increase in major earthquakes!

Actually your <sarc> model needs to be downgraded by 2 categories, because one can find proof they have been fudging the numbers of hurricane wind speeds, and hence artificially bumping up the categories for at least the past 5-6 years. No different than the “adjustments” of the temperature record to support the cult of carbon hypothesis. (which when you plot the adjustments against CO2, it is a straight line with an upward slope)(image from realclimatescience.com)

The whole field is as fraudulent and unethical as if the likelihood of your mechanic diagnosing a costly repair to your car is proportional to whether his boat payment is due:)

Good point, Boss!

I saw this over at Judith’s. I summa’d in econometrics at a famous university, so can actually follow Ross’s technical arguments. His simulated examples are self explanatory here. The AGW statistical ‘fingerprinting’ stuff doesn’t work.

But there are simpler ways to get to the same ‘fingerprinting’ conclusion:

Both of which we should fire barrages of whenever warmunists pop their heads above the parapet. CliSciFi ignores the basics because it takes huge amounts of mental masturbation to overcome facts and sustain elaborate fictions.

“Thus TLS can, in principle, yield unbiased signal detection coefficients, but only if the climate model that generates the signals includes everything that explains the observed climate, and adds random noise to the signals with precisely the same variance as the randomness in the observed climate. Of course, if those claims were true we wouldn’t need to do signal detection regressions in the first place.”

As someone who got through college by the skin of my teeth, I find it both disdainful & hard to understand why highly intelligent people like The Mannchild™ or Allen & Tett continued forward with their KNOWN limited/flawed work. They were young enough in their careers to have switched to a much better & potentially winning idea.

It’s easy to understand, Old Man: Fame and fortune, plus no consequences for fraud.

Yes, this is indeed the heart of the matter. They are seeking to detect a signal from a series that doesn’t have one and resorting to lots of sub-par statistics to generate one.

‘Carroll and Ruppert also point out that TLS depends on the assumption that the regression model itself is correctly specified, in other words the regression model includes everything that explains variations in the dependent variable. OLS assumes this as well, but it is more robust to model errors.’

Full disclosure, my experience was limited to OLS, where it was always stressed to me that correct specification of prospective models was paramount, particularly with respect to using the correct functional form of the data. And that meant graphing the data nine ways to Sunday before banging out regressions. I don’t see any evidence that climate alarmists do so.

Here’s my comment that I made over at Judith Curry’s blog …

Call me crazy, but when you find a difference between observations and climate models, seems like that should be called a “Model Fingerprint” rather than a “Human Fingerprint” …

For example, the following claim is often made.

• Take a climate model that is specifically tuned to reproduce (hindcast) the past with a given set of inputs.

• Then remove some subset of the inputs.

• If the models do worse than they did with all of the inputs, clearly the specific missing inputs must be the critical inputs …

Now, clearly, this logic is a joke. Doesn’t matter which inputs you remove—the model is guaranteed to do worse without them. Pull out some of the inputs, and the model will NOT be able to reproduce the historical record.

And that says NOTHING about the chosen inputs.

Here’s an example, from the IPCC:

Well, duh … it doesn’t matter which set of forcings you leave out, you won’t get the right answer. That’s the nature of tuned models.

And that doesn’t even include the fact that “No climate model has reproduced the observed global mean warming trend or the continental mean warming trends in all individual continents (except Antarctica) over the” 18th century … but I digress.

Not only that, but as I showed in posts including Life Is Like A Black Box Of Chocolates, the global temperature output of climate models is nothing but a lagged, rescaled version of the “forcings” input to the models. Pull out some of the forcings, and your fit gets bad really fast … so what?

That says NOTHING about the forcings, and everything about the futility of using simplistic tuned models to attempt to understand chaotic fractal systems.

w.

The historical surface record is unreliable anyway and in no way can be thought to be an accurate record of the global average temperature over the past 150 years.

WE, saw your comment over at Judith’s and thought sufficient. Since you had not (yet) commented here, I did. Ross is correct but very technical. There are simpler ways (yours being but one of several) to debunk the ‘fingerprinting’ claim.

Thanks, Rud. Glad to have your comment. I’m looking at your idea about the earlier and later temperature rise.

w.

Not my idea. Comes from MIT’s Lindzen long ago circa 2011. I should have attributed the observation in this comment, as I did in most previous posts/comments.

The early versus late temperature rise is visually evident, even to an unschooled layperson. I was making PowerPoint presentations showing this since about 2004, when I first began to examine the claims of climate alarmists and quickly concluded the obvious. I don’t publish, and I long ago let my math skills erode (when I need advanced quantitative and modeling expertise, I lean on my friend and former colleague [BS mathematics, MIT; BS earth & planetary sciences, MIT; MS earth & planetary sciences, MIT; PhD, Geophysics, Texas A&M]). Of course, he is ordinarily too busy being paid to solve real-life problems to be bothered by the climate wars.

I greatly admire and appreciate those of you who have the knowledge, skill, wisdom and fortitude to stand in the gap against the evil forces seeking to tear apart our civilization. Until I retire some day soon, I have to remain cautious as I am in the administration and represent a major institution of higher education.

I wouldn’t describe my statistics as strong, like Ross.

But people who have done thoughtful real science (biochemical medical in my case) recognise that the statistical likelihood of a correlation being non-random doesn’t usually transfer to the magnitude of the effect. It may be large or small, and most likely non-linear.

Until climate models incorporate the physics of deep convection, they will remain useless.

Placing a hard clamp on ocean surface temperature of 30C would be the simplistic alternative. Once that is done, any notion of runaway global warming is dead in the water.

No climate model can get close to modelling the temperatures recorded in the tropical oceans in the satellite era.

Nobody should be remotely surprised by any of this. Climate ‘science’ abuses statistics appallingly. In fact this is now so bad that to put ‘science’ next to the word ‘climate’ has become a joke.

We have results from climate models involving numerous variables, many co-linear, for a non-deterministic system predicted years into the future quoted to absurd levels of accuracy without a single reference to CIs or significance.

The reason, of course, is because for any such model you might as well toss a coin or shake a couple of dice, because the results would be just as ‘accurate’. Whenever I challenge anyone in the climate community about this, including high-level ‘scientists’, they just clam up.

It’s totally and utterly farcical yet trillions of dollars are being wasted on results not worth the paper they’re written on.

Due diligence is a lost concept in consensus science.

There is no due diligence on all the mandated transfer payments from poor to less poor and wealthy that is occurring with the addition of wind and solar generators to existing electricity networks.

After two decades of trying to make W&S generators economic, it is time to take a good hard look at the lessons learnt and change direction.

Until the recent piping issues with the nuclear fleet in France, I had not realised most have been built this century. Germany has spent a large fortune on W&S this century while France was spending a similar fortune on nuclear. Germany has become entirely dependent on the rest of Europe for reliable power supply.

The big subsidy farmers are now pursuing green hydrogen. It will give another decade or two of windfall profits as uneconomic processes attract mandated profits.

Mark, the reason they clam up is because you are just too stupid and ignorant to understand the sublime science behind their oracle-like pronouncements and it would be a waste of their time to try to educate you. And that goes double for all the rest of us rubes.

It’s a good sign there’s trouble when there is a reliance on selecting obscure stats. It’s usually safest to keep it simple. If something is wrong it’s usually self-evident, and this is evidently the case by simple comparison of CMIP members. Apparently a major revision to the physics of greenhouse atmosphere is needed.

A quote attributed to Rutherford says: “If your experiment needs statistics, you ought to have done a better experiment.”

It seems in CLISCI, the more obscure the statistical method, the better they like it…especially if the method has underlying data requirements that cannot be tested.

While every day during summertime, every day at any time, a temperature graph such as the one here is unfolded.

In real time and updated every 5 minutes.

Where the air temperature all through the night is less than the earth/ground soil temp

Then almost instantly when the sun glimpses over the horizon, the air temp skyrockets

(in this case sunrise was at 04:30, soil temp was 15°C, having started May at 14°C)

In the 6 hours between sunrise and solar noon, air temp went from 7°C to 17.5°C

While Climate Science asserts that the earth/soil/dirt warms the air and the air then warms “the radiation is re-emitted in all directions” the earth.

That the sun *only* warms the earth/soil/dirt

Yet if I point to that graph and assert that the sun is warming the air I am told I’m a low-life science denier and general all round ignorant uneducated <f-expletive> scum – how can **anyone** not see that the air is transparent to solar radiation?

[Confession by Projection, much]

Then that The Computer knows about ‘forcings’, forcings which patently violate entropy – perfectly illuminated in the graphic attached here.

i.e.

What Is Going On Here

Koutsoyiannis and others have subjected historic climate data from long before the industrial period to a deep causality analysis and found incontrovertibly that it is temperature change that causes CO2 change, not the reverse:

New Research Applying Scientific Method Shows The Perception CO2 Causes Global Warming ‘Can Be Excluded’ (notrickszone.com)

Interesting study. Worthy of a post here on WUWT

These are very important words that can not be emphasized enough. Lots of time is spent here arguing about regressions on Temperature vs Time. As this statement points out, Time is not an independent variable that “explains variations in the dependent variable”. Determining temperature trends in time by using regressions will never answer the question of how CO2 reacts to affect changes in temperature. You can use all the statistical tricks and data corrections you want on temperature versus time, but there won’t be any connections to show what and why things are happening. The best you will be able to say is that CO2 is not directly correlated with temperature because temperature can remain stable while CO2 grows.

Attempting to use linear regressions on different dependent variables against time to show a correlation will never prove a relationship exists or what the mathematical relationship actually is. I can plot anything against time, postal rates, drug overdoses, population growth and scale to show a correlation in time. Yet, there is no relationship. Only experimental data and mathematical derivations will do that.

Doing some study on the history of linear regressions informs me that the original purpose was to investigate whether the relationship between an independent variable and a dependent variable was truly linear or was some other mathematical function like exponential or trigonometric. Think varying Temperature in the Ideal Gas Law, PV=nRT. If CO2’s impact is through radiation, there must be a fourth-power (T^4) term somewhere in the relationship. In essence, temperature is related to radiation via the 4th root.

Ross et al,

This may be a dead thread at this juncture, and no one may actually read or respond to what I have to say. Yet, this “climate fingerprinting” seems to have enough problems associated with it to render it completely inert. In reading A.W.F. Edwards’s book “Likelihood” a while back I was alerted to an older book by S.D. Silvey on statistical inference — difficult reading that is somewhat obscure in places, but full of good discussion. It seems to me there is yet one more flaw, not completely unrelated and not yet explicitly discussed.

This climate fingerprinting is a sort of inverse problem. We take observations (a vector x), operate on it with a linear operator built from the design matrix of our experiment (model plus measurement characteristics like location, A), and what comes out is a model estimate (a vector b of parameters) we can compare to a “finger print”. This fingerprinting comparison is simply another linear operation, a compound operation, and it could output something as simple as a single number representing the resemblance to our fingerprint.

Ross has pointed out that the observation vector is not a “sufficient statistic” to completely assign values to the model, a parameter vector (b). Thus, he says people need to resort to assigning the value of a parameter. Yes, this could solve that problem, but one could also add some other constraint that reduces the problem. One can legitimately wonder about these constraints or assumptions. People almost always presume normality — what Petr Beckmann used to call the Gaussian disease.

A related problem, it seems to me, is that people resort to the calculational aspects of the problem using too many significant figures. If data are measured realistically, they possess a finite resolution. I usually find that people are overly optimistic about data resolution. They will claim temperature resolution to plus or minus a millikelvin, for example, when their instruments have a precision at least 100 times poorer and it isn’t realistic to expect a lot of averaging to bridge the divide here because reality doesn’t provide measurements conforming to some hope like independence and identical distributions (the IID assumption). The resulting rank of A or some operator built from it is very low possibly, and there is little or no resolving power for some of the parameters even though someone can find values for these parameters (they exist in a null space). The problem is like having a large variance but also contains an aspect of what one might think of as censored data.

Finger printing is fine, but if the fellow comparing two finger prints has cataracts his judgment isn’t too credible, is it?

Excellent observations.

My faith in the scientific method, and in the integrity of scientists in general, has been critically wounded by the fraudulent behavior of climate “scientists.” And whatever was left was killed by our unscientific reaction to Covid.

Well, you’ve said it all when the climate signal detected is actually the output of a model.

It means that they can’t use extreme events attributed to climate change as a proof that climate change is indeed happening.

It’s the same as demonstrating that god exists based on the premise that god exists.

Circular reasoning.