H. Sterling Burnett February 10, 2022

YOU SHOULD SUBSCRIBE TO CLIMATE CHANGE WEEKLY.

IN THIS ISSUE:

- Climate Model Problems Persist, Changes Reduce Accuracy Further

- Podcast of the Week: Industrial Wind: How to Fight It in Your Hometown and Win! (Guest: John Droz)

- Disaster Losses Declining as a Percentage Of GDP

- Coral Collection Greater Threat to Coral Than Climate Change

- Climate Comedy

- Video of the Week: Authoritarianism in the Climate Change Debate

- BONUS Video of the Week: The Trajectory of Electrical Power Generation

- Recommended Sites

Climate Model Problems Persist, Changes Reduce Accuracy Further

In the past week, two more articles have been added to the growing body of literature discussing the fact that climate models have consistently failed to project the Earth’s temperatures and temperature trends accurately, since their inception.

As if that were not bad enough, The Wall Street Journal and Powerline report climate models’ projections of future temperatures have gotten worse over time. As new generations of supposedly improved climate models are produced and refined, the accuracy of their temperature simulations decreases. Each new generation of general circulation models diverges further from measured temperature changes and trends than the previous generation did.

This makes a joke of the Intergovernmental Panel on Climate Change’s (IPCC) claims that climate models have improved, which in normal discourse would mean they’ve become more accurate.

Every time the IPCC issues a new report, from the first (AR1) issued in 1990 through its sixth and most recent assessment report (AR6) released in August 2021, it claims the newest generation of models it uses is more accurate than the prior generation. The “Summary for Policy-Makers” for AR3 stated, “Confidence in the ability of models to project future climate has increased.” Yet the report did not narrow the range of possible future temperatures from the previous report; the range nearly doubled. That’s like doubling the size of the bullseye on a target, barely hitting the outside edge of the larger bullseye, and claiming it’s a sign the shooter’s accuracy has increased.

The IPCC’s AR6 report states,

“These models include new and better representation of physical, chemical and biological processes, as well as higher resolution, compared to climate models considered in previous IPCC assessment reports. This has improved the simulation of the recent mean state of most large-scale indicators of climate change and many other aspects across the climate system.”

How can their simulations be “improved” when, as I discussed in Climate Change Weekly 407, the modelers themselves were forced to admit, just weeks before AR6 was released, that the models were projecting even hotter temperatures and steeper temperature trends than the previous iteration? The simulated temperatures of that previous iteration were already too hot and failed to represent measured temperatures accurately.

Only a government bureaucracy or a con artist could claim with a straight face a technology has improved when it does not perform its required task as well as poor-performing previous versions. It’s like confidently asserting a class of electric vehicles is improving based on laboratory modelling even as the distance they can travel between recharges is declining and the amount of time it takes to recharge them is getting longer. Worse performance is not better, unless the goal is to fail.

The fact that computer models are flawed and produce untrustworthy climate projections has long been recognized. Reports by the National Center for Policy Analysis (which I edited when I worked there) in 2001 by environmental scientist Kenneth Green, Ph.D. and in 2002 by David Legates, Ph.D. (who was then the director of the Center for Climatic Research at the University of Delaware-Newark) detailed the numerous flawed projections computer models had made, and they explained why the failures occurred and were likely to continue to be the norm.

Legates wrote,

Models are limited in important ways, including:

- an incomplete understanding of the climate system,

- an imperfect ability to transform our knowledge into accurate mathematical equations,

- the limited power of computers,

- the models’ inability to reproduce important atmospheric phenomena, and

- inaccurate representations of the complex natural interconnections.

These weaknesses combine to make GCM-based predictions too uncertain to be used as the bases for public policy responses related to future climate changes.

Whereas computing power has improved markedly over time, modelers’ knowledge of the myriad factors and interconnections that drive climate change has not. In part, this is because the IPCC has always focused on understanding the human factors that affect climate, to the exclusion of other factors, even though it admits other factors do have some effect.

To their credit, ARs 1 through 5 acknowledged that natural factors (the Sun, clouds, ocean currents, etc.) play at least some role in climate change, however poorly understood at the time. The IPCC has provided lists of factors, natural and human, that affect temperatures. The list has changed over time, as have the estimates of the direction the various factors drive temperature and by what amount. What never changes, however, is the amount of confidence or degree of understanding the IPCC has about the temperature effects of non-anthropogenic factors, because study of these is largely ignored. Previous AR reports consistently admitted the IPCC had low or very low understanding of each of the natural factors that drive temperature changes. Nonetheless, the IPCC has been confident in dismissing them as significant sources of present climate change.

That is like trying to understand how a car functions, admitting you know nothing about radiators, alternators, timing belts, oil pumps, and myriad other systems, but being confident that having a full tank of gasoline and a key in the ignition are the only real factors that make a car function. Then, when the car doesn’t start, you state with confidence the only reason it could have failed was because the key was broken or the car was out of gas.

The IPCC got away with this nonsense by claiming that when they run their models without carbon dioxide the models do not produce the warming they expect, regardless of changes in assumptions about the other factors, but when they add carbon dioxide, the models produce significant warming. That’s circular reasoning at best and idiotic at worst. It should not surprise us that the assumptions modelers make about carbon dioxide and other greenhouse gas emissions are the only ones that produce the results they expect and are getting.

In AR6, the IPCC abandons even the appearance of scientific curiosity about nonhuman factors’ effects on climate. If you read only AR6’s summary for policymakers, you wouldn’t know clouds existed unless humans caused them by creating aerosols. Yet water vapor is by far the dominant greenhouse gas, accounting for more than 97 percent of all the greenhouse gases in the atmosphere, and clouds have huge long-term and short-term effects on surface temperatures. The IPCC acknowledged as much in previous AR reports, admitting climate models account poorly for the role changes in cloud cover play in climate change.

AR6 virtually ignores any effect the Sun has on climate change. The report barely acknowledges solar irradiance as having any role at all in climate change, in a graphic on page SPM-8 (Summary for Policy Makers). There is no mention of solar cycles, which we know from history correlate with climate changes. Nor does the report even mention that increases and decreases in cosmic rays resulting from solar fluctuations affect cloud cover and thus temperatures. Except for volcanoes, all other factors—such as large-scale decadal ocean circulation patterns—are lumped into a category called “Internal Variability” to which AR6 attributes almost no effect on climate change.

Climate modelers’ response to the fact that their models perform poorly and their performance has worsened over time is not to admit it is a matter of “Garbage in, Garbage out” that should lead them to question their fundamental assumptions about whether human greenhouse gas emissions are the sole or even dominant factor driving temperature changes. Instead, as The Wall Street Journal reports,

They reworked 2.1 million lines of supercomputer code used to explore the future of climate change, adding more-intricate equations for clouds and hundreds of other improvements [emphasis mine]. They tested the equations, debugged them and tested again.

The scientists would find that even the best tools at hand can’t model climates with the sureness the world needs as rising temperatures impact almost every region.

That’s right, climate modelers’ response to the consistent failure of their models to reflect real climate conditions is to spend more money and time adding complexity to their models. Complexity is not in and of itself a virtue.

The climate system is certainly complex. Even so, there is no reason to believe making models more complex will make them more accurate. We don’t adequately understand all the factors that drive climate changes or how the Earth responds to different perturbations on the overall climate. What one doesn’t understand, one can’t model well. Absent that basic understanding, adding more lines of code and making increasingly complex assumptions about climate feedback mechanisms that are even more poorly understood than the basic physics only makes models more error-prone. Every line of code and every complex calculation is one more area, formula, or operator where a flawed assumption or simple mistake of math or code punctuation can cascade throughout the model. Complexity introduces more opportunities for errors or “bugs” in the code, which can throw off the projections.

The fact that as modelers make their models increasingly complex their simulated climate outputs increasingly diverge from real-world climate data should serve as an indicator complexity is a weakness of the models. Modelers simply don’t know what they don’t know. That’s a fact they should admit, instead of building their ignorance into their models by pretending elegant mathematical formulae reflect reality simply because they are elegant and complex. The first step in getting out of a hole you have dug is to stop digging.

A second indicator that complex climate models are inherently flawed is the fact that simpler climate models perform better in matching real-world temperature data. Simple models reject assumptions about how different aspects of the climate system will add to or reduce relative warming as greenhouse gas emissions rise. Absent the additional forcing from modeled feedback mechanisms or loops, simple models project a modest warming in response to rising emissions. In this respect, the simple models reflect well what Earth has actually undergone.

There has been no runaway warming, and there is little or no reason to expect such a thing to occur from any reasonably expected future rise in atmospheric greenhouse gas concentrations. If models don’t get right their basic projections—temperatures—there is no reason to trust their ancillary or projected secondary effects which are supposed to be driven by rising temperatures.

SOURCES: Intergovernmental Panel on Climate Change; The Wall Street Journal; Powerlineblog

Podcast of the Week

Wind energy is touted as “clean”, “environmentally friendly”, and effective, when the opposite is true. Industrial wind turbines kill animals that are essential to agriculture, are inefficient as a power source, and can even have a direct effect on your health.

These facts can be used to convince local councils to take a second look at proposed wind projects and draft siting regulations and rules that can stop wind projects from getting a foothold.

Subscribe to the Environment & Climate News podcast on Apple Podcasts, iHeart, Spotify or wherever you get your podcasts. And be sure to leave a positive review!

Disaster Losses Declining as a Percentage Of GDP

Roger Pielke Jr., Ph.D., reports U.S. losses from natural disasters have declined as a percentage of overall economic output, measured as gross domestic product (GDP), as documented by the U.S. disaster loss database at the Center for Emergency Management and Homeland Security at Arizona State University. The Federal Emergency Management Agency (FEMA) uses the university’s Spatial Hazard Events and Losses Database for the United States (SHELDUS) data set to estimate expected annual losses from disasters in the United States.

Based on SHELDUS’s data, FEMA estimates that when all the loss numbers are finally in, disaster losses in the United States in 2021 will be around $141 billion.

That would confirm what other data sources have reported: since 1990, there has been a significant downward trend in U.S. disaster losses as a proportion of U.S. GDP (see the figure below), as monitored by the Office of Management and Budget, despite tremendous population growth and land-use development.

Pielke points out the United Nations’ preferred methodology for calculating disaster costs is as a fraction of GDP, per the Sendai Framework for Disaster Risk Reduction.

The declining disaster losses in the United States are part of and consistent with a “broader global trend of declining vulnerability to weather and climate extremes, which has been documented around the world and for a wide range of weather and climate phenomena,” Pielke writes.

Did you miss the headlines announcing disaster costs were falling amid climate change? I know I sure did. Instead, I am constantly barraged with headlines claiming disaster costs are rising and setting records because global warming is causing more extreme weather, with no recognition of context, population and demographic trends, or price inflation.

SOURCES: Climate Change Dispatch; SHELDUS

Coral Collection Greater Threat to Coral Than Climate Change

With the Australian government announcing plans to spend $1 billion to save the Great Barrier Reef from bleaching ostensibly caused by global warming, and maintain its status as a UNESCO World Heritage Site, Jennifer Marohasy, Ph.D., notes approximately 200 tons of coral from the reef, in addition to sea life interacting with it, are being dug up each year as part of the aquarium trade. As an aside, additional thousands of tons are excavated each year to satisfy the international demand for coral jewelry.

Marohasy notes the amount of coral removed represents only a small amount of the reef as a whole, yet it is likely to be more than is replanted with the government’s $1 billion in funds.

This is on top of $443 million Australia’s government granted to the small but politically connected Great Barrier Reef Foundation for research, protection, and recovery efforts in 2018. That grant set aside more than $86 million for administrative expenses.

How will the money be spent? Some of it will go to a consortium committed to replanting corals, creating jobs for scuba divers and charter boats, Marohasy writes. Their work will be filmed by underwater videographers, and marine scientists will participate, monitor the work, and collect data.

It is unclear whether any money will go to those gathering and providing coral for the foreign aquarium trade, but Marohasy writes,

… an October 2021 assessment of the Queensland Coral Fishery by the federal Department of Agriculture, Water and the Environment explains there is a quota of 200 tonne total allowable catch, split between ‘specialty coral’ (30 per cent) and ‘other coral’ (70 per cent).

Many of the corals are listed under the Convention on International Trade in Endangered Species of Wild Fauna and Flora (CITES). The assessment report does mention that there is some concern around the lack of harvest limits for CITES-listed coral species and the lack of adequate mechanisms to enforce harvest limits. It also explains that the take of corals has been increasing.

Interestingly, all this money is being spent to save the Great Barrier Reef from death induced by climate change even though the data indicate the Great Barrier Reef is doing well, with most of its coral successfully recovering from the limited bleaching it experienced in recent years.

Nor, based on historical records, have ocean temperatures where the reef resides increased over the past 150 years, as reported at Climate Realism:

Temperatures are monitored at eighty sites within the Great Barrier Reef by the Australian Institute of Marine Sciences, and individual records do not show a long-term warming trend. There are no studies showing either a deterioration in coral cover or water quality.

In short, it seems all this money is being spent to satisfy UNESCO and a small but vocal group of politically connected researchers who say global warming is threatening the Great Barrier Reef, without any confirming evidence.

SOURCES: The Spectator (AU); Climate Realism

Video of the Week: Climate Change Roundtable: ESG Scores

The Climate Change Roundtable team tackles the emergence of environmental, social, and governance (ESG) scores. ESG scores are part of the growing elitist movement known as the Great Reset. Corporations are incorporating ESG scores into their decision-making process, causing executives to ignore their fiduciary duty by not acting in the best interests of shareholders.

Andy Singer, Linnea Lueken, Anthony Watts, Bette Grande, and H. Sterling Burnett discuss how ESG scores threaten the freedoms America was founded on.

BONUS Video of the Week: What Drives Global Temperature Trends, Q&A

Anthony Watts and Ross McKitrick, Ph.D., take questions after their presentations on the latest global temperature trends, and explain what’s driving them. Recorded at The Heartland Institute’s 14th International Conference on Climate Change at Caesars Palace in Las Vegas.

Climate Comedy

via Cartoons by Josh

The “success” of climate models has to be judged in light of their objective; and fear is growing exponentially.

Well, I guess it’s not that more accurate models cannot be created. It’s just that in order to create such models, one has to significantly decrease the role CO2 plays in them. And that does not suit the agenda…

The fact that the new models are projecting even hotter temperatures and steeper temperature trends than the previous generation, the simulated temperatures of which were already too hot, means that the models are doing exactly what the IPCC wants in terms of publicity. The fact that they are all more inaccurate is a mere minor detail.

The UN IPCC CliSciFi AR6 game appears to be the creation of new, even more wildly hot models to be thrown out of the official model mix such that the old, wildly hot models are deemed to be acceptable “science.” All this is being done by dirty government propaganda shills. Another reason for intelligent people to mistrust all Leftist governmental pronouncements. And that is even before Leftist NGO and MSM wild distortions.

Apparently every region but not long-term for the surface Arctic and Antarctic which is odd because according to the models in a warming climate the poles ought to be warming at the fastest rate.

“Coral Collection Greater Threat to Coral Than Climate Change”

It occurred to me that there may be enough of a parallel to how fisheries have been handled compared to coral harvest at least for planning. There has been considerable discrimination against commercial fishing, some basis with over exploitation, currently solved with some success by some species culture. In the US Gulf of Mexico commercial fishing has been on its way out for decades in large part due to competition with the sports fishery which the current pandemic just proved is not as important as commercial production.

Mariculture has been successful with species that have a rapid enough turnover rate, mostly growth, reproduction mostly adequate if understood. Oyster harvest is now being pushed with considerable government aid into culture. There have been successful cases and may be here, but the only historical GOM oyster fisheries, Louisiana the best example, have been with a lease system as a form of culture. Otherwise the law of the commons operates.

Is the problem that corals have too slow a turnover rate for exploitation?

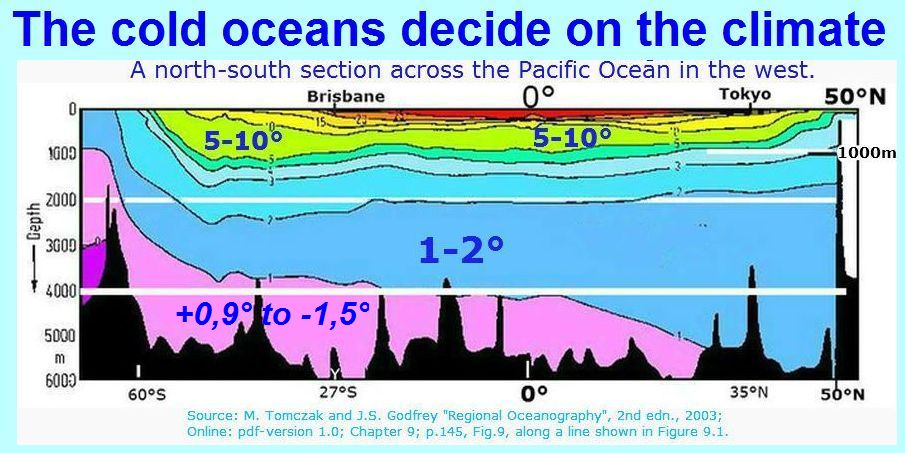

As long as models ignore that the global heat-capacity ratio is 1 to 1000 (air to ocean), whereas the ocean mean temperature just reaches +4°C, climate models will hardly ever work properly and completely fail to identify anthropogenic climate changes.

See the corresponding text at: https://oceansgovernclimate.com/the-world-is-not-flat/

“They tested the equations, debugged them and tested again.”

That makes no sense. You can debug code- in the sense that the code is correct code- but you can’t debug equations about variables out in the real world without experiments to determine how accurate the theories are behind the equations.

and they had “2.1 million lines of supercomputer code”

Is supercomputer code any different than non supercomputer code?

Is there any reason to think that a super complicated model is necessarily any more accurate than a less complicated model?

Probably the opposite

Should add I love Josh’s NetZero Cartoon

In their equations, they just flipped a few signs from + to -, and inverted a few variables to see if they might get a better answer.

(they might as well be throwing bones)

There is a beautiful example showing how stupid and flawed ECS estimates are. From AR4:

For those who do not know, these are all primary feedbacks which need to be added up and multiplied with a Lambda of 0.3 to convert it into temperature. Using central estimates with a 1.1K forcing by 2xCO2 you get..

1.1 / ( 1 – (1.8 – 0.84 + 0.26 + 0.69) x 0.3) = 2.58K

With the given uncertainty ranges you could get any result from 1.58K to 7K.

The really interesting part however is the “lapse rate feedback” of -0.84. If the atmosphere warms and WV increases, this WV is prone to transport more latent heat from the (ocean-) surface into the atmosphere, thereby making the lapse rate “wetter”. A wetter lapse rate is a smaller lapse rate, and this would shrink the GHE all over. So it is a negative feedback.

In fact WV feedback can be split up in these two components. There is the positive radiative feedback of about 1.8W/m2, and the negative LR feedback of said -0.84. In the literature you might alternatively find either the 1.8W/m2 figure, or 1W/m2 for WV feedback. The difference is about including LR feedback (or not).

Anyhow, conventional wisdom tells us LRF, relative to the emission level, should reduce surface warming by about 30% (or even less). Technically it is not even a real feedback, as it does not directly respond to temperature, but is a “side effect” of WV so to say. And that is the problem. Let us consider what the LRF does in the above ECS estimate by removing it..

1.1 / ( 1 – (1.8 + 0.26 + 0.69) x 0.3) = 6.29K

With these central estimates LRF would reduce ECS by almost 60%, far too much. And it gets worse once we test the high end of the uncertainty ranges…

1.1 / ( 1 – (1.8 + 0.18 + 0.26 + 0.08 + 0.69 + 0.38) x 0.3) = -64.7K (!!!)

The problem is, primary feedbacks must never exceed 1K (or 3.33W/m2 as 3.33 x 0.3 = 1). Beyond this climate would be perfectly unstable. The combined uncertainty ranges allow for that to happen and LRF neither physically nor mathematically can remedy that, as it is not really a feedback.
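
The arithmetic in the three expressions above can be reproduced with a short script. This is only a sketch of the formula as the commenter wrote it (forcing divided by one minus the summed feedbacks times Lambda). The function name `ecs` and the list names are hypothetical, and the numbers are the ones quoted in the comment, not independently sourced values.

```python
# Sketch of the commenter's formula: ECS = forcing / (1 - sum(feedbacks) * lam).
# All names are illustrative; the numbers are those quoted in the comment.

def ecs(feedbacks, forcing=1.1, lam=0.3):
    """Return the sensitivity (K) implied by the commenter's formula."""
    gain = sum(feedbacks) * lam  # dimensionless loop gain
    if gain == 1:
        raise ZeroDivisionError("gain of exactly 1: the formula is undefined")
    return forcing / (1 - gain)

# Feedback terms (W/m2 per K) as listed in the three expressions.
central = [1.8, -0.84, 0.26, 0.69]               # central estimates
no_lapse_rate = [1.8, 0.26, 0.69]                # lapse-rate term removed
high_end = [1.8, 0.18, 0.26, 0.08, 0.69, 0.38]   # high ends, no lapse rate

for label, terms in [("central", central),
                     ("no lapse rate", no_lapse_rate),
                     ("high end", high_end)]:
    print(f"{label}: {ecs(terms):.2f} K")
```

In this formula the sign flips once the summed feedbacks exceed 1 / 0.3, about 3.33 W/m2, which is the threshold the comment refers to.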

So it is all just random numbers. The (real) positive feedbacks are set far too high, and the negative false LR feedback keeps it within bounds. Very bad “science”..

https://greenhousedefect.com/the-holy-grail-of-ecs/vapor-feedback-ii-the-lapse-rate-and-the-feedback-catastrophe

Lapse rate feedback is -.84 W/(M^2-C) still ignores clouds, except as a fudge factor. And the formation of clouds is highly dependent on local lapse rates that result in about 2/3 of the planet being cloud covered….tests to determine cloud feedback show it to be around zero averaged over day and night, but there is a proclivity amongst such researchers to turn off their equipment when it is raining, or not allow for a layer of rainwater on their sensors, so actually averaging out their readings is likely not correct.

And cloud Albedo of up to 0.9 when Sol is shining down at 1360 watts in mid-afternoon, versus ground to outer space radiation “all night long” with clouds in the way, really works out to only a few minutes of afternoon cloudiness difference….which is controlled by convection and surface evaporation rate. So evaluating clouds to have “zero” effect is like saying “the brick wall has been tested and shows no feed back on the crumpled sports car laying near it”

Water vapor positive feedback is physically impossible as it violates the requirement for energy minimisation in equilibrium thermodynamics. (Gibbs free energy principles)

Humidity has decreased in the upper troposphere (probably due to convective drying), demonstrating negative feedback (as expected by equilibrium thermodynamics) and falsifying CAGW. The decrease in humidity is the reason the hot spot is missing. https://www.climate4you.com/GreenhouseGasses.htm

I remember how people scoffed when Donald Rumsfeld said those immortal lines:

“There are known knowns. These are things we know that we know. There are known unknowns. That is to say, there are things that we know we don’t know. But there are also unknown unknowns. There are things we don’t know we don’t know.”

But in the context of science and studying the climate of the Earth, nobody has put it better. At best a model only includes what is known and what is “”assumed””. It deserves double quotation marks, don’t you think?

“climate modelers’ response to the consistent failure of their models to reflect real climate conditions is to spend more money and time adding complexity to their models. “

There are two options.

Refuse to release the code and/or data. H/T M.E. Mann, P. Jones et al

Hope that it’s that complex that nobody – not even McKitrick or McIntyre – can fathom it.

SURFRAD model problems.

dw_solar measures the incoming flow during the day.

dw_ir measures the IR upwelling from the heated surface 24/7.

Summing these is nonsense.

Cloudy conditions were apparent in this data set. When the sun goes behind a cloud dw_solar falls off but the ground heating dw_ir continues.

According to the SURFRAD read me files the instruments that measure dw_solar & uw_sol see 200 to 3,000 nm. The instruments that measure uw_ir and dw_ir see 3,000 to 50,000 nm. Column 27 sums the nets from the two ranges. Since the ir instruments do not apply emissivity correctly and dw_ir equals zero this sum is somewhat meaningless.

Wavelength can be converted to Joules with h & c. Calculating the Joules across the spectrum shows that LWIR contributes very little comparative share of kJ. There was an animated response that ir provides the majority of terrestrial heating. Well, since dw_ir delivers as much as 90% W/m^2 as dw_sol (good trick, violates LoT 1) I can understand why he feels that way. But dw_ir = 0 so my point stands, ir does not contribute a major portion of terrestrial warming.
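
The conversion with h and c mentioned above is the Planck relation, E = hc/λ, which gives the energy of a single photon. A minimal sketch follows, using the instrument band edges quoted in the comment as example wavelengths. The helper name `photon_energy_joules` is hypothetical. The result is a per-photon figure only; the energy delivered in a band also depends on how many photons arrive.

```python
# Planck relation E = h * c / wavelength: energy of one photon.
H = 6.62607015e-34   # Planck constant, J*s (exact SI value)
C = 299_792_458.0    # speed of light in vacuum, m/s (exact SI value)

def photon_energy_joules(wavelength_nm):
    """Energy in joules of a single photon at the given wavelength in nm."""
    if wavelength_nm <= 0:
        raise ValueError("wavelength must be positive")
    return H * C / (wavelength_nm * 1e-9)  # convert nm to m

# Band edges quoted in the comment: 200 to 3,000 nm and 3,000 to 50,000 nm.
for nm in (200, 3_000, 50_000):
    print(f"{nm} nm: {photon_energy_joules(nm):.3e} J per photon")
```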

Uh, … “dw_ir measures the IR upwelling from the heated surface 24/7.” WTF? And all the other creative math.

I used to be indifferent to AGW alarmism; then doubtful; but their continued model failures, outright lies and coverups, and shouting down skeptics have made me a firm denier. People with the truth on their side don’t need to lie or shout down the opposition. They are their own worst enemy.

Climate comedy? Actually a grim cartoon that is happening right before our eyes. Here in Colorado my maximum monthly energy bill was $180 going back eight years. Last month’s bill was $207 the latest bill is $253. I’m thankful we can afford it but worry for my neighbors who might not.

All I need to know is in that headline up top: “Climate model problems persist” and this, too, “Changes Reduce Accuracy Further”.

Thank you. The real problem lies with those who think they can control the climate on a planet that is gazillions of times larger than they are, when they can’t even control their own digestive systems or emotions.

They are, indeed, the most pathetic creatures I’ve run across in a long, long time. I’m inclined now to suspect that mosquitoes and earthworms are smarter than they are. That’s my story and I’m sticking to it.

“These models include new and better representation of physical, chemical and biological processes,”

Yes, chemical processes are a central operator of climate. Yet there seem to be no chemists employed in the climate game. Correct me if I’m wrong. Any chemist knows about Le Châtelier’s Principle (LCP) which states that in any multi component system (chemical substances, pressure, temperature, enthalpy factors, biologic agents, solar insolation…) if you change one component, all the other components react in such a way as to resist the change. Any change that finally results is considerably muted from what was expected.

Chemical engineers use LCP to manipulate production of chemical products to enhance quality and output at lower cost. It is real, and one of the wonders of the world that seems to be a mystery to even most scientists, certainly in the climate sphere. We always hear “it’s the physics” which is a clear admission that they don’t know about LCP.

How can we use this principle to fix the models? Let’s assume cli-modelers got the physics right, and yet they themselves (e.g., Gavin Schmidt of GISS) have reluctantly admitted that models run way too hot. Measured against observations about a decade ago, they overestimated warming by 300%! Knowing LCP is indisputably operating in the climate system, an LCP coefficient of 0.33 to multiply “the physics” by would have corrected the forecast. The modellers may take heart in having discovered by experiment the all-important LCP coefficient for climate change.

“Internal Variability” is that which has not been identified or studied, and for which there is no explanation.

People seem to have forgotten the basis for the IPCC, the energy and climate branches, the United Nations Energy and Environmental program, and now, many others.

Around 1965 or so the United Nations bon vivants decided the UN needed more money for its programs. A Canadian oil baron, Maurice Strong felt wealthy enough to start a second career. He had cultivated people in the UN and gave ideas for how to go about making change. In the early 1970s he was Secretary General of the United Nations Conference on the Human Environment. That led to the United Nations Environment Program where he was a leader, maybe a bit sub rosa. The whole purpose of his ideas in the UN was aimed at raising funds for programs to increase the UN’s cash flow and political programs.

He served as a commissioner of the World Commission on Environment and Development in 1986. This led to the IPCC (Intergovernmental Panel on Climate Change) which was very specifically to address “human caused climate change”.

The UN specifically included that clause in the IPCC charter. The UN was trying to build up cash flow to fund even more offices and programs and increase its international effectiveness, particularly among less wealthy nations.

Given all that, in synopsis, the United Nations is appearing to do its best to become something like the International World government rather than simply an international conduit between members of the UN.

Cheers.