Guest Post by Willis Eschenbach [SEE UPDATE AT END]

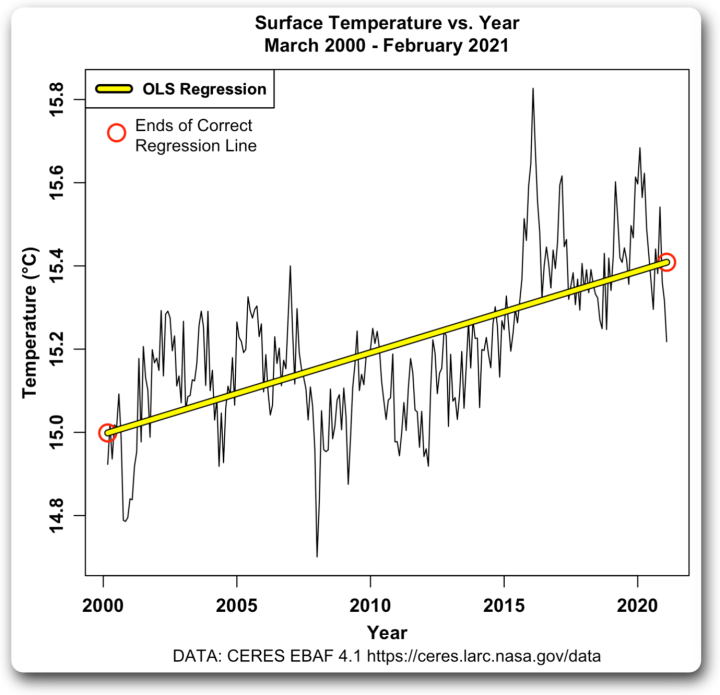

In my most recent post, called “Where Is The Top Of The Atmosphere”, I used what is called “Ordinary Least Squares” (OLS) linear regression. This is the standard kind of linear regression that gives you the trend of a variable. For example, here’s the OLS linear regression trend of the CERES surface temperature from March 2000 to February 2021.

Figure 1. OLS regression, temperature (vertical or “Y” axis) versus time (horizontal or “X” axis). Red circles mark the ends of the correct regression trend line.

However, there’s an important caveat about OLS linear regression that I was unaware of. Thanks to a statistics-savvy commenter on my last post, I learned that there is something that must always be considered when using it.

It only gives the correct answer when there is no error in the data shown on the X-axis.

Now, if you’re looking at some variable on the Y-axis versus time on the X-axis, this isn’t a problem. Although there is usually some uncertainty in the values of a variable such as the global average temperature shown in Figure 1, in general we know the time of the observations quite accurately.

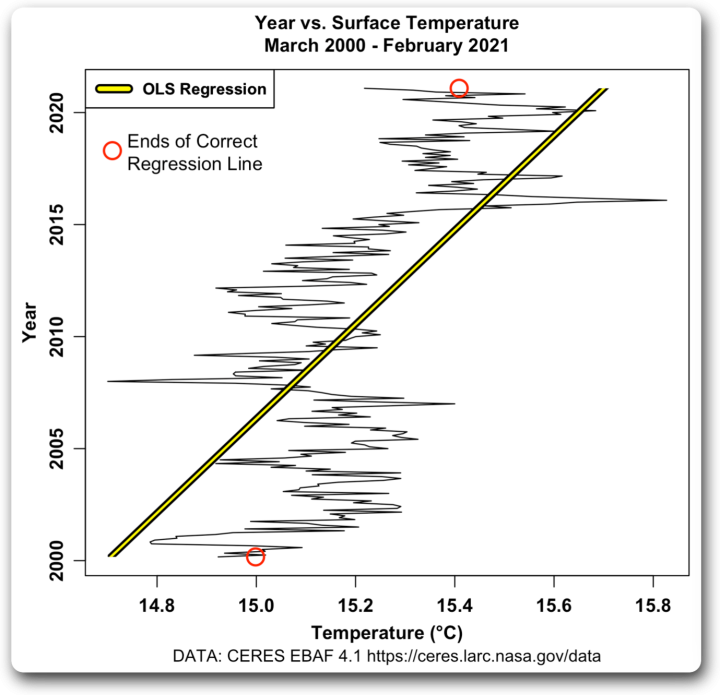

But suppose, using the exact same data, we put time on the Y-axis and the temperature on the X-axis, and use OLS regression to get the trend. Here’s that result.

Figure 2. OLS regression, time (vertical or “Y” axis) versus temperature (horizontal or “X” axis). As in Figure 1, red circles mark the ends of the correct regression trend line.

YIKES! That is way, way wrong. It greatly underestimates the true trend.
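Why does flipping the axes change the answer so much? For OLS, the slope of Y on X multiplied by the slope of X on Y always equals r², the squared correlation between the two variables. Unless every point sits exactly on the line, r² is less than 1, so the flipped OLS slope comes out smaller than the reciprocal of the original slope. The minimal sketch below checks this on synthetic data (a made-up 0.02 °C/year trend plus random scatter, not the CERES record); the function names and numbers are illustrative assumptions.

```python
import random


def ols_slope(x, y):
    """Ordinary least squares slope of y regressed on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx


def pearson_r(x, y):
    """Pearson correlation coefficient between x and y."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return sxy / (sxx * syy) ** 0.5


# Synthetic stand-in for a monthly record: 21 years, trend 0.02 °C/yr, noise 0.1 °C
random.seed(42)
years = [m / 12 for m in range(252)]
temps = [0.02 * t + random.gauss(0.0, 0.1) for t in years]

b_temp_on_time = ols_slope(years, temps)   # °C per year (Figure 1 layout)
b_time_on_temp = ols_slope(temps, years)   # years per °C (Figure 2 layout)
r = pearson_r(years, temps)

print(f"1 / (temp-on-time slope): {1 / b_temp_on_time:6.1f} yr per °C")
print(f"time-on-temp OLS slope:   {b_time_on_temp:6.1f} yr per °C")
print(f"product of slopes {b_temp_on_time * b_time_on_temp:.4f} equals r^2 {r * r:.4f}")
```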

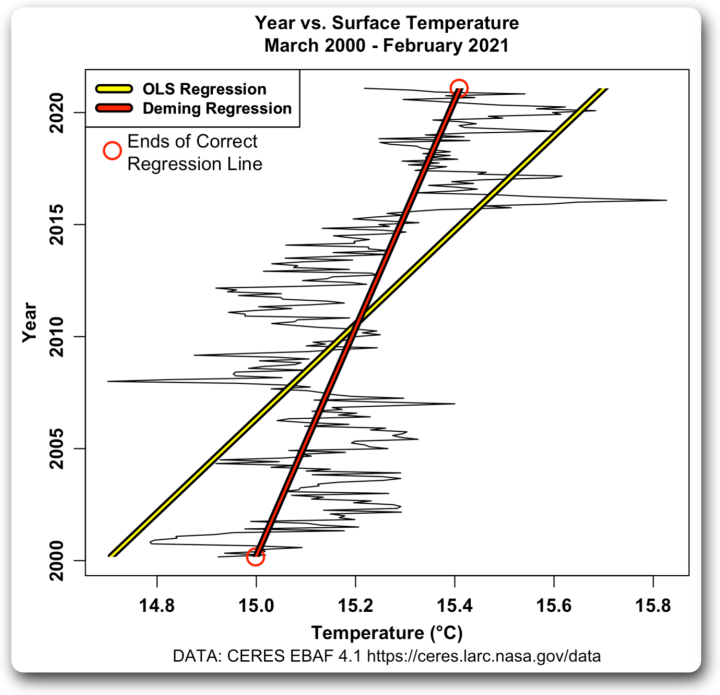

Fortunately, there is a solution. It’s called “Deming regression”, and it requires that you know (or can estimate) the errors in both the X- and Y-axis variables; strictly speaking, what it uses is the ratio of the two error variances. Here’s Figure 2, with the Deming regression trend line shown in red.

Figure 3. OLS and Deming regression, time (vertical or “Y” axis) versus temperature (horizontal or “X” axis). As in Figure 1, red circles mark the ends of the correct regression trend line.

As you can see, the Deming regression gives the correct answer.
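Deming regression has a closed-form solution. What it actually needs is δ, the ratio of the error variance in Y to the error variance in X; the slope is then a root of a quadratic in the sample variances and covariance. The pure-Python sketch below uses that textbook formula on synthetic data with a known slope of 2.0 and equal noise on both axes (the data, seed and δ = 1 are assumptions for illustration, not the values behind the figures in this post).

```python
import random


def ols_slope(x, y):
    """Ordinary least squares slope of y on x (assumes no error in x)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    return (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
            / sum((xi - mx) ** 2 for xi in x))


def deming(x, y, delta=1.0):
    """Deming regression of y on x, returning (slope, intercept).

    delta is the ratio of the error variances, sigma_y**2 / sigma_x**2.
    Large delta approaches OLS of y on x; delta = 1 is orthogonal regression.
    """
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    a = syy - delta * sxx
    slope = (a + (a * a + 4.0 * delta * sxy * sxy) ** 0.5) / (2.0 * sxy)
    return slope, my - slope * mx


# Synthetic data: true slope 2.0, equal noise (sd 1.0) on both axes
random.seed(1)
x_true = [random.uniform(0.0, 10.0) for _ in range(2000)]
x_obs = [xt + random.gauss(0.0, 1.0) for xt in x_true]
y_obs = [2.0 * xt + 1.0 + random.gauss(0.0, 1.0) for xt in x_true]

print(f"OLS slope:    {ols_slope(x_obs, y_obs):.3f}")       # pulled toward zero
print(f"Deming slope: {deming(x_obs, y_obs, 1.0)[0]:.3f}")  # close to 2.0
```

Because swapping the axes and using 1/δ returns exactly the reciprocal slope, Deming regression gives the same line whichever variable goes on the X-axis, which is the property OLS lacks in Figure 2.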

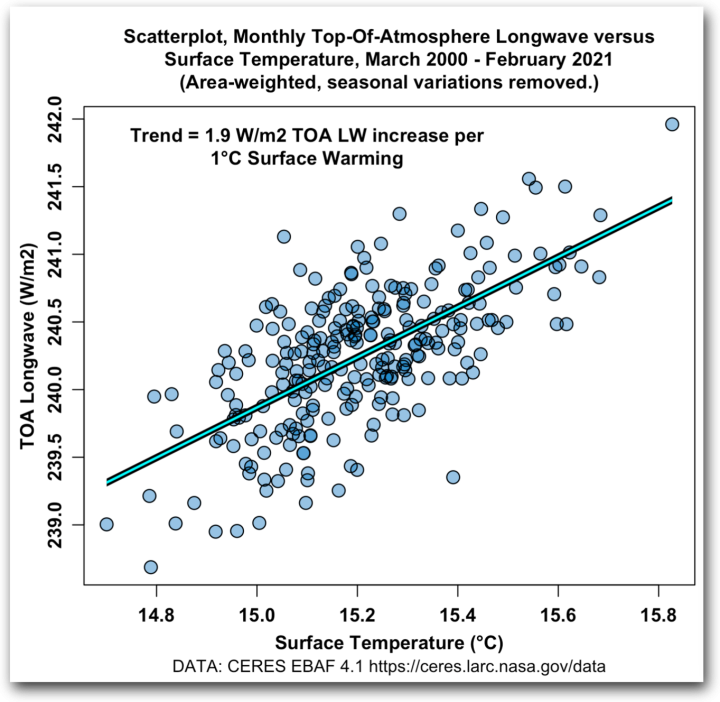

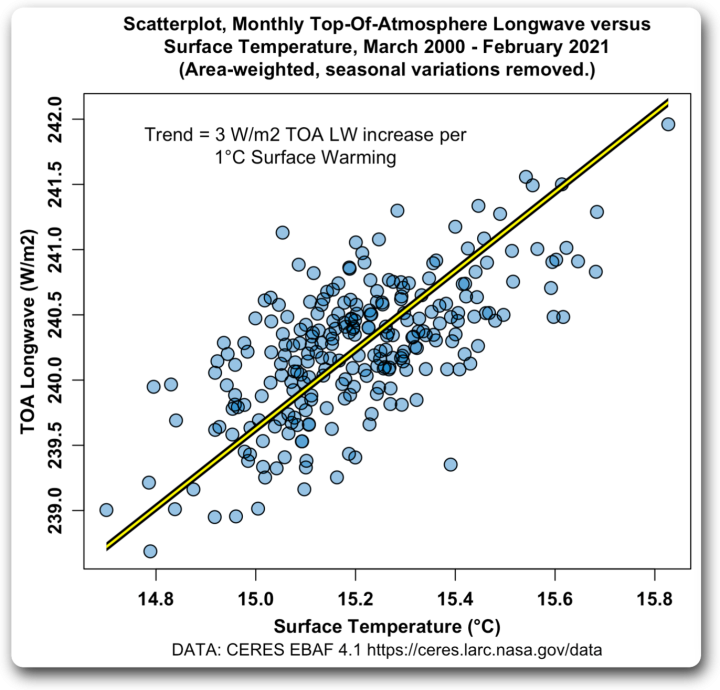

And this can be very important. For example, in my last post, I used OLS regression in a scatterplot comparing top-of-atmosphere (TOA) upwelling longwave (Y-axis) with surface temperature (X-axis). The problem is that both the TOA upwelling LW and the temperature data contain errors. Here’s that plot:

Figure 4. Scatterplot, monthly top-of-atmosphere upwelling longwave (TOA LW) versus surface temperature. The blue line is the incorrect OLS regression trend line.

But that’s not correct, because of the error in the X-axis. Once the commenter pointed out the problem, I replaced it with the correct Deming regression trend line.

Figure 5. Scatterplot, monthly top-of-atmosphere upwelling longwave (TOA LW) versus surface temperature. The yellow line is the correct Deming regression trend line.

And this is quite important. Using the incorrect trend shown by the blue line in Figure 4, I incorrectly calculated the equilibrium climate sensitivity as being 1°C for a doubling of CO2.

But using the correct trend shown by the yellow line in Figure 5, I calculate the equilibrium climate sensitivity as being 0.6°C for a doubling of CO2 … a significant difference.

I do love writing for the web. No matter what subject I pick to write about, I can guarantee that there are people reading my posts who know much more than I do about the subject in question … and as a result, I’m constantly learning new things. It’s the world’s best peer review.

[UPDATE] My friend Rud said in the comments below:

First, CERES is too short a data set to estimate ECS.

I replied that climate sensitivity depends on the idea that temperature must increase to offset the loss of upwelling TOA LW. What I’ve done is measure the relationship between temperature and TOA LW. I asked him to please present evidence that that relationship has changed over time … because if it has not, why would a longer dataset help us?

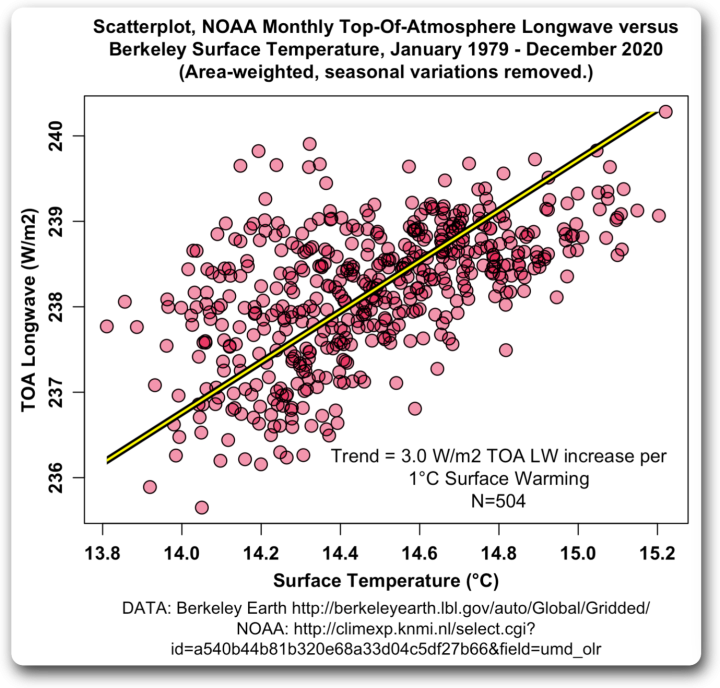

Of course, me being me, I then had to go take a look at a longer dataset. NOAA has records of upwelling TOA longwave since 1979, and Berkeley Earth has global gridded temperatures since 1850. So I looked at the period of overlap between the two, which is January 1979 to December 2020. Here’s that graph.

Figure 6. Scatterplot, NOAA monthly top-of-atmosphere upwelling longwave (TOA LW) versus Berkeley Earth surface temperature. The yellow line is the correct Deming regression trend line.

Would you look at that. Instead of using CERES data for the graph, I’ve used two completely different datasets: upwelling TOA longwave from NOAA and global gridded temperature data from Berkeley Earth. And despite that, I get the exact same answer to the nearest tenth of a W/m2 per °C: 3.0 W/m2 per °C.

My thanks to the commenter who put me on the right path, and my best regards to all,

w.

Surface temperature or eye level measuring height?

As the Cheshire Cat observed, if you don’t know where you are going, any path will take you there.

Correlation is still not cause.

Are you sure you can just flip the X & Y like that? Doesn’t it say something wrong about the relationship between year & temp?

It can be flipped, but you will still get the same results. Somehow the author is confusing the angle difference with the measurement difference. The Deming and OLS give identical results; look at the change in temperature over the 20-year period for each.

Temperature should be expressed only in K because K has thermodynamic substance, C does not.

C is just a different name to denote the same “substance”

I cannot tell you how many OLS regression calculations I’ve done, and I’m stunned to learn that I have never heard of Deming regression. I wanted to try it in Excel, but I see that it is not in the Excel built-in function library. I also do not find any VBA code that I could plug in. I think the explanation here helps me to understand it better: Deming regression – Wikipedia

Take a look here: https://www.real-statistics.com/free-download/real-statistics-resource-pack/

Free – donation optional. However, I have not used it, so I cannot attest to quality. (Other people I know do use the Real Statistics stuff and say it is good for their needs. Which may or may not include Deming regression.)

Deming regression is included, but I have not used it. But Xrealstat is spiffy for OLS trending of weighted data.

Real Statistics stuff is good. My opinion. I checked the code and used it.

It’s there, in the Xrealstat add-in (see below), but I don’t see how you can use it for data points that have changing x and y distributions (or just expected-value x’s and distributed y’s) over the data set, i.e., in sea level station data or BEST temp data, both of which have changing y distributions with time. I just found it today, and will monkey with it some more…

Tom (and Willis), OLS calculations invoke several assumptions such as the errors being homoskedastic and having zero conditional means. There are also specific considerations when regressing time series data, such as checking for serial correlation and unit roots. Many books on econometrics cover the assumptions and the consequences when they are violated. OLS is also highly sensitive to outliers. An alternative to OLS is Theil-Sen regression, which has been used for weather-related data.
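For readers who haven’t met it, the Theil-Sen estimator is simple to state: take the slope between every pair of points and use the median. A minimal sketch, assuming an intercept taken as the median of y − slope·x (other conventions exist). Its robustness is to outliers; it is not offered here as a fix for errors in the X variable.

```python
from itertools import combinations
from statistics import median


def theil_sen(x, y):
    """Theil-Sen line: median of all pairwise slopes, returns (slope, intercept)."""
    slopes = [(yj - yi) / (xj - xi)
              for (xi, yi), (xj, yj) in combinations(zip(x, y), 2)
              if xj != xi]
    slope = median(slopes)
    # One common intercept convention: the median of y - slope * x
    intercept = median([yi - slope * xi for xi, yi in zip(x, y)])
    return slope, intercept


# A straight line y = 2x + 1 with one wild outlier at the end
x = list(range(10))
y = [2 * xi + 1 for xi in x]
y[-1] = 100
print(theil_sen(x, y))  # → (2.0, 1.0)
```

Looping over all pairs is O(n²), which is fine for a few hundred monthly points but slow for very large series.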

Deming Regression in Excel: https://peltiertech.com/deming-regression-utility/ . It’s a free add-in.

Folks, what was there to down click in this helpful post by frankclimate? Not rhetorical, what?

0.6 C per CO2 doubling! Heads are exploding at IPCC right this moment….

0.6 near tropopause according to this analysis, likely approaching zero at TOA. Increasing tropospheric latent heat flux results in drying the upper atmosphere above the cloud deck. This neutralizes total column IR radiative effects.

Nope. That’s using TOA figures, not troposphere figures. See my previous post linked above.

w.

ok I see that now thanks.

What is the point of top of troposphere values, then?

Surely the energy the earth is accumulating can only be measured at the true top of atmosphere?

Heading in the right direction.

Yes, this is really a number that requires further analysis since it doesn’t take into account the effect of other variables. For example, the solar TSI declined slightly during this period which could then mean the CO2 sensitivity might be higher. In the same light, cloud effects reduced reflected solar energy. Exactly the opposite effect on sensitivity. The sum of these two effects was a large increase in solar energy reaching the surface.

IOW, the CO2 sensitivity should be quite a bit lower.

In fact, a deeper analysis as provided by Dubal/Vahrenholt 2021 showed that the greenhouse effect (changes in upwelling LWIR) only increased during this period under clear skies. The data does not allow differentiation between GHGs, but this difference makes it pretty clear the changes were almost all due to water vapor.

The one other issue is where you start and where you stop. The climate is a never ending story (at least until the Sun runs out of H2) and the time segments being used to validate arguments are so short that no matter what trends are calculated, all you have to do is wait a while and they will change.

Another issue is the assumption of linearity, especially in a demonstrably nonlinear relationship.

Most of the Sun is plasma, so H, not H2

0.6 is fast approaching zero

Or noise

Or meaninglessness

Exactly (or perhaps a valid approximation taking into account errors) Pat.

I wonder what this process would do to Lord Monckton’s present and previous pause results? Are not the pauses calculated using similar regressions?

Since the pauses have time on the X-axis, as mentioned above, it’s not a problem.

w.

Willis, that’s all well and good, as was your first essay on the subject. But for those of us who build satellites, I’m not sure how useful it is. I suggest a practical engineering definition. All earth-orbiting spacecraft require thrusters for stationkeeping. But some require thrusters to counter atmospheric drag, and some do not. Satellites on low earth orbit do. I concede it’s still fuzzy. Designers must consider the bird’s drag coefficient on orbit, mass, operational life, and other factors before deciding whether to counter drag. So there is no single or simple answer. But I would suggest the following engineering definition of the top of the atmosphere: “The top of the atmosphere is the point at which the spacecraft does not require thrusters to counter atmospheric drag for the required mission life.”

Thanks, Julius. For the purposes of the TOA radiation balance, which is what is in question in my discussion of the “CO2 ROOLZ TEMPERATURE” theory, 70 km is quite adequate.

I say that because, per MODTRAN, at that point the remaining downwelling IR is 0.05 W/m2; in other words, it is below instrument precision and lost in the noise.

w.

Comment for MODTRAN use

Lots of good info on modeling the atmosphere (static)

Older PDF, not behind a paywall

You should be interested

http://web.gps.caltech.edu/~vijay/pdf/modrept.pdf

It’s been half a century since my college days and my rusty old brain is creaking like an ancient iron gate. Must be time for a nap.

Please give the helpful commenter’s handle.

It helps us lurkers know who has contributed, and thus whose comments are worth more consideration.

Click on the link that says “commenter”, it will take you to his comment.

w.

Those scatter plots look rather like the relationship is centered about a single point, with variable errors, with a few outlying extreme values. Could it be that there is no relationship, and the extreme points which create the apparent relationship are just major errors and should be discounted?

Nope. The standard error on the 3.0 trend value is only 0.1, so the relationship is solid.

w.

His handle is Greg, his comment is here

Willis, why is it so hard for you to acknowledge your sources by name?

It’s not hard for me to refer to commenters by name, and I’ve done so from time to time. If he’d used his real name, I would have done so.

But he didn’t. He used an alias. What good does it do to identify him as “Greg” when there are lots of other Gregs out there?

It’s one of the downsides of posting anonymously—you can’t claim ownership of your own words.

Finally, if anyone was interested in the commenter, all they have to do is click on the link and see his alias there … so I’m hardly hiding anything. And if they are not interested enough to click through to the comment, why would they care about his alias?

Could you possibly find something less significant to complain about?

w.

If only paid climate scientists and their followers fessed up like this. Just think what it would be like if they retracted papers as soon as they were demonstrated to be false!!!

Willis is very good.

Just a probable typo…1st sentence under fig. 2:

“YIKES! That is way, way wrong. It greatly underestimates the true trend.”

Surely it overestimates, not underestimates, the true trend.

Fig. 1: 0.4 °C difference

Fig. 2: 0.9 °C difference

Or am I missing something?

No, it underestimates the trend. Remember that we are not measuring the trend of the temperature with respect to time. We’re measuring the trend of time with respect to temperature.

In fact, the presence of error in the X-values almost always underestimates the true trend. See Figures 4 and 5 as an example.

w.

OK, so I WAS missing something. Thanks for the clarification.

Hi Willis,

What you call Deming regression is a special case of the more general case of having not only errors in the X and Y values, but these errors are correlated to each other. The more general case was addressed by the late great Derek York (my PhD advisor) in:

York, Derek, 1969, Least-squares fitting of a straight line with correlated errors: Earth Planet Sci. Lett., v. 5, p. 320-324.

This paper was later improved by Mahon, who corrected a minor error estimate value:

Keith I. Mahon (1996) The New “York” Regression: Application of an Improved Statistical Method to Geochemistry, International Geology Review, 38:4, 293-303, DOI: 10.1080/00206819709465336

This is an important issue in isotope geochemistry because when one regresses one isotope ratio to another, for example when fitting an “isochron”, the X and Y errors are frequently correlated. This is also an issue when either the X or Y value is a derived quantity where some part of the value is involved in the calculation for both the X and Y values. If the correlation coefficient for the X and Y values is zero, this is what you refer to as Deming regression.
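For anyone who wants to try the correlated-error case, here is a sketch of a York-type iterative fit: per-point weights depend on the trial slope and the error correlation rᵢ, and the slope is iterated until it stops changing. It follows the commonly used iterative form of York’s method but is written for illustration, not transcribed from York (1969) or Mahon (1996), so check it against a validated implementation before relying on it. With rᵢ = 0 and constant errors it should reduce to Deming regression with δ = σy²/σx², which makes a convenient sanity check; the data below are hypothetical.

```python
import math


def _york_terms(b, x, y, wx, wy, r, alpha):
    """Weights, weighted means, deviations and beta_i for a trial slope b."""
    W = [wxi * wyi / (wxi + b * b * wyi - 2.0 * b * ri * ai)
         for wxi, wyi, ri, ai in zip(wx, wy, r, alpha)]
    sw = sum(W)
    xbar = sum(Wi * xi for Wi, xi in zip(W, x)) / sw
    ybar = sum(Wi * yi for Wi, yi in zip(W, y)) / sw
    U = [xi - xbar for xi in x]
    V = [yi - ybar for yi in y]
    beta = [Wi * (Ui / wyi + b * Vi / wxi - (b * Ui + Vi) * ri / ai)
            for Wi, Ui, Vi, wxi, wyi, ri, ai in zip(W, U, V, wx, wy, r, alpha)]
    return W, sw, xbar, ybar, U, V, beta


def york_fit(x, y, sx, sy, r=None, tol=1e-12, max_iter=200):
    """Straight line with per-point errors sx, sy and error correlations r.

    Returns (slope, intercept, slope_standard_error).
    """
    n = len(x)
    r = [0.0] * n if r is None else r
    wx = [1.0 / s ** 2 for s in sx]
    wy = [1.0 / s ** 2 for s in sy]
    alpha = [math.sqrt(a * c) for a, c in zip(wx, wy)]

    # Start from the OLS slope, then iterate to a self-consistent solution
    mx, my = sum(x) / n, sum(y) / n
    b = (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
         / sum((xi - mx) ** 2 for xi in x))
    for _ in range(max_iter):
        W, _, _, _, U, V, beta = _york_terms(b, x, y, wx, wy, r, alpha)
        b_new = (sum(Wi * bi * Vi for Wi, bi, Vi in zip(W, beta, V))
                 / sum(Wi * bi * Ui for Wi, bi, Ui in zip(W, beta, U)))
        done = abs(b_new - b) <= tol * max(1.0, abs(b_new))
        b = b_new
        if done:
            break

    # Recompute the terms at the final slope
    W, sw, xbar, ybar, _, _, beta = _york_terms(b, x, y, wx, wy, r, alpha)
    intercept = ybar - b * xbar
    # Slope error from the "adjusted" x positions xbar + beta_i
    xadj = [xbar + bi for bi in beta]
    xadj_bar = sum(Wi * xa for Wi, xa in zip(W, xadj)) / sw
    sigma_b = math.sqrt(1.0 / sum(Wi * (xa - xadj_bar) ** 2 for Wi, xa in zip(W, xadj)))
    return b, intercept, sigma_b


# Hypothetical example: constant, uncorrelated errors (the Deming special case)
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [0.1, 2.2, 3.9, 6.1, 8.0, 9.8]
print(york_fit(xs, ys, sx=[0.2] * 6, sy=[0.4] * 6))
```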

I’ve gone down this rabbit hole before. If time is the x-axis variable, then OLS regression gives the right answer, doesn’t it? Or am I missing something?

You are correct, because the errors in the time measurement are basically zero. Where I got into trouble was a scatterplot of two variables, both with errors. In that case, OLS underestimates the true trend, as shown in Figures 4 and 5.

w.

I doubt that’s true for proxy measurements? It’s rare for the age of a proxy to be precisely known.

True. I was referring to observational measurements rather than proxy measurements.

Thanks,

w.

Subject adjacent, and an opportunity to ask the expert. I’m sure it’s Stats 101 simple, but I’ve never seen a derivation of how uncorrelated, distributed y values (temp, sea level, etc.) vs. time increase the standard error of the resulting trend. I’ve looked, repeatedly. I have an intuitive idea of how it works, and have gotten close agreement with distributed BEST and sea level station data, using my idea and comparing it to brute-force approximations (as in hundreds of thousands of Excel RAND functions). But I might still be off in my thinking and/or derivation, and would appreciate knowing how this evaluation is done properly.

Thanks in advance….

The standard error of the slope is calculated as below:
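The formula this reply refers to is not shown in this copy. Assuming it was the standard textbook expression, the OLS slope’s standard error is SE(b) = sqrt( [Σ eᵢ² / (n − 2)] / Σ (xᵢ − x̄)² ), where eᵢ are the residuals from the fitted line. A minimal sketch; it assumes independent, constant-variance residuals, so autocorrelated monthly series would typically need an adjustment such as a reduced effective sample size.

```python
import math


def ols_with_se(x, y):
    """OLS fit of y on x, returning (slope, intercept, standard error of slope)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    s2 = sum(e * e for e in residuals) / (n - 2)  # residual variance, n - 2 dof
    return slope, intercept, math.sqrt(s2 / sxx)


print(ols_with_se([0, 1, 2], [0, 1, 1]))  # slope 0.5, SE = sqrt(1/12)
```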

Regards,

w.

Thank you Willis. This I knew. What is interesting me is how that standard error increases when some or all of the y values are uncorrelated distributions. As in BEST temp and sea level station data.
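One standard way to see the effect: the OLS slope is a weighted sum of the y values, b = Σ cᵢ yᵢ with cᵢ = (xᵢ − x̄) / Σ (xⱼ − x̄)². If each yᵢ carries its own independent uncertainty σᵢ, linear error propagation gives a slope uncertainty of sqrt(Σ cᵢ² σᵢ²) from those measurement errors alone, and a brute-force Monte Carlo (the Excel RAND approach described above) should converge to the same number. The sketch below uses made-up data and σ values. How to combine this with the residual-based standard error is a modelling choice; adding the two in quadrature can double count, because measurement noise already contributes to the residual scatter.

```python
import math
import random


def slope_sigma_from_y_errors(x, sigmas):
    """Propagate independent per-point y uncertainties into the OLS slope."""
    n = len(x)
    mx = sum(x) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    c = [(xi - mx) / sxx for xi in x]   # OLS slope = sum(c_i * y_i)
    return math.sqrt(sum((ci * s) ** 2 for ci, s in zip(c, sigmas)))


def slope_sigma_monte_carlo(x, y, sigmas, trials=10000, seed=0):
    """Brute-force check: jitter each y by its own sigma, refit, take the spread."""
    rng = random.Random(seed)
    n = len(x)
    mx = sum(x) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    slopes = []
    for _ in range(trials):
        yp = [yi + rng.gauss(0.0, s) for yi, s in zip(y, sigmas)]
        myp = sum(yp) / n
        slopes.append(sum((xi - mx) * (yi - myp) for xi, yi in zip(x, yp)) / sxx)
    mean = sum(slopes) / trials
    return math.sqrt(sum((b - mean) ** 2 for b in slopes) / (trials - 1))


# Hypothetical record: 30 points, uncertainty shrinking from 0.30 to about 0.07
x = list(range(30))
y = [0.02 * xi for xi in x]
sigmas = [0.3 - 0.008 * xi for xi in x]
print(slope_sigma_from_y_errors(x, sigmas))
print(slope_sigma_monte_carlo(x, y, sigmas))
```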

I will try and peruse a reference from another reply.

Thanks again.

Top of atmosphere is 240 watts and radiates to the earth according to Willis Eschenbach. Not earth emits 340 watts and depletes with height by 240 watts. Because molecules are in constant motion and therefore emitting energy. Molecules further from the earth are at slower velocities and therefore have less energy (lapse rate). As do molecules in higher latitudes have less energy as ground is colder. Every 103.41 hPa decrease, atmosphere is 6.5°C cooler for 10 km. Willis sticks to radiative cooling and top-down warming. Found in climate models. Molecules do not stop moving, reason top of atmosphere is at slowest and much less matter at 100 watts. Solar heating at most sun-absorbed pole at 10 hPa is why stratosphere is so warm. Battling with strong radiative cooling from carbon dioxide. Heights where high energy and strong cooling maintains the average at 100 watts.

This is exactly why I ask people to QUOTE THE EXACT WORDS YOU ARE DISCUSSING. You make a claim that something is “according to” me, but I have no clue what you are talking about.

w.

You’re unaware the earth emits an average of 340 watts (yet to discover 390 watts is incorrect).

You’re unaware the 70 hPa temperature range is -50° to -80°C (average 100 watts).

Unaware temperature is directly proportional to radiation. 100 watts is around -66°C.

You’re unaware 99.9% of the atmosphere expands with ascending height and compresses with descending height. And this increases (descending) and decreases (ascending) temperature. Change in temperature means change in energy (velocity).

Unaware solar irradiance heats the surface above what earth emits. Heats (shorter waves than earth’s longwave radiation) trace gases at the top of atmosphere (100 watts).

Earth’s emitted energy is transparent to space. Otherwise we would burn and die.

There are two poles which energy(added together) is subtracted to energy at the equator. (460/2 – (460-230) 230(NP) – (460-230) 230(SP)= 0).

Your article is academic and only fits with imagined climate modeling and not real world observations.

This is gibberish

If you don’t do the work, your mind will reject what you read. I have over a year’s work and am convinced by what the observations tell me.

That may be true but you need to use actual English sentences with approximately correct grammar if you want to communicate your thoughts to this audience.

Willis, if you as an experienced veteran did not know, I am willing to bet that most others did not know either, say 99%?

What about all the graphs out there constructed by THOSE folks?

Too much math, too many statistics, not enough thought.

Anyone interested in the overall effect of increasing CO2 in the atmosphere should refer themselves to someone who understands the underlying physics, maybe Will Happer?

I made a comment which disappeared. Disagree with the WE result for three reasons. In sum:

First, CERES is too short a data set to estimate ECS.

Second, there are three ‘observational’ ways to derive ECS: energy budget, Lindzen Bode feedback observationally corrected from IPCC, and Monckton’s equation. All three converge about 1.7C (as does Callendar’s 1938 curve).

Third, the zero-feedback sensitivity can be calculated reliably as 1.1-1.2C (Monckton’s equation produces 1.16C). So any value below that no-feedback value, once feedbacks are included, means a significant negative feedback, which is very unlikely given that absolute humidity averages about 2%, so WVF MUST be significantly positive.

But if you consider the huge increase in CO2 over the satellite era and compare that to the flat temp response once the El Niño step-ups in 1998 and 2016 have been removed, it seems temps are ignoring CO2.

Trends are too short to draw such a conclusion. There are clear superimposed natural cycles on order of 60 and a few hundred years.

Rud, always good to hear from you. You say:

The climate sensitivity depends on the idea that temperature must increase to offset the loss of upwelling TOA LW. What I’ve done is measure the relationship between temperature and TOA LW. Please present evidence that that relationship has changed over time … because if it has not, why would a longer dataset help us?

Three?

Three???

Here are no less than fifty-seven observational ways to derive ECS including Monckton and Lindzen, and no, they do not “converge” in any sense.

SOURCE

I have dozens and dozens of posts up here on WUWT detailing a whole host of significant thermoregulatory and negative feedback processes. To me, the whole idea that there are significant positive feedbacks is a non-starter because of the stability of the system … over the entire 20th century, for example, the surface temperature varied by less than half a percent.

I owe you my thanks, however. You’ve enticed me to take a look at a longer dataset. I got the TOA upwelling LW data since 1979 from NOAA, and the Berkeley Earth surface temperature. Here’s that relationship:

Despite using two totally different datasets and twice as long a record, the answer is the same to within a tenth of a watt per square meter.

My very best to you,

w.

I don’t understand where this “community certainty” has come from either. Habit, I guess.

Much like the community certainty that AGW must be worse for us, without so much as the briefest consideration that it might actually be better.

Kip, if you don’t like math or statistics, fine, but don’t come here to whine about it.

As to “not enough thought”, that’s just a slimy ad-hominem without even a scrap of facts to tie it to.

w.

w. ==> What Rud said… that’s the “thought” side. All scientific investigations must begin with lots and lots of thought, and then one may appropriately apply maths to the right sets of carefully selected data.

Starting out with maths is almost always backwards.

I know we disagree about this; we have for years.

I think some of the most insightful results come from simple comparisons, well expressed. This goes to the core idea in AGW that the surface must warm to restore the balance.

I think that a good example is Einstein’s thought experiments, before he summarized them with succinct mathematical expressions.

Willis, does this explain why the Sea of Marmara seems to be warming at a rate of circa 0.5K per decade?

JF

(I know, I know, but I wish someone would come up with a reason. Could it be anything to do with sea snot?)

Willis, Your essay is very neat, thank you.

Back in the 1960s there were no computers, but we did use statistics, often by pencil and paper AND eraser. For example, I did Analysis of Variance by Fisher’s original equations dozens of times, looking at levels and types of fertilizers affecting plant yields in factorial experiments. I mention this because manual work causes focus on individual numbers (as opposed to the giant Hoovers that suck them into impartial computers). Because one often saw a number that might be suspicious, errors and uncertainties were more front of mind than seems to be the case in modern-day work.

You might have seen me going on about errors in posts at WUWT over the decade. The short conclusion is that there remains a lot of ignorance among climate change researchers about error and uncertainty. (Just ask Pat Frank). I have seen a few excellent papers with proper error treatment, but they are rare as hens’ teeth or proper apostrophe use.

So, when you discuss the need for knowledge about errors in both X and Y parameters for a valid regression, one has to assume that the magnitude of the errors is both known and correct.

Particularly with measurements of incoming and outgoing flux at the top of atmosphere, where what matters is the tiny difference between these 2 large numbers, error determination is critical. Sadly, given the way that the numbers are obtained, without much scope to tightly replicate measurements from satellites, the customary couple of Watt per square metre numbers are more likely to show creative accounting than repeatable, valid errors. Different satellites gave gross numbers around 1350 units, but some differed by 15 units between satellites, and these differences were adjusted out, resulting in people now quoting differences of small values like 0.1 W/m^2. Sorry, but this does not seem justified. So, in a sense, it is back to the drawing board with your regression story, so that a valid error can be used on both axes.

All the best Geoff S

http://www.geoffstuff.com/toa_problem.jpg

Geoff, there are two major sources of error in getting to the data shown in Figs. 4 and 5.

The first is the standard error of the mean of the 64,800 individual gridcells that are averaged to give each month’s value. For the temperature, this is about 0.06°C

The other is the standard error of the mean of the monthly averages that are removed from the data to remove the seasonal variations. Again for the temperature, this is about 0.03°C

Regards,

w.

Willis,

I must disagree with your characterizing Standard Error of the Mean (SEM) as the “getting to the error”.

Although Wikipedia is not always accurate, it has a good description of Standard Error (also SEM).

https://en.wikipedia.org/wiki/Standard_error

The upshot is that SEM (or SE) is the description of how well a sample means distribution with a given sample size captures the correct value of a population mean. It is only useful if you have done an adequate job of sampling the population. It is not an “error” of the real population mean, that is best shown as the parameter known as the Standard Deviation of the population.

Again, from Wiki:

I can’t emphasize enough that if you declare an entire 5000 or 10000 stations as a single sample (i.e. n = 5000 or 10000), then the standard deviation of that entire distribution is the SEM. As a sample statistic, the proper way to determine the population Standard Deviation is to multiply the SEM by (sqrt n).

To summarize, for one to know how well the mean represents variation in the values of the data, one must use a statistical parameter such as standard deviation. The SEM is not a statistical parameter, it is a simple statistic of a sample distribution that can be used to obtain an estimate of a population standard deviation.

Here are some links to help explain.

https://www.scribbr.com/statistics/standard-error/

https://www.investopedia.com/ask/answers/042415/what-difference-between-standard-error-means-and-standard-deviation.asp

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1255808/

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2959222/#

Lastly, way too many scientists have gone off the rails with these statistical calculations. Even the NIH acknowledges this in their documents I have referenced.

You may estimate a population mean very accurately, but the SEM is not a parameter that properly defines how well the mean characterizes the entirety of the data. Only a properly calculated Standard Deviation will do that.
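For reference, the relationship at issue is SEM = s / √n, where s is the sample standard deviation of the individual values and n the number of independent samples. s describes the spread of the data; the SEM describes how uncertain the sample mean is, and that interpretation assumes independent sampling. A minimal sketch with hypothetical readings:

```python
import math
import statistics

# Hypothetical readings (not real station data)
values = [14.2, 15.1, 13.8, 14.9, 15.4, 14.0, 14.6, 15.0]
n = len(values)

sd = statistics.stdev(values)   # spread of individual values (n - 1 denominator)
sem = sd / math.sqrt(n)         # uncertainty of the mean, if the samples are independent

print(f"mean {statistics.mean(values):.3f}  sd {sd:.3f}  sem {sem:.3f}")
# Going back from SEM to SD means multiplying by sqrt(n)
print(f"sem * sqrt(n) = {sem * math.sqrt(n):.3f}")
```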

Jim, you say:

I can’t find anywhere that I said that … ?

w.

Sorry I should have copied and pasted instead of typing off my memory. The phrase was “two major sources of error in getting to the data”.

“manual work causes focus on individual numbers (as opposed to the giant Hoovers that suck them into impartial computers)”

That’s why I am so suspicious of grid averages and monthly averages of temperatures. I’ve spent hours scrolling through daily temperature records for dozens of different locations around the US. There are a lot of errors and a lot of missing data points in the records, never mind the observation and instrument errors that must accompany older observations. You can’t just average this up and make the errors go away.

“I have seen a few excellent papers with proper error treatment, but they are rare as hens’ teeth or proper apostrophe use.”

Links?

In his 1964 book “The Statistical Analysis of Experimental Data” John Mandel said, “The proper method for fitting a straight line to a set of data depends on what is known or assumed about the errors affecting the two variables.” He broke the topic into four cases:

1) classical OLS where x is known exactly

2) Errors in both variables: Deming’s generalized use of least squares

3) The case of controlled errors in the x variable, where we only “aim” at particular measurement values (Berkson, “Are there two regressions?” J. Am. Stat. Assoc. 45, 164-180, 1950)

4) Cumulative errors in the x variable.

What appears simple always has a bit more to it.

Thx Kevin, I’ll hunt for it. 1b or 2 might explain how to analyze single-point x data with uncorrelated, distributed y data, per my question for Dr. Spencer.

It would be interesting to see a compilation of “eyeball” straight line trends and see if there is some agreement on the trend line.

Quick question. What were the input X and Y errors used for your corrected regression? You should have also been given as output error estimates for both the slope and intercept. For your purpose, only the slope error estimate is important.

See above for the input errors. The standard error of the slope is ~ 0.095 W/m2 per °C.

w.

Thanks. That appears to address the input errors for the temperatures. It’s probably the best you can do with what you’ve got. Do you assume the TOA power values have a uniform error? If so, what would that error estimate be? If you have a complete set of input errors, you should have a goodness of fit parameter such as a chi-squared value that results from the fit. Are your slope error estimates purely from a priori errors, or are they adjusted to include scatter about the line (a posteriori). That is usually done by multiplying the a priori error estimate by the square root of the chi-squared variable divided by n-2 (i.e., the number of degrees of freedom). This “adjusts” the input errors to the point where the chi-squared would be n-2. Sorry for the questions, but these are standard issues in the isotope geochemistry biz.
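The a priori versus a posteriori distinction is easiest to see in a simpler fit that weights by y errors only (an assumption made to keep the sketch short; a York-type fit would be needed when x errors matter). The a priori slope error comes from the input errors alone; the a posteriori error multiplies it by sqrt(χ²/(n − 2)), the square root of the reduced chi-squared that geochronologists call the MSWD. Practice varies on whether to apply that scaling only when the MSWD exceeds 1.

```python
import math


def wls_line(x, y, sy):
    """Weighted least-squares line with errors in y only.

    Returns (slope, intercept, a-priori slope SE, a-posteriori slope SE, chi2).
    """
    w = [1.0 / s ** 2 for s in sy]
    sw = sum(w)
    xw = sum(wi * xi for wi, xi in zip(w, x)) / sw
    yw = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - xw) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xw) * (yi - yw) for wi, xi, yi in zip(w, x, y))
    b = sxy / sxx
    a = yw - b * xw
    chi2 = sum(wi * (yi - a - b * xi) ** 2 for wi, xi, yi in zip(w, x, y))
    se_prior = math.sqrt(1.0 / sxx)            # from the input errors alone
    mswd = chi2 / (len(x) - 2)                 # reduced chi-squared
    se_post = se_prior * math.sqrt(mswd)       # rescaled by the actual scatter
    return b, a, se_prior, se_post, chi2


print(wls_line([0, 1, 2], [0, 1, 1], [1, 1, 1]))
```

With unit weights, the a posteriori error reduces to the ordinary OLS standard error of the slope, which is a handy consistency check.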

What a lot of people do not realize is that a correct approach to linear regression is in fact a rather knotty bit of non-linear inverse theory!

Assuming the Myhre 1998 value of 3.7 W/m2 and the WE value of 0.6 C per 2xCO2 are correct, that would be a climate sensitivity of 0.6 C / 3.7 W/m2 = 0.16 C per W/m2. The 1 C warming that has occurred would have required 6.2 W/m2, plus the 0.8 W/m2 of EEI, for a total of 7.0 W/m2. And given the -1 W/m2 of aerosol forcing, that means we need 8.0 W/m2 of positive forcing. Where did the +8.0 W/m2 forcing come from?
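The arithmetic in this comment, step by step, using only the numbers it quotes. This reproduces the calculation; it does not settle which forcing reference height the 0.6 °C figure should be paired with.

```python
# Numbers as quoted in the comment above
forcing_2xco2 = 3.7   # W/m2 per CO2 doubling (Myhre 1998, tropopause)
ecs = 0.6             # °C per CO2 doubling (the figure from the post)
warming = 1.0         # °C of warming assumed to have occurred
eei = 0.8             # W/m2 Earth energy imbalance
aerosol = -1.0        # W/m2 aerosol forcing

sensitivity = ecs / forcing_2xco2               # °C per W/m2
forcing_for_warming = warming / sensitivity     # W/m2 to produce the warming
total = forcing_for_warming + eei               # plus the energy still being absorbed
positive_needed = total - aerosol               # offset the negative aerosol term

print(f"sensitivity      {sensitivity:.3f} °C per W/m2")   # 0.162
print(f"for 1 °C warming {forcing_for_warming:.1f} W/m2")  # 6.2
print(f"plus EEI         {total:.1f} W/m2")                # 7.0
print(f"positive forcing {positive_needed:.1f} W/m2")      # 8.0
```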

You’re conflating the 3.7 W/m2 troposphere forcing from 2XCO2 with the 1.9 W/m2 reduction in TOA upwelling longwave from 2XCO2.

And these in turn are different from the 3.0 W/m2 increase in TOA upwelling LW from a 1°C increase in surface temperature.

And none of these tell us how much energy is required to raise the surface temperature by 1°C.

Best regards,

w.

It is my understanding that the 0.6 C per 2xCO2 ECS figure is agnostic of that detail. So if the tropopause forcing is +3.7 W/m2 per 2xCO2, then the climate sensitivity is 0.6 C / 3.7 W/m2 = 0.16 C per W/m2 in terms of tropopause forcing. You can plug in any value of W/m2 and the sensitivity would then be in terms of that value, whether it be tropopause, TOA, or any other reference height. I just happen to choose the 3.7 W/m2 tropopause value provided by Myhre 1998, so the 0.16 C per W/m2 sensitivity is thus in reference to the tropopause forcing.

What error (uncertainty) did you use for the surface temperature, Willis? 🙂