Guest Post by Willis Eschenbach

The HadCRUT record is one of several main estimates of the historical variation in global average surface temperature. It’s kept by the good folks at the University of East Anglia, home of the Climategate scandal.

Periodically, they update the HadCRUT data. They’ve just done so again, going from HadCRUT4 to HadCRUT5.

So to check if you’ve been following the climate lunacy, here’s a pop quiz. What did the new record do to the HadCRUT historical temperature trend?

• It decreased the trend, or

• It increased the trend?

Yep, you’re right … it increased the trend. You’re as shocked by that as I am, I can tell.

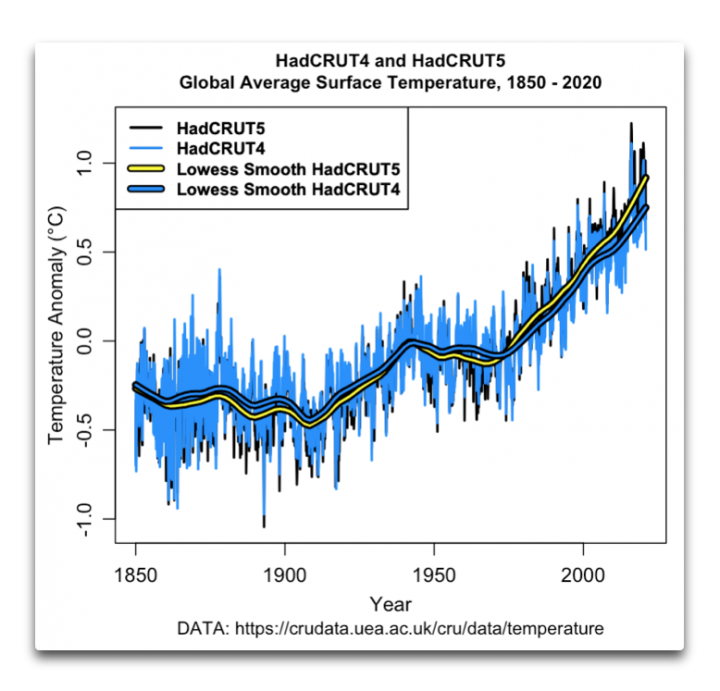

So … here’s the old record and the new record.

Figure 1. HadCRUT4 and HadCRUT5 temperature records. The yellow/black and blue/black lines are lowess smooths of each dataset.

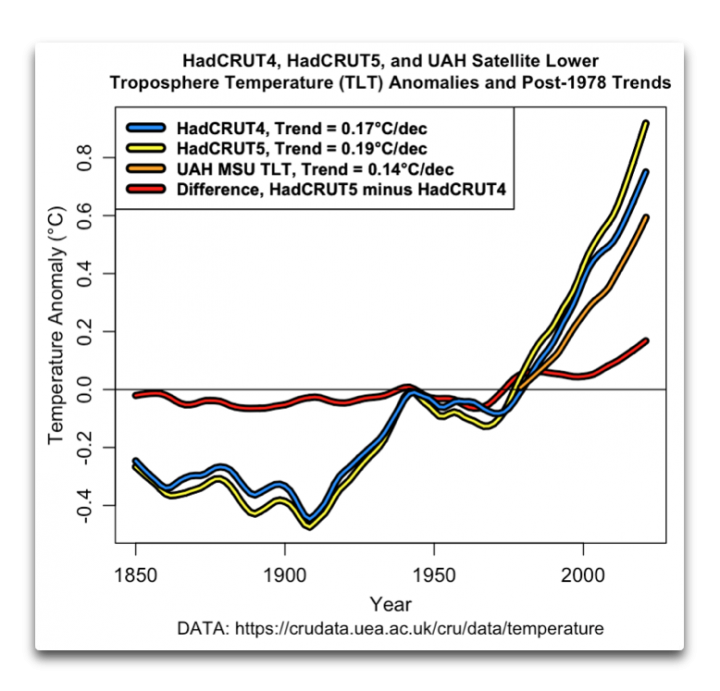

Let’s take a closer look at the changes. Here are just the lowess smooths, which give us a clear view of the underlying adjustments. I’ve added the University of Alabama Huntsville microwave sounding unit temperature of the lower troposphere (UAH MSU TLT) for comparison.

Figure 2. Lowess smooths of HadCRUT4 and HadCRUT5 surface temperature records, and the UAH MSU satellite lower troposphere temperature record. The yellow/black, blue/black, and orange/black lines are lowess smooths of each dataset. The red/black line shows the adjustments made to the HadCRUT4 dataset.

There were a couple of surprises in this for me. Normally, the adjustments are made on the older data and reflect things like changes in the time of observations of the data, or new overlooked older records added to the dataset. In this case, on the other hand, the largest adjustments are to the most recent data …

Also, in the past adjustments have tended to reduce the drop in temperature from ~ 1942 to 1970. But these adjustments increased the drop.

Go figure.

Anyhow, that’s the latest chapter in the famous game called “Can you keep up with the temperature adjustments”. I have no big conclusions, other than that at this rate the temperature trend will double by 2050, not from CO2, but from continued endless upwards adjustments …

After I voted today (no on tax increases), the gorgeous ex-fiancee and I spent the afternoon wandering the docks down at Porto Bodega, and looking at a bunch of boats that I’m very happy that I don’t own. She and I used to fish commercially out of that marina, lots of great memories.

My best regards to all,

w.

A graph going back to the Mediaeval Warm Period would be rather more useful.

IPCC FAR, cancelled, of course, in subsequent ARs:

https://climateaudit.org/2008/05/09/where-did-ipcc-1990-figure-7c-come-from-httpwwwclimateauditorgp3072previewtrue/

All our current alarmist climate scientists have seen these charts which show it was as warm or warmer in the past, yet now they pretend they never existed and try to sell the narrative that we are living in the warmest times in human history.

Alarmist climate scientists are lying to us.

Hi Stephen.

I just posted something after you about Eddy. Let me know what you think. BW

The differences between HadCRUT V4 and V5 have nothing to do with adjustments to the station data, they’re reflecting changes to how HadCRUT treats grid cells that are missing station data entirely. Previously these grids were simply dropped from the global average (which has been the major difference between HadCRUT and other temperature analyses like GISTEMP), and starting with V5 they are infilled using data from nearby grid cells. In particular, this change increases spatial coverage in the Arctic, where station coverage is sparse but where the planet is experiencing the most rapid warming. All of this is described in the documentation, so it is not a surprise in the slightest:

https://www.metoffice.gov.uk/hadobs/hadcrut5/HadCRUT5_accepted.pdf

“infilled using data from nearby grid cells”

I think adding station data where there wasn’t any station data before is “adjusting the station data” lol

Andrew

I would not call that adjusting station data. An adjustment would be taking the value as read from the thermometer and altering (increasing or decreasing) it. It’s fine to have private definitions for things, but it’s probably not useful in public discourse.

“I would not call that adjusting station data.”

Weekly_rise,

So what would you call adding new station data to station data?

Enhancing? Expanding? Modifying?

How would you phrase it?

Andrew

HadCRUT v5 is not adding new station data, it’s using statistical methods to increase the coverage of the analysis with existing station data. Think of it in this way: suppose we have two adjacent grid boxes, one of which has a station juuuuust near the edge of the box, at the boundary between both boxes, while the other has no station inside of it. HadCRUT v4 and earlier would have thrown up its hands and said, “welp, guess we have no way of knowing what on earth the temperature inside that empty grid box might be!” HadCRUT v5 would say, “the grid box boundary is an arbitrary delimiter. The fact that a box doesn’t have a station inside it doesn’t mean we have no idea what the temperature there would be. We can use all the stations in nearby boxes to get an idea of what it should be.”

This is clearly a superior approach and one that HadCRUT ought probably to have done from the beginning. I don’t have the historical context to understand why it wasn’t done this way before, but it’s a good change that makes the analysis better.
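For readers who want to see the basic idea in code, here is a minimal sketch of filling empty grid cells from nearby populated ones. It is not HadCRUT5's actual method (the linked documentation describes a more elaborate statistical approach); the function name `infill_idw`, the inverse-distance weighting, the `max_dist` cutoff, and the one-dimensional row of cells are all assumptions made purely for illustration.

```python
from typing import List, Optional


def infill_idw(cells: List[Optional[float]], max_dist: int = 2) -> List[Optional[float]]:
    """Fill empty cells (None) with an inverse-distance-weighted mean of the
    populated cells at most max_dist cells away. A cell with no populated
    neighbour in range stays empty."""
    filled: List[Optional[float]] = []
    for i, value in enumerate(cells):
        if value is not None:
            filled.append(value)  # measured cells are never altered
            continue
        weight_sum = 0.0
        weighted_total = 0.0
        for j, other in enumerate(cells):
            dist = abs(i - j)
            if other is not None and 0 < dist <= max_dist:
                weight_sum += 1.0 / dist
                weighted_total += other / dist
        filled.append(weighted_total / weight_sum if weight_sum else None)
    return filled


# Hypothetical anomalies (°C) for a row of grid cells; None marks "no station".
row = [0.2, None, 0.6, None, None, None, 1.0]
print(infill_idw(row))
```

Note that only the original measured cells are read when estimating an empty cell, so in this sketch an estimate is never built from another estimate.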

Weekly_rise,

I’ve heard all this before. What you are saying is that the analysis pretends there is more information now, to cover a larger area where there is no data.

Seems like this leaves room for some creativity. If you know what I mean.

Andrew

Saying, “the CRU hypothetically could be committing fraud,” is a rather worthless position, in my view. The methodology described in the documentation is valid, and would produce good results. It’s possible the CRU is committing fraud and not actually following the methodology as written, but such an allegation seems… fanciful, at best. You need some evidence of the fraud you’re alleging.

That uber warming of the Arctic needs explanation after an outflow from there severely froze the central plains, notably Texas and Mexico, breaking records across a vast area by double digits. Similarly, there were the new record lows in 2019 in Illinois, Ohio, Indiana… the year when sharks, frozen solid, washed up on Massachusetts beaches.

That’s because the 3 °F warming in the Arctic took it from mind-numbing cold to freeze-your-nards-off cold.

I wondered the same thing but haven’t had a chance to search further. I know some of the arctic locations like Iceland and Greenland show little if any warming.

The uber warming in the Arctic is a result of the increased exposure of the Arctic ocean surface, particularly in winter, due to the reduction in sea ice cover.

The sea ice acts as an insulator, reducing the amount of heat that radiates to space. Less sea ice means more heat in the Arctic atmosphere as it passes through on its way out of the planet. Low Arctic sea ice levels are a planetary cooling mechanism due to the net negative energy balance in the region.

The “climategate” emails have already proven the fraud. There are hundreds of pages available. It wasn’t prosecuted because they managed to drag their feet long enough to pass the deadline for the statute of limitations.

Rory,

In the UK there is no statute of limitations for criminal cases so that excuse don’t fly.

All that was needed was to show there was no mens rea to obviate criminal intent. A limitation then pertains within the statute.

https://wattsupwiththat.com/2012/07/18/climategate-investigation-closed-cops-impotent/

Or perhaps it wasn’t prosecuted because multiple independent investigations found no evidence of fraud or misconduct? Guess we’ll never know…

It wasn’t prosecuted for the reasons I provided, no more, no less. There were NO “independent investigations”. All were obviously partisan and laughably conducted whitewashes. The evidence of fraud was as plain as the nose on your face and is still available for scrutiny on these pages, unless you prefer guesswork and speculation, like the rest of the “science” you believe in.

‘No evidence’? Really? What about Wegman:

‘Overall, our committee believes that Mann’s assessments that the decade of the 1990s was the hottest decade of the millennium and that 1998 was the hottest year of the millennium cannot be supported by his analysis.’ ?

AND:

‘A cardinal rule of statistical inference is that the method of analysis must be decided before looking at the data’

(https://climateaudit.files.wordpress.com/2007/11/07142006_wegman_report.pdf)

Deciding what data to include and which to reject after collecting it sounds like ‘fraud’ to me. As does constant refusal of data requests and Jones whinges about people wanting to find ‘something wrong’ with his data.

The Wegman report was published in 2006, the CRU email hack occurred in 2009. You might also be surprised to discover that Mann is not and never has been an employee of the CRU.

Their explanation of the database needs to explicitly lay out that the final result is dependent upon modified and created data. It should only be used if it meets the specific needs of the user. An easy method of accessing the original, unmodified data should be available.

Selling fiction as real is fraud

“We can use all the stations in nearby boxes to get an idea of what it should be.”

[…]

“I don’t have the historical context to understand why it wasn’t done this way before, but it’s a good change that makes the analysis better.”

Sorry, but you make me laugh 😀

Taking a “maybe” temperature makes the analysis better? No, it makes the analysis worthless.

We should NOT try to interpolate temperatures in boxes with no measurement stations between those measured in neighboring boxes.

Suppose, for example, that there is no measurement station in Olympic National Park in Washington State. We could have measurements in Seattle to the east, on Puget Sound, and measurements along the Pacific coast to the west.

But we should not try to interpolate a temperature for Mount Rainier between those on the coast and in Seattle. The stations on the coast and in Seattle are near sea level, while Mount Rainier is thousands of feet above sea level, and would likely be much colder (and have more precipitation) than the stations along the coast and in Seattle.

What you describe would be an issue if the absolute temperature were used instead of the anomaly. But while the mean temperature of Mount Rainier is lower than the surrounding lower areas, changes in the mean temperatures are very likely to be consistent between the nearby regions.
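The arithmetic behind the anomaly argument can be sketched as follows. The station values, the three-year baseline, and the function name `anomalies` are invented for illustration, and the sketch simply assumes that both sites change by the same amount, which is exactly the assumption under dispute in this thread. It only shows why a large difference in absolute temperature drops out once each site is compared to its own baseline.

```python
from statistics import mean


def anomalies(temps, baseline_years):
    """Return each value minus the site's own mean over the first
    baseline_years entries (the reference period)."""
    base = mean(temps[:baseline_years])
    return [t - base for t in temps]


# Hypothetical annual means in °C: a valley site and a mountain site about
# 18 °C apart in absolute terms, constructed to change by the same amounts.
valley = [15.0, 15.2, 14.8, 15.6, 15.9]
mountain = [-3.0, -2.8, -3.2, -2.4, -2.1]

valley_anom = anomalies(valley, baseline_years=3)
mountain_anom = anomalies(mountain, baseline_years=3)

for v, m in zip(valley_anom, mountain_anom):
    print(f"valley {v:+.1f}  mountain {m:+.1f}")
```

If the two sites did not change together, the two anomaly series would diverge, and that divergence is what any interpolation between them would miss.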

The existing stations have always been used to account for the whole area, so what has been done amounts to nothing more than giving an area of sparse coverage greater weight.

No matter how you slice it, some people changed how they arrived at a GAST, and as has been the case 100% of the times they have done it previously, the new result conformed to the ideas promoted by these same people.

Nonsense … each station has its own micro-climatic influences … UHI, poor maintenance, vegetation, etc. Anomalies will not resolve the bad ‘data’.

Using anomalies does not solve all potential data issues. The anomalies just solve the problem of one station being on a hill while another nearby station is in a valley, and the length of both station records might not be equal. It’s the specific problem that Steve Z raises above.

Even anomalies can vary when the terrain is different. If there is a slope, different humidity levels and different wind directions can cause clouds to form.

Lakes can create lake effects that will affect different areas depending on the wind directions.

Reality is not as simple as the AGW activists want to believe.

There will certainly be random variability captured in the anomaly that does not reflect regional climate, but the long term trend in anomalies will certainly capture regional climate change, which is the thing we want to measure to begin with.

Mt. Rainier, 14,411 feet, lies to the east of Puget Sound, in the Cascades. The highest point on the Olympic Peninsula is Mt. Olympus, 7980 feet high.

But of course your point is valid.

> “The fact that a box doesn’t have a station inside it doesn’t mean we have no idea what the temperature there would be.”

Yes.It.Does. The very definition of “not data.”

Not having a direct measurement of a quantity doesn’t mean we’re blind about what values the quantity might take on.

“two blocks down”

Cause that’s what this is about, right? Guessing what’s two blocks down.

Nothing new here. Just a Warmer named Weekly_rise making noise.

Andrew

“Not having a direct measurement of a quantity doesn’t mean we’re blind about what values the quantity might take on.”

Unless you take the measurement, you don’t know what it is.

So, yes. Without a measurement you are blind.

The warmists just don’t seem to understand what empirical evidence is.

Unlike most other scientific disciplines climate science doesn’t appear to be too hot on empirical evidence.

In some cases empirical evidence has been discarded as it didn’t fit preconceived notions of what it should be showing.

Exactly! The entirety of the basis for the greenhouse effect, which underpins true believer climate “science” is conjecture and speculation. Therefore all they seem to require is the same standard for their supporting evidence. Hell, these same people fully believe that consensus is somehow a function of the scientific method … sort of “majority rules” science.

In fact, there is absolutely no empirical evidence to support the greenhouse effect. CO2 is NOT the control knob of climate.

These are the same guys who consider the output of computer models to be data.

Yep … odd isn’t it? It drives them mad when you point that fact out to them.

We physically can’t place a weather station at every single point on Earth’s surface, so the best we can ever do is use a finite number of stations to “represent” the temperature of all the places we don’t directly measure. This is a fundamental shortcoming of living in the actual real world and needing to make actual real measurements of things.

Do you not see your circular reasoning here?

This is not science.

> “…might take on.”

What if we did that at the grocery store? Average your purchases with the person in front and behind you in line? Effectively giving them each 1.5x weight and you 0 weight? And that’s what we have here. Arctic temps rising 2x the world average getting more weight by creating synthetic temperature records.

The prices of several people’s groceries are independent quantities unrelated to each other; this is not true of regional climate. The climate of one area is fundamentally connected to the climate of an area a few kilometers away.

Is your extrapolation still valid over thousands of kilometers?

Look at your local weather map on tv some night. Temps will vary AT LEAST 1, 2, and even 3 degrees within a square or even 5 miles. That’s anomaly differences of that same value even within a grid square and probably less. How do you choose which to use? An average? That is made up data with NO BACKUP other than we think this may be correct!

The AGW’ers assume that whatever the variance between these locations is today, it will remain the same tomorrow, next week, next year.

Anyone who watches weather reports for a few weeks will be able to see that this assumption is not true.

Example of what you said, measured data

MIGHT 😉

What a load of GARBAGE

You are blind, certainly within a few degrees either way.

And of course once urban areas are smeared over vast tracts of non-urban areas, trends will be HIGHLY AFFECTED.

Exactly, propagating bad data into an adjacent cell with no data just spreads bad data

Even the best guess, is still a guess.

This “clearly superior” approach was presumably made up by someone without a lot of experience in the outdoors. If I turn left at the end of the street and walk 1/2 mile it can be as much as five degrees cooler than if I turn right and walk 1/2 mile.

HadCRUT is using anomalies, which are representative of a much larger area than the absolute temperature is. The mean temperature 1/2 mile away might be different than the mean temperature at your house, but a change in the temperature at your house is almost certainly accompanied by a change in the temperature 1/2 mile away.

BS.

Hand waving.

Which is the reason why they have little understanding of climate and their models fail continually.

That is “almost certainly” wrong … for a long list of reasons.

You know what weatherfronts are, right ? (left pic, cold from east to west, right pic snow forecast)

On the one side, you have warm or hot air, on the other cold(er) air.

That front doesn’t move 24/7. It stops or changes the direction.

Enjoy interpolating the right way. 😀

What’s wrong with saying that when you have no data you just say, “Sorry, we have no data there, we don’t know the record or the trend.”

“Yes! We have no bananas! We have no bananas today!”

How do you know people would not respect that, given it is the truth?

Obtaining basic scientific honesty should not be like pulling-teeth, it should come easily, with no effort. It takes real effort to keep on lying and avoiding that you actually have no bananas today.

Let’s interpolate an imaginary banana from this real banana, 2,000 km away … and call it a real banana!

That’s genius level science!

Guess what?

You still don’t know. And you are now lying to everyone, and calling it science … which is another lie.

And this is why you are not, and never can be respected.

Having no data is actually the best possible result for the AGW crowd. The less data they have, the more opportunities they have to generate the data that they need.

>>What’s wrong with saying that when you have no data you just say, “Sorry, we have no data there, we don’t know the record or the trend.”<<

When you discard gridcells with no data, you lose the correct area weighting, which might affect the result.
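One way to see the weighting point: leaving a cell out of a weighted average gives exactly the same answer as filling that cell with the average of everything else. So dropping a cell is not neutral; it amounts to assuming the empty cell behaves like the mean of the covered cells. The sketch below illustrates this with cosine-of-latitude weights. The latitudes, anomaly values, and function name are invented for illustration and are not taken from any HadCRUT code.

```python
import math


def area_weighted_mean(bands):
    """bands: list of (latitude_deg, anomaly or None).
    Each band is weighted by cos(latitude); bands with None are dropped."""
    num = 0.0
    den = 0.0
    for lat, anom in bands:
        if anom is None:
            continue
        w = math.cos(math.radians(lat))
        num += w * anom
        den += w
    if den == 0.0:
        raise ValueError("no populated bands")
    return num / den


# Hypothetical anomalies (°C); the 80°N band has no data.
bands = [(0.0, 0.5), (30.0, 0.6), (60.0, 0.8), (80.0, None)]
dropped = area_weighted_mean(bands)

# Filling the empty band with the mean of the others changes nothing.
same = area_weighted_mean([(lat, dropped if a is None else a) for lat, a in bands])

# Filling it with a different (hypothetical) value does change the result.
other = area_weighted_mean([(lat, 1.5 if a is None else a) for lat, a in bands])

print(dropped, same, other)
```

Whether the empty cell should be treated as the mean of the covered cells or estimated from its neighbours is the actual disagreement; the code only shows that both choices are assumptions.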

Try not making a map with inadequate data then.

Weekly_rise, you are an idiot. You say that “a change in the temperature at your house is almost certainly accompanied by a change in the temperature 1/2 mile away.” If that was true, then the entire planet would change temperature in lockstep all the time. That is obviously, not the way it works in the real world. Yes, I know there was a peer reviewed paper making that claim. That means there are a bunch of idiots out there besides you.

Give it up!

Where do you come up with this sh**te? Why would an anomaly be representative of a larger area? That doesn’t even make logical sense!

Do you ever, ever wonder why these modifications always come out with higher temps? Because you are averaging a high one with a low one. Show us where the infilled data actually uses the lower temp.

If you have one at +2 and one at -2, what do you use? A value that is always higher than the low one. Do you think that might, just might bias the infilled data?

Because an anomaly represents a change from the regional climatology at a location. It is unlikely, nigh on impossible, that the climate can change locally over a single point on the earth’s surface and not also have changed in the surrounding region.

UTTER RUBBISH, weakling.

A large proportion of temperature sites are in urban affected areas

the TRENDS ARE NOT REPRESENTATIVE of the surrounding areas.

That right there is the biggest load of nonsense i have ever heard. I seriously hope you are not involved in climate science in any way although it would explain some of the junk science it generates.

It is your assumption that all three locations will warm or cool by the same amount in unison. In the real world, that assumption is rarely valid.

No it is not. Go for a ride on a motorcycle down a road and you will constantly go through warmer and cooler air, so by just riding over a hill into a dip in the road the air temperature can change noticeably. Pass through woodlands and open fields and the same thing happens.

Even in my yard I currently have daffodils in bloom in one part and just coming up in others because of uneven temperatures.

Also how about measuring the direct temperature in the Arctic or Antarctic? How many sensors are there per 1000 km2? But HadCRUT has no issues giving us the air temperatures to the nearest hundredth of a degree. I never see error bars on any of what is published.

The absolute temperatures vary quite a bit over short distances, just because of changes in landscape, but the long term regional climatology does not vary so much, and changes in the climate at one point in a region are almost certainly echoed in changes in the climate at other points within the region. Anomalies are measuring change from the average regional climate (is it hotter or colder than normal for this spot?).

At the risk of introducing additional confusion into the discussion, imagine that instead of the regional climatology, our “baseline” is simply the annual average temperature from January to December for a region. We might expect that a spot on a hill will be cooler in the summer than a spot in a nearby valley because of differences in altitude, so the absolute temperature might be quite different. But both points will be experiencing summer at the same time, so the anomaly for both can be expected to be higher than the annual average “baseline.” The same goes for long term climate: different points in a region might have different absolute temperatures due to local differences in terrain, land cover, altitude, etc., but their long term climate will be evolving similarly over time.

The Hadley Centre does certainly publish error estimates for the HadCRUT dataset, you can view them here, or download the data to play with yourself.

Here’s another analogy Weekly –

I had to have treatment for skin cancers on my arms recently.

The medicos identified dozens & dozens of little buggers all up & down my arms.

They prepared a plan to treat each spot with a variety of treatments over a few weeks.

I said – “why do a spot-by-spot exercise? Wouldn’t it be more practical to just assume that all the skin on my arms needs treatment, and have at it?”

They said – ” just because one small area presents evidence of a skin cancer, we can’t assume that the adjoining skin areas are in the same condition. That would be overreaching. But even if there were contiguous detections, they could be in wholly different stages of development, and require different approaches”

So, as the old saying goes –

“when you ASSUME, you make an ASS out of U and ME”

Adjustment is when the set of measured values is changed. In this case you start with a data value that hasn’t been measured, ie. it’s not in the set of measured values. You end with a data value that has been constructed by a computer, ie. the set of measured values has been changed. It’s an adjustment.

Area above the Arctic circle: ~4% of the globe.

Area between the Tropics: ~40% of the globe
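Those two fractions can be checked from the geometry of a sphere, where the area of a latitude band is proportional to the difference in the sine of its bounding latitudes. This is a quick sketch; the function names are made up here, and the latitudes used for the Arctic Circle (about 66.56°) and the Tropics (about 23.44°) are approximate values.

```python
import math


def polar_cap_fraction(lat_deg):
    """Fraction of a sphere's surface lying poleward of lat_deg in one hemisphere."""
    return (1.0 - math.sin(math.radians(lat_deg))) / 2.0


def equatorial_band_fraction(lat_deg):
    """Fraction of a sphere's surface lying between -lat_deg and +lat_deg."""
    return math.sin(math.radians(lat_deg))


ARCTIC_CIRCLE = 66.56  # degrees north, approximate
TROPIC = 23.44         # degrees, approximate

print(f"Above the Arctic Circle: {polar_cap_fraction(ARCTIC_CIRCLE):.1%}")
print(f"Between the Tropics:     {equatorial_band_fraction(TROPIC):.1%}")
```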

Think of it this way…

All the temperature sites in the L.A. basin go off line and return 9999. So the data is appended with nearby grids like Mojave and Death Valley. Or instead of land based grids, they utilize the grids due west in the Pacific. What could go wrong with that?

Make an educated choice. I´d go for the Pacific, but have never been there. The worst thing to do would be to calculate with 9999, something which has happened many times in the history of computers.

Weekly_rise, this explanation is utter nonsense, as is the PHA method and any other method that involves pseudo data as opposed to physical measurement in the area specified.

My front door faces north east, my back door south west. I can easily see a 10 °C difference in temperature at certain times of year depending upon prevailing conditions.

Anyone claiming a measured temperature applies to any other spot on the planet other than where the measuring equipment is sited is flat out wrong. If climate science was held to the same rules as all the other scientific disciplines the error bars on these published trends would make a mockery of their claimed significance.

By statistical methods, do you mean kriging, nearest-neighbour analysis, interpolation, Gaussian smoothing or something else? If so, then the increase in HadCRUT v5 should be marginal, as most of those methods use a best linear unbiased estimate…

What it should be? Or what it is?

You apparently don’t understand statistics, their limitations and their blatant misuse.

Yeah, but wasn’t HadCRUT4 also doing the same sort of infilling for uncovered regions? Why is the higher “degree” of infilling in V5 (driving 0.2C higher global averages for current temps) somehow “better” than the technique they used for infilling in V4?

You should understand why it looks fishy to a layperson.

You’re right. That’s not “adjusting data.” It’s “fantasizing data where none exists.”

That’s it exactly. An interpolation between two measured locations might be useful in some respects, but when they average two interpolated loci, they’re wandering into fantasy land.

The Canadian Arctic has few measuring stations. The Antarctic has even fewer per given area. Yet they somehow manage to be able to provide near certainty with temperatures to the 100th of a degree. Amazing (if you believe them)

That is normally addressed as “correcting” data and requires detailed records of why and where.

Perhaps the word you’re looking for isn’t infilling or adjusting, but fabricating.

But I’m just an engineer…

So how does the new estimate compare with the average of stations without infilling? If it is the same, then no need to infill – you are just making up data to claim better coverage. If it is different, how do you know it is more accurate? By your own admission, the stations are sparse and don’t always report. Hard to use only part of them to infill and then compare your estimated temperatures to reality. In any other discipline, infilling is condemned as poor research at best, fraud at worst.

It’s called making up stuff

Clearly they’ve learned this from the Australian BoM … create infill ‘data’ from other artificially ‘enhanced data’ … do not under any circumstances use ‘real’ data which does not support the narrative. For example … https://kenskingdom.wordpress.com/2020/10/05/more-questionable-adjustments-cape-moreton/

Thanks, Weekly. I read that in their description, but it made no sense to me. Why?

Because the gridcells that have no coverage today had no coverage 50 years ago. In fact, there would be more empty arctic gridcells fifty years ago than today.

So infilling them all should have changed the temperatures 50 years ago more than the recent temperatures. But the temperatures 50 years ago are basically unchanged.

And that’s why I was surprised.

What am I missing?

w.

Willis, I am no longer surprised.

Tony Heller has been calling it fraud for years. Over 50% is now filled and created. Soon we will not need observations. Look at Heller’s correlation of temp adjustment and CO2 increase- 97 to 98 % correlation coefficient. And some are still calling that science.

Heller is quite incorrect in the way he treats the surface station data, and his claims about the infilling done by NOAA are quite wrong (and not relevant to the recent changes by HadCRUT). There is a really nice article here that lays out the arguments against Heller’s approach nicely. Recommend giving it a read.

Out of your link:

When fact checkers at Politifact

LOL, reason to stop reading further.

You should have kept reading just a few words further. The author goes on to say, “Unfortunately, they [politifact] didn’t give a clear rebuttal.” And then goes on to deliver the clear rebuttal that Politifact failed to.

Sorry, couldn’t find any rebuttal, what I found was a false rectification.

I eagerly await your explanation of what was false about it.

All is false about, so simple 😀

Lots of blatant arm-waving , devoid of science.

Can you be more specific? I’m genuinely interested to hear a factual rebuttal of the information in the article, since it seems to me to be quite compelling. Snappy lines get you more brownie points from your peers, but they don’t contribute to meaningful discussion.

The whole article basically ADMITS THAT THEY FABRICATE DATA.

Thanks for making our point for us, moron!!!

You are doing a “griff”, by presenting articles that back up our facts.

In-filling DOES NOT GIVE YOU THE RIGHT DATA.

It gives you FABRICATED DATA

It CANNOT IMPROVE any results.

The problem isn’t that Politifact isn’t clear. The problem is that Politifact is a heavily biased source.

WRONG..

You just DON’T LIKE IT because it exposes the FRAUD of the HadCrud fantasy fabrication.

the fact this clueless joker writing the article references zeke horsefather, tells you all you need to know.

Zeke is ALL IN on data fabrication and mastication.

Tony Heller uses WHAT WAS RECORDED.

Get over it.

The change in temperature is reflecting the fact that the Arctic is warming faster than any other place on earth, and the Arctic was essentially not represented in HadCRUT V4 and earlier. You’re not seeing an increased trend in the recent decades because of increasing sampling through time, you’re seeing an increased trend in the present day because the Arctic has been warming the fastest in the past few decades.

Is that any clearer?

Weekly, let’s take the year 1970. During that time, the Arctic was warming faster than the rest of the planet, just like today.

And if anything, there were more empty cells in the Arctic in 1970 than there are today.

So given that they are now infilling it all … why no change in 1970? I can understand that there might be a different change now than in 1970 from the infilling … but why is there no change at all in 1970?

And by the same token, HadCRUT5 in 1950 is cooler than HadCRUT 4 … how is that explained by infilling?

Finally, it seems to me that regardless of the rise in Arctic temperatures, if we’re adding a bunch of cold gridcells to the dataset, it would end up cooler, not warmer …

Again, what am I missing?

Best regards,

w.

The use of anomalies hides many sins.

Exactly, it’s a form of scientific fraud in itself – pretending that a large area, that requires less energy to increase the temperature, is reflective of the global energy budget.

Did they infill the missing grids in the Antarctic, where it has been cooling?

The anomaly is a combination of trend+noise, so picking out individual years and asking why they’re higher or lower in a particular version is not a fruitful exercise. The anomaly in the Arctic now has proper spatial weighting, so it actually represents the area of the globe covered by the Arctic. Whatever the anomaly for the Arctic region might have been in a particular year, it’s now receiving accurate representation in the global average.

Also, this is a dataset of anomalies, it doesn’t matter whether you’re increasing coverage in a warmer or colder part of the world, it only matters whether you’re increasing coverage for a part of the world that is warming or cooling.
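A small sketch of that last point, with numbers invented purely for illustration: the absolute temperature of a region never enters the calculation, only its anomaly and its area weight. The weights below loosely follow the "about 4% of the globe" figure quoted elsewhere in this thread, and the anomaly values are hypothetical.

```python
def mean_anomaly(regions):
    """regions: list of (area_weight, anomaly_degC).
    Returns the area-weighted mean anomaly."""
    total_weight = sum(w for w, _ in regions)
    return sum(w * a for w, a in regions) / total_weight


# Hypothetical values, not taken from HadCRUT.
rest_of_world = (0.96, 0.5)  # (area weight, anomaly in °C)
arctic = (0.04, 1.5)         # very cold in absolute terms, but a larger anomaly

without_arctic = mean_anomaly([rest_of_world])
with_arctic = mean_anomaly([rest_of_world, arctic])

print(f"without Arctic: {without_arctic:.2f} °C")
print(f"with Arctic:    {with_arctic:.2f} °C")
```

Swap in a negative anomaly for the added region and the mean goes down instead, which is the sense in which only "warming or cooling" matters here.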

HadCrud is an AGENDA DRIVEN FABRICATION that bears little resemblance to measured temperatures in MOST of the world.

While I appreciate your comments, just ask yourself this – if the Arctic was cooling faster, do you think this exercise would have been produced? It’s called the Texas sharpshooter fallacy.

Part of the problem with anomalies is that when averaged, you get a linear view of what the earth looks like. That’s why it appears that everywhere is warming the same. That’s how averages work. On the earth, that simply isn’t true.

Here is what a gradient for earth might look like. As you can see, temperatures are not linear. This is to be expected as you move from a hot temp to a cold temp. As you integrate delta T’s, they would seldom turn out linear. This means GAT never describes the gradient properly, which is why reporting a variance along with an average is important.

“The anomaly is a combination of trend+noise” So how do they separate trend from noise? Is the noise perhaps constant? If so can you provide evidence of this?

“so it actually represents the area of the globe covered by the Arctic” I think you meant “misrepresents”.

“…it’s now receiving accurate representation in the global average.”

If it is now “accurate,” are they through changing the methods they use to derive the global temperature? Is this the gold standard for now and the future? or are they going to change their methods again because today’s methods, like previous methods, will be deemed sub par? Until they stop changing the data, we cannot rely on today’s data because it’s going to change again. Who knows how much. That makes it virtually worthless.

They will continually update the surface temperature analyses as improved methodologies are developed and as they’re able to add more data. The changes always provide incremental improvement through time.

To note, though, that this particular change just brings HadCRUT into line with methods other groups have been using for a very long time, so in that way they have been lagging the pack.

Repetitive commentary is drowning an interesting debate here between Willis and Weekly. Please resist the temptation to post variations of the same comment you’ve posted thirty times in every thread for the last 3 years. I get it. You think they’re liars.

Was it? GISS shows the Arctic as being colder than average that year.

“…the fact that the Arctic is warming faster than any other place on earth,”

I call that conforming the data to meet expectation. Your discipline has decided what is fact and then adjusts the data to agree. It’s pseudoscience crap.

No other discipline of science would even consider just making up missing data to “fill in the blank.”

So many of today’s climate “scientists” are frauds, and most don’t know it or won’t admit what they are doing is junk science. They just continue to perpetrate a fraud on the public’s confidence. A con game to keep the grants and prestige flowing for professional advancement, while satisfying a political agenda of their grant masters.

Chris Monckton posted an article on this HadCRUT update a couple of weeks ago. I highlighted in a snapshot what Hadley CRU had done to the past 150 years.

There simply can be no ethical explanation for this crap.

If you don’t have data across most of the Arctic then how do you assume that it is warming faster than any other place on earth? That’s an opinion and not a fact.

https://notalotofpeopleknowthat.wordpress.com/2018/01/29/greenland-is-getting-colder-new-study/

Except IT ISN’T

COOLING in the Arctic from 1980-1995

Then a step up at the 1998 El nino

Then NO WARMING THIS CENTURY until the 2015 El Nino Big Blob event, gradually subsiding.

And the Arctic was actually similar or WARMER in the 1940s than now.

How do you know this? Extrapolation into a huge region?

Nick Stokes recently criticized Andy May for the same explanation. That more Arctic stations in the northern Pacific artificially biases the temperature trend lower. It apparently never crossed his mind that the lower temperatures may be more correct.

Isn’t it funny how COLDER Arctic temperatures show up in one place, but suddenly they become warmer in another?

Then why no change to the 1930s through ’40s “data?” Smell test, anybody?

surprise, surprise, surprise

When making up data, they manage to create data that makes the trend look worse.

Don’t worry boys, we’ve made sure that our phony baloney jobs are safe for another year.

If we assiduously collected station data from existing sites and then used ONLY that data, you would have a global average that may not be exact, but changes over time would be clear and reliable. After all, it is the trend that they worry about. It’s the misbegotten idea that we have to have a true, cobbled up, global average that makes this all an exercise in making up data.

http://temperature.global

I check this every day.

That’s called “making shit up” in my science book.

No other discipline of science would allow such data creation in officially produced products that others are to rely on, especially not for public policy.

That the effect of “adjustments” always goes in one direction, i.e. cooling the past, warming the present, suggests ill motives at work, not ethical data science.

That these “updates” happen with shrugging acceptance in the climate world demonstrates how far lost the discipline is into a la-la fantasy land of pseudoscience.

I can’t speak for all fields of science, but interpolation is quite a common technique, as far as I’m aware, and this goes also for spatial interpolation of geographic data. A GIS can take a set of elevation points and interpolate between them to construct a continuous elevation surface, and I think very few people would call such an exercise “making shit up,” or would argue that the terrain is more accurately represented as a landscape of point-scale spikes in elevation present only where we have data points.

You’re dealing with historical data, not physical geography. If I interpolate elevation between where I live in Tucson Arizona (2,500′) and Oracle Arizona (4,500′), Mt Lemmon at 9,100′ is going to smack my ass on the trip.

These historical temp adjustments are simply Orwellian manifestations and not to be trusted.

“Who controls the past controls the future. Who controls the present controls the past.” – 1984, Orwell.

Hadley CRU is simply adjusting the past in the present to attempt to control the future. No other plausible explanations exist at this point.

There are good reasons to believe that the climate of nearby regions is connected, and that the anomaly represents a region with a radius of many kilometers around the station. And, as I mentioned earlier, the gridding is an arbitrary convention – the fact that a station sits only in a single grid cell does not mean it only represents a region bounded by the cell. In fact such an assumption is certainly untrue, so the fact that HadCRUT is no longer making this assumption is a good thing.

Wow, you are a true believer in this stuff.

Congratulations.

Not just a true believer but my radar is telling me a true understander.

Is this like Russia colluuuusion Simon?

Is that truth 😉

No, a totally clueless ACDS non-entity, trying to defend the indefensible

Maybe on an extreme LOW level of comprehension,

brain-washed into IGNORANCE

…. just like you are Simple Simon

Yes, he understands that what he is doing is wrong, but he is better than average at coming up with ever more imaginative excuses for doing so.

Antibes, between Nice and Menton (nearer to the mountains)

Take a coastline with the Mediterranean Sea to the south and, to the north, the Maritime Alps rising just 1.5 – 2 km away, with snow at most about 10 km away. What will you correctly interpolate there? It is not even the same climate zone, yet you would have us believe that the climates of nearby regions are connected. There is no connection at all.

I love walking the beaches here in Ventura, CA, when it’s sunny and 70 – and looking at the snow-capped Topa Topa mountains just 30 miles away.

Take a look at Denver or Colorado Springs versus Pikes Peak. Look at the north side of the Kansas River Valley and then the south side of the Kansas River Valley. Look at San Diego and Ramona (25 miles east of San Diego).

The infill makes *NO* adjustments for geographical or terrain differences between points.

Which is of course why San Francisco can be at Average Temp on a given day and Oakland can be 2d above average on the same day while Santa Rosa can be 5d above average. Using Oakland to infill SF produces a temp anomaly increase of 2d while infilling with Santa Rosa will give SF an anomalous increase of 5d

You sir, are a loon.

WRONG,

Urban areas are affected by urban warming

There is NO REQUIREMENT for regions to be homogeneous

That is a LIE to allow the corruption of data.

What kind of anomalies would you expect from this map? I see a difference of 12 degrees within 150 miles. There are many more of 2 or 3 degrees. Using a common baseline, the anomalies are all over the place. Remember, this is in the Great Plains with only small hills for terrain. What would you interpolate some of the surrounding temps to be? We can look them up too!

The anomalies will contain a combination of signal+noise (climate+weather), you have to look at them over long periods of time to determine whether there is a trend.

Why must there be a trend?

Plot the standard deviations of all this averaging versus time, maybe it will show you something. Hint: averaging does not remove uncertainty.

There will not necessarily be a trend, if the climate is not changing.

“Hint: averaging does not remove uncertainty.”

An average will certainly have lower uncertainty than a single measurement. In fact the uncertainty of the mean is inversely proportional to the square root of the number of observations used to compute the mean.

Wrong.

Go learn some statistics and then go study the GUM.

Thank you for your guidance, I just took a look at the GUM and it confirmed my earlier comment. In particular:

“The experimental variance of the mean $s^2(\bar{q})$ and the experimental standard deviation of the mean $s(\bar{q})$ (B.2.17, Note 2), equal to the positive square root of $s^2(\bar{q})$, quantify how well $\bar{q}$ estimates the expectation $\mu_q$ of $q$, and either may be used as a measure of the uncertainty of $\bar{q}$.”
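For reference, the quantities named in that passage can be written out. This is a sketch in the GUM's standard notation (section 4.2), assuming $n$ independent repeated observations $q_k$ of the same quantity:

$$\bar{q} = \frac{1}{n}\sum_{k=1}^{n} q_k, \qquad s^2(q_k) = \frac{1}{n-1}\sum_{j=1}^{n}\left(q_j - \bar{q}\right)^2, \qquad s^2(\bar{q}) = \frac{s^2(q_k)}{n}$$

Under that assumption $s(\bar{q}) = s(q_k)/\sqrt{n}$, and the expression for $s^2(q_k)$ is undefined when $n = 1$.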

Let’s take a look at the reference from the GUM that you are giving.

As you can see it says,

In case you have trouble understanding this, it means multiple (n) measurements of the same thing (measurand).

When ‘n’ = 1, then ‘s’ becomes undefined, as it should be for a one time, single measurement. You have been told this multiple times. Single measurements DO NOT have a probability distribution.

Experimental standard deviation means building up a probability distribution for many measurements of the same thing, each of which has random errors. The mean of this distribution is the “true value” as given by the measurement device. The standard deviation describes the “dispersion” of possible values around that mean.

For single measurements that have no standard deviation, one must use the best estimate available and that is plus/minus half the interval of the next digit. Thus, 75 +/- 0.5 F. See the attached next definition in the GUM.

See in Note 1, “the half-width of an interval having a stated level of confidence.” That is the +/-0.5.

The stupid first screenshot didn’t post.

Answer this with a description of what the “uncertainty of the mean” means along with references that discuss it in mathematical terms.

Temperature trends are not and do not indicate climate. They are a very poor proxy for climate. Consequently, “climate + weather” is meaningless.

Station temperature series are time series data. As such they must be analyzed as time series. Averaging a multitude of different time series and doing some kind of a regression hides multiple problems like spurious correlations.

Here is a link to some info on time series analysis. There is much more on the internet about this.

6.4. Introduction to Time Series Analysis (nist.gov)

And just exactly what makes up that trend? Tell us if it is something different than daily temperature, anomalies in your case, that is averaged together. It doesn’t matter if you are infilling absolute temps or anomalies, you are by default infilling made up temps.

There are good reasons to believe that the climate of nearby regions is *NOT* 100% connected. Yet no weighting is done with the adjustments. That’s magic, not science.

Imagine that we had 2 or 3 points in Germany with night temperatures below 0°C in May, June and July (this really happened last year), while the surrounding data may have been 5 – 10°C. That will give a nice interpolation, don’t you think?

Yes weakling, they are smearing URBAN WARMING all over the globe, where it doesn’t, in reality, exist

Infilling and homogenisations are total bastardisations of reality !

What you are doing is not interpolation, and you can’t invent new information where none exists.

Like the magical image enhancement software on TV that can take four pixels out of a reflection and produce a perfect image of the car license plate.

You mean the CSI TV shows aren’t always completely true? That blows a big hole in most of the science believers’ knowledge of the world.

But it sure makes it easy to get the perpetrators within the allotted 45 minutes.

We used to call that the creation of cumulative error, within geographic overlays.

Now people like you pass it off as actual “data”.

Contemptible … and pathetic … in the extreme.

Circus is in town!

AKA dry-labbing.

Creating new cells that previously had no trend (no data) and infilling with adjacent data known to be rising twice as fast as the world average is very much an example of adjusting the station data.

Alaska’s all-time high temperature is 100 F, set at Fort Yukon in 1915. Fairbanks reached its all-time high of 99 F in 1919. Despite increasing urbanization, these records still stand.

The hottest temperature ever recorded in Greenland was also in 1915, of 86.2 F. A supposed new record in 2013 was promptly shown to be phoney.

Record high for Siberia is 109.8, set in 1898 at Chita. Granted, it lies only a bit north of 52 N. Couldn’t find a record high for Belushya Guba, the largest settlement on Novaya Zemlya or Uelen on the Chukotka Peninsula.

John, I am afraid Weekly Rise won’t understand your post as it uses actual data to demonstrate a point that goes against the narrative they would like to promote.

Yet I thank Weekly Rise for commenting here. We need CACA adherents to show the complete and total depravity and lack of scientific support for the catastrophic, man-made global warming scam, which has cost the world so many lives and so much treasure.

I’ll agree with you. It’s clear that station data is adjusted to better reflect the temperature trend of the grid, which is independent of station data.

It’s good to find some common ground.

“which is independent of station data”

I was expecting a giggle rather than -8.

There is no one who is surprised that they have the sophistry all worked out in advance.

No one.

BTW…when there were fewer stations, those stations were automatically interpolated/extrapolated to the places where there are no stations.

Because at that time they represented all of the data for that region.

An exercise in extrapolation, got it.

WR

If more new data is added (infilled) in regions that are warming most – the Arctic – then of course the total overall warming trend will increase. More warming signal is added to the total.

The job’s a good-un!

Worked for BOM too.

Sensor surrounded by tarmac? … Check!

Interpolate for 1,500 km radius where no data exists … Check!

Instant ‘BOM-Data’™.

This was not possible before computers, because people used their brains back then, and realized it was nuts, and completely inaccurate and dishonest.

“…Previously these grids were simply dropped from the global average (which has been the major difference between HadCRUT and other temperature analyses like GISTEMP)…”

What “major difference?” They claimed HadCRUT4 was “very consistent” with other global and hemispheric analyses.

Global surface temperature data: HadCRUT4 and CRUTEM4 | NCAR – Climate Data Guide (ucar.edu)

“…starting with V5 they are infilled using data from nearby grid cells…”

Actually, according to the link above, there are TWO V5 releases…one with infilling, and one without: “In late 2020, the Met Office Hadley Centre released HadCRUT5. HadCRUT5 is offered in two different versions. The first version is similar to HadCRUT4 in that no interpolation has been performed to infill grid cells with no observations.”

Why would they release a V5 that is the same as V4?

I’ll take it a step further…

Lines 597 onward in your own link mention some of the differences between v4 and the non-infilled version of v5 that include adjustments to past data (albeit not land stations, but sea data). Of course these adjustments increase the warming trend as they always seem to do. Are you just ignorant, or dishonest?

My favorite part of the paper started around line 636 where they professed that there is less uncertainty with the infilled data v5 than the non-infilled v5. Are they just ignorant, or dishonest?

Anyone who thinks that an area the size of the Arctic can affect the whole world’s average temperature needs to explain why the USA and the Antarctic don’t.

The majority of unadjusted records show no warming and in fact many show cooling over the last 3 decades. Take a look at the work of Kirye here

https://notrickszone.com/2021/03/02/jma-data-winter-global-warming-left-japan-decades-ago-no-warming-in-32-years/

With other posts there.

From page 5 of the PDF file you linked to :

From page 6 :

My understanding from this (and other reading around the subject) is that the “Non-infilled / HadCRUT5 analysis” version of HadCRUT5 corresponds to an “updated HadCRUT4” — which I think is the version used for “comparison” purposes by Willis in the ATL article, NOT the “Infilled” one (!?) — while the “Infilled” version is intended to replace the “Cowtan & Way / kriged HadCRUT4” one.

NB : It is entirely possible that my “understanding” (and “thinking” ?) is WRONG !

Valid “comparison” projects would therefore be either :

1) “HadCRUT5 Non-infilled” vs. HadCRUT4, or

2) “HadCRUT5 Infilled (/ analysis)” vs. C & W

From the HadCRUT5 webpage (direct link) you can download TWO versions of the “Ensemble means, Global (NH+SH)/2, monthly” data :

– “HadCRUT5 analysis time series” (at the top of the webpage), and

– “HadCRUT5 non-infilled time series” (you just need to scroll down a bit for this one …)

You will then need to download (and learn to use) the “h5tools” package (Linux, as I did) or application (Windows / Mac-OS).

Hint : I ended up using the following “h5dump” commands to extract the “Infilled” and “Non-infilled” datasets from my local copies of the netCDF (.nc) files.

h5dump -d tas_mean -y -w 17 -o HadCRUT5_1.data HadCRUT5_1220.nc

h5dump -d tas_mean -y -w 17 -o HadCRUT5_2.data HadCRUT5_Non-infilled_1220.nc

The first line of each [.]data file contains the monthly anomaly (w.r.t. the 1961-1990 reference period) for January 1850, the 2052nd contains the December 2020 anomaly.
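Once the [.]data files exist, pairing each value with its month is straightforward. The following is a minimal sketch, not a tested workflow. It assumes the dump holds plain numeric tokens, one or more per line, possibly comma-separated, with the first value being January 1850 as described above. The helper name `parse_h5dump_column` is made up for illustration.

```python
def parse_h5dump_column(text, start_year=1850):
    """Pair each value in a plain-text dump with its (year, month).

    Assumption: the text contains only numeric tokens separated by
    whitespace and/or commas, in chronological monthly order.
    """
    values = []
    for line in text.splitlines():
        for token in line.replace(",", " ").split():
            values.append(float(token))
    return [
        (start_year + i // 12, i % 12 + 1, value)
        for i, value in enumerate(values)
    ]

# Tiny invented sample standing in for the first three lines of a dump.
sample = "-0.5,\n-0.4,\n0.1\n"
records = parse_h5dump_column(sample)
print(records)

# To read a real file (file name taken from the commands above):
# with open("HadCRUT5_1.data") as handle:
#     records = parse_h5dump_column(handle.read())
```

With 2052 values, the index arithmetic puts the last record at December 2020, which matches the line count given above.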

A normalized graph of climate ‘research’ funding might also be interesting.

One wonders how the people behind this work can keep a straight face, or how any honest climate scientist cannot wonder WTF! Especially when one compares previous data sets to 5 and sees that ALL the adjustments—in the name of getting the most accurate trends, of course—increase the trend.

Only a fully-corrupted climate science and political process (which funds the scientists) could accept these changes without intense doubt and thorough examination. Then, we have the media who are shameless cheerleaders.

Sadly, how our society thinks and acts in regard to climate-related stuff will only come to reason when it is sufficiently cold for sufficiently long that society’s foundations are rocked; that will bring us back to reason and reality.

In the interim, we skeptics can console ourselves by poking at the insanity around us.

The Climate Research Unit is now run by Mr Bean.

That must be why they make us laugh

Ah yes, the usual…

Cooling the past and warming the recent data to “enhance” the trend.

Willis, I noted (Mk. 1 eyeballs) that ver. 4 and 5 adjusted temperature data were essentially the same during the 1930s and ’40s. On either side (earlier and later) ver. 5 was cooled additionally, up until the magic mid-1970s acceleration where it took off above ver. 4. What happened in the 1930s and ’40s that obviated the need for additional cooling adjustments made to the ver. 5 data both immediately before and after that period?

“Why the blip?”

HadCRUT is dead. Long live HadCRUT!

World population grew from 3.9 billion in 1973 to 7.8 billion in 2020 (it doubled). Twice as many people crammed into mostly urban spaces (cooking, heating, transport, air travel etc generally warming the surrounding environment where most measurements take place) affecting the surface temperature record. Urban Heat Island effect is observably at least 1 degree Centigrade higher currently in cities compared to rural areas and night-time minimums have generally increased in the records (due to concrete storing more heat). Hardly any observable impact on rural temperature or troposphere temperatures though. This is not adequately compensated for. It’s all BS really.

“This is not adequately compensated for.”

Not only is urban warming not adequately compensated for…

They actually USE that urban warming and smear it all over non-urban areas creating a TOTALLY FAKE and MEANINGLESS representation of the global temperature.

They seem to have forgotten about 10% of the planet as well. So they decided to interpolate from hundreds or thousands of km away in the Arctic where it’s warming and simply leave out the Antarctic where it is possibly cooling. What will they think of in revision 6 to cool the past and warm the present?

The South Pole hasn’t warmed at all since records began there in 1958.

But recently a remote interior region previously unsampled was found to be much colder than previously imagined. Using GISS’ standard of 1200 km., this frigid, off-world-like vast area could have been filled in with “data” from a relatively balmy coastal station.

Science, post-modern.

JT, BEST disagrees with your observation. They have a slight warming trend. It was manufactured by their automatic data QC algorithm, as explained in footnote 25 to essay When Data Isn’t in my ebook Blowing Smoke.

BEST rejected 26 very low temperature months in their station 166900, because the next nearest station did not also show them, thus creating a slight warming for Antarctica. The next nearest station is McMurdo, 1300km away and 2700 meters lower on the coast. You see, 166900 is Amundsen-Scott AT the south pole. It is arguably the best maintained, and certainly the most expensive weather station in the world. That is how warming trend sausage is made.

So, when necessary, the 1200 km standard is tossed, and 1300 km is accepted. Why am I not surprised?

No. They use the same techniques for both Arctic and Antarctic.

Information creation?

Information fabrication

Compare to UAH. 5 is worse than 4, and both are ‘off’ high compared to UAH both in anomaly and anomaly trend. That is not Arctic infilling. It is bolixed.

CliSci tells us that the “greenhouse effect” occurs in the atmosphere and is reflected back to the surface. Satellites and radiosondes show minor warming trends of about 0.14 C/decade. And this is over a period where cyclical global temperatures were on the upswing. Ignoring this will eventually bite CliSci in the posterior.

Yup, this is the big problem. Climate science views the surface as if it is different from the atmosphere. Turns out they are in the same thermodynamic system and moving energy from one area to the other does not change the temperature of the system.

Once a person realizes this they also realize that GHGs cannot warm the planet. The entire basis of greenhouse warming is unphysical.

That is not what I said nor implied. GHGs warm the planet; it is the water vapor enhancement of minor CO2 warming potential with which I disagree.

Dave, I understood you did not say that. I was pointing out you were accepting a view that is wrong. I accepted it as well up to a couple of months ago. I had no reason not to accept it until I dug a little deeper.

UAH matches HadSST3 almost perfectly. Personally, I would throw out all the land data as it is completely corrupted. Don’t need it anyway. Even if we are warming it has nothing to do with CO2.

“Normally, the adjustments are made on the older data and reflect things like changes in the time of observations of the data, or new overlooked older records added to the dataset. “

What is “normally”? There is a difference here which no-one at WUWT seems to want to take notice of. HADCRUT does not adjust station data. They use the data as supplied by the Met office source. It’s true that they incorporate new station data when they can get it. But they don’t themselves adjust old readings.

As Weekly_rise says, the reason for the change is the correction for a failing pointed out by Cowtan and Way in 2013. In dealing with cells lacking data, they did not try, as one should, to estimate using local information. Deducing a global figure from a finite set of sample points necessarily requires estimating every point that has not been measured, i.e. every point that is not actually a station. If you leave out empty cells, that is equivalent to estimating them as the same as the average of all cells that have data. But sometimes you know this is wrong, as with the Arctic, where there has been recent warming. HADCRUT 5 uses local information to estimate all missing cells, which reverses the artificial cooling that came from supposing that missing Arctic cells only experienced the global rise.
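The equivalence claimed here, that omitting empty cells is the same as filling them with the average of the populated cells, is simple arithmetic. Here is a small sketch with invented anomalies for five equal-area cells. The "local estimate" of 1.5 is purely hypothetical and does not represent HadCRUT's actual infilling method.

```python
def mean_omitting_empty(cells):
    """Average only the cells that have data (None marks an empty cell)."""
    filled = [c for c in cells if c is not None]
    return sum(filled) / len(filled)

def mean_infilled(cells, fill_value):
    """Average all cells after replacing empty ones with fill_value."""
    return sum(fill_value if c is None else c for c in cells) / len(cells)

# Five equal-area cells with invented anomalies; two have no data.
cells = [0.2, 0.4, None, 0.6, None]

omitted = mean_omitting_empty(cells)
filled_with_mean = mean_infilled(cells, omitted)   # same as omitting
filled_locally = mean_infilled(cells, 1.5)         # hypothetical local estimate

print(omitted, filled_with_mean, filled_locally)
```

The first two results agree to rounding. The third differs only because the empty cells were given a value other than the average of the populated cells, which is the whole disagreement in this thread.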

That is why the change is in recent averages. It isn’t because any station data was changed. It isn’t because something was different in measurement 50 years ago. It is because the Arctic warming has been properly weighted, and that warming is recent.

It doesn’t matter the HADCRUT trend; UAH 6 is the gold standard. You are just putting lipstick on hopeless “data.”

Why UAH 6? It seems to be the data set most at odds with the others.

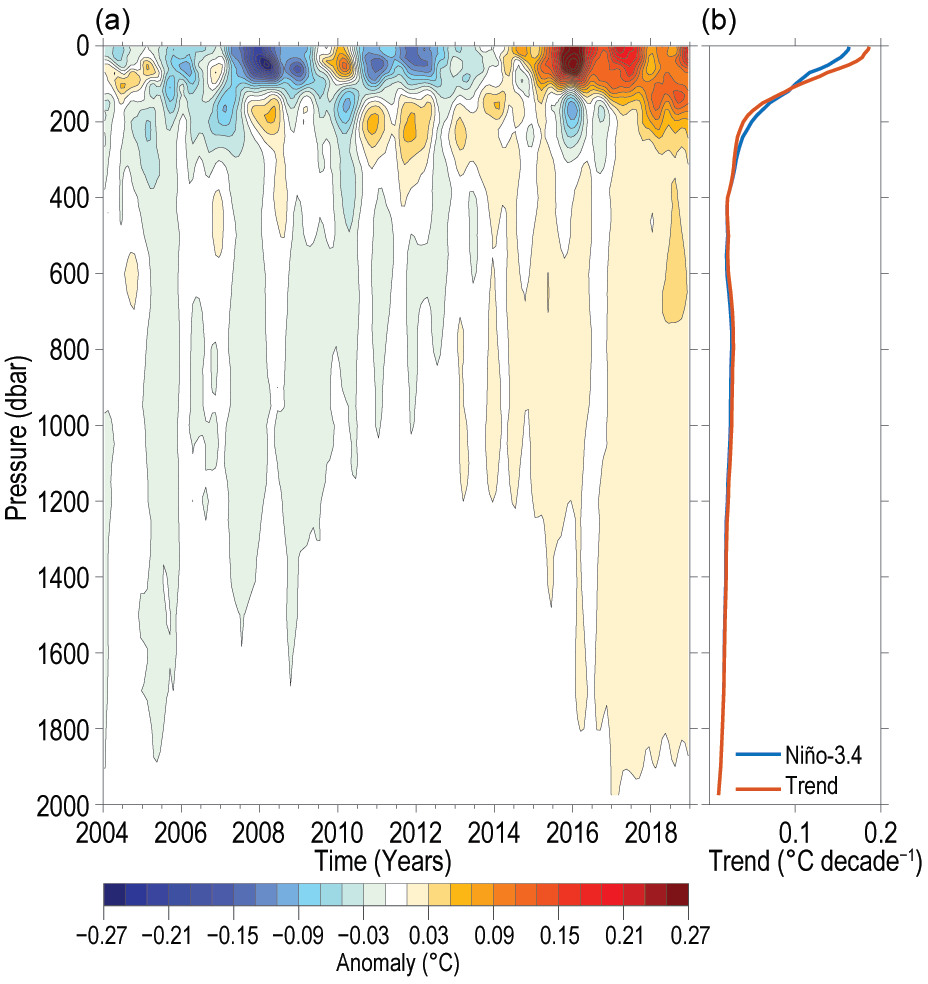

Why? Because it doesn’t mix hopeless pre-ARGO sea surface data with confused and inaccurate land surface measurements. Especially considering that ARGO’s 16-year upper ocean trend, ending on a Super El Nino, is consistent with UAH’s 40+ year trend of 0.14 C/decade.

UAH 6 and HadSST3 are an almost perfect match. Nothing else is worth a plug nickel.

https://woodfortrees.org/plot/uah6/from:1979/to/plot/uah6/from:1979/to/trend/plot/hadsst3gl/from:1979/to/offset:-0.35/plot/hadsst3gl/from:1979/to/offset:-0.35/trend

ARGO mixed layer trend is about the same.

“UAH 6 is the gold standard”

Not for surface temperature. Nor of anything. Was UAH 5.6 also the gold standard? It told a very different story.

https://moyhu.blogspot.com/2018/01/satellite-temperatures-are-adjusted.html

B.S. by moyhu blog. Please explain why one would not use UAH 6 to determine the 40+ year global atmospheric temperature trend. The justification for the satellites was that they would give a more accurate picture of GHG-driven temperature changes on a global basis.

Why would you not use RSS? But you’d get a totally different trend, higher than thermometers. OK, don’t trust RSS (though we loved them during the Pause). So it isn’t just satellites, but who’s doing the calculation. But that comes back to UAH V5.6 – same people, different result.

Deflection on your part, Nick. UAH 6 was an improvement, fully documented by Spencer, et al. Mears at RSS is a proven ideologue, using unsuitable models for diurnal drift adjustments. He was incensed that the unwashed were using his reconstruction to blow up CAGW and the models. It doesn’t matter anyway; both RSS and UAH show minimal temperature trends over the period of maximal CO2 increases.

RSS DID match UAH closely before they started making MANIC AGENDA-DRIVEN “adjustments”, using “climate models” no less.. Seriously !!!!

There are very good reasons that RSS should now be TOTALLY IGNORED. !

Again, you KNOW all that.. so why the ACDS mis-information / LIES, nick

You are TRASHING your reputation even more everyday.

Now you need to be treated on the level of griff, etc as a CHRONIC MIS

The surface data is a kludged-together MESS of mis-matched, in-filled, smeared, adjusted and CORRUPTED once-was-data

UAH matches the trend of only pristine surface data almost exactly

You are MIS-DIRECTING / LYING, yet again, Nick

Everybody needs to realise that moyhu is a site that uses every twisted bit of non-science it can to support the ACDS of its owner.

It is NOT interested in science, it is interested in AGENDA.

It is a rabid PROPAGANDA site, behind a thin FACADE of science.

It takes station data that has already been adjusted….

Why always the manic misdirection to support your ACDS, nick ?

Pretending otherwise is just AGW cult behavior, UNWORTHY of a rational mind.

Again with this BS

No warming apart from the El Nino this century.

Arctic was similar or WARMER in the 1940s.

“ But sometimes you know this is wrong, as with the Arctic, where there has been recent warming. “

How do we know that when we have no measurements?

Because we assume that they have and so add in imaginary stations that show the warming.

And to complete the circle, these made-up results confirm what we already wanted to know.

It’s not best practice.

“How do we know that when we have no measurements?”

We do have measurements, and HADCRUT 5 now has more of them.

It is an issue of inhomogeneity and proper weighting. As I showed here

https://moyhu.blogspot.com/2013/11/coverage-hadcrut-4-and-trends.html

you can get a result similar to Cowtan and Way by just averaging 10° latitude bands first, omitting empty cells, and then combining those bands by area. It comes back to this issue of not infilling, but just omitting empty cells. That effectively assigns to them the population average. If you average by bands first, the omission assigns to missing data cells the value for the latitude band, rather than the globe. That is already better. In fact HADCRUT has always done a limited version of this, in that they average hemispheres separately, and then combine the result. They made a point of doing that, but should have taken it further. Now they have.
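A rough sketch of the band-first averaging described above, compared with a plain area-weighted average that omits empty cells. Everything here is an illustrative assumption: the anomalies are invented, each band has only four cells, and the function names are made up. It is not the calculation from the linked post.

```python
import math

def band_weight(lat_south, lat_north):
    """Fraction of the sphere's area between two latitudes in degrees."""
    return (math.sin(math.radians(lat_north))
            - math.sin(math.radians(lat_south))) / 2.0

def cellwise_mean(bands):
    """Area-weighted mean over populated cells only; empty cells omitted."""
    total, weight_sum = 0.0, 0.0
    for south, north, cells in bands:
        w_cell = band_weight(south, north) / len(cells)
        for value in cells:
            if value is not None:
                total += w_cell * value
                weight_sum += w_cell
    return total / weight_sum

def banded_mean(bands):
    """Average each band over its populated cells, then combine by band area."""
    total, weight_sum = 0.0, 0.0
    for south, north, cells in bands:
        filled = [c for c in cells if c is not None]
        if not filled:
            continue  # a band with no data at all is skipped
        w = band_weight(south, north)
        total += w * sum(filled) / len(filled)
        weight_sum += w
    return total / weight_sum

# Invented world in 10 degree bands: full coverage at +0.5 below 60N,
# sparse coverage (one cell in four) at +2.0 north of 60N.
bands = []
for south in range(-90, 90, 10):
    cells = [2.0, None, None, None] if south >= 60 else [0.5, 0.5, 0.5, 0.5]
    bands.append((south, south + 10, cells))

print(cellwise_mean(bands), banded_mean(bands))
```

In this toy setup the band-first average comes out higher, because each empty northern cell implicitly takes its own band's value rather than the global one.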

We saw the same issue with the “Goddard spike”

https://wattsupwiththat.com/2014/05/10/spiking-temperatures-in-the-ushcn-an-artifact-of-late-data-reporting/

There the problem was that over a four month period to April (US), missing data was mostly in the latest month (time delay) and so averaging without infilling assigned to these the average, which was too cold. Steve Goddard sort of fixed this by averaging by month separately, and then combining the averages. But it’s a weak version of proper infilling.

You have used many words to say back what I said. And I don’t think you realise it.

The question to be answered is “What to do about areas with no data?”

The answer is either

A) Admit that we do not know about that region and say we cannot discuss it.

B) Take all the trends in anomalies we can find and apply that mean to the unknown regions.

C) Take all the trends in anomalies we think are appropriate (by proximity) and apply that mean to the unknown regions.

Option A is the least useful and the most true.

Option B has the benefit of hopefully eliminating most random errors most strongly. Although it is obviously flawed for systematic error.

Option C is exactly the same as Option B but weaker. However, it has the benefit of meeting the initial guesswork by assuming that proximity means more representative. That is not justified, except by the circular reason I described.

No measurements are not equal to imaginary stations.

“The question to be answered is “What to do about areas with no data?””

I answered it. The whole world is an area with no data, except where there is, and that is at just a finite number of points. You have to estimate the rest, to get a global average. This is a universal situation in science.

The cells are a distraction. It is useful to estimate specific areas first, within which local variation is small, and then put them together. But it is all derived from the sample (station) readings. The cell lines are arbitrary. All it means is that inside an empty such area, you just have to look further afield for data. But just lumping it with the global average is definitely not good, and I’m glad that HADCRUT is now doing better.

How can someone who is so bright be so dumb…geez Nick you take the cake.

WOW, you are getting so, so desperate in your DENIAL, Nick

Now you are ADMITTING that they are making up data. !!

We knew that !!

It’s rather pathetic that you still try to justify the UNJUSTIFIABLE

Your ACDS is overwhelming your ability for rational thought.

EXCEPT THAT THEY ARE NOT !!

You will know they are doing “better”, when their results get at least SOMEWHERE NEAR the original data.

Seems they are GETTING WORSE as they put a VOID between REALITY and their fabrications.

The issue I have with all the various statistical techniques is this: if it is true that there is some homogeneity by grid square, latitude band, etc., why bother using these techniques at all? Surely just using the physical data we do have would give the same result?

Unless of course that result doesn’t support a narrative.

You are totally ignoring that there are areas that may or may not be at the average of a band. Without data, how do you know? Look at this map and tell everyone how temps can’t vary widely over just a few miles.

When you are calculating anomalies to hundredths of a degree, you have no way to know how accurate the absolute value or the anomaly truly is. You are playing a guessing game and trying to convince us that you know what you are doing!

Good question!

Another information creator.

Honestly Nick, you missed your calling, just think of all the used cars you could have sold, you would be a very rich man.

Has it ever crossed your cerebellum that just maybe it’s obviously quite inappropriate and dishonest to be trying to squeeze a global map from the actual data available?

“But! … but … it’s a GIS man! … that’s what we do!!”

Enjoy your unbridled cumulative-error constructs mate, it’s all you’ve got as a substitute for having an intellectual foundation.

He would have tried to weasel his way around missing engines, missing wheels, no brakes

Trying to sell a LEMON… even after they have all-but rusted into dust.

In this case in the form of HadCrud surface temp FABRICATIONS

Just IMAGINE there are 4 wheels 😉

This is HadCrud….. Nick style !!

“Deducing a global figure from a finite set of sample points necessarily requires estimating every point that has not been measured, i.e. if it is not actually a station.”

False. It does no such thing. You simply use the data you have, and specify the tolerance in terms of accuracy of average (based on the tolerance of each instrument used; you cannot average away tolerance errors) and spatial resolution.

You could say “we believe the anomaly was X.X deg C (+/- Y deg C) with a spatial resolution of ZZZZ km”. Of course, that would require thinking people to actually understand what is stated. And it does not drive the agenda as much as stating “the anomaly is X.X deg C”.

In the GIS world in which I’ve participated in the past, you always specify the tolerance of your equipment AND of your geographic resolution. Failure to do both meant you did not actually report your data.

Adding new short-term data, either real or imagined, is going to bias the information if it is different from the past. In other words, you have no idea what the past data should have been, and are making up all kinds of trends that get into the average. If they are included after the current baseline years, they are not even included in the baseline!

Ah, I have had a sailboat for 49 years now, Pearson 26. Raising kids and college, etc. curtailed cruising big time. But, empty nest now and I am good to go. Planning three months of Maine cruising, going wherever.

“…looking at a bunch of boats that I’m very happy that I don’t own.”

Yep. A boat owner’s happiest days are the day he buys the boat, and then the day he sells the boat.

I have no big conclusions, other than that at this rate the temperature trend will double by 2050, not from CO2, but from continued endless upwards adjustments …

It’s called re-writing history. GISTEMP does it too. The issue is that they get away with it in broad daylight. You & I and a lot of others are losing the argument. Freedom of the press is limited to those who own a printing press. These days the press is the internet and the so-called mainstream media. There isn’t any public organization that is going to come along and break up the information monopoly that is becoming more and more obvious every day.

The reason we’re losing is because we are playing on their field with their ball and by their ever changing rules. Time for a change.

1) The claimed 33 C of warming from the greenhouse effect is false. There is no warming, period. The Earth’s temperature is exactly as predicted by S-B when measured properly. Radiative gases simply move energy around.

2) The atmosphere-surface model is incorrect. The correct model is closer to what is called the ecosphere. It includes the atmosphere and the surface skin where life is found. When this model is used there is no ability of GHGs to create warming.

3) The energy budget views are nothing but cherry picked nonsense. They are meaningless.

Only when these and other glaring climate science mistakes are picked up by skeptics and used against the false science that exists today will progress be made.

Thanks for the analysis, Willis. I found this statement of yours very interesting:

“Also, in the past adjustments have tended to reduce the drop in temperature from ~ 1942 to 1970. But these adjustments increased the drop.”

I wonder if the CMIP6 models better capture that cooling.

Regards,

Bob

So it looks from your 2nd graph Willis that the “settled science” was back around 1940?

Does anyone get to see the justification for the adjustments?

Willis, I also note that they lowered the older temperatures, as every iteration seems to do. My suspicious heart suspects the steepening achieved an important goal too, since alarm is greater in this case. It also may be forestalling development of a new ‘pause’, currently standing at a 5 yr decline.

The UAH has constrained the jiggery pokery on the recent end of the temperature curve up until now. With GISS jerking up their recent end satellite temps and hatchet work by climate wroughters on UAH and satellite temps in general over the past decade, they must feel less restraint to their wants.

Sorry for OT, but at Climate Audit Stephen McIntyre has a new article “Milankovitch Forcing and Tree Ring Proxies”

HadCRUT #4 & 5? Is that what you had last night for dinner?

Welcome to the Adjustocene.

Has HadSST4 come out?

Do they swap the hemispheres back?

Do they have as massive a change to the first half of the base period as going from v2 to 3, with a barely perceptible change to other decades?

Is there a seasonal signal in Hadsst SH (or was it NH) with an amplitude increasing linearly with time after 2000?

Does it still appear as if the maximum anomaly of 1998 is used as the reference rather than the mean of individual months in the base period?

These people are a joke.

A tragic joke, as at least millions of lives are at stake and trillions in treasure as a result of this antiscientific drivel, not fit for any purpose, except to enrich its perpetrators and enable globalism.

Thanks again Willis. The difference plot is interesting and anomalous.

Quoting Ole Humlum @ climate4you: Temporal stability of global air temperature estimates:

“… a temperature record which keeps on changing the past hardly can qualify as being correct …”.

Will they ever get it right⸮

“The science” (an unscientific formulation) is settled, except where it needs to be resettled, closer to the bogus narrative.