Guest Post by Willis Eschenbach

The HadCRUT record is one of several main estimates of the historical variation in global average surface temperature. It’s kept by the good folks at the University of East Anglia, home of the Climategate scandal.

Periodically, they update the HadCRUT data. They’ve just done so again, going from HadCRUT4 to HadCRUT5.

So to check if you’ve been following the climate lunacy, here’s a pop quiz. What did the new record do to the HadCRUT historical temperature trend?

• It decreased the trend, or

• It increased the trend?

Yep, you’re right … it increased the trend. You’re as shocked by that as I am, I can tell.

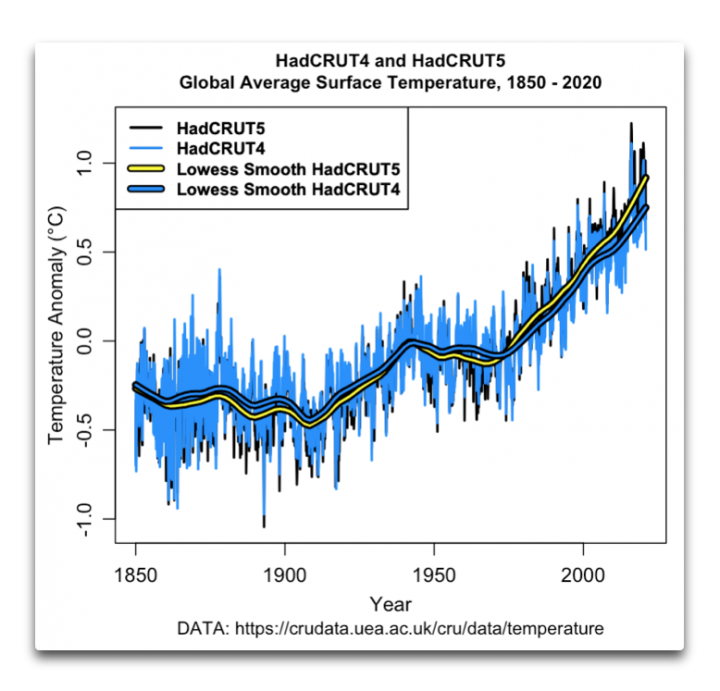

So … here’s the old record and the new record.

Figure 1. HadCRUT4 and HadCRUT5 temperature records. The yellow/black and blue/black lines are lowess smooths of each dataset.

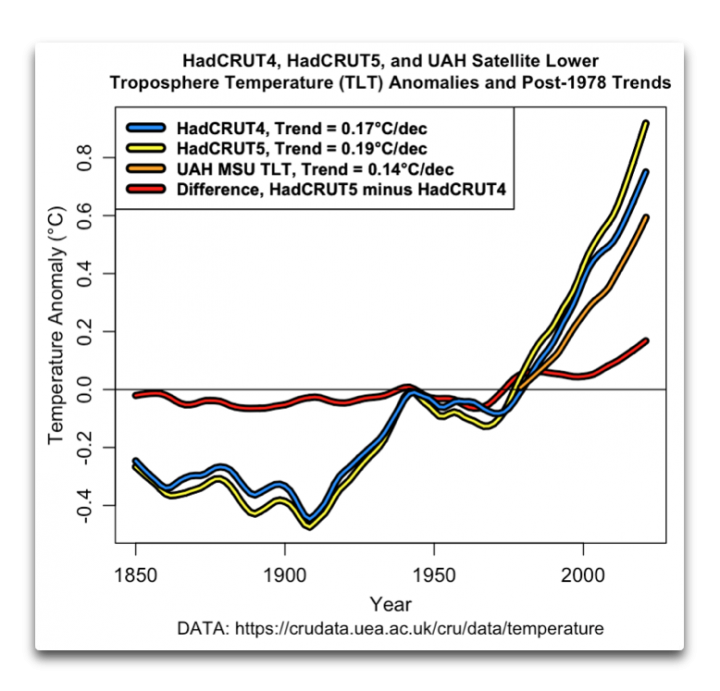

Let’s take a closer look at the changes. Here are just the lowess smooths, which give us a clear view of the underlying adjustments. I’ve added the University of Alabama Huntsville microwave sounding unit temperature of the lower troposphere (UAH MSU TLT) for comparison.

Figure 2. Lowess smooths of HadCRUT4 and HadCRUT5 surface temperature records, and the UAH MSU satellite lower troposphere temperature record. The yellow/black, blue/black, and orange/black lines are lowess smooths of each dataset. The red/black line shows the adjustments made to the HadCRUT4 dataset.
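For anyone who hasn't met a lowess smooth before, here is a minimal sketch of the idea in Python. It assumes tricube weights and a single local linear fit per point, with no robustness iterations; the function name `lowess_smooth` and the `frac` parameter are illustrative choices, and this is not the code used to draw the figures.

```python
def lowess_smooth(x, y, frac=0.3):
    """One-pass locally weighted linear regression with tricube weights.

    Illustrative only: frac is the share of points used in each local fit.
    """
    n = len(x)
    k = max(2, int(round(frac * n)))  # neighbours used in each local fit
    smoothed = []
    for x0 in x:
        # The distance to the k-th nearest point sets the local bandwidth.
        dists = sorted(abs(xi - x0) for xi in x)
        h = dists[k - 1] or 1e-12
        # Tricube weights: 1 at x0, falling to 0 at distance h and beyond.
        w = [(1 - min(abs(xi - x0) / h, 1.0) ** 3) ** 3 for xi in x]
        sw = sum(w)
        mx = sum(wi * xi for wi, xi in zip(w, x)) / sw
        my = sum(wi * yi for wi, yi in zip(w, y)) / sw
        sxx = sum(wi * (xi - mx) ** 2 for wi, xi in zip(w, x))
        sxy = sum(wi * (xi - mx) * (yi - my) for wi, xi, yi in zip(w, x, y))
        slope = sxy / sxx if sxx > 0 else 0.0
        # Evaluate the local weighted line at x0.
        smoothed.append(my + slope * (x0 - mx))
    return smoothed
```

A larger `frac` gives a stiffer curve; a smaller one follows the wiggles more closely.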

There were a couple of surprises in this for me. Normally, the adjustments are made on the older data and reflect things like changes in the time of observations of the data, or new overlooked older records added to the dataset. In this case, on the other hand, the largest adjustments are to the most recent data …

Also, in the past adjustments have tended to reduce the drop in temperature from ~ 1942 to 1970. But these adjustments increased the drop.

Go figure.

Anyhow, that’s the latest chapter in the famous game called “Can you keep up with the temperature adjustments”. I have no big conclusions, other than that at this rate the temperature trend will double by 2050, not from CO2, but from continued endless upwards adjustments …

After I voted today (no on tax increases), the gorgeous ex-fiancee and I spent the afternoon wandering the docks down at Porto Bodega, and looking at a bunch of boats that I’m very happy that I don’t own. She and I used to fish commercially out of that marina, lots of great memories.

My best regards to all,

w.

“Only the future is certain; the past is always changing.”–Chinese proverb

Seems like adjustments should bring the data CLOSER to the satellite data, not farther away.

CliSci has a fundamental problem: Theory says that increasing GHGs will heat the atmosphere and drive surface temperatures higher. To get significant surface warming, the UN IPCC climate models had to invent a tropospheric “hot spot” to reflect water vapor amplification. Since the satellites detect no “hot spot,” CliSci had to discredit UAH (see Nick Stokes’ comments). Mears, a fellow traveler, adjusted RSS higher to mimic surface and, to an extent, model trends.

There is nothing wrong with the physics around how GHGs behave. The problem is no one in climate science has spent any serious time or effort looking at how the earth responds and its natural ability to lose energy/heat.

It’s likely (note use of climate sciency term) the reason runaway warming didn’t occur in the past with significantly higher CO2 levels.

Willis, can you post a graph of RAW data that goes into the adjustments? That would be informative. It can be argued that RAW does not include truly needed adjustments. But it also can be argued that any adjustments that don’t increase the warming trend are dismissed out of hand, seems like. For my local station, the raw data is a noisy flat line back to 1893. Guess what the adjustments do?

Temperatures took an upswing about the same time as water use (about 1960). The upswing in irrigation and other sources of increased water vapor explains the temperature increase of HadCRUT4. I don’t expect HadCRUT5 to make much difference. https://www.researchgate.net/publication/338805648_Water_vapor_vs_CO2_for_planet_warming_14

Within the 4 square mile area with my house in the center, Weather Underground real-time read outs show several degrees difference, which varies from day to day, with no evidence that anomalies are consistent. Therefore, I call BS on the notion that grid infilling, whether using anomalies or actual temps, has any validity.

Compare the same site over time, that might tell you something – changing the rules and creating data tells you only that they didn’t like the old answer.

Please, don’t go bringing how the real world operates into this.

Next you’ll want error margins on all the imaginary bananas, that were interpolated from a known real banana on the other side of the continent.

If we subject the imaginary bananas to these provocative imposts of real observation that sort of scrutiny will cause the entire fruit-shop to fold-up and collapse into a bottomless chasm of figments and we’ll be right back to having only one banana again!

Who wants that? All advancement in banana-science lost!

Science needs these bananas!

I’ve suggested something like this in the past. Look at each station and determine its individual trend. Assign a + or – according to the slope.

Then just go around the globe adding up all the pluses and all the minuses and see what comes out!
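A minimal sketch of that tally in Python, assuming each station is just a list of years and a list of temperatures. The station names and numbers are made up for illustration, and an ordinary least squares slope is one possible reading of "individual trend".

```python
def ols_slope(years, temps):
    """Ordinary least squares slope of temps against years."""
    n = len(years)
    mx = sum(years) / n
    my = sum(temps) / n
    sxx = sum((x - mx) ** 2 for x in years)
    sxy = sum((x - mx) * (y - my) for x, y in zip(years, temps))
    return sxy / sxx


def tally_trend_signs(stations):
    """Count stations with rising, falling and flat trends.

    stations maps a station name to a (years, temps) pair.
    """
    tally = {"+": 0, "-": 0, "0": 0}
    for years, temps in stations.values():
        slope = ols_slope(years, temps)
        if slope > 0:
            tally["+"] += 1
        elif slope < 0:
            tally["-"] += 1
        else:
            tally["0"] += 1
    return tally


# Hypothetical stations, for illustration only.
example = {
    "station_a": ([2000, 2001, 2002], [10.0, 10.5, 11.0]),
    "station_b": ([2000, 2001, 2002], [12.0, 11.0, 10.0]),
    "station_c": ([2000, 2001, 2002], [9.0, 9.0, 9.0]),
}
print(tally_trend_signs(example))
```

Note that a sign count treats a barely positive slope the same as a steep one, so it says how many stations lean each way, not how large the trends are.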

Well paint me shocked.

Just more of the same, the same desperation that is, nature just ain’t playing along so needs must in their noble cause of saving humanity.

They keep telling us that climate models are right and they keep proving it with record homogenisation.

When does this fiddling get called out for what it really is?

When the U.S. Social Cost of Carbon (SCC) gets before the Supreme Court.

“When does this fiddling get called out for what it really is?”

_________________

The second somebody can demonstrate, in peer-reviewed comments or rebuttals, that the various homogenisation techniques used by the many different groups, all of which produce broad agreement on trends, are all flawed. Critics have had an awfully long time to accomplish this simple task – to no avail as yet. Lots of heat; very little light.

Rusty strikes again

Here is rusty’s car he is trying to sell you

You have had an AWFUL long time to produce empirical scientific evidence of warming by atmospheric CO2

You still remain an empty sock, awaiting a hand up your **** so you can graduate to being a muppet. !

First, every “adjustment” made to the previous data is a clear demonstration that the techniques are flawed—otherwise, there would be no need for a new version, would there?

Second, not only is there no “broad agreement on trends”, there’s not even broad agreement between HadCRUT4 and HadCRUT5: their error bars don’t overlap.

And the HadCRUT5 trend is no less than 40% higher than UAH … “broad agreement”? I don’t think so.

As to studies on the matter, see Dr. Roy’s study of the flawed UHI adjustment methods here and here.

w.

This is the sort of manic “adjustment” you condone

Are you REALLY so ANTI-SCIENCE that you think this sort of activity is ANYTHING BUT FRAUD !!

And here are the nearest sites to Alice Springs, to show that they are all similar to the RAW Alice Springs data

There is absolutely ZERO reason for this sort of fraudulent adjustment

Let’s add Bourke as well

Why haven’t they “homogenised” (lol) to Deniliquin temperatures?

Dang! Those are serious “adjustments”…

D’OH of course they do.. that is the AIM of the homogenisation routines..

to CREATE WHAT YOU WANT. !

All the major groups use the same pre-adjusted data from NCDC/GHCN.

Why are you so DELIBERATELY IGNORANT about these things !

Is it you are just DUMB, or is it willful DECEIT.

If people actually bothered to read the discussion paper they would find the paragraph:

“The most obvious difference is the relative warming of HadCRUT5 between around 1970 and 1980. This arises from improved estimates of biases in measurements made in ship engine rooms at that time. Engine room measurements were biased warm in the 1960s with the warm bias dropping over time, first between 1970 and 1980 and then again between the early 2000s and present. There are also changes around the Second World War, where changes to the assumptions made in HadSST4 about how measurements were taken shifted the mean and broadened the uncertainty range, reflecting the lack of knowledge of biases during this difficult period (Kennedy et al., 2019).”

https://www.metoffice.gov.uk/hadobs/hadcrut5/HadCRUT5_accepted.pdf

Which provides the reason why the difference is most marked after 1970. Nothing to do with infilling.

Thanks, Isaac. I read the discussion paper. I took exception to their claim of “improved estimates of biases in measurements made in ship engine rooms at that time. Engine room measurements were biased warm in the 1960s with the warm bias dropping over time, first between 1970 and 1980 and then again between the early 2000s and present.”

While they are assuredly using different estimates, the claim that they are “improved” is … well … questionable. For example, why were there no changes in their estimates between 1980 and 2000?

In addition, I took a look at their bucket vs engine temperature values. They claim that bucket temperatures may read warm because of sunshine on the buckets. But all the previous studies I read say that bucket temperatures may read cold because of both evaporation and temperature exchange with the generally cooler air.

Finally, we’ve had satellite measurements of sea surface temperatures since 1981 … but HadCRUT is not using them. Why? I don’t know. They get a mention in their paper on the adjustments, but they’ve chosen to use adjusted, re-adjusted, and re-re-adjusted engine water inlet temperatures instead.

Me, I pay little attention to HadCRUT except to laugh at them. If you ever read the “HARRY READ-ME” file, or my link in the head post about Climategate, you’ll understand why I consider them a bunch of lying cheats who are not scientists in any sense of the word.

w.

“Here are just the lowess smooths, which give us a clear view of the underlying adjustments. I’ve added the University of Alabama Huntsville microwave sounding unit temperature of the lower troposphere (UAH MSU TLT) for comparison.”

________________________

There’s not much difference between them. Willis has excluded error margins in the trends here (he has also excluded the RSS satellite TLT data). For comparison, the ‘post 1978 trends’ in HadCRUT4, HadCRUT5 and UAH all show statistically significant warming and all overlap when error margins are taken into account. RSS_TLT is also within the error margins of all the other producers’ trends and is in far better agreement with the surface trends than is UAH.

1) UAH’s 40-year warming trend (0.14 C/decade) is less than HADCrut and RSS, overlapping error bands notwithstanding.

2) Mears adjusted RSS to mimic surface trends.

ARGO trends (accounting for ending on a Super El Nino) are arguably closer to UAH.

UN IPCC climate model average trends exceed all of them.

The science is not settled and everyone needs to get a grip.

“Mears adjusted RSS to mimic surface trends.”

Any evidence to support that? RSS TLT v4 methodology is in the peer-reviewed literature. Not aware of any rebuttals.

TheFinalNail:

If you think the error margins overlap, why are you complaining about me not including RSS? Given what you say, there’s no reason to prefer any one of them over any other of them …

But that’s not the case. The HadCRUT error margins don’t overlap. They start not overlapping about 1975, and they don’t overlap at all since 2000.

Me, I think that the stated uncertainty should include any and all “adjustments” made to the data … but obviously, HadCRUT doesn’t.

w.

Willis,

“If you think the error margins overlap, why are you complaining about me not including RSS? Given what you say, there’s no reason to prefer any one of them over any other of them …”

It’s not a question of what I ‘think’; it’s a demonstrable fact that all the data sets, surface and satellite, show statistically significant warming post 1978 and that the error margins in their trends overlap.

“But that’s not the case. The HadCRUT error margins don’t overlap. They start not overlapping about 1975, and they don’t overlap at all since 2000.”

______________

Can you source this please? HadCRUT4 since 2000 shows +0.168 ±0.089 °C/decade (2σ) warming. HadCRUT5 would need to show a best estimate of at least +0.4°C/decade warming since 2000 for the lower bound of its error margins not to overlap with HadCRUT4:

http://www.ysbl.york.ac.uk/~cowtan/applets/trend/trend.html
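The arithmetic behind that threshold can be sketched as follows. The HadCRUT4 figures are the ones quoted above; the HadCRUT5 margin is not given in this thread, so it is left as an input, and the function name is purely illustrative.

```python
def min_best_estimate_for_no_overlap(ref_best, ref_margin, new_margin):
    """Smallest best estimate whose lower bound clears the reference upper bound.

    The new interval's lower bound is best - new_margin; it stops overlapping
    the reference interval once it exceeds ref_best + ref_margin.
    """
    return ref_best + ref_margin + new_margin


# HadCRUT4 since 2000, as quoted above: +0.168 ±0.089 °C/decade (2 sigma).
# ASSUMPTION: a HadCRUT5 margin equal to HadCRUT4's, purely for illustration.
threshold = min_best_estimate_for_no_overlap(0.168, 0.089, 0.089)
print(round(threshold, 3))
```

Under that equal-margin assumption the threshold is about +0.35 °C/decade; the +0.4 figure would correspond to a somewhat wider HadCRUT5 margin.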

significant 🤓

The error estimates are at the HadCRUT 4 site. The Had4 estimates are there as a text file, but the Had5 estimates are only available, as far as I can tell, as a NetCDF file.

The numbers I gave are the 1 sigma values.

w.

WRONG

There is only ONE set of pristine data, and the trend matches UAH over that area very closely. Just as UAH match unadjusted balloons, even NOAA’s own satellite data matches UAH well.

RSS V4 matches the surface data BECAUSE THEY WANT IT TO.

They even use “climate models” to do their manic adjustments. It has become a TOTAL FARCE.

Which of the surface data sets show the Arctic a similar temperature or slightly warmer than now in 1940 ?

For reference, the error margins associated with the trends in the current versions of RSS and UAH overlap. Since 1979 these are:

UAH: +0.137 ±0.051

RSS: +0.216 ±0.054

Both shown in °C/decade at 2σ confidence. http://www.ysbl.york.ac.uk/~cowtan/applets/trend/trend.html
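A quick way to check the overlap claim from the two figures just quoted is to turn each into an interval and compare the bounds. This is only a sketch of that comparison; the helper names are illustrative.

```python
def interval(best, margin):
    """Return (lower, upper) bounds for best ± margin."""
    return (best - margin, best + margin)


def intervals_overlap(a, b):
    """True if two (lower, upper) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Trends since 1979 in °C/decade at 2 sigma, as quoted above.
uah = interval(0.137, 0.051)
rss = interval(0.216, 0.054)
print(uah, rss, intervals_overlap(uah, rss))
```

The UAH upper bound (about 0.188) sits above the RSS lower bound (about 0.162), so the two ranges do overlap, though only at their edges.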

There is no evidence to support the defamatory allegation that RSS V4 was changed to match surface trends. Their methods were published in a reputable peer reviewed journal in 2016 and have not been rebutted or subjected to peer reviewed comment, to my knowledge.

How were these bounds calculated?

Unless they were obtained with the uncertainty methods in the GUM, they are meaningless. Simply taking the s.d. of the last step (the linear regression) and multiplying by 2 is misleading at best.
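For reference, the calculation being criticised here, twice the standard error of an ordinary least squares slope, looks roughly like this. It is a sketch of the naive method only: it ignores measurement uncertainty and autocorrelation, and nothing in this thread says it is how the quoted bounds were actually produced.

```python
import math


def trend_with_naive_2sigma(t, y):
    """OLS slope and twice its standard error from the regression residuals."""
    n = len(t)
    mt = sum(t) / n
    my = sum(y) / n
    stt = sum((ti - mt) ** 2 for ti in t)
    sty = sum((ti - mt) * (yi - my) for ti, yi in zip(t, y))
    slope = sty / stt
    intercept = my - slope * mt
    # Residual sum of squares around the fitted line.
    rss = sum((yi - (intercept + slope * ti)) ** 2 for ti, yi in zip(t, y))
    # Standard error of the slope, with n - 2 degrees of freedom.
    se = math.sqrt(rss / (n - 2) / stt)
    return slope, 2 * se
```

The margin here reflects only the scatter of the points around the fitted line, which is the objection being raised: any uncertainty in the individual values themselves never enters the calculation.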

Does Hadcrud show NO WARMING from 1980-1997?

And NO WARMING from 2001-2015 ?

That is what happens when you stuff around with the raw data

You DESTROY ANY MEANING it might have once had,

.

…. ending up with a meaningless load of GIGO !

Eg….. the warming period around the 1940s which has been INTENTIONALLY AND FRAUDULENTLY REMOVED

I know some people like to use satellite “data” to prove whatever point but the simple fact remains it is just as bad as any other “data” set used in climate science when the methodology used to arrive at the final numbers is examined.

[Snipped. Violates site rules, and is slimy as hell.]

[Snipped. Same reason. Stop it.]

Colleagues were once at the global leading edge of knowledge about interpolation of grades and tonnes between assayed drill holes for the purpose of ore resource estimation.

Here is an important learning point from that experience.

The purpose of interpolation between drill holes was not to invent grades of ore between the drill holes. It was primarily to help to understand the uncertainty of the final estimates of grades and tonnes, by making a range of assumptions about the interpolations for sensitivity analysis, to see what the wildly high and wildly low assumptions might mean in terms of $$$ profit and loss. These uncertainty estimates were taken to the bankers who used them to understand the risk of lending money to develop the mine.

The real, useful values were the assays of intervals along the drill core. These are analogous to actual measured temperatures at stations. The interpolated block grades, just mathematical calculations, are analogous to the grid cell infilling that Nick Stokes goes on about. The actual assays were often repeated over and over to estimate their uncertainty as well. (This step cannot be done with past temperatures). When we made a decision whether to mine or to walk away, it was based on the assay results (and other factors not relevant here). It was NOT based on the mathematically interpolated values. If the Stock Exchange thought something was fishy, they asked about the assay results from the drill holes, not about the interpolated, imaginary values.

So, the lesson here is that interpolation is OK ONLY when it is used to help estimate uncertainty of actual temperature measurements. Interpolated values have no place masquerading with presumed importance similar to measured values for credibility. They are not similar. One is an actual physical measurement, recorded at the time. The other is an imaginary mathematical construct that can vary, or can be manipulated, because it has no pin back to the reality of a value recorded at the moment of observation.

As to Nick Stokes saying that to make a global average one has to assume values between the measured points – that is correct, but the premise is horribly wrong. There is inadequate past data to make a global average, so that interpolation should never have been attempted (except to confirm that it was an invalid exercise).

As to uncertainty, I await a valid description about how you estimate the proper uncertainty of an interpolated value, which is a guess that you can vary at whim.

Thanks, Geoff. Always good to hear from someone with real-world experience.

w.

“It was primarily to help to understand the uncertainty of the final estimates of grades and tonnes”

So what are those estimates based on if not inference about what lies between the drill holes? People aren’t staking their investment on mining those drill holes. They are basing it on the inferred value of the whole ore body. That is what the whole business of kriging etc is about. And yes, they want to know about the uncertainty of the estimate. But the primary purpose of drilling is to sample the ore body, to get an estimate of mass and grade, i.e. what lies between the drill holes.

“Resource estimation”. It was the phrase you used.

My problem with the “infilling” is that computers don’t do edges very well, but nature loves edges.

If you ask a computer to “infill” between the center of a cloud and the surrounding clear air, you get a gradual transition from full cloud to no cloud.

But in the real world, you’re basically either in the cloud or out of it. Very little halfway.
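A toy version of the cloud example, assuming plain linear interpolation between one "full cloud" value and one "clear air" value. Real infilling schemes are more elaborate; this only shows the gradual transition described above.

```python
def linear_infill(left, right, n_between):
    """Linearly interpolate n_between values strictly between left and right."""
    step = (right - left) / (n_between + 1)
    return [left + step * i for i in range(1, n_between + 1)]


# Cloud fraction 1.0 at the cloud centre, 0.0 in the surrounding clear air.
print(linear_infill(1.0, 0.0, 3))  # [0.75, 0.5, 0.25]
```

The interpolation reports 75%, 50% and 25% cloud at the in-between points, where the real answer at each point would be close to either 1 or 0.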

Same in the ocean. If you sail west from the coast by my house in Northern California, you’ll be in pea-soup green water and fog for fifty miles (80 km) or so. Then, in an instant, you come out of the fog to find clear blue water and clear skies. There’s no gradual transition, you can see the line in the ocean.

As a result, I’m always suspicious of “interpolation” or “infilling” or the like. Doesn’t mean it can’t work, don’t misunderstand me. It absolutely can.

But it can also not work, and stupendously so. What is the color of a spot somewhere between the center of a freckle and the surrounding skin? Hint: it’s not half-dark.

Or as the poet said:

w.

Willis,

These often-planar disruptions are found in such ore resource estimation work. A fault is one example. A geological contact between 2 different rock types is another. A dyke is another. They are common.

The usual way to cope with them is to divide the resource into parts either side of the discontinuity and calculate separately, but then to look at the whole picture to see which tonnes to send to the waste dump.

AFAIK, nobody tried the climate research method of temperature anomalies normalised to some average in an illusory attempt to better understand the ore disposition.

Nick, ore resource estimation deals with the early stages of evaluation. That is what I was discussing. Ore resource becomes ore reserve once strict criteria about the quality of the work are met.

Who controls the quality of work of the temperature adjusters?

……………………………

Nick, you asked “So what are those estimates based on if not inference about what lies between the drill holes”.

You can calculate tonnes and grade by averaging assays and using a few other variables, or you can do tonnes and grade on interpolated values. If your work is good, they might agree well, but they are not the same measure. By analogy, the measured past temperatures are a primary resource, while the interpolated values should never be confused with them and used in their place for policy planning because they are capable of adjustment for good reasons and bad.
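A one-dimensional toy of that point: the average of the measured samples and the average of values interpolated onto a regular grid are different quantities, and they diverge when the samples are unevenly spaced. The positions and grades below are invented, and linear interpolation stands in for whatever method is actually used.

```python
def sample_mean(values):
    """Plain average of the measured values."""
    return sum(values) / len(values)


def interpolate(positions, values, x):
    """Piecewise-linear interpolation between neighbouring samples."""
    pairs = list(zip(positions, values))
    for (x0, v0), (x1, v1) in zip(pairs, pairs[1:]):
        if x0 <= x <= x1:
            return v0 + (v1 - v0) * (x - x0) / (x1 - x0)
    raise ValueError("x lies outside the sampled range")


def interpolated_mean(positions, values, grid):
    """Average of the interpolated values at every grid point."""
    return sum(interpolate(positions, values, x) for x in grid) / len(grid)


# Hypothetical samples: two close together, one far away.
positions = [0, 1, 10]
grades = [1.0, 3.0, 2.0]
grid = range(0, 11)

print(sample_mean(grades), interpolated_mean(positions, grades, grid))
```

Here the sample average is 2.0 while the gridded average comes out near 2.36, because the interpolation fills the long empty stretch with values inferred from its two end points.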

What is your best estimate of the uncertainty of the observed daily temperature measurements that BOM curated over the last 100 years? BOM have declined to tell me their estimate, if they have one, so the bankers would not let them in the door if it came to wanting development money.

That, in a nutshell, is one big difference between hard science and post-modern waffle.

Geoff S

Nick,

I was there before the era of interpolation. FFS. We found, evaluated and opened a number of profitable mines based on the drill core results, with no interpolation like that used now. We prospered and went on to find more. Tremendous new wealth to be shared by all Australians. On last count, over $70 billion in sales so far and climbing.

It is possible to proceed to mine based on data without interpolation. Likewise, it is possible to do research on original temperatures, used raw as they were measured. This business of statistical manipulation is not required for an understanding of the climate of the Earth. It is a fancy accessory whose main claim to fame so far is converting hard numbers to soft numbers suitable for adjustment to fit preconceptions. Again, hard science versus post-normal. The gold standard is the hard science, take it to the bank.

Geoff

“I was there before the era of interpolation”

This is just very basic. You drill some holes, assay, and infer something about the whole orebody. It doesn’t matter what numerical technique you use, if any. You are concluding something about the region outside the samples, based on what you found in those holes.

So we do with temperature readings. BOM looks in a box in Richmond, and tells you what the temperature was in overall Melbourne. People who live here find that useful.

“I was there before the era of interpolation”

It’s been going on for as long as resources have been extracted.

Petroleum engineering training in the ’70s included a plethora of empirical methods to both estimate rock and fluid properties at points in inner space, and to interpolate them. Most (not all) have been replaced with simulations that are, in effect, interpolations. Both empirical and simulation processes combine many techniques for each property desired, and can handle disparate estimation techniques just fine. There would be next to no modern oil biz without reservoir simulation…

bigoilbob,

I agree with using benefits of advancing technology, but that is not the argument.

Courts of law still allow observational evidence, but reject hearsay.

Actual, measured temperatures are evidence. Interpolated values are hearsay and must be kept in a separate category of reliability.

Geoff S

None of the relevant common elements of AGW modeling and reservoir simulation can be called “advanced technology”. The fundamentals of stochastic, spatial interpolation have been known for quite a while. The “advanced technologies” are mostly computing advances that enable us to do more, faster. From their arithmetic bases, to the fact that they have made many industries many billions, the basics of how to do this are not disputed in superterranea.

Nick,

I’m in the UK so had to look up Richmond. I see it is an inner suburb 3 km from the centre of Melbourne and well away from the city’s outer suburbs. Please tell me how a box in Richmond can tell you what the overall temperature in Melbourne is.

Ask the TV stations. Ask the newspapers. They broadcast those readings, and people find them meaningful.

Nick, Let’s move into non-deflection mode.

Care to answer what was posed above?

1. As to uncertainty, I await a valid description about how you estimate the proper uncertainty of an interpolated value, which is a guess that you can vary at whim.

2. What is your best estimate of the uncertainty of the observed daily temperature measurements that BOM curated over the last 100 years?

Geoff S

Geoff,

The interpolated values are never quoted, or even explicitly calculated. What is relevant is the uncertainty of the derived quantity, the global average. This is extensively dealt with in the papers written about the indices; HADCRUT 5 is here

https://www.metoffice.gov.uk/hadobs/hadcrut5/HadCRUT5_accepted.pdf

Section 3.1 introduces the error model.

Hypotheses are disconfirmed when hypothetical projections exceed empirical observations by more than 2 standard deviations for a statistically significant duration.

To avoid CAGW disconfirmation, Leftists have the choice of either reducing hypothetical projections (thereby making CAGW less scary) or manipulating the empirical data higher to better match projections…

From experience, we know Leftists always choose the latter option…

Unfortunately for Leftists running the HADCRUT5 dataset, there are still honest scientists involved in the UAH6.0 global temperature anomaly dataset and the disparity between the two is increasing rapidly.

When the PDO and AMO eventually reenter their respective 30-year cool cycles, the disparities between CAGW hypothetical projections and HADCRUT5 vs UAH6 observations will become so huge, the CAGW hypothesis and HADCRUT5 will become laughingstocks and a major scandal.

Leftists are digging their own graves by their blatant manipulations of observations and their irrational CAGW hypothetical projections…

Patience, folks. This silly CAGW hypothesis’ days are numbered.

“Patience, folks. This silly CAGW hypothesis’ days are numbered.”

So is Marxism, but don’t hold your breath.

I’ve collected many a sea squirt from under the floating docks at Spud Point Marina in Bodega Bay.

With lunch following at Ginochio’s.

Good times were had by all, except the sea squirts, who gave up their green blood.

We put them into nylon nets, though, and released them back into the wild suspended under the docks, where they can replenish their supply and get on with it

The picture shows a group of sea squirts, hard-partying on a lanyard suspended by a long time friend and collaborator.

I was always struck by the number of boats that looked like they never left the docks.

“Spud Point Marina”? Well la, di, da, ain’t you upmarket! Me, I hang with the ragamuffins and scurve dawgs over at Porto Bodega.

If you get out this way again give me a shout, my treat at Ginochios … that is if Governor Gruesome ever lets the restaurants out of jail again.

w.

PS—What do you do with green sea squirt blood?

Never got to Spud Point’s upmarket side, Willis. I usually ended up horizontal and hanging over the edge of the floating docks looking for sea squirts. Hardly romantic. 🙂

But the floating docks have lots of underwater surface area, making it a good sea squirt hunting ground. Last time we were there, the local ecology looked very healthy. We’d never before seen such a prolific array of organisms growing below the water line.

The harbor master was always accommodating. Last time they let us set up in their laundromat, with a small swinging bucket centrifuge and plasti-pak syringes. It all worked out very well.

Sea squirt blood is green. Mr. Spock would identify. Their entire hematology is *very* different from ours. From all evidence it is unchanged from all the way back to the Cambrian. It’s so different from ours, theirs may as well be a space-alien blood system.

In the picture, the green circles are morula cells. They’re part of the immune system. The surface is covered with small vacuoles that release their contents when immune-challenged. The green color is from “tunichrome” which is a large tripodal anti-oxidant molecule synthesized from the amino acid tyrosine.

Tunichrome may be part of the wound-healing mechanism. It can sclerotize underwater, forming a kind of plastic layer and closing a wound. Wound-closing is a big problem underwater.

The colorless circles are signet ring cells. No one knows exactly what they do. But they’re about 50% of the blood cells. They have (usually) one large inner vacuole filled with air-sensitive trivalent vanadium and 1 Molar sulfuric acid.

The vanadium is concentrated about 1 million-fold from sea water. It starts out vanadium +5. Two electrons are then added. The detailed biochemical mechanism is still mysterious.

We were interested in the signet-ring cells, trying to figure out all the stuff I just described. Thanks for asking. 🙂

I’d really enjoy meeting up, Willis. Although that part of my work is done, my collaborators are good friends and live in Petaluma. We plan to visit sometime. A trip to Bodega would be a necessary part of the visit. I’ll let you know. I’m sure our beautiful ex-fiancees would get along well. 🙂

Upmarket? Well, Spud Point has a laundromat and bathrooms … in any case, give me a shout when you’re in the neighborhood.

w.

Perhaps we all should take a much longer term point of view…

https://breadonthewater.co.za/2021/03/04/the-1000-year-eddy-cycle/

Can anyone explain to me why temperature data isn’t de-seasonalized by appropriate differencing instead of manipulated into anomalies using some arbitrary period?
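To make the two options concrete, here is a sketch on a synthetic monthly series, assuming an invented seasonal cycle plus a steady trend. "Anomalies" here means subtracting each calendar month's mean over a chosen base period, and "differencing" means subtracting the value twelve months earlier; the function names and numbers are illustrative.

```python
def anomalies(monthly, base_years):
    """Subtract each calendar month's mean over the first base_years years."""
    clim = [
        sum(monthly[m + 12 * y] for y in range(base_years)) / base_years
        for m in range(12)
    ]
    return [v - clim[i % 12] for i, v in enumerate(monthly)]


def seasonal_difference(monthly, lag=12):
    """Subtract the value from lag months earlier (year-over-year change)."""
    return [monthly[i] - monthly[i - lag] for i in range(lag, len(monthly))]


# Synthetic data: a made-up seasonal cycle plus a trend of 0.5 per month.
season = [0, 1, 2, 3, 4, 5, 6, 5, 4, 3, 2, 1]
series = [season[i % 12] + 0.5 * i for i in range(36)]

print(seasonal_difference(series)[:3])   # constant: 12 months of trend
print(anomalies(series, base_years=2)[:3])
```

Both remove the seasonal cycle. Differencing needs no base period but turns levels into year-over-year changes, so a steady trend shows up as a constant and the first year of data is lost; anomalies keep the levels but depend on the base period chosen.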

The manipulation of measured temperature data seems devoid of statistical rigor.

As one simple example. I was headed out to eastern WA in September during the height of the wildfires. After hearing Gov. Newsom claim that climate change was making the fires worse, I decided to download all of the data for the 7 stations in the Climate Reference Network into Excel so I could analyze the data during my flight. I always look at the data when I can so I just graphed the raw data. None of the 7 stations appeared to trend over the 15 years or so of data availability. It was a simple matter to de-seasonalize the data and test for a time trend. None of the 7 stations had a statistically significant trend over the period analyzed. All told, it took about an hour to analyze the 7 stations. Yet, to my knowledge, Gov. Newsom got almost no pushback from the press on his claim of climate-caused wildfires. I should mention that I also looked at rainfall and while the data is noisy, there isn’t a trend there either.

I have yet to find a station in the CRN that displays a significant positive time trend, though I have only looked at 25 or so.

There are videos out “there” of a drone flying along a Cal. freeway lighting the countryside on fire along a kilometer length in 2020, ergo “global warming is making the fires unprecedented”.

Ah, another person wondering why we aren’t using time series analysis instead of simple averaging and regression.

I see they’re still having difficulty tidying away the blip. Anyone wonder ‘why the blip?’

JF

Mr Eschenbach,

I was interested in the actual impact that this increase in trend might have, and wondered how, if the figures generated by ground observation stations were being artificially increased, they compared to the satellite data. Of course, these are measuring different things, but I would expect some commonality.

I am far less sophisticated than you are in my ability to mine original data, so I just went to Woodfortrees and dialled up the ‘Linear Trend’ item for the major data sets between 1980 and 2020. Again, this is a very simplistic measure, but I expected to find that the satellite data showed a flatter trend compared to the ground stations.

My ‘eyeball estimates’ of the temperature anomaly change over this period are:

UAH – 0.55

RSS – 0.85

HAD – 0.7

GISS – 0.7

Which is, I suppose, about what I would expect. Though I am rather surprised at the RSS figure. I wonder how that comes out so much higher than the rest? Perhaps someone is taking the average of the two satellite figures, which coincidentally also comes out to 0.7….
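Those eyeball figures can be put on the same footing as the per-decade trends quoted elsewhere in the thread with a little arithmetic. This sketch assumes the change is spread evenly over the 40 years from 1980 to 2020, which is what a linear trend line implies.

```python
# Eyeball estimates of total change, 1980 to 2020, as listed above (°C).
changes = {"UAH": 0.55, "RSS": 0.85, "HAD": 0.7, "GISS": 0.7}

decades = (2020 - 1980) / 10

# Convert each total change to a rate in °C per decade.
per_decade = {name: change / decades for name, change in changes.items()}

# Mean of the two satellite figures.
satellite_mean = (changes["UAH"] + changes["RSS"]) / 2

for name, rate in per_decade.items():
    print(f"{name}: {rate:.4f} °C/decade")
print(f"Mean of UAH and RSS: {satellite_mean:.2f} °C")
```

On these numbers UAH works out to roughly 0.14 °C/decade and RSS to roughly 0.21 °C/decade, close to the since-1979 trends quoted earlier in the comments, and the mean of the two satellite figures is 0.7.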