By Andy May

As described in my previous post, the ocean “mixed layer” is sandwiched between the very thin “skin” layer at the ocean surface and the deep ocean. The skin layer loses thermal energy (“heat”) to the atmosphere primarily through evaporation, gains thermal energy from the Sun during the day, and constantly attempts to come to thermal equilibrium with the atmosphere above it. During the day, with the Sun beating down on the ocean and calm clear conditions, the skin layer might be as thick as ten meters. At night, especially under windy conditions, it can be less than a millimeter thick.

The mixed layer is a zone where turbulence, caused by surface currents and wind, has so thoroughly mixed the water that temperature, density, and salinity are relatively constant throughout the layer. Originally, the “mixed layer depth,” the name for the base of the layer, was defined as the point where the temperature was 0.5°C different from the surface (Levitus, 1982). This was found to be an inadequate definition near the poles in the winter, because the temperature there, in certain areas, can be nearly constant to 2,000 meters, but the mixed layer turbulent zone isn’t that deep (Holte & Talley, 2008). Two of the areas that create problems when picking the mixed layer depth with the 0.5°C criterion are the North Atlantic, between Iceland and Scotland, and the Southern Ocean southwest of Chile. The areas are noted with light blue boxes in Figure 1.
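To make the 0.5°C criterion concrete, here is a minimal Python sketch of a threshold-based mixed layer depth pick. The function name `mixed_layer_depth` and both temperature profiles are hypothetical and invented for illustration; this is not the Holte and Talley method, only the simple temperature cutoff described above.

```python
import numpy as np

def mixed_layer_depth(depth_m, temp_c, threshold_c=0.5):
    """Shallowest depth where temperature differs from the surface value
    by threshold_c, or None if the profile never reaches that difference."""
    depth_m = np.asarray(depth_m, dtype=float)
    temp_c = np.asarray(temp_c, dtype=float)
    diff = np.abs(temp_c - temp_c[0])          # difference from the surface value
    hits = np.nonzero(diff >= threshold_c)[0]  # levels at or past the cutoff
    if hits.size == 0:
        return None
    i = hits[0]
    # Interpolate linearly between the two levels that bracket the cutoff.
    d0, d1 = depth_m[i - 1], depth_m[i]
    x0, x1 = diff[i - 1], diff[i]
    return float(d0 + (threshold_c - x0) * (d1 - d0) / (x1 - x0))

# Hypothetical mid-latitude profile: a clear thermocline below 50 m.
print(mixed_layer_depth([0, 10, 20, 50, 100, 200],
                        [20.0, 20.0, 19.9, 19.8, 18.8, 15.0]))  # about 65 m

# Hypothetical polar winter profile: nearly isothermal to 2,000 m.
print(mixed_layer_depth([0, 500, 1000, 2000],
                        [2.0, 1.95, 1.9, 1.85]))  # None, the cutoff is never reached
```

The second call shows the failure mode: when the water column is nearly isothermal, the cutoff either is never reached or is reached far below the zone that is actually being mixed.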

Figure 1. The North Atlantic and Southern Ocean areas where defining the mixed layer depth is difficult because of downwelling surface water during the local winter.

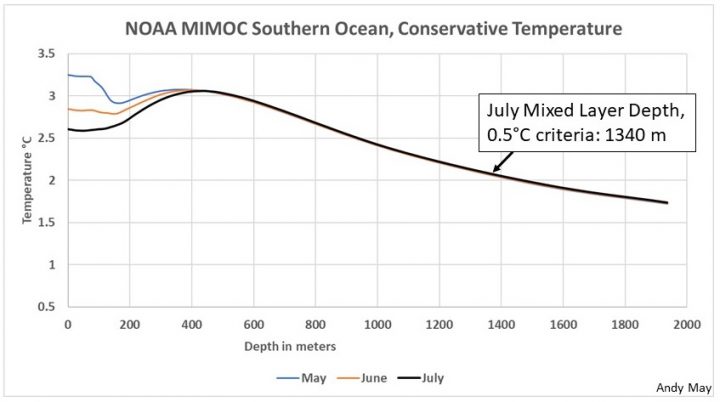

The regions shown in Figure 1 are areas where significant downwelling of surface water to the deep ocean takes place. These are not the only areas where this happens, but they often contain nearly constant temperature profiles for the upper 1,000 meters, or even deeper. Figure 2 shows the average July temperature profile for the Southern Ocean area in Figure 1.

Figure 2. A Southern Ocean July average temperature profile from the blue region shown in Figure 1. The data used to make the profile is from more than 12 years, centered on 2008. Data from NOAA MIMOC.

As explained by James Holte and Lynne Talley (Holte & Talley, 2008), the deep convection in this part of the Southern Ocean has distorted the temperature profile to such an extent that a simple temperature cutoff cannot be used to set the mixed layer depth. Numerous solutions to the problem have been proposed over the years and these are listed and discussed in their article. Their proposed methodology is used by Sunke Schmidtko to define the mixed layer in the NOAA MIMOC dataset discussed below (Schmidtko, Johnson, & Lyman). The Holte and Talley method is complicated, as are many of the other solutions. It does not seem that a generally accepted methodology for defining the mixed layer has been found to date.

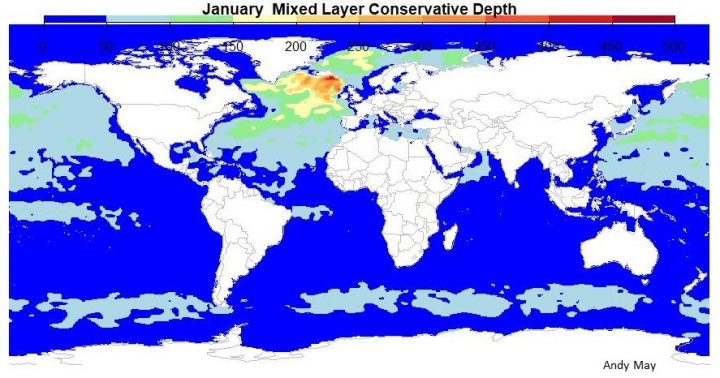

Depending upon location and season, the mixed layer depth changes. It is thickest in the local winter in the higher latitudes. There it can extend to 400 meters below the surface or farther using the Holte and Talley logic, and much deeper using the 0.5°C temperature cutoff. Figure 3 shows a map of the mixed layer depth in January.

Figure 3. Ocean mixed layer depth in January using the Holte and Talley logic. The oranges and reds are 400 to 500 meters. Data from NOAA MIMOC.

However, the Northern Hemisphere mixed layer thickness thins during the northern summer months and it thickens in the Southern Hemisphere, especially in the Southern Ocean surrounding Antarctica, as shown in Figure 4.

Figure 4. The ocean mixed layer depth in July using the Holte and Talley logic. Again, the oranges and reds are 400 to 500 meters. Data from NOAA MIMOC.

The thicker mixed layer zones always occur in the local winter and reach their peak near 60° latitude as seen in Figure 5.

Figure 5. Average mixed layer thickness by latitude and month. The thickest mixed layer is reached in the Southern Hemisphere at about 55 degrees south. In the Northern Hemisphere the peak is reached around 60 degrees. The mixed layer depth in this plot is computed using the methodology developed by Holte and Talley. Data from NOAA MIMOC.

The significance of the Mixed Layer

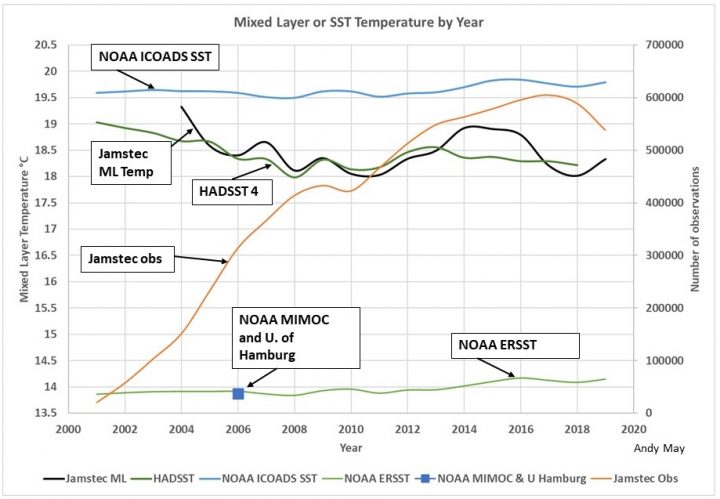

In our previous post, we emphasized that the mixed layer is in thermal contact with the atmosphere, with a small delay of a few weeks. It also has about 22 times the heat capacity of the atmosphere, which smooths out the radical changes in atmospheric temperature caused by weather events. Thus, when looking at climate, which is much longer term than weather, observing the trend in mixed layer temperature seems ideal. In Figure 6 we compare the yearly global average mixed layer temperature from Jamstec, MIMOC and the University of Hamburg to the global sea-surface temperature (SST) estimates from the Hadley Climatic Research Unit and NOAA. These are not anomalies; they are actual measurements, but they are all corrected and gridded. I have simply averaged the respective global grids.
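For readers who want to experiment with averaging a global grid, the sketch below shows one common approach: weighting each cell by the cosine of its latitude, because cells on a regular latitude-longitude grid shrink toward the poles. This is an assumption made for illustration. The post does not state how the cells were weighted, and the function name `global_mean` and the tiny three-row grid are hypothetical.

```python
import numpy as np

def global_mean(grid, lats_deg):
    """Area-weighted mean of a (lat, lon) grid; empty (NaN) cells are skipped."""
    grid = np.asarray(grid, dtype=float)
    lat_weights = np.cos(np.deg2rad(np.asarray(lats_deg, dtype=float)))
    weights = np.broadcast_to(lat_weights[:, None], grid.shape)
    valid = ~np.isnan(grid)                    # ignore cells with no data
    return float(np.sum(grid[valid] * weights[valid]) / np.sum(weights[valid]))

# Hypothetical toy grid: cold rows at 60S and 60N, a warm row at the equator.
lats = [-60.0, 0.0, 60.0]
grid = np.array([[2.0, 2.0],
                 [28.0, 28.0],
                 [2.0, 2.0]])

print(global_mean(grid, lats))   # weighted mean, about 15.0
print(float(np.nanmean(grid)))   # unweighted mean, about 10.7
```

Even on this toy grid the weighted and unweighted results differ by several degrees, so the weighting choice matters whenever absolute temperatures, rather than anomalies, are compared.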

Figure 6. The Jamstec computed global mixed layer temperature is plotted in black. It is compared to HadSST version 4, NOAA’s ICOADS SST, and NOAA’s ERSST. The NOAA MIMOC and University of Hamburg mixed layer temperatures are nearly identical and centered on 2006. They are plotted as boxes, which overlie one another. Data is from the respective agencies.

Decent ocean coverage is only available since 2004, so the years before then are suspect. In Figure 6, all data are plotted as yearly averages. The Hadley CRU temperatures agree well with the Jamstec mixed layer record and, surprisingly, both records show similar declining temperature trends of about two to three degrees per century. NOAA’s ICOADS (International Comprehensive Ocean-Atmosphere Data Set) SST trend is flat to increasing and over a degree warmer than the other two records. The number of Jamstec mixed layer observations is plotted in orange (right scale) to help us judge the data quality for each year. Jamstec reached 150,000 observations in 2004 and we held the subjective opinion that this was enough.

The NOAA MIMOC and University of Hamburg mixed layer temperatures are much lower than the HadSST and Jamstec mixed layer temperatures. These two multi-year averages, which are centered on 2006, fall right on top of the ERSST SST record, and they are over four degrees lower than HadSST and Jamstec. The difference cannot simply be where the temperatures are taken. It can’t even be in the data, since all these records use nearly the same raw input data. It must be in the corrections and methods.

The various mixed layer temperature estimates are different and so are the SSTs. Why are two records declining and the rest flat or increasing? The different estimates do not agree on temperature or trend.

Sea surface and mixed layer temperatures should not need to be turned into anomalies unless they are compared to terrestrial temperatures. They are all taken at approximately the same elevation and in the same medium. All the datasets are global, with similar input data. All are gridded to reduce the impact of uneven data distribution. The grids are different sizes and the gridded areas do differ in their northern and southern limits, but all the grids cover all longitudes. Table 1 lists the latitude data limits of the grids; they aren’t that different.

Table 1. The north and south data limits for each dataset in 2019.

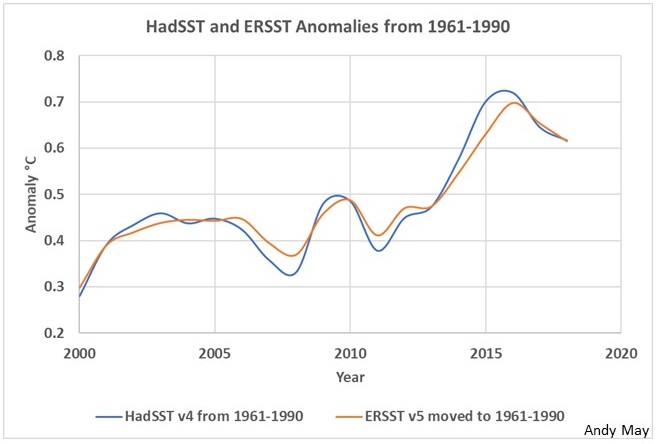

In Figure 7 we have plotted the HadSST and ERSST anomalies. How did they get these anomalies from the measurements in Figure 6?

Figure 7. HadSST and ERSST version 4 temperature anomalies.

The HadSST record is maintained by John Kennedy and his colleagues at the MET Hadley Climatic Research Unit (Kennedy, Rayner, Atkinson, & Killick, 2019). They note that their record is different from ERSST and admit it is due to the difference in corrections and adjustments to the raw data. Kennedy mentions that SST “critically contributes to the characterization of Earth’s climate.” We agree. Kennedy also writes that:

“One of the largest sources of uncertainty in estimates of global temperature change is that associated with the correction of systematic errors in sea-surface temperature (SST) measurements. Despite recent work to quantify and reduce these errors throughout the historical record, differences between analyses remain larger than can be explained by the estimated uncertainties.” (Kennedy, Rayner, Atkinson, & Killick, 2019)

One glance at Figure 6 verifies this statement is correct. Most of Kennedy’s 90-page paper catalogues the difficulties of building an accurate SST record. He notes that even subtle changes in the way SST measurements are made can lead to systematic errors of up to one degree, and this is the estimated 20th century global warming. We do not believe the SST record from 1850 to 2005 is worthwhile. The ambiguous data sources (mainly ships and buckets to WWII and ship intake temperatures after) and the imprecise corrections swamp any potential climate signal. The data is much better since 2005, but Figure 6 shows wide differences in compilations from different agencies. Next, we review the agency definitions of the variables plotted in Figure 6.

Met Office Hadley Centre HadSST 4 data set.

This data was read from a HadSST NetCDF file. NetCDF files are the way most climate data are delivered; I’ve explained how to read them with R (a high quality free statistical program) in a previous post. The variable read from the HadSST file was labeled “tos.” It is a 5-degree latitude and longitude grid defined as “sea water temperature.” The documentation says it is the ensemble-median sea-surface temperature from HadSST v4. The reference given is the paper by John Kennedy already mentioned (Kennedy, Rayner, Atkinson, & Killick, 2019). HadSST uses data from ICOADS release 3, supplemented by drifting buoy data from the Copernicus Marine Environment Monitoring Service (CMEMS). Kennedy mentions the difference between his dataset and ERSST v5 seen clearly in Figure 6. The Hadley Centre SST is corrected to a depth of 20 cm.

NOAA ERSST v5

In Figure 6 we plot yearly global averages of the ERSST v5 NetCDF variable “sst,” which is defined as the “Extended reconstructed sea surface temperature.” They note that the actual measurement depth varies from 0.2 to 10 m, but all measurements are corrected to the optimum buoy measurement depth of 20 cm, precisely the same reference depth as HadSST. Also, like HadSST, ERSST takes its data from ICOADS release 3 and utilizes Argo float and drifting buoys between 0 and 5 meters to compute SST. This makes sense, since ERSST agrees with the University of Hamburg dataset and NOAA’s MIMOC (mixed layer) datasets, which also rely heavily on Argo float data. As discussed above, SST (at 20 cm) and the mixed layer temperature should agree closely with one another almost all the time. The ERSST anomalies plotted in Figure 7 are from the variable “ssta.” I moved ssta to the HadSST reference period of 1961-1990, from the original reference of 1970-2000. The basic reference to ERSST v5 is a paper by Boyin Huang and colleagues (Huang, et al., 2017).
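Moving an anomaly series to a different reference period amounts to subtracting its mean over the new period. The sketch below illustrates the idea on a yearly series. The function name `rebaseline` and the synthetic input series are hypothetical, and with gridded monthly data one would normally do this per grid cell and per calendar month rather than on a single global series.

```python
import numpy as np

def rebaseline(years, anomalies, new_start, new_end):
    """Shift anomalies so that their mean over [new_start, new_end] is zero."""
    years = np.asarray(years)
    anomalies = np.asarray(anomalies, dtype=float)
    in_period = (years >= new_start) & (years <= new_end)
    return anomalies - anomalies[in_period].mean()

# Synthetic example series: a steady 0.01 degree per year drift.
years = np.arange(1950, 2020)
anoms = 0.01 * (years - 1985.5)

rebased = rebaseline(years, anoms, 1961, 1990)
ref = (years >= 1961) & (years <= 1990)
print(rebased[ref].mean())      # essentially zero over the new reference period
print((rebased - anoms)[0])     # the constant shift applied to every year
```

The shift is a single constant, so year-to-year differences and the trend are unchanged. Only the zero point moves.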

Like Kennedy, Boyin Huang directly addresses the differences between ERSST and HadSST. Huang believes that the differences are due to the different corrections to the raw data applied by the Hadley Centre and NOAA.

NOAA MIMOC

The global average NOAA MIMOC mixed layer “conservative temperature” is plotted in Figure 6 as a box that falls on the ERSST line. It is plotted as one point in 2006 because the MIMOC dataset uses Argo and buoy data over more than 12 years centered on that year. The global average temperature of all that data is 13.87°C from 0 to 5 meters depth. Conservative temperature is not the same as SST. SST is measured, conservative temperature is computed such that it is consistent with the heat content of the water in the mixed layer and takes into account the water salinity and density. However, we would expect SST to be very close to the conservative temperature. Since the conservative temperature more accurately characterizes the heat content of the mixed layer, it is more useful than SST for climate studies. The primary reference for this dataset is the already mentioned paper by Schmidtko (Schmidtko, Johnson, & Lyman).

University of Hamburg dataset

The University of Hamburg dataset is similar to MIMOC in that it is not divided by year, but pools all available data over the past 20 to 40 years to make a high resolution dataset and set of grids of ocean temperature by depth. Like ERSST and MIMOC it relies heavily on Argo and buoy data. The global average temperature of the upper five meters of the oceans in this data set is 13.88°C, barely different from the MIMOC value. The NetCDF variable used is “temperature.” It is defined as the “optimally interpolated temperature.” The documentation states that the zero depth is SST, it is not “conservative temperature.” However, the value is nearly identical to conservative temperature. The main reference for this data set is an Ocean Science article by Viktor Gouretski (Gouretski, 2018).

NOAA ICOADS

The NOAA ICOADS line in Figure 6 is downloaded from the KNMI Climate Explorer and labeled “sst.” The description is: “Sea Surface Temperature Monthly Mean at Surface.” ICOADS version 3 data is used in all the other agency datasets described here, but the organization does not do a lot of analysis. By their own admission they provide a few “simple gridded monthly summary products.” Their line is shown in Figure 6 for reference, but it is not a serious analysis and probably should be ignored. It does help show how imprecise the data is.

Jamstec ML Temperature

The Jamstec MILA GPV product lines up well with HadSST as can be seen in Figure 6. This line is from the NetCDF variable “MLD_TEMP.” It is the central temperature of the mixed layer. The temperature is not a true “conservative temperature,” but the way it is computed ensures that it will be close to that value. The reference for this product is a Journal of Oceanography article by Shigeki Hosoda and colleagues (Hosoda, Ohira, Sato, & Suga, 2010). Jamstec uses mostly Argo float and buoy data.

Conclusions

The total temperature spread shown in Figure 6 is over 5.5°C and yet these agencies are starting with essentially the same data. This is not an attempt to characterize the SST and mixed layer temperature one hundred years ago; these are attempts to tell us the average ocean surface temperature today. To put Figure 6 into perspective, the total heat content of our atmosphere is roughly 1.0×10²⁴ Joules. The difference between the HadSST line in Figure 6 and the ERSST line, assuming an average mixed layer depth of 60 meters (from Jamstec), is 3.9×10²³ Joules, or nearly 39% of the total thermal energy in the atmosphere.
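The energy comparison can be sanity-checked with a few lines of Python. The ocean area, seawater density, and specific heat below are round textbook values that I am assuming for illustration; they are not taken from the post. Only the 60 meter depth and the two energy figures come from the text above.

```python
# Assumed round values (not from the post):
OCEAN_AREA_M2 = 3.61e14          # approximate global ocean surface area
SEAWATER_DENSITY = 1025.0        # kg per cubic metre
SEAWATER_CP = 3990.0             # J per kg per kelvin

# Values quoted in the post:
MIXED_LAYER_DEPTH_M = 60.0
ATMOSPHERE_HEAT_J = 1.0e24
ENERGY_DIFFERENCE_J = 3.9e23

# Energy needed to warm the whole 60 m layer by one kelvin.
layer_heat_capacity = (OCEAN_AREA_M2 * MIXED_LAYER_DEPTH_M
                       * SEAWATER_DENSITY * SEAWATER_CP)

# Temperature difference implied by the quoted energy difference.
implied_delta_t = ENERGY_DIFFERENCE_J / layer_heat_capacity
fraction_of_atmosphere = ENERGY_DIFFERENCE_J / ATMOSPHERE_HEAT_J

print(f"layer heat capacity: {layer_heat_capacity:.2e} J/K")
print(f"implied temperature difference: {implied_delta_t:.1f} K")
print(f"fraction of atmospheric heat content: {fraction_of_atmosphere:.0%}")
```

Under these assumptions the quoted 3.9×10²³ Joules corresponds to a temperature gap of a little over four degrees across the 60 meter layer, which matches the separation between the HadSST and ERSST lines described earlier.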

I have no idea whether HadSST’s or ERSST’s temperatures are correct. They cannot both be correct. I lean toward the ERSST temperatures since it is hard to have an 18-degree ocean in a 15-degree world, but then why are the HadSST temperatures so high? The single most important variable in determining how quickly the world is warming or cooling is the ocean temperature record. We have better data available since about 2004, but obviously no agreed method of analyzing it. When it comes to global warming (or, perhaps, global cooling) the best answer is we don’t know.

Given that oceans cover 71% of the Earth’s surface and contain 99% of the heat capacity, the differences in Figure 6 are enormous. These discrepancies must be resolved, if we are ever to detect climate change, human or natural.

I processed an enormous amount of data to make this post. I think I did it correctly, but I do make mistakes. For those that want to check my work, you can find my R code here.

None of this is in my new book Politics and Climate Change: A History but buy it anyway.

You can download the bibliography here.

The skin layer is apparently only one micron thick because evaporation is pretty much instantaneous in the location where there is enough energy for it to take place.

That ultra thin layer cannot get enough energy from the air or from sunlight to sustain the process so it draws energy up from below via conduction which has the effect of reducing the temperature gradient between mixed layer and the skin.

So, there are actually three layers, the skin itself, the cooled region beneath and the mixed layer beneath that.

Getting a proper grip on the mechanics of thermal events at the atmosphere/ocean interface is essential to seeing why the effects of CO2 cannot be significant as regards ocean temperatures.

You make a good point here about layers, Stephen. There is also the additional layer above the water surface, which is the steam (aka the vapor/droplet mix) through which the radiation has to pass and evaporate the droplets, again at constant temperature. This affects the amount of radiation which actually reaches the water surface.

Where it really does get complex is the effect on the partial pressure of water in this layer, as this determines the RATE at which evaporation takes place. This is the aspect where wind and air movements have considerable influence.

Stephen, as you saw in my last post (linked in the first line of this post), some subdivide it into 4 layers, or even more. Usually, at least from the satellite POV, it is ~10-20 micrometers thick. I deliberately wrote a separate post on the skin effect/skin layer(s) to avoid getting into the minutiae of the skin layer here. I just wanted to make it clear that there is a separate layer above the mixed layer that has a diurnal and weather-related variation in temperature independent of the mixed layer. So there is a whole post that addresses your comment. This post is on the mixed layer and its characteristics.

The reason we spend so much time looking only at the atmosphere is because that’s what most directly affects us. It does remind me of a joke:

The phenomenon is unsurprisingly called the drunkard’s search. It affects all areas of human endeavour including science. It’s like the principle that, to a woman who has only a hammer, every problem looks like a nail.

+1

“That ultra thin layer cannot get enough energy from the air or from sunlight to sustain the process so it draws energy up from below via conduction which has the effect of reducing the temperature gradient between mixed layer and the skin.”

Ultra-thin layers of water could conduct heat, but water is a poor conductor of heat, so cold surface water is falling and being replaced with less cold water.

With freshwater lakes the falling surface water stops when water is more dense below it, when the body of water is about 4 C, with saltwater, it just keeps falling.

Stephen Wilde : “That ultra thin layer cannot get enough energy from the air or from sunlight” – is this correct? When there is sunlight, quite a high proportion of all the non-visible wavelengths penetrate no further than the top micron. Some of the visible light ends there too. [From memory of when I did the calcs, but I would have to do them again to check].

Solar energy is predominantly short wave energy, that is, light. If you are underwater and can see, that water is being ‘warmed’ by solar radiation. It is not a ‘skin’ effect; energy penetrates meters, probably up to 10 or so. As a diver, I have seen the bottom illuminated at 200 feet. That is short wave energy, and it is being absorbed by the water.

It confounds me that people have such an unimaginative concept of the sea surface and what is below. Of course, having ridden submarines for 8 years, we spent a lot of time finding the thermal isoclines where temperature sharply changed from warm to cool to be able to hide.

The fact is, however, that once light is ‘in’ the ocean, it is not somehow magically ‘reemitted’, so it HAS to be absorbed. Only that which is reflected AT the surface is not absorbed.

The 0.5 deg definition of the mixed layer is not fit for purpose since that criterion does not necessarily reflect the zone affected by turbulent mixing.

The reason there is much deeper water within this isotherm in certain zones in the winter is that around 3 degrees C there is very little change in density with temperature (this is the inversion point in the temperature dependency graph; below that it gets less dense). This means that there is no thermal stratification, which is what causes warm water to float above cooler water and maintain a stable “mixed layer”. That does NOT mean that this deeper layer is in fact mixed. It just means that it is at the same temperature, the only thing which is being considered.

This whole article seems to assume that a uniform temperature implies there is mixing throughout this volume. That is a false assumption IMO.

Greg December 14, 2020 at 4:01 am

Greg, this is true … but only for pure water at atmospheric pressure. Here’s a couple of graphs I just made up to show the difference. First, fresh water at atmospheric pressure.

And here’s sea water at 1,000 metres.

I fear this invalidates your whole theory, sorry.

w.

HadSST has data gaps where there are no observations. These gaps are largest at high latitudes where SST happens to be lower. ERSST is spatially interpolated and it is essentially globally complete. Consequently, the globally-averaged actual SSTs (as opposed to anomalies) are higher in HadSST than ERSST.

In order to do a like-for-like comparison, you would need to average the two data sets on to the same grid, mask them both to the same coverage, and then compare. Note that the NOAA ICOADS SSTs are also gappy.
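The like-for-like comparison described here can be sketched as follows. Everything in this example is synthetic: the temperature field, the 5-degree grid, and the choice to blank out cells poleward of 60 degrees are hypothetical stand-ins, not HadSST or ERSST data. The sketch only illustrates the mechanism, namely that dropping cold high-latitude cells raises an absolute global average, and that masking both fields to their common coverage removes that effect.

```python
import numpy as np

def area_mean(grid, lats_deg):
    """Cosine-latitude weighted mean of a (lat, lon) grid, skipping NaN cells."""
    lat_w = np.cos(np.deg2rad(np.asarray(lats_deg, dtype=float)))
    w = np.broadcast_to(lat_w[:, None], grid.shape)
    ok = ~np.isnan(grid)
    return float((grid[ok] * w[ok]).sum() / w[ok].sum())

lats = np.arange(-87.5, 90.0, 5.0)   # centres of 5-degree latitude bands
lons = np.arange(2.5, 360.0, 5.0)    # centres of 5-degree longitude bands

# Synthetic SST: warm at the equator, cold toward the poles.
zonal = 30.0 * np.cos(np.deg2rad(lats)) - 2.0
sst_complete = np.repeat(zonal[:, None], lons.size, axis=1)

# A gappy copy with no data poleward of 60 degrees.
sst_gappy = sst_complete.copy()
sst_gappy[np.abs(lats) > 60.0, :] = np.nan

# Mask both fields to the cells that are populated in both.
common = ~np.isnan(sst_complete) & ~np.isnan(sst_gappy)
complete_masked = np.where(common, sst_complete, np.nan)
gappy_masked = np.where(common, sst_gappy, np.nan)

print(area_mean(sst_complete, lats), area_mean(sst_gappy, lats))
print(area_mean(complete_masked, lats), area_mean(gappy_masked, lats))
```

In this toy case the two fields are identical wherever both have data, so the masked averages agree exactly. With real datasets any difference that remains after common masking would point to processing and corrections rather than coverage.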

John, what you write is correct, but so is what I wrote. The difference between the two (HadSST and ERSST) has nothing to do with the data or the coverage. It is in the processing, gridding, corrections and methods. Regardless of how you word it or spin it, you are faced with the same problem. You don’t know which is correct and masking the problem by creating “anomalies” where they aren’t needed is disingenuous. We still don’t know the ocean temperature, or whether the oceans are warming or cooling.

BTW, thanks for commenting. I’m sure all the readers are very interested in your point of view on this. We need to hear all sides.

I keep reading that older temperature observations are unreliable and are to be ignored. I completely understand that the methods and instruments used in years gone by were primitive compared to those we use now, and hence the absolute temperatures they report will have wide error bars. However, taken over a sufficiently long time period, surely they are enough to indicate any significant trend?

Richard, They indicate at least two trends, as shown in Figure 6. Which one is correct? The ocean temperature series that we have do not have the accuracy to detect the changes the “climate establishment” are claiming. The corrections and uncertainty are well over one degree.

In a past life as a hunter and tracker of Soviet submarines it was very important to have knowledge of where the mixed layer boundary was. Due to the physics of sound underwater submarines can “hide” in various places in a water column.

Apparently you didn’t find John Shotsky

Until the delta temperature is more than the uncertainty interval you can’t discern any trend since you will not know the true value at any point in time.

If the uncertainty interval is +/- 0.5C and over a long period of time you think you see a 0.15C difference in temperatures then you really aren’t seeing any trend at all. Until the difference overcomes the uncertainty you simply can’t state anything.

Ex.: Jan 1, 2000 = 10C. The uncertainty interval is +/- 0.5C so the true value lies somewhere between 9.5C and 10.5C. Jan 1, 2020 = 11C. The uncertainty interval is +/- 0.5C so the true value lies somewhere between 10.5C and 11.5C. Since the true value for both dates *could* be 10.5C, how do you know if there was a warming trend over the two decades?
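The example above can be written as a simple interval check. This sketch treats the uncertainty as a hard bound on the true value, as the comment does. A formal statistical treatment would use standard errors and confidence levels instead, and the function name `intervals_overlap` is hypothetical.

```python
def intervals_overlap(value_a, value_b, half_width):
    """True if [a - u, a + u] and [b - u, b + u] share at least one point."""
    return abs(value_b - value_a) <= 2.0 * half_width

# The two readings from the example, each with +/- 0.5C.
print(intervals_overlap(10.0, 11.0, 0.5))   # True: both could be 10.5C
# A larger difference clears the combined interval.
print(intervals_overlap(10.0, 11.5, 0.5))   # False
```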

I don’t pretend to even try to understand your analysis or ideas .

But common sense tells me the oceans control our climate far more than CO2 .

The sun and clouds ,ocean currents and prevailing winds certainly control local weather .

Climate is just an average of the weather over a period of time .

Time for some serious study to disprove CO2 , means to prove other causes .

George1st,

I think you understand my ideas just fine!

They already did disprove that CO2 causes “Climate Change” in, and I will say it again, the Antarctic Ice Core data published 20 years ago that showed, for a period of 3 glacial-interglacial cycles, temperature changes first then CO2 changes, after a gap of centuries, proving beyond all doubt there is NO “climate sensitivity to CO2”.

And every meteorologist and every climate scientist knows this.

Agree!

I have a problem with the scheme of distinguishing superficial, mixed, and deep. Specifically, what point is being made wrt climate?

Direct measurements are data. Adjusted or “corrected” measurements are no longer “data”, but instead data as seen through the distorting lens of some theory, the theory that tells you why the data must be adjusted. The adjusted data are now floating somewhat free of reality—-in the land of theory.

It seems to me a simple data measurement, say of avg global sea temps in the volume 5 to 10 meters below the surface, without corrections, and extended over a period of decades, might possibly yield useful information about climate trends. Possibly.

If there is no discernible trend outside the parameters of uncertainty, that would put bounds on any global warming (or cooling) that might otherwise be theorized to be occurring.

If a trend is seen, that too would be informative.

The problem is that past SST measurements varied in quality and location. The totality of the 21st Century measurements presented here indicates no significant change to the average mixed layer temperature one way or the other. The takeaway? Shout that from the rooftops ’till the cows come home! All commentators to the UN IPCC AR6 need to hammer at that fact.

The largest radiator on earth is the oceans, which comprise over 70% of the earth’s surface. That radiator completely swamps any ‘evaporative’ cooling although that happens too. But it doesn’t make sense to not acknowledge that the oceans are responsible for most of the radiation that makes it back to space, and thus, is the largest ‘cooler’ as well.

John,

The oceans radiate most of the thermal energy to be sure. But, given they are oceans, and evaporation is taking place, and water vapor is a greenhouse gas, I’m not sure how much of that radiation makes it to outer space in one hop – that is, directly from the ocean surface to space. You need low humidity for that to happen, thus most of the surface radiation that sends thermal energy all the way to outer space in one go, is probably from the Arctic, Antarctic, and deserts.

Unless the oceans radiate predominately in the atmospheric window frequencies. Look it up.

The oceans are broadband radiators, just as land is. The only thing that is different is emissivity of each material, not bandwidth. Radiation from the oceans can be intercepted by gases with the proper absorption bandwidth, but the rest of the radiation goes to space. It is the biggest cooler the earth has. ALL of the oceans (water surface) radiate constantly based on their temperature and emissivity at a given point. Continuous radiation, day and night. Even the ice radiates, but with a different emissivity.

If that didn’t happen, this place would be hot as HELL!

All of each day’s radiation is returned to space every day. If it wasn’t, this place would be hot as HELL!

Andy

Area averaged annual precipitation is 2.7mm/day; what comes down has to go up. Taking 2264kJ/kg as latent heat of water, the precipitation rate corresponds to 70W/sq.m across the globe. Around 25% of the surface SWR.
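The conversion from a precipitation rate to an equivalent latent heat flux is easy to reproduce. The sketch below uses the figures quoted in the comment above and assumes that one millimetre of rain over one square metre is one kilogram of water. The function name `latent_heat_flux_w_m2` is hypothetical.

```python
SECONDS_PER_DAY = 86_400

def latent_heat_flux_w_m2(precip_mm_per_day, latent_heat_kj_per_kg):
    """Mean flux needed to evaporate the water that falls as precipitation."""
    kg_per_m2_per_day = precip_mm_per_day            # 1 mm over 1 m2 = 1 kg
    joules_per_m2_per_day = kg_per_m2_per_day * latent_heat_kj_per_kg * 1000.0
    return joules_per_m2_per_day / SECONDS_PER_DAY

print(latent_heat_flux_w_m2(2.7, 2264.0))   # about 70.75 W/m2
```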

Once cloudburst forms, all the OLR leaves at very low temperature as you see here:

https://neo.sci.gsfc.nasa.gov/view.php?datasetId=CERES_LWFLUX_M

Any of the blue region over tropical ocean is radiating high up in the atmosphere. The OLR in these regions corresponds to a precipitation rate of between 7 to 8mm per day; much higher than the global average.

RickWill December 13, 2020 at 3:27 pm

I fear you’re using the latent heat of freshwater. Most water evaporated is seawater, with a latent heat of evaporation being 2,458 kJ/kg at 17°C.

A cubic metre of seawater contains about 1020 kg. So it will take about 79.4 W/m2 to evaporate a cubic metre of seawater. As above, I generally round that to 80 W/m2.

w.

About 50% of the net ocean energy uptake occurs at 28C. So the average temperature of evaporation from the sea surface will be close to 28C, as there will likely be a higher rate at 30C near the maximum and evaporation falls off dramatically below 26C. So say 2370kJ/kg, corresponding to 28C on average.

Last time I tasted rain it was close to pure water where I live. Figure the salt is not getting stored in the atmosphere. So 2.7mm/day of rain corresponds to 2.7kg of pure water evaporated. That gives 74W/sq.m assuming only oceans but some of the water comes off land as well.

I figure my first rough estimate is better than what you came up with.

RickWill December 14, 2020 at 2:48 am

Rick, seems you want more detail. I use the Gibbs Sea Water collection of functions regarding sea water. It gives the following for evaporation of seawater at 28°C. First, the details:

This gives the following for seawater at 28°:

This is in kJ/kg. Now, a cubic metre of seawater at the surface at 28°C weighs 1,026.2 kg/cubic metre. This is because it contains 1,000.8 kg of water and the rest is salt. So it will make a metre of rain when evaporated.

This means that to evaporate a cubic metre of seawater, which in turn will make a metre of rain, requires 1026.2 * 2431.8 * 1000 = 2,495,513,160 joules

One watt per square metre per year = 31,556,926 joules.

THEREFORE, it will take some 79.1 W/m2/year to evaporate a cubic metre of seawater …

Finally, as you point out, not all evaporated water is seawater. Estimates are that 10% of the water cycle is evaporated over the land, and 90% over the ocean.

Running the same calcs for freshwater at 28°C gives us 77.7 W/m2. Less than seawater, as you noted.

This gives us a weighted mean of 79.0 W/m2.
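The arithmetic in the steps above can be reproduced directly. All numbers in this sketch are the ones quoted in the comment (density, latent heat, seconds per year, the 77.7 W/m2 freshwater figure, and the 90/10 ocean/land split). The variable names are hypothetical, and the sketch does not call the gsw package, so it checks only the arithmetic, not the underlying seawater properties.

```python
SECONDS_PER_YEAR = 31_556_926

# Figures quoted in the comment for seawater at 28C.
seawater_density_kg_m3 = 1026.2
latent_heat_kj_per_kg = 2431.8

# Energy to evaporate one cubic metre of seawater, in joules.
energy_j = seawater_density_kg_m3 * latent_heat_kj_per_kg * 1000.0

# Equivalent constant flux if that evaporation is spread over one year.
seawater_flux = energy_j / SECONDS_PER_YEAR

# Freshwater figure as quoted, combined with the 90% ocean / 10% land split.
freshwater_flux = 77.7
weighted_flux = 0.9 * seawater_flux + 0.1 * freshwater_flux

print(f"energy: {energy_j:,.0f} J")
print(f"seawater flux: {seawater_flux:.1f} W/m2")
print(f"weighted mean: {weighted_flux:.1f} W/m2")
```

The result is a flux in W/m2 sustained for a year per metre of annual rainfall, so it scales linearly with the precipitation rate.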

Now, I’d estimated 79.4 W/m2, and you’d estimated 74 W/m2. So it also gives us a lesson.

The lesson is, I’m a math and data guy. Yes, I’ve been wrong many times … but if you’re going to bust me, you’d best double-check your math and data before you claim that your first rough estimate is better than what I came up with …

Having said that, I must congratulate you sincerely for running the numbers yourself to check my claims … always worth doing for anyone’s claims, and you did a commendable job. My sense is that you just didn’t have the tools to do the exact calcs.

If you can program, let me most highly recommend that if you are not programming in R, you really, really, really should learn it. It’s free, cross-platform, and has a free killer user interface, RStudio. It also has free packages for most anything you can imagine, including the Gibbs Sea Water package called “gsw”.

My best to you,

w.

John Kennedy (author of HadSST4) has pointed out on Twitter that this analysis has missed something really basic: ERSST and HadSST4 cover different areas because one is interpolated and one is not.

This seems like a fairly straightforward explanation for this apparent “discrepancy”, i.e., it isn’t a discrepancy after all, because it’s like comparing apples with oranges. Or at least, an apple with half an apple.

Richard, perhaps. HadSST simply averages the measurements in 5-degree latitude-longitude bins. ERSST interpolates using a gridding algorithm of some kind. But if that is the reason, why does it make such a large difference? As you can see in Table 1, the areas they cover are not that different. I checked where the northernmost and southernmost values were in each dataset to make sure of the values in that table. HadSST actually has values north of 82.5; that figure appears as their northern limit because they use 5-degree bins.

Anyway, my point was that both use essentially the same data, but the processing and methods are different, and this causes a difference of >4 degrees. He is trying to say the area covered is different. That is not true; it is the methodology that is different, averaging versus interpolation. Which is the best estimate?

Richard, could you quote John Kennedy’s tweet so I can find it?

Your Figure 6 shows the “mixed layer or SST temperature by year” and you were looking for an explanation for why e.g. HadSST4 was higher than NOAA ERSST. This seems likely to be due to the very different coverage of the two data sets.

HadSST4 has gaps in the gridded fields where there are no observations, and those gaps are largest at higher latitudes where SST happens to be lower. ERSST is spatially interpolated and there are no gaps. Consequently averaging and comparing the two data sets without accounting for this difference in coverage would lead to a higher global average for HadSST4 than ERSST. To reiterate, averaging the two data sets without taking missing data into account effectively means averaging over two different areas, so it’s not surprising that there is a difference.
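The coverage argument can be illustrated with a toy calculation. The Python sketch below is not HadSST4 or ERSST: the temperature profile `toy_sst`, the 60-degree cutoff, and the all-ocean planet are assumptions made purely for illustration. It only shows the direction of the effect, namely that dropping cold high-latitude bands from an area-weighted average raises the mean.

```python
import math


def toy_sst(lat_deg):
    """Hypothetical zonal-mean SST in °C: warm at the equator, cold at the poles."""
    return 28.0 * math.cos(math.radians(lat_deg)) ** 2 - 1.0


def area_weighted_mean(lats, has_data):
    """Mean SST over the bands that have data, weighted by cos(latitude)."""
    weighted_sum = 0.0
    weight_total = 0.0
    for lat in lats:
        if has_data(lat):
            weight = math.cos(math.radians(lat))  # band area scales with cos(lat)
            weighted_sum += weight * toy_sst(lat)
            weight_total += weight
    return weighted_sum / weight_total


# Centres of 5-degree latitude bands from 87.5S to 87.5N.
band_centres = [-87.5 + 5.0 * i for i in range(36)]

# "Interpolated" case: every band has a value.
full_mean = area_weighted_mean(band_centres, lambda lat: True)

# "Gappy" case: assume no observations poleward of 60 degrees.
gappy_mean = area_weighted_mean(band_centres, lambda lat: abs(lat) < 60.0)

print(f"mean with full coverage:      {full_mean:.1f} °C")
print(f"mean with high-latitude gaps: {gappy_mean:.1f} °C")
```

In the real datasets the gaps are individual empty grid cells rather than whole latitude bands, so the size of the effect would differ from this toy case.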

You can see coverage in HadSST3 (which is similar to HadSST4) for recent months at https://www.metoffice.gov.uk/hadobs/hadsst3/charts_past.html . You can clearly see gaps in ocean areas at high latitudes in those plots. Your table showing the latitudinal extent doesn’t account for how many grid cells are populated at each latitude.

John, here is the plot you link to, to help everyone.

We can see the 5-degree grid cells in the north and south that have no values. As you say, the gridding algorithm used by ERSST, as well as some of their tethered buoys in the Arctic and Antarctic, can be used to help fill in these large missing areas. Contributing to the problem is the large size of your grid cells.

All that is true. But, it is much more a processing problem than a data problem. HadSST carefully adheres to the data. You average values in bins, very intellectually honest, I like that. Yet your temperature trend is down in recent years. Your year-to-year difference in coverage area can’t be that large, correct? The trend is 3.5 degrees per century!

ERSST uses interpolation and a little extrapolation and a finer grid. Their documentation suggests that they weight the ARGO data more. The data doesn’t cover more area, the method simply stretches it farther. As I’ve said, it is all in the gridding, corrections, and methods.

My opinion? We don’t know what the average temperature of the surface of the ocean is and we don’t know if it is warming or cooling. The average temperature is PROBABLY between 13 and 18 degrees and the trend is PROBABLY flat, but those are guesses.

“…..PROBABLY 13 to 18….”

Which brings up one of my favorite topics, the notable Bowen ratio: a parametrized (meaning approximated) number used in climate models, calculated from assumed factors, of ocean heat evaporated versus heat absorbed….

About 90% of the radiant heat striking the top couple of mm of ocean surface causes evaporation and 10% goes into warming the water, which loses that 10% some other day or night when the air temperature is sufficiently cooler than the water. The ejected water molecules take 2545 kJ/kg of heat from the water surface as they evaporate.

Various “pan evaporation” equations and studies show the evaporation from a water surface to be proportional to the wind speed and to the difference between the partial pressure of the water vapour in the air and the vapour pressure of water at the liquid surface. Reference attached.

You can make yourself a spreadsheet with some approximate wind speed and guesses of air relative humidity and discover that, as a Trenberth chart shows, evaporation averages about 40 times higher than CO2 forcing. But, evaporation is 100% more if the wind changes from 2 to 4 m/sec due to surface rising air parcels (think thunderstorms as an extreme example).
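If you prefer code to a spreadsheet, here is a minimal Python sketch of a Dalton-type bulk formula, evaporation proportional to wind speed times the vapour pressure difference. The saturation vapour pressure uses the Tetens approximation. The transfer coefficient `coeff` is a placeholder, so the output is in arbitrary units, and the temperatures and humidity are guesses chosen only for illustration. Real pan evaporation equations often add a constant term to the wind factor, in which case doubling the wind would less than double the evaporation.

```python
import math


def saturation_vapour_pressure_kpa(temp_c):
    """Tetens approximation for saturation vapour pressure over water, in kPa."""
    return 0.6108 * math.exp(17.27 * temp_c / (temp_c + 237.3))


def evaporation_rate(wind_m_s, water_temp_c, air_temp_c, rel_humidity, coeff=1.0):
    """Dalton-type estimate: coeff * wind * (e_surface - e_air).

    coeff is a placeholder transfer coefficient (an assumption), so the
    result is in arbitrary units and only ratios are meaningful.
    """
    e_surface = saturation_vapour_pressure_kpa(water_temp_c)
    e_air = rel_humidity * saturation_vapour_pressure_kpa(air_temp_c)
    return coeff * wind_m_s * (e_surface - e_air)


# Guessed conditions: 28 °C water, 27 °C air, 75% relative humidity.
evap_at_2 = evaporation_rate(2.0, 28.0, 27.0, 0.75)
evap_at_4 = evaporation_rate(4.0, 28.0, 27.0, 0.75)
wind_ratio = evap_at_4 / evap_at_2

print(f"evaporation at 4 m/s relative to 2 m/s: {wind_ratio:.2f}x")
```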

What a Trenberth chart doesn’t show is that 1 C local warming, whether from CO2 or sunshine, results in a couple percent more cloud cover that cools the surface under the clouds by more than a degree. So, Reflectivity of clouds controls the Earth’s temperature. Sorry to those who believe it is CO2. All CO2 does is minorly change the elevation in the middle troposphere where clouds will form as the H2O laden air parcels rise.

https://publishing.cdlib.org/ucpressebooks/view?docId=kt167nb66r&chunk.id=d3_3_ch04&toc.depth=1&toc.id=ch04&brand=eschol

Thanks DMacKenzie, good points.

DMacKenzie: “evaporation averages about 40 times higher than CO2 forcing”

WR: An interesting comment. Do you mean by ‘CO2 forcing’ the extra forcing by ‘double CO2’? Could you give the numbers for what you mean to say?

Sure – https://twitter.com/micefearboggis/status/1338069968252964865

I see Kennedy’s comment is being held for moderation and am looking into why.

Usually it’s people misspelling their email addresses and we have to go in and fix them, as just happened to you.

This is the tweet

https://mobile.twitter.com/micefearboggis/status/1338069968252964865

Thanks, found it and replied.

“…. the imprecise corrections swamp ….”

You wouldn’t have needed the rest of the sentence😊. That, in itself, speaks volumes. Perfect description.

Excellent article.

ERSSTv4 represents SST observations at depths of 5-20 meters.

https://www.cicsnc.org/ncics/pdfs/obs4MIPs/ERSSTv4.Obs4MIPs_Technical_Note_Guidance_v3.pdf

I’ll read your reference, but this is from the primary reference for the dataset by Huang (2017). The exact reference is in the biblio.

nobodysknowledge,

I see where you got your number, your reference contains the following quote:

Boyin Huang is saying the observations were taken in that depth range. He says pretty much the same in the paper. But, the paper says these readings are “corrected” to 20 cm and presented in the dataset as being from that depth.

Thank you for your comment. You say:

“ERSSTv4 represents SST observations at depths of 5-20 meters” and: “Boyin Huang is saying the observations were taken in that depth range. He says pretty much the same in the paper. But, the paper says these readings are “corrected” to 20 cm and presented in the dataset as being from that depth.”

Could this “correction” say something about the difference between HadSST and ERSST?

nobodysknowledge, I don’t know and wish I did.

nobodysknowledge,

This graph of uncertainty is in your reference. Notice if you add the uncertainty in ERSST to the uncertainty in HadSST, you do not make up the difference between them in Figure 6.

I have found by sad experience that my physical property data were not as precise as the RMS sum of the precisions of my instruments. Note that we haven’t even got to accuracy. Precision is a necessary but not sufficient condition to be accurate. Two possible reasons – my instruments overstated their precision, or the system was affected by other variables which I did not find or evaluate. Probably some of both. Just pointing out that we have more faith in both the precision and accuracy of our measurements and models than is warranted. There are exceptions where the researcher can compare the data to a model that actually models reality well from a sound theoretical basis.

+1

Interpolation is, as far as I can tell, a somewhat obscure word to describe the process of making up data where none exists. In the areas of science and engineering that I have worked, making up data is simply not done. A researcher can assume that a property of interest varies linearly between points of time or space but that is an assumption that must be verified by repeated measurements. Perhaps I do not correctly understand the meaning of the word within the climate science discipline but otherwise, “interpolation” of data seems to represent an enormous gulf between real science and climate science.

DHR, as a former computer mapper, I agree. The comparison of ERSST and HadSST simply illustrates the extreme uncertainty in the observations being used. Opposite trends and a huge 4+ degree difference.

When filling in blank data, it makes a big difference whether interpolation or extrapolation is used.

With interpolation, blank data will be filled in with values that lie between the control points, regardless of the increasing or decreasing trends where there is control data. Maps of the final infilled data may look unusual, with little change where the data were previously blank, and the average of the final data will usually be near the average of the control point data.

With extrapolation, blank data will be filled in considering both the nearest control point data and the rate of change near that control point data. Unfortunately, this technique can be manipulated to generate very high or low values when infilling the blank data areas and can be easily used to homogenize the data to give you any average answer you want, as there are many extrapolation techniques.
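The difference between the two can be sketched in a few lines of Python. This is a one-dimensional, straight-line illustration with made-up control points, not the gridding method of any actual temperature dataset; the function names and numbers are hypothetical.

```python
def linear_interpolate(x, x0, y0, x1, y1):
    """Value at x on the straight line between two control points."""
    if not (min(x0, x1) <= x <= max(x0, x1)):
        raise ValueError("x lies outside the control points; that is extrapolation")
    fraction = (x - x0) / (x1 - x0)
    return y0 + fraction * (y1 - y0)


def linear_extrapolate(x, x0, y0, x1, y1):
    """Extend the trend between two control points out to x beyond them."""
    slope = (y1 - y0) / (x1 - x0)
    return y1 + slope * (x - x1)


# Two made-up control points: position (arbitrary units) and temperature (°C).
x0, y0 = 0.0, 10.0
x1, y1 = 10.0, 14.0

# Infill between the control points: bounded by the two measured values.
inside_value = linear_interpolate(5.0, x0, y0, x1, y1)

# Infill beyond the last control point: follows the local trend and can
# run well outside anything that was measured.
outside_value = linear_extrapolate(30.0, x0, y0, x1, y1)

print(f"interpolated at x=5:  {inside_value:.1f} °C")
print(f"extrapolated at x=30: {outside_value:.1f} °C")
```

The interpolated value cannot leave the range of the control points, while the extrapolated one depends on the assumed trend and on how far it is carried.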

Tony Heller has often pointed out where blank temperature data such as Africa is filled in with much hotter data than control points. Looks to me like extrapolation is being used rather than interpolation for that homogenization.

The soil/ground surface behaves in a similar way, a thermal surge tank.

USCRN data shows that the top 100 cm of soil/ground absorbs energy from the sun during the day and releases it back into the air during the night.

That contradicts RGHE.

There are tremendous differences to the SST profile under a high pressure system in the tropics with no wind and a category 5 hurricane in the same place. The amount of energy extracted from the water by the hurricane and subsequent upwelling from below is huge and the storm can be hundreds of km wide. And the disturbance can be seen for weeks after as the track of cooler surface water lingers.

I can appreciate attempts to make sense of the temperature profile of the ocean from the surface down to say 500m or so. But to try to calculate this to small numbers is a fool’s errand, similar to stating the global temperature average to a hundredth of a degree. Computer models gridding temperature profiles to a couple of degrees lat/lon using infilling, etc., only give us a maybe-good estimate.

Trying to find the actual processes that go on from all the interfaces is worthwhile, no doubt. But to put them into the Cray and come out with the real numbers just gives you pretty graphs that you can’t trust. I think Andy points that out very well.

Andy: Your opening statement is that the “skin” layer loses thermal energy to the atmosphere primarily through evaporation… No. A proper surface energy balance shows that the heat loss from the surface is dominated by LW radiation emission, with evaporation a factor of about 5 less.

Anthony, That surprises me. Do you have a reference for those numbers? I’d like to read it.

My source Faizal, 2011, says “evaporation is the largest”

https://onlinelibrary.wiley.com/doi/abs/10.1002/er.1885

Only about 6% of incoming radiation is transmitted to space via radiation. 13% goes to the atmosphere and a large portion of that is returned to the ocean. 21% is lost via evaporation. Some is lost via conduction (sensible heat loss), but again a lot of that is eventually evaporated or returned to the ocean. Complex subject.

Anthony, According to my source,

Hartmann, Global Physical Climatology, p. 102-105.

I got as far as this and I had to comment:

“The skin layer loses thermal energy (“heat”) to the atmosphere primarily through evaporation, gains thermal energy from the Sun during the day, and constantly attempts to come to thermal equilibrium with the atmosphere above it.”

As far as I know, this is far from true. The CERES data, for example, gives the following heat flows at the ocean surface:

Absorbed solar: 173 W/m2

Absorbed longwave: 360 W/m2

==========================

Total Absorbed: 533 W/m2

Emitted longwave: 410 W/m2

Evaporation/conduction/convection: 123 W/m2

==========================

Total Emitted: 533 W/m2
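As a quick arithmetic check on the figures listed above, here is a minimal Python sketch. The numbers are the ones quoted in this comment; the variable names are just for illustration. It also computes the net longwave flow (emitted minus absorbed), since the difference between gross and net longwave is what the rest of this exchange turns on.

```python
# Ocean-surface flows quoted in the comment, in W/m2.
absorbed = {"solar": 173, "longwave": 360}
emitted = {"longwave": 410, "evaporation_conduction_convection": 123}

total_absorbed = sum(absorbed.values())
total_emitted = sum(emitted.values())

# Net longwave loss: upward longwave minus downward longwave.
net_longwave_loss = emitted["longwave"] - absorbed["longwave"]

print(f"total absorbed: {total_absorbed} W/m2")
print(f"total emitted:  {total_emitted} W/m2")
print(f"net longwave loss: {net_longwave_loss} W/m2")
```

Taken as gross flows, emitted longwave is the largest loss term in this list; taken as a net flow, longwave is smaller than the combined evaporation, conduction and convection term.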

You’ve left out “gains LW 24/7”, “loses energy primarily through radiation”, and conduction/convection is totally absent from your calculations.

From the looks of things, you’re considering NET longwave flows … which means that according to you the surface is not absorbing any LW. In addition, your omission of conduction/convection means there’s much more evaporation than in reality.

And that is not the physical reality. The physical reality is that there are two separate LW flows, and if you want to analyze the top of the ocean, you cannot simply add them together. Nor can you add evaporation and conduction/convection. The reason for that is that they are different physical processes and as such are ruled by different variables …

Finally, the skin layer is not physically distinct from the mixed layer, because the entire top of the ocean overturns every night, and restratifies during the day. As a result, there’s a big temperature difference between the skin layer and the mixed layer during the day, with the skin layer being warmer than the mixed layer.

However, at night, due to the overturning + evaporation + radiation, the skin layer is cooler than the mixed layer.

Now, back to reading your interesting analysis.

w.

Willis,

I’m sure your CERES numbers are fine, but they are not relevant here. Thermal energy flows at the surface are not subject of this post. I would refer you to Faizal, 2011, which says “evaporation is the largest”

https://onlinelibrary.wiley.com/doi/abs/10.1002/er.1885

and Hartmann, Global Physical Climatology, p. 102-105.

Neither of them ignores LW radiation or conduction. This is a hugely complex topic and your assumptions about what I am ignoring are incorrect. I think if you dig into it you will find that Hartmann and Faizal are correct.

I agree with your comments on the mixed layer and the skin “layer.” They are similar and consistent with what I’ve written in this post and the previous one, as far as I know. There should not be a contradiction.

Thanks much, Andy. Faizal is absolutely doing what I said, which is taking the difference of the two LW flows at the ocean surface.

As I said above, this is NOT the physical reality. And Faizal agrees. He doesn’t call it the radiation, instead calling it the “effective back radiation”.

It also leads to incorrect statements like Faizal saying:

Nope. Evaporation is only larger than the EFFECTIVE back radiation, which is NOT A PHYSICAL FLOW OF ENERGY. It is a mathematical construct. If we look at actual energy flows, LW is the largest loss from the surface.

I know that this is not the focus of the post, but I cannot let this totally incorrect description go by without pointing out the error. Why? Because if I don’t, folks will say “But Willis, you’re wrong, I know because Andy May says something other than what you say” …

w.

OK Willis, I understand what you are saying now. I also understand Anthony Mills’ comment and reference better. In your context, you are correct. There is a flux in both directions and they are large. I was talking in terms of energy transfer or, as you put it, “net energy flow.” Your framework is more oriented toward radiation flows, mine was more in the thermodynamic world. This is why most people who study thermodynamics eventually go crazy (that’s a joke, but painfully close to the truth!). Anyway, the net energy flow from the ocean, at the point of incidence of incoming solar radiation, to elsewhere, is via ocean currents or evaporation. Radiation is moving all over the place in every direction at the surface of the ocean, but it mostly isn’t getting anywhere. About 6% makes it to space, some small percentage goes into heating the overlying air and is carried away by the wind, but with the exception of ocean currents, the net flow out of the ocean is by evaporation. That is well established. I’ll try to write more precisely next time. At least I said “heat,” which is a thermodynamic “net energy” concept. Heat only goes from warmer to cooler. Energy fluxes go both ways. Like I said, thermodynamics plays with your mind.

Willis, see this from DMacKenzie in another comment above:

Andy May December 13, 2020 at 11:57 am

I’m sorry, but this is not true. First, it’s not “calculated from assumed factors”. We have data on some of this.

More importantly, it is NOT the ratio of “ocean heat evaporated versus heat absorbed” as DMacKenzie said. Here’s the real definition:

The Bowen ratio is the ratio of sensible to latent fluxes going UPWARDS from the surface into the air. Absolutely nothing to do with “heat absorbed”.

w.

“As far as I know, this is far from true. The CERES data, for example, gives the following heat flows at the ocean surface:

Absorbed solar: 173 W/m2

Absorbed longwave: 360 W/m2”

With sunlight, the ocean is not directly heated much at the skin surface.

And the “Absorbed longwave” is all absorbed at the skin surface.

Those values are averaged over 24 hours or, if you like, over a year.

So 360 joules per second on average, or 360 watt-hours per hour, per square meter.

Seconds in 24 hours: 86,400. Times 360 watts, that is 31,104,000 joules of heat absorbed per day, or 8.640 kWh of absorbed longwave energy per day.

It seems if 31,104,000 joules of heat is absorbed in the skin surface of water, one will have a lot of evaporation.

A 1 mm layer of water over a square meter is 1 kg of water, and the “heat of vaporization of water is about 2,260 kJ/kg”, or 2.26 million joules per kg (though that is not for saltwater), and the skin layer that longwave IR can heat is less than 1 mm thick.
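The arithmetic above can be reproduced with a short Python sketch. It uses only the figures quoted in this comment (360 W/m2 and 2,260 kJ/kg) and takes 1 kg of freshwater per square meter as a 1 mm layer. The last step is a hypothetical upper bound: it asks how much water that energy could evaporate if all of it went into evaporation, which is not what actually happens, since the surface also radiates.

```python
LONGWAVE_W_M2 = 360.0            # absorbed longwave quoted in the comment
SECONDS_PER_DAY = 24 * 60 * 60   # 86,400
LATENT_HEAT_J_PER_KG = 2.26e6    # heat of vaporization quoted in the comment
JOULES_PER_KWH = 3.6e6

# Energy absorbed per square meter per day.
joules_per_day = LONGWAVE_W_M2 * SECONDS_PER_DAY
kwh_per_day = joules_per_day / JOULES_PER_KWH

# Hypothetical upper bound: water evaporated if ALL of that energy went
# into evaporation. 1 kg of freshwater over 1 m2 is a layer 1 mm thick.
kg_evaporated = joules_per_day / LATENT_HEAT_J_PER_KG
mm_evaporated = kg_evaporated

print(f"energy per day: {joules_per_day:,.0f} J/m2 ({kwh_per_day:.2f} kWh/m2)")
print(f"upper-bound evaporation: {mm_evaporated:.1f} mm per day")
```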

So sunlight more or less uniformly heats more than 1 meter depth of water, and the skin of the ocean absorbs less than 1/1000th of the 173 W/m2 of sunlight. That is why I say it is “not heated by sunlight directly” (or only a very small fraction of the sunlight heats the skin surface).

But I think all realize that the “Absorbed longwave: 360 W/m2” isn’t energy heating the skin surface, or that it’s a way to say how much the atmosphere insulates against heat loss to space. Which some could call misguided.

A better way to characterize it is that convective and evaporative heat transfers dominate the heat processes in surface air, whereas if the surface were in a vacuum, radiant transfers would dominate.

gbaike, Exactly so. Almost all the incident solar energy (or radiation, if you prefer), probably 90% or so, goes into evaporating water from the surface layer. This lowers the density of the overlying air because water molecules are lighter than air. The humid air rises, cooler, denser dry air takes its place, and more evaporation occurs. All it takes is for the ocean surface to be 0.3 degrees warmer than the overlying air. Radiation is moving in all directions, but the heat transfer is done through evaporation. It’s all a function of vapor pressure.

I would add that most ocean heating occurs in the tropics, and the tropical ocean is loosely known as the heat engine of the world. It drives global circulation by evaporative heating.

This is due to having sunlight nearer the zenith, and to the fact that 40% of the world gets more than 50% of the sunlight reaching the surface.

And I would say it’s not the tropics, but rather the tropical ocean, though of course as the Sun nears the Tropic of Cancer or Capricorn (in terms of its relation to the zenith) it brings a strong seasonal effect upon the oceans outside of the tropics.

Many consider Milankovitch cycles to have a direct effect upon land areas by “widening” the tropical zone, but it seems to me it’s more of an oceanic effect. Or simply, it seems rain has a greater effect upon glaciers.

+1

Andy May December 13, 2020 at 12:19 pm

Not true. The surface absorbs about 164 W/m2 (downwelling minus surface reflections).

Of this, we know that about 80 W/m2 goes into evaporation. How do we know this? Because:

1. What goes up must come down,

2. Average global rainfall is about a metre.

3. It takes about 80 W/m2 to evaporate a metre of water per year (a cubic metre from each square metre of surface).

Thus, evaporation is about 80 W/m2, absorbed solar is about twice that.
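The three steps above can be sketched in Python as a rough check. The rainfall figure is from the comment; the water density and the latent heat of vaporization are round-number assumptions, not values from the comment, so the result should be read as "on the order of 80 W/m2" rather than as an exact figure.

```python
RAINFALL_M_PER_YEAR = 1.0        # from the comment: about a metre per year
WATER_DENSITY_KG_M3 = 1000.0     # assumption: round figure for freshwater
LATENT_HEAT_J_PER_KG = 2.45e6    # assumption: approximate value near 20 °C
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60

# Mass of water evaporated (and rained out) per square metre per year.
mass_kg_per_m2 = RAINFALL_M_PER_YEAR * WATER_DENSITY_KG_M3

# Energy needed per square metre per year, then averaged over the year.
energy_j_per_m2 = mass_kg_per_m2 * LATENT_HEAT_J_PER_KG
evaporation_flux_w_m2 = energy_j_per_m2 / SECONDS_PER_YEAR

print(f"average latent heat flux: {evaporation_flux_w_m2:.0f} W/m2")
```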

In addition, we cannot forget the downwelling LW. That’s about 345 W/m2, some of which assuredly goes into evaporation.

Next, total losses from the surface. The surface absorbs about 508 W/m2 (SW + LW), and loses about 399 W/m2 by radiation. This leaves about 109 W/m2 for the sensible and latent losses.

But we know the latent losses are on the order of 80 W/m2 … which means there is about 29 W/m2 of sensible heat loss.

This gives us a global Bowen ratio of about 0.36 …
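The budget above is simple enough to lay out as a minimal Python sketch. All the inputs are the rounded figures quoted in this comment. Note that the quoted components (164 + 345) sum to 509, while the comment uses "about 508" for the total, so the sketch takes the total as quoted.

```python
# Rounded global-average surface flows quoted in the comment, in W/m2.
TOTAL_ABSORBED = 508.0   # SW + LW absorbed, as quoted
RADIATIVE_LOSS = 399.0   # longwave emitted by the surface
LATENT_LOSS = 80.0       # evaporation, from the rainfall argument

# What is left after radiation must leave as sensible plus latent heat.
non_radiative_loss = TOTAL_ABSORBED - RADIATIVE_LOSS
sensible_loss = non_radiative_loss - LATENT_LOSS

# Bowen ratio: sensible heat flux divided by latent heat flux.
bowen_ratio = sensible_loss / LATENT_LOSS

print(f"sensible + latent: {non_radiative_loss:.0f} W/m2")
print(f"sensible:          {sensible_loss:.0f} W/m2")
print(f"Bowen ratio:       {bowen_ratio:.2f}")
```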

Here’s a graph from Hartmann, D. L. (2016). The Energy Balance of the Surface. Global Physical Climatology, 95–130. doi:10.1016/b978-0-12-328531-7.00004-9. You’ll have to go to Sci-Hub to get it. It shows how the Bowen ratio varies with temperature.

Note that at a Bowen Ratio of 0.36, the value I got above, the corresponding temperature is about 17°C or so … which is about the global average temperature.

w.

Willis: “This gives us a global Bowen ratio of about 0.36”

WR: Great find, as is the graphic. Reading the logarithmic scale of the graphic, I see confirmed that latent heat (cooling by evaporation, convection and cloud formation) is by far dominant in the higher temperature range (+30°C), while conduction dominates the low surface heat loss in the low temperature range (from about minus 7°C downward).

Convection breaks stratification/insulation. So in the low temperature range stratification/insulation dominates, and at the high end de-stratification, the transport of energy away from the surface, dominates. These are the two ways the Earth keeps surface temperatures within margins. All controlled by [the release of] water vapor which in itself is temperature controlled.

Water vapor controls surface temperatures (by stratification and de-stratification of the atmosphere) and surface temperatures control the quantity of water vapor. The central system.

Willis, You don’t specify a time period, but it seems you are using 24 hour averages. Thus, you include nighttime. We were discussing instantaneous solar incidence on a clear day at noon in the tropics.

Thanks, Andy. Whether you are only discussing the daytime averages or the 24 hour averages, you get exactly the same total energy. So it doesn’t change my calculations in the slightest, as they are concerned with the total energy flows.

w.

No such thing happens. Energy flow is unidirectional in the E-M field.

Nope. Energy flows both ways.

w.

“Energy flows both ways.”

I may have misunderstood this phrase, but energy flows from warm to cold, always. Key term being ‘flow’.

Radiation has no such requirement – everything radiates, no matter what it hits. Thus, radiation from the earth hits the sun.

What exactly did you mean?

I was speaking about radiation, John, since he was talking about “in the E-M field”. That obviously doesn’t refer to conduction or convection. Sorry for my lack of clarity.

w.

E-M radiation operates in the electro-magnetic field. Energy flows one way.

Learn a bit of powerline theory and you will get to understand how fields equilibrate at the characteristic velocity of the medium. Initial waves are sent out at a power limited by the characteristic impedance. Once the field interacts with another radiating body the field equilibrates. The energy flow is then a function of that field. Energy flow is always unidirectional in time and space.

The concept of the photon challenges the “Greenhouse Effect” for silly ideas. Photons are just discrete packages of energy but the direction of energy flow is always from emitter to receiver down the energy gradient of the E-M field.

Studying thermodynamics ultimately leads to madness!

“The concept of the photon challenges the “Greenhouse Effect” for silly ideas. Photons are just discrete packages of energy but the direction of energy flow is always from emitter to receiver down the energy gradient of the E-M field.”

That is like saying a balloon only explodes in one direction.

When a photon is emitted, it can be in any direction, regardless of what it eventually strikes. Thus, a photon emitted from your forehead can strike your stove and be absorbed. That is regardless of how many photons your forehead receives from the stove. Think of a photon more as a bullet than anything. Can go in any direction, has no idea what it will strike.

Just to inject some quantum / relativistic madness …

Photons don’t travel.

Travel implies time and photons travel at the speed of light so experience no passing of time. In quantum terminology they are a “massless field”.

Photons don’t do time so they don’t travel.

A photon comes into existence between two points but strictly cannot be considered to be traveling from one to the other.

So the idea that light from a distant star was emitted a long time ago is also false.

The light takes no time to travel from for example the star Betelgeuse.

However time itself is 700 years different at Betelgeuse than here.

Time and space are one. Ask Berty.

Excellent analysis. Well done.

Thanks Rud.

Andy, here’s another data point which bears out your analysis … the CERES analysis of SST.

I suspect that at least some of the difference is in how they treat ice covered sea water—both the water temp and the temp of the ice surface are used, with very different results.

The CERES data has the same basic shape as the MIMOC and ICOADS … but again at a different temperature than either of those.

Regards,

w.

Amazing, the error bars are increasing. CERES is reading the skin temperature (upper 20 microns or so) so it will be a bit high depending upon how much of that estimate was made near or in daylight hours.

Andy: My source for the ocean surface energy balance is: http://www.temporalpublishing–Instructors–Supplementary Material–8.The Ocean Surface Balance. Hope it will be helpful. AFM

Thanks Anthony. I found it and the quote you refer to is on page 67. Not sure how to reconcile that with Hartmann and Faizal, 2011 at first glance. It could be that Mills and Coimbra are taking a very specific hypothetical and theoretical case, then only discussing instantaneous transfer. Not sure why they are doing that without reading the whole chapter.

Faizal and Hartmann are dealing with net, ultimate transfer of energy, which is the context I’m dealing with. Much of the energy is absorbed by the ocean (that is not reflected by the ocean surface or absorbed by the overlying atmosphere before hitting the ocean surface) and transferred by currents away from where it was absorbed. The ocean surface is generally warmer than the overlying air, so there will be some transfer up into the atmosphere. If the temperature difference is more than 0.3 deg., evaporation will take place and it will carry most of the thermal energy that leaves the ocean surface. Some is passed by conduction, some is radiated to the atmosphere. Much of this is radiated back to the ocean, or causes evaporation of water droplets in the lower air. Very little is radiated to space, perhaps 6%. The net transfer of nearly all the energy from the incident surface ocean location to elsewhere is either due to evaporation or by ocean currents.

Anthony, See my discussion with Willis above. I think it is closely related to this one.

https://tinyurl.com/y3qdxjtu

An El Niño event that releases thermal energy into the atmosphere corresponding to a global temperature increase of about the same order as the total increase in temperature of the atmosphere over the entire industrial period (~1°C), within a timespan of a year, leads me to the question: Isn’t it very difficult to compute the total increase of energy in the global climate system?

Andy May concludes that: “Given that oceans cover 71% of the Earth’s surface and contain 99% of the heat capacity, the differences in Figure 6 are enormous. These discrepancies must be resolved, if we are ever to detect climate change, human or natural.”

Your message is received, loud and clear, and we thank you for this good work.

Bill Rocks, You are very welcome.

Very good paper. It’s clear that, despite the addition of the Argo floats, the differences in massaging the data leave us with no confidence in any purported conclusion regarding ocean temperature and zero confidence in the error estimates that claim measurement accuracy is a fraction of a degree. So, is the most accurate indicator of the ocean’s temperature the increase in sea level? I’ve read, without verifying, that the largest component of sea level rise comes from thermal expansion. How accurate are the estimates of glacial melt and aquifer withdrawal to be able to arrive at such a conclusion? It’s clear that accurate thermal expansion can’t be determined from direct temperature measurements with this wildly varying massaging.

Meab, thermal expansion is estimated to be over a third of sea level rise. See the table here:

https://andymaypetrophysicist.com/2017/12/28/glaciers-and-sea-level-rise/

But, as you say we don’t have any idea how quickly the oceans are warming, or even if they are warming, so this estimate doesn’t really mean anything.

“it is hard to have an 18-degree ocean in a 15-degree world”

Depends on how cold the land surface gets at night, the sea surface doesn’t cool much during the night.

Ulric, Land is only 29% of the surface and 10% of that is Antarctica. Land doesn’t matter that much, day or night.

Land could easily lower the global mean 2°C below the mean SST. Think of the seasons and higher latitudes.

The minute you assign a number to it, you invalidate your concept, because you cannot actually know the contribution of land. Land gets very cold as well as warm, but again, if taken globally, it probably averages out – OR THE EARTH WOULD HAVE SPUN OUT OF CONTROL MILLENNIA AGO.

What amazes me is that the earth is so AMAZINGLY constant, in the face of many external and internal changes. What a MASSIVE thermostat earth has!

What a MASSIVE thermostat earth has

It’s called the biosphere.

Jim Lovelock was right, the Gaia hypothesis is correct (despite its name that suggests airy-fairy new age nonsense, which his hypothesis is not). The biosphere regulates its environment to suit its needs.

It sort of all becomes academic from a climate perspective once you realise the SST is thermostatically limited to the range 271.3 K to 305 K.

Three widely separated oceans with vastly different net energy uptake and they all arrive at the same maximum temperature:

https://1drv.ms/b/s!Aq1iAj8Yo7jNhAeQGp_7ns2Kulo9

Andy, thank you very much for this fascinating and informative discussion. It helps confirm my prior understanding that ocean data prior to the 21st Century is unusable in a scientific sense because of prior measurement issues. I agree with your assessment that there is no discernible difference nor trend in ocean temperatures over the 21st Century based on any of your identified datasets.

Is there any way to get these analyses into the UN IPCC AR6 gang-fest?

If they ask, I will provide. I doubt they will ask.

Andy, it seems that such a fundamental question is something a scientist would ask: Why do various recent studies/databases indicate there has been no discernible changes to worldwide ocean temperatures over the last couple of decades?

Oh, well. Asking about the Emperor’s new clothes is never a popular topic with those asking for things from the Emperor. The UN IPCC has a lot of eggs in that CAGW basket. [I love mixing metaphors.]

Thanks Dr May for all your hard work in preparing this important article.

It makes very good sense to define the upper ocean as the mixed layer above the thermocline.

This is based on the real physical division that the thermocline represents, and much better than arbitrarily defining an upper layer of 100 or 200m etc.

I would however have expected the word “thermocline” to appear at least once in the article, let alone in all the comments. Maybe I’m just an old school oceanographer.

Hi Phil,

No PhD, I just have a bachelor’s degree in geology. Courtesy of receiving a very good job offer in 1974, it paid 4X the salary I would have received at the University of Chicago had I gone into their graduate school. I still think I made the right decision, but no Dr. title.

The thermocline is the same as the mixed layer depth. Most of my sources use mixed layer depth or MLD and only rarely use the term thermocline. So I followed their lead and didn’t complicate the article by throwing in a term that wasn’t needed to make my point.

But the thermocline is a physical phenomenon in its own right, a real liquid to liquid boundary that defines the mixed layer. Explaining it even with just a sentence would underline still more why it’s so much better to look at the temperature of the mixed layer than an arbitrary depth. That is, it would strengthen still further the basis of your approach. Just saying …

I spent far too long at University/Polytechnic, did a BSc then an MSc then another MSc then a PhD (although I was doing a paid job at the same time). But I hated the politics in academia and left for industry, first a contract research house then manufacturing of x-ray microscopes. Keeps me off the streets.

During La Niña, the thermocline in the western equatorial Pacific falls below 250 m; in the eastern Pacific it rises to about 50 m.

http://www.bom.gov.au/archive/oceanography/ocean_anals/IDYOC002/IDYOC002.200902.gif

During El Niño, the thermocline in the eastern equatorial Pacific falls considerably.

http://www.bom.gov.au/archive/oceanography/ocean_anals/IDYOC002/IDYOC002.201601.gif