Guest Essay by Kip Hansen — 6 February 2020

How deeply have you considered the social life of spiders? Are they social animals or solitary animals? Do they work together? Do they form social networks? Does their behavior change as in “adaptive evolution of individual differences in behavior”?

In yet another blow to the sanctity of peer-reviewed science and a simultaneous win for personal integrity and the self-correcting nature of science, there is an ongoing tsunami of retractions in a field of study of which most of us have never even heard.

Science magazine online covers part of the story in “Spider biologist denies suspicions of widespread data fraud in his animal personality research”:

“It’s been a bad couple of weeks for behavioral ecologist Jonathan Pruitt—the holder of one of the prestigious Canada 150 Research Chairs—and it may get a lot worse. What began with questions about data in one of Pruitt’s papers has flared into a social media–fueled scandal in the small field of animal personality research, with dozens of papers on spiders and other invertebrates being scrutinized by scores of students, postdocs, and other co-authors for problematic data.

Already, two papers co-authored by Pruitt, now at McMaster University, have been retracted for data anomalies; Biology Letters is expected to expunge a third within days. And the more Pruitt’s co-authors look, the more potential data problems they find. All papers using data collected or curated by Pruitt, a highly productive researcher who specialized in social spiders, are coming under scrutiny and those in his field predict there will be many retractions.”

The story is both a cautionary tale and an inspiring lesson of courage in the face of professional setbacks — one of each for the different players in this drama.

I’ll start with Jonathan Pruitt, who is described as “a highly productive researcher who specialized in social spiders”. Pruitt was a rising star in his field and his success led to his being offered “one of the prestigious Canada 150 Research Chairs” — where he has established himself at McMaster University in Hamilton, Ontario, Canada in the psychology department where he is listed as the Principal Investigator at “The Pruitt Lab”. The Pruitt Lab’s home page tells us:

“The Pruitt Lab is interested in the interactions between individual traits and the collective attributes of animal societies and biological communities. We explore how the behaviors of individual group members contribute to collective phenotypes, and how these collective phenotypes in turn influence the persistence and stability of collective units (social groups, communities, etc.). Our most recent research explores the factors that lead to the collapse of biological systems, and which factors may promote systems ability to bounce back from deleterious alternative persistent states.”

This field of study is often referred to as behavioral ecology. In terms of research methodology, this is a difficult field — one cannot, after all, simply administer a series of personality tests to various groups of spiders or fish or birds or amphibians. Experimental design is difficult and not normalized within the field; observations are in many cases by necessity quite subjective.

We have seen a recent example in the Ocean Acidification (OA) papers concerning fish behavior, in which a three-year effort failed to replicate the alarming findings about effects of ocean acidification on fish behavior. The team attempting the replication took care to record and preserve all the data. As Science reports: “‘It’s an exceptionally thorough replication effort,’ says Tim Parker, a biologist and an advocate for replication studies at Whitman College in Walla Walla, Washington. Unlike the original authors, the team released video of each experiment, for example, as well as the bootstrap analysis code. ‘That level of transparency certainly increases my confidence in this replication,’ Parker says.”

The fish behavior study is of the same nature as the Pruitt studies involving social spiders. Someone has to watch the spiders under the varied conditions, make decisions about perceived differences in behavior, record differences in behavior, in some cases time behavioral responses to stimuli. The results of these types of studies are in some cases entirely subjective — thus, in the OA replication, we see the care and effort to video the behaviors so that others would be able to make their own subjective evaluations.

The trouble for Pruitt came about when one of his co-authors was alerted to possible problems with data in a paper she wrote with Pruitt in 2013 (published in the Proceedings of the Royal Society B in January 2014) titled “Evidence of social niche construction: persistent and repeated social interactions generate stronger personalities in a social spider”.

That co-author is Dr. Kate Laskowski, who now runs her own lab at the University of California, Davis. She was, at the time the paper was written, a PhD candidate. I’ll let you read her story — it is inspiring to me — as she tells it in a blog post titled “What to do when you don’t trust your data anymore”. Read the whole thing; it might restore your faith in science and scientists.

Here’s her introduction:

“Science is built on trust. Trust that your experiments will work. Trust in your collaborators to pull their weight. But most importantly, trust that the data we so painstakingly collect are accurate and as representative of the real world as they can be.”

“And so when I realized that I could no longer trust the data that I had reported in some of my papers, I did what I think is the only correct course of action. I retracted them.”

“Retractions are seen as a comparatively rare event in science, and this is no different for my particular field (evolutionary and behavioral ecology), so I know that there is probably some interest in understanding the story behind it. This is my attempt to explain how and why I came to the conclusion that these papers needed to be removed from the scientific record.”

How did this happen? The short story is that as a result of meeting and talking with Jonathan Pruitt at a conference in Europe, Pruitt sent Laskowski “a datafile containing the behavioral data he collected on the colonies of spiders testing the social niche hypothesis.” Laskowski relates how the data looked good and that there was clear inference in the data that was “strong support for the social niche hypothesis”. With such clear data, she easily wrote a paper.

“The paper was published in Proceedings of the Royal Society B (Laskowski & Pruitt 2014). This then led to a follow-up study published in The American Naturalist showing how these social niches actually conferred benefits on the colonies that had them (Laskowski, Montiglio & Pruitt 2016). As a now newly minted PhD, I felt like I had successfully established a productive collaboration completely of my own volition. I was very proud.”

The situation was a dream come true for a young researcher — and her subsequent excellent work brought her to UC Davis, where she established her own lab. Then….

“Flash forward now to late 2019. I received an email from a colleague who had some questions about the publicly available data in the 2016 paper published in Am Nat. In this paper we had measured boldness 5 times prior to putting the spiders in their familiarity treatment and then 5 times after the treatment.

The colleague noticed that there were duplicate values in these boldness measures. I already knew that the observations were stopped at ten minutes, so lots of 600 values were expected (the max latency). However, the colleague was pointing out a different pattern – these latencies were measured to the hundredth of a second (e.g. 100.11) and many exact duplicate values down to two decimal places existed. How exactly could multiple spiders do the exact same thing at the exact same time?”
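The kind of check the colleague describes can be sketched in a few lines. This is only an illustration, not the analysis Laskowski actually ran: the function name `find_duplicate_latencies` and the sample values are hypothetical, invented here to show the idea. The only details taken from the quote are the 600-second cap and the hundredth-of-a-second precision.

```python
from collections import Counter

# Observations were stopped at ten minutes, so 600 is the expected max latency
# (this cap is taken from the quoted blog post).
MAX_LATENCY = 600.0


def find_duplicate_latencies(latencies, cap=MAX_LATENCY):
    """Return {value: count} for exact values seen more than once, ignoring the cap.

    Hypothetical helper for illustration; not from the original analysis.
    """
    # Compare at hundredths of a second, the precision described in the quote.
    rounded = (round(x, 2) for x in latencies)
    counts = Counter(x for x in rounded if x < cap)
    return {value: n for value, n in counts.items() if n > 1}


# Invented latencies in seconds, for illustration only (not real spider data).
sample = [600.0, 100.11, 342.87, 100.11, 600.0, 57.03, 342.87, 100.11, 211.4]
duplicates = find_duplicate_latencies(sample)
print(duplicates)
```

Repeated 600 values are skipped because they are expected when a trial times out. Any other value repeated to two decimal places is flagged for a closer look. A few such repeats can occur by chance, so a result like this is a prompt for investigation rather than proof of a problem.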

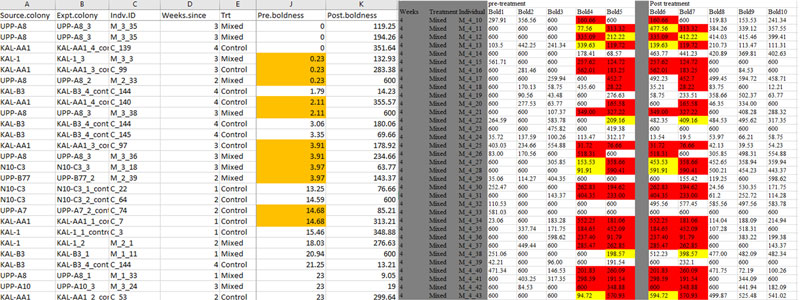

Lawkowski performed a forensic deep-dive into the data and discovered problems such as these (highlights indicate unlikely duplications of exact values; see Lawkowski’s blog post for larger images and more information):

Remember, Laskowski’s paper was not based on data that she had collected herself, but on data provided to her by a respected senior scientist in the field, Jonathan Pruitt. It was data collected by Pruitt personally, not as part of a research team, but by himself. And that point turns out to be pivotal in this story.

Let me be clear, I am not accusing Jonathan Pruitt of falsifying or manufacturing the data contained in the data file sent to Laskowski — I have not investigated the data closely myself. Pruitt is reported to be doing field work in Northern Australia and Micronesia currently and communications with him have been sketchy — inhibiting full investigations by the journals involved. Despite his absence, there are serious efforts to look into all the papers that involve data from Pruitt. Science magazine reports “All papers using data collected or curated by Pruitt, a highly productive researcher who specialized in social spiders, are coming under scrutiny and those in his field predict there will be many retractions.” [ source ]

A blog that covers this field of science, Eco-Evo Evo-Eco, has posted a two part series related to data integrity: Part 1 and Part 2. In addition, there are two specific posts on the “Pruitt retraction storm” [ here and here ] , both written by Dan Bolnick, who is editor-in-chief of The American Naturalist. This journal has already retracted one paper based on data supplied by Pruitt, at Laskowski’s request.

In one of the discussions this situation has spawned, Steven J. Cooke, Institute of Environmental and Interdisciplinary Science, Carleton University, Ottawa, Canada opined:

“As I reflect on recent events, I am left wondering how this could happen. A common thread is that data were collected alone. This concept is somewhat alien to me and has been throughout my training and career. I can’t think of a SINGLE empirically-based paper among those that I have authored or that has been done by my team members for which the data were collected by a single individual without help from others. To some this may seem odd, but I consider my type of research to be a team sport. As a fish ecologist (who incorporates behavioural and physiological concepts and tools), I need to catch fish, move them about, handle them, care for them, maintain environmental conditions, process samples, record data, etc – nothing that can be handled by one person without fish welfare or data quality being compromised.”

It wasn’t long ago that we saw this same element in another retraction story — that of Oona Lönnstedt, who was found to have “fabricated data for the paper, purportedly collected at the Ar Research Station on Gotland, an island in the Baltic Sea.” Science Magazine quotes Peter Eklöv, Lönnstedt’s supervisor and co-author in this Q & A:

Q: The most important finding in the new report is that Lönnstedt didn’t carry out the experiments as described in the paper; the data were fabricated. How could that have happened?

A: It is very strange. The history is that I trusted Oona very much. When she came here she had a really good CV, and I got a very good recommendation letter—the best I had ever seen.

In the case of Jonathan Pruitt, the evidence is not yet all in. Pruitt has not had a chance to fully give his side of the story or to explain exactly how the data he collected alone could reasonably contain so many implausible duplications of overly exact measurements. I have no wish to convict Jonathan Pruitt in this brief overview essay.

But the issue raised is important and has wide generalisability. It can inform us of a great danger to the reliability of scientific findings and the integrity of science in general.

When a single researcher works alone, without the interaction and support of a research team, there is the danger that shortcuts can be taken with justifying excuses made to himself, leading to data being inaccurate or even just filled in with expected results for convenience. Dick Feynman’s “fooling themselves” with a twist.

Detailed research is not easy — and errors can be and are made. Data files can become corrupted and confused. The accidental slip of a finger on a keyboard can delete an hour’s careful spreadsheet reformatting or cast one’s carefully formatted data into oblivion. And scientists can become lazy and fill in data where none was actually generated by experiment. A harried researcher might find himself “forced” to “fix up” data that isn’t returning the results required by his research hypothesis, which he “knows” perfectly well is correct. In other cases, we find researchers actively hiding data and methods from review and attempted validation by others, out of fear of criticism or failure to replicate.

There are major efforts afoot to reform the practice of scientific research in general — suggestions include requiring pre-registration of studies including their designs, methodologies, statistical methods to be applied, end points, hypotheses to be tested with all these posted to online repositories that can be reviewed by peers even before any data is collected. Searching the internet for “saving science”, “research reform” and the “reproducibility crisis” will get you started. Judith Curry, at Climate etc., has covered the issue over the years.

Bottom Line:

Scientists are not special and they are not gods — they are human just like the rest of us. Some are good and honorable, some are mediocre, some are prone to ethical lapses. Some are very careful with details, some are sloppy, all are capable of making mistakes. This truth is contrary to what I was led to believe as a child in the 1950s, when scientists were portrayed as a breed apart — always honest and only interested in discovering the truth. I have given up that fairy-tale version of reality.

The fact that some scientists make mistakes and that some scientists are unethical should not be used to discount or dismiss the value of Science as a human endeavor. Despite these flaws, Science has made possible the advantages of modern society.

Those brave men and women of science that risk their careers and their reputations to call out and retract bad science, like Dr. Laskowski, have my unbounded admiration and appreciation.

# # # # #

Author’s Comment:

I hope readers can avoid leaving an endless stream of comments about how this-that-and-the-other climate scientist has faked or fudged his data. I don’t personally believe that we have had many proven cases of such behavior in the field. Climate Science has its problems: data hiding and unexplained or unjustified data adjustments have been among those problems.

The desire to “improve the data” must be tremendously tempting for researchers who have spent their grant money on a lengthy project only to find the data barely adequate or inadequate to support their hypothesis. I sympathize but do not condone acting on that temptation.

I would appreciate it if researchers and other professionals would leave their stories and personal experiences that apply to the issue raised.

Begin your comments with an indication of whom you are addressing. Begin with “Kip…” if speaking to me. Thanks.

# # # # #

The real lesson here for the post-modern scientist – train an associate that is loyal to you and will make sure there are no duplicate numbers in your data file. (Sarc… I hope)

Mike ==> There have been cases where an assistant has noticed irregularities and finally spoken up — much later.

Dear friends,

When Politics meets Science, the resulting science will lose. You observe this in all the science disciplines; the most recent one is the Synthetic Biology science delivering its newest Corona model.

Again when big money, in this case Defence, is hitting scientists, we have a problem.

oebele bruinsma ==> Give us a bit more information on your view on this.

Readers can see this link for some idea what this is about:

https://www.forbes.com/sites/johncumbers/2020/02/05/seven-synthetic-biology-companies-in-the-fight-against-coronavirus/#3757439216ef

Kip Hansen, your closing comment struck a nerve for me :

“Scientists are not special and they are not gods — they are human just like the rest of us. . . This truth is contrary to what I was led to believe as a child in the 1950s, when scientists were portrayed as a breed apart — always honest and only interested in discovering the truth. I have given up that fairy-tale version of reality. . . Those brave men and women of science . . . like Dr. Laskowski, have my unbounded admiration and appreciation. . . “

In 1995, ecologist and soil microbiologist Geoffrey Dobbs cautioned his fellow scientists in “The Local World” not to follow the path of previous movements for human advancement which have grown too great and felt the temptations of power. (Do I hear the words ‘climate change’ echoed here?)

Dobbs wrote words to the effect: The true language of physical science, namely mathematics, though capable of indefinite expansion on the one plane of number and quantity, is totally incapable of dealing with the personal, with the will, the purpose of life, etc.

In his lifetime he experienced that those who pressed forward with the exploitation of such power were characterised by a certain arrogance which showed in their contempt for those who retain the humbler attitude to nature.

He pinpointed two scientists of the Institute for Theoretical Biochemistry and Molecular Biology at Ithaca, New York, who writing in Nature, April 1989, provided a somewhat crude example of the superiority-complex of the physicist and chemist towards the biologist.

Although described as ‘biologists’, presumably because they applied these elemental sciences to material derived from the living, they described biologists as fundamentally uneducated people who do not understand how science works; few of whom can appreciate the need for revolutionary hypotheses and fewer still can generate them.

Biologists, they said, write innumerable papers presenting excruciatingly boring collections of data, in contrast with physicists, in whom speculation is encouraged. Physics, they say, grew out of philosophy, while biology grew from medicine and bird-watching!

oebele bruinsma wrote:

“When Politics meets Science, the resulting science will lose. You observe this in all the science disciplines; the most recent one is the Synthetic Biology science delivering its newest Corona model.”

Then oebele added: “Again when big money, in this case Defence, is hitting scientists, we have a problem.”

I would like to add: “The love of, that is the preference for Money, above all other considerations, is the root of all kinds of evil.”

That truth was known over two thousand years ago! But science with its mathematics alone doesn’t have the answer to this problem.

Dobbs’ work here:

https://alor.org/Storage/Library/PDF/Dobbs_G-The_Local_World.pdf

Betty ==> Thanks for your insightful comment.

And thanks for the Dobbs link — I have downloaded the book and will get to it.

Mike Bryant February 6, 2020 at 7:02 am

The real lesson here for the post-modern scientist – train an associate that is loyal to you.

____________________________________

What about

– find an associate known as loyal to facts and findings.

Unfortunately, almost all of the rewards in research motivate getting the desired answers rather than the correct answers.

I have personally had a researcher in a pharmaceutical company ask if I could write an editor to enable him to modify automatically acquired sequences of data “because the curve it generates has some unwanted features”.

Basically, not the nice smooth curve that they wanted to see.

I refused, but I can’t guarantee that someone else didn’t do it for him.

The real answer, of course, at least step one, would be to get more data. If the artifacts then smoothed out, fine, if they didn’t, or became more pronounced then there was probably something going on that needed to be explored. This researcher, and his boss didn’t want to hear that more time and effort needed to be expended, possibly uncovering things that others would be unhappy with.

Motivation is the key here. It should be positive for uncovering problems. Not the reverse.

Phillip ==> It is only in the software industry that I know people are rewarded for discovering problems. I have a friend who has spent his entire career breaking software for a VERY big tech company. He writes wicked routines that sneak in and simulate idiot users or malformed data, and runs them against the software packages until they break — then he makes them fix it.

Muhahaha!

For a guy who has the talent that must be a very fun job.

Richard ==> He refuses to retire…they pay him too much and he does admit to having far too much fun.

QA Engineer walks into a bar.

Orders a beer.

Orders 0 beers.

Orders 999999999 beers.

Orders 3.14159 beers.

Orders a lizard.

Orders -1 beers.

Orders a sfdeljknesv.

Orders .

I once made a very big, very expensive, ERP software package I was evaluating (no names!) go completely off the rails by almost literally “ordering -1 beer” (putting in a negative value for a quantity, that by definition is >0).

They did not like that at all, but sooner or later some idiot user will do just that.

“Nothing can ever be made foolproof because fools are such creative people”

Being an ERP programmer myself, I can tell you there are times we are asked to make it possible to order -1 beer…

To which the answer is obviously NO. Perpendicular red lines and all that.

Pariah Dog ==> Yeah, when the customer wants the impossible….

tty ==> My experience as well, working with brand new cutting edge web apps that were quick’d and would crash browsers.

Not just -1 either. I broke a compiler with -0.

BobM ==> That would do it!

I used to work in field service in the semiconductor business. One of our customers would always ask for a SW fix to prevent a bonehead move every time one of their operators screwed up. Eventually it got to the point where throughput had been so badly stunted that they had to ask us to go back on a bunch of the changes.

Kip – I worked in IT many years ago, designing and developing systems. I told the testers that their job wasn’t to demonstrate that the software worked, it was to prove that it didn’t. When our software was released, it worked. My very first boss, in the late 1960s, was absolutely brilliant. I have never forgotten the time she was complimented by her boss for producing very large and complex systems in which no-one had ever found a bug. She replied “I didn’t know they were allowed”.

Mike ==> Great story — thanks.

“All software has bugs” is a mantra I heard and used often in my IT career.

Programmers should not forget that a cat never walks over a keyboard the same way twice.

Many thanks for your numerous contributions to this blog.

John in Oz ==> Thanks, John.

Everything I ever did had bugs, but I managed to convince my superiors that they were intended FEATURES.

Kip,

I spent many years as an electronics design engineer – our company built ion implanters which are used to manufacture silicon chips.

I coined these phrases:

“There’s always one more bug”.

“If it can go wrong it already has”.

“Every solution is a problem”.

Many thanks for a very interesting article. I’m passionate about the integrity of science. That’s why I’m so despondent about the state of climate science. But, although it often gets things wrong initially, science is also self-correcting. Continental drift is a perfect example of how the scientific consensus can be totally wrong – and also how it is self-correcting.

It’s ironic that, in his early years as he formulated Relativity, Einstein was the sceptic and he was attacked by the consensus scientists. He famously said that if they were right and he were wrong, it would only need one scientist to prove it.

It is truly painful to have to watch climate science corrupted by alarmism, green fantasy and self-interest. But I know eventually the truth will triumph. Sadly it may not be in my lifetime.

Chris

Chris ==> I really like your coined phrases — I hope you won’t mind if I use them in the future.

One frequently hears that concrete has cracks, and sees them everywhere. In fact, it is bad specification and installation that are behind cracks. There is no excuse for cracks. A dam would not hold water if it had cracks. The Romans were able to make water reservoirs, dams and aqueducts, some of which are still working 2000 years later.

There is no excuse for getting measured data wrong whether by an individual or a group. It just requires precision in measurement, full openness about methods, good statistics for potential errors and allowing independent review to check and/or duplicate.

Respectfully disagree with “There is no excuse for getting measured data wrong ” It should be there is no excuse for not recording data and methods accurately. Dishonesty should be first and foremost what there is no excuse for. Wrong is subjective. After all, if my curve does not match the untouchable hockey stick is it “wrong” and need to be fixed? One of my biggest problems with CAGW is how did Mann mistakenly (for the methods are garbage) produce an “accurate” hockey stick? He claims following studies got same curve as vindication. I claim it is proof of curve fitting because scientists thought their answer was “wrong” when it didn’t. What are the odds of accidentally getting it right?

ironargonaut ==> I tend to agree. Data must be observed and recorded exactly — if then your measurements look funky, then that needs to be investigated and explained before going on.

There is also no such thing as bug free software. Just software that is stable, meaning that it hasn’t been broken in a long time.

I used to do something similar, many years ago, now I just specialise in M$ tech and watch it break itself and have a good chuckle.

Amen, brother! The purpose of science is not to be correct. The purpose of science is to discover how the world works. If I research a hypothesis, and the research shows that my hypothesis was wrong, that is a win for science. It might be painful to me when I find that my hypothesis is wrong, but I should find solace in the fact that I have saved other scientists from spending time and money on going down the same deadend.

Ken ==> Yes — it is terrifically important that “negative results” make it into the official record — published science — so that we also know what isn’t true.

The failure to publish negative results is a big issue in medical research where it can cost lives.

Susan ==> Absolutely — science must show what DOESN’T work as well as what does.

We would joke at work when an experiment would fail and just say let’s publish in the Journal of Negative Results.

“The purpose of science is not to be correct.”

Not sure if you really meant to say that. Not to be correct? To not be correct would mean to be wrong.

“If I research a hypothesis, and the research shows that my hypothesis was wrong, that is a win for science.”

But it’s also a win if you’re correct, no?

The purpose of science is not to prove that the investigator’s hypothesis is correct. IOW, the purpose of science is not to be correct.

“Not sure if you really meant to say that. Not to be correct? To not be correct would mean to be wrong.”

“Not to be correct” is absolutely not the same thing as “To not be correct.”

jorgekafkazar ==> I get the point though — a researcher’s goal is NOT to do an experiment that proves his hypothesis — it is to discover if his hypothesis is true in the real world.

Either way, whether your hypothesis is wrong or not, you’re trying to come up with the correct answer.

“Not to be correct” is absolutely not the same thing as “To not be correct.”

This seems like a distinction without a difference.

It is taught in college. For example chem lab 201. Heat water to boiling record data with automatic temperature wand hooked to computer. One value does not fit expected curve. Automatic B because STUDENT must have done something wrong. What? Unknown. It was mostly automated after all. Lesson learned? Change the one value manually in data. Honesty doesn’t count. Only matching the hockey stick curve…err I mean water heating curve does.

ironargonaut ==> Ah, bad lesson….the real lesson would be to require the student to try to explain why his results differed from the expected results. Lots to learn from that exercise.

Kip, shouldn’t it be a “principal investigator”?

Curious ==> Of course it should! My fingers can’t tell the principle difference though…..

Well, she seems like a principled researcher to me.

H.R. ==> Good one….

principal instigator?

still the problem of corrupting peer review…

how many times were his papers cited…without any of them ever being replicated

Latitude ==> The papers were written in good faith by a serious and ethical young researcher. She was depending on the data supplied to her by an extremely well-respected, even famous-in-the-field senior scientist.

Once the problem was pointed out to her, she immediately did a thorough investigation of the data, discovered even deeper problems, and requested retraction.

..and how many times were the papers cited without ever being replicated?

I said that’s the problem with the way peer review has been corrupted…

…even though she caught it….that changes nothing about how peer review has been corrupted to mean truth

“I am aware that there are concerns affecting a large number of papers at multiple other journals, and at this point I’m aware of co-authors of his who have contacted editors at 23 journals as of January 26. “

Latitude ==> You see the downside — but now look at the upside.

Researchers in the field will be able to re-evaluate their work that cited the retracted papers — all the hullabaloo will bring about a new look and a better understanding.

Of course, things would have been better if there had been no papers requiring retraction.

one paper was cited 22 times…..each one of those papers were cited again…and again…and again

…and each time…they accepted peer review as truth…built the science around it…and passed it on

the downside is this is the way it’s done…and this one time will not change anything…that 1000’s of other times have not changed

Not to hijack a thread – well, a little, but it’s important – out here in Portland, OR, we are again fighting the Cap and Trade – there is a huge rally at the state capital – my boss and the owner of my company are there (I gotta work – I don’t think they wanted me to go – they were afraid I might bite someone – and I almost never do that).

This is Governor Kate Brown’s contact info – she’s a rigidly close-minded hack, with no concern other than paying her PERS debt by gouging the tax-paying middle-class (but not Nike – Heaven forbid), but she was pushed back last year. Any help is appreciated.

https://katebrownfororegon.com/contact

Joel ==> Good luck in your fight there in Oregon.

Be careful who you bite, some people have terrible diseases.

Indeed. I wouldn’t even LICK any of the XR people, let alone bit them.

Oops. Bite them!

Beware of sound bites, too.

jorgekafkazar ==> Sound bites can be even MORE dangerous.

I know. I’m behaving.

“Highly productive” raises alarm bells. Pruitt was listed as an author or co-author for 31 papers in 2019 and 17 papers in 2018. Sometimes this indicates that the author is the head of a research consortium where it’s customary to include the head as an author, or a professor who is advising a boatload of graduate students, most of whom are publishing. But it beggars belief that anyone could give so many papers the attention that being a co-author warrants.

Killer ==> Pruitt is the head of his own lab at McMaster in Canada. As I understand it, he shares his research data — data from field trips etc — freely with others, and ends up as a co-author on papers that use his stuff.

The current focus is on papers that used Pruitt’s self-collected data.

Thanks, Kip Hansen, for bringing us this story. Quite the clear-cut example of the crisis in science that’s been so concerning to us all along!

I know I may sound like a sourpuss, but I have to say that to me, this kind of situation suggests that we are wasting lots of money on research that just helps to enable some kind of pre-determined bad policy biases. You know how it is: activists and/or the researchers themselves will claim that the (not at all acid) “acidified” ocean is making the fish go crazy, etc.! Then, at that point, even the best informed skeptics, who come along and question the whole thing, naturally get called “anti-science”. Rinse and repeat, and we get real damage out of any resulting bad policy decisions — better off if the original researchers had just stayed home!

David ==> I agree that you are being a sourpuss….:-)

A 2-second look around you, wherever you are, will show you the wonders that scientific research has made possible – despite the occasional bad player.

You must be reading this on one f those modern miracles brought to you through research in science — a computer that when I was a by was Science Fiction.

The rewards we reap from just one decent scientific discovery are incalculable. On the other hand …

The vast majority of published research findings are wrong and can’t be reproduced, replicated, or repeated.

It’s kinda like gold mining where the yield can be one gram per ton of ore.

That’s fine. Without the ore, there’s no gold. The problem is the scientists who point out the undeniable benefits of science and then demand that we believe them (say, about dietary fat, or CAGW for instance) because they’re scientists. I’m not sure which logical fallacy that is, but it’s fallacious as crap.

commie ==> As with the Secret Science stuff, it is the DEMAND that we believe or accept any particular finding that is DEAD WRONG and ANTI-SCIENCE.

CommieBob, what is your evidence that the “vast majority of published research findings are wrong and can’t be reproduced, replicated or repeated”?

First, there is a difference between (a) can’t be reproduced, that is, someone has tried to reproduce the results and has failed, and (b) haven’t been reproduced, because no-one has tried.

But many more replications occur than you probably realise. In mining geology for instance, if one geologist theorises that, say, diamonds form in certain geological situations, and claims to have found some based on this theory, you can be certain that there will be an army of other geologists checking out every such geological occurrence to see if this is true. If the first geologist faked the original data to make a killing on the stock exchange, they will soon be found out by others attempting replication. See medicine (biology), agriculture (botany etc) and many other sciences.

If you wish to claim that the ‘vast majority’ of research papers are wrong, you need to show that you or others have actually tried to replicate the results and have not been able to. Otherwise your claim is open to the same criticism, that it has not been reproduced, replicated or repeated. Perhaps start with definition of terms? Exactly what is a ‘vast majority’? 51%? 75%? 99%?

The area where replication is routinely attempted is in biomedicine. That’s because drug companies are looking for findings that can be turned into new drugs. The first step in evaluating an interesting paper is to try to replicate it.

There is a book, Rigor Mortis, that details the attempts by Amgen and Bayer at replication. In the case of Amgen, the success rate was fewer than 1 in 10. In a disturbing number of cases, scientists could not even reproduce their own experimental results.

The replication crisis is real and few scientists dispute that fact.

Tom,

John P. A. Ioannidis, “Why Most Published Research Findings Are False” (2005), PLoS Medicine 2(8): e124.

See also the Wikipedia entry on John P. A. Ioannidis and the 23 references listed.

It appears that the issue is widespread and accepted as a problem.

please excuse my old eyes and fingers today … they seem to be acting up …

of boy etc.

The slack researchers and other problem people that were making my frowny face didn’t create these things. However, it would appear that if they get their way, they could make a lot of our essentially fuel based tech disappear — or at least make things so expensive that access would disappear for most of us.

David, there is a difference between sceptics who come along and question the whole thing, and scientists who try and replicate the research. The ‘sceptics’ often dislike the implications of the research and are looking for a reason to reject it as fake; they are gleeful if they find this.

The scientists don’t set out to prove the original researcher was intentionally falsifying data, they are puzzled by data anomalies or may want to expand or clarify the results. They don’t expect or seek falsification, and are disappointed if they find it. But they also know that it could be sloppiness, or cutting corners to get lots of publications out.

It would help if scientists were encouraged to publish unsuccessful research, and got as much credit as for the big new breakthrough. That would remove the temptation to massage the data in order to get another publication.

Tom ==> Psychology has The Reproducibility Project. — https://en.wikipedia.org/wiki/Reproducibility_Project and the social sciences have http://www.socialsciencesreplicationproject.com/

“The scientists don’t set out to prove the original researcher was intentionally falsifying data, they are puzzled by data anomalies or may want to expand or clarify the results. They don’t expect or seek falsification, and are disappointed if they find it. But they also know that it could be sloppiness, or cutting corners to get lots of publications out.”

They are so puzzled, they refuse access to data and methods, and do things like “hide the decline”.

Steve McIntyre was genuinely puzzled by data anomalies, and was constantly rebuffed by your scientists.

This sounds like a fine example of why “Secret Science” should be under heavy attack – the crap that has been and is being passed off as real science needs to be exposed so we can stop wasting resources on it, prevent it from doing more harm than good, etc.

AGW ==> There are a lot of issues with research these days. There are also a lot of efforts being made to correct the institutional, societal, and methodological issues that are known.

Stay hopeful — you are reading this on a modern miracle brought to you through scientific research.

There are also many papers out there that have suffered in value due to progress in technology. It does not mean they should be retracted, but the details of the procedure and analysis are critical to the meaning of the results.

I studied black mussel phospholipid fatty acids years ago, examining their cell membranes’ ability to withstand huge temperature changes with the tides and seasons. Published data only included fatty acids that composed < or = to 1.0%, listing only 10 different fatty acids. My up to date GC data showed 65 components. If those 55 extra components are all just <1%, they could represent up to 64% of the mixture. The apparent complexity of such a fatty acid mixture would be vastly underestimated in the earlier paper. This is why the methods, instruments, and analyses used have to be specifically listed for readers to understand the potential of the results.

Charles ==> Thanks for your input as a professional researcher. Your point is well-taken — we must not neglect the effect of advancing technology in research — and the inverse, the fact that materials and methods in the past were different and often far short of the techniques we have today. That does not invalidate their findings, but must be taken into account.

Ah , spiders…the old rhyme comes to mind .

Pider, pider on the wall …

Ain’t you got no sense at all ?

Don’t you know walls are made for plaster ?

GET OFF THAT WALL YOU STUPID ….

Pider !

😉

This is hardly a new issue – Sir Peter Medawar wrote about the case of the Spotted Mice a long time ago. Perhaps the 1980s…

Dodgy ==> Yes, a famous case where a researcher had success with a skin graft on a mouse but then couldn’t repeat that success. In desperation, he faked a new result and was caught out.

“the team released video of each experiment”

I’ll wager that would be extraordinarily un-gripping to watch.

Jeff ==> Well, it might be boring but think of the value to future researchers….

Want to check their results? Watch the video of each interaction and make up your own mind what effect, or lack of effect, it shows.

The foundational error in this example was to skip carefully examining the source data and relying on the reputation of the provider. When Willis Eschenbach does a post here he almost always explicitly shows this step. It’s a practice that people would rather skip to get to the “good stuff” but not only does it provide an opportunity for finding anomalies and errors, for a questioning mind it can provide some additional insight.

Gary ==> Sounds easy — but isn’t. Read Laskowski’s blog on the amount of work she had to do to discover the tell-tale oddities in the data — far beyond the usual consistency checks and tests for data points way out of line (outliers, obvious errors, etc).

Maybe it is not easy. Nonetheless, it is fundamental.

I have not read her story yet. But repeated reaction time values should have been checked, if they were a leading outcome of any study.

You run the frequencies that you get for the outcome value, and look at the results. If we get a thousand high school kids running the 100 meter dash, there should be something of an even distribution, with a positive skew. If there are clusters of 20 data points at 13.008 seconds and 14.010 seconds, something is weird.
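A minimal sketch of that frequency check in Python. The simulated sprint times, the `min_count` threshold, and the function name `repeated_values` are all assumptions made up for illustration; a sensible threshold depends on the measurement resolution and the sample size.

```python
import random
from collections import Counter

def repeated_values(values, min_count=10, decimals=3):
    """Return {value: count} for every value that recurs at least min_count times."""
    counts = Counter(round(v, decimals) for v in values)
    return {v: n for v, n in counts.items() if n >= min_count}

# Hypothetical data: 1000 simulated 100 m times, plus two planted clusters
# of 20 identical values each, mimicking the example in the comment above.
rng = random.Random(0)
times = [rng.gauss(14.0, 1.5) for _ in range(1000)]
times += [13.008] * 20 + [14.010] * 20

suspicious = repeated_values(times)
for value, count in sorted(suspicious.items()):
    print(f"{value:.3f} s appears {count} times")
```

With times recorded to the thousandth of a second, honest data should almost never repeat a value this often, so anything the function returns deserves a closer look.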

While you should inspect your data before running any analyses, in order to detect such things as multiple entries of the same value or out-of-range values, you should also “predict” what the outcomes will be, whether you run chi-squared, t test, regression, or whatever.

You can run a zero-order correlation to examine the relation between any two continuous variables before you run a regression. If the value of the standardized regression weight is similar to the value of the “Pearson r” or whatever correlation metric you use, you can feel OK, having anticipated the magnitude of the standardized regression weight. But if these differ, then you know instantly to go see where a third variable is sharing variability.
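A rough Python sketch of that comparison for the two-predictor case. The data are contrived for illustration (the outcome is built entirely from the second predictor), and the function names are my own. The standardized weights come from the standard two-predictor formula, beta1 = (r_y1 - r_y2 * r_12) / (1 - r_12^2).

```python
import math

def pearson_r(x, y):
    """Zero-order (Pearson) correlation between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def standardized_betas(y, x1, x2):
    """Standardized regression weights for y regressed on x1 and x2."""
    r_y1, r_y2, r_12 = pearson_r(y, x1), pearson_r(y, x2), pearson_r(x1, x2)
    denom = 1 - r_12 ** 2
    return (r_y1 - r_y2 * r_12) / denom, (r_y2 - r_y1 * r_12) / denom

# Contrived data: x2 tracks x1 closely, and y depends only on x2.
x1 = [1, 2, 3, 4, 5, 6, 7, 8]
x2 = [2, 1, 4, 3, 6, 5, 8, 7]
y = [2 * v + 1 for v in x2]

r_y1 = pearson_r(y, x1)
beta1, beta2 = standardized_betas(y, x1, x2)
print(f"zero-order r(y, x1) = {r_y1:.3f}, standardized weight for x1 = {beta1:.3f}")
```

Here x1 correlates strongly with y on its own, yet its standardized weight collapses once x2 is in the model, which is exactly the kind of gap that says a third variable is sharing the variability.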

So, inspect the data first to detect problems, whether intentional or not, with values, and to anticipate what your modeling results will be so that you catch on quicker when something funny gets spit out of the computer.

In fact, you should hypothesize the results you expect to see before you even gather data. If I am going to gather spider response time, I should know whether I will get data in tenths of a second or in minutes. Likewise, I should anticipate correlations between measures.

I am a peer reviewer. In my few areas of expertise, I know what values I should see on different types of measures. If I review a paper and the overall epidemiological incidence in a population does not match what is known generally, then I know I am looking at a pretty different sample.

Example: if breast cancer incidence in a cohort of women by age 60 is around half a percent, and a submitted manuscript has incidence at 2%, then something is going on. I can anticipate a lot of what I expect to see by reading the study title.

I interviewed for a post doc once upon a time. The guy was pretty smart. He asked what my dissertation was. I told him the topic. He asked, “what is your sample size?” I told him. He said, “so, you have about [x] number of cases?” I was like “did you read my under-construction draft or something??!!” He just knew the incidence I likely would have if my sample were close to the population value, and could quickly calculate in his head that incidence percent crossed with my sample size of a few thousand.

If he happened to be a peer reviewer of the eventual manuscript, he would know what to look for by just reading the title of the ms. (I would say the reviewers were lenient on a couple weak points and this study is in a well known journal.)
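That back-of-the-envelope calculation can be sketched in a few lines of Python. The sample size of 3000 is a hypothetical stand-in for "a few thousand", and the rates are the half-percent and 2% figures from the breast cancer example; the binomial standard deviation is only a rough yardstick.

```python
import math

def expected_cases(sample_size, incidence):
    """Expected case count and its binomial standard deviation."""
    mean = sample_size * incidence
    sd = math.sqrt(sample_size * incidence * (1 - incidence))
    return mean, sd

n = 3000              # hypothetical sample size
known_rate = 0.005    # about half a percent, the generally known incidence
mean, sd = expected_cases(n, known_rate)

reported = round(n * 0.02)    # cases implied by a manuscript reporting 2%
z = (reported - mean) / sd    # how many SDs away from the expectation
print(f"expected about {mean:.0f} cases (sd {sd:.1f}); reported {reported}, z = {z:.1f}")
```

A reported count many standard deviations from the expectation does not prove anything is wrong, but it tells the reviewer the sample differs sharply from the general population and needs an explanation.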

In the hospital, people taking pulse will often take pulse for 15 seconds and multiply by 4 to get BPM. Hence, pulse tends to be in a medical chart in numbers that are divisible by 4 (whole number result). Not a big deal – clinically, the diff between 70 and 74 BPM is not a big deal. But if you are testing some hypothesis, you may be at the “oh darn” p level due to such mis-measure.

But if you are on a research team, you can inform everyone that the data will probably look like that. Then, we all know to look for what we might expect.
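A small Python sketch of how a team might look for that pattern. The chart values are invented and the function name is my own. If pulses were counted over a full minute, roughly a quarter of them should be divisible by 4.

```python
def share_divisible_by(values, k=4):
    """Fraction of the values that are exact multiples of k."""
    return sum(1 for v in values if v % k == 0) / len(values)

# Hypothetical pulse readings (BPM) copied from a medical chart.
charted = [68, 72, 76, 72, 80, 64, 88, 72, 71, 84]

share = share_divisible_by(charted)
print(f"{share:.0%} of readings are multiples of 4 (about 25% expected by chance)")
```

A share far above 25% suggests the 15-second shortcut was used, so the recorded values carry less resolution than they appear to.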

And, you can be a better peer reviewer.

Inspect your data.

Democrat ==> A good description of what Laskowski had to do in what she called a forensic look at the data.

Thanks for your professional viewpoint.

Kip==>Usually the essential things aren’t easy, but it comes with the job. However, even the rudimentary checks often are skipped. A basic level of data examination ought to be part of the protocol for any research.

However, this is often not practical beyond a very shallow level. Say you are refereeing a paper based on specimen data from several museum collections from different parts of the world. You can check for consistency, that there are no obviously wrong data, that the data distribution does not look too odd, that the museums exist, and that the specimen metadata look legit, but you can’t really do much more than that.

Peer review is unfortunately not likely to catch a really skillful fake.

tty ==> Or even to catch just plain poor data collection practice.

Gary ==> I do agree, of course, but the normal checks might not have caught the problem in Pruitt’s data.

Who needs math anyway?/sarc

Don’t Listen to E.O. Wilson

Math can help you in almost any career. There’s no reason to fear it.

E.O. Wilson is an eminent Harvard biologist and best-selling author. I salute him for his accomplishments. But he couldn’t be more wrong in his recent piece in the Wall Street Journal (adapted from his new book Letters to a Young Scientist), in which he tells aspiring scientists that they don’t need mathematics to thrive. He starts out by saying: “Many of the most successful scientists in the world today are mathematically no more than semiliterate … I speak as an authority on this subject because I myself am an extreme case.” This would have been fine if he had followed with: “But you, young scientists, don’t have to be like me, so let’s see if I can help you overcome your fear of math.” Alas, the octogenarian authority on social insects takes the opposite tack. Turns out he actually believes not only that the fear is justified, but that most scientists don’t need math. “I got by, and so can you” is his attitude. Sadly, it’s clear from the article that the reason Wilson makes these errors is that, based on his own limited experience, he does not understand what mathematics is and how it is used in science.

http://www.slate.com/articles/health_and_science/science/2013/04/e_o_wilson_is_wrong_about_math_and_science.html

brent ==> I can see why the mathematicians are up in arms about E.O. Wilson’s viewpoint.

But I fear that much of what is passing as “good science” today is the result of the “over-mathematation” of Science.

This includes what I call “computational hubris” and the myriad nonsensical results of the misuse of statistics placing numerical statistical results over logic and plausibility.

“the myriad nonsensical results of the misuse of statistics placing numerical statistical results over logic and plausibility.”

Kip,

I taught high school chemistry (college prep, honors and AP). When I got to the section on chemical equilibrium I would give problems which required the use of the quadratic or cubic formula to solve. It was to teach them to look at the plausibility of the answers. The students were used to reporting all answers in their math classes and so they’d do the same in my chem classes. I would then ask them if it made sense that you used more of a chemical than you started with. They got a funny look on their faces when they realized what they had done. It made them look at answers more closely in the future.
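A minimal Python sketch of that kind of problem, using a hypothetical reaction A + B ⇌ C + D with K = 4 and one unit each of A and B to start. The setup and the function name are my own illustration, not the actual classroom problems. With x as the amount reacted, K = x² / ((a0 − x)(b0 − x)), which rearranges to a quadratic in x.

```python
import math

def equilibrium_extent(K, a0, b0):
    """Solve K = x^2 / ((a0 - x)(b0 - x)) for x.

    Returns (all_roots, plausible_roots). Assumes K != 1 and real roots.
    """
    # Rearranged: (K - 1) x^2 - K (a0 + b0) x + K a0 b0 = 0
    a = K - 1
    b = -K * (a0 + b0)
    c = K * a0 * b0
    root = math.sqrt(b * b - 4 * a * c)
    roots = sorted([(-b - root) / (2 * a), (-b + root) / (2 * a)])
    # You cannot react a negative amount, or more than you started with.
    plausible = [x for x in roots if 0 <= x <= min(a0, b0)]
    return roots, plausible

roots, plausible = equilibrium_extent(K=4, a0=1.0, b0=1.0)
print("math says:", roots)
print("chemistry says:", plausible)
```

Both roots satisfy the algebra, but one of them consumes twice the starting material; only the root that stays within the starting amounts makes chemical sense.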

chemman ==> Great Example! Love it —

I don’t know why equilibrium would be a challenging concept, however as below it seems to be for some.

That Wilson could believe there was some kind of “equilibrium” 10K or 20K years ago is astounding. Only 18K years ago all of Canada was under the ice sheets, and ice cover was still extensive 10k years ago.

“If all mankind were to disappear, the world would regenerate back to the rich state of equilibrium that existed ten thousand years ago. If insects were to vanish, the environment would collapse into chaos.”

― Edward O. Wilson

https://www.goodreads.com/author/quotes/31624.Edward_O_Wilson

“Possibly here in the Holocene, or just before 10 or 20 thousand years ago, life hit a peak of diversity. Then we appeared. We are the great meteorite.”

― Edward O. Wilson

https://www.goodreads.com/author/quotes/31624.Edward_O_Wilson?page=4

Timeline of Recent Glaciation

http://www.nps.gov/features/romo/feat0001/BasicsIceAges.swf

Indeed. A check for half a dozen or so of the most common statistical errors is depressingly often successful in turning up a “hit”.

For example:

– falsely assuming that the data are normally distributed

– using methods only applicable to normal distributions for other distributions

– ignoring autocorrelation

– ignoring regression dilution

– using OLS for obviously non-linear data

– absurd Bayesian priors (or even not telling what prior was used)

– extending smoothing to both ends of a chart
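As one illustration from the list above, here is a rough Python sketch of a quick check for ignored autocorrelation. The two series are toy data and the function name is my own. A lag-1 autocorrelation far from zero warns that the observations are not independent, so standard errors computed as if they were will be off.

```python
def lag1_autocorrelation(series):
    """Lag-1 sample autocorrelation of a sequence of numbers."""
    n = len(series)
    mean = sum(series) / n
    dev = [x - mean for x in series]
    denom = sum(d * d for d in dev)
    num = sum(dev[i] * dev[i + 1] for i in range(n - 1))
    return num / denom

trending = list(range(1, 11))    # toy series with a steady trend
alternating = [1, -1] * 5        # toy series that flips sign every step

r_trend = lag1_autocorrelation(trending)
r_alt = lag1_autocorrelation(alternating)
print(f"trending: {r_trend:.2f}, alternating: {r_alt:.2f}")
```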

tty ==> A lot of those in CliSci.

Agreed.

“Did you know the average person eats nine spiders… every time I cook for them?”

– Anthony Jeselnik “Fire in the Maternity Ward”

Michael ==> I’ll try to stay out of the maternity ward……

Stay out of the morgue. That’s where they count the spiders.

jorgekafkazar ==> Even when I worked in hospitals, I stayed out of the morgue on general principles.

Reminded me of a quirky childhood song….. little kids (3-8) love it!

“I know an old lady that swallowed a spider

that wiggled, and jiggled, and tickled inside her.

She swallowed the spider to catch a fly

and I don’t know why she swallowed that fly!

Perhaps she’ll die.”

There are more verses…..

Burl Ives – I Know An Old Lady

https://youtu.be/zQHmZMf6zwo

J Mac ==> Used to sing it to my kids….

Why not?

Full of protein.

Open to correction, but I understand there are some cultures that do (did?) regularly and deliberately eat spiders to supplement their otherwise low-protein diet.

Om nom nom 😀

Remember that spider crawling on the wall above your bed that you threw the shoe at and it fell down the wall and disappeared behind the headboard?

He remembers

Data can be tested against Benford’s law to find oddities.

Ed ==> In many cases this is true, but remember that “It tends to be most accurate when values are distributed across multiple orders of magnitude, ”

In many experimental settings, such as Laskowski’s spider-response-times, the data set is limited to numbers between 0 and 600, timed by hand by stop watch. So a lot of “no response” values are expected to be 600 — which throws off the analysis.

What clued in Laskowski and others was the number of repeated, far-too-exact measurements and repeated sequences.
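A minimal Python sketch of a first-digit comparison against Benford's law. The capped "response times" are invented and the function names are my own; this is not Laskowski's actual data or method. Powers of 2 are used as a well-known example of data spanning many orders of magnitude.

```python
import math

def first_digit(value):
    """First significant digit of a nonzero number."""
    return int(f"{abs(value):.15e}"[0])

def first_digit_shares(values):
    """Observed share of each leading digit 1..9, ignoring zeros."""
    digits = [first_digit(v) for v in values if v != 0]
    return {d: digits.count(d) / len(digits) for d in range(1, 10)}

# Benford's expected share for leading digit d is log10(1 + 1/d).
benford = {d: math.log10(1 + 1 / d) for d in range(1, 10)}

# Data spanning many orders of magnitude: powers of 2.
powers = [2 ** k for k in range(1, 101)]

# Hypothetical response times capped at 600 s, with many "no response" values.
capped = [600] * 40 + [12, 35, 48, 150, 230, 310, 45, 88, 97, 420]

for name, data in [("powers of 2", powers), ("capped times", capped)]:
    shares = first_digit_shares(data)
    print(name, {d: round(s, 2) for d, s in shares.items()})
```

The powers of 2 land close to the Benford shares, while the capped times pile up on the digit 6 for a perfectly innocent reason, which is why the test says little about data like this.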

If Pruitt had been making up the data whole cloth, Benford’s Law testing might have caught it.

Harvard’s Law of Biological Research Comes to mind:

“Under the most carefully controlled conditions of temperature, pressure and other related variables,

the organism under study will do what ever it darn well pleases.”

P.H. ==> Thanks for that — very very important for researchers and those that follow research.

That law generalizes to other fields as well — psychology, behavioral evolution, etc etc. Living things are not machines that MUST, by design, always respond in the same way.

Or Heinrich’s rule:

“Never try to do a dissertation about an animal that is smarter than you”

(He switched from Ravens to Bumblebees)

In high school, and even most undergraduate courses, the emphasis is on getting the right answer. I fear that attitude has infected most graduate courses and is now even the modus operandi of the professors. Just like in an undergrad lab course, many students will fudge the observations to have the correct values. Researchers are doing the same. I actually believe they may have come to believe that is how science is done.

Jim ==> I hope not…..

Dude works in Hamilton, Ontario; research into the social behaviour of any non-human species is way down the list of priorities, if you know what I mean. I worked in Hamilton for GE and taught some night courses in statistics at the local college – Mohawk. There are ample strange human subjects that need help in Hamilton.

Greg61 ==> Thanks for the personal insight into the local Hamilton, CA fauna….

I spent three years in a lab environment after graduation. You learn things in lab work that you can learn nowhere else, most of which deal with mistakes and “creative” research. Just one example: my boss was lamenting the fact that our results did not produce nice, smooth curves, like Dr. Whozit’s. He showed me the relevant page and noticed that Dr. Whozit had published log-log plots in which the ink traces were broader than some of the distances between our ±1σ lines.

I highly recommend Huff’s “How to Lie with Statistics,” as well as C. Northcote Parkinson’s “Parkinson’s Law.”

You cannot depend on your eyes when your imagination is out of focus.–Mark Twain

Oh what a tangled web we weave,

When first we practice to deceive.

I am astonished no-one else has said this by now.

(Apologies.)

seadog ==> Good to see it trotted out…..

I can still hear Gale Gordon saying that to “Our Miss Brooks.”

“I still really love the social niche specialization hypothesis. I just think it’s so cool and really resonates with our own human experiences as social creatures…” –Dr. Laskowski

My admiration for Dr. Laskowski is great, and I think the above quote is very instructive. As researchers, we must not fall in love with our hypotheses, no matter how cool. Nor should we anthropomorphize our subjects. (Unless, of course, they are actual anthropoids.)

Let me make it clear that my remark is intended as a general admonition for all of us and does not change the fact that Dr. Laskowski’s conduct in this matter was admirable.

jorgekafkazar ==> And I heartily agree!