Reposted from Dr. Judith Curry’s Climate Etc.

by Ross McKitrick

Challenging the claim that a large set of climate model runs published since the 1970s are consistent with observations for the right reasons.

Introduction

Zeke Hausfather et al. (2019) (hereafter ZH19) examined a large set of climate model runs published since the 1970s and claimed they were consistent with observations, once errors in the emission projections are considered. It is an interesting and valuable paper and has received a lot of press attention. In this post, I will explain what the authors did and then discuss a couple of issues arising, beginning with IPCC over-estimation of CO2 emissions, a literature to which Hausfather et al. make a striking contribution. I will then present a critique of some aspects of their regression analyses. I find that they have not specified their main regression correctly, and this undermines some of their conclusions. Using a more valid regression model helps explain why their findings aren’t inconsistent with Lewis and Curry (2018) which did show models to be inconsistent with observations.

Outline of the ZH19 Analysis:

A climate model projection can be factored into two parts: the implied (transient) climate sensitivity (to increased forcing) over the projection period and the projected increase in forcing. The first derives from the model’s Equilibrium Climate Sensitivity (ECS) and the ocean heat uptake rate. It will be approximately equal to the model’s transient climate response (TCR), although the discussion in ZH19 is for a shorter period than the 70 years used for TCR computation. The second comes from a submodel that takes annual GHG emissions and other anthropogenic factors as inputs, generates implied CO2 and other GHG concentrations, then converts them into forcings, expressed in Watts per square meter. The emission forecasts are based on socioeconomic projections and are therefore external to the climate model.
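To make the two-factor decomposition concrete, here is a minimal Python sketch. It assumes warming scales linearly with the forcing change at a fixed implied sensitivity, uses the 3.7 W/m² doubling value that appears later in the post, and the function names and numbers are hypothetical illustrations, not ZH19's code.

```python
# Minimal sketch (hypothetical names and numbers): a projection's warming is
# treated as implied sensitivity x projected forcing change, so swapping in the
# observed forcing change gives a "forcing-adjusted" projection.

F2X = 3.7  # W/m^2, assumed forcing from a doubling of CO2


def sensitivity_from_changes(delta_t: float, delta_f: float) -> float:
    """Implied transient sensitivity in K per CO2 doubling, given a warming
    change delta_t (K) and a forcing change delta_f (W/m^2)."""
    return F2X * delta_t / delta_f


def forcing_adjusted_warming(model_dt: float, model_df: float, obs_df: float) -> float:
    """Warming the model would have projected with the observed forcing change,
    holding its implied sensitivity fixed (linear-response assumption)."""
    return sensitivity_from_changes(model_dt, model_df) * obs_df / F2X


# Hypothetical example: 0.9 K projected for a 2.0 W/m^2 forcing increase,
# but forcing actually rose by only 1.5 W/m^2.
print(round(forcing_adjusted_warming(0.9, 2.0, 1.5), 3))  # 0.675
```

Under this linear assumption the adjustment is just the projected warming times the ratio of observed to projected forcing change; any error left after the adjustment is attributed to the sensitivity factor.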

ZH19 ask whether climate models have overstated warming once we adjust for errors in the second factor due to faulty emission projections. So it’s essentially a study of climate model sensitivities. Their conclusion, that models by and large generate accurate forcing-adjusted forecasts, implies that models have generally had valid TCR levels. But this conflicts with other evidence (such as Lewis and Curry 2018) that CMIP5 models have overly high TCR values compared to observationally-constrained estimates. This discrepancy needs explanation.

One interesting contribution of the ZH19 paper is their tabulation of the 1970s-era climate model ECS values. The wording in the ZH19 Supplement, which presumably reflects that in the underlying papers, doesn’t distinguish between ECS and TCR in these early models. The reported early ECS values are:

- Manabe and Wetherald (1967) / Manabe (1970) / Mitchell (1970): 2.3K

- Benson (1970) / Sawyer (1972) / Broecker (1975): 2.4K

- Rasool and Schneider (1971): 0.8K

- Nordhaus (1977): 2.0K

If these really are ECS values they are pretty low by modern standards. It is widely known that the 1979 Charney Report proposed a best-estimate range for ECS of 1.5–4.5K. The follow-up National Academy report in 1983 by Nierenberg et al. noted (p. 2) “The climate record of the past hundred years and our estimates of CO2 changes over that period suggest that values in the lower half of this range are more probable.” So those numbers might be indicative of general thinking in the 1970s. Hansen’s 1981 model considered a range of possible ECS values from 1.2K to 3.5K, settling on 2.8K for their preferred estimate, thus presaging the subsequent use of generally higher ECS values.

But it is not easy to tell if these are meant to be ECS or TCR values. The latter are always lower than ECS, due to slow adjustment by the oceans. Model TCR values in the 2.0–2.4 K range would correspond to ECS values in the upper half of the Charney range.

If the early models’ implied ECS values were in fact high, then ZH19’s finding that they stay in the ballpark of observed surface average warming, once adjusted for forcing errors, suggests a case of being right for the wrong reason. The 1970s were unusually cold, and there is evidence that multidecadal internal variability was a significant contributor to accelerated warming from the late 1970s to 2008 (see DelSole et al. 2011). If the models didn’t account for that, instead attributing everything to CO2 warming, they would have required an excessively high ECS to match observations.

With those preliminary points in mind, here are my comments on ZH19.

There are some math errors in the writeup.

The main text of the paper describes the methodology only in general terms. The online SI provides statistical details including some mathematical equations. Unfortunately, they are incorrect and contradictory in places. Also, the written methodology doesn’t seem to match the online Python code. I don’t think any important results hang on these problems, but it means reading and replication are unnecessarily difficult. I wrote Zeke about these issues before Christmas and he has promised to make any necessary corrections to the writeup.

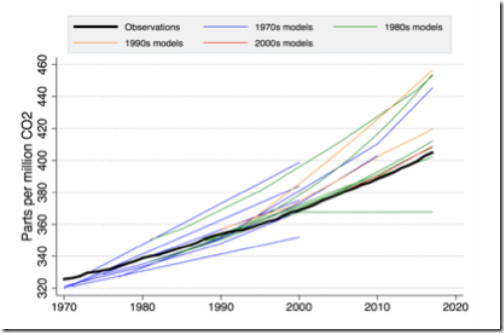

One of the most remarkable findings of this study is buried in the online appendix as Figure S4, showing past projection ranges for CO2 concentrations versus observations:

Bear in mind that, since there have been few emission reduction policies in place historically (and none currently that bind at the global level), the heavy black line is effectively the Business-as-Usual sequence. Yet the IPCC repeatedly refers to its high end projections as “Business-as-Usual” and the low end as policy-constrained. The reality is the high end is fictional exaggerated nonsense.

I think this graph should have been in the main body of the paper. It shows:

- In the 1970s, models (blue) had a wide spread but on average encompassed the observations (though they pass through the lower half of the spread);

- In the 1980s there was still a wide spread but now the observations hug the bottom of it, except for the horizontal line which was Hansen’s 1988 Scenario C;

- Since the 1990s the IPCC constantly overstated emission paths and, even more so, CO2 concentrations by presenting a range of future scenarios, only the minimum of which was ever realistic.

I first got interested in the problem of exaggerated IPCC emission forecasts in 2002 when the top-end of the IPCC warming projections jumped from about 3.5 degrees in the 1995 SAR to 6 degrees in the 2001 TAR. I wrote an op-ed in the National Post and the Fraser Forum (both available here) which explained that this change did not result from a change in climate model behaviour but from the use of the new high-end SRES scenarios, and that many climate modelers and economists considered them unrealistic. The particularly egregious A1FI scenario was inserted into the mix near the end of the IPCC process in response to government (not academic) reviewer demands. IPCC Vice-Chair Martin Manning distanced himself from it at the time in a widely-circulated email, stating that many of his colleagues viewed it as “unrealistically high.”

Some longstanding readers of Climate Etc. may also recall the Castles-Henderson critique which came out at this time. It focused on IPCC misuse of Purchasing Power Parity aggregation rules across countries. The effect of the error was to exaggerate the relative income differences between rich and poor countries, leading to inflated upper end growth assumptions for poor countries to converge on rich ones. Terence Corcoran of the National Post published an article on November 27 2002 quoting John Reilly, an economist at MIT, who had examined the IPCC scenario methodology and concluded it was “in my view, a kind of insult to science” and the method was “lunacy.”

Years later (2012-13) I published two academic articles (available here) in economics journals critiquing the IPCC SRES scenarios. Although global total CO2 emissions have grown quite a bit since 1970, little of this is due to increased average per capita emissions (which have only grown from about 1.0 to 1.4 tonnes C per person); instead it is mainly driven by global population growth, which is slowing down. The high-end IPCC scenarios were based on assumptions that population and per capita emissions would both grow rapidly, the latter reaching 2 tonnes per capita by 2020 and over 3 tonnes per capita by 2050. We showed that the upper half of the SRES distribution was statistically very improbable because it would require sudden and sustained increases in per capita emissions which were inconsistent with observed trends. In a follow-up article, my student Joel Wood and I showed that the high scenarios were inconsistent with the way global energy markets constrain hydrocarbon consumption growth. More recently Justin Ritchie and Hadi Dowlatabadi have explored the issue from a different angle, namely the technical and geological constraints that prevent coal use from growing in the way assumed by the IPCC (see here and here).

IPCC reliance on exaggerated scenarios is back in the news, thanks to Roger Pielke Jr.’s recent column on the subject (along with numerous tweets from him attacking the existence and usage of RCP8.5) and another recent piece by Andrew Montford. What is especially egregious is that many authors are using the top end of the scenario range as “business-as-usual”, even after, as shown in the ZH19 graph, we have had 30 years in which business-as-usual has tracked the bottom end of the range.

In December 2019 I submitted my review comments for the IPCC AR6 WG2 chapters. Many draft passages in AR6 continue to refer to RCP8.5 as the BAU outcome. This is, as has been said before, lunacy: another “insult to science”.

Apples-to-apples trend comparisons require removal of Pinatubo and ENSO effects

The model-observational comparisons of primary interest are the relatively modern ones, namely scenarios A–C in Hansen (1988) and the central projections from various IPCC reports: FAR (1990), SAR (1995), TAR (2001), AR4 (2007) and AR5 (2013). Since the comparison uses annual averages in the out-of-sample interval, the latter two time spans are too short to yield meaningful comparisons.

Before examining the implied sensitivity scores, ZH19 present simple trend comparisons. In many cases they work with a range of temperatures and forcings but I will focus on the central (or “Best”) values to keep this discussion brief.

ZH19 find that Hansen 1988-A and 1988-B significantly overstate trends, but not the others. However, I find FAR does as well. SAR and TAR don’t but their forecast trends are very low.

The main forecast interval of interest is from 1988 to 2017. It is shorter for the later IPCC reports since the start year advances. To make trend comparisons meaningful for the Hansen (1988-2017) and FAR (1990-2017) intervals, the Mount Pinatubo eruption (June 1991, with its main cooling effect in 1992) needs to be removed, since it depressed observed temperatures but is not simulated in climate models on a forecast basis. Likewise with El Nino events. Leaving these events in overstates the observed trend for the purpose of comparison with models.

To adjust for this I took the Cowtan-Way temperature series from the ZH19 data archive, which for simplicity I will use as the lone observational series, and filtered out volcanic and El Nino effects as follows. I took the IPCC AR5 volcanic forcing series (as updated by Nic Lewis for Lewis & Curry 2018), and the NCEP pressure-based ENSO index (from here). I regressed Cowtan-Way on these two series and obtained the residuals, which I denote as “Cowtan-Way adj” in the following Figure (note both series are shifted to begin at 0.0 in 1988):

The trends, in K/decade, are indicated in the legend. The two trend coefficients are not significantly different from each other (using the Vogelsang-Franses test). Removing the volcanic forcing and El Nino effects causes the trend to drop from 0.20 to 0.15 K/decade. The effect is minimal on intervals that start after 1995. In the SAR subsample (1995-2017) the trend remains unchanged at 0.19 K/decade and in the TAR subsample (2001-2017) the trend increases from 0.17 to 0.18 K/decade.
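The adjustment and trend calculation described above can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not the code used for this post: it regresses temperature on a constant, a volcanic forcing series and an ENSO index by ordinary least squares, keeps the residuals, re-bases them to start at 0.0, and computes an OLS trend in K/decade. Whether the original regression included lags or other terms is not stated, so none are used here.

```python
import numpy as np


def adjust_for_volcanic_and_enso(temp, volcanic, enso):
    """Remove volcanic and ENSO effects by OLS and return the residuals,
    re-based so the adjusted series starts at 0.0.

    Assumed specification: constant + volcanic + ENSO, no lags. There is no
    time regressor, so the trend stays in the residuals except for any part
    that is correlated with the volcanic or ENSO series in the sample.
    """
    temp = np.asarray(temp, dtype=float)
    X = np.column_stack([np.ones_like(temp), volcanic, enso])
    coef, *_ = np.linalg.lstsq(X, temp, rcond=None)
    resid = temp - X @ coef
    return resid - resid[0]


def trend_per_decade(series, years):
    """OLS linear trend of an annual series, converted to units per decade."""
    years = np.asarray(years, dtype=float)
    slope = np.polyfit(years, np.asarray(series, dtype=float), 1)[0]
    return 10.0 * slope
```

With annual data, `trend_per_decade(adj, years)` applied to the adjusted and raw series gives the two K/decade figures being compared.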

Here is what the adjusted Cowtan-Way data looks like, compared to the Hansen 1988 series:

The linear trend in the red line (adjusted observations) is 0.15 K/decade, just a bit above H88-C (0.12 K/decade) but well below the H88-A and H88-B trends (0.30 and 0.28 K/decade respectively).

The ZH19 trend comparison methodology is an ad hoc mix of OLS and AR1 estimation. Since the methodology write-up is incoherent and their method is non-standard, I won’t try to replicate their confidence intervals (my OLS trend coefficients match theirs, however). Instead I’ll use the Vogelsang-Franses (VF) autocorrelation-robust trend comparison methodology from the econometrics literature. I computed trends and 95% CIs in the two CW series, the three Hansen 1988 series (A, B and C) and the first three IPCC out-of-sample series (denoted FAR, SAR and TAR). The results are as follows:

The OLS trends (in K/decade) are in the 1st column and the lower and upper bounds on the 95% confidence intervals are in the next two columns.

The 4th and 5th columns report VF test scores, for which the 95% critical value is 41.53. In the first two rows, the diagonal entries (906.307 and 348.384) are tests on a null hypothesis of no trend; both reject at extremely small significance levels (indicating the trends are significant). The off-diagonal score (21.056) tests whether the trends in the raw and adjusted series are significantly different; it does not reject at 5%.

The entries in the subsequent rows test if the trend in that row (e.g. H88-A) equals the trend in, respectively, the raw and adjusted series (i.e. obs and obs2), after adjusting the sample to have identical time spans. If the score exceeds 41.53 the test rejects, meaning the trends are significantly different.
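For readers who want to experiment, the sketch below computes a single-series statistic of the kind described above: OLS of the series on a constant and a linear trend, with a Bartlett-kernel HAC variance whose bandwidth equals the sample size (the fixed-b setting associated with Vogelsang-Franses). It is a simplified reconstruction, not the VF authors’ code; it does not implement the multivariate version used to compare trends across series, and applying the 41.53 critical value quoted above to it is an assumption.

```python
import numpy as np

VF_CRIT_95 = 41.53  # 95% critical value quoted in the text (assumed applicable here)


def vf_trend_stat(y, r=0.0):
    """Wald-type statistic for H0: slope == r in y_t = a + b*t + u_t.

    Uses a Bartlett-kernel HAC variance with bandwidth equal to the sample
    size T (fixed-b), so no bandwidth choice is needed. The slope is in units
    per time step (per year for annual data).
    """
    y = np.asarray(y, dtype=float)
    T = y.size
    t = np.arange(1, T + 1, dtype=float)
    X = np.column_stack([np.ones(T), t])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    u = y - X @ beta
    v = X * u[:, None]  # score vectors x_t * u_t, shape (T, 2)

    # Bartlett weights 1 - j/T for lags j = 1..T-1 (bandwidth M = T)
    omega = v.T @ v / T
    for j in range(1, T):
        gamma_j = v[j:].T @ v[:-j] / T
        omega += (1.0 - j / T) * (gamma_j + gamma_j.T)

    q_inv = np.linalg.inv(X.T @ X / T)
    cov_beta = q_inv @ omega @ q_inv / T  # HAC covariance of (a, b)
    return float((beta[1] - r) ** 2 / cov_beta[1, 1])
```

To test an annual series against a trend stated in K/decade, pass `r` divided by 10; a score above `VF_CRIT_95` would be read as a rejection.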

The Hansen 1988-A trend forecast significantly exceeds that in both the raw and adjusted observed series. The Hansen 1988-B forecast trend does not significantly exceed that in the raw CW series but it does significantly exceed that in the adjusted CW (since the VF score rises to 116.944, which exceeds the 95% critical value of 41.53). The Hansen 1988-C forecast is not significantly different from either observed series. Hence, the only Hansen 1988 forecast that matches the observed trend, once the volcanic and El Nino effects are removed, is scenario C, which assumes no increase in forcing after 2000. The post-1998 slowdown in observed warming ends up matching a model scenario in which no increase in forcing occurs, but does not match either scenario in which forcing is allowed to increase, which is interesting.

The forecast trends in FAR and SAR are not significantly different from the raw Cowtan-Way trends but they do differ from the adjusted Cowtan-Way trends. (The FAR trend also rejects against the raw series if we use GISTEMP, HadCRUT4 or NOAA). The discrepancy between FAR and observations is due to the projected trend being too large. In the SAR case, the projected trend is smaller than the observed trend over the same interval (0.13 versus 0.19). The adjusted trend is the same as the raw trend but the series has less variance, which is why the VF score increases. In the case of CW and Berkeley it rises enough to reject the trend equivalence null; if we use GISTEMP, HadCRUT4 or NOAA neither raw nor adjusted trends reject against the SAR trend.

The TAR forecast for 2001-2017 (0.167 K/decade) never rejects against observations.

So to summarize, ZH19 go through the exercise of comparing forecast to observed trends and, for the Hansen 1988 and IPCC trends, most forecasts do not significantly differ from observations. But some of that apparent fit is due to the 1991 Mount Pinatubo eruption and the sequence of El Nino events. Removing those, the Hansen 1988-A and B projections significantly exceed observations while the Hansen 1988-C scenario does not. The IPCC FAR forecast significantly overshoots observations and the IPCC SAR significantly undershoots them.

In order to refine the model-observation comparison it is also essential to adjust for errors in forcing, which is the next task ZH19 undertake.

Implied TCR regressions: a specification challenge

ZH19 define an implied Transient Climate Response (TCR) as

$$\mathrm{TCR} = F_{2\times}\,\frac{dT}{dF},$$

where T is temperature, F is anthropogenic forcing, $F_{2\times}$ is the forcing from a doubling of CO2, and the derivative is computed as the least squares slope coefficient from regressing temperature on forcing over time. Suppressing the constant term, the regression for model i is simply

$$T_{it} = \beta_i F_{it} + e_{it}.$$

The TCR for model i is therefore $\mathrm{TCR}_i = 3.7\,\hat{\beta}_i$, where 3.7 W/m² is the assumed value of $F_{2\times}$. They find 14 of the 17 implied TCRs are consistent with an observational counterpart, defined as the slope coefficient from regressing temperatures on an observationally-constrained forcing series.
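The levels calculation just described (regress temperature on forcing with the constant suppressed, then scale the slope by 3.7) can be sketched as follows. This is a plain-OLS illustration only; ZH19’s AR(1)-error estimation is not reproduced, so its point estimates and standard errors would differ somewhat, and the function name is hypothetical.

```python
import numpy as np

F2X = 3.7  # W/m^2, assumed forcing from a CO2 doubling


def implied_tcr_levels(temp, forcing):
    """Implied TCR from an OLS regression of temperature on forcing in levels
    with no constant: slope = sum(F*T) / sum(F*F), scaled by F2X."""
    temp = np.asarray(temp, dtype=float)
    forcing = np.asarray(forcing, dtype=float)
    beta = (forcing @ temp) / (forcing @ forcing)
    return F2X * beta
```

Because both series trend upward, this slope is driven largely by the shared trend, which is the feature the specification critique below is concerned with.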

Regarding the post-1988 cohort, unfortunately ZH19 relied on an ARIMA(1,0,0) regression specification, or in other words a linear regression with AR1 errors. While the temperature series they use are mostly trend stationary (i.e. stationary after de-trending), their forcing series are not. They are what we call in econometrics integrated of order 1, or I(1), namely the first differences are trend stationary but the levels are nonstationary. I will present a very brief discussion of this but I will save the longer version for a journal article (or a formal comment on ZH19).

There is a large and growing literature in econometrics journals on this issue as it applies to climate data, with lots of competing results to wade through. On the time spans of the ZH19 data sets, the standard tests I ran (namely Augmented Dickey-Fuller) indicate temperatures are trend-stationary while forcings are nonstationary. Temperatures therefore cannot be a simple linear function of forcings, otherwise they would inherit the I(1) structure of the forcing variables. Using an I(1) variable in a linear regression without modeling the nonstationary component properly can yield spurious results. Consequently it is a misspecification to regress temperatures on forcings (see Section 4.3 in this chapter for a partial explanation of why this is so).
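As a rough illustration of the unit-root check mentioned above, here is a NumPy-only Augmented Dickey-Fuller regression with a constant and linear trend. It is a sketch under assumptions: the lag length is fixed at one rather than chosen by an information criterion, and the comparison value is the standard asymptotic 5% critical value for the constant-plus-trend case (about -3.41). Samples as short as those in ZH19 need somewhat more negative values.

```python
import numpy as np

ADF_CT_5PCT = -3.41  # asymptotic 5% critical value, constant + trend case


def adf_stat(y, lags=1):
    """ADF t-statistic on g in
    dy_t = a + d*t + g*y_{t-1} + sum_{j=1..lags} phi_j*dy_{t-j} + e_t.
    A value below ADF_CT_5PCT rejects a unit root in favour of trend
    stationarity."""
    y = np.asarray(y, dtype=float)
    dy = np.diff(y)  # dy[k] = y[k+1] - y[k]
    rows = []
    for k in range(lags, dy.size):
        lagged_diffs = [dy[k - j] for j in range(1, lags + 1)]
        rows.append([1.0, float(k), y[k]] + lagged_diffs)
    X = np.array(rows)
    z = dy[lags:]
    beta, *_ = np.linalg.lstsq(X, z, rcond=None)
    resid = z - X @ beta
    sigma2 = resid @ resid / (z.size - X.shape[1])
    cov = sigma2 * np.linalg.inv(X.T @ X)
    return beta[2] / np.sqrt(cov[2, 2])
```

A trend-stationary series should give a strongly negative statistic, while a random walk (like a cumulatively growing forcing series) typically will not reject.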

How should such a regression be done? Some time series analysts are trying to resolve this dilemma by claiming that temperatures are I(1). I can’t replicate this finding on any data set I’ve seen, but if it turns out to be true it has massive implications including rendering most forms of trend estimation and analysis hitherto meaningless.

I think it is more likely that temperatures are I(0), as are natural forcings, and anthropogenic forcings are I(1). But this creates a big problem for time series attribution modeling. It means you can’t regress temperature on forcings the way ZH19 did; in fact it’s not obvious what the correct way would be. One possible way to proceed is called the Toda-Yamamoto method, but it is only usable when the lags of the explanatory variable can be included, and in this case they can’t because they are perfectly collinear with each other. The main other option is to regress the first differences of temperatures on first differences of forcings, so I(0) variables are on both sides of the equation. This would imply an ARIMA(0,1,0) specification rather than ARIMA(1,0,0).
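The first-difference alternative can be sketched as below: regress the change in temperature on the change in forcing (no constant), scale by 3.7, and form an approximate 95% interval. This is a minimal illustration with hypothetical names; it uses the usual OLS standard error and a normal critical value of 1.96, which is an assumption rather than the exact interval construction used for the results that follow.

```python
import numpy as np

F2X = 3.7  # W/m^2, assumed forcing from a CO2 doubling


def implied_tcr_differences(temp, forcing, z=1.96):
    """Implied TCR from regressing first differences of temperature on first
    differences of forcing (no constant), plus an approximate interval
    F2X * (beta +/- z * se) based on the ordinary OLS standard error."""
    d_t = np.diff(np.asarray(temp, dtype=float))
    d_f = np.diff(np.asarray(forcing, dtype=float))
    sxx = d_f @ d_f
    beta = (d_f @ d_t) / sxx
    resid = d_t - beta * d_f
    se = np.sqrt((resid @ resid) / (d_t.size - 1) / sxx)
    return F2X * beta, (F2X * (beta - z * se), F2X * (beta + z * se))
```

Differencing discards the shared trend, so with short, noisy annual series the interval can become very wide; that is the loss of information the next paragraph refers to.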

But this wipes out a lot of information in the data. I did this for the later models in ZH19, regressing each one’s temperature series on each one’s forcing input series, using a regression of Cowtan-Way on the IPCC total anthropogenic forcing series as an observational counterpart. Using an ARIMA(0,1,0) specification except for AR4 (for which ARIMA(1,0,0) is indicated) yields the following TCR estimates:

The comparison of interest is OBS1 and OBS2 to the H88a–c results, and for each IPCC report the OBS-(startyear) series compared to the corresponding model-based value. I used the unadjusted Cowtan-Way series as the observational counterparts for FAR and after.

In one sense I reproduce the ZH19 findings that the model TCR estimates don’t significantly differ from observed, because of the overlapping spans of the 95% confidence intervals. But that’s not very meaningful since the 95% observational CIs also encompass 0, negative values, and implausibly high values. They also encompass the Lewis & Curry (2018) results. Essentially, what the results show is that these data series are too short and unstable to provide valid estimates of TCR. The real difference between models and observations is that the IPCC models are too stable and constrained. The Hansen 1988 results actually show a more realistic uncertainty profile, but the TCRs differ a lot among the three of them (point estimates 1.5, 1.9 and 2.4 respectively) and for two of the three they are statistically insignificant. And of course they overshoot the observed warming.

The appearance of precise TCR estimates in ZH19 is spurious due to their use of ARIMA(1,0,0) with a nonstationary explanatory variable. A problem with my approach here is that the ARIMA(0,1,0) specification doesn’t make efficient use of information in the data about potential long run or lagged effects between forcings and temperatures, if they are present. But with such short data samples it is not possible to estimate more complex models, and the I(0)/I(1) mismatch between forcings and temperatures rules out finding a simple way of doing the estimation.

Conclusion

The apparent inconsistency between ZH19 and studies like Lewis & Curry 2018 that have found observationally-constrained ECS to be low compared to modeled values disappears once the regression specification issue is addressed. The ZH19 data samples are too short to provide valid TCR values, and their regression model is specified in such a way that it is susceptible to spurious precision. So I don’t think their paper is informative as an exercise in climate model evaluation.

It is, however, informative with regard to past IPCC emission/concentration projections and shows that the IPCC has for a long time been relying on exaggerated forecasts of global greenhouse gas emissions.

I’m grateful to Nic Lewis for his comments on an earlier draft.

Comment from Nic Lewis

These early models only allowed for increases in forcing from CO2, not from all forcing agents. Since 1970, total forcing (per IPCC AR5 estimates) has grown more than 50% faster than CO2-only forcing, so if early model temperature trends and CO2 concentration trends over their projection periods are in line with observed warming and CO2 concentration trends, their TCR values must have been more than 50% above that implied by observations.
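The arithmetic behind this comment can be sketched as follows, assuming (as the comment does) that an early CO2-only model reproduced the observed warming. The numbers are hypothetical; only the ratio of total to CO2-only forcing growth matters, and the observed warming cancels out.

```python
def implied_tcrs(delta_t, total_df, co2_df, f2x=3.7):
    """Return (TCR a CO2-only model needs to reproduce warming delta_t,
    TCR implied by observations once all forcings are counted)."""
    model_tcr = f2x * delta_t / co2_df  # model sees only the CO2 forcing change
    obs_tcr = f2x * delta_t / total_df  # observations respond to total forcing
    return model_tcr, obs_tcr


# Hypothetical numbers: total forcing grew 1.5x as much as CO2-only forcing.
model_tcr, obs_tcr = implied_tcrs(delta_t=0.8, total_df=1.5, co2_df=1.0)
print(model_tcr / obs_tcr)  # about 1.5, i.e. total_df / co2_df
```

If total forcing grew more than 50% faster, the ratio exceeds 1.5, which is the comment's conclusion.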

“exercize” = exercise….

Come on everybody do your exorcise

😉

“errorcise” (^_^)

… the updated description of climate “science”.

Bryan A, there used to be a children’s TV show in the 1960s that had a song w/that lyric (exercise of course). I think it was a local Wash DC show called Wonderama….

The graphics included are fuzzy, like they were blown up from much smaller images. It’s very hard to read the legends, especially on the final image (Implied TCR values). Could this be corrected? Thanks.

DEN*ER!! How dare you!

“The graphics included are fuzzy” …

My mother taught me:

Never trust a man with fuzzy graphics.

There is a key assumption in calculating TCS or ECS values based on empirical data. That key assumption is that the temperature changes are due to the CO2 concentration changes only. That is the game that the IPCC wants climate researchers and media to play: the reasons for climate change are anthropogenic: humanity is the only guilty one.

Here is my simple evidence of what the researchers should show to be wrong. During the period from 1997 to 2014, cumulative CO2 emissions increased from 264 gigatonnes of carbon (GtC) to 404 GtC; the emissions of this period are 35% of total emissions since 1750, and cumulative emissions increased by as much as 49%. What was the temperature impact? No increase in global surface temperature. Zero.

This is a piece of undeniable evidence that there are other climate drivers besides CO2. Therefore any analysis based on observational data is not scientific enough to give the right answer to the question of climate sensitivity. If somebody does so, he/she should first eliminate the effects of all other factors. The problem is that nobody knows those other factors.

Antero

Of course you are right.

And brilliant to conclude “nobody knows”.

But it is okay to analyze global average temperature changes, and then ASSUME only CO2 is responsible for warming — that would be a fair estimate of a worst case for warming caused by CO2.

Unfortunately, no one mentions it’s a “worst case estimate”, except me !

Not a perfect worst case estimate, because feedback, if any, is unknown.

Ross McKitrick is an ethical and competent gentleman who I met circa year 2000. I do not know Zeke Hausfather.

Competent individuals like Ross and Steve McIntyre have been writing these learned treatises for decades, pointing out the fatal flaws in warmist papers like the Mann hockey stick series (MBH98 et al).

I used to do so as well, but I finally came to the credible conclusion that everything written by the warmist camp is false propaganda.

The following post is from 2012:

https://wattsupwiththat.com/2012/02/16/quote-of-the-week-andrew-bolt-nails-fakegate/#comment-770951

I repeat:

You can save yourselves a lot of time, and generally be correct, by simply assuming that EVERY SCARY PREDICTION the global warming alarmists express is FALSE.

The warming alarmists have a near-perfect negative predictive track record – every one of their scary predictions has failed to materialize.

I wrote the following some weeks ago, not for the first time, and am doing pretty well so far.

https://wattsupwiththat.com/2012/02/01/briggs-schools-the-bad-astronomer-on-statistics/#comment-761273

[excerpt]

Finally Kevin+37, you have demonstrated a near-perfect track record of negative predictive skill – not one of your scary predictions has materialized! Should we then, statistically, disbelieve everything you predict? It appears we should.

Thank you!

While those of us who approach this issue rationally and logically – not emotionally – can follow and appreciate a verbal argument, most people find it far easier to be shown and have matters explained in a simple visual way. The best defense in a debate often makes far less of an impression on the minds of listeners than a very clever cartoon that everyone sees and laughs at.

While I wonder if climate alarmism is not encouraging one of the biggest financial frauds of all time, I believe that many people caught up in the alarmism are sincere but muddled. They are buying a pig in a poke. We need to help them to see this and laugh, and then slowly get angry when they recognize their ignorance is being exploited.

MacRae

Brilliant comment.

7:31 am

My own spin is:

You can’t change the mind of a climate alarmist with real science, because his apocalyptic beliefs about the future climate were never created by real science in the first place.

It won’t even work if you tell them one climate science fact, and then smack them upside the head with a rolled up Sunday New York Times newspaper.

Although it is a good use for a New York Times newspaper.

Basically, leftists do not change their minds, except to move further left.

And lying to support their cause is okay, because truth is not a leftist virtue.

I suppose the good news is the world is going to end in 12 years, maybe 11 years now, so finally, we won’t have to listen to leftists bellowing their always wrong predictions of climate doom any more.

They are also OK for puppy training, and lighting the fireplace on frigid nights.

The current upswing in global temperatures is most likely due to a natural century-scale cycle, the causes of which have not been considered at all in any of the climate models, past or present. Any shorter-term dip in temperature could be the result of the well-known 60-year AMO cycle or a drastic, decades-long fall in solar activity, e.g. a Maunder Minimum as happened in the late 1600s.

I considered N. Hemisphere temperature trends (where the data are just about usable) and have estimated that the centenary cycle is around 380-400 years long (most likely the Atlantic-Southern Ocean sub-loop of the great ocean conveyor belt). This cycle last partially peaked in about the 1720s, as it was mostly suppressed by the Maunder Minimum (a repeat is possible in coming decades).

http://www.vukcevic.co.uk/NH-GM.htm

Analysis shows that the current temperature is most likely to encounter a pause due to the imminent negative AMO phase, or even to fall for a decade or two if there is another Grand Minimum as some solar commentators are predicting. Failing a solar GM, temperatures are likely to rise further up to the end of this century.

In all this saga CO2 is a very minor bit player, a horsefly on the tail of a ‘rumble in the jungle’ elephant.

Always love your graphs, Vuk. Have you ever looked into the changes solar minimums and maximums have on terrestrial temperature spikes and dips? I’m not so sure they impact longer lasting trends, but solar mins have such an impact on upper atmosphere warmings, ozone concentrations and magnetic changes the weather can get wacky on the ground.

An extended minimum (if it comes to be) would certainly prolong the effects, which might change the ocean currents and warm/cold ocean pool locations and would certainly have longer lasting effects. But even then would it impact the long year cycles? And almost all of the really big volcanic activity has gone off during solar minimums. Again over the long haul, they seem just to be transient events.

Hi RB.

Thanks. Yes, I agree and yes I have looked into it, but there is a problem or two in the way.

– The solar cycle is usually between 10.5 and 11 years long, of which max and min last about a year or maybe two each; considering that max follows min about 4.5-5 years later, we might get a small effect of about 0.1C globally, a bit more in the N.H. where there is more land.

– With the world oceans’ great inertia (either way, warming or cooling), the sunspot cycle’s effect is strongly suppressed.

– However, if and when a GM occurs, it is not just a year or two but a solar minimum 30 or even 40 years long; the oceans have time to cool down, and global temperature may fall by about 0.7C, with the N.H. possibly falling more.

– Integrating sunspot cycles over a period of a century may have some significance, and certainly many experts have thought there is something to it, but as ever it is difficult to prove the effect.

Yes. We are probably still warming out of the Little Ice Age. Attributing that portion of the modern warming to CO2 is a serious mistake.

Judith Curry (and many others) observes that the early 20th century warming is similar to the late 20th century warming. Again, that is evidence that warns against attributing much of the late 20th century warming to CO2.

If we’re just discussing model performance, I agree with Dr. McKitrick that Pinatubo’s effects should be adjusted out.

On the other hand, ENSO is part of the climate system. If the models can’t cope with it, that’s an indication that the models are missing something.

Absolutely! The existence of the MWP and LIA (and all other similar periods in the Holocene) as well as the instrumental record of the early 20th century warming should provide all the evidence necessary that long period natural cycles are important and perhaps dominant. If we can’t say what the natural trend is, how the heck can we presume to calculate climate sensitivity due to CO2?

Right. It’s all down to some century scale mystical forcing which is yet to be discovered.

But you’re certain it’s not CO2.

Emission spectra from satellites show that CO2 is very relevant, particularly in the higher, colder, drier regions of the atmosphere. This means that emission of absorbed energy from these CO2 molecules will be at much reduced power relative to emission from the surface. This results in an imbalance between incoming solar energy and outgoing LW energy. In a nutshell: the Earth will warm.

I spent several years arguing the case against CAGW (not AGW) with numerous AGW proponents (including Michael Mann in 2004) but I’m finding the continual refusal to accept reality (and SCIENCE) by ‘sceptics’ impossible to defend.

There isn’t a respectable ‘sceptical’ scientist who doesn’t recognise that CO2 is a significant greenhouse gas.

The absorption of LW radiation by GH gases and clouds does not mean a long-term imbalance between the incoming and outgoing radiation. The Earth's time constants are about 3 months for the ocean's mixing layer and about 1 month for land. That means a relaxation time of about one year.
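A minimal sketch of the relaxation-time arithmetic, assuming each reservoir relaxes exponentially toward its new equilibrium with the time constants quoted in the comment (3 months for the ocean mixing layer, 1 month for land). Closing 95% of the gap takes about three time constants, which is roughly where a figure of about one year comes from.

```python
import math

def time_to_fraction(tau_months: float, fraction: float) -> float:
    """Months for an exponential (one-box) system to close `fraction` of the gap
    between its current state and its new equilibrium."""
    return -tau_months * math.log(1.0 - fraction)

# Time constants as quoted in the comment (assumed, not independently checked).
for name, tau in [("ocean mixing layer", 3.0), ("land", 1.0)]:
    months = time_to_fraction(tau, 0.95)
    print(f"{name}: 95% of the adjustment after {months:.1f} months")
```

For the 3-month ocean value this gives about 9 months, and about 3 months for land.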

A concrete example. I live on the shore of the Baltic Sea. In March the sea may be frozen. At the beginning of May, the seawater temperature is zero, and in July it is about 20 degrees. That means that if the summer weather were to continue for six more months, the temperature would be about 25-30 degrees.

It is quite a common idea that the GH effect is based on an ongoing energy imbalance. It is not. When the outgoing LW radiation decreases because of increased absorption, it means that the atmosphere radiates more energy on the surface, its temperature increases, it radiates more energy into space according to Planck’s law and pretty quickly the outgoing LW radiation is the same as incoming. This is according to the energy conservation law. The energy cannot disappear. What is coming in, must go out.
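The Planck-law step in this argument can be put into numbers. Linearising the Stefan-Boltzmann law F = σT⁴ around an effective emission temperature T gives ΔT ≈ ΔF / (4σT³). This sketch assumes T = 255 K and ignores all feedbacks (water vapour, clouds, lapse rate), so it is only the no-feedback Planck response, not a climate sensitivity estimate. The 3.7 W/m² input is the commonly cited forcing for doubled CO2.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def planck_response(delta_f: float, t_eff: float = 255.0) -> float:
    """Warming (K) needed to restore outgoing LW radiation after a forcing
    delta_f (W/m^2), linearising F = sigma*T^4 around t_eff (assumed 255 K).
    No feedbacks are included."""
    return delta_f / (4.0 * SIGMA * t_eff ** 3)

print(f"{planck_response(3.7):.2f} K")  # prints "0.98 K"
```

Roughly 1 K of warming restores the balance in this idealised case; how much the real response differs depends entirely on the feedbacks.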

Perhaps I didn’t explain it very well but I thought my comment agreed pretty much with yours.

When the outgoing LW radiation decreases because of increased absorption, it means that the atmosphere radiates more energy on the surface, its temperature increases,

Yes.

it radiates more energy into space according to Planck’s law and pretty quickly the outgoing LW radiation is the same as incoming.

Yes.

However, not all the imbalance will be immediately realised as surface warming, e.g. ocean warming.

We have long term phenomena which, in no way, can be explained by CO2. Why, for instance, do we bang between glaciation and interglacials? Why the various warm and cool periods during the Holocene? You can’t point at our lack of understanding and then insist that the answer must be CO2.

All the possible explanations of climate changes come up short. Therefore, you have to accept my contention that they are caused by the Weather God’s tummy aches. Hey, it’s just as logical as a CO2 thermostat.

How about a falsifiable prediction. If you anger the Weather God by refusing to believe in him, he will cause bad weather. You can see it with your own eyes. The evidence is all around you.

“We have long term phenomena which, in no way, can be explained by CO2. Why, for instance, do we bang between glaciation and interglacials?”

Indeed so. No-one claims the glacials were caused by CO₂. In that time frame, there were no large additions of CO₂.

Gavin said, rightly, that the prediction was that any such big addition, if it happened, would cause warming. It happened, and it warmed.

Correlation is not causation, Nick.

I am surprised at you using such broken logic.

Science progresses by testing hypotheses by predictions, which can be verified. That is what happened here. If a theory can account for observations, it gains credibility, even when one can’t say that no other explanation is possible. The causation comes from the theory (where else?).

It’s observably been warming since the mid 1800s when the glaciers started to retreat. You get no points for predicting that the warming will continue.

You could predict that extra CO2 will raise the temperature above the naturally occurring trend. OK then, but you really don’t know what the naturally occurring trend should be.

The observed trend no more validates AGW than bad weather validates the angry Weather God hypothesis.

There is a lot of range in the word ‘significant’. Significant can mean noticeable, and it can mean dominant. This discussion is not denying the greenhouse gas; it is trying to establish its significance.

“Right. It’s all down to some century scale mystical forcing which is yet to be discovered.”

You are indeed correct, although it was you who used the word mystical.

The null hypothesis was formulated to counter claptrap, or the musings of someone who was too busy being a smart-arse to put their brain in gear while typing.

Please do let us know what was the “mystical” (your word) forcing that caused temperatures to rise during the Medieval and Roman Warm Periods (for starters). Perhaps if climate scientists weren’t all focused on conclusion-based-“scientific” conclusions, we all might know and you would be able to drop the word “mystical” from your cognitive dissonance.

CO₂ emission spectra?

That is, CO₂ molecules were raised to incandescence so that their unique emission spectra could be analyzed and verified in a spectroscope?

Which sun or heat source was used to heat Earth’s atmospheric molecules to incandescence?

CO₂’s infrared emissions are limited to very few, tightly constrained frequencies.

Frequencies that are shared by other products, including atmospheric gases.

CO₂ is an atmospheric mite compared to the blue whale of H₂O.

H₂O is infrared active in all three physical states, gas, liquid and solid.

H₂O is extremely active over a very large breadth of light frequencies, including infrared. The infrared frequencies where H₂O is active swamp a huge range of light frequencies, including most of the emission frequencies CO₂ shares.

CO₂ is 0.04% of the atmosphere and is minimally infrared active.

Extremely infrared active H₂O comprises a range of much higher atmospheric percentages.

Plus, H₂O is a substantial driver of atmospheric convection.

Indeed. And how ~3% of 0.04% CO2 is the driver of climate change is anyone’s guess.

“CO₂ is 0.04% of the atmosphere and is minimally infrared active.”

Here is an actual measured IR spectrum taken over Alaska. The big bite around 15 μm is the CO₂ absorption.

A big bite?

Note the words “cloud free atmosphere”. Got one for a non-cloud free atmosphere over any part of the earth?

“Got one for a non-cloud free atmosphere over any part of the earth?”

That was the whole point of the experiment.

To determine the magnitude of changes to the forcing exerted by CO2 in the atmosphere.

So obviously (?) the experiments were conducted in cloud free skies.

(In case it’s not obvious, that is because otherwise the signal is obscured by H2O.)

And yes, we know that water absorbs in a band overlapping CO2 near 15 microns (the Earth’s strongest emission), BUT there are plenty of places in the Earth’s atmosphere where dry air predominates, most notably at altitude, where the GHE is strongest.

“Anthony Banton January 19, 2020 at 11:45 am”

What is altitude at the surface looking up? What is your definition of “dry air” because I know of two. One is theoretical.

Exactly. And more importantly, the “business-as-usual” RCP8.5 temperature and sea level rise projections are also exaggerated nonsense, but these are the ones used almost exclusively in every climate-change-related study and news report.

A quick review of current long-term (~40 year) temperature and sea level trends puts them squarely in the RCP2.6 range.

According to UAH, the linear temperature trend since 1979 is 1.3° C per century.

According to UC sea level rise analysis, the linear sea level trend since 1993 is 0.31 meters per century.

The RCP2.6 range by 2100 for temperature is 0.3 to 1.7 C and the likely mean is 1.0 C.

For sea level rise the range is 0.26 to 0.55 meters and the likely mean is 0.4 meters.
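The comparison above can be checked with a straight-line extrapolation. This sketch assumes that the RCP2.6 ranges quoted are IPCC AR5 changes for 2081-2100 relative to 1986-2005, so the observed trends are extended over the 95 years between the midpoints of those two periods. It ignores any acceleration or deceleration and only tests the arithmetic behind the claim.

```python
# Assumed AR5 convention: change in 2081-2100 relative to 1986-2005.
BASE_MID = (1986 + 2005) / 2    # 1995.5
TARGET_MID = (2081 + 2100) / 2  # 2090.5
years = TARGET_MID - BASE_MID   # 95.0

def extrapolate(rate_per_century: float) -> float:
    """Change over `years` if the observed linear trend simply continues."""
    return rate_per_century * years / 100.0

# (observed trend per century, RCP2.6 low, RCP2.6 high), values quoted in the comment
checks = {
    "temperature (C)": (1.3, 0.3, 1.7),
    "sea level (m)": (0.31, 0.26, 0.55),
}
for name, (rate, lo, hi) in checks.items():
    value = extrapolate(rate)
    print(f"{name}: {value:.3f}, RCP2.6 range {lo}-{hi}, inside: {lo <= value <= hi}")
```

Both extrapolated values (about 1.2 C and 0.29 m) fall inside the quoted RCP2.6 ranges, the sea level one near the lower end.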

The RCP2.6 scenario assumes global, aggressive CO2 emissions reduction so that they peak between 2010 and 2020 (yes, right now!) and decline for the rest of the century. In fact, they are still substantially growing globally, thanks to China and India and other developing countries struggling to rise out of poverty, while they are declining modestly in many Western countries including the U.S.

In other words, globally the emissions scenario is what RCP8.5 assumes, but the measured temperature and sea level trends are RCP2.6. Mic drop. If the mainstream media weren’t so drastically Leftist, this news would be common knowledge by now.

“Exactly. And more importantly, the “business-as-usual” RCP8.5 temperature and sea level rise projections are also exaggerated nonsense, but these are the ones used almost exclusively in every climate-change-related study and news report.”

An excellent point. All the gloom-and-doom climate scaremongering is based on the unrealistic, never-going-to-happen, RCP8.5 scenario. That means all those scary scenarios based on RCP8.5 are also never going to happen.

The Alarmists are scaring the children (and adults) with human-caused climate change science fiction tales.

And those scenarios are used to make policy:

“RCP8.5 – and only RCP8.5 – is mandated by law to be used by the US National Marine Fisheries Service in its implementation of the Endangered Species Act

Once you starting digging you find that there is no bottom”

https://t.co/2ZemcSOLLA pic.twitter.com/5SeFMQIxkI

— Roger Pielke Jr. (@RogerPielkeJr) January 18, 2020

For a global radiation balance model… ok, brag about how your simplified model closely matches observed temps to some degree (and claim there is much more warming in the pipeline).

The GCMs, however, are total failures. Even when they get the overall global trend mostly right, that is the product of bad misses in temps regionally. Summing up garbage to get something respectable is not proper in science, math, or engineering. On top of that, they fail with precipitation, cloud cover, and other parameters.

More money= more exaggerated claims. That’s why so called Climate scientists are flocking to the EU from the US. It’s greener on the other side (till the Dems rule again).

Cooking data in global warming science to get more money is a crafty job that needs no demonstrating when the Democrafts ruled before or might rule again.

I agree with Antero Ollila January 18, 2020 at 7:21 am.

For example, it is accepted that volcanic eruptions can cause a temporary reduction in global temperature through the creation of reflective aerosols. The TCR formula above ignores the impact of this natural forcing on the trend because it is temporary, so at best it creates a blip in the trend. The same can be said for man-made CO2. On longer time scales, fossil fuel use will be seen as a temporary blip in the long-run trend in global temperature. Furthermore, atmospheric aerosols were not at 0 in the mid-1800s, at the start of most “modern” temperature series designed to capture the impact of man-made CO2. If aerosols were relatively high in the 1700s and 1800s, then a reduction in aerosols would have caused warming as they fell to a relatively normal level during the 1900s.

I assume there is a way to approximate the concentration of aerosols prior to 1900, but I am not in this line of research, so I will defer to others. This is only an example to make the point that the model suffers from omitted variable bias, causing ECS to be too high.

It all seems a bit ad hoc to assume changes in man made CO2 are the sole explanatory variable that determines the trend in global temperature change.
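The omitted-variable point can be demonstrated with synthetic data (invented purely for illustration, not observations). Temperature is built from two equally weighted, correlated drivers: a CO2-like ramp and a hypothetical aerosol-decline term that rises and then levels off. Regressing temperature on the CO2 term alone inflates its coefficient above the true value of 1, while including both regressors recovers it. This is a generic OLS illustration, not the TCR formula discussed in the article.

```python
def mean(xs):
    return sum(xs) / len(xs)

def cov(xs, ys):
    mx, my = mean(xs), mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / len(xs)

def ols_two(y, x1, x2):
    """Slopes of y on x1 and x2 (with intercept), solving the 2x2 normal
    equations built from covariances of the demeaned data."""
    a, b, c = cov(x1, x1), cov(x1, x2), cov(x2, x2)
    d1, d2 = cov(x1, y), cov(x2, y)
    det = a * c - b * b
    return (c * d1 - b * d2) / det, (a * d2 - b * d1) / det

# Synthetic series (hypothetical): both drivers have a true coefficient of 1.
steps = range(100)
co2 = [t / 100 for t in steps]                # CO2-like ramp
aerosol = [min(t, 50) / 100 for t in steps]   # warming from declining aerosols, then flat
temp = [f + g for f, g in zip(co2, aerosol)]

short = cov(co2, temp) / cov(co2, co2)        # CO2 only: aerosol term omitted
full = ols_two(temp, co2, aerosol)
print(f"CO2-only slope: {short:.2f}; both regressors: {full[0]:.2f}, {full[1]:.2f}")
```

Because the omitted aerosol term is positively correlated with the CO2 ramp, its warming is attributed to CO2 in the short regression.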

“For example, it is accepted that volcanic eruptions can cause a temporary reduction in global temperature through the creation of reflective aerosols.”

Willis has several posts on the subject which question that theory.

Not very attention grabbing, but it should be. We put up with worst case scenario modeling for over 20 years before observations proved them to be worst case projections based on over sensitivity to CO2. Thanks for exposing the errors behind the wishful hindsight maneuver.

Mr. Ross McKitrick is obviously a very smart fellow.

I’m glad he is on “our side”.

I have some very different opinions about debating “climate models”.

There is no precise climate physics model to be the foundation for a real climate model.

Therefore, no real climate models can exist.

The things that are called climate models are just the opinions of the person/people who programmed the computer(s).

All models seem to assume CO2 levels are very important.

They ignore the fact that our planet had 4.5 billion years of NATURAL causes of climate change, with only one apparent connection to CO2 — warming oceans, from natural causes, released some dissolved CO2 in the air, with a multi-hundred year lag. Vice versa for cooling oceans.

There are many climate models, but most seem locked into the 1970s — assuming a 100% increase of the CO2 level will cause a roughly +3 degrees C. global warming, plus or minus 50%.

The plus or minus 50% makes me very suspicious of that “formula”.

The next step is to examine global warming in the past century, to see if there is a strong positive correlation with CO2 level changes.

It does not matter that models did not exist at the time, since we can apply their general temperature-CO2 formula to the very rough estimates of CO2 levels (from ice cores) before 1958.

Question:

Does the CO2 level act as a global average temperature “control knob” ?

With very little Southern Hemisphere temperature data, we believe there was global warming from 1910 to 1940, but with very little change in the CO2 level.

Weak positive correlation of average temperature and the CO2 level.

With rough global temperature data, we believe there was global cooling from 1940 to 1975, in spite of significantly increasing CO2 levels.

Negative correlation.

With good global temperature data, especially UAH satellites after 1975, there was significant warming and CO2 level increases, from 1975 to 2005.

Strong positive correlation.

From 2005 through mid-2015, lots more CO2 in the air, but very little temperature change (ignoring the temporary late 2015 / early 2016 El Nino heat release, unrelated to CO2).

No correlation.

So, since 1910, the correlation of the global average temperature and CO2 levels has repeatedly changed.

And there is no reason to be confident that CO2 levels control the global average temperature.

Even less reason to assume natural causes of climate change do not matter.

The so called climate models, therefore, are nothing more than opinions, that ignore climate history.

There are enough personal opinions, er, I mean climate models, to assure that at least one will seem to have made decent temperature predictions in past decades (i.e., the Russian INMCM model, now in its fifth generation (INMCM5), being developed at the Institute of Numerical Mathematics of the Russian Academy of Sciences).

That does not mean future predictions from that Russian model will be accurate.

It’s easy to predict more global warming, after our planet has been warming (intermittently) since the 1690s.

That doesn’t mean our planet will ALWAYS be warming.

In fact, ice core studies suggest our planet is rarely as warm and comfortable as it has been for several decades.

The US stock market has been rising for almost 11 years.

That doesn’t mean stock prices will ALWAYS be rising.

Another example: the temperature pause of the 2000s. Let us assume that there is an unknown climate driver X, which has caused a temperature increase of 0.75 C, and that CO2 has caused a temperature increase of 0.25 C since 1750. Then, during the period from 2000 to 2018, the temperature effect of X decreases by 0.1 C because of the changing behaviour of factor X, and at the same time the temperature effect of CO2 increases by the same 0.1 C. According to the IPCC, it should have increased by about 0.3 C.

Conclusion. The pause does not mean that the climate sensitivity of CO2 is zero, because you do not know the temperature effects of factor X during the pause.
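The bookkeeping in this hypothetical can be written out explicitly. The numbers are the commenter's illustrative assumptions, not measurements, and X stands for an unspecified natural driver.

```python
# Illustrative contributions to warming since 1750, in C (assumed, from the comment)
since_1750 = {"X": 0.75, "CO2": 0.25}
# Assumed changes in each contribution over 2000-2018, in C
change_2000_2018 = {"X": -0.10, "CO2": +0.10}

total_since_1750 = sum(since_1750.values())
net_change = sum(change_2000_2018.values())

print(f"Warming since 1750: {total_since_1750:.2f} C")
print(f"Net change 2000-2018: {net_change:+.2f} C "
      f"(CO2 part alone: {change_2000_2018['CO2']:+.2f} C)")
```

A flat record over the period is compatible with a non-zero CO2 contribution as long as X moves the other way; the temperature series alone cannot separate the two.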

Imagine someone in 1890 trying to build out projections of CO2 emissions for the next 100 years… They would not have had any idea that diesel would replace steam, that cars would become a common commodity, that there would be 2 great wars, or aircraft, or that people would live so long, or that wealth would increase so dramatically. Oil was used primarily for lubricating machine parts and as lamp oil.

Now sit back and try to imagine the world in the next 100 years. The simple fact is, we don’t know what we don’t know, and any CO2 emission projections are just guesswork.

Now take your guesswork and feed it into a climate projection model that is based on a lot more guesses. You will get as output the exact expectation(s) written into the model by its creators – their best guesses based on their biases.

None of this smacks of science. It’s much closer to reading tea leaves.

Zeke Hausfather is a scammer.

I analyze Berkeley data here:

https://phzoe.wordpress.com/2019/12/30/what-global-warming/

https://phzoe.wordpress.com/2020/01/17/precipitable-water-as-temperature-proxy/

Interesting stuff Zoe.

Steven Mosher makes angry drive-by posts here and is also part of Berkeley Earth. I am sure he’d be enraged at your posts and call you names.

I look forward to being called names by tax funded scumbags.

An excellent seriously involved meticulous extremely detailed investigation and results performed by Dr. Ross McKitrick.

Well Done and Thank You!!

For example:

Just one of many investigation details.

Ross,

“Since the 1990s the IPCC constantly overstated emission paths and, even more so, CO2 concentrations by presenting a range of future scenarios, only the minimum of which was ever realistic.”

That doesn’t make sense. As you said earlier

“The emission forecasts are based on socioeconomic projections and are therefore external to the climate model.”

The IPCC presented a range precisely because they don’t know how much CO2 people will choose to emit, and climate models can’t tell them. Eventually at best just one forecast will prove correct. You think it will be at the lower end. So? They are still covering the range.

“the 1992 (Mount Pinatubo) event needs to be removed since it depressed observed temperatures but is not simulated in climate models on a forecast basis”

In fact, Hansen’s B and C scenarios had a major eruption in 1995, producing an effect very similar to Pinatubo. If volcanoes are to be removed from the results, they should be removed from the projection too.

“Likewise with El Nino events.”

El Nino is part of a cycle, and there is no reason why it should be expected to overstate trends. But if it is to be removed, then surely La Nina should be too.

“The Hansen 1988-B forecast trend does not significantly exceed that in the raw CW series but it does significantly exceed that in the adjusted CW (since the VF score rises to 116.944, which exceeds the 95% critical value of 41.53). “

That is a pretty good result, since CW should not have been adjusted for volcanoes (since H88B had them too).

The only important model they should be running is the current emissions projection (so 2-3% per year). No-one except climate scientists and activists actually believes that any other scenario is ever going to happen, and that is how the political reality is playing out. I don’t know how many COPs have to come and go before the climate science community gets the message.

So other projections and pipe dreams may be nice to look at occasionally but when it comes down to how accurate are the models the only projection that matters is the projection of current emissions.

Mainstream climate scientists all implicitly presume Fourier knew what he was talking about.

Fourier was a geothermal denier, and his short-sightedness is still wreaking havoc upon the intelligentsia.

As far as I know, I’m the first to uncover what is so darn obvious:

https://phzoe.wordpress.com/2019/12/25/why-is-venus-so-hot/

https://phzoe.wordpress.com/2019/12/24/hot-plate-heat-lamp-and-gases-in-between/

https://phzoe.wordpress.com/2019/12/06/measuring-geothermal-1/

https://phzoe.wordpress.com/2019/12/04/the-case-of-two-different-fluxes/

This research is a paradigm changer, and I’m hoping people more qualified than a financial analyst can get behind it.

Thank you for listening.

-Zoe

I’m wondering: do people here know that according to mainstream science, CO2 can’t directly heat the air?

It’s true!

The official radiation budget shows GHGs sending out all the radiation they receive. Therefore GHGs can’t transfer their vibrational energy into translational energy; otherwise there would be no or less vibrational energy to generate IR – thus destroying the radiation balance.

By conserving radiation flows, there is no heating of the air by GHGs.

Isn’t that funny?

I think you need to read up on CO2 ‘thermalization’. The reality is just the opposite of your conjecture.

I think you need to look at the radiation budget and the constraint that it places.

If you want to accept co2 thermalization you will have to reject the energy budget diagram of Kiehl & Trenberth.

It’s one or the other. Which do you choose?

Ross McKitrick = understated Canadian competence. I am very glad such people are alive today and here with us on this planet.

Since my earlier post appears to have been lost I’ll repeat it here.

“The main forecast interval of interest is from 1988 to 2017. It is shorter for the later IPCC reports since the start year advances. To make trend comparisons meaningful, for the purpose of the Hansen (1988-2017) and FAR (1990-2017) interval comparisons, the 1992 (Mount Pinatubo) event needs to be removed since it depressed observed temperatures but is not simulated in climate models on a forecast basis”

On the contrary, in Hansen B & C a major volcano was included in 1995 and 2015, so there is no need to remove the Pinatubo event.

So Ross McKitrick found that climate models were always right and we’re amidst climate danger tipping points.

The problem with ’70s climate models was volcanic events, and until today who knew that volcanic eruptions happen on geological time spans and are daily business as usual too?

CO₂ remains our first enemy. On Planet Earth with its ring of fire.

Well done, just keep up the good work.