Brief Note by Kip Hansen — 10 January 2020

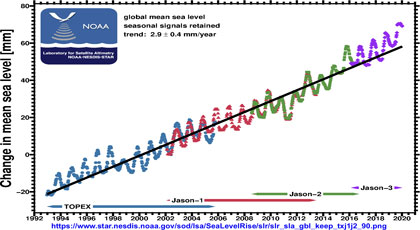

Those who follow the GMSL data from NOAA may have noticed an oddity in the data from December 2019. The oddity was seen in the .png file available at the bottom of the web page for NOAA’s Laboratory for Satellite Altimetry. As of 31 December 2019, the graphic looked like this:

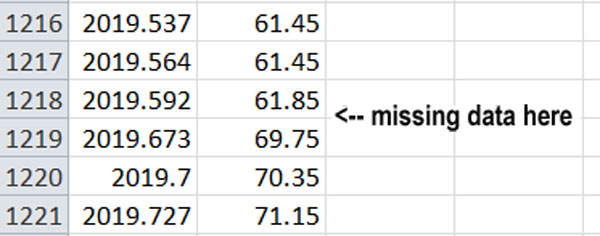

One can see that there is what appears to be missing data at the upper right. Checking the .csv data file on the same day reveals that there are in fact missing data points:

My email enquiry has resulted in a correction and an explanation:

Subject: Re: Data Break in STAR GMSL data

Date: Fri, 10 Jan 2020 15:48:56 -0500

From: Eric Leuliette – NOAA Federal

To: Kip Hansen

Dear Kip,

Thanks for bringing the gap in the global mean sea level data to my attention. For 2 cycles of Jason-3 data, our database was missing some data due to a script failure. I’ve fixed the database and rerun the mean sea level data. The replacement files are on our web site now.

Please let me know if you have any other questions or concerns.

Eric

____________________________________________________________

Eric W. Leuliette, PhD

Branch Chief

Laboratory for Satellite Altimetry

National Oceanic and Atmospheric Administration

NOAA Center for Weather and Climate Prediction (NCWCP)

xxxx (some contact data removed – kh )

______________________________________________________________

The contents of this message are mine personally and do not necessarily reflect any position of NOAA.

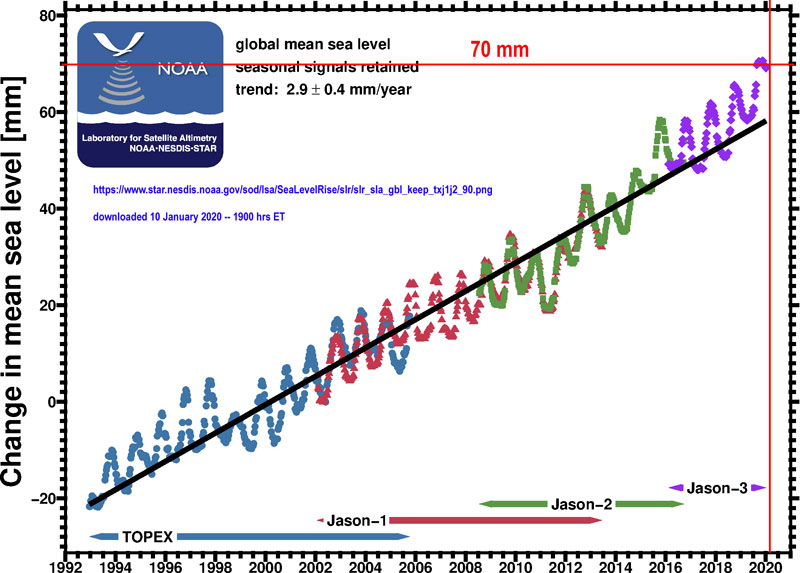

The updated chart now shows:

The missing data points have been filled in — but, unfortunately, there is a new problem!

The latest data points, from the middle of December, should be:

| Decimal year | GMSL (mm) |
|---|---|
| 2019.592 | 61.81 |
| 2019.619 | 66.11 |
| 2019.646 | 69.41 |
| 2019.673 | 69.71 |
| 2019.7 | 70.31 |
| 2019.727 | 71.11 |
| 2019.755 | 69.81 |
| 2019.782 | 71.81 |
| 2019.809 | 72.01 |
| 2019.836 | 69.71 |
| 2019.863 | 72.81 |
| 2019.89 | 70.81 |
| 2019.917 | 68.81 |
| 2019.945 | 70.21 |
| 2019.972 | 66.71 |
| 2019.999 | 65.61 |
| 2020.016 | 62.01 |

Yet the .png graphic file shows values for January 2020 (2020.016) at 69-70mm, not the 62.01mm given in the .csv data file.

I have sent another enquiry….

# # # # #

Author’s Comment:

Why does this matter? It doesn’t matter that much, except that these are the official NOAA SLR data and graphics — they are used in all sorts of science and journalism — and they simply ought to be correct — or, if we can’t guarantee that they are correct, they should at least agree with one another. If they do not, then there is something wrong, as Eric has stated, with the scripts that produce either the .csv data files or the graphics.

On the upside, Eric Leuliette at NOAA is responsive and helpful — recognizing, acknowledging and correcting the missing data.

Still, the current version of the graphic shows a steep rise with a tiny little hook at the top — when the data shows that it ought to have a big hook back down to 62mm — either the data or the graphic is wrong. I’ll let you know.

# # # # #

That means in 100 years, we’d expect an additional 30 centimeters or 2 1/2 feet. Even Florida will survive that handily!

30 centimeters or 2 1/2 feet

Ummm… math?

Maybe in Hobbit feet?

Mark ==> aren’t Hobbit feet big?

hahahaha!

good enough for the warmists….

30 cm is less than a foot! .98ft!

I think Florida and every other place on the Planet will be just fine!

Some sites in Florida and elsewhere along the Atlantic coast have sinking seashore land at rates approaching the average sea rise rate. The effect is the same.

donb ==> double trouble when land sinks and sea rises….the most usual case.

donb — Absolutely! Limestone deterioration in FL is the main issue for us! Massive subsidence in LA Gulf coast is the cause of their concern.

30 cm is not 2.5 feet. It’s ~11.8 inches. Even less of a problem.

No, it is dwarf feet, you know.

Max, I make that one foot.

I wouldn’t bank the survival of FL. Our leaders are idiots and put Marco Rubio in the senate. FL. man is more than just a meme.

Convert 30 Centimeters to Inches

How long is 30 centimeters

30 Centimeters =11.811024 Inches (rounded to 8 digits)

Max et al ==> 3mm times 100 years is 300mm which is in fact 11.81 inches — or about 1 foot.

This, not oddly at all, is about what we have had for the last 100 years.
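The arithmetic in this sub-thread can be checked in a few lines. This is a minimal sketch, assuming a constant rate of 3 mm/year with no acceleration; the function and constant names are illustrative only.

```python
MM_PER_INCH = 25.4        # exact, by definition of the inch
INCHES_PER_FOOT = 12


def total_rise_mm(rate_mm_per_year: float, years: float) -> float:
    """Total rise for a constant (non-accelerating) rate."""
    return rate_mm_per_year * years


def mm_to_inches(mm: float) -> float:
    return mm / MM_PER_INCH


def mm_to_feet(mm: float) -> float:
    return mm_to_inches(mm) / INCHES_PER_FOOT


rise_mm = total_rise_mm(3.0, 100)   # 300 mm, i.e. 30 cm
rise_in = mm_to_inches(rise_mm)     # about 11.81 inches
rise_ft = mm_to_feet(rise_mm)       # about 0.98 feet

print(f"{rise_mm:.0f} mm = {rise_in:.2f} in = {rise_ft:.2f} ft")
```

For comparison, 2 1/2 feet is 762 mm, which is what 30 inches (not 30 centimeters) comes to.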

“That means in 100 years, we’d expect an additional 30 centimeters or 2 1/2 feet. “

AOC? Is that you?

Obviously, your brain was thinking inches not centimeters. A forgivable lapse. We’ve all been there.

Keep after them, Kip. I personally feel an alarm whenever there is missing, adjusted, or corrected data coming from any government agency. Thanks, and keep us posted, please.

good work Kip. Unfortunately there are so many opportunities for fiddle factors between measured time delay and resulting “mean sea level” that I no longer give any credibility to satellite MSL.

There is also a suspicious gap and jump around 2015.6. What caused the world’s entire oceans to jump a couple of mm higher in the space of one month? Physically improbable; more likely data problems or someone with a well-meaning, planet-saving thumb on the scales.

Ron and greg ==> I have my own serious doubts about the metric itself, which is reported, ridiculously and dubiously, to hundredths of a millimeter. That is silly at best. These people are reporting what their complicated computational models spit out, without realizing that they are fooling themselves.

That accuracy versus precision confusion thing again. It turns up everywhere in consensus climate science.

One would think they’ve never been exposed to it.

They NEVER really understood the difference. No amount of beating them about the head with it will make any difference. Just because an instrument gives a reading in 100ths of a mm does NOT make that the accuracy!!

Been going round and round on Twitter. Where did the misconception arise that the Central Limit Theorem determines the precision of the calculated mean? It blows my mind. There is no, and I mean none, awareness of trending and how it can filter data.

The lack of statistical knowledge is staggering, let alone the lack of metrology training in a field where measurements are fundamental.

greg

January 11, 2020 at 11:43 am

Yes, good catch Greg. It looks like everything more recent than that break should be brought down by your 2mm…but they won’t as it would ruin the alarmist message.

Maybe Kip could query his mate Eric on this too…

“I personally feel an alarm whenever there is missing, adjusted, or corrected data coming from any government agency.”

How do you ever get any sleep Ron?

NOAA’s magic data selecting tool.

A script that has run well before and then fails for a time? I’m not buying it. Somebody is mucking in the scripts. But why change the script if “the science is settled?”

Liars lie and feel good while lying.

I have had perfectly good processes run fine for very long times – only to crap out unexpectedly.

Almost always due to something else going on in the system. An operating system update, a data source having issues, etc.

I recently had a program crap out after years of running because somebody fat fingered a different character into the data set, and the program didn’t know how to handle that character.

Mark ==> a typical day in the life…..

Newt and WO ==> I have written a lot of scripts, in many languages, over the years. Doing such things as sucking in remote data streams and turning them into pre-formatted HTML pages ready for the web.

There are myriad ways for this process to go wrong — and one always hopes that one has been smart enough to plan for all the possibilities for things to go wrong — but, though I have had enough success to be considered a wizard in the field, I have never had 100% success — there is always a time when some danged idiot at the other end of the process does something that breaks the script.

The longer a script has been successful, the greater the chance that some as yet unexpected glitch will mess things up.

I’m happy to let Eric at NOAA get it sorted out.

It’s been noted many times before that you can’t make something 100% idiot proof…cause they’re always coming out with better idiots… 🙂

rip

Does show that their own QC processes are inadequate.

Adam Gallon ==> Yeah, but it happened over the holidays. No one in the office….etc.

This shows the value of public peer review — called ‘side-checking’ in some fields.

Eric at NOAA reveals that there is a script that performs the magic, and these are always prone to glitches and the unexpected. I think they would have caught it sooner or later.

I am a little surprised that the correction resulted in yet another error…..

The graph points are monthly data so the January data won’t be plotted yet.

Phil ==> Not monthly data points, but about ten-day data points (it has to do with the satellite’s path). See the .csv file for the dates of each point. There were originally two data points missing in mid-December. After that was fixed, there were mismatches between the data and the graphic.
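A gap like the one described can be spotted mechanically by looking at the spacing between consecutive time stamps. The sketch below is only an illustration: the nominal step of 0.027 years (about ten days) is read off the spacing in the posted data, the 1.5x tolerance is an arbitrary choice, and the points removed from the sample list are hypothetical, since the post does not say exactly which two cycles were missing.

```python
def find_gaps(times, nominal_step, tolerance=1.5):
    """Return (earlier, later) pairs whose spacing exceeds tolerance * nominal_step."""
    gaps = []
    for earlier, later in zip(times, times[1:]):
        if later - earlier > tolerance * nominal_step:
            gaps.append((earlier, later))
    return gaps


NOMINAL_STEP = 0.027  # assumed: roughly ten days expressed as a fraction of a year

# Decimal-year stamps from the corrected file, all points present.
complete = [2019.863, 2019.890, 2019.917, 2019.945, 2019.972, 2019.999]

# Same series with two mid-December points removed (hypothetical illustration).
with_gap = [2019.863, 2019.890, 2019.917, 2019.999]

print(find_gaps(complete, NOMINAL_STEP))
print(find_gaps(with_gap, NOMINAL_STEP))
```

The first call reports no gaps; the second flags the jump from 2019.917 to 2019.999.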

You would think that after discovering a bug, and engineering a fix, they would take the time to validate that the new data was actually correct before posting it.

You would think, but in government science, it’s a lot of “close enough” and “not my job” or “but then why the blip?”

You know the old song?

99 bugs in the script on the wall, 99 bugs in the script,

Patch one down and pass it around,

114 bugs in the script on the wall …

My son is a computer science major and he told me that every time you fix a bug, you inadvertently introduce another. I am not a good programmer, just number-crunching, but I have to agree. Every fix of these scripts or of a climate model has to be verified independently.

Loren Wilson ==> With all due respect to your son, it’s not quite that bad. It just seems like it sometimes.

In this case, we are just discussing what I always called an “auto-magic” script: it just automates some repetitive task. Here, it seems to suck in the GMSL data, build the .csv files, and create the .png image from the data.

Ja. Ja. The climate is changing.

If nobody had noticed it would have been claimed to be ACCELERATION!!!

Thanks, props to you,

w.

…and get them to explain why no tide gauge shows the acceleration they are claiming

Tide Gauge data was “adjusted” back in 2017.

https://notrickszone.com/2017/12/04/whistleblower-scientists-psmsl-data-adjusters-are-manufacturing-sea-level-rise-where-none-exists/

A C ==> thanks for the link to Parker and Ollier.

Latitude ==> Nerem et al’s “acceleration” is a figment of statistical imagination.

A great leap. A veritable hockey stick. Catastrophic.

w. ==> Thanks, I have been trying to sort this out so I can finish writing an essay about SLR, Nerem et al, etc. but have been stymied by the data and graphics being wrong….Eric at NOAA has been helpful so far.

When the data doesn’t fit the narrative, torture it until it does.

Why does it have such a regular sinusoidal variation? Is that due to Northern Hemisphere winter weather and deposition of snow and ice on land? How does the satellite correct for the uneven distribution of water level due to tidal influences? What is the margin of error for the scale on the y axis? Has that changed with the 4 different instruments used? Just asking for a friend.

R Moore ==> That is the seasonal signal — we usually see the version with the seasonal signal removed.

That graphic is found here.

…or, if we can’t guarantee that they are correct, they should at least agree with one another.

NO. My experience is that the places where “things don’t agree” are where the most advancement is to be found. Searching for “data consensus” can lead to adjusting away the MWP and 1930s CONUS heat spike and “hiding the decline.”

Rob ==> It is only the data file and the graphic of that very same data that I insist must agree. They have a script that brings in the data from somewhere, creates a .csv txt file that contains the data, and then processes the data file into a graphic. The graphic must show the data exactly as given in the data file from which it has been created.
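The kind of agreement check being asked for here could be as simple as comparing the rows in the data file with the series handed to the plotting step. This is a hedged sketch, not a description of NOAA's actual script: the function name, the tolerance, and the "plotted" value of 69.5 mm (read by eye from the graphic) are all assumptions made for illustration.

```python
def find_mismatches(csv_rows, plotted_rows, tolerance_mm=0.005):
    """Return (time, csv_value, plotted_value) for every point that disagrees.

    A point missing from the plotted series is reported with plotted_value None.
    """
    plotted = dict(plotted_rows)
    mismatches = []
    for t, value in csv_rows:
        shown = plotted.get(t)
        if shown is None or abs(shown - value) > tolerance_mm:
            mismatches.append((t, value, shown))
    return mismatches


# Last three rows of the .csv file as quoted in the post.
csv_rows = [(2019.972, 66.71), (2019.999, 65.61), (2020.016, 62.01)]

# Hypothetical plotted series; 69.5 is an eyeball estimate from the .png.
plotted_rows = [(2019.972, 66.71), (2019.999, 65.61), (2020.016, 69.5)]

print(find_mismatches(csv_rows, plotted_rows))
```

Running a check like this before publishing would flag the final point, where the file says 62.01 mm and the graphic shows something near 69 to 70 mm.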

Well the data file shows numerical data every 10 days, except for the last data point you’re querying which is indicated as being on the 6th Jan. The description of the graph indicated that the plot is monthly so I wouldn’t expect the January data to be plotted yet

Ten days being the orbit repeat time for the satellites.
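The decimal-year stamps in the file can be turned into calendar dates to check readings like "the 6th Jan". A minimal sketch, assuming the stamp is a plain linear fraction of the calendar year (the file's actual convention is not stated here, so treat that as an assumption):

```python
from datetime import datetime


def decimal_year_to_datetime(decimal_year: float) -> datetime:
    """Convert e.g. 2020.016 to a datetime, treating the fraction as elapsed share of the year."""
    year = int(decimal_year)
    start = datetime(year, 1, 1)
    end = datetime(year + 1, 1, 1)
    fraction = decimal_year - year
    return start + (end - start) * fraction


for stamp in (2019.999, 2020.016, 2020.027):
    print(stamp, decimal_year_to_datetime(stamp).date())
```

Under that assumption 2020.016 falls on 6 January 2020, and 2020.027 falls on 10 January 2020.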

Phil ==> The graphic is not monthly; every data point (about ten days apart) is shown.

The Technical data for the graph says that the Temporal Resolution is ‘monthly’.

In any case the data point shown in the data file is only for 6 days, i.e. less than the orbit repeat time, so doesn’t constitute a complete data point. Probably get an accurate point when they update it (this week?)

Phil ==> I’m afraid I can’t reconcile the differences in their technical description. They plot every data point in the .csv file — which are at ten day intervals — which I have confirmed by plotting the data myself.

As I mention, I have queried once more and await a reply.

Data has been updated but not yet reached a complete orbit repeat time:

2020.0224, 63.41000

Needs to reach 2020.027

Phil ==> I see that … a bit odd that a figure shows up for an incomplete orbit cycle. Nonetheless, the data points in the graphic, even for completed cycles, still do not match the data file.

> …a script that brings in the data from somewhere, creates a .csv txt file that contains the data, and then processes the data file into a graphic.

I’d bet there are script exceptions handlers. 000mm or 999mm don’t get graphed. I get that and agree as long as noted. Unfortunately this invites the slippery slope. 50mm or 80mm are likely exceptions or are they? We have to be very careful with exceptions.

Good job bringing light to this subject. Thanks.

Rob ==> That’s the rub with writing scripts: what to check for, what to flag as an exception, how to handle the unexpected…. I have always tried to include an ‘alarm’ function that sends me an alert on exception. It’s a pain, but it prevents this type of public embarrassment.
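An 'alarm' of the kind described might look something like the sketch below. Everything in it is an assumption made for illustration: the sentinel values (0 and 999) come from the guess in the comment above, the 15 mm step threshold is arbitrary, and `alert` stands in for whatever notification mechanism (email, pager, log) a real script would use. The point is that suspicious values are reported to a human rather than silently dropped.

```python
SENTINELS = {0.0, 999.0}  # assumed placeholder values, not taken from any NOAA format


def check_rows(rows, alert, max_step_mm=15.0):
    """Scan (time, value) rows and call alert(problems) if anything looks wrong."""
    problems = []
    previous = None
    for t, value in rows:
        if value in SENTINELS:
            problems.append(f"{t}: sentinel value {value}")
            continue  # do not use a placeholder as the reference for the next step
        if previous is not None and abs(value - previous) > max_step_mm:
            problems.append(f"{t}: jump of {value - previous:+.2f} mm")
        previous = value
    if problems:
        alert(problems)
    return problems


# Hypothetical input: one real-looking row replaced by a 999 placeholder.
rows = [(2019.972, 66.71), (2019.999, 999.0), (2020.016, 62.01)]

received = []  # stand-in for an email or pager notification
problems = check_rows(rows, alert=received.append)
print(problems)
```

Choosing the thresholds is the hard part, as the exchange above notes: set them too tight and real signal gets flagged, too loose and a bad cycle slips through.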

Absolutely. And don’t forget the “exception” when the latest datum agrees to four digits. Possible, yes. Likely, no.

We engineers should never have taught bean counters how to use VisiCalc.

Meanwhile Colorado University’s Sea Level Research Group

http://sealevel.colorado.edu/

hasn’t updated their page since February 12th 2018 when they claimed an acceleration in sea level rise of

0.084 ± 0.025 mm/yr²

Can we expect Dr. Nerem’s group to come up with an increase in that figure over these past two years? As you might recall, they extrapolated that acceleration claim out 82 years and came up with 0.65 meters of sea level rise by 2100.

We live in interesting times. (-:
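For readers who want to see how sensitive such an extrapolation is, a constant-acceleration projection is just rise = rate x t + 1/2 x acceleration x t^2. The sketch below uses the 0.084 mm/yr² figure quoted above; the 2.9 mm/yr starting rate and the two time spans are assumptions chosen for illustration, since the comment does not state the baseline year or starting rate the group used.

```python
def extrapolate_rise_mm(rate_mm_per_yr: float, accel_mm_per_yr2: float, years: float) -> float:
    """Quadratic (constant-acceleration) extrapolation of sea level rise in mm."""
    return rate_mm_per_yr * years + 0.5 * accel_mm_per_yr2 * years ** 2


ASSUMED_RATE = 2.9      # mm/yr, an assumed starting rate
ACCELERATION = 0.084    # mm/yr^2, the figure quoted in the comment

for span in (82, 95):   # illustrative spans in years
    rise = extrapolate_rise_mm(ASSUMED_RATE, ACCELERATION, span)
    print(f"{span} years: {rise:.1f} mm ({rise / 1000:.2f} m)")
```

With these inputs the 82-year span gives about 0.52 m and the 95-year span about 0.65 m, so the headline number depends heavily on the baseline chosen, and the acceleration term contributes more than half of the total in the longer case.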

If you compare it to the tide gauges….you can see how they cherry picked to show acceleration

Besides that the numbers are certainly BS, they should have been reported as 0.08 ± 0.03 mm/yr². That was one of the very first things I’ve learned in my physics course – always quote the uncertainty to one significant digit, if it’s not 1. And always round the uncertainty up.
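The rounding convention described in this comment can be written down explicitly. This is a sketch of that one convention only (one significant digit for the uncertainty, two if the leading digit is 1, uncertainty always rounded up); other fields use different rules, and the function name is made up for illustration. Values are passed as strings so that `Decimal` sees exactly the digits written.

```python
from decimal import Decimal, ROUND_CEILING, ROUND_HALF_EVEN


def round_with_uncertainty(value: str, uncertainty: str):
    """Round the uncertainty up to one significant digit and the value to match."""
    v = Decimal(value)
    u = Decimal(uncertainty)
    if u <= 0:
        raise ValueError("uncertainty must be positive")
    exponent = u.adjusted()              # power of ten of the leading digit
    if u.as_tuple().digits[0] == 1:      # leading digit 1: keep one extra digit
        exponent -= 1
    quantum = Decimal(1).scaleb(exponent)
    u_rounded = u.quantize(quantum, rounding=ROUND_CEILING)
    v_rounded = v.quantize(quantum, rounding=ROUND_HALF_EVEN)
    return v_rounded, u_rounded


value, uncertainty = round_with_uncertainty("0.084", "0.025")
print(f"{value} ± {uncertainty} mm/yr²")
```

Applied to the quoted figure, this yields 0.08 ± 0.03 mm/yr², matching the comment.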

Bingo!

This shows that the SLR acceleration is man-made.

It is a bit more concerning seeing all the corrections applied, including the ocean tide height model, wave action and atmospheric moisture, for something that does not work near land and cannot be calibrated to tide gauges. They also do not describe how they splice together data from the different satellites to form the trend since 1992 (especially with the data drift later in the prior satellite record). Given the 60 year cycle in tide gauge data, it’s hard to put much faith in the short altimetry records.

Tide Guy ==> There are manuals describing all of these features of satellite altimetry. I gave links in my SLR series. But, you are correct — there are lots and lots of guess-timates involved — and I am working on an essay on the very issue you raise — if satellites incorrectly “measure” Sea Surface Height at a location at which the Sea Surface Height is known by direct tide gauge measurement — then this gives us an idea of the degree of “original measurement error”… it is quite large, btw.

From the NOAA graphs above they claim 2.9 +/-0.4mm/year

Four years ago Nils-Axel Mörner said this:

– In 1982, NASA showed 1 mm/year. Now they claim 3.3 mm/year. They have more than tripled sea level rise by simply altering the data.

I suppose it depends on the measurement methods, and in the terms of coastal based measurements it is problematic with the varying land (sinking and rising).

I think not directly altering the data but doing the ‘right’ calibration as everything in this kind of science.

The old data is still available at the defunct John Daly site:

http://www.john-daly.com/ges/images/niv_moy.gif

Mörner complained about the recalibration that was done about 2003 to show the 3 mm/year rise and replace the 0.8 mm.

It remained about the same since, seen all kind of recalibrations with all those satellites. Newer satellites should be better and should not need any recalibration based on the older ones but apparently this is how it works :(?

I remember envisat showed no sea level rise until post mortem it suddenly did.

NASA has a superb Independent Verification and Validation program. e.g., see:

Independent Verification and Validation Technical Framework

https://www.nasa.gov/sites/default/files/atoms/files/ivv_09-1_-_ver_p.pdf

This established and worked with validating industry Computational Fluid Dynamics models against data with the NPARC alliance.

https://www.grc.nasa.gov/WWW/wind/valid/

When will NASA have the leadership & insight to have the group independently analyze, verify, and validate NASA’s OWN climate data?

Plus-minus 4cm.

Arachanski ==> Don’t get me started on their presentation of “errorless SLR”.

Everyone seems to be using the ‘new math.’

Only those who do real work all the time can know how difficult it is never to do any mistake, Mr Hansen.

Rgds

J.-P. D.

“Only those who do real work all the time can know how difficult it is never to do any mistake, Mr Hansen.”

That leaves climate scientists out.

Bindidon ==> Yes, I agree 100%. See my reply above on this topic here.

Bindidon: Yes, people make mistakes. REAL scientists admit those mistakes, thank the person who pointed them out and get back to work. Unfortunately, climate “science” is not really science in so many cases. It’s good that Eric Leuliette corrected the data, and it will be interesting to see what comes next.

That being said, accuracy is vital in science and one would expect review of the data by more than one person before it’s released. Perhaps future releases will receive that kind of scrutiny. Science could use a boost right now.

President Trump should simply require all publicly funded climate research to be open sourced. Put everything on github – raw data, scripts, results…

Sheri

What a poor, arrogant comment! But such comments are nothing unusual here.

You do, above all, not seem to understand that if either

– the Globe was cooling since decades

or

– fossil fuel would have stopped to play a role decades ago, because it would have been successfully replaced by new energies

this web site would not exist, let alone would people like you pretend that climate science is no science compared with the rest.

Feel free to continue discrediting the work of all these scientists. No problem for me!

I hope for you that you REALLY do or did work of similar complexity compared with theirs.

My personal experience in 45 years of partly very hard work is that those I know they did such complex things never would write such poor comments. Never and never.

Rgds

J.-P. D.

Your comment does not address Sheri’s in any way.

Can you tell us a legitimate scientific reason why, for example, Phil Jones would refuse data to another researcher? Or Michael Mann, or Lonnie Thompson, etc ad nauseam.

“My personal experience in 45 years of partly very hard work is that those I know they did such complex things never would write such poor comments. Never and never.”

You didn’t read the CRU emails, did you? Or any public comment by the likes of Mann calling anyone who disagrees with his scientific view an industry shill.

I think your blinders are doing an excellent job.

That coming from James “Manhattan under water” Hansen is a massive joke.

At least most working people get it right most of the time and quite often pay with their jobs if they don’t.

Of course that doesn’t apply to Climate Scientists does it.

Bindidon ==> there is nothing personal about my noting the missing data in the NOAA graphic and data. NOAA was happy to have the fault pointed out.

Apparently the .csv data file and the graphic are created by script and “auto-magically” appear on the web.

As with many web sites, there is seldom enough time to have a human check the pages in real time, so errors appear and go uncorrected until some third party points them out.

When this has happened to me, I have always appreciated the help!

Kip Hansen

100 % agreed!

My comment was not personal against you, but rather against the attitude in general.

Please have a look at the harsh replies above, and you will understand what I mean.

Rgds

J.-P. D.

Bindidon ==> The handling of comments on blogs that deal with controversial issues — such as this — is always tricky. It is unfortunately far too easy to be misunderstood or to have one’s true attitude leak through and set off an emotional response from another reader.

Readers here have a very wide range of background education and communication skills — and for many, English is not their first language.

A great deal of patience is required. These little upsets can usually be overcome with a soft reply.

“Swedes Vote Climate Policy Biggest Waste of Tax Payer Money in 2019”

https://www.breitbart.com/europe/2020/01/11/swedes-vote-climate-policy-biggest-waste-tax-payer-money-2019/?fbclid=IwAR1MYsjQqrPS_EpPZz4zEc-yTY-LYUdeYKoFEXOeBvzbsrrN5K2_yqW6uwQ

“In second place in the poll was a project from the artists’ commission that saw over a million krona donated to a project focused on art for earthworms and fungi.”

ROTFLMAO

That is about US$100,000.

Spending such on art about fungi would not be hard.

Them things are interesting. Do an image search with ‘fungi’ to see some of the real items.

‘Art of fungi’ is a nice diversion, also.

[We went to a presentation about the topic about 6 weeks ago.]

Can anyone tell me how they MODEL glacial melt? It seems to me an impossible task.

Easy stuff gets done in a week or so.

“Impossible” tasks take a little longer.

A year, at least.

Degree day models

More nonsense I see! thanks

Out of curiosity, what are the error bars on the individual measurements?

Commenting at ATTP on Keeling curve when noticed a possible similarity to SLR

“Meanwhile, little old Keeling Curve just going to be keeping on keeping on being the familiar Keeling Curve… for a long time.

If we were to begin cutting emissions this year sufficient to halve emissions by 2030, the change of slope or progression of the Keeling would be almost imperceptible. We would be emitting at about the same level as the mid-1970’s, and with a similar rises in the Keeling Curve as in that era.”

–

One of my pet bugbears.

The Keeling curve is scientifically wrong.

Something does not add up.

–

Reasons?

One expects a seasonal variation.

The see saw effect. Granted.

One expects a general rise in trend. Granted.

But climatic changes, economic changes, temperature changes, (volcanoes even) mean that the graph should have real swings up and down in the yearly CO2 levels.

There is No natural variability here.

None.

Natural variability is as dead as the fabled parrot.

Why is the question one should be asking.

–

Interestingly the GMSL data from NOAA does show a similar sawtooth pattern and uptick.

Cannot think of a link between CO2 and sea level rise though there could be more water to expand in the Southern Hemisphere in summer so a seasonal cause to the spikes.

Sea level rise at least shows some natural variation.

Due to years with heavy landlocked rainfall.

angech

“But climatic changes, economic changes, temperature changes, (volcanoes even) mean that the graph should have real swings up and down in the yearly CO2 levels.”

Sorry, but… it is as it is:

https://www.youtube.com/watch?v=k7jvP7BqVi4&feature=player_embedded

https://www.youtube.com/watch?v=j1ehcjjDPy8

https://www.youtube.com/watch?v=6-bhzGvB8Lo

Please keep in mind that for example volcanoes account for no more than 1 % (yeah: one percent) of Mankind’s emissions.

“The Keeling curve is scientifically wrong.”

You are guessing here. It would be better to prove it, angech.

Rgds

J.-P. D.

You are guessing here. It would be better to prove it, angech.

Perfection is usually a human attribute, not random.

It would be nice to see figures from somewhere else to compare continually

Yeah – I’ve wondered that. Assumed it was due to them filtering the raw data to ‘baseline’ clean conditions or something like that. But the other possibility is that variation in human emissions is just completely swamped by the natural carbon cycle. Or that curve is just showing Henry’s Law effect on CO2 in Oceans nearby. In theory, if it’s sampling high altitude air then it should be well mixed, but I’ve spent a few years doing that and in my experience you simply don’t get that amount of homogeneity. How fossil fuels can be the *only* explanation of modern temperature is just beyond me…

Another of the many places where confirmation bias led to accepting errors in favor of the narrative.

Kip. There are a lot of out-of-date graphics in sea ice extents and ENSO, too. In the past, this has signaled some fudge-making as in the addition of isostatic adjustments for glacial rebound that added a small amount to sea level. Since this is a volume of ocean basin change the reported sea level is somewhat above actual and into the atmosphere. BTW, the odd drop in SL in the middle part of your graphic is where they made the adjustment after many weeks of not reporting sea level. Don’t forget, they also declared the Jason/TOPEX sea surface T and Ocean Heat Content measurements to be too cool and dropped using them! Color me sceptical.

Gar ==> there are a lot of reasons to be skeptical…..