From the “we’re gonna need a bigger computer” department.

Climate model uncertainties ripe to be squeezed

The latest climate models and observations offer unprecedented opportunities to reduce the remaining uncertainties in future climate change, according to a new study.

Although the human impact of recent climate change is now clear, future climate change depends on how much additional greenhouse gas is emitted by humanity and also how sensitive the Earth System is to those emissions.

Reducing uncertainty in the sensitivity of the climate to carbon dioxide emissions is necessary to work out how much needs to be done to reduce the risk of dangerous climate change, and to meet international climate targets.

The study, which emerged from an intense workshop at the Aspen Global Change Institute in August 2017, explains how new evaluation tools will enable a more complete comparison of models to ground-based and satellite measurements.

Produced by a team of 29 international authors, the study is published in Nature Climate Change.

Lead author Veronika Eyring, of DLR in Germany, said:

“We decided to convene a workshop at the AGCI to discuss how we can make the most of these new opportunties [sic] to take climate model evaluation to the next level”.

The agenda laid out includes plans to make the increasing number of global climate models being developed worldwide more than the sum of their parts.

One promising approach involves using all the models together to find relationships between the climate variations being observed now and future climate change.
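The approach described above is usually called an emergent constraint. As a rough illustration only, and not the method of any specific paper, it can be sketched as a straight-line fit across the model ensemble that is then evaluated at the observed value. The function name, the five model values, and the observed value below are all made up for the example.

```python
def emergent_constraint(x_models, y_models, x_obs):
    """Fit y = slope * x + intercept across models, then evaluate at x_obs.

    x_models: a present-day, observable quantity simulated by each model
    y_models: the future quantity projected by the same models
    x_obs:    the real-world observation of the present-day quantity
    """
    n = len(x_models)
    mean_x = sum(x_models) / n
    mean_y = sum(y_models) / n
    # Ordinary least-squares slope and intercept.
    sxx = sum((x - mean_x) ** 2 for x in x_models)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(x_models, y_models))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope * x_obs + intercept, slope, intercept


# Hypothetical ensemble of five models (values invented for illustration).
x_models = [1.0, 2.0, 3.0, 4.0, 5.0]
y_models = [2.1, 3.9, 6.2, 7.8, 10.0]
x_obs = 2.5  # hypothetical observation

constrained, slope, intercept = emergent_constraint(x_models, y_models, x_obs)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, constrained={constrained:.3f}")
```

The sketch only narrows the projection if the cross-model relationship is tight and physically motivated; a fit across models says nothing by itself about whether the models share a common error.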

“When considered together, the latest models and observations can significantly reduce uncertainties in key aspects of future climate change”, said workshop co-organiser Professor Peter Cox of the University of Exeter in the UK.

The new paper is motivated by a need to rapidly increase the speed of progress in dealing with climate change. It is now clear that humanity needs to reduce emissions of carbon dioxide very rapidly to avoid crashing through the global warming limits of 1.5°C and 2°C set out in the Paris agreement.

However, adapting to the climate changes that we will experience requires much more detailed information at the regional scale.

“The pieces are now in place for us to make progress on that challenging scientific problem”, explained Veronika Eyring.

From the University of Exeter via press release

The paper: https://www.nature.com/articles/s41558-018-0355-y

Taking climate model evaluation to the next level

Abstract

Earth system models are complex and represent a large number of processes, resulting in a persistent spread across climate projections for a given future scenario. Owing to different model performances against observations and the lack of independence among models, there is now evidence that giving equal weight to each available model projection is suboptimal. This Perspective discusses newly developed tools that facilitate a more rapid and comprehensive evaluation of model simulations with observations, process-based emergent constraints that are a promising way to focus evaluation on the observations most relevant to climate projections, and advanced methods for model weighting. These approaches are needed to distil [sic] the most credible information on regional climate changes, impacts, and risks for stakeholders and policy-makers.

Fig. 1: Annual mean SST error from the CMIP5 multi-model ensemble.

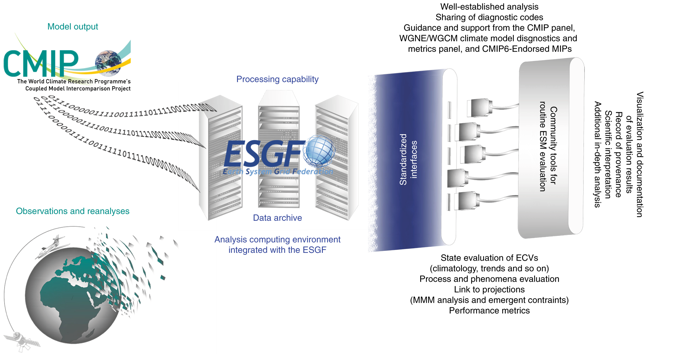

Fig. 2: Schematic diagram of the workflow for CMIP Evaluation Tools running alongside the ESGF.

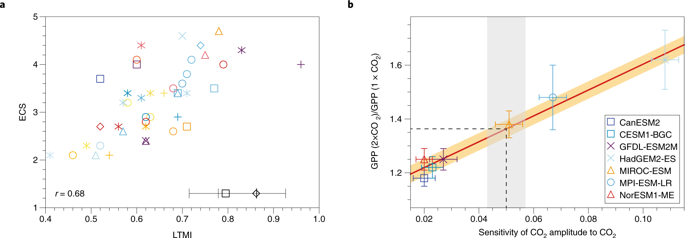

Fig. 3: Examples of newly developed physical and biogeochemical emergent constraints since the AR5.

Fig. 4: Model skill and independence weights for CMIP5 models evaluated over the contiguous United States/Canada domain.

Aspen in August. Why not Houston, I wonder.

Rhetorical question.

No doubt various distillates were consumed during the conference, perhaps leading to the spelling error.

What about edibles and inhalables?

Consumption of those too could help explain an orthographical error by such eminent savants.

Savants? I thought they were Ser-vants of the great church of AGW.

I guess we all know what they are really squeezing out.

Could we maybe get back to observation and experimental science and forget about all these computer games?

Maybe?

Don’t say: “we have a problem Houston.”

Really?

Oh, so it’s not actually that clear what the human impact is. Funny, I thought you said it was clear.

The whole exercise is only superficially about ‘science’ but rather is 100% about ‘science communication’, i.e. using sciency language that sounds sciency to the average Joe or Joanna as a marketing exercise.

These filth would market Thalidomide if their careers would benefit.

Thalidomide was originally marketed in Europe as a sleeping pill. But it is not useless as a medicine. From Wikipedia:

“Thalidomide is used as a first-line treatment in multiple myeloma in combination with dexamethasone or with melphalan and prednisone, to treat acute episodes of erythema nodosum leprosum, and for maintenance therapy. Thalidomide is used off-label in several ways.”

This is all about pretending to be honing their skills and computing ability, duping the public into funding them even more while not accomplishing anything real, except using a lot of energy and money.

Remember, they will never be done because they need job security and thus will always need more funding.

well, it’s clear the human impact is somewhere between near-zero and very large.

After 30 years and tens of billions of dollars, I’m glad we’ve narrowed the human pinkie to footprint down.

Same as ECS range after 40 years: 1.5 to 4.5 degrees C in 1979 and 2019.

My bet for ECS is approx. 0 to 1C/doubling* – that is, NOT a problem.

Regards, Allan

Post script: 🙂

* The asterisk is because CO2 trends LAG temperature trends at all measured time scales, and I am still having difficulty understanding how the future can cause the past.

Hmm… maybe it’s Relativity – if the CO2 molecules vibrate really fast, like the speed of light or even faster, can they time-travel? Can I get a grant to study this? Anyone?

With regard to Allan’s comment, maybe the CO2 is inside the Tardis?

@Allan MACRAE January 7, 2019 at 5:27 pm

I agree with your upper limit, though based on actual empirical evidence the upper limit is most likely <0.5°C/2xCO2, but I firmly believe ECS could still be negative once all feedbacks are allowed to feedback.

+1!

And, the first one of those actually means ‘the impact of climate change on humans is now clear’. They used the wrong preposition.

Just as SOON as we figure out the actual climate sensitivity of CO2, we’ll KNOW that climate models are still pointless toys, systematically taking minuscule and major errors alike, and multiplying them billions and trillions of times, then run hundreds of different times, producing evidence-free output (not climate data), all of which will be objectively wrong, but which nevertheless will be spun by CAGW alarmists into the absolute and objective scientific truth, just as Gavin Schmidt hoped when he said (something like) “hopefully, reality will be somewhere in that spread.”

Jesu Christo, please don’t allow the future of Western Civilization to be decided by such stupidity.

But more models, used in the way you describe, will decrease uncertainty. (Do I need a \sarc?)

too much climate change in Houston during summer for almost anyone to bear

Two or three years ago I found that the Global temperature anomaly is strongly correlated (R^2 = 0.86) to the delayed strength of the Earth’s magnetic dipole

http://www.vukcevic.co.uk/CT4-GMF.htm

Ever since, I have tried to come up with a plausible mechanism to explain the above, but have failed to define a scientifically credible hypothesis. Therefore, since I have no reason to think that NOAA’s geomagnetic data files are ‘fiddled’, and nature doesn’t tolerate coincidences, I am (for the time being) of the view that the global temperature anomaly is a ‘work of art’ rather than a product of nature.

Devising models to match something that could be just an illusion, a mirage, a sort of Fata Morgana, is a total waste of time.

Is there any way to predict the “…strength of the Earth’s magnetic dipole…”?

Considering that changes in the strength are usually (but not always) at a slow rate, there are reasonable estimates to the nearest quintile.

Bloody hot in Houston in August.

It’s all relative. After living around the Houston area for several decades, then moving to Massachusetts and getting to live in 95/95 conditions for weeks at a time with no AC, I’ve come to the conclusion that Hou ain’t that bad. We’re rarely at 95% at midday, more like 60% and that’s sticky but not unbearable.

I’ve also survived the “dry heat” of Arizona. FLASH: if it’s above your skin temperature, it’s hot. Quibbling about “feels like” is wasting time, it’s just HOT!

It is a dry heat is what they tell the Turkey at Thanksgiving before they put it in the oven.

Massachusetts at 95°F and 95% Humidity, for weeks?

Nonsense.

Not even Atlanta, Mobile or Jacksonville have weeks of 95°F and 95% Humidity.

It is quite easy to trundle on to the big box stores and buy an A/C; including ones that do not need to be hung in a window.

I’ve been in Luxor Egypt in their summer at 50C which is 122F.

But it’s a dry heat. 🙂

Vietnam’s Mekong Delta had wet heat, but I was a lot younger!

I have been in Djibouti, right on the Red Sea. It’s not a “dry” heat (I went in Oct, >90°F max every day, often >100°F, with average 65%RH). After the first trip there I promised that I owed myself a ski vacation… it has been 6 years and another trip there, and I still haven’t gotten that ski vacation!!! And now my flat feet are producing sore ankles so I probably can’t ski anyway! I’m wondering if it would be worthwhile to go anyway just to sit in the lodge and watch?

Red94ViperRT10

I just re-started skiing last weekend, ten years after a horrific injury. I encourage you to give it a try. I had to buy new fitted boots and so on, but it is all worthwhile.

We all have to surrender to old age, but not too soon.

I spoke with Felix recently. He was born in Innsbruck and is the best ski tuner anywhere. He is going back to downhill skiing after an 8-year hiatus. He is 84. 🙂

Progress of some quality, certainly. A monotonic perspective. The lack of correlation between hypothesis and observation is due to incomplete, and clearly insufficient, characterization, and an unwieldy experimental space. Extrapolating/inferring the effectiveness of a mechanism in a lab to the wild has limited utility.

Climate change is predicated on the idea that sample noise is data and that trends in noise have meaning. Recent posts of sea level in Japan to 4 decimal places, when the accuracy is no better than a whole digit, show that this technique of using garbage to promote science conclusions is catching on all over.

The logical approach would be to start dropping models that are the worst at matching observations altogether, not smoosh them up with everything else in some insane belief that averaging things known to be wrong somehow arrives at something more accurate.

But then the dropped models would have a tougher time maintaining their funding. Can’t have that, so smoosh them in and apply some adjustments to compensate for them…

I have to go puke now.

Of course if one networks enough crystal balls together all the uncertainty will disappear completely!

Just as two ‘wrongs’ do not make a ‘right’, the sum of all fictions does not equal the truth.

Crystal balls are rather hard to read and interpret. Better model it with Magic-8 balls.

Not that simple david.

For example, models that perform better on one set of metrics (say temperature) can perform worse on a different set, say rain.

Yes folks did think about your approach.

years ago

So why does the one that performs better on temperature also perform worse on rain?

It can’t be because it accurately reflects the physical processes as the physical processes are not independent of each other.

So why pick a model that happens to follow history for the wrong reasons? You will end up with a Frankenstein’s Monster of many errors.

Which explains why Climate Science has made no progress in thirty years.

Remember the uncertainty in Climate Sensitivity has not reduced since AR1. Despite improved computers and observations.

The approach is flawed. Go back to davidmhoffer’s.

“…So why pick a model that happens to follow history for the wrong reasons? You will end up with a Frankenstein’s Monster of many errors…”

Therein lies the rub.

Even models which appear reasonably accurate on a global scale when compared to observed data are utterly hogwash on continental and regional scales.

They are a comedy of errors which sum up to something that looks reasonable, and they are somehow given credibility.

MC

Yes, it is a cardinal ‘sin’ to be right for the wrong reason in science. It might well just be coincidence that a model is right with one parameter. In any event, a metric for evaluation for models should be how well ALL the predictions perform, not just one. It is a strong suggestion that the tuned models are either missing something or are doing things wrong if interrelated physical processes correlate poorly.

Velikovsky was right about the surface temperature on Venus – but for the wrong reasons…

“Yes folks did think about your approach.

years ago”

Great that they thought about it. What did they DO about it?

Mosh – as M Courtney already pointed out, if the model does a bad job of anything, it means it is getting the physics WRONG. That the sum of the errors produces a result that matches observations on some things means it has done so on physics that is WRONG, and only arrives at a better match due to other errors or adjustments. Since the underlying physics is WRONG, run it long enough and it will go wildly off kilter.

I do not think Mosher’s statement in his comment is wrong.

Maybe Mosher has to emphasize the difference in meaning between “temperature” and “rain” when it comes to GCM projections, especially when for one of these the case already dialed down from “worst than ever” to “worse” and in the prospect of further reduction in the climate sensitivity it either be considered better or the AGW completely loses the GCM support from climate projections.

While switching to rain or precipitations or winds can still keep the “worst” around for some longer with a chance to dial it up to “worse than ever”…within the man-made climate paradigm.

Sorry if I am reading something other than what you meant, Steve.

cheers

But Mosher’s statement supports the strategy on climate modelling that has failed for thirty years.

A good definition of madness is to repeat the same thing over and over and expect different results.

Seriously, you cannot study parameters in isolation if they interact. Not when there is no possibility of holding all the other parameters constant while you run the world.

Mosher’s statement has been proven wrong by logic and by experience. The approach does not work. And it should not be expected to work.

Why would anyone expect it to work?

“One promising approach involves using all the models together to find relationships between the climate variations being observed now and future climate change.”

So meld the hopeless models together, we will get a better output. Whether it is “more true” remains moot.

There are no “… climate variations being observed now …” Other than minor post-LIA warming, with ups and downs, there has been no observed significant change to any climate metric in over 100 years. Get a grip, people; our climate is remarkably stable.

So just mush together the ones that do good on rain and exclude those that don’t from the rain projection.

Then mush together the ones that do good on temperature and exclude those that don’t from the temperature projection.

It’s not really that complicated, assuming an accurate projection is actually your goal.

No. They only do good on rain then.

There’s no reason to expect them to do good on rain in other times if the reason they do good on rain is wrong.

And as they do bad on things other than rain the reason they do good on any one thing is probably wrong.

You may have an accurate projection of your expectations but it won’t be an accurate prediction of the real world.

MC Even if you had some modicum of success on temperature, but rain was wrong and you added a coefficient to make it good on both, this is a model waiting to fail massively in the future. This is children doing brain surgery.

Moreover, if there were ever to be a model that at least could get the sign correct from the signal in the data, the data itself has been and, remarkably, continues to be, adjusted. Any chance of a real discovery being made in this science has been foreclosed on by agenda-driven data fiddling. Certainly this has to be a major factor in the worsening of model performance with rigid catechismic adherence to a formula cast in stone 40 years ago.

I’m puzzled by this mashing together business. Surely the obvious thing is to work out why the models that are good at rain are good at rain and why models that are good at temperature are good at temperature and then produce a model good at both.

Ben Vorlich.

———-

One main thing you are missing there, Ben, is that none of rain, wind or precipitation is global…

While temps could be “global”…in some aspect considered as such, as per the merit of GCM projections….rain ain’t not it, while also meaning that local or regional ain’t not it too !

NOT it, AGW…where G stands there clearly for global only, and not regional or local!!

ECS only can be considered as in the concept of global, not local or regional, like rain or wind or snow…or as per any other claim of later days stupids.

ECS, or TCS are only terminology, in the consideration of accomplishing a great intended fraud-deception in the line of learning and knowledge,,,,

where actually no much of any value there these days when it comes to either ECS or TCS,

ECS simply there to support the concept of CS when it comes to GCMs,

and TCS only there to support CS when it comes to real data, as CS in both cases, either GCMs or real data, can not make it in its own, unless this further intended deceiving approach considered.

Meaning that CS, ECS or TCS are simply a big huge bollocks, while when considered at the whole of the spectrum consisting as no more than a very well thought and intended calculated scientific deception, in as per science and knowledge in concept and principle,…. very well objectively intended, very well objectively thought and forcefully objectively applied…with no much regard.

while…as as per the old rules, whole this affair considered, it stands as the worst of all crimes, that of a “sorcery”…

very well and objectively thought and very well and objectively intended…

within the whole of its objective fakery, and intended objective disruption.

But hey, no much worrying there , as no any one really cares any more of the cardinal crime in the means of the old….either objectively, or subjectively.

When clearly losing CS, or its main supports, ECS and TCS, then the only thing remaining for the sillies, is to brush all of it under the carpet and force it criminally, it,

their AGW further accommodation and acceptance, as forced by-in the concept of local and regional weather projections, as per means of it all, derived and squeezed out of GCM climate projections…

into weather GCM projections supporting AGW, at any cost…under any circumstance…while all concept under global as per merit of CS is clearly lost.

cheers

“For example, models that perform better on one set of metrics ( say temperature) can perform

worse on a different set, say rain”

….then they are total garbage

Models that differ in base global temperatures by 3+ degrees C are not modeling the same physics.

The fact that modeled hindcasts diverge tremendously, modeled late-20th-century results converge, and modeled futures diverge tremendously shows that the whole thing is an exercise in parameterization to mimic the late 20th century. Each model has its own physics and cannot be compared to nor combined with any other model.

Then what you have isn’t really a model of any sort. What you have is a lucky parameterization. A true model, that is actually physics based, would have to follow at least the sign of the variable. Do any of them do that at least, say, 85% of the time?

I don’t think any adjustments were made to the models running hot. They are the source of the 4.5 degrees per doubling of CO2, yes? Those hot-running models are left hot in order to make scary “projections”.

SR

The UN IPCC AR5 had to arbitrarily reduce the near-term modeled “projections” because they were running way too “hot.” They did not reduce the out-years because that would hurt the CAGW meme.

I wonder what they will do with the CMIP6 results in the UN IPCC AR6? From what little I understand, it will be more of the same modelturbation with scary stories about a hothouse earth.

When you cool the past…get rid of the MWP…and then hind cast the models to that

…what would you expect…..exactly what they do

Steve, the synod of climate gurus are caught between a rock and a hard place. It was pointed out the delta T projections proved to be 300% too high. A 40 year deep cooling period after 1940, when CO2 emissions were accelerating, and an 18 year T Pause after we recovered from the deep cooling, bringing us only back to about the 1940 high by 1998, meant that all the warming to date actually had occurred prior to 1940 when CO2 wasn’t a factor.

They set to work feverishly, bending and twisting data to remove this falsifying evidence. They calculated what the facts meant for ECS and realized it was so small that there was nothing to be alarmed about. Then they fiddled the models, tuned them to the grotesquely altered data and doubled down on the alarm. Only now, instead of 3-5C warming from 1950 to 2100, it was only 0.7C more from 1950 (1.5C from 1850!) that was going to have serious impacts!! 2.0C (1.2C from 1950) was to be catastrophic! I have a wager that, all-in, we can’t even reach 1.5C if we strived to attain such an increase.

Why is it now clear that we need to rapidly reduce carbon dioxide emissions to avoid “crashing” through the 1.5 – 2.0 deg C set out in the Climate Paris agreement?

There has never been ANY science that indicates that going over 1.5C will be a problem, much less a disaster.

Originally, the 1.5C was merely the point at which the world would get back to the temperature the world enjoyed during the MWP. The claim was that above that, we don’t know what would happen.

Somehow the usual suspects have taken “we don’t know” to “here be dragons”.

BTW, it’s been warmer than the MWP 3 times in the last 5000 years and the world thrived.

It’s been warmer than the MWP for some 80 to 90% of the last 10K years and the world thrived.

So the claim that disaster awaits if we go over 1.5C has already been disproven. By the real world.

The last interglacial was about 3C warmer than the current one and the planet and our ancestors survived just fine. Not only has there not been any science to indicate that going over 1.5C is a problem, there’s not even any validated science that can show how doubling CO2 would cause more than 1.1C and most likely far less. Do nothing and the effect of doubling (even tripling) CO2 is already less than 1.5C.

Removing uncertainty from the presumed ECS will not do any good, as the existing uncertainty doesn’t even span the actual ECS which is about 0.3C per W/m^2 and less than the claimed lower limit of 0.4C per W/m^2.
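The comments above mix two units for sensitivity: degrees C per W/m^2 of forcing, and degrees C per doubling of CO2. A minimal sketch of the conversion follows, assuming the widely quoted simplified forcing expression of 5.35 × ln(C/C0) W/m^2, which is not stated anywhere in this thread. The function names are hypothetical, and the 0.3 and 0.4 figures are simply the commenter's numbers, not endorsed values.

```python
import math


def forcing_per_doubling(alpha=5.35):
    """Forcing in W/m^2 for a doubling of CO2, assuming dF = alpha * ln(C/C0)."""
    return alpha * math.log(2.0)


def warming_per_doubling(sensitivity_c_per_wm2, alpha=5.35):
    """Convert a sensitivity in C per W/m^2 into C per doubling of CO2."""
    return sensitivity_c_per_wm2 * forcing_per_doubling(alpha)


doubling_forcing = forcing_per_doubling()
low = warming_per_doubling(0.3)   # the commenter's preferred value
high = warming_per_doubling(0.4)  # the commenter's "claimed lower limit"
print(f"forcing per doubling: {doubling_forcing:.2f} W/m^2")
print(f"0.3 C per W/m^2 -> {low:.2f} C per doubling")
print(f"0.4 C per W/m^2 -> {high:.2f} C per doubling")
```

Under that assumption the two figures work out to roughly 1.1C and 1.5C per doubling, which matches the numbers used elsewhere in the thread.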

“way to focus evaluation on the observations most relevant to climate projections, and advanced methods for model weighting.”

Who will and how will they determine which observations are most relevant? Sounds like more gobbledygook to me and another way to rig the data.

Those would be the goal posts. They must retain control of the goal posts, in case they have to move them – again.

29 authors and the masterly obfuscations and obliqueness of this article are sure signs of a CYA story.

Bet it does not change the process for AR6.

Can somebody please explain Figures 3 and 4? Where are the constraining observations?

There doesn’t seem to be a simple way to explain the figures without quoting the paper so extensively I would be concerned about copyright issues. The caption to Figure 3 says the two graphs are taken from two papers, both of which have some of the authors of the present paper included:

53. Sherwood, S. C., Bony, S. & Dufresne, J. L. Spread in model climate sensitivity traced to atmospheric convective mixing. Nature 505, 37–42 (2014)

61. Wenzel, S., Cox, P. M., Eyring, V. & Friedlingstein, P. Projected land photosynthesis constrained by changes in the seasonal cycle of atmospheric CO2. Nature 538, 499–501 (2016).

The graph on the left of Fig. 3 is described as showing that there is some correlation between ECS values generated by CMIP models and an index called a lower tropospheric mixing index from the same models, which it says is calculated as the sum of an index for the small-scale component of mixing that is proportional to the differences of temperature and relative humidity between 700 hPa and 850 hPa and an index for the large-scale lower-tropospheric mixing.

The graph on the right appears to relate CO2 fixed photosynthetically to a measurement of annual increases of CO2 at Point Barrow Alaska.

Fig 4 caption says it is taken from

Sanderson, B. M., Wehner, M. & Knutti, R. Skill and independence weighting for multi-model assessments. Geosci. Model Dev. 10, 2379–2395 (2017).

and just displays a particular “skill weighting” procedure.
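For readers wondering what a skill and independence weighting could look like in practice, here is a minimal sketch. It assumes one commonly cited form, in which a model's weight rises with its closeness to observations and falls with the number of near-duplicate models; it is not claimed to be the exact procedure behind Fig. 4. The function name, the distances, and the two radius parameters `sigma_d` and `sigma_s` are hypothetical.

```python
import math


def skill_independence_weights(dist_to_obs, dist_between, sigma_d, sigma_s):
    """Return normalized weights, one per model.

    dist_to_obs[i]     : distance of model i from observations (smaller = better)
    dist_between[i][j] : distance between models i and j (smaller = more alike)
    sigma_d, sigma_s   : assumed radii for the skill and similarity terms
    """
    n = len(dist_to_obs)
    raw = []
    for i in range(n):
        # Skill term: decays as the model moves away from observations.
        skill = math.exp(-((dist_to_obs[i] / sigma_d) ** 2))
        # Similarity term: counts how many other models sit close to model i.
        similarity = sum(
            math.exp(-((dist_between[i][j] / sigma_s) ** 2))
            for j in range(n)
            if j != i
        )
        raw.append(skill / (1.0 + similarity))
    total = sum(raw)
    return [w / total for w in raw]


# Made-up case: models 0 and 1 are duplicates, model 2 is independent,
# and all three are equally far from observations.
dist_to_obs = [1.0, 1.0, 1.0]
dist_between = [
    [0.0, 0.0, 10.0],
    [0.0, 0.0, 10.0],
    [10.0, 10.0, 0.0],
]
weights = skill_independence_weights(dist_to_obs, dist_between, 1.0, 1.0)
print([round(w, 3) for w in weights])
```

In this toy case the two duplicate models end up sharing a single vote, so the independent model carries about half of the total weight even though all three have equal skill.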

The paper looks like a straightforward recitation of the discussion that occurred at the workshop rather than an attempt to demonstrate anything new. “Emergent” appears to mean essentially things they didn’t think of before that might be a good idea.

I have already spent too much time looking at this paper and need to get back to what I was doing now.

How do they determine model “skill”?

By how well the models produce the numbers that the organizers are paying to see?

Probably by a show of hands.

“Produced by a team of 29 international authors, the study is published in Nature Climate Change.”

Why does it take 20 or 30 “authors” to do ONE of these “climate studies” ? IIRC , Einstein had one assistant to help him change the world…..hmmmmmmmmm……..

Not in 1905. He was a patent clerk working at home part time.

Why is it now clear that we need to rapidly reduce carbon dioxide emissions to avoid “crashing” through the 1.5 – 2.0 deg C set out in the Climate Paris agreement? I didn’t think it was now clear at all. Talk about being in denial. The Climate Paris agreement is dead. They pretend it is still alive.

Amazingly, the only “model” that matches reality is the Russian model…Oh no, Now Mother Nature is “colluding” with Russians…! LOL

Candidate Trump told Manafort to send Page to Prague to pay the Russian hackers to model a lower sensitivity, didn’t you know?

Help! The Russians have hacked the climate!

Is this before or after we change all the historical data to be colder and all the current temperatures higher? Alternatively, I guess we could use raw data from rural stations that have been well sited and maintained. However, I think the best thing to do would be to not do the study at all and worry about real problems instead.

29 authors ??

If she/he were correct, only one would be needed.

I recall Willis has a rule about the number of authors.

The whole thing smells of more GIGO climate porn. I have yet to see them conduct an engineering evaluation of the models, and the approach of “averaging them together” to get a “better answer” has always smacked of confirmation bias gone wild!

Exactly

The average of Wrong, Wrong, and Wrong isn’t necessarily Right, but more likely the Wrong average.

The models are adjusted until they give a sensitivity that the modelers think is “about right.” Each modeling group has its own idea of “about right.”

When the uncertainty has been squeezed out,

there will be no climate models.

No computer model can calculate the future because the future does not exist as a single point. It is only a probability with a range of possible futures regardless of any action we might take.

We can influence the future, but even trying to calculate the effect is problematic. Cutting CO2 could even make things worse due to unintended consequences.

Better to maximize economic benefit rather than try and change the weather. That way you have more resources to cope with whatever happens.

This is OT but worthy of a mention.

In the Hotel New Yorker, New York, on today’s date, 7 January 1943, the great American/Serb scientist, inventor and engineer Nikola Tesla died, aged 86.

As it happens, today is the Orthodox Christians’ (and Nikola Tesla’s family’s) Christmas Day, so to anyone who might be celebrating today, have a happy Holy Day.

Merry Christmas to you

It doesn’t matter how big and complicated your model is, if it is based on the wrong fundamental assumptions:

They need a method to get rid of the Russian model. It is embarrassing them. Too close to reality for their own good.

Although Pat Frank was never able to get his paper published that I know of, his presentation in this video (https://www.youtube.com/watch?v=THg6vGGRpvA ) is clear and reasonable and his conclusions valid. From an uncertainty propagation standpoint, models can tell us nothing about the future. Any result will be within the huge uncertainty window and cannot be counted as any more probable than any other.

Additionally this paper erroneously assumes that human emissions are responsible for all the increase in atmospheric CO2 and thus all the warming from it.

How can more models reduce uncertainties? It’s only scientific experiments that can prove relationships.

He hasn’t been here in a while, but rgbduke had a number of comments making some excellent points on this issue here at WUWT. You can probably search and find them; they are well worth the effort.

If the models are actually worth something then the error covariance between any randomly selected set of models should be random. But if there are enough models you will get two or more of them that trend together, just from chance. Okay, you guys can take it from here …

“Although the human impact of recent climate change is now clear”

It’s only “clear” if one assumes that all the warming of the last 150 years is due to CO2.

+10

The impact of any human action on recent climate change is very far from clear and is clearly less than the error bars also. Using a bigger computer will only make the uncertainty arrive earlier.