From the University of York:

A new study led by a University of York scientist addresses an important question in climate science: how accurate are climate model projections?

Climate models are used to estimate future global warming, and their accuracy can be checked against the actual global warming observed so far. Most comparisons suggest that the world is warming a little more slowly than the model projections indicate. Scientists have wondered whether this difference is meaningful, or just a chance fluctuation.

Dr Kevin Cowtan, of the Department of Chemistry at York, led an international study into this question and its findings are published in Geophysical Research Letters. The research team found that the way global temperatures were calculated in the models failed to reflect real-world measurements. The climate models use air temperature for the whole globe, whereas the real-world data used by scientists are a combination of air and sea surface temperature readings.

Dr Cowtan said: “When comparing models with observations, you need to compare apples with apples.”

The team determined the effect of this mismatch in 36 different climate models. They calculated the temperature of each model earth in the same way as in the real world. A third of the difference between the models and reality disappeared, along with all of the difference before the last decade. Any remaining differences may be explained by the recent temporary fluctuation in the rate of global warming.

Dr Cowtan added: “Recent studies suggest that the so-called ‘hiatus’ in warming is in part due to challenges in assembling the data. I think that the divergence between models and observations may turn out to be equally fragile.”

Dr Cowtan’s primary field of research is X-ray crystallography and he is based in the York Structural Biology Laboratory in the University’s Department of Chemistry. His interest in climate science has developed from an interest in science communication. This is his second major climate science paper. For this project, he led a diverse team of international researchers, including some of the world’s top climate scientists.

###

Which 36 modeling projections did he choose to evaluate, and WHY those 36?

The ones where projected CO2 matches the observed CO2. Then you could evaluate whether calculated temperatures, humidity, cloudiness, rain and so on for each grid cell match the corresponding observations.

Hi Jake,

The 36 models used were all CMIP5 models that included the requisite fields for the analysis (e.g. “the surface air temperature (‘tas’ in CMIP5 nomenclature), sea surface temperature (‘tos’), sea ice concentration (‘sic’), and the proportion of ocean in each grid cell (‘sftof’)”).

This is a remarkably straightforward paper. Instead of comparing simulated 2-meter surface air temperature from models to observations (which combine air temperature over land and sea surface temperatures over oceans), compare the blended air temperatures over land and sea surface temperatures over oceans from the models to the observations. Otherwise you end up with a bias because models have air temperature over oceans warming a bit faster than sea surface temperature.
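The blending described above can be illustrated with a small sketch. This is a simplified toy version, not the paper's actual procedure: the function names, the 3 x 2 grid, and the anomaly values are all made up for illustration, fractions are taken as 0 to 1 (CMIP5 files commonly store them as percentages), and the assumption is that the open-water share of each cell takes the sea surface temperature while the rest takes the air temperature.

```python
import numpy as np

def blend_cell(tas, tos, ocean_frac, sea_ice_frac):
    """Blend air and sea surface temperature anomalies per grid cell.

    Assumption: the open-water share of a cell is ocean_frac * (1 - sea_ice_frac);
    that share takes the SST anomaly, the rest (land and ice) takes the air anomaly.
    """
    open_water = ocean_frac * (1.0 - sea_ice_frac)
    return open_water * tos + (1.0 - open_water) * tas

def area_weighted_mean(field, lat_deg):
    """Average a (lat, lon) field using cos(latitude) weights."""
    weights = np.cos(np.deg2rad(lat_deg))[:, None] * np.ones_like(field)
    return float(np.sum(field * weights) / np.sum(weights))

# Toy 3 x 2 grid with made-up anomalies (degrees C), not real model output.
lat = np.array([-45.0, 0.0, 45.0])
tas = np.full((3, 2), 1.0)               # air temperature anomaly everywhere
tos = np.full((3, 2), 0.8)               # sea surface temperature anomaly
ocean_frac = np.array([[1.0, 0.0]] * 3)  # first column ocean, second column land
sea_ice = np.zeros((3, 2))               # no sea ice in this toy case

air_only = area_weighted_mean(tas, lat)
blended = area_weighted_mean(blend_cell(tas, tos, ocean_frac, sea_ice), lat)
print(f"air-only: {air_only:.2f}  blended: {blended:.2f}")
```

In this toy case the blended mean (0.90) comes out lower than the air-only mean (1.00), which is the direction of the bias described in the comment.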

You haven’t solved the problems of off-setting model parameter errors and of non-unique solutions. You’re not modeling climate, you’re just mimicking a climate observable; an observable (air temperature) that in any case is very poorly constrained. Studies such as yours can never be anything more than tendentious.

Pat Frank writes: “Falsifiable because if the prediction is wrong, the physical theory is refuted.”

…

(reference: http://wattsupwiththat.com/2015/05/20/do-climate-projections-have-any-physical-meaning/ )

…

Impressive!!!

…

Weather forecasting models are based on the physical theory that hot air rises. So according to Mr. Frank, when the weather prediction fails (which happens whenever you plan a picnic), it proves that hot air doesn’t rise.

Otherwise you end up with a bias because models have air temperature over oceans warming a bit faster than sea surface temperature.

==============

Which would make the models run hot, which they are. However, the problem is worse than this. You are only addressing how well the predictions match reality.

You cannot properly train the models by comparing 2 meter surface temps to a combination of surface and ocean temps. Faulty training leads to faulty results.

This is the elephant in the room. The training was faulty in exactly the same way the scoring of the results was faulty, because they both use the same methodology.

However, faulty training is much more serious than faulty marking, because it means the models are inherently wrong.

Not only wrong as usual, Joel, but you’ve managed an especially fatuous comment. Well done (for you).

In any case, weather modeling is mentioned nowhere in my analysis.

You’re right to focus on hot air, though. It rises vigorously whenever you operate a keyboard.

I apologize to you Mr Frank, it seems whenever someone uses direct quotes from the things you write, it exposes how incoherent your writings are.

..

PS, I quoted you from your article on “climate models”

…

Keep posting, the appropriate saying is, “like shooting ducks in a barrel”

It’s remarkable how nearly all the biases discovered by climate scientists make observed temperature trends warmer. Perhaps there is some secret, fossil-fuel-funded effort to systematically interfere with all climate-related data gathering and analysis, which climate scientists must heroically struggle against.

I can’t think of any other reasonable explanation.

Bogus Zeke

http://www.nature.com/ngeo/journal/v7/n3/fig_tab/ngeo2098_F1.html

more excuses

It’s not the quote, Joel, it’s your inevitably irrelevant mindlessness. You never lose an opportunity to be simultaneously outspoken and vacuous.

Well, a new day, and I am back to wondering if Joel Jackson and I inhabit the same planet.

Zeke says: ‘…compare the blended air temperatures over land and sea surface temperatures over oceans from the models to the observations…’

————————————————————————————————————————

So really, they never used the modeled SST, they had them, but instead ran modeled 2m air T and compared them to SSTs?

“Otherwise you end up with a bias because models have air temperature over oceans warming a bit faster than sea surface temperature”

==========================================

Are they supposed to? If not, is this a physics issue the models got wrong? Why would the relationship between SST and 2 m air T above the sea surface change?

Maybe I read too much into this; but maybe Lord Monckton’s push of difference between model and data is getting some traction and this is an attempt to deflect that line of questioning.

Maybe I’ve just been exposed to a little too much ‘Climate Science’ over the years, but something about this paper makes me think it’s just another excuse to ‘adjust’ the temperature data again.

Oh ya, that would be it. ^¿^

the pause is

GW theory says that the Polar region will warm faster than the rest of the world due to amplifications in the region, in fact this map from NOAA http://polar.ncep.noaa.gov/sst/ophi/color_anomaly_NPS_ophi0.png seems to indicate that the polar region is about 5C above the average but the temperature graph from DMI shows the situation to be Business as usual with the High following the Bell Curve http://ocean.dmi.dk/arctic/plots/meanTarchive/meanT_2015.png

The PAWS BUOY system http://psc.apl.washington.edu/northpole/index.html, a series of 6 small buoys collecting local meteorological data in the polar ice, indicates something different. 5 of 6 have temp readings of 0.7, 2.2, 4.9, 4.4, 0.52 for an average of about 2.5C, which agrees with the DMI temp graph. One buoy, however, Buoy 553800, indicates a temperature of 17.4C or 63F and sits about 1/2 way between Greenland and the Pole. Adding this measurement into the mix skews the average to 5C and matches the NOAA map. Could all the red on the NOAA map be a product of a broken thermometer?
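The arithmetic in this comment is easy to check. The short sketch below uses only the six readings quoted in the comment (the variable names are illustrative) and says nothing about whether the NOAA map actually uses that buoy.

```python
readings_c = [0.7, 2.2, 4.9, 4.4, 0.52]  # five buoys, as quoted in the comment
outlier_c = 17.4                          # Buoy 553800, as quoted

# Mean of the five consistent buoys, then the mean with the warm outlier included.
mean_without = sum(readings_c) / len(readings_c)
mean_with = (sum(readings_c) + outlier_c) / (len(readings_c) + 1)

# Convert the outlier reading from Celsius to Fahrenheit.
outlier_f = outlier_c * 9 / 5 + 32

print(f"mean of five buoys: {mean_without:.2f} C")
print(f"mean with outlier:  {mean_with:.2f} C")
print(f"outlier in Fahrenheit: {outlier_f:.1f} F")
```

The five readings average about 2.54 C, the six together about 5.02 C, and 17.4 C is about 63.3 F, so the figures quoted in the comment are internally consistent.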

Oh it’s not broken, it’s telling them exactly what they want it to.

The polar regions should warm more because there is less water vapor in the air, so CO2 should in theory have a greater influence.

We know the CO2 theory is contradicted by atmospheric behavior above deserts. They should show any influence CO2 has, but there is very little warming in the tropics (high humidity) and the subtropical zones where all the world’s deserts are based.

The NOAA map has an average anomaly nowhere near 5 C, more like 2-3 C, but it has a biased, continually cooled past from which the exaggerated anomalies arise. There is no indication in the chart that the bad buoy has been used.

This below is by far the best data source we have on the planet for polar temperatures. DMI data is a good unbiased source covering regions the satellite below doesn’t.

http://climate4you.com/images/MSU%20UAH%20ArcticAndAntarctic%20MonthlyTempSince1979%20With37monthRunningAverage.gif

Only goes to 85 N and 85 S, but that is only 0.48% of the planet’s surface from both poles missing.
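As a rough check on the size of the uncovered polar caps, the sketch below uses the spherical-cap area formula. It assumes a perfect sphere and a hard cutoff at exactly 85 degrees; the function name is illustrative.

```python
import math

def cap_fraction(lat_deg):
    """Fraction of a sphere's surface poleward of lat_deg, both hemispheres combined.

    One cap has area 2*pi*R^2 * (1 - sin(lat)); two caps divided by the
    full sphere area 4*pi*R^2 gives 1 - sin(lat).
    """
    return 1.0 - math.sin(math.radians(lat_deg))

missing_pct = 100.0 * cap_fraction(85.0)
print(f"{missing_pct:.2f}% of the surface lies poleward of 85 degrees")
```

Under these assumptions the two caps come to roughly 0.38% of the surface, slightly lower than the figure quoted in the comment but the same order of magnitude.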

No, it’s the 5 thermometers that show lower readings that are faulty, obviously.

First we had Heidi the Decline, then Heidi the Heat, Heidi the Pause and now, Heidi the Gap.

Soon to be followed by Heidi the Oceans and shortly thereafter by Heidi the Satellites.

Did you mean “robust assumptions and maybe – possiblies”?

Any remaining differences may be explained by the recent temporary fluctuation in the rate of global warming.

I believe this is called “natural variability.”

Here lies the “Catch 22” of climate science. Their mistakes are “natural variability.”

How and why can it be assumed that anything is “temporary”?

This betrays a bias that is hard to deny.

ta, that was the bit that narks me..temporary? 18+yrs?

when one yr or less of someplace being a tad warm is a global tragedy?

sheesh

Do not the UAH and RSS satellite datasets record global air temperature? Cowtan says the models compute air temperature, thus so long as one is comparing satellite temps with modeled temps, is it not apples and apples? And is there not a divergence?

I’m not getting something here.

Satellites do not measure air temperature. They measure microwave brightness. The “data” you are looking at when referencing UAH or RSS is not “temperatures” but model output.

Satellites do not measure air temperature? Then mercury (or any other) thermometers do not measure temperature, speedometers do not measure speed, scales do not measure weight, etc, etc. But that doesn’t mean they are unreliable.

The UAH and RSS data are converted to temperatures and shown as such, so they are temperatures. Otherwise it is like saying the surface data only record how much a liquid expanded or contracted, so they are not temperatures.

Satellites measure brightness AT THE SENSOR.

Then a physics model is applied. The physics model is a radiative transfer model. Radiative transfer physics is the SAME PHYSICS that tells you CO2 will warm the planet.

In other words:

IF you accept UAH temperature, THEN you have to accept the physics model that gets you from BRIGHTNESS to TEMPERATURE.

BUT, that same physics, radiative transfer, is the physics that tells you CO2 will warm the planet.

When skeptics discover this, they mumble.

True. But irrelevant. Because those modeled temperature outputs were VALIDATED (explicitly in the UAH case) by many radiosonde temperature measurements at many latitudes, for example along the entire North American West Coast from southern Mexico to northern Alaska. Do try to keep up with model validation stuff. Cowtan in an effort to minimize the pause exposes a basic consensus climate science goof. Christy uses massive amounts of weather balloon data to make sure his translation (really not a model) of MSU channel signals into temperatures is correct.

The difference is stark.

The skeptics may mumble, Steve, because they’re embarrassed to tell you the obvious — that a satellite sensor is not a physical analogy of the terrestrial climate. You ought to listen to them.

Wow, Frank, your response to Steve was outspoken and vacuous.

…

Ever hear about “glass houses?”

So, Mr. Mosher, those rejecting UAH and RSS data are REJECTING the physics that tells you CO2 will warm the planet. It’s a two-way street. If you accept the science, you must accept both the satellite data and CO2 warming, but you can do the latter and arrive at only about 2 degree maximum warming. To that warming, one must add the effect of natural variation. Since no one has demonstrated the ability to predict the natural variation, the final result is, we have no idea if the future will be cooler, warmer, or the same, but it seems likely that, because of Man, it will be two degrees warmer than it would be otherwise.

You can’t make policy decisions based on that.

Steven Mosher, I must disagree. The satellites are a man-made artifact manufactured for the purpose of measuring temperature. Yes, they do this by first measuring radiance. The conversion to temperature is not unlike that of metric to English standard, or the specific gravity of water and chocolate milk when calculating weight to volume. No model, just math.

Now CO2, of course, is an object to be measured and recorded. Models are used to project its effect on the atmosphere and climate; this is not the case with the satellite data.

michael

Thermometers do not measure temperature either, then.

Liquid in glass ones measure the expansion and contraction of a fluid, and bimetal ones measure the relative expansion coefficients of different metals.

And those modern gizmos measure some modern gizmo dealio stuff *sorry about the technical jargon here 🙂 *

All then use some method of converting this into a meaningful form: A NUMBER! Which our brains then interpret as being related to how hot or cold it is.

Sophistry:

noun

-The use of clever but false arguments, especially with the intention of deceiving.

“The Seven Basic Types of Temperature Sensors

Thu, 2000-12-28 09:17

Temperature is defined as the energy level of matter which can be evidenced by some change in that matter. Temperature sensors come in a wide variety and have one thing in common: they all measure temperature by sensing some change in a physical characteristic.

The seven basic types of temperature sensors to be discussed here are thermocouples, resistive temperature devices (RTDs, thermistors), infrared radiators, bimetallic devices, liquid expansion devices, molecular change-of-state and silicon diodes.

Thermocouples

Thermocouples are voltage devices that indicate temperature by measuring a change in voltage. As temperature goes up, the output voltage of the thermocouple rises – not necessarily linearly.

Often the thermocouple is located inside a metal or ceramic shield that protects it from exposure to a variety of environments. Metal-sheathed thermocouples also are available with many types of outer coatings, such as Teflon, for trouble-free use in acids and strong caustic solutions.

Resistive Temperature Devices

Resistive temperature devices also are electrical. Rather than using a voltage as the thermocouple does, they take advantage of another characteristic of matter which changes with temperature – its resistance. The two types of resistive devices we deal with at OMEGA Engineering, Inc., in Stamford, Conn., are metallic, resistive temperature devices (RTDs) and thermistors.

In general, RTDs are more linear than are thermocouples. They increase in a positive direction, with resistance going up as temperature rises. On the other hand, the thermistor has an entirely different type of construction. It is an extremely nonlinear semiconductive device that will decrease in resistance as temperature rises.

Infrared Sensors

Infrared sensors are noncontacting sensors. As an example, if you hold up a typical infrared sensor to the front of your desk without contact, the sensor will tell you the temperature of the desk by virtue of its radiation – probably 68°F at normal room temperature.

In a noncontacting measurement of ice water, it will measure slightly under 0°C because of evaporation, which slightly lowers the expected temperature reading.

Bimetallic Devices

Bimetallic devices take advantage of the expansion of metals when they are heated. In these devices, two metals are bonded together and mechanically linked to a pointer. When heated, one side of the bimetallic strip will expand more than the other. And when geared properly to a pointer, the temperature is indicated.

Advantages of bimetallic devices are portability and independence from a power supply. However, they are not usually quite as accurate as are electrical devices, and you cannot easily record the temperature value as with electrical devices like thermocouples or RTDs; but portability is a definite advantage for the right application.

Thermometers

Thermometers are well-known liquid expansion devices. Generally speaking, they come in two main classifications: the mercury type and the organic, usually red, liquid type. The distinction between the two is notable, because mercury devices have certain limitations when it comes to how they can be safely transported or shipped.

For example, mercury is considered an environmental contaminant, so breakage can be hazardous. Be sure to check the current restrictions for air transportation of mercury products before shipping.

Change-of-state Sensors

Change-of-state temperature sensors measure just that – a change in the state of a material brought about by a change in temperature, as in a change from ice to water and then to steam. Commercially available devices of this type are in the form of labels, pellets, crayons, or lacquers.

For example, labels may be used on steam traps. When the trap needs adjustment, it becomes hot; then, the white dot on the label will indicate the temperature rise by turning black. The dot remains black, even if the temperature returns to normal.

Change-of-state labels indicate temperature in °F and °C. With these types of devices, the white dot turns black when exceeding the temperature shown; and it is a nonreversible sensor which remains black once it changes color. Temperature labels are useful when you need confirmation that temperature did not exceed a certain level, perhaps for engineering or legal reasons during shipment. Because change-of-state devices are nonelectrical like the bimetallic strip, they have an advantage in certain applications. Some forms of this family of sensors (lacquer, crayons) do not change color; the marks made by them simply disappear. The pellet version becomes visually deformed or melts away completely.

Limitations include a relatively slow response time. Therefore, if you have a temperature spike going up and then down very quickly, there may be no visible response. Accuracy also is not as high as with most of the other devices more commonly used in industry. However, within their realm of application where you need a nonreversing indication that does not require electrical power, they are very practical.

Other labels which are reversible operate on quite a different principle using a liquid crystal display. The display changes from black color to a tint of brown or blue or green, depending on the temperature achieved.

For example, a typical label is all black when below the temperatures that are sensed. As the temperature rises, a color will appear at, say, the 33°F spot – first as blue, then green, and finally brown as it passes through the designated temperature. In any particular liquid crystal device, you usually will see two color spots adjacent to each other – the blue one slightly below the temperature indicator, and the brown one slightly above. This lets you estimate the temperature as being, say, between 85° and 90°F.

Although it is not perfectly precise, it does have the advantages of being a small, rugged, nonelectrical indicator that continuously updates temperature.

Silicon Diode

The silicon diode sensor is a device that has been developed specifically for the cryogenic temperature range. Essentially, they are linear devices where the conductivity of the diode increases linearly in the low cryogenic regions.

Whatever sensor you select, it will not likely be operating by itself. Since most sensor choices overlap in temperature range and accuracy, selection of the sensor will depend on how it will be integrated into a system.”

http://www.wwdmag.com/water/seven-basic-types-temperature-sensors

Mosher writes “BUT, that same physics, radiative transfer, is the physics that tells you CO2 will warm the planet”

Ouch Mosher. You sound as if you understand the instantaneous radiative transfer solution has anything to do with atmospheric physics leading to warming. Radiative transfer is merely one part of a very complex whole.

“Everything Should Be Made as Simple as Possible, But Not Simpler” – Einstein.

So your basis for believing CO2 forced warming is a fail, Steve.

But Mosh conveniently fails to mention that the satellite data is tested and tuned against weather balloon temperature data, which use more conventional forms of meteorological thermometers, so the imaged brightness measured by the satellite sensor is properly control-calibrated by tuning it with real, measured radiosonde data.

Calibrated against independent PRT and radiosonde data, which you always neglect to include in your posts.

It is the Cowtan Way.

Or in real world, the way to kowtow.

This sure has that smell of desperation, so the models are right, once we adjust the created data to suit?

Maybe civilization is completely collapsed and I just have not noticed yet.

Do climate scientists actually believe modelers know enough about the climate and its feedbacks to model it accurately? That is pure arrogance. They have already admitted they don’t know how to model “clouds,” and they can’t possibly know much about other feedbacks either. So, if the models were to get it right, it would be random chance. It would be like a broken clock getting the time right twice a day. They have to know that. But apparently, the “cause” is more important than the truth to these people.

Modelers proceed, Louis Hunt, as though the models were perfect representations of the physics of the climate. The only errors then are caused by the parameter unknowns.

This assumption of perfect models is directly implicit in the way modelers represent physical error. It’s always the variation in projections produced by “perturbed physics.” In these studies, the parameters are varied through their uncertainty range. The only way these studies fully represent error is if the model itself is physically complete.

In practice, climate modelers don’t know anything about physical error analysis, and as a consequence have no idea how to evaluate the reliability of their own models.

“Modelers proceed, Louis Hunt, as though the models were perfect representations of the physics of the climate.”

…

No, most modelers follow what Gavin Schmidt says… http://www.columbia.edu/cu/alliance/EDF-2012-documents/Reading_Schmidt_2.pdf

Gavin’s article does not mention model accuracy, reliability, error, or uncertainty. It’s irrelevant to my point.

The RSS / UAH sensors, match the weather balloons, so model – meets observation, meets verification. The climate models predict about three times the warming of what is observed in the troposphere, so J.J. meet failed model.

Steven M, pay attention also. We do not in general object to models for study. We object to models that fail to meet the observations being used for public policy. We object that the modeled mean of many models, all running wrong in one direction, too warm, is being used for CAGW harm projections.

Mosher, STOP making straw man arguments. Stop lumping all skeptics into one basket.

Thanks for the link, Joel. In that article, titled “Wrong but useful,” Gavin Schmidt explains that climate scientists like him don’t actually believe that models can model the climate accurately at this time. That’s good to know. I’m glad they’re not as arrogant as I thought. His reasons are similar to mine: “interactions among the various components – like low-level ozone, aerosols (airborne particles) and clouds – can get hideously complicated.” Obviously, something that is “hideously complicated” cannot be well understood. And something that is not well understood cannot be accurately modeled. Schmidt admits that when he says, “All climate models are wrong, but some of them are useful…” But what exactly can “wrong” climate models be useful for? They “might,” he explains, “have a vitally important part to play in breaking through some of the log jams now hampering policymakers.” In other words, they might be useful politically to help persuade policymakers to get on board with the program. Climate models that are “wrong” obviously cannot be used to establish scientific truth or provide factual information. But they can be useful as propaganda.

Schmidt wrote the article back in 2009. It appears that using “wrong” climate models to break through the “log jams” hampering policymakers hasn’t worked as well as he had hoped. So now they’re busy working feverishly to adjust temperature observations to more closely match model forecasts and thereby give them more weight. There’s another meeting of the policymakers coming soon, and so it doesn’t matter that the models are wrong, or that they are corrupting temperature data with their adjustments. It only matters that they persuade policymakers to break the “log jams” and support the cause.

Actually real computer modellers do know enough to know that before you make any assumption about CO2 you have to model the entire natural CO2 system, prove that it matched the data before the industrial era. You have to then show that the projected characteristics no longer match after the CO2 of industrialisation.

A proper QA department then queries the assumption that the change is due to the industrial CO2 and checks that the CO2 distribution is consistent with man made CO2 production and not a coincidental natural global increase or worse still a local phenomenon. They also check that all the stations were properly annually certified accurate and no adjustments made to the data.

This is the case for the computer modelling of products for the low end of the commercial market. For life-critical products the demands are far more stringent.

Please do not dismiss all computer modelling because of the behaviour of the dregs.

The average guy on the street is not buying this stuff. There are so many great scientists of the past who used phrases like “if you can’t explain it to a …. 6 year old …. your mother …. some guy at a bar ….” then you really don’t know what you’re talking about. At some point it does begin to sound like a mish-mosh of doubletalk. The only people that are buying into this crap are the pseudo intellectuals like John Kerry and George Clooney, and the hundreds of severely compromised folks that graduate from Oberlin each year.

And you forgot to mention celebrities whose wealth and fame are the result of their ability to make the entirely fictitious appear real.

“Challenges in assembling the data”… Does anyone else find this disturbing? Sounds like a euphemism for “we haven’t yet mastered the art of manipulating the data to suit our agenda”.

” . . . whereas the real-world data used by scientists are a combination of air and sea surface temperature readings.”

He neglected to mention that the real-world data used by scientists has been heavily adjusted, homogenized and in-filled, all done to fit a political narrative.

The other paper mentioned in this article is the one Cowtan and Way published to try and bring back the warming. They did this by infilling the polar regions, where there is little actual data, with what they felt the data should be.

Wrong. Cowtan and Way treated the Arctic in just the same way that skeptics O’Donnell, Jeff Id and Steve McIntyre treated Antarctica when they debunked Steig’s paper.

Their reconstruction of the Arctic passed all validation tests, out of sample tests, and tests against independent data from Arctic buoys, and reanalysis AND data from the new AIRS satellite.

SM, they kriged. Kriging was invented as a statistical method to extrapolate mineral ore bodies from limited drill core information. A fact you do or should know. There are ‘uniformity’ assumptions behind the method, which you also do or should know. (Uniformity means, in layman speak, no abrupt discontinuities within the ore body, although they are expected at its edges.) Those underlying assumptions are met in Arctic winter and early spring, when everything is snow-covered something. They are not in late spring, summer, and fall, when the mix of open water, sea ice, and tundra is anything but ‘uniform’. Cowtan’s methodology is suspect to anyone with deep knowledge of statistical methods, or the ability to look them up as needed. Essay Unsettling Science explained this ‘little detail’. You might learn something by reading it.

Oh, and that essay also finishes with a figure you yourself provided to CE that overlays Berkeley Earth on the other datasets including CW. The pause exists! Well, until Karl further fudged the data.

I thought krigging was invented so my girlfriend could strengthen the muscles in her…oh, never mind.

Kegel menicolas… Kegel!

A little off topic…but….that wing they’ve found that is suspected to be from that Malaysian plane that disappeared, washed up on shore in an area 4100 miles from where the COMPUTER MODELS forecast it to have been.

Ahhhh, the wonderful world of modeling.

after tonight’s adjustments the models will correctly predict the debris to be found on Reunion.

+1

No doubt all the carbon in the plane caused it to crash!

Be careful with this. Oceanic currents make it very likely that an object would drift from the west coast of Australia across the Indian Ocean towards Reunion in this time frame.

The phenomenon has nothing to do with modeling. It’s a simple matter of the prevailing ocean currents which have been well-known to mariners for centuries.

Of course that never happens to the Argo floats.

So the models did not incorporate currents which have been known for centuries? Still sounds like faulty modelling.

Wing of jet found is a “little” off topic?

And CAGW is a “little” fib, told for no particular reason.

What can we expect from a bunch of activist scientists, objectivity?

If they can just figure out why all this warming hasn’t been detectable, they will have their cake well-frosted.

Ironic that we agree that the past global temps have been misrepresented. This looks like an attempt to muddy the waters some more and nullify the sceptic perspective on it.

Primary field of X-ray Crystallography eh, I suppose that is better than psychology for climate science but it still heavily discounts the phrase “I think….”

“real-world data used by scientists are a combination of air and sea surface temperature readings”

The satellite data is the lower troposphere air temperature regardless of whether it’s over land or sea.

“The research team found that the way global temperatures were calculated in the models failed to reflect real-world measurements. ”

And yet they had a 95% Confidence level in AR5. Will an Adjustment to AR5 be forthcoming?

“The climate models use air temperature for the whole globe, whereas the real-world data used by scientists are a combination of air and sea surface temperature readings.”

This is ridiculous. First of all, satellite temperatures are air temperatures, and they show even less warming.

Secondly, the “real-world” data is not even data — GISS is a model of the data with adjustments and fabricated, er, infilled readings.

Third, the adjustment to surface temperatures are generally to the land stations, so this study is basically saying the climate models match the “data” temperature models. Of course they do! That tells us a lot about the state of climate science but not much about the science of climate.

I can’t help but wonder what the chances of getting a huge grant for X-ray crystallography research are compared to a huge grant for climate change research. Maybe wildlife isn’t the only thing that migrates due to climate change.

According to the University of York, the Cowtan, et al. (2015) study was unfunded. Of course, all of the time donated by the authors, along with equipment and office space, etc., might effectively be “double-dipping” into extraneous grants.

Or maybe they all did this on their summer vacation.

If you can’t predict the past, how can we rely on you to predict the future? Time to stop this scam.

A genuine expert can always foretell a thing that is 500 years away easier than he can a thing that’s only 500 seconds off.

– A Connecticut Yankee in King Arthur’s Court

“Dr Cowtan’s primary field of research is X-ray crystallography…” Going by the usual shrieks of AGW-ers, doesn’t that disqualify him from pronouncing on climate matters as he “isn’t a climate expert”?

He is published in climate science, as are his co-authors.

Being published is the standard for qualification to opine, Mr. Mosher?

Maybe some day, being correct will be the standard for what makes someone an expert.

Actual railway engineers, people who cannot keep their hands off others, and failed politicians are among the many people with zero qualifications in the area, or in science at all, whose unquestioning support for ‘the cause’ has earned them the right to be considered ‘experts’ in climate ‘science’.

Although, to be fair, given that the standard of science seen in climate ‘science’ is not far from the standard seen from a dead raccoon, this may actually make sense. After all, if all that matters is your ability to produce BS, then it is true that ‘anyone’ can be an expert.

This is really basic.

When you compare the models’ output with observations you have to compare the same thing.

Note I have lodged this complaint many times against people on both sides who do model/observation comparisons.

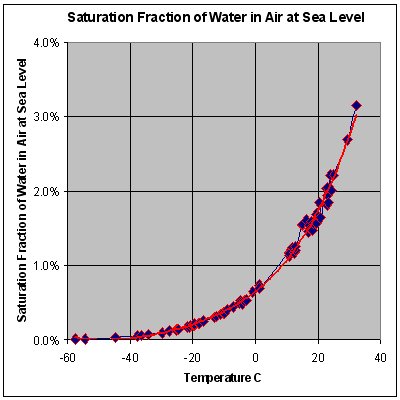

Let’s start with observations:

Observations consist of SAT (surface air temperature taken over land) and SST (ocean temperatures taken just beneath the surface).

Note: this is WHY we call global “averages” indexes, because SAT and SST have been mashed together.

Now you want to compare the model output to this. What the vast majority of people do is they go to the model output and they select t2m or the temperature at 2 meters OF THE AIR!

But this is NOT what the observations are. The observations are the air temp over land and SST (not MAT) over ocean.

So fundamentally Cowtan is doing it the right way: using modelled SST and modelled SAT and comparing that to OBSERVED SST and OBSERVED SAT.
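To make the apples-with-apples point concrete, here is a minimal sketch of the two ways of turning model output into a global index. It is not the method used in the Cowtan paper; the function names, the four-cell toy grid, and every number in it are made up for illustration, and real blending also has to deal with sea ice, coverage masks, and anomaly baselines.

```python
def weighted_mean(values, weights):
    """Area-weighted mean of per-cell values."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


def air_only_index(sat, area):
    """Global index built from 2 m air temperature in every cell (the t2m shortcut)."""
    return weighted_mean(sat, area)


def blended_index(sat, sst, ocean_frac, area):
    """Global index built the way the observational indexes are:
    air temperature over land, sea surface temperature over ocean."""
    blended = [
        f * o + (1.0 - f) * a  # f = fraction of the cell that is ocean
        for a, o, f in zip(sat, sst, ocean_frac)
    ]
    return weighted_mean(blended, area)


# Hypothetical toy grid: two land cells followed by two ocean cells.
# Values are illustrative anomalies in degrees C, not real model output.
sat = [1.0, 1.0, 0.75, 0.75]        # modelled air temperature anomaly per cell
sst = [0.0, 0.0, 0.5, 0.5]          # modelled SST anomaly (ignored over land)
ocean_frac = [0.0, 0.0, 1.0, 1.0]   # 0 = all land, 1 = all ocean
area = [1.0, 1.0, 1.0, 1.0]         # equal-area cells to keep the arithmetic simple

air_only = air_only_index(sat, area)
blended = blended_index(sat, sst, ocean_frac, area)
print("air-only index:", air_only)
print("blended index: ", blended)
```

In this toy case the air over the ocean cells warms more than the water does, so the air-only index comes out higher than the blended one. Only the blended number is the same kind of quantity as an index built from observed SAT and observed SST.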

Which demonstrates- One. More. Time. -that the models are running hot and the only culprit is the CO2 fudge factor. I predict they eventually have to reduce the fudge factor to the point that it will be another few 1000’s of years before the models rise above the natural noise.

Steven Mosher July 30, 2015 at 2:22 pm

This is really basic.

When you compare the models’ output with observations you have to compare the same thing.

==============

Agreed. The exact same rules apply when training. Both the training and the evaluation of the prediction were flawed, since they did not compare the same thing.

But of the two flaws, the training flaw is the more serious, because it means the models must be inherently wrong: not simply that they were evaluated incorrectly, but that their fundamental training was wrong.

Where is the global data set for surface 2m air T above the oceans?

Steve M, the post says, “The climate models use air temperature for the whole globe, whereas the real-world data used by scientists are a combination of air and sea surface temperature readings.”

So are you saying they reran the models using modeled SST?

Here are the HadSST3 SST anomalies:

http://woodfortrees.org/graph/hadsst3gl/from:1974/to:2014