Dr. Roy Spencer proves what we have been saying for years: the USHCN (U.S. Historical Climatology Network) is a mess compounded by a bigger mess of adjustments.

==============================================================

USHCN Surface Temperatures, 1973-2012: Dramatic Warming Adjustments, Noisy Trends

Guest post by Dr. Roy Spencer PhD.

Since NOAA encourages the use of the USHCN station network as the official U.S. climate record, I have analyzed the average [(Tmax+Tmin)/2] USHCN version 2 dataset in the same way I analyzed the CRUTem3 and International Surface Hourly (ISH) data.

The main conclusions are:

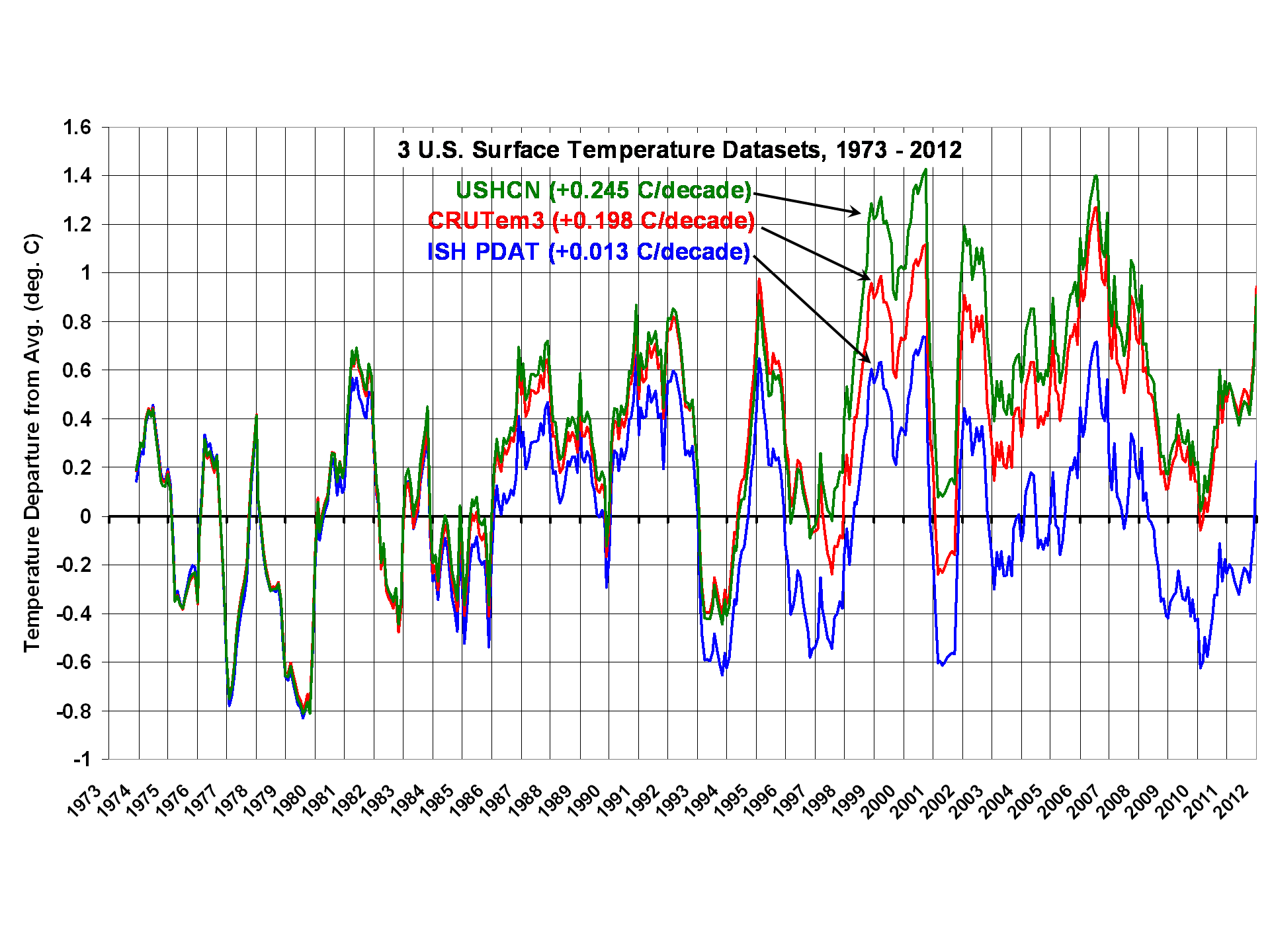

1) The linear warming trend during 1973-2012 is greatest in USHCN (+0.245 C/decade), followed by CRUTem3 (+0.198 C/decade), then my ISH population density adjusted temperatures (PDAT) as a distant third (+0.013 C/decade)

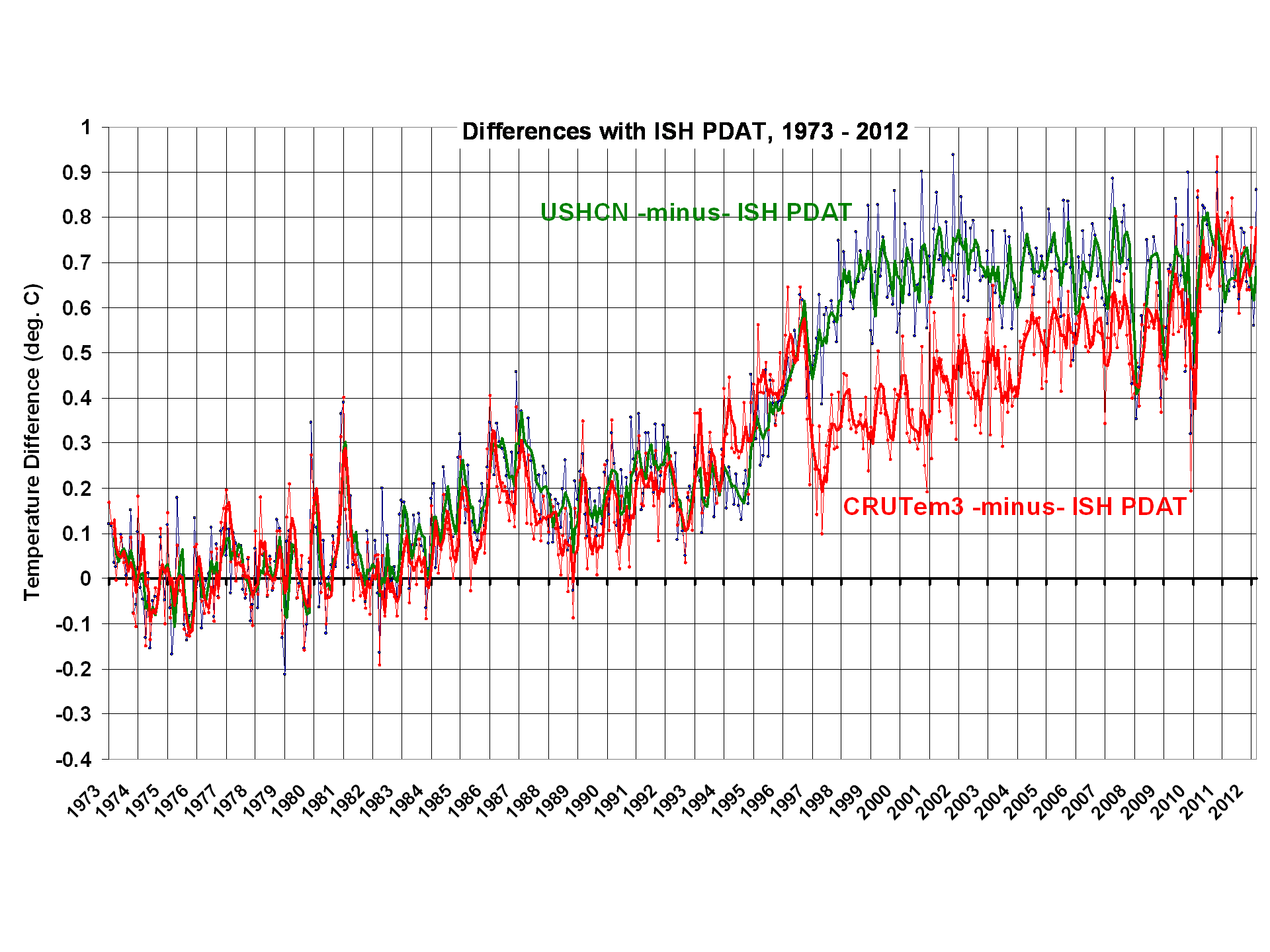

2) Virtually all of the USHCN warming since 1973 appears to be the result of adjustments NOAA has made to the data, mainly in the 1995-97 timeframe.

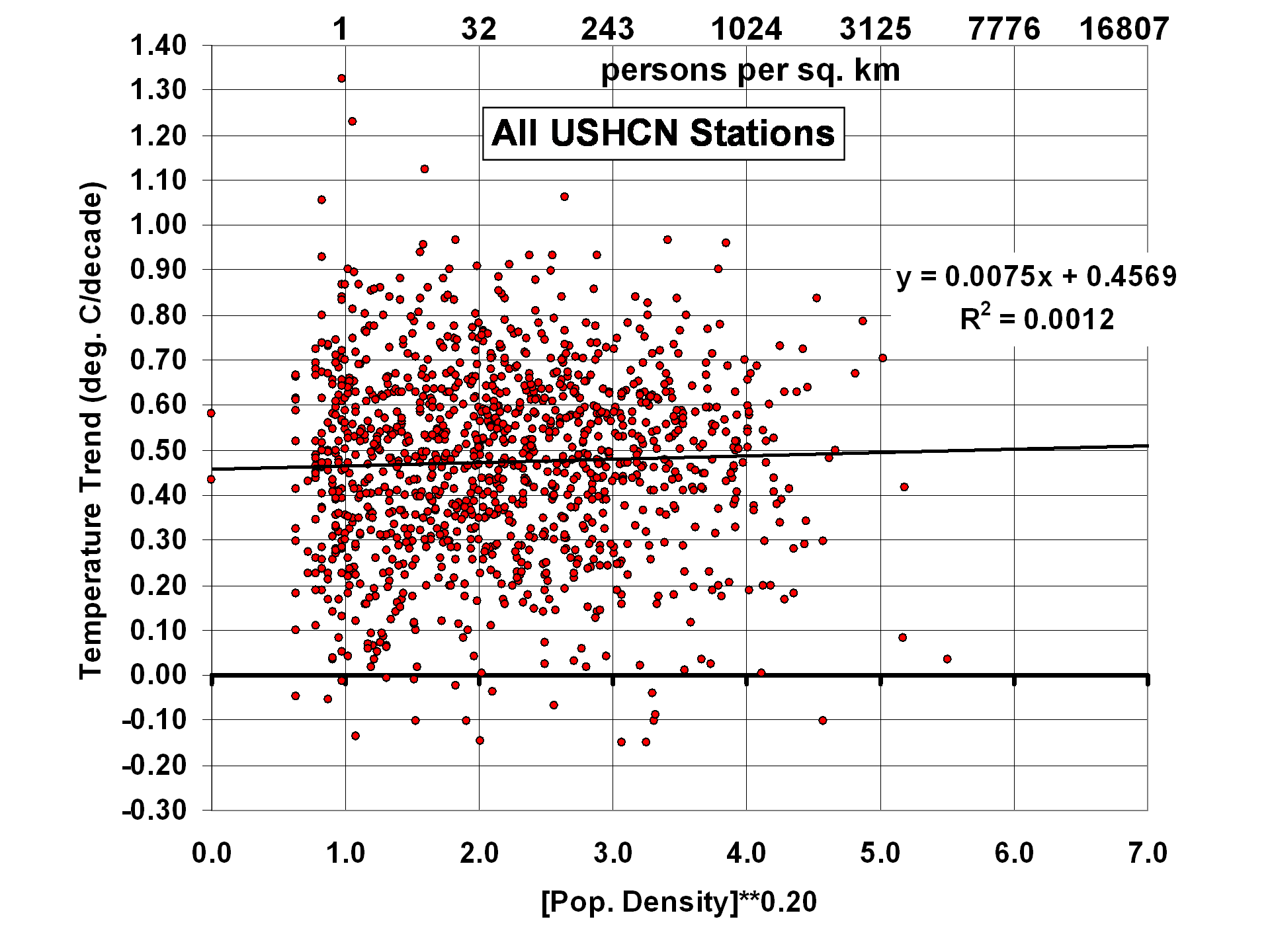

3) While there seems to be some residual Urban Heat Island (UHI) effect in the U.S. Midwest, and even some spurious cooling with population density in the Southwest, for all of the 1,200 USHCN stations together there is little correlation between station temperature trends and population density.

4) Despite homogeneity adjustments in the USHCN record to increase agreement between neighboring stations, USHCN trends are actually noisier than what I get using 4x per day ISH temperatures and a simple UHI correction.

The following plot shows 12-month trailing average anomalies for the three different datasets (USHCN, CRUTem3, and ISH PDAT)…note the large differences in computed linear warming trends:

The next plot shows the differences between my ISH PDAT dataset and the other 2 datasets. I would be interested to hear opinions from others who have analyzed these data which of the adjustments NOAA performs could have caused the large relative warming in the USHCN data during 1995-97:

From reading the USHCN Version 2 description here, it appears there are really only 2 adjustments made in the USHCN Version 2 data which can substantially impact temperature trends: 1) time of observation (TOB) adjustments, and 2) station change point adjustments based upon rather elaborate statistical intercomparisons between neighboring stations. The 2nd of these is supposed to identify and adjust for changes in instrumentation type, instrument relocation, and UHI effects in the data.

We also see in the above plot that the adjustments made in the CRUTem3 and USHCN datasets are quite different after about 1996, although they converge to about the same answer toward the end of the record.
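The 12-month trailing averages and linear trends discussed above can be sketched in a few lines. This is only an illustration of the general technique (a trailing mean and an ordinary least-squares slope expressed in C/decade); the function names and the synthetic series are assumptions made for the example, not the author's code or data.

```python
from statistics import mean

def trailing_mean(values, window=12):
    """Trailing average: each output is the mean of a value and the window-1 values before it."""
    return [mean(values[i - window + 1 : i + 1]) for i in range(window - 1, len(values))]

def trend_per_decade(monthly_anomalies):
    """Ordinary least-squares slope of a monthly series, in degrees C per decade."""
    n = len(monthly_anomalies)
    t = [i / 120.0 for i in range(n)]  # time in decades (120 months per decade)
    t_bar, y_bar = mean(t), mean(monthly_anomalies)
    sxy = sum((ti - t_bar) * (yi - y_bar) for ti, yi in zip(t, monthly_anomalies))
    sxx = sum((ti - t_bar) ** 2 for ti in t)
    return sxy / sxx

# Synthetic series (NOT real station data): 40 years of 0.2 C/decade warming
# plus a repeating 12-month wiggle that the trailing mean averages out.
synthetic = [0.2 * (i / 120.0) + 0.3 * ((i % 12) - 5.5) / 5.5 for i in range(480)]
smoothed = trailing_mean(synthetic)
print(f"trend of smoothed series: {trend_per_decade(smoothed):+.3f} C/decade")
```

Applying the same two functions to each dataset's monthly anomalies would give directly comparable slopes, provided the anomalies share a common base period.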

UHI Effects in the USHCN Station Trends

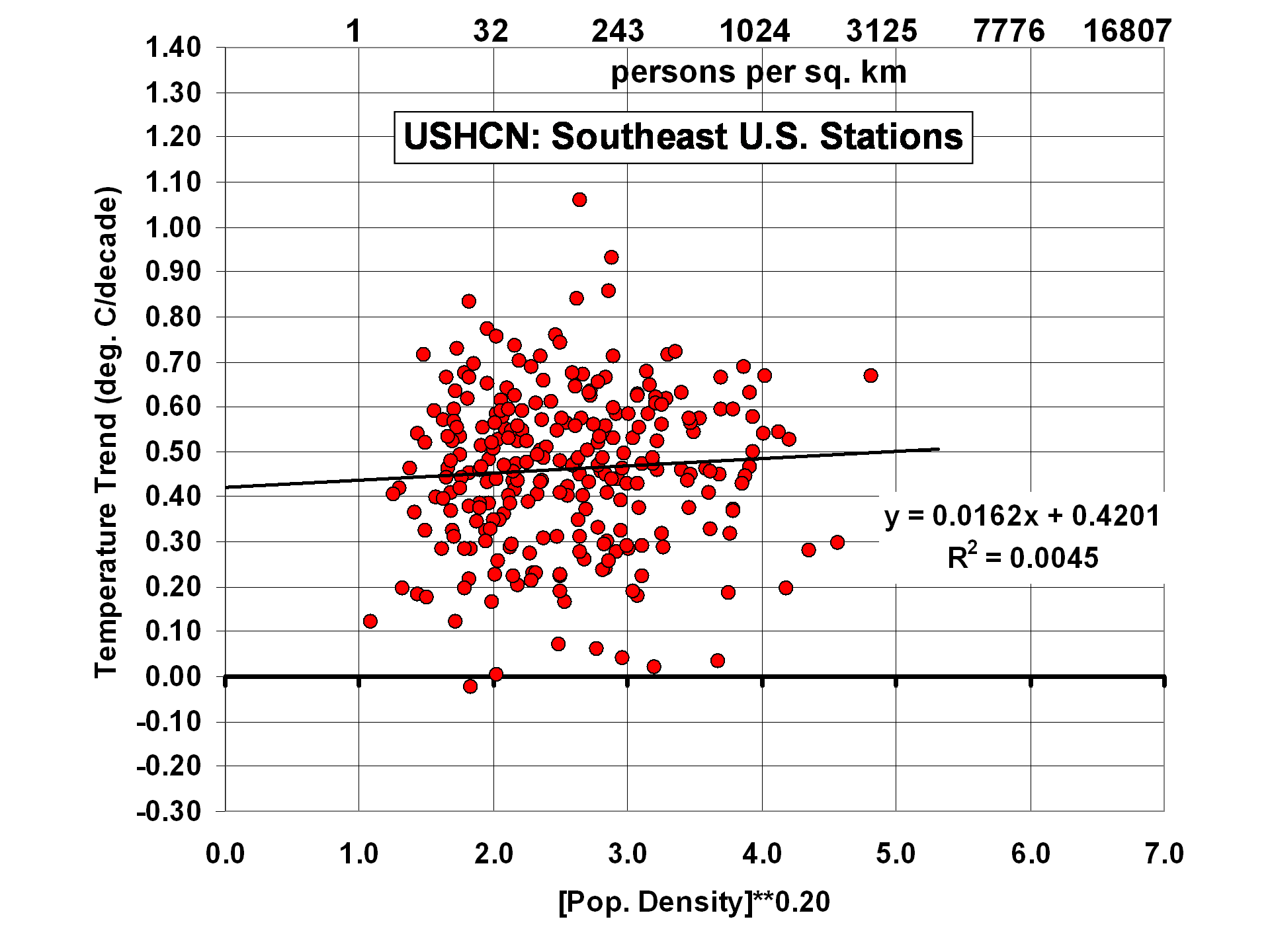

Just as I did for the ISH PDAT data, I correlated USHCN station temperature trends with station location population density. For all ~1,200 stations together, we see little evidence of residual UHI effects:

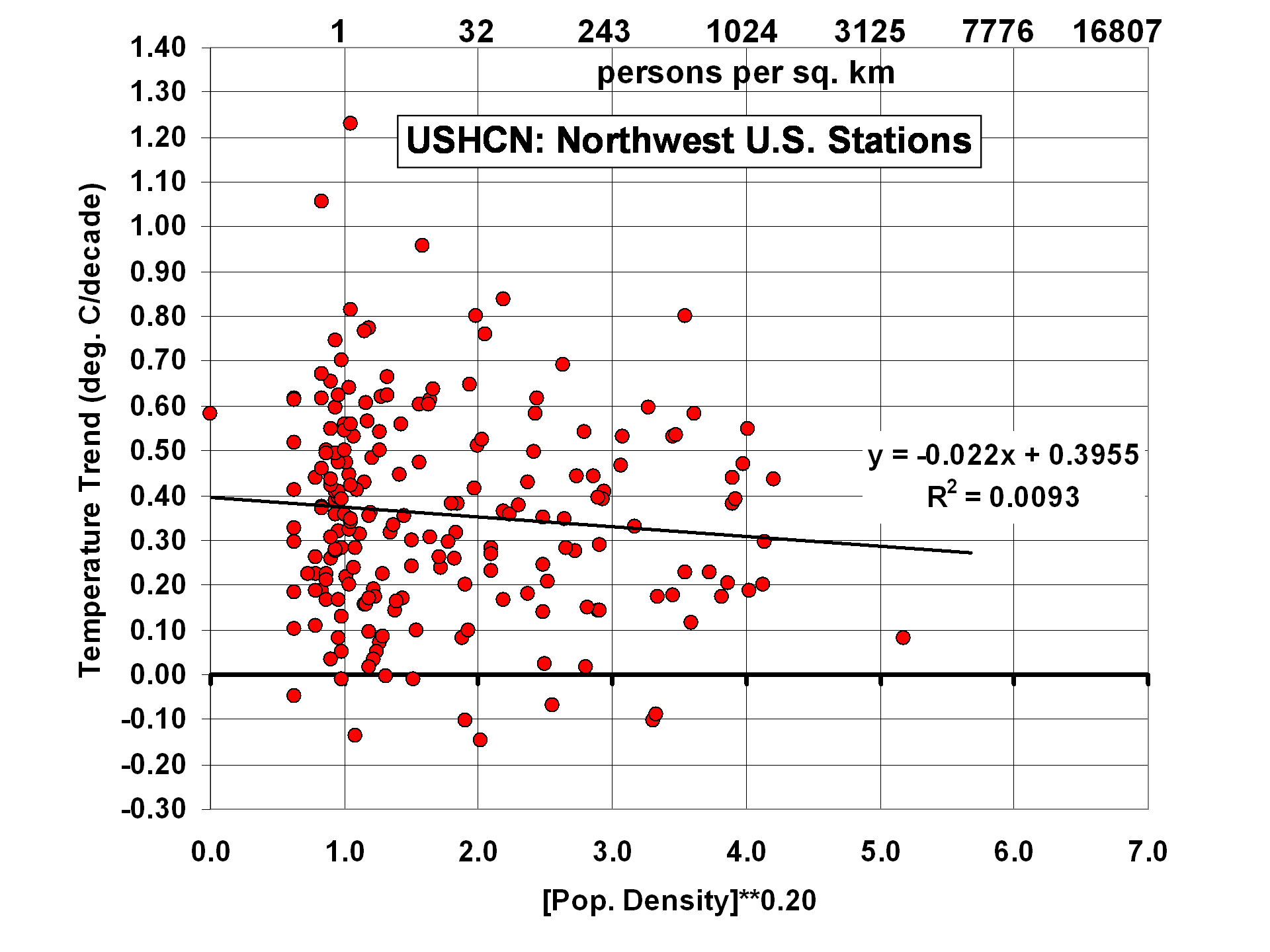

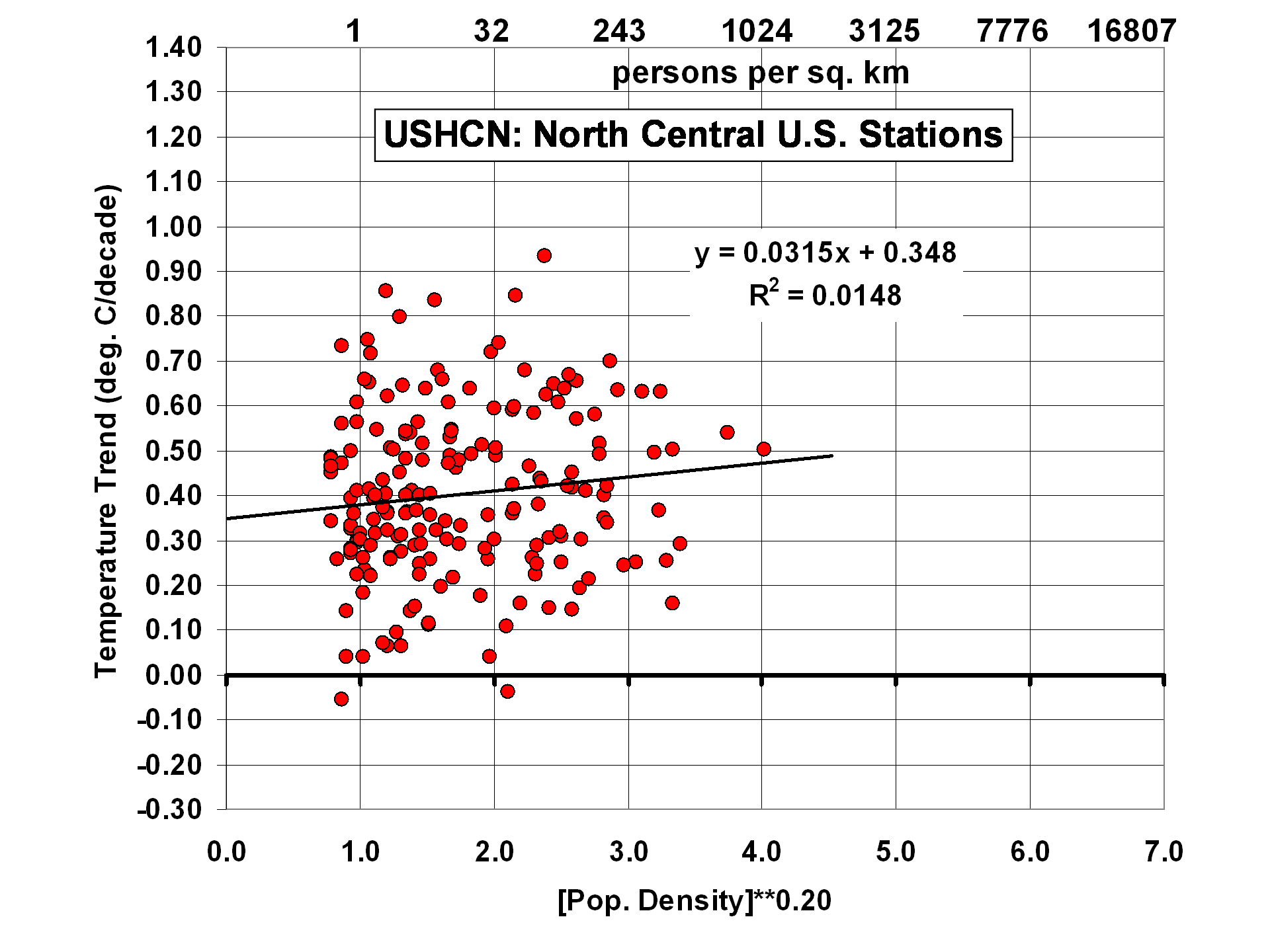

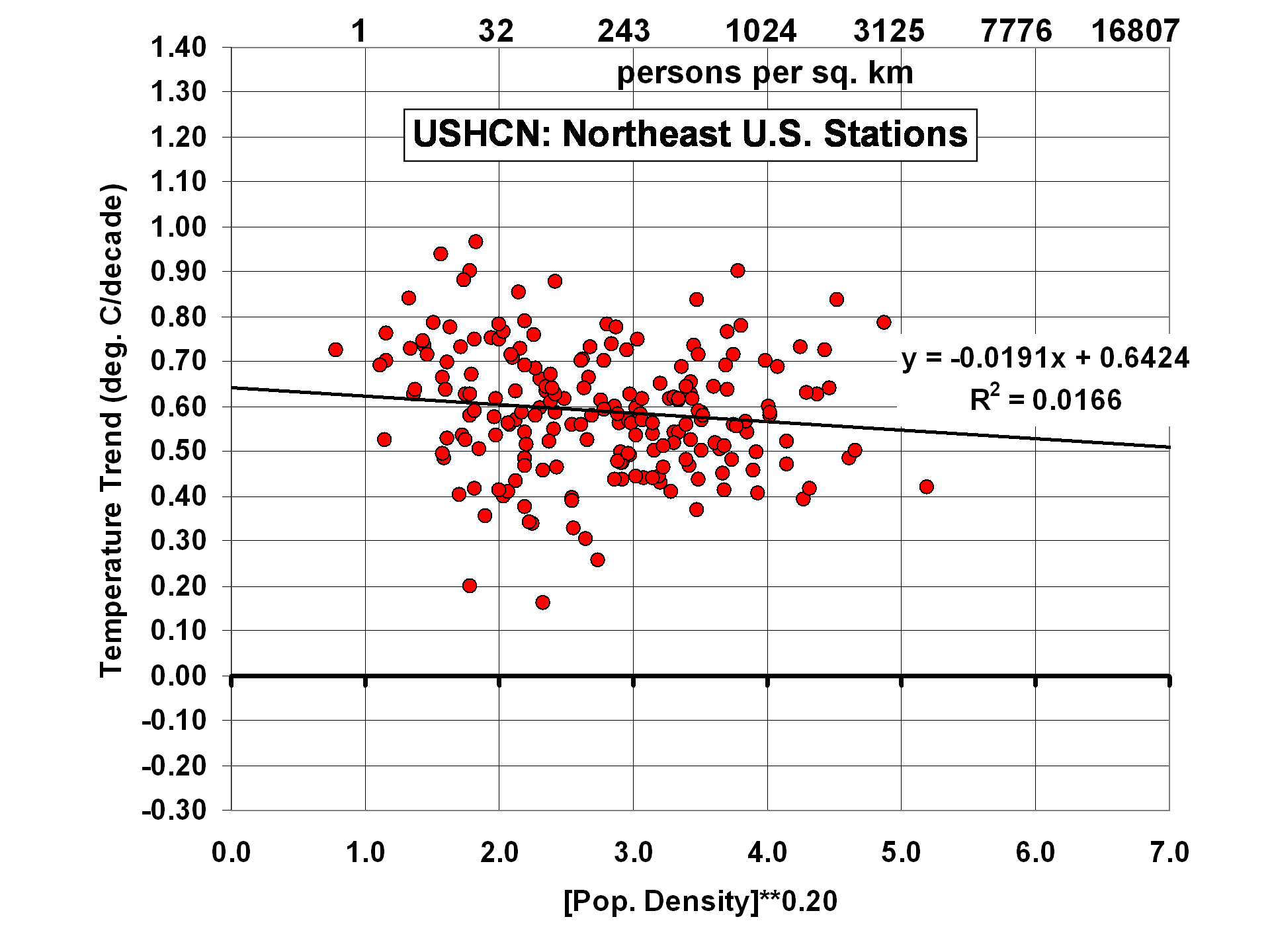

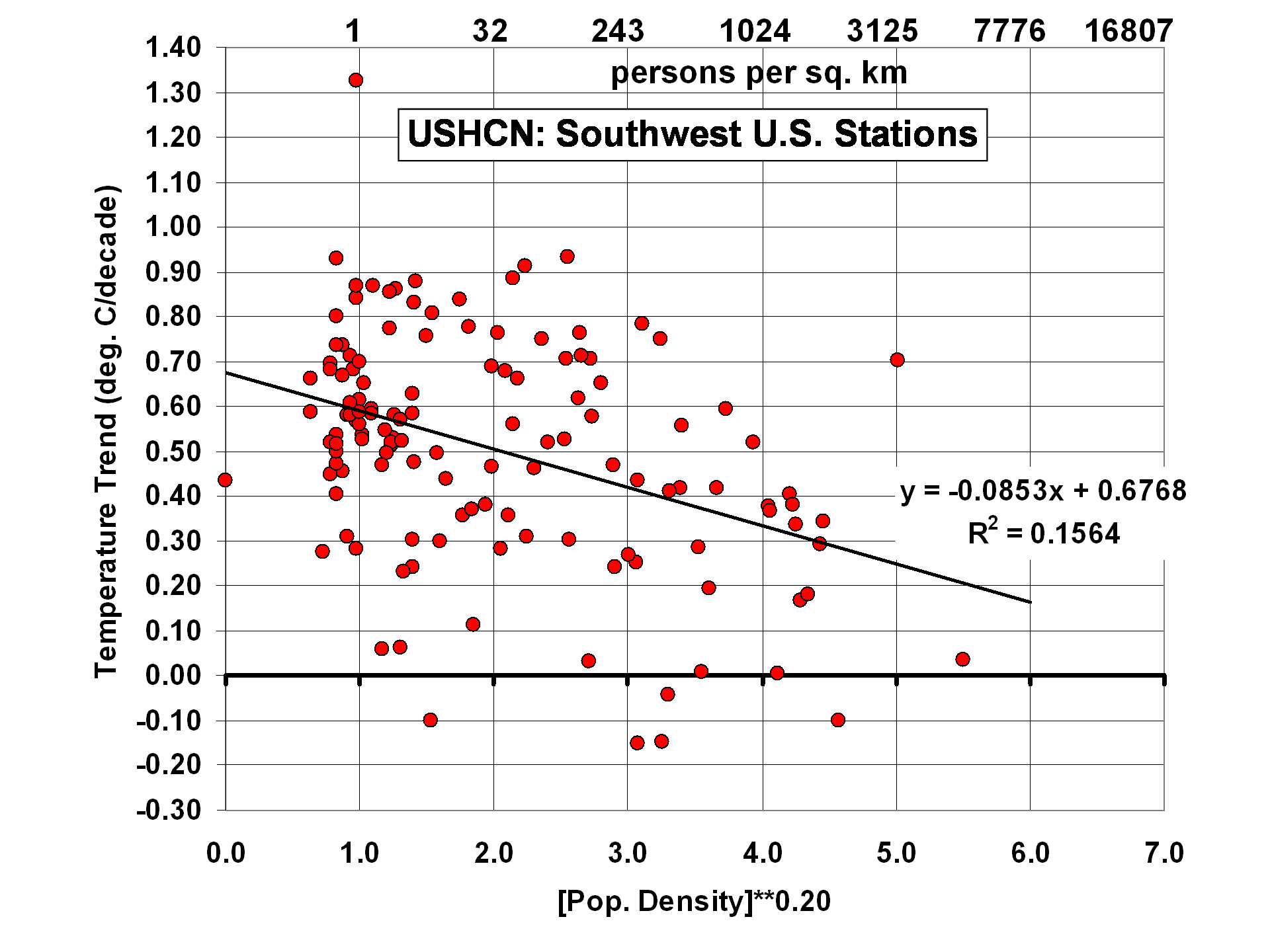

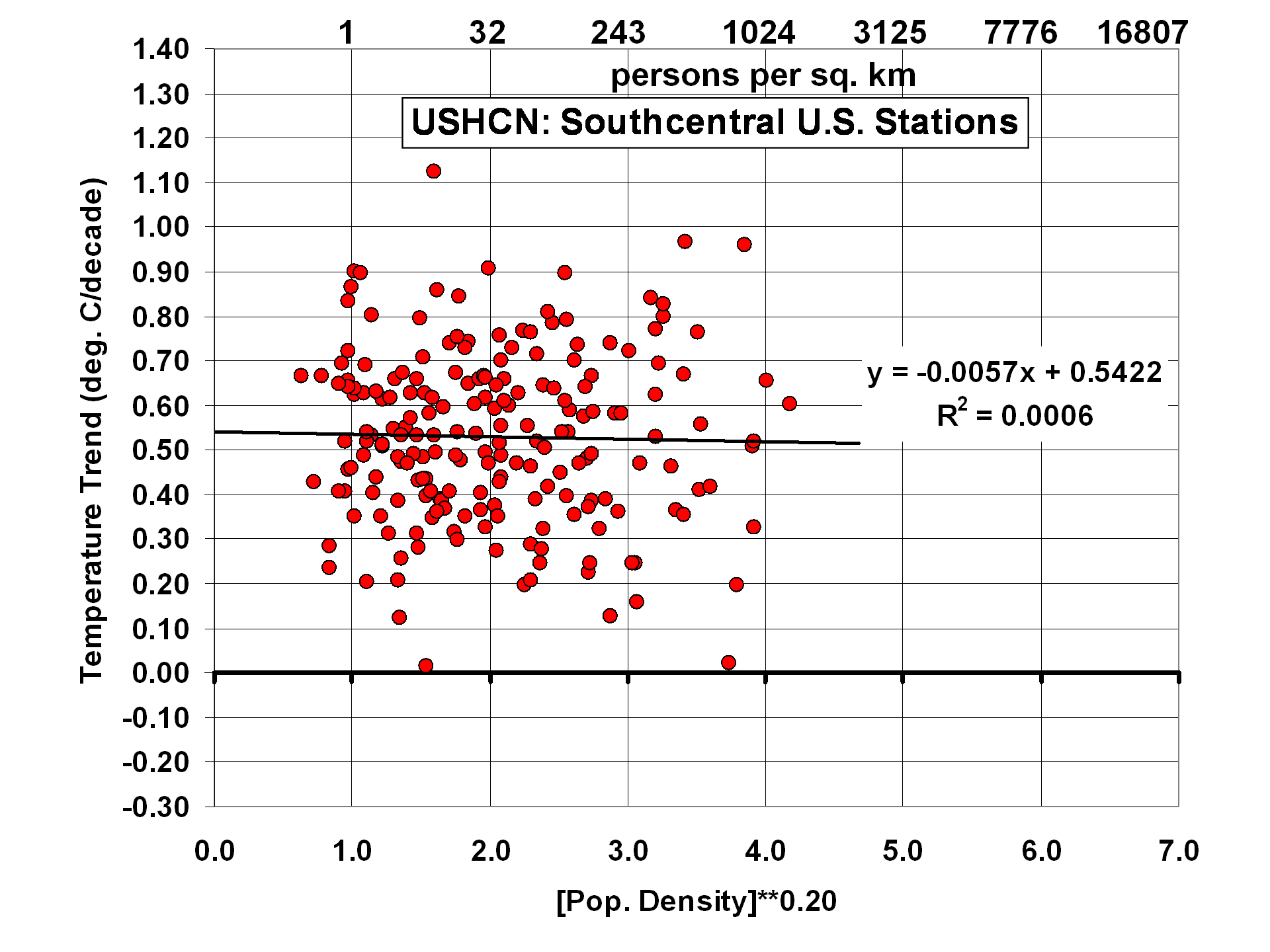

The results change somewhat, though, when the U.S. is divided into 6 subregions:

Of the 6 subregions, the 2 with the strongest residual effects are 1) the North-Central U.S., with a tendency for higher population stations to warm the most, and 2) the Southwest U.S., with a rather strong cooling effect with increasing population density. As I have previously noted, this could be the effect of people planting vegetation in a region which is naturally arid. One would think this effect would have been picked up by the USHCN homogenization procedure, but apparently not.
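The comparison of station trends with population density described in this section can be sketched as a simple correlation. The use of the logarithm of population density, the function name, and the four made-up stations are assumptions for illustration; the post does not say exactly how the correlation was computed.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical stations: (population density in persons per km^2, trend in C/decade).
stations = [(1.0, 0.10), (10.0, 0.20), (100.0, 0.30), (1000.0, 0.40)]

# Assumption: compare trends against log10 of density, since density spans
# several orders of magnitude.
log_density = [math.log10(density) for density, _ in stations]
trends = [trend for _, trend in stations]

r = pearson_r(log_density, trends)
print(f"correlation between log10(density) and trend: {r:+.2f}")
```

A value near zero across all stations, with positive or negative values inside individual subregions, would correspond to the pattern described above.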

Trend Agreement Between Station Pairs

This is where I got quite a surprise. Since the USHCN data have gone through homogeneity adjustments with comparisons to neighboring stations, I fully expected the USHCN trends from neighboring stations to agree better than station trends from my population-adjusted ISH data.

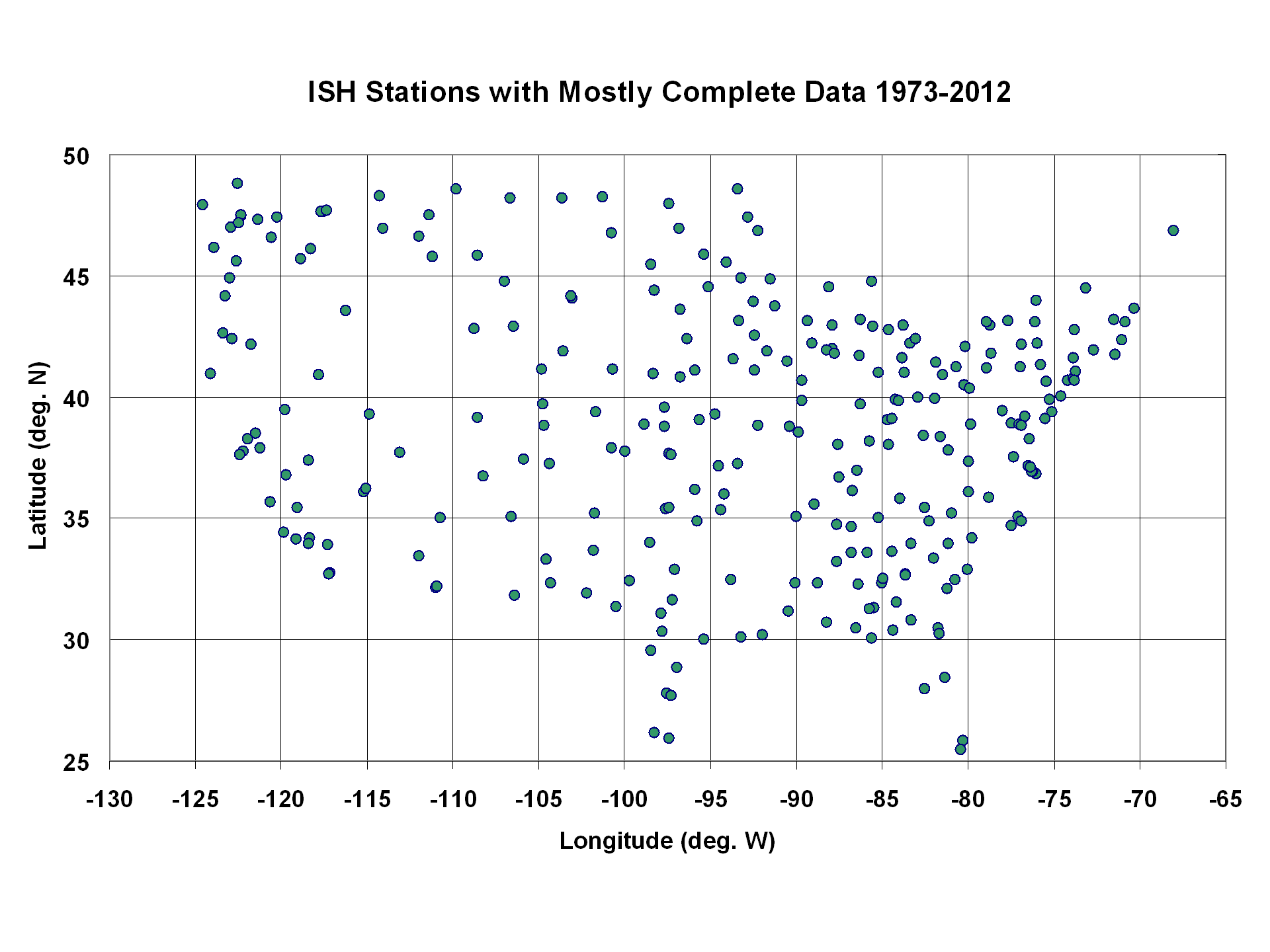

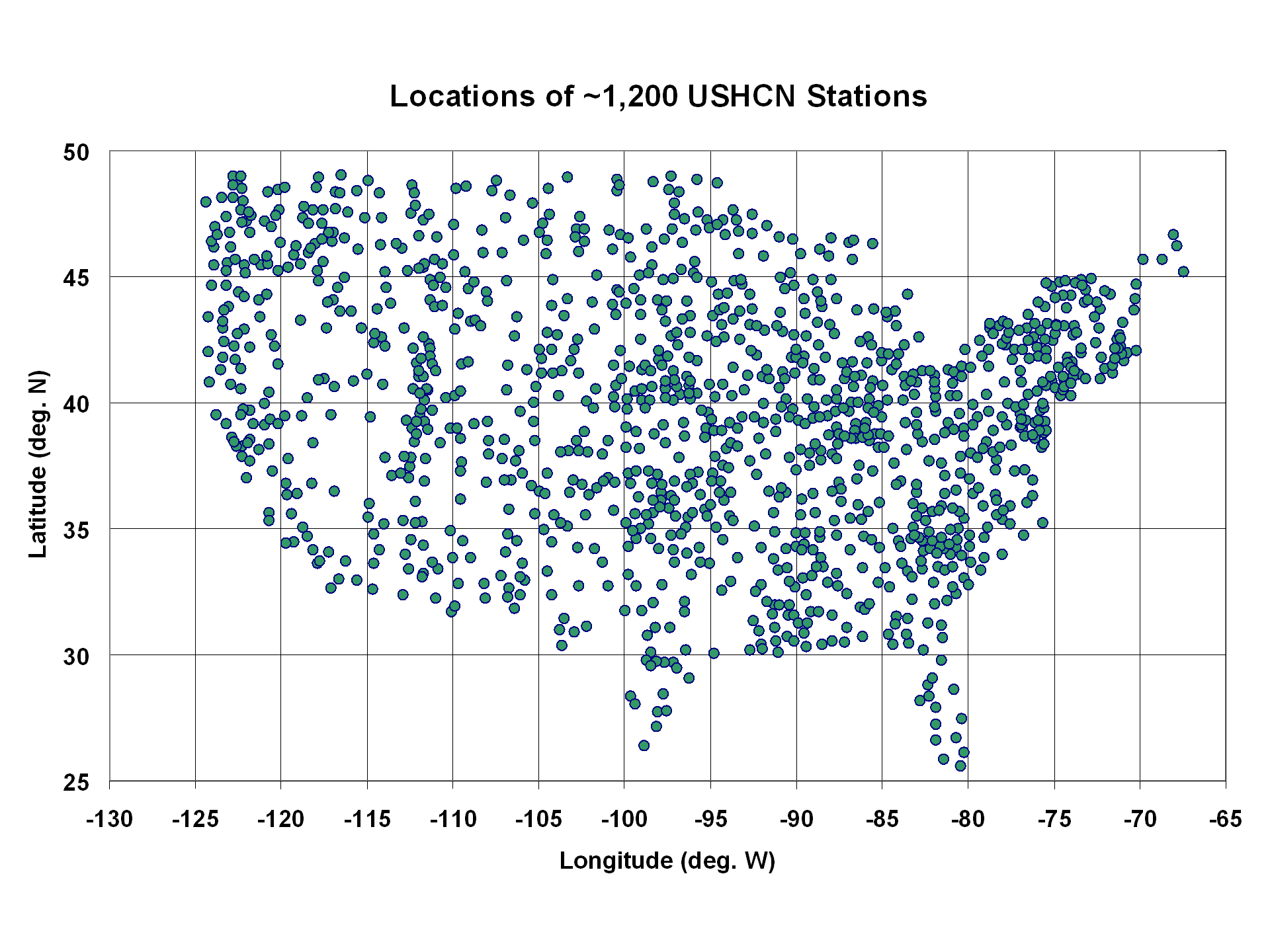

I compared all station pairs within 200 km of each other to get an estimate of their level of agreement in temperature trends. The following 2 plots show the geographic distribution of the ~280 stations in my ISH dataset, and the ~1200 stations in the USHCN dataset:

I took all station pairs within 200 km of each other in each of these datasets, and computed the average absolute difference in temperature trends for the 1973-2012 period across all pairs. The average station separations in the USHCN and ISH PDAT datasets were nearly identical: 133.2 km for the ISH dataset (643 pairs), and 132.4 km for the USHCN dataset (12,453 pairs).

But the ISH trend pairs had about 15% better agreement (avg. absolute trend difference of 0.143 C/decade) than did the USHCN trend pairs (avg. absolute trend difference of 0.167 C/decade).
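The pairwise comparison described above can be sketched as follows. The haversine distance formula, the function names, and the three example stations are assumptions for illustration, not the author's code; only the 200 km cutoff and the two quoted trend differences come from the post.

```python
import math
from itertools import combinations

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, an approximation

def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine great-circle distance between two points, in km."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def pair_agreement(stations, max_km=200.0):
    """stations: list of (lat, lon, trend in C/decade).
    Returns (number of pairs, mean separation in km, mean absolute trend difference)."""
    seps, diffs = [], []
    for (la1, lo1, t1), (la2, lo2, t2) in combinations(stations, 2):
        d = great_circle_km(la1, lo1, la2, lo2)
        if d <= max_km:
            seps.append(d)
            diffs.append(abs(t1 - t2))
    if not seps:
        return 0, float("nan"), float("nan")
    return len(seps), sum(seps) / len(seps), sum(diffs) / len(diffs)

# Hypothetical stations; only the first two are within 200 km of each other.
example = [(40.0, -100.0, 0.10), (41.0, -100.0, 0.30), (45.0, -100.0, 0.20)]
n_pairs, mean_sep_km, mean_abs_diff = pair_agreement(example)

# Relative improvement implied by the two averages quoted in the post.
relative_improvement = (0.167 - 0.143) / 0.167
```

With the quoted averages, `relative_improvement` works out to a little over 14 percent, in line with the "about 15%" figure.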

Given the amount of work NOAA has put into the USHCN dataset to increase the agreement between neighboring stations, I don’t have an explanation for this result. I have to wonder whether their adjustment procedures added more spurious effects than they removed, at least as far as their impact on temperature trends goes.

And I must admit that those adjustments constituting virtually all of the warming signal in the last 40 years is disconcerting. When “global warming” only shows up after the data are adjusted, one can understand why so many people are suspicious of the adjustments.

Anyone who thinks a minus 0.05 degree C adjustment for UHI is adequate need only consider the previous post concerning peak temperatures at airports. Recall as well that even in the early 1950s commercial jets were uncommon.

It isn’t the average correction that matters — that SHOULD be rather small if there are enough rural sites that don’t need it. The size of the correction as a function of urban density, land use change, and so on should, in most cases, increase with urban density and many sitings, and as Roy pointed out, the real correction need not just be “urban” and may not have a uniform sign — land use change can net cool a site by e.g. planting trees and grass and importing irrigation water from rivers far away in a site that properly speaking should be and once was desert.

However, the real test of any such correction is the inversion test I mentioned above (which is one of a family of symmetries one can test for). One can take the original data, subtract your UHI correction, form the now “corrected” sample means, and invert around this. What happens to the temperatures now? One can apply the UHI with the opposite sign (and then invert). The point is that in the end, all of the algorithms must possess an inversion symmetry of some sort or another or they are biased.

rgb

The Future Is Certain; It’s the Past Which Is Constantly Changing

Gail, you bring up a very important point in this debate. On any given day, all temperature readings have immediate natural drivers that are well known and completely unrelated to AGW (except for jet engines, black top, brick walls, BBQ’s, burn barrels, and overturned aluminum boats). A hot day is hot because of local atmospheric conditions which can be traced back to pressure systems and jetstream interplay. Those can be traced back to systems that are created over oceans and then meander their way to land. The creation of those conditions is brought about by the Coriolis effect, Earth’s tilt, land masses, and oceans swirling the pattern of the atmosphere this way and that in a somewhat choreographed dance. A cold day is likewise. Logically, you cannot therefore deduce an AGW signal by gridding up the resulting temperature readings, adding adjustments, data averaging, and plotting.

Straw cannot be spun into gold. Natural temperature readings cannot be spun into another signal of a different nature.

Lance Hilpert says: April 14, 2012 at 7:59 am

The Future Is Certain; It’s the Past Which Is Constantly Changing

…and if you wait about 270 years there is a chance that you can see the past nearly repeating itself.

http://www.vukcevic.talktalk.net/CET1690-1960.htm

Gail, can I just write my comments on a word processor then paste?

I see. You get the same words with no formatting.

Budgenator says:

April 13, 2012 at 9:59 pm

I’m sorry, I know this will seem trollish, but every time I see the good ol’ average = [(Tmax+Tmin)/2], I can’t help but think whoever thought that up wouldn’t have done very well on the TV show “Are You Smarter Than a 5th Grader”.

Budgenator – unfortunately you are so right.

Firstly, atmospheric temperature is NOT a measure of atmospheric heat content.

Note that Nick Stokes did not respond to my proposal that he used the humidity metrics to calculate the enthalpy of the atmosphere at the time of the measurements. This is because the AGW hypothesis fails totally if the correct metric of KiloJoules per atmospheric Kilogram is used.

The AGW hypothesis is that CO2 traps HEAT. CO2 cannot trap TEMPERATURE, so stop measuring temperature and start measuring heat content!!!

The heat content in kJ/kg at each hourly observation should be calculated from the atmospheric humidity and temperature at the time of observation, and then a graph of the atmospheric heat content would show whether there was any ‘heat being trapped’ or not. A cool, humid, misty morning at 60F that turns into a low-humidity 100F afternoon may actually show a totally level heat content profile. These buffoons would have generated a totally meaningless ‘average temperature of 80F’ and then compounded the unreality by averaging all those averages.
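The heat-content calculation proposed above can be sketched with standard psychrometric approximations. Everything here is an assumption made for illustration: the Magnus-type formula for saturation vapor pressure, the textbook constants for moist-air specific enthalpy (per kg of dry air), sea-level pressure, and the two relative humidity values, since the comment gives temperatures but no humidity figures.

```python
import math

def fahrenheit_to_celsius(temp_f):
    return (temp_f - 32.0) * 5.0 / 9.0

def saturation_vapor_pressure_hpa(temp_c):
    """Magnus-type approximation over liquid water, in hPa."""
    return 6.1094 * math.exp(17.625 * temp_c / (temp_c + 243.04))

def moist_air_enthalpy(temp_c, rel_humidity, pressure_hpa=1013.25):
    """Approximate specific enthalpy of moist air, in kJ per kg of dry air.
    rel_humidity is a fraction between 0 and 1."""
    vapor_pressure = rel_humidity * saturation_vapor_pressure_hpa(temp_c)
    # Humidity ratio: kg of water vapor per kg of dry air.
    w = 0.622 * vapor_pressure / (pressure_hpa - vapor_pressure)
    # Sensible heat of dry air plus latent and sensible heat of the vapor.
    return 1.006 * temp_c + w * (2501.0 + 1.86 * temp_c)

# Assumed conditions: saturated 60F morning, very dry (5% RH) 100F afternoon.
h_morning = moist_air_enthalpy(fahrenheit_to_celsius(60.0), 1.00)
h_afternoon = moist_air_enthalpy(fahrenheit_to_celsius(100.0), 0.05)
print(f"morning: {h_morning:.1f} kJ/kg, afternoon: {h_afternoon:.1f} kJ/kg")
```

Whether the two states come out close depends strongly on the afternoon relative humidity chosen, so the result is sensitive to that assumption.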

We appear to be joining the climate ‘scientists’ associated useful idiots and trolls in their deliberate use of incorrect metrics. This must stop.

If they can only think in temperatures then use the ocean temperature – ideally before they have adjusted and overwritten the raw data there as well.

Nick, as sea level and temp rise are not accelerating AT ALL, as both metrics continue to defy climate models by staying below their central estimates, isn’t it becoming obvious that climate sensitivity is between 1.2 and 3 C? What data currently supports your fear that it will become ‘hot’?

Nick Stokes:

In reply to my request for you to justify your unfounded assertion, you reply at April 14, 2012 at 2:33 am saying;

“Richard S Courtney says: April 14, 2012 at 2:06 am

“Really? You know that? How?”

Greenhouse effect. Putting CO2 in the air impedes outgoing IR. Heat accumulates, temperature rises. Flux balance then restored (until more CO2 accumulates).”

Oh dear, No! That is a ‘schoolboy error’.

The atmosphere is a complex system so a change to one parameter (e.g. atmospheric CO2 concentration) alters everything else. You made the assertion that the net effect of all those changes would be to make the world “hot”. I asked you how you could know that. Your answer says you don’t have any reason to suppose that: you merely know of one change that would occur; i.e. “impedance of outgoing IR”.

To date there is no evidence of any kind to support your assertion that you have now demonstrated you cannot justify but which you used as a putative reason to change the economic and energy policies of the entire world.

Read e.g. the above article by Roy Spencer and learn how little evidence there is to support your assertion (hint, there is none).

Richard

Nick Stokes says:

April 13, 2012 at 10:20 pm

Thanks, Nick, that was my point. When you say “I’ll write a blog post”, where will it be posted? I’m interested to read it.

w.

Doc –

“When “global warming” only shows up after the data are adjusted, one can understand why so many people are suspicious of the adjustments.”

That was my general impression from long ago, and I have seen little to disabuse myself of that since. Your take on it makes it more likely to me to be true.

Thanks!

Gail Combs says: April 14, 2012 at 5:28 am

There is also the question of how good the data is during the time of Red China’s “Purges” where up to 80 million were killed.

http://www.paulbogdanor.com/left/china/deaths2.html

Thank you Gail for remembering these tortured millions. Below is an excerpt from that article.

The CAGW scam is not, as many of us originally believed, the innocent errors of a close-knit team of highly dyslexic scientists. The evidence from the ClimateGate emails and many other sources, and the intransigence of these global warming fraudsters when faced with the overwhelming failure of their scientific predictions, suggests much darker motives.

The lesson of Mao, Hitler and Stalin is that one should not trust, and one should never cede power, to those who have no moral compass.

Best regards, Allan

Scholars Continue to Reveal Mao’s Monstrosities

Exiled Chinese historians emerge with evidence of cannibalism and up to 80 million deaths under the communist leader’s regime.

Beth Duff-Brown,

Los Angeles Times,

November 20, 1994

Gong Xiaoxia recalls the blank expression on the man’s face as he was beaten to death by a Chinese mob.

He died without a name, becoming another statistic among millions.

“I remember him so vividly, he really had no expression on his face,” Gong said. “After about 10 or 20 minutes, God knows how long, someone took out a knife and hit him right into the heart.”

He was then strung on a pole and left dangling and rotting for two months.

“I think the most terrible thing, when I recall that period, the most terrible thing that struck me was our indifference,” said Gong, today a 38-year-old graduate student at Harvard researching her own history.

That terrible period was China’s 1966-1976 Cultural Revolution. The blinding indifference was in the name of Chairman Mao Tse-tung and the Communist Party.

Gong is among a new wave of scholars and intellectuals, both Western and Chinese, who believe modern Chinese history needs rewriting.

While the focus of many books and articles today is on China’s successful economic reforms, dramatic new figures for the number of people who died as a result of Mao Tse-tung’s policies are surfacing, along with horrifying proof of cannibalism during the Cultural Revolution.

It is now believed that as many as 60 million to 80 million people may have died because of Mao’s policies, making him responsible for more deaths than Adolf Hitler and Josef Stalin combined.

Gong said killer is not a strong enough word to describe Mao. “He was a monster,” she said.

*******************

OK, I have found a trend line for UAH data set that shows warming of approximately .5 degrees C over the last 30 years.

From listening to Lord Monckton, and from posts by Anthony Watts as I recall them, the question of global warming is not so much “if,” but “how much.” So the question is, say the earth warmed another 1 degree C over the next 60 years, how bad/good is that?

My view is the models are proving themselves to not be worth much. It’s not a surprise, as I think of Climate science as nascent, and perhaps well beyond humans and their tools to really understand for who knows how long. The truth is in the “muck and mire.”

Is it equally futile to attempt to understand what different increases in temperature would mean? What would a 1 degree C increase mean, for instance? I suppose it is a lot easier if you say “Temperatures are going to increase 5 degrees C,” because then major systems would be impacted, and we can all make the case about nasty humans changing mother Gaia earth’s system.

I feel I’m wasting my time trying to understand this stuff. I wish our leaders would take a step back, and re-focus the discussion to something meaningful. I’m tired of hearing how global warming causes an increase in toe fungus.

@Ed Barbar: “I’m tired of hearing how global warming causes an increase in toe fungus.”

Mountains out of molehills, and very likely imaginary molehills. “Cartoon molehills” may be too harsh, but perhaps “creatively rendered molehills” is not.

One of the genuine problems/concerns seems to be that some people are actively engaged in demonizing our society’s very existence. The tone here has slowly increased about how what used to be called “Progress” – and which is now labeled “planet killing” – really and truly has improved life for humankind, spreading a more hygienic, less threatened, better fed, healthier, and more enjoyable kind of life to more and more people. Should that progress be done with a minimum of negative impact? Certainly. But the “let’s all humans commit suicide so that plants and animals can live in peace and harmony” school of thought is pretty much insane.

Skeptics, almost to a man/woman, all know we must not injure the planet beyond a certain point. Much of the alarmist-vs-skeptical argument really stems from our differences in where the point-of-no-return point is. Alarmists believe it is upon us. Skeptics are confused how they can assert such things. The world environmentally was in much worse shape half a century ago, and we’ve done wonders improving it and pushing back any day of reckoning.

We don’t have killer fogs in London anymore, and even Mexico City has gone a long way toward cleaning its air. And China imposed extra export tariffs four years ago or so, to fund cleaning up their air. If São Paulo does the same, it is a good thing. Industrial cities as long ago as 100 years had much worse air and environmental hazards than today. We can’t rest on our laurels, but neither should we shut down what industries support our society.

When toe fungus becomes the most important issue, we will deal with it. Warming, like toe fungus, is far from our most pressing issue. When it DOES become important, we won’t need screamers like James Hansen to tell us – it will be when our environment is worse off than 1910 and 1960 – and that will not be for a very long time, if ever. We showed in the 1970s and 1980s that we can fix what we screwed up. Since then nothing has happened to make us drop everything else and fix warming.

Ed Barbar says: April 14, 2012 at 10:31 am

OK, I have found a trend line for UAH data set that shows warming of approximately .5 degrees C over the last 30 years.

_____________

Ed – please see my above post.

There is probably NO trend if you look at a FULL PDO cycle rather than the warming HALF, as you have done.

Weather satellites only went up in 1979, about the start of the recent warming.

There was global cooling from ~1940 to 1975. The Surface Temperature data from that time is also probably warm-biased by UHI, etc.

What? a free google blogger with some graphs and number with a broken link called “document repository”? what do you want to prove with that?

Here is a more useful link. IMHO.

http://www.retrojunk.com/tv/quotes/343-spongebob-squarepants/

@Evan Jones

Think about the difference between measuring the temperature 20 times a day at one station vs. once per day at 20 nearby stations. Each reading has a 0.5 degree precision. Which is more accurate? Now think of measuring one station once. The precision of the measurements remains 0.5 degrees no matter how many times they are read.

What you can say about the multiple readings is that the Mean is known with more confidence. It says nothing about the accuracy. They might all be quite inaccurate. Multiple readings don’t improve precision or accuracy as they remain properties of the measurement system and directly give the error bars that have to be placed around a more or less well known Mean.

You can quickly see the meaningfulness of saying the ConUS temp went up 0.161 plus minus 0.5 degrees.
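The distinction drawn above between confidence in the mean and accuracy of the instrument can be illustrated with a small simulation. All numbers here are made up for the sketch: a hypothetical true temperature, a fixed instrument bias, random noise, and readings rounded to the nearest 0.5 degree. The function names are likewise assumptions.

```python
import random
import statistics

def standard_error(readings):
    """Standard error of the mean: sample standard deviation divided by sqrt(n)."""
    return statistics.stdev(readings) / len(readings) ** 0.5

def simulate_readings(n, true_temp=20.0, bias=0.3, noise_sd=0.5, resolution=0.5, seed=0):
    """Hypothetical thermometer: fixed bias, Gaussian noise, readings rounded to `resolution`."""
    rng = random.Random(seed)
    return [
        round((true_temp + bias + rng.gauss(0.0, noise_sd)) / resolution) * resolution
        for _ in range(n)
    ]

few = simulate_readings(20)
many = simulate_readings(2000)

# More readings shrink the standard error of the mean...
print(f"n=20:   mean={statistics.mean(few):.2f}, SE={standard_error(few):.3f}")
print(f"n=2000: mean={statistics.mean(many):.2f}, SE={standard_error(many):.3f}")
# ...but the mean converges on true_temp + bias, not on true_temp:
# averaging does nothing to remove a systematic offset.
```

In this sketch the scatter of individual readings stays about the same regardless of sample size; only the uncertainty of the computed mean changes.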

Willis Eschenbach says: April 14, 2012 at 9:42 am

“Thanks, Nick, that was my point. When you say “I’ll write a blog post”, where will it be posted? I’m interested to read it.”

Thanks, Willis. It’s here.

Chuck Nolan says:

April 14, 2012 at 8:40 am

Gail, can I just write my comments on a word processor then paste?

_______________________

Yes, at least I do it with Ubuntu, LibreOffice, and Firefox or Opera. Makes editing much easier. You can use WUWT test to see if the HTML markup is OK.

“Nick Stokes says:

April 14, 2012 at 5:57 am

Yes, water vapor feedback amplifies the temperature increase due to any forcing.”

That’s like saying when I bake bread, the water in the bread which turns into water vapor increases the oven temperature from 375 to 380. I have my doubts.

You are telling me that the water vapor in Shreveport, LA is making it hotter than Yuma, AZ. Both cities have about the same population, are along the same altitude and 90-some miles from a large body of water.

http://www.climate-zone.com/climate/united-states/louisiana/shreveport/

http://www.climate-zone.com/climate/united-states/arizona/yuma/

Crispin in Johannesburg says: April 14, 2012 at 2:05 pm

Think about the difference between measuring the temperature 20 times a day at one station vs. once per day at 20 nearby stations. Each reading has a 0.5 degree precision. Which is more accurate? Now think of measuring one station once. The precision of the measurements remains 0.5 degrees no matter how many times they are read….

What you can say about the multiple readings is that the Mean is known with more confidence. It says nothing about the accuracy…..

_____________________________________

Actually it is WORSE. I look at it the same way I would for sampling a batch or continuous process in industry.

Your best accuracy/precision (tightest distribution) is multiple samples from several different points in a well mixed batch, with testing performed by the same TRAINED tech with the same CALIBRATED equipment. This gives you the best shot of getting something close to the “true value”.

As soon as you add another tech the distribution is not going to be as tight (larger standard deviation), add different equipment and again the standard deviation increases. Add different batches, different mix equipment, different plants and different raw material lots and the distribution becomes wider and wider. I really do not care how many samples you take that standard deviation is going to reflect the differences in equipment and technicians as added error. At this point coming up with the “true value” of say the average mgs of codeine per gram of liquid in cough syrups on the shelf in the USA, by just using batch test records from selected manufacturers gets a lot harder and the standard deviation wider. This is what I see as the equivalent to what climate scientists are trying to do with temperature.

The 95% confidence interval is when 95% of the data is within 1.96 standard deviations of the mean. Normally plus or minus 2 standard deviations are used. (At least in chemistry.)
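The widening described above, where pooling results from different technicians or equipment increases the standard deviation, can be shown with a toy example. The two sets of readings are invented for illustration: each technician is internally consistent, but the second reads about 0.5 units higher than the first.

```python
import statistics

# Hypothetical assay results for the same material from two technicians.
tech_a = [9.9, 10.0, 10.1]
tech_b = [10.4, 10.5, 10.6]
pooled = tech_a + tech_b

within_a = statistics.stdev(tech_a)   # spread within one technician's results
within_b = statistics.stdev(tech_b)
pooled_sd = statistics.stdev(pooled)  # spread once the results are combined

def interval_95(values):
    """Mean plus or minus 1.96 sample standard deviations (assumes roughly normal data)."""
    m = statistics.mean(values)
    s = statistics.stdev(values)
    return m - 1.96 * s, m + 1.96 * s

low, high = interval_95(pooled)
print(f"within-tech SD: {within_a:.2f}, pooled SD: {pooled_sd:.2f}")
print(f"pooled 95% interval: {low:.2f} to {high:.2f}")
```

The pooled spread reflects the offset between the two technicians as well as their individual scatter, which is the effect the comment describes.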

Urederra says: April 14, 2012 at 12:06 pm

‘What? a free google blogger with some graphs and number with a broken link called “document repository”?’

Well, Roy, Anthony, me, we’re all bloggers. Paying a hosting fee doesn’t guarantee validity. The post you read is about two years old, and drop.io has gone out of business. But the repository with the code is still available – just look at “Document Store” in the resources list top right.

But it’s not just me. Lots of people have studied the global temp record. Not just a few counties in California.

Ian W says: April 14, 2012 at 9:10 am

“Note that Nick Stokes did not respond to my proposal that he used the humidity metrics to calculate the enthalpy of the atmosphere at the time of the measurements.”

No, because it is OT. This post is about temperature metrics. Why not suggest to Roy and Anthony that they use humidity? Then we could compare.

feet2thefire says: April 14, 2012 at 11:19 am

“When toe fungus becomes the most important issue, we will deal with it.”

feet2thefire?

Nick Stokes says:

April 14, 2012 at 5:07 pm

Ian W says: April 14, 2012 at 9:10 am

“Note that Nick Stokes did not respond to my proposal that he used the humidity metrics to calculate the enthalpy of the atmosphere at the time of the measurements.”

No, because it is OT. This post is about temperature metrics. Why not suggest to Roy and Anthony that they use humidity? Then we could compare.

It is NOT off topic – and if you bothered to read the posts that I made I said:

“We appear to be joining the climate ‘scientists’ associated useful idiots and trolls in their deliberate use of incorrect metrics. This must stop.”

Atmospheric temperature metrics do not measure the amount of heat ‘trapped’ by the ‘green house effect’. So everyone can discuss temperature metrics as much as they like – they are the incorrect metric.

I am sorry if you don’t understand the concept of enthalpy – you should really learn what it means. Then you might not be parading your ignorance quite as loudly.
