by Willis Eschenbach

People keep saying “Yes, the Climategate scientists behaved badly. But that doesn’t mean the data is bad. That doesn’t mean the earth is not warming.”

Darwin Airport – by Dominic Perrin via Panoramio

Let me start with the second objection first. The earth has generally been warming since the Little Ice Age, around 1650. There is general agreement that the earth has warmed since then. See e.g. Akasofu. Climategate doesn’t affect that.

The other question, the integrity of the data, is different. People say “Yes, they destroyed emails, and hid from Freedom of Information Act requests, and messed with proxies, and fought to keep other scientists’ papers out of the journals … but that doesn’t affect the data, the data is still good.” Which sounds reasonable.

There are three main global temperature datasets. One is at the CRU, the Climatic Research Unit of the University of East Anglia, where we’ve been trying to get access to the raw numbers. One is at NOAA/GHCN, the Global Historical Climatology Network. The final one is at NASA/GISS, the Goddard Institute for Space Studies. The three groups take raw data, and they “homogenize” it to remove things like when a station was moved to a warmer location and there’s a 2C jump in the temperature. The three global temperature records are usually called CRU, GISS, and GHCN. Both GISS and CRU, however, get almost all of their raw data from GHCN. All three produce very similar global historical temperature records from the raw data.

So I’m still on my multi-year quest to understand the climate data. You never know where this data chase will lead. This time, it has landed me in Australia. I got to thinking about Professor Wibjorn Karlen’s statement about Australia that I quoted here:

Another example is Australia. NASA [GHCN] only presents 3 stations covering the period 1897-1992. What kind of data is the IPCC Australia diagram based on?

If any trend it is a slight cooling. However, if a shorter period (1949-2005) is used, the temperature has increased substantially. The Australians have many stations and have published more detailed maps of changes and trends.

The folks at CRU told Wibjorn that he was just plain wrong. Here’s what they said is right, the record that Wibjorn was talking about, Fig. 9.12 in the UN IPCC Fourth Assessment Report, showing Northern Australia:

Figure 1. Temperature trends and model results in Northern Australia. Black line is observations (from Fig. 9.12 of the UN IPCC Fourth Assessment Report). Covers the area from 110E to 155E, and from 30S to 11S. Based on the CRU land temperature record. Data from the CRU.

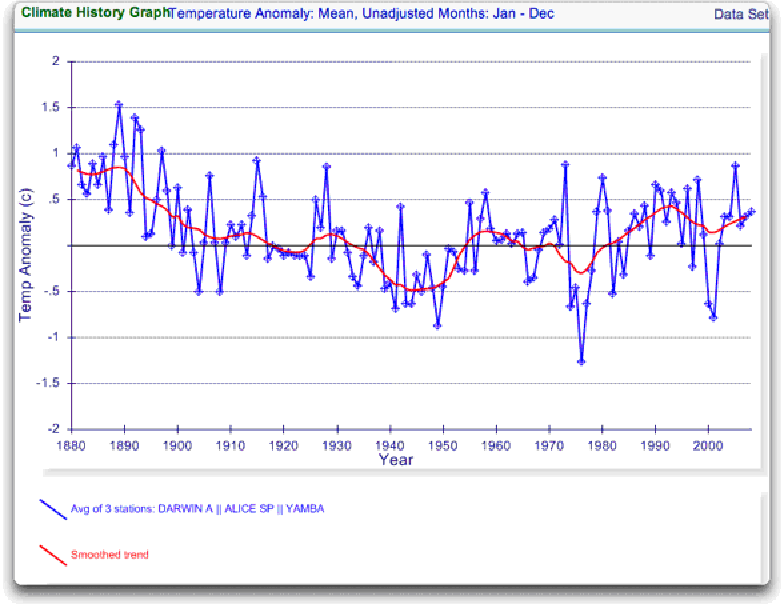

One of the things that was revealed in the released CRU emails is that the CRU basically uses the Global Historical Climatology Network (GHCN) dataset for its raw data. So I looked at the GHCN dataset. There, I find three stations in North Australia as Wibjorn had said, and nine stations in all of Australia, that cover the period 1900-2000. Here is the average of the GHCN unadjusted data for those three Northern stations, from AIS:

Figure 2. GHCN Raw Data, All 100-yr stations in IPCC area above.

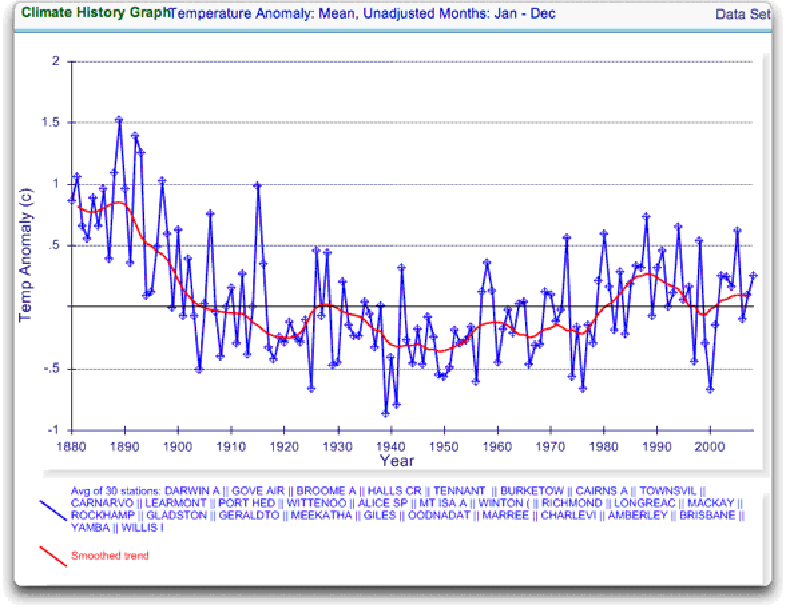

So once again Wibjorn is correct; this looks nothing like the corresponding IPCC temperature record for Australia. But it’s too soon to tell. Professor Karlen is only showing 3 stations. Three is not a lot of stations, but that’s all of the century-long Australian records we have in the IPCC specified region. OK, we’ve seen the longest station records, so let’s throw more records into the mix. Here’s every station in the UN IPCC specified region which contains temperature records that extend up to the year 2000 no matter when they started, which is 30 stations.

Figure 3. GHCN Raw Data, All stations extending to 2000 in IPCC area above.

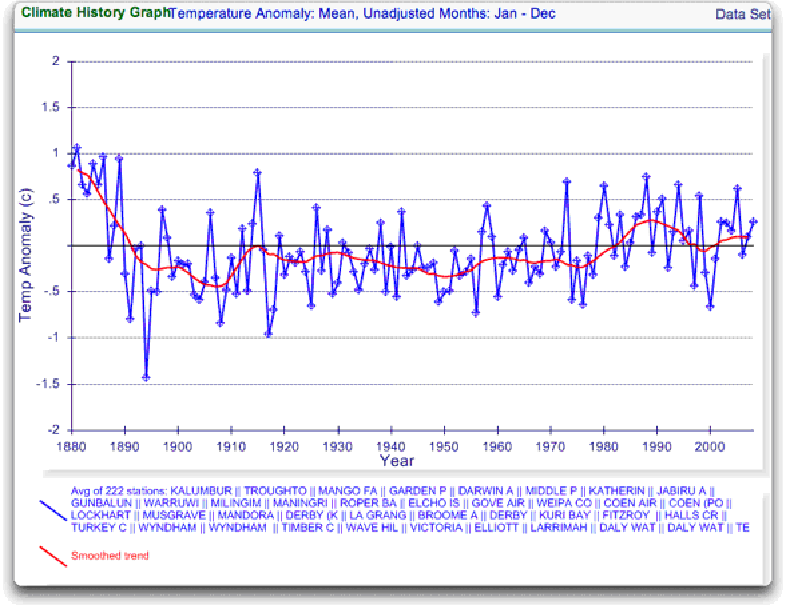
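To make “averaging the stations” concrete, here is a minimal Python sketch of one common way to combine records that start and stop in different years: convert each record to anomalies from its own mean, then average whichever stations report in each year. This is an assumed method for illustration, not a description of how AIS or GHCN computes its averages; the function names are hypothetical, and each record is a plain `{year: annual mean °C}` dictionary.

```python
from statistics import mean


def station_anomalies(record):
    """Convert a {year: temperature} record into anomalies from its own mean.

    Simplification: each station uses its own full-period mean as its
    baseline, so stations covering different periods are not on an
    identical baseline.
    """
    baseline = mean(record.values())
    return {year: temp - baseline for year, temp in record.items()}


def average_records(records):
    """Average anomalies across stations, year by year.

    A station with no value in a given year is skipped for that year, so
    the number of contributing stations can change from year to year.
    """
    by_year = {}
    for record in records:
        for year, anomaly in station_anomalies(record).items():
            by_year.setdefault(year, []).append(anomaly)
    return {year: mean(values) for year, values in sorted(by_year.items())}


# Two hypothetical stations that only partly overlap in time.
station_a = {1900: 28.0, 1901: 29.0}
station_b = {1901: 25.0, 1902: 27.0}
combined = average_records([station_a, station_b])
```

Averaging raw temperatures instead of anomalies would make the combined series jump whenever a warmer or cooler station enters or leaves the mix, so the choice of method matters when comparing such averages against the IPCC curve.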

Still no similarity with IPCC. So I looked at every station in the area. That’s 222 stations. Here’s that result:

Figure 4. GHCN Raw Data, All stations in IPCC area above.

So you can see why Wibjorn was concerned. This looks nothing like the UN IPCC data, which came from the CRU, which was based on the GHCN data. Why the difference?

The answer is, these graphs all use the raw GHCN data. But the IPCC uses the “adjusted” data. GHCN adjusts the data to remove what it calls “inhomogeneities”. So on a whim I thought I’d take a look at the first station on the list, Darwin Airport, so I could see what an inhomogeneity might look like when it was at home. And I could find out how large the GHCN adjustment for Darwin inhomogeneities was.

First, what is an “inhomogeneity”? I can do no better than quote from GHCN:

Most long-term climate stations have undergone changes that make a time series of their observations inhomogeneous. There are many causes for the discontinuities, including changes in instruments, shelters, the environment around the shelter, the location of the station, the time of observation, and the method used to calculate mean temperature. Often several of these occur at the same time, as is often the case with the introduction of automatic weather stations that is occurring in many parts of the world. Before one can reliably use such climate data for analysis of longterm climate change, adjustments are needed to compensate for the nonclimatic discontinuities.

That makes sense. The raw data will have jumps from station moves and the like. We don’t want to think it’s warming just because the thermometer was moved to a warmer location. Unpleasant as it may seem, we have to adjust for those as best we can.

I always like to start with the rawest data, so I can understand the adjustments. At Darwin there are five separate individual station records that are combined to make up the final Darwin record. These are the individual records of stations in the area, which are numbered from zero to four:

Figure 5. Five individual temperature records for Darwin, plus station count (green line). This raw data is downloaded from GISS, but GISS uses the GHCN raw data as the starting point for its analysis.

Darwin does have a few advantages over other stations with multiple records. There is a continuous record from 1941 to the present (Station 1). There is also a continuous record covering a century. Finally, the stations are in very close agreement over the entire period of the record. In fact, where there are multiple stations in operation, they are so close that you can’t see the records behind Station Zero.
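“Very close agreement” can be put into numbers. A minimal sketch, assuming each record is a `{year: annual mean °C}` dictionary (the function name and the values are hypothetical): over the years two records share, report the mean difference and the largest single-year difference. A mean difference near zero with a small maximum is what “you can’t see the records behind Station Zero” looks like numerically.

```python
def overlap_stats(record_a, record_b):
    """Compare two {year: temperature} records over the years they share.

    Returns (number of shared years, mean difference a - b,
    largest absolute single-year difference).
    Raises ValueError if the records never overlap.
    """
    shared = sorted(set(record_a) & set(record_b))
    if not shared:
        raise ValueError("records do not overlap")
    diffs = [record_a[year] - record_b[year] for year in shared]
    return len(shared), sum(diffs) / len(diffs), max(abs(d) for d in diffs)


# Hypothetical values, for illustration only.
zero = {1941: 28.1, 1942: 28.3, 1943: 28.0}
one = {1942: 28.2, 1943: 28.0, 1944: 27.9}
n_shared, mean_diff, max_diff = overlap_stats(zero, one)
```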

This is an ideal station, because it also illustrates many of the problems with the raw temperature station data.

- There is no one record that covers the whole period.

- The shortest record is only nine years long.

- There are gaps of a month and more in almost all of the records.

- It looks like there are problems with the data at around 1941.

- Most of the datasets are missing months.

- For most of the period there are few nearby stations.

- There is no one year covered by all five records.

- The temperature dropped over a six year period, from a high in 1936 to a low in 1941. The station did move in 1941 … but what happened in the previous six years?

In resolving station records, it’s a judgment call. First off, you have to decide if what you are looking at needs any changes at all. In Darwin’s case, it’s a close call. The record seems to be screwed up around 1941, but not in the year of the move.

Also, although the 1941 temperature shift seems large, I see a similar-sized shift from 1992 to 1999. Looking at the whole picture, I think I’d vote to leave it as it is; that’s always the best option when you don’t have other evidence. First do no harm.

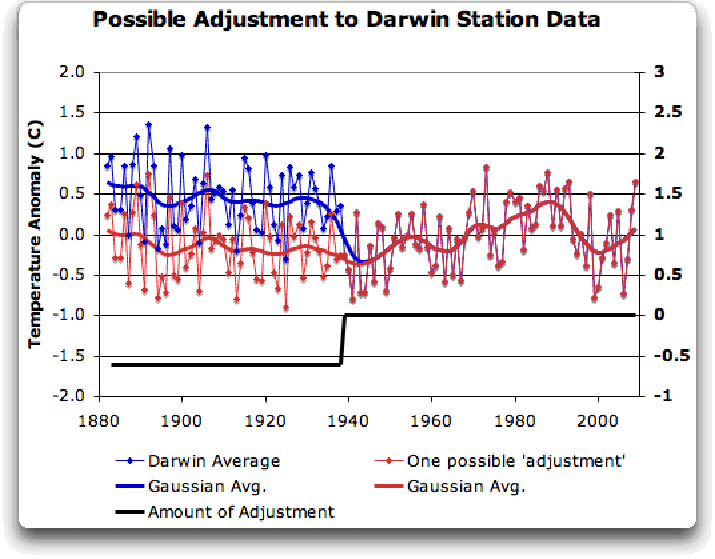

However, there’s a case to be made for adjusting it, particularly given the 1941 station move. If I decided to adjust Darwin, I’d do it like this:

Figure 6 A possible adjustment for Darwin. Black line shows the total amount of the adjustment, on the right scale, and shows the timing of the change.

I shifted the pre-1941 data down by about 0.6C. We end up with little change end to end in my “adjusted” data (shown in red); it’s neither warming nor cooling. However, it reduces the apparent cooling in the raw data. Post-1941, where the other records overlap, they are very close, so I wouldn’t adjust them in any way. Why should we adjust those? They all show exactly the same thing.

OK, so that’s how I’d homogenize the data if I had to, but I vote against adjusting it at all. It only changes one station record (Darwin Zero), and the rest are left untouched.
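The possible adjustment above amounts to a single step: add a constant to every year before the 1941 move. Here is a minimal Python sketch of that operation, plus a naive way to size the step from a few years on either side of the break. The helper names and the window length are assumptions for illustration; this is not how GHCN sizes its adjustments, and the author chose about -0.6C by judgment rather than by formula.

```python
def step_adjust(record, break_year, offset):
    """Return a copy of a {year: temperature} record with `offset` (°C) added
    to every year before `break_year`; years from `break_year` on are unchanged."""
    return {
        year: (temp + offset if year < break_year else temp)
        for year, temp in record.items()
    }


def step_size(record, break_year, window=5):
    """Hypothetical helper: estimate the step at `break_year` as the mean of
    the `window` years from the break onward minus the mean of the `window`
    years before it. Any genuine climate change across the break is
    lumped into the step, which is why judgment is still needed."""
    before = [record[y] for y in range(break_year - window, break_year) if y in record]
    after = [record[y] for y in range(break_year, break_year + window) if y in record]
    if not before or not after:
        raise ValueError("not enough data on both sides of the break")
    return sum(after) / len(after) - sum(before) / len(before)


# Hypothetical values: a 0.6C drop at 1941.
example = {1939: 29.0, 1940: 29.0, 1941: 28.4, 1942: 28.4}
estimated = step_size(example, 1941, window=2)
adjusted = step_adjust(example, 1941, estimated)
```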

Then I went to look at what happens when the GHCN removes the “in-homogeneities” to “adjust” the data. Of the five raw datasets, the GHCN discards two, likely because they are short and duplicate existing longer records. The three remaining records are first “homogenized” and then averaged to give the “GHCN Adjusted” temperature record for Darwin.

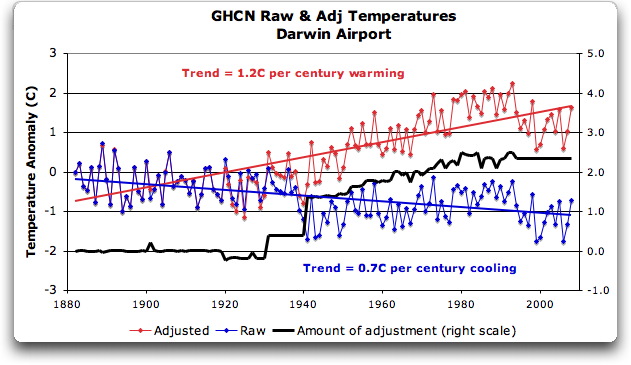

To my great surprise, here’s what I found. To explain the full effect, I am showing this with both datasets starting at the same point (rather than ending at the same point as they are often shown).

Figure 7. GHCN homogeneity adjustments to Darwin Airport combined record

YIKES! Before getting homogenized, temperatures in Darwin were falling at 0.7 Celsius per century … but after the homogenization, they were warming at 1.2 Celsius per century. And the adjustment that they made was over two degrees per century … when those guys “adjust”, they don’t mess around. And the adjustment is an odd shape, with the adjustment first going stepwise, then climbing roughly to stop at 2.4C.
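Figures such as “0.7 Celsius per century” are usually ordinary least-squares slopes scaled to 100 years; the post does not state the fitting method, so that is an assumption here. A minimal sketch (function name hypothetical): because a least-squares slope is linear in the data, the trend of the adjusted series minus the trend of the raw series equals the trend of the adjustment itself, which is how an adjustment turns a cooling record into a warming one.

```python
def trend_per_century(record):
    """Ordinary least-squares slope of a {year: value} record, per 100 years."""
    years = sorted(record)
    if len(years) < 2:
        raise ValueError("need at least two years")
    n = len(years)
    x_mean = sum(years) / n
    y_mean = sum(record[y] for y in years) / n
    sxy = sum((y - x_mean) * (record[y] - y_mean) for y in years)
    sxx = sum((y - x_mean) ** 2 for y in years)
    return 100.0 * sxy / sxx


# Synthetic straight lines matching the quoted trends (not Darwin data).
raw = {y: 28.0 - 0.007 * (y - 1900) for y in range(1900, 2000)}
adj = {y: 28.0 + 0.012 * (y - 1900) for y in range(1900, 2000)}
adjustment = {y: adj[y] - raw[y] for y in raw}
```

On these synthetic lines the adjustment contributes 1.9 C per century of trend.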

Of course, that led me to look at exactly how the GHCN “adjusts” the temperature data. Here’s what they say

GHCN temperature data include two different datasets: the original data and a homogeneity-adjusted dataset. All homogeneity testing was done on annual time series. The homogeneity-adjustment technique used two steps.

The first step was creating a homogeneous reference series for each station (Peterson and Easterling 1994). Building a completely homogeneous reference series using data with unknown inhomogeneities may be impossible, but we used several techniques to minimize any potential inhomogeneities in the reference series.

…

In creating each year’s first difference reference series, we used the five most highly correlated neighboring stations that had enough data to accurately model the candidate station.

…

The final technique we used to minimize inhomogeneities in the reference series used the mean of the central three values (of the five neighboring station values) to create the first difference reference series.

Fair enough, that all sounds good. They pick five neighboring stations, and average them. Then they compare the average to the station in question. If it looks wonky compared to the average of the reference five, they check any historical records for changes, and if necessary, they homogenize the poor data mercilessly. I have some problems with what they do to homogenize it, but that’s how they identify the inhomogeneous stations.
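The quoted GHCN procedure can be sketched in Python. This is a minimal illustration, assuming annual `{year: °C}` records and hypothetical function names; it follows only the steps quoted (first differences, the five most highly correlated neighbors, the mean of the central three values) and is not NOAA’s code. Years in which fewer than five neighbors report are simply skipped here, which is itself an assumption, and it is exactly the situation at Darwin in 1941, where five neighbors do not exist.

```python
def first_differences(record):
    """Year-to-year changes {year: record[year] - record[year - 1]},
    only where both consecutive years are present."""
    return {year: record[year] - record[year - 1] for year in record if year - 1 in record}


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 if either series has zero variance."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / (sxx * syy) ** 0.5


def pick_neighbors(candidate, pool, k=5):
    """Pick the k records in `pool` whose first differences correlate best
    with the candidate's over their shared years (at least 3 shared years)."""
    cand_fd = first_differences(candidate)
    scored = []
    for index, neighbor in enumerate(pool):
        nb_fd = first_differences(neighbor)
        shared = sorted(set(cand_fd) & set(nb_fd))
        if len(shared) >= 3:
            r = pearson([cand_fd[y] for y in shared], [nb_fd[y] for y in shared])
            scored.append((r, index))
    scored.sort(reverse=True)
    return [pool[index] for _, index in scored[:k]]


def reference_first_differences(neighbors):
    """For each year where exactly five neighbors report, average the central
    three first differences (dropping the highest and the lowest)."""
    fds = [first_differences(n) for n in neighbors]
    ref = {}
    for year in sorted(set().union(*fds)):
        values = sorted(fd[year] for fd in fds if year in fd)
        if len(values) == 5:
            ref[year] = sum(values[1:4]) / 3
    return ref


def difference_series(candidate, ref_fd):
    """Accumulate (candidate - reference) first differences. A lasting step
    in this series flags a possible inhomogeneity in the candidate."""
    cand_fd = first_differences(candidate)
    total, out = 0.0, {}
    for year in sorted(set(cand_fd) & set(ref_fd)):
        total += cand_fd[year] - ref_fd[year]
        out[year] = total
    return out
```

Comparing first differences rather than raw temperatures means a neighbor’s constant offset from the candidate (a warmer or cooler site) drops out, and only changes in the relationship show up as steps.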

OK … but given the scarcity of stations in Australia, I wondered how they would find five “neighboring stations” in 1941 …

So I looked it up. The nearest station that covers the year 1941 is 500 km away from Darwin. Not only is it 500 km away, it is the only station within 750 km of Darwin that covers the 1941 time period. (It’s also a pub, Daly Waters Pub to be exact, but hey, it’s Australia, good on ya.) So there simply aren’t five stations to make a “reference series” out of to check the 1936-1941 drop at Darwin.
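Checking the “500 km” figure, and the “only station within 750 km covering 1941” claim, comes down to a distance-and-date filter. A minimal Python sketch using the haversine great-circle formula; the coordinates below are approximate values assumed for illustration, not taken from the GHCN station inventory, and the station-list format is hypothetical.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

# Approximate (lat, lon) in decimal degrees, south negative. Assumed values.
DARWIN_AIRPORT = (-12.42, 130.88)
DALY_WATERS = (-16.25, 133.37)


def great_circle_km(a, b):
    """Haversine distance in km between two (lat, lon) points in degrees."""
    lat1, lon1, lat2, lon2 = map(radians, (*a, *b))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def stations_covering(target, stations, year, radius_km):
    """Names of stations within radius_km of target whose record spans `year`.

    `stations` is a list of (name, (lat, lon), first_year, last_year) tuples.
    """
    return [
        name
        for name, position, first, last in stations
        if first <= year <= last and great_circle_km(target, position) <= radius_km
    ]
```

With these approximate coordinates, `great_circle_km(DARWIN_AIRPORT, DALY_WATERS)` comes out at roughly 500 km, consistent with the figure in the text.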

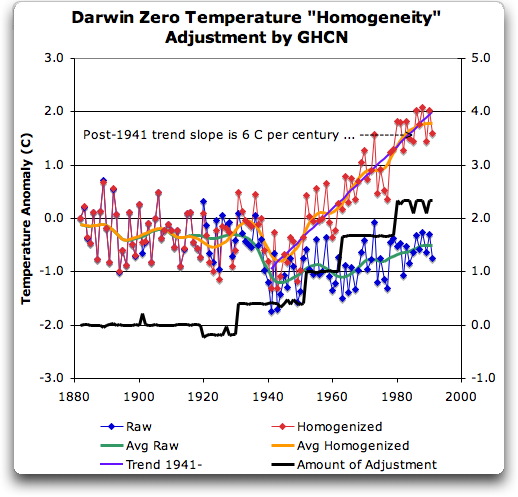

Intrigued by the curious shape of the average of the homogenized Darwin records, I then went to see how they had homogenized each of the individual station records. What made up that strange average shown in Fig. 7? I started at zero with the earliest record. Here is Station Zero at Darwin, showing the raw and the homogenized versions.

Figure 8 Darwin Zero Homogeneity Adjustments. Black line shows amount and timing of adjustments.

Yikes again, double yikes! What on earth justifies that adjustment? How can they do that? We have five different records covering Darwin from 1941 on. They all agree almost exactly. Why adjust them at all? They’ve just added a huge artificial totally imaginary trend to the last half of the raw data! Now it looks like the IPCC diagram in Figure 1, all right … but a six degree per century trend? And in the shape of a regular stepped pyramid climbing to heaven? What’s up with that?

Those, dear friends, are the clumsy fingerprints of someone messing with the data Egyptian style … they are indisputable evidence that the “homogenized” data has been changed to fit someone’s preconceptions about whether the earth is warming.

One thing is clear from this. People who say that “Climategate was only about scientists behaving badly, but the data is OK” are wrong. At least one part of the data is bad, too. The Smoking Gun for that statement is at Darwin Zero.

So once again, I’m left with an unsolved mystery. How and why did the GHCN “adjust” Darwin’s historical temperature to show radical warming? Why did they adjust it stepwise? Do Phil Jones and the CRU folks use the “adjusted” or the raw GHCN dataset? My guess is the adjusted one since it shows warming, but of course we still don’t know … because despite all of this, the CRU still hasn’t released the list of data that they actually use, just the station list.

Another odd fact: the GHCN adjusted Station 1 to match Darwin Zero’s strange adjustment, but they left Station 2 (which covers much of the same period, and as per Fig. 5 is in excellent agreement with Station Zero and Station 1) totally untouched. They only homogenized two of the three. Then they averaged them.

That way, you get an average that looks kinda real, I guess, it “hides the decline”.

Oh, and for what it’s worth, care to know the way that GISS deals with this problem? Well, they only use the Darwin data after 1963, a fine way of neatly avoiding the question … and also a fine way to throw away all of the inconveniently colder data prior to 1941. It’s likely a better choice than the GHCN monstrosity, but it’s a hard one to justify.

Now, I want to be clear here. The blatantly bogus GHCN adjustment for this one station does NOT mean that the earth is not warming. It also does NOT mean that the three records (CRU, GISS, and GHCN) are generally wrong either. This may be an isolated incident, we don’t know. But every time the data gets revised and homogenized, the trends keep increasing. Now GISS does their own adjustments. However, as they keep telling us, they get the same answer as GHCN gets … which makes their numbers suspicious as well.

And CRU? Who knows what they use? We’re still waiting on that one, no data yet …

What this does show is that there is at least one temperature station where the trend has been artificially increased to give a false warming where the raw data shows cooling. In addition, the average raw data for Northern Australia is quite different from the adjusted, so there must be a number of … mmm … let me say “interesting” adjustments in Northern Australia other than just Darwin.

And with the Latin saying “Falsus in uno, falsus in omnibus” (false in one, false in all) as our guide, until all of the station “adjustments” are examined, adjustments of CRU, GHCN, and GISS alike, we can’t trust anyone using homogenized numbers.

Regards to all, keep fighting the good fight,

w.

FURTHER READING:

My previous post on this subject.

The late and much missed John Daly, irrepressible as always.

More on Darwin history, it wasn’t Stevenson Screens.

NOTE: Figures 7 and 8 updated to fix a typo in the titles. 8:30PM PST 12/8 – Anthony

I keep remembering that Enron’s fallback position was that they had Arthur Andersen doing their external audits. Meanwhile, AA was rapidly shredding files and deleting emails.

JJ, if a site selected at random shows this kind of manipulation, it’s not unreasonable to postulate there is improper conduct in the handling of other data, particularly since the crew trying to use this to cripple the world’s economies have repeatedly chosen to hide the raw data and the manipulations, up until the Blessed Saint Whistleblower of East Anglia put it all on the Web. Yes, it’s possible this station was handled appropriately… it’s also possible that Jesse James had bank accounts at a lot of midwestern banks where he made “urgent withdrawals”… it’s possible the core of the moon is really green cheese. But the burden of proof rests with the Warmenistas, who, by their conduct, have already shown themselves untrustworthy, and Willis Eschenbach has done a great service by drilling down to show what was done to the numbers at Darwin.

Check a few more randomly chosen sites using the same methodology…that’s the scientific way. You tell us what you find.

Well I guess it finally reappeared. Note that the extreme surface temperature range on earth, other than on volcanic lava flows or in boiling mud pools, goes from about +60C on the hottest tropical desert surfaces (maybe higher) down to about -90C at Vostok Station (close to the lowest officially recorded temperature).

Why don’t they start plotting GISTEMP on that scale, from -90 to +60, instead of -1 to +1 deg C? Then we can all see how insignificant it is.

Geoff wrote: “If you can tell me how to display graphs on this blog I’ll put up a spaghetti graph of 5 different versions of annual Taverage at Darwin, 1886 to 2008.”

Upload them to TinyPic.com then click on the uploaded image until you see a View Raw Image link to click on and post that URL.

It’s good to have this blog to look at after seeing the BBC’s full page of Copenhagen coverage. “Earth headed for 6 C of Warming” and “Our Warm Globe,” beh. I’m all for not doing stupid things to the environment, but come on people, can we at least take a tiny peep at the data before assembling 20K people together to throw money around and ruin our collective economies? Thanks to everyone who puts in the time and effort to keep this site running and to keep posting things like this analysis.

It is perhaps the only truly transparent component of the current national administration that the Manifesto Media … continues to ignore these issues while they obfuscate. Is it chilly in Copenhagen?

MM

I appreciate your efforts Willis.

Thank you for this.

I’m wondering if this is an example of what actually needs to happen for *all* of the monitoring station datasets: quite literally, we need an audit of the datasets with this exact kind of public disclosure and debate on how the homogenized or “value added” data are arrived at.

Only when there is widespread agreement on the data itself can reasonable conclusions or predictions be made.

JJ is absolutely correct. It’s not pleasant to hold yourself from advancing ahead of the facts, but as per strategy, it’s a bad idea to abandon your supply train. Leave inference to Mann, Jones and the rest. One day they will hang for it. If we are reasonable, measured and patient, we will not be ignored.

Thank you, Willis. I greatly appreciate the clarity of your writing.

I think Gary Pearse (07:20:39) and john (09:00:05) have the right idea. I’d like to see a parallel effort to the surface stations project. Maybe something like stationAdjust.org where a procedure could be outlined and others could use it to investigate other locations. Maybe it could be seeded by Willis laying out the steps he used to create this article.

I think a lot of people would be more than happy to help if a common approach was documented.

Mr. Willis Eschenbach:

Brilliant article – clear, understandable, incisive. Lots of hard work and dogged persistence. Many thanks and great respect to you. Sure helps explain the origins of the ‘global temperature record’. And it also highlights the genuine extreme difficulty and complexity of compiling such a thing.

I agree with previous posters who say we should work with the ‘rawest’ data possible – down to the level of daily min/max temperatures, however and wherever measured. If we could put together a global database of that raw data, by means of a global collaborative effort, then that would be a real foundation upon which ‘citizen researchers’ could then build. Much like AW’s surfacestations.org project, in terms of the ‘citizen data gathering’ aspect. We are legion, after all.

I think in such a database a re-sited weather station should be given a new weather_station_id – new site, new ID. Obviously a lot of work making paper records digital, but hey, many hands make light work. There was a weather station here in Tentsmuir Forest near Tayport in Scotland until recently. I should start here. Maybe I’ll do that.

We need to use the Internet for important things, while it is available in its present form. In the future, as in the past, we may not be able to do that sort of thing quite so easily. And they do seem to be intent on rewinding history, and sending us back to the dark ages with these fictions they have brainwashed our children with. It makes my blood boil, it really does.

I had found a few horrors and posted a GIStemp “Hall of Shame” a while ago:

http://diggingintheclay.blogspot.com/2009/11/how-would-you-like-your-climate-trends.html

According to Steve McIntyre (whose page on the GISS adjustments I have linked to), of 7364 sites, 2236 are positively (correct direction) adjusted for UHI, but a whopping 1848 (25% of the total) have a negative adjustment that increases the warming trend. IMHO there’s a lot of your global warming!

Willis analysis is good.

There is another issue with the ‘raw’ data which Joe D’Aleo and Ross McKitrick have been stating for years, and that is the apparent link to station numbers. Note that there is, of course, a close tie between the number of stations and global coverage.

Judging by the quality of the CRU code, if similar or identical code was used to create the data from sparse stations, it would seem that D&M could well be right.

Some while back I took the data from McKitrick’s website and used the correlation between the station numbers and raw temperature to back out the effect of the station number variation. The result was surprising. It may well be a coincidence; that cannot be ruled out yet. My corrected values show a trend midway between the trends of the satellite data over the period of overlap.

http://homepage.ntlworld.com/jdrake/Questioning_Climate/userfiles/Influence_of_Station_Numbers_on_Temperature_v2.pdf

Trends for overlap period:

Surface Stations (Raw) 1.03°C per decade

Surface Stations (Corrected) 0.112°C per decade

RSS Satellite 0.157°C per decade

UAH Satellite 0.093°C per decade

NB: I wrote the piece in two sections, so don’t stop half way.
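The idea of “backing out the effect of the station number variation” can be illustrated with a simple linear regression. This is a minimal sketch under a stated assumption (a straight-line relation between yearly station count and yearly mean raw temperature); it is not necessarily the method in the linked PDF, and the function name is hypothetical.

```python
def remove_count_effect(temps, counts):
    """Regress temperature on station count by ordinary least squares and
    subtract the fitted count effect, keeping the series' overall mean."""
    n = len(temps)
    if n != len(counts) or n < 2:
        raise ValueError("need two equal-length series of at least two values")
    c_mean = sum(counts) / n
    t_mean = sum(temps) / n
    scc = sum((c - c_mean) ** 2 for c in counts)
    if scc == 0:
        return list(temps)  # station count never changes: nothing to remove
    slope = sum((c - c_mean) * (t - t_mean) for c, t in zip(counts, temps)) / scc
    return [t - slope * (c - c_mean) for t, c in zip(temps, counts)]
```

One caution on the design: station counts themselves rise and fall over time, so a regression like this can also remove part of any genuine trend that happens to line up with the count, which is one reason such a result could be a coincidence.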

Martin (09:36:00) :

Willis,

I don’t know where the NOAA URL is that gives individual station data (as opposed to data sets) so I went to the NASA/GISS site with the individual stations data.

http://data.giss.nasa.gov/gistemp/station_data/

The Darwin raw data there (http://data.giss.nasa.gov/cgi-bin/gistemp/findstation.py?datatype=gistemp&data_set=0&name=Darwin) seems to correspond with the raw data you showed in your graphs. But the homogenized data (at http://data.giss.nasa.gov/cgi-bin/gistemp/findstation.py?datatype=gistemp&data_set=2&name=Darwin) does not look like what you show as homogenized data.

Could you give a URL for the site where you got the data (and indicate which file if it is an ftp or otherwise multiple file listing)?

Thanks.

I’m curious about this myself. I also went to the GISS site, and noticed that the “homogenized” data for Darwin basically leaves out all the older, cooler, stuff. Now, this is GISS, not GHCN, so I don’t know if that matters. But with GISS, it looks like they may have taken the upward adjustments for Darwin from GHCN, but left out the early years.

Doug,

“JJ, if a site selected at random shows this kind of manipulation, it’s not unreasonable to postulate there is improper conduct in the handling of other data,”

That is not correct. It is not only unreasonable to ‘postulate improper handling of other data’, it is absolutely unreasonable for you to assume that there is improper handling of these data, based on the info we have on hand. When you do that, you are acting like the men in the emails.

Once again, there is nothing necessarily wrong with these adjustments. If you want to claim that there is, then the burden of proof is on you. That they haven’t yet supported their claims in sufficient detail does not relieve you of the responsibility to prove your own claims. To the contrary, it is wrong for you to make unsupported accusations, and in the long run supremely stupid.

Allow yourself to rise to worms, you will eventually find a hook.

JJ

Willis fantastic post!

I haven’t read through all the comments, so please excuse me if someone has already brought this up.

The adjustment line shown by Willis looks strangely (and disturbingly) similar to a scaled valadj array! Which, BTW, also looks like the adjustments put on USHCN: http://www.ncdc.noaa.gov/img/climate/research/ushcn/ts.ushcn_anom25_diffs_urb-raw_pg.gif

Triple Yikes! Talk about deja vu!

Anthony perhaps a separate post on this strange similarity in adjustments?

APE

Willis,

I think you are seeing the results that occur when data is thrown into the meat grinder. That is, an analyst constructs what he thinks is a viable adjustment procedure. He applies it to a few cases. He views the results and concludes that the “adjustment” makes sense. Then he throws all the data at that adjustment code. When the end result confirms his bias, that the world is warming, he takes this as evidence that his adjustment “works”.

There are two distinct approaches to this problem. The first is what I would call a top-down approach: understand the problem of adjustment, construct an adjustment approach and apply it to all the data, run a few spot checks, and it’s good to go. Theory dominates the data. The other approach, your approach and Anthony’s approach, is to tackle the problem from the bottom up, one station at a time.

Question: which station in GHCN shows the largest trend over the past century after adjustment? That might be an interesting starting point for a systematic, station-by-station investigation of the adjustment procedure.
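The question above is straightforward to automate once raw and adjusted annual series are in hand. A minimal Python sketch, assuming each station is supplied as a pair of `{year: °C}` dictionaries (a hypothetical input format; downloading and parsing the GHCN files is not shown): compute each station’s adjusted trend and how much of that trend the adjustment contributed, then sort.

```python
def trend_per_century(record):
    """Ordinary least-squares slope of a {year: value} record, per 100 years."""
    years = sorted(record)
    if len(years) < 2:
        raise ValueError("need at least two years")
    n = len(years)
    x_mean = sum(years) / n
    y_mean = sum(record[y] for y in years) / n
    sxy = sum((y - x_mean) * (record[y] - y_mean) for y in years)
    sxx = sum((y - x_mean) ** 2 for y in years)
    return 100.0 * sxy / sxx


def rank_by_adjusted_trend(stations):
    """stations: {name: (raw_record, adjusted_record)}.

    Returns (name, adjusted trend, trend added by the adjustment) tuples,
    largest adjusted trend first. Both trends use only the years present
    in both records, so raw and adjusted are compared like for like.
    """
    rows = []
    for name, (raw, adjusted) in stations.items():
        shared = set(raw) & set(adjusted)
        adj_trend = trend_per_century({y: adjusted[y] for y in shared})
        raw_trend = trend_per_century({y: raw[y] for y in shared})
        rows.append((name, adj_trend, adj_trend - raw_trend))
    rows.sort(key=lambda row: row[1], reverse=True)
    return rows
```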

Great work, Willis. As with E.M.Smith’s sterling work on GISS, it’s only when the data is dissected thermometer by thermometer that the real story emerges. Real science….

I’ve suggested (having an IT/database/accounting background) that the re-done temperature records should have a transactional basis. Every data point (station/date/time/transaction # as the index, temperature as value) should be stamped with who/what/when/type etc., to provide a full audit trail. And most importantly, this leaves the raw data visible (as the first transaction). If we mock up a single measurement of this sort, and assume that the 2.4 adjustment is applied to that data point, it looks like this:

station/date/time/transaction #/temperature/who/what/when/type

Darwin/20091208/0930/30.4/WayneF/Raw data/200912090100/TEMP

Darwin/20091208/0930/2.4/GHCN/Kludge Factor/200912090101/ADJUST

This sort of transactional record is the heart and soul of accounting systems, and if stored in a SQL database, renders searches trivially easy. For example, to get the 2009 raw temp records for Darwin:

SELECT temperature FROM temperaturestable WHERE type = 'TEMP' AND station = 'Darwin' AND date BETWEEN 20090101 AND 20091231

That would give real accountability, identify at a glance what adjustments were being applied, by what process, and to what data points, and facilitate research and audit.
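The transactional idea above can be tried with Python’s built-in `sqlite3` module. A minimal sketch, assuming a hypothetical table named `temperature_txn` holding the mock-up’s two rows, with transaction numbers added since the mock-up left them out; the column names are illustrative, and `rec_type` stands in for the comment’s `type` column. The raw value is never overwritten: the adjusted value is derived by summing all transactions for an observation.

```python
import sqlite3


def build_ledger():
    """Create an in-memory ledger holding one raw reading and one adjustment."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """CREATE TABLE temperature_txn (
               station TEXT, obs_date INTEGER, obs_time TEXT, txn INTEGER,
               value REAL, who TEXT, what TEXT, recorded_at TEXT, rec_type TEXT,
               PRIMARY KEY (station, obs_date, obs_time, txn))"""
    )
    rows = [
        # The mock-up's two transactions, with transaction numbers 1 and 2.
        ("Darwin", 20091208, "0930", 1, 30.4, "WayneF", "Raw data", "200912090100", "TEMP"),
        ("Darwin", 20091208, "0930", 2, 2.4, "GHCN", "Kludge Factor", "200912090101", "ADJUST"),
    ]
    conn.executemany("INSERT INTO temperature_txn VALUES (?, ?, ?, ?, ?, ?, ?, ?, ?)", rows)
    return conn


def raw_readings(conn, station, start, end):
    """Only the original readings (rec_type = 'TEMP') in a date range."""
    cur = conn.execute(
        "SELECT value FROM temperature_txn WHERE rec_type = 'TEMP' "
        "AND station = ? AND obs_date BETWEEN ? AND ? ORDER BY obs_date, obs_time",
        (station, start, end),
    )
    return [row[0] for row in cur]


def adjusted_readings(conn, station, start, end):
    """Raw reading plus every adjustment, summed per observation."""
    cur = conn.execute(
        "SELECT obs_date, obs_time, SUM(value) FROM temperature_txn "
        "WHERE station = ? AND obs_date BETWEEN ? AND ? "
        "GROUP BY obs_date, obs_time ORDER BY obs_date, obs_time",
        (station, start, end),
    )
    return cur.fetchall()
```

Every adjustment stays visible as its own row with who applied it and when, so an auditor can always recover the untouched raw reading.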

Perhaps we should be thinking about an ‘Audit the Temp’ movement, because Willis’ analysis certainly points to some dark and dirty data adjustments.

And I can’t help thinking that this is a Piltdown man moment ….. a reconstructed and apparently plausible temperature record which, when looked into, is simply a shambles.

Audit the Temp!

To those many other novices like me searching for the truth I recommend these sites. “What the Stations Say” at http://www.john-daly.com/station/stations.htm which has a global map showing locations, details and records of many ground stations. There is also a “Stations of the Week” series at http://www.john-daly.com/stations.htm . Like everything John did it is all explained in simple easy to follow terms and layout.

The following Postscript by John L. Daly highlights many AGW sceptics concerns about GISS and CRU reliance on and manipulation of such data.

“The whole point of this investigation of just one station is that although Darwin shows an overall cooling due to that site change, this faulty record is the one used by GISS and CRU in their compilation of global mean temperature. More importantly, most of the stations used by them will have similar local faults and anomalies, rendering any averaging of them problematical at best. The best statistical number-crunching cannot eliminate these errors.

And why pick on a cooling station – on a site skeptical of ‘global warming’?

Simply this – the vast majority of stations are affected by urbanisation with inadequate urban adjustment by GISS, as demonstrated elsewhere on this site. Even where urbanisation is not at issue, rural stations also have serious local problems, discussed in more detail in “What’s Wrong with the Surface Record?”. (One such station here in Tasmania is also featured on this site – “Hot Air at Low Head”)

The net effect is that since most such local errors result in warming (and not cooling as in Darwin’s case), the result will be an apparent global warming in the surface record where none may actually exist. This is why the satellite record is a much more reliable guide to recent temperature trends.

Darwin was picked out here simply because its record was visibly faulty even from the data itself. But if Darwin can ‘slip through the net’, what happens to those thousands of faulty station records where the faults are less visible and obvious? As Ken Parish pointed out above,

“All the above historical information emphasises just how much you need to know about the history and surrounding circumstances of local surface weather readings in order to draw any meaningful long-term trend conclusions from them, especially when you are dealing with an apparent global warming trend of only around +0.25°C since 1976”.

Temperature at Copenhagen at 1800 UTC was 6 Celsius. Not sure if that’s homogeneous, pasteurised or plain raw – it came from the British Met Office web site…

Oh, forgot the transaction # in my little mock-up. Mea culpa, mea maxima culpa.

Why wouldn’t acceleration be used as the measuring tool instead of the measured value? I am no statistician, just a lowly applied math grad, but hear me out. All stations around the world are going to have numerous adjustments made for various reasons not associated with actual temperature change. I believe that each station would, in general, have a log that details the adjustments and why they were made. However, we already know that these adjustments are highly subjective and are often done with offsets that exceed the supposed global increase. Therefore, these adjustments need to be removed from the data set entirely. Since the logs record the date on which each adjustment was made, we are able to accurately remove those points of the data set that would introduce an invalid temperature acceleration. Having removed such data, we are no longer able to measure the actual temperature. But we are still left with data sets that show the acceleration of the temperature in either a positive or negative way, and we have removed the inaccurate acceleration data. Given that we can consider most thermometers to have been relatively accurate across their range, and given that we must accept the accuracy of the dates in the logs, we have an accurate representation of the temperature acceleration or deceleration over time. This then would allow us to measure the positive or negative gradient over time. If such analysis was applied to the entire data set of all stations, I think it would be possible to produce a largely accurate representation of the temperature record that completely excluded all man-introduced adjustments. This would require the logs and the original temperature readings. Do we have those yet?
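What this comment calls “acceleration” is closer to the first difference, the change from one year to the next (the same quantity the GHCN procedure quoted earlier works with). A minimal Python sketch of the idea, assuming annual `{year: °C}` records and a hypothetical set of years in which a logged station change took effect: drop the year-to-year change that spans each logged change, then add the remaining changes back up into a relative series.

```python
def rebuild_without_breaks(record, break_years):
    """Rebuild a relative series from year-to-year changes, dropping the change
    into any year listed in `break_years` (a logged station change) and any
    change across a gap in the record. The result starts at 0.0 and shows
    relative changes only, not absolute temperatures."""
    years = sorted(record)
    level = 0.0
    rebuilt = {years[0]: level}
    for prev, year in zip(years, years[1:]):
        if year == prev + 1 and year not in break_years:
            level += record[year] - record[prev]
        # Otherwise the step is treated as zero: the level carries over unchanged.
        rebuilt[year] = level
    return rebuilt


# Synthetic record: 0.01C/yr of warming plus an artificial +2.0C step at 1941.
record = {y: 0.01 * (y - 1930) + (2.0 if y >= 1941 else 0.0) for y in range(1930, 1951)}
with_break = rebuild_without_breaks(record, {1941})
without_break = rebuild_without_breaks(record, set())
```

The trade-off is that any real change in a dropped year is lost along with the artificial step: here 1940 to 1950 shows 0.09C instead of the true 0.10C, while ignoring the logged break gives 2.10C.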

You’d think if AGW people were as dedicated to science as they say, they’d be picking apart all this stuff like Willis Eschenbach just did. But they’re not.

Science be damned.

Holy cow. I’m starting to doubt that there’s any global warming at all, man-made or otherwise. Especially now that they’re telling us that 2009 is one of the five warmest years when I know for a FACT that it’s the coldest winter and summer I’ve ever experienced in La.