by Willis Eschenbach

People keep saying “Yes, the Climategate scientists behaved badly. But that doesn’t mean the data is bad. That doesn’t mean the earth is not warming.”

Darwin Airport – by Dominic Perrin via Panoramio

Let me start with the second objection first. The earth has generally been warming since the Little Ice Age, around 1650. There is general agreement that the earth has warmed since then. See e.g. Akasofu . Climategate doesn’t affect that.

The first objection, the integrity of the data, is different. People say “Yes, they destroyed emails, and hid from Freedom of Information Act requests, and messed with proxies, and fought to keep other scientists’ papers out of the journals … but that doesn’t affect the data, the data is still good.” Which sounds reasonable.

There are three main global temperature datasets. One is at the CRU, the Climatic Research Unit of the University of East Anglia, where we’ve been trying to get access to the raw numbers. One is at NOAA/GHCN, the Global Historical Climatology Network. The final one is at NASA/GISS, the Goddard Institute for Space Studies. The three groups take raw data, and they “homogenize” it to remove artifacts such as the 2C jump in temperature that appears when a station is moved to a warmer location. The three global temperature records are usually called CRU, GISS, and GHCN. Both GISS and CRU, however, get almost all of their raw data from GHCN. All three produce very similar global historical temperature records from the raw data.

So I’m still on my multi-year quest to understand the climate data. You never know where this data chase will lead. This time, I have ended up in Australia. I got to thinking about Professor Wibjorn Karlen’s statement about Australia that I quoted here:

Another example is Australia. NASA [GHCN] only presents 3 stations covering the period 1897-1992. What kind of data is the IPCC Australia diagram based on?

If any trend it is a slight cooling. However, if a shorter period (1949-2005) is used, the temperature has increased substantially. The Australians have many stations and have published more detailed maps of changes and trends.

The folks at CRU told Wibjorn that he was just plain wrong. Here’s what they said is right, the record that Wibjorn was talking about, Fig. 9.12 in the UN IPCC Fourth Assessment Report, showing Northern Australia:

Figure 1. Temperature trends and model results in Northern Australia. Black line is observations (from Fig. 9.12 of the UN IPCC Fourth Assessment Report). Covers the area from 110E to 155E, and from 30S to 11S. Based on the CRU land temperature. Data from the CRU.

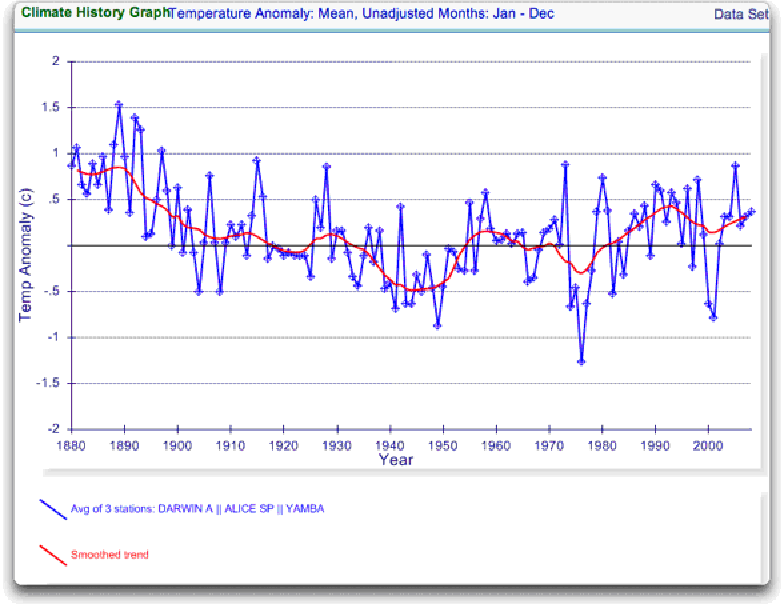

One of the things that was revealed in the released CRU emails is that the CRU basically uses the Global Historical Climatology Network (GHCN) dataset for its raw data. So I looked at the GHCN dataset. There, I find three stations in North Australia as Wibjorn had said, and nine stations in all of Australia, that cover the period 1900-2000. Here is the average of the GHCN unadjusted data for those three Northern stations, from AIS:

Figure 2. GHCN Raw Data, All 100-yr stations in IPCC area above.

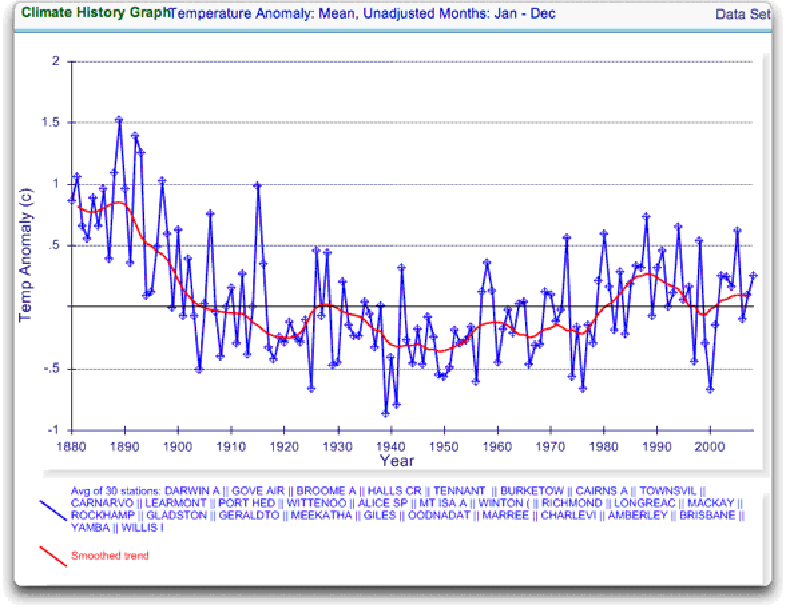

So once again Wibjorn is correct, this looks nothing like the corresponding IPCC temperature record for Australia. But it’s too soon to tell. Professor Karlen is only showing 3 stations. Three is not a lot of stations, but that’s all of the century-long Australian records we have in the IPCC specified region. OK, we’ve seen the longest station records, so let’s throw more records into the mix. Here’s every station in the UN IPCC specified region which contains temperature records that extend up to the year 2000 no matter when they started, which is 30 stations.

Figure 3. GHCN Raw Data, All stations extending to 2000 in IPCC area above.

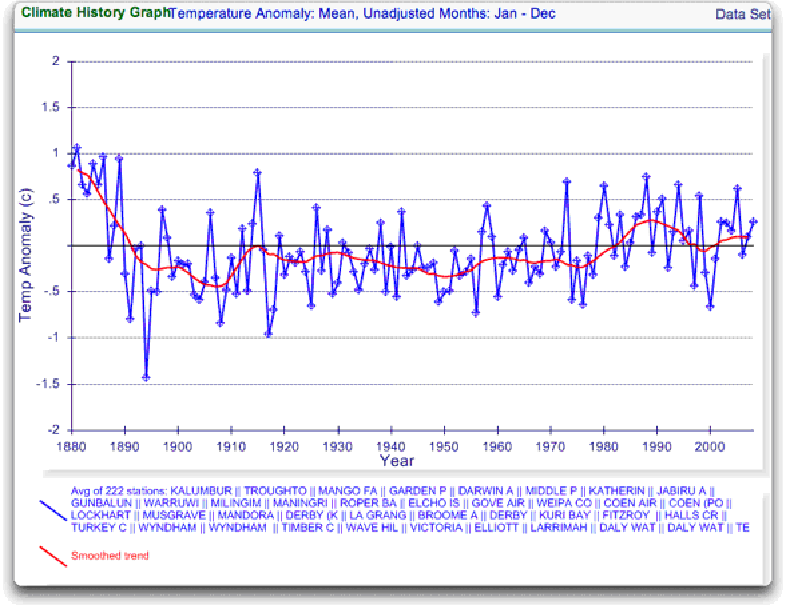

Still no similarity with IPCC. So I looked at every station in the area. That’s 222 stations. Here’s that result:

Figure 4. GHCN Raw Data, All stations in IPCC area above.
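The station selection behind Figures 2 to 4 can be sketched roughly as follows. This is a minimal illustration, not the exact procedure used for the plots: the record layout (`lat`, `lon`, `temps`), the function names, the `must_cover` filter, and the choice to average each station's departure from its own mean are all assumptions made for the example.

```python
from statistics import mean

# Region from the Figure 1 caption: 110E to 155E, 30S to 11S.
LON_MIN, LON_MAX = 110.0, 155.0
LAT_MIN, LAT_MAX = -30.0, -11.0


def in_region(lat, lon):
    """True if a station lies inside the IPCC Northern Australia box."""
    return LAT_MIN <= lat <= LAT_MAX and LON_MIN <= lon <= LON_MAX


def regional_average(stations, must_cover=()):
    """Average the in-region stations year by year.

    stations: list of dicts with 'lat', 'lon' and 'temps' ({year: deg C}).
    must_cover: years every selected station must report (hypothetical
    filter, e.g. (1900, 2000) for the century-long records).
    Each station is expressed as a departure from its own mean before
    averaging, so warm and cool sites can be combined (an assumption).
    """
    by_year = {}
    for s in stations:
        if not in_region(s["lat"], s["lon"]):
            continue
        if any(year not in s["temps"] for year in must_cover):
            continue
        base = mean(s["temps"].values())
        for year, temp in s["temps"].items():
            by_year.setdefault(year, []).append(temp - base)
    return {year: mean(vals) for year, vals in sorted(by_year.items())}
```

Calling `regional_average(stations, must_cover=(1900, 2000))` would correspond to the century-long selection, and `regional_average(stations)` to the "every station in the area" case.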

So you can see why Wibjorn was concerned. This looks nothing like the UN IPCC data, which came from the CRU, which was based on the GHCN data. Why the difference?

The answer is, these graphs all use the raw GHCN data. But the IPCC uses the “adjusted” data. GHCN adjusts the data to remove what it calls “inhomogeneities”. So on a whim I thought I’d take a look at the first station on the list, Darwin Airport, so I could see what an inhomogeneity might look like when it was at home. And I could find out how large the GHCN adjustment for Darwin inhomogeneities was.

First, what is an “inhomogeneity”? I can do no better than quote from GHCN:

Most long-term climate stations have undergone changes that make a time series of their observations inhomogeneous. There are many causes for the discontinuities, including changes in instruments, shelters, the environment around the shelter, the location of the station, the time of observation, and the method used to calculate mean temperature. Often several of these occur at the same time, as is often the case with the introduction of automatic weather stations that is occurring in many parts of the world. Before one can reliably use such climate data for analysis of longterm climate change, adjustments are needed to compensate for the nonclimatic discontinuities.

That makes sense. The raw data will have jumps from station moves and the like. We don’t want to think it’s warming just because the thermometer was moved to a warmer location. Unpleasant as it may seem, we have to adjust for those as best we can.

I always like to start with the rawest data, so I can understand the adjustments. At Darwin there are five separate individual station records that are combined to make up the final Darwin record. These are the individual records of stations in the area, which are numbered from zero to four:

Figure 5. Five individual temperature records for Darwin, plus station count (green line). This raw data is downloaded from GISS, but GISS use the GHCN raw data as the starting point for their analysis.

Darwin does have a few advantages over other stations with multiple records. There is a continuous record from 1941 to the present (Station 1). There is also a continuous record covering a century. Finally, the stations are in very close agreement over the entire period of the record. In fact, where there are multiple stations in operation they are so close that you can’t see the records behind Station Zero.

This is an ideal station, because it also illustrates many of the problems with the raw temperature station data.

- There is no one record that covers the whole period.

- The shortest record is only nine years long.

- There are gaps of a month and more in almost all of the records.

- It looks like there are problems with the data at around 1941.

- Most of the datasets are missing months.

- For most of the period there are few nearby stations.

- There is no one year covered by all five records.

- The temperature dropped over a six year period, from a high in 1936 to a low in 1941. The station did move in 1941 … but what happened in the previous six years?

In resolving station records, it’s a judgment call. First off, you have to decide if what you are looking at needs any changes at all. In Darwin’s case, it’s a close call. The record seems to be screwed up around 1941, but not in the year of the move.

Also, although the 1941 temperature shift seems large, I see a similar sized shift from 1992 to 1999. Looking at the whole picture, I think I’d vote to leave it as it is. That’s always the best option when you don’t have other evidence. First do no harm.

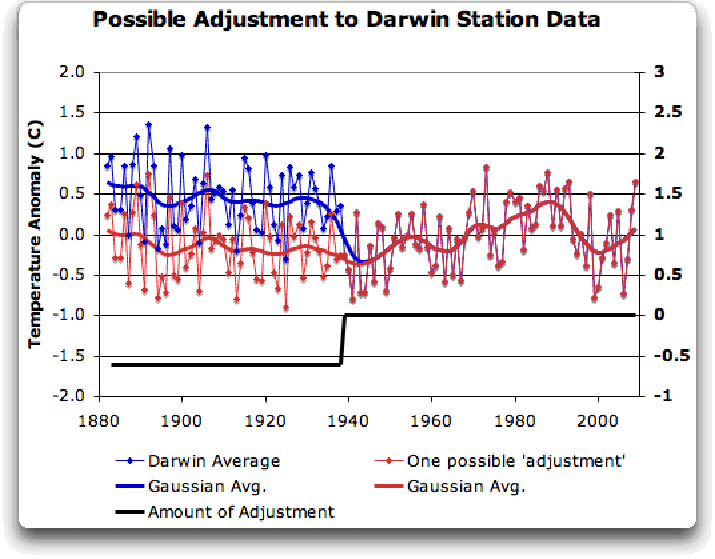

However, there’s a case to be made for adjusting it, particularly given the 1941 station move. If I decided to adjust Darwin, I’d do it like this:

Figure 6. A possible adjustment for Darwin. Black line shows the total amount of the adjustment, on the right scale, and shows the timing of the change.

I shifted the pre-1941 data down by about 0.6C. We end up with little change end to end in my “adjusted” data (shown in red), it’s neither warming nor cooling. However, it reduces the apparent cooling in the raw data. Post-1941, where the other records overlap, they are very close, so I wouldn’t adjust them in any way. Why should we adjust those? They all show exactly the same thing.

OK, so that’s how I’d homogenize the data if I had to, but I vote against adjusting it at all. It only changes one station record (Darwin Zero), and the rest are left untouched.
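A single step adjustment of that kind is simple to express in code. The sketch below is only an illustration of the idea: the function name, the `{year: temperature}` layout, and the sample numbers are assumptions, and it treats "pre-1941" as every year strictly before the break year.

```python
def apply_step_adjustment(series, break_year, offset):
    """Shift every value before break_year by offset (deg C).

    series: {year: temperature}. Values from break_year onward are
    returned unchanged. The input dict is not modified.
    """
    return {
        year: temp + offset if year < break_year else temp
        for year, temp in series.items()
    }


# Made-up numbers for illustration, not the Darwin record.
raw = {1939: 29.1, 1940: 29.0, 1941: 28.4, 1942: 28.5}
adjusted = apply_step_adjustment(raw, break_year=1941, offset=-0.6)
```

Everything from the break year onward passes through untouched, which is the point of the approach described above: one shift, at one documented date, and nothing else.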

Then I went to look at what happens when the GHCN removes the “in-homogeneities” to “adjust” the data. Of the five raw datasets, the GHCN discards two, likely because they are short and duplicate existing longer records. The three remaining records are first “homogenized” and then averaged to give the “GHCN Adjusted” temperature record for Darwin.

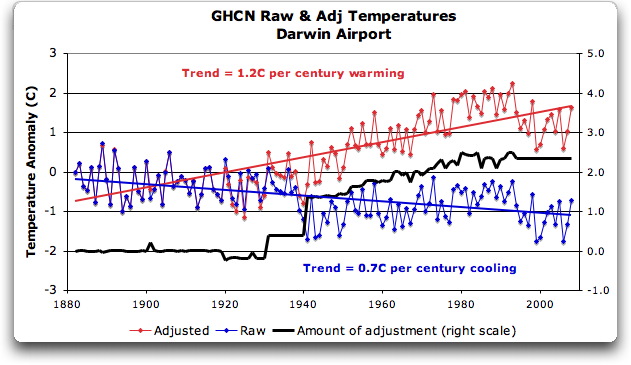

To my great surprise, here’s what I found. To explain the full effect, I am showing this with both datasets starting at the same point (rather than ending at the same point as they are often shown).

Figure 7. GHCN homogeneity adjustments to Darwin Airport combined record

YIKES! Before getting homogenized, temperatures in Darwin were falling at 0.7 Celsius per century … but after the homogenization, they were warming at 1.2 Celsius per century. And the adjustment that they made was roughly two degrees per century … when those guys “adjust”, they don’t mess around. And the adjustment is an odd shape, with the adjustment first going stepwise, then climbing roughly to stop at 2.4C.
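For readers who want to check trend figures like these against their own download, here is a minimal sketch of a per-century trend calculation. It assumes an ordinary least-squares fit on annual values, which may not be exactly how the figures above were produced; the function names and the synthetic series are hypothetical.

```python
def trend_per_century(series):
    """Ordinary least-squares slope of {year: deg C}, in deg C per century."""
    years = sorted(series)
    n = len(years)
    mean_year = sum(years) / n
    mean_temp = sum(series[y] for y in years) / n
    sxx = sum((y - mean_year) ** 2 for y in years)
    sxy = sum((y - mean_year) * (series[y] - mean_temp) for y in years)
    return 100.0 * sxy / sxx


def adjustment(raw, adjusted):
    """Adjusted minus raw for the years present in both series."""
    return {y: adjusted[y] - raw[y] for y in raw if y in adjusted}


# Synthetic straight lines, chosen only to mirror the slopes quoted above.
raw = {y: 29.0 - 0.007 * (y - 1900) for y in range(1900, 2001)}
adj = {y: 29.0 + 0.012 * (y - 1900) for y in range(1900, 2001)}
```

Because a least-squares slope is linear in the data, the trend of the adjustment equals the adjusted trend minus the raw trend whenever both series cover the same years. For the slopes quoted above that is 1.2 - (-0.7) = 1.9 degrees per century.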

Of course, that led me to look at exactly how the GHCN “adjusts” the temperature data. Here’s what they say:

GHCN temperature data include two different datasets: the original data and a homogeneity-adjusted dataset. All homogeneity testing was done on annual time series. The homogeneity-adjustment technique used two steps.

The first step was creating a homogeneous reference series for each station (Peterson and Easterling 1994). Building a completely homogeneous reference series using data with unknown inhomogeneities may be impossible, but we used several techniques to minimize any potential inhomogeneities in the reference series.

…

In creating each year’s first difference reference series, we used the five most highly correlated neighboring stations that had enough data to accurately model the candidate station.

…

The final technique we used to minimize inhomogeneities in the reference series used the mean of the central three values (of the five neighboring station values) to create the first difference reference series.

Fair enough, that all sounds good. They pick five neighboring stations, and average them. Then they compare the average to the station in question. If it looks wonky compared to the average of the reference five, they check any historical records for changes, and if necessary, they homogenize the poor data mercilessly. I have some problems with what they do to homogenize it, but that’s how they identify the inhomogeneous stations.
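The "central three of five" step in the GHCN description can be sketched as below. This is a simplified reading of the quoted text, not the GHCN code: it assumes the five neighbors have already been chosen by correlation, and the function name and data layout are hypothetical.

```python
def reference_first_difference(neighbors, year):
    """Reference first difference for one year.

    neighbors: the five neighbor series, each {year: deg C}, assumed to be
    already chosen as the most highly correlated ones.
    Returns the mean of the central three year-on-year changes, so the
    largest and smallest neighbor values are ignored.
    """
    diffs = sorted(
        s[year] - s[year - 1]
        for s in neighbors
        if year in s and year - 1 in s
    )
    if len(diffs) != 5:
        raise ValueError("need five neighbors with data for both years")
    return sum(diffs[1:4]) / 3.0
```

Dropping the highest and lowest of the five values means a single neighbor with its own station move in that year cannot drag the reference series with it. The function raises an error when fewer than five neighbors report, which is exactly the situation that matters for a sparse network.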

OK … but given the scarcity of stations in Australia, I wondered how they would find five “neighboring stations” in 1941 …

So I looked it up. The nearest station that covers the year 1941 is 500 km away from Darwin. Not only is it 500 km away, it is the only station within 750 km of Darwin that covers the 1941 time period. (It’s also a pub, Daly Waters Pub to be exact, but hey, it’s Australia, good on ya.) So there simply aren’t five stations to make a “reference series” out of to check the 1936-1941 drop at Darwin.
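A neighbor search like this one comes down to a great-circle distance check. The sketch below uses the standard haversine formula; the coordinates are approximate values assumed for illustration, and the function names and station dictionary are hypothetical, not taken from the GHCN inventory file.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance in km between two points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(p1) * math.cos(p2) * math.sin(dlam / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def stations_within(center, stations, radius_km):
    """Names of stations no farther than radius_km from center (lat, lon)."""
    return [
        name
        for name, (lat, lon) in stations.items()
        if great_circle_km(center[0], center[1], lat, lon) <= radius_km
    ]


# Approximate coordinates, assumed for illustration only.
DARWIN = (-12.42, 130.89)
STATIONS = {"Daly Waters": (-16.25, 133.37)}
```

With these rough coordinates, `great_circle_km(*DARWIN, *STATIONS["Daly Waters"])` comes out on the order of 500 km. A real check would load every station that reports in 1941 and call `stations_within(DARWIN, STATIONS, 750)`.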

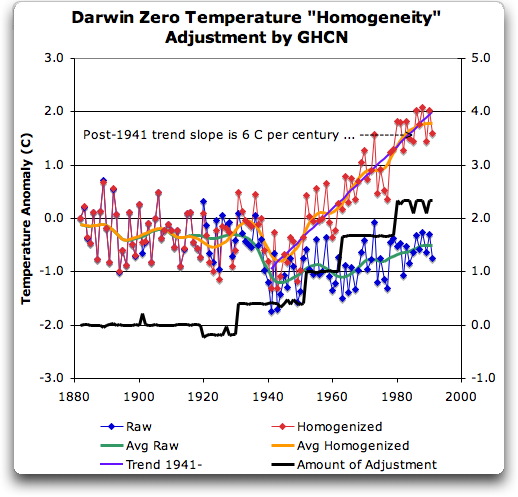

Intrigued by the curious shape of the average of the homogenized Darwin records, I then went to see how they had homogenized each of the individual station records. What made up that strange average shown in Fig. 7? I started at zero with the earliest record. Here is Station Zero at Darwin, showing the raw and the homogenized versions.

Figure 8. Darwin Zero Homogeneity Adjustments. Black line shows amount and timing of adjustments.

Yikes again, double yikes! What on earth justifies that adjustment? How can they do that? We have five different records covering Darwin from 1941 on. They all agree almost exactly. Why adjust them at all? They’ve just added a huge artificial totally imaginary trend to the last half of the raw data! Now it looks like the IPCC diagram in Figure 1, all right … but a six degree per century trend? And in the shape of a regular stepped pyramid climbing to heaven? What’s up with that?

Those, dear friends, are the clumsy fingerprints of someone messing with the data Egyptian style … they are indisputable evidence that the “homogenized” data has been changed to fit someone’s preconceptions about whether the earth is warming.

One thing is clear from this. People who say that “Climategate was only about scientists behaving badly, but the data is OK” are wrong. At least one part of the data is bad, too. The Smoking Gun for that statement is at Darwin Zero.

So once again, I’m left with an unsolved mystery. How and why did the GHCN “adjust” Darwin’s historical temperature to show radical warming? Why did they adjust it stepwise? Do Phil Jones and the CRU folks use the “adjusted” or the raw GHCN dataset? My guess is the adjusted one since it shows warming, but of course we still don’t know … because despite all of this, the CRU still hasn’t released the list of data that they actually use, just the station list.

Another odd fact, the GHCN adjusted Station 1 to match Darwin Zero’s strange adjustment, but they left Station 2 (which covers much of the same period, and as per Fig. 5 is in excellent agreement with Station Zero and Station 1) totally untouched. They only homogenized two of the three. Then they averaged them.

That way, you get an average that looks kinda real, I guess, it “hides the decline”.

Oh, and for what it’s worth, care to know the way that GISS deals with this problem? Well, they only use the Darwin data after 1963, a fine way of neatly avoiding the question … and also a fine way to throw away all of the inconveniently colder data prior to 1941. It’s likely a better choice than the GHCN monstrosity, but it’s a hard one to justify.

Now, I want to be clear here. The blatantly bogus GHCN adjustment for this one station does NOT mean that the earth is not warming. It also does NOT mean that the three records (CRU, GISS, and GHCN) are generally wrong either. This may be an isolated incident, we don’t know. But every time the data gets revised and homogenized, the trends keep increasing. Now GISS does their own adjustments. However, as they keep telling us, they get the same answer as GHCN gets … which makes their numbers suspicious as well.

And CRU? Who knows what they use? We’re still waiting on that one, no data yet …

What this does show is that there is at least one temperature station where the trend has been artificially increased to give a false warming where the raw data shows cooling. In addition, the average raw data for Northern Australia is quite different from the adjusted, so there must be a number of … mmm … let me say “interesting” adjustments in Northern Australia other than just Darwin.

And with the Latin saying “Falsus in uno, falsus in omnibus” (false in one, false in all) as our guide, until all of the station “adjustments” are examined, adjustments of CRU, GHCN, and GISS alike, we can’t trust anyone using homogenized numbers.

Regards to all, keep fighting the good fight,

w.

FURTHER READING:

My previous post on this subject.

The late and much missed John Daly, irrepressible as always.

More on Darwin history, it wasn’t Stevenson Screens.

NOTE: Figures 7 and 8 updated to fix a typo in the titles. 8:30PM PST 12/8 – Anthony

Willis,

I don’t know where the NOAA URL is that gives individual station data (as opposed to data sets) so I went to the NASA/GISS site with the individual stations data.

http://data.giss.nasa.gov/gistemp/station_data/

The Darwin raw data there (http://data.giss.nasa.gov/cgi-bin/gistemp/findstation.py?datatype=gistemp&data_set=0&name=Darwin) seems to correspond with the raw data you showed in your graphs. But the homogenized data (at http://data.giss.nasa.gov/cgi-bin/gistemp/findstation.py?datatype=gistemp&data_set=2&name=Darwin) does not look like what you show as homogenized data.

Could you give a URL for the site where you got the data (and indicate which file if it is an ftp or otherwise multiple file listing)?

Thanks.

It gets worse…

The latest adjustment for Darwin airport is +2.4 deg C

It would appear that as new data for that station is added each month going forward it will be immediately adjusted upwards by such a large amount by the “scientists”. How can this be right given that current temperature data with modern instruments can be expected to be more accurate than historical data?

Also if the UHI effect is taken into account any adjustment should be negative, not positive….

If this kind of fiddle is happening with current readings from many other stations around the world, then it is little wonder that the Met Office is claiming that the current decade is the hottest ever!

I am an engineer but thought it was obvious to all what a scam the GW program is. Of course the data are “poop”. See Icecap, Dec 5, 2009 Alaska Trends by Dr Richard Keen for more “poop” in Alaska records–

Insightful comment:

vdb (01:05:25) “An adjustment trend slope of 6 degrees C per century? Maybe the thermometer was put in a fridge, and they cranked the fridge up by a degree in 1930, 1950, 1962 and 1980?”

–

I agree with ForestGirl (01:11:06) about the need for site profiles. When I used to do forest & soils research, we used to take 2 or 3 pages worth of notes on the physical setting of each study plot. The log notes were diligently incorporated into electronic databases with full awareness of their value to future investigators.

Boris Gimbarzevsky (01:07:15) “[…] the only time one sees homogeneity is in the winter where every place in my yard not close to the heated house is uniformly cold […]”

You raise some interesting observations Boris. I hope many will consider your suggestion. I’ve been studying coastal BC stations. Serious questions arise.

–

Those who seem to immediately conclude ~1941 issues at Darwin must relate to war activities need to review:

a) their Stat 101 notes on confounding.

b) climate oscillation indices (e.g. SOI, NAO, PDO, AMO, etc.)

c) Earth orientation parameters.

Don’t make the mistakes Mr. Jones has made!

–

Great article – only the 2nd really interesting article on WUWT since CRUgate broke. This thread has inspired me to dust off a cross-wavelet analysis of TMax vs. TMin that highlights …. (may be continued…)

In Burketown the data between 1907 and 1953 had been adjusted upwards by approx. one degree C, turning a clear unadjusted warming trend into an adjusted flat/cooling trend. So it is not a nationwide bias in one direction.

http://www.appinsys.com/GlobalWarming/climgraph.aspx?pltparms=GHCNT100XJanDecI188020080900111AS50194259000x

Is it just me, or does it seem that every time someone tries to reconcile the raw data to the “value added” data, unexplainable upward adjustments are almost always found?

And why would Australia which is 79% the size of the USA use only 3 stations ?

If they told you they used 5 stations for the entire USA would anyone ever listen to them again ?

Garbage In –> Garbage Code –> Garbage2 Out

I’m coining the term G2O …

Entertain me for a moment in history.

I believe in the actual proven conspiracy Climategate, all the ingredients are there. Whether you believe in conspiracies or not is of no consequence. Hypothetically speaking, if there is a greater conspiracy of the Illuminati using man-made climate change to their ends, Climategate may be payback to the NWO conspirators, to commemorate the assassination of JFK. The e-mails were leaked on the same day of the week Kennedy died, 2 days before his actual assassination date. Perhaps they had to be leaked to the Internet on the day they were because the offices would be closed over the weekend. Close enough, eh?

Just did a few calculations for raw v homogenised GHCN data for my home town of Brisbane using the Eagle Farm airport station and the trend flips from -0.6/100yrs to +0.6/100yrs looking at the data from 1950 – 2008. Not as dramatic but still interesting. The data pre 1978 is adjusted down and post 78 adjusted up. I have not looked into any reasons so I am just throwing it out there. I’ll get a few plots up tomorrow. I have no station history to see if there are reasons for this adjustment. Agree that a few dozen carefully examined stations by Willis and others who know what they are doing would be a good step.

Smokey,

My point being that smacking of this and that is not sufficient to make damning accusations. It is not only immoral to do so, it is very bad strategy. Do that enough, and at some point you are going to end up having your ass handed to you on a silver platter.

That the adjustments applied to date almost always show warming is not necessarily illegitimate in the aggregate, much less is it any proof that the adjustments applied to any particular station are wrong, as Willis is claiming here.

It is entirely possible that the adjustments applied to the Darwin station are complete and justifiable, in which case Willis is a blathering idiot, not a very nice person for having made baseless accusations, and he, Jones and Mann can share a suite at the next American Sphincters Association convention.

It is also entirely possible that the adjustments that have been applied are legitimate, but that there are compensating adjustments (such as UHI or other siting issues) that have innocently or intentionally been left out. That leaves the current accusations just as off the mark. GHCN would be judged incompetent and/or culpable, but not for any of the reasons listed here.

It is also entirely possible that the adjustments that have been applied are incorrect, but that they were the result of a mistake or unconscious bias. GHCN is therefore error prone, but not criminal.

It is also entirely possible that illegitimate adjustments have been applied and other legitimate adjustments left out, in a concerted effort to cook the books.

The information we have right now is consistent with all of these hypotheses. Stick to reporting the facts, and leave the fanciful storytelling to Team members describing their proxies.

Note that Anthony’s work here has been to catalogue station siting issues that potentially demand adjustment or other response (such as outright discarding) that are every bit as intensive as the adjustments seen here. Railing against adjustments per se is to slit one’s own throat, as is unsupported accusation of criminality …

JJ

This is a long thread, so sorry if my question has been asked previously, but when were these adjustments to the raw data first made? Before 1988 or after?

Exactly the same prevarication as NIWA. Lies disguised as data.

Shouldn’t that be “Crikey” not “Yikes”?

Anyone made a joke about those Southern Hemisphere thermometers being upside down, yet?

That’s obviously why the trend changed direction, once they were normalized.

I haven’t had the time to read all the comments, but following a link in the article and further, I found a comment which noted that the original Darwin station was at the Post Office. A station was installed at the Airport (at some point), and the Post Office was destroyed when Darwin was bombed in February 1942. The last monthly average for the Post Office station was January 1942. It is therefore possible that the discontinuity in the record is a switch to the Airport station for 1941 et seq.

JJ (09:59:35),

All of your “entirely possible” comments can be resolved by the full and complete disclosure of all of the raw and massaged data, and the hidden methodologies and “adjustments”, that the Team uses to come up with their scary AGW conclusions.

As they say, just open the books. Then everyone can see if they’ve been cooked. The fact that they’re still stonewalling tells me all I need to know about their veracity and accuracy. The leaked emails and code only reinforces my suspicion, and any contrary and defensive red faced arm-waving does nothing to convince me otherwise.

Smokey (10:12:52) :

But Smokey, if they open the books, you know what will be found.

Someone will have to go in and open the books.

Riveting reading! Thank you.

A little black humor

“Now it looks like the IPCC diagram in Figure 1, all right … but a six degree per century trend? And in the shape of a regular stepped pyramid climbing to heaven? What’s up with that?”

Interestingly, a study has found (through the “use of eclectic proxies for temperature and other variables where empirical data is lacking”) a link between climate modification and Aztec sacrificial rituals:

http://geoplasma.spaces.live.com/blog/cns!C00F2616F39D0B2B!736.entry

Here is the same analysis done on the Grand Canyon:

http://i45.tinypic.com/bguywn.jpg

The hottest city around is nearby (Tucson) and likely has been “homogenized” with this pristine site.

Sources:

ftp://ftp.ncdc.noaa.gov/pub/data/ushcn/v2/monthly/

http://data.giss.nasa.gov/cgi-bin/gistemp/gistemp_station.py?id=425723760080&data_set=0&num_neighbors=1

So I didn’t see any mention; in the list of “inhomogeneity” factors, of the Weber grill effect.

Of course I believe at Darwin airport; when they say; “”just toss another shrimp on the barbie !” they simply lay it on the ground; which is hot enough to frazzle even the best kangaroo steaks.

This whole “data adjustment” business seems like a giant fraud to me.

If I place 12 thermometers in various places around my house, and read them every day for a hundred years, I can then perform ANY mathematical AlGorythm to that raw data, to obtain some out put data I can publish; I’ll call it GEST; the George E. Smith Temperature. Now if I move one of those thermometers; or maybe I just replace the old valve B&W TV set, with one of those modern gas hogging flat screen Plasma TVs, I would expect that the output of GEST to change, because the TV station thermometer is now getting goosed by my nifty new TV set.

Well so what; I simply put an asterisk in the report for the day I install my new TV, to tell all my interested neighbors that I have a new TV and from here on out, GEST is going to be different.

The fraud part comes in when I brazenly rename my GEST; and pompously call it GESHT for the George E. Smith House Temperature.

Well the AlGorythm that I perform on my 12 thermometer raw data to get GEST, in no way represents the temperature of MY HOUSE which is what GESHT fraudulently purports to be. And the reason it isn’t the true GESHT is that 12 thermometers is not sufficient to truly sample the temperature of my house; where the temperatures can range from + 400-450 F in the oven, when I am cooking that Kangaroo roast, down to maybe 10-20 F in the freezer, where I have the rest of those kangaroos I hit on the road stashed; and according to an argument by Galileo Galilei; in “Dialog on the Two World Systems.”, every temperature between 10F and 450 F must exist somewhere in my house, sometime, while I am doing the thanksgiving kangaroo.

Well you see GISStemp and HadCRUT have the same problem as GESHT; they are NOT representative of the mean global temperature, or even the mean global surface; or lower troposphere temperature. They are GISStemp, and HadCRUT respectively; the result of applying some quite arbitrary AlGorythm to a completely non representative sample of raw temperatures from various places around the world that do not together form a proper sampling of the continuous global function of temperature over space and time.

So GISStemp and HadCRUT are about the equivalent of the average telephone number in the phone directories of say Manhattan and East Anglia Respectively; they are not true measures of the mean global temperature (surface or lower tropo) of planet earth. If they were properly sampled; it would not matter if one of the stations gets a new Weber grill.

By the way; A really nice bunch of work there Willis; I’m going to have to print it out and try to digest it to see what you’ve uncovered

Don’t know if this has been pointed out yet, but it is possible that stupidity is still the culprit. The station at the pub 500 km away could have been used to calculate the correction factor. If the process was automated, it would be one of the ‘nearest 5’. Of course, that is a methodology flaw and all of the corrections are suspect, for any sparse data.

Jean Parisot: “Would a going forward position be to use a well understood set of measurements and monitor changes for the next X years and see which models (hypotheses) are working.”

The key to any analysis is good data. That data simply doesn’t exist, because the local temperature readings were never taken intending them to be used to estimate global temperature to anything like the present requirement, and the proxy record is far, far worse.

Like the Irish say: “If I were going there, I wouldn’t start from here!” But we are here.

The first instinct may be to rip up all the suspect temperature sites, throw out all the tainted scientists and start again. But if we did that it would be many decades before we settled this issue once and for all. But we sure need to start spending serious money on temperature measurement (I have to express potential self interest as I used to design precision temperature control equipment). We can’t shirk, we have to get the best possible coverage of temperatures globally, in purpose made sites free from the taint of Urban heating – and certainly free from the taint of political meddling! At least that way we will know for certain what the climate is doing from that time on.

Then we have to go back to manually measuring the temperature!!! Yes, we’ve got to start measuring the temperature without automatic equipment, because the only way we will know the bias caused by automation is to get really accurate results for the discrepancy between manual and automated measurements. Similarly, we have to get some very accurate measurements and models so that we can back-calculate the Urban heating effect and take this out without some hare-brained enviro-political-scientist just picking a figure at random. (Grrrr .. makes my blood boil!)

Then we have to get a hell of a lot more proxy measurements, we have to find ways to calibrate the proxy relationships so that we have a scientifically rigorous method based on extensive laboratory testing (i.e. for tree proxies, we might have to grow a few trees in labs at historic CO2 levels – even that is difficult). I’m sure there are good dendrochronologists out there, take out the “hide the decline” labs, and give the rest some decent money without the political bias, and … hopefully we’ll bring back the science and find out what really has been happening to the climate.

Basically, if we spent a fraction of the money the politicians wanted to tax us all on getting decent data then perhaps we wouldn’t be in this mess. It really hurts me to say this, but we have to learn all the lessons of Iraq:

1. Science shouldn’t be “sexed up” to fit the political needs.

&

2. Whilst it might seem sensible to oust all the Ba’athists (aka climategate scientists) the only workable solution is to find a way to include these people in the new “regime” – minus the worst offenders, but unless we include the foot soldiers of the current regime, we’ll get the chaos we have right now in Iraq.

And I have to say it: the next time someone says: “we are in real imminent danger of WMD”, “the evidence is unequivocal”, can someone please remind the press that the last lot of WMD was just a figment of the imagination of a few oil-grabbing neocons.

A reminder when hearing “news” analyses: the emails are a bright, shiny butterfly, a magician’s diversion. The indisputable scientific misconduct is in the software and data management.

I did just leave a comment, and it totally vanished immediately, when I posted it.

Steve McIntyre showed a similar adjustment for the entire USHCN data set nearly three years ago. Original raw data removed, “value added” data replaces it, and nearly every correction is biased towards a warming trend.

http://www.climateaudit.org/?p=1142

Smokey,

“All of your “entirely possible” comments can be resolved by the full and complete disclosure of all of the raw and massaged data, and the hidden methodologies and “adjustments”, that the Team uses to come up with their scary AGW conclusions.”

Exactly.

So blog entries like this one should not conclude with unsupported accusations, they should conclude with renewed demands for the data and methods. That is legitimate and powerful: You aren’t giving us the data, and here are some very suspicious results that make it look like you might be hiding something more than the decline.

Going further afield into unsupported claims of ‘blatantly bogus’ and ‘false warming’ is, as you put it, red faced arm waving. And claims of ‘indisputable evidence of preconceived notions’ yada yada yada is simply a lie itself. That’s Teamwork. Leave that to the experts.

JJ