by Willis Eschenbach

People keep saying “Yes, the Climategate scientists behaved badly. But that doesn’t mean the data is bad. That doesn’t mean the earth is not warming.”

Darwin Airport – by Dominic Perrin via Panoramio

Let me start with the second objection first. The earth has generally been warming since the Little Ice Age, around 1650, and there is general agreement on that. See e.g. Akasofu. Climategate doesn’t affect that.

The first question, the integrity of the data, is different. People say “Yes, they destroyed emails, and hid from Freedom of Information Acts, and messed with proxies, and fought to keep other scientists’ papers out of the journals … but that doesn’t affect the data, the data is still good.” Which sounds reasonable.

There are three main global temperature datasets. One is at the CRU, the Climatic Research Unit of the University of East Anglia, where we’ve been trying to get access to the raw numbers. One is at NOAA/GHCN, the Global Historical Climatology Network. The third is at NASA/GISS, the Goddard Institute for Space Studies. The three groups take raw data, and they “homogenize” it to remove things like the 2C jump in temperature when a station is moved to a warmer location. The three global temperature records are usually called CRU, GISS, and GHCN. Both GISS and CRU, however, get almost all of their raw data from GHCN. All three produce very similar global historical temperature records from the raw data.

So I’m still on my multi-year quest to understand the climate data. You never know where this data chase will lead. This time, it has ended me up in Australia. I got to thinking about Professor Wibjorn Karlen’s statement about Australia that I quoted here:

Another example is Australia. NASA [GHCN] only presents 3 stations covering the period 1897-1992. What kind of data is the IPCC Australia diagram based on?

If any trend it is a slight cooling. However, if a shorter period (1949-2005) is used, the temperature has increased substantially. The Australians have many stations and have published more detailed maps of changes and trends.

The folks at CRU told Wibjorn that he was just plain wrong. Here’s what they said is right, the record that Wibjorn was talking about, Fig. 9.12 in the UN IPCC Fourth Assessment Report, showing Northern Australia:

Figure 1. Temperature trends and model results in Northern Australia. Black line is observations (from Fig. 9.12 of the UN IPCC Fourth Assessment Report). Covers the area from 110E to 155E, and from 30S to 11S. Based on the CRU land temperature data.

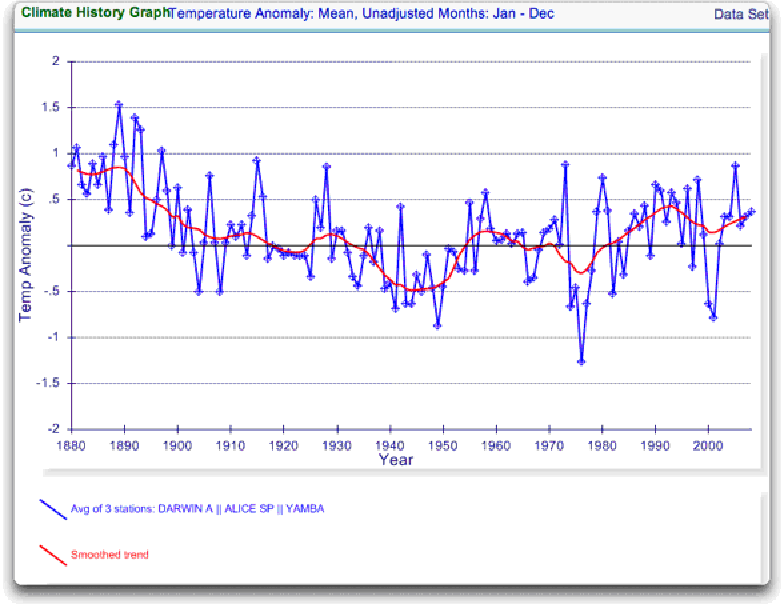

One of the things that was revealed in the released CRU emails is that the CRU basically uses the Global Historical Climatology Network (GHCN) dataset for its raw data. So I looked at the GHCN dataset. There I found three stations in North Australia, as Wibjorn had said, and nine stations in all of Australia, that cover the period 1900-2000. Here is the average of the GHCN unadjusted data for those three Northern stations, from AIS:

Figure 2. GHCN Raw Data, All 100-yr stations in IPCC area above.

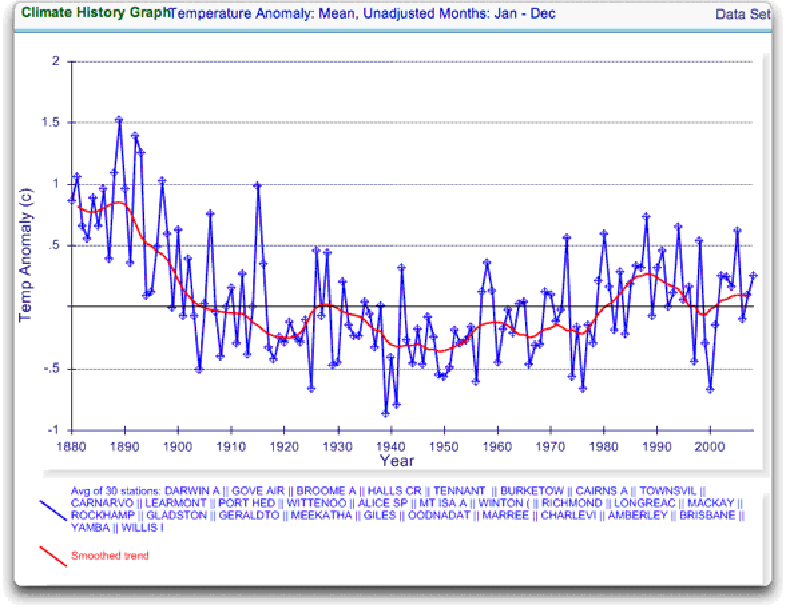

So once again Wibjorn is correct, this looks nothing like the corresponding IPCC temperature record for Australia. But it’s too soon to tell. Professor Karlen is only showing 3 stations. Three is not a lot of stations, but that’s all of the century-long Australian records we have in the IPCC specified region. OK, we’ve seen the longest station records, so let’s throw more records into the mix. Here’s every station in the UN IPCC specified region which contains temperature records that extend up to the year 2000 no matter when they started, which is 30 stations.

Figure 3. GHCN Raw Data, All stations extending to 2000 in IPCC area above.

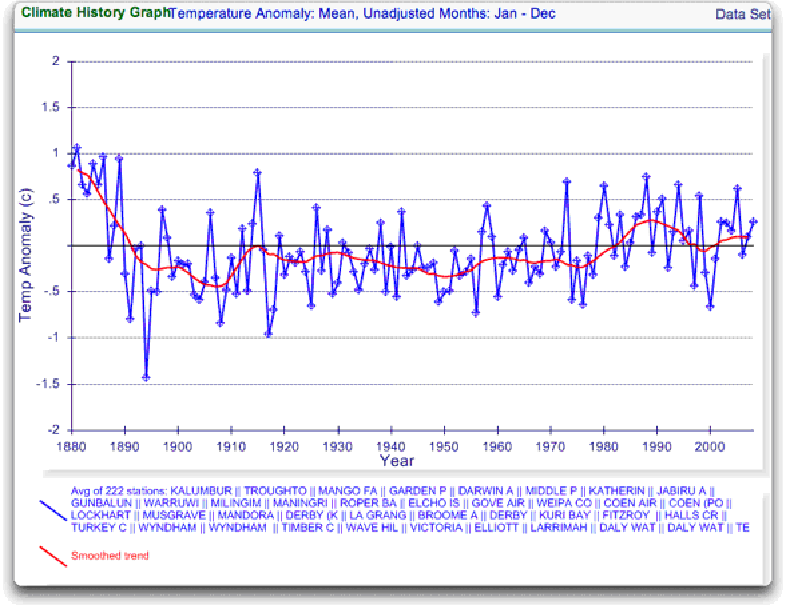
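The station selections behind Figures 2 and 3 can be sketched roughly as follows. This is a minimal illustration, not the procedure actually used for the figures: the post does not say how the records were combined, so the code assumes each station is converted to anomalies against its own mean before averaging. The function names and the toy records are hypothetical, and only the box limits come from the Figure 1 caption.

```python
from statistics import mean

# IPCC "Northern Australia" box quoted in the Figure 1 caption.
LON_MIN, LON_MAX = 110.0, 155.0
LAT_MIN, LAT_MAX = -30.0, -11.0


def in_region(lat, lon):
    """True if a station lies inside the 110E-155E, 30S-11S box."""
    return LAT_MIN <= lat <= LAT_MAX and LON_MIN <= lon <= LON_MAX


def regional_average(stations, must_reach=None):
    """Average per-station anomalies year by year.

    stations: list of dicts with 'lat', 'lon' and 'temps' ({year: annual mean, C}).
    must_reach: if given, keep only records whose last year is this year or later.
    Anomalies are taken against each station's own mean (an assumption;
    the post does not say how the records were combined).
    """
    by_year = {}
    for st in stations:
        temps = st["temps"]
        if not temps or not in_region(st["lat"], st["lon"]):
            continue
        if must_reach is not None and max(temps) < must_reach:
            continue
        base = mean(temps.values())
        for year, t in temps.items():
            by_year.setdefault(year, []).append(t - base)
    return {year: mean(vals) for year, vals in sorted(by_year.items())}


# Hypothetical toy records, not real GHCN values.
toy = [
    {"lat": -12.4, "lon": 130.9, "temps": {1998: 27.0, 1999: 27.2, 2000: 27.4}},
    {"lat": -20.0, "lon": 140.0, "temps": {1990: 24.0, 1991: 24.2}},
    {"lat": -40.0, "lon": 145.0, "temps": {1999: 12.0, 2000: 12.5}},  # outside box
]
print(regional_average(toy, must_reach=2000))
```

Dropping the `must_reach` argument keeps every station inside the box regardless of when its record ends.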

Still no similarity with IPCC. So I looked at every station in the area. That’s 222 stations. Here’s that result:

Figure 4. GHCN Raw Data, All stations in IPCC area above.

So you can see why Wibjorn was concerned. This looks nothing like the UN IPCC data, which came from the CRU, which was based on the GHCN data. Why the difference?

The answer is, these graphs all use the raw GHCN data. But the IPCC uses the “adjusted” data. GHCN adjusts the data to remove what it calls “inhomogeneities”. So on a whim I thought I’d take a look at the first station on the list, Darwin Airport, so I could see what an inhomogeneity might look like when it was at home. And I could find out how large the GHCN adjustment for Darwin inhomogeneities was.

First, what is an “inhomogeneity”? I can do no better than quote from GHCN:

Most long-term climate stations have undergone changes that make a time series of their observations inhomogeneous. There are many causes for the discontinuities, including changes in instruments, shelters, the environment around the shelter, the location of the station, the time of observation, and the method used to calculate mean temperature. Often several of these occur at the same time, as is often the case with the introduction of automatic weather stations that is occurring in many parts of the world. Before one can reliably use such climate data for analysis of longterm climate change, adjustments are needed to compensate for the nonclimatic discontinuities.

That makes sense. The raw data will have jumps from station moves and the like. We don’t want to think it’s warming just because the thermometer was moved to a warmer location. Unpleasant as it may seem, we have to adjust for those as best we can.

I always like to start with the rawest data, so I can understand the adjustments. At Darwin there are five separate individual station records that are combined to make up the final Darwin record. These are the individual records of stations in the area, which are numbered from zero to four:

Figure 5. Five individual temperature records for Darwin, plus station count (green line). This raw data is downloaded from GISS, but GISS use the GHCN raw data as the starting point for their analysis.

Darwin does have a few advantages over other stations with multiple records. There is a continuous record from 1941 to the present (Station 1). There is also a continuous record covering a century. Finally, the stations are in very close agreement over the entire period of the record. In fact, where there are multiple stations in operation they are so close that you can’t see the records behind Station Zero.

This is an ideal station, because it also illustrates many of the problems with the raw temperature station data.

- There is no one record that covers the whole period.

- The shortest record is only nine years long.

- There are gaps of a month and more in almost all of the records.

- It looks like there are problems with the data at around 1941.

- Most of the datasets are missing months.

- For most of the period there are few nearby stations.

- There is no one year covered by all five records.

- The temperature dropped over a six-year period, from a high in 1936 to a low in 1941. The station did move in 1941 … but what happened in the previous six years?

In resolving station records, it’s a judgment call. First off, you have to decide if what you are looking at needs any changes at all. In Darwin’s case, it’s a close call. The record seems to be screwed up around 1941, but not in the year of the move.

Also, although the 1941 temperature shift seems large, I see a similar sized shift from 1992 to 1999. Looking at the whole picture, I think I’d vote to leave it as it is. That’s always the best option when you don’t have other evidence. First do no harm.

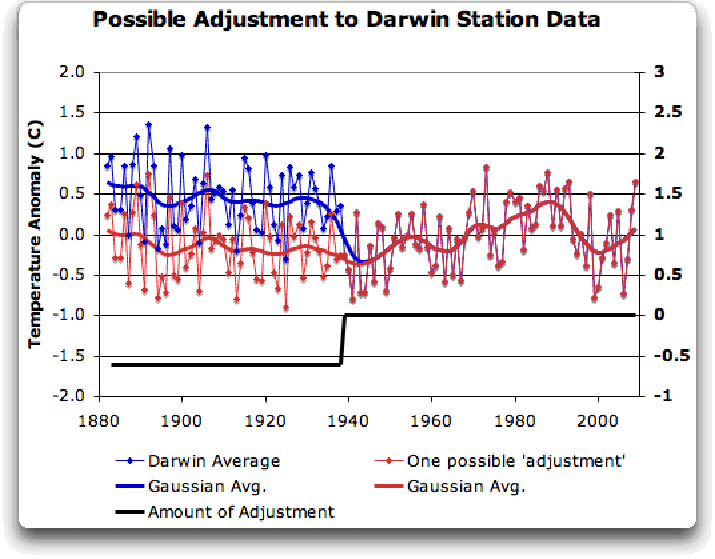

However, there’s a case to be made for adjusting it, particularly given the 1941 station move. If I decided to adjust Darwin, I’d do it like this:

Figure 6. A possible adjustment for Darwin. Black line shows the total amount of the adjustment, on the right scale, and shows the timing of the change.

I shifted the pre-1941 data down by about 0.6C. We end up with little change end to end in my “adjusted” data (shown in red), it’s neither warming nor cooling. However, it reduces the apparent cooling in the raw data. Post-1941, where the other records overlap, they are very close, so I wouldn’t adjust them in any way. Why should we adjust those, when they all show exactly the same thing?

OK, so that’s how I’d homogenize the data if I had to, but I vote against adjusting it at all. It only changes one station record (Darwin Zero), and the rest are left untouched.
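A single-step adjustment of the kind described above can be written in a few lines. This is only a sketch: the function name is hypothetical and the temperatures are made-up values chosen for illustration, with just the 1941 break and the 0.6C offset taken from the text.

```python
def shift_before(series, break_year, offset):
    """Return a copy of {year: temp} with every year before break_year shifted by offset."""
    return {
        year: (temp + offset if year < break_year else temp)
        for year, temp in series.items()
    }


# Hypothetical values for illustration only, not the Darwin record.
raw = {1939: 28.6, 1940: 28.5, 1941: 27.9, 1942: 28.0}
adjusted = shift_before(raw, break_year=1941, offset=-0.6)
print(adjusted)
```

Only the years before the break are touched, which matches leaving the post-1941 records alone.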

Then I went to look at what happens when the GHCN removes the “in-homogeneities” to “adjust” the data. Of the five raw datasets, the GHCN discards two, likely because they are short and duplicate existing longer records. The three remaining records are first “homogenized” and then averaged to give the “GHCN Adjusted” temperature record for Darwin.

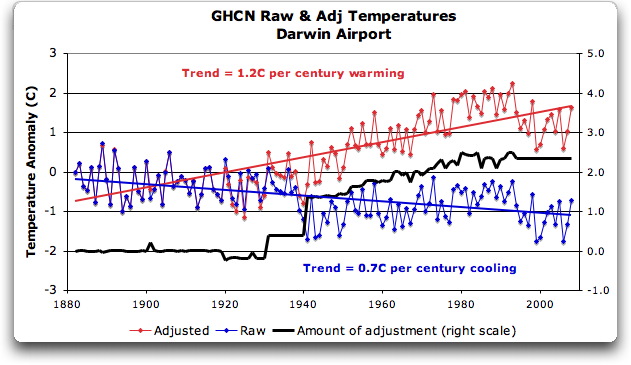

To my great surprise, here’s what I found. To explain the full effect, I am showing this with both datasets starting at the same point (rather than ending at the same point as they are often shown).

Figure 7. GHCN homogeneity adjustments to Darwin Airport combined record

YIKES! Before getting homogenized, temperatures in Darwin were falling at 0.7 Celsius per century … but after the homogenization, they were warming at 1.2 Celsius per century. And the adjustment that they made was over two degrees per century … when those guys “adjust”, they don’t mess around. And the adjustment is an odd shape, with the adjustment first going stepwise, then climbing roughly to stop at 2.4C.
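For readers who want to check per-century trends like these on their own copy of the data, here is a minimal sketch. It assumes an ordinary least-squares fit, which the post does not specify, and it runs on hypothetical straight-line series built to mimic the quoted trends rather than on the Darwin values.

```python
def trend_per_century(years, temps):
    """Ordinary least-squares slope of temps against years, in degrees per 100 years."""
    n = len(years)
    if n < 2 or n != len(temps):
        raise ValueError("need two or more paired (year, temperature) points")
    year_mean = sum(years) / n
    temp_mean = sum(temps) / n
    sxx = sum((y - year_mean) ** 2 for y in years)
    sxy = sum((y - year_mean) * (t - temp_mean) for y, t in zip(years, temps))
    return 100.0 * sxy / sxx


# Hypothetical straight lines, not real data.
years = list(range(1900, 2000))
raw = [28.0 - 0.007 * (y - 1900) for y in years]
adjusted = [28.0 + 0.012 * (y - 1900) for y in years]
adjustment = [a - r for a, r in zip(adjusted, raw)]

print(round(trend_per_century(years, raw), 2))
print(round(trend_per_century(years, adjusted), 2))
print(round(trend_per_century(years, adjustment), 2))
```

Because the fit is linear in the data, the trend of the adjustment series equals the adjusted trend minus the raw trend whenever both cover the same years.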

Of course, that led me to look at exactly how the GHCN “adjusts” the temperature data. Here’s what they say:

GHCN temperature data include two different datasets: the original data and a homogeneity-adjusted dataset. All homogeneity testing was done on annual time series. The homogeneity-adjustment technique used two steps.

The first step was creating a homogeneous reference series for each station (Peterson and Easterling 1994). Building a completely homogeneous reference series using data with unknown inhomogeneities may be impossible, but we used several techniques to minimize any potential inhomogeneities in the reference series.

…

In creating each year’s first difference reference series, we used the five most highly correlated neighboring stations that had enough data to accurately model the candidate station.

…

The final technique we used to minimize inhomogeneities in the reference series used the mean of the central three values (of the five neighboring station values) to create the first difference reference series.

Fair enough, that all sounds good. They pick five neighboring stations, and average them. Then they compare the average to the station in question. If it looks wonky compared to the average of the reference five, they check any historical records for changes, and if necessary, they homogenize the poor data mercilessly. I have some problems with what they do to homogenize it, but that’s how they identify the inhomogeneous stations.
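The “central three of five” step in the quoted description can be sketched as below. This is a simplified reading of the quoted text, not GHCN’s actual code: it skips the correlation-based choice of neighbors entirely, and the function names are hypothetical.

```python
from statistics import mean


def first_differences(series):
    """{year: value} -> {year: value minus the previous year's value}.

    A year is skipped when the previous year is missing.
    """
    return {y: series[y] - series[y - 1] for y in sorted(series) if y - 1 in series}


def reference_first_difference(neighbor_diffs, year):
    """Mean of the central three of five neighbor first differences for one year.

    neighbor_diffs: list of {year: first difference} dicts, one per neighbor.
    Returns None unless exactly five neighbors report that year.
    """
    values = sorted(d[year] for d in neighbor_diffs if year in d)
    if len(values) != 5:
        return None
    return mean(values[1:4])  # drop the lowest and highest value


# Hypothetical first differences for five neighbors in one year.
neighbors = [{1941: v} for v in (-2.0, 0.1, 0.2, 0.3, 5.0)]
print(reference_first_difference(neighbors, 1941))
```

Dropping the highest and lowest of the five values keeps a single wild neighbor from dominating the reference series.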

OK … but given the scarcity of stations in Australia, I wondered how they would find five “neighboring stations” in 1941 …

So I looked it up. The nearest station that covers the year 1941 is 500 km away from Darwin. Not only is it 500 km away, it is the only station within 750 km of Darwin that covers the 1941 time period. (It’s also a pub, Daly Waters Pub to be exact, but hey, it’s Australia, good on ya.) So there simply aren’t five stations to make a “reference series” out of to check the 1936-1941 drop at Darwin.
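A neighbor search like this one comes down to a great-circle distance check. The sketch below uses the haversine formula, and the coordinates are approximate values assumed for illustration rather than taken from the GHCN station inventory.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance in km between two points given in decimal degrees."""
    phi1, phi2 = radians(lat1), radians(lat2)
    dphi = phi2 - phi1
    dlam = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def stations_within(origin, stations, radius_km):
    """Names of stations no farther than radius_km from origin (lat, lon)."""
    return [
        name
        for name, (lat, lon) in stations.items()
        if great_circle_km(origin[0], origin[1], lat, lon) <= radius_km
    ]


# Approximate coordinates, assumed for illustration.
DARWIN = (-12.42, 130.89)
candidates = {"Daly Waters": (-16.25, 133.37)}

print(round(great_circle_km(*DARWIN, *candidates["Daly Waters"])))
print(stations_within(DARWIN, candidates, 750.0))
```

With these assumed coordinates the distance comes out at roughly 500 km, in line with the figure above. A real check would also have to filter the candidates by whether their records cover 1941.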

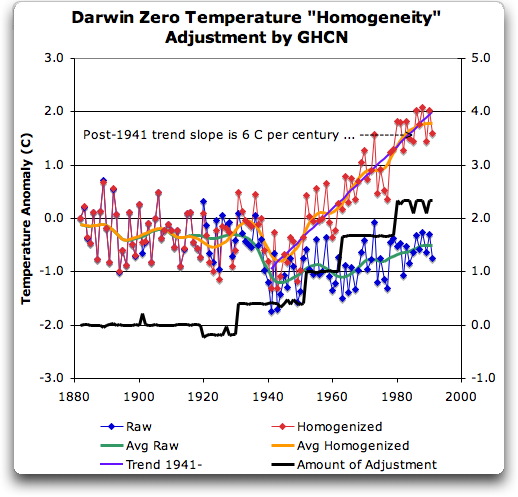

Intrigued by the curious shape of the average of the homogenized Darwin records, I then went to see how they had homogenized each of the individual station records. What made up that strange average shown in Fig. 7? I started at zero with the earliest record. Here is Station Zero at Darwin, showing the raw and the homogenized versions.

Figure 8. Darwin Zero Homogeneity Adjustments. Black line shows amount and timing of adjustments.

Yikes again, double yikes! What on earth justifies that adjustment? How can they do that? We have five different records covering Darwin from 1941 on. They all agree almost exactly. Why adjust them at all? They’ve just added a huge artificial totally imaginary trend to the last half of the raw data! Now it looks like the IPCC diagram in Figure 1, all right … but a six degree per century trend? And in the shape of a regular stepped pyramid climbing to heaven? What’s up with that?

Those, dear friends, are the clumsy fingerprints of someone messing with the data Egyptian style … they are indisputable evidence that the “homogenized” data has been changed to fit someone’s preconceptions about whether the earth is warming.

One thing is clear from this. People who say that “Climategate was only about scientists behaving badly, but the data is OK” are wrong. At least one part of the data is bad, too. The Smoking Gun for that statement is at Darwin Zero.

So once again, I’m left with an unsolved mystery. How and why did the GHCN “adjust” Darwin’s historical temperature to show radical warming? Why did they adjust it stepwise? Do Phil Jones and the CRU folks use the “adjusted” or the raw GHCN dataset? My guess is the adjusted one since it shows warming, but of course we still don’t know … because despite all of this, the CRU still hasn’t released the list of data that they actually use, just the station list.

Another odd fact, the GHCN adjusted Station 1 to match Darwin Zero’s strange adjustment, but they left Station 2 (which covers much of the same period, and as per Fig. 5 is in excellent agreement with Station Zero and Station 1) totally untouched. They only homogenized two of the three. Then they averaged them.

That way, you get an average that looks kinda real, I guess, it “hides the decline”.

Oh, and for what it’s worth, care to know the way that GISS deals with this problem? Well, they only use the Darwin data after 1963, a fine way of neatly avoiding the question … and also a fine way to throw away all of the inconveniently colder data prior to 1941. It’s likely a better choice than the GHCN monstrosity, but it’s a hard one to justify.

Now, I want to be clear here. The blatantly bogus GHCN adjustment for this one station does NOT mean that the earth is not warming. It also does NOT mean that the three records (CRU, GISS, and GHCN) are generally wrong either. This may be an isolated incident, we don’t know. But every time the data gets revised and homogenized, the trends keep increasing. Now GISS does their own adjustments. However, as they keep telling us, they get the same answer as GHCN gets … which makes their numbers suspicious as well.

And CRU? Who knows what they use? We’re still waiting on that one, no data yet …

What this does show is that there is at least one temperature station where the trend has been artificially increased to give a false warming where the raw data shows cooling. In addition, the average raw data for Northern Australia is quite different from the adjusted, so there must be a number of … mmm … let me say “interesting” adjustments in Northern Australia other than just Darwin.

And with the Latin saying “Falsus in uno, falsus in omnibus” (false in one, false in all) as our guide, until all of the station “adjustments” are examined, adjustments of CRU, GHCN, and GISS alike, we can’t trust anyone using homogenized numbers.

Regards to all, keep fighting the good fight,

w.

FURTHER READING:

My previous post on this subject.

The late and much missed John Daly, irrepressible as always.

More on Darwin history, it wasn’t Stevenson Screens.

NOTE: Figures 7 and 8 updated to fix a typo in the titles. 8:30PM PST 12/8 – Anthony

Why am I not surprised to see data “adjustments” and all kind of fudging of data being the hallmark of all “story lines” supporting AGW?

Back in the 90’s when computer models were first used to predict “catastrophic global warming”, the models could not be used for “hindcasting”, i.e. to simulate past known temperatures. The results were way out of line with known past temperatures. Mount Pinatubo erupted in the early 90’s and the subsequent year or two were significantly cooler because the volcanic eruption injected a significant amount of aerosols into the upper part of the atmosphere. This gave the modelers an idea. Pollution was the reason why models could not do “hindcasting”. Unfortunately there was no world-wide pollution data available. No problem for the modelers. They just introduced enough “pollution” into their models to make them simulate past temperatures accurately! While the overall idea that aerosols reduce global warming was correct, introducing it as an adjustable variable to make their models simulate reality accurately is not science, it is data fudging. I would say that data fudging is endemic to global warming “science”.

Nick Stokes (13:22:23) :

Willis,

I had the same experience as Martin (09:36:00). The homogenised data plotted from the GISS site doesn’t look like your Darwin 0 data. You’ve referenced everything else very well – could you please give a reference for this data, as shown in your final graph (red in Fig 7)?

[REPLY – He’s not showing homogenized NASA/GISS data, he’s showing homogenized NOAA/GHCN data. ~ Evan]

Thanks, Evan, you are correct.

Both NOAA (GHCN) and NASA (GISS) start with about the same raw data. But they “homogenize” it in very different ways. GISS just cut off the Darwin data before 1963. Why 1963? Probably there’s a reason, but we don’t know why they didn’t keep the data back to say 1941.

w.

PS – if I miss a question anyone would like answered, just ask it again.

I don’t understand why certain people are so biased into believing the global warming hoax. The reality is that there are a lot of people that think a one world government and currency would be a good thing.

Willis,

Following my request for a reference (13:22:23), I now see that you have given a source here weschenbach (12:57:46). But do you have an explanation for why the plot as shown on the GISS interactive site for homogeneity adjusted data for Darwin Airport does not appear to be rising (as your Fig 7 is)? In fact, if you compare their plots of raw and homogeneity-adjusted for Darwin Airport 1941-2009, there’s virtually no change at all.

Willis, I managed to confuse myself with the two scales that you have on Darwin zero. The right hand scale seems to be the “amount of adjustment” scale. And the left seems to be the temperature anomaly scale. But the “amount of adjustment” scale is half the change of the anomaly scale. This makes it appear that the amount of adjustment is twice as large as what is seen in the resulting anomaly. I’ve unconfused myself, but it might be worthwhile to use the same relative scale in the future.

Still, fine job on your part. Thanks.

Willis (and parenthetically Evan)

I understand that the homogenized data shown is NOT the GISS homogenized data. And you did label it GHCN in the text. But what I, and I think Nick, don’t know is the site where you got it. I found the raw data in the GHCN v2.mean.z file. (I checked the two long series, 0 and 1, and they have the same values as the GISS raw data.) But where is the GHCN homogenized series data?

I got the raw data at the ftp site:

ftp://ftp.ncdc.noaa.gov/pub/data/ghcn/v2/

I just could find anything around there for the homogenized data. Help?

Thanks.

I don’t think this “proves” that someone went in and adjusted everything by hand to force a warming trend on the data…it does, however, prove that – at a minimum – the statistical training techniques they used are very poorly conceived.

I saw a lecture less than a month ago that talked about GISS’s homogenization techniques. There are time-series driven statistical techniques in common use all around the world (not just in climate studies) that try to keep jumps in the data from influencing analysis. There’s a +/- system that looks at the residuals above a trend line to see if there are unusual runs of above or below-average points and makes stepwise adjustments to stop that from happening…the problem may be that the weather/climate has significant auto-correlation (it may not be uncommon for 20 years to be colder and 20 years to be warmer based on oscillations in solar activity, the PDO, the AMO and vulcanism).

I think what we have here is a group of people starting with a few base assumptions and choosing the wrong methods for their data analysis.

I think the way to adjust the temperature record to account for inhomogeneities is to do historical research on each data site and only make an adjustment if you can find a physical explanation. That’s how a scientist should proceed…you need to begin with first principles and not let statistical methods run away with your data unchecked.

Amazing discovery Willis. Keep up the good work.

PS See NIST for Subsidiary SI Units

(not “Celcius”).

Martin:

“I just could[nt?] find anything around there for the homogenized data. Help?”

Wouldn’t that be the file v2.mean_adj.Z (two lines down at /pub/data/ghcn/v2/)?

This is exactly the way to get them.

Request access to as many raw data as possible and show how they have been tweaked.

Nobody has a right to “own” and hide such raw data.

Nor should anyone be allowed to change them and then present the uneducated public with an “optimized” version and suggest what to do.

Such processed data and results are nothing but garbage.

To do it this way is a crime, not even close to any kind of science.

Willis: “They’ve just added a huge artificial totally imaginary trend to the last half of the raw data!”

JJ: “You don’t know that. You should not claim to know that which you do not. That is Teamspeak, leave it to the Team.”

I agree. McIntyre’s caution in this regard is the model to follow. We must not open ourselves to counterpunching by making roundhouse swings.

What really makes me laugh are news articles that say:

“The past decade is the warmest decade in 40 years” or “the average temperature of this decade is warmer than the previous decade”

While both are correct, it hides the fact that no warming has taken place this decade. Heck, it could even have cooled significantly in this decade and the statement would still be correct given the rapid warming in the 90’s. They are very misleading statements, but technically correct.

Nick Stokes (13:50:05) :

They are using the GISS adjustment, which means cutting off the pre-1963 data and adding a small (0.1C) adjustment to a few years.

I was looking at the GHCN adjustment.

Tilo Reber (14:00:49) :

Aw, nuts. I had noticed that and made a new graph, but I didn’t get it into the final draft. The correct graph is here. Evan, perhaps you could download it (or just change the link) to fix the head post?

Many thanks,

w.

http://homepage.mac.com/williseschenbach/Fig_9_darwin_and_adj.jpg

Chris Fountain (04:47:41) :

You Aussies are always ahead of the curve …

The station was at the Post Office until 1941.

Good things about a Willis Eschenbach article on WUWT:

1. Topical

2. Well-organized

3. Educational

4. Errors admitted and corrected

5. Willis is incredibly good-humored and patient

6. He tries to answer all questions

Bad things about a Willis Eschenbach article:

1. Some of the longest d….d comment threads on WUWT!

Willis, I posted a link to your piece on a generally warmist site I’ve been arguing on and it got this criticism. I don’t agree with it, the guy can’t spell your name for a start… but thought it might help you tighten your argumentation to make such dismissals more difficult. If you feel like responding to it here, I’d like to post it there if that’s ok with you.

1. Eisenbach graphs the unadjusted data, and shows that it doesn’t match the adjusted data.

2. Eisenbach then asks why adjustments were made to this data, and then proceeds to completely fail to answer his own question by taking a sort of vague, vanilla explanation of why adjustments are made and stating that that one paragraph does not seem to apply to the record for this particular airport.

3. The train has already gone off the tracks at this point. . . a more meticulous person might have explored the possibility that the adjustments were possibly made to account for other things. Eisenbach seems to just jump to the conclusion that they must have been pulled out of a hat.

4. Eisenbach then makes a motion toward throwing them a bone by making one adjustment of his own. However, he does not give any clear explanation for this adjustment – anyone who was following his line of thought up to this point should have to conclude that he just pulled it out of a hat.

5. He follows that up with an explanation for why he wouldn’t do any further adjustments that shouldn’t have anything to do with the way climatologists normally decide to do adjustments. The decision on how to handle data like this should be made for consistent, quantitative reasons, never because someone’s just eyeballing a graph and tweaking it until they think it looks right.

5a. In the process of doing the above, he does an interesting thing: He quoted a GHCN paragraph that indicated that adjustments are made for multiple factors, including but not limited to station location. He agreed that that made sense, so presumably he thought all of it made sense. However, he followed that up with a detailed analysis that is based on the presumption that station location is the only valid reason to make an adjustment.

So now we’ve hit the second place where Mr. Eisenbach can’t even seem to agree with himself on how things should be done, and we’re still only halfway through the post. . .

MIke (12:14:18) :

“This would require the logs and the original temperature readings. Do we have those yet?”

We all need to do this.

“We all need to get it together”.

The data, that is.

Get that together, and we’ve got something we can work with.

Maybe it would be best to go with the Japanese JMA temperature dataset instead of the “western” nations’ sets.

Willis,

“Well, yes, I do know that it is huge, it is artificial, and that it is totally imaginary.”

No you don’t. Granted, it is large. And all adjustments are ‘artificial’. But totally imaginary? You don’t know that. Not yet.

“We have no less than five different thermometers at Darwin, all of which agree that the temperature did not climb at an unheard of rate (6 C per century) after 1941. Not sure how much clearer that could be.”

If it is legitimate to adjust one thermometer, and it may very well be, then it may also be legitimate to adjust all thermometers in the area similarly. If that is the case here, then what you may have discovered is not that they illegitimately applied an enormous false adjustment to two of the thermometers in the average, but that they failed to apply a huge legitimate adjustment to the third. Up goes the AGW.

You don’t know. You have asked some very good questions here. Time to pose those same questions directly to the people who damn well should have the answers … then draw conclusions as to effect and motive.

Until then, be content that you have laid out some important facts in a fashion that the layman can readily grasp:

1) The instrumental record is not merely the wholly objective exercise of reading a thermometer and writing down the number accurately.

2) The instrumental record is subjected to various adjustments, the magnitude of which easily dwarfs the alleged ‘global warming’ signal.

3) These adjustments are not well documented outside of the small clique of researchers who compile these datasets – and perhaps not even within those circles.

4) The propriety of these adjustments cannot be ascertained without access to both the raw data, and very detailed method descriptions.

You’re doing good work. Don’t ruin it by overreaching.

JJ

You say

“And CRU? Who knows what they use? We’re still waiting on that one, no data yet …”

The CRU said (before the server was taken offline because of the hack – http://www.cru.uea.ac.uk/cru/data/)

“The various datasets on the CRU website are provided for all to use, provided the sources are acknowledged. Acknowledgement should preferably be by citing one or more of the papers referenced on the appropriate page. The website can also be acknowledged if deemed necessary. CRU will endeavour to update the majority of the data pages at timely intervals although this cannot be guaranteed by specific dates.”

Who is lying?

Just took a look at the Australian Bureau of Meteorology figures for Darwin. They agree almost exactly (but not quite) with the GHCN unadjusted figures and are far, far from the GHCN adjusted figures. The BOM figures are here

It would be pretty hard to justify all those warming adjustments and few, if any cooling ones. Most everything I can think of would actually warm the area, such as:

-addition of parking lots, runways, etc

-clearing surrounding vegetation for new structures/runways

-urbanization of surroundings

What could possibly account for the massive warming adjustments made? There are no noticeable spikes in the temperature plot. Things I could think of would be resurfacing with high albedo material or moving the station to a bushier location. While those might explain one such adjustment, there are 3-4 of those, each one having to build on the next. I find that highly unlikely. Again, the vast majority of any adjustment I can think of would be cooling adjustments. It sure smells like data manipulation to me.

It would be interesting to do this analysis on many other stations.

Hi Willis

Can I just confirm that I am right with respect to the GHCN file you got the adjusted (homogenized) data from please (as Martin and Nick have previously queried)?

If GISS have not done a closely similar adjustment then you have opened a real can of worms.

Regards

A marvelous job. Well done.

The base data, GHCN, have also been incredibly biased via selective thermometer deletions.

A similar analysis on some of those effects would be “a beautiful thing”…

http://chiefio.wordpress.com/2009/11/03/ghcn-the-global-analysis/