by Willis Eschenbach

People keep saying “Yes, the Climategate scientists behaved badly. But that doesn’t mean the data is bad. That doesn’t mean the earth is not warming.”

Darwin Airport – by Dominic Perrin via Panoramio

Let me start with the second objection first. The earth has generally been warming since the Little Ice Age, around 1650. There is general agreement that the earth has warmed since then. See e.g. Akasofu. Climategate doesn’t affect that.

The first question, the integrity of the data, is different. People say “Yes, they destroyed emails, and hid from Freedom of Information Acts, and messed with proxies, and fought to keep other scientists’ papers out of the journals … but that doesn’t affect the data, the data is still good.” Which sounds reasonable.

There are three main global temperature datasets. One is at the CRU, the Climatic Research Unit of the University of East Anglia, where we’ve been trying to get access to the raw numbers. One is at NOAA/GHCN, the Global Historical Climatology Network. The final one is at NASA/GISS, the Goddard Institute for Space Studies. The three groups take raw data, and they “homogenize” it to remove things like when a station was moved to a warmer location and there’s a 2C jump in the temperature. The three global temperature records are usually called CRU, GISS, and GHCN. Both GISS and CRU, however, get almost all of their raw data from GHCN. All three produce very similar global historical temperature records from the raw data.

So I’m still on my multi-year quest to understand the climate data. You never know where this data chase will lead. This time, it has ended me up in Australia. I got to thinking about Professor Wibjorn Karlen’s statement about Australia that I quoted here:

Another example is Australia. NASA [GHCN] only presents 3 stations covering the period 1897-1992. What kind of data is the IPCC Australia diagram based on?

If any trend it is a slight cooling. However, if a shorter period (1949-2005) is used, the temperature has increased substantially. The Australians have many stations and have published more detailed maps of changes and trends.

The folks at CRU told Wibjorn that he was just plain wrong. Here’s what they said is right, the record that Wibjorn was talking about, Fig. 9.12 in the UN IPCC Fourth Assessment Report, showing Northern Australia:

Figure 1. Temperature trends and model results in Northern Australia. Black line is observations (from Fig. 9.12 of the UN IPCC Fourth Assessment Report). Covers the area from 110E to 155E, and from 30S to 11S. Based on the CRU land temperature data.

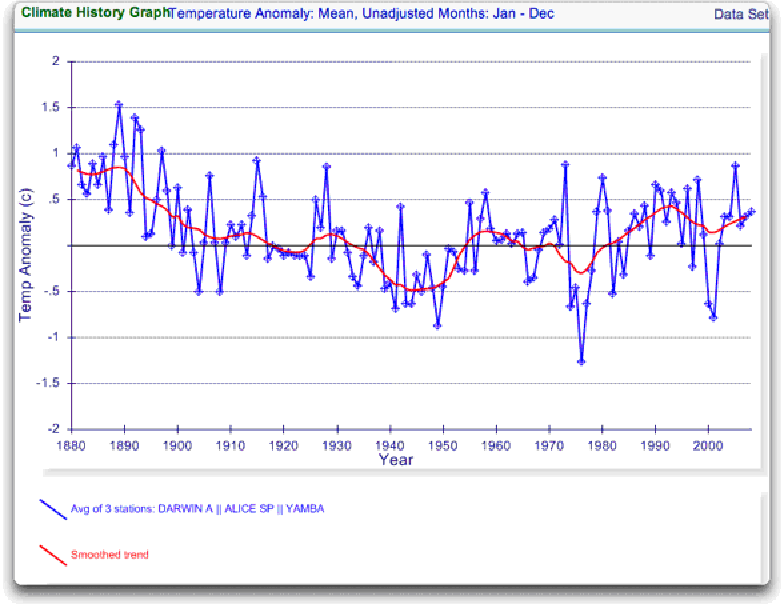

One of the things that was revealed in the released CRU emails is that the CRU basically uses the Global Historical Climatology Network (GHCN) dataset for its raw data. So I looked at the GHCN dataset. There, I find three stations in North Australia as Wibjorn had said, and nine stations in all of Australia, that cover the period 1900-2000. Here is the average of the GHCN unadjusted data for those three Northern stations, from AIS:

Figure 2. GHCN Raw Data, All 100-yr stations in IPCC area above.

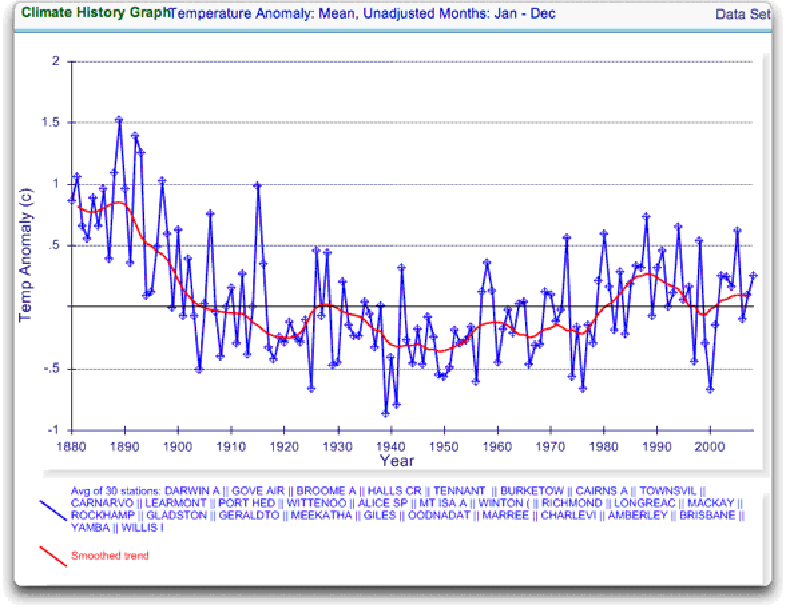
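For readers who want to try this kind of average themselves, here is a minimal sketch. The post does not say exactly how the three records were combined, so this assumes each record is converted to anomalies from its own mean before averaging. The function name `average_stations` and the sample numbers are made up for illustration and are not GHCN data.

```python
from statistics import mean


def average_stations(records):
    """Average several station records year by year.

    records: list of dicts mapping year -> annual mean temperature (C).
    Assumption: each record is converted to anomalies from its own mean,
    so a warm site and a cool site can be combined. A year missing from
    a record is simply skipped for that record.
    """
    anomalies = []
    for rec in records:
        base = mean(rec.values())
        anomalies.append({yr: t - base for yr, t in rec.items()})
    years = sorted(set().union(*anomalies))
    return {yr: mean(a[yr] for a in anomalies if yr in a) for yr in years}


# Made-up values for two hypothetical stations, not real GHCN numbers.
station_a = {1900: 28.0, 1901: 28.2, 1902: 27.8}
station_b = {1901: 26.1, 1902: 25.9}
combined = average_stations([station_a, station_b])
```

Here 1900 is covered by only one station, so its value comes from that station alone; the other two years are the mean of both anomalies.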

So once again Wibjorn is correct, this looks nothing like the corresponding IPCC temperature record for Australia. But it’s too soon to tell. Professor Karlen is only showing 3 stations. Three is not a lot of stations, but that’s all of the century-long Australian records we have in the IPCC specified region. OK, we’ve seen the longest station records, so let’s throw more records into the mix. Here’s every station in the UN IPCC specified region which contains temperature records that extend up to the year 2000 no matter when they started, which is 30 stations.

Figure 3. GHCN Raw Data, All stations extending to 2000 in IPCC area above.

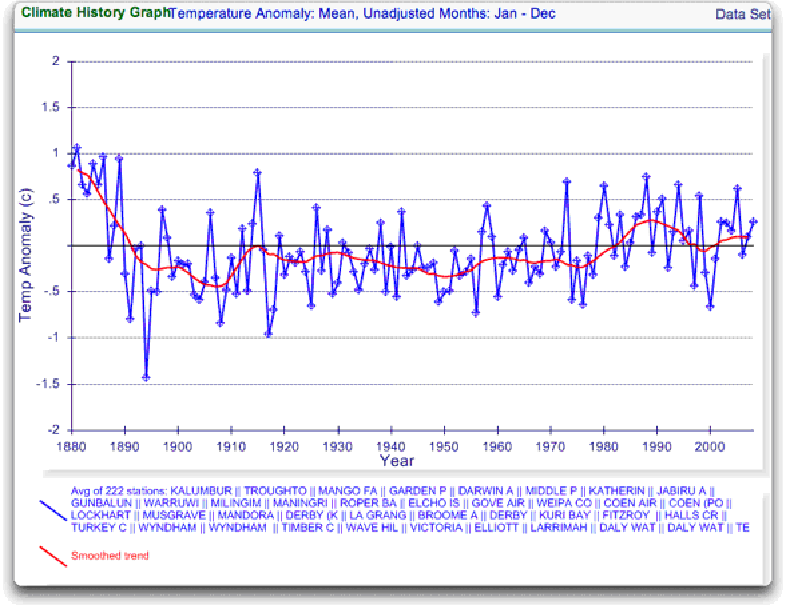

Still no similarity with IPCC. So I looked at every station in the area. That’s 222 stations. Here’s that result:

Figure 4. GHCN Raw Data, All stations extending to 2000 in IPCC area above.

So you can see why Wibjorn was concerned. This looks nothing like the UN IPCC data, which came from the CRU, which was based on the GHCN data. Why the difference?

The answer is, these graphs all use the raw GHCN data. But the IPCC uses the “adjusted” data. GHCN adjusts the data to remove what it calls “inhomogeneities”. So on a whim I thought I’d take a look at the first station on the list, Darwin Airport, so I could see what an inhomogeneity might look like when it was at home. And I could find out how large the GHCN adjustment for Darwin inhomogeneities was.

First, what is an “inhomogeneity”? I can do no better than quote from GHCN:

Most long-term climate stations have undergone changes that make a time series of their observations inhomogeneous. There are many causes for the discontinuities, including changes in instruments, shelters, the environment around the shelter, the location of the station, the time of observation, and the method used to calculate mean temperature. Often several of these occur at the same time, as is often the case with the introduction of automatic weather stations that is occurring in many parts of the world. Before one can reliably use such climate data for analysis of longterm climate change, adjustments are needed to compensate for the nonclimatic discontinuities.

That makes sense. The raw data will have jumps from station moves and the like. We don’t want to think it’s warming just because the thermometer was moved to a warmer location. Unpleasant as it may seem, we have to adjust for those as best we can.

I always like to start with the rawest data, so I can understand the adjustments. At Darwin there are five separate individual station records that are combined to make up the final Darwin record. These are the individual records of stations in the area, which are numbered from zero to four:

Figure 5. Five individual temperature records for Darwin, plus station count (green line). This raw data is downloaded from GISS, but GISS use the GHCN raw data as the starting point for their analysis.

Darwin does have a few advantages over other stations with multiple records. There is a continuous record from 1941 to the present (Station 1). There is also a continuous record covering a century. Finally, the stations are in very close agreement over the entire period of the record. In fact, where there are multiple stations in operation they are so close that you can’t see the records behind Station Zero.

This is an ideal station, because it also illustrates many of the problems with the raw temperature station data.

- There is no one record that covers the whole period.

- The shortest record is only nine years long.

- There are gaps of a month and more in almost all of the records.

- It looks like there are problems with the data at around 1941.

- Most of the datasets are missing months.

- For most of the period there are few nearby stations.

- There is no one year covered by all five records.

- The temperature dropped over a six year period, from a high in 1936 to a low in 1941. The station did move in 1941 … but what happened in the previous six years?

In resolving station records, it’s a judgment call. First off, you have to decide if what you are looking at needs any changes at all. In Darwin’s case, it’s a close call. The record seems to be screwed up around 1941, but not in the year of the move.

Also, although the 1941 temperature shift seems large, I see a similar sized shift from 1992 to 1999. Looking at the whole picture, I think I’d vote to leave it as it is, that’s always the best option when you don’t have other evidence. First do no harm.

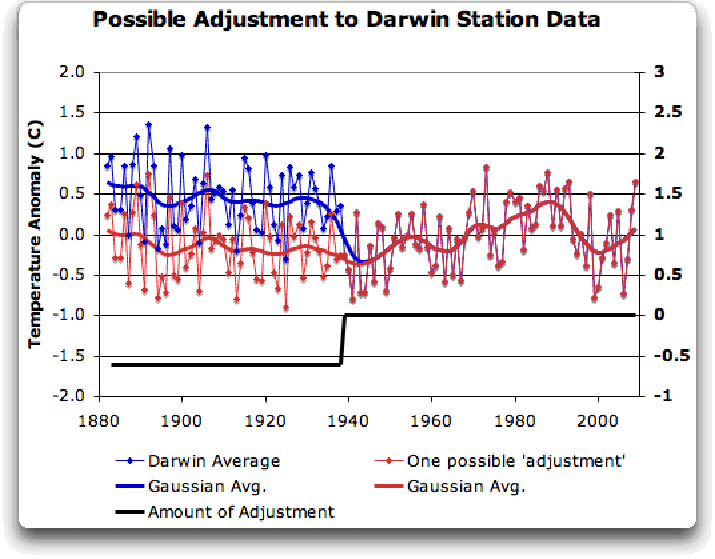

However, there’s a case to be made for adjusting it, particularly given the 1941 station move. If I decided to adjust Darwin, I’d do it like this:

Figure 6 A possible adjustment for Darwin. Black line shows the total amount of the adjustment, on the right scale, and shows the timing of the change.

I shifted the pre-1941 data down by about 0.6C. We end up with little change end to end in my “adjusted” data (shown in red), it’s neither warming nor cooling. However, it reduces the apparent cooling in the raw data. Post-1941, where the other records overlap, they are very close, so I wouldn’t adjust them in any way. Why should we adjust those, they all show exactly the same thing.
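The single-step adjustment described above can be sketched in a few lines. This is only an illustration: the 1941 break year and the 0.6C offset come from the text, while the function name `step_adjust` and the temperatures are invented.

```python
def step_adjust(record, break_year, offset):
    """Shift every value before break_year by offset; leave the rest alone."""
    return {yr: (t + offset if yr < break_year else t)
            for yr, t in record.items()}


# Made-up annual means around the 1941 station move, not the Darwin record.
raw = {1939: 29.0, 1940: 28.9, 1941: 28.3, 1942: 28.4}
adjusted = step_adjust(raw, break_year=1941, offset=-0.6)
```

Only the years before 1941 move; everything from 1941 on is returned unchanged, which is the point of the argument above.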

OK, so that’s how I’d homogenize the data if I had to, but I vote against adjusting it at all. It only changes one station record (Darwin Zero), and the rest are left untouched.

Then I went to look at what happens when the GHCN removes the “in-homogeneities” to “adjust” the data. Of the five raw datasets, the GHCN discards two, likely because they are short and duplicate existing longer records. The three remaining records are first “homogenized” and then averaged to give the “GHCN Adjusted” temperature record for Darwin.

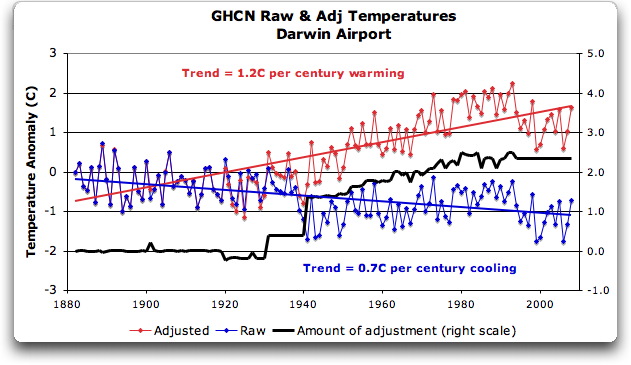

To my great surprise, here’s what I found. To explain the full effect, I am showing this with both datasets starting at the same point (rather than ending at the same point as they are often shown).

Figure 7. GHCN homogeneity adjustments to Darwin Airport combined record

YIKES! Before getting homogenized, temperatures in Darwin were falling at 0.7 Celsius per century … but after the homogenization, they were warming at 1.2 Celsius per century. And the adjustment that they made was over two degrees per century … when those guys “adjust”, they don’t mess around. And the adjustment is an odd shape, with the adjustment first going stepwise, then climbing roughly to stop at 2.4C.
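Trends like these are usually computed as an ordinary least-squares slope. The post does not state the fitting method, so the sketch below assumes a plain linear fit; `trend_per_century` is a hypothetical helper, and the two series are synthetic straight lines built to have the slopes quoted above, not the Darwin data.

```python
def trend_per_century(series):
    """Ordinary least-squares slope of a year -> temperature series, in C per century."""
    years = list(series)
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(series.values()) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (series[x] - mean_y) for x in years)
    return 100.0 * sxy / sxx


# Synthetic straight lines with the quoted slopes (assumed, for illustration only).
raw = {yr: 29.0 - 0.007 * (yr - 1900) for yr in range(1900, 2000)}
adjusted = {yr: 29.0 + 0.012 * (yr - 1900) for yr in range(1900, 2000)}
adjustment = {yr: adjusted[yr] - raw[yr] for yr in raw}

raw_trend = trend_per_century(raw)
adjusted_trend = trend_per_century(adjusted)
adjustment_trend = trend_per_century(adjustment)
```

When both series cover the same years, the trend of the adjustment is just the difference between the adjusted and raw trends.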

Of course, that led me to look at exactly how the GHCN “adjusts” the temperature data. Here’s what they say

GHCN temperature data include two different datasets: the original data and a homogeneity- adjusted dataset. All homogeneity testing was done on annual time series. The homogeneity- adjustment technique used two steps.

The first step was creating a homogeneous reference series for each station (Peterson and Easterling 1994). Building a completely homogeneous reference series using data with unknown inhomogeneities may be impossible, but we used several techniques to minimize any potential inhomogeneities in the reference series.

…

In creating each year’s first difference reference series, we used the five most highly correlated neighboring stations that had enough data to accurately model the candidate station.

…

The final technique we used to minimize inhomogeneities in the reference series used the mean of the central three values (of the five neighboring station values) to create the first difference reference series.

Fair enough, that all sounds good. They pick five neighboring stations, and average them. Then they compare the average to the station in question. If it looks wonky compared to the average of the reference five, they check any historical records for changes, and if necessary, they homogenize the poor data mercilessly. I have some problems with what they do to homogenize it, but that’s how they identify the inhomogeneous stations.
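To make the quoted procedure concrete, here is a rough sketch of a first difference reference value taken as the mean of the central three of five neighbor values. It is not GHCN's code. It assumes the five neighbors have already been chosen (the correlation-based selection is left out), and the function names and numbers are invented.

```python
def first_differences(series):
    """Year-to-year changes: {year: value[year] - value[year - 1]}."""
    return {yr: series[yr] - series[yr - 1]
            for yr in series if yr - 1 in series}


def reference_first_difference(neighbor_diffs, year):
    """Mean of the central three of five neighbor first differences.

    neighbor_diffs: list of first-difference dicts, one per neighbor.
    Returns None unless exactly five neighbors have a value for that year.
    """
    values = sorted(fd[year] for fd in neighbor_diffs if year in fd)
    if len(values) != 5:
        return None
    return sum(values[1:4]) / 3  # drop the lowest and the highest value


# Made-up first differences for 1941 at five hypothetical neighbors.
neighbors = [{1941: 0.1}, {1941: -0.2}, {1941: 0.3}, {1941: 2.0}, {1941: 0.0}]
ref_1941 = reference_first_difference(neighbors, 1941)
too_few = reference_first_difference(neighbors[:4], 1941)
```

Dropping the highest and lowest value keeps one wild neighbor (the 2.0 here) from dominating the reference, and the function returns nothing at all when five neighbors are not available.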

OK … but given the scarcity of stations in Australia, I wondered how they would find five “neighboring stations” in 1941 …

So I looked it up. The nearest station that covers the year 1941 is 500 km away from Darwin. Not only is it 500 km away, it is the only station within 750 km of Darwin that covers the 1941 time period. (It’s also a pub, Daly Waters Pub to be exact, but hey, it’s Australia, good on ya.) So there simply aren’t five stations to make a “reference series” out of to check the 1936-1941 drop at Darwin.
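The neighbor search itself is a simple great-circle distance check. The sketch below uses the haversine formula. The coordinates are approximate values I have assumed for the two towns, the second town is included only to exercise the function, and none of this is read from the GHCN station inventory.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance in km between two points given in decimal degrees."""
    p1, p2 = radians(lat1), radians(lat2)
    dlat = p2 - p1
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(p1) * cos(p2) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def stations_within(center, stations, radius_km):
    """Names of the stations no farther than radius_km from center (lat, lon)."""
    return [name for name, (lat, lon) in stations.items()
            if great_circle_km(center[0], center[1], lat, lon) <= radius_km]


# Approximate coordinates (assumed), south latitudes negative.
darwin = (-12.46, 130.84)
candidates = {"Daly Waters": (-16.25, 133.37), "Alice Springs": (-23.70, 133.88)}
daly_waters_km = great_circle_km(*darwin, *candidates["Daly Waters"])
nearby = stations_within(darwin, candidates, radius_km=750)
```

With these coordinates Daly Waters comes out at roughly 500 km from Darwin, in line with the figure above.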

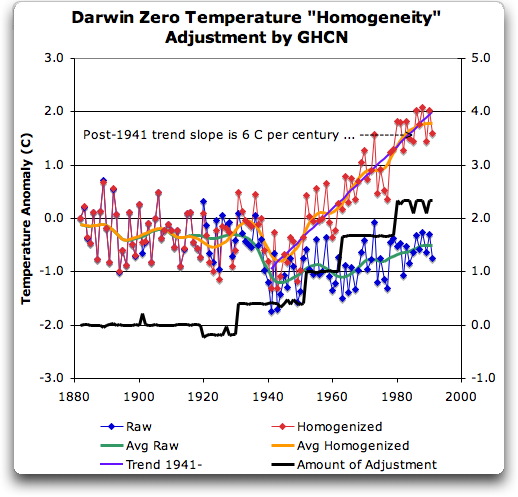

Intrigued by the curious shape of the average of the homogenized Darwin records, I then went to see how they had homogenized each of the individual station records. What made up that strange average shown in Fig. 7? I started at zero with the earliest record. Here is Station Zero at Darwin, showing the raw and the homogenized versions.

Figure 8 Darwin Zero Homogeneity Adjustments. Black line shows amount and timing of adjustments.

Yikes again, double yikes! What on earth justifies that adjustment? How can they do that? We have five different records covering Darwin from 1941 on. They all agree almost exactly. Why adjust them at all? They’ve just added a huge artificial totally imaginary trend to the last half of the raw data! Now it looks like the IPCC diagram in Figure 1, all right … but a six degree per century trend? And in the shape of a regular stepped pyramid climbing to heaven? What’s up with that?

Those, dear friends, are the clumsy fingerprints of someone messing with the data Egyptian style … they are indisputable evidence that the “homogenized” data has been changed to fit someone’s preconceptions about whether the earth is warming.

One thing is clear from this. People who say that “Climategate was only about scientists behaving badly, but the data is OK” are wrong. At least one part of the data is bad, too. The Smoking Gun for that statement is at Darwin Zero.

So once again, I’m left with an unsolved mystery. How and why did the GHCN “adjust” Darwin’s historical temperature to show radical warming? Why did they adjust it stepwise? Do Phil Jones and the CRU folks use the “adjusted” or the raw GHCN dataset? My guess is the adjusted one since it shows warming, but of course we still don’t know … because despite all of this, the CRU still hasn’t released the list of data that they actually use, just the station list.

Another odd fact, the GHCN adjusted Station 1 to match Darwin Zero’s strange adjustment, but they left Station 2 (which covers much of the same period, and as per Fig. 5 is in excellent agreement with Station Zero and Station 1) totally untouched. They only homogenized two of the three. Then they averaged them.

That way, you get an average that looks kinda real, I guess, it “hides the decline”.

Oh, and for what it’s worth, care to know the way that GISS deals with this problem? Well, they only use the Darwin data after 1963, a fine way of neatly avoiding the question … and also a fine way to throw away all of the inconveniently colder data prior to 1941. It’s likely a better choice than the GHCN monstrosity, but it’s a hard one to justify.

Now, I want to be clear here. The blatantly bogus GHCN adjustment for this one station does NOT mean that the earth is not warming. It also does NOT mean that the three records (CRU, GISS, and GHCN) are generally wrong either. This may be an isolated incident, we don’t know. But every time the data gets revised and homogenized, the trends keep increasing. Now GISS does their own adjustments. However, as they keep telling us, they get the same answer as GHCN gets … which makes their numbers suspicious as well.

And CRU? Who knows what they use? We’re still waiting on that one, no data yet …

What this does show is that there is at least one temperature station where the trend has been artificially increased to give a false warming where the raw data shows cooling. In addition, the average raw data for Northern Australia is quite different from the adjusted, so there must be a number of … mmm … let me say “interesting” adjustments in Northern Australia other than just Darwin.

And with the Latin saying “Falsus in unum, falsus in omis” (false in one, false in all) as our guide, until all of the station “adjustments” are examined, adjustments of CRU, GHCN, and GISS alike, we can’t trust anyone using homogenized numbers.

Regards to all, keep fighting the good fight,

w.

FURTHER READING:

My previous post on this subject.

The late and much missed John Daly, irrepressible as always.

More on Darwin history, it wasn’t Stevenson Screens.

NOTE: Figures 7 and 8 updated to fix a typo in the titles. 8:30PM PST 12/8 – Anthony

It’s a sad day for British Science!

I think a major story will be the leaker, more so than the leak. You will find that the IPCC etc will soon not be at all interested in investigating it. Neither will UEA. The investigators may be under intense pressure to not release information about the leak. This explains the intense desire of the AGW to make sure the public thinks that the KGB/goblins etc were responsible. I think that, if it is found that it was an internal leak, the IPCC and all associated with it might as well disband and go home.

I have just asked the Met Office if the data they have made available is raw or processed (e.g. to correct non-warming). They referred me to this statement:

“The data that we are providing is the database used to produce the global temperature series. Some of these data are the original underlying observations and some are observations adjusted to account for non climatic influences, for example changes in observations methods.”

So now I know. It’s the non-climatic influences I’m worried about…

I hope you guys can find examples where clear comparisons can be made along the lines of the current post.

A few months after Pearl Harbour, the Japanese bombed Darwin flat. Some good wrecks in the harbour 🙂

Never was a single smoking gun more clearly and devastatingly exposed.

Major kudos to Mr. Eschenbach for his outstanding and meticulous effort.

But: Now what ?? . . .

Given the magnitude and extent of this demonstrated ”artificial adjustment”, as already mentioned the ”false in one false in all” assumption is a fair starting point as far as reasonable suspicions. But to have a shot at convincing the general public let alone the MSM, I expect a significant number of other stations around the world will have to be shown to have undergone similar large and unjustified ”extended warping” of the data.

This is what computers are good at (IF the software is professionally done):

Seems like it should be possible to write a program that would take as input:

[1] The totally unadjusted raw data for individual stations; and:

[2] The end-result data after all tweaking by CRU, GISS, & GHCN.

Minor SIDEBAR detail:

Of course 1st you have to actually GET both sets of data. . .

Ideal program output would then be graphical comparison along the lines of the excellent presentation in this thread start. . . . . Having said that, as someone who spent 25 years on a complex software engineering project, I immediately add:

Yes: I am aware that done right, this would be a non-trivial software project.

And: Also recognize as was pointed out in prior comment by TheSkyIsFalling that metadata giving reasons for real-world adjustments for individual stations would need to be reviewed.

OTOH:

Surely would not need to do a huge number of stations world wide to reasonably demonstrate and prove a pervasive smoking gun if similar results were common; i.e.:

Seems like a few dozen or so similar examples would start to be pretty overwhelming hard evidence.
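As a very rough starting point for the kind of program described above, the sketch below takes raw and adjusted records per station and flags the stations whose adjustment varies by more than a chosen amount. The function names, the threshold, and the sample data are all hypothetical. A real tool would also have to read the actual file formats and the station metadata.

```python
def adjustment_series(raw, adjusted):
    """Adjusted minus raw for every year present in both records."""
    return {yr: adjusted[yr] - raw[yr] for yr in sorted(raw) if yr in adjusted}


def flag_large_adjustments(stations, threshold_c):
    """Station ids whose adjustment spans more than threshold_c (max minus min)."""
    flagged = []
    for station_id, (raw, adjusted) in stations.items():
        adj = adjustment_series(raw, adjusted)
        if adj and max(adj.values()) - min(adj.values()) > threshold_c:
            flagged.append(station_id)
    return flagged


# Made-up records for two hypothetical stations: (raw, adjusted).
stations = {
    "A": ({1950: 20.0, 1990: 20.1}, {1950: 20.0, 1990: 20.1}),
    "B": ({1950: 28.0, 1990: 27.8}, {1950: 27.0, 1990: 28.5}),
}
flagged = flag_large_adjustments(stations, threshold_c=1.0)
```

Station A is untouched and passes; station B's adjustment runs from -1.0C to +0.7C, so it is flagged for a closer look.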

Interesting times, indeed. . . .

On the CRU curve, I really don’t understand what I see. Well, I think the black line is the raw data, since you mention that under the graph. But the red and blue shaded area? Model predictions? Or?

Willis,

Where was station zero located?

I am reasonably familiar with Darwin and might know where the station was located.

However, I cannot think of anything that would cause them to be 0.6C high for such a long period of time and with what looks like a gradually declining trend from 1880 to 1940.

Are you just trying to hide the decline? 🙂

While that large jump in 1941 looks suspicious I think it was later in the war that the Japanese bombed Darwin (would have to look it up, but as I recall, Pearl Harbor was late in ’41 wasn’t it. Something like December 7, 1941. While the RAAF was in the region, but probably largely operating from Tyndall, the US military presence in Darwin did not commence, I believe, until the US entered the war.) So, I suspect that the bombing did not cause the problems.

Mr. Eschenbach:

I appreciate your effort in this matter. Your post has been shared with a person who gives great authority to the existing ‘academic community’. That person has dismissed your findings as opinion and unsupported personal conjecture that the process is broken.

Part of our discussion has hinged on your statement “So I looked at the GHCN dataset.” While acknowledging that the blog venue doesn’t require the same level of source citation as a peer-reviewed journal, your sources have been questioned.

Could you provide a more detailed reference/link to the GHCN data in question [both raw and adjusted]? Thanks and regards.

Good grief. Enough with the unmitigated speculation and hyperbole.

Willis – excellent analysis, but you go a bridge too far with your conclusions.

“Yikes again, double yikes! What on earth justifies that adjustment? How can they do that? … Why adjust them at all?”

Those are very good questions. Making claims requires answers.

“They’ve just added a huge artificial totally imaginary trend to the last half of the raw data!”

You don’t know that. You should not claim to know that which you do not. That is Teamspeak, leave it to the Team.

You have turned up what we already knew – that the alleged ‘global warming’ trend is a function of the adjustments applied to the raw data (or, as in the case of UHI and similar effects, not applied) as much or moreso than the raw data. That is worrying, but not necessarily illegitimate.

The example that you have done an excellent job of laying out here demonstrates that those who are making these adjustments need to have very good explanations for why they did so. Having stuck your neck out and called them dishonest, you had better pray that they don’t have good explanations for those adjustments. If they do, the best that is going to happen is that you, and by the broad brush we, are going to be made to look like a bunch of biased, ranting fools.

Perhaps you should stick to pointing out worrying potential issues (again, good job of that) and save the claims of gross incompetence and malfeasance for after the questions you raise have been answered.

JJ

Willis,

Very very nice. Not much else to say ‘cept wow.

Breathtaking! Clear and precise. Yikes and Double Yikes indeed!

Could somebody help me out here? In The Times (http://www.timesonline.co.uk/tol/news/environment/article6936328.ece), we have the claim that CRU’s data was destroyed:

‘In a statement on its website, the CRU said: “We do not hold the original raw data but only the value-added (quality controlled and homogenised) data.”

The CRU is the world’s leading centre for reconstructing past climate and temperatures. Climate change sceptics have long been keen to examine exactly how its data were compiled. That is now impossible. ‘

However, over on RealClimate you’ve got Gavin Schmidt making claims like this:

[Response: Lots of things get lost over time, but no data has been deleted or destroyed in any real sense. All of the raw data is curated by the relevant Met. Services. – gavin]

[Response: No. If that was done it would be heinous, but it wasn’t. The original data rests with the met services that provided it. – gavin]

So which is it? Does the data exist somewhere or not? If it does then I don’t even understand why CRU even made such a dramatic announcement. Why didn’t they just say what Gavin Schmidt says above?

But on the other hand, reading this article it almost seems like the author DOES have access to raw data (and normalized data) which he used to calculate the Darwin adjustments. Is this the same data that CRU started with? If so, why not use this data to start with to reproduce CRU results? If the data does exist in some format I don’t see why there is any controversy at all.

Seems like somebody is being disingenuous but not sure who. This is something I find incredibly frustrating about this issue. The scientific issues are understandably opaque and subject to debate. That’s hard enough to get to the bottom of. But even simple things like, ‘Was the data destroyed or not?’ are subject to so much spin it’s nearly impossible for somebody who’s trying to be objective to sort it all out.

Here’s a somewhat related post that I did on an Australian station a couple of years ago http://www.climateaudit.org/?p=1489

Given the amount of money they are talking about just for “Cap-n-Tax” (not to mention the IPCC money), this distortion is criminal.

Given the thoroughness of Willis Eschenbach’s methodology in tracing the Darwin temperature record, and what looks like a successful survey of US surface temperature stations (www.surfacestations.org), maybe a similar effort could be developed to audit these three main temperature databases. Establish a single method/process for review of the record, with required data formats, etc., publish a manual and let the globe have at it.

Interesting:

From: Phil Jones

To: Kevin Trenberth

Subject: One small thing

Date: Mon Jul 11 13:36:14 2005

Kevin,

In the caption to Fig 3.6.2, can you change 1882-2004 to 1866-2004 and

add a reference to Konnen (with umlaut over the o) et al. (1998). Reference

is in the list. Dennis must have picked up the MSLP file from our web site,

that has the early pre-1882 data in. These are fine as from 1869 they are Darwin,

with the few missing months (and 1866-68) infilled by regression with Jakarta.

This regression is very good (r>0.8). Much better than the infilling of Tahiti, which

is said in the text to be less reliable before 1935, which I agree with.

Cheers

Phil

Prof. Phil Jones

Climatic Research Unit Telephone +44 (0) 1603 592090

School of Environmental Sciences Fax +44 (0) 1603 507784

University of East Anglia

Norwich Email p.jones@xxxxxxxxx.xxx

NR4 7TJ

UK

Wonderful text.

Minor typos :

– figure 4, legend : bad copy-and-paste of the legend of figure 3 (remove the reference to the year 2000) ;

– the right Latin saying is “Falsus in uno, falsus in omnibus”.

kwik (08:37:09) :

“On the CRU curve, I really don’t understand what I see. Well, I think the black line is the raw data, since you mention that under the graph. But the red and blue shaded area? Model predictions? Or?”

First, the black line in Figure 1 is labeled “observations”; however, that is not the raw observation. That is the observation after adjustment.

Second, from what I understand the red area is what the model says the temp should be with CO2 forcing. The blue area is what the model says the temp should be without CO2 forcing.

Now when I looked at Fig 1 and Fig 2, to my Mark 1 eyeball the raw data looks like it correlates closely with what the models say the temp would be WITHOUT CO2 forcing (blue shaded area).

Earlier I asked if Willis had laid the raw data over the graph in Fig 1 to see if it does correspond with the blue area. The reason being that if the IPCC’s own models without CO2 forcing match the raw data and Willis’ reconstruction, that in turn gives credence to the idea that people are adjusting the raw data to match the models’ red area, or in other words why you get that huge adjustment.

(Also : 1850 instead of 1650 – second line after the photograph of the Darwin airport.)

Billy (08:49:21),

The obvious answer: Produce the raw data. The hand-written, signed and dated B-91 forms recording the daily temps at each surface station would be a good start.

JJ (08:48:13):

“…the alleged ‘global warming’ trend is a function of the adjustments applied to the raw data (or, as in the case of UHI and similar effects, not applied) as much or moreso than the raw data. That is worrying, but not necessarily illegitimate.”

What smacks of illegitimacy is the fact that when the data is massaged, it almost always shows warming: click1, click2, click3.

For the true global temperature, a record of temperatures from rural sites uncontaminated by UHI would show little if any global warming: click1, click2 [blink gif – takes a few seconds to load].

The CRU, the IPCC, the NOAA and the rest of the government funded sciences offices are trying to show an alarming increase in global temperatures. They almost always show a y-axis in tenths of a degree to exaggerate any minor fluctuations. But by using a chart with a less scary y-axis, we can see that nothing unusual is occurring: click

I think we (the internet community) can end this debate once and for all … Using the stations cherry picked by the IPCC we could set up station teams via internet volunteers to review the raw vs “value added” GHCN data and validate those adjustments … where an adjustment appears to have been applied without good reason the team should attempt to do their own adjustment based on logical and justifiable reasoning …

This should allow the world to have a verified record set of actual temp measurement for at least the last 100 years … we don’t have that now …

step one would be to classify station location for appropriateness … bad sites would be marked for adjustment or exclusion … adjustments should never be averages they should be delta adjustments based on nearby reliable (i.e. non bad) sites …

An Army of Davids so to speak …

No reason this can’t happen within a year or two if someone can coordinate it …

Set up clearly defined rules on site validation …

Set up clearly defined adjustment methods to measure the warmists’ “value added” against …

Use those adjustment methods to re-adjust the egregious site adjustments …

Create a peer review process to allow a second, third and fourth set of eyes to validate the work done by the team …

Allow anyone to join a team … anyone … Warmists are Welcome 🙂

Team decisions should be a super majority i.e. >66%

“Some of these data are the original underlying observations and some are observations adjusted to account for non climatic influences, for example changes in observations methods.” – PeterS quoting the Met Office

And with no visibility whatsoever of what those “adjustments” were, despite knowing full well (or because they know?) that therein lies the principal suspicions of dodgy data manipulation.

Hm. I wonder if the Met Office even *have* records of how those adjustments were done? Or whether some of them were done with long-lost bits of code on long-dead computers and what they actually have is an archive of raw data A, an archive of adjusted data B and an embarrassing lack of repeatability of how they got from A to B in the first place. Maybe they’re looking at the likes of Darwin and going [snip] too – except they can’t and won’t admit they have a problem.

Or was that what ‘Harry’ was trying to do? Trying to replicate past adjustments in putting together the adjusted database? And did he ever “succeed”?

At what point do we declare that these temperature records and proxies have too little confidence to be of any use in determining if AGW exists?

Would a going forward position be to use a well understood set of measurements and monitor changes for the next X years and see which models (hypotheses) are working. It seems Copenhagen may fail and that the “catastrophe” hypothesis is dying under the weight of Climategate — so why not?

I think what is missing from the current “coverage” so-called is this kind of analysis to beat back the claims that the science underpinning (I would love to use the word undermining) the CRUgate emails.

What I typically see is a talking head who interviews a warmist, and the talking points are driven by the warmist – again, no debate.

1) Emails were hacked

2) The emails and specific comments have been taken out of context

3) The science is sound

Fine work as usual, Willis.

******

8 12 2009

JP (04:46:12) :

This subject was covered in a CA thread some years ago. I believe it came up when someone discovered the TOBS adjustment that NOAA began using. The TOBS adjusted the 1930s down, but the 1990s up. Someone calculated that the TOBS accounted for 25-30% of the rise in global temps.

******

The TOBS issue was the first thing I thought, too, to explain the massive adjustments. They seem to use this as a catch-all adjustment because by nature the correction can be quite large in some specific instances (in both directions). The metadata to confirm this perhaps isn’t available — I don’t know.