Guest Post by Willis Eschenbach

The Inter-Tropical Convergence Zone from space

A while ago I started studying the question of the amplification of the tropical tropospheric temperature with respect to the surface. After months of research bore fruit, I started writing a paper. My intention was to have it published in a peer-reviewed journal. I finished the writing about a week ago.

During that time, I also wrote and published The Temperature Hypothesis here on WUWT. This got me to thinking about science, and about how we establish scientific facts. In climate science, the peer review process is badly broken. Among other problems, it is often an “old boy” system where very poor work is waved through. In common with other sciences, turnaround of ideas in journals takes months. Under pressure to publish, journals often do only the most cursory examination of the papers.

Upon reflection, I have decided to try a different way to examine the truth content of my paper. This is to invite all of the authors whose work I discuss, and other interested scientists of all stripes, to comment on the paper and on whether they can find any flaws in it. To facilitate the process I have provided all of the code and data that I used to do the analysis.

To make this process work will require cooperation. First, I ask for science and science only. No discussions of motives. No ad homs. No generalizations to larger spheres. No asides. No disrespect, we can be gentlemen and gentlewomen. No comments on politics, CO2, or AGW, no snowball earth. This thread has one purpose only, to establish whether my ideas stand: to either attack and destroy the ideas I put forth in the paper below, or to provide evidence and data to support the ideas I put forth below.

People think science is a cooperative endeavor. It is not. It is a war of ideas. An idea is put out, and scientists gather round to attack it and disprove it. Sometimes, other scientists may support and defend it. The more fierce the attack, the better … because if it can withstand the strongest attack, it is more likely true. When your worst scientific enemies and greatest disbelievers can’t show that you are wrong, your ideas are taken as scientific fact. (Until your ideas in turn are perhaps overthrown). Science is a blood sport, but all of the attack and parry is historically done in private. I propose to bring it out in public, and I offer my contribution below as the first victim.

Second, I will insist on a friendly, appreciative attitude towards the contributions of others. We are interested in working together to advance our primitive knowledge of how the climate works. We are doing that by trying to tear my ideas down, to disprove them, to find errors in them. To make this work we must do this with respect for the people involved and the ideas they put forward. You don’t have to smash the guy to smash the idea; in fact, it reduces your odds.

Third, no anonymous posting, please. If you think your ideas are scientifically valid, please put your name on them.

With that in mind, I’d like to invite any and all of the following authors whose work I discuss below, to comment on and/or tear to shreds this study.

J. S. Boyle, J. R. Christy, W. D. Collins, K. W. Dixon, D. H. Douglass, C. Doutriaux, M. Free, Q. Fu, P. J. Gleckler, L. Haimberger, J. E. Hansen, G. S. Jones, P. D. Jones, T. R. Karl, S. A. Klein, J. R. Lanzante, C. Mears, G. A. Meehl, D. Nychka, B. D. Pearson, V. Ramaswamy, R. Ruedy, G. Russell, B. D. Santer, G. A. Schmidt, D. J. Seidel, S. C. Sherwood, S. F. Singer, S. Solomon, K. E. Taylor, P. W. Thorne, M. F. Wehner, F. J. Wentz, and T. M. L. Wigley

(Man, all those 34 scientists on that side … and on this side … me. I’d better attack quick, while I have them outnumbered … )

I also invite anyone who has evidence, logic, theory, or data to disprove or to support my analysis to please contribute to the thread. Because at the end of this process, where I have exposed my ideas and the data and code to the attacks of anyone and everyone who can find flaws with them, I will have my own answer. If no one is able to disprove or find flaws in my analysis, I will consider it to be established science until someone can do so. Whether you consider it established science is up to you. However, it is certainly a more rigorous process than peer-review, and anyone who disagrees has had an opportunity to do so.

I see something of this nature, a web-based process, as the future of science. We need a place where scientific ideas can be brought up, discussed and debated by experts from all over the world, and either shot down or provisionally accepted in real time. Consider this an early experiment in that regard.

Three months to comment on a Journal paper is so 20th century. I’m amazed that the journals haven’t done something akin to this on the web, with various restrictions on reviewers and participants. Nothing sells journals like blood, and scientific blood is no different from any other.

So without further ado, here is my paper. Tear it apart or back it up, enjoy, ask questions, that’s science.

My best to everyone.

w.

A New Amplification Metric

ABSTRACT: A new method is proposed for exploring the amplification of the tropical tropospheric temperature with respect to the surface. The method looks at the change in amplification with the length of the record. The method is used to reveal the similarities and differences between the HadAT2 balloon, UAH MSU satellite, RSS MSU satellite, RATPAC balloon, AMSU satellite, NCEP Reanalysis, and five climate model datasets. In general, the observational datasets (HadAT2, RATPAC, and satellite datasets) agree with each other. The climate model and reanalysis datasets disagree with the observations. They also disagree with each other, with no two being alike.

Background

“Amplification” is the term used for the general observation that in the tropics, the tropospheric temperatures at altitude tend to vary more than the surface temperature. If the surface temperature goes up by a degree, the tropical temperature aloft often goes up by more than a degree. If surface and tropospheric temperatures were to vary by exactly the same amount, the amplification would be 1.0. If the troposphere varies more than the surface, the amplification will be greater than one, and vice versa.

There are only a limited number of observational datasets of tropospheric temperatures. These include the HadAT2 and RATPAC weather balloon datasets, and two versions of the Microwave Sounding Unit (MSU) satellite data. At present the satellite record is about thirty years long, and the two balloon datasets are about 50 years in length.

Recently there has been much discussion of the Santer et al. 2005, Douglass et al. 2007, and Santer et al. 2008 papers on tropical tropospheric amplification. The issue involved is posed by Santer et al. 2005 in their abstract:

The month-to-month variability of tropical temperatures is larger in the troposphere than at the Earth’s surface. This amplification behavior is similar in a range of observations and climate model simulations, and is consistent with basic theory. On multi-decadal timescales, tropospheric amplification of surface warming is a robust feature of model simulations, but occurs in only one observational dataset [the RSS dataset]. Other observations show weak or even negative amplification. These results suggest that either different physical mechanisms control amplification processes on monthly and decadal timescales, and models fail to capture such behavior, or (more plausibly) that residual errors in several observational datasets used here affect their representation of long-term trends.

Metrics of Amplification

Santer 2005 utilizes two different metrics of amplification, viz:

We examine two different amplification metrics: RS(z), the ratio between the temporal standard deviations of monthly-mean tropospheric and TS anomalies, and Rβ(z), the ratio between the multi-decadal trends in these quantities.

Neither of these metrics, however, measures the amount of the amplification at altitude which is related to the surface variations. Ratios of standard deviations merely measure the size of the swings. They cannot indicate whether one is an amplified version of the other. The same is true of trend ratios. All they can show is the size of the difference, not whether the surface and atmosphere are actually correlated.

In order to measure whether one dataset is an amplified version of another, the simplest measure is the slope of the ordinary least squares regression line. This measures how much one temperature varies in relation to another.
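The distinction between a ratio of standard deviations and a regression slope can be illustrated with a minimal Python sketch. It is not the R code from the SOM; the function names and the synthetic series are assumptions made purely for illustration. It compares a troposphere series that really is an amplified copy of the surface with one that has the same size of swings but is unrelated to the surface.

```python
import numpy as np

def std_ratio(surface, aloft):
    """Ratio of temporal standard deviations (size of the swings only)."""
    return float(np.std(aloft, ddof=1) / np.std(surface, ddof=1))

def ols_amplification(surface, aloft):
    """Slope of the ordinary least squares line of `aloft` regressed on `surface`."""
    return float(np.polyfit(surface, aloft, 1)[0])

# Synthetic anomalies, NOT real data: 600 "months" of random surface values.
rng = np.random.default_rng(0)
surface = rng.normal(size=600)

# Case 1: the series aloft is the surface amplified by 1.5, plus small noise.
amplified = 1.5 * surface + 0.1 * rng.normal(size=600)

# Case 2: the series aloft swings 1.5 times as much but is unrelated to the surface.
unrelated = 1.5 * rng.normal(size=600)

for name, aloft in [("amplified", amplified), ("unrelated", unrelated)]:
    print(name,
          "std ratio:", round(std_ratio(surface, aloft), 2),
          "OLS slope:", round(ols_amplification(surface, aloft), 2))
```

In both cases the ratio of standard deviations comes out near 1.5, while the regression slope is near 1.5 only for the series that actually follows the surface. For the unrelated series the slope is near zero, because the OLS slope equals the correlation multiplied by the ratio of standard deviations.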

Despite a variety of searches, I was unable to find published studies showing that the “amplification behavior is similar in a range of observations and climate model simulations” as Santer et al. 2005 states. To investigate whether the tropical amplification is “robust” at various timescales, I decided to calculate the tropical and global amplification (average slope of the regression line) at all time scales between three months and thirty years or more for a variety of temperature datasets. I started with the UAH and the RSS versions of the satellite record. Next I looked at the HadAT2 balloon (radiosonde) dataset. The results are shown in Figure 1.

To measure the amplification, for every time interval (e.g. 5 months) I calculated the amplification of all contiguous 5-month periods in the entire dataset. In other words, I exhaustively sub-sampled the full record for all possible contiguous 5-month periods. I took the average of the results for each time period. Details of the method are given in the Supplementary Online Material (SOM) Sections 2 & 3 below.
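The exhaustive sub-sampling described above can be sketched in Python as follows. This is a hedged illustration rather than the R function from the SOM: the names `ols_slope`, `mean_window_amplification`, and `amplification_curve` are hypothetical, and the sketch assumes that amplification within each window is the slope of the tropospheric anomalies regressed on the surface anomalies, with both series aligned and free of gaps.

```python
import numpy as np

def ols_slope(x, y):
    """Slope of the least-squares line of y on x (x must not be constant)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    dx = x - x.mean()
    return float(np.dot(dx, y - y.mean()) / np.dot(dx, dx))

def mean_window_amplification(surface, aloft, window):
    """Average slope over every contiguous `window`-long stretch of the record."""
    surface = np.asarray(surface, dtype=float)
    aloft = np.asarray(aloft, dtype=float)
    if len(surface) != len(aloft):
        raise ValueError("series must have the same length")
    if not 3 <= window <= len(surface):
        raise ValueError("window must be between 3 and the record length")
    slopes = [
        ols_slope(surface[start:start + window], aloft[start:start + window])
        for start in range(len(surface) - window + 1)
    ]
    return float(np.mean(slopes))

def amplification_curve(surface, aloft, min_window=3):
    """Mean amplification for each window length from `min_window` to the full record."""
    return {
        window: mean_window_amplification(surface, aloft, window)
        for window in range(min_window, len(surface) + 1)
    }
```

For a record of n months and a window of w months there are n - w + 1 contiguous windows, so the longest timescale is represented by a single slope while the shortest is an average over nearly n of them. The loop is quadratic in the record length, which is adequate for monthly records of a few hundred points.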

I plotted the results as a curve which shows the average amplification for each of the various time periods from three months to thirty years (the length of the MSU datasets). This shows the “temporal evolution” of amplification, how it changes as we look at longer and longer timescales. I show the results at a variety of pressure levels in Figure 1. In general, at all pressure levels, short term (3 – 48 month) amplifications are much smaller than longer term amplifications.

Figure 1. Change of amplification with length of observation. Left column is amplification in the tropics (20N/S), and the right column is global amplification. T2 and TMT are middle troposphere measurements. T2LT and TLT are lower troposphere. Starting date is January 1979. Shortest period shown is amplification over three months. Effective weighted altitudes (from the RSS weighting curves) are about 450 hPa for the lower altitude TLT (~ 6.5 km) and 350 hPa (~ 8 km) for the higher altitude TMT.

Tropical Observations 1979-2008

Figure 1(a). UAH and RSS satellite data. This was the first analysis done. It confirmed the sensitivity of this temporal evolution method, as it shows a clear difference between the two versions (RSS and UAH) of the MSU satellite data. Both of the datasets (UAH and RSS) are quite close in the first half of the record. They diverge in the second half.

The higher and lower altitude amplifications are very similar in both the RSS and the UAH versions. The oddity in Fig. 1(a) is that I had expected the amplification at the higher altitude (T2 and TMT) to be larger than at the lower altitude (T2LT and TLT). Instead, the higher altitude record had lower amplification. This suggests a strong stratospheric influence on the T2 and TMT datasets. Because of this, I have used only the lower altitude T2LT (UAH) and TLT (RSS) datasets in the remainder of this analysis.

Figure 1(b). HadAT2 radiosonde data. (Note the difference in vertical scale.) Despite the widely discussed data problems with the radiosonde data, this shows a clear picture of amplification increasing with altitude to 200 hPa, and decreasing above that. It confirms the existence of the tropical tropospheric “hot spot”, where the amplification is large. It conforms with the result expected from theoretical consideration of the effect of lapse rate. It also shows remarkable internal consistency. The amplification increases steadily with altitude up to 200 hPa, and decreases steadily with altitude above that. Note that the 1998 El Nino is visible as a “bump” in the records at about month 240. It is also visible in the satellite record, in Fig. 1(a).

Figure 1(c). HadAT2, overlaid with MSU satellite data. Same vertical scale as (a). The satellite and balloon data all agree in the first half of the record. In the latter half, the fit is much better for the UAH satellite data analysis than the corresponding RSS analysis. Note that the amplification of the UAH version is a good fit with the 500 hPa level of the HadAT2 data. This agrees with the theoretical effective weighted altitude of the T2LT measurement.

Global Observations 1979-2008

Figure 1(d). Global UAH and RSS satellite data. Note difference in vertical scale. There is little amplification at the global level.

Again, the UAH and RSS records are similar in the short term but not the long term.

Figure 1(e). Global HadAT2 radiosonde data. Note difference in vertical scale. Here we see that the amplification is clearly a tropical phenomenon. We do not see significant amplification at any level.

Figure 1(f). Global HadAT2, overlaid with MSU satellite data. Same vertical scale as (d). Once again, the satellite and balloon data all agree in the first half of the record. In the latter half, again the UAH analysis generally agrees with the observations. And again, the RSS version is a clear outlier.

Both in the tropics and globally, the amplifications of the levels above 850 hPa start low. After that they show a slow increase. The greatest amplification occurs at 8-10 years. After that, they all (except RSS) show a gradual decrease up to the 30-year end of the record.

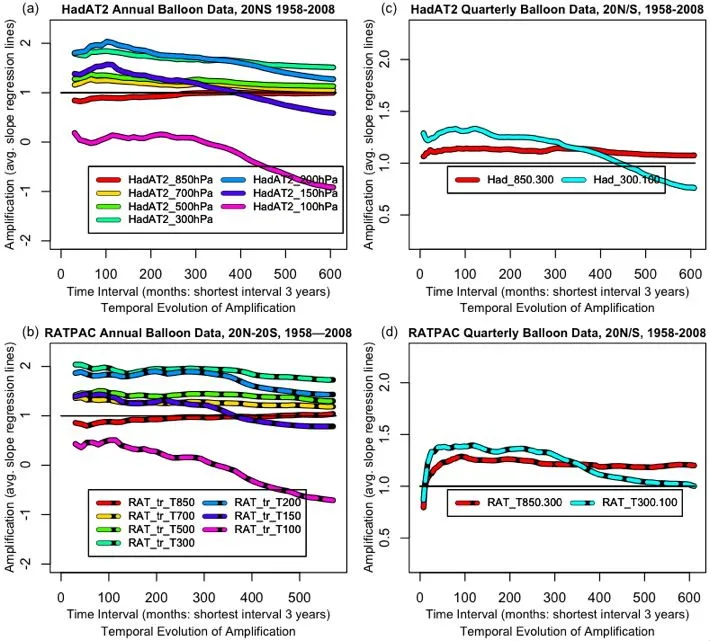

Having seen the agreement between the UAH T2LT and the HadAT2 datasets, I next compared the tropical RATPAC data with the HadAT2 data. The RATPAC data is annual and quarterly. I averaged the HadAT2 data annually and quarterly to match. They are shown in Fig. 2. Note that these are fifty-year datasets, a much longer timespan than Fig. 1.

Figure 2. RATPAC and HadAT2 Tropical Amplification, 3 years to 50 years. Left column is annual data. Right column is quarterly data.

There is very close agreement between the HadAT2 and the RATPAC datasets. The annual version shows a number of levels of pressure altitude. The quarterly version averages the troposphere down into two levels, one from 850-300 hPa, and one from 300-100 hPa. Both annual and quarterly RATPAC versions agree well with HadAT2.

Before going further, let me draw some conclusions about tropical amplification from Figs. 1 & 2.

1. Three of the four observational datasets (HadAT2, RATPAC, and UAH MSU) are in surprisingly close agreement. The fourth, the RSS MSU dataset, is a clear outlier. The very close correspondence between HadAT2 and RATPAC at all levels gives increased confidence that the observations are dependable. This is reinforced by the good agreement in Figs. 1(c) and 1(f) between the UAH MSU and the HadAT2 500 hPa level amplifications, both in the tropics and globally.

2. Figure 1(c) clearly shows the theoretically predicted “tropical hot spot”. It is most pronounced at 200 hPa at about 8-10 years. At its peak the 200 hPa level has an amplification of about 2. However, this gradually decays over time to a long-term (fifty year) amplification of about 1.5.

3. The lowest level, 850 hPa, has a short-term amplification of just under 1. It gradually increases over time to an amplification of about 1. It varies very little with the length of observations. RATPAC and HadAT2 are in excellent agreement regarding the amplification at the 850 hPa level.

4. The amplifications of the next two levels (700 and 500 hPa) are quite similar. The higher level (500 hPa) has slightly greater amplification than the lower. Again, both datasets (RATPAC and HadAT2) agree very closely. This is supported by the UAH MSU satellite data, which agrees with the 500 hPa level of both the other datasets.

5. The amplifications of the 300 and 200 hPa levels are also quite similar. The amplification of the higher level (200 hPa) exceeds that of the next lower level in the early part of the record. However, the 200 hPa amplification decreases over time more than that of the lower level (300 hPa). This accelerated long-term decay in amplification is also seen in the 150 and 100 hPa levels.

6. Between 700 and 200 hPa, amplification rises to a peak at around 8-10 years, and declines after that.

Observations and Models

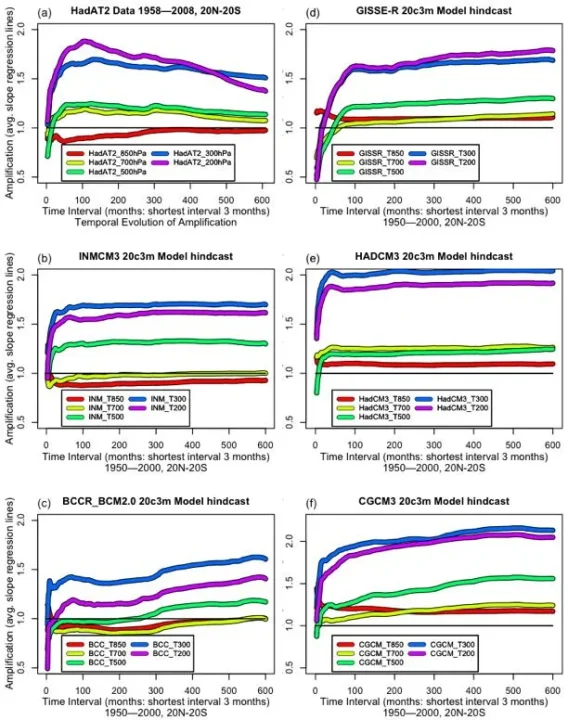

Because it is the most detailed of the observational records, I will take the HadAT2 as a comparison standard. Fig. 3(a) shows the full length (50 year) HadAT2 record. Figures 3(b) to (f) show the outputs from five climate models. These models were not chosen by any particular criterion. They are simply the first five models I found to investigate.

In Fig. 3(a) the decline in the observed amplification at the 200 hPa level seen in the shorter 30 year record in Fig. 1(c) continues unabated to the end of the 50 year record. The 200 hPa amplification crosses over the 300 hPa level and keeps declining. The models in Fig. 3(b-f), however, show something completely different.

Figure 3. Three month to fifty year amplification for HadAT2 and for the output of various computer models.

The model results shown in Figs. 3(b) to (f) were quite unexpected. It was not a surprise that the models disagreed with observations. It was a surprise that the model results varied so widely among themselves. The atmospheric amplifications at the various pressure levels are very different in each of the models.

In the observations, the greatest amplification is at 200 hPa at around eight to ten years. Only one of the models, the GISSE-R, Fig. 3(d), reproduces that slow buildup of amplification. Unfortunately, the model amplification continues to increase from there on to the end of the record, the opposite of the observations.

The observed amplification at all levels except 850 hPa peaks at 8-10 years and then decreases over time. This again is opposite to the models. In every model, amplification at all levels above 850 hPa either stays level or increases over time.

In the observations, amplification increases steadily with altitude from 850 hPa to 200 hPa. Not a single model showed that result. All of the models examined showed one or more inversions, instead of the expected steady increase in amplification with altitude shown in the observations.

The 850 hPa observations start slightly below 1, and gradually increase to 1. Only one model, BCCR (c), correctly reproduced this lowest level.

Variability of Observations and Models

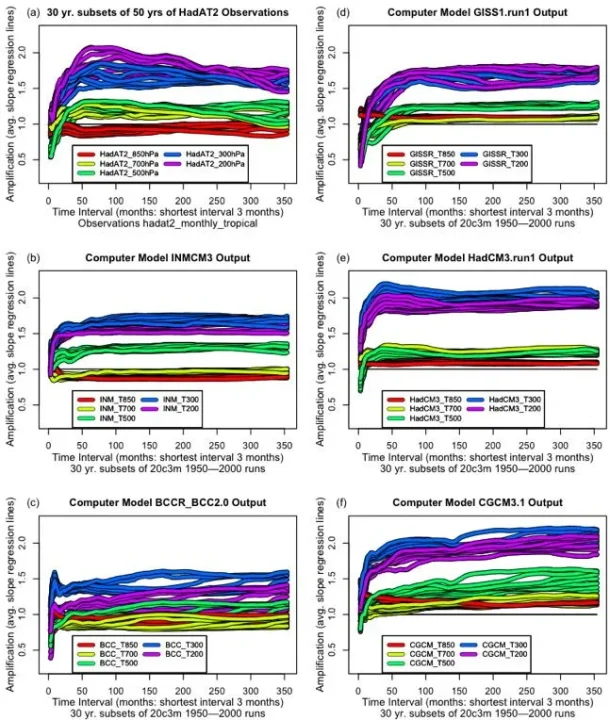

To investigate the natural variability in the amplification of both observations and models, I looked at thirty-year subsets of the various 50-year datasets. Figure 4 shows the amplification of thirty-year subsets of the datasets and model output. This shows how much variability there is in thirty year records. I show subsets taken at 32 month intervals, with the earliest ones at the back of the stack.
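The layout of the overlapping subsets can be sketched as below. This is a minimal illustration under stated assumptions: the record is taken to be exactly 600 monthly values (50 years), each subset 360 months (30 years), and the function name `subset_starts` is hypothetical. The actual record lengths in the datasets may differ slightly.

```python
def subset_starts(record_months, subset_months, step_months):
    """Start indices of every full-length subset, spaced `step_months` apart."""
    if subset_months > record_months:
        raise ValueError("subset is longer than the record")
    if step_months < 1:
        raise ValueError("step must be at least one month")
    last_start = record_months - subset_months
    return list(range(0, last_start + 1, step_months))

# Assumed lengths: 50-year record, 30-year subsets, 32-month spacing.
starts = subset_starts(record_months=600, subset_months=360, step_months=32)
for start in starts:
    # Each subset covers months [start, start + 360) of the full record.
    print(start, start + 360)
```

Under those assumed lengths the starts run from month 0 to month 224, giving eight subsets. Consecutive subsets share 328 of their 360 months, so they are far from independent samples.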

Once again, there are some conclusions that can be drawn from first looking at the observations, which are shown in Fig 4 (a).

1. The relationship between the various layers is maintained in all of the subsets. The lowest level (850 hPa) is always at the bottom. It is invariably below (smaller amplification than) the rest of the levels up to 200 hPa. The 700/500 hPa pair are always very close, with the higher almost always having the greater amplification. The 200 hPa level is above the 300 hPa level for all of the early part of the record. This order, with amplification steadily increasing with altitude up to 200 hPa, holds true for every one of the thirty-year subsets of the observations.

2. The 700 hPa amplification is never less than the 850 hPa amplification. As one goes lower, so does the other. They cross only at the short end of the time scale.

3. At all but the 850 hPa level, amplification peaks at somewhere around 8-10 years, and subsequently generally declines from that peak.

4. The amplification at 200 hPa is much larger than at 300 hPa at short (decade) timescales, but decreases faster than the 300 hPa amplification.

5. There is a surprising amount of variation in these thirty-year overlapping subsets. This implies that the satellite record is still too short to provide more than a snapshot of the variation in amplification.

Figure 4. Evolution of amplification in thirty year subsets of fifty-year datasets. The interval between subsets is 32 months. Fig. 4(a) is observations (HadAT2). The rest are model hindcasts.

The most obvious difference between the models and the observations is that most of the models have much less variability than the observations.

The next apparent difference is that the amplification in the models trends level or upwards with time, while the observed amplifications generally trend downwards.

Next, the pairings of levels are different. The only model which has the same pairings as the observations (700/500 and 300/200 hPa) is the HadCM3 model. And even in that model, both pairs are reversed from the observations. The other models have 700 hPa paired with (and often below) the 850 hPa level.

There is a final oddity in the model results. The short term (say four year) amplification at 200 hPa is very different in the various models. But at thirty years the models converge to a range of around 1.5 to 1.9 or so. This has led to a mistaken idea that the models reveal a “robust” long term amplification.

DISCUSSION

Having examined the changes in amplification over time for both observations and models, let us return to re-examine, one by one, the issues involved as stated at the beginning:

The month-to-month variability of tropical temperatures is larger in the troposphere than at the Earth’s surface.

This is clearly true. There is an obvious tropical “hot spot” of high amplification in the upper troposphere. It peaks at a pressure altitude of 200 hPa at about 8-10 years.

This amplification behavior is similar in a range of observations and climate model simulations, and is consistent with basic theory.

This amplification behavior is in fact similar in a range of observations. However, it is very dissimilar in a range of climate model simulations. While the observations are consistent with basic theory (amplification increasing with altitude up to 200 hPa), the climate models are inconsistent with that basic theory (higher levels often have lower amplification than lower levels).

On multi-decadal timescales, tropospheric amplification of surface warming is a robust feature of model simulations, but occurs in only one observational dataset [the RSS dataset].

There are no “robust” features of amplification in the model simulations. They have very little in common. They are all very different from each other.

Multi-decadal amplification decreases gradually over the 50-year observational record. Three of the observation datasets (UAH, RATPAC, and HadAT2) all agree with each other in this regard. The RSS dataset is the outlier among the observations, staying level over time. This RSS behavior is similar to several of the models, which also stay level over time. One possible explanation of the RSS difference is that I understand it uses computer climate modeling to inform a portion of its analysis of the underlying MSU data.

Other observations show weak or even negative amplification.

None of the tropical observational datasets above 850 hPa show “negative amplification” (amplification less than 1) at timescales longer than a few years. On the other hand, all of the observational datasets show negative amplification at short timescales, as do all of the models.

These results suggest that either different physical mechanisms control amplification processes on monthly and decadal timescales, and models fail to capture such behavior, or (more plausibly) that residual errors in several observational datasets used here affect their representation of long-term trends.

The problem is not that the observations fail to capture the long term trends. It is that every model disagrees with every other model, as well as disagreeing with the observations.

It appears that different physical mechanisms do indeed control the amplification at different timescales. The models match the observations in part of this, in that amplification starts out low and then rises to a peak over a period of years. In most of the models examined to date, however, this happens on a much shorter time scale (months to a few years) compared with observations (8-10 years).

However, the models seem to be missing a longer-term mechanism which leads to a long-term decrease in amplification. I suspect that the problem is related to the steady ratcheting up of the model temperature by CO2, which increases the longer term amplification and leads to upward trends. Whatever the cause may be, however, that behaviour is not seen in the observations, which decrease over time.

Conclusions

1. Temporal evolution of amplification appears to be a sensitive metric of the state of the atmosphere. It shows similar variations in the various balloon and satellite datasets with the exception of the RSS MSU satellite temperature dataset.

2. The wide difference between the individual models was unexpected. It was also surprising that none of them show steadily increasing amplification with altitude up to 200 hPa, as basic theory suggests and observations confirm. Instead, levels are often flipped, with higher levels (below 200 hPa) having less amplification than lower levels.

3. From this, it appears that we have posed the wrong question regarding the comparison of models and data. The question is not why the observations do not show amplification at long timescales. The real question is why model amplification is different, both from observations and from other models, at almost all timescales.

4. Even in scientific disciplines which are well understood, taking the position when models disagree with observations that “more plausibly” the observations are incorrect is adventurous. In climate science, on the other hand, it is downright risky. We do not know enough about either the climate or the models to make that claim.

5. Observationally, amplification varies even at “climate length” time scales. The thirty year subsets of the observations showed large changes over time. Climate is ponderous and never at rest. Clearly there are amplification cycles and/or variations at play with long timescales.

6. While at first blush this analysis only applies to temperatures in the tropical troposphere, it would not be surprising to find this same kind of amplification behavior (different amplification at different timescales) in other natural phenomena. The concept of amplification, for example, is used in “adjusting” temperature records based on nearby stations. However, if the relationship (amplification) between the stations varies based on the time span of the observations, this method could likely be improved upon.

Additional Information

The Supplementary Online Material contains an analysis of amplification in the AMSU satellite dataset and the NCEP Reanalysis dataset. It also contains the data, the data sources, notes on the mathematical methods used, and the R function and R program used to do the analyses and to create the graphics used in this paper.

References

Douglass DH, Christy JR, Pearson BD, Singer SF. 2007. A comparison of tropical temperature trends with model predictions. International Journal of Climatology 27. doi:10.1002/joc.1651.

Santer BD, et al. 2005. Amplification of surface temperature trends and variability in the tropical atmosphere. Science 309: 1551–1556.

Santer BD, et al. 2008. Consistency of modelled and observed temperature trends in the tropical troposphere. International Journal of Climatology 28: 1703–1722.

SUPPLEMENTARY ONLINE MATERIAL

SOM Section 1. Further investigations.

AMSU dataset

A separate dataset from a single AMSU (Advanced Microwave Sounding Unit) on the NOAA-15 satellite is maintained at <http://discover.itsc.uah.edu/amsutemps/>. Although the dataset is short, it has the advantage of not having any splices in the record. The amplification of that dataset is shown in Fig. S-1.

Figure S-1. Short-term Global Amplification of AMSU satellite data, MSU data, and HadAT2 data. The dataset is only ten years long.

Figure S-1(a). AMSU satellite data. This is a curious dataset. The 400 hPa level is a clear and dubious outlier. The distinctive “duck’s head facing right” shape of the second half of the 900 and 600 hPa levels is similar, while that of the 400 hPa level is quite different. It is very doubtful that one level would be that different from the levels above and below it. This was a strong indication of some unknown error with the 400 hPa data that is affecting the longer term amplification.

Figure S-1(b). Adjusted and unadjusted AMSU satellite data. To attempt to correct this error, I added a simple linear trend to the 400 hPa level. I did not adjust it until the amplification was right. Instead, I adjusted it until its long-term trend ended up proportionally between the long-term trends of the layers above and below. This gave the adjusted version (light green) of the amplification.

Figure S-1(c). Adjusted AMSU satellite data. After the adjustment of the 400 hPa trend, the amplifications fit together well. Curiously, despite being adjusted by a linear trend, the shape of the latter half of the 400 hPa level has changed. Now it has the “duck’s head facing right” shape of the 600 and 900 hPa levels. This unexpected change supports the idea that there is indeed an error in the trend of the 400 hPa data.

Figure S-1(d). Interpolated AMSU satellite data. Unfortunately, the AMSU levels are at different pressure altitudes than the HadAT2 levels. To compare the AMSU to HadAT2, we need to interpolate. Fortunately, the HadAT2 dataset range (850 to 200 hPa) fits neatly inside the AMSU range (900 to 150 hPa). This, along with the similar and close nature of the signals at various levels, allows for linear interpolation to give the equivalent AMSU amplification at the HadAT2 levels. The interpolated version is shown.

Figure S-1(e). HadAT2 and RSS/UAH satellite data. The global observational data over this short period (ten years) is scattered. Also, the HadAT2 data has a more jagged and variable shape. We may be seeing the effects of the paucity of the observations. In the short-term (this is ten years and less) the RSS and UAH amplification records are quite similar. As before, both are close to the 500 hPa HadAT2 amplification.

Figure S-1(f). AMSU and HadAT2 data. Close, but not a good match. The 200, 700, and 850 hPa levels match, but 300 and 500 hPa are quite different. Overall, the satellite data seems more reliable. The AMSU data shows a gradual change in amplification with altitude. The HadAT2 data is bunched and sometimes inverted.
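The interpolation used for Figure S-1(d) can be sketched along the following lines. This is an illustrative Python sketch, not the SOM code. It assumes interpolation that is linear in pressure (rather than in log-pressure or height). The AMSU level list includes 250 hPa as a hypothetical placeholder, since only 900, 600, 400, and 150 hPa are named in the text, and the amplification values are synthetic placeholders, not results.

```python
import numpy as np

def interpolate_to_levels(source_hpa, source_amp, target_hpa):
    """Linearly interpolate amplification from source pressure levels to target levels."""
    source_hpa = np.asarray(source_hpa, dtype=float)
    source_amp = np.asarray(source_amp, dtype=float)
    target_hpa = np.asarray(target_hpa, dtype=float)
    # Refuse to extrapolate: the targets must lie inside the source range.
    if target_hpa.min() < source_hpa.min() or target_hpa.max() > source_hpa.max():
        raise ValueError("target levels fall outside the source pressure range")
    # np.interp needs increasing x values, so sort the source levels by pressure.
    order = np.argsort(source_hpa)
    return np.interp(target_hpa, source_hpa[order], source_amp[order])

# Hypothetical AMSU levels (250 hPa is an assumption) and HadAT2 levels from the text.
amsu_hpa = [900, 600, 400, 250, 150]
hadat2_hpa = [850, 700, 500, 300, 200]

# Placeholder amplification at one timescale: a made-up straight line in pressure.
amsu_amp = [2.0 - p / 1000.0 for p in amsu_hpa]

print(interpolate_to_levels(amsu_hpa, amsu_amp, hadat2_hpa))
```

Applying the function once per timescale would give a full interpolated amplification curve at each HadAT2 level. Because the HadAT2 range sits inside the AMSU range, no extrapolation is needed, and the function raises an error if that condition is violated.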

My conclusions from the AMSU dataset are:

1. It contains an error, which appears to be a linear trend error, in the 400 hPa level.

2. Other than that, it is the most internally coherent of the observational datasets.

3. It points up the weakness of using short (one decade) subsets of the HadAT2 dataset.

NCEP REANALYSIS

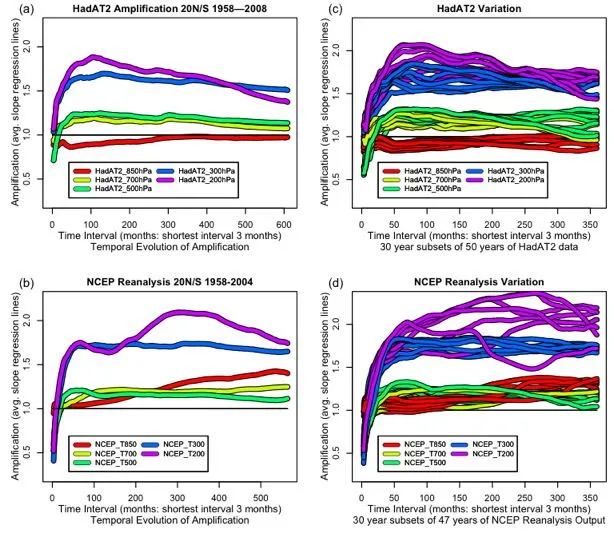

One of the attempts to provide a spatially and temporally complete global dataset despite having limited observational data is the NCEP reanalysis dataset. Figure S-2 compares the temporal evolution of amplification of HadAT2 and NCEP Reanalysis output.

Figure S-2. Evolution of amplification of HadAT2 and NCEP. Left column is amplification from 3 months to 50 years, right column is amplification of 30 year subsets of the 50 year datasets. The interval between the individual realizations in the right column is 32 months.

The NCEP reanalysis data in Fig. S-2(b) shows a fascinating pattern. The three middle levels (700, 500, and 300 hPa) are close to the HadAT2 observations. The 300 hPa levels agree extremely well. And while the 700 and 500 hPa levels are flipped in NCEP, they are still in the right location and are very close to the observed values.

But at the same time, the amplifications of the lowest and highest levels are way off the rails. The 850 hPa amplification starts at 1, and just keeps rising. And the 200 hPa amplification starts out reasonably, but then takes a big jump with a peak around thirty years. That seems doubtful.

The observation that there are problems at the lowest and highest levels is reinforced by the analysis of the variation of thirty year subsets in the right column of Fig. S-2. These show the 200 hPa amplification varying wildly over all of the different 30 year datasets. In one of the thirty year subsets the 200 hPa amplification dips down to almost touch the highest 850 hPa line. There is clearly something wrong with the NCEP output at the 200 hPa level.

In addition, in the full NCEP record shown in Fig. S-2(b) and all of the 30 year subsets shown in Fig. S-2(d), the lowest level (850 hPa) increases steadily over time. After about 20 years it has more amplification than either of the 700 and 500 hPa levels. This behavior is not seen in either the observations or any of the models.

Conclusions from the NCEP reanalysis

1. The 700, 500, and 300 hPa levels of the NCEP reanalysis are accurate. The 850 and 200 hPa levels suffer from large problems of unknown origin.

2. Use of the NCEP reanalysis in other work seems inadvisable until the 850 and 200 hPa amplification problems are resolved.

SOM Section 2. Data Sources

KNMI was the source for much of the data. It has a wide range of monthly data and model results that you can subset in various ways. Start at http://climexp.knmi.nl/selectfield_co2.cgi?someone@somewhere . It contains both Hadley and UAH data, as well as a few model atmospheric results. My thanks to Geert for his excellent site.

Surface data for all observational datasets is from the CruTEM dataset at http://climexp.knmi.nl/data/icrutem3_hadsst2_0-360E_-20-20N_n.dat

UAH data is at http://www.nsstc.uah.edu/data/msu/t2lt/uahncdc.lt

RSS data is at http://www.remss.com/data/msu/monthly_time_series/

HadAT2 balloon data is at http://hadobs.metoffice.com/hadat/hadat2/hadat2_monthly_tropical.txt

CGCM3.1 model atmospheric data is at http://sparc.seos.uvic.ca/data/cgcm3/cgcm3.shtml in the form of a large (250 MB) NetCDF (.nc) file.

Data for all other models is from the “ta” and “tas” datasets from the WCRP CMIP3 multi-model database at <https://esg.llnl.gov:8443/home/publicHomePage.do>

In particular, the datafiles used were:

GISSE-R: ta_A1.GISS1.20C3M.run1.nc, and tas_A1.GISS1.20C3M.run1.nc

HadCM3: ta_A1_1950_Jan_to_1999_Dec.HadCM3.20c3m.run1.nc, and tas_A1.HadCM3.20c3m.run1.nc

BCCR: ta_A1_2.bccr_bcm2.0.nc, and tas_A1_2.bccr_bcm2.0.nc

INMCM3: ta_A1.inmcm3.nc, and tas_A1.inmcm3.nc

As all of these are very large (1/4 to 1/2 a gigabyte) files, I have not included them in the online data. Instead, I have extracted the data of interest and saved this much smaller file with the rest of the online data.

SOM Section 3. Notes on the Function and Code.

The main function that does the calculations and creates the graphics is called “amp”.

The input variables to the function, along with their default values are as follows:

datablock=NA : the input data for the function. The function requires the data to be in matrix form. By default the date is in the first column, the surface data in the second column, and the atmospheric data in any remaining columns. If the data is arranged in this way, no other variables are required. The function can be called as amp(somedata), as all other variables have defaults.

sourcecols=2 : if the surface data is in some column other than #2, specify the column here

datacols=c(3:ncol(datablock)) : this is the default position for the atmospheric data, from column three onwards.

startrow=1 : if you wish to use some start row other than 1, specify it here.

endrow=nrow(datablock) : if you wish to use some end row other than the last datablock row, specify it here.

newplot=TRUE : boolean, “TRUE” indicates that the data will be plotted on a new blank chart

colors=NA : by default, the function gives a rainbow of colors. Specify other colors here as necessary.

plotb=-2 : the value at the bottom of the plot

plott=2 : the value at the top of the plot

periods_per_year=12 : twelve for monthly data, four for quarterly data, one for annual data

plottitle="Temporal Evolution of Amplification" : the value of the plot title

plotsub="Various Data" : the value of the plot subtitle

plotxlab="Time Interval (months)" : label for the x values

plotylab="Amplification" : label for the y values

linewidth=1 : width of the plot lines

linetype="solid" : type of plot lines

drawlegend=TRUE : boolean, whether to draw a legend for the plot

SOM Section 4. Notes on the Method.

An example will serve to demonstrate the method used in the “amp” function. The function calculates the amplification column by column. Suppose we want to calculate the amplification for the following dataset, where “x” is surface temperature, “y” is say T200, and each row is one month:

x y

1 4

4 7

3 9

Taking the “x” value, I create the following 3X3 square matrix, with each succeeding column offset vertically by one row. This probably has some kind of special matrix name I don’t know, and an easy way to calculate it. I do it by brute force in the function:

1 4 3

4 3 NA

3 NA NA

I do the same for the “y” value:

4 7 9

7 9 NA

9 NA NA

I also create the same kind of 3X3 matrix for x times y, and for x squared.

Then I take the cumulative sums of the columns of the four matrices. These are used in the standard least squares trend formula to give a fifth square matrix:

slope of regression line = (N*sum(xy) - sum(x)*sum(y)) / (N*sum(x^2) - sum(x)^2)

I then average the rows to give me the average amplification at each timescale.
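For readers who want to check the arithmetic, here is a minimal Python sketch of the same idea. It is not the R code of the amp function. The name `mean_slope_by_window` is hypothetical, and it uses running (prefix) sums rather than the offset matrices, which should give the same window sums. It also uses a plain average of the slopes at each window length.

```python
def mean_slope_by_window(x, y):
    """Average least-squares slope of y on x over every contiguous window.

    Returns {window_length: mean slope}. Windows whose slope is undefined
    (zero denominator) are skipped.
    """
    n = len(x)
    # Running sums, so any window sum is the difference of two entries.
    sx, sy, sxy, sxx = [0.0], [0.0], [0.0], [0.0]
    for xi, yi in zip(x, y):
        sx.append(sx[-1] + xi)
        sy.append(sy[-1] + yi)
        sxy.append(sxy[-1] + xi * yi)
        sxx.append(sxx[-1] + xi * xi)

    result = {}
    for k in range(2, n + 1):  # a single point has no slope
        slopes = []
        for start in range(n - k + 1):
            end = start + k
            wx = sx[end] - sx[start]
            wy = sy[end] - sy[start]
            wxy = sxy[end] - sxy[start]
            wxx = sxx[end] - sxx[start]
            denom = k * wxx - wx * wx
            if denom != 0:
                # Standard least-squares slope with N = k points.
                slopes.append((k * wxy - wx * wy) / denom)
        if slopes:
            result[k] = sum(slopes) / len(slopes)
    return result


# The three-month example from the text: x is surface, y is T200.
amplification = mean_slope_by_window([1, 4, 3], [4, 7, 9])
```

For the example data the two-month windows give slopes of 1 and -2, so their average is -0.5, and the single three-month window gives 17/14, or about 1.21.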

This method exhaustively samples all contiguous sub-samples of each given length. This means that there will be extensive overlap (samples will not be independent). However, despite the lack of independence, using all available samples improves the accuracy of the method. This can be appreciated by considering a fifty year dataset. With monthly data there are 241 contiguous thirty year subsets of a fifty year dataset (600 - 360 + 1), but if you restrict your analysis to non-overlapping subsets, you can pick only one of them … which way will give the best estimate of the true 30-year amplification?

SOM Section 5. Of Averages and Medians.

The distribution of the short-term amplifications is far from normal. In fact, it is not particularly normal at any scale. This is because the amplification is calculated as the slope of a line, and any slope is the result of a division. When the divisor approaches zero, very large numbers can result. This makes averages (means) inaccurate, particularly at the shorter time scales.
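A small numerical illustration of the divisor problem, as a Python sketch with made-up numbers (the helper name `slope` is hypothetical). When the two surface values are nearly equal, the denominator of the least-squares slope is close to zero and the result becomes very large. A single such value is enough to drag the mean far away from the typical slope, while the median is unaffected.

```python
import statistics


def slope(x, y):
    """Least-squares slope of y on x."""
    n = len(x)
    sx, sy = sum(x), sum(y)
    sxy = sum(a * b for a, b in zip(x, y))
    sxx = sum(a * a for a in x)
    return (n * sxy - sx * sy) / (n * sxx - sx * sx)


# Same change in y, but very different changes in x (illustrative values).
steady = slope([1.0, 2.0], [1.0, 2.5])      # surface changes by 1.0
near_flat = slope([1.0, 1.01], [1.0, 2.5])  # surface changes by only 0.01

# Two ordinary slopes and one inflated by the near-zero divisor.
slopes = [steady, steady, near_flat]
mean_slope = statistics.mean(slopes)
median_slope = statistics.median(slopes)
```

Here the ordinary slope is 1.5 and the near-flat one is about 150, so the mean of the three is about 51 while the median stays at 1.5.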

One alternative is the median. The problem with the median is that it is not a continuous function. This limits its accuracy, particularly in small samples. It also makes for a very ugly stair-step kind of graph.

Frustrated by this, I devised a continuous Gaussian mean function which outperforms the mean for some varieties of datasets, and outperforms the median on other datasets. It is usually in between the mean and median in value. In all datasets I tested, it equals or outperforms either the mean or the median.

To create this Gaussian mean I reasoned as follows. Suppose I have three numbers picked at random from some unknown stable distribution, let’s say they are 1, 2, and 17. What is my best guess as to the actual underlying true center of the distribution?

Since we don’t know the true distribution, the best guess as to its shape has to be a normal distribution. With such a distribution, if we know the standard deviation, we can iteratively calculate the mean.

To do so, we start by calculating the standard deviation, and picking a number for the estimated mean, say 5. If that is the mean, the numbers (1, 2, 17) when measured in standard deviation units are about (-0.4, -0.3, 1.3), using the sample standard deviation of about 9.0. Each of those standard deviations has an associated probability. I figured that the sum of these individual probabilities is proportional to the probability that the mean actually is 5.

I then iteratively adjust the estimated mean to maximize the total probability (the sum of the three individual probabilities). It turns out that the gaussian mean of (1, 2, 17) calculated by my method is 3.8. This compares with an average for the three numbers of 6.7, and a median of 2.
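The procedure can be sketched in Python as follows. This is an illustration under stated assumptions, not the code in the amp function. The name `gaussian_mean` is hypothetical, the sample standard deviation is assumed, and a simple grid search between the smallest and largest value stands in for the iterative adjustment.

```python
import math
import statistics


def gaussian_mean(values, steps=20000):
    """Grid-search the centre m that maximises the summed normal densities."""
    s = statistics.stdev(values)  # sample standard deviation (an assumption)
    lo, hi = min(values), max(values)
    if s == 0:
        return lo  # all values identical, nothing to search
    best_m, best_score = lo, -1.0
    for i in range(steps + 1):
        m = lo + (hi - lo) * i / steps
        # Unnormalised normal density of each value if the centre were m.
        score = sum(math.exp(-0.5 * ((v - m) / s) ** 2) for v in values)
        if score > best_score:
            best_m, best_score = m, score
    return best_m


sample = [1, 2, 17]
g = gaussian_mean(sample)
mean_value = statistics.mean(sample)
median_value = statistics.median(sample)
```

Under these assumptions the sketch gives a value close to the 3.8 quoted above, between the median of 2 and the arithmetic mean of about 6.7. The constant factor of the normal density is dropped because it does not change where the maximum lies.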

In general there is very little difference between my gaussian mean, the arithmetic mean, and the median. However, it is much better behaved than the mean in non-normal datasets. And unlike the median, it is a continuous function, which gives greater accuracy.

All three options are included in the amp() function, with two of them remarked out, so you can see the effects of each one. The only noticeable difference is that the mean is not very accurate at short time scales, and the gaussian mean and the mean are not discontinuous like the median.

I am too scientifically illiterate to comment on your thesis, but I applaud your courage in testing it in open forum. Good luck!

This is a welcome step in the right direction, Mr. Watts. I hope you receive the required response from your peers. I hope, too, that you will share the results in good time. A layman’s version for the climate science challenged readers like myself would be greatly appreciated if your busy schedule allows.

Good luck.

REPLY: Just so this is made clear, I did not author this. Willis Eschenbach did, as shown clearly under the title – Anthony

Could you provide a pdf?

reading it in print makes the review easier.

You may also consider one of the EGU (European Geophysical Union) journals, they are open access and the reviews are public, plus any registered reader can comment on the paper:

http://www.egu.eu/publications/list-of-publications.html

You have done an amazing amount of work here Anthony. I don’t have time to read all of it right now, but it seems thorough, as is all you do. There appear to be 5-6 pictures that are not displayed, just a box with a red ‘X’, and ‘show picture’ doesn’t bring them through. Congratulations on finishing this.

REPLY: Just so this is made clear, I did not author this. Willis Eschenbach did, as shown clearly under the title – Anthony

After laying your heart and soul on the line, all I have to offer is this:

I think it’s pretty when the yellow and green squiggly lines twist around each other.

Don’t hate me

This is a very elegant way of presenting your paper and I suggest you present it in similar wording to the RealClimate community. How could they refuse and not applaud such a pleasant approach to peer review?

Nice work, Willis. I hope that your efforts will get some sort of open response from climate scientists, even if it’s pure rebuttal. That’s how understanding improves. I agree that an “open source” philosophy and open review via the internet will be an almost inevitable trend for science moving forward. It will be resisted, of course, but those resisting will eventually lose.

This paper is by Willis Eschenbach. While I think it is great that Anthony has printed it, you may want to address comments to Willis. I doubt I will; I have only briefly skimmed it so far.

Willis E:

A few people seem to think Anthony wrote this — they must not know you or Anthony very well. 🙂

A couple of questions:

1. I assume you need a surface temperature baseline to which tropospheric temperature is compared to calculate amplification. Perhaps I missed it, but what data set are you using for the surface temperature?

2. To me it looks like all of the models show tropospheric amplification over all time scales analyzed. To me that would mean that tropospheric amplification is a robust result of the models — the details are not the same but the general concept of amplification is robust. Your definition seems to be different. Why do you say that tropospheric amplification (in general) is not a robust feature of the models?

Could there be some sort of relationship involving shear? Poleward, there is generally more shear than equatorward.

Heating in the tropics seems to vary with the interrelation of humidity and barometric pressure in the vertical. Can you model one and observe the other and vice versa?

Nice work and great idea to throw this out to the community at large. It would be nice to have a “sticky link” at the sidebar to follow the comments over time.

Willis,

Just some standard research style writing suggestions.

1. Do a search for the word “I” in your paper and change every sentence to remove the first person singular. For example change “I next did a graph of comparisons.” to “A graph of comparisons was completed.”

2. This paper is not narrow enough and should probably be done in parts or just narrowed down. You should be able to narrow down your topic to one simple sentence (two or fewer commas and no connecting “and”s) and have that sentence clearly define what the paper is about. If you can’t, your topic is not narrow enough. Your title should be that sentence. Yours does not tell me what the paper is about and is waaayyy too broad.

3. Within each paper, follow standard research article section protocol. For example:

1. Abstract (should include the conclusion, it is NOT a teaser)

2. Introduction of general nature of paper (not too specific here)

3. Literature Review (and especially at the end of this review, what has not been done in all these papers but should be done)

4. Problem Statement (the why of this research endeavor, why do we need what you have done)

5. Research design, equipment, software, and procedures (is it a meta analysis, application of a new statistical technique, experiment, etc, and then the steps you followed)

6. Results (cold hard data)

7. Discussion (what cold hard data means)

8. Conclusions (new or expanded insight)

9. Recommendations (additional research needed, i.e. next step that everyone should consider)

10. Appendix (your codes, data, etc)

Or something like that.

4. Buy a manual on writing research papers. This paper betrays the writer as someone who has not done this before, or at least does not do it for a living. There are several good ones that will help you through the step by step process of writing up your study. Your offering here is in dire need of editing, needs to be MUCH shorter, and should be considered a first and rough draft only.

Kudos to Mr Eschenbach for giving his brainchild up to slaughter with such reckless abandon, I do enjoy observing the valiant efforts of both sides in a battle of science. I will certainly look forward to reading the discussion but I second the call for a layman’s version of this paper. I am still new to climate science and need some explanation.

Thank you Mr Watts for hosting this here – maybe it could develop into a science battle tab at the top with even more papers being discussed openly, warts and all. This kind of accessibility and transparency is exactly what (climate) science needs right now. – As long as you provide some form of commentary for the spectators, that is.

Unless I’m completely misreading the header to this article (“Guest Post by Willis Eschenbach”), we should be thanking Anthony for the forum (and bandwidth), but thanking Willis for the article itself.

Good work, Willis. My chops are way too rusty to make any substantive comments, but I commend you on your efforts. I hope it is the first step in a long and fruitful endeavor.

You have no error bars on your plots. Given the uncertainties attached to the observational data you use it’s necessary to present the error bars.

Why do you only select 5 models? The IPCC model output database contains output from all GCMs involved in the IPCC process. If you are only going to select a small subset of models you should give proper justification for doing so, demonstrate that this selection does not affect your results or use all of the available data. In addition, have you used ensemble model output or are you using single realisation model output? You discuss the inter model variability yet you include no discussion of how these model runs were set up. It’s therefore difficult to assess the inter model variability. This is compounded by the apparent lack of an assessment of the ensembles and the ensemble means from each model. These are pertinent points in light of your conclusion that inter model variability is unexpected and at odds with theory. You should focus on defending your central conclusion in a more thorough way.

You’ve not included your references in this version. Including references would ease the burden on the reviewer.

Some of your plots have very confusing legends. You should have either a standard legend at the bottom of each panel or have legends which describe each dataset in each plot.

REPLY: please read the section on posting comments, in red above. – Anthony

Recommend that you remove this comment. IMHO, it is inconsistent with the spirit that you purport to want to create.

“(Man, all those 34 scientists on that side … and on this side … me. I’d better attack quick, while I have them outnumbered … )”

As the averaging length gets up towards 1/2 of sample length surely end effects swamp any signal?

I don’t think potential responders to your invitation really needed all those admonishments not to do this, and not to do that. Hardly seems like a good way to start off a scientific discussion.

Pamela Gray (09:08:11):

“…”

Thanks a lot, Pamela. I needed your style writing guideline.

@Willis… I’ve read your paper and found it very useful. From the next paragraph in your paper:

“In the observations, amplification increases steadily with altitude from 850 hPa to 200 hPa.”

My first thought was on the natural explanation of this phenomenon through the processes of induced emission and negative induced emission. It would explain the amplification of the tropical upper troposphere temperature. The algorithm should be integrated into the models.

Willis: Do you have any thoughts as to why there is a long term decline in the amplification factor as shown by the observational record? And having very limited statistical faculties – could I ask: if there was a ‘phase change’ mid or late in the data record, would this drag the trend line down – put another way, does your calculation of trend obscure a cyclic pattern?

I ask because from my reading of the climate models, they do not incorporate the major ocean cycles – such as the PDO, which appears to have a global signature over roughly 30 year time periods.

Fascinating work Willis! Certainly some food for thought…

My own opinion (at this point) of the “amplification” issue is that it provides strong evidence for a bias in the long term trends of the surface data sets. That amplification seems to vary with timescale is, essentially, my reasoning!

http://www.climateaudit.org/phpBB3/viewtopic.php?f=3&t=740

It seems to me that you reckon that there is some physical reason why the amplification declines in the long term…would not a warm bias in the surface data introduce precisely that effect? Readers here are surely aware of the problems with the surface data by now. 😉

BTW John Christy comments extensively of Santer et al. 08 in his EPA submission:

http://icecap.us/images/uploads/EPA_ChristyJR_Response_2.pdf

Willis,

I don’t have time right now to go through your interesting paper in detail, but a couple of quick comments are in order.

1) At some point you need to deal with issues of uncertainty in the models and data (systematic, random weather noise, etc.). Scientists such as Santer and Schmidt will argue that everything is in agreement because the uncertainties are so large. Specifically in your case, are the downward slopes of the amplification versus time for the nonRSS data statistically different from the flat or increasing slopes of the models?

2) I am having a hard time reconciling your graphs with those from Santer et al. and Douglas et al. For example, Santer et al. (2008) shows trends for HadAT2 and RATPAC-A that are below the surface trends for ALL pressures. This would imply an amplification below 1 for those data sets, but you have amplifications well above 1 at 200 and 300 hPa. Why the discrepancy?

3) Using only two balloon data sets opens you to charges of “cherrypicking,” especially since these two were among the lowest-trend sets in Santer. What happens if your analysis is applied to RAOBCORE, IUK, and RICH, to name some others from Santer et al. (2008)?

I hope these comments are useful.

Willis, regarding: ” 4. Even in scientific disciplines which are well understood, taking the position when models disagree with observations that “more plausibly” the observations are incorrect is adventurous. In climate science, on the other hand, it is downright risky. We do not know enough about either the climate or the models to make that claim. ”

I’m certain that E. T. Jaynes (among others ) would agree wholeheartedly with this statement. Ref: “Probability Theory As Extended Logic”, http://bayes.wustl.edu/ .

Willis

Great work. Though I am not really qualified to comment on the science, to the layman it makes sense. I hope you keep up the good work.